Fifty Shades of Ratings: How to Benefit from a Negative Feedback in Top-N Recommendations Tasks

Conventional collaborative filtering techniques treat a top-n recommendations problem as a task of generating a list of the most relevant items. This formulation, however, disregards an opposite - avoiding recommendations with completely irrelevant i…

Authors: Evgeny Frolov, Ivan Oseledets

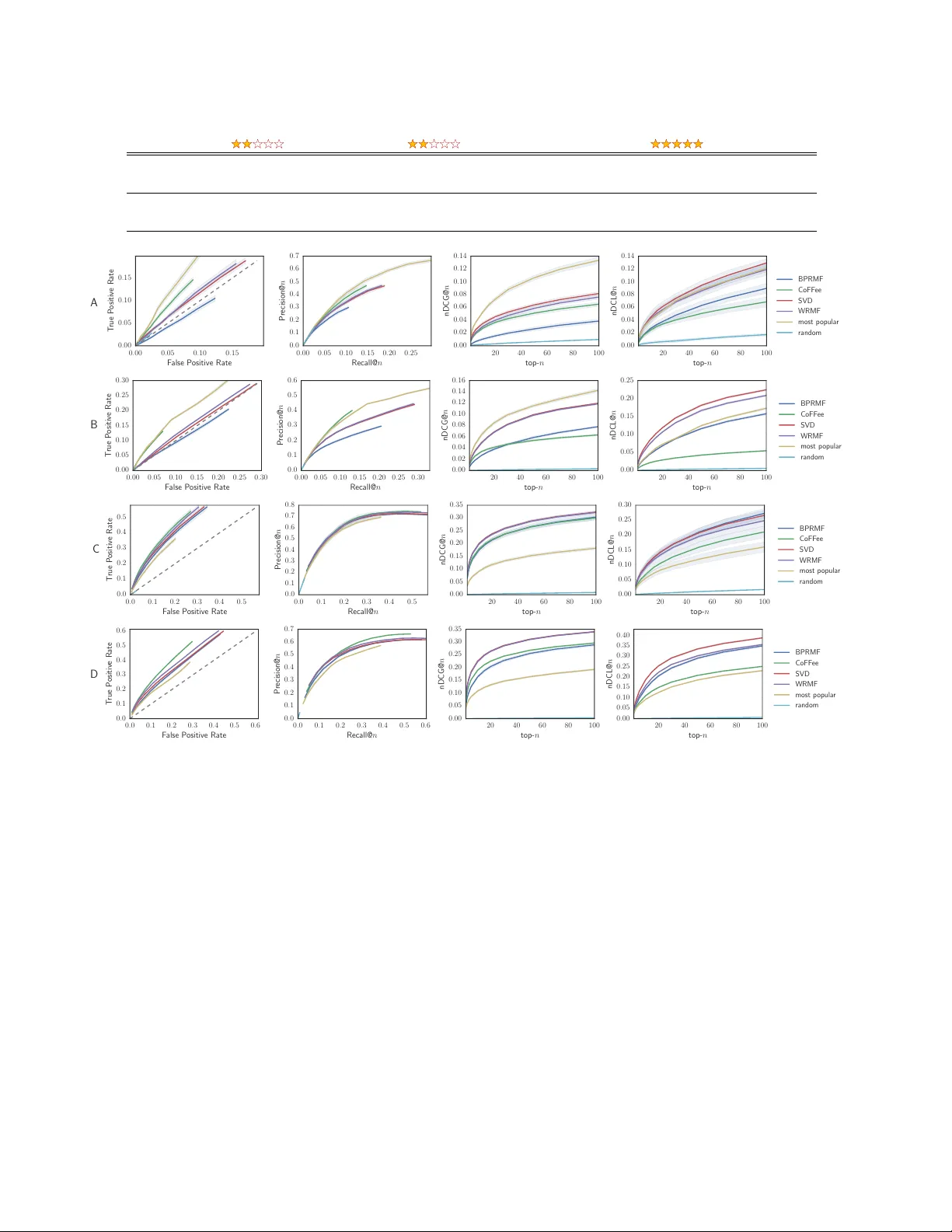

Evgeny Frolov
evgeny.frolov@skoltech.ru
Skolkovo Institute of Science and Technology, 143025, Nobel St. 3, Skolkovo Innovation Center, Moscow, Russia

Ivan Oseledets
i.oseledets@skoltech.ru
Skolkovo Institute of Science and Technology; Institute of Numerical Mathematics of the Russian Academy of Sciences, 119333, Gubkina St. 8, Moscow, Russia

ABSTRACT

Conventional collaborative filtering techniques treat a top-n recommendations problem as a task of generating a list of the most relevant items. This formulation, however, disregards the opposite goal: avoiding recommendations with completely irrelevant items. Due to that bias, standard algorithms, as well as commonly used evaluation metrics, become insensitive to negative feedback. In order to resolve this problem we propose to treat user feedback as a categorical variable and model it with users and items in a ternary way. We employ a third-order tensor factorization technique and implement a higher order folding-in method to support online recommendations. The method is equally sensitive to the entire spectrum of user ratings and is able to accurately predict relevant items even from negative-only feedback. Our method may partially eliminate the need for a complicated rating elicitation process, as it provides means for personalized recommendations from the very beginning of an interaction with a recommender system. We also propose a modification of standard metrics which helps to reveal unwanted biases and account for sensitivity to negative feedback. Our model achieves state-of-the-art quality in standard recommendation tasks while significantly outperforming other methods in the cold-start "no-positive-feedback" scenarios.

Keywords

Collaborative filtering; recommender systems; explicit feedback; cold-start; tensor factorization

RecSys '16, September 15–19, 2016, Boston, MA, USA. © 2016 Copyright held by the owner/author(s). Publication rights licensed to ACM. ISBN 978-1-4503-4035-9/16/09. DOI: http://dx.doi.org/10.1145/2959100.2959170

1. INTRODUCTION

One of the main challenges faced across different recommender systems is the cold-start problem. For example, in a user cold-start scenario, when a new (or unrecognized) user is introduced to the system and no side information is available, it is impossible to generate relevant recommendations without asking the user to provide initial feedback on some items. Randomly picking items for this purpose might be ineffective and frustrating for the user. A more common approach, typically referred to as rating elicitation, is to provide a pre-calculated non-personalized list of the most representative items. However, building a list that on the one hand helps to better learn the user preferences, and on the other hand does not bore the user, is a non-trivial task and is still a subject of active research.

The problem becomes even worse if a pre-built list of items resonates poorly with the user's tastes, resulting in mostly negative feedback (e.g. items that get low scores or low ratings from the user).
Conventional collaborative filtering algorithms, such as matrix factorization or similarity-based models, tend to favor similar items, which are likely to be irrelevant in that case. This is typically avoided by generating more items, until enough positive feedback (e.g. items with high scores or high ratings) is collected and relevant recommendations can be made. However, this makes engagement with the system more demanding for the user and may lead to a loss of interest in it.

We argue that these problems can be alleviated if the system is able to learn equally well from both positive and negative feedback. Consider the following movie recommendation example: a new user marks the "Scarface" movie with a low rating, e.g. 2 stars out of 5, and no other information is present in his or her profile. This is likely to indicate that the user does not like movies about crime and violence. It also seems natural to assume that the user probably prefers "opposite" features, such as a sentimental story (which can be present in romantic movies or drama), or a happy and joyful narrative (provided by animation or comedies). In this case, asking to rate or recommending the "Godfather" movie is definitely a redundant and inappropriate action. Similarly, if a user provides some negative feedback for the first part of a series (e.g. the first movie from the "Lord of the Rings" trilogy), it is quite natural to expect that the system will not immediately recommend another part from the same series.

A more proper way to engage with the user in that case is to leverage a sort of "users who dislike that item do like these items instead" scenario. Users certainly can share preferences not only in what they like, but also in what they do not like, and it is fair to expect that techniques based on the collaborative filtering approach could exploit this for more accurate predictions even from solely negative feedback.
In addition to that, negative feedback may have a greater importance for a user than positive feedback. Some psychological studies demonstrate that an emotionally negative experience not only has a stronger impact on an individual's memory [11], but also has a greater effect on human behavior in general [18], which is known as the negativity bias.

Of course, a number of heuristics or tweaks could be proposed for traditional techniques to fix the problem; however, there are intrinsic limitations within the models that make the task hardly solvable. For example, algorithms could start looking for less similar items in the presence of an item with negative feedback. However, there is a problem of preserving relevance to the user's tastes. It is not enough to simply pick the most dissimilar items, as they are most likely to lose the connection to user preferences. Moreover, it is not even clear when to switch between the "least similar" and the "most similar" modes. If a user assigns a 3-star rating to a movie, does it mean that the system still has to look for the least similar items, or should it switch back to the most similar ones? The user-based similarity approach is also problematic, as it tends to generate a very broad set of recommendations with a mix of similar and dissimilar items, which again leads to the problem of extracting the most relevant, yet dissimilar, recommendations.

In order to deal with the denoted problems we propose a new tensor-based model that treats feedback data as a special type of categorical variable. We show that our approach not only improves user cold-start scenarios, but also increases general recommendations accuracy. The contributions of this paper are three-fold:

• We introduce a collaborative filtering model based on a third-order tensor factorization technique. In contrast to the commonly used approach, our model treats explicit feedback (such as movie ratings) not as a cardinal, but as an ordinal utility measure. The model benefits greatly from such a representation and provides much richer information about all possible user preferences. We call it shades of ratings.

• We demonstrate that our model is equally sensitive to both positive and negative user feedback, which not only improves recommendations quality, but also reduces the effort needed to learn user preferences in cold-start scenarios.

• We also propose a higher order folding-in method for real-time generation of recommendations in support of cold-start scenarios. The method does not require recomputation of the full tensor-based model for serving new users and can be used online.

2. PROBLEM FORMULATION

The goal of a conventional recommender system is to be able to accurately generate a personalized list of new and interesting items (top-n recommendations), given a sufficient number of examples with user preferences. As has been noted, if preferences are unknown this requires special techniques, such as rating elicitation, to be involved first. In order to avoid that extra step we introduce the following additional requirements for a recommender system:

• the system must be sensitive to the full user feedback scale and not disregard its negative part,

• the system must be able to respond properly even to a single feedback and take into account its type (positive or negative).

These requirements should help to gently navigate new users through the catalog of items, making the experience highly personalized, as after each new step the system narrows down user preferences.

2.1 Limitations of traditional models

Let us consider, without loss of generality, the problem of movie recommendations.
Traditionally, this is formulated as a prediction task:

$$f_R : \text{User} \times \text{Movie} \to \text{Rating}, \tag{1}$$

where User is the set of all users, Movie is the set of all movies and $f_R$ is a utility function that assigns predicted values of ratings to every (user, movie) pair. In collaborative filtering models the utility function is learned from a prior history of interactions, i.e. previous examples of how users rate movies, which can be conveniently represented in the form of a matrix $R$ with $M$ rows corresponding to the number of users and $N$ columns corresponding to the number of movies. Elements $r_{ij}$ of the matrix $R$ denote actual movie ratings assigned by users. As users tend to provide feedback only for a small set of movies, not all entries of $R$ are known, and the utility function is expected to infer the remaining values.

In order to provide recommendations, the predicted values of ratings $\hat{r}_{ij}$ are used to rank movies and build a ranked list of top-n recommendations, which in the simplest case is generated as:

$$\operatorname{toprec}(i, n) := \underset{j \in \text{Movie}}{\arg\max^{n}}\ \hat{r}_{ij}, \tag{2}$$

where $\operatorname{toprec}(i, n)$ is a list of the $n$ top-ranked movies predicted for a user $i$. The way the values of $\hat{r}_{ij}$ are calculated depends on the collaborative filtering algorithm, and we argue that standard algorithms are unable to accurately predict relevant movies given only an example of user preferences with low ratings.

2.1.1 Matrix factorization

Let us first start with a matrix factorization approach. As we are not aiming to predict the exact values of ratings and are more interested in correct ranking, it is adequate to employ the singular value decomposition (SVD) [10] for this task. Originally, SVD is not defined for data with missing values; however, in the top-n recommendations formulation one can safely impute missing entries with zeros, especially taking into account that pure SVD can provide state-of-the-art quality [5, 13].
Note that by SVD we mean its truncated form of rank $r < \min(M, N)$, which can be expressed as:

$$R \approx U \Sigma V^T \equiv P Q^T, \tag{3}$$

where $U \in \mathbb{R}^{M \times r}$ and $V \in \mathbb{R}^{N \times r}$ are orthogonal factor matrices that embed users and movies respectively onto a lower dimensional space of latent (or hidden) features, and $\Sigma$ is a matrix of singular values $\sigma_1 \ge \ldots \ge \sigma_r > 0$ that define the strength, or the contribution, of every latent feature to the resulting score. We also provide an equivalent form with factors $P = U \Sigma^{1/2}$ and $Q = V \Sigma^{1/2}$, commonly used in other matrix factorization techniques.

One of the key properties that is provided by SVD, and not by many other factorization techniques, is the orthogonality of the factors $U$ and $V$ right "out of the box". This property helps to find approximate values of ratings even for users that were not a part of the original matrix $R$. Using the well known folding-in approach [8], one can easily obtain an expression for predicted values of ratings:

$$r \approx V V^T p, \tag{4}$$

where $p$ is a (sparse) vector of initial user preferences, i.e. a vector of length $N$ in which the position of every non-zero element corresponds to a movie rated by the new user, and its value corresponds to the actual user's feedback on that movie. Respectively, $r$ is a (dense) vector of length $N$ of all predicted movie ratings. Note that for an existing user (i.e. one whose preferences are present in the matrix $R$) it returns exactly the corresponding values of the SVD.

Due to the orthogonality of the factors, the expression can be treated as a projection of user preferences onto a space of latent features. It also provides means for quick generation of recommendations, as no recomputation of SVD is required, and thus is suitable for online engagement with new users. It should also be noted that expression (4) does not hold if the matrix $V$ is not orthogonal, which is typically the case in many other factorization techniques (and even for the matrix $Q$ in (3)).
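The folding-in expression (4) can be illustrated with a minimal NumPy sketch. The toy matrix, its sizes, the rank and the helper name `fold_in` are assumptions made for illustration only; this is not the authors' implementation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy rating matrix R (M users x N movies); zeros stand for missing ratings.
M, N, rank = 8, 6, 3
R = rng.integers(0, 6, size=(M, N)).astype(float)

# Truncated SVD of rank r < min(M, N): keep the leading r singular triplets.
U, s, Vt = np.linalg.svd(R, full_matrices=False)
U, s, V = U[:, :rank], s[:rank], Vt[:rank].T   # V has orthonormal columns


def fold_in(p, V):
    """Expression (4): project a preference vector p (length N) as V V^T p."""
    return V @ (V.T @ p)


# A hypothetical new user who rated only movie 2, with 4 stars.
p_new = np.zeros(N)
p_new[2] = 4.0
scores_new = fold_in(p_new, V)

# For a user already present in R, folding-in reproduces that user's row
# of the rank-r SVD approximation.
svd_approx = (U * s) @ V.T
scores_known = fold_in(R[0], V)
```

No refactorization is needed to score the new user: only two matrix-vector products with the fixed factor $V$ are computed.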
However, a non-orthogonal factor matrix can be transformed to an orthogonal form with a QR decomposition.

Nevertheless, there is a subtle issue here. If, for instance, $p$ contains only a single rating, then it does not matter what exact value it has. Different values of the rating will simply scale all the resulting scores given by (4), and will not affect the actual ranking of recommendations. In other words, if a user provides a single 2-star rating for some movie, the recommendations list is going to be the same as if a 5-star rating for that movie were provided.

2.1.2 Similarity-based approach

It may seem that the problem can be alleviated if we exploit some user similarity technique. Indeed, if users share not only what they like, but also what they dislike, then users similar to the one with negative-only feedback might give a good list of candidate movies for recommendations. The list can be generated with the help of the following expression:

$$r_{ij} = \frac{1}{K} \sum_{k \in S_i} r_{kj}\, \operatorname{sim}(i, k), \tag{5}$$

where $S_i$ is the set of users most similar to user $i$, $\operatorname{sim}(i, k)$ is some similarity measure between users $i$ and $k$, and $K$ is a normalizing factor, equal to $\sum_{k \in S_i} |\operatorname{sim}(i, k)|$ in the simplest case. The similarity between users can be computed by comparing either their latent features (given by the matrix $U$) or simply the rows of the initial matrix $R$, which turns the task into a traditional user-based k-Nearest Neighbors problem (kNN). It can also be modified to more advanced forms that take into account user biases [2].

However, even though more relevant items are likely to get higher scores in the user-similarity approach, it still does not guarantee an isolation of irrelevant items. Let us demonstrate it with a simple example. For illustration purposes we will use a simple kNN approach based on a cosine similarity measure. However, it can be generalized to more advanced variations of (5).
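A minimal sketch of rule (5) with cosine similarity is given here. The ratings are those of the Tom example from Table 1 of this section; encoding missing ratings as zeros and taking all three other users as the neighbourhood $S_i$ are assumptions of the sketch. Under these assumptions it yields the Table 1 predictions, 2.6 and 3.1.

```python
import numpy as np

# Ratings for (Scarface, Toy Story, Godfather); 0 encodes a missing rating.
neighbours = {
    "Alice": np.array([2.0, 5.0, 3.0]),
    "Bob":   np.array([4.0, 0.0, 5.0]),
    "Carol": np.array([2.0, 5.0, 0.0]),
}
tom = np.array([2.0, 0.0, 0.0])


def cosine(a, b):
    """Cosine similarity between two preference vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


# sim(i, k) for the new user against every neighbour.
sims = {name: cosine(tom, ratings) for name, ratings in neighbours.items()}

# Normalizing factor K and the weighted sum of expression (5).
K = sum(abs(s) for s in sims.values())
pred = sum(sims[name] * ratings for name, ratings in neighbours.items()) / K

toy_story, godfather = float(pred[1]), float(pred[2])
print(sims)
print(round(toy_story, 1), round(godfather, 1))
```

Bob's vector has the largest cosine similarity to Tom's single-rating vector, so Bob's high rating of "Godfather" dominates the weighted sum.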
Let a new user Tom have rated the "Scarface" movie with rating 2 (see Table 1), and suppose we need to decide which of two other movies, namely "Toy Story" or "Godfather", should be recommended to Tom, given information on how other users (Alice, Bob and Carol) have rated these movies.

Table 1: Similarity-based recommendations issue.

| | | Scarface | Toy Story | Godfather |
|---|---|---|---|---|
| Observation | Alice | 2 | 5 | 3 |
| | Bob | 4 | | 5 |
| | Carol | 2 | 5 | |
| New user | Tom | 2 | ? | ? |
| Prediction | | | 2.6 | 3.1 |

As can be seen, Alice and Carol, similarly to Tom, do not like criminal movies. They also both enjoy the "Toy Story" animation. Even though Bob demonstrates an opposite set of interests, the preferences of Alice and Carol prevail. From here it can be concluded that the most relevant (or safe) recommendation for Tom would be "Toy Story". Nevertheless, the prediction formula (5) assigns the highest score to the "Godfather" movie, which is a result of a higher value of cosine similarity between Bob's and Tom's preference vectors.

2.2 Resolving the inconsistencies

The problems described above suggest that in order to build a model that fulfills the requirements proposed in Section 2, we have to move away from the traditional representation of ratings. Our idea is to restate the problem formulation in the following way:

$$f_R : \text{User} \times \text{Movie} \times \text{Rating} \to \text{Relevance Score}, \tag{6}$$

where Rating is a domain of ordinal (categorical) variables, consisting of all possible user ratings, and Relevance Score denotes the likeliness of observing a certain (user, movie, rating) triplet. With this formulation, relations between users, movies and ratings are modelled in a ternary way, i.e. all three variables influence each other and the resulting score. This type of relation can be modelled with several methods, such as Factorization Machines [17] or other context-aware methods [1].
We propose to solve the problem with a tensor-based approach, as it seems to be more flexible, naturally fits the formulation (6) and has a number of advantages, described in Section 3.

Figure 1: From a matrix to a third order tensor.

3. TENSOR CONCEPTS

The (user, movie, rating) triplets can be encoded within a three-dimensional array (see Figure 1), which we will call a third order tensor and denote with a calligraphic capital letter $\mathcal{X} \in \mathbb{R}^{M \times N \times K}$. The sizes of the tensor dimensions (or modes) correspond to the total number of unique users, movies and ratings. The values of the tensor $\mathcal{X}$ are binary (see Figure 1):

$$x_{ijk} = \begin{cases} 1, & \text{if } (i, j, k) \in S, \\ 0, & \text{otherwise}, \end{cases} \tag{7}$$

where $S$ is a history of known interactions, i.e. a set of observed (user, movie, rating) triplets.

Similarly to the matrix factorization case (3), we are interested in finding a tensor factorization that reveals some common patterns in the data and finds a latent representation of users, movies and ratings.

There are two commonly used tensor factorization techniques, namely Candecomp/Parafac (CP) and Tucker Decomposition (TD) [12]. As we will show in Section 3.2, the use of TD is more advantageous, as it gives orthogonal factors that can be used for quick computation of recommendations, similarly to (4). Moreover, the optimization task for CP, in contrast to TD, is ill-posed in general [7].

3.1 Tensor factorization

The tensor in TD format can be represented as follows:

$$\mathcal{X} \approx \mathcal{G} \times_1 U \times_2 V \times_3 W, \tag{8}$$

where $\times_n$ is an $n$-mode product, defined in [12], and $U \in \mathbb{R}^{M \times r_1}$, $V \in \mathbb{R}^{N \times r_2}$, $W \in \mathbb{R}^{K \times r_3}$ are orthogonal matrices that represent embeddings of the users, movies and ratings onto a reduced space of latent features, similarly to the SVD case. The tensor $\mathcal{G} \in \mathbb{R}^{r_1 \times r_2 \times r_3}$ is called the core of the TD, and the tuple of numbers $(r_1, r_2, r_3)$ is called the multilinear rank of the decomposition.
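The encoding (7) and the Tucker format (8) can be sketched in NumPy as follows. The triplets, tensor sizes and multilinear rank are hypothetical, and the helper names (`unfold`, `mode_dot`, `project`, `hooi`) are illustrative; the factors are fitted with a plain, dense higher order orthogonal iteration, which is only a small-scale stand-in for the sparse computation a real dataset would need.

```python
import numpy as np


def unfold(t, mode):
    """Mode-n unfolding: rows are indexed by the chosen mode."""
    return np.moveaxis(t, mode, 0).reshape(t.shape[mode], -1)


def mode_dot(t, mat, mode):
    """n-mode product of tensor t with a matrix mat of shape (J, I_mode)."""
    return np.moveaxis(np.tensordot(mat, t, axes=(1, mode)), 0, mode)


def leading_vectors(mat, rank):
    """Leading left singular vectors, returned as orthonormal columns."""
    u, _, _ = np.linalg.svd(mat, full_matrices=False)
    return u[:, :rank]


def project(t, factors, skip=None):
    """Multiply t by the transposed factors along every mode except `skip`."""
    for mode, f in enumerate(factors):
        if mode != skip:
            t = mode_dot(t, f.T, mode)
    return t


def hooi(x, ranks, n_iter=10):
    """Dense higher order orthogonal iteration with an HOSVD start."""
    factors = [leading_vectors(unfold(x, m), r) for m, r in enumerate(ranks)]
    for _ in range(n_iter):
        for m, r in enumerate(ranks):
            partial = project(x, factors, skip=m)
            factors[m] = leading_vectors(unfold(partial, m), r)
    return project(x, factors), factors


# Hypothetical (user, movie, rating) triplets; ratings 1..5 map to indices 0..4.
triplets = [(0, 0, 2), (0, 1, 5), (1, 0, 4), (1, 2, 5), (2, 0, 2),
            (2, 1, 5), (3, 3, 1), (4, 2, 3), (5, 4, 4), (5, 1, 1)]
M, N, K = 6, 5, 5
X = np.zeros((M, N, K))
for user, movie, rating in triplets:
    X[user, movie, rating - 1] = 1.0            # binary encoding, equation (7)

core, (U, V, W) = hooi(X, ranks=(3, 3, 2))
# Reconstruction in Tucker format, equation (8).
X_hat = mode_dot(mode_dot(mode_dot(core, U, 0), V, 1), W, 2)
```

Because each factor has orthonormal columns, the reconstruction is an orthogonal projection of the original tensor, which is what makes the projection-based folding-in of Section 3.2 possible.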
The decomposition can be effectively computed with the higher order orthogonal iterations (HOOI) algorithm [6].

3.2 Efficient computation of recommendations

As recommender systems typically have to deal with large numbers of users and items, this raises the problem of fast computation of recommendations. Factorizing the tensor for every new user can take a prohibitively long time, which is inconsistent with the requirement of real-time recommendations. For this purpose we propose a higher order folding-in method (see Figure 2) that finds approximate recommendations for any unseen user with comparatively low computational cost (cf. (4)):

$$R^{(i)} = V V^T P^{(i)} W W^T, \tag{9}$$

where $P^{(i)}$ is an $N \times K$ binary matrix of the $i$-th user's preferences and $R^{(i)} \in \mathbb{R}^{N \times K}$ is a matrix of recommendations. Similarly to SVD-based folding-in, (9) can be treated as a sequence of projections to the latent spaces of movies and ratings. Note that this is a straightforward generalization of matrix folding-in, and we omit its derivation due to space limits. In the case of a known user the expression also gives the exact values of the TD.

Figure 2: Higher order folding-in for Tucker decomposition. A slice with new user information in the original data (left) and the corresponding row update of the factor matrix in the Tucker decomposition (right) are marked with a solid color.

Figure 3: Example of the predicted user preferences, which we call shades of ratings (horizontal axis: rating, 0 to 5; vertical axis: movie id, 0 to 1000). Every horizontal bar can be treated as the likeliness of some movie to have a specific rating for a selected user. More dense colors correspond to higher relevance scores.

3.3 Shades of ratings

Note that even though (9) looks very similar to (4), there is a substantial difference in what is being scored.
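Expression (9) can be sketched in a few lines of NumPy. The factors $V$ and $W$ below are random orthonormal stand-ins rather than trained Tucker factors, and the sizes, the single-feedback user and the helper name `fold_in` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
N, K, r2, r3 = 7, 5, 3, 2      # movies, rating values, latent sizes

# Stand-ins for the movie and rating factors of a Tucker model:
# random matrices orthonormalised with QR (assumption, not trained factors).
V, _ = np.linalg.qr(rng.standard_normal((N, r2)))
W, _ = np.linalg.qr(rng.standard_normal((K, r3)))


def fold_in(P, V, W):
    """Expression (9): R = V V^T P W W^T for an N x K preference matrix P."""
    return V @ (V.T @ P @ W) @ W.T


# Hypothetical new user with a single feedback: movie 3 rated with 2 stars.
P = np.zeros((N, K))
P[3, 2 - 1] = 1.0

R = fold_in(P, V, W)           # N x K matrix of relevance scores

# Ranking: the three movies with the largest score in the highest-rating column.
top3 = np.argsort(-R[:, K - 1])[:3]

# Rating prediction for movie 0: the rating value with the largest score.
predicted_rating = int(np.argmax(R[0])) + 1
```

Each row of `R` holds the scores of one movie over all rating values, so the same matrix serves both the ranking task (a column) and the rating prediction task (a row).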
In the case of a matrix factorization we score ratings (or other forms of feedback) directly, whereas in the tensor case we score the likeliness of a rating to have a particular value for an item. This gives a new and more informative view on predicted user preferences (see Figure 3). Unlike in the conventional methods, every movie in recommendations is not associated with just a single score, but rather with a full range of all possible rating values that users are exposed to.

Another remarkable property of "rating shades" is that they can be naturally utilized for both ranking and rating prediction tasks. The ranking task corresponds to finding a maximum score along the movies mode (the 2nd mode of the tensor) for a selected (highest) rating. Note that the ranking can be performed within every rating value. Rating prediction corresponds to a maximization of relevance scores along the ratings mode (i.e. the 3rd mode of the tensor) for a selected movie. We utilize this feature to additionally verify the model's quality (see Section 6).

If positive feedback is defined by several ratings (e.g. 5 and 4), then the sum of scores from these ratings can be used for ranking. Our experiments show that this typically leads to an improved quality of predictions compared to an unmodified version of the algorithm.

4. EVALUATION

As has been discussed in Section 2.1, standard recommender models are unable to properly operate with negative feedback and more often simply ignore it. As an example, the well known recommender systems library MyMediaLite [9], which features many state-of-the-art algorithms, does not support negative feedback for item recommendation tasks.

In addition to that, a common way of performing an offline evaluation of recommendations quality is to measure only how well a tested algorithm can retrieve highly relevant items. Nevertheless, both relevance-based (e.g. precision, recall, etc.) and ranking-based (e.g. nDCG, MAP, etc.) metrics are completely insensitive to the prediction of irrelevant items: an algorithm that recommends 3 positively rated and 7 negatively rated items will gain the same evaluation score as an algorithm that recommends 3 positively rated and 7 items with unknown (not necessarily negative) ratings.

This leads to several important questions that are typically obscured and that we aim to answer:

• How likely is an algorithm to place irrelevant items in the top-n recommendations list and rank them highly?

• Does high evaluation performance in terms of relevant items prediction guarantee a lower number of irrelevant recommendations?

Answering these questions is impossible within the standard evaluation paradigm, and we propose to adapt commonly used metrics in a way that respects the crucial difference between the effects of relevant and irrelevant recommendations. We also expect that the modified metrics will reflect the effects described in Section 1 (the Scarface and Godfather example).

4.1 Negativity bias compliance

The first step of the metrics modification is to split rating values into 2 classes: the class of negative feedback and the class of positive feedback. This is done by selecting a negativity threshold value, such that the values of ratings above this threshold are treated as positive examples and all other values as negative.

The next step is to allow generated recommendations to be evaluated against the negative user feedback, as well as the positive one. This leads to the classical notion of true positive (tp), true negative (tn), false positive (fp) and false negative (fn) types of predictions [19], which also renders the classical definition of relevance metrics, namely precision ($P$) and recall ($R$, also referred to as True Positive Rate (TPR)):

$$P = \frac{tp}{tp + fp}, \qquad R = \frac{tp}{tp + fn}.$$
Similarly, the False Positive Rate (FPR) is defined as

$$FPR = \frac{fp}{fp + tn}.$$

The TPR to FPR curve, also known as a Receiver Operating Characteristics (ROC) curve, can be used to assess the tendency of an algorithm to recommend irrelevant items. It is worth noting here that if items recommended by an algorithm are not rated by a user (question marks in Figure 4), then we simply ignore them and do not mark them as false positives, in order to avoid overestimation of the fp rate [19].

The Discounted Cumulative Gain (DCG) metric looks very similar to the original one, with the exception that we do not include the negative ratings in the calculations at all:

$$DCG = \sum_{p} \frac{2^{r_p} - 1}{\log_2(p + 1)}, \tag{10}$$

where $p : \{r_p > \text{negativity threshold}\}$ and $r_p$ is the rating of a positively rated item. This gives the nDCG metric:

$$nDCG = \frac{DCG}{iDCG},$$

where iDCG is the value returned by an ideal ordering of recommended items (i.e. when more relevant items are ranked higher in the top-n recommendations list).

Figure 4: Definition of matching and mismatching predictions. Recommendations that are not a part of the known user preferences (question marks) are ignored and not considered as false positives.

4.2 Penalizing irrelevant recommendations

The nDCG metric indicates how close tp predictions are to the beginning of a top-n recommendations list; however, it tells nothing about the ranking of irrelevant items. We fix this by a modification of (10) with respect to negative feedback, which we call Discounted Cumulative Loss:

$$DCL = \sum_{n} \frac{2^{-r_n} - 1}{-\log_2(n + 1)}, \tag{11}$$

where $n : \{r_n \le \text{negativity threshold}\}$ and $r_n$ is the rating of a negatively rated item. Similarly to nDCG, the nDCL metric is defined as:

$$nDCL = \frac{DCL}{iDCL},$$

where iDCL is the value returned by an ideal ranking of irrelevant predictions (i.e. the more irrelevant are ranked lower).
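The counting rules and the sums (10) and (11) can be sketched in plain Python. Several points are assumptions of this sketch rather than statements of the paper: positions are taken as 1-based ranks in the recommendation list, held-out items that are not recommended are counted as fn (positive) or tn (negative), FPR uses the standard definition fp / (fp + tn), and the function name `evaluate` and the toy data are hypothetical. Normalization by iDCG and iDCL is left out.

```python
import math


def evaluate(recommended, holdout, threshold):
    """Score a ranked list of item ids against held-out {item: rating} feedback."""
    positive = {item for item, r in holdout.items() if r > threshold}
    negative = set(holdout) - positive
    rec = set(recommended)

    tp, fp = len(rec & positive), len(rec & negative)
    fn, tn = len(positive - rec), len(negative - rec)

    dcg = dcl = 0.0
    for pos, item in enumerate(recommended, start=1):
        if item in positive:
            # Gain term of equation (10).
            dcg += (2.0 ** holdout[item] - 1.0) / math.log2(pos + 1)
        elif item in negative:
            # Loss term of equation (11).
            dcl += (2.0 ** (-holdout[item]) - 1.0) / (-math.log2(pos + 1))
        # Items without a known rating are ignored entirely.

    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "fpr": fp / (fp + tn) if fp + tn else 0.0,
        "dcg": dcg, "dcl": dcl,
    }


# Toy example: item "x" was never rated by the user and is therefore skipped.
holdout = {"a": 5, "b": 2, "c": 4, "d": 1}
scores = evaluate(["a", "x", "b", "c"], holdout, threshold=3)
```

In this toy case the negatively rated item "b" at position 3 contributes to the loss only, while the unrated item "x" changes neither the counts nor the sums.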
Note that, as nDCL penalizes high ranking of irrelevant items, the lower the values of nDCL the better.

In the experiments all the metrics are measured for different values of the top-n list length, i.e. the metrics are metrics at n. The values of the metrics are averaged over all test users.

4.3 Evaluation methodology

For evaluation purposes we split the datasets into two subsets, disjoint by users (i.e. every user can only belong to a single subset). The first subset is used for learning a model; it contains 80% of all users and is called a training set. The remaining 20% of users (the test users) are unseen in the training set and are used for model evaluation. We hold out a fixed number of items from every test user and put them into a holdout set. The remaining items form an observation set of the test users. Recommendations generated from an observation set are evaluated against a holdout set. We also perform a 5-fold cross validation by selecting a different 20% of users each time and averaging the results over all 5 folds. The main difference from common evaluation methodologies is that we allow both relevant and irrelevant items in the holdout set (see Figure 4 and Section 4.1). Furthermore, we vary the number and the type of items in the observation set, which leads to various scenarios:

• leaving only one or a few items with the lowest ratings leads to the case of "no-positive-feedback" cold-start;

• if all the observation set items are used to predict user preferences, this serves as a proxy for a standard recommendation scenario for a known user.

We have also conducted rating prediction experiments in which a single top-rated item is held out from every test user (see Section 6). In this experiment we verify the ratings predicted by our model (see Section 3.3 for an explanation of the rating calculation) against the actual ratings of the holdout items.

5. EXPERIMENTAL SETUP

5.1 Datasets

We use the publicly available Movielens¹ 1M and 10M datasets as a common standard for offline recommender systems evaluation. We have also trained a few models on the latest Movielens dataset (22M ratings, updated on 1/2016) and deployed a movie recommendations web application for online evaluation. This is especially handy for our specific scenarios, as the content of each movie is easily understood and contradictions in recommendations can be easily spotted by eye (see Table 2).

5.2 Algorithms

We compare our approach with state-of-the-art matrix factorization methods designed for the items recommendation task, as well as two non-personalized baselines.

• CoFFee (Collaborative Full Feedback model) is the proposed tensor-based approach.

• SVD, also referred to as PureSVD [5], uses standard SVD. As in the CoFFee model, missing values are simply imputed with zeros.

• WRMF [15] is a matrix factorization method where missing ratings are not ignored or imputed with zeros, but rather are uniformly weighted.

• BPRMF [16] is a matrix factorization method powered by a Bayesian personalized ranking approach that optimizes pair-wise preferences between observed and unobserved items.

• The most popular model always recommends the top-n items with the highest number of ratings (independently of the rating values).

• The random guess model generates recommendations randomly.

SVD is based on Python's NumPy and SciPy packages, which heavily use BLAS and LAPACK functions as well as MKL optimizations. CoFFee is also implemented in Python, using the same packages for most of the linear algebra operations. We additionally use the Pandas package to support sparse tensors in COO format.

BPRMF and WRMF implementations are taken from the MyMediaLite [9] library.
We wrap the command line utilities of these methods with Python, so that all the tested algorithms share the same namespace and configuration. The command line utilities do not support online evaluation, and we implement our own (orthogonalized) folding-in on the factor matrices generated by these models. Learning times of the models are depicted in Figure 5. The source code, as well as the link to our web app, can be found at Github².

¹ https://grouplens.org/datasets/movielens/
² https://github.com/Evfro/fifty-shades

Figure 5: Models' learning time, mm:ss.ms (single laptop, Intel i5 2.5GHz CPU, Movielens 10M): BPRMF 03:10.5, WRMF 01:19.6, SVD 00:00.2, CoFFee 00:17.7.

5.3 Settings

We preprocess these datasets to contain only users who have rated no fewer than 20 movies. The number of holdout items is always set to 10. The top-n values range from 1 to 100. The items selected from the test users' observation sets are either 1 or 3 negatively rated items; 1, 3 or 5 items with random ratings; or all items (see Section 4.3 for details). We include higher values of top-n (up to 100) as we allow random items to appear in the holdout set. This helps to make the experimentation more sensitive to wrong recommendations that match negative feedback from the user. The negativity threshold is set to 3 for Movielens 1M and 3.5 for Movielens 10M. Both observation and holdout sets are cleaned of the items that are not present in the training set. The number of latent factors for all matrix factorization models is set to 10; the CoFFee multilinear rank is (13, 10, 2). Regularization parameters of the WRMF and BPRMF algorithms are set to MyMediaLite's defaults.

6. RESULTS

Due to space constraints we provide only the most important and informative part of the evaluation results. They are presented in Figure 6. Rows A and C correspond to the Movielens 1M dataset, rows B and D correspond to the Movielens 10M dataset.
We also report a few interesting hand-picked examples of movie recommendations generated from a single negative feedback (see Table 2).

How to read the graphs.

The results are better understood with particular examples. Let us start with the first two rows of Figure 6 (row A is for Movielens 1M and row B is for Movielens 10M). These rows correspond to the performance of the models when only a single (random) negative feedback is provided.

First of all, it can be seen that the item popularity model gets very high scores with the TPR to FPR, precision-recall and nDCG metrics (first 3 columns of the figure). One could conclude that this is the most appropriate model in that case (when almost nothing is known about user preferences). However, the high nDCL score (4th column) of this model indicates that it is also very likely to place irrelevant items at the top of the list, which can be disappointing for users. A similarly poor ranking of irrelevant items is observed with the SVD and WRMF models. On the other hand, the lowest nDCL score belongs to the random guess model, which is trivially due to its very poor overall performance. The same conclusion is valid for the BPRMF model, which has a low nDCL (row A) but fails to recommend relevant items from a negative feedback.

The only model that behaves reasonably well is the proposed CoFFee model. It has a low nDCL, i.e. it is more successful at avoiding irrelevant recommendations at the top of the list. This effect is especially strong on the Movielens 10M dataset (row B). The model also exhibits performance better than or comparable to that of the item popularity model on relevant items prediction. At first glance, the surprising fact is that the model has a low nDCG score.
Considering that this cannot be due to a higher ranking of irrelevant items (as follows from the low nDCL), this is simply due to a higher ranking of items that were not yet rated by a user (recall the question marks in Figure 4). The model tries to make a safe guess by filtering out irrelevant items and proposing those items that are more likely to be relevant to an original negative feedback (unlike popular or similar items recommendation).

Table 2: Hand-picked examples from top-10 recommendations generated on a single feedback. The models are trained on the latest Movielens dataset.

| Model | Scarface | LOTR: The Two Towers | Star Wars: Episode VII - The Force Awakens |
|---|---|---|---|
| CoFFee | Toy Story | Net, The | Dark Knight, The |
| | Mr. Holland's Opus | Cliffhanger | Batman Begins |
| | Independence Day | Batman Forever | Star Wars: Episode IV - A New Hope |
| SVD | Reservoir Dogs | LOTR: The Fellowship of the Ring | Dark Knight, The |
| | Goodfellas | Shrek | Inception |
| | Godfather: Part II, The | LOTR: The Return of the King | Iron Man |

Figure 6: The ROC curves (1st column), precision-recall curves (2nd column), nDCG@n (3rd column) and nDCL@n (4th column). Rows A, B correspond to a cold-start with a single negative feedback. Rows C, D correspond to a known user recommendation scenario. Odd rows are for Movielens 1M, even rows are for Movielens 10M. For the first 3 columns the higher the curve, the better; for the last column the lower the curve, the better. Shaded areas show the standard deviation of the value averaged over all cross-validation runs. Legend: BPRMF, CoFFee, SVD, WRMF, most popular, random.

This conclusion is also supported by the examples from the first 2 columns of Table 2. It can easily be seen that, unlike SVD, the CoFFee model makes safe recommendations with "opposite" movie features (e.g. Toy Story against Scarface). Such effects are not captured by standard metrics and can be revealed only by a side-by-side comparison with the proposed nDCL measure.
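
The side-by-side reading of nDCG and nDCL can be illustrated with a small sketch. Note the assumptions: this is a simplified binary-gain variant written for this example, not the exact definition from Section 4. A holdout item counts as a positive hit if its rating is at or above the negativity threshold and as a negative hit if it is strictly below. Unrated items contribute to neither score, and each sum is normalized by its best (nDCG) or worst (nDCL) achievable value. The function names are hypothetical.

```python
import numpy as np


def _discounted_sum(flags):
    """Sum of flags discounted by log2(position + 1), positions starting at 1."""
    flags = np.asarray(flags, dtype=float)
    positions = np.arange(1, len(flags) + 1)
    return float(np.sum(flags / np.log2(positions + 1)))


def ndcg_ndcl_at_n(recommended, holdout_ratings, negativity_threshold, n):
    """Return (nDCG@n, nDCL@n) for one user under binary gains.

    `recommended` is a ranked list of item ids; `holdout_ratings` maps
    holdout item ids to their true ratings.
    """
    top = list(recommended)[:n]
    rated = [holdout_ratings.get(item) for item in top]
    pos_hits = [r is not None and r >= negativity_threshold for r in rated]
    neg_hits = [r is not None and r < negativity_threshold for r in rated]

    n_pos = sum(r >= negativity_threshold for r in holdout_ratings.values())
    n_neg = len(holdout_ratings) - n_pos
    ideal_gain = _discounted_sum(np.ones(min(n_pos, n)))   # all positives on top
    worst_loss = _discounted_sum(np.ones(min(n_neg, n)))   # all negatives on top

    ndcg = _discounted_sum(pos_hits) / ideal_gain if ideal_gain > 0 else 0.0
    ndcl = _discounted_sum(neg_hits) / worst_loss if worst_loss > 0 else 0.0
    return ndcg, ndcl
```

In this sketch a list that ranks a disliked holdout movie first and a liked one third gets nDCG = 0.5 but nDCL = 1.0, while the reversed list gets nDCG = 1.0 and nDCL = 0.5. A list made only of unrated items scores zero on both, which mirrors the explanation given above for the low nDCG of CoFFee.
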
In the standard recommendations scenario, when user preferences are known (rows C, D), our model also demonstrates the best performance in all but the nDCG metric, which again is explained by the presence of unrated items rather than by a poor quality of recommendations. In contrast, the matrix factorization models SVD and WRMF, while also being the top performers in the first three metrics, demonstrate the worst quality in terms of nDCL in almost all cases.

We additionally test our model in a rating prediction experiment, where the ratings of the holdout items are predicted as described in Section 3.3. On the Movielens 1M dataset our model is able to predict the exact rating value in 47% of cases. It also correctly predicts the rating positivity (e.g. predicts rating 4 when the actual rating is 5 and vice versa) in another 48% of cases, giving 95% of correctly predicted feedback positivity in total. As a result it achieves a 0.77 RMSE score on the holdout set for Movielens 1M.

7. RELATED WORK

A few research papers have studied the effect of different types of user feedback on the quality of recommender systems. The authors of the Preference model [13] proposed to split user ratings into categories and compute the relevance scores based on the user-specific ratings distribution. The authors of the SLIM method [14] have compared models that learn ratings either explicitly (r-models) or in a binary form (b-models). They compare the initial distribution of ratings with the recommendations by calculating a hit rate for every rating value. The authors show that r-models have a stronger ability to predict top-rated items even if ratings with the highest values are not prevalent. The authors of [3] have studied the subjective nature of ratings from a user perspective. They have demonstrated that a rating scale per se is non-uniform, e.g. distances between different rating values are perceived differently even by the same user.
The authors of [4] state that discovering what a user does not like can be easier than discovering what the user does like. They propose to filter all negative preferences of individuals to avoid unsatisfactory recommendations to a group. However, to the best of our knowledge, there is no published research on the learning from an explicit negative feedback paradigm in the personalized recommendations task.

8. CONCLUSION AND PERSPECTIVES

To conclude, let us first address the two questions posed in the beginning of Section 4. As we have shown, standard evaluation metrics that do not treat irrelevant recommendations properly (as in the case with nDCG) might obscure a significant part of a model's performance. An algorithm that highly scores both relevant and irrelevant items is more likely to be favored by such metrics, while increasing the risk of user disappointment.

We have proposed modifications to both the standard metrics and the evaluation procedure that not only reveal a positivity bias of standard evaluation, but also help to perform a comprehensive examination of recommendations quality from the perspective of both positive and negative effects.

We have also proposed a new model that is able to learn equally well from the full spectrum of user feedback and provides state-of-the-art quality in different recommendation scenarios. The model is unified in the sense that it can be used both at the initial step of learning user preferences and in standard recommendation scenarios for already known users. We believe that the model can be used to complement or even replace standard rating elicitation procedures and help to safely introduce new users to a recommender system, providing a highly personalized experience from the very beginning.

9. ACKNOWLEDGEMENTS

This material is based on work supported by the Russian Science Foundation under grant 14-11-00659-a.

10. REFERENCES

[1] G.
Adomavicius, B. Mobasher, F. Ricci, and A. Tuzhilin. Context-Aware Recommender Systems. AI Mag., 32(3):67–80, 2011.

[2] G. Adomavicius and A. Tuzhilin. Toward the Next Generation of Recommender Systems: a Survey of the State of the Art and Possible Extensions. IEEE Trans. Knowl. Data Eng., 17(6):734–749, 2005.

[3] X. Amatriain, J. M. Pujol, and N. Oliver. I Like It... I Like It Not: Evaluating User Ratings Noise in Recommender Systems. In Proc. 17th Int. Conf. User Model. Adapt. Pers. Former. UM AH, UMAP '09, pages 247–258, Berlin, Heidelberg, 2009. Springer-Verlag.

[4] D. L. Chao, J. Balthrop, and S. Forrest. Adaptive radio: achieving consensus using negative preferences. In Proc. 2005 Int. ACM Siggr. Conf. Support. Gr. Work, pages 120–123. ACM, 2005.

[5] P. Cremonesi, Y. Koren, and R. Turrin. Performance of recommender algorithms on top-n recommendation tasks. Proc. fourth ACM Conf. Recomm. Syst. - RecSys '10, 2010.

[6] L. De Lathauwer, B. De Moor, and J. Vandewalle. On the best rank-1 and rank-(r1, r2, ..., rn) approximation of higher-order tensors. SIAM J. Matrix Anal. Appl., 21(4):1324–1342, 2000.

[7] V. De Silva and L.-H. Lim. Tensor rank and the ill-posedness of the best low-rank approximation problem. SIAM J. Matrix Anal. Appl., 30(3):1084–1127, 2008.

[8] M. D. Ekstrand, J. T. Riedl, and J. A. Konstan. Collaborative filtering recommender systems. Found. Trends Human-Computer Interact., 4(2):81–173, 2011.

[9] Z. Gantner, S. Rendle, C. Freudenthaler, and L. Schmidt-Thieme. MyMediaLite: A Free Recommender System Library. In Proc. 5th ACM Conf. Recomm. Syst. (RecSys 2011), 2011.

[10] G. H. Golub and C. Reinsch. Singular value decomposition and least squares solutions. Numer. Math., 14(5):403–420, 1970.

[11] E. A. Kensinger. Remembering the details: Effects of emotion. Emot. Rev., 1(2):99–113, 2009.

[12] T. G. Kolda and B. W. Bader. Tensor Decompositions and Applications. SIAM Rev., 51(3):455–500, Aug 2009.

[13] J. Lee, D. Lee, Y.-C. Lee, W.-S. Hwang, and S.-W. Kim. Improving the Accuracy of Top-N Recommendation using a Preference Model. Inf. Sci. (Ny)., 2016.

[14] X. Ning and G. Karypis. Slim: Sparse linear methods for top-n recommender systems. In Data Min. (ICDM), 2011 IEEE 11th Int. Conf., pages 497–506. IEEE, 2011.

[15] R. Pan, Y. Zhou, B. Cao, N. N. Liu, R. Lukose, M. Scholz, and Q. Yang. One-class collaborative filtering. In Data Mining, 2008. ICDM'08. Eighth IEEE Int. Conf., pages 502–511. IEEE, 2008.

[16] S. Rendle, C. Freudenthaler, Z. Gantner, and L. Schmidt-Thieme. BPR: Bayesian Personalized Ranking from Implicit Feedback. Proc. Twenty-Fifth Conf. Uncertain. Artif. Intell., cs.LG:452–461, 2009.

[17] S. Rendle, Z. Gantner, C. Freudenthaler, and L. Schmidt-Thieme. Fast context-aware recommendations with factorization machines. In Proc. 34th Int. ACM SIGIR Conf. Res. Dev. Inf. Retr., pages 635–644. ACM, 2011.

[18] P. Rozin and E. B. Royzman. Negativity bias, negativity dominance, and contagion. Personal. Soc. Psychol. Rev., 5(4):296–320, 2001.

[19] G. Shani and A. Gunawardana. Evaluating recommendation systems. Recomm. Syst. Handbook, pages 257–298, 2011.
