How to Allocate Resources For Features Acquisition?

We study classification problems where features are corrupted by noise and where the magnitude of the noise in each feature is influenced by the resources allocated to its acquisition. This is the case, for example, when multiple sensors share a comm…

Authors: Oran Richman, Shie Mannor

Oran Richman, Department of Electrical Engineering, Technion, Haifa, Israel, roran@tx.technion.ac.il
Shie Mannor, Department of Electrical Engineering, Technion, Haifa, Israel, shie@ee.technion.ac.il

March 2, 2022

Abstract

We study classification problems where features are corrupted by noise and where the magnitude of the noise in each feature is influenced by the resources allocated to its acquisition. This is the case, for example, when multiple sensors share a common resource (power, bandwidth, attention, etc.). We develop a method for computing the optimal resource allocation for a variety of scenarios and derive theoretical bounds concerning the benefit that may arise from non-uniform allocation. We further demonstrate the effectiveness of the developed method in simulations.

1 Introduction

Most machine learning settings take feature vectors as input. These features are often acquired using some process that results in less than optimal data quality. In many situations, the data quality depends on the resources allocated to the data acquisition process. Examples of possible resources are sample rate, total sample time, CPU allocated to some costly pre-processing, and transmitted power.

Several approaches have been proposed to deal with uncertainty in learning schemes (for example [20, 27]). In many cases, however, one does not merely deal with existing uncertainty, but can sometimes "shape" the uncertainty to meet one's needs. This is often the case when several sensors share a common resource. For example, mobile applications use sensors that share power, CPU and bandwidth. Each of those resources can be divided between sensors according to the designer's wishes.
Another example is the design of a system with a fixed monetary budget, where each type of sensor incorporated can have a variety of qualities (with a price tag to match). Which sensor is "worth" investing in?

In this work we explore the following problem: several sensors that share a common resource acquire inputs that will be used for classification. What is the best way to divide the resource between the sensors? The resources allocated to each sensor affect the quality of the data it collects. We wish to maximize classification performance by correctly allocating the available resources. We emphasize that different resource allocation schemes may result in different optimal classifiers. This coupling increases the complexity of the problem.

We present a framework for "uncertainty management". This framework formulates the presented problem as an optimization problem. The direct formulation, however, is not easily solvable, so we derive equivalent solvable problems for various scenarios. We further bound the benefit that may arise from optimally allocating the resources. Based on these results we devise an algorithm for deriving the optimal resource allocation and present simulation results that show the potential benefits.

An application domain of such an approach is sensor management (see [6]), where mostly state-estimation problems have been investigated. Among the most studied applications is the real-time allocation of radar resources (for example [25]). However, other applications such as multi-sensor management [26] have also been studied. One more emerging application is the use of services like Mechanical Turk to extract features (for example, subjective features regarding an image or a text). The more averaging performed, the more accurate the features are.
However, not all features require the same accuracy.

In our model, collected features are corrupted by some disturbance. We explore two types of disturbances: stochastic and adversarial. A stochastic disturbance corresponds to common situations where features are corrupted by some, typically additive, noise. An adversarial disturbance is the worst possible deterministic, loss-maximizing noise, corresponding to "worst-case" scenarios.

We assume that special effort is made so that the training data are of the highest quality. During the test phase, however, resources are limited and should be allocated sparingly. This is often the case in applications where the number of samples to be classified is larger by several orders of magnitude than the training set size.

This work focuses on methods for controlling uncertainty in problems of binary classification with real-valued features. We consider support vector machine (SVM) style classification [7] due to its many beneficial properties (for example [22] and [28]). However, our method can be easily adapted to a wide variety of learning schemes.

We further explore a second scenario in which we assume that the training data are noisy while during the test phase data quality is superb. This can occur for a number of reasons. One example is some difficulty in gathering information in the learning phase which does not exist in the test phase. For example, patients may be more willing to undergo a CT scan when some serious illness is suspected, but convincing them to undergo one for the sake of experimentation requires the use of less radiation and therefore more noise [4]. Another example is when the learning data-set is "sanitized" by artificially adding noise in order to comply with privacy requirements.
Scenarios in which noise arises in both the training and testing phases can be accommodated by a combination of the methods presented.

In most of the paper we assume that the relation between the resources to be allocated and the disturbance is known. This assumption is quite reasonable; examples include the influence of sampling rate on temporal features, sampling time on spectral features, power on channel error rate in communication, and many more. However, since there are also cases where this relation is unknown, we introduce an algorithm that is completely data-driven. We do not assume Gaussian noise. However, in many areas of control and signal processing Gaussian noise is used to model sensor noise. For that reason the examples given consider Gaussian noise.

Related works. The problem of resource allocation between sensors has been investigated in several disciplines and from several perspectives. Most works come from an adaptive control perspective. Almost half a century ago, Meier [14] defined a setting where sensor parameters can be controlled. The control perspective has been studied extensively since, mostly for the special case of sensor switching, namely dynamically choosing one sensor from several available ones; see [2] and many others. In contrast with those works, we deal with a classification problem. The existence of a decision boundary makes the problem more involved and the control theory framework inadequate. In addition, this line of research generally assumes full knowledge of the underlying model, an assumption we would like to avoid.

In [3] the authors considered the problem of finding an optimal least-squares linear regressor as well as noise parameters of a static estimation problem when the underlying model is known. They explore the special case of estimating a scalar using square loss.
A mild extension to this special case is given in [19]. We generally follow the same approach, although our problem definition is more general. We also benefit from a later rich body of research on dealing with known uncertainty in learning scenarios (e.g., [20, 27]).

Classification problems in this context were considered by trying to maximize some measure of information in the data. In this setting one tries to optimize information measures such as sample conditional entropy or the Kullback-Leibler (KL) divergence (for example [8]). Such methods lead to an elegant solution but are heuristic and ignore knowledge about the desired utility function, so that some information "quantity" is optimized instead of the relevance to classification.

Resource-efficient learning has been a growing field of research in recent years. Most research is focused on dynamic acquisition of features, where different features are acquired for different samples. Multiple models were proposed, including trees [30], cascades [23] and Markov decision processes [5]. Our work explores the situation where features are acquired simultaneously and not sequentially. Some work has also considered introducing resource awareness into the classifier learning process. This is usually done using some greedy process where features are added to a classifier until the resource budget runs out [16, 29]. Similar methods which treat the learning scheme as a "black box" are wrapper feature selection methods [10]. Some work has explored similar issues when resources are scarce in the learning phase instead of the testing phase [12, 15]. While our work shares a similar motivation with those fields, our decision space is continuous and not discrete. We are inspired by problems in which sensors use a physical resource which needs to be allocated (time, power, bandwidth, etc.).
Existing methods cannot support such problems. In addition, the use of a continuous decision space circumvents the need to solve complex combinatorial problems and allows the use of various tools from optimization theory.

Another setting which has been explored is on-line learning in the presence of noise. An algorithm for on-line learning from noisy data is presented in [4]. We improve the algorithm presented there by allowing on-line control of feature quality and show that learning can be done more efficiently.

Contributions. The contributions of this paper are:

• We develop a framework for considering feature acquisition quality as a resource allocation problem in classification.
• We derive algorithms for optimal resource allocation and optimal classification for a variety of scenarios.
• We analyse the performance gain that can be achieved.
• We demonstrate the benefit that can arise from using those methods in simulation.

The structure of this paper is as follows: Section 2 introduces the framework of uncertainty management and provides a method for determining the optimal resource allocation for stochastic disturbances. Section 3 explores the case of adversarial noise. The results presented in those sections characterize the optimal allocation for a wide array of problems. Section 4 proposes an algorithm for the scenario where the disturbance characteristics are unknown and gives a theoretical guarantee on its regret. Section 5 explores the case where the training set is noisy and provides an efficient algorithm for the special case of a linear classifier with Gaussian noise and square loss. Section 6 presents simulations that demonstrate the feasibility of the results and Section 7 concludes with some final thoughts. Proofs for all of the theorems in this paper can be found in the appendix.
2 Uncertainty allocation: Stochastic disturbances

This section explores the case in which the disturbance is stochastic. We assume that $M$ samples $(x, y) \in (\mathbb{R}^d, \{-1, 1\})$ are generated i.i.d. from some joint distribution. Denote by $X_{ij}$ the $j$'th feature of sample $i$. Each $X_{ij}$ is measured with some disturbance $\delta_{ij}$. The disturbance is generated from a distribution with some vector of parameters (resources) $r = (r_1, \ldots, r_d)$. Denote the resulting vector of disturbances in sample $i$ as $\delta_i$. We follow the empirical risk minimization framework [24]. Let $L(h, r)$ be the cost incurred when the disturbance is generated using resource vector $r$. That is,

$$L(h, r) = \frac{1}{M} \sum_{i=1}^{M} E_{\delta}\big(l(h, X_i + \delta_i, Y_i)\big).$$

Our objective is to optimize both the resource vector $(r_1, \ldots, r_d)$ and the classifier $h(x)$ such that $L(h, r)$ is minimized.

For simplicity, we focus our attention on the special case of linear classifiers. However, the framework presented in this paper can be easily extended to other families of classifiers. Also, we assume that the noise is independent between samples, namely that each $\delta_{ij}$ is generated i.i.d. using a distribution with parameter $r_j$.

We note that $L(h, r)$ can also be written as $L(h, r) = \tilde{L}(h, \sigma(h, r))$ where $\sigma(h, r) \in \mathbb{R}$. The variable $\sigma(h, r)$ is a measure of the noise influence on the cost function. For example, for linear classifiers it is often the standard deviation of the noise along the axis perpendicular to the decision boundary. This is helpful since many loss functions may be defined this way. We give details of such an example below (Example 1). For a linear classifier we define

$$L(w, b, r) = \frac{1}{M} \sum_{i=1}^{M} E_{\delta}\big(l(Y_i, w^\top (X_i + \delta_i) + b)\big).$$

Assume that $\sigma(\cdot, \cdot, r)$ is a convex function in $r$, positive and strictly decreasing in each element for $r_j > 0$.
Also, assume that $\tilde{L}(w, b, \sigma)$ is strictly increasing and convex in $\sigma$. We refer to a loss function that satisfies these assumptions as an acceptable loss function. Those assumptions can be informally interpreted as assuming that more resources provide better accuracy and that increasing performance provides diminishing returns. The problem can now be stated as:

$$\min_{r, w, b} \; L(w, b, r) \triangleq \tilde{L}(w, b, \sigma(w, b, r)) \quad \text{s.t.} \quad \sum_{i=1}^{d} r_i \le R, \quad r_j \ge 0 \;\; \forall j. \tag{1}$$

Example 1. Consider the case in which $\delta_{ij}$ is Gaussian with zero mean and standard deviation $\sigma_j(r_j)$, where $\sigma_j(r_j)$ is a convex strictly decreasing function. Assume also that $l(x, y, w, b) = l(w^\top x + b, y)$. In this case, $\sigma$ is the standard deviation of the distance from the decision boundary, namely,

$$\sigma(w, b, r) = \sqrt{\sum_{i=1}^{d} w_i^2 \sigma_i(r_i)^2}.$$

Now, there are two natural loss functions we can explore: hinge loss and square loss. For the hinge loss, $l(x, y, w, b) = \max(0, 1 - y(w^\top x + b))$. In such a case $L(w, b, r)$ can be calculated directly:

$$L(w, b, r) = \frac{1}{M} \sum_{i=1}^{M} \frac{1}{\sqrt{2\pi}\,\sigma(w, b, r)} \int_{-\infty}^{1} (1 - z)\, e^{-\frac{(Y_i(w^\top X_i + b) - z)^2}{2\sigma(w, b, r)^2}} \, dz.$$

For the square loss, $l(x, y, w, b) \triangleq (w^\top x + b - y)^2$. In this case a simple calculation shows that the overall loss is:

$$L(w, b, r) = \sigma(w, b, r)^2 + \frac{1}{M} \sum_{i=1}^{M} \big(Y_i - (w^\top X_i + b)\big)^2. \tag{2}$$

Similarly, one can use other loss functions and obtain numerical, if not exact, expressions.

The following theorem characterizes the optimal resource allocation for problem (1). According to Theorem 1, the resource allocation depends only on $\sigma(w, b, r)$, so one can derive the optimal resource allocation even without knowing $L(w, b, r)$. The proof of the theorem and all other proofs appear in the appendix.

Theorem 1. Suppose that $L(w, b, \sigma)$ is an acceptable loss function.
For the optimal solution $(w, b, r)$ of problem (1) there exists $\lambda > 0$ such that $\sum_{i=1}^{d} r_i = R$, and for every $i$ it holds that

$$\begin{cases} r_i = 0 & \text{if } -\dfrac{\partial \sigma}{\partial r_i}(w, 0) < \lambda, \\[6pt] -\dfrac{\partial \sigma}{\partial r_i} = \lambda & \text{else.} \end{cases} \tag{3}$$

Using Theorem 1 and a greedy search over $\lambda$, problem (1) can be solved.

2.1 Examples

We now outline a few examples of optimal allocation of resources for different relations between the resources and the noise variance. While all of the examples relate to zero-mean Gaussian noise, Theorem 1 is general and can be applied to other distributions as long as their variance is finite.

Example 2: Standard deviation proportional to inverse of resource. We explore the scenario in which the standard deviation is proportional to the inverse of the resources allocated. Namely, $\sigma_i(r_i) = 1/r_i$. This is the case, for example, when the resource is the sampling rate and the features measured are the timing of various events. In this case:

$$r_i = \frac{R\, w_i^{2/3}}{\sum_{j=1}^{d} w_j^{2/3}}.$$

Example 3: Variance proportional to inverse of resources. A popular relation between resources and noise is when the variance is proportional to the inverse of the resources allocated. Namely, $\sigma_i(r_i) = 1/\sqrt{r_i}$. This is the case in many situations, including power in active sensors, duration of sampling for spectral features, and number of measurements taken when averaging (for example, if features are extracted using Mechanical Turk). In this case, the optimal allocation can be easily computed to be:

$$\hat{r}_i = \frac{R\,|w_i|}{|w|_1}. \tag{4}$$

Corollary 1. In the case of square loss and uniform allocation of resources $r_i = R/d$, it follows that $\sigma(r)^2 \propto |w|_2^2$.

Corollary 2. When applying optimal allocation of resources according to (4), it results that $\sigma(r)^2 = |w|_1^2 / R$.
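The closed forms in Examples 2 and 3 and in the two corollaries can be checked numerically. The following is a minimal sketch in plain Python, assuming a fixed weight vector `w` and budget `R` chosen only for illustration; the helper names (`allocate_inverse_std`, `allocate_inverse_var`, `noise_var`) are hypothetical and do not come from the paper.

```python
import math

def allocate_inverse_std(w, R):
    """Example 2: sigma_i(r_i) = 1/r_i gives r_i proportional to |w_i|^(2/3)."""
    p = [abs(wi) ** (2.0 / 3.0) for wi in w]
    total = sum(p)
    return [R * pi / total for pi in p]

def allocate_inverse_var(w, R):
    """Example 3, Eq. (4): sigma_i(r_i) = 1/sqrt(r_i) gives r_i = R|w_i|/|w|_1."""
    l1 = sum(abs(wi) for wi in w)
    return [R * abs(wi) / l1 for wi in w]

def noise_var(w, r, sigma):
    """sigma(w, r)^2 = sum_i w_i^2 sigma_i(r_i)^2.

    Features with zero weight contribute no noise and are skipped, so a
    zero allocation to them never triggers a division by zero.
    """
    return sum(wi * wi * sigma(ri) ** 2 for wi, ri in zip(w, r) if wi != 0.0)

def inv_sqrt(ri):
    return 1.0 / math.sqrt(ri)

def inv(ri):
    return 1.0 / ri

# Illustrative values (assumptions, not taken from the paper).
w = [3.0, -1.0, 0.5, 0.0]
R = 10.0
d = len(w)

r_unif = [R / d] * d
r_opt = allocate_inverse_var(w, R)
r_opt_std = allocate_inverse_std(w, R)

l1 = sum(abs(wi) for wi in w)
l2_sq = sum(wi * wi for wi in w)

var_unif = noise_var(w, r_unif, inv_sqrt)  # Corollary 1: d * |w|_2^2 / R
var_opt = noise_var(w, r_opt, inv_sqrt)    # Corollary 2: |w|_1^2 / R

print("uniform allocation noise variance:", var_unif)
print("optimal allocation noise variance:", var_opt)
```

The ratio `var_unif / var_opt` equals $d\,|w|_2^2 / |w|_1^2$, so the gain from non-uniform allocation grows as the weight vector becomes sparser.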
Interestingly, the optimization problem derived for square loss (2) with uniform allocation of resources is equivalent to the optimization problem derived when performing ridge regression. Similarly, using optimal allocation of resources is similar to performing lasso regularization. This supports claims that lasso regularization produces classifiers which are more robust to noise than other regularization techniques [27].

Since for the square loss optimizing (2) is equivalent to performing lasso, one can use complexity bounds derived for this case. It is known that a bound on the error resulting from lasso regularization $|w|_1 < B$ is increasing in $B$ [9]. Since $B$ is decreasing with $1/R$, it is increasing with $R$. This surprisingly implies that fewer resources require fewer examples to learn. This can be explained in the following manner: with fewer resources there is more noise in the decision-making phase, and the larger the noise, the less impact small changes in the classifier make (in the limit, there are no resources and therefore the noise is infinite and there is nothing to learn).

Example 4: Quantization noise. It is known that rounding quantization noise can be treated as Gaussian with a standard deviation of $\frac{1}{\sqrt{12}}$ LSB, where the LSB is the accuracy of the least significant bit [21]. Consider a scenario in which we would like to keep the number of bits used to represent all features under some threshold $R$. We will first disregard the fact that $r$ must be an integer and derive the solution for $\sigma_i(r) = 2^{-r_i}$ with $r_i \ge 1$ for all $i$. The solution of (1) for any fixed $w$ is

$$r_i = \begin{cases} 1 & \log|w_i| < \lambda, \\[4pt] 1 + \log|w_i| + \dfrac{1}{|C|}\Big(R - d - \sum_{j \in C} \log|w_j|\Big) & \log|w_i| \ge \lambda, \end{cases}$$

$$\lambda = \frac{1}{|C|}\Big(\sum_{i \in C} \log|w_i| - R + d\Big), \qquad C = \{\, i \mid \log|w_i| \ge \lambda \,\}.$$

Notice that we still need to transform $r_i$ into integers.
This can be done by "searching" in the vicinity of the optimal vector $r$.

2.2 Performance analysis

To gain some insight about the expected benefit of using this method we explore the special case of square loss with $\sigma_i(r_i) = 1/\sqrt{r_i}$. Observe that $L$ is decreasing in $R$. From Corollaries 1 and 2 we know that finding an optimal $w$ for uniform allocation is equivalent to performing ridge regression, while optimizing (2) is equivalent to performing lasso. We ask the following question: for the same expected loss, how many resources can we save by using the method presented? In order to answer this question we start by fixing $w$ and analyzing the expected loss for different resource allocations.

For every admissible $(w, b)$ denote by $R_{unif}(w, l)$ the resource budget for which $L(w, b, r_{unif}) = l$ when $r_{unif} = (R/d, \ldots, R/d)$. Also, denote by $R_{opt}(w, l)$ the resource budget for which $L(w, b, r_{opt}(w)) = l$ when $r_{opt}(w) = (R|w_1|/|w|_1, \ldots, R|w_d|/|w|_1)$. The following result bounds the ratio between the resources required for achieving the same loss.

Theorem 2. For every $w$ and for $l(x, y, w, b) = (w^\top x + b - y)^2$ it holds that

$$\frac{R_{unif}(w, l)}{R_{opt}(w, l)} = \frac{d\,|w|_2^2}{|w|_1^2}.$$

The proof can be found in the appendix. Denote by $w_{opt}$ the optimal classifier when resources are allocated optimally and by $w_{unif}$ the optimal classifier when resources are allocated uniformly. The next corollary follows directly from Theorem 2; it bounds the total benefit that can arise from the joint optimization of both resource allocation and classifier. It holds since $L(w_{unif}, r_{unif}) \le L(w_{opt}, r_{unif})$ and $L(w_{opt}, r_{opt}(w_{opt})) \le L(w_{unif}, r_{opt}(w_{unif}))$.

Corollary 3.
For every $w$ and for $l(x, y, w, b) = (w^\top x + b - y)^2$ it holds that

$$\frac{d\,|w_{unif}|_2^2}{|w_{unif}|_1^2} \le \frac{R_{unif}(w_{unif}, l)}{R_{opt}(w_{opt}, l)} \le \frac{d\,|w_{opt}|_2^2}{|w_{opt}|_1^2}.$$

It is clear from Corollary 3 that in cases where some features hold little information (small coefficients in the classifier) the benefit of optimized resource allocation can be very large. It should be noted that in extreme cases this is equivalent to using feature selection (meaning, choosing which features should be allocated zero resources). However, in many cases, even when considering only relevant features, the variance of their influence is significant. In such cases our method provides considerable benefit.

3 Adversarial disturbance

We now consider the case where the disturbance is adversarial. Several models for adversarial disturbance have been considered in the literature; we adopt the model from [27]. Formally, consider some samples $\{(X_i, Y_i)\}_{i=1}^{M}$ where $X_i \in \chi \subset \mathbb{R}^d$ and $Y_i \in \{-1, 1\}$. We only have access to some corrupted version of this set, $\{(X_i + \delta_i, Y_i)\}_{i=1}^{M}$. The disturbances $\delta_i$ are determined by an adversary; however, the adversary can only affect samples in a certain way. Formally, the vector $\delta = (\delta_1, \ldots, \delta_M)$ is in a set defined by

$$\mathcal{N}(\mathcal{N}_0) \triangleq \Big\{ (\alpha_1 \delta_1, \ldots, \alpha_M \delta_M) \;\Big|\; \delta_i \in \mathcal{N}_0 \text{ for } i = 1, \ldots, M, \; \sum_{j=1}^{M} \alpha_j = 1 \Big\},$$

where $\mathcal{N}_0$ is some symmetric uncertainty set that contains the origin. In our setting, we wish to optimize both the classifier parameters $w, b$ and the shape of $\mathcal{N}_0$ under the constraint of available power (or budget) for the adversary. The main difference here from other works (see [27] and follow-ups) is that we can optimize over $\mathcal{N}_0$ out of a family of sets (a set of sets). Such a family can be, for example, the set of ellipsoid sets that maintain some constant fixed resource budget:
$$\mathcal{N}_{set} = \Big\{ \mathcal{N}_0 = \Big\{ x \;\Big|\; \sum_{i=1}^{d} \Big(\frac{x_i}{\sigma_i(r_i)}\Big)^2 \le 1 \Big\} \;\Big|\; \sum_{i=1}^{d} r_i = R \Big\}.$$

Formally, the problem is optimizing

$$\inf_{\mathcal{N}_0 \in \mathcal{N}_{set}} \; \sup_{\delta \in \mathcal{N}(\mathcal{N}_0)} \; \min_{w, b} \; L(w, b, X + \delta, Y) \tag{5}$$

for some $\mathcal{N}_{set}$ that defines the problem. We will focus our attention on the hinge loss, $L \triangleq \sum_{i=1}^{M} \max(0, 1 - y_i(\langle w, X_i \rangle + b))$. For hinge loss the following result is given in [27]:

Lemma 1. (Xu et al. 2009 [27]) Assume $\{X_i, Y_i\}_{i=1}^{M}$ are non-separable. Then the following min-max problem

$$\min_{w, b} \; \sup_{\delta \in \mathcal{N}} \; \sum_{i=1}^{M} \max(0, 1 - y_i(\langle w, X_i \rangle + b))$$

is equivalent to the following optimization problem

$$\min_{w, b, \xi} \; \sup_{\delta \in \mathcal{N}_0} (w^\top \delta) + \sum_{i=1}^{M} \xi_i \quad \text{s.t.} \quad \xi_i \ge 1 - y_i(w^\top x_i + b), \quad \xi_i \ge 0, \quad i = 1, \ldots, M.$$

We use this result in order to derive the following theorem:

Theorem 3. Consider the solution $(\hat{\mathcal{N}}_0, \hat{w}, \hat{b})$ for the problem

$$\inf_{\mathcal{N}_0 \in \mathcal{N}_{set}} \; \min_{w, b} \; \sup_{(\delta_1, \ldots, \delta_M) \in \mathcal{N}} \; L(w, b, X + \delta, Y). \tag{6}$$

Then the solution satisfies

$$\hat{\mathcal{N}}_0 \in \arg\min_{\mathcal{N}_0 \in \mathcal{N}_{set}} \; \sup_{\delta \in \mathcal{N}_0} (w^\top \delta).$$

Theorem 3 is analogous to Theorem 1 and allows one to optimize resource allocation in the adversarial setting.

Example 4. Gaussian noise is a popular modelling choice in many domains. We wish to find some constraint which will create in the adversarial setting an effect that resembles Gaussian noise. For this purpose we use an ellipsoid uncertainty set. Instead of assuming Gaussian noise we bound the uncertainty to a fixed width of standard deviations. Consider the model presented in [27] with an ellipsoid uncertainty set, namely $\mathcal{N}_0 = \{ x \mid \sum_{i=1}^{d} (x_i / \sigma_i(r_i))^2 \le 1 \}$. The function $\sigma_i(r_i)$ can be any of the former examples. Now, under the non-separability assumption, the solution of the problem

$$\min_{r} \; \min_{w, b} \; \sup_{(\delta_1, \ldots, \delta_M) \in \mathcal{N}(r)} \; \sum_{i=1}^{M} \max\big(1 - y_i(w^\top (x_i + \delta_i) + b),\, 0\big) \quad \text{s.t.} \quad \sum_{i=1}^{d} r_i = R$$

satisfies

$$w_i^2\, \sigma_i(r_i)\, \frac{d\sigma_i(r_i)}{dr_i} = \lambda, \qquad \sum_{i=1}^{d} r_i = R.$$
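Theorem 3 reduces the adversarial allocation problem to minimizing $\sup_{\delta \in \mathcal{N}_0} w^\top \delta$. For an axis-aligned ellipsoid this supremum has the closed form $\sqrt{\sum_i w_i^2 \sigma_i^2}$, a standard consequence of the Cauchy-Schwarz inequality. The sketch below checks that identity numerically; the function names and the numbers are illustrative assumptions, and the allocation used is the one from Eq. (4) with $\sigma_i(r_i) = 1/\sqrt{r_i}$.

```python
import math
import random

def ellipsoid_support(w, sigma):
    """sup of w^T delta over {delta : sum_i (delta_i / sigma_i)^2 <= 1}."""
    return math.sqrt(sum((wi * si) ** 2 for wi, si in zip(w, sigma)))

def worst_case_delta(w, sigma):
    """A maximizer: delta_i = w_i sigma_i^2 / sqrt(sum_j w_j^2 sigma_j^2)."""
    s = ellipsoid_support(w, sigma)
    return [wi * si * si / s for wi, si in zip(w, sigma)]

def random_point_in_ellipsoid(sigma, rng):
    """Scale a random point of the unit ball onto the ellipsoid axes."""
    u = [rng.gauss(0.0, 1.0) for _ in sigma]
    norm = math.sqrt(sum(ui * ui for ui in u))
    radius = rng.random()
    return [si * radius * ui / norm for si, ui in zip(sigma, u)]

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

# Illustrative values (assumptions, not taken from the paper).
w = [2.0, -1.0, 0.5]
R = 6.0
l1 = sum(abs(wi) for wi in w)
r = [R * abs(wi) / l1 for wi in w]          # allocation of Eq. (4)
sigma = [1.0 / math.sqrt(ri) for ri in r]   # sigma_i(r_i) = 1/sqrt(r_i)

support = ellipsoid_support(w, sigma)
delta_star = worst_case_delta(w, sigma)
attained = dot(w, delta_star)

# No sampled point of the ellipsoid should beat the closed form.
rng = random.Random(0)
best_sampled = max(
    dot(w, random_point_in_ellipsoid(sigma, rng)) for _ in range(2000)
)

print("closed-form supremum:", support)
print("value at maximizer:  ", attained)
print("best of 2000 samples:", best_sampled)
```

With this allocation the supremum equals $|w|_1 / \sqrt{R}$, which is why the adversarial penalty behaves like an $\ell_1$ regularizer.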
Fixing $\sigma_i$ allows the derivation of $w, b$ by solving the conic optimization problem

$$\min_{w, b, \xi} \; \sqrt{\sum_{i=1}^{d} w_i^2 \sigma_i^2} + \sum_{i=1}^{M} \xi_i \quad \text{s.t.} \quad \xi_i \ge 1 - y_i(w^\top x_i + b), \quad \xi_i \ge 0, \quad i = 1, \ldots, M.$$

Theorems 1 and 3 allow one to solve either (1) or (5) using alternating optimization. Moreover, in the special case where $\sigma_i(r_i) = 1/\sqrt{r_i}$ the optimal allocation of resources is analogous to lasso regression:

$$\sqrt{\sum_{i=1}^{d} w_i^2 \sigma_i^2} = \frac{|w|_1}{\sqrt{R}}, \qquad r_i = \frac{R\,|w_i|}{|w|_1} \quad \text{for } i = 1, 2, \ldots, d. \tag{7}$$

4 Unknown stochastic disturbance

In this section we consider the case of a stochastic disturbance that is unknown. We wish to devise a data-driven algorithm that finds the optimal resource allocation even when the disturbance is initially unknown. We use stochastic gradient descent in order to minimize the cumulative loss function. In this section we explore the special case of square loss with some assumptions on the structure of the disturbance. We derive a concrete algorithm and a corresponding bound for this special case. It is possible to easily extend this algorithm to various other scenarios. We make the following assumptions on the structure of the disturbance:

• The disturbance and data points are independent ($X_i$ is independent of $\delta_i$).
• The disturbance is independent between features.
• The distribution of the disturbance in each feature is symmetric.
• The second moment of the disturbance, $\sigma_i^2(r_i)$, is convex in $r_i$.

The last assumption is reasonable since we expect diminishing returns from increasing allocated resources. We use ridge regularization and bound the possible set of classifiers by $\|w\|_2 \le B_W$. The optimization problem can be stated as:

\begin{align}
\min_{r, w} \; & \sum_{t=1}^{T} l(w, r, x_t, y_t) \triangleq \sum_{t=1}^{T} \Big[ \sum_{i=1}^{d} w_i^2 \sigma_i^2(r_i) + (w^\top x_t - y_t)^2 \Big] \notag \\
\text{s.t.} \; & \sum_{i=1}^{d} r_i = R \tag{8} \\
& r_j \ge 0 \;\; \forall j \notag \\
& \|w\|_2 \le B_W. \tag{9}
\end{align}

The gradient is given by:

$$\frac{\partial l}{\partial w_i} = 2 w_i \sigma_i^2(r_i) + 2 x_i (w^\top x - y), \qquad \frac{\partial l}{\partial r_i} = w_i^2 \frac{\partial \sigma_i^2(r_i)}{\partial r_i}.$$

Since $\frac{\partial \sigma_i^2(r_i)}{\partial r_i}$ is unknown, we approximate it using the Kiefer-Wolfowitz procedure [11]. This results in

$$\frac{\partial \sigma_i^2(r_i)}{\partial r_i} \approx \frac{\sigma_i^2(r_i + \epsilon) - \sigma_i^2(r_i)}{\epsilon}.$$

We denote by $\Pi(w, r)$ the projection of classifier $w$ and resource vector $r$ onto the set of feasible solutions $\mathcal{N} \triangleq \{ |w|_2 < B_W, \; \sum_i r_i = R, \; r_i > 0 \}$. We further denote the maximum distance between two vectors in this set by $B = 2\sqrt{R^2 + B_W^2}$.

It is now possible to use standard stochastic gradient descent. Following the Kiefer-Wolfowitz procedure, at each step we measure two data points with two slightly different resource allocations. Then we estimate the gradient and update the classifier and resource allocation accordingly. Finally, we project the solution onto the space of feasible solutions and continue to the next step. The resulting algorithm is presented as Algorithm 1.

Algorithm 1 Learning when the disturbance is unknown
  Parameters: $B_W$, $R$, $\epsilon$
  initialize $w^1 = 0$, $r_i^1 = R/d$
  for $t = 1, 2, 3, \ldots, T$ do
    receive $\hat{x}^{t,1}, y^t$ using resource distribution $r^t$
    receive $\hat{x}^{t,2}, y^t$ using resource distribution $r^t + \epsilon$
    $\eta = 1/\sqrt{t}$
    for $i = 1, \ldots, d$ do
      $w'_i = w_i^t - \eta \big( (\langle w^t, \hat{x}^{t,1} \rangle - y^t)\, \hat{x}_i^{t,1} \big)$
      $r'_i = r_i^t - \eta\, (w_i^t)^2\, \big[ (\hat{x}_i^{t,2})^2 - (\hat{x}_i^{t,1})^2 \big] / \epsilon$
    end for
    $(w^{t+1}, r^{t+1}) = \Pi(w', r')$, where $\Pi$ is the projection onto the space of feasible solutions
  end for

It is easy to verify that the estimated gradient is indeed unbiased. Notice that, unlike in standard on-line learning, the measurements $x_n$ are not i.i.d., since choosing $r$ creates a coupling between measurements. However, the "noise" of the estimated gradient is a martingale difference sequence and therefore stochastic estimation theory can be easily applied.
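The steps of Algorithm 1 can be sketched in a few lines of plain Python. This is a hedged illustration and not the authors' implementation: the noise model `noise_std` (with a small floor to avoid division by zero), the synthetic data stream, and all function names are assumptions made for the example, and for simplicity the sketch acquires the same underlying sample twice at each step. The projection $\Pi$ is split into a Euclidean-ball projection for $w$ and the standard sort-based projection onto the scaled simplex for $r$.

```python
import math
import random

def project_simplex(v, R):
    """Euclidean projection of v onto {r : r_i >= 0, sum_i r_i = R}."""
    u = sorted(v, reverse=True)
    cumsum, theta = 0.0, 0.0
    for k, uk in enumerate(u, start=1):
        cumsum += uk
        candidate = (cumsum - R) / k
        if uk - candidate > 0:
            theta = candidate  # the last k that passes defines the threshold
    return [max(vi - theta, 0.0) for vi in v]

def project_l2_ball(w, B):
    """Euclidean projection of w onto {w : ||w||_2 <= B}."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return list(w) if norm <= B else [wi * B / norm for wi in w]

def noise_std(r_i):
    """Assumed relation sigma_i(r_i) = 1/sqrt(r_i), floored for safety."""
    return 1.0 / math.sqrt(max(r_i, 1e-3))

def measure(x, r, rng):
    """Noisy acquisition of sample x under resource vector r."""
    return [xi + rng.gauss(0.0, noise_std(ri)) for xi, ri in zip(x, r)]

def algorithm1(stream, B_W, R, eps, rng):
    """Projected SGD with a finite-difference estimate of d(sigma^2)/dr."""
    d = len(stream[0][0])
    w, r = [0.0] * d, [R / d] * d
    for t, (x, y) in enumerate(stream, start=1):
        x1 = measure(x, r, rng)                       # resources r^t
        x2 = measure(x, [ri + eps for ri in r], rng)  # resources r^t + eps
        eta = 1.0 / math.sqrt(t)
        err = sum(wi * xi for wi, xi in zip(w, x1)) - y
        w_new = [wi - eta * err * xi for wi, xi in zip(w, x1)]
        r_new = [ri - eta * wi * wi * (b * b - a * a) / eps
                 for ri, wi, a, b in zip(r, w, x1, x2)]
        w, r = project_l2_ball(w_new, B_W), project_simplex(r_new, R)
    return w, r

# Hypothetical data: noiseless linear targets with one irrelevant feature.
rng = random.Random(0)
w_true = [1.0, 0.2, 0.0]
stream = []
for _ in range(200):
    x = [rng.uniform(-1.0, 1.0) for _ in w_true]
    stream.append((x, sum(wi * xi for wi, xi in zip(w_true, x))))

w_hat, r_hat = algorithm1(stream, B_W=2.0, R=3.0, eps=0.1, rng=rng)
print("learned weights:   ", w_hat)
print("learned allocation:", r_hat)
```

Because each step ends with a projection, the iterates stay feasible whatever the size of the noisy gradient estimate; a short run like this one only illustrates the mechanics and says nothing about convergence speed.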
We proceed to bound the regret which arises from Algorithm 1. Since we use the Kiefer-Wolfowitz procedure, the regret must be measured in comparison to the biased functions created by the procedure. Namely,

$$\tilde{\sigma}_i^2(r) = \int_0^r \frac{\sigma_i^2(s + \epsilon) - \sigma_i^2(s)}{\epsilon}\, ds \qquad \text{and} \qquad \tilde{l}(w, r, x, y) \triangleq \sum_{i=1}^{d} w_i^2 \tilde{\sigma}_i^2(r_i) + (w^\top x - y)^2.$$

When $\epsilon$ is small enough, $\tilde{\sigma}_i^2(r)$ is approximately $\sigma_i^2(r)$. It is now possible to derive a bound on the regret.

Theorem 4. If $\tilde{l}(w, r, x, y)$ is jointly convex in $w, r$ for every $x, y$, $E(x) = 0$, $E(\|x\|_2^2) = 1$, $E(\|x\|_2^4) = B_x^4$, $E((\hat{x}_i - x_i)^2) \le B_\delta^2$ and $E((\hat{x}_i - x_i)^4) \le B_\delta^4$, then

$$E\Big( \sum_{t=1}^{T} (w^t x^{t,1} - y^t)^2 \Big) - \min_{(w, r) \in \mathcal{N}} \sum_{t=1}^{T} \tilde{l}(w, r, x^t, y^t) \le \frac{B\sqrt{T}}{2} + \Big( \sqrt{T} - \frac{1}{2} \Big) \|\nabla l\|^2,$$

where

$$\begin{aligned} B &= 2\sqrt{R^2 + B_W^2}, \\ \|\nabla l\|^2 &= 2 B_W^2 B_{\tilde{x}}^4 + 2 B_{\tilde{x}}^2 + \frac{2 B_{\tilde{x}}^4 B_W^4}{\epsilon^2}, \\ B_{\tilde{x}}^4 &= B_x^4 + 6 B_x^2 B_\delta^2 + B_\delta^4, \\ B_{\tilde{x}}^2 &= B_x^2 + B_\delta^2. \end{aligned} \tag{10}$$

The proof follows similar lines to that used to derive a bound in [4] and can be found in the appendix. Theorem 4 implies that the optimal classifier and optimal resource allocation can be learned with sub-linear regret. Note that decreasing $\epsilon$, which is the step size used to estimate the gradient, will increase learning time. This is because we assume that noise is independent between samples. In this setting decreasing $\epsilon$ increases the noise level in estimating the gradient. Choosing a large $\epsilon$, however, can result in a large bias from the optimal solution. The next two remarks show that assuming some dependence between samples may reduce learning time significantly.

Remark 1. The term $\frac{2 B_{\tilde{x}}^4 B_W^4}{\epsilon^2}$ can be quite large. To reduce the variance in the learning process it is possible, in some cases, to sample the same data point multiple times during training.
In such cases it is possible to derive a much better bound in which

$$\|\nabla l\|^2 = 2 B_W^2 B_{\tilde{x}}^4 + 2 B_{\tilde{x}}^2 + \frac{2 B_\delta^4 B_W^4}{\epsilon^2}.$$

Remark 2. In many cases the measurement noise of the same sample with different resources is correlated. This is, for example, the case when the resource is CPU time and the disturbance is caused by processing only part of the data. Two acquisitions of the same sample share a vast amount of common data. In such cases the difference between measurements with $r + \epsilon$ and $r$ can be bounded much more tightly than the bound used in Theorem 4. If

$$\frac{(\hat{x}_i^{t,2} - x_i^t)^2 - (\hat{x}_i^{t,1} - x_i^t)^2}{\epsilon} \le B_{grad}$$

then $\|\nabla l\|^2$ in Theorem 4 can be rewritten as

$$\|\nabla l\|^2 = 2 B_W^2 B_{\tilde{x}}^4 + 2 B_{\tilde{x}}^2 + 2 B_W^4 B_{grad}^2.$$

Algorithm 2 Efficient learning from noisy data
  Parameters: $\eta$, $B_W$, $R$, $r(w)$
  initialize $w^1 = 0$, $r_i^1 = R/d$
  for $t = 1, 2, 3, \ldots, T$ do
    receive $x^t, y^t$ using resource distribution $r^t$
    $\nabla^t = 2(\langle w^t, x^t \rangle - y^t)\, x^t - \Sigma(r^t)\, w^t$
    $w' = w^t - \eta \nabla^t$
    $w^{t+1} = \arg\min_{|u|_1}$ [...]

[...] $- y^t)^2 \big) - \min_{|w|_1}$ [...] $- y^t)^2 \le \frac{1}{2}(G + 1) B_W \sqrt{T}$,

where

$$G = \frac{32 B_W^2 d^3}{R^2} + \frac{98 B_W^2 d^2}{R^2} + \frac{32 B_W^2 d^2}{R} + \frac{32 B_W^2 d}{R} + \frac{16 d^2}{R} + 32 B_W^2 B_x^4 + 16 = O(B_W^2 d^3).$$

However, in case resources are allocated efficiently, the corresponding bound is given by the following theorem.

Theorem 6. Assume $r_i^t(w) = \frac{R}{2d} + \frac{R\,|w_i^t|}{2\,|w^t|_1}$ and $\eta = B_W / \sqrt{T}$. Then

$$E\Big( \sum_{t=1}^{T} (w^t x - y)^2 - \min_{|w|_1} [\ldots] - y)^2 \Big) \le \frac{1}{2}(G + 1) B_W \sqrt{T},$$

where

$$G = \frac{64 d^2}{R^2} B_W^2 + \frac{64 d^2}{R} B_W^2 + \frac{32 d^2}{R} + \frac{392 d}{R^2} B_W^2 + \frac{64 B_W^2}{R} + 32 B_W^2 B_x^4 + 16 = O(B_W^2 d^2).$$

Notice that efficient learning requires some balance between two terms. The term $\frac{R}{2d}$ is required for estimating $E(x)$ while the term $\frac{R\,|w_i^t|}{2\,|w^t|_1}$ is required for estimating $E(w^\top x)$. We have created $r(w)$ by balancing those two terms evenly.
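The allocation rule of Theorem 6 mixes a uniform share with a share proportional to $|w_i|$. A minimal sketch follows; the function name `mixed_allocation` and the `mix` parameter are hypothetical additions for illustration, with `mix = 0.5` reproducing the even balance used in the theorem, and the uniform fallback for $w = 0$ matching the initialization $r_i^1 = R/d$.

```python
def mixed_allocation(w, R, mix=0.5):
    """r_i = (1 - mix) * R/d + mix * R|w_i|/|w|_1.

    mix = 0.5 gives r_i = R/(2d) + R|w_i|/(2|w|_1) as in Theorem 6.
    When w is all zeros the weighted share is undefined, so the rule
    falls back to the uniform allocation R/d.
    """
    d = len(w)
    l1 = sum(abs(wi) for wi in w)
    if l1 == 0.0:
        return [R / d] * d
    return [(1.0 - mix) * R / d + mix * R * abs(wi) / l1 for wi in w]

# Illustrative values (assumptions, not taken from the paper).
example = mixed_allocation([3.0, -1.0, 0.0, 0.0], 8.0)
print(example)  # -> [4.0, 2.0, 1.0, 1.0]
```

Every feature keeps at least $R/(2d)$ under the even split, so with $\sigma_i^2 = 1/r_i$ the per-feature noise variance never exceeds $2d/R$, even for features whose current weight is zero.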
It is possible that a different balance will provide better results.

Remark 3. When $w$ is dense, the efficient allocation is almost uniform. Therefore, the regret of the two resource allocation schemes should be similar. This is not evident from the bounds provided. The reason is that the proof of Theorem 5 uses the fact that in the worst case $|w|_2 = |w|_1$, which is loose when $w$ is dense. Using the tighter bound $|w|_2 \cong \frac{|w|_1}{\sqrt{d}} \le \frac{B_W}{\sqrt{d}}$ results in a bound of order $O(B_W^2 d^2)$ for the uniform allocation case, similar to that obtained for efficient allocation of resources.

6 Simulation study

We tested the method on three data sets: one synthetic and two real-life problems from the UCI repository. Noise was added to all data artificially according to the relation $\sigma_i = \frac{1}{\sqrt{r_i}}$. For all data sets, measurement noise was created using the normal distribution with parameters $(0, \sigma_i/3)$ and was added to the test samples. We applied the algorithm from the previous section to derive both an optimal classifier and an optimal resource allocation. The result given in Eq. (7) was used to derive the optimal resource allocation for a fixed classifier. We used the hinge loss as the loss function to be minimized and approximated $L(w, b, r)$ by using an adversarial ellipsoid uncertainty set. Optimization was performed using the commercially available Mosek solver [1].

Synthetic problem. We generated 240000 samples uniformly distributed in a box in $\mathbb{R}^3$. We used $z = x + 7y$ as the divider and created a data-set with labels that obey $\operatorname{sgn}(z - x - 7y + N)$, where $N$ is a small Gaussian noise that we added in order to make the data-set non-separable. A random subset of 10000 samples was used for learning, while performance was measured on the rest. Tenfold cross-validation was performed. The result for different $R$ values is depicted in Figure 1. The method results in about a 50% reduction in the resources required for meeting the same error rate. In this case, the optimal classifier is similar to the classifier derived without noise, and the benefit arises mainly from the redistribution of noise.

[Plot: error rate vs. total resources for the synthetic data; curves: zero-noise classifier with naive noise, optimal classifier with optimal noise.]
Figure 1: Error rate for synthetic data.

We wish to confirm the result of Theorem 2 using similar synthetic data-sets. For this purpose, we generated nine data-sets, each using as a divider $z = x + ay$ for $a = 1, 2, \ldots, 9$. For each data-set we extracted the resources needed for achieving an error rate of 0.15. We calculated the ratio between the total resources required when resources are allocated optimally and those required when resources are allocated uniformly. When $a = 1$ the optimal allocation is uniform and we expect no benefit (the ratio equals one). As we increase $a$, more resources should be allocated to $y$ and therefore the ratio improves (decreases). Figure 2 shows the resulting graph compared with the theoretical result of Theorem 2 (using the optimal classifier). It can be seen that the simulation result is almost identical to the theoretical one, even though, contrary to the assumptions of Theorem 2, we are optimizing the hinge loss and measuring error rate. Observe in Figure 2 that considerable benefits arise even when the differentiation between features is rather small.

[Plot: ratio of resources needed with optimal allocation vs. slope of the dividing hyperplane; curves: theory, actual.]
Figure 2: Ratio of resources needed for the same error level, synthetic data.
Lower is better.

[Plot: error rate vs. total resources $R$ for the skin segmentation data-set; curves: zero-noise classifier with naive noise, optimal classifier with optimal noise.]
Figure 3: Error rate for skin segmentation data-set.

Real data sets. Next, we tested the method on real-life databases from the UCI repository. We started with the skin segmentation data set [17], where RGB pixels are classified as skin or non-skin. Noise was added artificially to each pixel. From the 245057 available samples, a random subset of 10000 was used for learning, while the rest was used to estimate performance. Tenfold cross-validation was performed. The results for different $R$ values can be seen in Figure 3. The method results in about a 30% reduction in resources.

We also tested the method on the breast cancer data set from the UCI repository [13]. This data-set contains 9 features that represent measurements from a biopsy, and each sample is classified as malignant or benign. The 683 samples were randomly divided: 2/3 of the data was used for training and the remaining 1/3 for testing. The results are depicted in Figure 4. The optimal classifier is different from the zero-noise classifier. In order to demonstrate what portion of the benefit arises from the resource allocation and what portion from the difference between the classifiers, we added a plot of the error rate of the zero-noise classifier when resources are allocated optimally. Most of the benefit comes from the correct allocation of resources.

[Plot: error rate vs. total resources $R$ for the breast cancer data-set; curves: zero-noise classifier with naive noise, optimal classifier with optimal noise, zero-noise classifier with optimal noise.]
Figure 4: Error rate for breast cancer data-set.
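The theoretical curve that the $z = x + ay$ experiment is compared against follows from the relation $R_{unif}/R_{opt} = d\,|w|_2^2/|w|_1^2$ derived in the proof of Theorem 2. The sketch below only evaluates that closed form; it does not rerun the simulation. The function name `resource_ratio` is hypothetical, and the weight vector $w = (1, a, -1)$ over the features $(x, y, z)$ is an assumption about how the divider maps to a linear classifier, so the printed values need not match the figure exactly.

```python
import numpy as np

def resource_ratio(w):
    """R_opt / R_unif = |w|_1^2 / (d * |w|_2^2), the inverse of Theorem 2's ratio."""
    w = np.asarray(w, dtype=float)
    return float(np.abs(w).sum() ** 2 / (w.size * np.sum(w ** 2)))

# Assumed weight vector for the divider z = x + a*y over features (x, y, z).
slopes = range(1, 10)
ratios = [resource_ratio([1.0, a, -1.0]) for a in slopes]

for a, rho in zip(slopes, ratios):
    print(f"a={a}: R_opt/R_unif = {rho:.3f}")
```

Under this assumption the ratio equals one at $a = 1$ (all weights have the same magnitude, so the optimal allocation is uniform) and decreases as $a$ grows.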
7 Conclusion

We presented a method for optimal resource allocation in classification problems, along with an analysis of the expected benefits from using this method. Our framework is general, and we specialized it for the important special case of linear classifiers with Gaussian noise or with certain adversarial disturbances.

The framework we presented opens up several directions for future research. First, a natural extension of our work is to consider non-linear classifiers. This can be easily done computationally using the "kernel trick". However, while the disturbance (stochastic or adversarial) has a comfortable shape in the input space, this does not necessarily happen in the feature space. This can probably be accommodated using the same techniques as [27] to obtain performance bounds.

Second, an expansion of the framework presented is the case where resources can be further divided between samples, such that "hard" to classify examples receive more resources. The key observation for this is the fact that allocation of resources between features is local in nature. The global cost function $L(h, r)$ can be replaced by $l(x, y, h, r)$, which allows deciding on the allocation of resources for each sample separately. The optimal allocation creates a function $l(x, y, h, R)$ that can be used in the method presented in [18] to produce an optimal allocation between samples.

Finally, the simulation results in this paper include only noise that was artificially generated. This is due to the complexity of creating a closed-loop system that controls the acquisition process. We believe that closing a complete feedback loop in applications such as sensor networks and radar will provide benefits similar to those presented, as long as the noise is appropriately modelled.

8 Appendix

Proof of Theorem 1

Proof.
We start by proving the following lemma:

Lemma 3. Let $L(w, b, \sigma)$ be an acceptable loss function. If $L(w, b, r)$ is twice differentiable in $r$, then it is convex in $r$.

Proof. Since $L$ is twice differentiable in $r$, we can calculate the Hessian

$$\frac{\partial^2 L}{\partial r_i\, \partial r_j} = \frac{\partial^2 L}{\partial \sigma^2} \frac{\partial \sigma}{\partial r_i} \frac{\partial \sigma}{\partial r_j} + \frac{\partial L}{\partial \sigma} \frac{\partial^2 \sigma}{\partial r_i\, \partial r_j} .$$

The first term is a positive semi-definite matrix multiplied by a positive factor (since $L$ is convex). The second term is the Hessian of $\sigma$, which is positive semi-definite (since $\sigma(r)$ is convex), multiplied by a positive factor (since $L$ is increasing). Therefore, the Hessian is positive semi-definite and $L$ is convex in $r$.

We now continue to prove the theorem by noting that problem (1) can be rewritten as

$$\min_{w,b}\Big(\min_{r} L(w, b, r)\Big) \quad \text{s.t.} \quad \sum_{i=1}^{d} r_i = R, \quad r_i > 0. \tag{11}$$

The inner optimization is convex; therefore necessary and sufficient conditions are given by the Karush-Kuhn-Tucker conditions

$$\frac{\partial L}{\partial r_i} = \frac{\partial L}{\partial \sigma} \frac{\partial \sigma}{\partial r_i} = -\lambda + \mu_i, \qquad \mu_i r_i = 0, \qquad \sum_{i=1}^{d} r_i = R, \qquad \mu_i \ge 0 .$$

Since $\frac{\partial L}{\partial \sigma}(r)$ is positive and the same for each $r_i$, we can denote $\tilde{\lambda} = \lambda \big(\frac{\partial L}{\partial \sigma}\big)^{-1}$ and obtain the result.

Proof of Theorem 2

Proof. From the definition of $R_{unif}(w, l)$ and $R_{opt}(w, l)$ it holds that $L(w, b, r_{unif}) = L(w, b, r_{opt}(w))$. Now,

$$\frac{d\,|w|_2^2}{R_{unif}} + E((wx - y)^2) = \frac{|w|_1^2}{R_{opt}} + E((wx - y)^2) .$$

Since the second term is the same on both sides of the equality, it is easily derived that

$$\frac{R_{unif}(w, l)}{R_{opt}(w, l)} = \frac{d\,|w|_2^2}{|w|_1^2} .$$

Proof of Theorem 3

Proof. Using Lemma 1, problem (6) turns into

$$\min_{\mathcal{N}_0}\ \min_{w,b,\xi}\ \sup_{\delta \in \mathcal{N}_0} (w^T \delta) + \sum_{i=1}^{M} \xi_i \quad \text{s.t.} \quad \xi_i \ge 1 - y_i(w^T x_i + b), \quad \xi_i \ge 0, \quad i = 1, \ldots, M. \tag{12}$$

Exchanging the order of the minimizations proves the theorem.

Proof of Theorem 4

Proof. We first cite a slight adaptation of Theorem 1 from [31] (a similar adaptation was made in [4]).

Lemma 4.
Assume $\max_{t=1,\ldots,T} E(\|\nabla l(w_t, r_t)\|^2) \le \|\nabla l\|^2$. Then the regret of Algorithm 1 satisfies

$$E\Big(\sum_{t=1}^{T} (w_t x - y)^2 - \min_{|w|_1 < B_W} \sum_{t=1}^{T} (\langle w, x\rangle - y)^2\Big) \le \frac{B\sqrt{T}}{2} + \Big(\sqrt{T} - \frac{1}{2}\Big)\|\nabla l\|^2 ,$$

where $B = 2\sqrt{R^2 + B_W^2}$.

It now only remains to prove that

$$\max_{t=1,\ldots,T} E(\|\nabla l(w_t, r_t)\|^2) \le 2B_W^2 B_{\tilde{x}}^4 + 2B_{\tilde{x}}^2 + \frac{2B_{\tilde{x}}^4 B_W^4}{\epsilon^2} .$$

Indeed,

$$\begin{aligned}
E(\|\nabla l(w_t, r_t)\|^2) &= E\Big[\|(\langle w, \tilde{x}\rangle - y)\,\tilde{x}\|^2 + \sum_{i=1}^{d} w_i^4\, \frac{\big((\hat{x}_i^{t,2})^2 - (\hat{x}_i^{t,1})^2\big)^2}{\epsilon^2}\Big] \\
&\le 2\|w\|^2 E(\|\tilde{x}\|^4) + 2E(y^2 \|\tilde{x}\|^2) + \frac{\|w\|^4}{\epsilon^2} \max_{i=1,\ldots,d} E\Big[\big((\hat{x}_i^{t,2})^2 - (\hat{x}_i^{t,1})^2\big)^2\Big] \\
&\le 2B_W^2 B_{\tilde{x}}^4 + 2B_{\tilde{x}}^2 + \frac{2B_{\tilde{x}}^4 B_W^4}{\epsilon^2} .
\end{aligned}$$

The first inequality results from the fact that $\|a + b\|^2 \le 2\|a\|^2 + 2\|b\|^2$. The second inequality holds since

$$E(\tilde{x}^4) \le B_x^4 + 6B_x^2 B_\delta^2 + B_\delta^4, \qquad E(\tilde{x}^2) \le B_x^2 + B_\delta^2, \qquad y^2 = 1 .$$

Proof of Theorem 5

Proof. Denote the noisy measurement $\tilde{x}$ as $x + N$, where $N$ is the noise vector. The following relations are obtained by assigning $r_i = \frac{R}{d}$ and $E\|N_i\|_2^2 = \frac{1}{r_i} = \frac{d}{R}$:

$$\|\Sigma_t w_t\|^2 = \sum_{i=1}^{d} \frac{w_i^2}{r_i^2} \le \frac{d^2}{R^2} B_W^2, \qquad E(\|\langle w_t, N\rangle\|_2^2) = \sum_{i=1}^{d} \frac{w_i^2}{r_i} \le \frac{d}{R} B_W^2, \qquad E(\|N\|_2^2) = \sum_{i=1}^{d} \frac{1}{r_i} \le \frac{d^2}{R} .$$

Also,

$$E(\|\langle w, N\rangle N\|^2) = E\Big[\Big(\sum_{i=1}^{d} w_i N_i\Big)^2 \|N\|_2^2\Big] = E\Big[\sum_{i,j,k} w_i w_j N_i N_j N_k^2\Big] .$$

Since each $N_i$ is a zero-mean Gaussian random variable, and $N_i$ and $N_j$ are independent for $i \ne j$, every expectation of an odd power of $N_i$ is 0. In addition, $E(N_i^4) = 3E^2(N_i^2)$. Now,

$$E(\|\langle w, N\rangle N\|^2) = \sum_{i=1}^{d} w_i^2\, E\Big(N_i^2 \sum_{k=1}^{d} N_k^2\Big) = \sum_{i=1}^{d} w_i^2 E(N_i^4) + \sum_{i \ne j} w_i^2 E(N_i^2 N_j^2) \le \frac{3d^2}{R^2} B_W^2 + \frac{d^3}{R^2} B_W^2 .$$
Now, using the fact that $\|a + b\|^2 \le 2\|a\|^2 + 2\|b\|^2$ at each stage,

$$\begin{aligned}
E(\|\nabla_t\|_2^2) &= E_t\,\|2(\langle w_t, \tilde{x}_t\rangle - y_t)\,\tilde{x}_t - \Sigma_t w_t\|_2^2 \\
&\le 8E(\|(\langle w_t, \tilde{x}_t\rangle - y_t)\,\tilde{x}_t\|^2) + 2\|\Sigma_t w_t\|^2 \\
&\le 16E(\|(\langle w_t, x_t\rangle + \langle w_t, N\rangle)(x_t + N)\|^2) + 16E(\|y_t (x_t + N)\|^2) + 2\|\Sigma_t w_t\|^2 \\
&\le 32E(\|\langle w_t, x_t\rangle x_t\|^2) + 32E(\|\langle w_t, N\rangle N\|^2) + 32E(\|\langle w_t, x_t\rangle N\|^2) + 32E(\|\langle w_t, N\rangle x_t\|^2) \\
&\quad + 16E(\|y_t x_t\|^2) + 16E(\|y_t N\|^2) + 2\|\Sigma_t w_t\|^2 \\
&\le \frac{32 B_W^2 d^3}{R^2} + \frac{98 B_W^2 d^2}{R^2} + \frac{32 B_W^2 d^2}{R} + \frac{32 B_W^2 d}{R} + \frac{16 d^2}{R} + 32 B_W^2 B_x^4 + 16 = G,
\end{aligned}$$

where the last inequality is due to the relations above.

Proof of Theorem 6

Proof. Now $r_i(w) = \frac{R}{2d} + \frac{R\,w_i}{2|w|_1}$. Therefore $r_i \ge \frac{R}{2d}$ and $r_i \ge \frac{R\,w_i}{2|w|_1}$. This results in $E\|N_i\|_2^2 \le \frac{2d}{R}$ and $E\|N_i\|_2^2 \le \frac{2|w|_1}{R\,w_i}$. Now,

$$\|\Sigma_t w_t\|^2 = \sum_{i=1}^{d} \frac{w_i^2}{r_i^2} \le \frac{4d}{R^2} B_W^2, \qquad E(\|\langle w_t, N\rangle\|_2^2) = \sum_{i=1}^{d} \frac{w_i^2}{r_i} \le \frac{2}{R} B_W^2, \qquad E(\|N\|_2^2) = \sum_{i=1}^{d} \frac{1}{r_i} \le \frac{2d^2}{R} .$$

In a similar fashion to the derivation in the proof of Theorem 5, it follows that

$$E(\|\langle w, N\rangle N\|^2) = \sum_{i=1}^{d} w_i^2 E(N_i^4) + \sum_{i \ne j} w_i^2 E(N_i^2 N_j^2) \le \frac{12 d}{R^2} B_W^2 + \frac{4 d^2}{R^2} B_W^2 .$$

The rest of the proof is similar to that of Theorem 5, using the above relations instead of the corresponding relations in Theorem 5.

References

[1] M. ApS. The MOSEK optimization toolbox for MATLAB manual. Version 7.1 (Revision 28), 2015.
[2] M. Athans. On the determination of optimal costly measurement strategies for linear stochastic systems. Automatica, 8(4):397–412, 1972.
[3] D. Avitzour and S. R. Rogers. Optimal measurement scheduling for prediction and estimation. Acoustics, Speech and Signal Processing, IEEE Transactions on, 38(10):1733–1739, 1990.
[4] N. Cesa-Bianchi, S. Shalev-Shwartz, and O.
Shamir. Online learning of noisy data. Information Theory, IEEE Transactions on, 57(12):7907–7931, 2011.
[5] T. Gao and D. Koller. Active classification based on value of classifier. In Advances in Neural Information Processing Systems, pages 1062–1070, 2011.
[6] A. O. Hero and D. Cochran. Sensor management: Past, present, and future. Sensors Journal, IEEE, 11(12):3064–3075, 2011.
[7] C.-W. Hsu, C.-C. Chang, C.-J. Lin, et al. Technical report, a practical guide to support vector classification, 2003.
[8] K. Jenkins and D. A. Castanon. Adaptive sensor management for feature-based classification. In Decision and Control (CDC), 2010 49th IEEE Conference on, pages 522–527. IEEE, 2010.
[9] S. M. Kakade, K. Sridharan, and A. Tewari. On the complexity of linear prediction: Risk bounds, margin bounds, and regularization. In Advances in Neural Information Processing Systems, pages 793–800, 2009.
[10] R. Kohavi and G. H. John. Wrappers for feature subset selection. Artificial Intelligence, 97(12):273–324, 1997.
[11] H. J. Kushner and G. G. Yin. Stochastic approximation algorithms and applications. 1997.
[12] D. J. Lizotte, O. Madani, and R. Greiner. Budgeted learning of naive-bayes classifiers. In Proceedings of the Nineteenth Conference on Uncertainty in Artificial Intelligence, pages 378–385. Morgan Kaufmann Publishers Inc., 2002.
[13] O. L. Mangasarian, W. N. Street, and W. H. Wolberg. Breast cancer diagnosis and prognosis via linear programming. Operations Research, 43(4):570–577, 1995.
[14] L. Meier III, J. Peschon, and R. Dressler. Optimal control of measurement subsystems. Automatic Control, IEEE Transactions on, 12(5):528–536, 1967.
[15] P. Melville, M. Saar-Tsechansky, F. Provost, and R. Mooney. Active feature-value acquisition for classifier induction. In Data Mining, 2004. ICDM'04.
Fourth IEEE International Conference on, pages 483–486. IEEE, 2004.
[16] F. Nan, J. Wang, and V. Saligrama. Feature-budgeted random forest. arXiv preprint arXiv:1502.05925, 2015.
[17] A. D. Rajen Bhatt. Skin segmentation dataset. UCI Machine Learning Repository.
[18] O. Richman and S. Mannor. Dynamic sensing: Better classification under acquisition constraints. In Proceedings of The 32nd International Conference on Machine Learning, 2015.
[19] M. Shakeri, K. Pattipati, and D. Kleinman. Optimal measurement scheduling for state estimation. Aerospace and Electronic Systems, IEEE Transactions on, 31(2):716–729, 1995.
[20] P. K. Shivaswamy, C. Bhattacharyya, and A. J. Smola. Second order cone programming approaches for handling missing and uncertain data. The Journal of Machine Learning Research, 7:1283–1314, 2006.
[21] S. Stein and J. Jones. Modern communication principles: with application to digital signaling. McGraw-Hill, 1967.
[22] I. Steinwart. Consistency of support vector machines and other regularized kernel classifiers. Information Theory, IEEE Transactions on, 51(1):128–142, 2005.
[23] K. Trapeznikov, V. Saligrama, and D. Castañón. Multi-stage classifier design. Machine Learning, 92(2-3):479–502, 2013.
[24] V. N. Vapnik. Statistical Learning Theory. Wiley-Interscience, 1998.
[25] J. Wintenby and V. Krishnamurthy. Hierarchical resource management in adaptive airborne surveillance radars. Aerospace and Electronic Systems, IEEE Transactions on, 42(2):401–420, 2006.
[26] N. Xiong and P. Svensson. Multi-sensor management for information fusion: issues and approaches. Information Fusion, 3(2):163–186, 2002.
[27] H. Xu, C. Caramanis, and S. Mannor. Robustness and regularization of support vector machines. The Journal of Machine Learning Research, 10:1485–1510, 2009.
[28] H. Xu, C.
Caramanis, and S. Mannor. A distributional interpretation of robust optimization. In Communication, Control, and Computing (Allerton), 2010 48th Annual Allerton Conference on, pages 552–556. IEEE, 2010.
[29] Z. Xu, O. Chapelle, and K. Q. Weinberger. The greedy miser: Learning under test-time budgets. In Proceedings of the 29th International Conference on Machine Learning (ICML-12), pages 1175–1182, 2012.
[30] Z. Xu, M. Kusner, M. Chen, and K. Q. Weinberger. Cost-sensitive tree of classifiers. In Proceedings of the 30th International Conference on Machine Learning (ICML-13), pages 133–141, 2013.
[31] M. Zinkevich. Online convex programming and generalized infinitesimal gradient ascent. In Proceedings of the 20th International Conference on Machine Learning (ICML-03), pages 928–936, 2003.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

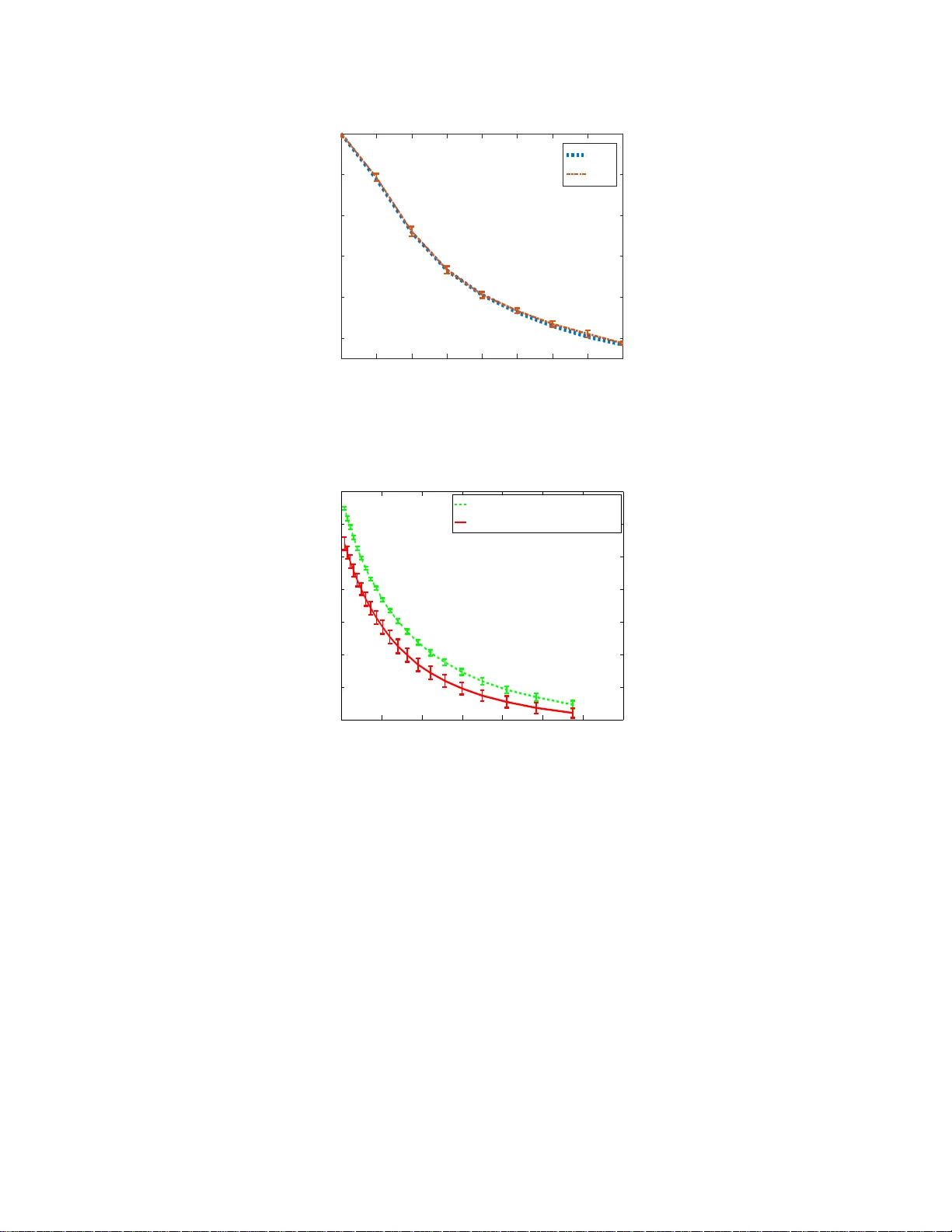

Leave a Comment