Efficient Reinforcement Learning in Deterministic Systems with Value Function Generalization

We consider the problem of reinforcement learning over episodes of a finite-horizon deterministic system and as a solution propose optimistic constraint propagation (OCP), an algorithm designed to synthesize efficient exploration and value function g…

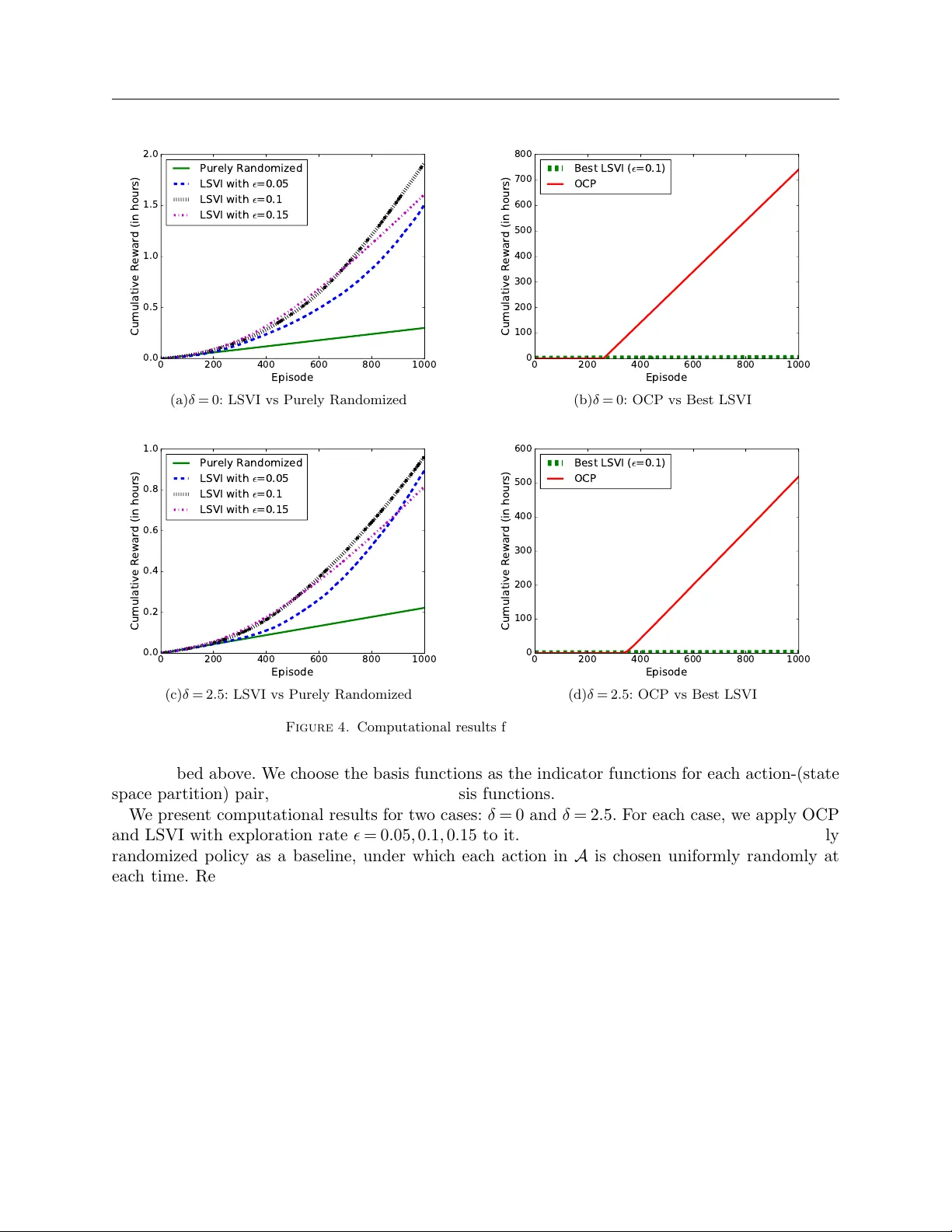

Authors: Zheng Wen, Benjamin Van Roy