Information Flows? A Critique of Transfer Entropies

A central task in analyzing complex dynamics is to determine the loci of information storage and the communication topology of information flows within a system. Over the last decade and a half, diagnostics for the latter have come to be dominated by…

Authors: Ryan G. James, Nix Barnett, James P. Crutchfield

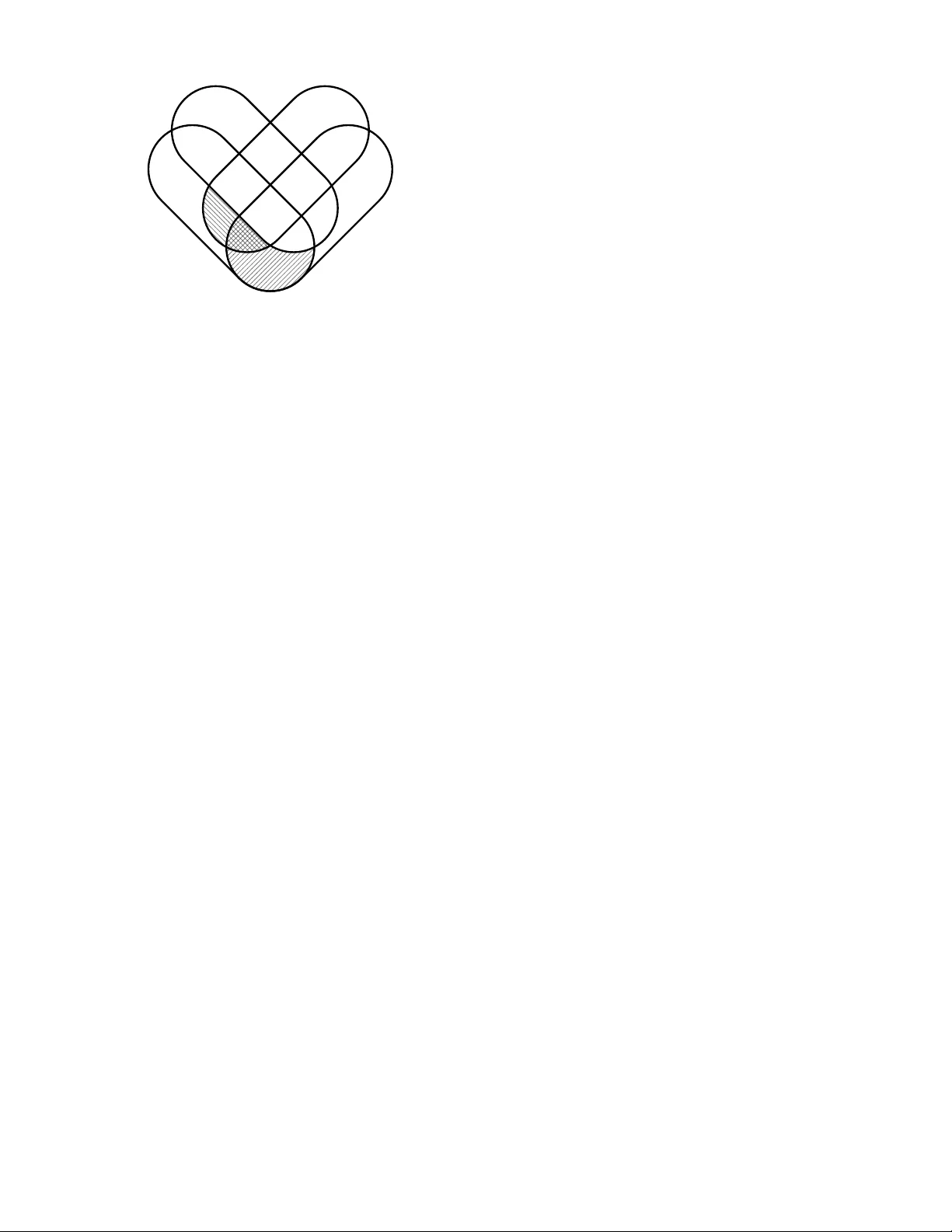

Santa Fe Institute Working Paper 16-01-001; arXiv:1512.06479

Ryan G. James (1, 2, ∗), Nix Barnett (1, 3, †), and James P. Crutchfield (1, 2, 3, ‡)

(1) Complexity Sciences Center, (2) Physics Department, (3) Mathematics Department, University of California at Davis, One Shields Avenue, Davis, CA 95616

(Dated: June 20, 2016)

A central task in analyzing complex dynamics is to determine the loci of information storage and the communication topology of information flows within a system. Over the last decade and a half, diagnostics for the latter have come to be dominated by the *transfer entropy*. Via straightforward examples, we show that it and a derivative quantity, the *causation entropy*, do not, in fact, quantify the flow of information. At one and the same time they can overestimate flow or underestimate influence. We isolate why this is the case and propose several avenues to alternate measures for information flow. We also address an auxiliary consequence: The proliferation of networks as a now-common theoretical model for large-scale systems, in concert with the use of transfer-like entropies, has shoehorned dyadic relationships into our structural interpretation of the organization and behavior of complex systems. This interpretation thus fails to include the effects of polyadic dependencies. The net result is that much of the sophisticated organization of complex systems may go undetected.

Keywords: stochastic process, transfer entropy, causation entropy, partial information decomposition, network science

PACS numbers: 05.45.-a 89.75.Kd 89.70.+c 05.45.Tp 02.50.Ey

An important task in understanding a complex system is determining its information dynamics and information architecture: what mechanisms generate information, where is that information stored, and how is it transmitted within a system?
While this pursuit goes back perhaps as far as Shannon's foundational work on communication [1], in many ways it was Kolmogorov [2–4] who highlighted the transmission of information from the micro- to the macroscales as central to the behavior of complex systems. Later, Lin showed that "information flow" is key to understanding network controllability [5] and Shaw speculated that such flows between information sources and sinks are a necessary descriptive framework for spatially extended chaotic systems, an alternative to narratives based on tracking energy flows [6, Sec. 14].

A common thread in these works is quantifying the flow of information. To facilitate our discussion, let's first consider an intuitive definition: *Information flow* from process $X$ to process $Y$ is the existence of information that is *currently* in $Y$, the "cause" of which can be solely attributed to $X$'s *past*. If information can be solely attributed in such a manner, we refer to it as *localized*. This notion of localized flow mirrors the intuitive general definitions of "causal" flow proposed by Granger [7] and, before that, Wiener [8].

Ostensibly to measure information flow (and notably much later than the above efforts), Schreiber introduced the transfer entropy [9] as the information shared between $X$'s past and the present $Y_t$, conditioning on information from $Y$'s past. Perhaps not surprisingly, given the broad and pressing need to probe the organization of modern life's increasingly complex systems, the transfer entropy's use has been substantial: over the last decade and a half, its introduction alone garnered an average of 100 citations per year.

The primary goal of the following is to show that the transfer entropy does not, in fact, measure information flow, specifically in that it attributes an information source to influences that are not localizable and so not flows.
We draw out the interpretational errors, some quite subtle, that result, including overestimating flow, underestimating influence, and more generally misidentifying structure when modeling complex systems as networks with edges given by transfer entropies.

Identifying shortcomings in the transfer entropy is not new. Smirnov [10] pointed out three: Two relate to how it responds to using undersampled empirical distributions and are therefore not conceptual issues with the measure. The third, however, was its inability to differentiate indirect influences from direct influences. This weakness motivated Sun and Bollt to propose the causation entropy [11]. While their measure does allow differentiating between direct and indirect effects via the addition of a third hidden variable, it too ascribes an information source to unlocalizable influences.

Our exposition reviews the notation and information theory needed and then considers two rather similar examples: one involving influences between two processes and the other, influences among three. They make operational what we mean by "localized", "flow", and "influence", leading to the conclusion that the transfer entropy fails to capture information flow. We close by discussing a distinctive philosophy underlying our critique and then turn to possible resolutions and to concerns about modeling practice in network science.

*Background.* Following standard notation [12], we denote random variables with capital letters and their associated outcomes using lower case. For example, the observation of a coin flip might be denoted $X$, while the coin actually landing Heads or Tails would be $x$. Emphasizing temporal processes, we subscript a random variable with a time index; e.g., the random variable representing a coin flip at time $t$ is denoted $X_t$.
We denote a temporally contiguous block of random variables (a time series) using a Python-slice-like notation $X_{i:j} = X_i X_{i+1} \ldots X_{j-1}$, where the final index is exclusive. When $X_t$ is distributed according to $\Pr(X_t)$, we denote this as $X_t \sim \Pr(X_t)$. We assume familiarity with basic information measures, specifically the Shannon entropy $H[X]$, mutual information $I[X : Y]$, and their conditional forms $H[X \mid Z]$ and $I[X : Y \mid Z]$ [12].

The *transfer entropy* $T_{X \to Y}$ from time series $X$ to time series $Y$ is the information shared between $X$'s past and $Y$'s present, given knowledge of $Y$'s past [9]:

$$T_{X \to Y} = I[Y_t : X_{0:t} \mid Y_{0:t}] . \tag{1}$$

Intuitively, this quantifies how much better one predicts $Y_t$ using both $X_{0:t}$ and $Y_{0:t}$ over using $Y_{0:t}$ alone. A nonzero value of the transfer entropy certainly implies a kind of influence of $X$ on $Y$. Our questions are: Is this influence necessarily via information flow? Is it necessarily direct?

Addressing the last question, the *causation entropy* $C_{X \to Y \mid (Y,Z)}$ is similar to the transfer entropy, but conditions on the past of a third (or more) time series [11]:

$$C_{X \to Y \mid (Y,Z)} = I[Y_t : X_{0:t} \mid Y_{0:t}, Z_{0:t}] . \tag{2}$$

(It is also known as the *conditional transfer entropy*.) The primary improvement over $T_{X \to Y}$ is the causation entropy's ability to determine if a dependency is indirect (i.e., mediated by the third process $Z$) or not. Consider, for example, the following system $X \to Z \to Y$: variable $X$ influences $Z$ and $Z$ in turn influences $Y$. Here, any influence that $X$ has on $Y$ must pass through $Z$. In this case, the transfer entropy $T_{X \to Y} > 0$ bit even though $X$ does not directly influence $Y$. The causation entropy $C_{X \to Y \mid (Y,Z)} = 0$ bit, however, due to conditioning on $Z$.

Many concerns and pitfalls in applying information measures come not from their definition, estimation, or derivation of associated properties.
Rather, many arise in *interpreting* results. Properly interpreting the meaning of a measure can be the most subtle and important task we face when using measures to analyze a system's structure, as we will now demonstrate. Furthermore, while these examples may seem pathological, they were chosen for their transparency and simplicity; similar failures arise in Gaussian systems [13], signifying that the issue at hand is widespread.

*Example: Two Time Series.* Consider two time series, say $X$ and $Y$, given by the probability laws:

$$X_t \sim \begin{cases} 0 & \text{with probability } 1/2 \\ 1 & \text{with probability } 1/2 \end{cases}, \qquad Y_0 \sim \begin{cases} 0 & \text{with probability } 1/2 \\ 1 & \text{with probability } 1/2 \end{cases}, \qquad \text{and} \quad Y_t = X_{t-1} \oplus Y_{t-1} ;$$

that is, $X_t$ and $Y_0$ are independent and take values 0 and 1 with equal probability, and $y_t$ is the Exclusive OR of the prior values $x_{t-1}$ and $y_{t-1}$. By a straightforward calculation we find that $T_{X \to Y} = 1$ bit. Does this mean that one bit of information is being *transferred* from $X$ to $Y$ at each time step?

Let's take a closer look. We first observe that the amount of information in $Y_t$ is $H[Y_t] = 1$ bit. Therefore, the uncertainty in $Y_t$ can be reduced by at most 1 bit. Furthermore, the information shared by $Y_t$ and the prior behavior of the two time series is $I[Y_t : (X_{0:t}, Y_{0:t})] = 1$ bit. And so, the 1 bit of $Y_t$'s uncertainty in fact can be removed by the prior observations of both time series.

How much does $Y_{0:t}$ alone help us predict $Y_t$? We quantify this using mutual information. Since $I[Y_t : Y_{0:t}] = 0$ bit, the variables are independent: $Y_{0:t}$ alone does not help in predicting $Y_t$. However, knowing $Y_{0:t}$, how much does $X_{0:t}$ help in predicting $Y_t$? The conditional mutual information $I[Y_t : X_{0:t} \mid Y_{0:t}] = 1$ bit (the transfer entropy we just computed) quantifies this. This situation is graphically analyzed via the information diagram (I-diagram) [14] in Fig. 1a.
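The values quoted for this example can be checked by exact enumeration. The following is a minimal sketch rather than the authors' own code: it builds the joint distribution of $(X_{0:t}, Y_{0:t}, Y_t)$ for a short window and evaluates Eq. (1) from joint entropies. The window length `t = 3` and every function and variable name are assumptions made for illustration.

```python
from collections import Counter
from itertools import product
from math import log2


def entropy(joint, idx):
    """Shannon entropy in bits of the marginal over coordinates `idx`."""
    marginal = Counter()
    for outcome, p in joint.items():
        marginal[tuple(outcome[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def cond_mutual_info(joint, a, b, c=()):
    """I[A : B | C] = H[A,C] + H[B,C] - H[C] - H[A,B,C], in bits."""
    a, b, c = list(a), list(b), list(c)
    return (entropy(joint, a + c) + entropy(joint, b + c)
            - entropy(joint, c) - entropy(joint, a + b + c))


def xor_pair_joint(t=3):
    """Exact joint distribution of (X_{0:t}, Y_{0:t}, Y_t) for Y_t = X_{t-1} xor Y_{t-1}."""
    joint = Counter()
    for bits in product((0, 1), repeat=t + 1):
        xs, y0 = bits[:t], bits[t]      # x_0..x_{t-1} and y_0 are fair coins
        ys = [y0]
        for x in xs:                    # y_{k+1} = x_k xor y_k
            ys.append(x ^ ys[-1])
        joint[(xs, tuple(ys[:t]), ys[t])] += 2.0 ** -(t + 1)
    return joint


# Coordinate positions inside each outcome tuple (hypothetical names).
X_PAST, Y_PAST, Y_NOW = 0, 1, 2
joint = xor_pair_joint(t=3)

h_y_now = entropy(joint, [Y_NOW])                                        # H[Y_t]
joint_info = cond_mutual_info(joint, [Y_NOW], [X_PAST, Y_PAST])          # I[Y_t : (X_past, Y_past)]
self_info = cond_mutual_info(joint, [Y_NOW], [Y_PAST])                   # I[Y_t : Y_past]
transfer_entropy = cond_mutual_info(joint, [Y_NOW], [X_PAST], [Y_PAST])  # Eq. (1)

print(f"H[Y_t] = {h_y_now:.3f}, I[Y_t:(X,Y)] = {joint_info:.3f}")
print(f"I[Y_t:Y_past] = {self_info:.3f}, T_X->Y = {transfer_entropy:.3f}")
```

Enumerating outcomes, rather than sampling, avoids estimation error, which matters here because the argument turns on some quantities being exactly zero.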
To obtain a more complete picture of the information dynamics under consideration, let's reverse the order in which the time series are queried. The mutual information $I[Y_t : X_{0:t}] = 0$ bit tells us that the $X$ time series alone does not help predict $Y_t$. However, the conditional mutual information $I[Y_t : Y_{0:t} \mid X_{0:t}] = 1$ bit. And so, from this point of view it is $Y$'s past that helps predict $Y_t$, contradicting the preceding analysis. This complementary situation is presented diagrammatically in Fig. 1b.

[Figure 1: two I-diagrams over $Y_t$, $X_{0:t}$, and $Y_{0:t}$. (a) $Y_{0:t}$ alone does not predict $Y_t$ (the region $I[Y_{0:t} : Y_t] = 0$ bit). However, when used in conjunction with $X_{0:t}$, they completely predict its value (the region $I[X_{0:t} : Y_t \mid Y_{0:t}] = 1$ bit). (b) $X$'s past $X_{0:t}$ alone does not aid in predicting $Y_t$ (the region $I[X_{0:t} : Y_t] = 0$ bit). However, given knowledge of $X_{0:t}$, then $Y_{0:t}$ can predict $Y_t$ (the region $I[Y_{0:t} : Y_t \mid X_{0:t}] = 1$ bit).]

FIG. 1. Two complementary ways to view the information shared between $X_{0:t}$, $Y_{0:t}$, and $Y_t$. In each I-Diagram, a circle represents a random variable whose area measures the random variable's entropy. Overlapping regions are information that is shared. The transfer entropy is a conditional mutual information: a region where two random variables overlap, but that falls outside the random variable being conditioned on.

How can we reconcile the seemingly inconsistent conclusions drawn by these two lines of reasoning? The answer is quite straightforward: the 1 bit of information about $Y_t$ does not come from either time series individually, but rather from both of them simultaneously. (In fact, the I-Diagrams are naturally consistent, once one recognizes that the *co-information* [15], the inner-most information atom, is $I[Y_t : X_{0:t} : Y_{0:t}] = -1$ bit.)
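The two orderings and the negative co-information can be computed side by side. This is again an illustrative sketch under stated assumptions (window length `t = 3`, hypothetical helper names `H` and `I`), using the identity that the co-information equals $I[Y_t : X_{0:t}] - I[Y_t : X_{0:t} \mid Y_{0:t}]$.

```python
from collections import Counter
from itertools import product
from math import log2


def H(joint, idx):
    """Entropy in bits of the marginal over coordinates `idx`."""
    marginal = Counter()
    for outcome, p in joint.items():
        marginal[tuple(outcome[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def I(joint, a, b, c=()):
    """Conditional mutual information I[A : B | C] in bits."""
    a, b, c = list(a), list(b), list(c)
    return H(joint, a + c) + H(joint, b + c) - H(joint, c) - H(joint, a + b + c)


# Exact joint distribution of (X_{0:t}, Y_{0:t}, Y_t); t = 3 is an arbitrary choice.
t = 3
joint = Counter()
for *xs, y0 in product((0, 1), repeat=t + 1):
    ys = [y0]
    for x in xs:                         # y_{k+1} = x_k xor y_k
        ys.append(x ^ ys[-1])
    joint[(tuple(xs), tuple(ys[:t]), ys[t])] += 2.0 ** -(t + 1)

X_PAST, Y_PAST, Y_NOW = 0, 1, 2

x_alone = I(joint, [Y_NOW], [X_PAST])               # I[Y_t : X_past]
y_alone = I(joint, [Y_NOW], [Y_PAST])               # I[Y_t : Y_past]
x_given_y = I(joint, [Y_NOW], [X_PAST], [Y_PAST])   # first view (Fig. 1a)
y_given_x = I(joint, [Y_NOW], [Y_PAST], [X_PAST])   # second view (Fig. 1b)

# Co-information: shared information minus the same quantity after conditioning.
co_information = x_alone - x_given_y

print(f"alone: X {x_alone:.3f}, Y {y_alone:.3f}")
print(f"conditioned: X|Y {x_given_y:.3f}, Y|X {y_given_x:.3f}")
print(f"co-information: {co_information:.3f}")
```

Both conditional quantities equal one bit while both unconditioned ones vanish, so neither ordering is privileged; the symmetric co-information records the discrepancy.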
In short, the 1 bit of reduction in uncertainty $H[Y_t]$ should not be *localized* to either time series. The transfer entropy, however, erroneously localizes this information to $X_{0:t}$. In light of this, the transfer entropy *overestimates* information flow.

This example shows that the transfer entropy can be positive due not to information flow, but rather to nonlocalizable influence, in this case a *conditional dependence* between variables. This suggests that, though inappropriate for measuring information flow, the transfer entropy may be a viable measure of such influence. Our next example illustrates that this too is incorrect.

*Example: Three Time Series.* Our second example parallels the first. Before, we considered the case where one of two time series is determined by the past of *both*; we now consider the case where two time series determine a *third*, again via an Exclusive OR operation. Their probability laws are:

$$X_t \sim \begin{cases} 0 & \text{with probability } 1/2 \\ 1 & \text{with probability } 1/2 \end{cases}, \qquad Y_t \sim \begin{cases} 0 & \text{with probability } 1/2 \\ 1 & \text{with probability } 1/2 \end{cases}, \qquad \text{and} \quad Z_t = X_{t-1} \oplus Y_{t-1} ,$$

in which $z_0$'s value is irrelevant. Unlike the prior example, the transfer entropy from either $X$ or $Y$ to $Z$ is zero: $T_{X \to Z} = T_{Y \to Z} = 0$ bit, and it therefore *underestimates* influence that is present. Furthermore, the relevant pairwise mutual informations all vanish: $I[Z_t : X_{0:t}] = I[Z_t : Y_{0:t}] = I[Z_t : Z_{0:t}] = 0$ bit. The time series are pairwise independent.

Given that we are probing the influences between three time series, it is natural now to consider the behavior of the causation entropy. In this case, we have $C_{X \to Z \mid (Y,Z)} = C_{Y \to Z \mid (X,Z)} = 1$ bit, indicating that given the past behavior of $Z$ and $X$ (or $Y$), the past of $Y$ (or $X$) can be used to predict the behavior of $Z_t$.
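The same enumeration approach reproduces the three-series values. The sketch below is illustrative only: the window length `t = 2`, the choice `z0 = 0` (the text notes $z_0$ is irrelevant), and all names are assumptions.

```python
from collections import Counter
from itertools import product
from math import log2


def entropy(joint, idx):
    """Shannon entropy in bits of the marginal over coordinates `idx`."""
    marginal = Counter()
    for outcome, p in joint.items():
        marginal[tuple(outcome[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def cond_mutual_info(joint, a, b, c=()):
    """I[A : B | C] = H[A,C] + H[B,C] - H[C] - H[A,B,C], in bits."""
    a, b, c = list(a), list(b), list(c)
    return (entropy(joint, a + c) + entropy(joint, b + c)
            - entropy(joint, c) - entropy(joint, a + b + c))


def xor_triple_joint(t=2, z0=0):
    """Exact joint of (X_{0:t}, Y_{0:t}, Z_{0:t}, Z_t) for Z_t = X_{t-1} xor Y_{t-1}."""
    joint = Counter()
    for bits in product((0, 1), repeat=2 * t):
        xs, ys = bits[:t], bits[t:]                   # independent fair coins
        zs = [z0] + [x ^ y for x, y in zip(xs, ys)]   # z_0, z_1, ..., z_t
        joint[(xs, ys, tuple(zs[:t]), zs[t])] += 2.0 ** -(2 * t)
    return joint


X_PAST, Y_PAST, Z_PAST, Z_NOW = 0, 1, 2, 3
joint = xor_triple_joint()

# Transfer entropies, Eq. (1) with Z as the target series.
te_x_to_z = cond_mutual_info(joint, [Z_NOW], [X_PAST], [Z_PAST])
te_y_to_z = cond_mutual_info(joint, [Z_NOW], [Y_PAST], [Z_PAST])

# Pairwise mutual informations with Z_t.
pairwise = [cond_mutual_info(joint, [Z_NOW], [v]) for v in (X_PAST, Y_PAST, Z_PAST)]

# Causation entropies, Eq. (2): condition on the other driver's past as well.
ce_x_to_z = cond_mutual_info(joint, [Z_NOW], [X_PAST], [Y_PAST, Z_PAST])
ce_y_to_z = cond_mutual_info(joint, [Z_NOW], [Y_PAST], [X_PAST, Z_PAST])

print(f"T_X->Z = {te_x_to_z:.3f}, T_Y->Z = {te_y_to_z:.3f}")
print(f"pairwise MI with Z_t: {[round(v, 3) for v in pairwise]}")
print(f"C_X->Z|(Y,Z) = {ce_x_to_z:.3f}, C_Y->Z|(X,Z) = {ce_y_to_z:.3f}")
```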
Like before, this 1 bit of information cannot be localized to either $X$ or $Y$ and so it is inaccurate to ascribe the 1 bit of information in $Z_t$ to either $X$ or $Y$ alone. In this way, the causation entropy also erroneously localizes the 1 bit of joint influence. While the causation entropy succeeds here as a measure of nonlocalizable influence, as a measure of information flow, it overestimates. (This is known to Sun and Bollt, but here we stress that the failure is a general issue with interpreting its value, not merely a limitation regarding network inference.) These information quantities are displayed in the I-Diagram in Fig. 2.

[Figure 2: I-diagram over $Z_t$, $Z_{0:t}$, $X_{0:t}$, and $Y_{0:t}$, with atoms labeled 1, −1, and 0 bit.]

FIG. 2. Information diagram depicting both transfer entropies and causation entropies for three time series $X$, $Y$, and $Z$. $T_{X \to Z} = 0$ bit corresponds to the two regions shaded with south-east sloping lines and $T_{Y \to Z} = 0$ bit, the two regions shaded with north-east sloping lines. $C_{X \to Z \mid (Y,Z)} = 1$ bit is the region containing only south-east sloping lines and, similarly, $C_{Y \to Z \mid (X,Z)} = 1$ bit is the region containing only north-east sloping lines.

*Discussion.* We see that transfer-like entropies can both overestimate information flow (first example) and underestimate influence (second example). The primary misunderstanding of these quantities stems from a mischaracterization of the conditional mutual information. Most basically, probabilistic conditioning is not a "subtractive" operation: $I[X : Y \mid Z]$ is not the information shared by $X$ and $Y$ once the influences of $Z$ have been removed. Rather, it is the information shared by $X$ and $Y$ *taking into account* $Z$. This is not a game of mere semantics: Conditioning can *increase* the information shared between two processes: $I[X : Y] < I[X : Y \mid Z]$. This cannot happen if conditioning merely removed influence: conditional dependence includes additional dependence that occurs in the presence of a third variable [16]. Measuring information flow, as we have defined it, requires a method of *localizing* information. Since simple conditioning can fail to localize information, the transfer entropy, causation entropy, and other measures utilizing the conditional mutual information can fail as measures of information flow.

Another way to understand conditional dependence is through the *partial information decomposition* [17]. Within this framework, the mutual information between two random variables $X_1$ and $X_2$ (call them *inputs*) and a third random variable $Y$ (the *output*) is decomposed into four mutually exclusive components: $I[(X_1, X_2) : Y] = R + U_1 + U_2 + S$. $R$ quantifies how the inputs $X_1$ and $X_2$ *redundantly* inform the output $Y$, $U_1$ and $U_2$ quantify how each provides *unique* information to $Y$, and finally $S$ quantifies how the inputs together *synergistically* inform the output. In this decomposition, the mutual information between one input and the output is equal to what uniquely comes from that input plus what is redundantly provided by both inputs; $I[X_1 : Y] = R + U_1$, for example. However, the mutual information between that input and the output conditioned on the other input is equal to what uniquely comes from that one input, plus what is synergistically provided by both inputs: $I[X_1 : Y \mid X_2] = U_1 + S$. In other words, conditioning removes the redundant information, but adds the synergistic information. Here, conditional dependencies manifest themselves as synergy.
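As a worked instance of these identities, take a single step of the Exclusive OR construction used in the examples: inputs $X_1$ and $X_2$ are independent fair bits and the output is $Y = X_1 \oplus X_2$. The sketch below assumes only that the four components are nonnegative, as is usual for two inputs; it does not depend on any particular definition of redundancy.

```latex
\begin{align*}
I[X_1 : Y] &= R + U_1 = 0 \text{ bit}, \\
I[X_2 : Y] &= R + U_2 = 0 \text{ bit}, \\
I[(X_1, X_2) : Y] &= R + U_1 + U_2 + S = 1 \text{ bit}, \\
\Longrightarrow \quad R &= U_1 = U_2 = 0 \text{ bit}, \qquad S = 1 \text{ bit}, \\
I[X_1 : Y \mid X_2] &= U_1 + S = 1 \text{ bit}.
\end{align*}
```

The conditional mutual information is a full bit even though neither input contributes any unique information: under these assumptions the entire bit is synergistic.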
Treating $X_{0:t}$ and $Y_{0:t}$ as inputs and $Y_t$ as output, the partial information decomposition identifies the transfer entropy $T_{X \to Y}$ as the sum of the unique information from $X_{0:t}$ plus the synergistic information from both $X_{0:t}$ and $Y_{0:t}$ together. It seems natural, and has been previously proposed [13, 18], to associate only this unique information with information flow. The transfer entropy, however, conflates unique information and synergistic information, leading to inconsistencies such as those analyzed in the examples. Similar conclusions follow for the causation entropy; however, due to the additional variable, the analysis is more involved.

Though there is as yet no broadly accepted quantification of unique information [19], if one were able to accurately measure it, it may prove to be a viable measure of information flow. It is notable that Stramaglia et al., building on Ref. [20], considered how total synergy and redundancy of a collection of variables influence each other [21].

Other quantifications of information flow between time series have been proposed. The *directed information* [22] is essentially a sum of transfer entropies and so inherits the same flaws. Furthermore, both the transfer entropy and directed information have been shown to be generalizations of *Granger causality* [7, 23–25], itself purportedly a measure of "predictive causality" [26]. Ay and Polani proposed a measure of information flow based on active intervention in which an outside agent modifies the system in question by removing components [27]. We conjecture that all these measures suffer for the same reason: conflation of dyadic and polyadic relationships.

*Conclusions and Consequences.* Although the examples were intentionally straightforward, the consequences appear far-reaching.
Let's consider network science [28] which, over the same decade-and-a-half period since the introduction of the transfer entropy, has developed into a vast and vibrant field, with significant successes in many application areas. Standard (graph-based) networks are composed of *nodes*, representing system observables, and *edges*, representing relationships between them. As commonly practiced, such networks represent dyadic (binary) relationships between nodes [29]: article co-authorship, power transmission between substations, and the like.

It would seem, then, that much of the popularity of using the transfer entropy to analyze large-scale complex systems is that it is an information measure specifically adapted to quantifying dyadic relationships. Such a tool goes hand-in-hand with standard network modeling. As the examples emphasized, though, observables may be related by polyadic relationships that cannot be naturally represented on a standard network as commonly practiced. For example, all three variables in our second example are pairwise independent. A standard network representing dependence between them therefore consists of three disconnected nodes, thus failing to capture the dependence between variables that is, in fact, present. As a start to repair this deficit, it would be more appropriate to represent such a complex system as a *hypergraph* [30, 31].

Continuing this line of thought, if one believes that a standard network is an accurate model of a complex system, then one implicitly assumes that polyadic relationships are either not important or do not exist. Said this way, it is clear that when modeling a complex system, one must test for this lack of polyadic relationship first.

With this assumption generally unspoken, though, it is not surprising that a nonzero value of the transfer entropy leads analysts to interpret it as information flow.
Within that narrow view, indeed, how else could one time series influence another if all interactions are dyadic? Restated, when a system is modeled as a standard network, all relationships are assumed to be dyadic. One is therefore naturally inclined to explain all observed dependencies as being dyadic. The cost, of course, is either a greatly impoverished or a spuriously embellished view of organization in the world. As such, modeling a complex system by way of a graph with edges determined by transfer or causation entropies is intrinsically flawed.

Many of the preceding issues are difficult to analyze since at present notions of "influence" are not sufficiently precise and, even when they are, as with the use of information diagrams and measures and the partial information decomposition, there is a combinatorial explosion in possible types of dependence relationships. Said differently, what one needs is a more explicit, even more elementary, structural view of how one process can be transformed to another. Paralleling the canonical ε-machine minimal sufficient statistic representation of stationary processes, two of us (NB and JPC) recently introduced a minimal optimal transformation of one process into another, the ε-transducer [32]. This provides a structural representation for the minimal optimal predictor of one process about another. The corresponding transducer analysis, paralleling that above in Figs. 1 and 2, identifies new informational atoms beyond those of the transfer entropies [33].

In short, the transfer entropy can both overestimate information flow (first example) and underestimate influence (second example). These effects are compounded when viewing complex systems as standard networks since the latter further misconstrue polyadic relationships.
While we do not object to the transfer entropy as a measure of the reduction in uncertainty about one time series given another, we do find its mechanistic interpretation as information *flow* or *transfer* to be incorrect. In fact, this is true for any related measure, such as the causation entropy, that is based on conditional mutual information between observed variables. In light of these interpretational concerns, it seems that several recent works that rely heavily on transfer-like entropies, ranging from cellular automata [34] and information thermodynamics [35] to cell regulatory networks [36] and consciousness [37], will benefit from a close reexamination.

We thank A. Boyd, K. Burke, J. Emenheiser, B. Johnson, J. Mahoney, A. Mullokandov, P.-A. Noël, P. Riechers, N. Timme, D. P. Varn, and G. Wimsatt for helpful feedback. This material is based upon work supported by, or in part by, the U. S. Army Research Laboratory and the U. S. Army Research Office under contracts W911NF-13-1-0390 and W911NF-13-1-0340.

∗ rgjames@ucdavis.edu
† nix@math.ucdavis.edu
‡ chaos@ucdavis.edu

[1] C. E. Shannon. *Bell Sys. Tech. J.*, 27:379–423, 623–656, 1948.
[2] A. N. Kolmogorov. *IRE Trans. Info. Th.*, 2(4):102–108, 1956. Math. Rev. vol. 21, nos. 2035a, 2035b.
[3] Ja. G. Sinai. *Dokl. Akad. Nauk. SSSR*, 124:768, 1959.
[4] D. S. Ornstein. *Science*, 243:182, 1989.
[5] C. T. Lin. *IEEE Trans. Auto. Control*, 19(3):201–208, 1974.
[6] R. Shaw. *Z. Naturforsch.*, 36a:80, 1981.
[7] C. W. J. Granger. *Econometrica*, 37(3):424–438, 1969.
[8] N. Wiener. In E. Beckenbach, editor, *Modern Mathematics for the Engineer*. McGraw-Hill, New York, 1956.
[9] T. Schreiber. *Phys. Rev. Lett.*, 85(2):461, 2000.
[10] D. A. Smirnov. *Phys. Rev. E*, 87(4):042917, 2013.
[11] J. Sun and E. M. Bollt. *Physica D*, 267:49–57, 2014.
[12] T. M. Cover and J. A. Thomas. *Elements of Information Theory*. John Wiley & Sons, New York, 2012.
[13] A. B. Barrett. *Phys. Rev. E*, 91(5):052802, 2015.
[14] R. W. Yeung. *IEEE Trans. Info. Th.*, 37(3):466–474, 1991.
[15] A. J. Bell. In S. Makino, S. Amari, A. Cichocki, and N. Murata, editors, *Proc. Fifth Intl. Workshop on Independent Component Analysis and Blind Signal Separation*, volume ICA 2003, pages 921–926, New York, 2003. Springer.
[16] I. Nemenman. q-bio/0406015.
[17] P. L. Williams and R. D. Beer.
[18] P. L. Williams and R. D. Beer.
[19] N. Bertschinger, J. Rauh, E. Olbrich, J. Jost, and N. Ay. *Entropy*, 16(4):2161–2183, 2014.
[20] L. M. A. Bettencourt, V. Gintautas, and M. I. Ham. *Phys. Rev. Lett.*, 100(23):238701, 2008.
[21] S. Stramaglia, G.-R. Wu, M. Pellicoro, and D. Marinazzo. *Phys. Rev. E*, 86(6):066211, 2012.
[22] J. Massey. In *Proc. Intl. Symp. Info. Theory Applic.*, volume ISITA-90, pages 303–305, Yokohama National University, Yokohama, Japan, 1990.
[23] L. Barnett, A. B. Barrett, and A. K. Seth. *Phys. Rev. Lett.*, 103(23):238701, 2009.
[24] P.-O. Amblard and O. J. J. Michel. *J. Comp. Neurosci.*, 30(1):7–16, 2011.
[25] Granger causality can refer to either Granger's general intuitive definition of predictive causality or the specific (linear) statistical methods that he proposed. We refer to the latter, more commonly used meaning.
[26] F. X. Diebold. *Elements of Forecasting*. Thomson/South-Western, Mason, OH, 2007.
[27] N. Ay and D. Polani. *Adv. Complex Sys.*, 11(01):17–41, 2008.
[28] M. E. J. Newman. *SIAM Review*, 45(2):167–256, 2003.
[29] Higher-order dependencies can be represented with standard networks using additional, so-called latent variables. For example, one can represent polyadic relationships by building a new bipartite network consisting of the original nodes (type A) plus additional nodes representing polyadic relationships (type B). Here, an edge exists between a node of type A and a node of type B if that node is involved in that polyadic relationship. In any case, directly measuring and interpreting information flow between nodes becomes a much more subtle issue in such augmented, hidden-variable networks.
[30] R. Ramanathan, A. Bar-Noy, P. Basu, M. Johnson, W. Ren, A. Swami, and Q. Zhao. In *IEEE Conf. Computer Commun.*, pages 870–875, 2011.
[31] E. Estrada and J. A. Rodriguez-Velazquez. *Systems Research*.
[32] N. Barnett and J. P. Crutchfield. *J. Stat. Phys.*, 161(2):404–451, 2015.
[33] N. Barnett and J. P. Crutchfield. In preparation.
[34] J. T. Lizier, M. Prokopenko, and A. Y. Zomaya. *Phys. Rev. E*, 77(2):026110, 2008.
[35] J. M. R. Parrondo, J. M. Horowitz, and T. Sagawa. *Nature Physics*, 11(2):131–139, 2015.
[36] S. I. Walker, H. Kim, and P. C. W. Davies. arXiv:1507.03877.
[37] U. Lee, S. Blain-Moraes, and G. A. Mashour. *Phil. Trans. Roy. Soc. Lond. A*, 373(2034):20140117, 2015.
