Community Recovery in Graphs with Locality

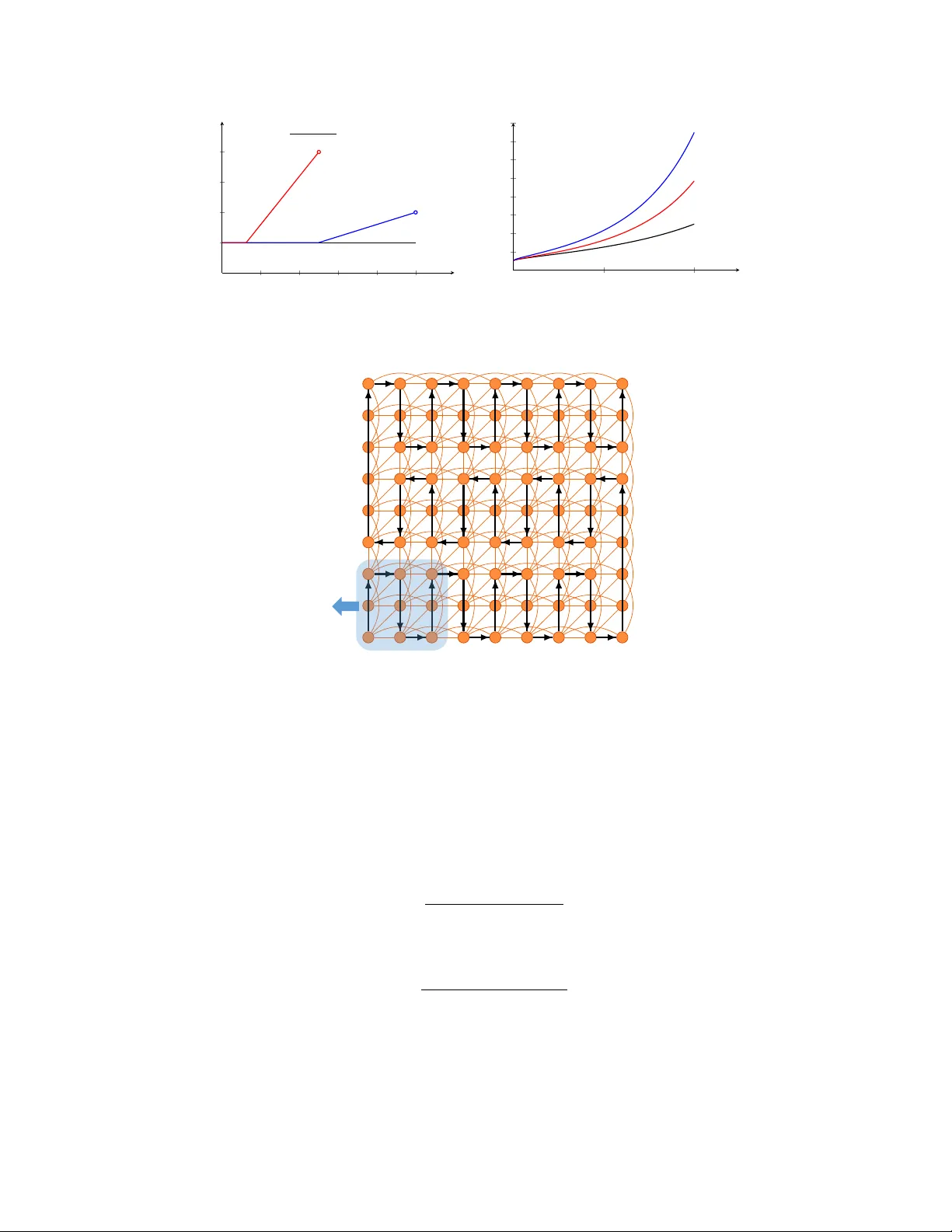

Motivated by applications in domains such as social networks and computational biology, we study the problem of community recovery in graphs with locality. In this problem, pairwise noisy measurements of whether two nodes are in the same community or…

Authors: Yuxin Chen, Govinda Kamath, Changho Suh