Distributed Flexible Nonlinear Tensor Factorization

Tensor factorization is a powerful tool to analyse multi-way data. Compared with traditional multi-linear methods, nonlinear tensor factorization models are capable of capturing more complex relationships in the data. However, they are computationall…

Authors: Sh, ian Zhe, Kai Zhang

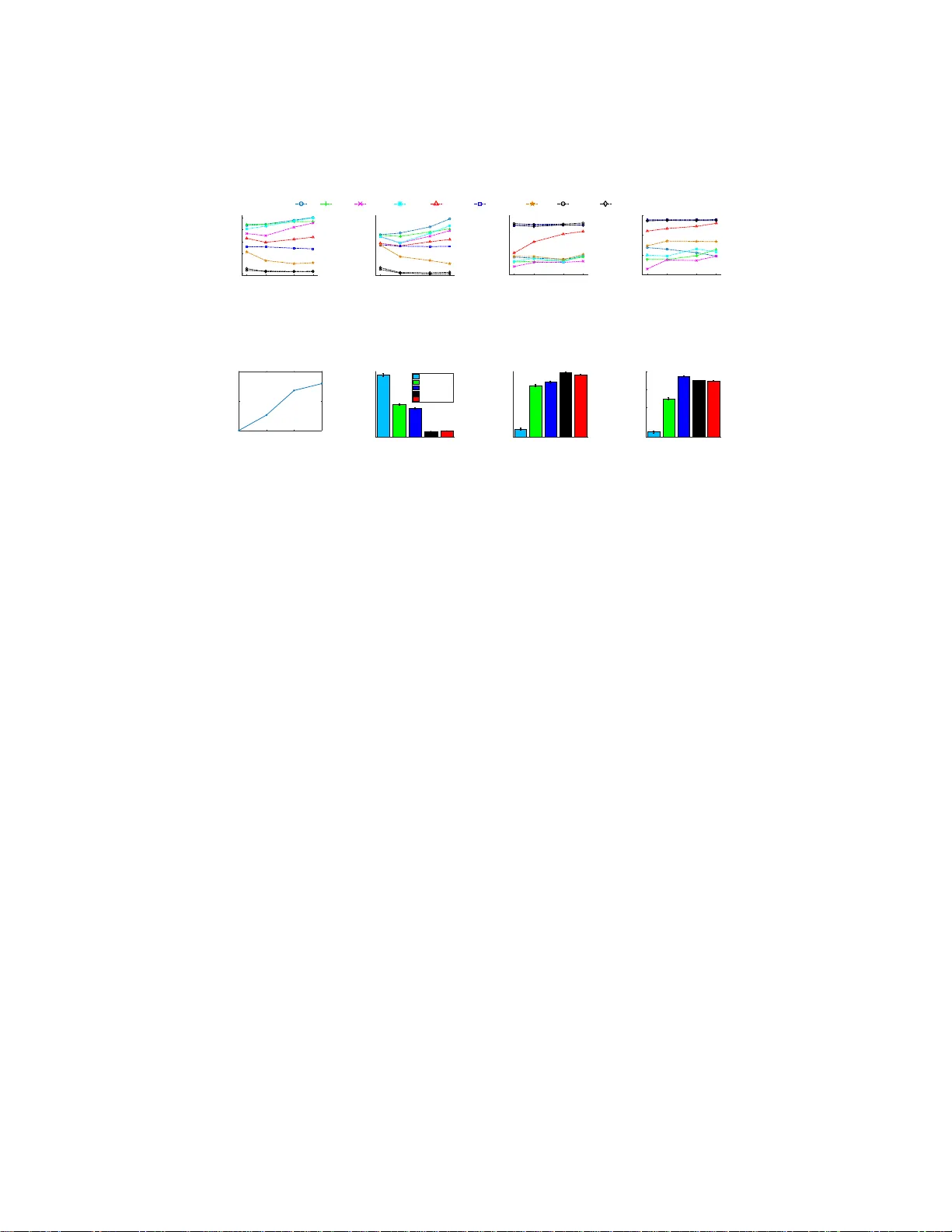

Distributed Flexible Non linear T ensor Factorization Shandian Zhe Purdue Univ ersity szhe@purdue.edu Kai Zhang Lawrence B erkeley Lab kzhang980@gmail .com Pengyuan W ang Y ahoo! Resear ch pengyuan@yahoo-inc.com Kuang-chih Lee Y ahoo! Resear ch kclee@yahoo-inc.com Zenglin Xu Univ ersity of Electronic Science and T echnology of China zlxu@uestc.edu.cn Y uan Qi Purdue University alanqi@cs.purdue.edu Zoubin Ghahraman Univ ersity of Cambridge zoubin@eng.cam.ac.uk May 24, 2016 Abstract T ensor factorization is a powerful tool to analyse multi -way data. Compared with traditional multi-linear methods, nonlinear tensor facto rization models are capable of capturing more comple x relationships in the data. Ho wev er, the y are computationally expensi ve and may suffer sev ere learning bias in case of extreme data sparsity . T o overco me these limitations, in this paper we propose a distributed, flexible nonlinear tensor factorization mod el. Our model ca n effecti vely avoid the expen siv e computations and structural restrictions of the Kron ecker - product in e x- isting TGP formulations, allowing an arbitrary subset of tensorial entries to be selected to contribute to the t raining. At the same t ime, we deriv e a t ractable and tight v ariational e vidence lower bound (ELBO) that en ables highly decoupled, parallel co mputations and high-quality inference. Based on the ne w boun d, we de- velop a distributed inference algorithm i n the M A P R E D U C E frame work, which is ke y-v alue-free and can fully exploit the memory cache mechanism in fast M A P R E - D U C E systems such as S PA R K. Experimental resu lts fully demonstrate the advan- tages of our method over se veral st ate-of-the-art approaches, in terms of both pre- dictiv e performance and computational efficienc y . Moreover , our approach shows a promising potential in the application of Click-Through-Rate (CT R) prediction for online advertising. 1 1 Introd uction T e nsors, or multidimensional arrays, are generalization s o f matrices (from b inary in- teractions) to hig h-ord er in teractions between multiple entities. For example, w e can extract a t hree-m ode tensor ( user , ad vertisement , c ontext ) f rom online ad vertising data. T o analyze tensor data, p eople usually turn to factorization ap proache s that use a set of latent factors to r epresent each entity and model how the latent factors interact with each other to genera te tensor elements. Classical ten sor factorization models include T ucker [24] and CANDECOMP/P ARAF A C (CP) [8] d ecompo sitions, which have been widely used in r eal-world application s. Howev e r , b ecause they all assume a multi- linear intera ction between the laten t factors, they are u nable to c apture more complex, nonlinear relationships. Recently , Xu et al. [25] pro posed Infinite Tucker deco mpo- sition (Inf T ucker), which generalizes the T ucker m odel to infinite f eature space using a T ensor-v a riate Gaussian process (T GP) an d thu s is powerful to model intricate non - linear interactions. Howe ver , InfT ucker an d its v ar iants [28 , 29] are computationally expensiv e, becau se the Kronecker prod uct between the covariances of all the modes re- quires the TGP to mod el the entir e tensor structure. In add ition, they may suffer from the extreme sparsity of real-world tensor data, i.e., when the prop ortion of the nonzero entries is extremely lo w . As is often th e case, most of the zero elemen ts in real tensors are meaningless: they simply indicate missing or unobserved entrie s. I ncorpo rating a ll of them in th e train ing pro cess m ay affect the facto rization quality a nd lead to biased prediction s. T o add ress th ese issues, in this p aper we p ropose a distrib uted, flexible nonlin- ear tensor factorization mod el, which h as se veral important advantages. First, it can capture highly non linear inter actions in the tensor , and is flexible enough to inco rpo- rate arbitra ry subset of (mean ingful) tensorial entries for the tra ining. This is achieved by placin g Gau ssian process pr iors over tensor en tries, wh ere th e inp ut is constructed by con catenating the laten t factors from each mode and the intricate rela tionships are captured by usin g the kernel function. By using such a constructio n, the covariance function is t hen free of the Kronecker-product stru cture, and as a result users can freely choose any s ubset of tensor elements for the training process and incorporate prior do- main kn owledge. For example, o ne can ch oose a co mbination of balan ced zero an d nonzer o elem ents to ov ercome the learning bias. Sec ond, the tight v ariational evidence lower bound (ELBO) we deriv ed using functio nal deri vati ves and con vex c onjugates subsumes optimal variational po steriors, thu s ev ades inefficient, seq uential E-M up- dates and enables highly ef ficien t, parallel computations a s well as improved in ference quality . Moreover, the new bo und allows u s to develop a distributed, gradien t-based op- timization alg orithm. Fin ally , we dev elop a simple yet very efficient pr ocedur e to av oid the data shuffling o peration , a major perform ance bottleneck in the (ke y-value) so rting proced ure in M A P R E D U C E . That is, r ather than sending out ke y-value pairs, each mapper si mply calculates an d send s a global gradient vector without keys. T his k ey- value-free procedu re is gener al and can effecti vely prev ent massi ve disk IOs and fu lly exploit the memory cache mechanism in f a st M A P R E D U C E systems, such as S P A R K . Evaluation using small real-world tensor data ha ve fully demonstrated the superior prediction accuracy of our model in comparison with In fT ucker and other state-o f- the-art. On large tensors with millions of non zero elements, our app roach is signifi- 2 cantly better than, or at lea st as goo d as two popular large-scale nonlinear factoriza- tion methods b ased on TGP: one uses hier archical m odeling to perf orm distributed infinite T ucker decomp osition [28]; the other f urther enhanc es InfT ucker by using Dirichlet proc ess mixture prio r over the latent factors and employs an online learn- ing scheme [29]. Ou r m ethod also outperforms Giga T en sor [11], a typical large-scale CP factorization algo rithm, by a large ma rgin. In addition , our meth od achieves faster training speed and enjoys alm ost lin ear scalability on the number o f computational nodes. W e app ly ou r mod el to CTR pr ediction fo r online advertising and achieves a significant, 20% improvemen t over th e popular logistic regression an d linear SVM approa ches. 2 Backgrou nd W e first introd uce the back groun d knowledge. For c on venience, we will use th e same notations in [ 25]. Specifically , we denote a K -m ode te nsor by M ∈ R d 1 × ... × d K , wh ere the k -th mode is o f dimen sion d k . The tensor entry at lo cation i ( i = ( i 1 , . . . , i K ) ) is denoted by m i . T o introduce Tucker deco mposition, we need to gene ralize matrix- matrix produ cts to tensor-matrix p roduc ts. Specifically , a tensor W ∈ R r 1 × ... × r K can multiply with a matr ix U ∈ R s × t at mode k when its dimension at mode - k is consistent with the nu mber of columns in U , i.e. , r k = t . The prod uct is a new tensor, with size r 1 × . . . × r k − 1 × s × r k +1 × . . . × r K . Each ele ment is calculated by ( W × k U ) i 1 ...i k − 1 j i k +1 ...i K = P r k i k =1 w i 1 ...i K u j i k . The T ucker d ecomposition model uses a latent factor matrix U k ∈ R d k × r k in each mod e k and a core ten sor W ∈ R r 1 × ... × r K and assumes the whole ten sor M is generated b y M = W × 1 U (1) × 2 . . . × K U ( K ) . Note that this is a multilinear function of W a nd { U 1 , . . . , U K } . It can be furth er simplified b y restricting r 1 = r 2 = . . . = r K and the o ff-diagonal elements of W to b e 0 . In this case, the T u cker model becomes CANDECOMP/P ARAF A C (CP). The infinite T u cker decompo sition (In fT uc ker) generalizes the T ucker model to infinite feature space via a tensor-variate Gaussian pro cess (TGP) [25]. Specifically , in a probabilistic framework, we assign a standard normal p rior over e ach elem ent of the co re ten sor W , and then marginalize ou t W to obtain the probability of th e tensor giv en the latent factors: p ( M| U (1) , . . . , U ( K ) ) = N (v ec( M ); 0 , Σ (1) ⊗ . . . ⊗ Σ ( K ) ) (1) where v ec( M ) is the vectorized whole tensor, Σ ( k ) = U ( k ) U ( k ) ⊤ and ⊗ is the Kron ecker- produ ct. Next, we a pply th e kern el trick to m odel no nlinear intera ctions between the latent factors: Each row u k t of the latent factors U ( k ) is replaced by a nonlin- ear feature transfor mation φ ( u k t ) and thu s an equi valent no nlinear covariance matrix Σ ( k ) = k ( U ( k ) , U ( k ) ) is used to replace U ( k ) U ( k ) ⊤ , whe re k ( · , · ) is the covariance function . After the no nlinear feature ma pping, the origin al T ucker deco mposition is perfor med in an (unkn own) infinite feature space. Further , since the covariance of vec ( M ) is a fu nction of the latent factors U = { U (1) , . . . , U ( K ) } , E quation (1) actu- ally defines a Gaussian process (GP) on tensors, namely tensor-variate GP (TGP) [25], 3 where the input are based on U . Finally , we can use different noisy models p ( Y |M ) to sample th e ob served tensor Y . For exam ple, we can use Ga ussian mo dels an d Pro bit models for continuo us and binary observations, respecti vely . 3 Model Despite being able to capture no nlinear in teractions, InfT ucker m ay s uffer fro m the e x - treme s parsity issue in real-world tensor data sets . The reason is that its full cov ariance is a Kronecker-produ ct between the covariances over all th e m odes— { Σ (1) , . . . , Σ ( K ) } (see Equ ation (1)). Each Σ ( k ) is of size d k × d k and th e full covariance is of size Q k d k × Q k d k . Thus T GP is projected onto the entire tensor wi th respect to the latent factors U , including all zero and nonzero elemen ts, rather th an a (meaning ful) sub set of them. Howe ver, the real-world tensor data a re usually extremely sparse, with a huge number of zero entries and a tiny portio n of n onzero e ntries. On one ha nd, because mo st zero entries are meaning less—they are either missing or unob served, using them can adversely affect the tensor factorizatio n q uality and lead to b iased p redictions; on the other hand, incorpora ting numero us z ero entries into GP models w ill result in large c o- variance matrices and high co mputation al co sts. Although Zhe et al. [ 2 8, 29] proposed to improve the scalability by modeling subtenso rs instead, the sampled sub tensors can still b e very sp arse. Even worse, becau se subtensors are typically restricted to a small dimension du e to the effi ciency con siderations, it is often p ossible to encoun ter one tha t does not con tain any nonzer o entry . This may furth er inc ur n umerical instabilities in model estimation. T o address these i ssues, we propo se a flexible Gaussian process tensor factoriz ation model. While in heriting the nonlin ear mo deling power , ou r model disposes of the Kronecker-produ ct structure in t he full co variance and can therefore select an arbitrary subset of tensor entries for training. Specifically , giv en a tensor M ∈ R d 1 × ... × d K , for each tensor entry m i ( i = ( i 1 , . . . , i K ) ), we c onstruct an input x i by con catenating the corr espondin g latent fac- tors fro m all the mod es: x i = [ u (1) i 1 , . . . , u ( K ) i K ] , where u ( k ) i k is the i k -th row in the latent factor matrix U ( k ) for mode k . W e assume that th ere is an underlying fun ction f : R P K j =1 d j → R such that m i = f ( x i ) = f ([ u (1) i 1 , . . . , u ( K ) i K ]) . This fu nction is unknown and can b e com plex and nonlin ear . T o lear n the functio n, we assign a Gaus- sian pr ocess pr ior over f : f or a ny set of tensor en tries S = { i 1 , . . . , i N } , the func tion values f S = { f ( x i 1 ) , . . . , f ( x i N ) } are distrib uted accordin g to a multivariate Gaussian distribution with mean 0 and covariance determine d by X S = { x i 1 , . . . , x i N } : p ( f S |U ) = N ( f S | 0 , k ( X S , X S )) where k ( · , · ) is a (nonlinear ) covariance f unction. Because k ( x i , x j ) = k ([ u (1) i 1 , . . . , u ( K ) i K ] , [ u (1) j 1 , . . . , u ( K ) j K ]) , there is no Kro necker- produ ct structur e c onstraint and so any subset of ten sor entries can be selected for training. T o pr ev ent the learnin g proc ess to be biased tow ard zero , we can use a set o f entries with balanced zer os and nonzero s. Furthermore, useful domain knowledge can also be i ncorp orated to select meaning ful entr ies for training. Note, ho wever , that if we 4 still use all the tensor e ntries and intensionally impose the Kr onecker-product structu re in th e fu ll covariance, our model is reduced to InfT ucker . T herefor e, from th e m odeling perspective, the pr oposed model is more general. W e fur ther assign a stand ard n ormal prior over th e late nt factors U . Gi ven the selected tensor entries m = [ m i 1 , . . . , m i N ] , the observed entries y = [ y i 1 , . . . , y i N ] are sam pled fro m a n oise m odel p ( y | m ) . In th is p aper, we deal with b oth c ontinuo us and binary observations. For co ntinuou s data, we use the Gaussian mod el, p ( y | m ) = N ( y | m , β − 1 I ) and the joint prob ability i s p ( y , m , U ) = Y K t =1 N (vec( U ( t ) ) | 0 , I ) N ( m | 0 , k ( X S , X S )) N ( y | m , β − 1 I ) (2) where S = [ i 1 , . . . , i N ] . For b inary da ta, we use the Probit m odel in the following manner . W e first intro duce augmented variables z = [ z 1 , . . . , z N ] an d then decompose the Pro bit mod el into p ( z j | m i j ) = N ( z j | m i j , 1) and p ( y i j | z j ) = 1 ( y i j = 0) 1 ( z j ≤ 0) + 1 ( y i j = 1) 1 ( z j > 0) where 1 ( · ) is the indicator functio n. Then the jo int p roba- bility is p ( y , z , m , U ) = Y K t =1 N (vec( U ( t ) ) | 0 , I ) N ( m | 0 , k ( X S , X S )) N ( z | m , I ) · Y j 1 ( y i j = 0) 1 ( z j ≤ 0) + 1 ( y i j = 1) 1 ( z j > 0) . (3) 4 Distrib uted V ariational Infer ence Real-world tensor d ata often compr ise a large numb er of e ntries, say , millions of no n- zeros an d billions of zeros. Even by on ly using nonzer o entries for train ing, exact inference of the pr oposed model may still be intractable. This mo tiv ates us to develop a distributed v ar iational inference algorithm, presented as follo w s. 4.1 T ractable V ariational Evidence Lower Boun d Since the GP cov arian ce term — k ( X S , X S ) (see Equations (2) and (3)) intertwines all the late nt factors, exact infer ence in parallel is dif ficult. T herefor e, we first der i ve a tractable variational evidence lower boun d (ELBO), following the sparse Gaussian process framew ork by T itsias [23]. The k ey idea i s to introduce a small set of inducing points B = { b 1 , . . . , b p } an d latent targets v = { v 1 , . . . , v p } ( p ≪ N ). Then we augmen t the orig inal model with a joint multi variate Gaussian distribution of the latent tensor entries m and targets v , p ( m , v |U , B ) = N ( m v ; 0 0 , K S S K S B K B S K B B ) where K S S = k ( X S , X S ) , K B B = k ( B , B ) , K S B = k ( X S , B ) and K B S = k ( B , X S ) . W e use Jensen’ s inequality and condition al Gaussian distrib utions to c on- struct the ELBO. Using a very similar d eriv ation to [23], we can obtain a tractable 5 ELBO for our model on continuou s data, log p ( y , U | B ) ≥ L 1 U , B , q ( v ) , where L 1 U , B , q ( v ) = log( p ( U )) + Z q ( v ) lo g p ( v | B ) q ( v ) d v + X j Z q ( v ) F v ( y i j , β )d v . (4) Here p ( v | B ) = N ( v | 0 , K B B ) , q ( v ) is the variational posterior for the latent targets v and F v ( · j , ∗ ) = R log N ( · j | m i j , ∗ ) N ( m i j | µ j , σ 2 j )d m i j , wh ere µ j = k ( x i j , B ) K − 1 B B v and σ 2 j = Σ ( j, j ) = k ( x i j , x i j ) − k ( x i j , B ) K − 1 B B k ( B , x i j ) . Note that L 1 is d ecom- posed into a summation of terms inv olving indi v idual tensor entries i j (1 ≤ j ≤ N ) . The additive form enables us to distrib ute the computation across multiple computer s. For binary data, we in troduce a variational posterio r q ( z ) an d make the m ean- field assumption that q ( z ) = Q j q ( z j ) . Following a similar d eriv ation to the con- tinuous case, we can obtain a tractable ELBO for b inary data, log p ( y , U | B ) ≥ L 2 U , B , q ( v ) , q ( z ) , where L 2 U , B , q ( v ) , q ( z ) = log( p ( U )) + Z q ( v ) lo g ( p ( v | B ) q ( v ) )d v + X j q ( z j ) log ( p ( y i j | z j ) q ( z j ) ) + X j Z q ( v ) Z q ( z j ) F v ( z j , 1)d z j d v . (5) One can simply use the standard Exp ectation-max imization (EM) framework to op - timize (4) and (5) for model in ference, i.e., th e E step u pdates the v ar iational posteriors { q ( v ) , q ( z ) } an d the M step up dates the latent factors U , the ind ucing points B and the kernel pa rameters. Howe ver , the sequential E-M updates can n ot fully e x ploit the paralleling computing resou rces. Due to the s trong dependenc ies b etween the E step and the M step, the sequential E-M updates may take a lar ge n umber of iterations to conv erge. Thing s become worse for binary case: in the E step, the updates of q ( v ) a nd q ( z ) are also depe ndent on each other , making a parallel inferen ce ev en less efficient. 4.2 Tight and Parallelizable V ariational Evidence Lower Bound In this section, we further deriv e tight(er) ELBOs that subsume the optimal variational posteriors for q ( v ) an d q ( z ) . Thereby we can av o id the seq uential E-M up dates to perfor m decoup led, highly efficient parallel inference. Moreover, the inference quality is very likely to b e improved using tigh ter bou nds. Due to th e space limit, we only present ke y ideas a nd results here. Detailed discussions a re gi ven in Section 1 of the supplemen tary material. Tight ELBO f or continuous tensors. W e take fu nctional d eriv ative of L 1 with respect to q ( v ) in (4). By setting the der iv ative to zer o, we obtain the o ptimal q ( v ) (which is a Gaussian distribution) and then substitute it in to L 1 , manip ulating the terms, we achieve th e following tig hter ELBO. 6 Theorem 4.1. F or continuou s data, w e have log p ( y , U | B ) ≥ L ∗ 1 ( U , B ) = 1 2 log | K B B | − 1 2 log | K B B + β A 1 | − 1 2 β a 2 − 1 2 β a 3 + β 2 tr( K − 1 B B A 1 ) − 1 2 K X k =1 k U ( k ) k 2 F + 1 2 β 2 a ⊤ 4 ( K B B + β A 1 ) − 1 a 4 + N 2 log( β 2 π ) , (6) wher e k · k F is F r obeniu s norm, and A 1 = X j k ( B , x i j ) k ( x i j , B ) , a 2 = X j y 2 i j , a 3 = X j k ( x i j , x i j ) , a 4 = X j k ( B , x i j ) y i j . Tight ELBO for binary tensors. The binary case is more difficult because q ( v ) an d q ( z ) a re co upled to gether (see (5)). W e use the following step s: we first fix q ( z ) and plug the o ptimal q ( v ) in the same way as th e con tinuous case. Th en we ob tain an intermediate E LBO ˆ L 2 that on ly con tains q ( z ) . Howev er , a quadratic ter m in ˆ L 2 , 1 2 ( K B S h z i ) ⊤ ( K B B + A 1 ) − 1 ( K B S h z i ) , inter twines all { q ( z j ) } j in ˆ L 2 , making it in- feasible to analytically derive o r parallelly compute the optima l { q ( z j ) } j . T o overcome this difficulty , we exploit the conv ex conju gate of the quadr atic ter m to introduce an e x- tra variational paramete r λ to decou ple t he depend ences between { q ( z j ) } j . After that, we are able to d eriv e the op timal { q ( z j ) } j using functional deri vati ves an d to obtain the following tight ELBO. Theorem 4.2. F or binary data, we have log p ( y , U | B ) ≥ L ∗ 2 ( U , B , λ ) = 1 2 log | K B B | − 1 2 log | K B B + A 1 | − 1 2 a 3 + X j log Φ((2 y i j − 1) λ ⊤ k ( B , x i j )) − 1 2 λ ⊤ K B B λ + 1 2 tr( K − 1 B B A 1 ) − 1 2 K X k =1 k U ( k ) k 2 F (7) wher e Φ( · ) is the cumulative distrib ution function of the standard Gaussian. As we can see, due to the additiv e fo rms of the terms in L ∗ 1 and L ∗ 2 , such as A 1 , a 2 , a 3 and a 4 , the compu tation of the tight ELBOs a nd their gradients ca n be efficiently perfor med in parallel. The deriv ation of the full gradient is given in Section 2 of the supplemen tary material. 4.3 Di strib uted Infer ence on Tight Bound 4.3.1 Distribu ted Gradient- based Optimization Giv en the tighter ELBOs in (6 ) and (7) , we develop a distributed algorithm to o ptimize the laten t factors U , the indu cing points B , the variational param eters λ (for binary 7 data) and the kernel par ameters. W e distribute the comp utations over multiple compu- tational nodes ( M A P step) and then collect the r esults to calculate the EL BO and its gradient ( R E D U C E step). A standard ro utine, such as gradient descent and L-BFGS, is then used to solve the optimization problem. For binar y data, we f urther find th at λ can b e up dated with a simple fixed p oint iteration: λ ( t +1) = ( K B B + A 1 ) − 1 ( A 1 λ ( t ) + a 5 ) (8) where a 5 = P j k ( B , x i j )(2 y i j − 1) N k ( B , x i j ) ⊤ λ ( t ) | 0 , 1 Φ (2 y i j − 1) k ( B , x i j ) ⊤ λ ( t ) . Apparen tly , the updating c an be efficiently perform ed in parallel (d ue to the additive structure o f A 1 and a 5 ). Moreover , th e conver gence is guaran teed by the f ollowing lemma. The proof is gi ven in Section 3 of the supplementa ry material. Lemma 4.3 . Given U and B , w e have L ∗ 2 ( U , B , λ t +1 ) ≥ L ∗ 2 ( U , B , λ t ) and th e fixed point iteration (8) always con verges. In our experience, the fixed-point iterations are muc h more ef ficient than general search s trategies (such as line-search ) to identity an appropriate step length a long the gradient direction. T o use it, before we calcu late the gra dients with respect to U and B , we first o ptimize λ using the fixed point itera tion (in an inner loop) . In the o uter control, we then employ gra dient d escent or L -BFGS to optimize U and B . This will lead to an even tighter boun d for our model: L ∗∗ 2 ( U , B ) = max λ L ∗ 2 ( U , B , λ ) = max q ( v ) ,q ( z ) L 2 ( U , B , q ( v ) , q ( z )) . Empirica lly , this con verges must faster than feed- ing the optimization algorithms with ∂ λ , ∂ U an d ∂ B altog ether . 4.3.2 Key-V a lue-Free M A P R E D U C E In th is section we present th e detailed d esign o f M A P R E D U C E pr ocedur es to fulfill our distributed inf erence. Basically , we first allocate a set o f tensor entries S t on each M A P P E R t such that the correspondin g comp onents of the ELBO and t he gradients are calculated. Then th e R E D U C E R aggr egates local results fro m eac h M A P P E R to ob tain the integrated, global ELBO and gradient. W e first conside r the standard (ke y -value) de sign. For brevity , we take the gradient computatio n for the latent f actors as an example. For e ach t ensor entry i on a M A P P E R , we calculate the corresp onding gradients { ∂ u (1) i 1 , . . . ∂ u ( K ) i K } and then send o ut the key- value pairs { ( k, i k ) → ∂ u ( k ) i k } k , where the ke y indicates the mode and the index of the latent factors. T he R E D U C E R aggregates gradients w ith the same key to re cover th e full gradient with respect to each latent factor . Although the (k ey-value) M A P R E D U C E h as been successfully applied in numer ous applications, it relies on an expensi ve data s huffling o peration: the R E D U C E step has to sort the M A P P E R S ’ outpu t by the keys before aggregation. Sin ce the sortin g is usually perfor med o n disk due to significant d ata size, intensi ve disk I/Os a nd network com mu- nications will be come seriou s c omputatio nal overheads. T o ov ercome th is deficien cy , we devise a key-value-free M A P - R E D U C E scheme to a void o n-disk data shuffling op- erations. Sp ecifically , o n each M A P P E R , a co mplete gradien t vector is ma intained fo r 8 all the para meters, in cluding U , B and the kernel par ameters. Howe ver , o nly relev an t compon ents of the gradient, as specified b y the tensor e ntries allocated to this M A P - P E R , will be updated. After updates, each M A P P E R will then send ou t the full gradien t vector , an d the R E D U C E R will simply sum them up together to obtain a global gradient vector without having to per form any extra data sorting. Note that a similar pr ocedure can also be used to perfor m the fixed point iteration for λ (in binar y tensors). Efficient M A P R E D U C E systems, such as S P A R K [2 7], can fully optimize the non- shuffling M A P and R E D U C E , where mo st of th e data are buf fered in mem ory and d isk I/Os are circumvented to the u tmost; b y contra st, th e perfo rmance with data shu ffling degrades severely [6]. This is verified in our evaluations: on a sm all tensor of size 100 × 1 0 0 × 100 , our key-value-free M A P R E D U C E gains 30 times speed acceleration over th e traditional k ey-value pr ocess. Theref ore, our algor ithm c an fully exploit the memory -cache mechanism to achieve fast infe rence. 4.4 Al gorithm Complexity Suppose we use N tensor entries f or trainin g, with p ind ucing points an d T M A P P E R , the time complexity for each M A P P E R node is O ( 1 T p 2 N ) . Since p ≪ N is a fixed constant ( p = 100 in our exper iments), the time complexity is linear in the nu mber of tensor entries. The space complexity for each M A P P E R no de is O ( P K j =1 m j r j + p 2 + N T K ) , in order to store the latent factors, their gradients, the cov arian ce m atrix on inducing points, and the indices of the latent factors for each tensor e ntry . Ag ain, th e space complexity is linear in the number of tenso r entries. In comparison , In fT ucker utilizes the Kro necker-product p roperties to calculate the gr adients and has to pe rform eigenv alue decomp osition of the covariance matrices in each tensor mode. T herefor it has a high er tim e and space com plexity ( see [25] for details) and is not scalab le to large dimensions. 5 Related work Classical tenso r factoriza tion mod els includ e T uc ker [24] and CP [8], b ased o n wh ich many excellen t work s have been proposed [19, 5, 22, 1, 9, 26, 18, 21, 10, 17]. T o deal with big data, se veral distributed factorization algor ithms have been recently de- veloped, such as GigaT e nsor [11] and DFacT o [4]. Despite th e widespread success of these method s, their und erlying multilinear factor ization structure may limit their capability to cap ture m ore com plex, n onlinear relationship in r eal-world a pplications. Infinite T ucker decomp osition [25], and its distributed or o nline extensions [28, 29] address this iss ue by modelin g tensors or subtensors via a tensor - variate Gau ssian pro- cess (TGP). Howe ver , these meth ods ma y suffer fro m the extreme spar sity in real-world tensor data, because the Kronecker-product structur e in the cov ariance o f TGP requir es modeling the e ntire ten sor sp ace no matter the eleme nts are meanin gful (non -zeros) or not. By co ntrast, ou r flexible GP factorization model eliminates the Kro necker- produ ct restrictio n and can model an arbitrary subset of tensor entries. In theory , all such n onlinear f actorization models belon g to the random func tion prio r mo dels [1 4] for exchangeable multidimensional arrays. 9 Our distributed variational inference algorithm is based on sparse GP [1 6], an effi- cient ap proxim ation framew ork to scale up GP mod els. Sp arse GP u ses a small set of inducing points to br eak the d ependen cy b etween random f unction v alues. Recen tly , T itsias [ 23] p roposed a variational lear ning f ramework for sparse GP , based on which Gal et al. [7] derived a tight variational lower bound for distributed inferen ce of GP regression and GPL VM [13]. The deriv ation of the tight ELBO in ou r mo del for con - tinuous tensors is similar to [ 7]. Howe ver, th e gradien t calculation is substan tially dif- ferent, because the inp ut to o ur GP factoriza tion m odel is the conc atenation of the latent factors. Ma ny tensor entr ies may partly share th e same latent factor s, causing a large amount of key-value pair to be sent du ring the distrib uted gradient calculation. This will incur an expen si ve data shu ffling proce dure tha t ta kes place on disk. T o imp rove the computational effi ciency , we de velop a non-key-value M A P - R E D U C E to a void data shuffling and fully exploit the memory-c ache m echanism in efficient M A P R E D U C E systems. This strategy is also applicable to other M A P - R E D U C E based learning a l- gorithms. In addition to con tinuous data, we also develop a tigh t ELBO for binary data on optimal variational p osteriors. By introducing p extra v ar iational parameters with con vex co njugates ( p is th e number of inducing points), our inferen ce can be per - formed efficiently in a d istributed ma nner, which a voids explicit o ptimization on a l arge number o f variational posteriors fo r the latent tensor entries and ind ucing targets. Our method can also be useful for GP classification problem . 6 Experiments 6.1 Evaluation on Small T ensor Data For e valuation, we first compar ed our method with various existing tensor factoriza- tion me thods. T o this end, we used fou r small real datasets where all method s are computatio nally f easible: ( 1) Alog , a r eal-valued tensor of size 200 × 100 × 20 0 , representin g a three-way in teraction (user , actio n, resou rce) in a file access log. I t contains 0 . 33% nonzero en tries.(2) AdClick , a real-v alued tensor o f size 80 × 100 × 100 , describ ing ( user , pub lisher , advertisement) clicks for online advertising. It con- tains 2 . 39 % nonze ro entries. (3) Enr on , a b inary tensor extracted fro m the Enr on email d ataset ( www.cs.cmu. edu/ ˜ ./enron/ ) d epicting the three-way relation- ship ( sender, re ceiv er , time). It contains 203 × 203 × 200 elements, of which 0 . 01% are no nzero. (4) NellSma ll , a binar y ten sor extracted fr om the NELL knowledge base ( rtw.ml .cmu.edu/rtw /resources ), of size 2 95 × 17 0 × 94 . It depicts the knowledge pr edicates (entity , relationship, entity). The data set c ontains 0 . 05 % nonzer o elements. W e c ompared with CP , no nnegativ e CP ( NN-CP) [19], hig h or der SVD (HOSVD) [12], T ucker, infinite T u cker (InfT ucker) Xu et al. [25] and its extension (Inf T uckerEx ) which uses the Dirichlet process mixture (DPM) pr ior to model latent clusters and local TGP to p erform scalable, online factorization [29]. Note th at I nfT u cker a nd InfT u ckerEx are nonlinear factorization approache s. For testing, we used the same setting as in [29]. All the meth ods were evaluated via a 5-fold cross v alida tion. The nonzero entries were randomly split into 5 fo lds: 4 folds 10 were u sed f or tr aining and the remain ing non -zero entries an d 0 . 1% z ero entries were used for testing so that the number of non- zero entries is co mparab le to the numb er of z ero entrie s. By doing this, zero and n onzero entries are tr eated e qually imp ortant in testing , and so the evaluation will not be dominated by large po rtion of zeros. For InfT ucker and InfT uckerEx, we carried out extra cross-validations to select th e kernel form ( e.g., RBF , ARD a nd Matern kernels) and the kernel parameter s. For I nfT u ck- erEx, we rando mly sam pled su btensors and tuned the learning rate following [2 9]. For our model, the numbe r of ind ucing poin ts was set to 1 00 , and we used a balanced training set generated as follows: in additio n to nonzero entries, we randomly sampled the same number o f zero e ntries and made sure that they would n ot ov erlap with th e testing zero elements. Our model used ARD kernel a nd the kernel pa rameters were estimated jointly with the latent factors. Thus, th e expe nsiv e parameter selectio n procedure was not needed. W e implem ented our d istributed inference algorithm with two optimization frameworks, gradie nt de scent and L-BFGS ( denoted by Ou rs-GD and Our s-LBFGS respectively). For a co mprehe nsiv e ev aluation, we also examined CP on b alanced training e ntries gener ated in the same way as our mo del, d enoted by CP-2. The mean squared error (MSE) is used to ev aluate predictiv e performance on Alog and Click and area-un der-curve (A UC) on En r on and Nell . The averaged results from the 5 -fold cross validation are repor ted. Our mod el achieves a higher pr ediction accuracy than Inf T ucker, and a b etter or compara ble accuracy than InfT uckerEx (see Figure 1). A t -test sho ws th at ou r m odel outperf orms InfT ucker significan tly ( p < 0 . 05 ) in alm ost all situation s. Althou gh In f- T uckerEx uses th e DPM prior to improve factorization, our model still ob tains signifi- cantly be tter p redictions on Alog an d AdClick and co mparab le o r b etter per forman ce on Enr on and NellSma ll . This mig ht be attributed to the flexibility of our mo del in using balanced trainin g entries to prevent the learning bia s (toward n umerou s zero s). Simi- lar improvements can be o bserved f rom CP to CP-2. Finally , our model o utperfo rms all the r emaining method s, demonstrating the advantage of our nonlin ear factorization approa ch. 6.2 Scalabil ity Analysis T o examine the scalability o f the proposed distributed inference algorithm, we used the following lar ge real-world datasets: (1 ) A CC, A real-valued tensor describ ing three- way i nteraction s (user , action, resource) in a code repository manageme nt system [29]. The tensor is of size 3 K × 150 × 30 K , wher e 0 . 009% are nonzero. (2) DBLP: a binary tensor depicting a th ree-way b ibliograp hy relationship (author, confer ence, ke yword) [29]. Th e ten sor was extracte d from DBLP data base an d contain s 10 K × 200 × 10 K elements, where 0 . 001% are nonzero entrie s. (3 ) NELL: a binary tensor representing the knowledge p redicates, in th e fo rm o f (entity , entity , relationship) [28]. The tensor size is 20 K × 1 2 . 3 K × 28 0 and 0 . 0 001% are nonzer o. The scalability of ou r distributed inferenc e algorithm w as examined with regard to the number of machines on A CC dataset. T he number of latent factors w as set to 3. W e ran our algorith m using the gradient descent. Th e results are sho w n in Figure 2(a). The Y -ax is sh ows the recip rocal of the r unning time mu ltiplied b y a con stant—which 11 CP NNCP HOSVD Tucker InfTucker InfTuckerEx CP-2 Ours-GD Ours-LBFGS Number of Factors 3 5 8 10 Mean Squared Error (MSE) 0.65 1.5 2 2.5 3 (a) Alog Number of Factors 3 5 8 10 Mean Squared Error (MSE) 0.3 0.8 1.2 1.9 (b) AdClick Number of Factors 3 5 8 10 AUC 0.7 0.8 0.9 1 (c) Enr on Number of Factors 3 5 8 10 AUC 0.7 0.8 0.9 1 (d) NellSmall Figure 1 : The prediction results o n sma ll datasets. The results are a veraged over 5 runs. Number of Machines 5 10 15 20 1 / RunningTime X Const 1 3 5 (a) Scalabil ity Mean Squared Error (MSE) 0.1 0.5 0.7 0.9 GigaTensor DinTucker InfTuckerEx Ours-GD Ours-LBFGS (b) A CC AUC 0.82 0.9 0.95 (c) DBLP AUC 0.82 0.9 0.95 1 (d) NELL Figure 2: Pred iction a ccuracy ( av eraged on 50 test datasets) on large ten sor d ata and the scalability . correspo nds to the run ning speed. As we can see, th e speed of our algo rithm scales up linearly to the number of machines. 6.3 Evaluation on Large T ensor Data W e then co mpared ou r appro ach with three state-of-th e-art large-scale tensor factor- ization method s: Giga T e nsor [ 11], Distributed infinite T ucker decomp osition ( Din- T ucker) [28], and In fT uckerE x [29]. Both GigaT ensor an d DinT ucker are developed on H A D O O P , while InfT uckerEx u ses o nline infer ence. Ou r mo del was im plemented on S P A R K . W e ran Giga tensor, DinT ucker and our approach on a large Y ARN cluster and InfT uc kerEx on a single computer . W e set the numb er of latent factors to 3 for A CC an d DBLP data set, and 5 for NELL data s et. Follo win g t he settings in [29, 28], we ra ndomly chose 80% of nonzer o entries for training, and then sampled 50 test data sets from the rema ining e ntries. For ACC and DBLP , each test data set c omprises 200 nonzero elem ents and 1 , 800 zero eleme nts; for NELL, ea ch test data set co ntains 200 n onzero elem ents and 2 , 000 zero elements. The r unning of GigaT ensor was b ased on the default settin gs of the software pac kage. For DinT uc ker and InfTuckerEx, we rand omly sampled su btensors for distributed or online infe rence. The parameters, inclu ding the number an d size of the subtensors and the learning rate, were selected in the same way as [29 ]. The k ernel form and par ameters were chosen by a cross-validation on the tr aining tensor . For our mo del, we used th e same setting as in the small d ata. W e set 50 M A P P E R S for GigaT ensor , DinT uc ker and our mode l. Figure 2(b)-(d) sh ows the predictive perfo rmance o f all the method s. W e observe that our approach consistently outperfo rms GigaT ensor and DinT u cker on all the three datasets; o ur ap proach outper forms In fT uckerE x on A CC and DBLP and is sligh tly worse than InfT uckerEx on NELL. Note again that InfT uckerEx uses DPM p rior to en- 12 hance the factorizatio n while our model doesn ’t; finally , all the no nlinear factorization methods o utperfo rm GigaT en sor , a distributed CP factorization algo rithm by a large margin, con firming the adv antages of nonlinear facto rizations on lar ge data. In terms of speed, ou r algor ithm is m uch faster th an GigaT ensor a nd D inT ucker . For example, on DB LP dataset, the average per-iteration running time were 1.45 , 15.4 and 20.5 min- utes fo r o ur mod el, GigaT ensor and DinT ucker, resp ectiv ely . This is not su rprising, because (1 ) our model u ses th e data sparsity and can exclud e numer ous, mean ingless zero elements from training; (2) our algor ithm is b ased on S P A R K , a more efficient M A P R E D U C E system than H A D O O P ; (3) our algor ithm gets rid of data shuffling and can fully exploit the memory-cach e m echanism of S P A R K . 6.4 Application on Click-Thr ough-Rate Pred iction In this section, we rep ort the results of ap plying our no nlinear tensor factorization approa ch on Click-Thro ugh- Rate (CTR) predic tion for online advertising. W e used the online ads click log from a major Internet company , from which we extracted a four mode ten sor ( user , advertisement , publishe r , page-section ) . W e used the first three day s’ s log on May 2 015, trained our model o n o ne d ay’ s data and used it to p redict the click behaviour on the next day . T he sizes o f the extracted tensor s for the th ree days are 1 79 K × 81 K × 35 × 3 55 , 16 7 K × 78 K × 35 × 354 and 213 K × 82 K × 37 × 354 respectively . The se tensors are very sparse ( 2 . 7 × 10 − 8 % non zeros on av erage). In oth er word s, the o bserved clicks ar e very rare. Howe ver , we do not want our prediction c ompletely bias tow ar d z ero (i.e., non-click); oth erwise, a ds ranking and recommen dation will be infeasible . Thus we samp led n on-clicks of the same quantity as the click s for training and testing. Note that training CTR p rediction models with compara ble click s and non-c lick samp les is common in online advertising system s [2]. The number of training and testin g entries used for th e three days are (109 K, 99 K ) , (91 K , 103 K ) and (109 K , 1 36 K ) r espectively . W e comp ared with popular me thods for CTR predictio n, including logistic regres- sion and linear SVM, where each tensor entry is represented by a set of binary features accordin g to the indices of each mode in the entry . The results are rep orted in T able 1, in term s of A UC. It sh ows that o ur model improves logistic regression and linear SVM by a large margin, on a verage 20 . 7% and 20 . 8% respectively . Therefore, althou gh we ha ve not in corpor ated side fea tures, such as user profiles and advertisement attributes, our tentativ e e xperimen ts have shown a promising potential of our model on CTR prediction task. T able 1: CTR predic tion ac curacy o n the first three days of M ay 2015. ” 1-2” means using May 1st’ s da ta for training and May 2nd ’ s data for testing; similar ar e ”2-3” and ”3-4”. Method 1-2 2-3 3-4 Logistic regression 0.7360 0.7337 0.7538 Linear SVM 0.7414 0.7332 0.7540 Our model 0.8925 0.8903 0.9054 13 7 Conclusion In this p aper, we have pr oposed a new nonlinea r and flexible tensor factorization model. By disposing of the K ronecker-produ ct covariance stru cture, the model can pr operly exploit the data sparsity and is flexible to incorp orate any subset of meaning ful tensor entries for training. Moreover , we ha ve der iv ed a tight ELBO for both continuous and binar y problems, b ased on which we f urther developed an efficient d istributed variational inference algorithm in M A P R E D U C E f ramework. In the future, we will consider a pplying asynchr onous infere nce o n th e tight ELBO, su ch as [20], to further improve the scalability of our model. Refer ences [1] Acar , E., Dunlavy , D. M., K o lda, T . G., & M o r up, M. (20 11). Scalab le tensor fac- torizations for incomple te data. Chemometrics an d Intelligent Laboratory Systems , 106 (1) , 4 1–56. [2] Agarwal, D., Long, B., T raupman , J., Xin, D., & Zhang , L. (201 4). Laser: A scalable r esponse predictio n platfor m for onlin e ad vertising. I n P r oceeding s of th e 7th ACM interna tional co nfer en ce on W eb sear ch a nd data mining , (pp. 17 3–182 ). A CM. [3] Bishop, C. M. (2006 ). P a ttern r ecognition and machine learning . springer . [4] Choi, J. H. & V ishwanathan, S. (20 14). Dfacto: Distributed factoriza tion of ten- sors. In Advan ces in N eural Information Pr ocessing Systems , (pp. 1296– 1304) . [5] Chu, W . & Ghahram ani, Z. ( 2009) . Probab ilistic mo dels for inco mplete multi- dimensiona l arrays. AIST A TS . [6] Davidson, A. & Or, A. ( 2013) . Optimizing sh uffle performan ce in spark. U niver- sity of California, Berkeley-Department o f E lectrical Eng ineering and Computer Sciences, T ech. Rep . [7] Gal, Y ., van der W ilk, M., & Rasmussen, C. ( 2014) . Distributed variational infer- ence in spa rse gaussian proc ess regression and latent variable mode ls. In Advan ces in Neural Information Pr ocessing Systems , ( pp. 3257–3 265). [8] Harshman, R. A. (19 70). Foundations of the P A RAF AC proced ure: Mode l and condition s f or an”explanatory”multi- mode factor analysis. UCLA W o rking P apers in Phonetics , 16 , 1–84. [9] Hoff, P . (2 011). Hierar chical mu ltilinear mod els for multiway data. Compu tational Statistics & Data Analysis , 55 , 530–5 43. [10] Hu, C., Rai, P ., & Car in, L. (2 015). Zero -truncated p oisson tensor factorization for massi ve binar y tensors. I n U AI . 14 [11] Kang, U., Papalexakis, E., Har pale, A., & Faloutsos, C. (201 2). Gigatensor : scaling tensor analysis up by 100 times-algorithms and discoveries. In Pr oceed ings of the 18th ACM SI GKDD internation al conference on Knowledge discovery an d data mining , (pp. 316–32 4). A CM. [12] Lathauw er , L. D., Moor , B. D., & V a ndewalle, J. ( 2000) . A m ultilinear sing ular value decomp osition. SI AM J . Matrix Anal. Ap pl , 21 , 1253–1 278. [13] Lawrence, N. D. (20 04). Gaussian pro cess latent variable mo dels for visualisation of high dimension al data. Advances in n eural in formation pr ocessing systems , 16 (3), 329–3 36. [14] Lloyd, J. R., Orbanz, P ., Ghahramani, Z. , & Roy , D. M. (201 2). Rand om function priors for exchang eable a rrays with ap plications to gra phs and relational data. In Advance s in Neural Information Pr ocessing Systems 24 , (pp. 1007 –101 5). [15] Minka, T . P . (2000). Old a nd new matrix alge bra useful for statistics. See w ww . stat. cmu. edu/minka/p apers/matrix. html . [16] Qui ˜ nonero -Candela, J. & Rasmu ssen, C. E. (2005 ). A unifying view of sparse ap - proxim ate gaussian process regression. The J ourn al of Machine Learning Resear ch , 6 , 1939– 1959 . [17] Rai, P ., Hu, C., Harding , M., & Car in, L. (2015). Scalable probabilistic tensor factorization for binary and count data. [18] Rai, P ., W ang , Y ., Guo, S., Chen, G., Dunson, D., & C arin, L. (201 4). Scalable Bayesian low-rank decomp osition of incomp lete multiway tensors. I n Pr oceeding s of the 31th International Confer ence on Machine Learning (ICML) . [19] Shashua, A. & Hazan , T . (2005). No n-negative tensor factorization with appli- cations to statistics an d co mputer vision . In Pr oceeding s o f the 2 2th International Confer e nce on Machine Learning (ICML) , (pp. 792–7 99). [20] Smyth, P ., W elling, M., & Asun cion, A. U. (2 009). Asyn chrono us distrib uted learning of topic models. In Advances in Neural I nformation Pr o cessing S ystems , (pp. 81–88 ). [21] Sun, W ., Lu, J., Liu , H., & Cheng, G. (2 015). Provable sparse tensor decom posi- tion. arXiv pr eprint arXiv:1502. 01425 . [22] Sutskev er , I., T enenbaum , J. B., & Salakh utdinov , R. R. (2009). Modelling re- lational data using bayesian clustered tensor factorization. In Advanc es in neural information pr ocessing systems , (pp. 1821– 1828 ). [23] T itsias, M . K. ( 2009) . V ariational learn ing of in ducing variables in sparse gau s- sian processes. I n Internationa l Con fer enc e on Artificial Intelligence a nd Statistics , (pp. 567–5 74). [24] T uc ker , L. (1966). Some mathe matical notes on three-mod e factor analysis. Psy- chometrika , 31 , 279–311. 15 [25] Xu, Z., Y an, F ., & Qi, Y . (2 012). In finite Tucker d ecompo sition: Nonp arametric Bayesian models for multiway data analysis. In Pr oceeding s o f the 29th Interna - tional Confer en ce on Machine Learning (ICML) . [26] Y ang, Y . & Dunson, D. ( 2013) . Bay esian co nditional ten sor factorizations f or high-d imensional classification. Journal of the Ro yal Statistical S ociety B, re vision submitted . [27] Zaharia, M., Chowdhury , M., Das, T . , Dave, A., Ma, J., McCau ley , M., Franklin, M. J., Shenker , S., & Stoica, I. (2012) . Resilient distributed datasets: A fault-to lerant abstraction for in- memory cluster com puting. In Pr oceed ings of the 9th USENIX confer ence on Networked Systems Design and Imp lementation , (pp. 2–2). USENIX Association. [28] Zhe, S., Qi, Y ., Park, Y ., Molloy , I., & Chari, S. (201 3). Dintucker: Scaling up gaussian pr ocess models o n multidimension al arrays with billions of elem ents. arXiv pr ep rint arXiv:1311 .2663 . [29] Zhe, S., Xu, Z., Chu , X., Qi, Y . , & Park, Y . (201 5). Scalab le non parametric multiway data analysis. In Pr oc eedings of the Eigh teenth Internatio nal C onfer ence on Artificial Intelligence and Statistics , (pp. 1125–11 34). Suppl ementary Ma terial In this extra material, we provide the details abou t the deriv ation o f the tight variational evidence lower bou nd of our pr oposed GP factor ization mo del (Section 1) as well as its gradient calculation (Section 2). M oreover , we gi ve the con vergence pro of of the fi xed point iteratio n u sed in our distributed inference algorithm fo r bin ary tensor (Section 3). 1 Tigh t V ariational Evidence Lower Bound The nai ve v ariation al e vid ence lo wer bou nd (ELBO) deri ved from the sparse Gaussian process framew ork (see Section 4.1 of the main paper) is gi ven by L 1 ( U , B , q ( v )) = log( p ( U )) + Z q ( v ) lo g p ( v | B ) q ( v ) d v + X j Z q ( v ) F v ( y i j , β )d v (9) for continuou s tensor and L 2 ( U , B , q ( v ) , q ( z )) = lo g( p ( U )) + Z q ( v ) lo g ( p ( v | B ) q ( v ) )d v + X j q ( z j ) log( p ( y i j | z j ) q ( z j ) ) + X j Z q ( v ) Z q ( z j ) F v ( z j , 1)d z j d v (10) 16 for binar y tensor, wh ere F v ( · j , ∗ ) = R log N ( · j | m i j , ∗ ) N ( m i j | µ j , σ 2 j )d m i j and p ( v | B ) = N ( v | 0 , K B B ) . Our goal is to f urther o btain a tight ELBO that subsumes the optima l variational posterior (i.e. , q ( v ) and q ( z ) ) so as to prevent the sequential E-M proced ure for efficient parallel training and to improve the infer ence quality . 1.1 Co ntinuous T ensor First, let us consider the co ntinuou s data. Given U an d B , we use functional d eriv a- ti ves [3] to ca lculate the optimal q ( v ) . The f unctiona l deriv ative of L 1 with re spect to q ( v ) is given b y δ L 1 ( q ) δ q ( v ) = log p ( v | B ) q ( v ) − 1 + X j F v ( y i j , β ) . Because q ( v ) is a pro bability density function, we use Lagrang e multipliers to impose the constraint and obtain the optimal q ( v ) by solving δ L 1 ( q ) + λ ( R q ( v )d v − 1) δ q ( v ) = 0 , ∂ L 1 ( q ) + λ ( R q ( v )d v − 1) ∂ λ = 0 . Thoug h simple alge braic manipu lations, we can obtain th e op timal q ( v ) to be the fol- lowing form q ∗ ( v ) = N ( v | µ , Λ ) , where µ = β K B B ( K B B + β K B S K S B ) − 1 K B S y , Λ = K B B ( K B B + β K B S K S B ) − 1 K B B . Now substituting q ( v ) in L 1 with N ( v | µ , Λ ) , we o btain the tight ELBO pr esented in Theorem 4.1 of the main paper : log p ( y , U | B ) ≥ L ∗ 1 ( U , B ) = 1 2 log | K B B | − 1 2 log | K B B + β A 1 | − 1 2 β a 2 − 1 2 β a 3 + β 2 tr( K − 1 B B A 1 ) − 1 2 K X k =1 k U ( k ) k 2 F + 1 2 β 2 a ⊤ 4 ( K B B + β A 1 ) − 1 a 4 + N 2 log( β 2 π ) , (11) where k · k F is Frobenius norm, and A 1 = X j k ( B , x i j ) k ( x i j , B ) , a 2 = X j y 2 i j , a 3 = X j k ( x i j , x i j ) , a 4 = X j k ( B , x i j ) y i j . 17 1.2 Binary T ensor Next, let us look at th e b inary data. The case f or b inary tensor s is more complex, because we ha ve the additional variational posterior q ( z ) = Q j q ( z j ) . Fu rthermo re, q ( v ) an d q ( z ) are coupled in the o riginal EL BO (see (10)). T o eliminate q ( v ) and q ( z ) , we use the following steps. W e first fix q ( z ) , calculate the o ptimal q ( v ) and plug it into L 2 (this is similar to the continu ous case) to obtain an intermed iate bound, ˆ L 2 ( q ( z ) , U , B ) = max q ( v ) L 2 ( q ( v ) , q ( z ) , U , B ) = 1 2 log | K B B | − 1 2 log | K B B + A 1 | − 1 2 X j h z 2 j i − 1 2 a 3 + 1 2 tr( K − 1 B B A 1 ) − N 2 log(2 π ) + 1 2 ( K B S h z i ) ⊤ ( K B B + A 1 ) − 1 )( K B S h z i ) + X j Z q ( z j ) log( p ( y i j | z j ) q ( z j ) )d z j − 1 2 X K k =1 k U ( k ) k 2 F (12) where h·i denotes the expectatio n und er th e variational po steriors. Note that ˆ L 2 has a similar form to L ∗ 1 in (11). Now we consider to calculate the optimal q ( z ) fo r ˆ L 2 . T o this end, we calculate the function al deriv ative o f ˆ L 2 with respect to each q ( z j ) : δ ˆ L 2 δ q ( z j ) = log p ( y i j | z j ) q ( z j ) − 1 − 1 2 z 2 j + c j j h z j i z j + X t 6 = j c tj h z t i z j . where c tj = k ( x i t , B )( K B B + A 1 ) − 1 k ( B , x i j ) and p ( y i j | z j ) = 1 (2 y i j − 1 ) z j ≥ 0 . Solving δ ˆ L 2 δq ( z j ) being 0 with Lag range multipliers, we find that th e optimal q ( z j ) is a truncated Gaussian, q ∗ ( z j ) ∝ N ( z j | c j j h z j i + X t 6 = j c tj h z t i , 1) 1 (2 y i j − 1) z j ≥ 0 . This expression is unfortunately no t ana lytical. Even if we can e xplicitly upd ate each q ( z j ) , the up dating will depend o n all the other variational posterio rs { q ( z t ) } t 6 = j , mak- ing distributed calcula tion very difficult. This arises from the quad ratic term 1 2 ( K B S h z i ) ⊤ ( K B B + A 1 ) − 1 ( K B S h z i ) in (12), which couples all {h z j i} j . T o resolve this is sue, we introdu ce an extra variational parameter λ to decouple t he depend encies between {h z j i} j using the following lemma. Lemma 1.1. F o r any symmetric positive definite matrix E , η ⊤ E − 1 η ≥ 2 λ ⊤ η − λ ⊤ E λ . (13) The equality is achieved when λ = E − 1 η . Pr oof. Define the functio n f ( η ) = η ⊤ E − 1 η and it is easy to see that f ( η ) is co n vex because E − 1 ≻ 0 . Then using th e con vex conjug ate, we have f ( η ) ≥ λ ⊤ η − g ( λ ) and g ( λ ) ≥ η ⊤ λ − f ( η ) . Then by m aximizing η ⊤ λ − f ( η ) , we c an obtain g ( λ ) = 18 1 4 λ ⊤ E λ . Thus, f ( η ) ≥ λ ⊤ η − 1 4 λ ⊤ E λ . Si nce λ is a free parame ter , we can use 2 λ to replace λ and ob tain the inequ ality (13). Further, we can verif y that when λ = E − 1 η the equality is achiev ed. W e now apply the inequality on the term 1 2 ( K B S h z i ) ⊤ ( K B B + A 1 ) − 1 K B S h z i in (12). Note that the quadratic term regarding all { z j } now vanishes, a nd instead a linear term λ ⊤ K B S h z i is introduc ed so that these annoying depend encies between { z j } j are eliminated. W e therefor e obtain a more friend ly intermed iate ELBO, ˜ L 2 ( U , B , q ( z ) , λ ) = 1 2 log | K B B | − 1 2 log | K B B + A 1 | − 1 2 X j h z 2 j i − 1 2 a 3 + 1 2 tr( K − 1 B B A 1 ) − N 2 log(2 π ) + X j λ ⊤ k ( B , x i j ) h z j i − 1 2 λ ⊤ ( K B B + A 1 ) λ + X j Z q ( z j ) log( p ( y i j | z j ) q ( z j ) )d z j − 1 2 K X k =1 k U ( k ) k 2 F . (14) The function al deri vative with respect to q ( z j ) is then given by δ ˜ L 2 δ q ( z j ) = log p ( y i j | z j ) q ( z j ) − 1 − 1 2 z 2 j + λ ⊤ k ( B , x i j ) z j . Now solving δ ˜ L 2 δq ( z j ) = 0 , we see that the optimal v aria tional posterior has an analytical form: q ∗ ( z j ) ∝ N ( z j | λ ⊤ k ( B , x i j ) , 1) 1 (2 y i j − 1) z j ≥ 0 . Plugging each q ∗ ( z j ) into (14), we fin ally obtain the tight ELBO as presen ted in The- orem 4.2 of the main pap er: log p ( y , U | B ) ≥ L ∗ 2 ( U , B , λ ) = 1 2 log | K B B | − 1 2 log | K B B + A 1 | − 1 2 a 3 + X j log Φ((2 y i j − 1) λ ⊤ k ( B , x i j )) − 1 2 λ ⊤ K B B λ + 1 2 tr( K − 1 B B A 1 ) − 1 2 K X k =1 k U ( k ) k 2 F . (15) 2 Gradients of the Tigh t ELBO In this section, we presen t ho w to calculate the gradie nts of the tig ht ELBOs in (11) and ( 15) with respec t to the latent factors U , the inducing points B and the kerne l parameters. Let us fir st consider the tight ELBO fo r continuou s data. Becau se U , B an d the kernel parameters are all inside the terms in volving the kernel functions, such as K B B and A 1 , we calculate the gr adients with respect to these terms first an d then use the chain rule to c alculate the gradients with respect to U and B and the k ernel parameter s. 19 Specifically , we co nsider the deri vati ves with respect to K B B , A 1 , a 3 and a 4 . Using matrix deriv atives and algebras [15], we obtain d L ∗ 1 = 1 2 tr ( K − 1 B B − ( K B B + β A 1 ) − 1 )d K B B − β 2 tr ( K B B + β A 1 ) − 1 d A 1 − β 2 d a 3 − β 2 tr( K − 1 B B A 1 K − 1 B B d K B B ) + β 2 tr( a ⊤ 4 ( K B B + β A 1 ) − 1 d a 4 ) + β 2 tr( K − 1 B B d A 1 ) − 1 2 β 2 tr ( K B B + β A 1 ) − 1 a 4 a ⊤ 4 ( K B B + β A 1 ) − 1 d K B B − 1 2 β 3 tr ( K B B + β A 1 ) − 1 a 4 a ⊤ 4 ( K B B + β A 1 ) − 1 d A 1 . (16) Next, we calc ulate the deriv ati ves d K B B , d A 1 , d a 3 and d a 4 , which depend on the specific kernel function form used in the model. For example, if we use the line ar ker - nel, d K B B = 2 B ⊤ d B and d A 1 = P N j =1 k ( B , x i j )( x i j d B ⊤ + d x i j B ⊤ ) + (d Bx ⊤ i j + B d x ⊤ i j ) k ( x i j , B ) where x i j = [ u (1) i j 1 , . . . , u ( K ) i jK ] . No te that b ecause A 1 , a 3 and a 4 all have additiv e structure s which inv olve ind ividual tensor entry i j ( 1 ≤ j ≤ N ) and the m ajor comp utation o f the deri vatives in (16) also involv e similar summations, the computatio n of th e final gradients with resp ect to U and B and the k ernel par ameters can easily be performe d in parallel. The gradient calculation for the tight ELBOs for binary ten sors is very similar to the continuo us case. Specifically , we obtain d L ∗ 2 = 1 2 tr K − 1 B B − ( K B B + A 1 ) − 1 d K B B − 1 2 tr ( K B B + A 1 ) − 1 d A 1 − 1 2 d a 3 − 1 2 tr( K − 1 B B A 1 K − 1 B B d K B B ) + 1 2 tr( K − 1 B B d A 1 ) − 1 2 tr( λλ ⊤ d K B B ) + N X j =1 (2 y i j − 1) N λ ⊤ k ( B , x i j ) | 0 , 1 Φ (2 y i j − 1) λ ⊤ k ( B , x i j ) λ ⊤ d k ( B , x i j ) . (17) W e can th en calculate the d eriv atives d K B B , d A 1 , d a 3 and each d k ( B , x i j )(1 ≤ j ≤ N ) and then apply the chain rule to calculate the gradient with respect to U , B a nd the kernel parameters. 3 Fixed Point Iteration for λ In th is sectio n, we gi ve the conv ergence pr oof of the fix ed p oint iter ation of the v ari- ational param eters λ in the tight ELBO for binary te nsors. While λ can be jointly optimized via gradient based approac hes with U , B and the kernel parameter s, we em- pirically find tha t c ombining th is fixed p oint iteration can con verge much fast er . Th e fixed point iteration is gi ven by λ ( t +1) = ( K B B + A 1 ) − 1 ( A 1 λ ( t ) + a 5 ) (18) 20 where A 1 = X j k ( B , x i j ) k ( x i j , B ) , a 5 = X j k ( B , x i j )(2 y i j − 1) N k ( B , x i j ) ⊤ λ ( t ) | 0 , 1 Φ (2 y i j − 1) k ( B , x i j ) ⊤ λ ( t ) . W e now show th at th e fixed p oint iter ation n ot on ly always co n verges, b ut also improves the ELBO in (15) after e very up date of λ ( see Lemma 4 .3 in the main paper). Specifically , gi ven U and B , from Section 1 we have L ∗ 2 λ ( t ) = max q ( z ) ˜ L 2 λ ( t ) , q ( z ) = ˜ L 2 λ ( t ) , q λ ( t ) ( z ) where q λ ( t ) ( z ) is the optima l variational posterior: q λ ( t ) ( z ) = Q j q λ ( t ) ( z j ) and q λ ( t ) ( z j ) ∝ N ( z j | k ( B , x i j ) ⊤ λ ( t ) , 1) 1 (2 y i j − 1) z j ≥ 0 . Now let us fix q λ ( t ) ( z ) and der iv e the optim al λ b y solv ing ∂ ˜ L 2 ∂ λ = 0 . W e then obtain the update of λ : λ ( t +1) = ( K B B + A 1 ) − 1 P j k ( B , x i j ) h z j i where h z j i is the expectation of the optimal v aria tional posterio r o f z j giv en λ ( t ) , i.e., q λ ( t ) ( z j ) . Obviously , we have ˜ L 2 λ ( t ) , q λ ( t ) ( z ) ≤ ˜ L 2 λ ( t +1) , q λ ( t ) ( z ) . Further, beca use L ∗ 2 ( λ ( t ) ) = ˜ L 2 λ ( t ) , q λ ( t ) ( z ) and ˜ L 2 λ ( t +1) , q λ ( t ) ( z ) ≤ ˜ L 2 λ ( t +1) , q λ ( t +1) ( z ) = L ∗ 2 ( λ ( t +1) ) we con clude tha t L ∗ 2 ( λ ( t ) ) ≤ L ∗ 2 ( λ ( t +1) ) . Now , we p lug the fact that h z j i = w ( t ) j + k ( B , x i j ) ⊤ λ ( t ) giv en q λ ( t ) ( z j ) into the calculation o f λ ( t +1) , merge and arr ange the terms. W e the n obtain the fixed point iter ation for λ in ( 18). Finally since L ∗ 2 is upp er bound ed by the log model evidence, the fixed point iteration alw ays con verges. 21

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment