Dynamic Clustering of Histogram Data Based on Adaptive Squared Wasserstein Distances

This paper deals with clustering methods based on adaptive distances for histogram data using a dynamic clustering algorithm. Histogram data describes individuals in terms of empirical distributions. These kind of data can be considered as complex de…

Authors: Antonio Irpino, Rosanna Verde, Francisco de AT De Carvalho

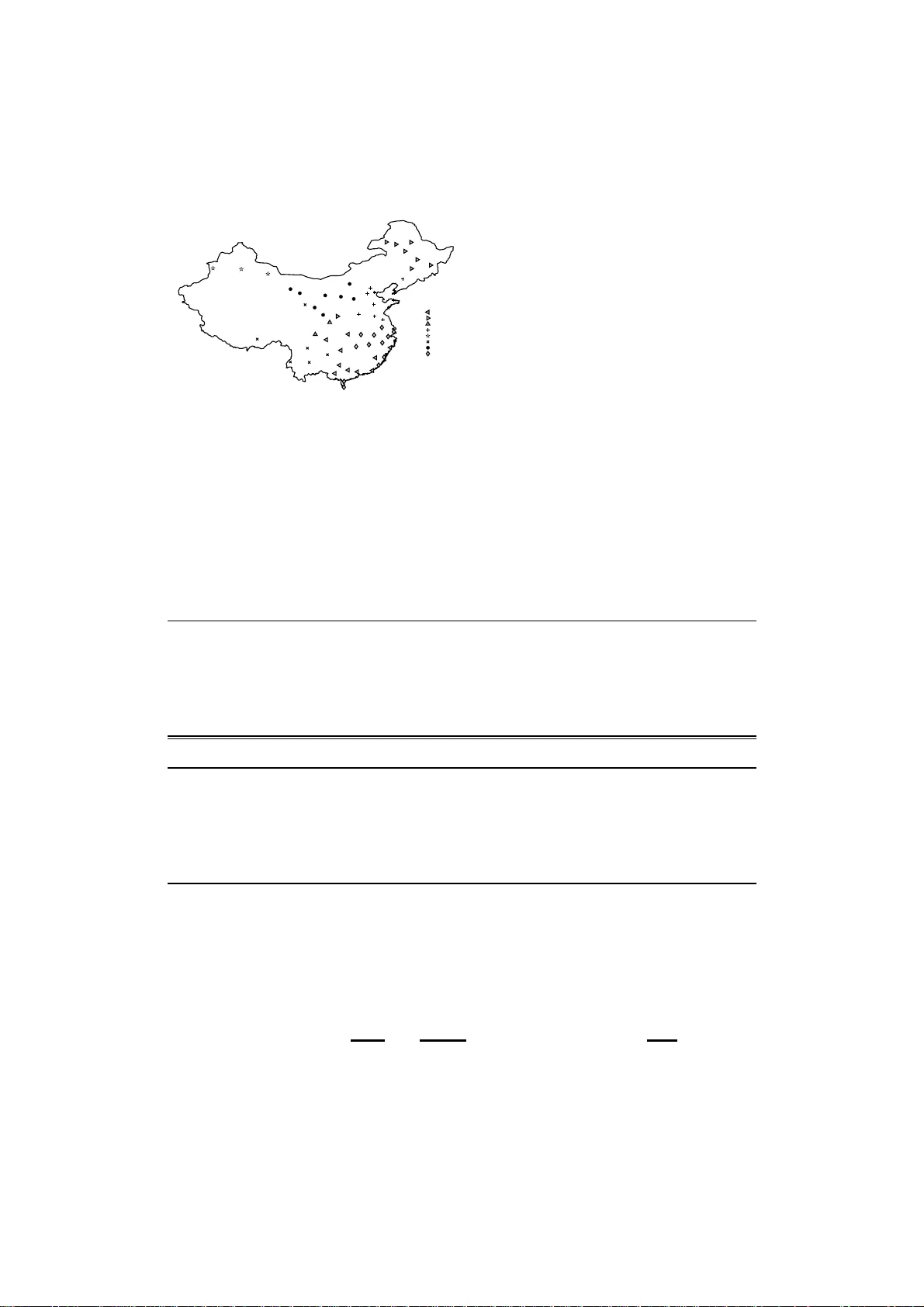

Dynamic Clustering of Histogram Data Based on Adapti ve Squared W asserstein Distances Antonio Irpino a , Rosanna V erde a , Francisco de A.T . De Carvalho b a Dipartimen to di Studi Eur opei e Mediterrane i Second Universit y of Naples 81100 Caserta, Italy { antonio.irpi no, rosanna.ve rde } @unina 2.it b Centr o de Informatica–C In / UFP E, A v . Prof . Luiz F reir e, s / n, Ciadade Univer sitaria, CEP 50.740–540 R ecife -PE , B razil fact@c in.ufpe.br Abstract This paper deals with clustering method s based on a daptive distances for histog ram data using a dynamic clustering algo rithm. Histogram data describes individuals in terms of empirical distri- butions. These kind of data can be c onsidered a s co mplex descrip tions of phenomen a observed on co mplex objects: images, group s of individuals, spatial or temporal variant data, results o f queries, en viro nmental data, an d so on. The W ass er stein d istance is used to co mpare two his- tograms. The W asserstein distance b etween histogra ms is constituted by two comp onents: the first based on the m eans, and the seco nd, to internal dispersions ( standard de viation , sk ewness, kurtosis, and so on) of the histograms. T o cluster sets of h istogram data, we propose to u se Dynamic C lusterin g Algorithm, (based o n adaptive sq uared W asserstein distances) that is a k-means-like algorithm for clustering a set of individuals into K classes that ar e aprio ri fixed. Th e main aim of th is resear ch is to provide a tool fo r clustering histogram s, empha sizing the di ff erent contr ibutions of the histogram variables, and their compo nents, to the definition of the clusters. W e d emonstrate that this can be achieved using adaptive d istances. T wo kind of adapti ve distances are considered: the fi r st takes in to account the v ariability of each compon ent of each descriptor for th e whole set o f individuals; the second takes into accou nt the variability of e ach co mpon ent of eac h de scriptor in each cluster . W e furnish inter pretative to ols of the obtained partition based on an e xte nsion of the classical measures (index es) to the use of adaptive distances in the clustering criterion fun ction. Applications on synthetic an d real-world data corrob orate the proposed procedure. K eywor ds: Histogram data, Clustering method , W asserstein distance, A daptive distance 1. Introduction In many real experiences, data are collec ted and / or represente d by histograms representing empirical distributions of phenomen on. In the framework o f computer vision, the characteristics of image s are usually represented as histogr ams of di ff erent masses. Other fi eld s of applications also use histog ram descriptions: for priv acy preserving matters, data abo ut a phenomen on (for example, flows of a bank account) c an be su mmarized by h istograms, as well as the dissemination Prep rint submitted to to be submitted in exten ded form Nove mber 27, 2024 of o ffi cial statistics. Cluster analysis aims to collect a set of objects in a number of hom ogeneo us clusters accord ing to the values the y assume with respect to a set of observed variables. In this paper we deal with a clustering proce dure to partition a set of histogram data, in a predefine d number of clusters. Histogram data were introduce d in the con text of Sy mbolic Data A nalysis by Bock and Diday [1] and they are d efined by a set of contiguo us intervals of real dom ain which represent the suppo rt of each histogr am, with associated a system o f weights (frequ encies, densities). Symbolic Data An alysis (SDA) is a domain in the area of k nowledge discovery related to multiv ariate analysis, pattern recognition and artificial intelligence, aiming to p rovide suitable methods ( clustering, factorial techniq ues, decision trees, e tc.) for mana ging aggregated data de- scribed by multi-valued variables, i.e. , wh ere t h e cells of t h e d ata tab le co ntain sets of c ategories, intervals, or weig ht (pro bability) distributions (for furth er insights ab out the SDA appro ach, see [1], [2] and [3]). Sev eral propo sals have been p resented in the literatur e for cluster ing histogra m data (see [4],[5], [6], [7]). Dynamic Clusterin g (DC) ( [8],[9]) is pr oposed as a suitable method to par tition a set of data represented b y frequen cy distributions. W e recall that DC needs to define a proximity function , to assign the individuals to the clusters, and to choose a suitable way t o repr esent the clusters by an elemen t which optimizes a c riterion fu nction. Fu rther, the representativ e element of a cluster, c alled prototyp e, has to be consistent with the descriptio n of the clustered elements: i.e., if data to be clustered are distributions, the prototype must be also a distrib u tion. The DC method [ 8] is a general partitioning algor ithm of a set of objects in K clusters. It looks for the solution by o ptimizing a cr iterion o f best fitting between the partition and the representatio n of the clusters of s u ch partition. In DC, the choice of a suitable dissimilarity plays a central role for th e definition of the allocation and of representation phases. k-means algor ithm is a particu lar case of DC wh en in the criterio n functio n is used th e squa red Euclidean d istance. According to the natur e of data and the cho sen dissimilarity functio n, DC is a more genera l schema o f p artition aroun d a set o f prototy pes. In the case of k-mean s algor ithm prototyp es are the mean s of each cluster . Acco rding to the op timized criterion, pr ototypes can also b e regression lines, factorial axis, etc. The comparison of histogram d ata c an be seen a particular case of comp arison of distribution function s. Sev eral distances and dissimilarities have bee n presented in the literature , some of these, used for histog ram data, are pr esented in [7]. Anoth er goo d review of d istances between distributions can be fo und in [1 0]. In the special field o f computin g vision, [11] introduced the Ear th Mover’ s distan ce (EMD) f or co lor and texture im ages. Th is distance can be app lied to distrib ution s o f p oints. It is worth of notice that EMD for histograms o f pixel in tensities is equiv alent to the Mallows, or W asserstein, distance on pro bability distributions [12], [ 13]. The family of distances based on W asserstein metric permits to o btain interesting inter pretative results based on the c haracteristics or on the moments of the c ompared distributions (see [7] for details). It has also proved such distance can be decompo sed into two compon ents: the first related to the means of the histograms, and the second to their interna l dispersions. One o f the main issues in clustering (in m ultiv ariate analy sis, in gene ral) is to take in to ac- count the roles of the di ff erent variables. While th e use of standar d distances allows to fin d spherical group s (like in k- means), the m ain advantage of using adap tiv e distances is the possi- bility of identifying clusters of d i ff erent size (in terms of variability) and orientation in the space (in terms of main align ment o f the cluster with one ore mo re dir ections of a set of v ariab les). One way is to hom ogenize th e variables b y means of a standard ization step. A nother way is to use an adaptive distanc e in the cluster ing algorith m that includes, in the optimization pro cess, 2 the tuning of a set of weights to associate with each variable (f or all the clusters or within e ach cluster). De Carvalho and L echev allier [14, 15], d e Sou za an d De Car valho [16], [ 17] pr oposed se veral a daptive distances for th e dy namic clustering of intervals and histogram d ata. In the p a- per [17] is introdu ced a system of weights for each variable f or Euclidean distance. Th e weights are dependent from the distance b ut not the op timization process so that the same schema can be be easily extended for the W asserstein d istance too. In the present p aper we p ropose two ada ptiv e app roaches for clusterin g histogram d ata, based on W asserstein distance. This metric allows to compare h istogram data with respect both f re- quency and supp ort while E uclidean distance com pare h istogram taking into account only on e compon ent (fre quency or supp ort). Further, Irpino and Rom ano [18] showed that is possible to decomp ose the W asserstein d istance between two h istograms in two (ad ditiv e and inde penden t) compon ents: the first is rela ted to the lo cations of the histogram s, while th e secon d is related to the di ff eren t variability o f th e two h istograms. In or der to take a dvantage from this deco mposi- tion, we prop ose the following approaches for the definition of adapti ve distances. In the first ap proach, we p ropose to associate two sets of we ights for ea ch variable and each compon ent in which is deco mposed the distance, the first is globally estimated for all the clusters at once; the second is locally estimated for each cluster . In ord er to furnish clustering inter pretative tools, we propo se an extension of classical ratios based on within (intra-clu ster) sum of sq uares, the between (inter -cluster ) sum of s q uares and the total sum of squ ares having p roved the dec omposition of the inertia o f a set of histogram d ata computin g with the adaptive ( squared) W asserstein d istances. This paper is organize d as follows: in Section 2, we intr oduce the definitio ns of histo gram data and of the W a sserstein distance for h istograms. In Section 3, starting from the Dynam ic Clustering Algorithm with non -adaptive distances, we propo se two schemas where the adequacy criterion is based on adaptiv e squared W asserstein distances for histogram data. I n section 4, we introdu ce some tools for the in terpretation of the clustering r esults. In Section 5, two application s are sho wn: one using synthetic data in order to prove the usefu lness of the pro posed method s based on the variability stru cture of the d ata; the other one, using a real dataset in order to demonstra te th e app lication in a real situ ation and to show how to interpret the results of a classic clustering task on histogra m data. Section 6 end s the pape r with some conclu sions and perspectives abo ut the proposed clustering methods. 2. Histogram data and W asserstein dista nce The clustering of data expre ssed as histogr ams can be usef ul to discover typolog ies of p he- nomena on th e basis of th e similarity of the ir distributions. In g eneral, clustering techniqu es depend fro m the choice of a suitab le dissimilarity , wher e th e adjective suita ble is related to the capability of th e dissimilarity to take into acco unt the natur e o f the d ata a nd of their r epresen- tation space. In this section, we g i ve a definition of the histogram data and we pr opose the W asserstein distance as a suitable metric for compar ing t h em. 2.1. Histogr a m Data Histogram is a cheap way fo r the representation of aggregate data or empirical distributions. Indeed , if any model of distribution can be a ssumed, the distribution of a set of data can be represented by histog rams: i.e. a set of contig uous intervals (b ins), of equal or of di ff erent width, associated with a set of weights (empirical frequen cies or d ensities). 3 Formally , let Y be a c ontinuo us variable defined on a finite su pport S = [ min ( y ); Ma x ( y )] ⊂ ℜ . Th e suppor t S is partitioned into a set of contig uous intervals (bins) { I 1 , . . . , I h , . . . , I H } , wh ere I h = [ a h ; b h ) wher e min ( y ) = a 1 and Ma x ( y ) = b H . Each I h is associated with a weigh t π h that represents an empirical (or theore tical) r elativ e freq uency . Let us E be consid ered as a set of n emp irical distributions F i ( y ) ( i = 1 , . . . , n ). In the case of a histogram descriptio n, it is possible to assume that S ( i ) = [ min ( y i ); M a x ( y i )]. Con sidering a set of intervals I hi = min ( y hi ) , M a x ( y hi ) ) such that: i . I li ∩ I mi = ∅ ; l , m ; ii . [ s = 1 ,... , H i I si = [ min ( y i ); M a x ( y i )] the suppo rt can also be written as S ( i ) = I 1 i , ..., I ui , ..., I H i i . In this pap er , we den ote with f i ( y ) the (emp irical) d ensity functio n associated with the description y i and with F i ( y ) its distribution f unction. It is possible to d efine the descrip tion o f th e i − th histogram for the variable Y a s: y i = { ( I 1 i , π 1 i ) , ..., ( I ui , π ui ) , ..., I H i i , π H i i } such tha t ∀ I ui ∈ S ( i ) π ui = R I ui f i ( y ) d y ≥ 0 a nd R S ( i ) f i ( y ) d y = 1 . (1) In the fo llowing, we use y i to denote the description of the i − th histogram in the univ ariate case. If we obverse p variables, we deno te with y i j (where i = 1 , . . . , n and j = 1 , . . . , p ) i − th histogram for the variable j . Thus, con sidering to th e classic d ata analysis approa ch, the ind i v idual × variable input data table contains in each cell a histogram as repre sented i n T ab le 1. Objs. V ar 1 . . . V ar j . . . V ar p 1 y 11 . . . y 1 j . . . y 1 p . . . . . . . . . . . . . . . . . . i y i 1 = . . . y i j = . . . y i p = . . . . . . . . . . . . . . . . . . n y n 1 . . . y n j . . . y n p T able 1: Indi vidual × v ariable input data table of histogram data 2.2. The W asserstein-Kantor ovich distanc e between two histograms The comp arison of h istogram d ata is a particu lar case of com parison o f distribution fu nctions. Sev eral d istances and dissimilarities h av e be en pr esented in the literature. In [7] we introduc e se veral distan ces that can b e u sed for compar ing h istogram data. Ano ther g ood review o n dis- tances between distributions can be foun d in [10]. Th e most part of these distance s are based on th e com parison of den sities or frequency associated with a rand om variable, but the fam- ily of d istances based o n W asserstein metric perm its to ob tain interesting interpretative results about the ch aracteristics or the moments of the distrib u tions (see [7] f or details). W asserstein- Kantorovich metric [1 0][19], a nd, in par ticular the derived L 2 -W asserstein d istance is a n atural 4 extension of the Euclidean metric to com pare d istributions. If F i ( y ) and F i ′ ( y ) are the (empirical) distribution fun ctions associated with y i and y i ′ , r espectively , and F − 1 i ( t ) and F − 1 i ′ ( t ) ( t ∈ [0 , 1]) their correspo nding quantile function s, the L 2 -W asserstein metric is defined as follows: d W ( y i , y i ′ ) : = v u u u u t 1 Z 0 F − 1 i ( t ) − F − 1 i ′ ( t ) 2 d t (2) This distanc e is also kn own as Mallows’ [ 12] distance. If the co rrespond ing quantile fu nctions are centered b y the respecti ve sample means: ¯ F − 1 i ( t ) = F − 1 i ( t ) − ¯ y i and ¯ F − 1 i ′ ( t ) = F − 1 i ′ ( t ) − ¯ y i ′ ( ∀ t ∈ [0 , 1] ), the correspon ding h istogram description are den oted with y c i and y c i ′ and Cuesta-Alberto s et al. [20] proved th at d 2 W ( y i , y i ′ ) = ( ¯ y i − ¯ y i ′ ) 2 + d 2 W ( y c i , y c i ′ ) (3) or , in other words, the ( squared) W asserstein distance between two distributions f i ( y ) an d f j ( y ) (or random variables), is equal to the sum of the squared Euclidean distance between their means (the first moments) and the squared W asserstein distance between the two centered random v ar i- ables. Th e latter can be con sidered as a d istance m easure of th eir d ispersions, i.e., repr esent the di ff erence between two distributions excep t for their location. Irpino a nd Romano [18] an d V er de an d Irpino [6] showed that L 2 W asserstein distance be- tween two gene ric distributions F i ( y ) an d F i ′ ( y ) can be decom posed into the fo llowing co mpo- nents (location, size and shape): d 2 W ( y i , y i ′ ) = ( ¯ y i − ¯ y i ′ ) 2 | {z } Location + ( s i − s i ′ ) 2 | {z } S ize + 2 s i s i ′ h 1 − r QQ ( F − 1 i , F − 1 i ′ ) i | {z } S ha pe | {z } d 2 W ( y c i , y c i ′ ) (4) where: ¯ y i , s i and ¯ y i ′ , s i ′ are the samp le mean s an d standard d eviations respectively o f f i ( y ) and f i ′ ( y ). r QQ ( F − 1 i , F − 1 i ′ ) = 1 R 0 ( F − 1 i ( t ) − ¯ y i )( F − 1 i ′ ( t ) − ¯ y i ′ ) d t s i s i ′ = 1 R 0 F − 1 i ( t ) F − 1 i ′ ( t ) d t − ¯ y i ¯ y i ′ s i s i ′ (5) is the samp le corr elation of the qu antiles of the two empirical distributions as repr esented in a classical QQ plot. A comp utational p roblem is related to the calculation of r QQ , because it n eeds the com putation of th e qua ntile fun ctions. Irpino an d V erde [4] showed th at the the W asserstein distanc e be- tween histogram da ta depends only from the nu mber of bins used for the histogram descriptions, av oiding the comp utational drawbacks related to the identification of th e q uantile fun ctions of continuo us d istributions. W asserstein distance can be u sed fo r d efining an inertia measure amon g h istograms like f or the Euclidean metr ics [4]. The total in ertia with r espect to the bary center g ( which is a histogram whose quantiles are the m eans of the respecti ve quantile of all the distributions) of a set of n histogram data is giv en by the following quantity: 5 T = n X i = 1 d 2 W ( y i , g ) = n X i = 1 1 Z 0 F − 1 i ( t ) − F − 1 g ( t ) 2 d t = K X k = 1 X i ∈ C k d 2 W ( y i , g k ) | {z } W + K X k = 1 | C k | d 2 W ( g k , g ) | {z } B . (6) i.e., T ca n be decom posed in to within (W) and between (B) clu sters inertia, according to the Huygen ’ s theorem of decomp osition, where g k is the barycen ter of the k -th cluster and | C k | is the number of objects in E belon ging to the clu ster C k . W e will use th is prop erty for defining too ls f or the interpr etation of clustering results. 2.2.1. Multivariate a nd adaptive W asserstein distance Giv en a set E o f n objec ts described by p variables as in T a ble 1, e ach y i j is associated with a (empirical) den sity function f i ( y j ), a distribution functio n F i ( y j ) and a qu antile function F − 1 i ( t j ). W e denote with ¯ y i j and s i j the sample mean and the sample standard deviation o f f i ( y j ). The individual description of the i − th ob ject is then the following vector y i : y i = [ y i 1 , . . . , y i p ] . (7) Clark and Rae [ 21] present specific f ormulation s for the multiv ariate W asserstein distance wh en the distribution are Gaussians which are equipped with their cov ariance matrix. For all the other cases [20], it is not possible to co mpute an alytically a W asserstein d istance b etween two mu lti- variate distributions. Considering that we do not know th e joint h istograms for e ach ind ividual but on ly the marginal h istograms, we con sider th e multiv ariate square d W asserstein distance as follows: d 2 W ( y i , y i ′ ) = p X j = 1 d 2 W y i j , y i ′ j . (8) Follo wing the sam e o bservations derived for E qn. (3), we may rewrite th e multiv ariate sq uared W asserstein distance as follows: d 2 W ( y i , y i ′ ) = p X j = 1 ¯ y i j − ¯ y i ′ j 2 + p X j = 1 d 2 W y c i j , y c i ′ j . (9) In ord er to g i ve a di ff erent weights to the variables we introduce adaptive distances [22], these are distances equ ipped with a system o f weights. Le t us consider a vector of weights Λ = [ λ 1 , . . . , λ p ] such that λ j > 0. According to [22] and [1 4], a general formulatio n for an Adaptive Single V ariab le (squared) W asserstein distance is as f ollows: d 2 W ( y i , y i ′ | Λ ) = p X j = 1 λ j d 2 W y i j , y i ′ j . (10) In this f ormulation , the weights induc e a linear transfo rmation of the original space. Se veral approa ches of these types have been pro posed (see for examples [16], [15], [1 7]), where th e weights a re associated to th e who le set of data or where th e weights are ch osen locally for eac h cluster in which is partitioned the set of data. If the distance is decomposable in se veral com- ponen ts, it is possible to introdu ce a suitable sy stem of weights for such componen ts. While 6 the choice of weighting each variable is easily extendib le to the histogram data, the choice of weighting comp onents needs to b e proven for such k ind of da ta. In the pr esent paper, starting from the deco mposition o f th e squar ed W asserstein distanc e as shown in Eq s. 3 an d 4 , we pr o- pose two schemas of weigh ting systems for the definitio n of two adap tiv e squ ared W asserstein distances based on the two compon ents: the first den oted as Globally Compo nent-wise Ada ptive W assertein D istan ce (GC-A WD), while the secon d is denoted as Cluster Depen dent Compon ent- wise Adap tive W a ssertein Distance (CDC-A WD). 3. The Dy namic Clustering Algorithm The Dynam ic Clustering Algorithm (DCA) [8][ 9] is her e propo sed as a method to pa rtition a set of data descr ibed b y distributions. W e recall th at D CA is based on the definitio n of a criterion of the best fitting between the partition of a set of in dividuals and the r epresentation of th e clusters o f the partitio n. Th e algo rithm simultan eously loo ks fo r the best partition into K clusters a nd their b est repre sentation. Thus, the DCA needs the definitio n of a proximity function to assign the individuals to the clusters and the definition of a way to represent the clusters. The choice of the representativ e elements of the cluster s ( pr ototype ) is done according t o the dissimilarity functio n used in the algorith m to allocate the elem ents to the clu sters, such that a criterion of intern al homogen eity is minimized. Th e consistence between the rep resentation and the alloca tion func tion g uarantees the c on vergence of the algorithm to a station ary value of th e criterion. According to th e nature o f the d ata, we propo se to base the a dequacy criterion for the dynamic clustering algorithm on the W asserstein d istance between histogram data. W e introduce two schemas of Dynamic Clustering algorithms b ased o n two pro posals o f ad aptive (square d) W asser- stein distances. I n th ese algo rithms, we prop ose two sets of weights c omputed on the whole set E for defining th e adeq uacy cr iterion o n a Globally Compon ent-wise Adap tive W assertein Dis- tance ( GC-A WD) an d on a Cluster Dep endent Compon ent-wise Adaptive W a ssertein Distan ce (CDC-A WD). 3.1. Adequacy criterion based o n sta n dard an d adap tive distance s Let us consider a set E of n ob jects d escribed by p histogr am variables. The individual description of the i − th object is y i = [ y i 1 , . . . , y i p ], where y i j is the h istogram descriptio n of the i − t h individual for the j − t h variable. W e assume that th e prototype o f the cluster C k ( k = 1 , . . . , K ) is also r epresented by a vector g k = ( g k 1 , . . . , g k p ), wh ere g k j is a histogram. As in the stand ard adaptive dy namic cluster algor ithm, th e propo sed methods look fo r the partition P = ( C 1 , . . . , C k ) of E in K classes, its correspo nding set of K prototyp es G = ( g 1 , . . . , g K ) and a set of K di ff e rent adaptiv e distances d = ( d 1 , . . . , d K ) depen ding on a set Λ of positive we ights associated with the clusters, s u ch that the following adequacy criterion of the best fitting between the clusters and their representation is locally minimized: ∆ ( G , Λ , P ) = K X k = 1 X i ∈ C k d ( y i , g k | Λ ) . (11) As in the stand ard ad aptive dynam ic cluster algor ithm, we perform a repr esentation step where the pro totypes are up dated according to th e cluster structure o f the data. This is followed by a weighting step, allowing th e definition of the weight to be associated with each variable 7 (or comp onent) in the d efinition of the a daptive d issimilarity . Subseque ntly , an allocatio n step assigns the individuals to classes accor ding to their pro ximity to the class prototy pes. The three steps ar e repeated un til co n vergence of th e algorith m is achie ved, i.e., u ntil the adequacy cr iterion reaches a stationary value. The adequacy criteria to be minimized are based on the follo win g distances: ST AN DARD - Standa r d W asserstein distance. Th e standard (squ ared) W asserstein distance between the histogram y i and of the prototy pe g k is defined as: d ( y i , g k ) = p X j = 1 d 2 W ( y i j , g k j ) . (12) In this case, the general criterion becomes: ∆ ( G , P ) = K X k = 1 X i ∈ C k d 2 W ( y i , g k ) (13 ) as no system of weights is defined. GC-A WD - Globa lly Componen t-wise Ad aptive W assertein Distance. Th e d istances depends from two vector Λ ¯ y and Λ Di s p of co e ffi cients that assign weights for each comp onent of each variable: Λ ¯ y = ( λ 1 ¯ y , . . . , λ p ¯ y ) (14) Λ Di s p = ( λ 1 Di s p , . . . , λ p Di s p ) . (15) W e de fine the two-com ponen t global ad aptiv e W asserstein distance between the d escrip- tion y i and proto type g k as: d ( y i , g k | Λ ) = p X j = 1 λ j ¯ y ( ¯ y i j − ¯ y g k j ) 2 + p X j = 1 λ j Di s p d 2 W ( y c i j , g c k j ) (16) where y c i j and g c k j are the centered description of y i j and of g k j , as presented in Eqn. ( 3). In this case, being Λ = " Λ ¯ y = ( λ 1 ¯ y , . . . , λ p ¯ y ) Λ Di s p = ( λ 1 Di s p , . . . , λ p Di s p ) # (17) the general criterion is: ∆ ( G , Λ , P ) = K X k = 1 X i ∈ C k p X j = 1 λ j ¯ y ( ¯ y i j − ¯ y g k j ) 2 + K X k = 1 X i ∈ C k p X j = 1 λ j Di s p d 2 W ( y c i j , g c k j ) . (18) CDC-A WD - Cluster Depen d ent Compon e nt-wise Adaptive W assertein Distance. The d istances depend s from K cou ples of vectors Λ k , ¯ y and Λ k , Di s p of coe ffi cients that assign a weight for each compo nent of th e variable in each cluster . For each cluster we define: Λ k , ¯ y = ( λ 1 k , ¯ y , . . . , λ p k , ¯ y ) Λ k , Di s p = ( λ 1 k , Di s p , . . . , λ p k , Di s p ) . 8 W e define the two-componen t cluster-depende nt adap ti ve W asserstein distance b etween the description y i and proto type g k as: d ( y i , g k | Λ ) = p X j = 1 λ j k , ¯ y ( ¯ y i j − ¯ y g k j ) 2 + p X j = 1 λ j k , Di s p d 2 W ( y c i j , g c k j ) (19) where y c i j and g c k j are the centered description of y i j and of g k j , as presented in Eqn. ( 3). In this case, being Λ = Λ k , ¯ y = λ 1 k , ¯ y , . . . , λ p k , ¯ y Λ k , Di s p = λ 1 k , Di s p , . . . , λ p k , Di s p ∀ k ∈ { 1 , . . . , K } (20) the general criterion becomes: ∆ ( G , Λ , P ) = K P k = 1 P i ∈ C k p P j = 1 λ j k , ¯ y ( ¯ y i j − ¯ y g k j ) 2 + + K P k = 1 P i ∈ C k p P j = 1 λ j k , Di s p d 2 W ( y c i j , g c k j ) . (21) From an initial solution fo r ( G 0 , Λ 0 , P 0 ), the dy namic clustering alg orithm based on adap tiv e distances alternates three steps until the criterion ∆ reach a stationary point. 3.1.1. Step 1 : definition of the p r ototypes In the first step of th e algorithm, the partition P of E in K clusters and the c orrespon ding weights Λ are fixed. Proposition 1. Chosen one of the distan c e functions ( Eqs. 1 2, 16 or 19), the vec to r of pr oto types G = ( g 1 , . . . , g k ) , wher e g k = ( g k 1 , . . . , g k p ) , which minimizes the criterion ∆ , is calcula ted by means of the q uantile function s associa ted with each g k j . g k j is r epresented by a histogram with associated the following q uantile fu nction: F − 1 g k ( t j ) = 1 n k X i ∈ C k F − 1 i ( t j ) ∀ t j ∈ [0 , 1 ] (22) wher e n k is the car din ality of cluster C k . It is the means of the qu antile function s of the histogram r epr esen tations of th e elemen ts belo nging to the cluster C k and it can a lso b e written as: F − 1 c g k ( t j ) + ¯ y g k j = 1 n k X i ∈ C k F − 1 c i ( t j ) + 1 n k X i ∈ C k ¯ y i j ∀ t j ∈ [0 , 1 ] (23) wher e F − 1 c g k ( t j ) (resp. F − 1 c i ( t j ) ) is a ssociated with the center ed descriptio n g c k j (r esp. y c i j ) o f g k j (r esp. y i j ) a nd ¯ y g k j (r esp. ¯ y i j ) is the mean of th e de scription of g k j (r esp. y i j ). 9 Pr oof. Beeing ∆ an ad ditiv e criterion (for the variables, the c ompon ents and th e clusters), ac- cording to [20], we can write: min φ ( g k j ) = min X i ∈ C k 1 Z 0 F − 1 i ( t j ) − F − 1 g k ( t j ) 2 d t j | {z } I = = min P i ∈ C k ¯ y i j − ¯ y g k j 2 + P i ∈ C k 1 R 0 F − 1 c i ( t j ) − F − 1 c g k ( t j ) 2 d t j = = min X i ∈ C k ¯ y i j − ¯ y g k j 2 | {z } I I + min X i ∈ C k 1 Z 0 F − 1 c i ( t j ) − F − 1 c g k ( t j ) 2 d t j | { z } I I I where F − 1 c i ( t j ) = F − 1 i ( t j ) − ¯ y i j and F − 1 c g k ( t j ) = F − 1 g k ( t j ) − ¯ y g k j . Prob lem I I is minimized when: ¯ y g k j = 1 n k X i ∈ C k ¯ y i j ; while pro blem I I I is minimized when, for each t j ∈ [0 , 1]: F − 1 c g k ( t j ) = 1 n k X i ∈ C k F − 1 c i ( t j ) . Then, prob lem I is minimize d when th e barycen ter ( pr ototyp e ) h istogram g k j is a histogram whose quantile function is: F − 1 g k ( t j ) = F − 1 c g k ( t j ) + ¯ y g k j ∀ t j ∈ [0 , 1] . (24) 3.1.2. Step 2 : definition of the b est distances In the second step, the partitio n P of E and the corr espondin g vector G of the prototy pes are fixed. It is worth notin g that the Dynamic Clustering Algorithm based on the standar d (squared) W asserstein distances does not require this step. Proposition 2. Accor ding to Did ay a nd Govaert [22], the Λ system of weights, useful fo r d efining the adaptive distan ces that minimize the criterion ∆ , is ca lculated acc o r ding ly to the di ff er ent schemes of adaptive distance fun ctions used for the definition o f th e ade q uacy criterion of the algorithm. GC-A WD If the distance fun ction is g iven b y Eqn. (1 6), the Λ system of weights that min imize the c riterion ∆ is Λ = " Λ ¯ y = ( λ 1 ¯ y , . . . , λ p ¯ y ) Λ Di s p = ( λ 1 Di s p , . . . , λ p Di s p ) # (25) under th e following restrictions: 10 1. λ j ¯ y > 0 a nd λ j Di s p > 0 2. Q p j = 1 λ j ¯ y = 1 and Q p j = 1 λ j Di s p = 1 . The coe ffi c ie n ts of Λ ¯ y and Λ Di s p , which satisfy r estrictions (1) and (2) an d minimize the criterion ∆ , a r e calculated as: λ j ¯ y = " Q p h = 1 K P k = 1 P i ∈ C k ( ¯ y ih − ¯ y g kh ) 2 # 1 p K P k = 1 P i ∈ C k ( ¯ y i j − ¯ y g k j ) 2 , (26) λ j Di s p = " Q p h = 1 K P k = 1 P i ∈ C k d 2 W ( y c ih , g c kh ) !# 1 p K P k = 1 P i ∈ C k d 2 W ( y c i j , g c k j ) . (27) Note that the closer ar e th e objects to the pr ototypes of th e clusters for the compo nent r elated to the mean (r esp. dispersion) the higher is th e res p ective weight. CDC-A WD If th e d istance function is given by Eq n. (19), the Λ system of weights that minimize the c riterion ∆ is: Λ = Λ 1 , ¯ y Λ 1 , Di s p . . . . . . Λ k , ¯ y Λ k , Di s p . . . . . . Λ K , ¯ y Λ K , Di s p (28) wher e, fo r ea ch cluster , k = 1 , . . . , K : Λ k , ¯ y = ( λ 1 k , ¯ y , . . . , λ p k , ¯ y ) Λ k , Di s p = ( λ 1 k , Di s p , . . . , λ p k , Di s p ) under th e following restrictions for each c lu ster k = 1 , . . . , K : 1. λ j k , ¯ y > 0 and λ j k , Di s p > 0 2. Q p j = 1 λ j k , ¯ y = 1 and Q p j = 1 λ j k , Di s p = 1 . The coe ffi cients λ j k , ¯ y and λ j k , Di s p , which satisfy r estrictions (1 ) and (2) and minimize the criterion ∆ , a r e: λ j k , ¯ y = [ Q p h = 1 P i ∈ C k ( ¯ y ih − ¯ y g kh ) 2 ] 1 p P i ∈ C k ( ¯ y i j − ¯ y g k j ) 2 , and (29) λ j k , Di s p = h Q p h = 1 P i ∈ C k d 2 W ( y c i j , g c k j ) i 1 p P i ∈ C k d 2 W ( y c i j , g c k j ) . (30) Note that th e closer to the pr ototype s of a given cluster C k ar e the objects for the componen t r elated to the mean (r esp. dispersion) the hig her is the r espective weight for th e cluster C k . 11 Pr oof. The proof of th e p roposition can be perfo rmed acco rding to [22] using the L agrange multipliers m ethod for th e m inimization of the cr iterion. For the sake of b revity , and with out loss of generality , see [14] and [17]. 3.1.3. Step 3 : definition of the b est partition In this step, prototy pe G a nd the Λ are fixed. Proposition 3. The p a rtition P = ( C 1 , . . . , C K ) , which minimizes the criterion ∆ , consists o f clusters C k ( k = 1 , . . . , K ) identified acco r ding to th e follo wing a llocation rules: ST AN DARD C k = { i ∈ E | d ( y i , g k ) < d ( y i , g m ) } ∀ m , k ( m = 1 , . . . , K ) . (31) GC-A WD and CDC-A WD C k = { i ∈ E | d ( y i , g k | Λ ) < d ( y i , g m | Λ ) } ∀ m , k ( m = 1 , . . . , K ) . (32) In general, when d ( y i , g k ) = d ( y i , g m ) or d ( y i , g k | Λ ) = d ( y i , g m | Λ ) , then i ∈ C k if k < m. Pr oof. The proof of Proposition 3 is straightforward. 3.2. Pr operties of th e a lg orithm According to [9], the pro perty of con vergence of the alg orithm can be studied fro m two series: z t = ( G t , Λ t , P t ) and s s t = ∆ ( z t ), t = 0 , 1 , . . . From an initial term z 0 = ( G 0 , Λ 0 , P 0 ), the algor ithm c omputes the d i ff erent terms o f the series z t until co n vergence, a t which p oint th e c riterion ∆ achieves a stationary value. Here, we show the co n vergence of algorithms in term s of co nfiguration of partition, prototyp es and criterion ∆ ( G , Λ , P ). Proposition 4. The series s s t = ∆ ( z t ) decreases a t ea ch iteration an d conver ges. Pr oof. First, we show that inequ alities ( I ), ( I I ) and ( I I I ) ∆ G t , Λ t , P t | { z } s s t ( I ) z }| { ≥ ∆ G t + 1 , Λ t , P t ( I I ) z }| { ≥ ∆ G t + 1 , Λ t + 1 , P t ( I I I ) z }| { ≥ ∆ G t + 1 , Λ t + 1 , P t + 1 | {z } s s t + 1 (33) hold. Inequa lity ( I ) holds because ∆ G t , Λ t , P t = K X k = 1 X i ∈ C t k d ( y i , g t k | Λ t ) ∆ G t + 1 , Λ t , P t = K X k = 1 X i ∈ C t k d ( y i , g t + 1 k | Λ t ) and accord ing t o Pro position 1, g ( t + 1) k = ar gmin | {z } g k p X j = 1 X i ∈ C ( t ) k d y i j , g ( t ) k j | Λ ( t ) ( k = 1 , . . . , K ) . 12 Inequa lity (II) als o holds because ∆ G t + 1 , Λ t + 1 , P t = K X k = 1 X i ∈ C t k d ( y i , g t + 1 k | Λ t + 1 ) and accord ing t o Pro position 2, λ ( t + 1) k = ar gmin | {z } λ k X i ∈ C ( t ) k d y i j , g ( t + 1) k j | λ k . Finally , inequality ( I I I ) holds because ∆ G t + 1 , Λ t + 1 , P t + 1 = K X k = 1 X i ∈ C t k d ( y i , g t + 1 k | Λ t + 1 ) and accord ing t o Pro position 3 C ( t + 1) k = ar gmin | {z } C ∈ P ( E ) X i ∈ C d y i j , g ( t + 1) k j | Λ ( t + 1) ( k = 1 , . . . , K ) . (34) Finally , because the series s s t decreases and it is boun ded ( ∆ ( z t ) ≥ 0 ), it conv erges. Proposition 5. The series z t = ( G t , Λ t , P t ) con verges . Pr oof. Let us assum e that the station ary point of th e series s s t is achieved at the iteration t = T . Then, we have that z T = z T + 1 and then ∆ ( z T ) = ∆ ( z T + 1 ) (i.e., s s T = s s T + 1 ). From ∆ ( z T ) = ∆ ( z T + 1 ), we have ∆ ( G T , Λ T , P T ) = ∆ ( G T + 1 , Λ T + 1 , P T + 1 ) and this eq uality , accordin g to Proposition 4, can be rewritten as equalities ( I ), ( I I ) and ( I I I ) as follo ws: ∆ G T , Λ T , P T ( I ) z }| { = ∆ G T + 1 , Λ T , P T ( I I ) z }| { = ( I I ) z }| { = ∆ G T + 1 , Λ T + 1 , P T ( I I I ) z }| { = ∆ G T + 1 , Λ T + 1 , P T + 1 . (35) From the first equality ( I ), we kn ow that G T = G T + 1 because G is u nique, m inimizing ∆ whe n the partition P T and Λ T are fixed. From the secon d equality ( I I ), we know that Λ T = Λ T + 1 because Λ is unique, minimizin g ∆ wh en the p artition P T and G T + 1 are fixed. Th en, fr om th e third equality ( I I I ), we kno w that P T = P T + 1 because P is uniqu e, minimizing ∆ when Λ T + 1 and G T + 1 are fixed. Finally , we conclude that z T = z T + 1 . T his conclusio n h olds for all t ≥ T and z t = z T , ∀ t ≥ T and then z t conv erges. 3.2.1. Complexity of the algorithm Let us d enote the numbe r of opera tions required for th e com putation of the W asserstein dis- tance with D , th e number of operation s fo r computing the mean quantile function of a set of distributions with Q and the numb er of iterations o f the alg orithm a s I . The repre sentation step requires approxim ativ ely O ( n p × Q ) operation s. The weighting step r equires ap proxim ativ ely O ( n p × D ) operations; indeed it is b ased on the co mputation of th e inertia within the clusters. The a llocation step requ ires appro ximatively O ( n K p × D ) o perations. Th en, the comp lete com- putational cost is of order O ( n K p × D × I ) . 13 3.3. Algorithm schema The pro cedure step s fo r the Dynam ic Clustering Algor ithm w ith adap ti ve distances f or his- togram data are described in algorith m 1. 4. T ools for the interpretation o f the part ition After a clustering task, it is important to e valuate th e results of th e procedure, in order to have information abo ut the intra-class an d the inter-classes hetero geneity , th e con tribution of each variable to the fina l p artition, etc. In the framework of the stand ard Dynam ic Cluster Al- gorithm, some indices, based on the ratio between the in tra-cluster and the total in ertia, can be used as too ls fo r in terpreting th e clustering results Celeux et al. [23]. Such indeces have been ex- tended De Carvalho et al. [24] to the case of dynam ic clustering with adaptive a nd non-ad aptive Euclidean distances for interval-v alu ed data. Here, we u se the same class o f indices fo r the ev aluation of the partition of histogram data. In the following section , we define an extension of: T ota l inertia . I t is the total sum of squared (TSS) distances o f all the elements o f th e set E from the global prototyp e ( g E ). TSS is the adeq uacy criterion; Within inertia . It is the within sum o f squar ed (WSS) distanc es of th e elements o f a c luster from the respecti ve bary center, for all the clusters o f the partition. It co rrespon ds to the criterion ∆ ( G , Λ , P ) with P = C 1 , . . . , C k , . . . , C K ; G = g 1 , . . . , g k , . . . , g K and Λ = " Λ ¯ y = ( λ 1 ¯ y , . . . , λ p ¯ y ) Λ Di s p = ( λ 1 Di s p , . . . , λ p Di s p ) # (36) accordin g to th e GC-A WD ; as well as Λ = Λ 1 , ¯ y Λ 1 , Di s p . . . . . . Λ k , ¯ y Λ k , Di s p . . . . . . Λ K , ¯ y Λ K , Di s p (37) accordin g to th e CDC-A WD ; Between inertia . It is the sum of squared (BSS) distances between th e pro totypes of the s everal clusters an d the general p rototyp es. Using the adaptive W asserstein distance, and accord- ing to th e H uygen’ s theore m of d ecompo sition of in ertia, we prove th at th e total sum of squares can be deco mposed into two additi ve terms: the within sum of squar es an d the between sum of squares (TSS = WSS + BSS). 14 Algorithm 1 D ynamic clustering with adaptive distan ces Require: K n umber of clusters, E a set n ≥ K indi vid uals d escribed by p > 0 h istogram variables Initialization Set t = 0 Randomly choose a partition of E into K clu sters P t = ( C t 1 , . . . , C t k ) of E Set Λ t = 1 . repeat Step 1: Definition of the best prototypes (Representation step) Giv en P t and Λ t , compu te the G t + 1 set of K prototypes accord ing to the c riterion ∆ ( G t + 1 , Λ t , P t ) in Eqn. (22). Step 2: Definition of the best distances (W e ig hting step) Giv en P t and G t + 1 , compute the Λ t + 1 matrix according to Eqs. (26) and (27) for GC-A WD , and Eqs. (29) and (30) for CDC-A WD . Step 3: Definition of the best partition (Allocatio n step) Giv en G t + 1 and Λ t + 1 allocate each elem ent of E to the closest p rototyp e accord ing to Eq s. (31) or (32) te st ← 0 P t + 1 ← P t for i = 1 to n do find the cluster C t + 1 m to which i belongs find the winnin g cluster C t + 1 k such that k = ar gmin 1 ≤ h ≤ K d ( y i , g t + 1 h | Λ ) if k , m then te st ← 1 C t + 1 k ← C t + 1 k ∪ { i } C t + 1 m ← C t + 1 m \{ i } end if end for set t = t + 1 until te st , 0 15 4.1. T otal sum of squ ar es (TSS) Let E be a set of n rea l poin ts, deno ted as x i , where each of the m is weig hted by a positive real number denoted as π i and a positiv e num ber h : n X i = 1 X j > i π i π j ( x i − x j ) 2 = h n X i = 1 π i ( x i − ¯ x ) 2 (38) where ¯ x = n P i = 1 π i x i n P i = 1 π i (39) and h = n P j = 1 π j . W e de fine the total sum of squares as: T S S = n X i = 1 π i ( x i − ¯ x ) 2 . (40) If we have p > 1 descrip tors, the total sum of squares is: T S S = p X j = 1 n X i = 1 π i j x i j − ¯ x j 2 . (41) W ithout loss of generality , given a set E of n individuals describe d by p histogr am descrip tors, in our two adap ting schemes o f W asserstein distanc e, fo r a set o f da ta clustered into K groups, we have two kinds of T S S and globa l prototypes that are consistent with Eqn. (22) and Eqn . (41). ST AN DARD According to Eq. 22, th e general pro totype g E = ( g E 1 , . . . , g E p ) is a vecto r of histogram description s g E j whose quantile function is: F − 1 g E ( t j ) = n − 1 n X i = 1 F − 1 i ( t j ) ∀ t j ∈ [0 , 1 ] (42) i.e., it is the histog ram whose qu antiles are the average of the q uantiles of the e lements of E for the j − t h variable and the total sum of squares is T S S = p X j = 1 X i ∈ E d 2 W ( y i j , g E j ) . (43) GC-A WD The gener al p rototyp e is co mputed accor ding to Eqs. 23 and 39. Thus, the genera l prototy pe g E = ( g E 1 , . . . , g E p ) is a vector of histogram description s g E j whose quan tile function is: F − 1 g E ( t j ) = n − 1 n X i = 1 ¯ y i j | {z } ¯ y g E j + n − 1 n X i = 1 F − 1 c i ( t j ) | {z } F − 1 g c E ( t j ) ∀ t j ∈ [0 , 1] (44 ) 16 the total sum of squares is T S S GC − AW D = p X j = 1 X i ∈ E h λ j ¯ y ( ¯ y i j − ¯ y g E j ) 2 + λ j Di s p d 2 W ( y c i j , g c E j ) i . (45) CDC-A WD The general prototype g E = ( g E 1 , . . . , g E p ) is a vector of histogram d escriptions g E j whose quantile function is: F − 1 E ( t j ) = ¯ F − 1 E ( t j ) + ¯ y g E j (46) where ¯ y g E j = K P k = 1 λ j k , ¯ y K P h = 1 n h λ j h , ¯ y P i ∈ C k ¯ y i j and ¯ F − 1 g E ( t j ) = K P k = 1 λ j k , Di s p K P h = 1 n h λ j h , Di s p P i ∈ C k ¯ F − 1 i ( t j ) ∀ t j ∈ [0 , 1 ]; (47) where n h is the number of elemen ts belon ging to the clu ster h . In this case, th e total sum of squares is T S S C DC − AW D = p X j = 1 K X k = 1 X i ∈ C k λ j k , ¯ y ¯ y i j − ¯ y g E j 2 + λ j k , Di s p d 2 W ( y c i j , g c E j ) ; (48) 4.2. W ithin su m of sq u ar es WSS The within sum o f squ ares correspo nds to the minimized criterion in the algo rithm. Th ere- fore, we recall the same results obtained in section 3.1 for the prop osed tw o adap ti ve distances: W S S = ∆ ( G , Λ , P ) . (49) 4.3. Between sum o f squ ar es BSS For the proposed tw o adap tiv e distances, the between sum of squares are: ST AN DARD BS S = p X j = 1 K X k = 1 n k d 2 W ( g k j , g E j ); (50) GC-A WD BS S GC − AW D = p X j = 1 K X k = 1 n k h λ j ¯ y ( ¯ y g k j − ¯ y g E j ) 2 + λ j Di s p d 2 W ( g c k j , g c E j ) i ; (51) CDC-A WD BS S C DC − AW D = p X j = 1 K X k = 1 n k λ j k , ¯ y ¯ y g k j − ¯ y g E j 2 + λ j k , Di s p d 2 W ( g c k j , g c E j ) . (52) 17 Based on th e quantities T S S , W S S and BS S , we can ev alua te the clustering procedure as in [24], where a quality of partition index is considered and is defined as: QP I = 1 − W S S T S S . (53) Holding the deco mposition of inertia for the W asserstein distanc e, the Q P I in dex can be written as follows: QP I = BS S T S S . (54) Equation 53 is eq ual to eq. 54 if and only if the to tal inertia c an b e deco mposed in two c ompo- nents: the inter-cluster in ertia ( BS S ) a nd th e intra- cluster inertia ( W S S ). W e showed in Eq. 6 that this is true f or W asserstein distance. Considering th e additivity of T S S , W S S and BS S , it is possible to detail the Q P I f or each v aria ble, for each cluster and for each componen t. 5. Experimental results Clustering (considere d an un supervised le arning task ) is an explor ati ve me thod ap plied to a dataset, where , in general, no inform ation about the class structure is av ailable to the researc her . Agreeing with Meila [25], there are m any competing criteria for com paring clusterings, with no clea r best choice. In an experimental evaluation, th e q uality of clustering alg orithms is of- ten based on their pe rforman ce accord ing to a specific qu ality ind ex. Experiments use either a limited number of re al-world instances or synthetic data. While real-world data is cru cial for testing the p roposed algorithm s, until now there is a lack of p ublic repo sitories fur nishing data described b y histograms. This is indee d true f or histo gram data; histograms are composed by synthesized raw data, while a large r epository of standa rd data exists. Therefore, a test b ed of synthetic, pre-c lassified data must be assembled by a generator . In orde r to asses the qu ality of th e p roposed algorithm s, we u sed synthetic data an d histog ram representatio ns of real data. For t h e an alysis of the synthe tic data, we set u p t wo Monte Carlo e x- periments that allo wed the g eneration of two hu ndred datasets of histog ram data of known cluster structure. W e th en ev aluated the qu ality of each algor ithm u sing an index fo r the ev aluatio n o f the ag reement a nd one for the a ccuracy between the obta ined par titions and th e initial on e. The real data analysis was performed using d ata from a set of meteorolo gical s tation s in the People’ s Republic of Chin a. I n the following we p resent how th e experiment h av e been set up and wha t indices have b een used for assessing the perform ances of the algo rithms. 5.1. Synthetic d ataset In this section , we will d escribe the perf ormances of the tw o Dy namic Clustering metho ds based on adaptive squared W asserstein distances when th e d ata ho ld structu res o f (co ntrolled) variability . In particular, we aim to show that GC-A WD has the b est perform ance when the data present a d i ff erent dispersion for each c ompon ent of each histog ram variable. Further, consider- ing a di ff eren t dispersion (for each componen t of the variables) for each cluster, we aim to show CDC-A WD gives better results when the data p resent a di ff erent disper sion for each compon ent of the histogram variables for each cluster . W e cannot compare the u se of the W asserstein distanc e with oth er distances fo r distrib ution s, because it is no t assured the decomposition in terms of mean and of disper sion for the other dis- tances presen ted in the literature (see [7]). 18 T o this en d, we set up two Monte Carlo experiments. Eac h experiment c onsisted of 10 0 g ener- ations of 150 synthetic objects (3 clusters o f 50 objects), descr ibed by two h istogram variables. Each experiment was initialized by choosing, for each cluster and each variable, four parameters (mean, standard de viatio n, s kewness a nd kurtosis), obtaining 3 ( clu ster s ) × 2( var iable s ) ba seline sets of fou r parameters. Starting fr om the baseline parameter s, we assigned a standard de v iation for each param eter a nd repeated the following steps 100 times: 1. for each cluster and each variable, we generated 50 sets o f param eters, adding a ran- dom error ( consistent with the standard de v iation of the parameter) to each baseline set of four parame ters on the basis of the o bject belonging to the class, obtaining 3( clu ster s ) × 2( var iable s ) × 50( ob ject s ) sets of four parameters; 2. for each object an d each variable, we g enerated 1,00 0 ran dom numb ers using a Pear son parametric distribution (for fu rther d etails see Johnson et al. [2 6, Pg. 15, Eqn. 12.33]) based on the sets of parameter s; 3. for each o bject and each v ariable , we compu ted a histogram using the algo rithm p resented in [18], obtaining 3( clu ster s ) × 2( v a ria ble s ) × 50 ( ob ject s ) histograms; 4. each clustering m ethod (the stand ard Dynam ic Clustering and th e four adap tiv e distanced based ones) was randomly initialized 50 times an d we chose the best final clu stering result (i.e., the one with lower cr iterion); 5. for each best solution we c omputed the Correc ted Rand Index [27] to in dicate th e qu ality of the result with respect to the initial labels of the data. T o measure the quality of the r esults f urnished by the dyna mic clustering algor ithm con sid- ering di ff eren t adaptiv e distances, an external validity index can be adopted. Indeed , in this case the da ta we re labeled on the b asis of the g enerator fun ctions u sed to set up the experimen ts. In this pape r , we use the Corrected Rand (CR) ind ex, defin ed b y Hu bert an d Arabie [27] fo r co m- paring two p artitions. C R takes its values in [ − 1 , 1] interval, where th e value 1 ind icates perfect agreemen t between partitions; whereas values close to zer o (or negatives) cor respond to clus- ter agr eement fo und by chance. Further we u se th e accuracy index as the perc entage of correct classified objects. At the end of each experimen t, we rep ort the main statistics for the CR index and fo r th e accuracy . Experiment 1. Considering the variability o f a histogram variable as a c ombinatio n of the vari- ability of the moments associated with each histogra m descrip tion o f each individual, in the first e x periment we gener ated data in order to obtain two histogram variables that loca lly ( for each c luster) presen t the same histogram variability ( i.e., each h istogram variable fo r each clu s- ter has the same variability), while globally , the two histogr am variables present di ff eren t vari- ability . In orde r to obtain the da tasets, we fixed the baseline par ameter space sampling fro m 3( clu ster s ) × 2( var iable s ) × 4 ( par amet er s ) Normal distributions, as d escribed in T able 2. In o rder to have a visualization of the space of the paramete rs, Fig.1 shows the ellipses fo r each cluster and for each parameter . After the generation of 100 d atasets accordin g the the ba seline p arameters, we comp uted th e CR index as well as th e accuracy for each algorithm. The means an d the stan dard de viation s of th e agreemen t indices are sum marized in T ab le 3. T he results o f Ex perimen t 1 show th at th e Dynamic Clusterin g Alg orithms b ased on adaptive distances outper formed the c lassic Dy namic Clustering. In this case, it can be seen that GC-A WD an d CDC-A WD had similar perfor mances, but the algorithm GC-A WD allowed the best results in terms of the mean of CR index . 19 T able 2: Baseline se ttings for Expe riment 1: each coupl e are respe ctiv ely the mean and stand ard de viation of th e sampled Normal distributi ons used for extrac ting the parameters of a Pearson’ s family distribut ion that has been sampled for the ext raction of val ues summ arize d by histogram data. V ariable 1: space of pa rameters Mean St.dev Skewness Kurtosis Cluster 1 (- 4.8, 6) (12, 1.2) (-0.05, 0.1) ( 3.10, 0.1) Cluster 2 (- 4.8, 6) ( 9, 1.2) ( 0.00, 0.1) ( 3.00, 0.1) Cluster 3 (10.0, 6) ( 6, 1.2) ( 0.1 0, 0.1) ( 2.95, 0.1) V ariable 2: space of pa rameters Mean St.dev Skewness Kurtosis ( 17, 12) (6.0, 0.6) ( 0.1, 0.1) (2.95, 0.1) (-17, 12) (4.6, 0.6) ( 0.0, 0.1) (3.00, 0.1) ( 0, 12) (3.3, 0.6) (-0.1 , 0.1) (3.10, 0.1) −40 −20 0 20 40 −40 −20 0 20 40 C1 C2 C3 Mean for V1 Mean for V2 5 10 15 2 4 6 8 10 12 14 C1 C2 C3 St. dev. for V1 St. dev. for V2 −0.2 −0.1 0 0.1 0.2 0.3 −0.2 −0.1 0 0.1 0.2 0.3 C1 C2 C3 Skew for V1 Skew for V2 2.8 2.9 3 3.1 3.2 3.3 2.8 2.9 3 3.1 3.2 3.3 C1 C2 C3 Kurtosis for V1 Kurtosis for V2 Figure 1 : Experiment 1: 95% ellipse s for t he bi varia te distributio ns of the parameter s of th e distrib utions defined in T able 2. T able 3 : Mean of the best 100 CR indices and accuracie s for Experiment 1 ST ANDARD GC-A WD CDC-A WD Mean Best CR (std) 0.497 9 ( 0.009 7) 0.6596 (0.0 072) 0.62 92 (0.0094) Mean Best Accuracy (std) 0.7867 (0.041 0) 0.8667 (0.0 3 40) 0.866 7(0.03 40) 20 Experiment 2. The secon d exper iment was b ased on th e generatio n of h istogram data in order to ob tain two histogram variables wher e, for each histogram variable and for each cluster th ere is di ff eren t variability , while g lobally , the two variables p resented a similar variability . In orde r to ob tain the 1 00 datasets, we fixed the ba seline par ameter sp ace samplin g from 3 ( clu ster s ) × 2( var iable s ) × 4 ( par amet er s ) Normal distrib utio ns, as described in T able 4. In order to have a visualizatio n of the space of the parameters, Fig.2 sho ws the ellipses for each T able 4: Baseline se ttings for Expe riment 2: each coupl e are respe ctiv ely the mean and stand ard de viation of th e sampled Normal distributi ons used for extrac ting the parameters of a Pearson’ s family distribut ion that has been sampled for the ext raction of val ues summ arize d by histogram data. V ariable 1: space of parameter s Mean St.dev Skewness Kurtosis Cluster 1 ( 0.0, 0.8) (3.6, 0.3) (-0.04, 0.01) (2.90, 0.03) Cluster 2 (-0.5, 1.6) (2.7, 0.2) ( 0.0 3, 0.01) (3 .05, 0.03) Cluster 3 ( 2.8, 2.4) (1.8, 0.1) ( 0.10, 0.01) (3.20, 0.03) V ariable 2: space of parameter s Mean St.dev Skewness Kurtosis ( 0.0, 2.3) (4.1, 0.1) ( 0.10, 0.01) (3.20, 0.03) (-3.0, 1.6) (3.4, 0.2) ( 0.03, 0.01) (3.05 , 0.03) ( 1.1, 0.8) (2.8, 0.3) (-0.03, 0.01) (2.90, 0.03) cluster and for each parameter . After the gene ration of 100 datasets according to the baseline parameters, we computed the CR −5 0 5 −6 −4 −2 0 2 4 6 8 C1 C2 C3 Mean for V1 Mean for V2 1.5 2 2.5 3 3.5 4 4.5 1.5 2 2.5 3 3.5 4 4.5 C1 C2 C3 St. dev. for V1 St. dev. for V2 −0.05 0 0.05 0.1 −0.05 0 0.05 0.1 C1 C2 C3 Skew for V1 Skew for V2 2.9 3 3.1 3.2 2.9 2.95 3 3.05 3.1 3.15 3.2 3.25 C1 C2 C3 Kurtosis for V1 Kurtosis for V2 Figure 2 : Experiment 4: 95% ellipse s for t he bi varia te distributio ns of the parameter s of th e distrib utions defined in T able 4. index as well as the accuracy f or e ach alg orithm. The mean s an d th e stand ard d eviations of the agreemen t indices are s u mmarized in T able 5. T able 5 : Mean of the best 100 CR indices and accuracie s for Experiment 2 ST ANDARD GC-A WD CDC-A WD Mean Best CR (std) 0.425 5 ( 0.006 4) 0.4248 (0.0063) 0.53 76 (0.0 095) Mean Best Accuracy (std) 0.6867 (0.046 4) 0 .6867 (0.046 4) 0.7133 (0. 0452) The second experiment sho ws that the Dy namic Clustering Algorithms based on adaptive distances b ased on th e schem as of CDC-A WD outper formed the classic D ynamic Clusterin g. It can be seen th at CDC-A WD allowed the b est results i n terms of the mea n CR in dex and accuracy , while GC-A WD did not outperf orm th e ST ANDAR D a lgorithm. W e have empirical e vide nce that each of the two schemas of adaptive distances allows better 21 clustering results, wh en considering the tw o structures o f disper sion listed at the beginning of the section. 5.2. A r eal dataset: Climatic data fr om China In this section, we use dynamic clustering based on adap tiv e distances on a d ataset where the de scriptors a re the d istributions of the me an month ly temp erature, the pressure, th e relative humidity , the wind speed and the total month ly pr ecipitations reco rded in 6 0 m eteorolog ical stations in the People’ s Repu blic of Chin a 1 , recorded from 1 840 to 1988. For th e pur poses of this paper, we hav e consider ed the distributions of the variables for Januar y (the co ldest month ) and Ju ly (the ho ttest m onth), so our initial data is a 60 × 10 matrix, where th e gen eric ( i , j ) cell con tains the histogram of the values for the j th variable of the i th meteorolo gical station, defined by means of th e algorithm pro posed b y Irpino and Romano [18]. T able 6 describe s th e variables and the main statis tics r elated to the glob al bar ycenter fo r each variable ( ¯ y and s a re, respectively , the mean and the standard d eviation of the histog ram bary center, while T S S is computed acc ording the for mulation of the T otal Su m o f Squares p roposed by V erde and Ir pino [6] as a measure of variability of the histogram v ariable) . T able 7 shows the main characteristics of a subset of the o bserved s tation s. The mean , standard ♯ V a riable ¯ y j s j T S S j Y 1 Mean Relati ve Humidity (perce nt) Jan 67 .9 7.0 127.9 Y 2 Mean Relati ve Humidity (perce nt) July 73.9 4.5 114.2 Y 3 Mean Station Pressure (mb) Jan 968.3 3.6 5 864.7 Y 4 Mean Station Pressure (mb) July 951 .1 3.0 5084 .4 Y 5 Mean T emp erature (Cel. × 10) Jan -12 .1 17.3 114.8 Y 6 Mean T emp erature (Cel. × 10) July 252 .3 10.5 113.5 Y 7 Mean W ind Speed (m / s) Jan 2.3 0.6 1.1 Y 8 Mean W ind Speed (m / s) July 2.3 0.5 0.6 Y 9 T otal Precipitation (mm) Jan 18.2 14 .3 519.6 Y 10 T otal Precipitation (mm) July 144.6 8 0.8 499.9 T able 6: Basic statistics of the histogram varia bles: ¯ y j and s j are the mean and the standard devi ation of the barycent er histogram for the j th v ariable, while T S S j is the T otal Sum of Squares for the j th v ariable. deviation, th e skewness and the k urtosis are reported for each histogr am descr iption. Th e last two m easures co rrespon d to the th ird an d f ourth standar dized mo ments. T able 7 shows also the spatial coord inates of the stations (longitu de, lati tu de and elev ation in meters). This information is no t relev ant to the analysis except for the interp retation of the o btained cluster, indeed the spatial coordinates do not play a n acti ve role in the an alysis. I n this case, we use c lustering in an explorative fashion . For the sak e of brevity , we sho w the r esults of only one method. Th e choices for the method and number of clusters were made according to the maximu m value observed fo r the Calinski and Harabasz [2 8] index (CH). The CH index is a validity ind ex that is g enerally used for the determ ination of the numb er of clusters, and c an be viewed as a Pseudo -F index. I f K is the n umber of clusters of a partition of a set of n ind i v iduals, W S S ( K ) th e W ithin Sum of Squares and BS S ( K ) the Between Sum of Squ ares, the C H index is computed as follows: C H ( K ) = BS S ( K ) / ( K − 1) W S S ( K ) / ( n − K ) . (55) 1 Dataset URL: http://ds s.ucar.edu/data s ets/ds578.5/ 22 T able 7 : Dataset from 60 climatic stations in China: main characte ristics for each station. Y 1 Y 2 Y 3 ID St.Name Long. Lat . Elev . Mean St.dev . Skew . Kurt. Mean St.dev. Skew . Kurt. Mean St.dev. Skew . Kurt. 1 Hailaer 119.75 49.22 612.80 7 81.77 59 .34 1.26 5.78 708.21 54. 58 -0.88 3.28 9,477.82 2 5.02 -0.72 3.77 2 NenJiang 125. 23 49.17 242.20 7 40.16 48 .88 0.31 3.24 779.10 38 .95 -0.32 1.89 9,920.16 28.91 -0.13 2.42 3 BoKeTu 121.92 48. 77 739.40 690.4 3 46.44 1.22 5.68 78 2.71 4 2.46 -0.51 2.32 9,318. 44 39.43 -0.04 2.13 4 QiQiHaEr 123 .92 47.38 145.9 0 688.03 6 7.16 0.1 1 3.08 727.0 1 57. 91 -0.53 3.16 10,051. 41 26.14 -0.10 2.33 · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · 59 ZhanJian g 110.40 21.2 2 25.3 0 783.32 64.65 -0.98 3.59 808. 81 20 .74 -0.06 2.90 10,166 .05 20.78 -0.34 2.95 60 HaiKou 110.3 5 20.03 1 4.10 851. 33 41.81 -0.62 3.2 3 819.64 28.38 -0.48 3.97 10, 175.49 26.0 3 0.66 4.59 Y 4 Y 5 Y 6 ID STNAME Mean St.dev . Skew . Kurt. Mean St.dev . Skew . Kurt. Mean St.d ev . Skew . Kurt. 1 Hailaer 9,339.85 16.68 -0.39 3.67 -27 5.31 32.4 0 -0.86 3.72 200.71 13.60 0.96 4.47 2 NenJiang 9,756.11 1 8.44 0.5 0 2.66 -254 .96 26.44 -0.11 2.42 206.27 9.76 0 .44 2.70 3 BoKeTu 9,224.33 29.67 0.71 2.56 - 216.13 2 6.50 -0.69 3.77 179.4 6 10.87 0.66 4.04 4 QiQiHaEr 9,861.43 15.68 0.08 1.95 -197. 70 23.36 -0.49 2.64 227.18 10.68 0.06 2.69 · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · 58 NanNing 9,946. 66 19.69 -1.41 4.35 129.52 18.88 -0.69 3.18 283.90 5.96 -0.68 4.29 59 ZhanJian g 10,012.9 1 13.96 0.00 3.34 15 7.52 16. 68 -0.45 2.66 288.28 5.42 0.12 2.4 8 60 HaiKou 10,02 8.93 18.09 0.44 3.63 174.09 17.78 0.02 2.55 284 .05 5.49 -0.27 3.11 Y 7 Y 8 Y 9 Y 10 ID STNAME Mean St.d ev . Skew . Kurt. Mean St.dev . Skew . Kurt. Mean St.dev . Skew . Kurt. Mean St.d ev . Skew . Kurt. 1 Hailaer 18.80 7.60 0.77 3.47 27.84 6.51 1.25 6.66 34.79 24.92 1.27 4.55 886 .70 42 4.09 0. 43 3.63 2 NenJiang 15.99 6.78 1.25 3.67 26.97 7.37 0.64 2.72 31.67 22.05 1.19 4.34 1 ,397.45 683.74 0.73 4.42 3 BoKeTu 33 .97 7.44 0.01 3.14 2 0.44 4.32 0.46 2.94 25.4 1 23 .90 1.06 3 .40 1,362. 48 605.9 8 0.86 3. 32 4 QiQiHaEr 28.32 6.89 0.36 2.52 29.71 5.09 0.03 2.64 17.49 17. 74 1.17 4 .52 1,321. 97 753.2 0 0.77 3. 42 · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · · 58 NanNing 16.83 4.33 -0.04 2.57 20.37 4.04 -0.30 2.30 317.7 3 293.68 1 .59 6.26 2,0 03.83 87 2.76 0. 36 2.77 59 ZhanJian g 31.0 1 9.34 1 .27 5.62 28.86 5.67 0 .23 2.70 221 .59 248.06 1.49 4.87 2,0 80.67 1,146.92 0.56 3.08 60 HaiKou 32.65 8.63 0.62 2 .53 2 5.84 6.62 -0.15 2.14 21 2.79 185.36 0.77 2.45 1, 899.15 1,029.41 1.46 6.26 In this case, we executed 100 initializations f or each c lustering algorith m and a nu mber of clu s- ters of the p artition going from 2 to 10 . Amo ng th e three a lgorithms, the method that allo wed a g ood b ehavior f or th e C H index was CDC-A WD. Ind eed, as it is shown in Fig. 3, it rea ches a maximu m when we cho ose a p artition in K = 8 clu sters, while the other methods d o not present an absolute max imum su ggesting a scarce cluster structure of the data. For K = 8, CDC- A WD registered an high value f or the Q P I index (0 . 928) , while the d ynamic cluster ing ba sed on non -adaptive distances (ST ANDAR D) h ad a QP I e qual to 0 . 873, an d GC-A WD h ad QP I ’ s respectively equal to 0 . 825. T able 8 shows the final set o f λ weig hts associated with th e compo - 2 3 4 5 6 7 8 9 10 20 30 40 50 60 70 80 90 100 Clusters CH index STANDARD GC−AWD CDC−AWD Figure 3: CH indices for the three algorithms and for K = 2 , . . . , 10. nents (the mean and the disp ersion)of the distan ce in each c luster accordin g to th e CDC-A WD algorithm . Th e λ ’ s can b e consider ed as normalization factors f or the comp onent of the variables; indeed, we can s ee a h igh value fo r the weights associated with the v aria bles Y 7 and Y 8 (the Wind Speed in Januar y and in July) that h av e, in g eneral, a lower T S S . Because we u sed the CDC- A WD algo rithm, the weights take in to acco unt the within c luster variability of the c ompon ents of th e variables accordin g to ea ch cluster, so, in this case, we must read the weights cluster b y 23 1 2 3 4 5 6 7 8 Figure 4: Map of the 60 stations clustered into K = 8 classes using CDC-A WD. E ach station is marked with a symbol that represents the cluster to which it belongs. cluster . In deed, the con straint of product equal to 1 related to the weights computed acco rding to Eqs. 29 and 30 are r eferred to each cluster and cannot generally be compared acro ss th e d i ff erent clusters. Figu re 4 is the map of the 60 stations. E ach station is marked with a symbol that repre- T able 8: W eights genera ted by CDC-A WD on China data, K = 8. n k ’ s are the cardin ality of the obtaine d clusters. Y 1 Y 2 Y 3 Y 4 Y 5 n k ¯ y Di s p ¯ y Di s p ¯ y Di s p ¯ y Di s p ¯ y Di s p Cluster 1 10 0.8 66 0.558 1.230 1.17 7 0.08 0 0. 465 0. 067 0.582 0 .904 14.62 8 Cluster 2 8 1.25 1 0. 234 1. 649 0.325 0.028 1.6 36 0.0 35 1.684 0. 311 1.696 Cluster 3 2 0.05 7 0. 781 0. 050 0.114 0.593 0.7 10 528.519 0.476 0.091 10.4 24 Cluster 4 11 0.3 29 0.214 0.480 0.22 2 0.79 4 1. 784 1. 037 1.394 1 .115 6.680 Cluster 5 3 0.12 1 0. 183 0. 095 1.253 0.189 0.0 38 0.1 69 0.048 2. 149 7.179 Cluster 6 6 0.19 9 1. 292 0. 654 1.337 0.009 0.0 06 0.0 10 0.005 1. 489 25.620 Cluster 7 8 1.56 1 0. 161 0. 226 0.397 0.014 1.8 68 0.0 17 0.191 1. 236 5.317 Cluster 8 12 0.6 91 0.811 1.346 1.10 1 0.54 8 0. 514 1. 661 0.467 0 .280 21.02 0 Y 6 Y 7 Y 8 Y 9 Y 10 n k ¯ y Di s p ¯ y Di s p ¯ y Di s p ¯ y Di s p ¯ y Di s p Cluster 1 10 139.669 22.6 29 38.096 25.118 77 .594 55.284 0.090 0.01 0 0.005 0.0 01 Cluster 2 8 2.92 9 11.920 24.5 85 12 .705 66.03 0 15 .128 14.789 0.42 0 0.023 0.0 03 Cluster 3 2 1 19.150 7.413 18. 507 219.00 7 22 .134 34.659 2.106 0.23 4 0.000 0.0 00 Cluster 4 11 6.2 48 11.517 10. 351 2 9.491 16.2 68 5 3.147 0.423 0.04 9 0.016 0.0 01 Cluster 5 3 2.57 2 17.131 96.8 03 103.463 105.6 39 2 6.841 0.261 0.09 5 0.185 0.0 73 Cluster 6 6 6.75 9 126.53 1 34 3.066 93.389 632 .098 205.222 1.147 0. 135 0.03 2 0.002 Cluster 7 8 1 8.122 11.068 73. 259 2 8.923 112 .579 36.336 6.912 0.31 6 0.009 0.0 02 Cluster 8 12 24.848 12.61 1 21.428 23.214 24. 027 1 6.495 0.038 0.02 0 0.009 0.0 02 sents the cluster to w hich it b elongs. Note that th e cluster s represent geog raphical areas th at are consistent with the climate zones of China. In ord er to comment on the clusterin g results and consider ing the additiv e prop erties of the W S S , the BS S , and (as consequence) T S S with respect to the components of the variables, the variables and the clusters, we pr esent di ff erent versions of the QP I in T able 9, as prop osed by De Carvalho et al. [24] : • The QP I ’ s de noted by BS S ¯ y k j T S S ¯ y k j and BS S Di s p k j T S S Di s p k j for the com ponen ts an d by BS S k j T S S k j for the vari- ables in each cluster , a llow us to measure the hom ogeneity of a clu ster with respect to the compon ents and the v ariab les: the closer they are to 1, th e more likely the cluster is to 24 8000 8200 8400 8600 8800 9000 9200 9400 9600 9800 10000 0 0.1 0.2 0.3 0.4 0.5 Cl 8 Cl 7 Cl 6 Cl 5 Cl 4 Cl 3 Cl 2 Cl 1 Barycenter Figure 5: T he genera l prototype and the cluster prototypes for the varia ble Y 4 : Mean Station Pressure (mb) in July . contain similar objects. • The Q P I ’ s denoted b y BS S ¯ y k T S S ¯ y k and BS S Di s p k T S S Di s p k for the comp onents and b y BS S k T S S k for all the vari- ables in each clu ster , allow the measur ement of the h omogen eity of a cluster with respect to all the co mponen ts an d all the variables: th e clo ser they ar e to 1, the mo re likely th e cluster is to contain similar objects for all the componen ts or all th e v ar iables. • The Q P I ’ s related to each com ponent BS S ¯ y j T S S ¯ y j and BS S Di s p j T S S Di s p j and each variable BS S j T S S j for all the clusters, allo w us t o understand the contribution of each component or of each variable to the cluster separation (The closer the QPI is to 1, the more the clusters are separated). • The g lobal Q P I ’ s related to the comp onents BS S ¯ y T S S ¯ y and BS S Di s p T S S Di s p and the Q P I related to all the variables B T allow u s to ev aluate the global q uality of the clu stering results for each compon ent and eac h variable. In general, the CDC-A WD a lgorithm reaches a QP I = BS S T S S = 0 . 9 28 for a n umber of clus- ters equal to K = 8. Specifically , the mean compo nent o f the v ariab les allo ws higher homo - geneity within clusters BS S ¯ y T S S ¯ y = 0 . 932 , while th e dispersion compo nent has a mediu m e ff ect BS S Di s p T S S Di s p = 0 . 553 . Considering the second group of Q P I ind ices fr om th e r esults pr esented in T able 9, cluster 3 contain s objects that are very similar . For dispersion structure, cluster 2 is slightly m ore glob ally heterog enous. Con sidering the e ff ect of each com ponent in clustering the curren t dataset, the two variables th at allow b etter h omogen eity in the clu ster are Y 3 and Y 4 (Mean Statio n Pressure in J an uary and in July), while the worst results are o bserved for Y 7 (Mean W ind Speed in January ) and Y 2 (Mean Relati ve Humidity in July). Finally , in Figs. 5 and 6, we show the pro totypes o f the o btained clusters for the better (in terms of Q P I ) and for the worst v ariable s in partitioning the 60 st atio ns into K = 8 clusters. 25 T able 9 : Q P I s result ing from CDC-A WD, which has bee n used for part itioning China’ s stations into K = 8 clusters. The QP I s are detaile d for each component, for each v ariable and for each cluster . QP I ¯ y BS S ¯ y k j T S S ¯ y k j Y 1 Y 2 Y 3 Y 4 Y 5 Y 6 Y 7 Y 8 Y 9 Y 10 BS S ¯ y k T S S ¯ y k Cluster 1 0.860 0.548 0 .072 0.933 0 .929 0.920 0 .092 0.132 0 .908 0. 800 0.85 7 Cluster 2 0.469 0.347 0 .604 0.365 0 .832 0.820 0 .435 0.720 0 .016 0. 596 0.64 1 Cluster 3 0.960 0.838 1 .000 1.000 0 .924 0.999 0 .986 0.978 0 .986 0. 854 0.99 9 Cluster 4 0.564 0.075 0 .837 0.995 0 .129 0.486 0 .606 0.524 0 .407 0. 899 0.95 7 Cluster 5 0.232 0.921 0 .992 0.926 0 .962 0.688 0 .433 0.881 0 .316 0. 993 0.96 9 Cluster 6 0.304 0.060 0 .868 0.781 0 .430 0.860 0 .040 0.359 0 .174 0. 750 0.68 1 Cluster 7 0.952 0.844 0 .956 0.902 0 .827 0.973 0 .543 0.137 0 .770 0. 423 0.91 1 Cluster 8 0.881 0.435 0 .825 0.998 0 .763 0.840 0 .773 0.730 0 .889 0. 792 0.98 5 BS S ¯ y j T S S ¯ y j 0.781 0 .525 0. 9 23 0 .990 0. 7 90 0 .897 0.4 65 0 .564 0. 6 94 0.87 9 0.932 BS S ¯ y T S S ¯ y QP I Di s p BS S Dis p k j T S S Dis p k j Y 1 Y 2 Y 3 Y 4 Y 5 Y 6 Y 7 Y 8 Y 9 Y 10 BS S ¯ y k T S S Dis p k Cluster 1 0.123 0.204 0 .110 0.091 0 .002 0.197 0 .052 0.075 0 .783 0. 792 0.45 7 Cluster 2 0.297 0.369 0 .359 0.066 0 .712 0.612 0 .522 0.362 0 .804 0. 769 0.59 6 Cluster 3 0.959 0.379 0 .524 0.641 0 .943 0.385 0 .984 0.898 0 .398 0. 833 0.92 3 Cluster 4 0.684 0.096 0 .147 0.192 0 .022 0.393 0 .566 0.245 0 .459 0. 883 0.57 2 Cluster 5 0.244 0.613 0 .318 0.251 0 .884 0.664 0 .245 0.338 0 .176 0. 960 0.78 9 Cluster 6 0.095 0.059 0 .151 0.152 0 .131 0.302 0 .135 0.173 0 .242 0. 599 0.24 7 Cluster 7 0.629 0.550 0 .271 0.142 0 .364 0.396 0 .033 0.148 0 .629 0. 028 0.39 8 Cluster 8 0.301 0.103 0 .039 0.012 0 .136 0.147 0 .449 0.281 0 .915 0. 889 0.67 6 BS S Dis p j T S S Dis p j 0.413 0 .258 0. 1 69 0 .126 0. 4 48 0 .358 0.4 22 0 .235 0. 7 68 0.84 0 0.553 BS S Dis p T S S Dis p QP I BS S k j T S S k j Y 1 Y 2 Y 3 Y 4 Y 5 Y 6 Y 7 Y 8 Y 9 Y 10 BS S k T S S k Cluster 1 0.841 0.519 0 .078 0.923 0 .918 0.909 0 .087 0.125 0 .900 0. 799 0.84 1 Cluster 2 0.465 0.348 0 .599 0.358 0 .830 0.817 0 .439 0.715 0 .126 0. 605 0.64 0 Cluster 3 0.960 0.770 1 .000 1.000 0 .933 0.999 0 .985 0.968 0 .977 0. 846 0.99 9 Cluster 4 0.574 0.076 0 .829 0.995 0 .123 0.481 0 .604 0.514 0 .410 0. 899 0.95 5 Cluster 5 0.234 0.910 0 .990 0.914 0 .958 0.685 0 .410 0.864 0 .298 0. 992 0.96 4 Cluster 6 0.295 0.060 0 .861 0.771 0 .418 0.853 0 .046 0.351 0 .178 0. 745 0.67 0 Cluster 7 0.948 0.835 0 .953 0.895 0 .816 0.971 0 .523 0.138 0 .763 0. 404 0.90 5 Cluster 8 0.865 0.404 0 .803 0.998 0 .737 0.820 0 .753 0.704 0 .893 0. 814 0.98 3 BS S j T S S j 0.770 0 .512 0. 9 18 0 .989 0. 7 80 0 .891 0.4 62 0 .549 0. 7 01 0.87 7 0.928 B / T 26 0 5 10 15 20 25 30 35 40 45 50 0 0.2 0.4 0.6 0.8 1 1.2 Cl 8 Cl 7 Cl 6 Cl 5 Cl 4 Cl 3 Cl 2 Cl 1 Barycenter Y 7 Figure 6: The genera l prototyp e and the cluster prototypes for the variabl e Y 7 : Mean W ind Speed (m / s) in Janua ry . 6. Conclusions In this p aper, we presented two n ew alg orithms for the Dynamic Clustering o f histogram data based on two adaptive squared W a sserstein distances. The adap tiv e clustering dynamic algorithm locally optim izes an ad equacy criterion that measures the fitting between the c lusters and their r epresentatives (the bary centers) based on distances that chang e at each iteration. The first algorithm ( GC-A WD) uses a globally adaptive squa red W asserstein distance for eac h one of the two (me an and dispersion ) compon ents of the qua ntile fu nctions of histogram s. The second algorithm (CDC-A WD) uses a locally adaptive square d W asserstein distance for each of the two (mean and disp ersion) co mponen ts of the quan tile functio ns of histograms th at changes acco rding to each cluster . The a dvantages o f using such adaptive distances is the ability to identify clusters of di ff erent sizes and shapes, while standard DCA (like in k- means case) finds spherical clu sters. Starting from an initial ra ndom p artition of the o bjects, the adap ti ve DCA alternate three steps until conver g ence wh en the adequacy criterion reaches a stationary value, which rep resents a local (with in sum of squares) min imum. In the first two steps, the algor ithms g iv e the solu tion for th e b est pr ototype of each cluster as well as the solution for the be st adap ti ve distance (locally for each cluster). In the last step, th e algorithm g iv es the solution for the best p artition. Th e conv ergen ce as well as the time comp lexity of the algo rithms were addressed. T wo experimental ev aluations of t h e proposed methods were presented. The first was performed using two baseline settings f or the generation o f two h undre ds synth etic datasets in order to show the usefuln ess of each scheme of adaptive W asserstein distance in iden tifying a starting class structure in the data. The second, using a r eal-word dataset, showed th e use of such algo rithms in an explor atory fashion . All th e algor ithms b ased on adaptive distances were com pared w ith the standard dynamic clustering algorithm (i.e., based on a standard squared W asserstein distance). The experimen t co nducted on artificial d ata showed tha t th e adaptive alg orithms outperf ormed the standa rd one in terms of acc uracy in identifying the initial c lass structu re of th e data. The experiment on the real-word dataset also dem onstrated tha t the a daptive algo rithms wer e able to reach a g ood quality of partition that was generally greater than th e standard non-ad aptiv e algorithm . 27 References [1] H. H. B ock, E. Dida y , Analysis of S ymbolic Data. Explora tory Methods for Extracting Statisti cal Information from Comple x Data, Springer , Berlin, 2000. [2] L. Billard , E. Diday , Symbolic Data Analysis: Conceptual Stati stics and Data Mining (Wi ley Series in Computa- tional Statisti cs), John W iley & Sons, 2007. [3] E. Diday , M. Noirhomme-Fraiture, Symbolic Data Analysis and the SOD AS Software, Wile y-Interscie nce, Ne w Y ork, NY , USA, 2008. [4] A. Irpino, R. V erde, A new wasserstei n based distance for the hierarchica l clustering of histogram symbolic data, in: Batanj eli, Bock, Ferligoj, Ziberna (Eds.), Data Sc ience and Classificatio n, Springer , Berlin, 2006, pp. 185–192. [5] R. V erde, A. Irpino, Dynamic clusterin g of histograms using wasserstei n metric, in: A. Rizzi, M. V ichi (Eds.), Proceedi ngs in Computa tional Statistics, COMPST A T 2006, Co mpstat 2006, Physica V erlag, Heide lberg, 2006, pp. 869–876. [6] R. V erde, A. Irpino, Comparing histogram data using a mahalanob is-wasserstein dista nce, in: P . Brito (Ed.), Proceedi ngs in Computati onal Statistics, COMPST A T 2008, Compstat 2008, Springer V erlag, Heidelbe rg, 2008, pp. 77–89. [7] R. V erde, A. Irpino, Dynamic clustering of histogram data: using the right metric, in: P . Brito, P . Bertrand, G. Cucumel, F . De Carv alho (Eds.), Selecte d cont ributions in data analysis and classificat ion, Springer , Be rlin, 2008, pp. 123–134. [8] E. Diday , La m ´ ethode des nu ´ es dynami que, Revue de Statisti que Appliqu ´ ee 19 (1971) 19–34. [9] E. Diday , J. C. Simon, Clustering analysis, in: K. Fu (Ed. ), Digital Pattern Classification, S pringer , Berlin, 1976, pp. 47–94. [10] A. L. Gibbs, F . E. Su, On choosing and bounding probabili ty metrics, Intl. Stat. Re v . 7 (2002) 419–435. [11] Y . Rubner , C. T omasi, L. J. Guibas, The earth mover’ s distance as a metric for image retrie v al, Int. J. Comput. V ision 40 (2000) 99–121. [12] C. L. Mallo ws, A note on asymptotic joint normality , Anna ls of Mathematics S tatist ics 43 (1972) 508–51 5. [13] E. Lev ina, P . J. Bicke l, The earth move r’ s distance is the m allo ws distance : S ome insights from statistics, in: Proceedi ngs of the 8th Internat ional Conference On Computer V ision, volume 2, IEE E Computer Society , 2001, pp. 251–256. [14] F . A. T . De Carva lho, Y . Leche val lier , Parti tional clusteri ng algorit hms for symbolic interv al data based on single adapti ve distances, Patt ern Recogniti on 42 (2009) 1223–1236. [15] F . A. T . De Carv alho, Y . L eche va llier , Dynamic clustering of interv al-v alued dat a based on adapti ve quadratic distanc es, T rans. Sys. Man Cyber . Part A 39 (2009) 1295–1306. [16] R. M. C. R. de Souza, F . A. T . De Carval ho, A clustering method for m ixe d feature -type s ymbolic data using adapti ve squared euclidea n di stances, in: HIS ’07: Procee dings of the 7th Internationa l Confer ence on Hybrid Intell igent Systems, IEEE Computer Socie ty , W ashington, DC, USA, 2007, pp. 168–173. [17] F . A. T . De Carva lho, R. M. C. R. De Souza, Unsupervised pattern recognit ion models for mixed feature –type symbolic data, Pattern Recogniti on Letters 31 (2010) 430–443. [18] A. Irpino, E . Romano, Optimal histogram representat ion of large data sets: Fisher vs piece wise linear approxima- tion, in: M. Noirhomme-Fraiture, G. V enturini (Eds.), EGC, volume RNTI-E-9 of Revue des Nouvell es T echnolo - gies de l’Information , C ´ epadu ` es- ´ Editions, 2007, pp. 99–110. [19] C. V illan i, T opics in Optimal Tra nsportation, AMS, 2003. [20] J. A. Cuesta-Albe rtos, C. Matr ´ an, A. T uero-Diaz, Optimal transporta tion plans and con ver gence in distribut ion, Journ. of Multi v . An. 60 (1997) 72–83. [21] R. G. Clark, M. S. Rae, A class of wasserstein m etric s for probabil ity distributi ons, Michigan Math. J. 31 (2) (1984) 231–240. [22] E. Diday , G. Gov aert, Classificati on automatique av ec distance s adaptat iv es, R.A.I.R.O. Informatique Computer Science 11 (1997) 329–349. [23] G. Celeux, E . Diday , G. Gov aert, Y . Leche va llier , H. Ralambondrain y , Cla ssification Automatique des Donn ´ e s, Bordas, Paris, 1989. [24] F . De Carval ho, P . Brito, H. H. Bock, Dynamic clustering for interv al data based on l2 distanc e, Computa tional Statist ics 2 (2006) 231–250. [25] M. Meila, Compari ng clusteri ngs: an axiomatic vie w , in: In ICML 05: Proceedings of the 22nd internationa l confere nce on Machine learning, A CM Press, 2005, pp. 577–584. [26] N. L. Johnson, S. K otz, N. Balakri shnan, Continuous Uni var iate Distrib utions, V olume 1, Wil ey-Intersci ence, 1994. [27] L. Hubert, P . Arabie, Comparing partit ions, Journal of Cla ssification 2 (1985) 193–218. [28] R. B. Calinski, J. Harabasz, A dendrite method for cluster analysis, Communicati ons in Statistics 3 (1) (1974) 1–27. 28

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment