Spectral Ranking using Seriation

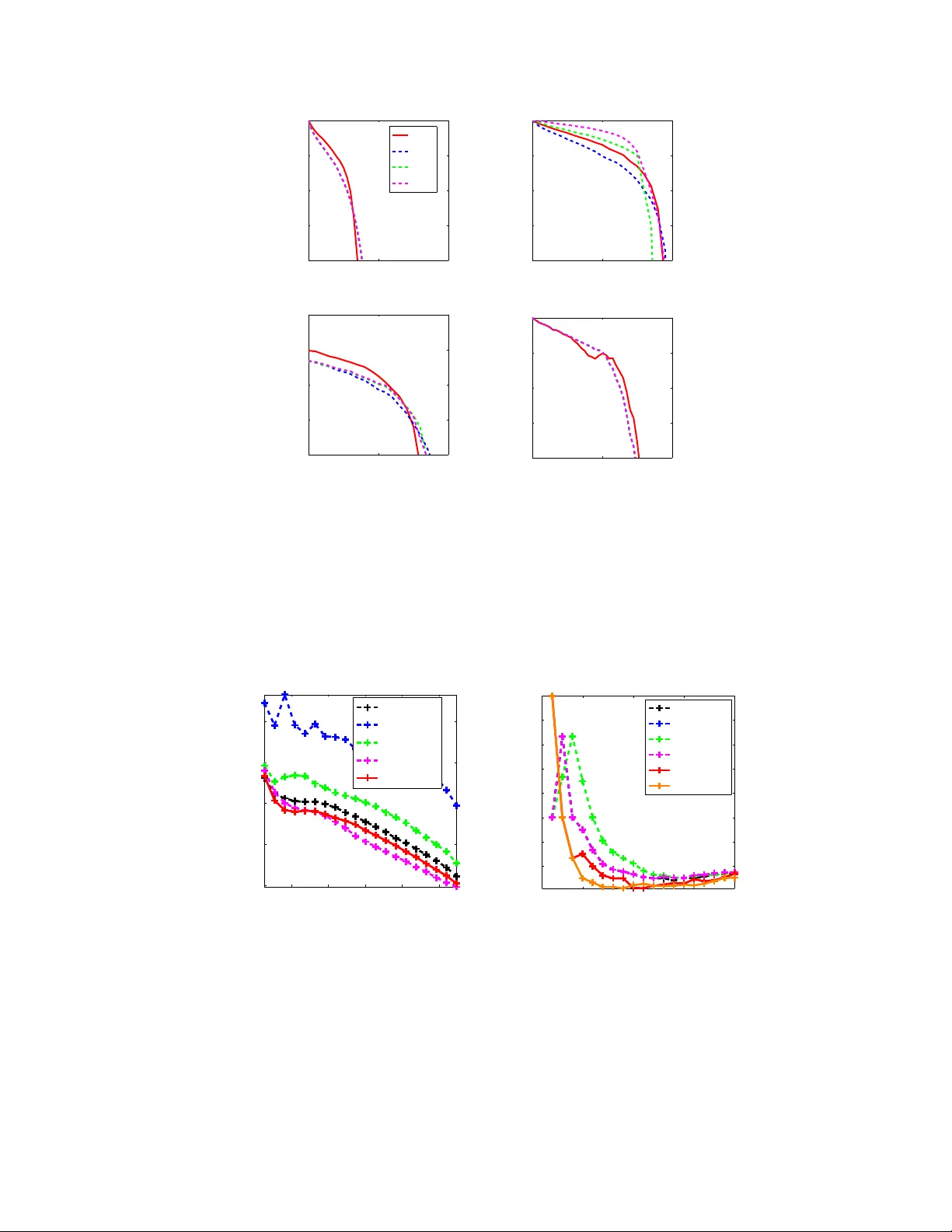

We describe a seriation algorithm for ranking a set of items given pairwise comparisons between these items. Intuitively, the algorithm assigns similar rankings to items that compare similarly with all others. It does so by constructing a similarity …
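A minimal sketch of the idea may help make it concrete. It assumes a similarity that counts how often two items agree in their comparisons against every other item, followed by a spectral ordering via the Fiedler vector of the graph Laplacian. The function name `spectral_rank`, the `+1/-1/0` encoding of comparisons, the self-comparison convention on the diagonal, and the rule for picking the direction of the ordering are all illustrative assumptions, not details confirmed by the text above.

```python
import numpy as np


def spectral_rank(C):
    """Rank items from a pairwise comparison matrix (illustrative sketch).

    C[i, j] = +1 if item i beats item j, -1 if j beats i, 0 if not compared.
    Returns a list of item indices ordered from best to worst.
    """
    C = np.array(C, dtype=float)  # copy so the caller's matrix is untouched
    n = C.shape[0]
    # Assumption: each item counts as agreeing with itself.
    np.fill_diagonal(C, 1.0)

    # Similarity: S[i, j] = sum_k (1 + C[i, k] * C[j, k]) / 2, which is large
    # when i and j compare the same way against the other items.
    S = 0.5 * (n * np.ones((n, n)) + C @ C.T)

    # Graph Laplacian of the similarity matrix.
    L = np.diag(S.sum(axis=1)) - S

    # eigh returns eigenvalues in ascending order; the second eigenvector
    # is the Fiedler vector, and sorting it yields a seriation order.
    _, eigvecs = np.linalg.eigh(L)
    order = np.argsort(eigvecs[:, 1])

    # The Fiedler vector is defined up to sign, so keep the direction
    # that agrees with more of the observed comparisons.
    def agreements(o):
        pos = np.empty(n, dtype=int)
        pos[o] = np.arange(n)
        ahead = pos[:, None] < pos[None, :]
        return int(np.sum((C > 0) & ahead))

    if agreements(order[::-1]) > agreements(order):
        order = order[::-1]
    return order.tolist()
```

For example, calling `spectral_rank` on a complete, consistent comparison matrix returns the item indices from best to worst. With missing or noisy comparisons the same call still returns an ordering, though this sketch makes no claim about its accuracy.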

Authors: Fajwel Fogel, Alexandre d'Aspremont