Spectral Learning for Supervised Topic Models

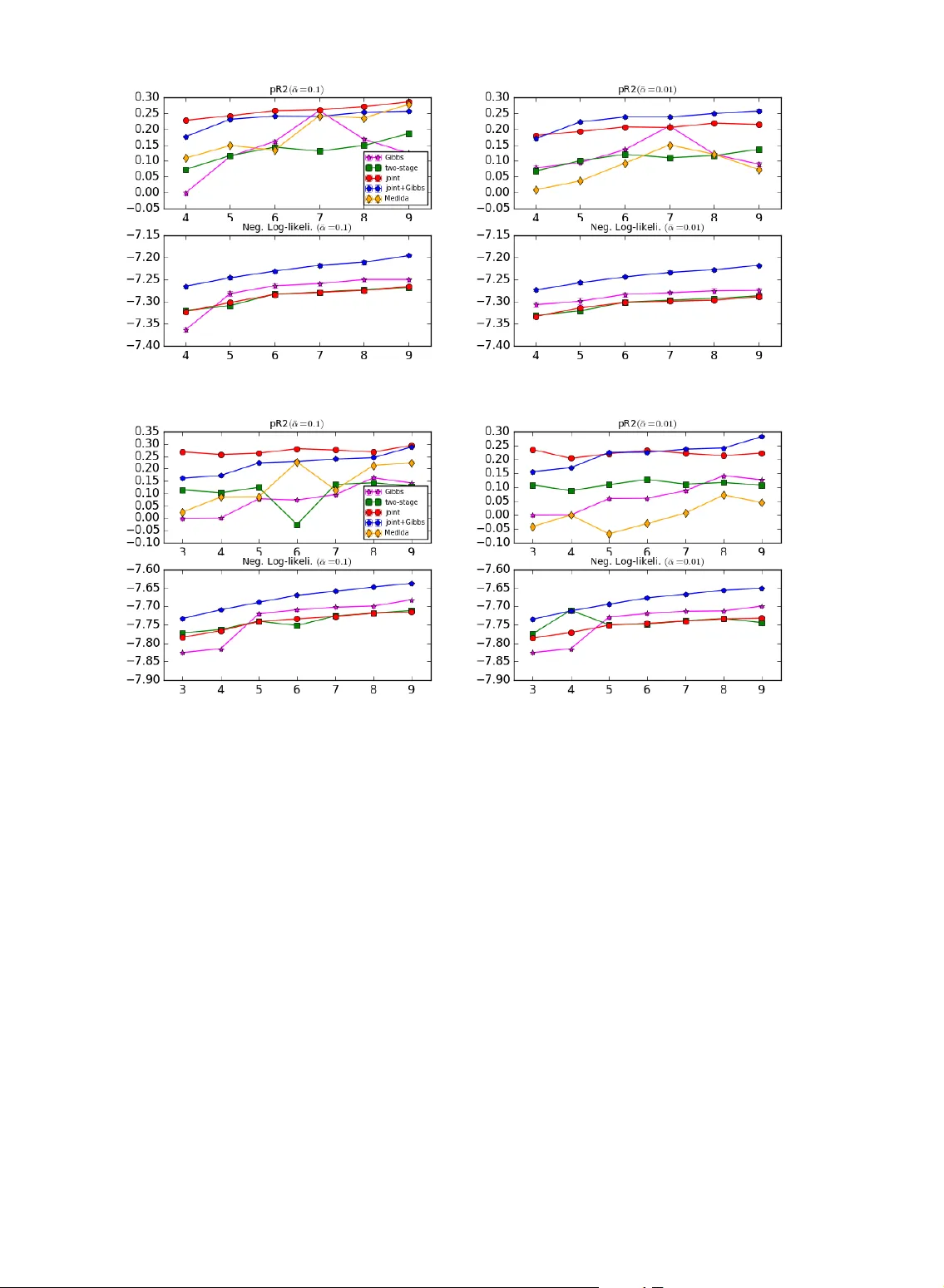

Supervised topic models simultaneously model the latent topic structure of large collections of documents and a response variable associated with each document. Existing inference methods are based on variational approximation or Monte Carlo sampling…
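To make "a response variable associated with each document" concrete, here is a minimal sketch of one common supervised topic model: an sLDA-style generative process. It rests on several assumptions. The response is Gaussian, and its mean is a linear function of the document's empirical topic frequencies. The names `generate_document`, `eta` and `sigma` are hypothetical. The code only samples synthetic data from such a model. It is not the spectral learning method of this paper, which the abstract does not spell out.

```python
import random
from typing import List, Tuple


def sample_dirichlet(alpha: List[float], rng: random.Random) -> List[float]:
    # A Dirichlet draw obtained by normalizing independent Gamma(alpha_k, 1) draws.
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def sample_categorical(probs: List[float], rng: random.Random) -> int:
    # Pick one index with probability proportional to probs.
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def generate_document(
    topics: List[List[float]],  # K topic-word distributions over a V-word vocabulary
    alpha: List[float],         # Dirichlet prior on per-document topic proportions (length K)
    eta: List[float],           # hypothetical per-topic regression weights (length K)
    sigma: float,               # std. dev. of the (assumed) Gaussian response noise
    n_words: int,
    rng: random.Random,
) -> Tuple[List[int], float]:
    """Sample (words, response) from an assumed sLDA-style supervised topic model."""
    theta = sample_dirichlet(alpha, rng)       # topic proportions for this document
    counts = [0] * len(topics)
    words: List[int] = []
    for _ in range(n_words):
        z = sample_categorical(theta, rng)     # latent topic assignment for this word
        counts[z] += 1
        words.append(sample_categorical(topics[z], rng))
    z_bar = [c / n_words for c in counts]      # empirical topic frequencies
    mean = sum(e * zb for e, zb in zip(eta, z_bar))
    y = rng.gauss(mean, sigma)                 # response tied to the latent topics
    return words, y


if __name__ == "__main__":
    rng = random.Random(0)
    topics = [[0.7, 0.2, 0.1], [0.1, 0.2, 0.7]]  # two toy topics over three words
    words, y = generate_document(topics, alpha=[0.5, 0.5], eta=[-1.0, 1.0],
                                 sigma=0.1, n_words=20, rng=rng)
    print(words, round(y, 3))
```

The response depends on the same latent topic assignments that generate the words. This coupling is what an inference method for a supervised topic model has to account for when it recovers the topics and the regression weights jointly.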

Authors: Yong Ren, Yining Wang, Jun Zhu