Secure Approximation Guarantee for Cryptographically Private Empirical Risk Minimization


Authors: Toshiyuki Takada, Hiroyuki Hanada, Yoshiji Yamada

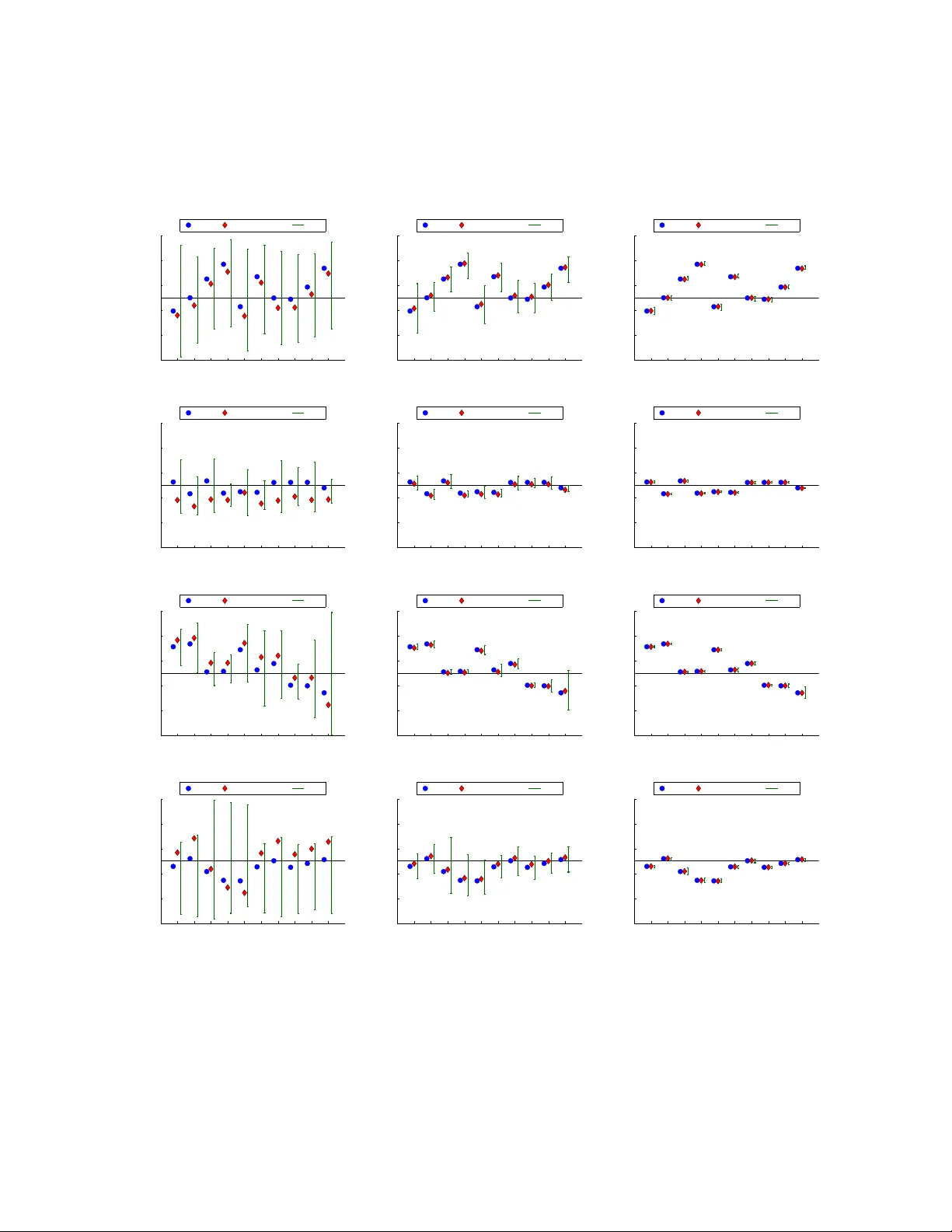

Toshiyuki Takada, Nagoya Institute of Technology, Nagoya, Aichi, Japan, takada.t.mllab.nit@gmail.com
Hiroyuki Hanada, Nagoya Institute of Technology, Nagoya, Aichi, Japan, hanada.hiroyuki@nitech.ac.jp
Yoshiji Yamada, Mie University, Tsu, Mie, Japan, yamada@gene.mie-u.ac.jp
Jun Sakuma, University of Tsukuba, Tsukuba, Ibaraki, Japan, jun@cs.tsukuba.ac.jp
Ichiro Takeuchi*, Nagoya Institute of Technology, Nagoya, Aichi, Japan, takeuchi.ichiro@nitech.ac.jp
*Corresponding author

January 17, 2018

Abstract

Privacy concerns have become increasingly important in many machine learning (ML) problems. We study empirical risk minimization (ERM) problems under secure multi-party computation (MPC) frameworks. The main technical tools for MPC have been developed based on cryptography. One of the limitations of current cryptographically private ML is that it is computationally intractable to evaluate non-linear functions such as logarithmic or exponential functions. Therefore, for a class of ERM problems such as logistic regression, in which non-linear function evaluations are required, one can only obtain approximate solutions. In this paper, we introduce a novel cryptographically private tool called the secure approximation guarantee (SAG) method. The key property of the SAG method is that, given an arbitrary approximate solution, it can provide a non-probabilistic, assumption-free bound on the approximation quality under a cryptographically secure computation framework. We demonstrate the benefit of the SAG method by applying it to several problems, including a practical privacy-preserving data analysis task on genomic and clinical information.

1 Introduction

Privacy preservation has become increasingly important in many machine learning (ML) tasks.
In this paper, we consider empirical risk minimization (ERM) when the data are distributed among multiple parties that are unwilling to share their data with each other. For example, if two parties have different sets of features for the same group of people, they might want to combine the two datasets to build a more accurate predictive model. On the other hand, due to privacy concerns or legal regulations, both parties might want to keep their own data private. The problem of learning from multiple confidential databases has been studied under the name of secure multi-party computation (secure MPC).

This paper is motivated by our recent secure MPC project on genomic and clinical data. Our task is to develop a model for predicting the risk of a disease based on the genomic and clinical information of potential patients. The difficulty of this problem is that the genomic information was collected in a research institute, while the clinical information was collected in a hospital, and neither institute wants to share its data with the other. However, since the risk of the disease depends on both genomic and clinical features, it is quite valuable to use both types of information for risk modeling.

Various tools for secure MPC have been borrowed from cryptography, and privacy-preserving ML approaches based on cryptographic techniques are called cryptographically private ML. A key building block of cryptographically private ML is homomorphic encryption, by which the sum or product of two encrypted values can be evaluated without decryption. Many cryptographically private ML algorithms have been developed using homomorphic encryption, e.g., for linear regression [1, 2] and SVM [3, 4].
One of the limitations of current cryptographically private ML is that it is computationally intractable to evaluate non-linear functions, such as logarithmic or exponential functions, in a homomorphic encryption framework. Since non-linear function evaluations are required in many fundamental statistical analyses such as logistic regression, it is crucially important to develop a method that alleviates this computational bottleneck. One way to circumvent the issue is to approximate the non-linear functions. For example, in Nardi et al.'s work on secure logistic regression [5], the authors proposed approximating the logistic function by a sum of step functions, which can be computed under a secure computation framework.

Due to the very nature of MPC, even after the final solution is obtained, users are not allowed to access the private data. When the resulting solution is an approximation, it is important for users to be able to check its approximation quality. Unfortunately, most existing cryptographically private ML methods have no such approximation guarantee mechanism. Although a probabilistic approximation guarantee was provided in the aforementioned secure logistic regression study [5], the bound derived there depends on the unknown true solution, meaning that users cannot tell how much they can trust the approximate solution.

The goal of this paper is to develop a practical method for secure computation of ERM problems. To this end, we introduce a novel secure computation technique called the secure approximation guarantee (SAG) method. Given an arbitrary approximate solution of an ERM problem, the SAG method provides non-probabilistic, assumption-free bounds on how far the approximate solution is from the true solution.
A key difference between our approach and existing ones is that our approximation bound is not a theoretical justification of an approximation algorithm itself, but a tool for practical decision making based on a given approximate solution. Our bound can be obtained without any information about the true solution, and it can be computed at reasonable computational cost under a secure computation framework, i.e., without the risk of disclosing private information. The proposed SAG method can therefore provide non-probabilistic bounds on quantities that depend on the true solution of the ERM problem, which is valuable for making decisions when only an approximate solution is available.

To develop the SAG method, we make two novel technical contributions in this paper. First, we introduce a novel algorithmic framework for computing approximation guarantees that applies to a class of ERM problems whose loss function is non-linear and hard to evaluate securely. In this framework, we use a pair of surrogate loss functions that bound the non-linear loss function from below and above. Second, we implement these surrogate loss functions with piecewise-linear functions and show that they can be computed in a cryptographically secure manner. Furthermore, we empirically demonstrate that the bounds obtained by the SAG method are much tighter than those of Nardi et al.'s method [5], even though the former are non-probabilistic and assumption-free. Figure 1 illustrates the SAG method on a simple logistic regression example.

In the machine learning literature, differential privacy [6] has been studied extensively in recent years.
The objective of differential privacy is to release information from a confidential database without risking disclosure of the private information it contains, and random perturbation is the main technical tool for ensuring differential privacy. We note that the privacy concern studied in this paper is rather different from that of differential privacy. Although it would be interesting to study how the latter type of privacy concern could be handled with the approach discussed here, in this paper we focus on privacy in the sense of cryptographically private ML.

Notations
We use the following notation in the rest of the paper. We denote the sets of real numbers and integers by $\mathbb{R}$ and $\mathbb{Z}$, respectively. For a natural number $N$, we define $[N] := \{1, 2, \ldots, N\}$ and $\mathbb{Z}_N := \{0, 1, \ldots, N-1\}$. The Euclidean norm is written as $\|\cdot\|$. The indicator function is written as $I_\chi$, i.e., $I_\chi = 1$ if $\chi$ is true and $I_\chi = 0$ otherwise. For a protocol $\Pi$ between two parties, we use the notation $\Pi(I_A, I_B) \to (O_A, O_B)$, where $I_A$ and $I_B$ are the inputs from parties A and B, respectively, and $O_A$ and $O_B$ are the outputs given to A and B, respectively.

[Figure 1. (A) A non-linear function $1/(1+\exp(-x))$ and its approximation with [5], for $x \in [-3, 3]$ (legend: logistic function; approximation by Nardi et al.). (B) Class probabilities by true and approximate solutions and the bounds obtained by the SAG method (horizontal axis: instance ID 1 to 5; vertical axis: classification probability; legend: truth (not securely computable); approximation by Nardi et al.; secure approximation bound (SAG)).]
Figure 1: An illustration of the proposed SAG method in a simple logistic regression example. The left plot (A) shows the logistic function (blue) and its approximation (red) proposed in [5]. The right plot (B) shows the true (blue) and approximate (red) class probabilities of five training instances (instance IDs 1, ..., 5 on the horizontal axis); the former are obtained with the true logistic function, the latter with the approximate logistic function. The green intervals in plot (B) are the approximation guarantee intervals provided by the SAG method. The key property of the SAG method is that these intervals are guaranteed to contain the true class probabilities. Thus they can be used to classify some of these five instances into the positive or negative class with certainty. Since the lower bounds of the class probabilities are greater than 0.5 for instances 1 and 4, these instances are certainly classified as positive. Similarly, since the upper bounds of the class probabilities are smaller than 0.5 for instances 3 and 5, these instances are certainly classified as negative.

2 Preliminaries

2.1 Problem statement

Empirical risk minimization (ERM)
Let $\{(x_i, y_i) \in \mathcal{X} \times \mathcal{Y}\}_{i \in [n]}$ be the training set, where the input domain $\mathcal{X} \subset \mathbb{R}^d$ is a compact region in $\mathbb{R}^d$, and the output domain $\mathcal{Y}$ is $\{-1, +1\}$ in classification problems and $\mathbb{R}$ in regression problems. In this paper, we consider the following class of ERM problems:

$$\operatorname*{argmin}_{w} \; \frac{\lambda}{2}\|w\|^2 + \frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top w), \tag{1}$$

where $\ell$ is a loss function that is subdifferentiable and convex with respect to $w$, and $\lambda > 0$ is the regularization parameter. The $L_2$ regularization in (1) ensures that the solution $w$ lies within a compact region $\mathcal{W} \subset \mathbb{R}^d$. We consider cases where $\ell$ is hard to compute in a secure computation framework, i.e., $\ell$ includes non-linear functions such as log and exp. Popular examples include logistic regression

$$\ell(y, x^\top w) := \log(1 + \exp(-x^\top w)) - y\, x^\top w, \tag{2}$$

Poisson regression

$$\ell(y, x^\top w) := \exp(x^\top w) - y\, x^\top w, \tag{3}$$

and exponential regression

$$\ell(y, x^\top w) := y \exp(-x^\top w) - x^\top w. \tag{4}$$

Secure two-party computation
We consider a secure two-party computation scenario in which the training set $\{(x_i, y_i)\}_{i \in [n]}$ is vertically partitioned between two parties A and B [7], i.e., A and B own different sets of features for a common set of $n$ instances. More precisely, party A owns the first $d_A$ features and party B owns the last $d_B$ features, with $d_A + d_B = d$. The labels $\{y_i\}_{i \in [n]}$ are also owned by one of the parties; here we let party B own them. We assume that both parties can identify the instance index $i \in [n]$, i.e., both parties can communicate about a specified instance. We denote the input data matrices owned by parties A and B by $X_A$ and $X_B$, respectively. Furthermore, we denote the $n$-dimensional vector of labels by $y := [y_1, \ldots, y_n]^\top$.

Semi-honest model
In this paper, we develop the SAG method so that it is secure (meaning that private data are not revealed to the other party) under the semi-honest model [8]. In this security model, each party may try to infer the other party's data as long as it follows the specified protocol. In other words, we assume that no party modifies the specified protocol. The semi-honest model is the standard security model in cryptographically private ML.

2.2 Cryptographically Secure Computation

Paillier cryptosystem
For secure computations, we use the Paillier cryptosystem [9] as an additive homomorphic encryption tool, i.e., we can obtain $E(a+b)$ from $E(a)$ and $E(b)$ without decryption, where $a$ and $b$ are plaintexts and $E(\cdot)$ is the encryption function. The Paillier cryptosystem has semantic security [10] (IND-CPA security), which roughly means that it is difficult to judge whether $a = b$ or $a \neq b$ from $E(a)$ and $E(b)$. The Paillier cryptosystem is a public key cryptosystem with additive homomorphism over $\mathbb{Z}_N$ (i.e., mod $N$).
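The additive homomorphism, together with the magnification-constant encoding of real numbers discussed in this section, can be made concrete with a toy Paillier implementation. This is an illustrative sketch, not the authors' Java implementation: the primes are tiny and give no security, it assumes the common choice $g = N + 1$ (the text only requires $g$ to be co-prime with $N^2$), it draws $R$ co-prime with $N$, and the function names and the value `M = 1000` are hypothetical.

```python
import math
import random

M = 1000  # hypothetical magnification constant for fixed-point encoding


def keygen(p=293, q=433):
    """Toy key generation with tiny primes (insecure, illustration only)."""
    n = p * q
    g = n + 1                                         # assumed choice of g
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    # mu = L(g^lam mod n^2)^{-1} mod n, where L(x) = (x - 1) // n
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)
    return (n, g), (lam, mu)


def encrypt(pk, m):
    """E(m) = g^m R^N mod N^2 with a random R co-prime with N."""
    n, g = pk
    n2 = n * n
    r = random.randrange(1, n)
    while math.gcd(r, n) != 1:
        r = random.randrange(1, n)
    return (pow(g, m % n, n2) * pow(r, n, n2)) % n2


def decrypt(pk, sk, c):
    n, _ = pk
    lam, mu = sk
    return ((pow(c, lam, n * n) - 1) // n * mu) % n


def add(pk, c1, c2):
    """E(a) * E(b) = E(a + b)."""
    n, _ = pk
    return (c1 * c2) % (n * n)


def scale(pk, c, k):
    """E(a)^k = E(k a) for a plaintext integer k."""
    n, _ = pk
    return pow(c, k % n, n * n)


def encode(x, n):
    """Real -> Z_N: multiply by M, round, wrap negatives (two's-complement style)."""
    return round(x * M) % n


def decode(v, n):
    """Z_N -> real: the upper half of Z_N represents negative numbers."""
    if v > n // 2:
        v -= n
    return v / M


pk, sk = keygen()
n = pk[0]
ca = encrypt(pk, encode(1.25, n))
cb = encrypt(pk, encode(-0.5, n))
print(decode(decrypt(pk, sk, add(pk, ca, cb)), n))   # 0.75
print(decode(decrypt(pk, sk, scale(pk, ca, 3)), n))  # 3.75
```

Multiplying two encoded values (rather than an encoded value by a plain integer) produces a result scaled by $M^2$, which with this small $N$ quickly leaves the representable range; this is the accuracy/range trade-off controlled by $M$ and $N$.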
In the Paillier cryptosystem, the private key consists of two large prime numbers $p$ and $q$, and the public key is $(N, g) \in \mathbb{Z} \times \mathbb{Z}_{N^2}$, where $N = pq$ and $g$ is an integer co-prime with $N^2$. Given a plaintext $m \in \mathbb{Z}_N$, a ciphertext $E(m)$ is obtained with a random integer $R \in \mathbb{Z}_N$ as

$$E(m) = g^m R^N \bmod N^2.$$

The ciphertext $E(m)$ can be decrypted with the private key regardless of which $R$ is chosen. This encryption satisfies the following additive homomorphism for any plaintexts $a, b \in \mathbb{Z}_N$:

$$E(a) \cdot E(b) = E(a + b), \qquad E(a)^b = E(ab).$$

Hereafter, we denote by $E_{pk_A}(\cdot)$ and $E_{pk_B}(\cdot)$ the encryption functions with the public keys issued by parties A and B, respectively.

Note that data analysis tasks require computations on real numbers rather than integers. First, negative numbers can be handled with a technique similar to two's complement. To handle real numbers, we multiply each input real number by a magnification constant $M$ so that it can be expressed as an integer. There is a trade-off between accuracy and the range of representable real numbers: for large $M$, accuracy is high, but only a limited range of real numbers can be handled.

2.3 Related works
The most general framework for cryptographically private ML is Yao's garbled circuit [11], in which any desired secure computation is expressed as a circuit with encrypted components. In principle, Yao's garbled circuit can evaluate any function securely, but its computational cost is usually extremely large, which makes it impractical for secure computation of ERM problems.

Nardi et al. [5] studied a cryptographically private approach to logistic regression.
As briefly mentioned in § 1, to circumvent the difficulty of secure non-linear function evaluation, the authors proposed approximating the logistic function by the empirical cumulative distribution function (CDF) of the logistic distribution (see Figure 1(A) for an example). Denoting the true solution and the approximate solution by $w^*$ and $\hat{w}$, respectively, the authors showed that

$$\|w^* - \hat{w}\| \le \frac{n c_1 \max_i \|x_i\|}{L^{\gamma} \lambda_{\min}} \quad \text{with probability } 1 - 2\exp(-c L^{1-2\gamma}), \tag{5}$$

where $L$ is the sample size for the empirical CDF, $\lambda_{\min}$ is the smallest eigenvalue of the Fisher information matrix, which depends on $w^*$, and $c > 0$, $c_1 > 0$, $\gamma \in (0, 1/2)$ are constants. This approximation error bound cannot be used to assess the approximation quality of a given approximate solution $\hat{w}$, because it depends on the unknown true solution $w^*$ through $\lambda_{\min}$. Furthermore, in the experiment section, we demonstrate that the SAG method provides much tighter non-probabilistic bounds than the above probabilistic bound of Nardi et al.'s method [5].

3 Secure Approximation Guarantee (SAG)

The basic idea behind the SAG method is to introduce two surrogate loss functions $\phi$ and $\psi$ that bound the target non-linear loss function $\ell$ from below and above. In what follows, we show that, given an arbitrary approximate solution $\hat{w}$, if we can securely evaluate $\phi(\hat{w})$, $\psi(\hat{w})$ and a subgradient $\partial\phi/\partial w|_{w=\hat{w}}$, we can securely compute bounds on the true solution $w^*$, which itself cannot be computed under a secure computation framework. First, the following theorem states that we can obtain a ball in the solution space that is guaranteed to contain the true solution $w^*$.

Theorem 1. Let $\phi: \mathbb{R} \to \mathbb{R}$ and $\psi: \mathbb{R} \to \mathbb{R}$ be functions that satisfy

$$\phi(y, x^\top w) \le \ell(y, x^\top w) \le \psi(y, x^\top w) \quad \forall y \in \mathcal{Y},\ x \in \mathcal{X},\ w \in \mathcal{W},$$

and assume that they are convex and subdifferentiable with respect to $w$.
Then, for any $\hat{w} \in \mathcal{W}$,

$$\|w^* - m(\hat{w})\| \le r(\hat{w}),$$

i.e., the true solution $w^*$ is located within a ball in $\mathcal{W}$ with center

$$m(\hat{w}) := \frac{1}{2}\Big(\hat{w} - \frac{1}{\lambda}\nabla\Phi(\hat{w})\Big)$$

and radius

$$r(\hat{w}) := \sqrt{\Big\|\frac{1}{2}\Big(\hat{w} + \frac{1}{\lambda}\nabla\Phi(\hat{w})\Big)\Big\|^2 + \frac{1}{\lambda}\big(\Psi(\hat{w}) - \Phi(\hat{w})\big)},$$

where $\Phi(\hat{w}) := \frac{1}{n}\sum_{i \in [n]} \phi(y_i, x_i^\top \hat{w})$, $\Psi(\hat{w}) := \frac{1}{n}\sum_{i \in [n]} \psi(y_i, x_i^\top \hat{w})$, and $\nabla\Phi(\hat{w})$ is a subgradient of $\Phi$ at $w = \hat{w}$.

The proof of Theorem 1 is presented in the Appendix. Using Theorem 1, we can compute lower and upper bounds of any linear score of the form $\eta^\top w^*$ for an arbitrary $\eta \in \mathbb{R}^d$, as the following corollary states.

Corollary 2. For an arbitrary $\eta \in \mathbb{R}^d$,

$$LB(\eta^\top w^*) \le \eta^\top w^* \le UB(\eta^\top w^*), \tag{6}$$

where

$$LB(\eta^\top w^*) := \eta^\top m(\hat{w}) - \|\eta\|\, r(\hat{w}), \tag{7a}$$
$$UB(\eta^\top w^*) := \eta^\top m(\hat{w}) + \|\eta\|\, r(\hat{w}). \tag{7b}$$

The proof of Corollary 2 is presented in the Appendix. Many important quantities in data analysis are represented as linear scores. For example, in binary classification, the classification result $\tilde{y}$ of a test input $\tilde{x}$ is determined by the sign of the linear score $\tilde{x}^\top w^*$. This means that the test instance can be classified with certainty as follows: $LB(\tilde{x}^\top w^*) > 0 \Rightarrow \tilde{y} = +1$ and $UB(\tilde{x}^\top w^*) < 0 \Rightarrow \tilde{y} = -1$. Similarly, if we are interested in each coefficient $w^*_h$, $h \in [d]$, of the trained model, then by setting $\eta = e_h$, where $e_h$ is the $d$-dimensional vector whose $h$-th component is 1 and whose other components are all 0, we obtain lower and upper bounds on the coefficient as $LB(e_h^\top w^*) \le w^*_h \le UB(e_h^\top w^*)$. We note that Theorem 1 and Corollary 2 are inspired by recent works on safe screening and related problems [12–19], where an approximate solution is used to bound the optimal solution without solving the optimization problem.
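Theorem 1 and Corollary 2 can be checked numerically in plaintext (no encryption). The sketch below assumes the case $K = \infty$, i.e., the true logistic loss (2) serves as both $\phi$ and $\psi$, so $\Psi(\hat{w}) - \Phi(\hat{w}) = 0$. The synthetic data are hypothetical, $w^*$ is computed by plain gradient descent only to verify the guarantee, a shifted copy of $w^*$ stands in for an approximate solution $\hat{w}$, and all helper names are illustrative.

```python
import math
import random


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def norm(a):
    return math.sqrt(dot(a, a))


def loss_grad(w, X, y):
    """Gradient of L(w) = (1/n) sum_i [log(1 + exp(-x_i^T w)) - y_i x_i^T w], loss (2)."""
    n = len(X)
    g = [0.0] * len(w)
    for xi, yi in zip(X, y):
        dz = -1.0 / (1.0 + math.exp(dot(xi, w))) - yi  # derivative w.r.t. z = x_i^T w
        g = [gh + dz * xh / n for gh, xh in zip(g, xi)]
    return g


def solve(X, y, lam, iters=5000):
    """Plaintext gradient descent on (1); used here only to check the guarantee."""
    step = 1.0 / (lam + max(dot(x, x) for x in X) / 4.0)  # 1 / smoothness bound
    w = [0.0] * len(X[0])
    for _ in range(iters):
        g = loss_grad(w, X, y)
        w = [wh - step * (lam * wh + gh) for wh, gh in zip(w, g)]
    return w


def sag_ball(w_hat, grad_phi, gap, lam):
    """Theorem 1: center m and radius r; gap = Psi(w_hat) - Phi(w_hat) >= 0."""
    m = [0.5 * (wh - gh / lam) for wh, gh in zip(w_hat, grad_phi)]
    t = [0.5 * (wh + gh / lam) for wh, gh in zip(w_hat, grad_phi)]
    return m, math.sqrt(dot(t, t) + gap / lam)


def linear_bounds(eta, m, r):
    """Corollary 2: LB <= eta^T w* <= UB."""
    c = dot(eta, m)
    return c - norm(eta) * r, c + norm(eta) * r


# Hypothetical synthetic data with labels in {-1, +1}.
rng = random.Random(0)
X = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(30)]
y = [1 if x[0] + 0.5 * x[1] > 0 else -1 for x in X]
lam = 1.0

w_star = solve(X, y, lam)
w_hat = [wh + 0.3 for wh in w_star]                      # stand-in approximate solution
m, r = sag_ball(w_hat, loss_grad(w_hat, X, y), 0.0, lam)  # K = infinity: gap = 0
print("w* inside ball:", norm([a - b for a, b in zip(w_star, m)]) <= r)
for h in range(2):
    e_h = [1.0 if k == h else 0.0 for k in range(2)]      # h-th standard basis vector
    print(h, linear_bounds(e_h, m, r), w_star[h])
```

At $\hat{w} = w^*$ the optimality condition $\nabla\Phi(w^*) = -\lambda w^*$ (which holds when $\Phi$ is the true loss) makes the center equal to $w^*$ and the radius zero, so the ball shrinks as $\hat{w}$ improves; a positive gap $\Psi - \Phi$, as produced by finite-$K$ piecewise-linear surrogates, enlarges it.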
4 SAG implementation with piecewise-linear functions

In this section, we present how to compute the bounds on the true solution discussed in § 3 under a secure computation framework. Specifically, we propose using piecewise-linear functions for the two surrogate loss functions $\phi$ and $\psi$. In § 4.1, we present a protocol for secure piecewise-linear function evaluation (SPL). In § 4.2, we describe a protocol for securely computing the bounds. In the Appendix, we describe a specific implementation for logistic regression.

[Figure 2. (A) $f(s) = \log(1 + \exp(-s))$. (B) Lower and upper bounds with $K = 2$. (C) Lower and upper bounds with $K = 10$. Horizontal axis: $s \in [-10, 10]$.]
Figure 2: An example of bounding the convex function of one variable $\log(1 + \exp(-s))$ with piecewise-linear functions with $K$ sections for $s \in [-10, 10]$.

4.1 Secure piecewise-linear function computation
Let us denote a piecewise-linear function with $K$ pieces defined on $s \in [T_0, T_K]$ as $g(s) = (\alpha_j s + \beta_j)\, I_{T_{j-1} \le s \cdots}$ […]

$$\ell(y, x^\top w) = u(s(y, x^\top w)) + v(y, x^\top w), \tag{10}$$

where $u$ is a non-linear function whose secure evaluation is difficult, while $s(y, x^\top w)$, $v(y, x^\top w)$ and their subgradients are assumed to be securely computable. Note that most commonly used loss functions can be written in this form. For example, in the case of logistic regression (2), $u(s) = \log(1 + \exp(-s))$, $s(y, x^\top w) = x^\top w$ and $v(y, x^\top w) = -y\, x^\top w$. We consider a situation in which the two parties A and B hold the encrypted approximate solution $\hat{w}$ separately for their own features, i.e., parties A and B own $E_{pk_B}(\hat{w}_A)$ and $E_{pk_A}(\hat{w}_B)$, respectively, where $\hat{w}_A$ and $\hat{w}_B$ are the first $d_A$ and the following $d_B$ components of $\hat{w}$.
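The following is a hedged sketch of one standard way to sandwich a convex $u$ between convex piecewise-linear functions, in the spirit of Figure 2 (these play the roles of the lower and upper bounds of $u$, written $\underline{u}$ and $\overline{u}$ in § 4.2.1). Chords between breakpoints $T_0 < \dots < T_K$ lie above a convex function, and the maximum of tangent lines lies below it. The equal spacing of breakpoints, the choice of tangents at piece midpoints and all function names are assumptions, not necessarily the authors' construction; the sketch also assumes $s$ stays within $[T_0, T_K]$, because the chord extension is not an upper bound outside that interval.

```python
import math


def u(s):
    """u(s) = log(1 + exp(-s)), the non-linear part of the logistic loss (2)."""
    return math.log1p(math.exp(-s))


def du(s):
    """u'(s) = -1 / (1 + exp(s))."""
    return -1.0 / (1.0 + math.exp(s))


def breakpoints(t0, tk, k):
    """K equal-width pieces on [T_0, T_K] (equal spacing is an assumption)."""
    return [t0 + (tk - t0) * j / k for j in range(k + 1)]


def upper_pl(s, T):
    """Chord interpolation: convex and >= u on [T_0, T_K] because u is convex."""
    for a, b in zip(T, T[1:]):
        if a <= s <= b:
            return u(a) + (u(b) - u(a)) / (b - a) * (s - a)
    raise ValueError("s outside [T_0, T_K]")


def lower_pl(s, T):
    """Max of tangents at piece midpoints: convex, piecewise-linear and <= u."""
    mids = [(a + b) / 2 for a, b in zip(T, T[1:])]
    return max(u(c) + du(c) * (s - c) for c in mids)


def max_gap(k, t0=-10.0, tk=10.0, grid=2001):
    """Largest upper-minus-lower gap on a uniform grid over [T_0, T_K]."""
    T = breakpoints(t0, tk, k)
    pts = [t0 + (tk - t0) * i / (grid - 1) for i in range(grid)]
    return max(upper_pl(s, T) - lower_pl(s, T) for s in pts)


for k in (2, 10, 100):
    print(k, max_gap(k))
```

Increasing $K$ shrinks the gap between the two surrogates and hence the term $\Psi(\hat{w}) - \Phi(\hat{w})$ in the radius of Theorem 1, which is consistent with the tighter bounds reported for larger $K$ in § 5.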
4.2.1 Secure computations of the ball
The following theorem states that the center $m(\hat{w})$ and the radius $r(\hat{w})$ can be securely computed.

Theorem 4. Suppose that party A has $X_A$ and $E_{pk_B}(\hat{w}_A)$, while party B has $X_B$, $y$ and $E_{pk_A}(\hat{w}_B)$. Then, the two parties can securely compute the center $m(\hat{w})$ and the radius $r(\hat{w})$ in the sense that there is a secure protocol that outputs $E_{pk_B}(m_A(\hat{w}))$ and $E_{pk_B}(r_A(\hat{w})^2)$ to party A, and $E_{pk_A}(m_B(\hat{w}))$ and $E_{pk_A}(r_B(\hat{w})^2)$ to party B, such that $m_A(\hat{w}) + m_B(\hat{w}) = m(\hat{w})$ and $r_A(\hat{w})^2 + r_B(\hat{w})^2 = r(\hat{w})^2$.

We call such a protocol the secure ball computation (SBC) protocol; its input-output property is characterized as

$$SBC\big((X_A, E_{pk_B}(\hat{w}_A)),\ (X_B, y, E_{pk_A}(\hat{w}_B))\big) \to \big((E_{pk_B}(m_A(\hat{w})), E_{pk_B}(r_A(\hat{w})^2)),\ (E_{pk_A}(m_B(\hat{w})), E_{pk_A}(r_B(\hat{w})^2))\big).$$

To prove Theorem 4, we describe only the secure computations of three components of the SBC protocol. We omit the security analysis of the other components because it can be easily derived from the security properties of the Paillier cryptosystem [9], the comparison protocol [20] and the multiplication protocol [21].¹

Encrypted value of $\Psi(\hat{w}) - \Phi(\hat{w})$
This quantity can be obtained by summing $\psi(x_i) - \phi(x_i)$ over $i \in [n]$. Denoting $\phi := \underline{u}(s) + v$ and $\psi := \overline{u}(s) + v$, where $\underline{u}$ and $\overline{u}$ are lower and upper bounds of $u$ implemented with piecewise-linear functions, respectively, we can compute $\psi(x_i) - \phi(x_i) = \overline{u} - \underline{u}$ by using the SPL protocol for each of $\underline{u}$ and $\overline{u}$.

Encrypted value of $\nabla\Phi(\hat{w})$
This quantity can be obtained by summing $\nabla\phi$ at $w = \hat{w}$. Since

$$\nabla\phi = \frac{\partial}{\partial w}\underline{u}(s) + \frac{\partial v}{\partial w} = \underline{u}'(s)\frac{\partial s}{\partial w} + \frac{\partial v}{\partial w},$$

its encrypted version can be written as $E(\nabla\phi) = E\big(\underline{u}'(s)\,\partial s/\partial w\big)\, E\big(\partial v/\partial w\big)$.
Here, $\underline{u}'(s)$ can be securely evaluated because $\underline{u}'$ is a piecewise-constant function, while $\partial s/\partial w$ and $\partial v/\partial w$ can be securely computed by the assumption in (10). To compute $E(\underline{u}'(s)\,\partial s/\partial w)$ from $E(\underline{u}'(s))$ and $E(\partial s/\partial w)$, we can use the secure multiplication protocol in [21].

Encrypted value of $r(\hat{w})^2$
To compute this quantity, we need the encrypted value of $\|\frac{1}{2}(\hat{w} + \frac{1}{\lambda}\nabla\Phi)\|^2$, which can also be computed with the secure multiplication protocol in [21].

4.2.2 Secure computations of the bounds
Finally, we discuss how to securely compute the upper and lower bounds in (6) from the encrypted $m(\hat{w})$ and $r(\hat{w})^2$. The protocol depends on who owns the test instance and who receives the resulting bounds. We describe a protocol for a particular setup in which the test instance $\tilde{x}$ is owned by the two parties A and B, i.e., $\tilde{x} = [\tilde{x}_A^\top\ \tilde{x}_B^\top]^\top$, where $\tilde{x}_A$ and $\tilde{x}_B$ are the first $d_A$ and the following $d_B$ components of $\tilde{x}$, and the lower and upper bounds are given to either party. Similar protocols can easily be developed for other setups.

Theorem 5. Let party A own $\tilde{x}_A$, $E_{pk_B}(m_A(\hat{w}))$ and $E_{pk_B}(r_A(\hat{w})^2)$, and let party B own $\tilde{x}_B$, $E_{pk_A}(m_B(\hat{w}))$ and $E_{pk_A}(r_B(\hat{w})^2)$. Then, either party A or B can receive the lower and upper bounds of $\tilde{x}^\top w^*$ in the form of (6) without revealing $\tilde{x}_A$ and $\tilde{x}_B$ to the other party.

¹ We add that the trade-offs between security strength and computation time of the Paillier cryptosystem and of the comparison protocol are controlled by parameters ($N$ in § 2.2 for the Paillier cryptosystem; another parameter exists for the comparison protocol). Thus the overall security depends on the weaker of the two. The security of the multiplication protocol depends on the security of the Paillier cryptosystem itself.

Table 1: Data sets used for the logistic regression.
All are from UCI Machine Learning Rep ository . data set training set v alidation set d Musk 3298 3300 166 MGT 9510 9510 10 Spam base 2301 2301 57 OLD 1268 1268 72 The pro of of Theorem 5 is presen ted in App endix. W e note that a party who receiv es bounds from the pro- to col would get some information about the center m B ( ˆ w ) and the radius r B ( ˆ w ), but no other information ab out the original dataset is rev ealed. 5 Exp erimen ts W e conducted experiments for illustrating the performances of the proposed SAG method. The experimental setup is as follows. W e used Paillier cryptosystem with N = 1024-bit public key and comparison proto col by V eugen et al. [20] for 60 bits of integers. The program is implemented with Jav a, and the comm unications b et ween t w o parties are implemented with sock ets b et ween t w o pro cesses working in the same computer. W e used a single computer with 3.07GHz Xeon CPU and 48GB RAM. Except when w e inv estigate computational costs, computations were done on unencrypted v alues. Note that the prop osed SAG metho d provide b ounds on the true solution w ∗ based on an arbitrary approximate solution ˆ w . In all the exp eriments presented here, w e used approximate solutions obtained b y Nardi et al. ’s approac h [5] as the appro ximate solution ˆ w . In what follows, we call the b ounds or interv als obtained b y the SA G metho d as SAG b ounds and SA G in terv als, respectively . 5.1 Logistic regression W e first inv estigated several prop erties of the SAG metho d for the logistic regression (2) by applying it to four benchmark datasets summarized in T able 1. First, in Figure 3, w e compared the tightness of the b ounds on the predicted classification probabilities for tw o randomly chosen v alidation instances x i defined as p ( x i ) := 1 / (1 + exp( − x > i w ∗ )), i = 1 , 2. In the figure, four t yp es of interv als are plotted. The orange ones are Nardi et al. 
’s probabilistic b ounds [5] with the probability 90% (see (5)). The blue, green and purple ones w ere obtained b y the SAG metho d with K = 100 , 1000 and ∞ , resp ectively , where K is the n um b er of pieces in the piecewise-linear approximations. Here, K = ∞ means that the true loss function ` w as used as the tw o surrogate loss functions φ and ψ . The results clearly indicate that bounds obtained by the SA G method are clearly tighter than those b y Nardi et al.’s approach despite that the latter is probabilistic and cannot b e securely computed in practice. When comparing the results with differen t K , w e can confirm that large K yields tighter b ounds. The results with K = 1000 are almost as tight as those obtained with the true loss function ( K = ∞ ). 12 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ Musk, λ = 0 . 1 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ Musk, λ = 1 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ Musk, λ = 10 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ MGT, λ = 0 . 1 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ MGT, λ = 1 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ MGT, λ = 10 1 2 0 0.2 0.4 0.6 0.8 1 Instance ID Classification probability Truth Approximation Nardi et al.(90%) K=100 K=1000 K= ∞ Spam base, λ = 0 . 
[Figure 3 panels (continued): Spambase with λ = 1 and 10; OLD with λ = 0.1, 1 and 10. Horizontal axis: instance ID; vertical axis: classification probability. Legend: truth, approximation, Nardi et al. (90%), K = 100, K = 1000, K = ∞.]
Figure 3: Results of the proposed bounds for some test instances.

[Figure 4 panels: Musk, MGT, Spambase and OLD, each with L = 10, 100 and 1000. Horizontal axis: instance ID (1 to 10); vertical axis: classification probability. Legend: truth, approximation, SAG.]
Figure 4: Change of the bounds with the accuracy of the approximate solution ŵ.

[Figure 5 panels: Musk, MGT, Spambase and OLD, each with λ = 0.1, 1 and 10. Horizontal axis: method and option (Nardi+, K = 100, 200, 500, 1000, ∞); left vertical axis: certainly classified rate; right vertical axis (log scale): average SAG interval size.]
Figure 5: Rate of successfully classified test instances and the average size of the bounds for different bound calculations (Nardi et al.'s and K ∈ {100, 200, 500, 1000, ∞}).

Table 2: Computation time for obtaining the bounds per instance
K          100      200      500       1000
Time (s)   381.089  790.674  1877.176  3717.569

Figure 4 shows plots similar to those in Figure 3. Here, we investigated how the tightness of the SAG bounds changes with the quality of the approximate solution ŵ. To obtain approximate solutions of different quality, we computed three approximate solutions with L = 10, 100 and 1000 in Nardi et al.'s approach, where L is the sample size used for approximating the logistic function (see § 2.3). The results clearly indicate that tighter bounds are obtained when the approximate solution is more accurate (i.e., for larger L).

Figure 5 illustrates how the SAG bounds can be useful in binary classification problems. If the lower bound of the classification probability is greater than 0.5, the instance is guaranteed to be classified into the positive class; similarly, if the upper bound is smaller than 0.5, it is guaranteed to be classified into the negative class. The green histograms in the figure indicate the percentage of validation instances that can be classified with certainty as positive or negative based on the SAG bounds. The blue lines indicate the average length of the SAG intervals, i.e., the difference between the upper and the lower bounds.
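The certain-classification rule described above can be sketched as follows. This is a minimal plaintext sketch under stated assumptions: the intervals are hypothetical numbers, the probability interval is taken as [σ(LB), σ(UB)] because the sigmoid σ is increasing, and the average interval size is computed on the linear-output scale x^T w (an assumption suggested by the plotted sizes in Figure 5, which exceed 1). The helper names `certain_labels` and `figure5_summary` are illustrative only.

```python
import numpy as np

def sigmoid(s):
    return 1.0 / (1.0 + np.exp(-s))

def certain_labels(out_lower, out_upper, threshold=0.5):
    """+1 / -1 if the whole probability interval lies above / below threshold, else 0."""
    # sigmoid is increasing, so [LB, UB] on x^T w maps to [sigmoid(LB), sigmoid(UB)]
    p_lo = sigmoid(np.asarray(out_lower, dtype=float))
    p_hi = sigmoid(np.asarray(out_upper, dtype=float))
    labels = np.zeros(p_lo.shape, dtype=int)
    labels[p_lo > threshold] = 1
    labels[p_hi < threshold] = -1
    return labels

def figure5_summary(out_lower, out_upper):
    """Certainly classified rate and average interval size (linear-output scale, assumed)."""
    labels = certain_labels(out_lower, out_upper)
    rate = float(np.mean(labels != 0))
    widths = np.asarray(out_upper, dtype=float) - np.asarray(out_lower, dtype=float)
    return labels, rate, float(np.mean(widths))

# Hypothetical SAG intervals [LB, UB] on x^T w* for four validation instances
lb = [0.5, -2.2, -0.4, -3.0]
ub = [2.3, -0.2, 0.8, -0.9]
labels, rate, avg_size = figure5_summary(lb, ub)
```

Here the third instance stays undecided because its interval straddles 0 (probability 0.5), so three of the four instances are classified with certainty.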
The results clearly indicate that, as the number of pieces K in the SAG method increases, tighter bounds are obtained and more validation instances can be classified with certainty. On the other hand, the probabilistic bounds of Nardi et al.'s approach cannot provide certain classification results because they are too loose. Finally, we examined the time required to compute the SAG bounds. Table 2 shows the computation time per instance for K ∈ {100, 200, 500, 1000}. The computational cost is almost linear in K (roughly 3.7 to 4.0 seconds per piece), meaning that the computation of the piecewise-linear functions dominates the cost. Although this task can be completely parallelized over instances, further speed-up would be desirable when K is larger than 1000.

5.2 Poisson and exponential regressions
We applied the SAG method to Poisson regression (3) and exponential regression (4). Poisson regression was applied to the problem of predicting the number of produced seeds (footnote 2), and exponential regression to the problem of predicting the survival time of lung cancer patients (footnote 3). The results are shown in Figure 6. The left plot (A) shows the result of Poisson regression, where the SAG intervals on the predicted number of seeds are plotted for several randomly chosen instances. The right plot (B) shows the SAG bounds on the predicted survival probability curve, which confirm that the true survival probability curve lies within the SAG bounds.
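Because an interval [LB, UB] on the linear output x^T w* is mapped by any monotone function onto the interval between the images of its endpoints, bounds on predicted counts and survival probabilities follow directly. The sketch below assumes a log link for Poisson regression (predicted count exp(x^T w)) and, hypothetically, a mean survival time exp(x^T w) for exponential regression; the exact forms of (3) and (4) are defined earlier in the paper and may differ, but the endpoint rule holds for any monotone link. All intervals are made-up numbers.

```python
import numpy as np

def monotone_image(f, lo, hi):
    """Image of the interval [lo, hi] under a monotone (increasing or decreasing) f."""
    a, b = f(lo), f(hi)
    return np.minimum(a, b), np.maximum(a, b)

def poisson_mean(s):
    # Assumed log link: predicted number of seeds = exp(x^T w)
    return np.exp(s)

def survival_at(days):
    # Hypothetical parametrization: mean survival time exp(x^T w),
    # so the survival curve is S(t) = exp(-t / exp(x^T w))
    t = np.asarray(days, dtype=float)
    return lambda s: np.exp(-t / np.exp(s))

# Made-up SAG intervals on x^T w* for one instance in each problem
seeds_lo, seeds_hi = monotone_image(poisson_mean, 1.5, 1.7)
days = np.arange(20, 141, 20)
surv_lo, surv_hi = monotone_image(survival_at(days), 3.6, 3.9)
```

Taking the elementwise minimum and maximum of the two endpoint images makes the same helper valid for decreasing links (for example, if exp(x^T w) were a hazard rate instead of a mean).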
Footnote 2: http://hosho.ees.hokudai.ac.jp/~kubo/stat/2015/Fig/poisson/data3a.csv
Footnote 3: http://help.xlstat.com/customer/portal/kb_article_attachments/60040/original.xls

[Figure 6 panels: (A) Poisson regression, instance ID (1 to 10) vs. predicted number of seeds; (B) exponential regression, days vs. survival rate. Legend: truth, approximation, SAG.]
Figure 6: Proposed bounds for Poisson and exponential regressions.

[Figure 7 panels: clinical feature IDs 1 to 10 and genetic feature IDs 11 to 23 vs. coefficients and their bounds. Legend: approximation, SAG.]
Figure 7: Bounds on the coefficients for disease risk evaluation.

5.3 Privacy-preserving logistic regression for genomic and clinical data analysis
Finally, we apply the SAG method to logistic regression in a genomic and clinical data analysis, which is the main motivation of this work (§ 1). In this problem, we are interested in modeling the risk of a disease based on the genomic and clinical information of potential patients. The difficulty of this problem is that the genomic information was collected in a research institute, while the clinical information was collected in a hospital, and neither institute wants to share its data with the other. However, since the risk of the disease depends on both genomic and clinical features, it is quite valuable to use both types of information for risk modeling. Our goal is to find genomic and clinical features that strongly affect the risk of the disease. To this end, we use the SAG method to compute the bounds on the coefficients of the logistic regression model as described in § 3. In this experiment, 13 genomic (SNP) and 10 clinical features of 134 potential patients are provided by a research institute and a hospital, respectively (footnote 4). The SAG bounds on each of these 23 coefficients are plotted in Figure 7.
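Bounds on individual coefficients can be obtained, for instance, by applying Corollary 2 with x = e_j (the j-th unit vector, with ‖e_j‖ = 1), which gives w*_j ∈ [m_j − r, m_j + r]. Whether § 3 computes them exactly this way is an assumption here. The sketch reads guaranteed signs off such intervals; the center and radius are hypothetical values, and `guaranteed_signs` is an illustrative helper.

```python
import numpy as np

def coefficient_intervals(m, r):
    """Corollary 2 with x = e_j (||e_j|| = 1): each w*_j lies in [m_j - r, m_j + r]."""
    m = np.asarray(m, dtype=float)
    return m - r, m + r

def guaranteed_signs(m, r):
    """+1 if the interval lies above 0, -1 if below 0, and 0 if it contains 0."""
    lo, hi = coefficient_intervals(m, r)
    return np.where(lo > 0, 1, np.where(hi < 0, -1, 0))

m_hat = [0.08, -0.05, 0.01]   # hypothetical ball center m(w_hat)
r_hat = 0.03                  # hypothetical ball radius r(w_hat)
signs = guaranteed_signs(m_hat, r_hat)
```

Because all coefficients share the same radius r, features whose center satisfies |m_j| > r are exactly those with a guaranteed sign.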
Although we do not know the true coefficient values, we can at least identify features that are positively or negatively correlated with the disease risk: if the lower (upper) bound of a coefficient is greater (smaller) than 0, the feature is guaranteed to have a positive (negative) coefficient in the logistic regression model.

Footnote 4: For confidentiality reasons, we cannot describe the details of the dataset. Here, we analyzed only a small, randomly sampled portion of the datasets, for illustration purposes.

6 Conclusions
We studied empirical risk minimization (ERM) problems under secure multi-party computation (MPC) frameworks. We developed a novel technique called the secure approximation guarantee (SAG) method, which can be used when only an approximate solution is available because of the difficulty of evaluating non-linear functions securely. The key property of the SAG method is that it can securely provide bounds on the true solution, which is practically valuable, as we illustrated in benchmark data experiments and in our motivating problem on genomic and clinical data.

References
[1] R. Hall, S. E. Fienberg, and Y. Nardi. Secure multiple linear regression based on homomorphic encryption. Journal of Official Statistics, 27(4):669, 2011.
[2] V. Nikolaenko, U. Weinsberg, S. Ioannidis, M. Joye, D. Boneh, and N. Taft. Privacy-preserving ridge regression on hundreds of millions of records. In 2013 IEEE Symposium on Security and Privacy (SP), pages 334–348. IEEE, 2013.
[3] S. Laur, H. Lipmaa, and T. Mielikäinen. Cryptographically private support vector machines. In Proceedings of the 12th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD 2006), pages 618–624. ACM, 2006.
[4] H. Yu, J. Vaidya, and X. Jiang. Privacy-preserving SVM classification on vertically partitioned data. In Advances in Knowledge Discovery and Data Mining, pages 647–656. Springer, 2006.
[5] Y. Nardi, S. E. Fienberg, and R. J. Hall. Achieving both valid and secure logistic regression analysis on aggregated data from different private sources. Journal of Privacy and Confidentiality, 4(1):9, 2012.
[6] C. Dwork. Differential privacy. In 33rd International Colloquium on Automata, Languages and Programming (ICALP 2006), Proceedings Part II, pages 1–12, 2006.
[7] J. Vaidya and C. Clifton. Privacy-preserving k-means clustering over vertically partitioned data. In Proceedings of the 9th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD 2003), pages 206–215. ACM, 2003.
[8] O. Goldreich. Foundations of Cryptography: Volume 1, Basic Tools. Cambridge University Press, 2001.
[9] P. Paillier. Public-key cryptosystems based on composite degree residuosity classes. In Advances in Cryptology – EUROCRYPT'99, pages 223–238. Springer, 1999.
[10] O. Goldreich. Foundations of Cryptography: Volume 2, Basic Applications. Cambridge University Press, 2004.
[11] A. C. Yao. How to generate and exchange secrets. In The 27th Annual IEEE Symposium on Foundations of Computer Science (FOCS 1986), pages 162–167. IEEE, 1986.
[12] L. El Ghaoui, V. Viallon, and T. Rabbani. Safe feature elimination for the lasso and sparse supervised learning problems. Pacific Journal of Optimization, 8(4):667–698, 2012.
[13] Z. J. Xiang, H. Xu, and P. J. Ramadge. Learning sparse representations of high dimensional data on large scale dictionaries. In Advances in Neural Information Processing Systems, pages 900–908, 2011.
[14] K. Ogawa, Y. Suzuki, and I. Takeuchi. Safe screening of non-support vectors in pathwise SVM computation. In Proceedings of the 30th International Conference on Machine Learning, pages 1382–1390, 2013.
[15] J. Liu, Z. Zhao, J. Wang, and J. Ye. Safe screening with variational inequalities and its application to lasso. In Proceedings of the 31st International Conference on Machine Learning, 2014.
[16] J. Wang, J. Zhou, J. Liu, P. Wonka, and J. Ye. A safe screening rule for sparse logistic regression. In Advances in Neural Information Processing Systems, pages 1053–1061, 2014.
[17] Z. J. Xiang, Y. Wang, and P. J. Ramadge. Screening tests for lasso problems. arXiv preprint arXiv:1405.4897, 2014.
[18] O. Fercoq, A. Gramfort, and J. Salmon. Mind the duality gap: safer rules for the lasso. In The 32nd International Conference on Machine Learning (ICML 2015), 2015.
[19] S. Okumura, Y. Suzuki, and I. Takeuchi. Quick sensitivity analysis for incremental data modification and its application to leave-one-out CV in linear classification problems. In Proceedings of the 21st ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 885–894. ACM, 2015.
[20] T. Veugen. Comparing encrypted data. Technical Report, Multimedia Signal Processing Group, Delft University of Technology, The Netherlands, and TNO Information and Communication Technology, Delft, The Netherlands, 2011.
[21] K. Nissim and E. Weinreb. Communication efficient secure linear algebra. In Theory of Cryptography, pages 522–541. Springer, 2006.
[22] D. P. Bertsekas. Nonlinear Programming. Athena Scientific, 1999.
[23] T. Veugen. Encrypted integer division and secure comparison. International Journal of Applied Cryptography, 3(2):166–180, 2014.

Appendix

Proofs of Theorem 1 and Corollary 2 (bounds on w* from ŵ)
First, we present the following proposition, which will be used to prove Theorem 1.
Proposition 6. Consider the following general problem:
$$\min_{z} g(z) \quad \text{s.t.} \quad z \in \mathcal{Z}, \qquad (11)$$
where $g: \mathcal{Z} \to \mathbb{R}$ is a subdifferentiable convex function and $\mathcal{Z}$ is a convex set.
Then $z^*$ is an optimal solution of (11) if and only if
$$\nabla g(z^*)^\top (z^* - z) \le 0 \quad \forall z \in \mathcal{Z},$$
where $\nabla g(z^*)$ is the subgradient vector of $g$ at $z = z^*$.
See, for example, Proposition B.24 in [22] for the proof of Proposition 6.

Proof of Theorem 1. Using a slack variable $\xi \in \mathbb{R}$, we first rewrite the minimization problem (1) as
$$\min_{w \in \mathbb{R}^d,\ \xi \in \mathbb{R}} J(w, \xi) := \xi + \frac{\lambda}{2}\|w\|^2 \quad \text{s.t.} \quad \xi \ge \frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top w). \qquad (12)$$
The optimal solution of problem (12) is $w = w^*$ and $\xi = \xi^* := \frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top w^*)$. By the definitions of $\psi$ and $\Psi$, we have $\frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top \hat w) \le \frac{1}{n}\sum_{i \in [n]} \psi(y_i, x_i^\top \hat w) = \Psi(\hat w)$, which means that $(\hat w, \Psi(\hat w))$ is a feasible solution of problem (12). Applying Proposition 6 with $z = (\hat w, \Psi(\hat w))$, we have
$$\nabla J(w^*, \xi^*)^\top \begin{bmatrix} w^* - \hat w \\ \xi^* - \Psi(\hat w) \end{bmatrix} \le 0, \qquad (13)$$
where $\nabla J(w^*, \xi^*) \in \mathbb{R}^{d+1}$ is the gradient of the objective function in (12) evaluated at $(w^*, \xi^*)$. Since $J(w, \xi)$ is a quadratic function of $w$ and $\xi$, its gradient is explicitly $\nabla J(w^*, \xi^*) = [\lambda w^{*\top}, 1]^\top$, and (13) becomes
$$\lambda\|w^*\|^2 + \xi^* - \lambda w^{*\top}\hat w - \Psi(\hat w) \le 0 \;\Leftrightarrow\; \lambda\|w^*\|^2 + \frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top w^*) - \lambda w^{*\top}\hat w - \Psi(\hat w) \le 0. \qquad (14)$$
From the definitions of $\phi$ and $\Phi$, we have
$$\frac{1}{n}\sum_{i \in [n]} \ell(y_i, x_i^\top w^*) \ge \frac{1}{n}\sum_{i \in [n]} \phi(y_i, x_i^\top w^*) = \Phi(w^*).$$
Plugging this into (14), we have
$$\lambda\|w^*\|^2 + \Phi(w^*) - \lambda w^{*\top}\hat w - \Psi(\hat w) \le 0. \qquad (15)$$
Furthermore, since $\phi$ and $\Phi$ are convex with respect to $w$, the definition of convex functions gives
$$\Phi(w^*) \ge \Phi(\hat w) + \nabla\Phi(\hat w)^\top (w^* - \hat w). \qquad (16)$$
Plugging (16) into (15) yields
$$\lambda\|w^*\|^2 + \Phi(\hat w) + \nabla\Phi(\hat w)^\top (w^* - \hat w) - \lambda w^{*\top}\hat w - \Psi(\hat w) \le 0. \qquad (17)$$
Since the left-hand side of (17) is a quadratic function of $w^*$, dividing by $\lambda$ and completing the square, we obtain
$$\left\| w^* - \frac{1}{2}\left(\hat w - \frac{1}{\lambda}\nabla\Phi(\hat w)\right) \right\|^2 \le \left\| \frac{1}{2}\left(\hat w + \frac{1}{\lambda}\nabla\Phi(\hat w)\right) \right\|^2 + \frac{1}{\lambda}\left(\Psi(\hat w) - \Phi(\hat w)\right).$$
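The ball just derived, together with the interval of Corollary 2, can be checked numerically in a plaintext (non-secure) setting. The sketch below is an illustration under simplifying assumptions: it uses L2-regularized logistic regression with the penalty (λ/2)‖w‖² from (12), takes φ = ψ = ℓ (so Ψ(ŵ) − Φ(ŵ) = 0 and ∇Φ is the exact loss gradient), obtains w* by running gradient descent to convergence and ŵ by stopping after a few steps, and uses synthetic data. The optional `gap` argument stands in for Ψ(ŵ) − Φ(ŵ) > 0 when looser piecewise-linear bounds are used; it only enlarges the ball.

```python
import numpy as np

def loss_grad(X, y, w):
    """Gradient of (1/n) sum_i log(1 + exp(-y_i x_i^T w)); d/ds log(1 + exp(-y s)) = -y / (1 + exp(y s))."""
    s = X @ w
    return X.T @ (-y / (1.0 + np.exp(y * s))) / len(y)

def sag_ball(X, y, w_hat, lam, gap=0.0):
    """Center m and radius r of the ball in Theorem 1, with phi = psi = exact loss.

    `gap` stands in for Psi(w_hat) - Phi(w_hat) >= 0; a positive gap only enlarges r.
    """
    g = loss_grad(X, y, w_hat)
    m = 0.5 * (w_hat - g / lam)
    r = np.sqrt(np.sum((0.5 * (w_hat + g / lam)) ** 2) + gap / lam)
    return m, r

def output_interval(x, m, r):
    """Corollary 2: x^T w* lies in [x^T m - ||x|| r, x^T m + ||x|| r]."""
    c, d = x @ m, np.linalg.norm(x) * r
    return c - d, c + d

def gradient_descent(X, y, lam, steps, lr=1.0):
    """Minimize (1/n) sum_i loss + (lam / 2) ||w||^2 starting from w = 0."""
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        w -= lr * (loss_grad(X, y, w) + lam * w)
    return w

# Synthetic data (hypothetical): labels in {-1, +1} from a noisy linear rule
rng = np.random.default_rng(0)
n, d, lam = 200, 5, 0.1
X = rng.normal(size=(n, d))
y = np.where(X @ rng.normal(size=d) + 0.5 * rng.normal(size=n) > 0, 1.0, -1.0)

w_star = gradient_descent(X, y, lam, steps=2000)   # treated as the exact optimum
w_hat = gradient_descent(X, y, lam, steps=5)       # crude approximate solution
m, r = sag_ball(X, y, w_hat, lam)
lo, hi = output_interval(X[0], m, r)
```

As ŵ approaches w*, the term ŵ + ∇Φ(ŵ)/λ vanishes (optimality gives ∇Φ(w*) = −λw* when φ = ℓ), so the radius shrinks to the square root of the gap term.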
This means that the optimal solution $w^*$ lies within a ball with center $m(\hat w)$ and radius $r(\hat w)$, which completes the proof.

Next, we prove Corollary 2.

Proof of Corollary 2. We show that the lower bound of the linear model output $w^{*\top} x$ under the constraint $\|w^* - m(\hat w)\| \le r(\hat w)$ is $x^\top m(\hat w) - \|x\| r(\hat w)$. To formulate this, consider the following constrained optimization problem:
$$\min_{w \in \mathbb{R}^d} w^\top x \quad \text{s.t.} \quad \|w - m(\hat w)\|^2 \le r(\hat w)^2. \qquad (18)$$
Using a Lagrange multiplier $\mu > 0$ (strong duality holds by Slater's condition when $r(\hat w) > 0$), problem (18) is rewritten as
$$\begin{aligned} &\min_{w \in \mathbb{R}^d} w^\top x \ \ \text{s.t.} \ \ \|w - m(\hat w)\|^2 \le r(\hat w)^2 \\ &= \min_{w \in \mathbb{R}^d} \max_{\mu > 0} \left\{ w^\top x + \mu\left(\|w - m(\hat w)\|^2 - r(\hat w)^2\right) \right\} \\ &= \max_{\mu > 0} \left\{ -\mu r(\hat w)^2 + \min_{w} \left[ \mu\|w - m(\hat w)\|^2 + w^\top x \right] \right\} \\ &= \max_{\mu > 0} H(\mu) := -\mu r(\hat w)^2 - \frac{\|x\|^2}{4\mu} + x^\top m(\hat w), \end{aligned}$$
where the inner minimum is attained at $w = m(\hat w) - x/(2\mu)$, and $\mu$ is strictly positive because the constraint $\|w - m(\hat w)\|^2 \le r(\hat w)^2$ is active at the optimal solution (for $x \neq 0$). Setting $\partial H(\mu)/\partial \mu = 0$, the optimal $\mu$ is
$$\mu^* := \frac{\|x\|}{2 r(\hat w)} = \arg\max_{\mu > 0} H(\mu).$$
Substituting $\mu^*$ into $H(\mu)$ gives
$$x^\top m(\hat w) - \|x\| r(\hat w) = \max_{\mu > 0} H(\mu).$$
The upper bound can be shown similarly.

Proof of Theorem 3 (Protocol evaluating a piecewise linear function and its subderivative securely)
First, we outline the secure comparison protocol of Veugen [20]. Given an encrypted value $E_{pk_B}(q)$ owned by party A, the protocol returns the comparison result $E_{pk_B}(I_{q > 0})$ to party A in the following two steps:
• Parties A and B obtain $q_A := R$ and $q_B := q + R$, respectively, where $R$ is a random value.
• Parties A and B compare $q_A$ and $q_B$ using a bit-wise comparison implemented with the Paillier cryptosystem (see the original paper).
Let us denote the latter protocol by $SC(q_A, q_B) \to (E_{pk_B}(I_{q_A > q_B}), E_{pk_A}(I_{q_A > q_B}))$; that is, $SC$ is a protocol comparing two private, unencrypted values $q_A$ and $q_B$ owned by the two parties.
The protocol for Theorem 3 is as follows:
1. Party A computes $E_{pk_B}(s) = E_{pk_B}(s_A + s_B)$ from $E_{pk_B}(s_A)$ and $E_{pk_A}(s_B)$ as follows:
  • Party B generates a random value $R \in \mathbb{Z}_{N/2}$ ($N$ is defined in § 2.2), then sends $E_{pk_A}(s_B - R)$, computed homomorphically from $E_{pk_A}(s_B)$, and $E_{pk_B}(R)$ to party A.
  • Party A decrypts $E_{pk_A}(s_B - R)$ and computes $E_{pk_B}(s_A + s_B)$ as
    $$E_{pk_B}(s_A + s_B) = E_{pk_B}(s_A + s_B - R + R) = E_{pk_B}(s_A + s_B - R)\, E_{pk_B}(R) = E_{pk_B}(s_A)\, E_{pk_B}(s_B - R)\, E_{pk_B}(R).$$
  See [23] for the security of this part.
2. With a protocol similar to Veugen's, parties A and B obtain $p_A$ and $p_B$, respectively, where $p_A$ and $p_B$ are randomized and satisfy $p_A + p_B = s$.
3. Compute $t_j = I_{p_A + p_B > T_j}$ securely with $SC$:
  $$SC(p_A, T_j - p_B) \to (E_{pk_B}(t_j), E_{pk_A}(t_j)). \qquad (19)$$
4. Party A computes $E_{pk_B}(o_j)$ from $E_{pk_B}(t_j)$:
  $$E_{pk_B}(o_j) = E_{pk_B}(t_{j-1} - t_j) = E_{pk_B}(t_{j-1}) \cdot E_{pk_B}(t_j)^{-1}.$$
  Party B similarly computes $E_{pk_A}(o_j)$. The idea is shown in Figure 8.
5. Party A computes $g_{Aj} := \alpha_j p_A + \beta_j$, and party B computes $g_{Bj} := \alpha_j p_B$, for all $j \in [K]$. Note that $g_{Aj} + g_{Bj} = \alpha_j s + \beta_j$.
6. Compute encrypted $g_A$ and $g_B$. Because $g_A + g_B = g(s)$ for $g_A = \sum_{j \in [K]} o_j g_{Aj}$ and $g_B = \sum_{j \in [K]} o_j g_{Bj}$ (see (9) in § 4), party A computes $E_{pk_B}(g_A)$ as
  $$E_{pk_B}(g_A) = E_{pk_B}\Big(\sum_{j \in [K]} o_j g_{Aj}\Big) = \prod_{j \in [K]} E_{pk_B}(o_j)^{g_{Aj}}.$$
  Party B similarly computes $E_{pk_A}(g_B)$.
Figure 8: Computing $o_j$ from $t_j$ in the protocol $SPLC$.
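The arithmetic of steps 3 to 6 can be mimicked in plaintext to see why the shares reconstruct g(s) and why Σ_j o_j α_j is the subderivative. The sketch below performs no encryption: in the protocol, t_j comes from SC and the sums in step 6 are computed homomorphically. The boundary convention (K pieces, breakpoints T_1 < ... < T_{K−1}, piece j covering (T_{j−1}, T_j], t_0 = 1, t_K = 0) is an assumption, since definition (9) is not reproduced here, and `splc_plaintext` is a hypothetical helper.

```python
def splc_plaintext(p_a, p_b, thresholds, alphas, betas):
    """Plaintext mimic of steps 3-6 (no encryption); returns (g_A, g_B, g'(s)).

    Assumed convention: K pieces, increasing breakpoints T_1 < ... < T_{K-1},
    piece j covers (T_{j-1}, T_j], and t_0 = 1, t_K = 0.
    """
    K = len(alphas)
    assert len(betas) == K and len(thresholds) == K - 1
    # Step 3: t_j = I[p_A > T_j - p_B] = I[p_A + p_B > T_j], as in (19)
    t = [1] + [int(p_a > T - p_b) for T in thresholds] + [0]
    # Step 4: o_j = t_{j-1} - t_j equals 1 only for the piece containing s
    o = [t[j - 1] - t[j] for j in range(1, K + 1)]
    # Step 5: each party evaluates every piece on its own share
    g_aj = [a * p_a + b for a, b in zip(alphas, betas)]
    g_bj = [a * p_b for a in alphas]
    # Step 6 and the subderivative: keep only the active piece
    g_a = sum(oj * v for oj, v in zip(o, g_aj))
    g_b = sum(oj * v for oj, v in zip(o, g_bj))
    g_prime = sum(oj * a for oj, a in zip(o, alphas))
    return g_a, g_b, g_prime

# Example: g(s) = max(-s, 0, 2s - 2) with breakpoints 0 and 1; shares 10 and -7 give s = 3
alphas, betas, T = [-1, 0, 2], [0, 0, -2], [0, 1]
g_a, g_b, g_prime = splc_plaintext(10, -7, T, alphas, betas)
```

Here g_a = 18 and g_b = −14 individually look random, yet their sum is g(3) = 4, and the selected slope is 2.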
To obtain the subderivative $g'(s) = \sum_{j \in [K]} o_j \alpha_j$ during the protocol for Theorem 3, party A computes $E_{pk_B}(g'(s))$ as
$$E_{pk_B}(g'(s)) = E_{pk_B}\Big(\sum_{j \in [K]} o_j \alpha_j\Big) = \prod_{j \in [K]} E_{pk_B}(o_j)^{\alpha_j}.$$
Party B similarly computes $E_{pk_A}(g'(s))$.

Proof of Theorem 5 (Protocol evaluating the upper and the lower bounds)
Protocol 1 securely evaluates the upper bound $U_B$ and the lower bound $L_B$, where $\overline{\mathrm{sqrt}}$ is an upper bound of the square root function implemented as a piecewise linear function.

Protocol 1: Protocol for bound computation
Public: $\overline{\mathrm{sqrt}}$
Input of A: $E_{pk_B}(m_A)$, $E_{pk_B}(r_A^2)$, $\tilde x_A$
Input of B: $E_{pk_A}(m_B)$, $E_{pk_A}(r_B^2)$, $\tilde x_B$
Output of A: $U_B$, $L_B$
Output of B: $\emptyset$
Step 1. Parties A and B compute:
  party A: $E_{pk_B}(\tilde x_A^\top m_A)$, $E_{pk_A}(\|\tilde x_A\|^2)$
  party B: $E_{pk_A}(\tilde x_B^\top m_B)$, $\|\tilde x_B\|^2$
Step 2. Party A sends $E_{pk_B}(\tilde x_A^\top m_A)$, $E_{pk_A}(\|\tilde x_A\|^2)$, $E_{pk_B}(r_A^2)$ to B. Party B obtains $E_{pk_A}(\tilde x^\top m)$, $E_{pk_A}(\|\tilde x\|^2)$, $E_{pk_A}(r^2)$. // In the same manner as step 1 of the protocol for Theorem 3.
Step 3. Compute $E_{pk_B}(\|\tilde x\|^2 r^2)$ using the multiplication protocol in [21].
Step 4. Compute $\overline{\|\tilde x\| r}$ with $SPL$: $SPL(E_{pk_B}(0), E_{pk_B}(\|\tilde x\|^2 r^2)) \to (q_A, q_B)$ // $q_A + q_B = \overline{\|\tilde x\| r} := \overline{\mathrm{sqrt}}(\|\tilde x\|^2 r^2)$
Step 5. Party A sends $E_{pk_B}(q_B)$ to B. Party B obtains $q_B$ and thus $E_{pk_A}(\overline{\|\tilde x\| r}) = E_{pk_A}(q_A)\, E_{pk_A}(q_B)$. Party B computes the following and sends them to A:
  $E_{pk_A}(U_B) \leftarrow E_{pk_A}(\tilde x^\top m + \overline{\|\tilde x\| r})$
  $E_{pk_A}(L_B) \leftarrow E_{pk_A}(\tilde x^\top m - \overline{\|\tilde x\| r})$
Step 6. Party A obtains $U_B$ and $L_B$ by decrypting them.

Note that replacing $\|\tilde x\| r$ by its upper bound $\overline{\|\tilde x\| r}$ does not invalidate the bounds (looser bounds are obtained), since
$$\tilde x^\top m - \overline{\|\tilde x\| r} \le \tilde x^\top m - \|\tilde x\| r, \qquad \tilde x^\top m + \overline{\|\tilde x\| r} \ge \tilde x^\top m + \|\tilde x\| r.$$
The security is proved as follows: all techniques used in the protocol are secure by the same arguments as before.
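One way to build the piecewise-linear upper bound of the square root, assumed here since its construction is not specified above, is the minimum of tangent lines: because sqrt is concave, every tangent lies above it. The plaintext sketch below checks that the resulting interval contains the exact one, which is the property stated for Protocol 1. The knots and input values are illustrative, and `protocol1_bounds` only mirrors the protocol's output, not its secure computation.

```python
import numpy as np

def sqrt_upper(z, knots):
    """Piecewise-linear upper bound of sqrt on z >= 0: minimum of tangents at positive knots.

    sqrt is concave, so each tangent sqrt(k) + (z - k) / (2 sqrt(k)) lies above it.
    """
    z = np.asarray(z, dtype=float)[..., None]
    k = np.asarray(knots, dtype=float)
    tangents = np.sqrt(k) + (z - k) / (2.0 * np.sqrt(k))
    return tangents.min(axis=-1)

def protocol1_bounds(xm, sq_norm_x, r_sq, knots):
    """Plaintext analogue of Protocol 1's output: x^T m -/+ sqrt_upper(||x||^2 r^2)."""
    half_width = sqrt_upper(sq_norm_x * r_sq, knots)
    return xm - half_width, xm + half_width

knots = np.geomspace(1e-4, 1e4, 40)   # hypothetical breakpoints
lb, ub = protocol1_bounds(xm=0.7, sq_norm_x=3.0, r_sq=0.5, knots=knots)
```

The bound is exact at the knots and loosest between them and near z = 0, where it equals half the square root of the smallest knot; adding knots trades tightness for more pieces K, as in Table 2.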
The remaining question is whether party A can infer $\tilde x_B$ from $U_B$ and $L_B$. Party A can learn $(U_B + L_B)/2 = \tilde x^\top m = \tilde x_A^\top m_A + \tilde x_B^\top m_B$ and $(U_B - L_B)/2 = \overline{\mathrm{sqrt}}\big((\|\tilde x_A\|^2 + \|\tilde x_B\|^2)(r_A^2 + r_B^2)\big)$. However, because party A knows none of $m_A$, $m_B$, $r_A^2$ and $r_B^2$, party A cannot infer $\tilde x_B$ either (footnote 5).

Footnote 5: If this protocol is conducted for sufficiently many $\tilde x$, then, because party A knows $\tilde x_A$, party A can also learn $m_A$ by solving a system of linear equations, and thus learn $\tilde x_B^\top m_B$. This, however, does not allow party A to infer $\tilde x_B$ or $m_B$ separately, because both are private to party B.

Example Protocol for the Logistic Regression
We show the detailed implementation of secure ball computation ($SBC$, Theorem 4) in § 4 for logistic regression, including how to use the secure computation of piecewise linear functions ($SPL$, Theorem 3).
For logistic regression (§ 2.1), $\mathcal{Y} = \{-1, +1\}$, and we take $u(s) = \log(1 + \exp(-s))$ with $s = x^\top w$, and $v(y, x^\top w) = -y x^\top w$ in Theorem 4. To apply $SPL$, we set $E_{pk_B}(s_A) := E_{pk_B}(x_A^\top \hat w_A)$ and $E_{pk_A}(s_B) := E_{pk_A}(x_B^\top \hat w_B)$, since we assume that parties A and B know $E_{pk_B}(\hat w_A)$ and $E_{pk_A}(\hat w_B)$, respectively. Note that $s_A + s_B = s$ because $x = [x_A^\top\ x_B^\top]^\top$ and $w = [w_A^\top\ w_B^\top]^\top$. Take piecewise linear functions $\underline{u}(s)$ and $\overline{u}(s)$ as lower and upper bounds of $u(s)$, respectively. With them, we can compute $\phi$, $\psi$ and $\nabla\phi$ in SAG as follows:
$$\psi|_{w=\hat w} - \phi|_{w=\hat w} = \overline{u}(x^\top \hat w) - \underline{u}(x^\top \hat w),$$
$$\nabla\phi|_{w=\hat w} = \underline{u}'(x^\top \hat w)\, \frac{\partial s}{\partial w}\Big|_{w=\hat w} + \frac{\partial v}{\partial w}\Big|_{w=\hat w} = \underline{u}'(x^\top \hat w)\, \frac{\partial (x^\top w)}{\partial w}\Big|_{w=\hat w} - \frac{\partial (y x^\top w)}{\partial w}\Big|_{w=\hat w} = \left(\underline{u}'(x^\top \hat w) - y\right) x,$$
all of which are computable with $SPL$. After these preparations, we can conduct the protocol $SBC$ as in Protocol 2.
Remark 7. In the description of the protocol, we omitted the magnification constant $M$ (§ 2.2) for simplicity.
Note that summing two values magnified by $M^a$ and $M^b$ (after rescaling them to a common magnification) yields a value magnified by $M^{\max\{a,b\}}$, and multiplying two values magnified by $M^a$ and $M^b$ yields a value magnified by $M^{a+b}$. In the protocol, when the original data are magnified by $M$, the final result is magnified by $M^{12}$. Therefore, $M$ must be chosen so that $M^{12}$ times the final result does not exceed the domain $\mathbb{Z}_N$ of the Paillier cryptosystem.

Protocol 2: Secure Ball Computation protocol (SBC)
Public: $\phi := \underline{u}(s) - y x^\top w$, $\psi := \overline{u}(s) - y x^\top w$
Input from A: $\{x_{iA}\}_{i \in [n]}$, $E_{pk_B}(\hat w_A)$
Input from B: $\{x_{iB}, y_i\}_{i \in [n]}$, $E_{pk_A}(\hat w_B)$
Output to A: $E_{pk_B}(m_A)$, $E_{pk_B}(r_A^2)$
Output to B: $E_{pk_A}(m_B)$, $E_{pk_A}(r_B^2)$ (where $r_A^2 + r_B^2 = r^2$)
Step 1. Party B sends $E_{pk_B}(y)$ to party A.
Step 2. Parties A and B compute encrypted $\Phi$, $\Psi$ and $\nabla\Phi$ at $w = \hat w$. Party A does:
  for $i = 1$ to $n$:
    $SPLC(E_{pk_B}(x_{iA}^\top \hat w_A), E_{pk_A}(x_{iB}^\top \hat w_B)) \to (E_{pk_B}(\underline{u}^*_{iA}), E_{pk_A}(\underline{u}^*_{iB}))$ // for $\underline{u}$
    $SPLC(E_{pk_B}(x_{iA}^\top \hat w_A), E_{pk_A}(x_{iB}^\top \hat w_B)) \to (E_{pk_B}(\overline{u}^*_{iA}), E_{pk_A}(\overline{u}^*_{iB}))$ // for $\overline{u}$
  // Note: $E(a)^{1/n}$ is in reality computed as $E(a)^{M/n}$, where $M$ is the magnification constant.
  // Note: $E(a)^{\eta}$ ($\eta$: a vector) means $[E(a)^{\eta_1}\ E(a)^{\eta_2}\ \cdots]^\top$.
  $E_{pk_B}(\Psi_A - \Phi_A) \leftarrow E_{pk_B}\Big(\frac{1}{n}\sum_{i \in [n]}[\overline{u}^*_{iA} - y_i x_{iA}^\top \hat w_A] - \frac{1}{n}\sum_{i \in [n]}[\underline{u}^*_{iA} - y_i x_{iA}^\top \hat w_A]\Big) = E_{pk_B}\Big(\frac{1}{n}\sum_{i \in [n]}[\overline{u}^*_{iA} - \underline{u}^*_{iA}]\Big) = \Big[\prod_{i \in [n]} E_{pk_B}(\overline{u}^*_{iA})\Big]^{1/n} \Big[\prod_{i \in [n]} E_{pk_B}(\underline{u}^*_{iA})\Big]^{-1/n}$
  // $\Phi_A + \Phi_B = \Phi$, $\Psi_A + \Psi_B = \Psi$
  $E_{pk_B}(\nabla\Phi_A) \leftarrow E_{pk_B}\Big(\frac{1}{n}\sum_{i \in [n]}[\underline{u}'(x_i^\top \hat w) - y_i]\, x_{iA}\Big) = \prod_{i \in [n]} \Big[E_{pk_B}(\underline{u}'(x_i^\top \hat w))\, E_{pk_B}(y_i)^{-1}\Big]^{(1/n) x_{iA}}$
  // $[\nabla\Phi_A^\top, \nabla\Phi_B^\top]^\top = \nabla\Phi$
  // Note: $\alpha_j$ and $o_j$ here denote those of $\underline{u}$ (see the subderivative computation in Theorem 3).
  Party B does likewise.
Step 3. Parties A and B compute encrypted $m$ and $r$.
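  Party A does:
    $E_{pk_B}(m_A) \leftarrow E_{pk_B}\big(\hat w_A - \frac{1}{\lambda}\nabla\Phi_A\big)^{1/2}$
    Compute $E_{pk_B}\big(\|\frac{1}{2}(\hat w_A + \frac{1}{\lambda}\nabla\Phi_A)\|^2\big)$ from $E_{pk_B}\big(\frac{1}{2}(\hat w_A + \frac{1}{\lambda}\nabla\Phi_A)\big) = \big[E_{pk_B}(\hat w_A)\, E_{pk_B}(\nabla\Phi_A)^{1/\lambda}\big]^{1/2}$ using the multiplication protocol in [21].
    $E_{pk_B}(r_A^2) \leftarrow E_{pk_B}\big(\|\frac{1}{2}(\hat w_A + \frac{1}{\lambda}\nabla\Phi_A)\|^2\big) \cdot \big(E_{pk_B}(\Psi_A) \cdot E_{pk_B}(\Phi_A)^{-1}\big)^{1/\lambda}$
  Party B does likewise.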
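The homomorphic identities used in Protocols 1 and 2 (E(a)E(b) = E(a + b) and E(a)^k = E(ka)) and the magnification bookkeeping of Remark 7 can be exercised with a toy Paillier implementation. This is a sketch for checking the arithmetic only: the fixed Mersenne primes make the key insecure, negative numbers are read from the upper half of Z_N (a common convention, assumed here), and M = 10^6 is an arbitrary illustrative value. It needs Python 3.9 or later for `math.lcm`; `fits` is a hypothetical helper.

```python
import math
import random

# Toy Paillier key from two Mersenne primes: far too small and structured to be
# secure, used only to check the homomorphic identities in the protocols.
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q
N2 = N * N
LAM = math.lcm(P - 1, Q - 1)
MU = pow(LAM, -1, N)              # valid for the generator g = N + 1

def encrypt(m):
    r = random.randrange(1, N)
    return pow(N + 1, m % N, N2) * pow(r, N, N2) % N2

def decrypt(c):
    m = (pow(c, LAM, N2) - 1) // N * MU % N
    return m - N if m > N // 2 else m   # read Z_N as signed integers

def add(c1, c2):                  # E(a) * E(b) = E(a + b)
    return c1 * c2 % N2

def scale(c, k):                  # E(a)^k = E(k a); k < 0 uses a modular inverse
    return pow(c, k, N2)

def fits(M, exponent, max_abs_value):
    """Remark 7: a value carried at scale M**exponent must stay inside the signed range of Z_N."""
    return M ** exponent * max_abs_value < N // 2

M = 10**6                         # magnification constant (illustrative choice)

# Step 6 of Theorem 3: E(sum_j o_j g_j) = prod_j E(o_j)^{g_j}
o, g = [0, 1, 0], [5, -7, 9]
c = encrypt(0)
for oj, gj in zip(o, g):
    c = add(c, scale(encrypt(oj), gj))
selected = decrypt(c)             # -7

# Note in Protocol 2: E(a)^{1/n} is computed as E(a)^{M/n}, adding one factor of M
values = [0.25, -1.5, 3.125, 0.5]
c_sum = encrypt(0)
for v in values:
    c_sum = add(c_sum, encrypt(round(v * M)))      # magnified by M
c_mean = scale(c_sum, round(M / len(values)))      # magnified by M**2
mean = decrypt(c_mean) / M**2
```

With this toy modulus (about 2^150), `fits(10**6, 12, 10)` is false, which illustrates Remark 7: a depth-12 magnification needs either a much smaller M or a much larger N.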
