Class Probability Estimation via Differential Geometric Regularization

We study the problem of supervised learning for both binary and multiclass classification from a unified geometric perspective. In particular, we propose a geometric regularization technique to find the submanifold corresponding to a robust estimator…

Authors: Qinxun Bai, Steven Rosenberg, Zheng Wu

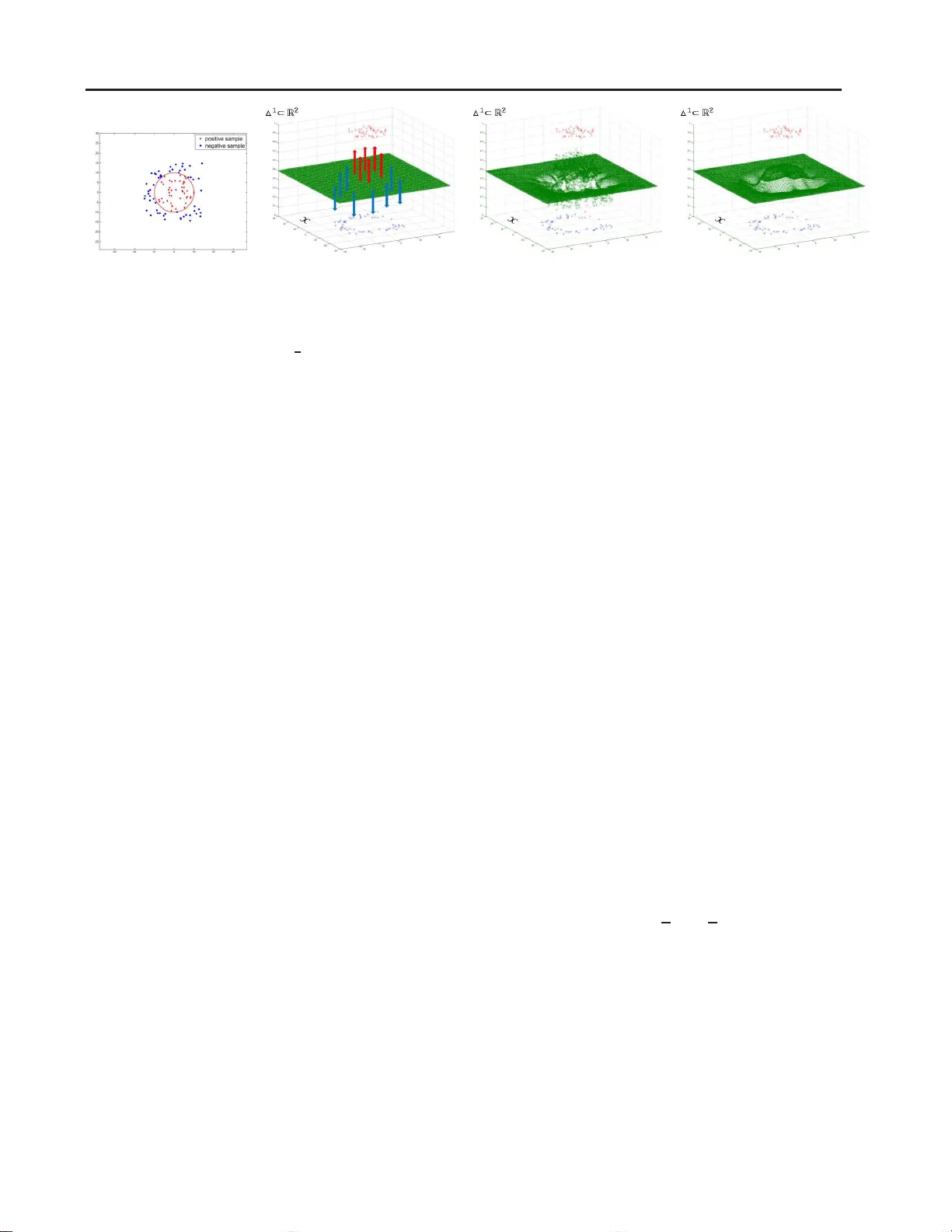

Class Pr obability Estimation via Differ ential Geometric Regularization Qinxun Bai Q I N X U N @ C S . BU . E D U Departmen t of Computer Science, Boston Univ ersity , Boston, MA 0 2215 USA Steven Rosenber g S R @ M A T H . B U . E D U Departmen t of Mathematics and Statistics, Boston Uni versity , Boston, MA 0 2215 USA Zheng W u W U Z H E N G @ B U . E D U The Mathworks Inc. Natick, MA 017 60 USA Stan Sclaroff S C L A RO FF @ C S . B U . E D U Departmen t of Computer Science, Boston Univ ersity , Boston, MA 0 2215 USA Abstract W e study the problem of supervised learn ing for both binary and multiclass classification from a unified geometric perspecti ve. In particular , we propo se a geometric r e g ularization techn ique to find the submanifo ld correspond ing to a robust estimator of the c las s p robability P ( y | x ) . The regularization term measures the v o lume of this submanifo ld, based on the in tuition that overfit- ting produces rapid local oscillations and hence large v olu me of the estimator . This techniq ue can be applied to regular ize any classification func- tion that satisfies two requirements: fir s tly , an es- timator of the class prob ability can be obtained; secondly , first and second deriv atives of the class probab ility estimator can be calculated. In ex- periments, we apply our regularization techn ique to standard loss functions for classification, ou r RBF-based im plementation compar es fav orably to widely used regularization meth ods for both binary and multiclass classification. 1. Intr oduction In supervised lea rning for classification, the idea o f regu- larization seeks a balance b etween a p erfect description of the training data and the potential for ge neralization to un- seen data. Most regular ization techniq ues are defined in the form of p enalizing some functiona l norms. For instance, one of the most successfu l class ification methods, the sup - port vector machin e (SVM) ( V apnik , 19 98 ; Sch ¨ olkopf & Smola , 20 02 ) and its variants ( Bartlett et al. , 2006 ; Stein- wart , 200 5 ), use a RKHS norm as a regula rizer . While function al norm based regularization is widely-used in ma- chine learning , we feel that there is impor tant loca l g eomet- ric informatio n overloo k ed by this app roach. In many real world classification pro blems, if the fe ature space is meaning ful, then all samples that are loc ally within a small enough neighb orhood of a training sam ple should have class probability P ( y | x ) similar to the training sam- ple. For in st ance, a small enou gh perturbatio n of R GB values at some pixels of a hum an face image should not change dramatically the lik elihood of correct iden tifica- tion of this image during face r ecognition. However , such “small local oscillations” of the class probability a re no t explicitly in corporated b y penalizing com monly used fu nc- tional norms. For instance , as repo rted b y Goodfellow et al. ( 2014 ), linear models and their combinatio ns can be eas- ily fo oled b y hard ly percep ti ble pertu rbations of a co rrectly predicted image, ev en though a L 2 regularizer is adopted. Geometric r e gularization tech niques have also bee n studied in machine learning. Belkin et al. ( 2006 ) employed ge o- metric re gularization in the f orm of the L 2 n orm o f the gra- dient magnitude supported o n a manif old. This approach exploits the geometry of th e marginal distribution P ( x ) for semi-supervised learning, ra ther tha n the geo metry of the class pro bability P ( y | x ) . Other related g eometric reg- ularization methods are moti vated by the success of le vel set methods in image segmentatio n ( Cai & Sowmya , 2007 ; V arshney & W illsky , 2010 ) and Euler’ s Elastica in imag e processing ( Lin et al. , 201 2 ; 201 5 ). In particular , the Le vel Learning Set ( C ai & Sowmya , 2007 ) com bines a counting function of train ing samp les and a geo metric p enalty o n the surface ar ea of the decision b oundary . The Geomet- ric Level Set ( V arshney & W illsky , 2010 ) gene ralizes this idea to standard empir ical risk minimization schem es with margin-based loss. Along this line, the Euler’ s Elastica Model ( Lin et al. , 2012 ; 2015 ) proposes a regularization Class Probability Estimation via Differ ential Geometric Regularization technique that penalize s both th e g radient o scillati ons and the curvature of the decision boundary . Howe ver , all three methods focus on the g eometry o f th e decision b oundary supported in the domain of the feature spa ce, and the “small local oscillation ” o f the class prob ability is not explicitly addressed. In this work, we argue that the “small local oscillation” of the c las s pro bability actua ll y lies in the prod uct spac e o f the feature d omain and the probabilistic ou tput space, an d can be characterize d by the geometry of a submanifo ld in this produ ct space correspo nding to the class probab ility . Let f : X → ∆ L − 1 be a class probability estimato r , wh ere X is the feature space and ∆ L − 1 is the prob abilistic simplex for L classes. From a g eometric perspecti ve, if we re gard { ( x , f ( x )) | x ∈ X } , the functiona l graph ( in the geom etric sense) o f f , as a subm anifold in X × ∆ L − 1 , then “small local oscillations” c an be measure d b y the local flatness of this submanif old. In our appro ach, th e lea rning pr ocess can be viewed as a submanifo ld fitting problem that is solved b y a g eometric flow me thod. I n p articular , ou r app roach finds a subman i- fold by iterati vely fitting the training sam ples in a curvature or volume decrea s ing manner withou t any a priori assump- tions on the geometry of the subman ifold in X × ∆ L − 1 . W e use gr adient flow metho ds t o fin d an optimal direction , i.e. at each step we find the vector fi eld pointing in the optimal direction to mov e f . As we will see in the next sectio n, this regularization ap proach n aturally hand les bin ary and mul- ticlass classification in a un ifi ed way , while previous deci- sion bo undary based techn iques (and most f unctional reg- ularization approach es) ar e origin ally designed for binary classification, an d rely on “on e versus one”, “one versus all” or mo re efficiently a binary cod ing strategy ( V arshney & W illsky , 2010 ) to generalize to multiclass case. In exp eriments, a radial b asis function (RBF) based im- plementation of our for mulation comp ares fa vorably to widely used binary an d mu lticlas s classification methods on datasets fr om the UCI re pository and r eal-world d atasets including the Flickr Material Datab ase (FMD) and the MNIST Database of handwritten digits. In summary , our contributions ar e: • A geo metric per specti ve on overfitting and a regular - ization app roach that exploits the geo metry of a robust class probab ility estima tor for classifi cation, • A unified gradien t flow based algorithm for both bi- nary a nd m ulticlass cla s sification that can be applied to standard loss functions, and • A RBF-based implem entation tha t achieves pr omising experimental results. Figure 1. Example of three-class l ea rning, i .e., L = 3 , where the input space X is 2 d . T raining samples of the three classes are marked with red, g reen and blue dots respectiv ely . The class label for each training sample correspon ds to a verte x of the simplex ∆ L − 1 . As a result, each mapped training point ( x i , z i ) l ies on one face (correspon ding to it s label y i ) of the space X × ∆ 2 . 2. Method Overview In our work, we propose a regularizatio n sch eme that e x- ploits the geometry of a r ob u s t c las s pr obability estimator and sugg es t a gradien t flo w b ased appro ach to solve f or it. In the follow , we will describe ou r approa ch. Related math- ematical notation is summarized in T able 2 . Follo wing the p robabilistic setting of classification, g i ven a sample ( feature) space X ⊂ R N , a label space Y = { 1 , . . . , L } , an d a finite train ing set of labeled samples T m = { ( x i , y i ) } m i =1 , where each training sample is gen- erated i.i.d. from distribution P over X × Y , o ur goal is to find a h T m : X → Y such th at for any n e w sample x ∈ X , h T m predicts its label ˆ y = h T m ( x ) . The optimal generalizatio n risk (Bayes risk) is achiev ed by the classifi er h ∗ ( x ) = a rgmax { η ℓ ( x ) , ℓ ∈ Y } , where η = ( η 1 , . . . , η L ) with η ℓ : X → [0 , 1 ] b eing th e ℓ th class proba bility , i.e. η ℓ ( x ) = P ( y = ℓ | x ) . Our regular ization ap proach exploits the geo metry o f the class probability estimator, and can be regarded as a “h y- brid” plu g-in/ERM scheme ( Audibert & T s ybakov , 20 07 ). A regularized loss m inimization prob lem is setup to find an estimator f : X → ∆ L − 1 , where ∆ L − 1 is the stan- dard ( L − 1) -simplex in R L , and f = ( f 1 , . . . , f L ) is an estimator of η with f ℓ : X → [0 , 1] . The estima- tor f is then “plugged-in ” to get the classifier h f ( x ) = argmax { f ℓ ( x ) , ℓ ∈ Y } . Figure 1 shows an example of th e setup of our approach, for a syn thetic three-class classification problem . The sub- manifold corr esponding to estimator f is the gra ph (in the geometric sense) of f : gr( f ) = { ( x , f 1 ( x ) , . . . , f L ( x )) : x ∈ X } ⊂ X × ∆ L − 1 . W e denote a point in the space X × ∆ L − 1 as ( x , z ) = ( x 1 , . . . , x N , z 1 , . . . , z L ) , wh ere x ∈ X a nd z ∈ ∆ L − 1 . Then in this pro duct space, Class Probability Estimation via Differ ential Geometric Regularization ( a ) ( b ) ( c ) ( d ) Figure 2. Example of b inary learning via grad ient flow . As shown i n ( a ) , the feature space X is 2 d , training points are sampled u niformly within the region [ − 15 , 15] × [ − 15 , 15] , and labeled by the function y = sign(10 − k x k 2 ) (the red circle). In the initialization step, sho wn in ( b ) , positi ve and negativ e training points map t o the two faces of the space X × ∆ 1 respecti vely . Our gradient fl o w method starts from a neutral function f 0 ≡ 1 2 and move s tow ards the negati ve direction (red and blue arro ws) of the penalty gradient ∇P f 0 . Figure ( c ) sho ws the submanifold ( gr( f 1 ) ) one st e p after ( b ) . T h e submanifold t h en continues to ev olve t o wards −∇P f t step by step and the final output after con verg ence of the algorithm is shown in ( d ) . a training p air ( x i , y i = ℓ ) natu rally maps to the poin t ( x i , z i ) = ( x i , 0 , . . . , 1 , . . . , 0) , w ith the one- hot vector z i (with the 1 in its ℓ -th slot) at the vertex of ∆ L − 1 corre- sponding to P ( y = y i | x ) = 1 . W e point out two proper ti es of this g eometric setup. Firstly , it inherently han dles mu lticlass classification, with binary classification as a special case. Second ly , while the dimen- sion of th e ambient space, i.e. R N + L , depen ds on bo th the feature dimension N and number of classes L , the intrinsic dimension of the submanif old gr( f ) only dep ends on N . 2.1. V ariational f ormulation W e want gr( f ) to appr oach the m apped training points while remaining as flat as possible, so we imp ose a penalty on f consisting of an empirica l loss term P T m and a geo- metric regularizatio n term P G . For P T m , we can cho ose ei- ther the wide ly-used cross-entropy loss fun ction for multi- class classification or the simpler Euclid ean distance func- tion between the simplex co ordinates o f the grap h p oint an d the m apped training po int. For P G , we would id eally con- sider an L 2 measure of the Riemann curv a ture of gr( f ) , as the vanishing o f th is term gi ves optimal (i.e., locally distor- tion free) diffeomorp hisms from gr( f ) to R N . Ho wev er , the Riemann curvature tensor takes the fo rm of a combina- tion o f deriv atives up to third o rder , and th e correspon ding gradient vector field is even mo re complica ted and ineffi- cient to compu te in practice. As a result, we measure the graph’ s v olume, P G ( f ) = R gr( f ) dvol , where dv ol is the induced volume from the Lebesgue measure on the ambi- ent space R N + L . More precisely , we find the fu nction that minimize s the fol- lowing pen alty P : P = P T m + λ P G : M = Maps( X , ∆ L − 1 ) → R (1) on the set M of smooth functions from X to ∆ L − 1 , where λ is the tradeoff parameter between empirical loss and reg- ularization. I t is impo rtant to note that any relativ e scal- ing of the d omain X will not affect the estimate of the class probability η , as scaling will distort gr( f ) but will not change the critical function estimating η . 2.2. Gradient flow and geometric f o undation The standard techniq ue for solving variational form ulas is the Eule r -Lag range PDE. Howe ver , du e to our geo met- ric term P G , finding the minimal solutions of th e Euler- Lagrang e equation s for P is d if ficult, instead, we solve for argmin P using grad ient flow in functional space M . A simple but intuitive simulated examp le of binary learn- ing usin g gradient flow for our appro ach is giv en in Fig- ure 2 . For the explanation purposes only , we replace M with a finite dim ensional Riemannian manifold M . W ith- out loss of ge nerality , we also assume that P is smooth , then it has a differential d P f : T f M → R fo r each f ∈ M , where T f M is th e tangen t space to M at f . Since d P f is a linear func tional on T f M , th ere is a u nique tangent vec- tor , denoted ∇P f , such that d P f ( v ) = h v , ∇P f i for all v ∈ T f M . ∇P f points in the dir ection o f maximal in- crease of P at f . Thus, the solutio n of th e n e gativ e gra- dient flow d f t /dt = −∇P f t is a flow line of steepest descent starting at an initial f 0 . For a dense open set of initial points, flow lines approach a local minimum of P at t → ∞ . W e always choose the initial function f 0 to be the “neutral” choice f 0 ( x ) ≡ ( 1 L , . . . , 1 L ) which re asonably assigns equal condition al proba bility to all classes. Similar gradien t flo w procedu res are w idely used in variational p roblems, such as level set m ethods ( Osher & Sethian , 1988 ; Sethian , 1999 ), Mu mford-Shah fun c- tional ( Mu mford & Shah , 1989 ), etc. In th e classification literature, V arshney & Willsk y ( 20 10 ) were th e first to use gradient flo w meth ods for solving level set based en ergy function s, the n followed by Lin et al. ( 2012 ; 2015 ) to solve Euler’ s Elastica mode ls . I n our case, we ar e exploiting the Class Probability Estimation via Differ ential Geometric Regularization geometry in the space X × ∆ L − 1 , rather than standard vec- tor spaces. Since o ur grad ient flow me thod is actually applied on the infinite dim ensional m anifold M , we have to un derstand both the top ology and the Riemannia n geometry of M . For the topolo gy , we put the Fr ´ echet top ology o n M ′ = Maps( X , R L ) , the set of smoo th maps from X to R L , an d take the induced topology on M . Intuitively speaking, two function s in M a re close if the function s and all their par- tial de ri vati ves are pointwise clo se. Sin ce M is an op en Fr ´ ec het submanifo ld with boundary inside the vector space M ′ , so as with an open set in Euclidean space, we c an canonically identify T f M with M ′ . For the Riemannian metric, we take the L 2 metric on each tangent space T f M : h φ 1 , φ 2 i := R X φ 1 ( x ) φ 2 ( x )dvol x , with φ i ∈ M ′ and dvol x being the v olu me form of the induced Riema nnian metric on the gr aph of f . ( St rictly speaking , the v olume form is pu lled back to X by f , usually den oted b y f ∗ dvol .) The dif ferential d P f is linear as abov e, an d by a direct cal- culation, th ere is a uniq ue tangent vector ∇P f ∈ T f M such that d P f ( φ ) = h∇P f , φ i for all φ ∈ T f M . Thus, we can co nstruct the gradien t flow equ ation. However , u nlik e the case of finite d imensions, the existence of flow lines is not au tomatic. Assuming the existence o f flow lines, a generic initial point flows to a local minimum of P . In any case, our RBF-based implementatio n in § 3 mimicking gra- dient flow is well d efined. Note that we think o f X as large enoug h so th at the train - ing data actually is sampled well inside X . This allows us to tr eat X as a c losed manifold in ou r gradient calcu- lations, so that boundary ef fects can be ignored. A simi- lar natural bounda ry co ndition is als o adop ted by previous work ( V arshney & W illsky , 2010 ; Lin et al. , 2012 ; 2015 ) . 2.3. More on related work There exist some other works th at are r elated to some as- pects of our work. Most n otably , Sob ole v regularization, in volves fun ctional norms of a cer tain numb er of deriva- ti ves of th e prediction function . For instance , the manifold regularization ( Belkin et al. , 2006 ) men tioned in § 1 uses a Sobolev re gularization term, Z x ∈M k∇ M f k 2 dP ( x ) , (2) where f is a smo oth fun ction on manifold M . A discrete version of ( 2 ) co rresponds to the g raph L aplacian r e g ular - ization ( Zhou & Sch ¨ olkopf , 2005 ). Lin et al. ( 2015 ) dis- cussed in detail the difference between a Sobolev n orm and a cu rv atu re-based norm for th e purpo se o f exploiting the geometry of the decision bound ary . For ou r pu rpose, while imposing , say , a h igh Sobolev norm 1 , will also lead to a flattening of the hyper surface gr( f ) , these n orms are n ot specifically tailo red to m easur - ing the flatne s s of g r ( f ) . In other w o rds, a h igh Sobolev norm bo und will imply the volume bo und we desire, b ut not vice versa . As a result, imposing high Sobolev nor m constraints ( re gardless of co mputational difficulties) over - shrinks the hyp othesis space from a lear ning theory point of view . In contr ast, our regularizatio n term (g i ven in ( 11 )) in volves only the c ombination of first der i vati ves of f that specifically address the geo metry behind the “small local oscillation” prior observed in practice. Our training procedure fo r findin g the optima l graph of a function is, in a general sense, also re lated to the manif old learning problem ( T en enbaum et al. , 2000 ; Ro weis & Saul , 2000 ; Belkin & Niyog i , 2003 ; Donoho & Grimes , 2003 ; Zhang & Zh a , 2 005 ; Lin & Zha , 2 008 ). The most clo sely related work is ( Donoho & Grimes , 2 003 ), which seeks a flat s ubman ifold o f Euclidean space that contains a dataset. Again, there are key differences. Since the goal of ( Donoho & Grimes , 2003 ) is dimensionality re duction, their mani- fold ha s high co dimension, while ou r functional g raph has codimensio n L − 1 , which may be as low as 1 . More impo r - tantly , w e do not assum e that the g raph of o ur target fu nc- tion is a flat (or volume min imizing) submanif old, and we instead flo w to wards a function wh ose graph is as flat (or volume minimizing) as possible. In this regard, o ur work is related to a large b ody of literatur e on Morse theory in finite and infinite dimension s , and on mean curvature flow ( Chen et al. , 1999 ; Mantegazza , 2011 ). 3. Example Formu lation: RBFs W e no w illustrate our appro ach using an RBF representa- tion of ou r estimator f . RBFs are also used b y previous ge- ometric classification methods ( V arshney & W illsky , 20 10 ; Lin et al. , 2012 ; 2015 ). Giv en values o f f are pro babilistic vectors, it is common to represent f as a “so ftmax” o utput of RBFs, i.e. f j = e h j P L l =1 e h l , where h j = m X i =1 a j i ϕ i ( x ) , for j = 1 , . . . , L , (3) where ϕ i ( x ) = e − 1 c k x − x i k 2 is the RBF fun ction centered at training sample x i , with kernel width parameter c . Estimating f becomes an optimization pr oblem for th e m × L coefficient matrix A = ( a ℓ i ) . Th e following eq uation determines A : [ h ( x 1 ) , . . . , h ( x m )] T = GA, wh ere G ij = ϕ j ( x i ) . (4) 1 “High S ob ole v no rm” is the co n ventional term for S ob ole v norm with high order of deriv ativ es. Class Probability Estimation via Differ ential Geometric Regularization T o p lug th is RBF rep resentation in to ou r g radient flow scheme, the grad ient v ector field ∇P f is ev aluated at each sample point x i , and A is upd ated by A ← A − τ G − 1 [ ∇P h ( x 1 ) , . . . , ∇P h ( x m )] T , (5) where τ is the step-size param eter , an d ∇P h ( x i ) = ∂ f ∂ h T x i ∇P f ( x i ) . (6) Here ∇P h ( x i ) den otes the gr adient vector field w .r .t. h ev aluated at x i , a nd the L × L Jacobian matr ix h ∂ f ∂ h i x i can be o btained in clo sed form from ( 3 ). In the fo llo wing sub - sections, we give exact for ms o f the empirical penalty P T m and the g eometric penalty P G , and discuss the co mputation of ∇P h for both penalty terms. 3.1. The empirical penalty P T m W e con sider tw o widely-used loss fun ctions for the em pir - ical penalty term P T m . Quadratic loss. Since P T m measures th e deviation of gr( f ) from the mapp ed train ing po ints, it is natu ral to choose the qu adratic func ti on of the Euclidean distance in the simplex ∆ L − 1 , P T m ( f ) = m X i =1 k f ( x i ) − z i k 2 , (7) where z i is the one-hot vector correspondin g to the groun d truth lab el of x i . The g radient vector w .r .t. f evaluated at x i is ∇P T m , f ( x i ) = 2 ( f ( x i ) − z i ) . The gradien t vector w .r .t. h ev aluated at x i is ∇P T m , h ( x i ) = 2 ∂ f ∂ h T x i ( f ( x i ) − z i ) , (8) ev aluatio n of h ∂ f ∂ h i T x i is the same as in ( 6 ). Cross-entropy loss. The cross-entro p y loss function is also widely-used for probab ilis tic output in clas sification, P T m ( f ) = − m X i =1 L X ℓ =1 z ℓ i log f ℓ ( x i ) , (9) whose gradien t vector field w .r .t. h ev alu ated at x i is ∇P T m , h ( x i ) = f ( x i ) − z i . (10) 3.2. The geometric penalty P G As discussed in § 2 , we wish to p enalize gra phs for exces- si ve curvature and we use the following function, wh ich measures the volume of the gr( f ) : P G ( f ) = Z gr( f ) dvol = Z gr( f ) p det( g ) dx 1 . . . dx N , (11) where g = ( g ij ) with g ij = δ ij + f a i f a j , is the Riemm a- nian metric on gr( f ) indu ced fro m the s tandard dot pr oduct on R N + L . W e use the summation conv ention on repeated indices. Note that this regularization term is clearly very different from the s tandard Sobolev norm of any orde r . It is standard that ∇P G = − T r II ∈ R N + L on the space of all embedd ings of X in R N + L . If we restrict to the sub- manifold o f grap hs of f ∈ M ′ , it is easy to calcu late that the gradient of geometric penalty ( 11 ) is ∇P G, f = V G, f = − T r I I L , (12) where T r II L denotes the last L compon ents of T r I I . Then the geometric gradient w .r .t. h is ∇P G, h = V G, h = − ∂ f ∂ h T T r I I L . (13) Evaluation of h ∂ f ∂ h i and T r I I L at x i leads to ∇P G, h ( x i ) . The fo rmulation gi ven above is general in that it e ncom- passes both the binary and the multiclass cases. For both cases, ev alu ation of h ∂ f ∂ h i at the trainin g poin ts is the same as that in ( 6 ), and evaluation o f T r I I L at any po int x can be perform ed explicitly by the follo wing theorem. Theorem 1. F or f : R N → ∆ L − 1 , T r I I L for gr( f ) is given by T r II L = ( g − 1 ) ij f 1 j i − ( g − 1 ) r s f a r s f a i f 1 j , . . . , f L j i − ( g − 1 ) r s f a r s f a i f L j , (14) wher e f a i , f a ij denote partial derivatives of f a . The pr oof is in Appendix A . Note that for our RBF r ep- resentation ( 3 ), the p artial deriv a ti ves f a i , f a ij can b e easily obtained in closed form. Simplex co ns traint. Th e class p robability estimato rs f : X → ∆ L − 1 always takes values in ∆ L − 1 ⊂ R L . While this constrain t is au tomatically satisfied fo r the flow of the empirical gradient vector form ula ( 8 ) and ( 10 ), it may fail for th e flow of geom etric grad ient vector f ormula ( 12 ) . There are tw o ways to enforce th is constrain t fo r the g eo- metric gradient v ector field. First, since our initial fu nction Class Probability Estimation via Differ ential Geometric Regularization f 0 takes values at the center of ∆ L − 1 , we can ortho gonally project th e geo metric g radient vector V G, f to V ′ G, f in the tangent space Z = { ( y 1 , . . . , y L ) ∈ R L : P L ℓ =1 y ℓ = 0 } of the simp le x , an d then scale τ V ′ G, f ( τ is the stepsize) to ensure that the ra nge of the new f 1 lies in ∆ L − 1 . W e then iterate. Mo re simply , we can select L − 1 of the L com- ponen ts of f ( x ) , call the new f unction f ′ : X → R L − 1 , and compu te the ( L − 1 ) -dimensional gradien t vector V G, f ′ following ( 12 ) and ( 14 ). The omitted compon ent of the de- sired L -grad ient vector is determine d by − P L − 1 ℓ =1 V ℓ G, f ′ , by the definition of Z . Our implementa ti on reported fol- lows th is second approach, where we choose th e ( L − 1) compon ents of f by omitting th e c omponent co rresponding to the class with least number of training samples. 3.3. Algorithm summary Algorithm 1 gives a summ ary o f th e c las sifier learning pro- cedure. Inp ut to the algorithm is the training set T m , RBF kernel width c , trad e-of f par ameter λ , and step-size param- eter τ . F o r initialization, ou r algorithm first initializes the function values of h and f fo r ev ery tr aining po int, and then constructs matrix G and solves for A by ( 4 ). I n the subsequen t steps, at each iteration, our algo rithm first e val- uates the gradient vector field ∇ P h at every trainin g point, then upd ates coefficient matrix A by ( 5 ). For the overall penalty fun ction P = P T m + λ P G , we co mpute th e total gradient vector field ∇P h ev aluated at x i , ∇P h ( x i ) = ∇P T m , f ( x i ) + λ ∇P G, f ( x i ) (15) = h ∂ f ∂ h i T x i 2( f ( x i ) − z i ) − λ T r II L x i , quadratic f ( x i ) − z i − λ h ∂ f ∂ h i T x i T r II L x i , cross-entro p y . Our algorith m iterates un til it conv erges or reach es th e maximum iteration number . The same algorith m applies to both the quadra tic loss a nd the cross-entropy loss. T o evaluate th e total g radient vec- tors ∇P h ( x i ) in each iteration , for the quadra ti c loss, we use ( 8 ) and ( 13 ) to compu te the to tal gradient vector ( 22 ); for the cross-entro p y loss, w e u se ( 10 ) and ( 1 3 ) instead. The remain ing st eps of the proced ure are exactly th e same for both loss function s. The final predictor learned by our algorithm is given by F ( x ) = ar gmax { f ℓ ( x ) , ℓ ∈ { 1 , 2 , · · · , L } } . (16) 4. Experiments T o evaluate the effecti veness of the p roposed r e gulariza- tion appr oach, we com pare our RBF-based implementation with tw o groups of related classification methods. The first Algorithm 1 Geometric regularized classification Input: training data T m = { ( x i , y i ) } m i =1 , RBF kernel width c , trade-off par ameter λ , step-size τ Initialize: h ( x i ) = (1 , . . . , 1) , f ( x i ) = ( 1 L , . . . , 1 L ) , ∀ i ∈ { 1 , · · · , m } , construct matr ix G and solve A b y ( 4 ) for t = 1 to T do – Evaluate the to tal gradient vector ∇P h ( x i ) at ev- ery training point according to ( 22 ). – Update the A by ( 5 ). end for Output: class pr obability estimator f gi ven by ( 3 ) . group o f methods are stand ard RBF-based m ethods that u se different regu larizers than ou rs. Th e second group of meth- ods are previous geometric re gularizatio n metho ds. In particular, th e first grou p include s the Radial Basis Func- tion Network (RBN), SVM with RBF kernel (SVM) and the Import V ector Mach ine (IVM) ( Zhu & Hastie , 2005 ) (a gr eedy search variant of the standard RBF kernel lo gis- tic regression classifier). No te th at b oth SVM and IVM use RKHS regularizers a nd th e IVM als o uses the similar cross-entro p y loss as Ou rs-CE. The second grou p inc ludes the Level Lear ning Set classi- fier ( Cai & Sowmya , 2007 ) (LLS) , the Geom etric Level Set classifier ( V arshney & Wills ky , 2010 ) (GLS) and the Eu- ler’ s Elastica classifier ( Lin et a l. , 2 012 ; 2 015 ) (EE ). No te that both GLS and EE use R BF represen tations an d EE also uses the same quadratic distance loss as Ours-Q. W e test both the quadr atic lo ss version (Ours-Q) an d the cross-entro p y loss version (Ours-CE) of ou r implementa- tion. 4.1. UCI datasets W e tested our classification method on four binar y c las si- fication datasets and fou r multiclass classification datasets. Giv en that V arshney & Willsk y ( 201 0 ) has covered se veral methods on our co mparing list and their im plementation is pu blicly a vailable, we choose to use the same datasets as ( V arshney & W illsky , 2010 ) and carefully follow the ex- act experimental setup. T enfold cross-validation error is reported . For each of the ten folds, the kernel-width con - stant c and tradeoff parameter λ are found using fivefold cross-validation on th e training folds. All dimensions of input sample points ar e nor malized to a fixed ran ge [0 , 1] throug hout the experiments. W e select c from the set of val- ues { 1 / 2 5 , 1 / 2 4 , 1 / 2 3 , 1 / 2 2 , 1 / 2 , 1 , 2 , 4 , 8 } and λ fro m the set o f values { 1 / 1 . 5 4 , 1 / 1 . 5 3 , 1 / 1 . 5 2 , 1 / 1 . 5 , 1 , 1 . 5 } that minimizes the fivefold cross-validation error . Th e step-size τ = 0 . 1 and iteration number T = 5 are fixed over all Class Probability Estimation via Differ ential Geometric Regularization T able 1. T enfold cross-valida tion error rate (percent) on four binary and four multiclass classification datasets from the UCI machine learning repository . ( L, N ) denote the number of classes and input feature dimensions respecti vely . W e compare bo th the quadratic loss version (Ours-Q) and the cross-entropy loss version (Ours-CE) of our method with 6 RBF-based classificati o n methods and (or) geometric regularization methods: SVM wi th RBF kernel (SVM), R a dial basis function network (RBN), Le vel learning set classifier ( Cai & Sowmya , 2007 ) (L LS), Geometric le vel set classifier ( V arshney & Willsky , 2010 ) (GLS ), Import V ector Machine ( Zhu & Hastie , 2005 ) (IVM), Euler’ s Elastica classifier ( Lin et al. , 2012 ; 2015 ) (EE). The mean error rate averaged over all eight datasets is shown in the bottom ro w . T op performance for each dataset is sho wn in bold. D A TA S E T ( L, N ) R B N S V M I V M L L S G L S E E O U R S - Q O U R S - C E P I M A ( 2 , 8 ) 2 4 . 6 0 2 4 . 1 2 2 4 . 1 1 2 9 . 9 4 2 5 . 9 4 23.33 2 3 . 9 8 2 4 . 5 1 W D B C ( 2 , 3 0 ) 5 . 7 9 2 . 8 1 3 . 1 6 6. 5 0 4 . 4 0 2.63 2.63 2.63 L I V E R ( 2 , 6 ) 3 5 . 6 5 2 8 . 6 6 2 9 . 2 5 3 7 . 3 9 3 7 . 6 1 2 6 . 3 3 25.74 2 6 . 3 1 I O N O S . ( 2 , 3 4 ) 7 . 3 8 3.99 2 1 . 7 3 1 3 . 1 1 1 3 . 6 7 6 . 5 5 6 . 8 3 6 . 2 6 W I N E ( 3 , 1 3 ) 1 . 7 0 1 . 1 1 1 . 6 7 5. 0 3 3 . 9 2 0 . 5 6 0.00 0.00 I R I S ( 3 , 4 ) 4 . 6 7 2.67 4 . 0 0 3 . 3 3 6 . 0 0 4 . 0 0 3 . 3 3 3 . 3 3 G L A S S ( 6 , 9 ) 3 4 . 5 0 3 1 . 7 7 29.44 3 8 . 7 7 3 6 . 9 5 3 2 . 2 8 29 . 8 7 29.44 S E G M . ( 7 , 1 9 ) 1 3 . 0 7 3. 8 1 3 . 6 4 14 . 4 0 4 . 0 3 8 . 8 0 2.47 2 . 7 3 A L L - A V G 1 5 . 9 2 1 2 . 3 7 1 4 . 6 3 1 8 . 5 6 1 6 . 5 7 1 3 . 0 6 11.86 1 1 . 9 0 datasets. W e used the same settings for both loss fu nctions. T able 1 r eports the results o f this experiment. The top per- former for each dataset is marked in bold, an d the aver - aged p erformance of each m ethod over all testing datasets is summ arized in the botto m r o w . The nu mbers fo r RBN, LLS an d GLS are c opied fro m T able 1 of ( V arshney & Will- sky , 2010 ). Results fo r SVM and IVM are obtained by run- ning p ublicly av ailab le im plementations for SVM ( Chang & Lin , 2011 ) and I VM ( Ro s cher e t al. , 201 2 ). Results fo r EE are obtained b y runn ing an implem entation provided by the auth ors of ( Lin et al. , 2012 ) . When ru nning these im- plementation s, we followed the same exp erimental setup as described above and exh austi vely searched f or the op timal range for the kernel band width and the trad e-of f par ameter via cross-validation. As shown in the last row of T able 1 , two versions of our approa ch are overall the to p two p erformers a mong all r e- ported meth ods. In par ticular , Ou rs-Q attains top perfo r - mance on fo ur out of the eight be nchmarks, Ours-CE at- tains top perfor mance on thre e o ut of the eight bench marks. The perform ance of th e two versions of our m ethod are very close, which sho ws the r ob u s tness of our geom etric regularization approa ch cross different loss function s for classification. No te that three pairs o f comparison s, IVM vs Ours-CE, GLS vs Ours-Q/Ou rs-CE, and EE v s Our s -Q are of particular interest. W e are g oing to d is cuss th em in detail respectively . The IVM m ethod o f kernel logistic regression uses th e same RBF-based implementation and very similar cross- entropy loss as ou r cr oss-entropy versio n Ou rs-CE, and both m ethods handle the mu lt iclass case inherently . The main difference lies in regularizatio n, i.e., the standard RKHS norm regu larizer vs our geometric regular izer . Ours-CE outperforms IVM on six o f the e ight b enchmars in T able 1 , and achieves e qual p erformance on one of the remaining two, an d is only slightly behind o n “PIMA”. T he overall sup erior per formance of Ours-CE demonstrates the advantage of the p roposed geometric r e gularization over the standard RKHS norm regularization. The GLS method uses the same RBF-based implem enta- tion as our s and also exploits v o lume geometry for regu- larization. As d escribed in § 1 , however , the re ar e key dif- ferences between the two regulariz ation techniq ues. GLS measures the volume of the d ecision boundary supported in X , while our appro ach measures the volume of a sub- manifold sup ported in X × ∆ L − 1 that c orresponds to the class probab ilit y estimator . Our regularization technique handles the binar y and multiclass cases in a un ifi ed fram e- work, while the d ecision boun dary based techniq ues, such as GLS (an d EE), were in herently design ed fo r th e bin ary case and rely on a binar y coding strate gy to train log 2 L decision boun daries to gener alize to the multiclass case. In our experimen ts , both Ours-Q an d Ours-CE ou tperform GLS on all the ben chmarks we have tested. This d emon- strates the effectiveness of exploiting the geome try o f the class probab ility in addre s sing the “small local oscillation” for classification. The EE method of Euler’ s Elastica model uses the same RBF-based implem entation and the same q uadratic lo ss as ou r quad ratic loss version Ou rs-Q. Th e main d if fer- ence, again , lies in regularizatio n, i.e., a combina tion of 1 - Sobolev norm an d curvature penalty on the d ecisi on b ound- ary vs o ur v olu me penalty on the submanifo ld correspon d- ing to the class prob ability estimato r . Since E E adopts a combinatio n of soph is ticated g eometric measur es on the decision b oundary , wh ich fit specifically th e binary case, it achie ves top performan ce on binary datasets. Ho wever , as e x plained in § 1 , the geome try of the class p robability fo r Class Probability Estimation via Differ ential Geometric Regularization T able 2. Notations h f ( x ) = arg max ℓ ∈Y f ℓ ( x ) , : plug-in classifier of f : X → ∆ L − 1 ∆ L − 1 : t h e standard ( L − 1) -simplex in R L ; η ( x ) = ( η 1 ( x ) , . . . , η L ( x )) : class probability: η ℓ ( x ) = P ( y = ℓ | x ) M : { f : X → ∆ L − 1 ) : f ∈ C ∞ } M ′ : { f : X → R L : f ∈ C ∞ } T f M : the tangent space to M at some f ∈ M ; T f M ≃ M ′ The graph of f ∈ M (or M ′ ) : gr( f ) = { ( x , f ( x ) ) : x ∈ X } g ij = ∂ f ∂ x i ∂ f ∂ x j : T h e Riemannian metric on gr( f ) induced from the standard dot product on R N + L ( g ij ) = g − 1 , with g = ( g ij ) i,j =1 ,...,N dvol = p det( g ) dx 1 . . . dx N , the volume element on gr( f ) { e i } N i =1 : a smoothly varying orthono rmal basis of the tangent spaces T ( x , f ( x ) gr( f ) of the graph of f T r I I : the trace of the second fundamental form of gr( f ) , T r I I ∈ R N + L T r I I = P N i =1 D e i e i ⊥ : with ⊥ the orthogonal projection to the subspace perpendicular to the tangent space of gr( f ) and D y w the directional deriv ative of w in y directi o n T r I I L : the projection of T r I I onto the last L coordinates of R N + L ∇P : the gradient vector field of a function P : M → R on a possibly infinite dimensional manifold M general class ification, which is captur ed b y our approach, cannot be captured by decision bou ndary based t echniqu es. That is the reason wh y Ours-Q, a gen eral scheme fo r both the b inary and mu lti class c ase, outpe rforms EE on a ll four multiclass datasets, while it still ach ie ves to p perform ance on binary datasets. This again demonstra tes our geo met- ric p erspecti ve and regulariza ti on a pproach that exploits the geometry of the class prob ability . 4.2. Real-world datasets T o test the scalability of our meth od to high dimen- sional an d large-scale prob lems, w e also cond uct exper - iments on two real-world d atasets, i.e., the Flick r Ma- terial D atabase ( FMD ) for image classification and the MNIST ( MNIST ) Database of handwritten digits. FMD (409 6 dimensional). The FMD dataset co ntains 10 categories of images with 100 ima ges per category . W e ex- tract im age feature s u s ing the SIFT descriptor augmented by its feature coor dinates, im plemented by the VLFeat li- brary ( VLFeat ). W ith this descriptor, Bag-of- visual-words uses 4096 vector-quantized visual words, histogram square rooting , followed by L2 no rmalization. W e com pare o ur method with an SVM classifier with RBF kern els, using exactly the same 40 96 dimension al fe ature. Our method achieves a co rrect classification rate of 48 . 8% while the SVM b aseline ach ie ves 46 . 4% . Note th at while rece nt works (Qi et al., 2015; Cimpoi et al., 2015) report better perfor mance on this dataset, the e f for t f ocuses on better feature design, not on the classi fier itself. Th e feature s u s ed in tho s e work s, such as loc al texture d escriptors and CNN features, are more sophisticated. MNIST (60,0 00 sa mp les). The MNIST dataset contains 10 classes ( 0 ∼ 9 ) o f handwritten digits with 60 , 00 0 sam - ples for training and 10 , 000 sam ples for testing. Each sam- ple is a 28 × 28 grey scale image. W e use 1 000 RBFs to represent our f unction f , with RBF centers obtaine d by ap- plying K-means clustering on the trainin g set. Note that our learning and regularization app roach still han dles all the 60 , 000 training samples as described by Algo rithm 1 . Our meth od achieves an erro r r ate of 2 . 7 4% . While there are many resu lts repor ted on this d ataset, we feel that th e most co mparable metho d with our representatio n is the Ra- dial Basis Fu nction Network with 1000 RBF un its ( LeCun et al. , 199 8 ), which achieves an er ror r ate o f 3 . 6% . This experiment shows the potential that ou r geo metric regular - ization appro ach scales to larger datasets. 5. Discussion Our geom etric regularization approach c an also be v ie wed as a comb ination of co mmon physical mo dels. As illus- trated in Figure 1 and 2 , ea ch train ing pair ( x , y ) corr e- sponds to a point at one of the vertices of the simplex asso- ciated with x . As a result, all train ing d ata lie on the bo und- ary of the space X × ∆ L − 1 , while the f unctional graph of a class probab ility estimator f is a h ypersurface (subman- ifold) in X × ∆ L − 1 . An initial estimator without trainin g informa tion correspo nds to the flat h yperplane in th e n eu- tral position. In respo nse to the p resence of the training data, this neu tral hy persurface deform s towards the train- ing data, as if attracted by a gravitational force due to point masses center ed at the train ing poin ts. Simultane ously , the regularization term for ces the h ypersurface to rem ain as flat (or as volume minimizing) as possible, as if in the presence of surface tension. Thus this term follows the physics of Class Probability Estimation via Differ ential Geometric Regularization soap fi lms and min imal surfaces ( Dierkes et al. , 1992 ). Ge- ometric flows like the one proposed here are often mod eled on ph ysical processes. In ou r case, the flow can b e vie wed as a mixed gravity and surface ten s ion physical exper iment. 6. Conclusion W e have intro duced a new ge ometric perspec ti ve on regu- larization for classification that explo its the geo metry of a robust class pro bability e s timator . Under this per specti ve, we p ropose a general regularization appr oach that applies to both binary and multiclass cases in a un ified way . In ex- periments w it h an examp le formu lation based on RBFs, ou r implementatio n achie ves fav or able results com paring with widely used RBF-based c las sification method s an d pr e vi- ous geometr ic r e gularization methods. While experimen- tal results dem onstrate the ef fec ti veness of our geometr ic regularization techniq ue, it is also imp ortant to study con- vergence pro perties of th is app roach fr om a learn ing th e- ory pe rspecti ve. As an initial attempt, we have established Bayes con sis tency for an easy case of em pirical penalty function an d d etails ar e provid ed in Appendix B . W e will continue this study in the future . Refer ences Audibert, Jean-Yves and Tsybakov , Ale xandre . Fast learn- ing rates fo r p lug-in c las sifiers. Annals of Statistics , 35 (2):60 8–633, 2007. Bartlett, Peter L, Jordan , Michael I, and McAuliffe, Jon D. Con vexity , classification, and risk boun ds. Journal of the American Statistical Association , 101(47 3):138– 156, 2006. Belkin, Mik hail an d Niyogi, Partha. Laplacian eigen- maps for dimen sionality red uction an d data representa- tion. Neural Computatio n , 15(6):1 373–1396 , 2003 . Belkin, Mikh ail, Niyo gi, Partha, an d Sind hwani, V ikas. Manifold regu larization: A geom etric framework for learning f rom lab eled and unlab eled examples. Journal of Machine Learning Resear ch , 7:2399– 2434, 200 6. Cai, Xiongcai a nd Sowmya, A rcot. Le vel learn ing set: A novel c las sifier based on acti ve conto ur mo dels. In Pr oc. Eur op ean Conf. on Machine Learning (ECML) , p p. 79– 90. 2007 . Chang, Chih-Chu ng a nd Lin, Chih- Jen. LIBSVM: A libra ry fo r suppo rt vector mach ines. AC M T ransactions on Intelligent Systems and T echnol- ogy , 2:27:1– 27:27, 2 011. Software av ailab le at http://www .csie.ntu.e du.tw/ ∼ cjlin/libsvm . Chen, Y un-Gang , Giga, Y oshikazu, and Goto, Shun ’ichi. Uniquene s s and existence of v is cosity solutions of g en- eralized mean curvature flow equation s . In Fundamental contributions to th e continuu m theory of evolving pha s e interfaces in s olids , pp. 375 –412. Sprin ger , Berlin, 1999. Devroye, Luc, Gy ¨ orfi, L ´ aszl ´ o, and Lug osi, G ´ abor . A p r ob - abilistic theory of pattern r ecognitio n . Springer , 19 96. Dierkes, Ulrich, Hildeb randt, Stefan, K ¨ uster , Albrecht, and W ohlrab, Ortwin. Min imal surface s . Sp ringer , 1992. Donoho , David and G rimes, Carrie . Hessian eigen- maps: Locally linear embed ding techniques for h igh- dimensiona l da ta. P r ocee dings of the National Academy of Sciences , 100( 10):5591–5 5 96, 200 3. FMD. http://peop le.csail.mit.edu/celiu/CVPR2010/FMD/ . Accessed: 201 5-06-01. Goodfellow , Ian J, Shlens, Jonathon, and Szegedy , Chris- tian. Explainin g and har nessing adversarial examples. arXiv pr ep ri nt arXiv:141 2.6572 , 2014. Guckenheimer, John and W orfo lk, Patrick. Dynam ical sys- tems: some computation al problems. In Bifur cations and periodic orbits of vector fi elds (Montreal, PQ, 19 92) , volume 4 08 of NA TO Adv . S ci. In st. Ser . C Math. Phys. Sci. , pp. 241–277. Klu wer Acad. Publ. , Do rdrecht, 199 3. LeCun, Y ann, Bottou , L ´ eon, Beng io, Y oshua, and Haffner , Patrick. Grad ient-based learnin g app lied to documen t recogn it ion. P r ocee dings of the IEEE , 86(1 1):2278– 2324, 1998 . Lin, T on g and Zha, Ho ngbin. Rieman nian manifold learn- ing. I EEE T rans. on P a ttern Analysis and Machine In - telligence (P AMI) , 30(5):796 –809, 200 8. Lin, T ong, Xue, Hanlin, W an g, Lin g, and Zha , Hongbin . T otal variation and Eu ler’ s elastica f or supervised learn- ing. P r oc. Interna tional Con f . o n Ma c h ine Learning (ICML) , 2012. Lin, T o ng, Xue, Hanlin, W ang, Ling, Huang , Bo, and Zha, Hongbin . Supervised learnin g via euler’ s elastica m od- els. Journal of Machine Learning Resear ch , 16 :3637– 3686, 2015 . Mantegazza, Carlo. Lectur e Notes o n Mea n Curva- tur e F low , volume 290 of Pr ogr ess in Mathematics . Birkh ¨ auser/Spring er Basel AG, Basel, 20 11. MNIST . http://http://yan n.lecun.com/exdb/mnist/ . Ac- cessed: 2 015-06-01 . Mumfor d, Da vid and Shah, Jayan t. Optimal app roxima- tions by piece wise smooth fun ctions an d associated v ari- ational pr oblems. Co mmunications on pure and applied mathematics , 42(5):5 77–685, 1 989. Class Probability Estimation via Differ ential Geometric Regularization Osher , Stanley and Sethian, James. Fronts propag ating with curvature-dep endent speed : alg orithms b ased o n hamilton- jacobi f ormulations. Journal of Computational Physics , 79(1):1 2–49, 1 988. Roscher, Ribana, F ¨ orstner , W olfgan g, and W aske, Bj ¨ orn. I 2 vm: inc remental imp ort vector machin es. Image and V ision Compu ting , 30(4 ):263–278, 2012 . Roweis, Sam and Saul, La wrence. Non linear dimension al- ity red uction by lo cally linear em bedding. S cience , 290 (5500 ):2323–232 6 , 200 0. Sch ¨ olkopf, Bernhar d and Smola, Alexander . Learning w ith kernels: Su pport vector machines, r e gu larization, op ti- mization, and beyond . MIT press, 2002. Sethian, James Albert. Level set metho ds and fast mar ching methods: evolving inte r faces in co mputational geome tr y , fluid mechan ics , co mputer vision, and materials science , volume 3. Cambridge university press, 1999 . Steinwart, Ingo . Consistency of sup port vector machines and oth er re gularized kernel classifiers. I EEE T rans. In - formation Theory , 51(1) :128–142, 2005. Stone, Charles. Consistent nonp arametric regression. An- nals of Statistics , pp. 595–62 0, 1977 . T en enbaum, Joshu a, De Silv a, V in, and Lan gford, J ohn. A global geometric f rame work f or no nlinear dimen sional- ity reduction. S cience , 29 0(5500):23 19–2323, 2 000. V apnik , Vladimir Nau movich. Statistical lea r ning theory , volume 1. W iley Ne w Y or k, 199 8. V arshney , Kush and Wi llsky , Alan. Classification using geometric level sets. Journal o f Machine Learnin g Re- sear ch , 11:4 91–516, 2010 . VLFeat. http://www .vlfeat.o r g/application s/ap ps.html . Ac- cessed: 2 015-06-01 . Zhang, Z henyue and Zh a, Hong yuan. Pr incipal man ifolds and no nlinear d imensionality redu ction via tangent spac e alignment. SIAM Journal on S cientific Computing , 26 (1):31 3–338, 2005. Zhou, Dengyong and S ch ¨ olkopf, Bernhard. Re gularizatio n on discrete spaces. In P attern Recognition , pp. 361–368 . Springer, 2005 . Zhu, Ji and Hastie, Tre vor . Kernel logistic re gression and the import vecto r machine. Journal of Compu tational and Graphical Statistics , 2005. Class Probability Estimation via Differ ential Geometric Regularization A. Proof of Th eor em 1 Pr oof. For f : R N → ∆ L − 1 ⊂ R L , { r j = r j ( x ) = (0 , . . . , j 1 , . . . , 0 , f 1 j , . . . , f L j ) : j = 1 , . . . N } is a basis of the tang ent space T x gr( f ) to gr( f ) . Here f i j = ∂ x j f i . Let { e i } be an orthonormal fram e of T x gr( f ) . W e have e i = B j i r j for some in vertible ma trix B j i . Define the metric matrix g fo r t he basis { r j } by g = ( g kj ) with g kj = r k · r j = δ kj + f i k f i j . Then δ ij = e i · e j = B k i B t j r k · r t = B k i B t j g kt ⇒ I = ( B B T ) g ⇒ B B T = g − 1 . Thus B B T is computab le in terms of derivati ves of f . Let D u w be the R N + L directional derivati ve o f w in the direction u . Th en T r I I = P ν D e i e i = P ν D B j i r j B k i r k = B j i P ν D r j B k i r k = B j i P ν [( D r j B k i ) r k ] + B j i B k i D r j r k = B j i B k i P ν D r j r k = ( g − 1 ) j k P ν D r j r k , since P ν r k = 0 . W e have r k = (0 , . . . , 1 , . . . , f 1 k ( x 1 , . . . , x N ) , . . . , f L k ( x 1 , . . . , x N )) = ∂ R N + L k + L X i =1 f i k ∂ R N + L N + i , so in particular, ∂ R N + L ℓ r k = 0 if ℓ > N . Thu s D r j r k = (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) . So far , we have T r I I = ( g − 1 ) j k P ν (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) . Since g is given in terms of deri vati ves of f , we need to write P ν = I − P T in terms of d eri vati ves of f . For any u ∈ R N + L , we have P T u = ( P T u · e i ) e i = ( u · B j i r j ) B k i r k = B j i B k i ( u · r j ) r k = ( g − 1 ) j k ( u · r j ) r k . Thus T r I I (17) = ( g − 1 ) j k P ν (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) (18) = ( g − 1 ) j k (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) − P T [( g − 1 ) j k (0 , . . . , N 0 , f 1 kj , . . . , f L kj )] = ( g − 1 ) j k (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) − ( g − 1 ) j k [( g − 1 ) r s (0 , . . . , N 0 , f 1 r s , . . . , f L r s ) · r j ] r k = ( g − 1 ) j k (0 , . . . , N 0 , f 1 kj , . . . , f L kj ) − ( g − 1 ) j k ( g − 1 ) r s f i r s f i j r k = ( g − 1 ) ij 0 , . . . , j − ( g − 1 ) r s f a r s f a i , . . . , 0 , (19) f 1 j i − ( g − 1 ) r s f a r s f a i f 1 j , . . . , f L j i − ( g − 1 ) r s f a r s f a i f L j , after a relab eling of indices. T herefore, th e last L compo - nent of T r I I are given by T r II L = ( g − 1 ) ij f 1 j i − ( g − 1 ) r s f a r s f a i f 1 j , . . . , f L j i − ( g − 1 ) r s f a r s f a i f L j . B. An Easy Example with Bayes Consistency W e n o w give an examp le with a loss function that enab les easy Bayes consistency proof under some mild initializa- tion assumption. Related no tation is summer ized in § C . For ease of reading , we ch ange the notation for empirical penalty P T m in the App endix to P D , i.e., P = P D + λ P G . P D measures the deviation of g r ( f ) from th e map ped train- ing p oints, a natural geometr ic distance pen alty term is an L 2 distance in R L from f ( x ) to the averaged z com ponent of the k -nearest training points: P D ( f ) = R D, T m ,k ( f ) = Z X d 2 f ( x ) , 1 k k X i =1 ˜ z i ! d x , (20) where d is the Euclidean distance in R L , ˜ z i is the vector of the last L co mponents o f ( ˜ x i , ˜ z i ) = ( ˜ x 1 i , . . . , ˜ x N i , ˜ z 1 i , . . . , ˜ z L i ) , with ˜ x i the i th nearest neigh bor of x in T m , and d x is the Leb esgue measure. The g radient vector field is ∇ ( R D, T m ,k ) f ( x , f ( x )) = 2 k k X i =1 ( f ( x ) − ˜ z i ) . Class Probability Estimation via Differ ential Geometric Regularization Howe ver, ∇ ( R D, T m ,k ) f is discontinu ous on the set D of points x such that x has eq uidistant training points am ong its k nearest neig hbors. D is th e unio n of ( N − 1) - dimensiona l hyper planes in X , so D has measur e zer o. Such points will necessarily exist unless th e last L com - ponen ts of th e mapped trainin g po ints are all 1 or all 0 . T o rectify this, we can smo oth out ∇ ( R D, T m ,k ) f to a vector field V D, f ,φ = 2 φ ( x ) k k X i =1 ( f ( x ) − ˜ z i ) . (21) Here φ ( x ) is a smooth d amping fun ction close to the sin- gular function δ D , which has δ D ( x ) = 0 for x ∈ D and δ D ( x ) = 1 for x 6∈ D . Outside any ope n n eighborhood of D , ∇ R D, T m ,k = V D, f ,φ for φ close enou gh to δ D . Recall the g eometric pen alty from the submission, i.e., P G ( f ) = R gr( f ) dvol , with the geom etric gr adient vector field being V G, f = − T r I I L . Then the gradien t v ector field V tot,λ,m, f ,φ of this example penalty P is, V tot,λ,m, f ,φ = ∇P f = V D, f ,φ + λV G, f = 2 φ ( x ) k k X i =1 ( f ( x ) − ˜ z i ) − λ T r I I L . (22) B.1. Consistency analysis For a trainin g set T m , we let f T m = ( f 1 T m , . . . , f L T m ) be the class proba bility estimator g i ven by ou r approach. W e d enote the gene ralization risk of the co rresponding plug-in c las sifier h f T m by R P ( f T m ) = E P [ 1 h f T m ( x ) 6 = y ] . The Bayes risk is d efined by R ∗ P = inf h : X →Y R P ( h ) = E P [ 1 h η ( x ) 6 = y ] . Our algorithm is Bayes consistent if lim m →∞ R P ( f T m ) = R ∗ P holds in probab ili ty for all distribu- tions P on X × Y . Usually , grad ient flow method s are ap - plied to a convex f unctional, so that a flow line approach es the u nique global minimum. I f the domain of th e function al is an infinite dimensio nal manif old of (e .g. smoo th) fun c- tions, we al ways assume th at flow lines e xist and that the actual minimum exists in this manifold. Because o ur func ti onals are not conv ex, an d because we are strictly sp eaking not working with gradient vector fields, we ca n only hope to prove Bayes consistency for the set of initial estimator s in the stable ma nifold of a stable fixed point (or sink ) of the vector field ( Guckenheimer & W or- folk , 199 3 ). Recall that a stable fixed point f 0 has a maxi- mal op en ne ighborhood , the s table manifold S f 0 , on which flow lines tend towards f 0 . For the man ifold M , the sta- ble man ifold f or a stable critical point of the vector field V tot,λ,m, f ,φ is infinite dimensional. The pr oof of Bayes con sis tency for multiclass (including binary) classification follows these s teps: Step 1: lim λ → 0 R ∗ D,P ,λ = 0 . Step 2: lim n →∞ R D,P ( f n ) = 0 ⇒ lim n →∞ R P ( f n ) = R ∗ P . Step 3: For all f ∈ M = Maps( X , ∆ L − 1 ) , | R D, T m ( f ) − R D,P ( f ) | m →∞ − − − − → 0 in pr obability . Proofs o f these steps are provided in following sub- sections. F or the notatio n see § C . R ∗ D,P ,λ is th e minimum of the regularize d D risk R D,P ,λ ( f ) for f : R D,P ,λ ( f ) = R D,P ( f ) + λ P G ( f ) , with R D,P ( f ) = R X d 2 ( f ( x ) , η ( x )) d x the D -risk. Also, R D, T m ,λ ( f ) = R D, T m ( f ) + λ P G ( f ) , with R D, T m ( f ) = R X d 2 f ( x ) , 1 k P k i =1 ˜ z i d x the empirical D -risk. Theorem 2 (Bayes Consistency) . Let m be the size of the training data set. Let f 1 ,λ,m ∈ S f D, T m ,λ , the sta- ble manifold for the global minimum f D, T m ,λ of R D, T m ,λ , and let f n,λ,m,φ be a sequ ence o f fu nctions on the flo w line o f V tot,λ,m, f ,φ starting with f 1 ,λ,m with the flow time t n → ∞ a s n → ∞ . Then R P ( f n,λ,m,φ ) m,n →∞ − − − − − − − → λ → 0 ,φ → δ D R ∗ P in pr ob ability for all distr ibutions P on X × Y , if k /m → 0 as m → ∞ . Pr oof. I n the notation of § C , if f D, T m ,λ is a global mini- mum fo r R D, T m ,λ , then o utside of D , f D, T m ,λ is th e limit of critical p oints for the negative flow of V tot,λ,m, f , φ as φ → δ D . T o see this, fix an ǫ i neighbo rhood D ǫ i of D . For a sequence φ j → δ D , V tot,λ,m,f ,φ j is independen t o f j ≥ j ( ǫ i ) on X \ D ǫ i , so we fin d a function f i , a criti- cal point of V tot,λ,m, f ,φ j ( ǫ i ) , equal to f D, T m ,λ on X \ D ǫ i . Since a n y x 6∈ D lies outside some D ǫ i , the sequen ce f i conv erges at x if we let ǫ i → 0 . Thus we can ign ore the choice of φ in ou r proo f, and drop φ fro m the no tation. For our algorith m, f or fixed λ, m , we have as ab ov e lim n →∞ f n,λ,m = f D, T m ,λ , so lim n →∞ R D, T m ,λ ( f n,λ,m ) = R D, T m ,λ ( f D, T m ,λ ) , for f 1 ∈ S f D, T m ,λ . By Step 2, it suffices to show R D,P ( f D, T m ,λ ) m →∞ − − − − → λ → 0 0 . In probability , we h a ve ∀ δ > Class Probability Estimation via Differ ential Geometric Regularization 0 , ∃ m > 0 suc h tha t R D,P ( f D, T m ,λ ) ≤ R D,P ( f D, T m ,λ ) + λ P G ( f D, T m ,λ ) ≤ R D, T m ( f D, T m ,λ ) + λ P G ( f D, T m ,λ ) + δ 3 (Step 3) = R D, T m ,λ ( f D, T m ,λ ) + δ 3 ≤ R D, T m ,λ ( f D,P ,λ ) + δ 3 (minimality of f D, T m ,λ ) = R D, T m ( f D,P ,λ ) + λ P G ( f D,P ,λ ) + δ 3 ≤ R D,P ( f D,P ,λ ) + λ P G ( f D,P ,λ ) + 2 δ 3 (Step 3) = R D,P ,λ ( f D,P ,λ ) + 2 δ 3 = R ∗ D,P ,λ + 2 δ 3 ≤ δ, ( Step 1) for λ close to zero. Since R D,P ( f D, T m ,λ ) ≥ 0 , we are done. B.2. Step 1 Lemma 3. (Step 1) lim λ → 0 R ∗ D,P ,λ = 0 . Pr oof. Af ter the smoothing pr ocedure in § 3.1 for the dis- tance p enalty term, the fun ction R D,P ,λ : M → R is co n- tinuous in the Fr ´ echet topology o n M . W e check that the function s R D,P ,λ : M → R are equico ntinuous in λ : fo r fixed f 0 ∈ M an d ǫ > 0 , there exists δ = δ ( f 0 , ǫ ) such that | λ − λ ′ | < δ ⇒ | R D,P ,λ ( f 0 ) − R D,P ,λ ′ ( f 0 ) | < ǫ. This is immediate: | R D,P ,λ ( f 0 ) − R D,P ,λ ′ ( f 0 ) | = | ( λ − λ ′ ) P G ( f 0 ) | < ǫ , if δ < ǫ/ |P G ( f 0 ) | . It is standard that th e in fi mum inf R λ of an eq uicontinuous family of functions is continuo us in λ , so lim λ → 0 R ∗ D,P ,λ = R ∗ D,P ,λ =0 = R D,P ( η ) = 0 . B.3. Step 2 W e assume that the class probab ilit y fun ction η ( x ) : R N → R L is smooth, and that the marginal distribution µ ( x ) is continuous. W e also let µ denote the correspond- ing measure on X . Notatio n: h f ( x ) = a rgmax { f ℓ ( x ) , ℓ ∈ Y } . Of course, 1 h f ( x ) 6 = y = 1 , h f ( x ) 6 = y , 0 , h f ( x ) = y . Lemma 4. (Step 2 for a subseque nce) lim n →∞ R D,P ( f n ) = 0 ⇒ lim i →∞ R P ( f n i ) = R ∗ P for some subsequen ce { f n i } ∞ i =1 of { f n } . Pr oof. T he left han d side of the Lem ma is Z X d 2 ( f n ( x ) , η ( x )) d x → 0 , which is equiv alent t o Z X d 2 ( f n ( x ) , η ( x )) µ ( x ) d x → 0 , (23) since X is co mpact and µ is contin uous. Therefo re, it suf- fices to show Z X d 2 ( f n ( x ) , η ( x )) µ ( x ) d x → 0 (24) = ⇒ E P [ 1 h f n ( x ) 6 = y ] → E P [ 1 h η ( x ) 6 = y ] . W e recall that L 2 conv ergence imp lies pointwise conver - gence a.e, so ( 23 ) implies th at a subsequence of f n , also denoted f n , has f n → η ( x ) pointwise a.e. on X . (By our assumptio n on µ ( x ) , these statements hold for either µ or Lebesgue measure.) By Ego ro v’ s theo rem, for any ǫ > 0 , th ere exists a set B ǫ ⊂ X w it h µ ( B ǫ ) < ǫ such that f n → η ( x ) unif ormly on X \ B ǫ . Fix δ > 0 and set Z δ = { x ∈ X : # { argma x ℓ ∈Y η ℓ ( x ) } = 1 , | ma x ℓ ∈Y η ℓ ( x ) − submax ℓ ∈Y η ℓ ( x ) | < δ } , where s ubm ax ℓ ∈Y denotes the secon d largest elemen t in { η 1 ( x ) , . . . , η L ( x ) } . For th e moment, assume th at Z 0 = { x ∈ X : # { a rgmax ℓ ∈Y η ℓ ( x ) } > 1 } has µ ( Z 0 ) = 0 . It follows easily 2 that µ ( Z δ ) → 0 as δ → 0 . On X \ ( Z δ ∪ B ǫ ) , we hav e 1 h f n ( x ) 6 = y = 1 h η ( x ) 6 = y for n > N δ . Thus E P [ 1 X \ ( Z δ ∪ B ǫ ) 1 h f n ( x ) 6 = y ] = E P [ 1 X \ ( Z δ ∪ B ǫ ) 1 h η ( x ) 6 = y ] . 2 Let A k be sets wi th A k +1 ⊂ A k and with µ ( ∩ ∞ k =1 A k ) = 0 . If µ ( A k ) 6→ 0 , then there exists a subsequence, also called A k , with µ ( A k ) > K > 0 for some K . W e claim µ ( ∩ A k ) ≥ K , a contradiction. For the claim, let Z = ∩ A k . If µ ( Z ) ≥ µ ( A k ) for all k , we are done. If not, since the A k are nested, we c an replace A k by a set, also called A k , of measure K and such that the new A k are still nested. For the relabeled Z = ∩ A k , Z ⊂ A k for all k , and we may assume µ ( Z ) < K. Thus there exists Z ′ ⊂ A 1 with Z ′ ∩ Z = ∅ and µ ( Z ′ ) > 0 . Since µ ( A i ) = K , we must hav e A i ∩ Z ′ 6 = ∅ for all i . Thus ∩ A i is strictly larger t h an Z , a co ntradiction. In summary , the claim must hold, so we get a contradiction to assuming µ ( A k ) 6→ 0 . Class Probability Estimation via Differ ential Geometric Regularization (Here 1 A is the characteristic function of a set A .) As δ → 0 , E P [ 1 X \ ( Z δ ∪ B ǫ ) 1 h f n ( x ) 6 = y ] → E P [ 1 X \ B ǫ 1 h f n ( x ) 6 = y ] . and similarly for f n replaced by η ( x ) . Dur ing th is process, N δ presumab ly g oes to ∞ , but that precisely means lim n →∞ E P [ 1 X \ B ǫ 1 h f n ( x ) 6 = y ] = E P [ 1 X \ B ǫ 1 h η ( x ) 6 = y ] . Since E P [ 1 X \ B ǫ 1 h f n ( x ) 6 = y ] − E P [ 1 h f n ( x ) 6 = y ] < ǫ, and similarly for η ( x ) , we get lim n →∞ E P [ 1 h f n ( x ) 6 = y ] − E P [ 1 h η ( x ) 6 = y ] ≤ lim n →∞ E P [ 1 h f n ( x ) 6 = y ] − lim n →∞ E P [ 1 X \ B ǫ 1 h f n ( x ) 6 = y ] + lim n →∞ E P [ 1 X \ B ǫ 1 h f n ( x ) 6 = y ] − E P [ 1 X \ B ǫ 1 h η ( x ) 6 = y ] + lim n →∞ E P [ 1 X \ B ǫ 1 h η ( x ) 6 = y ] − E P [ 1 h η ( x ) 6 = y ] ≤ 3 ǫ. (Strictly speaking, lim n →∞ E P [ 1 h f n ( x ) 6 = y ] is first lim sup and then lim inf to show th at th e limit exists.) Since ǫ is arbitrary , the proof is complete if µ ( Z 0 ) = 0 . If µ ( Z 0 ) > 0 , we reru n the proof with X rep laced by Z 0 . As ab o ve, f n | Z 0 conv erges unifor mly to η ( x ) off a set of measure ǫ . Th e argumen t ab o ve, without the set Z δ , gives Z Z 0 1 h f n ( x ) 6 = y µ ( x ) d x → Z Z 0 1 h η ( x ) 6 = y µ ( x ) d x . W e then proc eed with the proo f above on X \ Z 0 . Corollary 5 . (Step 2 in general) F or our algo r ithm, lim n →∞ R D,P ( f n,λ,m ) = 0 ⇒ lim i →∞ R P ( f n,λ,m ) = R ∗ P . Pr oof. Cho ose f 1 ,λ,m as in Theo rem 2 . Since V tot,λ,m, f n,λ,m has po intwise length go ing to zero as n → ∞ , { f n,λ,m ( x ) } is a Cauchy sequen ce for all x . This im - plies that f n,λ,m , and not ju st a subsequenc e, converges pointwise to η . B.4. Step 3 Lemma 6. (Step 3) If k → ∞ and k /m → 0 as m → ∞ , then for f ∈ Maps( X , ∆ L − 1 ) , | R D, T m ( f ) − R D,P ( f ) | m →∞ − − − − → 0 in pr obability , for all distributions P that generate T m . Pr oof. Sin ce R D,P ( f ) is a constan t for fixed f and P , conv ergence in p robability will follow from w eak co n ver- gence, i.e., E T m [ | R D, T m ( f ) − R D,P ( f ) | ] m →∞ − − − − → 0 . W e have | R D, T m ( f ) − R D,P ( f ) | = Z X " d 2 f ( x ) , 1 k k X i =1 ˜ z i ! − d 2 ( f ( x ) , η ( x )) # d x ≤ Z X d 2 f ( x ) , 1 k k X i =1 ˜ z i ! − d 2 ( f ( x ) , η ( x )) d x . Set a = f ( x ) − 1 k P k i =1 ˜ z i , b = f ( x ) − η ( x ) . The n k a k 2 2 − k b k 2 2 = L X ℓ =1 a 2 ℓ − L X ℓ =1 b 2 ℓ = L X ℓ =1 ( a 2 ℓ − b 2 ℓ ) ≤ L X ℓ =1 | a 2 ℓ − b 2 ℓ | ≤ 2 L X ℓ =1 | a ℓ − b ℓ | ma x {| a ℓ | , | b ℓ |} ≤ 2 L X ℓ =1 | a ℓ − b ℓ | , since f ℓ ( x ) , 1 k P k i ˜ z ℓ i , η ℓ ( x ) ∈ [0 , 1] . Ther efore, it suffices to show that L X ℓ =1 E T m " Z X (( f ℓ ( x ) − 1 k k X i ˜ z ℓ i ) − ( f ℓ ( x ) − η ℓ ( x )) d x # m →∞ − − − − → 0 , so the result follows if lim m →∞ E T m , x " η ℓ ( x ) − 1 k k X i ˜ z ℓ i # = 0 f or all ℓ. (2 5) By Jensen’ s inequality ( E [ f ]) 2 ≤ E ( f 2 ) , ( 25 ) follows if lim m →∞ E T m , x η ℓ ( x ) − 1 k k X i ˜ z ℓ i ! 2 = 0 f or all ℓ. (26) Let η ℓ k,m ( x ) = 1 k P k i ˜ z ℓ i . Then η ℓ k,m is actu ally an estimate of the class p robability η ℓ ( x ) by th e k -Neare s t Neighbor rule. Following the proo f of Stone’ s Theorem ( Stone , 1 977 ; Devroye et al. , 1996 ), if k m →∞ − − − − → ∞ an d k /m m →∞ − − − − → 0 , ( 26 ) holds for all distributions P . Class Probability Estimation via Differ ential Geometric Regularization C. Notation h f ( x ) = a rgmax { f ℓ ( x ) , ℓ ∈ Y } : plug-in classifier of estimator f : X → ∆ L − 1 1 h f ( x ) 6 = y = 1 , h f ( x ) 6 = y , 0 , h f ( x ) = y . R P ( f ) = E P [ 1 h f ( x ) 6 = y ] : genera lization risk for the estimator f η ( x ) = ( η 1 ( x ) , . . . , η L ( x )) : class probab ility f unction: η ℓ ( x ) = P ( y = ℓ | x ) R ∗ P = R P ( η ) : Bayes risk ( D - risk for our P D ) R D,P ( f ) = Z X d 2 ( f ( x ) , η ( x )) d x ( empirical D -risk ) R D, T m ( f ) = R D, T m ,k ( f ) = Z X d 2 f ( x ) , 1 k k X i =1 ˜ z i ! d x where ˜ z i is the vector of the last L co mponents of ( ˜ x i , ˜ z i ) , with ˜ x i the i th nearest neighbor of x in T m ( volume penalty term ) P G ( f ) = Z gr( f ) dvol R D,P ,λ ( f ) = R D,P ( f ) + λ P G ( f ) : regularized D -risk for estimato r f R D, T m ,λ ( f ) = R D, T m ( f ) + λ P G ( f ) : regularized empirical D -risk for estimator f f D,P ,λ = function attaining the global minimum for R D,P ,λ R ∗ D,P ,λ = R D,P ,λ ( f D,P ,λ ) : minimum value for R D,P ,λ f D, T m ,λ = f D, T m ,k,λ : function attaining the global minimum for R D, T m ,λ ( f ) Note that we assume f D,P ,λ and f D, T m ,λ exist.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment