Machine Learning Model of the Swift/BAT Trigger Algorithm for Long GRB Population Studies

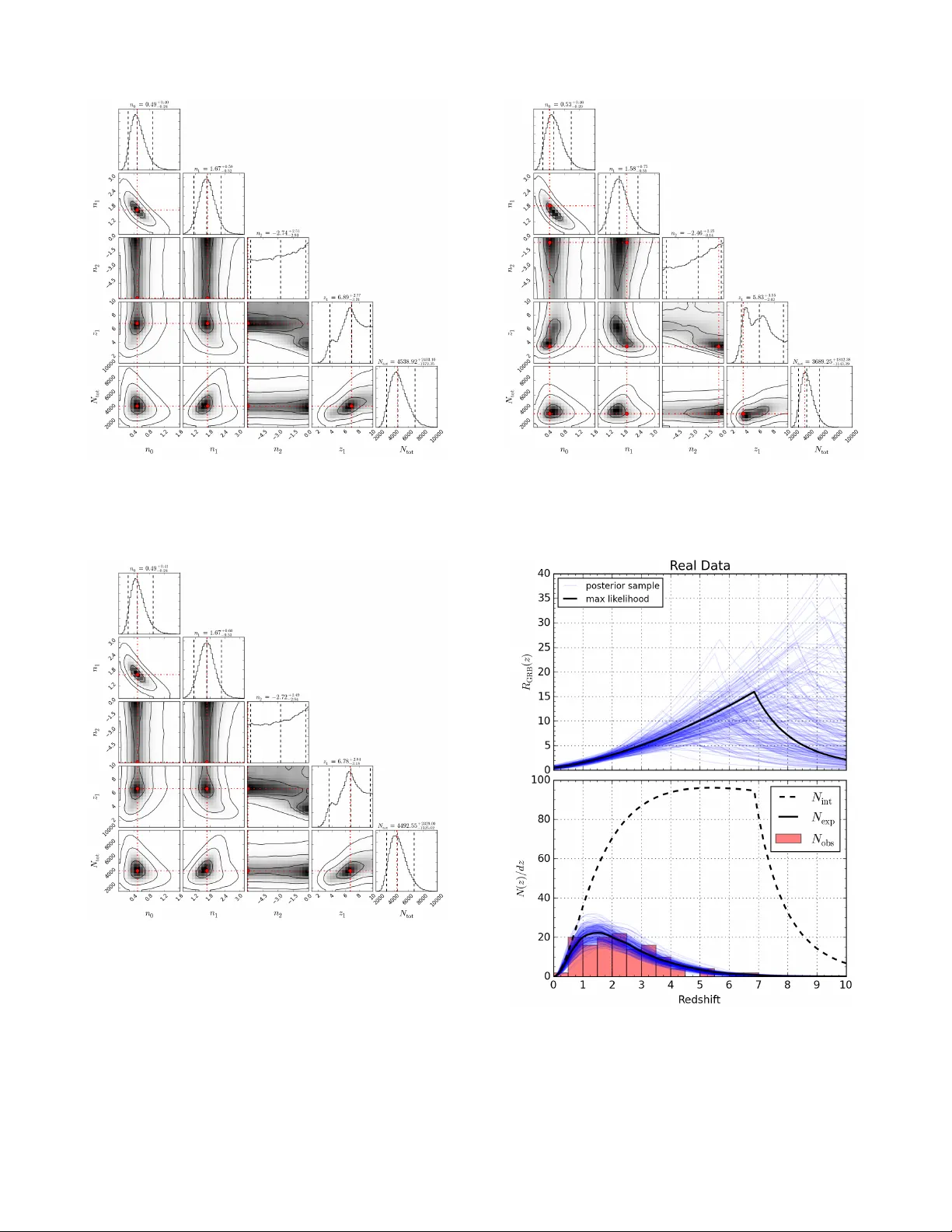

To draw inferences about gamma-ray burst (GRB) source populations from Swift observations, it is essential to understand the detection efficiency of the Swift Burst Alert Telescope (BAT). This study considers the problem of modeling the Swift/BAT…

Authors: Philip B Graff, Amy Y Lien, John G Baker