Information-Theoretic Bounded Rationality

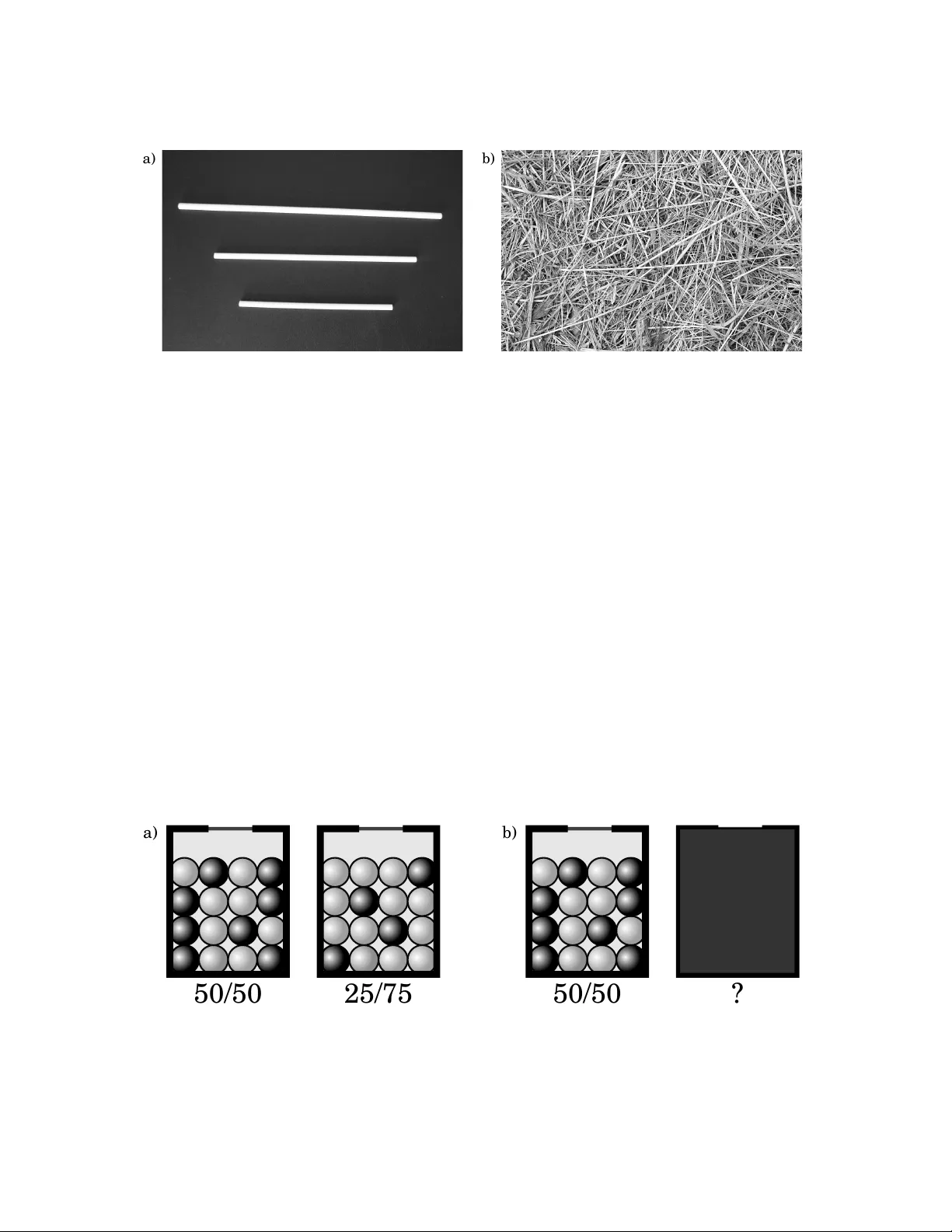

Bounded rationality, that is, decision-making and planning under resource limitations, is widely regarded as an important open problem in artificial intelligence, reinforcement learning, computational neuroscience and economics. This paper offers a c…

Authors: Pedro A. Ortega, Daniel A. Braun, Justin Dyer