Low-latency List Decoding Of Polar Codes With Double Thresholding

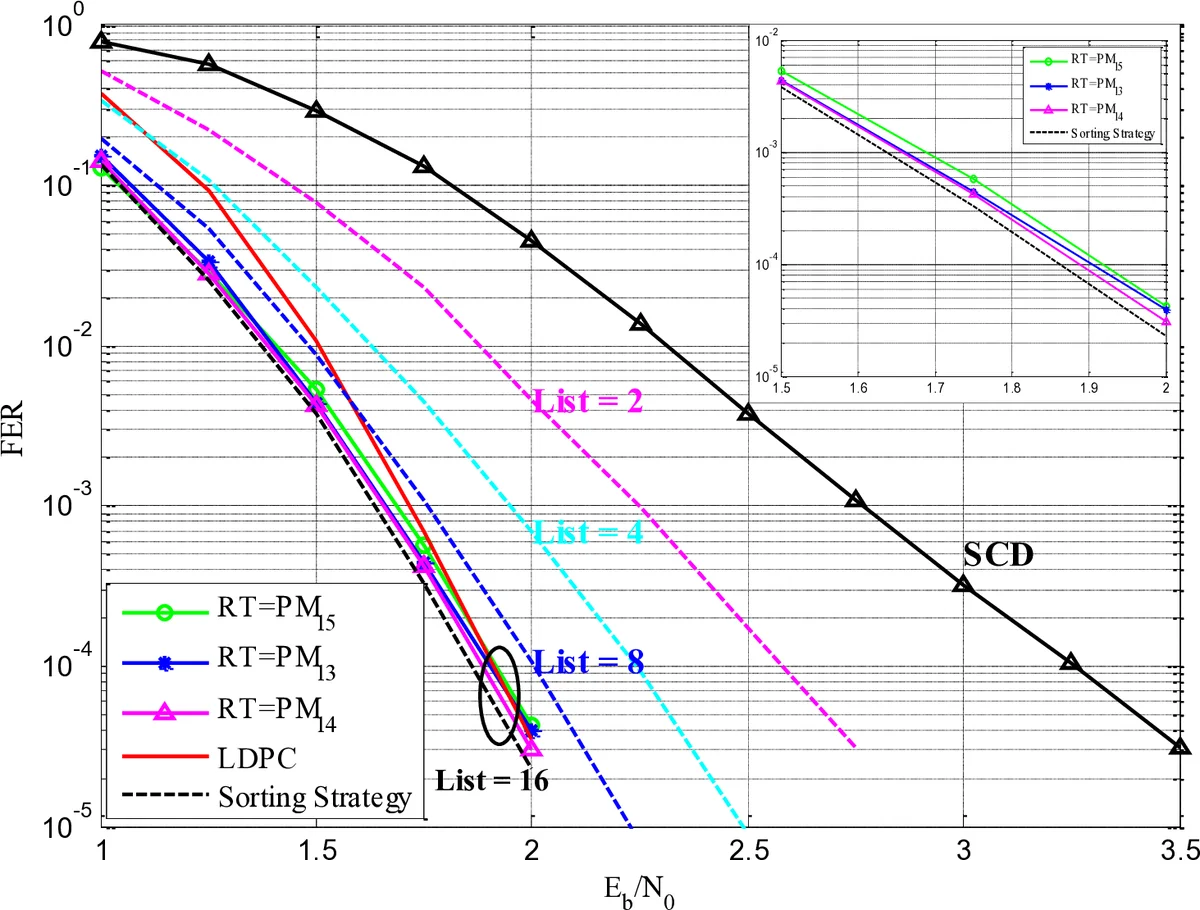

For polar codes with short-to-medium code length, list successive cancellation decoding is used to achieve good error-correcting performance. However, list pruning in current list decoders is based on sorting, and its time complexity is high. This results in a long decoding latency for large list sizes. In this work, aiming at a low-latency list decoding implementation, a double thresholding algorithm is proposed for fast list pruning. As a result, the list pruning delay is greatly reduced with negligible performance degradation. Based on the double thresholding, a low-latency list decoding architecture is proposed and implemented using a UMC 90 nm CMOS technology. Synthesis results show that, even for a large list size of 16, the proposed low-latency architecture achieves a decoding throughput of 220 Mbps at a frequency of 641 MHz.

💡 Research Summary

The paper addresses a critical bottleneck in list successive cancellation decoding (LSCD) of polar codes: the list pruning operation (LPO), which traditionally sorts 2L path metrics to retain the L best candidates. As the list size L grows (e.g., 16 or 32), sorting incurs O(L²) hardware complexity and a latency that scales with L, making it unsuitable for low-latency, high-throughput applications such as 5G.
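For reference, the sort-based pruning that the paper aims to replace can be sketched in a few lines. This is a minimal software illustration, not the authors' hardware; the function name `prune_by_sorting` is hypothetical, and it assumes that a smaller path metric indicates a more likely path.

```python
def prune_by_sorting(path_metrics, list_size):
    """Keep the indices of the `list_size` smallest path metrics.

    Assumption: a smaller metric means a more likely path.
    """
    # Rank all 2L candidate indices by their metric (a full sort).
    order = sorted(range(len(path_metrics)), key=lambda i: path_metrics[i])
    # Retain the L best candidates, reported in index order.
    return sorted(order[:list_size])


# 2L = 8 candidate metrics, L = 4 survivors.
survivors = prune_by_sorting([0.3, 2.1, 0.0, 1.5, 0.9, 4.0, 0.1, 3.2], 4)
```

The full sort over 2L values is the step whose delay grows with the list size.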

To overcome this, the authors propose a Double Thresholding Strategy (DTS). DTS defines two thresholds, an Acceptance Threshold (AT) and a Rejection Threshold (RT), both derived from the current list's metrics. After extending each of the L paths, 2L new path metrics are generated. The algorithm classifies each metric as follows:

- If metric < AT, the corresponding path is automatically kept.

- If metric > RT, the path is discarded.

- If AT ≤ metric ≤ RT, the path is a “borderline” candidate; a random selection fills the list up to size L.

Theoretical analysis (Proposition 1) shows that choosing AT = pm_{L/2} (the median of the current list) and RT = pm_{L‑1} (the maximum) guarantees that at least L/2 of the truly best paths survive, while no more than L paths are ever kept. Consequently, the algorithm approximates the optimal L‑best selection without full sorting.
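The three classification rules and the threshold choice above can be sketched in software as follows. This is an illustrative model only, assuming that smaller metrics are better and that pm is indexed from 0 in ascending order; the names `dts_thresholds` and `dts_prune` are hypothetical, and the random fill stands in for whatever selection the hardware actually performs.

```python
import random


def dts_thresholds(current_metrics):
    """AT = pm_{L/2} and RT = pm_{L-1} of the ascending-sorted current metrics."""
    pm = sorted(current_metrics)
    size = len(pm)
    return pm[size // 2], pm[size - 1]


def dts_prune(extended_metrics, at, rt, list_size, rng=random):
    """Return the indices of the paths kept by double thresholding."""
    # Rule 1: metrics below AT are kept unconditionally.
    accepted = [i for i, m in enumerate(extended_metrics) if m < at]
    # Rule 3: metrics in [AT, RT] are borderline candidates.
    borderline = [i for i, m in enumerate(extended_metrics) if at <= m <= rt]
    # Rule 2 is implicit: metrics above RT never appear in either list.
    accepted = accepted[:list_size]  # defensive cap at L
    free = list_size - len(accepted)
    # Fill the remaining slots with randomly chosen borderline candidates.
    filled = rng.sample(borderline, min(free, len(borderline)))
    return sorted(accepted + filled)


# L = 4 current paths, each extended into two candidates (2L = 8).
at, rt = dts_thresholds([0.0, 0.5, 1.0, 2.0])
kept = dts_prune([0.0, 3.0, 0.5, 0.7, 1.0, 1.4, 2.0, 5.0], at, rt, 4)
```

In this example three candidates fall below AT and are kept outright, two exceed RT and are dropped, and one of the three borderline candidates is chosen to fill the list.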

The hardware implementation of DTS is lightweight: four comparators evaluate all 2L metrics in parallel against the two thresholds, which are held constant within a pruning step, and a priority encoder resolves the random-selection case. This yields a logic delay far shorter than that of a full sorter.

Because AT and RT must be updated each decoding step, the authors introduce a Threshold Tracking Architecture (TTA). TTA computes the median (AT) and the maximum (RT) of the L current path metrics using a hierarchical approach: the L values are split into two halves, each sorted by a radix‑L/2 sorter; subsequent stages compare middle elements to narrow the candidate set for the median, employing log₂ L stages of comparators and multiplexers. The maximum is obtained by a simple comparator between the two half‑maxima. This structure has O(L·log L) complexity and can be pipelined over several cycles, relaxing timing constraints.
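A software analogue of this threshold tracking is sketched below: it sorts the two halves independently, finds the element of rank L/2 by repeatedly comparing candidates from each half (a binary search with about log₂ L steps), and takes the larger of the two half-maxima. This is not the authors' circuit; the function names are hypothetical and ranks are assumed to be 0-indexed.

```python
def kth_smallest_two_sorted(a, b, k):
    """k-th smallest (0-indexed) element of the union of sorted lists a and b."""
    need = k + 1                # number of elements at or below the answer
    lo = max(0, need - len(b))  # fewest elements that must come from a
    hi = min(need, len(a))      # most elements that can come from a
    while lo < hi:
        i = (lo + hi) // 2      # try taking i elements from a
        j = need - i            # and the remaining j from b
        if a[i] < b[j - 1]:
            lo = i + 1          # a[i] belongs in the lower part: take more from a
        else:
            hi = i
    i, j = lo, need - lo
    left_a = a[i - 1] if i > 0 else float("-inf")
    left_b = b[j - 1] if j > 0 else float("-inf")
    return max(left_a, left_b)


def track_thresholds(metrics):
    """Return (AT, RT) = (pm_{L/2}, pm_{L-1}) without sorting all L values."""
    half = len(metrics) // 2
    first, second = sorted(metrics[:half]), sorted(metrics[half:])
    at = kth_smallest_two_sorted(first, second, len(metrics) // 2)
    rt = max(first[-1], second[-1])  # one comparison of the half-maxima
    return at, rt


at, rt = track_thresholds([0.9, 0.1, 1.3, 0.4, 2.5, 0.2, 1.0, 0.3])
```

Only the two half-size sorts and a logarithmic number of comparisons are needed, which is why the thresholds can be tracked more cheaply than a full sort of the list.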

The complete decoder architecture integrates L parallel SCD units (each semi-parallel with M < N/2 processing elements), a Path Metric Update (PMU) block that computes the 2L metrics using the LLR-based metric update formula, the DTS block for fast pruning, and a Lazy Copy (LCP) module that updates memory pointers for the surviving paths. After the leaf nodes are processed, a CRC check selects the final codeword.
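The summary does not spell out the metric update formula. The sketch below assumes the update commonly used in LLR-based list decoding, PM' = PM + ln(1 + exp(-(1 - 2u)·LLR)), together with its usual hardware-friendly approximation (add |LLR| only when the bit decision u disagrees with the sign of the LLR). All function names are hypothetical.

```python
import math


def extend_metric_exact(pm, llr, u):
    """Assumed exact update: PM + ln(1 + exp(-(1 - 2u) * LLR))."""
    return pm + math.log1p(math.exp(-(1 - 2 * u) * llr))


def extend_metric_approx(pm, llr, u):
    """Assumed approximation: penalize by |LLR| only if u contradicts the LLR sign."""
    hard_decision = 0 if llr >= 0 else 1
    return pm if u == hard_decision else pm + abs(llr)


def extend_all(path_metrics, llrs):
    """Extend each of the L paths with u = 0 and u = 1, giving 2L metrics."""
    extended = []
    for pm, llr in zip(path_metrics, llrs):
        extended.append(extend_metric_approx(pm, llr, 0))
        extended.append(extend_metric_approx(pm, llr, 1))
    return extended


candidates = extend_all([0.0, 1.0], [2.0, -0.5])
```

Under either form the metric never decreases, so each parent contributes one child close to its own metric and one child with a larger metric. That property is what makes thresholds taken from the current list meaningful for the extended one.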

Synthesis results in a UMC 90 nm CMOS library show that even with L = 16 the decoder operates at 641 MHz with a throughput of 220 Mbps, surpassing prior works that used Bitonic sorters or full parallel sorters for L = 8. The performance loss relative to an ideal CRC-aided LSCD is modest (≈0.05–0.1 dB on the FER curve), attributable to the random selection step when the "accept" rule keeps fewer than L paths.

In summary, the paper presents a novel, low‑complexity list‑pruning technique that replaces costly sorting with simple threshold comparisons, complemented by an efficient median‑tracking circuit. This results in a decoder that simultaneously reduces latency, area, and power while preserving the strong error‑correction capability of polar‑code list decoding, making it highly suitable for next‑generation wireless standards that demand both high throughput and stringent latency constraints.
