Distributed Detection via Bayesian Updates and Consensus

In this paper, we discuss a class of distributed detection algorithms which can be viewed as implementations of Bayes' law in distributed settings. Some of the algorithms are proposed in the literature most recently, and others are first developed in…

Authors: Qipeng Liu, Jiuhua Zhao, Xiaofan Wang

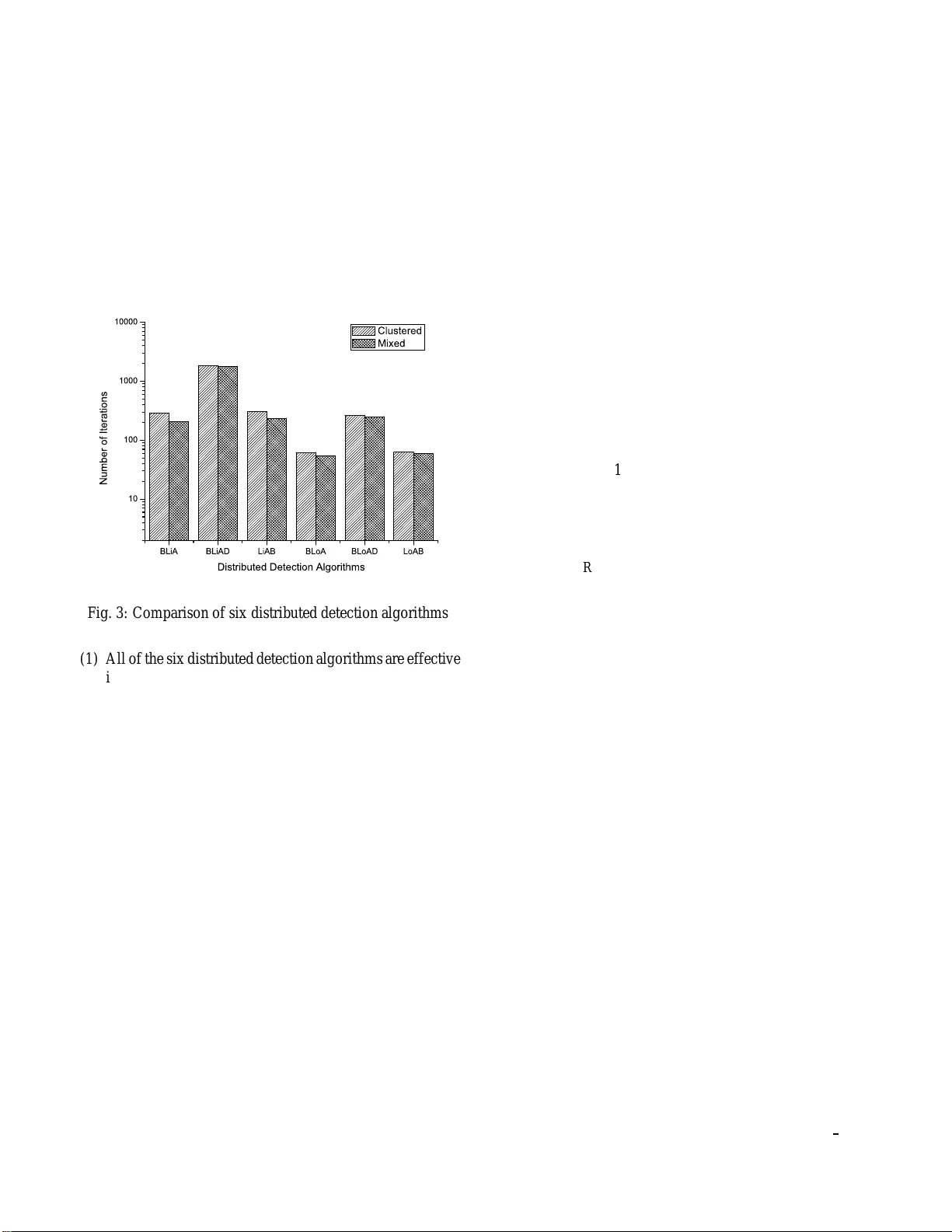

LIU Qipeng (1), ZHAO Jiuhua (2), WANG Xiaofan (2)

(1) Institute of Complexity Science, Qingdao University, Qingdao 266071, P. R. China. E-mail: qipengliu@qdu.edu.cn
(2) Department of Automation, Shanghai Jiao Tong University, and Key Laboratory of System Control and Information Processing, Ministry of Education of China, Shanghai 200240, P. R. China. E-mail: {jiuhuadandan,xfwang}@sjtu.edu.cn

Abstract: In this paper, we discuss a class of distributed detection algorithms which can be viewed as implementations of Bayes' law in distributed settings. Some of the algorithms have been proposed in the recent literature, and others are first developed in this paper. The common feature of these algorithms is that they all combine (i) certain kinds of consensus protocols with (ii) Bayesian updates. They differ mainly in the type of consensus protocol and the order of the two operations. After discussing their similarities and differences, we compare these distributed algorithms by numerical examples. We focus on the rate at which these algorithms detect the underlying true state of an object. We find that (a) the algorithms with consensus via geometric average are more efficient than those via arithmetic average; (b) the order of consensus aggregation and Bayesian update does not apparently influence the performance of the algorithms; (c) the existence of communication delay dramatically slows down the rate of convergence; (d) more communication between agents with different signal structures improves the rate of convergence.

Key Words: Networked Systems, Distributed Detection, Consensus, Bayes' Law

1 Introduction

Recent years have witnessed a considerable amount of work on the analysis of networked systems, ranging from social and economic networks to robot and sensor networks [1–4].
A remarkable phenomenon in networked systems is that, through communication and cooperation among individuals, the whole group can complete complicated tasks far beyond the ability of any single agent.

A common task in networked systems is that all agents are supposed to collectively find out an underlying true state of an object using relatively local information such as private observations and neighbors' information. For instance, voters attempt to find out the ability of some political candidates; customers learn the quality of a new product; a network of sensors detects the mean temperature of a wide area. Depending on the context, this task has different names, such as social learning, distributed detection, distributed estimation, and distributed hypothesis testing [5–14]. To be consistent, we call this task distributed detection throughout this paper. The aim of the whole group is to detect the underlying true state of an object. To complete the task, a variety of distributed algorithms have been designed in the literature to effectively aggregate information scattered all over the network.

(This work was supported by the National Natural Science Foundation of China under Grant Nos. 61374176 and 61473189, and the Science Fund for Creative Research Groups of the National Natural Science Foundation of China (No. 61221003).)

In this paper, we focus on a class of distributed detection algorithms which involve the implementation of Bayes' law in a distributed setting. It is well known that the standard Bayes' law is very useful in detection, estimation, hypothesis testing, and other similar applications. By continuously observing new data or other useful information, an individual could eventually learn the true state. In a networked setting, it is common that a single agent cannot learn the true state by itself.
However, communicating with others in the network might bring the agents more useful information, and under certain conditions, all agents might eventually learn the true state collectively.

Our work in this paper is directly motivated by [10–14], where different but quite similar distributed detection algorithms are developed. All of these algorithms combine certain kinds of consensus protocols with Bayesian updates. They differ mainly in the type of consensus protocol and the order of the two operations. In [10], agents first logarithmically aggregate their neighbors' beliefs to form a new prior belief, and then update their own beliefs using Bayes' law. In [11], in contrast to [10], a distributed detection algorithm with the local Bayesian update first is proposed, i.e., the two operations change their order. Instead of logarithmically aggregating neighbors' posterior beliefs as in [11], an algorithm with linear aggregation is proposed in [12]. In [13], each agent first computes its Bayesian posterior belief based on its private observation, and then linearly combines it with its neighbors' prior beliefs, which can be interpreted as communication delay in the dynamics. The distributed detection rule in [14], also involving delayed communication, logarithmically combines the Bayesian posterior based on the private observation and the neighbors' prior beliefs, and can be viewed as a logarithmic analog of the rule in [13].

In this paper, we first provide a systematic discussion of the class of distributed detection algorithms which combine certain kinds of consensus protocols with Bayesian updates, and show their essential similarities and differences. Some of the algorithms have been proposed in the recent literature, and others are first proposed in this paper.
Then, we provide numerical examples to compare their performance in distributed detection problems. Some qualitative results are given which might provide insight into the design of more efficient distributed detection algorithms.

This paper is organized as follows. In Sec. 2 we discuss and compare the distributed detection algorithms. In Sec. 3 we provide numerical examples to analyze the factors which influence the efficiency of distributed detection algorithms. Concluding remarks are given in Sec. 4.

2 Distributed Detection Algorithms Based on Consensus and Bayesian Updates

2.1 Preliminaries

Consider a social network as a directed graph $G = (V, E)$, where $V = \{1, 2, \cdots, n\}$ is the node set and $E \subset V \times V$ is the edge set. Each node in $V$ represents an agent, and the edge from $i$ to $j$, denoted by the ordered pair $(i, j) \in E$, captures the fact that agent $i$ is a neighbor of agent $j$, and $j$ can receive some information from $i$. The set of neighbors of agent $i$ is denoted by $N_i = \{j \in V : (j, i) \in E\}$. Moreover, a weight $a_{ij} \in [0, 1]$ is assigned to any ordered pair of agents such that $a_{ij} > 0$ if and only if $j \in N_i$. The weight $a_{ii} \ge 0$ is the self-weight of agent $i$, and we posit that $\sum_{j=1}^{n} a_{ij} = 1$, so that the weighted averages formed by agent $i$ below are properly normalized.

Let $\theta$ denote a state of the object we are concerned with. All the possible states compose a state set $\Theta = \{\theta_1, \theta_2, \cdots, \theta_m\}$, in which the true state is denoted by $\theta^*$. From the point of view of agent $i$ at time $t$, the probability of state $\theta$ being true is denoted by $\mu_{i,t}(\theta)$, which is called the belief of agent $i$ on $\theta$. Thus, agent $i$'s belief $\mu_{i,t} = [\mu_{i,t}(\theta_1), \mu_{i,t}(\theta_2), \cdots, \mu_{i,t}(\theta_m)] \in P(\Theta)$ is a probability distribution over $\Theta$, where $P(\Theta)$ is the set of all possible probability distributions over $\Theta$.
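The objects defined so far can be written down concretely. The following is only a minimal sketch: the three-agent edge set, the weight values, and the helper names (`neighbors`, `is_valid_weight_matrix`, `is_belief`) are illustrative assumptions and do not come from the paper.

```python
# Hypothetical 3-agent example; edge set and weights are illustrative assumptions.
n = 3
edges = {(0, 1), (1, 2), (2, 0)}  # (i, j): agent j receives information from agent i

def neighbors(i):
    """N_i = {j : (j, i) in E}, i.e. the agents that agent i listens to."""
    return {j for (j, k) in edges if k == i}

# Row i holds the weights a_ij that agent i places on itself and on its neighbors.
A = [
    [0.5, 0.0, 0.5],
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
]

def is_valid_weight_matrix(A):
    """Check that agent i's weights sum to one and that, off the diagonal,
    a_ij > 0 holds exactly when j is a neighbor of i."""
    for i, row in enumerate(A):
        if abs(sum(row) - 1.0) > 1e-12:
            return False
        for j, a in enumerate(row):
            if j != i and (a > 0) != (j in neighbors(i)):
                return False
    return True

def is_belief(mu):
    """A belief is a probability distribution over the state set."""
    return all(p >= 0 for p in mu) and abs(sum(mu) - 1.0) < 1e-12

mu_0 = [0.2, 0.3, 0.5]  # belief of one agent over three states
```

Here agent 0 listens to agent 2 only, so `neighbors(0)` is `{2}` and the only positive off-diagonal entry in row 0 of `A` is `A[0][2]`.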
Conditional on the underlying true state, at each time period $t > 0$, a signal vector $s_t = (s_{1,t}, s_{2,t}, \cdots, s_{n,t}) \in S$ is generated according to the likelihood function $\ell(s_t \mid \theta^*)$, where $s_{i,t}$ is the signal observed by agent $i$ and $S$ is the signal space. For each observed signal $s$ and each possible state $\theta$, agent $i$ holds a corresponding private signal structure $\ell_i(s \mid \theta) > 0$, representing the probability with which it believes signal $s$ appears if the true state is $\theta$. We assume that the private signal structure of agent $i$ about the true state $\theta^*$ is the $i$-th marginal of $\ell(\cdot \mid \theta^*)$, that is, the agent's model of the signals generated by the true state is exact.

If there exists a state $\bar\theta \ne \theta^*$ satisfying $\ell_i(s \mid \bar\theta) = \ell_i(s \mid \theta^*)$ for all signals $s$, we call $\bar\theta$ observationally equivalent to the true state. That is to say, state $\bar\theta$ and the underlying true state $\theta^*$ give rise to exactly the same signals with the same probabilities in agent $i$'s eyes, and thus it cannot tell these two states apart only by observing the signals. All the states that are observationally equivalent to $\theta^*$ from the point of view of agent $i$ compose a set $\bar\Theta_i = \{\theta \in \Theta : \ell_i(s \mid \theta) = \ell_i(s \mid \theta^*), \forall s \in S\}$. If $\cap_{i=1}^{n} \bar\Theta_i = \{\theta^*\}$, we say the true state $\theta^*$ is globally identifiable.

Next, we describe six distributed detection algorithms used to detect the underlying true state, all of which can be viewed as combinations of consensus protocols and Bayesian updates.

2.2 Interpreting the Bayesian Posterior as the Solution of an Optimization Problem

The standard Bayesian posterior obtained by agent $i$ based on its observation $s_{i,t+1}$ is as follows:

$$\mu_{i,t+1}(\theta) = \frac{\mu_{i,t}(\theta)\,\ell_i(s_{i,t+1} \mid \theta)}{\sum_{\theta_k \in \Theta} \mu_{i,t}(\theta_k)\,\ell_i(s_{i,t+1} \mid \theta_k)}, \quad \theta \in \Theta. \tag{1}$$

As pointed out in [10, 15, 16], the posterior belief can be interpreted as the solution of the following optimization problem

$$\mu_{i,t+1} = \arg\min_{\pi \in P(\Theta)} \Big\{ D_{KL}(\pi \,\|\, \mu_{i,t}) - \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \ell_i(s_{i,t+1} \mid \theta_k) \Big\} \tag{2}$$

where $D_{KL}(\pi \,\|\, \mu_{i,t})$ is the Kullback-Leibler divergence (the KL-divergence for short) between the probability distributions $\pi$ and $\mu_{i,t}$, defined as

$$D_{KL}(\pi \,\|\, \mu_{i,t}) = \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \frac{\pi(\theta_k)}{\mu_{i,t}(\theta_k)}.$$

Note that the first term on the right-hand side of (2) measures the difference between the distributions $\pi$ and $\mu_{i,t}$, and the second term corresponds to maximum likelihood estimation given the observation $s_{i,t+1}$. Thus, the posterior distribution can be viewed as a tradeoff between the prior belief and the observation.

In a network setting, by introducing the neighbors' prior distributions into the optimization problem (2), we obtain the following new optimization problem

$$\mu_{i,t+1} = \arg\min_{\pi \in P(\Theta)} \Big\{ \sum_{j=1}^{n} a_{ij} D_{KL}(\pi \,\|\, \mu_{j,t}) - \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \ell_i(s_{i,t+1} \mid \theta_k) \Big\}. \tag{3}$$

Note that

$$\begin{aligned}
\sum_{j=1}^{n} a_{ij} D_{KL}(\pi \,\|\, \mu_{j,t})
&= \sum_{j=1}^{n} a_{ij} \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \frac{\pi(\theta_k)}{\mu_{j,t}(\theta_k)} \\
&= \sum_{\theta_k \in \Theta} \pi(\theta_k) \sum_{j=1}^{n} a_{ij} \log \frac{\pi(\theta_k)}{\mu_{j,t}(\theta_k)} \\
&= \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \prod_{j=1}^{n} \left( \frac{\pi(\theta_k)}{\mu_{j,t}(\theta_k)} \right)^{a_{ij}} \\
&= \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \frac{\pi(\theta_k)}{\prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}(\theta_k)} \\
&= D_{KL}\Big( \pi \,\Big\|\, \prod_{j=1}^{n} \mu_{j,t}^{a_{ij}} \Big),
\end{aligned}$$

which is the KL-divergence between $\pi$ and the geometric mean of the prior beliefs of agent $i$ and its neighbors. The fourth equality uses the fact that the weights $a_{ij}$, $j = 1, \cdots, n$, sum to one. By the above derivation, the optimization problem (3) can be rewritten as

$$\mu_{i,t+1} = \arg\min_{\pi \in P(\Theta)} \Big\{ D_{KL}\Big( \pi \,\Big\|\, \prod_{j=1}^{n} \mu_{j,t}^{a_{ij}} \Big) - \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \ell_i(s_{i,t+1} \mid \theta_k) \Big\}. \tag{4}$$

The solution of (3) (and of (4)) is the Bayesian posterior belief corresponding to the prior $\prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}$, which has the following form

$$\mu_{i,t+1}(\theta) = \frac{\prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}(\theta)\,\ell_i(s_{i,t+1} \mid \theta)}{\sum_{\theta_k \in \Theta} \prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}(\theta_k)\,\ell_i(s_{i,t+1} \mid \theta_k)}, \quad \theta \in \Theta. \tag{5}$$

The updating rule (5) is a distributed algorithm whereby each agent first aggregates the beliefs of its neighbors and itself into a new prior belief via a weighted geometric average, and then uses Bayes' law to compute the posterior distribution. For simplicity, in this paper we call the rule (5) LoAB (Logarithmic Aggregation and Bayesian update). The rule LoAB was originally proposed and studied by Nedić et al. in [10], and the sufficient condition under which agents can learn the underlying true state using (5) is summarized as follows:

Condition 1:
(1) The time-varying network is B-strongly connected, i.e., there is an integer $B \ge 1$ such that the network is jointly strongly connected across every $B$ time slots.
(2) Any positive weight has a constant lower bound $\eta > 0$, i.e., if $a_{ij} > 0$ then $a_{ij} \ge \eta$ for all $i, j \in V$.
(3) All agents have positive self-weights, i.e., $a_{ii} > 0$ for all $i \in V$.
(4) All agents have positive initial belief on $\theta^*$.
(5) The true state $\theta^*$ is globally identifiable.

In [10], Nedić et al. further consider a more general case where the true state $\theta^*$ might not be listed as one of the possible states. They define a set of states $\Omega_i$ for each agent $i$, where $\Omega_i = \arg\min_{\theta_k \in \Theta} D_{KL}(\ell_i(\cdot \mid \theta^*) \,\|\, \ell_i(\cdot \mid \theta_k))$, and let $\Omega^* \triangleq \cap_{i=1}^{n} \Omega_i$, which is assumed to be nonempty. That is to say, even though the true state might not be considered as a possible state, there exist some states which best explain the observations from the point of view of all agents. They prove that under such a relaxed condition, $\mu_{i,t}(\theta) \to 0$ almost surely as $t \to \infty$ for all $i$ and $\theta \notin \Omega^*$.
This implies that if there is only one state in $\Omega^*$, all agents eventually assign a belief of one to this state, which is the one closest to the true state.

Next we propose an alternative way to introduce neighbors' information into the Bayesian update (2): instead of computing the weighted average of all KL-divergences between $\pi$ and the prior beliefs as in (3), we can compute the KL-divergence between $\pi$ and the weighted average of the neighbors' prior beliefs. The new optimization problem is as follows

$$\mu_{i,t+1} = \arg\min_{\pi \in P(\Theta)} \Big\{ D_{KL}\Big( \pi \,\Big\|\, \sum_{j=1}^{n} a_{ij} \mu_{j,t} \Big) - \sum_{\theta_k \in \Theta} \pi(\theta_k) \log \ell_i(s_{i,t+1} \mid \theta_k) \Big\}. \tag{6}$$

The solution of (6) is the Bayesian posterior distribution corresponding to the prior $\sum_{j=1}^{n} a_{ij} \mu_{j,t}$, i.e., for any $\theta \in \Theta$

$$\mu_{i,t+1}(\theta) = \frac{\sum_{j=1}^{n} a_{ij} \mu_{j,t}(\theta)\,\ell_i(s_{i,t+1} \mid \theta)}{\sum_{\theta_k \in \Theta} \sum_{j=1}^{n} a_{ij} \mu_{j,t}(\theta_k)\,\ell_i(s_{i,t+1} \mid \theta_k)}. \tag{7}$$

The essential difference between (5) and (7) is that the former aggregates prior beliefs via a geometric average while the latter does so via an arithmetic average. Here we call the algorithm (7) LiAB (Linear Aggregation and Bayesian update). We simply propose this algorithm in this paper without strict theoretical analysis. To the best of our knowledge, this algorithm has not been proposed or studied in existing papers. Therefore, theoretically analyzing its performance is still an open question.

2.3 Aggregation of Bayesian Posterior Distributions

A common feature of the algorithms LiAB and LoAB is that they first aggregate local prior beliefs linearly or logarithmically into a new prior and then use Bayes' law to compute the posterior distribution. We might change the order of the two steps and obtain two new algorithms.
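The aggregate-then-update structure shared by LoAB (5) and LiAB (7) can be sketched for a single agent as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the numerical beliefs, the weights, and the likelihood values are all hypothetical.

```python
import math

def normalize(v):
    """Scale a nonnegative vector so that it sums to one."""
    total = sum(v)
    return [x / total for x in v]

def bayes(prior, lik):
    """Bayes' law (1): posterior is proportional to prior(theta) * l_i(s | theta)."""
    return normalize([p * l for p, l in zip(prior, lik)])

def geometric_prior(beliefs, weights):
    """Unnormalized weighted geometric average: prod_j mu_j(theta)^a_ij."""
    m = len(beliefs[0])
    return [math.prod(mu[k] ** a for mu, a in zip(beliefs, weights)) for k in range(m)]

def arithmetic_prior(beliefs, weights):
    """Weighted arithmetic average: sum_j a_ij * mu_j(theta)."""
    m = len(beliefs[0])
    return [sum(a * mu[k] for mu, a in zip(beliefs, weights)) for k in range(m)]

def loab_step(beliefs, weights, lik):
    """Rule (5): geometric aggregation of priors, then Bayesian update."""
    return bayes(geometric_prior(beliefs, weights), lik)

def liab_step(beliefs, weights, lik):
    """Rule (7): arithmetic aggregation of priors, then Bayesian update."""
    return bayes(arithmetic_prior(beliefs, weights), lik)

# Hypothetical data: agent i (first entry) and two neighbors, three states.
beliefs = [[0.2, 0.3, 0.5], [0.6, 0.3, 0.1], [0.3, 0.3, 0.4]]
weights = [0.5, 0.25, 0.25]   # a_ii, then the weights on the two neighbors
lik = [0.8, 0.5, 0.8]         # l_i(s | theta_k) for the signal s that was observed

print(loab_step(beliefs, weights, lik))
print(liab_step(beliefs, weights, lik))
```

When all aggregated priors coincide, both averages return that common prior, so the two rules reduce to the single-agent update (1); they differ only when the agents disagree.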
If we let each agent first update its belief distribution based on its private observation and then exchange the posterior distribution with its neighbors via a weighted geometric average, we obtain the following algorithm, which is called BLoA (Bayesian update and Logarithmic Aggregation)

$$\mu_{i,t+1}(\theta) = \frac{\prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta)}{\sum_{\theta_k \in \Theta} \prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta_k)} \tag{8}$$

where

$$\tilde\mu_{i,t+1}(\theta) = \frac{\mu_{i,t}(\theta)\,\ell_i(s_{i,t+1} \mid \theta)}{\sum_{\theta_k \in \Theta} \mu_{i,t}(\theta_k)\,\ell_i(s_{i,t+1} \mid \theta_k)}. \tag{9}$$

The denominator in (8) is added to ensure that $\mu_{i,t+1}$ is still a well-defined probability distribution over $\Theta$.

The rule (8) has been proposed and extensively studied in [11]. The sufficient condition for detecting the true state is summarized as follows:

Condition 2:
(1) The network is strongly connected.
(2) All agents have positive initial belief on all $\theta \in \Theta$.
(3) For every pair $\theta_p \ne \theta_q$, there is at least one agent $i$ for which the KL-divergence $D_{KL}(\ell_i(\cdot \mid \theta_p) \,\|\, \ell_i(\cdot \mid \theta_q)) > 0$.

Compared with Condition 1, the terms in Condition 2 are more stringent. The second term requires not only that the initial beliefs of all agents on the true state are positive, but also that the beliefs on all other states are positive. The third term implies that there is no state that is observationally equivalent to any other state from the point of view of all agents in the network, i.e., all states are globally identifiable.

Note that the rule in [11] is not stated in the form (8) but in the following form

$$\mu_{i,t+1}(\theta) = \frac{\exp\Big( \sum_{j=1}^{n} a_{ij} \log \tilde\mu_{j,t+1}(\theta) \Big)}{\sum_{\theta_k \in \Theta} \exp\Big( \sum_{j=1}^{n} a_{ij} \log \tilde\mu_{j,t+1}(\theta_k) \Big)}. \tag{10}$$

In fact, the rule (10) is identical to (8) since

$$\begin{aligned}
\frac{\exp\Big( \sum_{j=1}^{n} a_{ij} \log \tilde\mu_{j,t+1}(\theta) \Big)}{\sum_{\theta_k \in \Theta} \exp\Big( \sum_{j=1}^{n} a_{ij} \log \tilde\mu_{j,t+1}(\theta_k) \Big)}
&= \frac{\exp\Big( \sum_{j=1}^{n} \log \tilde\mu_{j,t+1}^{a_{ij}}(\theta) \Big)}{\sum_{\theta_k \in \Theta} \exp\Big( \sum_{j=1}^{n} \log \tilde\mu_{j,t+1}^{a_{ij}}(\theta_k) \Big)} \\
&= \frac{\exp\Big( \log \prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta) \Big)}{\sum_{\theta_k \in \Theta} \exp\Big( \log \prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta_k) \Big)} \\
&= \frac{\prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta)}{\sum_{\theta_k \in \Theta} \prod_{j=1}^{n} \tilde\mu_{j,t+1}^{a_{ij}}(\theta_k)}.
\end{aligned}$$

From the above derivation it is not hard to understand why we call rules (5) and (8) logarithmic aggregations: both of them involve geometric averages, which can be written in logarithmic form as in (10).

Similar to (8) in the sense of aggregating the Bayesian posterior beliefs of neighbors, an algorithm with the geometric average replaced by the arithmetic average is proposed and extensively studied in [12]. Here we call it BLiA (Bayesian update and Linear Aggregation):

$$\mu_{i,t+1}(\theta) = \sum_{j=1}^{n} a_{ij} \tilde\mu_{j,t+1}(\theta) \tag{11}$$

where $\tilde\mu_{j,t+1}(\theta)$ is identical to that in (9). The sufficient conditions under which agents can detect the true state can be summarized as follows:

Condition 3:
(1) The weight matrix $A = [a_{ij}]$ is primitive.
(2) There exists at least one agent with positive initial belief on the true state.
(3) For each agent $i$, there exists at least one prevailing signal $s_i^o$ such that $\ell_i(s_i^o \mid \theta^*) - \ell_i(s_i^o \mid \theta) \ge \delta_k^o > 0$ for any $\theta \notin \bar\Theta_i$.

Compared with LoAB and BLoA, the algorithm BLiA requires more relaxed conditions in some aspects to detect the underlying true state. For instance, it only needs at least one agent having positive initial belief on the true state. Also, the requirement of a primitive weight matrix is more relaxed than that of positive diagonal elements (i.e., positive self-weights).
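The update-then-aggregate rules of this subsection can be sketched in the same style. The snippet below is a hedged illustration: the function names and all numerical values are hypothetical, and it also checks numerically that the product form (8) and the exp-log form (10) agree.

```python
import math

def normalize(v):
    """Scale a nonnegative vector so that it sums to one."""
    total = sum(v)
    return [x / total for x in v]

def bayes(prior, lik):
    """Local Bayesian update (9)."""
    return normalize([p * l for p, l in zip(prior, lik)])

def bloa_step(posteriors, weights):
    """Rule (8): normalized weighted geometric average of the posteriors."""
    m = len(posteriors[0])
    return normalize(
        [math.prod(mu[k] ** a for mu, a in zip(posteriors, weights)) for k in range(m)]
    )

def bloa_step_log(posteriors, weights):
    """Rule (10): the same update written with exp and log.
    Requires strictly positive beliefs, since log(0) is undefined."""
    m = len(posteriors[0])
    return normalize(
        [math.exp(sum(a * math.log(mu[k]) for mu, a in zip(posteriors, weights)))
         for k in range(m)]
    )

def blia_step(posteriors, weights):
    """Rule (11): weighted arithmetic average of the posteriors."""
    m = len(posteriors[0])
    return [sum(a * mu[k] for mu, a in zip(posteriors, weights)) for k in range(m)]

# Hypothetical data: agent i (first entry) and two neighbors, three states.
priors = [[0.2, 0.3, 0.5], [0.6, 0.3, 0.1], [0.3, 0.3, 0.4]]
liks = [[0.8, 0.5, 0.8], [0.2, 0.8, 0.8], [0.8, 0.5, 0.8]]  # l_j(s_j | theta_k)
weights = [0.5, 0.25, 0.25]

# Every agent first updates on its own signal, then agent i aggregates the results.
posteriors = [bayes(p, l) for p, l in zip(priors, liks)]
print(bloa_step(posteriors, weights))
print(blia_step(posteriors, weights))
```

The positivity requirement in `bloa_step_log` mirrors term (2) of Condition 2: a zero belief on any state makes the logarithm in (10) undefined, whereas the product form (8) simply keeps that belief at zero.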
2.4 Aggregation of Personal Bayesian Posterior and Others' Prior

The following two algorithms, like BLoA and BLiA, also contain a personal Bayesian update and communication with neighbors. However, agents exchange prior distributions with their neighbors rather than posterior distributions. We may consider that this sort of algorithm introduces communication delay into the dynamics, such that agents cannot receive their neighbors' latest information (the posteriors) but only delayed information (the priors before the Bayesian update).

There are also two ways to aggregate the neighbors' information. If the agent aggregates its personal posterior and its neighbors' priors via an arithmetic average, we have the following algorithm, called BLiAD (Bayesian update and Linear Aggregation of Delayed information)

$$\mu_{i,t+1}(\theta) = a_{ii}\,\tilde\mu_{i,t+1}(\theta) + \sum_{j \in N_i} a_{ij}\,\mu_{j,t}(\theta). \tag{12}$$

The rule (12) was originally proposed in [13] in the context of social learning. The sufficient condition under which agents can learn the underlying true state using (12) can be summarized as follows:

Condition 4:
(1) The network is strongly connected.
(2) All agents have strictly positive self-weights, i.e., $a_{ii} > 0$ for all $i \in V$.
(3) There exists at least one agent with positive initial belief on the true state $\theta^*$.
(4) The true state $\theta^*$ is globally identifiable.

Replacing the arithmetic average in BLiAD by the geometric average, we obtain another algorithm, here called BLoAD (Bayesian update and Logarithmic Aggregation of Delayed information), as follows

$$\mu_{i,t+1}(\theta) = \frac{\tilde\mu_{i,t+1}^{a_{ii}}(\theta) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta)}{\sum_{\theta_k \in \Theta} \tilde\mu_{i,t+1}^{a_{ii}}(\theta_k) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta_k)}. \tag{13}$$

Similar to (13), in [14] Rad and Tahbaz-Salehi propose a distributed estimation algorithm as follows:

$$\log \mu_{i,t+1}(\theta) = \lambda_i \log \ell_i(s_{i,t+1} \mid \theta) + \sum_{j=1}^{n} a_{ij} \log \mu_{j,t}(\theta) + c_{i,t} \tag{14}$$

where $\lambda_i > 0$ is the weight that agent $i$ assigns to its private observations and $c_{i,t}$ is a normalization constant, not dependent on $\theta$, which ensures that $\mu_{i,t+1}$ is a well-defined probability distribution over $\Theta$.

The rule (14) is identical to (13) when the parameters $\lambda_i$ and $c_{i,t}$ are chosen as $\lambda_i = a_{ii}$ and

$$c_{i,t} = -\log\Big[ \Big( \sum_{\theta_k \in \Theta} \mu_{i,t}(\theta_k)\,\ell_i(s_{i,t+1} \mid \theta_k) \Big)^{a_{ii}} \times \sum_{\theta_k \in \Theta} \tilde\mu_{i,t+1}^{a_{ii}}(\theta_k) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta_k) \Big].$$

In fact, the rule (14) can be written in the following form:

$$\begin{aligned}
\mu_{i,t+1}(\theta)
&= \exp\Big( \lambda_i \log \ell_i(s_{i,t+1} \mid \theta) + \sum_{j=1}^{n} a_{ij} \log \mu_{j,t}(\theta) + c_{i,t} \Big) \\
&= \exp\Big( \log \ell_i^{\lambda_i}(s_{i,t+1} \mid \theta) + \log \prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}(\theta) + c_{i,t} \Big) \\
&= \ell_i^{\lambda_i}(s_{i,t+1} \mid \theta) \prod_{j=1}^{n} \mu_{j,t}^{a_{ij}}(\theta) \cdot e^{c_{i,t}} \\
&= \ell_i^{a_{ii}}(s_{i,t+1} \mid \theta)\,\mu_{i,t}^{a_{ii}}(\theta) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta) \cdot e^{c_{i,t}} \\
&= \frac{\tilde\mu_{i,t+1}^{a_{ii}}(\theta) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta)}{\sum_{\theta_k \in \Theta} \tilde\mu_{i,t+1}^{a_{ii}}(\theta_k) \prod_{j \in N_i} \mu_{j,t}^{a_{ij}}(\theta_k)}.
\end{aligned} \tag{15}$$

The rule (14) has been theoretically studied in [14], and the sufficient condition for detecting the true state is summarized as follows:

Condition 5:
(1) The network is strongly connected.
(2) All agents have positive initial prior belief on all $\theta \in \Theta$.
(3) The true state $\theta^*$ is globally identifiable.

As a summary, we provide the classification tree of the six distributed detection algorithms in Fig. 1.

2.5 Comparison with the Bayesian Update of a Single Agent

L. J. Savage has pointed out in [17] that, barring two banal exceptions, a single agent becomes almost certain of the true state when the number of its observations increases without bound. One exception is that the initial belief on the true state is zero. This is easy to understand.
If the belief is zero, then, no matter what signal is observed, the posterior belief on the true state is still zero. The other exception occurs when there exists a state which gives rise to exactly the same signals as the true state does, i.e., an observationally equivalent state exists.

When an agent is situated in a network setting, the above two requirements might be relaxed. For instance, it has been proven that the rules LoAB, BLoA, BLiA, BLiAD, and BLoAD only require the true state to be globally identifiable. We conjecture that LiAB might also work well under the same condition, even though theoretical analyses are not available yet.

It is not hard to see that all rules involving geometric averages, such as LoAB, BLoA, and BLoAD, still need the requirement of non-zero initial beliefs of all agents on the true state. For the rules BLiA and BLiAD, which contain linear aggregations, it has been proven in [12] and [13], respectively, that at least one agent with non-zero initial belief on the true state is enough for a correct detection.

For the rules involving delayed information, such as BLiAD and BLoAD, the requirement of non-zero self-weights must be satisfied, at least for part of the agents. If all self-weights are zero, any new observation is discarded, and BLiAD and BLoAD specialize to traditional consensus protocols with arithmetic average and geometric average, respectively.

3 Numerical Examples

In this section, we examine the effectiveness of each distributed detection rule by numerical examples. Our test platform is a nearest-neighbor coupled network, which might represent a sensor network or a robot network where, restricted by its communication range, each agent can only exchange information with a given number of its closest neighbors. Fig. 2 shows a schematic diagram of a nearest-neighbor coupled network of 20 agents, where each agent can only interact with its 5 closest agents (including itself) and all of the edges are bi-directed.

[Fig. 2: A nearest-neighbor coupled network of 20 agents]

Simulations are performed on three possible states, i.e., $\Theta = \{\theta_1, \theta_2, \theta_3\}$, in which $\theta_3$ is set to be the true state. Any agent $i$'s belief distribution at time $t$ is $\mu_{i,t} = [\mu_{i,t}(\theta_1), \mu_{i,t}(\theta_2), \mu_{i,t}(\theta_3)] \in [0, 1]^3$. The initial belief is uniformly distributed in the interval $[0, 1]$ and subject to $\sum_{k=1}^{3} \mu_{i,0}(\theta_k) = 1$.

The signals generated by the true state are $\{s_1, s_2\}$. We assume that signal $s_1$ appears with probability 0.8, and $s_2$ with probability 0.2, which implies that the private signal structure about $\theta_3$ of any agent $i$ should be $\ell_i(s_1 \mid \theta_3) = 0.8$ and $\ell_i(s_2 \mid \theta_3) = 0.2$. Let half of the agents, denoted by $V_1 \subset V$, have the signal structures $\ell_i(s_1 \mid \theta_1) = 0.8$, $\ell_i(s_2 \mid \theta_1) = 0.2$, $\ell_i(s_1 \mid \theta_2) = 0.5$, and $\ell_i(s_2 \mid \theta_2) = 0.5$ ($i \in V_1$). That is to say, the states $\theta_1$ and $\theta_3$ are observationally equivalent for agents in $V_1$. Let the other half of the agents, denoted by $V_2 = V \setminus V_1$, have the following signal structures: $\ell_i(s_1 \mid \theta_1) = 0.2$, $\ell_i(s_2 \mid \theta_1) = 0.8$, $\ell_i(s_1 \mid \theta_2) = 0.8$, and $\ell_i(s_2 \mid \theta_2) = 0.2$ ($i \in V_2$), i.e., the states $\theta_2$ and $\theta_3$ are observationally equivalent for agents in $V_2$. The true state is unidentifiable to any single agent, but globally identifiable.

In our first simulation, we let agents from $V_1$ be close to each other, and likewise for $V_2$, i.e., agents belonging to the same set form a cluster. In the second simulation, we mix all agents in the sense that each agent from $V_1$ is located between two agents from $V_2$, and vice versa.

We focus on the number of update iterations each algorithm needs to detect the true state.
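The experiment described in this section can be sketched as below. Several details are assumptions rather than facts from the paper: uniform weights of 1/5 on the five closest agents, signals drawn independently for each agent, initial beliefs obtained by normalizing three uniform draws, and all function and variable names. The detection tolerance `tol=1e-3` follows the criterion used in this section; the cap `max_iters` is added only to keep the sketch finite.

```python
import math
import random

N, K, TRUE = 20, 3, 2   # agents, states, index of the true state theta_3
P_S1 = 0.8              # probability of signal s1 under the true state

def ring_weights(n=N, half_width=2):
    """Ring where each agent listens to itself and 2 agents on each side.
    Uniform weights are an assumption; the text does not state the weights."""
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for d in range(-half_width, half_width + 1):
            A[i][(i + d) % n] = 1.0 / (2 * half_width + 1)
    return A

# lik[signal][state] = l_i(signal | state); signal 0 is s1, signal 1 is s2.
LIK_V1 = [[0.8, 0.5, 0.8], [0.2, 0.5, 0.2]]  # theta_1 looks like theta_3
LIK_V2 = [[0.2, 0.8, 0.8], [0.8, 0.2, 0.2]]  # theta_2 looks like theta_3

def equivalent_states(lik):
    """States observationally equivalent to the true state for this structure."""
    return {k for k in range(K) if all(lik[s][k] == lik[s][TRUE] for s in range(2))}

def layout(mixed):
    """Signal structure of every agent: alternating (mixed) or two clusters."""
    if mixed:
        return [LIK_V1 if i % 2 == 0 else LIK_V2 for i in range(N)]
    return [LIK_V1 if i < N // 2 else LIK_V2 for i in range(N)]

def normalize(v):
    total = sum(v)
    return [x / total for x in v]

def bayes(prior, lik):
    return normalize([p * l for p, l in zip(prior, lik)])

def aggregate(beliefs, row, log_avg):
    """Weighted geometric (log_avg=True) or arithmetic average, renormalized."""
    pairs = [(a, mu) for a, mu in zip(row, beliefs) if a > 0]
    if log_avg:
        return normalize([math.prod(mu[k] ** a for a, mu in pairs) for k in range(K)])
    return normalize([sum(a * mu[k] for a, mu in pairs) for k in range(K)])

def step(beliefs, A, liks, signals, log_avg, order):
    """order: 'AB' (LiAB/LoAB), 'BA' (BLiA/BLoA), 'BAD' (BLiAD/BLoAD)."""
    post = [bayes(beliefs[i], liks[i][signals[i]]) for i in range(N)]
    new = []
    for i in range(N):
        if order == "AB":    # aggregate priors, then Bayesian update
            new.append(bayes(aggregate(beliefs, A[i], log_avg), liks[i][signals[i]]))
        elif order == "BA":  # Bayesian update, then aggregate posteriors
            new.append(aggregate(post, A[i], log_avg))
        else:                # own posterior combined with neighbors' priors
            inputs = [post[j] if j == i else beliefs[j] for j in range(N)]
            new.append(aggregate(inputs, A[i], log_avg))
    return new

def iterations_to_detect(log_avg, order, mixed, seed=0, tol=1e-3, max_iters=20000):
    """Iterations until every agent's belief on theta_3 is within tol of one;
    returns max_iters if that does not happen within the cap."""
    rng = random.Random(seed)
    A, liks = ring_weights(), layout(mixed)
    beliefs = [normalize([rng.random() for _ in range(K)]) for _ in range(N)]
    for t in range(1, max_iters + 1):
        signals = [0 if rng.random() < P_S1 else 1 for _ in range(N)]
        beliefs = step(beliefs, A, liks, signals, log_avg, order)
        if all(abs(mu[TRUE] - 1.0) <= tol for mu in beliefs):
            return t
    return max_iters
```

For example, `iterations_to_detect(log_avg=True, order="AB", mixed=True)` runs one realization of LoAB on the mixed layout. Averaging the returned counts over many seeds, for each of the six combinations of `log_avg` and `order` and for both layouts, would mimic the kind of comparison reported in this section, although the numbers need not match the paper's because of the assumptions listed above.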
If $|\mu_{i,t}(\theta_3) - 1| \le 10^{-3}$ for all $i \in V$, we say that the whole group collectively detects the true state. The result is shown in Fig. 3, which is the average of 100 realizations.

[Fig. 3: Comparison of six distributed detection algorithms. Horizontal axis: distributed detection algorithms (BLiA, BLiAD, LiAB, BLoA, BLoAD, LoAB). Vertical axis: number of iterations (logarithmic scale, ticks at 10, 100, 1000, 10000). Series: Clustered, Mixed.]

From Fig. 3, we have the following observations:

(1) All of the six distributed detection algorithms are effective in detecting the true state.
(2) If other aspects are identical, aggregating information via geometric average (i.e., BLoA, BLoAD, and LoAB) is much faster than via arithmetic average (i.e., BLiA, BLiAD, and LiAB).
(3) Using delayed information in aggregation (i.e., BLiAD and BLoAD) makes the detection much slower.
(4) Whether the Bayesian update or the aggregation of neighbors' information comes first does not apparently influence the efficiency of detection.
(5) Mixing agents with different signal structures increases the rate of detection.

The above items (2) and (3) are in accordance with the theoretical result in [11], where BLoA is compared with BLiAD. One of the main results there is that the lower bound on the rate of detection using BLoA is even greater than the upper bound of BLiAD. Item (3) is also in accordance with the theoretical result in [12], in which BLiA is compared with BLiAD, and the former is much faster in detecting the true state.

4 Concluding Remarks

In this paper, we discuss a class of distributed detection algorithms in which personal Bayesian updates are combined with some types of consensus protocols. We focus on six algorithms and classify them according to the type of consensus protocol, the order of Bayesian update and consensus, and whether time-delayed information is involved in the interaction.
This comparison gives a systematic picture of these distributed detection algorithms, which might lead to more refined conditions under which agents can detect the underlying true state in distributed settings. For instance, could the terms (2) and (3) in Condition 2 be replaced by more relaxed requirements, say the terms (4) and (5) in Condition 1, respectively? Also, could Conditions 2, 3, 4, and 5 be relaxed to time-varying networks like that in Condition 1? Through numerical examples, we obtain some qualitative results about the efficiency of these distributed algorithms, which might shed light on designing more efficient distributed detection algorithms in the future.

There are many other types of distributed detection algorithms proposed in the literature. For instance, instead of exchanging beliefs, agents can share their signal structures with their neighbors, which could also result in a correct detection of the true state [7–9]. Also, the Bayesian update is not the only choice in detection problems. More alternatives can be found in the literature (e.g., [18–21]).

References

[1] M. O. Jackson, Social and Economic Networks. New Jersey: Princeton University Press, 2010.
[2] W. Ren and R. W. Beard, Distributed Consensus in Multi-vehicle Cooperative Control. London: Springer-Verlag, 2008.
[3] R. W. Beard, T. W. McLain, D. Nelson, D. Kingston, and D. Johanson, Decentralized cooperative aerial surveillance using fixed-wing miniature UAVs, Proceedings of the IEEE, 94(7): 1306–1324, 2006.
[4] S. Boyd, A. Ghosh, B. Prabhakar, and D. Shah, Randomized gossip algorithms, IEEE Trans. on Information Theory, 52(6): 2508–2530, 2006.
[5] L. Smith and P. Sorensen, Pathological outcomes of observational learning, Econometrica, 68(2): 371–398, 2000.
[6] D. Acemoglu and A. Ozdaglar, Opinion dynamics and learning in social networks, Dynamic Games and Applications, 1(1): 3–49, 2010.
[7] S. Shahrampour, A. Rakhlin, and A. Jadbabaie, Distributed detection: finite-time analysis and impact of network topology, arXiv preprint arXiv:1409.8606v1, 2014.
[8] B. S. Rao and H. Durrant-Whyte, A decentralized Bayesian algorithm for identification of tracked targets, IEEE Trans. on Systems, Man, and Cybernetics, 23(6): 1683–1698, 1993.
[9] R. Olfati-Saber, E. Franco, E. Frazzoli, and J. S. Shamma, Belief consensus and distributed hypothesis testing in sensor networks, in Workshop on Network Embedded Sensing and Control, 2006: 169–182.
[10] A. Nedić, A. Olshevsky, and C. A. Uribe, Nonasymptotic convergence rates for cooperative learning over time-varying directed graphs, arXiv preprint arXiv:1410.1977v1, 2014.
[11] A. Lalitha, T. Javidi, and A. Sarwate, Social learning and distributed hypothesis testing, arXiv preprint arXiv:1410.4307v2, 2014.
[12] X. Zhao and A. H. Sayed, Learning over social networks via diffusion adaptation, in Proceedings of Asilomar Conference on Signals, Systems, and Computers, 2012: 709–713.
[13] A. Jadbabaie, P. Molavi, A. Sandroni, and A. Tahbaz-Salehi, Non-Bayesian social learning, Games and Economic Behavior, 76(1): 210–225, 2012.
[14] K. R. Rad and A. Tahbaz-Salehi, Distributed parameter estimation in networks, in Proceedings of 49th IEEE Conference on Decision and Control, 2010: 5050–5055.
[15] A. Zellner, Optimal information processing and Bayes' theorem, The American Statistician, 42(4): 278–280, 1988.
[16] S. G. Walker, Bayesian inference via a minimization rule, Sankhyā: The Indian Journal of Statistics, 68(4): 542–553, 2006.
[17] L. J. Savage, The Foundations of Statistics, New York: Wiley, 1954, chapter 6.
[18] R. Viswanathan and P. K. Varshney, Distributed detection with multiple sensors: part I - fundamentals, Proceedings of the IEEE, 85(1): 54–63, 1997.
[19] R. S. Blum, S. A. Kassam, and H. V. Poor, Distributed detection with multiple sensors: part II - advanced topics, Proceedings of the IEEE, 85(1): 64–79, 1997.
[20] J. B. Predd, S. R. Kulkarni, and H. V. Poor, Distributed learning in wireless sensor networks, IEEE Signal Processing Magazine, 23(4): 56–69, 2006.
[21] A. H. Sayed, Adaptation, Learning, and Optimization over Networks, Boston-Delft: NOW Publishers, 2014.

Fig. 1: Classification tree of the six distributed detection algorithms

Distributed Detection Algorithms
- Linear Aggregation
  - Aggregation First: LiAB
  - Personal Bayesian Update First
    - Non-Delayed Aggregation: BLiA
    - Delayed Aggregation: BLiAD
- Logarithmic Aggregation
  - Aggregation First: LoAB
  - Personal Bayesian Update First
    - Non-Delayed Aggregation: BLoA
    - Delayed Aggregation: BLoAD
