Forward-Backward Greedy Algorithms for Atomic Norm Regularization

In many signal processing applications, the aim is to reconstruct a signal that has a simple representation with respect to a certain basis or frame. Fundamental elements of the basis known as “atoms” allow us to define “atomic norms” that can be used to formulate convex regularizations for the reconstruction problem. Efficient algorithms are available to solve these formulations in certain special cases, but an approach that works well for general atomic norms, both in terms of speed and reconstruction accuracy, remains to be found. This paper describes an optimization algorithm called CoGEnT that produces solutions with succinct atomic representations for reconstruction problems, generally formulated with atomic-norm constraints. CoGEnT combines a greedy selection scheme based on the conditional gradient approach with a backward (or “truncation”) step that exploits the quadratic nature of the objective to reduce the basis size. We establish convergence properties and validate the algorithm via extensive numerical experiments on a suite of signal processing applications. Our algorithm and analysis also allow for inexact forward steps and for occasional enhancements of the current representation to be performed. CoGEnT can outperform the basic conditional gradient method, and indeed many methods that are tailored to specific applications, when the enhancement and truncation steps are defined appropriately. We also introduce several novel applications that are enabled by the atomic-norm framework, including tensor completion, moment problems in signal processing, and graph deconvolution.

💡 Research Summary

The paper introduces CoGEnT (Conditional Gradient with Enhancement and Truncation), a unified first‑order algorithm for solving convex least‑squares problems subject to an atomic‑norm constraint. The formulation minₓ ½‖y − Φx‖₂² subject to ‖x‖_A ≤ τ, where ‖·‖_A is the atomic norm induced by the atom set A, covers a wide range of “simplicity” models: sparsity (ℓ₁‑norm), low‑rank matrices (nuclear norm), low‑rank tensors, infinite‑atom moment problems, and graph‑based deconvolution. Traditional methods either rely on problem‑specific proximal operators (e.g., soft‑thresholding, SVD) or on interior‑point solvers that do not scale.
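For reference, the atomic norm in this formulation is the gauge of the convex hull of the atom set. The standard definition (stated here for orientation, for a compact, centrally symmetric atom set and finitely many nonzero coefficients c_a, rather than quoted from the paper) and its two most familiar instances are:

$$
\|x\|_{\mathcal{A}} = \inf\{\, t > 0 : x \in t\,\operatorname{conv}(\mathcal{A}) \,\}
= \inf\Big\{ \sum_{a \in \mathcal{A}} c_a \;:\; x = \sum_{a \in \mathcal{A}} c_a\, a,\ c_a \ge 0 \Big\}
$$

$$
\mathcal{A} = \{\pm e_i\} \;\Rightarrow\; \|x\|_{\mathcal{A}} = \|x\|_1,
\qquad
\mathcal{A} = \{uv^\top : \|u\|_2 = \|v\|_2 = 1\} \;\Rightarrow\; \|X\|_{\mathcal{A}} = \|X\|_*.
$$

The second form is why the enhancement step works with non‑negative coefficients whose sum is capped at τ.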

CoGEnT builds on the classic Conditional Gradient (Frank‑Wolfe) method and augments it with two additional mechanisms: enhancement and truncation. Each iteration starts with a standard forward step that selects the atom most aligned with the negative gradient by calling a linear minimization oracle, arg min_{a∈A} ⟨∇f(xᵗ), a⟩. The oracle can be exact or approximate; the convergence analysis tolerates bounded inexactness. Because the loss is quadratic, a line search along the direction toward the scaled atom τa then yields the optimal step size γ in closed form.
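As a concrete illustration, here is a minimal pure‑Python sketch of one forward step for the ℓ₁ case, where the atoms are ±eᵢ and the feasible set is the ℓ₁ ball of radius τ. The helper names (`matvec`, `objective`, `l1_lmo`, `forward_step`) are hypothetical, and a real implementation would use an array library. With residual r = y − Φx and direction d = s − x toward the oracle's vertex s, setting the derivative of ½‖r − γΦd‖² to zero gives γ* = ⟨r, Φd⟩ / ‖Φd‖², which is then clipped to [0, 1] to stay feasible.

```python
def matvec(Phi, x):
    """Return Phi @ x for a dense matrix stored as a list of rows."""
    return [sum(p * xi for p, xi in zip(row, x)) for row in Phi]


def objective(Phi, y, x):
    """f(x) = 0.5 * ||y - Phi x||_2^2."""
    return 0.5 * sum((yi - pi) ** 2 for yi, pi in zip(y, matvec(Phi, x)))


def l1_lmo(g, tau):
    """Linear minimization oracle over the l1 ball of radius tau.

    argmin_{||s||_1 <= tau} <g, s> is the vertex -tau * sign(g_i) * e_i
    with i = argmax_j |g_j|.
    """
    i = max(range(len(g)), key=lambda j: abs(g[j]))
    s = [0.0] * len(g)
    s[i] = -tau if g[i] > 0 else tau
    return s


def forward_step(Phi, y, x, tau):
    """One conditional-gradient step with exact line search for the quadratic loss."""
    r = [yi - pi for yi, pi in zip(y, matvec(Phi, x))]                 # residual y - Phi x
    m, n = len(Phi), len(x)
    g = [-sum(Phi[k][j] * r[k] for k in range(m)) for j in range(n)]   # gradient -Phi^T r
    s = l1_lmo(g, tau)
    d = [si - xi for si, xi in zip(s, x)]                              # direction toward vertex
    Pd = matvec(Phi, d)
    denom = sum(v * v for v in Pd)
    if denom == 0.0:                                                   # no progress possible
        return x, 0.0
    gamma = sum(ri * pi for ri, pi in zip(r, Pd)) / denom              # minimizer along d
    gamma = min(max(gamma, 0.0), 1.0)                                  # keep x in the ball
    return [xi + gamma * di for xi, di in zip(x, d)], gamma


# Usage: identity measurements, tau = 1.
x_new, step = forward_step([[1.0, 0.0], [0.0, 1.0]], [0.5, 0.0], [0.0, 0.0], tau=1.0)
print(x_new, step)  # [0.5, 0.0] 0.5
```

Clipping γ to [0, 1] is what keeps every iterate a convex combination of points in the ball, so no projection is ever needed.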

The algorithm then performs an enhancement (re‑optimization) step: it solves a small least‑squares problem over the coefficients c of the current active set, subject to c ≥ 0 and ‖c‖₁ ≤ τ. This is done with projected gradient descent, warm‑started from the coefficients produced by the forward step. The enhancement can be run for a few iterations or to full convergence; because every intermediate iterate is feasible and does not increase the objective, stopping early preserves the overall convergence rate.
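The enhancement step can be sketched as projected gradient on the coefficient vector c. This sketch assumes the columns of `B` are the images Φa_k of the active atoms, uses ‖B‖_F² as a safe upper bound on the Lipschitz constant (so the step 1/L guarantees descent), and projects onto {c ≥ 0, Σc_k ≤ τ} with the standard sort‑based simplex projection. The function names and the default iteration count are illustrative choices, not taken from the paper.

```python
def project_capped_simplex(v, tau):
    """Euclidean projection of v onto {c : c >= 0, sum(c) <= tau}, assuming tau > 0."""
    w = [max(vi, 0.0) for vi in v]
    if sum(w) <= tau:                 # clipping negatives is already feasible
        return w
    # Otherwise the projection lies on the face sum(c) = tau: sort-based threshold rule.
    u = sorted(v, reverse=True)
    css, theta = 0.0, 0.0
    for j, uj in enumerate(u, start=1):
        css += uj
        t = (css - tau) / j
        if uj - t > 0:                # the last index passing this test fixes theta
            theta = t
    return [max(vi - theta, 0.0) for vi in v]


def enhance(B, y, c, tau, iters=100):
    """Projected gradient on 0.5 * ||y - B c||^2 over c >= 0, sum(c) <= tau.

    B is m x k (list of rows); column k is Phi applied to active atom k.
    c is the warm start, e.g. the coefficients left by the forward step.
    """
    m, k = len(B), len(c)
    L = sum(B[i][j] ** 2 for i in range(m) for j in range(k))  # ||B||_F^2 >= ||B||_2^2
    if L == 0.0:
        return list(c)
    for _ in range(iters):
        r = [y[i] - sum(B[i][j] * c[j] for j in range(k)) for i in range(m)]
        grad = [-sum(B[i][j] * r[i] for i in range(m)) for j in range(k)]
        c = project_capped_simplex([cj - gj / L for cj, gj in zip(c, grad)], tau)
    return c
```

Any prefix of this loop returns a feasible c with an objective no larger than the warm start, which is the property the summary relies on when it says the enhancement can be stopped early.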

Finally, a backward (truncation) step prunes atoms that contribute little to the objective. Using a second‑order approximation of the loss change when removing an atom, the algorithm discards any atom whose removal does not increase the objective beyond a user‑specified tolerance η. Two concrete truncation schemes are described: one removes a single atom per iteration, the other removes multiple atoms based on a greedy heuristic. This step directly addresses the “basis explosion” problem of plain Frank‑Wolfe, yielding much sparser representations without sacrificing convergence speed.
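Because the loss is quadratic, the objective change from dropping atom k can be computed exactly rather than approximated: with residual r = y − Φx and b_k = Φa_k, removing c_k a_k turns the residual into r + c_k b_k, so f changes by c_k⟨r, b_k⟩ + ½c_k²‖b_k‖². The sketch below applies that formula in a simplified single‑atom rule (drop the atom with the smallest increase if it is at most η). It does not re‑adjust the remaining coefficients, which the paper's schemes may do, and the function names are hypothetical.

```python
def removal_increase(r, b, ck):
    """Exact change in 0.5 * ||r||^2 when the term ck * b is removed from the fit.

    Removing it turns the residual r into r + ck * b.
    """
    rb = sum(ri * bi for ri, bi in zip(r, b))
    bb = sum(bi * bi for bi in b)
    return ck * rb + 0.5 * ck * ck * bb


def truncate_one(B_cols, y, c, eta):
    """Drop at most one atom whose removal raises the objective by <= eta.

    B_cols[k] is Phi applied to active atom k; c[k] >= 0 is its coefficient.
    Removing an atom only lowers sum(c), so the result stays feasible.
    Returns (new_B_cols, new_c, removed_index or None).
    """
    if not c:
        return B_cols, c, None
    m = len(y)
    r = [y[i] - sum(c[k] * B_cols[k][i] for k in range(len(c))) for i in range(m)]
    deltas = [removal_increase(r, B_cols[k], c[k]) for k in range(len(c))]
    k_min = min(range(len(c)), key=lambda k: deltas[k])
    if deltas[k_min] > eta:           # every atom matters more than the tolerance
        return B_cols, c, None
    keep = [k for k in range(len(c)) if k != k_min]
    return [B_cols[k] for k in keep], [c[k] for k in keep], k_min
```

For example, with y = (1, 0), atoms e₁ and e₂, and coefficients (1, 0.01), removing the second atom actually lowers the objective, so `truncate_one` drops it for any η ≥ 0.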

The authors prove that, under standard smoothness assumptions, CoGEnT attains the same O(1/t) rate in objective error as vanilla Frank‑Wolfe (equivalently, O(1/ε) iterations to reach ε‑accuracy), even with inexact forward oracles and with truncation steps that may temporarily increase the objective, provided the increase is bounded by η. They also show that the enhancement step does not affect the worst‑case rate because each projected‑gradient iteration is a descent step.
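For orientation, the standard Frank‑Wolfe bound behind this rate can be written as follows for the quadratic loss (valid for t ≥ 1 with either the 2/(t+2) step or exact line search). The constant is the usual curvature constant from the general Frank‑Wolfe analysis, specialized to f(x) = ½‖y − Φx‖², and is not necessarily the exact constant proved in the paper:

$$
f(x^t) - f(x^\star) \;\le\; \frac{2C_f}{t+2},
\qquad
C_f = \sup_{x,\, s \in \mathcal{D}} \|\Phi(s - x)\|_2^2 \;\le\; \|\Phi\|_2^2\, \operatorname{diam}(\mathcal{D})^2,
\qquad
\mathcal{D} = \{x : \|x\|_{\mathcal{A}} \le \tau\}.
$$

Setting the right‑hand side equal to ε shows that t ≥ 2C_f/ε − 2 iterations suffice, which is the O(1/ε) iteration count.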

Extensive experiments validate the theory across five representative applications:

- Sparse vector recovery (ℓ₁‑norm): CoGEnT outperforms OMP, ISTA, and FISTA in terms of iterations needed to reach a given error, while using far fewer active atoms.

- Low‑rank matrix completion (nuclear norm): Compared to ADMM‑based solvers that require repeated SVDs, CoGEnT needs only the leading singular vectors per iteration, achieving comparable accuracy with 30‑70 % less runtime.

- Continuous moment problems: The atomic set consists of sampled complex exponentials whose frequencies range continuously over the unit circle, so it contains infinitely many atoms. CoGEnT’s linear oracle reduces to a fast FFT‑based maximization, delivering high‑resolution spectral estimates far faster than SDP‑based approaches.

- Symmetric low‑rank tensor completion: Atoms are rank‑one symmetric tensors. CoGEnT recovers tensors with higher signal‑to‑noise ratios than CP‑ALS, again with a much smaller active set.

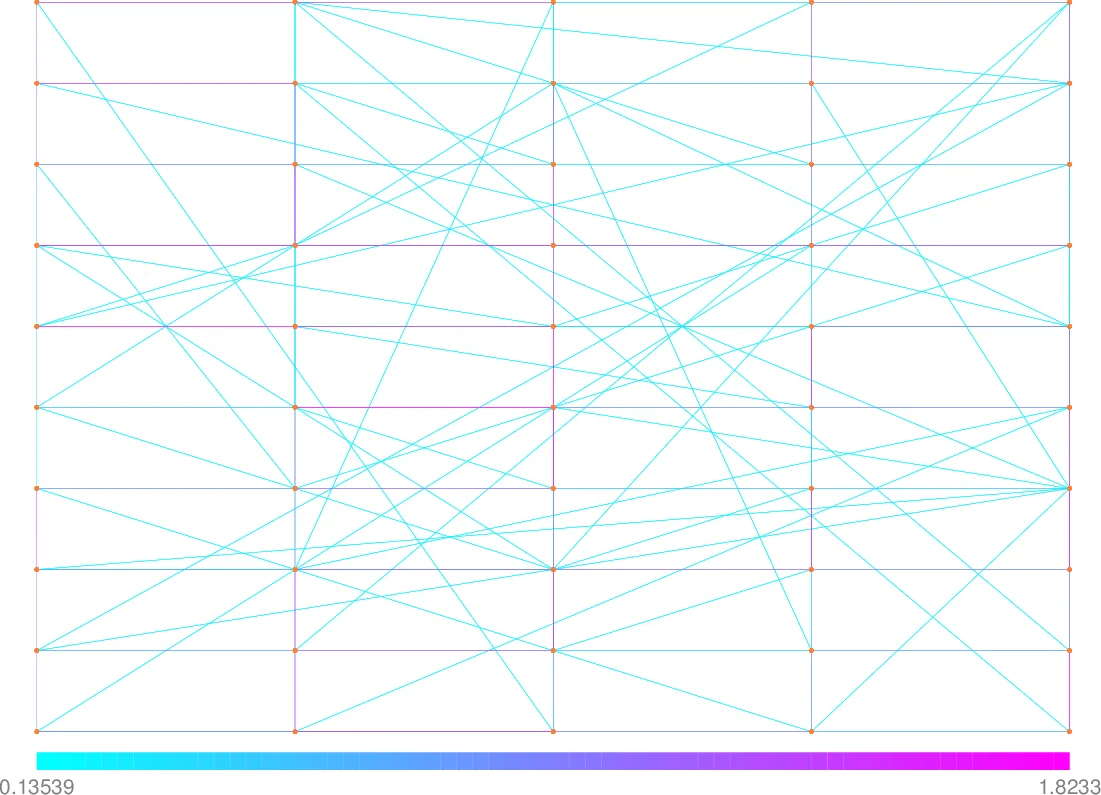

- Graph deconvolution: The signal is modeled as a sum of a sparse component and a graph‑smooth component, each with its own atom set. CoGEnT alternates forward‑backward steps for each set, achieving accurate separation where specialized methods fail to scale.
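The matrix‑completion item rests on the fact that the linear oracle over the nuclear‑norm ball {X : ‖X‖_* ≤ τ} needs only the top singular pair of the gradient G: the minimizer of ⟨G, S⟩ is S = −τ u₁v₁ᵀ. The sketch below computes that pair by plain power iteration in pure Python. It is an illustrative stand‑in (a Lanczos routine such as `scipy.sparse.linalg.svds` with `k=1` would be a more typical practical choice), and the function names and iteration count are hypothetical.

```python
import math
import random


def top_singular_pair(G, iters=200, seed=0):
    """Power iteration on G^T G; returns (u, sigma, v) with G v ~= sigma * u."""
    m, n = len(G), len(G[0])
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(n)]      # random start vector
    u, sigma = [0.0] * m, 0.0
    for _ in range(iters):
        u = [sum(G[i][j] * v[j] for j in range(n)) for i in range(m)]   # u = G v
        nu = math.sqrt(sum(x * x for x in u))
        if nu == 0.0:                                # G v = 0, e.g. G is zero
            return [0.0] * m, 0.0, v
        u = [x / nu for x in u]
        v = [sum(G[i][j] * u[i] for i in range(m)) for j in range(n)]   # v = G^T u
        sigma = math.sqrt(sum(x * x for x in v))
        if sigma == 0.0:
            return u, 0.0, v
        v = [x / sigma for x in v]
    return u, sigma, v


def nuclear_lmo(G, tau):
    """argmin over ||S||_* <= tau of <G, S>, i.e. -tau * u1 v1^T."""
    u, _, v = top_singular_pair(G)
    return [[-tau * ui * vj for vj in v] for ui in u]
```

Each call touches G only through matrix-vector products, which is why the per‑iteration cost is far below that of the full SVDs needed by proximal or ADMM‑based solvers.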

In all cases, the truncation step dramatically reduces the number of active atoms (often by 40‑70 %), leading to lower memory footprints and faster downstream processing (e.g., compression, transmission). The enhancement step, though optional, consistently improves convergence speed, especially when the forward oracle is only approximate.

The paper also extends CoGEnT to a general deconvolution framework where the observation is a sum of two signals each constrained by a different atomic norm. By maintaining two separate active sets and applying the forward‑enhance‑truncate cycle to each, the method cleanly separates the components without requiring joint optimization over the product space.
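A minimal sketch of the two‑component case, assuming the simplest model y = x₁ + x₂ (no measurement operator), with A₁ the signed unit vectors ±eᵢ and A₂ the signed columns of a small orthonormal dictionary. Each component keeps its own radius and its own oracle, and the loop alternates one forward step with exact line search on x₁ and then on x₂. Enhancement and truncation are omitted, and the dictionaries, radii, and function names are illustrative assumptions rather than the paper's setup.

```python
def dict_lmo(g, D, tau):
    """argmin of <g, s> over conv{+-tau * d_j}, with D a list of atoms d_j."""
    scores = [sum(gi * di for gi, di in zip(g, d)) for d in D]
    j = max(range(len(D)), key=lambda k: abs(scores[k]))
    sign = -1.0 if scores[j] > 0 else 1.0
    return [sign * tau * di for di in D[j]]


def block_step(y, x_self, x_other, D, tau):
    """Forward step on one component of 0.5 * ||y - x1 - x2||^2, the other held fixed."""
    r = [yi - a - b for yi, a, b in zip(y, x_self, x_other)]
    g = [-ri for ri in r]                                  # gradient w.r.t. x_self
    s = dict_lmo(g, D, tau)
    d = [si - xi for si, xi in zip(s, x_self)]
    dd = sum(di * di for di in d)
    if dd == 0.0:                                          # already at the chosen vertex
        return x_self
    gamma = min(max(sum(ri * di for ri, di in zip(r, d)) / dd, 0.0), 1.0)
    return [xi + gamma * di for xi, di in zip(x_self, d)]


def demix(y, D1, tau1, D2, tau2, iters=20):
    """Alternate forward steps on the two components, each with its own atom set."""
    n = len(y)
    x1, x2 = [0.0] * n, [0.0] * n
    for _ in range(iters):
        x1 = block_step(y, x1, x2, D1, tau1)
        x2 = block_step(y, x2, x1, D2, tau2)
    return x1, x2


# Usage: a spike plus a constant, separated with identity atoms and Hadamard atoms.
I4 = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
H = [[0.5, 0.5, 0.5, 0.5], [0.5, -0.5, 0.5, -0.5],
     [0.5, 0.5, -0.5, -0.5], [0.5, -0.5, -0.5, 0.5]]
print(demix([2.5, 0.5, 0.5, 0.5], I4, 2.0, H, 1.0))
# ([2.0, 0.0, 0.0, 0.0], [0.5, 0.5, 0.5, 0.5])
```

The only coupling between the two components is the shared residual; each oracle call sees one atom family, so neither family has to be folded into a single combined oracle.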

Overall, CoGEnT provides a practical, theoretically sound, and highly versatile algorithm for atomic‑norm regularized problems. Its three‑stage loop—greedy atom addition, coefficient re‑optimization, and principled atom removal—delivers both fast convergence and parsimonious solutions, filling a gap left by existing first‑order methods that either ignore sparsity of the representation or cannot handle general (including infinite) atom families. The work opens the door to scalable atomic‑norm applications in high‑dimensional signal processing, machine learning, and network analysis.
