Coarse-to-Fine Sequential Monte Carlo for Probabilistic Programs

Many practical techniques for probabilistic inference require a sequence of distributions that interpolate between a tractable distribution and an intractable distribution of interest. Usually, the sequences used are simple, e.g., based on geometric …

Authors: Andreas Stuhlmüller, Robert X.D. Hawkins, N. Siddharth

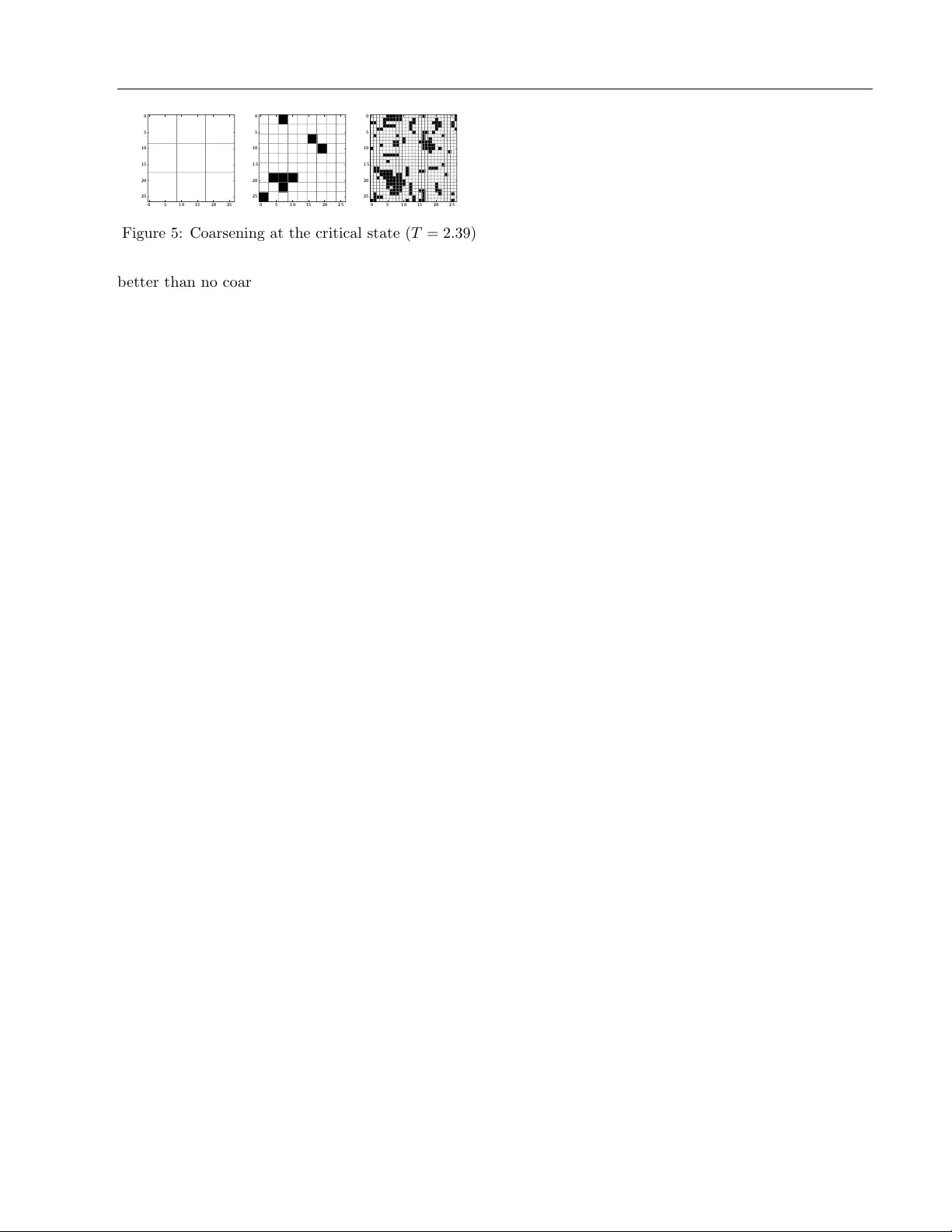

Andreas Stuhlmüller, Robert X.D. Hawkins, N. Siddharth, Noah D. Goodman
Department of Psychology, Stanford University
{astu, rxdh, nsid, ngoodman}@stanford.edu

Abstract

Many practical techniques for probabilistic inference require a sequence of distributions that interpolate between a tractable distribution and an intractable distribution of interest. Usually, the sequences used are simple, e.g., based on geometric averages between distributions. When models are expressed as probabilistic programs, the models themselves are highly structured objects that can be used to derive annealing sequences that are more sensitive to domain structure. We propose an algorithm for transforming probabilistic programs to *coarse-to-fine programs* which have the same marginal distribution as the original programs, but generate the data at increasing levels of detail, from coarse to fine. We apply this algorithm to an Ising model, its depth-from-disparity variation, and a factorial hidden Markov model. We show preliminary evidence that the use of coarse-to-fine models can make existing generic inference algorithms more efficient.

1 INTRODUCTION

Imagine watching a tennis tournament. Your visual system makes fast and accurate inferences about the depth-field (how far away are different patches?), the objects (is that a ball or racket?), their trajectories, and many other properties of the scene. A powerful intuition is that such feats of inference are enabled by *coarse-to-fine* reasoning: first getting a rough sense of where the action is in the scene, about how far away it is, and so on; later refining this impression to pick out details.

The appeal of coarse-to-fine reasoning is manifold.
First, there is introspection: when faced with a complex reasoning task, it often helps to take a step back, try to understand the big picture, and then focus on what seems most promising. The big picture tends to have fewer moving parts, and its parts tend to be easier to understand. Neuroscience provides another angle: for instance, face processing in the high-level visual cortex plausibly follows coarse-to-fine principles (Goffaux et al., 2010), and stereoscopic depth perception similarly proceeds from large to small spatial scales (Menz and Freeman, 2003). Finally, there is a rich set of existing coarse-to-fine techniques for specific applications in a diverse set of areas including physical chemistry (Lyman and Zuckerman, 2006), speech processing (Tang et al., 2006), PCFG parsing (Charniak et al., 2006), and machine translation (Petrov et al., 2008).

Figure 1: Incremental coarsening reduces surprise in SMC. Particles (red) are directed towards high-probability regions (light) step by step as we refine the state space from coarse to fine. The numbers indicate how many particles are associated with a particular state.

Despite the success and appeal of coarse-to-fine ideas, they have been difficult to apply in general settings. Here we propose a system for deriving coarse-to-fine inference from any model written as a probabilistic program. We do this by leveraging program structure to transform the initial program into a multi-level coarse-to-fine program that can be used with existing inference algorithms.

Probabilistic programming languages provide a universal and high-level representation for probabilistic models, separating the burdens of modeling from those of inference. Yet the difficulty of inference can grow quickly as the state space (number of program executions) grows large.
A widely used technique for inference in large state spaces is Sequential Monte Carlo (SMC), a class of algorithms based on constructing a sequence of distributions, beginning with an easy-to-sample distribution and ending with the distribution of interest, with each distribution serving as an importance sampler for the next. The success of SMC rests on the quality of the approximating sequence. We present a generic method for deriving coarse-to-fine sequences of approximating distributions from a probabilistic program.

Our approach can be seen as building a hierarchical model from an initial model, where each stage of the hierarchy resolves more details of the state space than the one before. We additionally augment each level of the hierarchy with a coarse approximation to the evidence (implemented via *heuristic factors*, see Section 3.1), in order to specify a useful conditional distribution at each level. In practice we create the hierarchical model and heuristic factors at once by specifying how to "lift" each element of the program (elementary distributions, factors, primitive functions, and constants) to the coarser levels. The resulting model supports coarse-to-fine inference by SMC, where the nth distribution in the sequence is simply the distribution over the state space of the n coarsest levels; this is correct inference for the original model because, by construction, the marginal distribution over the finest level is the original distribution.

Model transformations let us directly use existing sequential inference algorithms to perform coarse-to-fine inference, rather than proposing a new inference algorithm *per se*. This is in contrast to essentially all prior work on coarse-to-fine inference, including Kiddon and Domingos (2011) and Steinhardt and Liang (2014). One benefit of this modular approach is that advances in SMC algorithms immediately yield improvements to coarse-to-fine inference.
Another benefit is the conceptual clarity that comes from an explicit representation of the coarse-to-fine model.

In the following, we first review probabilistic programs and Sequential Monte Carlo. We then describe our coarse-to-fine program transform and how it lifts random variables, primitive functions, and factors to operate on multiple levels of abstraction. We apply this transform to two models in the domain of tracking partially observable objects over time given visual information, a depth-from-disparity model and a factorial hidden Markov model, and show preliminary evidence that it may help reduce inference time in these domains. Finally, we discuss the current limitations of this framework, the circumstances where our approach to coarse-to-fine inference is a good fit, and outline research questions raised by this new approach.

2 BACKGROUND

2.1 PROBABILISTIC PROGRAMS

Probabilistic programs are models expressed in Turing-complete languages that supply primitives for random sampling and probabilistic inference (e.g., Goodman et al., 2008; Koller et al., 1997; Pfeffer, 2007). Many existing probabilistic models have been expressed concisely as probabilistic programs. A distinguishing feature of probabilistic programming as a machine learning technique is that it separates inference techniques from modeling assumptions. Thus, any advances in algorithms provide benefits for a wide range of applications at once. While we demonstrate our technique for a small set of models chosen for their pedagogical value, we emphasize that the technique can be applied to a much wider range of models without modification.

We express probabilistic programs in WebPPL (Goodman and Stuhlmüller, 2014), a small probabilistic language embedded in JavaScript. This language is universal and feature-rich, so we expect the techniques to generalize straightforwardly to other languages.
In this language, all random choices are marked by `sample`; the argument to `sample` is a distribution object (also called *Elementary Random Primitive*, or *ERP*), its return value a sample from this distribution. Calls to functions such as `flip(0.5)` are shorthand for `sample(bernoulliERP, [0.5])`.

To enable probabilistic conditioning, the language supports `factor` statements. The argument to `factor` is a score: a number that is added to the log-probability of a program execution, thus increasing or decreasing its relative posterior probability. This includes hard conditioning on evidence as a special case (scores 0 and −∞). Finally, the language supports inference primitives such as `ParticleFilter` and `MH` (Metropolis-Hastings). Each of these takes as an argument a thunk, that is, a stochastic function that itself takes no arguments. And each of these computes or estimates the distribution on return values of this thunk (its *marginal distribution*), taking into account the re-weighting induced by `factor` statements.

Figure 2a shows a program that implements a simplified one-step version of multiple object tracking: the noisily observed value 7 could have been produced by either `x` or `y`, each of which is uniformly chosen from {1, 2, ..., 8}. The final panel in Figure 1 shows the marginal distribution on `[x, y]` for this program.

    var noisyObserve = function(obs){
      var score = -3 * distance(obs, 7)
      factor(score)
    }

    var model = function(){
      var x = sample(uniformERP)
      var y = sample(uniformERP)
      var observation = flip(.5) ? x : y
      noisyObserve(observation)
      return [x, y]
    }

(a) A probabilistic program

    var noisyObserve = function(obs){
      var score = -3 * distance(obs, 7)
      factor(score)
    }

    var model = function(){
      var x = sample(uniformERP)
      var heuristicScore = -distance(x, 7)
      factor(heuristicScore)
      var y = sample(uniformERP)
      var observation = flip(.5) ? x : y
      noisyObserve(observation)
      factor(-heuristicScore)
      return [x, y]
    }

(b) Rewritten using heuristic factors

Figure 2: Two probabilistic programs with the same marginal distribution (shown in the final panel in Figure 1).

In probabilistic programs, the same syntactic variable can be used multiple times. The prototypical example is the geometric distribution:

    var geometric = function(){
      return flip(0.1) ? 0 : 1 + geometric()
    }

The call to `flip(0.1)` may occur an unbounded number of times. For many purposes, it is necessary to distinguish and refer to these different calls. In the context of MCMC, Wingate et al. (2011) introduced a suitable naming scheme based on stack addresses. The address of a random choice is a list of syntactic locations, one for each function on the function call stack at the time when the random variable was sampled. We will build on this scheme to associate corresponding random choices on different levels of coarsening with each other, and use `address` in the following to refer to the current stack address.

2.2 SEQUENTIAL MONTE CARLO

Suppose our target distribution is $X$ with probability mass function $p$.
Importance sampling generates samples from an approximating distribution $Y$ (with probability mass function $q$) and re-weights the samples to account for the difference between the true and approximating distributions: $w(x) = p(x)/q(x)$. To compute estimates of $\psi = \mathbb{E}_{x \sim X}[f(x)]$ given samples $y_1, \ldots, y_n$ we use

$$\hat{\psi} = \frac{\sum_{i=1}^{n} w(y_i)\, f(y_i)}{\sum_{i=1}^{n} w(y_i)}$$

To generate approximate samples from $p(x)$, we resample from the set of samples in proportion to the importance weights.

If we iterate this procedure with a sequence of approximating distributions $q_1, \ldots, q_k$, we get *Sequential Importance Sampling*. If we resample at each stage, we get *Sequential Importance Resampling*. If we additionally apply MCMC "rejuvenation" steps at each stage $i$ with a transition kernel that leaves the distribution $q_i$ invariant, we get *Sequential Monte Carlo*.

For Sequential Importance Sampling, the sum of the KL divergences between successive distributions controls the difficulty of sampling (Freer et al., 2010). If we can sample from the right coarse-grained distributions, we can reduce this difficulty, as illustrated in Figure 1. With rejuvenation steps (SMC), the picture is more complex, but empirically, it is still the case that distributions that are closer together in KL generally make the sampling problem easier. In particular, we expect that good coarse-to-fine sequences lead to better coverage of regions with high posterior probability, and that they enable more efficient pruning of low-probability regions. A finite set of fine-grained particles may not cover the entire region, which can lead to a situation where all particles assign low probability to the next filtering step (particle decay). A particle that has not been refined yet corresponds to a distribution on fine-grained states, thus each such particle can cover a bigger region (Steinhardt and Liang, 2014).
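As a concrete illustration of the self-normalized estimator and the resampling step from this section, here is a minimal plain-JavaScript sketch (not WebPPL). The target `p`, the uniform proposal `q`, and the helper names `makeRng`, `importanceEstimate`, and `resample` are assumptions made for this example only; a small seeded generator keeps the run reproducible.

```javascript
// Hypothetical example: target p and proposal q on the states {1, ..., 8}.
const states = [1, 2, 3, 4, 5, 6, 7, 8];
const q = () => 1 / 8;                                  // uniform proposal
const unnorm = (x) => Math.exp(-3 * Math.abs(x - 7));   // unnormalized target
const Z = states.reduce((acc, x) => acc + unnorm(x), 0);
const p = (x) => unnorm(x) / Z;                         // normalized target

// Small seeded pseudo-random generator so that runs are reproducible.
function makeRng(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6D2B79F5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Self-normalized estimate of E_p[f] from samples ys drawn from q.
function importanceEstimate(ys, f) {
  const ws = ys.map((y) => p(y) / q(y));
  const num = ys.reduce((acc, y, i) => acc + ws[i] * f(y), 0);
  const den = ws.reduce((acc, w) => acc + w, 0);
  return num / den;
}

// Multinomial resampling: draw ys.length values in proportion to the weights.
function resample(ys, ws, rng) {
  const total = ws.reduce((acc, w) => acc + w, 0);
  return ys.map(() => {
    let u = rng() * total;
    for (let i = 0; i < ys.length; i++) {
      u -= ws[i];
      if (u <= 0) return ys[i];
    }
    return ys[ys.length - 1];
  });
}

const rng = makeRng(1);
const ys = Array.from({ length: 5000 }, () => states[Math.floor(rng() * 8)]);
const estimate = importanceEstimate(ys, (x) => x);
const exact = states.reduce((acc, x) => acc + x * p(x), 0);
const resampled = resample(ys, ys.map((y) => p(y) / q(y)), rng);
console.log(estimate.toFixed(3), exact.toFixed(3));
```

Because the target here concentrates on the state 7 while the proposal is uniform, most of the resampled particles are copies of the few proposals that landed near 7; this is the kind of mismatch that a sequence of intermediate distributions is meant to soften.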
Good coarse-to-fine sequences can allow us to prune entire parts of the state space in one go, only considering refinements of abstract states that have sufficiently high posterior probability (Kiddon and Domingos, 2011).

3 ALGORITHM

Given a probabilistic program, our algorithm builds a coarse-to-fine program with the same marginal distribution as the original program, but with additional latent structure corresponding to coarsened versions of the program.

We will assume that the user provides a `coarsenValue` function that describes how values map to more abstract values. Iterating this function leads to multiple levels of coarsened values. Our goal then is to construct a version of the original program that operates over values coarsened N times. We will preserve the basic flow structure of the program, and thus we only need to specify how each primitive construct in the program is lifted to the space of coarsened values.

The tricky part is to construct these lifted components such that the final marginal distribution is preserved. We use two ideas to accomplish this. First, we replace each unconditional elementary distribution at a given location with a distribution that depends on the coarser value of the same location, but such that the marginal over this coarser value yields the original distribution. Second, we treat lifted `factor`s as only approximations useful for guiding inference, which are then canceled by an extra factor inserted at the next-finer level. With this scheme, only the finest-level factors contribute to the final score. This gains us flexibility over the lifting of primitive functions: lifted functions (that ultimately flow only to factor statements) only need to have similar behavior to their original; deviations will be corrected by the cancellation of factors.
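The cancellation scheme can be sketched in plain JavaScript (not WebPPL): each level adds its own score and subtracts the score recorded at the next-coarser level, so the sum over levels telescopes to the finest-level score. The helper `runLevels`, the `scoreAtLevel` callback, and the example scores are hypothetical and only illustrate the bookkeeping.

```javascript
// Sketch of factor cancellation across levels (hypothetical helper names).
// scoreAtLevel(l) is the heuristic score computed at coarsening level l;
// level 0 is the original, uncoarsened program.
function runLevels(numLevels, scoreAtLevel) {
  const stored = {};        // plays the role of the shared store
  let totalLogWeight = 0;   // accumulated log-weight of one execution
  const increments = [];
  for (let level = numLevels; level >= 0; level--) {
    const s = scoreAtLevel(level);
    const coarser = stored[level + 1];
    const s1 = coarser === undefined ? 0 : coarser;
    increments.push(s - s1);  // what factor(s - s1) would add at this level
    totalLogWeight += s - s1;
    stored[level] = s;
  }
  return { totalLogWeight, increments };
}

// Made-up scores: a crude guess at level 2, the true score at level 0.
const scores = { 2: -1.5, 1: -4.0, 0: -3.0 };
const result = runLevels(2, (l) => scores[l]);
console.log(result.increments, result.totalLogWeight);
```

However poor the coarse scores are, the accumulated log-weight equals the level-0 score; the coarse scores only change the order in which weight is revealed to the inference algorithm.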
In the next few subsections, we introduce heuristic factors, the inputs that the program transform requires, how the model syntax is transformed, and how each of the components of the lifted model works: constants, random variables, factors, and primitive functions.

3.1 HEURISTIC FACTORS

A heuristic factor is a factor that is introduced for the purpose of guiding incremental inference algorithms such as particle filtering and best-first enumeration (Goodman and Stuhlmüller, 2014). Its distinguishing characteristic is that an equivalent, canceling factor is inserted at a later position in the program in order to leave the program's distribution invariant. In other words, the pair of statements `factor(s)` and `factor(-s)` together has no effect on the meaning of a model; its only effect is in controlling how inference algorithms explore the state space.

For example, Figure 2b shows a way to rewrite the program in Figure 2a so that it initially assigns higher weight to program executions where `x` is close to the true observation 7. This is a heuristic, since (depending on the outcome of the coin flip) it may be `y` which is observed, in which case there is no pressure for `x` to be close to 7.

The coarse-to-fine transform introduces heuristic factors that guide sampling on coarse levels towards high-probability regions of the state space without changing the program's distribution.

3.2 PREREQUISITES

The main inputs to the transform are a model, given as code for a probabilistic program, and a pair of functions `coarsenValue` and `refineValue`.

The main constraint on the model is that all ERPs need to be independent, i.e., do not take parameters that depend on other ERPs. If the support of each ERP is known, this can be achieved using a simple transform that replaces each dependent ERP with a maximum-entropy ERP, and adds a dependent factor that corrects the score.
That is, we transform

    var x = sample(originalERP, params)

to

    var x = sample(maxentERP)
    factor(originalERP.score(x, params) - maxentERP.score(x))

This transform leaves the model's distribution unchanged and greatly simplifies the coarsening of ERPs, but reduces the statistical efficiency of the model. This statistical inefficiency can potentially be addressed by merging `sample` and `factor` statements into `sampleWithFactor` (Goodman and Stuhlmüller, 2014) after the coarse-to-fine transform.

The model is annotated with the name of the main model function (which defines the marginal distribution of interest) and a list of names of ERPs, constants, and functions (compound, primitive, score, and polymorphic; see below) to be lifted.

The main parameters that control the coarse distributions are the user-specified functions `coarsenValue` and `refineValue`. The function `coarsenValue` maps a value to a coarser value; the function `refineValue` maps a coarse value to a set of finer values. To generate values on abstraction level $i$, we iterate the `coarsenValue` function $i$ times. We require that the two functions are inverses in the sense that $v \in \texttt{refineValue}(V) \Leftrightarrow \texttt{coarsenValue}(v) = V$ for all $v$ and $V$.

If a model has multiple different types of variables for which inference is needed, it is easy to define a polymorphic coarsening function that implements different behaviors for different types of values. In cases where different variables have the same type but different meaning or scale, we recommend using wrapper types to control which coarsening is used.

This paper does not address the task of finding good value coarsening functions. Instead, we ask: *if such a function is given, how can we use it to coarsen entire programs so that we produce a sequence of coarse-to-fine models that is useful for SMC?*

    var liftedUniformERP = liftERP(uniformERP)
    var liftedDistance = liftScorer(distance)

    var noisyObserve = function(obs){
      var score = -3 * liftedDistance(obs, 7)
      liftedFactor(score)
    }

    var model = function(){
      store.base = getStackAddress()
      var x = liftedUniformERP()
      var y = liftedUniformERP()
      var observation = flip(0.5) ? x : y
      noisyObserve(observation)
      return [x, y]
    }

    var coarseToFineModel = function(level){
      store.level = level
      var marginalValue = model()
      if (level === 0) {
        return marginalValue
      } else {
        return coarseToFineModel(level - 1)
      }
    }

Figure 3: The coarse-to-fine model corresponding to the model in Figure 2a. This model has the same marginal distribution as 2a and 2b, but samples it using the hierarchical process shown in Figure 1.

3.3 MODEL TRANSFORM

The transform adds a wrapper `coarseToFineModel` that calls the model once for each coarsening level, from coarse to fine, each time setting the (dynamically scoped) variable `store.level` (in the following, `level`) to the current level. The transform also replaces all ERPs, factors, primitive functions, and score functions with lifted versions that act differently depending on `level`.
The coarse models only affect the fine-grained models through the side effect of storing the values of their random choices and the weights of their factors in `store`, which is used by the finer-grained models to conditionally sample their random choices and compute their factor weights.

The syntactic transform itself proceeds as follows:

1. For each ERP, primitive, and score function, insert the corresponding lifted definition before the model definition. For example: `var liftedPlus = liftPrimitive(plus)`
2. Rename all ERPs, factors, primitive and score functions to their corresponding lifted names in model and compound functions. For example, replace `plus` with `liftedPlus`.
3. Wrap all constants. For example, replace `c` with `liftConstant(c)`.
4. As the first statement in the model, store the current `address`, which is needed to compute relative addresses of random choices and factors later on: `store.base = getStackAddress()`
5. Add a wrapper `coarseToFineModel` that calls the model once for each coarsening level (see Figure 3).

We will now describe the mechanisms behind lifted constants, random variables, factors, and primitive functions.

3.3.1 Lifting Constants

To lift a constant, we simply repeatedly coarsen it to the current level:

Algorithm 2: Lifting constants

    procedure liftedConstant(c)
      for i = 0; i < level; i++ do
        c = coarsenValue(c)
      end for
      return c
    end procedure

3.3.2 Lifting Random Variables

We lift each random variable to a sequence of variables, from coarse to fine, such that (1) on the coarsest level, we unconditionally sample from the original distribution, coarsened N times; (2) at each level n < N, we sample from the set of valid refinements of the value of the next-coarser variable; (3) marginalizing out all coarser variables, the distribution at the finest level recovers the distribution of the original uncoarsened variable.
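Requirements (1) to (3) can be checked by exact enumeration on a small example. The sketch below is plain JavaScript (not WebPPL) and assumes a uniform distribution on {1, ..., 8} with a hypothetical coarsening `coarsen(v) = ceil(v / 2)` applied N = 2 times. The names `pushforward`, `conditional`, and `marginal` are illustrative and not part of the transform's implementation.

```javascript
// Hypothetical coarsening: {1..8} -> {1..4} -> {1..2}.
const coarsen = (v) => Math.ceil(v / 2);
const coarsenTimes = (v, n) => (n === 0 ? v : coarsenTimes(coarsen(v), n - 1));

const domain = [1, 2, 3, 4, 5, 6, 7, 8];
const p0 = new Map(domain.map((v) => [v, 1 / 8]));  // original distribution
const N = 2;                                         // number of coarsenings

// Distribution of the value coarsened n times: mass of each equivalence class.
function pushforward(n) {
  const dist = new Map();
  for (const [v, pr] of p0) {
    const c = coarsenTimes(v, n);
    dist.set(c, (dist.get(c) || 0) + pr);
  }
  return dist;
}

// Conditional of x_n given x_{n+1}: class mass of x_n, renormalized among
// the refinements of x_{n+1}; zero if x_n does not coarsen to x_{n+1}.
function conditional(n, xn, xn1) {
  if (coarsen(xn) !== xn1) return 0;
  return pushforward(n).get(xn) / pushforward(n + 1).get(xn1);
}

// Marginal over the finest level, written out for N = 2:
// sum over all coarser values x_1 and x_2.
function marginal(x0) {
  let total = 0;
  for (const x2 of pushforward(N).keys()) {
    for (const x1 of pushforward(N - 1).keys()) {
      total +=
        conditional(0, x0, x1) * conditional(1, x1, x2) * pushforward(N).get(x2);
    }
  }
  return total;
}

const recovered = domain.map(marginal);
console.log(recovered);
```

Sampling the coarsest value first and then refining twice therefore yields the same distribution over base-level values as sampling from `p0` directly; only the order in which information is revealed changes.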
Let $D_0$ be the domain of an ERP with distribution $p_0(x)$, and let $D_n = \mathrm{cv}^n(D_0)$ be the set of values arrived at by repeatedly applying the `coarsenValue` function (written cv for short). We would like to decompose the original distribution $p_0(x)$ into a sequence of conditional distributions $q(x_n \mid x_{n+1})$ for random variables $x_n \in D_n$. If we take:

$$q(x_n \mid x_{n+1}) \propto \sum_{x_0} p(x_0)\, \mathbb{1}_{\mathrm{cv}^n(x_0) = x_n \,\wedge\, \mathrm{cv}(x_n) = x_{n+1}}$$

and for the coarsest level, $N$,

$$q(x_N) = \sum_{x_0} p(x_0)\, \mathbb{1}_{\mathrm{cv}^N(x_0) = x_N}$$

then it is clear that we preserve the marginal distribution on $x_0$. That is:

$$p(x_0) = \sum_{x_1, \ldots, x_N} q(x_0 \mid x_1) \cdots q(x_{N-1} \mid x_N)\, q(x_N).$$

Algorithm 1: Lifting ERPs

    procedure sampleLiftedERP(e0, l)
      v1 = store[erpName(address, l + 1)]
      if v1 is undefined then
        if l is 0 then
          return e0.sample()
        else
          return coarsenValue(sampleLiftedERP(e0, l − 1))
        end if
      else
        vec_v = refineValue(v1)
        vec_p = vec_v.map(λ(v){ return getERPScore(e0, v, l) })
        return sampleDiscrete(vec_v, vec_p)
      end if
    end procedure

    procedure liftERP(e0)
      e1 = makeERP(λ(){ sampleLiftedERP(e0, level) })
      return λ(){
        v = sample(e1)
        store[erpName(address, level)] = v
        return v
      }
    end procedure

Algorithm 1 shows how we implement sampling from such a decomposed ERP at a given level. Note that, to look up the existing value at the next-coarser level, we identify random variables on different levels based on relative stack addresses (via `erpName`); this is critical for models such as grammars with an unbounded number of random choices. The implementation for computing the score of lifted ERPs is analogous (although this score is not needed for pure particle filtering, so we omit the details).
Both are parameterized by a function `getERPScore` that estimates the total probability of the equivalence class of values that map to a given coarse value. These ERP scores for coarse values can be estimated by sampling refinements, via user-specified scoring functions (as in Section 4.1), or using exact computation (as in Section 4.2).

3.3.3 Lifting Factors

We treat the lifted counterpart to factors as heuristic factors: the score on the next-higher level is subtracted out on the current level. By canceling out these factors when inference proceeds to finer-grained levels, we ensure that the overall distribution of the model remains unchanged; ultimately, only the base-level factors count. This incremental scoring process formalizes the intuition of increasing attention to detail as we move down the abstraction ladder. Like random variables, we identify factors based on relative stack addresses.

Algorithm 3: Lifting factors

    procedure liftedFactor(s)
      s1 = store[factorName(address, level + 1)] ∨ 0
      factor(s − s1)
      store[factorName(address, level)] = s
    end procedure

3.3.4 Lifting Primitive Functions

When a primitive function f is applied to a base-level value, it is deterministic. Now we are interested in lifting primitive functions to operate on coarse values. However, each coarse value corresponds to a set of base-level values. For different elements in this set, f may return different values. This suggests that lifted versions of f may be stochastic. We wish to preserve the marginal distribution of the entire program, but because we have required ERPs to be unconditional and treat coarse factors as canceling heuristics, we have some latitude in how to lift the primitive functions.

Algorithm 4 shows one approach to the computation of such coarsened primitives.
This algorithm is parameterized by a function `marginalize`, which may be implemented using exact computation, sampling, etc., and which may cache its computations.

Algorithm 4: Lifting primitive functions

    procedure liftPrimitive(f)
      return λ(vec_x){
        e = marginalize(λ(){
          vec_x0 = vec_x.map((uniformDraw ∘ refineValue)^level)
          v = f(vec_x0)
          return coarsenValue^level(v)
        })
        return sample(e)
      }
    end procedure

Scoring functions (those that directly compute a score to be consumed by `factor`) are a special case of primitive functions. We know that they return a number, so instead of sampling from the return distribution, we can simply take the expectation. We find that this leads to more stable, and hence useful, heuristic factors.

Algebraic data type constructors are another special case. In many circumstances (including Section 4.2), they can be treated as transparent with respect to coarsening. For example, it is frequently useful to define `coarsenValue([x1, x2, ...])` as equivalent to `[coarsenValue(x1), coarsenValue(x2), ...]`. More generally, if a function can apply to coarsened objects directly, it can be marked as *polymorphic*. In that case, no lifting is necessary. For example, if we have a function that computes the mean of a list of numbers, and if our coarsening maps lists of numbers to shorter lists (without changing the type of the list elements), then we have the option to mark this function as polymorphic.
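To make the scoring-function special case concrete, the following plain-JavaScript sketch (not WebPPL) lifts a scorer by exact enumeration: it refines a coarse argument down to base level and averages the base-level scores. The coarsening pair `coarsenInt`/`refineInt`, the helper `liftScorerExact`, and the decision to keep the constant 7 at base level are assumptions for illustration; the `liftScorer` used in Figure 3 may work differently.

```javascript
// Hypothetical integer coarsening with its set-valued inverse.
// They satisfy: v is in refineInt(V) exactly when coarsenInt(v) === V.
const coarsenInt = (v) => Math.ceil(v / 2);
const refineInt = (V) => [2 * V - 1, 2 * V];

// All base-level values that a value at the given level stands for.
function baseValues(value, level) {
  let values = [value];
  for (let i = 0; i < level; i++) {
    values = values.flatMap(refineInt);
  }
  return values;
}

// Lift a one-argument scorer: expectation over base-level refinements.
// Because every refinement set here has the same size, a uniform average
// over base values matches repeated uniform draws from refineInt.
function liftScorerExact(scorer) {
  return function (coarseValue, level) {
    const xs = baseValues(coarseValue, level);
    const total = xs.reduce((acc, x) => acc + scorer(x), 0);
    return total / xs.length;
  };
}

const distanceTo7 = (x) => Math.abs(x - 7);
const liftedDistanceTo7 = liftScorerExact(distanceTo7);

console.log(liftedDistanceTo7(7, 0));  // base level: plain distance
console.log(liftedDistanceTo7(4, 1));  // coarse value 4 stands for {7, 8}
console.log(liftedDistanceTo7(1, 2));  // coarse value 1 stands for {1, 2, 3, 4}
```

Taking the expectation gives a deterministic coarse score, which is one way to read the remark that expectations lead to more stable heuristic factors than sampled return values.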
4 EMPIRICAL EVALUATION

We introduced two complementary ideas: (1) coarse-to-fine SMC (which can be useful even for a single random variable with a big state space, given an appropriate coarsening function) and (2) constructing coarse-to-fine models from probabilistic programs (using a value coarsening function to lift each of the components of a probabilistic program to operate on coarsened values without changing the marginal distribution of the program). Our experiments mirror this structure:

In the first set of experiments, we evaluate the benefits of coarse-to-fine SMC on an Ising model and its depth-from-disparity variation. These models use a single matrix-valued random variable and a single global factor (the energy function).

In the second set of experiments, on a Factorial Hidden Markov Model, we make liberal use of probabilistic program constructs: the model is defined as a recursive stochastic function, and the state transition function is implemented using the higher-order function `map`. This set of experiments fully exercises our program transform, including the identification of corresponding coarse and fine random variables using relative stack addresses.

All models are expressed as programs, and all experiments are implemented within the same framework. For each experiment, we show how the average importance weight, essentially a lower bound on the normalization constant (Grosse, 2013), behaves over time.

Note that these experiments are preliminary: they do not yet employ rejuvenation steps, and hence only test the quality of the sequence of coarse-to-fine distributions in the setting of Sequential Importance Resampling.
While the use of a coarse-to-fine sequence of importance distributions results in better performance than baseline, we have only evaluated the performance compared to a relatively weak baseline (importance sampling for the MRF models, particle filtering for the Factorial HMM model), and expect that the results are not impressive on an absolute scale. Indeed, as described in Section 5.2, we believe that some modifications and further developments are needed to make this approach useful in practice.

4.1 MARKOV RANDOM FIELDS

A number of applications in physics, biology, and computer vision can be modeled as Markov Random Fields (MRFs). These problems are unified in specifying a global energy function which, by virtue of the Markov property, depends only on the local neighborhoods of elements. Once this energy function is specified, it can be difficult to minimize; specialized optimization algorithms have been developed for particular domains (Szeliski et al., 2008), but there is no generally applicable solution.

The local neighborhood structure of the energy function, however, suggests that a coarse-to-fine transformation may be useful: if neighborhoods are coarsened into single representative values, then the energy can be minimized in this smaller space, using heuristic factors to guide search in the original space. We do not claim that our model transformation constitutes a solution in itself, but it can be used in tandem with other algorithms to effectively reduce the search space. In this situation, we demonstrate the coarse-to-fine transformation on two simple MRFs: the Ising model and the stereo matching task.

4.1.1 Ising model

Coarse-to-fine transformations have a long history of applications in physics. When studying systems which interact across multiple orders of magnitude, such as fluids, ferromagnets, and metal alloys, it is intractable to work at the most fine-grained level.
Since exact solutions do not exist, physicists developed a method called the renormalization group (Wilson, 1975, 1979), which effectively maps the fine-grained representation of a system onto a coarser but identically parameterized representation with similar properties.

One of the simplest testbeds for renormalization group methods is the 2-dimensional Ising model. The state space is an $n \times n$ lattice of cells, each of which can take one of two spin values, $\sigma_i \in \{-1, +1\}$. The energy of a particular configuration of spins $\sigma$ is given by the Hamiltonian:

$$H(\sigma) = J \sum_{\langle ij \rangle} \sigma_i \sigma_j$$

where $J$ is the interaction constant and $\langle ij \rangle$ indicates summing over all pairs of neighbors. Note that the number of possible configurations grows exponentially with the number of cells ($2^{n^2}$ configurations for an $n \times n$ lattice), rendering an exhaustive search for low-energy states impossible.

[Figure 4: Quantitative inference results for Markov Random Field models. Each panel plots estimated log Z against seconds. (a) Ising at low temperature (T = 1), n = 27: no coarsening, 30 levels, 90 levels. (b) Ising at the critical state (T = 2.39), n = 27: no coarsening, 30 levels, 90 levels. (c) Depth-from-disparity, n = 10, m = 40, d = 15: no coarsening, 50 levels, 100 levels.]

The interaction constant can be written as $J = 1/T$, where $T$ is the temperature of the system. The configuration distribution takes different forms at different temperatures: we will conduct experiments at $T = 1$, a low-temperature condition where spins prefer to globally align, and $T = 2.39$, the critical temperature, where long-range correlations dominate. Above the critical temperature (e.g.
for $T > 3$), cells become uncoupled and the energy distribution across configurations converges to uniform, so we focus on lower temperatures.

We implemented the Ising energy-minimization problem as a simple probabilistic program, which first samples a set of spins and then factors based on the energy of that configuration. To apply our coarse-to-fine transformation, we used the spin-block majority rule for our `coarsenValue` and `refineValue` functions. To coarsen, this rule replaces each $3 \times 3$ sub-lattice with its modal value (see Figure 5). To refine a single cell in the coarse matrix, we considered the space of all 256 possible $3 \times 3$ matrices that could coarsen to that value. Note that our sequential refinement (making many small choices instead of one big one) differs from the typical renormalization group approach, which simultaneously replaces all sublattices and reweights the interaction constant $J$ accordingly.

For example, consider a fine-grained value such as the following (see footnote 1):

$$A = \begin{pmatrix} 0 & 1 & 0 & 0 & 0 & 0 \\ 1 & 1 & 0 & 1 & 0 & 0 \\ 1 & 1 & 1 & 1 & 0 & 0 \\ 0 & 1 & 1 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 & 1 \\ 0 & 1 & 1 & 0 & 1 & 1 \end{pmatrix}$$

Footnote 1: We use spins {1, 0} here instead of {1, −1} to make the matrices more readable.

Coarser values are partially coarsened matrices, which can be represented as pairs of matrices, e.g.

$$\mathrm{cv}(A) = \left( \begin{pmatrix} \ast & \ast & \ast & 0 & 0 & 0 \\ \ast & \ast & \ast & 1 & 0 & 0 \\ \ast & \ast & \ast & 1 & 0 & 0 \\ 0 & 1 & 1 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 & 1 \\ 0 & 1 & 1 & 0 & 1 & 1 \end{pmatrix}, \begin{pmatrix} 1 & \ast \\ \ast & \ast \end{pmatrix} \right)$$

where each entry in the second matrix corresponds to a $3 \times 3$ block in the first matrix. In each coarsening step, we only coarsen a single such block. As a consequence, there are 90 (= 81 + 9) steps when we incrementally coarsen a $27 \times 27$ matrix first to $9 \times 9$, then to $3 \times 3$.

To facilitate this sequential refinement, we implemented a polymorphic energy function, which can directly return a score for such partially coarsened matrices without needing to refine all the way down to the most fine-grained level.
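The spin-block majority rule can be sketched in a few lines. This is an illustration under stated assumptions rather than the implementation used in the experiments: it uses the {0, 1} spin encoding of the example above, it coarsens all blocks at once instead of one block per step, and the names `coarsen_block`, `refine_value`, and `coarsen_lattice` are hypothetical stand-ins for the roles played by `coarsenValue` and `refineValue`.

```python
from itertools import product


def coarsen_block(block):
    """Majority rule: return the modal spin (0 or 1) of a flat block of spins."""
    return 1 if sum(block) > len(block) / 2 else 0


def refine_value(coarse):
    """Enumerate all 3x3 blocks (flat 9-tuples over {0, 1}) that coarsen to `coarse`."""
    return [b for b in product((0, 1), repeat=9) if coarsen_block(b) == coarse]


def coarsen_lattice(lattice, k=3):
    """Replace every k x k sub-lattice of a square lattice by its modal value."""
    n = len(lattice)
    return [
        [
            coarsen_block([lattice[r + i][c + j] for i in range(k) for j in range(k)])
            for c in range(0, n, k)
        ]
        for r in range(0, n, k)
    ]


# The 6x6 example matrix A from the text.
A = [
    [0, 1, 0, 0, 0, 0],
    [1, 1, 0, 1, 0, 0],
    [1, 1, 1, 1, 0, 0],
    [0, 1, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 1],
    [0, 1, 1, 0, 1, 1],
]

if __name__ == "__main__":
    print(coarsen_lattice(A))
    print(len(refine_value(1)))
```

Because a 3 × 3 block has an odd number of cells, ties cannot occur, and exactly half of the 2^9 = 512 blocks coarsen to each spin value, which matches the 256 refinements mentioned above.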
When this energy function is applied to a matrix that contains cells at different levels of abstraction, the energy computation counts a single coarse cell as a neighbor for each surrounding finer cell (and vice versa).

We ran two experiments on $27 \times 27$ lattices to demonstrate the performance of our coarsened program at different levels of coarsening. In our first experiment, we set the temperature to $T = 1$ and average 10 runs for both coarse-to-fine filtering (with 90 and 30 levels) and flat importance sampling. The 90-level coarsening condition fully reduces the $27 \times 27$ lattice to a $3 \times 3$ lattice, and the 30-level condition yields a partially coarsened matrix. In our second experiment, we set the temperature to $T = 2.39$ and run the same set of conditions. Figures 4a and 4b show the average importance weight for different levels of coarsening. We see that even intermediate levels of coarsening perform better than no coarsening, and that a full coarsening performs dramatically better than the other conditions. The best solution from the second experiment is shown in the right-most panel of Figure 5, along with the two coarser configurations from which it was refined. This displays the characteristic local structure of the Ising model at critical temperatures.

[Figure 5: Coarsening at the critical state (T = 2.39). Three lattice panels, with both axes running from 0 to 25.]

4.1.2 Stereo matching

Another common application of MRFs is the stereo matching task (Boykov et al., 2001; Scharstein and Szeliski, 2002). The goal is to estimate the disparity between two images, $I$ and $I'$, captured from slightly shifted viewpoints. This disparity map can be used to recover a rough measure of depth.
As in the Ising case, we implement this task as a probabilistic program by sampling a lattice of disparity values and factoring on its energy. The energy function for a particular set of disparity values has two parts: (1) a smoothing term penalizing distance between the values of neighbors and (2) a data cost term penalizing each particular disparity value for discrepancies with the true data (as measured by comparing the difference in pixel intensities at the given disparity):

$$H(d) = \sum_{\{p,q\} \in \mathcal{N}} V_{p,q}(d_p, d_q) + \sum_{p} C(p, d_p)$$

We denote the intensity of pixel $p$ in image $I$ by $I_p$. Since corresponding pixels should have similar intensities, we set our data cost term $C(p, d_p)$ as suggested by Boykov et al. (2001), taking the absolute difference between $I_p$ and $I'_{p + d_p}$. To reduce sensitivity to variability in image sampling, we interpolate between neighboring intensities in the neighborhood $x \in (d - 0.5, d + 0.5)$ and take the minimum. For our smoothing function, we use the truncated squared error:

$$V_{p,q}(d_p, d_q) = \min\big((d_p - d_q)^2, V_{\max}\big)$$

with $V_{\max} = 5$.

We implemented energy minimization for the stereo matching model analogously to the Ising model, but with different coarsening and refinement functions: coarsening replaces a $2 \times 2$ sublattice with its mean and standard deviation; refinement returns the set of all possible $2 \times 2$ lattices with the given mean and standard deviation that could have occurred at the given abstraction level.

Figure 4c shows that in a comparison between coarse-to-fine SMC and importance sampling, SMC finds lower-energy states more efficiently for a $10 \times 40$ cropped pair of images from the Middlebury dataset (Scharstein and Szeliski, 2002), although we expect that the absolute quality of the states found is not very good for either algorithm.
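The stereo energy above can be made concrete with a simplified sketch. Several details here are our assumptions and not taken from the paper: neighbors are the 4-connected grid neighbors, disparities are integer horizontal shifts, shifted column indices are clamped at the image border, and the sub-pixel interpolation step of the data cost is omitted. The names `smoothness`, `data_cost`, and `stereo_energy` are hypothetical.

```python
V_MAX = 5  # truncation threshold for the smoothing term, as in the text


def smoothness(dp, dq, v_max=V_MAX):
    """Truncated squared error between two neighboring disparities."""
    return min((dp - dq) ** 2, v_max)


def data_cost(left, right, r, c, d):
    """Absolute intensity difference between left[r][c] and right[r][c + d].

    Assumption: the shifted column is clamped to the image border, and no
    sub-pixel interpolation is performed.
    """
    c_shifted = min(max(c + d, 0), len(right[r]) - 1)
    return abs(left[r][c] - right[r][c_shifted])


def stereo_energy(disp, left, right):
    """Sum of data costs over pixels plus smoothing costs over neighbor pairs."""
    rows, cols = len(disp), len(disp[0])
    total = 0
    for r in range(rows):
        for c in range(cols):
            total += data_cost(left, right, r, c, disp[r][c])
            if c + 1 < cols:  # right neighbor, each pair counted once
                total += smoothness(disp[r][c], disp[r][c + 1])
            if r + 1 < rows:  # lower neighbor, each pair counted once
                total += smoothness(disp[r][c], disp[r + 1][c])
    return total


if __name__ == "__main__":
    # Toy one-row images in which the right image is the left image shifted by one pixel.
    left = [[10, 20, 30, 40]]
    right = [[0, 10, 20, 30]]
    print(stereo_energy([[1, 1, 1, 1]], left, right))
    print(stereo_energy([[0, 0, 0, 0]], left, right))
```

In the toy example, the constant disparity map that matches the true shift has the lower energy, and the truncation in `smoothness` bounds the penalty for a large jump between neighboring disparities.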
4.2 FACTORIAL HMM

In our second example, we test the hypothesis that abstractions are useful as a means to avoid particle collapse in large state spaces. For this reason, we chose the Factorial HMM, a model with a large effective state size even within a single particle filter step.

The Factorial HMM is an HMM where the state factors into multiple variables (Ghahramani and Jordan, 1997). If there are $M$ possible values for each latent state, and $k$ state variables per time step, then the effective state size is $M^k$. If only few of these have high probability, then even for moderate $M$ and $k$ it is possible that there are not sufficiently many fine-grained particles to cover all regions of high posterior probability.

In our first experiment, we use a Factorial HMM with 3 variables per step, 256 possible state values per variable, and 6 observed time steps. We run both coarse-to-fine filtering (with 6 and 8 abstraction levels) and flat filtering and average 10 runs.

We coarsen the Factorial HMM by merging some state and observation symbols. To test the hypothesis that coarse-to-fine inference will work best when abstractions match the dynamics of the model, we generate transition and observation matrices with approximately hierarchical structure as follows. Enumerate the states from 1 to $N$. For states $i$ and $j$, we let the transition probability be approximately proportional to $2^{-|i-j|}$. Similarly, for state $i$, the probability of generating observation $k$ is proportional to $2^{-|i-k|}$.

Each state consists of three substates, and each substate is chosen from $\{1, 2, \ldots, 256\}$. Coarsening maps numbers to successively larger intervals. For example, coarsening maps (5, 11, 56) to ([5, 6], [11, 12], [55, 56]), and on the next-coarser level to ([5, 8], [11, 14], [55, 58]).
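The construction of the transition and observation matrices can be sketched as follows. This is a minimal illustration that takes the probabilities to be exactly proportional to $2^{-|i-j|}$, whereas the text says only approximately proportional; the function names `hierarchical_matrix` and `effective_state_size` are ours.

```python
def hierarchical_matrix(n):
    """Return an n x n row-stochastic matrix with entry (i, j) proportional to 2^-|i-j|."""
    rows = []
    for i in range(n):
        unnormalized = [2.0 ** -abs(i - j) for j in range(n)]
        z = sum(unnormalized)
        rows.append([u / z for u in unnormalized])
    return rows


def effective_state_size(m, k):
    """Number of joint states for k state variables with m values each."""
    return m ** k


if __name__ == "__main__":
    transition = hierarchical_matrix(8)
    print([round(p, 4) for p in transition[0]])
    # 3 variables with 256 values each, as in the first experiment.
    print(effective_state_size(256, 3))
```

Nearby states receive most of the probability mass under such a matrix, so merging adjacent states into intervals groups states with similar dynamics, which is the sense in which the abstraction matches the model.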
Figure 6a shows that plain particle filtering consistently underestimates the true normalization constant relative to coarse-to-fine filtering.

In our second experiment (Figure 6b), we compare the behavior of flat and coarse-to-fine filtering as the number of HMM states increases from $2^3$ to $256^3$. As before, we keep the runtime constant. For small numbers of states, plain and coarse-to-fine SMC give very similar estimates. As the number of states grows, the difference between coarse-to-fine and plain filtering grows as well, indicating that coarse-to-fine is most useful in large state spaces.

[Figure 6: Inference results for a Factorial HMM. (a) Estimated log Z against seconds for no coarsening, 6 levels, and 8 levels: SMC using a coarse-to-fine model finds more probable samples earlier on than SMC without coarsening. (b) Estimated log Z against number of HMM states (axis ticks from $50^3$ to $250^3$) for coarse-to-fine SMC and plain SMC: for small numbers of states, coarse-to-fine is indistinguishable from plain particle filtering. As the number of states grows, coarse-to-fine is able to provide better solutions in the same amount of time.]

5 DISCUSSION

5.1 WHEN DOES COARSE-TO-FINE INFERENCE HELP?

It is generally difficult to compute or estimate the posterior probability of a set of (program) states. However, this is precisely what is required for coarse-to-fine inference to work: when we evaluate a program on a coarse level, we need to estimate for each coarse value how likely its refinements are under the posterior. This suggests that settings where coarse-to-fine inference works have special characteristics that make such estimation feasible. We now name a few.

First, the given program may satisfy independence assumptions that make estimating posterior probabilities feasible.
For example, for the program shown in Figures 1 and 2a, the score function only depends on one of x and y at a time; hence, we can independently compute the estimated score for the refinements of x and y, and use this information in computing the estimated scores for abstract values of both.

Second, we may be in a setting where the type of a coarse value matches the type of its refinements. In that situation, "polymorphic" score and primitive functions may be a cheap heuristic for estimating the posterior probability of a coarse state. For the Ising model, the energy function satisfies this criterion up to parameterization.

Third, coarse-to-fine may be particularly useful in the amortized setting (Stuhlmüller et al., 2013). Learning the conditional distributions associated with lifted primitive functions is one instantiation of "learning to do inference". This is particularly feasible for smooth state spaces, where one can effectively estimate entire distributions from a few samples.

Another answer to the question of when coarse-to-fine helps is that it depends on the inference algorithm used. For inference by enumeration, exact coarsening (i.e., coarsening within values that have the same posterior probability) is useful for increasing computational efficiency. By contrast, for sequential Monte Carlo methods, it is frequently more desirable to merge states with different posterior probability, as this smooths the state space and thus increases statistical efficiency.

5.2 CURRENT LIMITATIONS

The system as presented has three main limitations. We will now describe these limitations and outline how they can be addressed.

First, as discussed in Section 3.2, the coarse-to-fine transform can only be applied to models where all ERPs are independent.
We have described how to transform models into this form and referred to the technique of merging sample and factor statements described in Goodman and Stuhlmüller (2014) as a tool for recovering statistical efficiency lost in such a transform. We have not yet employed this merging in our experiments, which makes the results more difficult to interpret. We expect that it would be feasible to develop a version of the coarse-to-fine transform that directly operates on dependent ERPs.

Second, single-site MCMC rejuvenation steps are of very limited use in the current setup. This is due to the combination of (a) computing coarsened values by binning fine-grained values (using the user-provided `coarsenValue` function) and (b) using this coarsening to build a hierarchical model to which standard SMC algorithms can be applied. In this hierarchical model, each fine-grained value $v$ only has non-zero probability if the corresponding coarse value is set to `coarsenValue(v)`. As a consequence, we reject all MCMC steps that change this coarse-grained value to anything else, and likewise all steps that change $v$ to $v'$ such that `coarsenValue(v)` ≠ `coarsenValue(v')`. These strong dependencies between levels of abstraction can be avoided if we only use each level in the sequence of coarse-to-fine models as an importance sampler for the next level instead of constructing a coarse-to-fine model.

Third, coarsening only applies to individual variables and values, not to sets of variables. Consider the Ising model: while we implemented it using a matrix-valued random variable, a more natural implementation would use independent Bernoulli variables for each of the matrix entries. Our current scheme cannot express coarsenings that apply to such sets of variables.
While it is easy to see how our scheme can be extended to coarsening multiple variables within the same control-flow block (by transforming multiple variables to a single tuple-valued variable), generic coarsening of variables that are created in different control-flow blocks will require new innovations.

5.3 RELATED AND FUTURE WORK

The work in this paper is related to and inspired by a broad background of work on coarse-to-fine and lifted inference, including (but not limited to) work by Charniak et al. (2006) on coarse-to-fine inference in PCFGs, work on coarse-to-fine inference for first-order probabilistic models by Kiddon and Domingos (2011), and attempts to "fatten" particles for filtering with broader coverage (Kulkarni et al., 2014; Steinhardt and Liang, 2014). Outside of machine learning, we take inspiration from Approximate Bayesian Computation (conditioning on summary statistics) and renormalization group approaches to inference in Ising models (Lyman and Zuckerman, 2006).

This approach opens up many exciting research directions, including (1) understanding the relation to abstract interpretation, and Galois connections specifically (e.g., Cousot and Monerau, 2012; Monniaux, 2000), (2) automatically deriving coarsenings for hierarchical Bayesian models, (3) learning good coarsenings, and efficient learning of approximations for coarsened primitive and score functions, and (4) coarsening (merging) multiple variables across blocks, potentially via flow analysis. We expect that the most interesting applications of coarse-to-fine approaches to efficient inference are yet to come.

Acknowledgements

This material is based on research sponsored by DARPA under agreement number FA8750-14-2-0009. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright notation thereon.
RXDH is supported by an NSF Graduate Research Fellowship and a Stanford Graduate Fellowship.

References

Yuri Boykov, Olga Veksler, and Ramin Zabih. Fast approximate energy minimization via graph cuts. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 23(11):1222–1239, 2001.

Eugene Charniak, Mark Johnson, Micha Elsner, Joseph Austerweil, David Ellis, Isaac Haxton, Catherine Hill, R. Shrivaths, Jeremy Moore, Michael Pozar, and Theresa Vu. Multilevel Coarse-to-fine PCFG Parsing. Proceedings of the Human Language Technology Conference of the NAACL, Main Conference, pages 168–175, 2006.

Patrick Cousot and Michael Monerau. Probabilistic abstract interpretation. In Programming Languages and Systems, volume 7211 of Lecture Notes in Computer Science, pages 169–193. Springer, 2012.

Cameron E Freer, Vikash K Mansinghka, and Daniel M Roy. When are probabilistic programs probably computationally tractable? NIPS 2010 Workshop on Monte Carlo Methods in Modern Applications, 2010.

Zoubin Ghahramani and Michael I Jordan. Factorial Hidden Markov Models. Machine Learning, 29(2-3):245–273, November 1997.

Valerie Goffaux, Judith Peters, Julie Haubrechts, Christine Schiltz, Bernadette Jansma, and Rainer Goebel. From coarse to fine? Spatial and temporal dynamics of cortical face processing. Cerebral Cortex, page bhq112, 2010.

Noah D Goodman and Andreas Stuhlmüller. The Design and Implementation of Probabilistic Programming Languages. http://dippl.org, 2014. Accessed: 2015-4-16.

Noah D Goodman, Vikash Mansinghka, Daniel M Roy, K A Bonawitz, and Joshua B Tenenbaum. Church: a language for generative models. Proceedings of the 24th Conference in Uncertainty in Artificial Intelligence, pages 220–229, 2008.

Roger Grosse. Unbiased estimators of partition functions are basically lower bounds.
http://hips.seas.harvard.edu/, January 2013.

Chloé Kiddon and Pedro Domingos. Coarse-to-Fine Inference and Learning for First-Order Probabilistic Models. In Proceedings of the Twenty-Fifth AAAI Conference on Artificial Intelligence, pages 1049–1056, August 2011.

Daphne Koller, David McAllester, and Avi Pfeffer. Effective Bayesian Inference for Stochastic Programs. In Proceedings of the National Conference on Artificial Intelligence, pages 740–747, 1997.

Tejas D Kulkarni, Ardavan Saeedi, and Samuel Gershman. Variational Particle Approximations. arXiv preprint arXiv:1402.5715, pages 1–11, 2014.

Edward Lyman and Daniel M. Zuckerman. Resolution exchange simulation with incremental coarsening. Journal of Chemical Theory and Computation, 2:656–666, 2006. ISSN 15499618.

Michael D Menz and Ralph D Freeman. Stereoscopic depth processing in the visual cortex: a coarse-to-fine mechanism. Nature neuroscience, 6(1):59–65, 2003.

David Monniaux. Abstract interpretation of probabilistic semantics. In Static Analysis, volume 1824 of Lecture Notes in Computer Science, pages 322–339. Springer, 2000.

Slav Petrov, Aria Haghighi, and Dan Klein. Coarse-to-fine syntactic machine translation using language projections. Empirical Methods in Natural Language, pages 108–116, 2008.

Avi Pfeffer. 14 The Design and Implementation of IBAL: A General-Purpose Probabilistic Language. Introduction to statistical relational learning, page 399, 2007.

Daniel Scharstein and Richard Szeliski. A taxonomy and evaluation of dense two-frame stereo correspondence algorithms. International journal of computer vision, 47(1-3):7–42, 2002.

Jacob Steinhardt and Percy Liang. Filtering with Abstract Particles. In Proceedings of The Thirty-First International Conference on Machine Learning, pages 727–735, June 2014.

Andreas Stuhlmüller, Jessica Taylor, and Noah D Goodman. Learning Stochastic Inverses.
Advances in Neural Information Processing Systems (NIPS) 27, 2013.

Richard Szeliski, Ramin Zabih, Daniel Scharstein, Olga Veksler, Vladimir Kolmogorov, Aseem Agarwala, Marshall Tappen, and Carsten Rother. A comparative study of energy minimization methods for Markov random fields with smoothness-based priors. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 30(6):1068–1080, 2008.

Yun Tang, Wen-Ju Liu, Hua Zhang, Bo Xu, and Guo-Hong Ding. One-pass coarse-to-fine segmental speech decoding algorithm. In Acoustics, Speech and Signal Processing, 2006. ICASSP 2006 Proceedings. 2006 IEEE International Conference on, volume 1, pages I–I. IEEE, 2006.

Kenneth G Wilson. The renormalization group: Critical phenomena and the Kondo problem. Reviews of Modern Physics, 47(4):773, 1975.

Kenneth G. Wilson. Problems in Physics with many Scales of Length. Scientific American, 241:158–179, 1979. ISSN 0036-8733.

David Wingate, Andreas Stuhlmüller, and Noah D Goodman. Lightweight Implementations of Probabilistic Programming Languages Via Transformational Compilation. In Proceedings of the 14th international conference on Artificial Intelligence and Statistics, 2011.
