Enhancement and Recognition of Reverberant and Noisy Speech by Extending Its Coherence

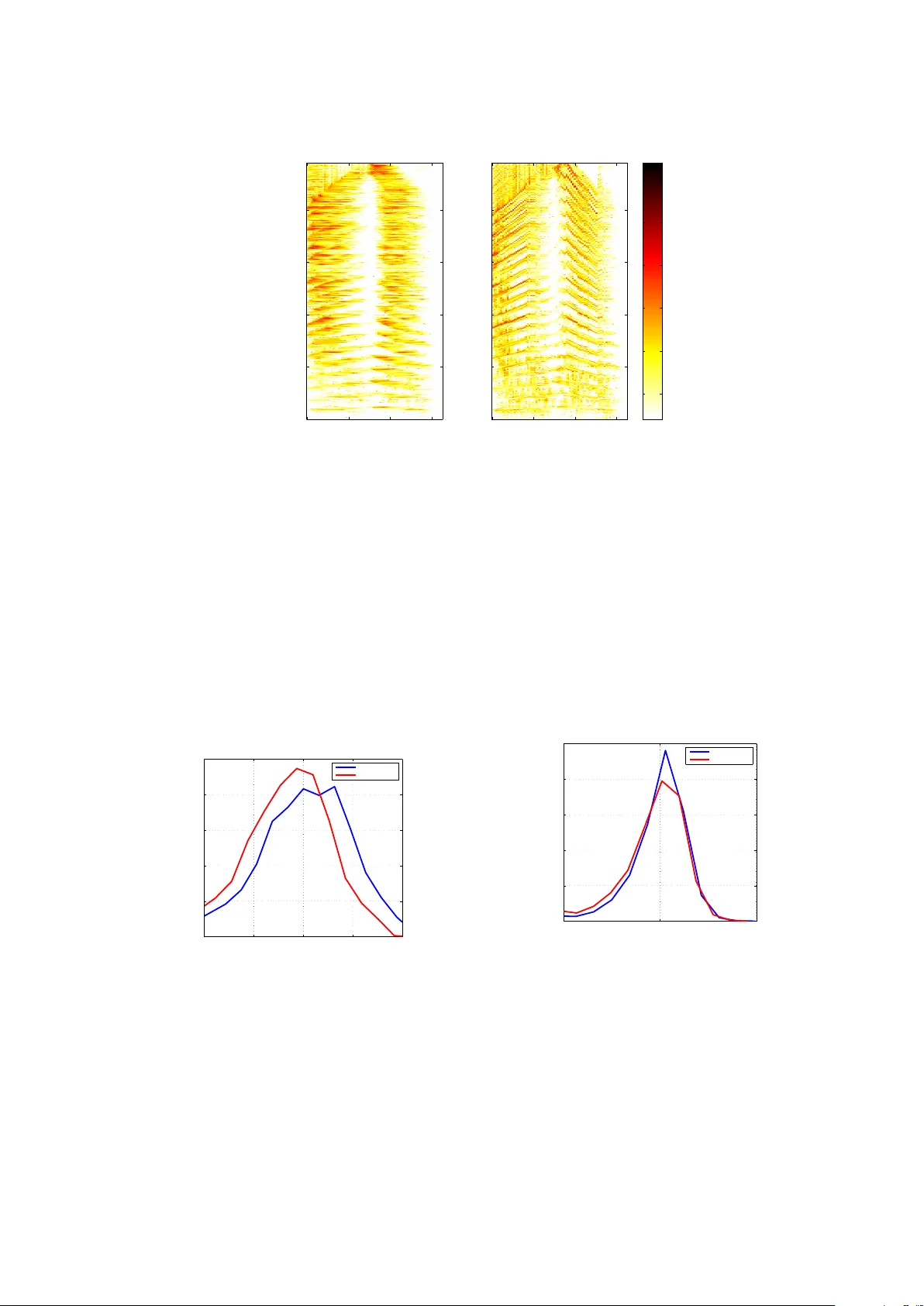

Most speech enhancement algorithms use the short-time Fourier transform (STFT), a simple and flexible time-frequency decomposition that estimates the short-time spectrum of a signal. However, the duration of short STFT frames is inh…
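As a generic illustration of the STFT mentioned above (not the authors' method), the sketch below splits a signal into overlapping Hann-windowed frames and takes a one-sided FFT of each frame. The frame length of 512 samples, the hop of 128 samples, and the 16 kHz sampling rate in the usage example are illustrative assumptions. It also shows why frame duration matters: the frequency bin spacing is `fs / frame_len`, so shorter frames give coarser frequency resolution.

```python
import numpy as np

def stft(x, frame_len=512, hop=128):
    """Minimal STFT sketch: Hann-windowed frames, one-sided FFT per frame.

    frame_len and hop are illustrative defaults, not values from the paper.
    Returns a complex array of shape (n_frames, frame_len // 2 + 1).
    """
    window = np.hanning(frame_len)
    # Number of full frames that fit in the signal (trailing samples are dropped).
    n_frames = 1 + (len(x) - frame_len) // hop
    frames = np.stack(
        [x[i * hop : i * hop + frame_len] * window for i in range(n_frames)]
    )
    # Real-input FFT along each frame gives the short-time spectrum.
    return np.fft.rfft(frames, axis=1)

# Usage: a 1 kHz tone sampled at 16 kHz for one second.
fs = 16000
t = np.arange(fs) / fs
x = np.sin(2 * np.pi * 1000 * t)
X = stft(x)
bin_spacing_hz = fs / 512  # 31.25 Hz per bin for a 32 ms frame
peak_bins = np.argmax(np.abs(X), axis=1)  # every frame peaks at bin 1000 / 31.25 = 32
```

Halving `frame_len` doubles `bin_spacing_hz`, trading frequency resolution for time resolution.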

Authors: Scott Wisdom, Thomas Powers, Les Atlas