Fast and Flexible ADMM Algorithms for Trend Filtering

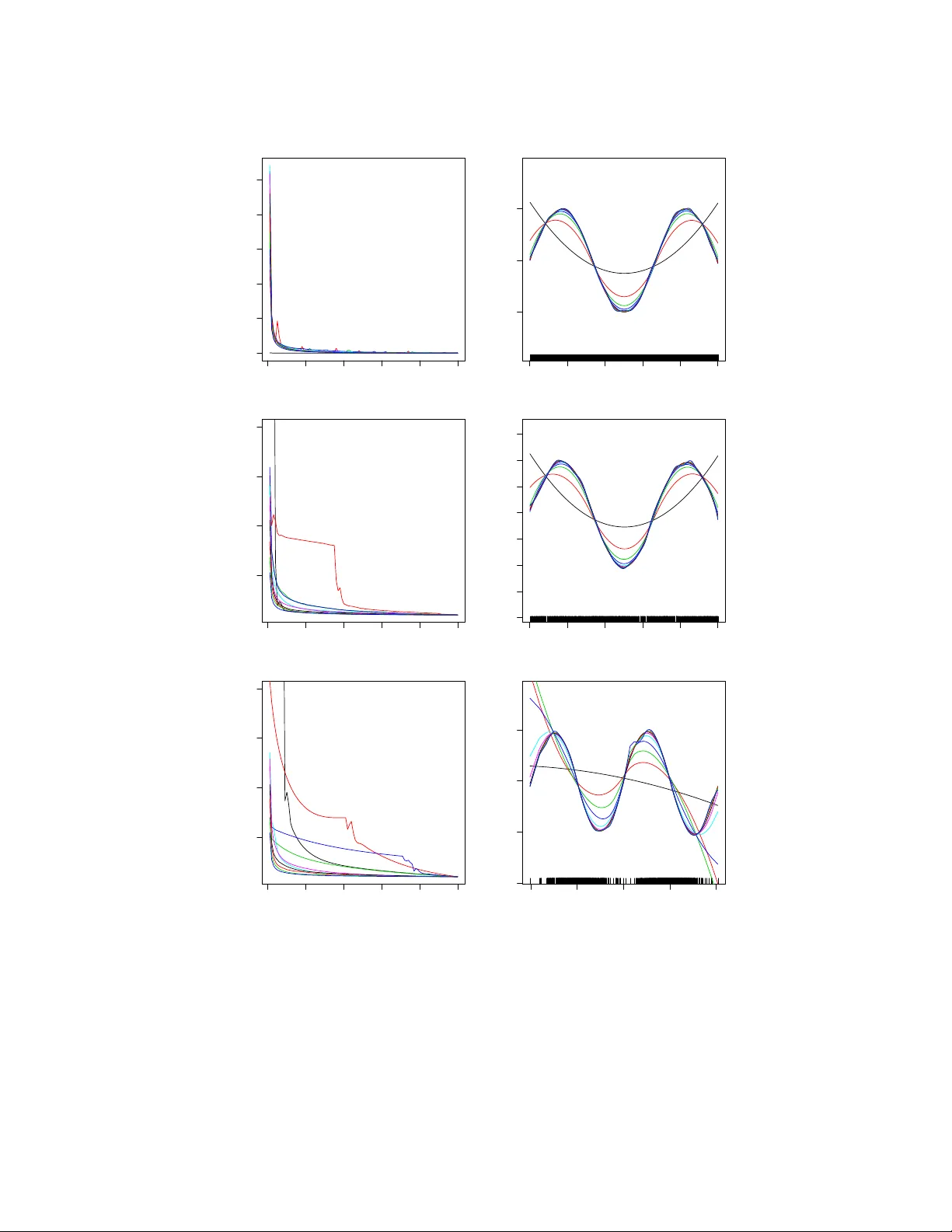

This paper presents a fast and robust algorithm for trend filtering, a recently developed nonparametric regression tool. It has been shown that, for estimating functions whose derivatives are of bounded variation, trend filtering achieves the minimax…
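The sketch below makes the underlying optimization problem concrete, with several assumptions. It assumes evenly spaced inputs and the usual trend filtering formulation for order k, which minimizes 0.5 * ||y - beta||^2 + lam * ||D beta||_1, where D is the discrete difference operator of order k + 1. It also uses a plain, textbook ADMM splitting (z = D beta, scaled dual u). This is *not* the specialized ADMM proposed in the paper. The function names and the defaults for `rho`, `n_iter`, and `tol` are hypothetical, chosen only for illustration.

```python
import numpy as np


def difference_matrix(n, order):
    """Discrete difference operator of the given order, shape (n - order, n).

    Assumes evenly spaced inputs x_1 < ... < x_n (hypothetical helper).
    """
    return np.diff(np.eye(n), n=order, axis=0)


def soft_threshold(v, t):
    """Elementwise soft-thresholding: the prox operator of t * ||.||_1."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def trend_filter_admm(y, k, lam, rho=1.0, n_iter=500, tol=1e-8):
    """Generic (not the paper's specialized) ADMM for k-th order trend filtering:

        minimize 0.5 * ||y - beta||^2 + lam * ||D beta||_1,  D = D^(k+1),

    rewritten with the constraint z = D beta and a scaled dual variable u.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    D = difference_matrix(n, k + 1)
    A = np.eye(n) + rho * D.T @ D  # system matrix of the beta-update (fixed)
    z = np.zeros(D.shape[0])
    u = np.zeros(D.shape[0])
    beta = y.copy()
    for _ in range(n_iter):
        # beta-update: solve (I + rho D^T D) beta = y + rho D^T (z - u)
        beta = np.linalg.solve(A, y + rho * D.T @ (z - u))
        Db = D @ beta
        z_old = z
        # z-update: prox of (lam / rho) * ||.||_1
        z = soft_threshold(Db + u, lam / rho)
        # scaled dual update
        u = u + Db - z
        # Stop when both primal and dual residuals are small.
        r = np.linalg.norm(Db - z)
        s = rho * np.linalg.norm(D.T @ (z - z_old))
        if r < tol and s < tol:
            break
    return beta
```

For example, `trend_filter_admm(y, k=1, lam=5.0)` fits a piecewise linear trend to `y`. A dense solve is used here for readability. For large n, one would normally exploit the banded structure of I + rho * D^T D instead.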

Authors: Aaditya Ramdas, Ryan J. Tibshirani