A Generative Word Embedding Model and its Low Rank Positive Semidefinite Solution

Most existing word embedding methods can be categorized into Neural Embedding Models and Matrix Factorization (MF)-based methods. However some models are opaque to probabilistic interpretation, and MF-based methods, typically solved using Singular Va…

Authors: Shaohua Li, Jun Zhu, Chunyan Miao

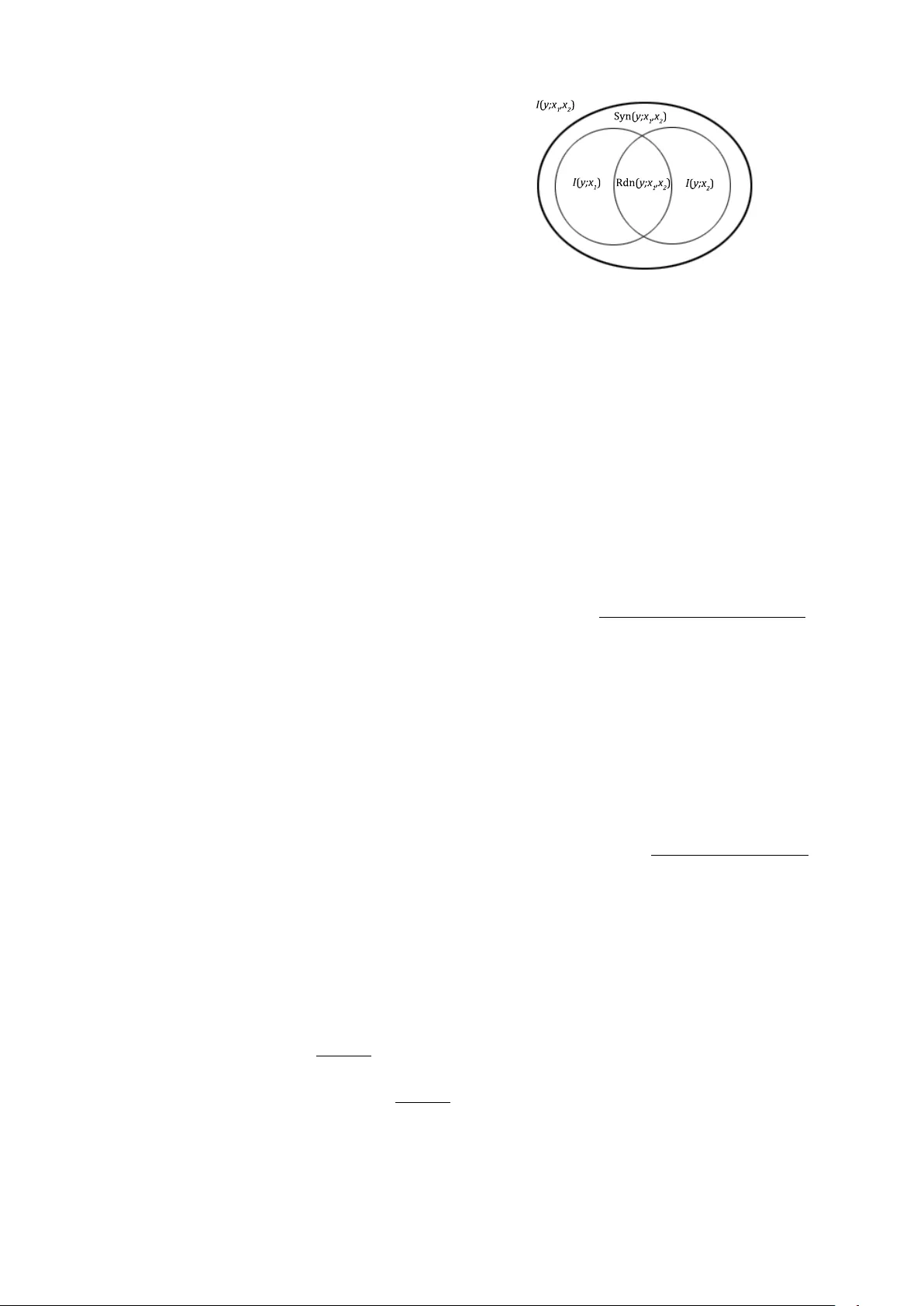

Shaohua Li (1), Jun Zhu (2), Chunyan Miao (1)

(1) Joint NTU-UBC Research Centre of Excellence in Active Living for the Elderly (LILY), Nanyang Technological University, Singapore
(2) Tsinghua University, P.R. China

lish0018@ntu.edu.sg, dcszj@tsinghua.edu.cn, ascymiao@ntu.edu.sg

Abstract

Most existing word embedding methods can be categorized into Neural Embedding Models and Matrix Factorization (MF)-based methods. However, some models are opaque to probabilistic interpretation, and MF-based methods, typically solved using Singular Value Decomposition (SVD), may incur loss of corpus information. In addition, it is desirable to incorporate global latent factors, such as topics, sentiments or writing styles, into the word embedding model. Since generative models provide a principled way to incorporate latent factors, we propose a generative word embedding model, which is easy to interpret and can serve as a basis for more sophisticated latent factor models. The model inference reduces to a low rank weighted positive semidefinite approximation problem. Its optimization is approached by eigendecomposition on a submatrix, followed by online blockwise regression, which is scalable and avoids the information loss in SVD. In experiments on 7 common benchmark datasets, our vectors are competitive with word2vec, and better than other MF-based methods.

1 Introduction

The task of word embedding is to model the distribution of a word and its context words using their corresponding vectors in a Euclidean space. Then, by doing regression on the relevant statistics derived from a corpus, a set of vectors is recovered that best fits these statistics. These vectors, commonly referred to as the embeddings, capture semantic/syntactic regularities between the words.
The core of a word embedding method is the link function that connects the input (the embeddings) with the output (certain corpus statistics). Based on the link function, the objective function is developed. The reasonableness of the link function impacts the quality of the obtained embeddings, and different link functions are amenable to different optimization algorithms, with different scalability. Based on the forms of the link function and the optimization techniques, most methods can be divided into two classes: the traditional neural embedding models, and more recent low rank matrix factorization methods.

The neural embedding models use the softmax link function to model the conditional distribution of a word given its context (or vice versa) as a function of the embeddings. The normalizer in the softmax function brings intricacy to the optimization, which is usually tackled by gradient-based methods. The pioneering work was (Bengio et al., 2006). Later Mnih and Hinton (2007) propose three different link functions. However, there are interaction matrices between the embeddings in all these models, which complicate and slow down the training, hindering them from being trained on huge corpora. Mikolov et al. (2013a) and Mikolov et al. (2013b) greatly simplify the conditional distribution, so that the two embeddings interact directly. They implemented the well-known "word2vec", which can be trained efficiently on huge corpora. The obtained embeddings show excellent performance on various tasks.

Low-Rank Matrix Factorization (MF in short) methods include various link functions and optimization methods. The link functions are usually not softmax functions. MF methods aim to reconstruct a certain corpus statistics matrix as the product of two low rank factor matrices. The objective is usually to minimize the reconstruction error, optionally with other constraints.
In this line of research, Levy and Goldberg (2014) find that "word2vec" is essentially doing stochastic weighted factorization of the word-context pointwise mutual information (PMI) matrix. They then factorize this matrix directly as a new method. Pennington et al. (2014) propose a bilinear regression function of the conditional distribution, from which a weighted MF problem on the bigram log-frequency matrix is formulated. Gradient Descent is used to find the embeddings. Recently, based on the intuition that words can be organized in semantic hierarchies, Yogatama et al. (2015) add hierarchical sparse regularizers to the matrix reconstruction error. With similar techniques, Faruqui et al. (2015) reconstruct a set of pretrained embeddings using sparse vectors of greater dimensionality. Dhillon et al. (2015) apply Canonical Correlation Analysis (CCA) to the word matrix and the context matrix, and use the canonical correlation vectors between the two matrices as word embeddings. Stratos et al. (2014) and Stratos et al. (2015) assume a Brown language model, and prove that doing CCA on the bigram occurrences is equivalent to finding a transformed solution of the language model. Arora et al. (2015) assume there is a hidden discourse vector on a random walk, which determines the distribution of the current word. The slowly evolving discourse vector puts a constraint on the embeddings in a small text window. The maximum likelihood estimate of the embeddings within this text window approximately reduces to a squared norm objective.

There are two limitations in current word embedding methods. The first limitation is that all MF-based methods map words and their context words to two different sets of embeddings, and then employ Singular Value Decomposition (SVD) to obtain a low rank approximation of the word-context matrix $\mathbf{M}$.
As SVD factorizes $\mathbf{M}^\top \mathbf{M}$, some information in $\mathbf{M}$ is lost, and the learned embeddings may not capture the most significant regularities in $\mathbf{M}$. Appendix A gives a toy example on which SVD does not work properly.

The second limitation is that a generative model for documents parameterized by embeddings is absent from recent developments. Although (Stratos et al., 2014; Stratos et al., 2015; Arora et al., 2015) are based on generative processes, these processes are only used to derive the local relationship between embeddings within a small text window, leaving the likelihood of a document undefined. In addition, the learning objectives of some models, e.g. (Mikolov et al., 2013b, Eq. 1), do not even have a clear probabilistic interpretation. A generative word embedding model for documents is not only easier to interpret and analyze, but more importantly, provides a basis upon which document-level global latent factors, such as document topics (Wallach, 2006), sentiments (Lin and He, 2009), and writing styles (Zhao et al., 2011b), can be incorporated in a principled manner, to better model the text distribution and extract relevant information.

Based on the above considerations, we propose to unify the embeddings of words and context words. Our link function factorizes into three parts: the interaction of two embeddings capturing linear correlations of two words, a residual capturing nonlinear or noisy correlations, and the unigram priors. To reduce overfitting, we put Gaussian priors on embeddings and residuals, and apply Jelinek-Mercer Smoothing to bigrams. Furthermore, to model the probability of a sequence of words, we assume that the contributions of more than one context word approximately add up. Thereby a generative model of documents is constructed, parameterized by embeddings and residuals.
The learning objective is to maximize the corpus likelihood, which reduces to a weighted low-rank positive semidefinite (PSD) approximation problem of the PMI matrix. A Block Coordinate Descent algorithm is adopted to find an approximate solution. This algorithm is based on Eigendecomposition, which avoids the information loss in SVD, but brings challenges to scalability. We then exploit the sparsity of the weight matrix and implement an efficient online blockwise regression algorithm. On seven benchmark datasets covering similarity and analogy tasks, our method achieves competitive and stable performance.

The source code of this method is provided at https://github.com/askerlee/topicvec.

2 Notations and Definitions

Throughout the paper, we always use an uppercase bold letter such as $\mathbf{S}, \mathbf{V}$ to denote a matrix or set, a lowercase bold letter such as $\mathbf{v}_{w_i}$ to denote a vector, a normal uppercase letter such as $N, W$ to denote a scalar constant, and a normal lowercase letter such as $s_i, w_i$ to denote a scalar variable.

Suppose a vocabulary $\mathbf{S} = \{s_1, \cdots, s_W\}$ consists of all the words, where $W$ is the vocabulary size. We further suppose $s_1, \cdots, s_W$ are sorted in descending order of frequency, i.e. $s_1$ is the most frequent and $s_W$ is the least frequent. A document $d_i$ is a sequence of words $d_i = (w_{i1}, \cdots, w_{iL_i})$, $w_{ij} \in \mathbf{S}$. A corpus is a collection of $M$ documents $\mathbf{D} = \{d_1, \cdots, d_M\}$.

Table 1: Notation Table

| Name | Description |
|---|---|
| $\mathbf{S}$ | Vocabulary $\{s_1, \cdots, s_W\}$ |
| $\mathbf{V}$ | Embedding matrix $(\mathbf{v}_{s_1}, \cdots, \mathbf{v}_{s_W})$ |
| $\mathbf{D}$ | Corpus $\{d_1, \cdots, d_M\}$ |
| $\mathbf{v}_{s_i}$ | Embedding of word $s_i$ |
| $a_{s_i s_j}$ | Bigram residual for $s_i, s_j$ |
| $\tilde{P}(s_i, s_j)$ | Empirical probability of $s_i, s_j$ in the corpus |
| $\mathbf{u}$ | Unigram probability vector $(P(s_1), \cdots, P(s_W))$ |
| $\mathbf{A}$ | Residual matrix $(a_{s_i s_j})$ |
| $\mathbf{B}$ | Conditional probability matrix $(P(s_j \mid s_i))$ |
| $\mathbf{G}$ | PMI matrix $(\mathrm{PMI}(s_i, s_j))$ |
| $\mathbf{H}$ | Bigram empirical probability matrix $(\tilde{P}(s_i, s_j))$ |
In the vocabulary, each word $s_i$ is mapped to a vector $\mathbf{v}_{s_i}$ in $N$-dimensional Euclidean space.

In a document, a sequence of words is referred to as a text window, denoted by $w_i, \cdots, w_{i+l}$, or $w_i{:}w_{i+l}$ in shorthand. A text window of chosen size $c$ before a word $w_i$ defines the context of $w_i$ as $w_{i-c}, \cdots, w_{i-1}$. Here $w_i$ is referred to as the focus word. Each context word $w_{i-j}$ and the focus word $w_i$ comprise a bigram $w_{i-j}, w_i$.

The Pointwise Mutual Information between two words $s_i, s_j$ is defined as

$$\mathrm{PMI}(s_i, s_j) = \log \frac{P(s_i, s_j)}{P(s_i) P(s_j)}.$$

3 Link Function of Text

In this section, we formulate the probability of a sequence of words as a function of their embeddings. We start from the link function of bigrams, which are the building blocks of a long sequence. Then this link function is extended to a text window with $c$ context words, as a first-order approximation of the actual probability.

3.1 Link Function of Bigrams

We generalize the link function of "word2vec" and "GloVe" to the following:

$$P(s_i, s_j) = \exp\left\{ \mathbf{v}_{s_j}^\top \mathbf{v}_{s_i} + a_{s_i s_j} \right\} P(s_i) P(s_j). \tag{1}$$

The rationale for (1) originates from the idea of the Product of Experts in (Hinton, 2002). Suppose different types of semantic/syntactic regularities between $s_i$ and $s_j$ are encoded in different dimensions of $\mathbf{v}_{s_i}, \mathbf{v}_{s_j}$. As $\exp\{\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}\} = \prod_l \exp\{v_{s_i,l} \cdot v_{s_j,l}\}$, the effects of different regularities on the probability are combined by multiplying them together. If $s_i$ and $s_j$ are independent, their joint probability should be $P(s_i) P(s_j)$. In the presence of correlations, the actual joint probability $P(s_i, s_j)$ would be a scaling of it. The scale factor reflects how much $s_i$ and $s_j$ are positively or negatively correlated.
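To make the PMI definition and the scale factor in (1) concrete, here is a minimal NumPy sketch on a hypothetical 3-word vocabulary. The count values are invented for illustration, and using the row and column marginals of the toy joint distribution in place of corpus unigram probabilities is an assumption of this sketch, not something the paper prescribes.

```python
import numpy as np

# Hypothetical bigram counts (toy values): counts[i, j] is how often the
# bigram (s_i, s_j) was observed. All entries are positive to avoid log(0).
counts = np.array([[2.0, 8.0, 1.0],
                   [6.0, 1.0, 3.0],
                   [1.0, 4.0, 2.0]])

joint = counts / counts.sum()        # empirical joint P(s_i, s_j)
p_first = joint.sum(axis=1)          # marginal of the first word (assumption: stands in for P(s_i))
p_second = joint.sum(axis=0)         # marginal of the second word (assumption: stands in for P(s_j))
independent = np.outer(p_first, p_second)

# PMI(s_i, s_j) = log P(s_i, s_j) / (P(s_i) P(s_j))
pmi = np.log(joint / independent)

# In (1), exp{v^T v + a} plays the role of exp(PMI): it rescales the
# independence baseline P(s_i) P(s_j) to the actual joint probability.
scale = np.exp(pmi)
reconstructed = scale * independent
```

Entries with `pmi > 0` are bigrams that occur more often than independence would predict, and the matrix is generally asymmetric because bigram counts are.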
Within the scale factor, $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}$ captures linear interactions between $s_i$ and $s_j$, and the residual $a_{s_i s_j}$ captures nonlinear or noisy interactions. In applications, only $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}$ is of interest. Hence the larger the magnitude of $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}$ relative to $a_{s_i s_j}$, the better.

Note that we do not assume $a_{s_i s_j} = a_{s_j s_i}$. This provides the flexibility $P(s_i, s_j) \neq P(s_j, s_i)$, agreeing with the asymmetry of bigrams in natural languages. At the same time, $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}$ imposes a symmetric part between $P(s_i, s_j)$ and $P(s_j, s_i)$.

(1) is equivalent to

$$P(s_j \mid s_i) = \exp\left\{ \mathbf{v}_{s_j}^\top \mathbf{v}_{s_i} + a_{s_i s_j} + \log P(s_j) \right\}, \tag{2}$$

$$\log \frac{P(s_j \mid s_i)}{P(s_j)} = \mathbf{v}_{s_j}^\top \mathbf{v}_{s_i} + a_{s_i s_j}. \tag{3}$$

(3) for all bigrams is represented in matrix form:

$$\mathbf{V}^\top \mathbf{V} + \mathbf{A} = \mathbf{G}, \tag{4}$$

where $\mathbf{G}$ is the PMI matrix.

3.1.1 Gaussian Priors on Embeddings

When (1) is employed on the regression of empirical bigram probabilities, a practical issue arises: more and more bigrams have zero frequency as the constituting words become less frequent. A zero-frequency bigram does not necessarily imply negative correlation between the two constituting words; it could simply result from missing data. But in this case, even after smoothing, (1) will force $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i} + a_{s_i s_j}$ to be a large negative number, making $\mathbf{v}_{s_i}$ overly long. The increased magnitude of embeddings is a sign of overfitting.

To reduce overfitting of the embeddings of infrequent words, we assign a Spherical Gaussian prior $\mathcal{N}(0, \frac{1}{2\mu_i} \mathbf{I})$ to $\mathbf{v}_{s_i}$:

$$P(\mathbf{v}_{s_i}) \propto \exp\{ -\mu_i \|\mathbf{v}_{s_i}\|^2 \},$$

where the hyperparameter $\mu_i$ increases as the frequency of $s_i$ decreases.

3.1.2 Gaussian Priors on Residuals

We wish $\mathbf{v}_{s_j}^\top \mathbf{v}_{s_i}$ in (1) to capture as much of the correlation between $s_i$ and $s_j$ as possible. Thus the smaller $a_{s_i s_j}$ is, the better.
In addition, the more frequent the bigram $s_i, s_j$ is in the corpus, the less noise there is in its empirical distribution, and thus the residual $a_{s_i s_j}$ should be more heavily penalized.

To this end, we penalize the residual $a_{s_i s_j}$ by $f(\tilde{P}(s_i, s_j))\, a_{s_i s_j}^2$, where $f(\cdot)$ is a nonnegative monotonic transformation, referred to as the weighting function. Let $h_{ij}$ denote $\tilde{P}(s_i, s_j)$; then the total penalty of all residuals is the square of the weighted Frobenius norm of $\mathbf{A}$:

$$\sum_{s_i, s_j \in \mathbf{S}} f(h_{ij})\, a_{s_i s_j}^2 = \|\mathbf{A}\|^2_{f(\mathbf{H})}. \tag{5}$$

Following "GloVe", we use the following weighting function, and find it performs well:

$$f(h_{ij}) = \begin{cases} \dfrac{\sqrt{h_{ij}}}{C_{\text{cut}}} & \sqrt{h_{ij}} < C_{\text{cut}},\ i \neq j \\ 1 & \sqrt{h_{ij}} \geq C_{\text{cut}},\ i \neq j \\ 0 & i = j, \end{cases}$$

where $C_{\text{cut}}$ is chosen to cut the most frequent 0.02% of the bigrams off at 1. When $s_i = s_j$, two identical words usually have a much smaller probability of collocating. Hence $\tilde{P}(s_i, s_i)$ does not reflect the true correlation of a word to itself, and should not put constraints on the embeddings. We eliminate their effects by setting $f(h_{ii})$ to 0.

If the domain of $\mathbf{A}$ is the whole space $\mathbb{R}^{W \times W}$, then this penalty is equivalent to a Gaussian prior $\mathcal{N}\left(0, \frac{1}{2 f(h_{ij})}\right)$ on each $a_{s_i s_j}$. The variances of the Gaussians are determined by the bigram empirical probability matrix $\mathbf{H}$.

3.1.3 Jelinek-Mercer Smoothing of Bigrams

As another measure to reduce the impact of missing data, we apply the commonly used Jelinek-Mercer Smoothing (Zhai and Lafferty, 2004) to smooth the empirical conditional probability $\tilde{P}(s_j \mid s_i)$ by the unigram probability $\tilde{P}(s_j)$ as:

$$\tilde{P}_{\text{smoothed}}(s_j \mid s_i) = (1 - \kappa)\, \tilde{P}(s_j \mid s_i) + \kappa P(s_j). \tag{6}$$

Accordingly, the smoothed bigram empirical joint probability is defined as

$$\tilde{P}_{\text{smoothed}}(s_i, s_j) = (1 - \kappa)\, \tilde{P}(s_i, s_j) + \kappa P(s_i) P(s_j). \tag{7}$$

In practice, we find $\kappa = 0.02$ yields good results. When $\kappa \geq 0.04$, the obtained embeddings begin to degrade with $\kappa$, indicating that smoothing distorts the true bigram distributions.

3.2 Link Function of a Text Window

In the previous subsection, a regression link function of bigram probabilities is established. In this section, we adopt a first-order approximation based on Information Theory, and extend the link function to a longer sequence $w_0, \cdots, w_{c-1}, w_c$.

Decomposing a distribution conditioned on $n$ random variables into the conditional distributions on its subsets is deeply rooted in Information Theory. This is an intricate problem because there could be both (pointwise) redundant information and (pointwise) synergistic information among the conditioning variables (Williams and Beer, 2010). They are both functions of the PMI. Based on an analysis of the complementing roles of these two types of pointwise information, we assume they are approximately equal and cancel each other when computing the pointwise interaction information. See Appendix B for a detailed discussion.

Following the above assumption, we have $\mathrm{PMI}(w_2; w_0, w_1) \approx \mathrm{PMI}(w_2; w_0) + \mathrm{PMI}(w_2; w_1)$:

$$\log \frac{P(w_0, w_1 \mid w_2)}{P(w_0, w_1)} \approx \log \frac{P(w_0 \mid w_2)}{P(w_0)} + \log \frac{P(w_1 \mid w_2)}{P(w_1)}.$$

Plugging (1) and (3) into the above, we obtain

$$P(w_0, w_1, w_2) \approx \exp\left\{ \sum_{\substack{i,j=0 \\ i \neq j}}^{2} \left( \mathbf{v}_{w_i}^\top \mathbf{v}_{w_j} + a_{w_i w_j} \right) + \sum_{i=0}^{2} \log P(w_i) \right\}.$$

We extend the above assumption by supposing that the pointwise interaction information is still close to 0 within a longer text window. Accordingly the above equation extends to a context of size $c > 2$:

$$P(w_0, \cdots, w_c) \approx \exp\left\{ \sum_{\substack{i,j=0 \\ i \neq j}}^{c} \left( \mathbf{v}_{w_i}^\top \mathbf{v}_{w_j} + a_{w_i w_j} \right) + \sum_{i=0}^{c} \log P(w_i) \right\}.$$

From it we derive the conditional distribution of $w_c$, given its context $w_0, \cdots, w_{c-1}$:

$$P(w_c \mid w_0{:}w_{c-1}) = \frac{P(w_0, \cdots, w_c)}{P(w_0, \cdots, w_{c-1})} \approx P(w_c) \exp\left\{ \mathbf{v}_{w_c}^\top \sum_{i=0}^{c-1} \mathbf{v}_{w_i} + \sum_{i=0}^{c-1} a_{w_i w_c} \right\}. \tag{8}$$

4 Generative Process and Likelihood

We proceed to assume the text is generated from a Markov chain of order $c$, i.e., a word only depends on words within its context of size $c$. Given the hyperparameter $\boldsymbol{\mu} = (\mu_1, \cdots, \mu_W)$, the generative process of the whole corpus is:

1. For each word $s_i$, draw the embedding $\mathbf{v}_{s_i}$ from $\mathcal{N}(0, \frac{1}{2\mu_i} \mathbf{I})$;
2. For each bigram $s_i, s_j$, draw the residual $a_{s_i s_j}$ from $\mathcal{N}\left(0, \frac{1}{2 f(h_{ij})}\right)$;
3. For each document $d_i$, for the $j$-th word, draw word $w_{ij}$ from $\mathbf{S}$ with probability $P(w_{ij} \mid w_{i,j-c}{:}w_{i,j-1})$ defined by (8).

Figure 1: The Graphical Model of PSDVec

The above generative process for a document $d$ is presented as a graphical model in Figure 1.

Based on this generative process, the probability of a document $d_i$ can be derived as follows, given the embeddings and residuals $\mathbf{V}, \mathbf{A}$:

$$P(d_i \mid \mathbf{V}, \mathbf{A}) = \prod_{j=1}^{L_i} P(w_{ij}) \exp\left\{ \mathbf{v}_{w_{ij}}^\top \sum_{k=j-c}^{j-1} \mathbf{v}_{w_{ik}} + \sum_{k=j-c}^{j-1} a_{w_{ik} w_{ij}} \right\}.$$

The complete-data likelihood of the corpus is:

$$\begin{aligned} p(\mathbf{D}, \mathbf{V}, \mathbf{A}) &= \prod_{i=1}^{W} \mathcal{N}\left(0, \frac{\mathbf{I}}{2\mu_i}\right) \prod_{i,j=1}^{W,W} \mathcal{N}\left(0, \frac{1}{2 f(h_{ij})}\right) \prod_{i=1}^{M} p(d_i \mid \mathbf{V}, \mathbf{A}) \\ &= \frac{1}{Z(\mathbf{H}, \boldsymbol{\mu})} \exp\left\{ -\sum_{i,j=1}^{W,W} f(h_{ij})\, a_{s_i s_j}^2 - \sum_{i=1}^{W} \mu_i \|\mathbf{v}_{s_i}\|^2 \right\} \\ &\quad \cdot \prod_{i,j=1}^{M,L_i} P(w_{ij}) \exp\left\{ \mathbf{v}_{w_{ij}}^\top \sum_{k=j-c}^{j-1} \mathbf{v}_{w_{ik}} + \sum_{k=j-c}^{j-1} a_{w_{ik} w_{ij}} \right\}, \end{aligned}$$

where $Z(\mathbf{H}, \boldsymbol{\mu})$ is the normalizing constant.

Taking the logarithm of both sides of $p(\mathbf{D}, \mathbf{V}, \mathbf{A})$ yields

$$\log p(\mathbf{D}, \mathbf{V}, \mathbf{A}) = C_0 - \log Z(\mathbf{H}, \boldsymbol{\mu}) - \|\mathbf{A}\|^2_{f(\mathbf{H})} - \sum_{i=1}^{W} \mu_i \|\mathbf{v}_{s_i}\|^2 + \sum_{i,j=1}^{M,L_i} \left( \mathbf{v}_{w_{ij}}^\top \sum_{k=j-c}^{j-1} \mathbf{v}_{w_{ik}} + \sum_{k=j-c}^{j-1} a_{w_{ik} w_{ij}} \right), \tag{9}$$

where $C_0 = \sum_{i,j=1}^{M,L_i} \log P(w_{ij})$ is constant.

5 Learning Algorithm

5.1 Learning Objective

The learning objective is to find the embeddings $\mathbf{V}$ that maximize the corpus log-likelihood (9).

Let $x_{ij}$ denote the (smoothed) frequency of bigram $s_i, s_j$ in the corpus.
Then (9) can be rearranged as:

$$\log p(\mathbf{D}, \mathbf{V}, \mathbf{A}) = C_0 - \log Z(\mathbf{H}, \boldsymbol{\mu}) - \|\mathbf{A}\|^2_{f(\mathbf{H})} - \sum_{i=1}^{W} \mu_i \|\mathbf{v}_{s_i}\|^2 + \sum_{i,j=1}^{W,W} x_{ij} \left( \mathbf{v}_{s_i}^\top \mathbf{v}_{s_j} + a_{s_i s_j} \right). \tag{10}$$

As the corpus size increases, $\sum_{i,j=1}^{W,W} x_{ij} (\mathbf{v}_{s_i}^\top \mathbf{v}_{s_j} + a_{s_i s_j})$ will dominate the parameter prior terms. Then we can ignore the prior terms when maximizing (10):

$$\max \sum x_{ij} \left( \mathbf{v}_{s_i}^\top \mathbf{v}_{s_j} + a_{s_i s_j} \right) = \left( \sum x_{ij} \right) \cdot \max \sum \tilde{P}_{\text{smoothed}}(s_i, s_j) \log P(s_i, s_j).$$

As both $\{\tilde{P}_{\text{smoothed}}(s_i, s_j)\}$ and $\{P(s_i, s_j)\}$ sum to 1, the above sum is maximized when

$$P(s_i, s_j) = \tilde{P}_{\text{smoothed}}(s_i, s_j).$$

The maximum likelihood estimator is then:

$$P(s_j \mid s_i) = \tilde{P}_{\text{smoothed}}(s_j \mid s_i), \qquad \mathbf{v}_{s_i}^\top \mathbf{v}_{s_j} + a_{s_i s_j} = \log \frac{\tilde{P}_{\text{smoothed}}(s_j \mid s_i)}{P(s_j)}. \tag{11}$$

Writing (11) in matrix form:

$$\mathbf{B}^* = \left[ \tilde{P}_{\text{smoothed}}(s_j \mid s_i) \right]_{s_i, s_j \in \mathbf{S}}, \qquad \mathbf{G}^* = \log \mathbf{B}^* - \log \mathbf{u} \otimes (1 \cdots 1), \tag{12}$$

where "$\otimes$" is the outer product.

Now we fix the values of $\mathbf{v}_{s_i}^\top \mathbf{v}_{s_j} + a_{s_i s_j}$ at the above optimum. The corpus likelihood becomes

$$\log p(\mathbf{D}, \mathbf{V}, \mathbf{A}) = C_1 - \|\mathbf{A}\|^2_{f(\mathbf{H})} - \sum_{i=1}^{W} \mu_i \|\mathbf{v}_{s_i}\|^2, \quad \text{subject to } \mathbf{V}^\top \mathbf{V} + \mathbf{A} = \mathbf{G}^*, \tag{13}$$

where $C_1 = C_0 + \sum x_{ij} \log \tilde{P}_{\text{smoothed}}(s_i, s_j) - \log Z(\mathbf{H}, \boldsymbol{\mu})$ is constant.

5.2 Learning V as Low Rank PSD Approximation

Once $\mathbf{G}^*$ has been estimated from the corpus using (12), we seek $\mathbf{V}$ that maximizes (13). This is to find the maximum a posteriori (MAP) estimates of $\mathbf{V}, \mathbf{A}$ that satisfy $\mathbf{V}^\top \mathbf{V} + \mathbf{A} = \mathbf{G}^*$. Applying this constraint to (13), we obtain

$$\arg\max_{\mathbf{V}} \log p(\mathbf{D}, \mathbf{V}, \mathbf{A}) = \arg\min_{\mathbf{V}} \|\mathbf{G}^* - \mathbf{V}^\top \mathbf{V}\|^2_{f(\mathbf{H})} + \sum_{i=1}^{W} \mu_i \|\mathbf{v}_{s_i}\|^2. \tag{14}$$

Algorithm 1: BCD algorithm for finding an unregularized rank-$N$ weighted PSD approximant.

- Input: matrix $\mathbf{G}^*$, weight matrix $\mathbf{W} = f(\mathbf{H})$, iteration number $T$, rank $N$
- Randomly initialize $\mathbf{X}^{(0)}$
- for $t = 1, \cdots, T$ do
  - $\mathbf{G}_t = \mathbf{W} \circ \mathbf{G}^* + (1 - \mathbf{W}) \circ \mathbf{X}^{(t-1)}$
  - $\mathbf{X}^{(t)} = \text{PSD\_Approximate}(\mathbf{G}_t, N)$
- end for
- $\boldsymbol{\lambda}, \mathbf{Q} = \text{Eigen\_Decomposition}(\mathbf{X}^{(T)})$
- $\mathbf{V}^* = \mathrm{diag}(\boldsymbol{\lambda}^{1/2}[1{:}N]) \cdot \mathbf{Q}^\top[1{:}N]$
- Output: $\mathbf{V}^*$

Let $\mathbf{X} = \mathbf{V}^\top \mathbf{V}$. Then $\mathbf{X}$ is positive semidefinite of rank $N$. Finding $\mathbf{V}$ that minimizes (14) is equivalent to finding a rank-$N$ weighted positive semidefinite approximant $\mathbf{X}$ of $\mathbf{G}^*$, subject to Tikhonov regularization. This problem does not admit an analytic solution, and can only be solved using local optimization methods.

First we consider a simpler case where all the words in the vocabulary are sufficiently frequent, and thus Tikhonov regularization is unnecessary. In this case, we set $\mu_i = 0$ for all $i$, and (14) becomes an unregularized optimization problem. We adopt the Block Coordinate Descent (BCD) algorithm (footnote 1) in (Srebro et al., 2003) to approach this problem. The original algorithm finds a generic rank-$N$ matrix for a weighted approximation problem, and we tailor it by constraining the matrix to the positive semidefinite manifold.

Footnote 1: It is referred to as an Expectation-Maximization algorithm by the original authors, but we think this is a misnomer.

We summarize our learning algorithm in Algorithm 1. Here "$\circ$" is the entry-wise product. We suppose the eigenvalues $\boldsymbol{\lambda}$ returned by $\text{Eigen\_Decomposition}(\mathbf{X})$ are in descending order. $\mathbf{Q}^\top[1{:}N]$ extracts rows 1 to $N$ from $\mathbf{Q}^\top$.

One key issue is how to initialize $\mathbf{X}$. Srebro et al. (2003) suggest setting $\mathbf{X}^{(0)} = \mathbf{G}^*$, and point out that $\mathbf{X}^{(0)} = \mathbf{0}$ is far from a local optimum, and thus requires more iterations. However, we find $\mathbf{G}^*$ is also far from a local optimum, and this setting converges slowly too. Setting $\mathbf{X}^{(0)} = \mathbf{G}^* / 2$ usually yields a satisfactory solution in a few iterations.

The subroutine $\text{PSD\_Approximate}()$ computes the unweighted nearest rank-$N$ PSD approximation, measured in F-norm (Higham, 1988).

5.3 Online Blockwise Regression of V

In Algorithm 1, the essential subroutine $\text{PSD\_Approximate}()$ does eigendecomposition on $\mathbf{G}_t$, which is dense due to the logarithm transformation.
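As a rough illustration of why this subroutine is the bottleneck, the following NumPy sketch implements the loop of Algorithm 1 with a dense eigendecomposition at every iteration. It is a sketch under stated assumptions rather than the authors' implementation: the function names `psd_approximate` and `bcd_weighted_psd` are hypothetical, the matrix is symmetrized before the eigendecomposition (an assumption made so that `numpy.linalg.eigh` applies), the weights are assumed to lie in $[0, 1]$, and $\mathbf{X}^{(0)} = \mathbf{G}^*/2$ is used as the initializer.

```python
import numpy as np

def psd_approximate(G, rank):
    """Unweighted rank-`rank` PSD approximation of G in Frobenius norm.

    Assumption of this sketch: G is symmetrized first, then the largest
    `rank` eigenvalues are kept and any negative ones are clipped to zero.
    """
    S = (G + G.T) / 2.0
    lam, Q = np.linalg.eigh(S)               # dense O(W^3) eigendecomposition
    idx = np.argsort(lam)[::-1][:rank]       # indices of the largest eigenvalues
    lam_top = np.clip(lam[idx], 0.0, None)
    return (Q[:, idx] * lam_top) @ Q[:, idx].T

def bcd_weighted_psd(G_star, weights, rank, n_iter=20):
    """Block Coordinate Descent loop of Algorithm 1 (hypothetical helper)."""
    X = G_star / 2.0                          # initializer X(0) = G*/2
    for _ in range(n_iter):
        # Keep G* where the weight is high, the current approximant elsewhere.
        G_t = weights * G_star + (1.0 - weights) * X
        X = psd_approximate(G_t, rank)
    lam, Q = np.linalg.eigh(X)
    idx = np.argsort(lam)[::-1][:rank]
    # V* = diag(sqrt(lambda[1:N])) Q^T[1:N]; columns of V are word embeddings.
    return np.sqrt(np.clip(lam[idx], 0.0, None))[:, None] * Q[:, idx].T

# Toy check: an exactly rank-2 PSD matrix with unit weights is recovered.
rng = np.random.default_rng(0)
V_true = rng.normal(size=(2, 6))
G_demo = V_true.T @ V_true
V_hat = bcd_weighted_psd(G_demo, np.ones_like(G_demo), rank=2, n_iter=5)
```

With unit weights the loop reduces to a single unweighted approximation; the iteration only matters when some entries of the weight matrix are below 1, in which case the low-weight entries are progressively filled in from the current approximant.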
Eigendecomposition on a $W \times W$ dense matrix requires $O(W^2)$ space and $O(W^3)$ time, which makes it difficult to scale up to a large vocabulary. In addition, the majority of words in the vocabulary are infrequent, and Tikhonov regularization is necessary for them.

It is observed that, as words become less frequent, fewer and fewer words appear around them to form bigrams. Recall that the vocabulary $\mathbf{S} = \{s_1, \cdots, s_W\}$ is sorted in descending order of frequency; hence the lower-right blocks of $\mathbf{H}$ and $f(\mathbf{H})$ are very sparse, and cause these blocks in (14) to contribute much less penalty relative to other regions. Therefore these blocks could be ignored when doing regression, without sacrificing too much accuracy. This intuition leads to the following online blockwise regression.

The basic idea is to select a small set (e.g. 30,000) of the most frequent words as the core words, and partition the remaining noncore words into sets of moderate sizes. Bigrams consisting of two core words are referred to as core bigrams, which correspond to the top-left blocks of $\mathbf{G}$ and $f(\mathbf{H})$. The embeddings of core words are learned approximately using Algorithm 1, on the top-left blocks of $\mathbf{G}$ and $f(\mathbf{H})$. Then we fix the embeddings of core words, and find the embeddings of each set of noncore words in turn. After ignoring the lower-right regions of $\mathbf{G}$ and $f(\mathbf{H})$, which correspond to bigrams of two noncore words, the quadratic terms of noncore embeddings are ignored. Consequently, finding these embeddings becomes a weighted ridge regression problem, which can be solved efficiently in closed form. Finally we combine all embeddings to get the embeddings of the whole vocabulary. The details are as follows:

1. Partition $\mathbf{S}$ into $K$ consecutive groups $\mathbf{S}_1, \cdots, \mathbf{S}_K$. Take $K = 3$ as an example. The first group is the core words;
2. Accordingly partition $\mathbf{G}$ into $K \times K$ blocks, in this example as

   $$\begin{pmatrix} \mathbf{G}_{11} & \mathbf{G}_{12} & \mathbf{G}_{13} \\ \mathbf{G}_{21} & \mathbf{G}_{22} & \mathbf{G}_{23} \\ \mathbf{G}_{31} & \mathbf{G}_{32} & \mathbf{G}_{33} \end{pmatrix}.$$
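   Partition $f(\mathbf{H})$, $\mathbf{A}$ in the same way. $\mathbf{G}_{11}$, $f(\mathbf{H})_{11}$, $\mathbf{A}_{11}$ correspond to core bigrams. Partition $\mathbf{V}$ into $\big( \underbrace{\mathbf{V}_1}_{\mathbf{S}_1}\ \underbrace{\mathbf{V}_2}_{\mathbf{S}_2}\ \underbrace{\mathbf{V}_3}_{\mathbf{S}_3} \big)$;
3. Solve $\mathbf{V}_1^\top \mathbf{V}_1 + \mathbf{A}_{11} = \mathbf{G}_{11}$ using Algorithm 1, and obtain core embeddings $\mathbf{V}_1^*$;
4. Set $\mathbf{V}_1 = \mathbf{V}_1^*$, and find $\mathbf{V}_2^*$ that minimizes the total penalty of blocks 12 and 21 of the residuals (block 22 is ignored due to its high sparsity):

   $$\begin{aligned} &\arg\min_{\mathbf{V}_2} \|\mathbf{G}_{12} - \mathbf{V}_1^\top \mathbf{V}_2\|^2_{f(\mathbf{H})_{12}} + \|\mathbf{G}_{21} - \mathbf{V}_2^\top \mathbf{V}_1\|^2_{f(\mathbf{H})_{21}} + \sum_{s_i \in \mathbf{S}_2} \mu_i \|\mathbf{v}_{s_i}\|^2 \\ = &\arg\min_{\mathbf{V}_2} \|\bar{\mathbf{G}}_{12} - \mathbf{V}_1^\top \mathbf{V}_2\|^2_{\bar{f}(\mathbf{H})_{12}} + \sum_{s_i \in \mathbf{S}_2} \mu_i \|\mathbf{v}_{s_i}\|^2, \end{aligned}$$

   where $\bar{f}(\mathbf{H})_{12} = f(\mathbf{H})_{12} + f(\mathbf{H})_{21}^\top$; $\bar{\mathbf{G}}_{12} = \left( \mathbf{G}_{12} \circ f(\mathbf{H})_{12} + \mathbf{G}_{21}^\top \circ f(\mathbf{H})_{21}^\top \right) / \left( f(\mathbf{H})_{12} + f(\mathbf{H})_{21}^\top \right)$ is the weighted average of $\mathbf{G}_{12}$ and $\mathbf{G}_{21}^\top$; "$\circ$" and "$/$" are element-wise product and division, respectively. The columns in $\mathbf{V}_2$ are independent, thus for each $\mathbf{v}_{s_i}$ it is a separate weighted ridge regression problem, whose solution is (Holland, 1973):

   $$\mathbf{v}_{s_i}^* = \left( \mathbf{V}_1\, \mathrm{diag}(\bar{\mathbf{f}}_i)\, \mathbf{V}_1^\top + \mu_i \mathbf{I} \right)^{-1} \mathbf{V}_1\, \mathrm{diag}(\bar{\mathbf{f}}_i)\, \bar{\mathbf{g}}_i,$$

   where $\bar{\mathbf{f}}_i$ and $\bar{\mathbf{g}}_i$ are the columns corresponding to $s_i$ in $\bar{f}(\mathbf{H})_{12}$ and $\bar{\mathbf{G}}_{12}$, respectively;
5. For any other set of noncore words $\mathbf{S}_k$, find $\mathbf{V}_k^*$ that minimizes the total penalty of blocks $1k$ and $k1$, ignoring all other blocks $kj$ and $jk$;
6. Combine all subsets of embeddings to form $\mathbf{V}^*$. Here $\mathbf{V}^* = (\mathbf{V}_1^*, \mathbf{V}_2^*, \mathbf{V}_3^*)$.

6 Experimental Results

We trained our model along with a few state-of-the-art competitors on Wikipedia, and evaluated the embeddings on 7 common benchmark sets.

6.1 Experimental Setup

Our own method is referred to as PSD.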
The competitors include:

• (Mikolov et al., 2013b): word2vec (footnote 2), or SGNS in some literature;
• (Levy and Goldberg, 2014): the PPMI matrix without dimension reduction, and SVD of the PPMI matrix, both yielded by hyperwords;
• (Pennington et al., 2014): GloVe (footnote 3);
• (Stratos et al., 2015): Singular (footnote 4), which does SVD-based CCA on the weighted bigram frequency matrix;
• (Faruqui et al., 2015): Sparse (footnote 5), which learns new sparse embeddings in a higher dimensional space from pretrained embeddings.

Footnote 2: https://code.google.com/p/word2vec/

All models were trained on the English Wikipedia snapshot of March 2015. After removing non-textual elements and non-English words, 2.04 billion words were left. We used the default hyperparameters in Hyperwords when training PPMI and SVD. Word2vec, GloVe and Singular were trained with their own default hyperparameters.

The embedding sets PSD-Reg-180K and PSD-Unreg-180K were trained using our online blockwise regression. Both sets contain the embeddings of the most frequent 180,000 words, based on 25,000 core words. PSD-Unreg-180K was trained with all $\mu_i = 0$, i.e. with Tikhonov regularization disabled. PSD-Reg-180K was trained with

$$\mu_i = \begin{cases} 2 & i \in [25001, 80000] \\ 4 & i \in [80001, 130000] \\ 8 & i \in [130001, 180000], \end{cases}$$

i.e. with regularization increasing as the sparsity increases. To contrast with the batch learning performance, the performance of PSD-25K is listed, which contains the core embeddings only. PSD-25K benefits from containing far fewer false candidate words, and some test tuples (generally harder ones) were not evaluated due to missing words, so its scores are not comparable to the others.

Sparse was trained with PSD-Reg-180K as the input embeddings, with default hyperparameters.
The benchmark sets are almost identical to those in (Levy et al., 2015), except that (Luong et al., 2013)'s Rare Words is not included, as many rare words are cut off at the frequency 100, making more than 1/3 of test pairs invalid.

Word Similarity. There are 5 datasets: WordSim Similarity (WS Sim) and WordSim Relatedness (WS Rel) (Zesch et al., 2008; Agirre et al., 2009), partitioned from WordSim353 (Finkelstein et al., 2002); Bruni et al. (2012)'s MEN dataset; Radinsky et al. (2011)'s Mechanical Turk dataset; and (Hill et al., 2014)'s SimLex-999 dataset. The embeddings were evaluated by the Spearman's rank correlation with the human ratings.

Footnote 3: http://nlp.stanford.edu/projects/glove/
Footnote 4: https://github.com/karlstratos/singular
Footnote 5: https://github.com/mfaruqui/sparse-coding

Table 2: Performance of each method across different tasks. WS Sim, WS Rel, MEN, Turk and SimLex are similarity tasks; Google and MSR are analogy tasks.

| Method | WS Sim | WS Rel | MEN | Turk | SimLex | Google | MSR |
|---|---|---|---|---|---|---|---|
| word2vec | 0.742 | 0.543 | 0.731 | 0.663 | 0.395 | 0.734 / 0.742 | 0.650 / 0.674 |
| PPMI | 0.735 | 0.678 | 0.717 | 0.659 | 0.308 | 0.476 / 0.524 | 0.183 / 0.217 |
| SVD | 0.687 | 0.608 | 0.711 | 0.524 | 0.270 | 0.230 / 0.240 | 0.123 / 0.113 |
| GloVe | 0.759 | 0.630 | 0.756 | 0.641 | 0.362 | 0.535 / 0.544 | 0.408 / 0.435 |
| Singular | 0.763 | 0.684 | 0.747 | 0.581 | 0.345 | 0.440 / 0.508 | 0.364 / 0.399 |
| Sparse | 0.739 | 0.585 | 0.725 | 0.625 | 0.355 | 0.240 / 0.282 | 0.253 / 0.274 |
| PSD-Reg-180K | 0.792 | 0.679 | 0.764 | 0.676 | 0.398 | 0.602 / 0.623 | 0.465 / 0.507 |
| PSD-Unreg-180K | 0.786 | 0.663 | 0.753 | 0.675 | 0.372 | 0.566 / 0.598 | 0.424 / 0.468 |
| PSD-25K | 0.801 | 0.676 | 0.765 | 0.678 | 0.393 | 0.671 / 0.695 | 0.533 / 0.586 |

Word Analogy. The two datasets are MSR's analogy dataset (Mikolov et al., 2013c), containing 8000 questions, and Google's analogy dataset (Mikolov et al., 2013a), with 19544 questions. After filtering questions involving out-of-vocabulary words, i.e. words that appear fewer than 100 times in the corpus, 7054 instances in MSR and 19364 instances in Google were left.
The analogy questions were answered using 3CosAdd as well as 3CosMul proposed by Levy et al. (2014).

6.2 Results

Table 2 shows the results on all tasks. Word2vec significantly outperformed other methods on analogy tasks. PPMI and SVD performed much worse on analogy tasks than reported in (Levy et al., 2015), probably due to sub-optimal hyperparameters. This suggests their performance is unstable. The new embeddings yielded by Sparse systematically degraded compared to the old embeddings, contradicting the claim in (Faruqui et al., 2015).

Our method PSD-Reg-180K performed well consistently, and is best in 4 similarity tasks. It performed worse than word2vec on analogy tasks, but still better than other MF-based methods. By comparing to PSD-Unreg-180K, we see that Tikhonov regularization brings a 1-4% performance boost across tasks. In addition, on similarity tasks, online blockwise regression only degrades slightly compared to batch factorization. Their performance gaps on analogy tasks were wider, but this might be explained by the fact that some hard cases were not counted in PSD-25K's evaluation, due to its limited vocabulary.

7 Conclusions and Future Work

In this paper, inspired by the link functions in previous works, with the support from Information Theory, we propose a new link function of a text window, parameterized by the embeddings of words and the residuals of bigrams. Based on the link function, we establish a generative model of documents. The learning objective is to find a set of embeddings maximizing their posterior likelihood given the corpus. This objective is reduced to weighted low-rank positive-semidefinite approximation, subject to Tikhonov regularization. Then we adopt a Block Coordinate Descent algorithm, jointly with an online blockwise regression algorithm, to find an approximate solution. On seven benchmark sets, the learned embeddings show competitive and stable performance.
In future work, we will incorporate global latent factors into this generative model, such as topics, sentiments, or writing styles, and develop more elaborate models of documents. Through learning such latent factors, important summary information of documents would be acquired, which is useful in various applications.

Acknowledgments

We thank Omer Levy, Thomas Mach, Peilin Zhao, Mingkui Tan, Zhiqiang Xu and Chunlin Wu for their helpful discussions and insights. This research is supported by the National Research Foundation, Prime Minister's Office, Singapore under its IDM Futures Funding Initiative and administered by the Interactive and Digital Media Programme Office.

Appendix A Possible Trap in SVD

Suppose $M$ is the bigram matrix of interest. SVD embeddings are derived from the low rank approximation of $M^\top M$, by keeping the largest singular values/vectors. When some of these singular values correspond to negative eigenvalues, undesirable correlations might be captured. The following is an example of approximating a PMI matrix.

A vocabulary consists of 3 words $s_1, s_2, s_3$. Two corpora derive two PMI matrices:

$$M^{(1)} = \begin{pmatrix} 1.4 & 0.8 & 0 \\ 0.8 & 2.6 & 0 \\ 0 & 0 & 2 \end{pmatrix}, \qquad M^{(2)} = \begin{pmatrix} 0.2 & -1.6 & 0 \\ -1.6 & -2.2 & 0 \\ 0 & 0 & 2 \end{pmatrix}.$$

They have identical left singular matrix and singular values $(3, 2, 1)$, but their eigenvalues are $(3, 2, 1)$ and $(-3, 2, 1)$, respectively.

In a rank-2 approximation, the largest two singular values/vectors are kept, and $M^{(1)}$ and $M^{(2)}$ yield identical SVD embeddings

$$V = \begin{pmatrix} 0.45 & 0.89 & 0 \\ 0 & 0 & 1 \end{pmatrix}$$

(the rows may be scaled depending on the algorithm, without affecting the validity of the following conclusion). The embeddings of $s_1$ and $s_2$ (columns 1 and 2 of $V$) point in the same direction, suggesting they are positively correlated. However, as $M^{(2)}_{1,2} = M^{(2)}_{2,1} = -1.6 < 0$, they are actually negatively correlated in the second corpus.
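The numbers in this example can be checked with a short NumPy sketch. It takes the top left singular vectors of each matrix directly, unscaled, as in the $V$ shown above; for contrast it also computes the eigendecomposition-based embeddings that the next paragraph describes, scaling each kept eigenvector by the square root of its eigenvalue. The helper names are hypothetical and this is an illustration, not the authors' implementation.

```python
import numpy as np

M1 = np.array([[1.4, 0.8, 0.0],
               [0.8, 2.6, 0.0],
               [0.0, 0.0, 2.0]])
M2 = np.array([[0.2, -1.6, 0.0],
               [-1.6, -2.2, 0.0],
               [0.0, 0.0, 2.0]])

def svd_embeddings(M, rank):
    """Rows are the top-`rank` left singular vectors; column i embeds word i."""
    U, s, _ = np.linalg.svd(M)  # singular values come back in descending order
    return U[:, :rank].T

def eig_embeddings(M, rank):
    """Keep the `rank` largest positive eigenvalues of a symmetric matrix.

    Each kept eigenvector is scaled by the square root of its eigenvalue.
    """
    w, Q = np.linalg.eigh(M)                      # eigenvalues in ascending order
    order = np.argsort(w)[::-1]                   # indices, largest eigenvalue first
    keep = [i for i in order if w[i] > 0][:rank]  # discard non-positive eigenvalues
    return (Q[:, keep] * np.sqrt(w[keep])).T

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

print(np.linalg.svd(M1, compute_uv=False))  # singular values of M1
print(np.linalg.svd(M2, compute_uv=False))  # singular values of M2
print(np.linalg.eigvalsh(M2))               # eigenvalues of M2, ascending

V_svd = svd_embeddings(M2, 2)
V_eig = eig_embeddings(M2, 2)
# Cosine between the embeddings of s1 and s2 (columns 0 and 1).
print(cosine(V_svd[:, 0], V_svd[:, 1]))  # close to +1: SVD hides the negative PMI
print(cosine(V_eig[:, 0], V_eig[:, 1]))  # close to -1: sign of the PMI is preserved
```

Singular vectors and eigenvectors are only determined up to sign, so the sketch compares directions through the cosine, which does not change when a whole row of the embedding matrix is negated.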
This inconsistency is because the principal eigenvalue of $M^{(2)}$ is negative, and yet the corresponding singular value/vector is kept.

When using eigendecomposition, the largest two positive eigenvalues/eigenvectors are kept. $M^{(1)}$ yields the same embeddings $V$. $M^{(2)}$ yields

$$V^{(2)} = \begin{pmatrix} -0.89 & 0.45 & 0 \\ 0 & 0 & 1.41 \end{pmatrix},$$

which correctly preserves the negative correlation between $s_1, s_2$.

Appendix B Information Theory

Redundant information refers to the reduced uncertainty by knowing the value of any one of the conditioning variables (hence redundant). Synergistic information is the reduced uncertainty ascribed to knowing all the values of conditioning variables, that cannot be reduced by knowing the value of any variable alone (hence synergistic).

The mutual information $I(y; x_i)$ and the redundant information $\mathrm{Rdn}(y; x_1, x_2)$ are defined as:

$$I(y; x_i) = E_{P(x_i, y)}\left[\log \frac{P(y \mid x_i)}{P(y)}\right]$$

$$\mathrm{Rdn}(y; x_1, x_2) = E_{P(y)}\left[\min_{x_1, x_2} E_{P(x_i \mid y)}\left[\log \frac{P(y \mid x_i)}{P(y)}\right]\right]$$

The synergistic information $\mathrm{Syn}(y; x_1, x_2)$ is defined as the PI-function in (Williams and Beer, 2010), skipped here.

[Figure 2 diagram: regions labeled $I(y; x_1)$, $I(y; x_2)$, $\mathrm{Syn}(y; x_1, x_2)$, $I(y; x_1, x_2)$ and $\mathrm{Rdn}(y; x_1, x_2)$.]

Figure 2: Different types of information among 3 random variables $y, x_1, x_2$. $I(y; x_1, x_2)$ is the mutual information between $y$ and $(x_1, x_2)$. $\mathrm{Rdn}(y; x_1, x_2)$ and $\mathrm{Syn}(y; x_1, x_2)$ are the redundant information and synergistic information between $x_1, x_2$, conditioning $y$, respectively.
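To make redundancy and synergy concrete, the following sketch computes mutual information on two toy distributions that are not from the paper: $y = x_1 \oplus x_2$ with independent fair bits (a standard example of purely synergistic information) and $y = x_1 = x_2$ (a purely redundant case). The helper names are hypothetical, and logarithms are taken base 2 so that values are in bits.

```python
import math
from collections import defaultdict
from itertools import product

def marginal(joint, keep):
    """Sum a joint distribution {(x1, x2, y): p} down to the coordinates in `keep`."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in keep)] += p
    return out

def mutual_information(joint, a, b):
    """I(A; B) in bits, where `a` and `b` are tuples of coordinate indices."""
    p_ab = marginal(joint, a + b)
    p_a = marginal(joint, a)
    p_b = marginal(joint, b)
    total = 0.0
    for outcome, p in p_ab.items():
        if p > 0:
            key_a, key_b = outcome[:len(a)], outcome[len(a):]
            total += p * math.log2(p / (p_a[key_a] * p_b[key_b]))
    return total

X1, X2, Y = (0,), (1,), (2,)  # coordinate positions inside each outcome tuple

# y = x1 XOR x2, with x1 and x2 independent fair bits.
xor_joint = {(x1, x2, x1 ^ x2): 0.25 for x1, x2 in product((0, 1), repeat=2)}
xor_single = mutual_information(xor_joint, Y, X1)     # what x1 alone tells about y
xor_both = mutual_information(xor_joint, Y, X1 + X2)  # what (x1, x2) together tell

# y = x1 = x2: both variables carry the same single bit about y.
copy_joint = {(b, b, b): 0.5 for b in (0, 1)}
copy_single = mutual_information(copy_joint, Y, X1)
copy_both = mutual_information(copy_joint, Y, X1 + X2)

print(xor_single, xor_both)    # 0.0 1.0
print(copy_single, copy_both)  # 1.0 1.0
```

In the XOR case neither variable alone reduces the uncertainty about $y$, yet the pair determines it completely. In the copy case either variable alone already provides the full bit, so knowing both adds nothing.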
The interaction information $\mathrm{Int}(x_1, x_2, y)$ measures the relative strength of $\mathrm{Rdn}(y; x_1, x_2)$ and $\mathrm{Syn}(y; x_1, x_2)$ (Timme et al., 2014):

$$\begin{aligned} \mathrm{Int}(x_1, x_2, y) &= \mathrm{Syn}(y; x_1, x_2) - \mathrm{Rdn}(y; x_1, x_2) \\ &= I(y; x_1, x_2) - I(y; x_1) - I(y; x_2) \\ &= E_{P(x_1, x_2, y)}\left[\log \frac{P(x_1) P(x_2) P(y) P(x_1, x_2, y)}{P(x_1, x_2) P(x_1, y) P(x_2, y)}\right] \end{aligned}$$

Figure 2 shows the relationship of different information among 3 random variables $y, x_1, x_2$ (based on Fig.1 in (Williams and Beer, 2010)).

PMI is the pointwise counterpart of mutual information $I$. Similarly, all the above concepts have their pointwise counterparts, obtained by dropping the expectation operator. Specifically, the pointwise interaction information is defined as

$$\mathrm{PInt}(x_1, x_2, y) = \mathrm{PMI}(y; x_1, x_2) - \mathrm{PMI}(y; x_1) - \mathrm{PMI}(y; x_2) = \log \frac{P(x_1) P(x_2) P(y) P(x_1, x_2, y)}{P(x_1, x_2) P(x_1, y) P(x_2, y)}.$$

If we know $\mathrm{PInt}(x_1, x_2, y)$, we can recover $\mathrm{PMI}(y; x_1, x_2)$ from the PMI over the variable subsets, and then recover the joint distribution $P(x_1, x_2, y)$.

As the pointwise redundant information $\mathrm{PRdn}(y; x_1, x_2)$ and the pointwise synergistic information $\mathrm{PSyn}(y; x_1, x_2)$ are both higher-order interaction terms, their magnitudes are usually much smaller than the PMI terms. We assume they are approximately equal, and thus cancel each other when computing PInt. Under this assumption, PInt is always 0. In the case of three words $w_0, w_1, w_2$, $\mathrm{PInt}(w_0, w_1, w_2) = 0$ leads to $\mathrm{PMI}(w_2; w_0, w_1) = \mathrm{PMI}(w_2; w_0) + \mathrm{PMI}(w_2; w_1)$.

References

[Agirre et al.2009] Eneko Agirre, Enrique Alfonseca, Keith Hall, Jana Kravalova, Marius Paşca, and Aitor Soroa. 2009. A study on similarity and relatedness using distributional and wordnet-based approaches.
In Proceedings of Human Language Technologies: The 2009 Annual Conference of the North American Chapter of the Association for Computational Linguistics, pages 19–27. Association for Computational Linguistics.

[Arora et al.2015] S. Arora, Y. Li, Y. Liang, T. Ma, and A. Risteski. 2015. Random walks on discourse spaces: a new generative language model with applications to semantic word embeddings. ArXiv e-prints, arXiv:1502.03520 [cs.LG].

[Bengio et al.2006] Yoshua Bengio, Holger Schwenk, Jean-Sébastien Senécal, Fréderic Morin, and Jean-Luc Gauvain. 2006. Neural probabilistic language models. In Innovations in Machine Learning, pages 137–186. Springer.

[Blei et al.2003] David M Blei, Andrew Y Ng, and Michael I Jordan. 2003. Latent dirichlet allocation. The Journal of Machine Learning Research, 3:993–1022.

[Bruni et al.2012] Elia Bruni, Gemma Boleda, Marco Baroni, and Nam-Khanh Tran. 2012. Distributional semantics in technicolor. In Proceedings of the 50th Annual Meeting of the Association for Computational Linguistics: Long Papers-Volume 1, pages 136–145. Association for Computational Linguistics.

[Deerwester et al.1990] Scott C. Deerwester, Susan T Dumais, and Richard A. Harshman. 1990. Indexing by latent semantic analysis. J. Am. Soc. Inf. Sci.

[Dhillon et al.2011] Paramveer Dhillon, Dean P Foster, and Lyle H Ungar. 2011. Multi-view learning of word embeddings via cca. In Proceedings of Advances in Neural Information Processing Systems, pages 199–207.

[Dhillon et al.2015] Paramveer S Dhillon, Dean P Foster, and Lyle H Ungar. 2015. Eigenwords: Spectral word embeddings. The Journal of Machine Learning Research.

[Faruqui et al.2015] Manaal Faruqui, Yulia Tsvetkov, Dani Yogatama, Chris Dyer, and Noah A. Smith. 2015. Sparse overcomplete word vector representations. In Proceedings of ACL 2015.
[Finkelstein et al.2002] Lev Finkelstein, Evgeniy Gabrilovich, Yossi Matias, Ehud Rivlin, Zach Solan, Gadi Wolfman, and Eytan Ruppin. 2002. Placing search in context: The concept revisited. ACM Trans. Inf. Syst., 20(1):116–131, January.

[Higham1988] Nicholas J. Higham. 1988. Computing a nearest symmetric positive semidefinite matrix. Linear Algebra and its Applications, 103(0):103–118.

[Hill et al.2014] Felix Hill, Roi Reichart, and Anna Korhonen. 2014. Simlex-999: Evaluating semantic models with (genuine) similarity estimation. CoRR, abs/1408.3456.

[Hinton2002] Geoffrey Hinton. 2002. Training products of experts by minimizing contrastive divergence. Neural Computation, 14(8):1771–1800.

[Holland1973] Paul W. Holland. 1973. Weighted Ridge Regression: Combining Ridge and Robust Regression Methods. NBER Working Papers 0011, National Bureau of Economic Research, Inc, September.

[Hsu et al.2012] Daniel Hsu, Sham M Kakade, and Tong Zhang. 2012. A spectral algorithm for learning hidden markov models. Journal of Computer and System Sciences, 78(5):1460–1480.

[Levy and Goldberg2014] Omer Levy and Yoav Goldberg. 2014. Neural word embeddings as implicit matrix factorization. In Proceedings of NIPS 2014.

[Levy et al.2014] Omer Levy, Yoav Goldberg, and Israel Ramat-Gan. 2014. Linguistic regularities in sparse and explicit word representations. In Proceedings of CoNLL-2014, page 171.

[Levy et al.2015] Omer Levy, Yoav Goldberg, and Ido Dagan. 2015. Improving distributional similarity with lessons learned from word embeddings. Transactions of the Association for Computational Linguistics, 3:211–225.

[Lin and He2009] Chenghua Lin and Yulan He. 2009. Joint sentiment/topic model for sentiment analysis. In Proceedings of the 18th ACM conference on Information and Knowledge Management, pages 375–384. ACM.

[Luong et al.2013] Minh-Thang Luong, Richard Socher, and Christopher D Manning. 2013.
Better word representations with recursive neural networks for morphology. CoNLL-2013, 104.

[Mach2012] T. Mach. 2012. Eigenvalue Algorithms for Symmetric Hierarchical Matrices. Dissertation, Chemnitz University of Technology.

[Mikolov et al.2013a] Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. 2013a. Efficient estimation of word representations in vector space. In Proceedings of Workshop at ICLR 2013.

[Mikolov et al.2013b] Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg S Corrado, and Jeff Dean. 2013b. Distributed representations of words and phrases and their compositionality. In Proceedings of NIPS 2013, pages 3111–3119.

[Mikolov et al.2013c] Tomas Mikolov, Wen-tau Yih, and Geoffrey Zweig. 2013c. Linguistic regularities in continuous space word representations. In Proceedings of HLT-NAACL 2013, pages 746–751.

[Mnih and Hinton2007] Andriy Mnih and Geoffrey Hinton. 2007. Three new graphical models for statistical language modelling. In Proceedings of the 24th International Conference on Machine learning, pages 641–648. ACM.

[Pennington et al.2014] Jeffrey Pennington, Richard Socher, and Christopher D Manning. 2014. Glove: Global vectors for word representation. Proceedings of the Empirical Methods in Natural Language Processing (EMNLP 2014), 12.

[Radinsky et al.2011] Kira Radinsky, Eugene Agichtein, Evgeniy Gabrilovich, and Shaul Markovitch. 2011. A word at a time: Computing word relatedness using temporal semantic analysis. In Proceedings of the 20th International Conference on World Wide Web, WWW '11, pages 337–346, New York, NY, USA. ACM.

[Srebro et al.2003] Nathan Srebro, Tommi Jaakkola, et al. 2003. Weighted low-rank approximations. In Proceedings of ICML 2003, volume 3, pages 720–727.

[Stratos et al.2014] Karl Stratos, Do-kyum Kim, Michael Collins, and Daniel Hsu. 2014. A spectral algorithm for learning class-based n-gram models of natural language.
In Proceedings of the Association for Uncertainty in Artificial Intelligence.

[Stratos et al.2015] Karl Stratos, Michael Collins, and Daniel Hsu. 2015. Model-based word embeddings from decompositions of count matrices. In Proceedings of ACL 2015.

[Tan et al.2014] M. Tan, I. W. Tsang, L. Wang, B. Vandereycken, and S. J. Pan. 2014. Riemannian pursuit for big matrix recovery. In Proceedings of ICML 2014, pages 1539–1547.

[Timme et al.2014] Nicholas Timme, Wesley Alford, Benjamin Flecker, and John M Beggs. 2014. Synergy, redundancy, and multivariate information measures: an experimentalist's perspective. Journal of Computational Neuroscience, 36(2):119–140.

[Wallach2006] Hanna M Wallach. 2006. Topic modeling: beyond bag-of-words. In Proceedings of the 23rd international conference on Machine learning, pages 977–984. ACM.

[Williams and Beer2010] Paul L Williams and Randall D Beer. 2010. Nonnegative decomposition of multivariate information. arXiv preprint arXiv:1004.2515.

[Yan et al.2015] Yan Yan, Mingkui Tan, Ivor Tsang, Yi Yang, Chengqi Zhang, and Qinfeng Shi. 2015. Scalable maximum margin matrix factorization by active riemannian subspace search. In Proceedings of IJCAI 2015.

[Yogatama et al.2015] Dani Yogatama, Manaal Faruqui, Chris Dyer, and Noah A Smith. 2015. Learning word representations with hierarchical sparse coding. In Proceedings of ICML 2015.

[Zesch et al.2008] Torsten Zesch, Christof Müller, and Iryna Gurevych. 2008. Using wiktionary for computing semantic relatedness. In Proceedings of AAAI 2008, volume 8, pages 861–866.

[Zhai and Lafferty2004] Chengxiang Zhai and John Lafferty. 2004. A study of smoothing methods for language models applied to information retrieval. ACM Transactions on Information Systems (TOIS), 22(2):179–214.

[Zhao et al.2011a] Peilin Zhao, Steven CH Hoi, and Rong Jin. 2011a. Double updating online learning.
The Journal of Machine Learning Research, 12:1587–1615.

[Zhao et al.2011b] Wayne Xin Zhao, Jing Jiang, Jianshu Weng, Jing He, Ee-Peng Lim, Hongfei Yan, and Xiaoming Li. 2011b. Comparing twitter and traditional media using topic models. In Advances in Information Retrieval (Proceedings of the 33rd Annual European Conference on Information Retrieval Research), pages 338–349. Springer.
