Universal Approximation of Edge Density in Large Graphs

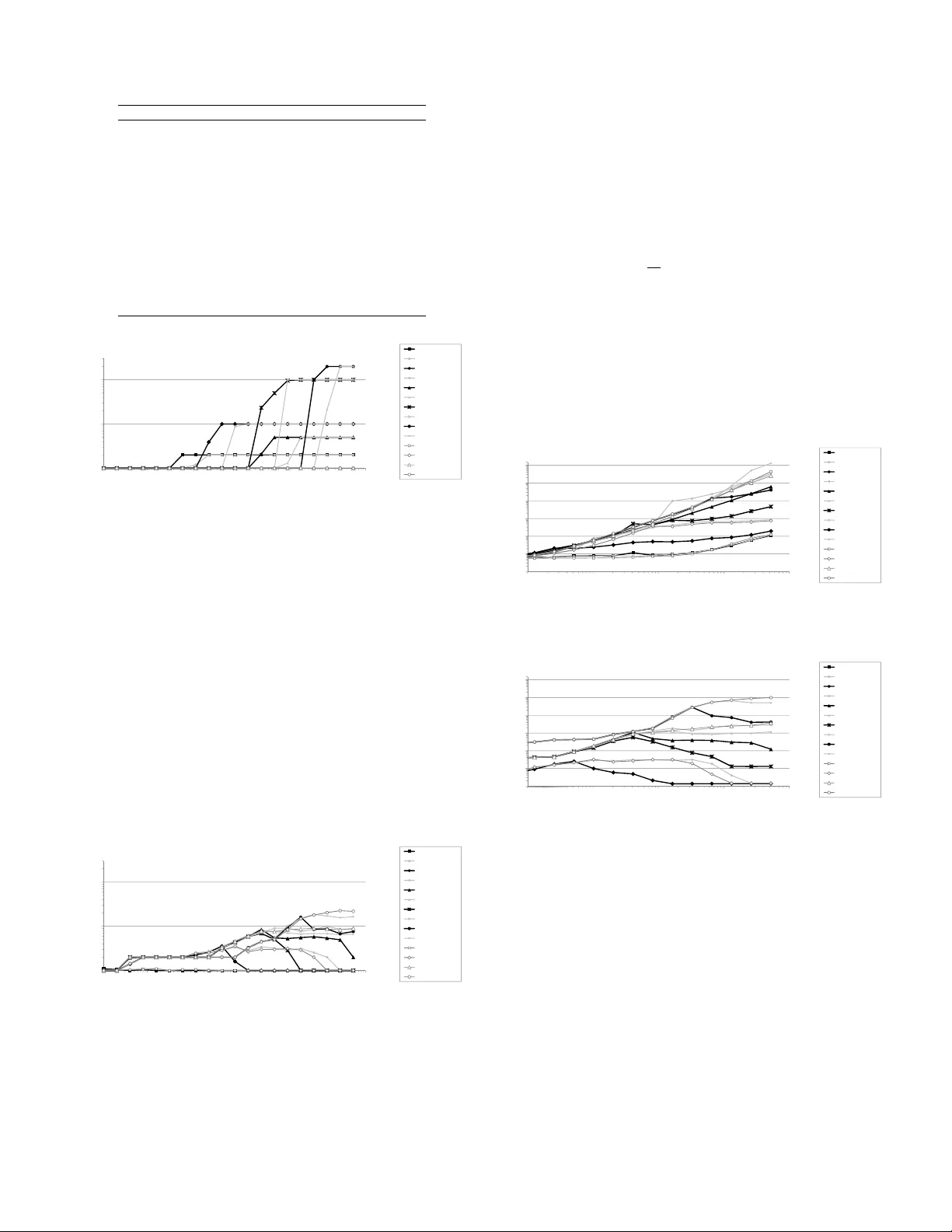

In this paper, we present a novel way to summarize the structure of large graphs, based on non-parametric estimation of edge density in directed multigraphs. Following a coclustering approach, we use a clustering of the vertices, with a piecewise const…
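To make the idea of a piecewise constant edge density concrete, here is a minimal sketch in Python. It is not the paper's estimation procedure. It assumes the clustering is already given and that one clustering is shared by source and target vertices. The names `block_edge_density`, `edges`, and `cluster_of` are hypothetical, introduced only for illustration. Each pair of clusters forms a block, and the estimate assigns every block the fraction of all edges that fall inside it.

```python
from collections import Counter


def block_edge_density(edges, cluster_of):
    """Piecewise constant edge density of a directed multigraph.

    edges: iterable of (source, target) pairs; repeated pairs are allowed,
        since the graph is a multigraph.
    cluster_of: dict mapping each vertex to its cluster label (assumed given).
    Returns {(source_cluster, target_cluster): fraction of all edges}.
    """
    # Count edges per (source cluster, target cluster) block.
    counts = Counter((cluster_of[s], cluster_of[t]) for s, t in edges)
    total = sum(counts.values())
    if total == 0:
        return {}
    # Normalize so the block values sum to 1 over the whole graph.
    return {block: n / total for block, n in counts.items()}


# Toy directed multigraph: the edge a -> b appears twice.
edges = [("a", "b"), ("a", "b"), ("b", "c"), ("c", "a")]
cluster_of = {"a": 0, "b": 0, "c": 1}  # hypothetical clustering
density = block_edge_density(edges, cluster_of)
```

In this toy example, two of the four edges fall inside the block (0, 0), so that block gets density 0.5, while blocks (0, 1) and (1, 0) each get 0.25. Choosing the clustering itself is the hard part and is not covered by this sketch.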

Authors: Marc Boulle