Tag-Weighted Topic Model For Large-scale Semi-Structured Documents

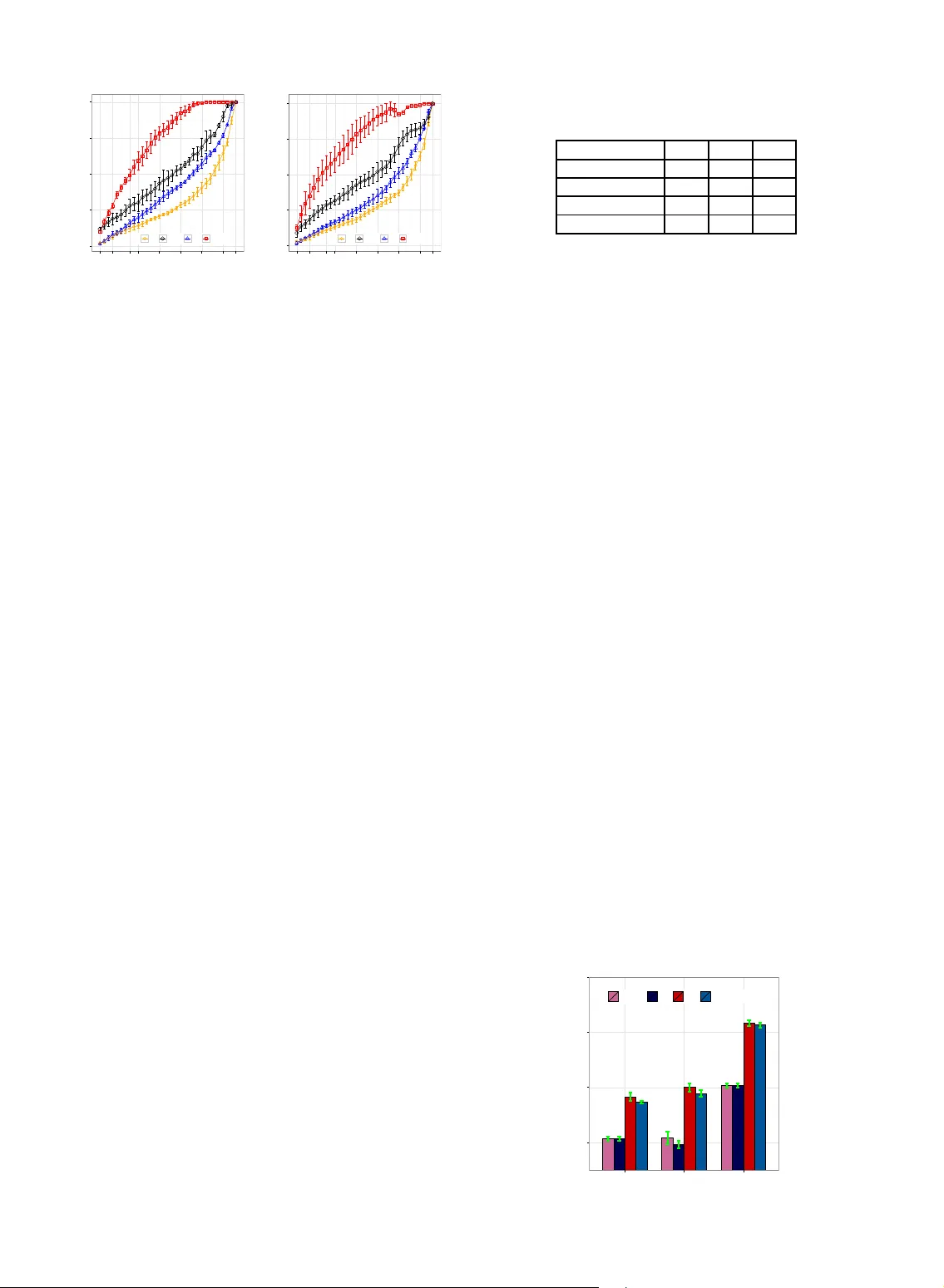

The growth of the Internet has produced a massive number of Semi-Structured Documents (SSDs). These SSDs contain both unstructured features (e.g., plain text) and metadata (e.g., tags). Most previous works focused on modeling the unstructur…

Authors: Shuangyin Li, Jiefei Li, Guan Huang