Robust Subjective Visual Property Prediction from Crowdsourced Pairwise Labels

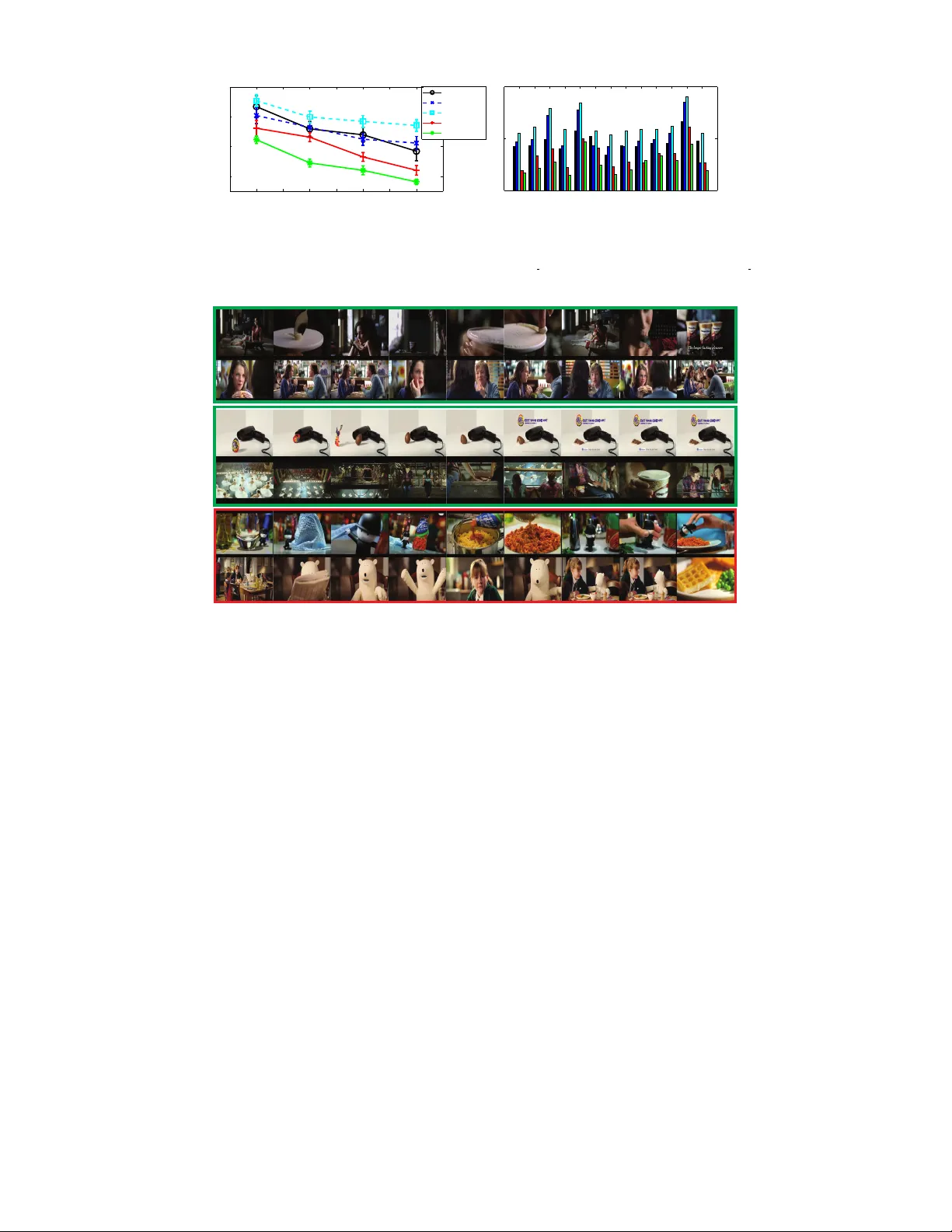

Estimating subjective visual properties from images and video has attracted increasing interest. A subjective visual property is useful either on its own (e.g., image and video interestingness) or as an intermediate representation for vi…

Authors: Yanwei Fu, Timothy M. Hospedales, Tao Xiang