Balancing the load: A Voronoi based scheme for parallel computations

The use of numerical simulations in science is ever increasing and with it the computational size. In many cases single processors are no longer adequate and simulations are run on multiple core machines or supercomputers. One of the key issues when running a simulation on multiple CPUs is maintaining a proper load balance throughout the run and minimizing communications between CPUs. We propose a novel method of utilizing a Voronoi diagram to achieve a nearly perfect load balance without the need of any global redistributions of data. As a show case, we implement our method in RICH, a 2D moving mesh hydrodynamical code, but it can be extended trivially to other codes in 2D or 3D. Our tests show that this method is indeed efficient and can be used in a large variety of existing hydrodynamical codes as well as other applications.

💡 Research Summary

The paper addresses a fundamental challenge in large‑scale scientific simulations: maintaining an even computational load across many processors while keeping inter‑processor communication to a minimum. Traditional domain‑decomposition techniques work well for static, uniform grids but become inefficient for dynamic methods such as Smoothed Particle Hydrodynamics (SPH), Adaptive Mesh Refinement (AMR), or moving‑mesh codes, where the number of cells or particles per sub‑domain changes continuously. The authors propose a novel, Voronoi‑based parallelisation scheme that eliminates the need for global data redistribution.

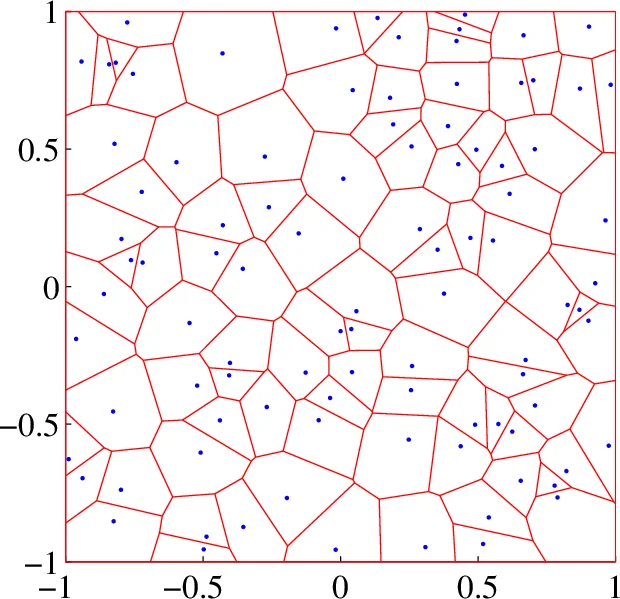

The core idea is to introduce a set of “CPU mesh points” – one per processor – whose positions define a Voronoi diagram over the computational domain. Each Voronoi cell is assigned to a single processor, and all hydrodynamic points (particles, cells, or mesh vertices) that lie inside that cell are stored locally. At the beginning of each time step the algorithm checks which hydrodynamic points have crossed a Voronoi boundary and transfers them to the appropriate neighboring processor. Because a Voronoi cell in two dimensions typically has six neighbours (on average twelve communication partners when counting mutual neighbours), the amount of data exchanged per step scales as O(√N) in 2‑D and O(N^{2/3}) in 3‑D, where N is the number of points per processor. For sufficiently large N this communication cost becomes a negligible fraction of the total runtime.

Load balancing is achieved through a “Pressure Balancing Scheme”. Each processor computes its current load M_i (the number of hydrodynamic points it owns) and compares it to the ideal load M_best = (total points)/(number of processors). The positions of the CPU mesh points are then updated according to

Δx_i = M_best ∑_j (x_i – x_j)

Comments & Academic Discussion

Loading comments...

Leave a Comment