Improving self-calibration

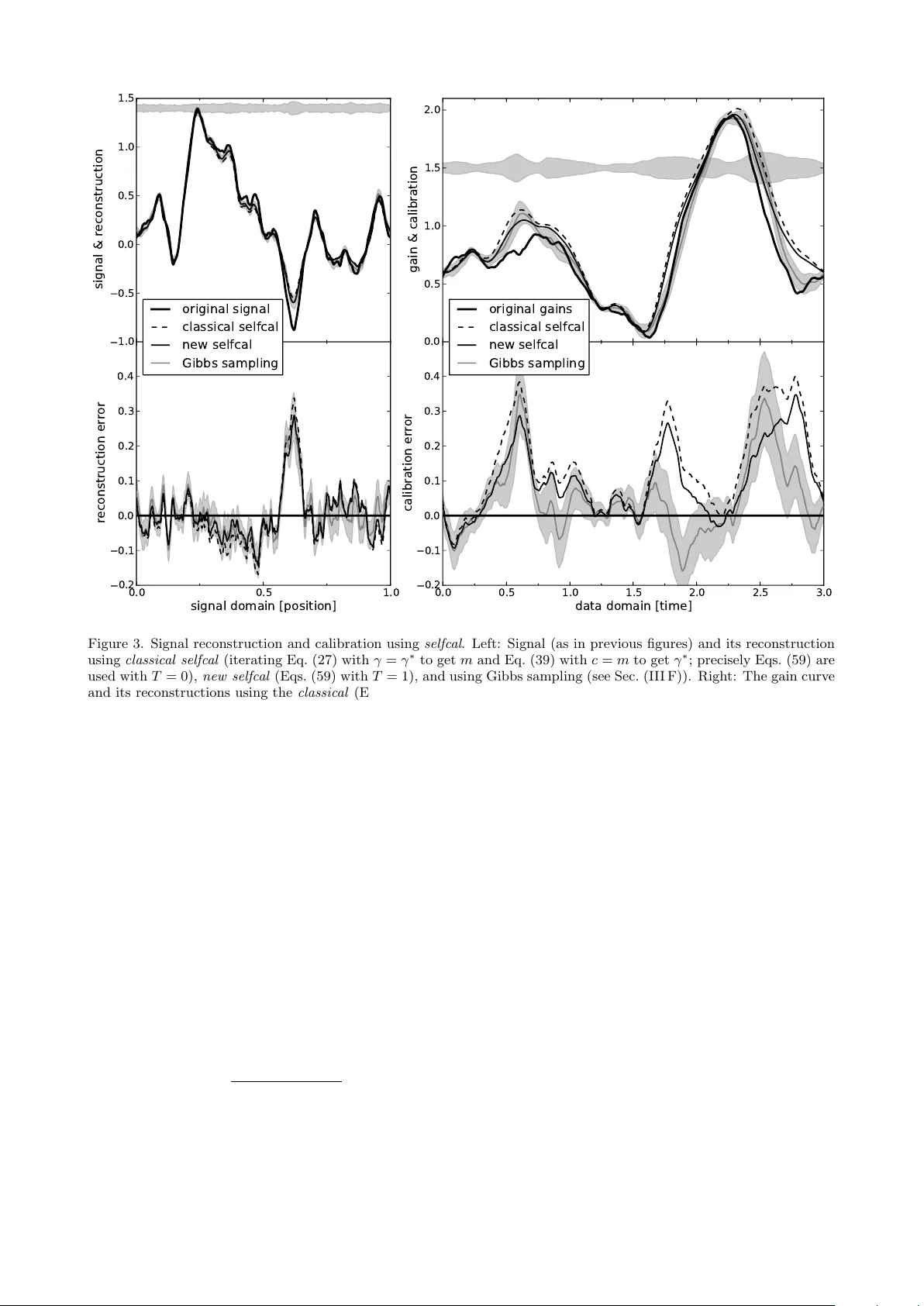

Response calibration is the process of inferring how much the measured data depend on the signal one is interested in. It is essential for any quantitative signal estimation on the basis of the data. Here, we investigate self-calibration methods for …

Authors: Torsten A. Enßlin, Henrik Junklewitz, Lars Winderling