On Symmetric and Asymmetric LSHs for Inner Product Search

We consider the problem of designing locality sensitive hashes (LSH) for inner product similarity, and of the power of asymmetric hashes in this context. Shrivastava and Li argue that there is no symmetric LSH for the problem and propose an asymmetri…

Authors: Behnam Neyshabur, Nathan Srebro

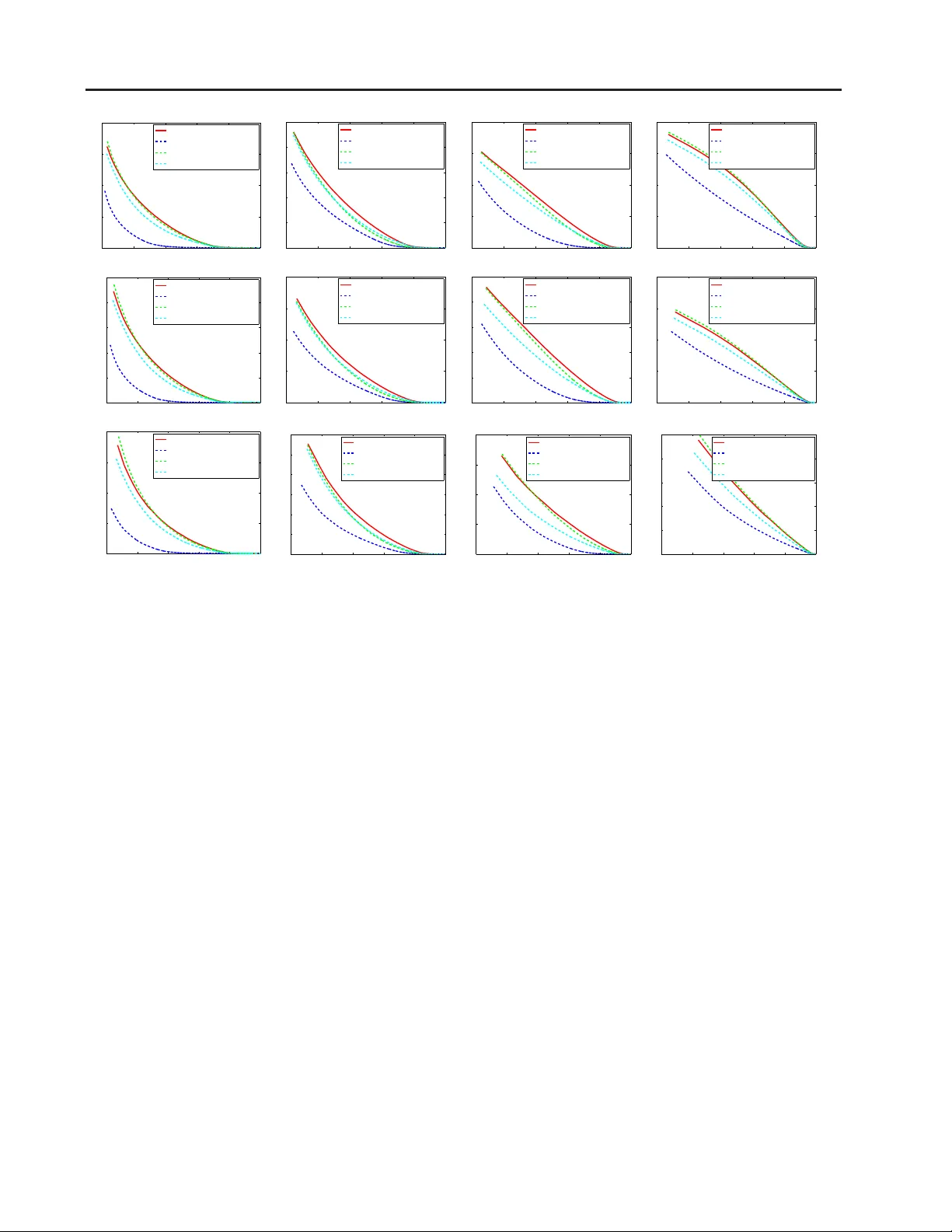

Behnam Neyshabur (BNEYSHABUR@TTIC.EDU)
Nathan Srebro (NATI@TTIC.EDU)
Toyota Technological Institute at Chicago, Chicago, IL 60637, USA

Proceedings of the 31st International Conference on Machine Learning, Lille, France, 2015. JMLR: W&CP volume 37. Copyright 2015 by the author(s).

Abstract

We consider the problem of designing locality sensitive hashes (LSH) for inner product similarity, and of the power of asymmetric hashes in this context. Shrivastava and Li (2014a) argue that there is no symmetric LSH for the problem and propose an asymmetric LSH based on different mappings for query and database points. However, we show there does exist a simple symmetric LSH that enjoys stronger guarantees and better empirical performance than the asymmetric LSH they suggest. We also show a variant of the settings where asymmetry is in fact needed, but there a different asymmetric LSH is required.

1. Introduction

Following Shrivastava and Li (2014a), we consider the problem of Maximum Inner Product Search (MIPS): given a collection of "database" vectors $\mathcal{S} \subset \mathbb{R}^d$ and a query $q \in \mathbb{R}^d$, find a data vector maximizing the inner product with the query:

$$p = \arg\max_{x \in \mathcal{S}} q^\top x \qquad (1)$$

MIPS problems of the form (1) arise, e.g., when using matrix-factorization based recommendation systems (Koren et al., 2009; Srebro et al., 2005; Cremonesi et al., 2010), in multi-class prediction (Dean et al., 2013; Jain et al., 2009) and structural SVM (Joachims, 2006; Joachims et al., 2009) problems, and in vision problems when scoring filters based on their activations (Dean et al., 2013) (see Shrivastava and Li, 2014a, for more about MIPS). In order to efficiently find approximate MIPS solutions, Shrivastava and Li (2014a) suggest constructing a Locality Sensitive Hash (LSH) for inner product "similarity".
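As a point of reference for the hashing schemes discussed in this paper, problem (1) can always be solved exactly by a linear scan over the database. The following is a minimal sketch in plain Python and is not part of the original paper; the function names and the toy vectors are illustrative assumptions. It also shows that the MIPS answer need not be the database vector closest to the query in distance or direction, since vectors of large norm are favored.

```python
from typing import List, Sequence


def inner(u: Sequence[float], v: Sequence[float]) -> float:
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def mips_brute_force(database: List[Sequence[float]], q: Sequence[float]) -> int:
    """Index of the database vector maximizing q^T x, by exact linear scan."""
    return max(range(len(database)), key=lambda i: inner(q, database[i]))


# Toy database (hypothetical values chosen for illustration only).
database = [(1.0, 0.0), (0.6, 0.8), (3.0, 0.1)]

# Inner products with q = (0, 1) are 0.0, 0.8 and 0.1.
best_for_q1 = mips_brute_force(database, (0.0, 1.0))

# For q = (1, 0), the first vector coincides with the query, yet the
# third vector has the larger inner product (3.0 versus 1.0).
best_for_q2 = mips_brute_force(database, (1.0, 0.0))
```

The scan costs time linear in the number of database vectors per query, which is the cost that an LSH-based index aims to avoid.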
Locality Sensitive Hashing (Indyk and Motwani, 1998) is a popular tool for approximate nearest neighbor search and is also widely used in other settings (Gionis et al., 1999; Datar et al., 2004; Charikar, 2002). An LSH is a random mapping $h(\cdot)$ from objects to a small, possibly binary, alphabet, where collision probabilities $\mathbb{P}[h(x) = h(y)]$ relate to the desired notion of similarity $\mathrm{sim}(x, y)$. An LSH can in turn be used to generate short hash words such that Hamming distances between hash words correspond to similarity between objects. Recent studies have also explored the power of asymmetry in LSH and binary hashing, where two different mappings $f(\cdot)$, $g(\cdot)$ are used to approximate similarity, $\mathrm{sim}(x, y) \approx \mathbb{P}[f(x) = g(y)]$ (Neyshabur et al., 2013; 2014). Neyshabur et al. showed that even when the similarity $\mathrm{sim}(x, y)$ is entirely symmetric, asymmetry in the hash may enable obtaining an LSH when a symmetric LSH is not possible, or enable obtaining a much better LSH yielding shorter and more accurate hashes.

Several tree-based methods have also been proposed for inner product search (Ram and Gray, 2012; Koenigstein et al., 2012; Curtin et al., 2013). Shrivastava and Li (2014a) argue that tree-based methods, such as cone trees, are impractical in high dimensions while the performance of LSH-based methods is in a way independent of the dimension of the data. Although the exact regimes under which LSH-based methods are superior to tree-based methods and vice versa are not fully established yet, the goal of this paper is to analyze different LSH methods and compare them with each other, rather than comparing to tree-based methods, so as to understand which LSH to use and why, in those regimes where tree-based methods are not practical.
Considering MIPS, Shrivastava and Li (2014a) argue that there is no symmetric LSH for inner product similarity, and propose two distinct mappings, one for database objects and the other for queries, which yields an asymmetric LSH for MIPS. But the caveat is that they consider different spaces in their positive and negative results: they show nonexistence of a symmetric LSH over the entire space $\mathbb{R}^d$, but their asymmetric LSH is only valid when queries are normalized and data vectors are bounded. Thus, they do not actually show a situation where an asymmetric hash succeeds where a symmetric hash is not possible. In fact, in Section 4 we show a simple symmetric LSH that is also valid under the same assumptions, and it even enjoys improved theoretical guarantees and empirical performance! This suggests that asymmetry might actually not be required nor helpful for MIPS.

Motivated by understanding the power of asymmetry, and using this understanding to obtain the simplest and best possible LSH for MIPS, we conduct a more careful study of LSH for inner product similarity. A crucial issue here is what is the space of vectors over which we would like our LSH to be valid. First, we show that over the entire space $\mathbb{R}^d$, not only is there no symmetric LSH, but there is also no asymmetric LSH either (Section 3). Second, as mentioned above, when queries are normalized and data is bounded, a symmetric LSH is possible and there is no need for asymmetry. But when queries and data vectors are bounded and queries are not normalized, we do observe the power of asymmetry: here, a symmetric LSH is not possible, but an asymmetric LSH exists (Section 5).
As mentioned above, our study also yields an LSH for MIPS, which we refer to as SIMPLE-LSH, which is not only symmetric but also parameter-free and enjoys significantly better theoretical and empirical properties compared to L2-ALSH(SL) proposed by Shrivastava and Li (2014a). In Appendix A we show that all of our theoretical observations about L2-ALSH(SL) apply also to the alternative hash SIGN-ALSH(SL) put forth by Shrivastava and Li (2014b).

The transformation at the root of SIMPLE-LSH was also recently proposed by Bachrach et al. (2014), who used it in a PCA-Tree data structure for speeding up the Xbox recommender system. Here, we study the transformation as part of an LSH scheme, investigate its theoretical properties, and compare it to L2-ALSH(SL).

2. Locality Sensitive Hashing

A hash of a set $\mathcal{Z}$ of objects is a random mapping from $\mathcal{Z}$ to some alphabet $\Gamma$, i.e. a distribution over functions $h : \mathcal{Z} \to \Gamma$. The hash is sometimes thought of as a "family" of functions, where the distribution over the family is implicit.

When studying hashes, we usually study the behavior when comparing any two points $x, y \in \mathcal{Z}$. However, for our study here, it will be important for us to make different assumptions about $x$ and $y$; e.g., we will want to assume w.l.o.g. that queries are normalized but will not be able to make the same assumptions on database vectors. To this end, we define what it means for a hash to be an LSH over a pair of constrained subspaces $\mathcal{X}, \mathcal{Y} \subseteq \mathcal{Z}$. Given a similarity function $\mathrm{sim} : \mathcal{Z} \times \mathcal{Z} \to \mathbb{R}$, such as inner product similarity $\mathrm{sim}(x, y) = x^\top y$, an LSH is defined as follows¹:

Definition 1 (Locality Sensitive Hashing (LSH)).
A hash is said to be an $(S, cS, p_1, p_2)$-LSH for a similarity function $\mathrm{sim}$ over the pair of spaces $\mathcal{X}, \mathcal{Y} \subseteq \mathcal{Z}$ if for any $x \in \mathcal{X}$ and $y \in \mathcal{Y}$:

• if $\mathrm{sim}(x, y) \ge S$ then $\mathbb{P}_h[h(x) = h(y)] \ge p_1$,
• if $\mathrm{sim}(x, y) \le cS$ then $\mathbb{P}_h[h(x) = h(y)] \le p_2$.

When $\mathcal{X} = \mathcal{Y}$, we say simply "over the space $\mathcal{X}$".

Here $S > 0$ is a threshold of interest, and for efficient approximate nearest neighbor search, we need $p_1 > p_2$ and $c < 1$. In particular, given an $(S, cS, p_1, p_2)$-LSH, a data structure for finding $S$-similar objects for query points when $cS$-similar objects exist in the database can be constructed in time $O(n^{\rho} \log n)$ and space $O(n^{1+\rho})$ where $\rho = \frac{\log p_1}{\log p_2}$. This quantity $\rho$ is therefore of particular interest, as we are interested in an LSH with minimum possible $\rho$, and we refer to it as the hashing quality.

In Definition 1, the hash itself is still symmetric, i.e. the same function $h$ is applied to both $x$ and $y$. The only asymmetry allowed is in the problem definition, as we allow requiring the property for differently constrained $x$ and $y$. This should be contrasted with a truly asymmetric hash, where two different functions are used, one for each space. Formally, an asymmetric hash for a pair of spaces $\mathcal{X}$ and $\mathcal{Y}$ is a joint distribution over pairs of mappings $(f, g)$, $f : \mathcal{X} \to \Gamma$, $g : \mathcal{Y} \to \Gamma$. The asymmetric hashes we consider will be specified by a pair of deterministic mappings $P : \mathcal{X} \to \mathcal{Z}$ and $Q : \mathcal{Y} \to \mathcal{Z}$ and a single random mapping (i.e. distribution over functions) $h : \mathcal{Z} \to \Gamma$, where $f(x) = h(P(x))$ and $g(y) = h(Q(y))$. Given a similarity function $\mathrm{sim} : \mathcal{X} \times \mathcal{Y} \to \mathbb{R}$ we define:

Definition 2 (Asymmetric Locality Sensitive Hashing (ALSH)).
An asymmetric hash is said to be an $(S, cS, p_1, p_2)$-ALSH for a similarity function $\mathrm{sim}$ over $\mathcal{X}, \mathcal{Y}$ if for any $x \in \mathcal{X}$ and $y \in \mathcal{Y}$:

• if $\mathrm{sim}(x, y) \ge S$ then $\mathbb{P}_{(f,g)}[f(x) = g(y)] \ge p_1$,
• if $\mathrm{sim}(x, y) \le cS$ then $\mathbb{P}_{(f,g)}[f(x) = g(y)] \le p_2$.

Referring to either of the above definitions, we also say that a hash is an $(S, cS)$-LSH (or ALSH) if there exist $p_1 > p_2$ such that it is an $(S, cS, p_1, p_2)$-LSH (or ALSH). And we say it is a universal LSH (or ALSH) if for every $S > 0$, $0 < c < 1$ it is an $(S, cS)$-LSH (or ALSH).

¹ This is a formalization of the definition given by Shrivastava and Li (2014a), which in turn is a modification of the definition of LSH for distance functions (Indyk and Motwani, 1998), where we also allow different constraints on $x$ and $y$. Even though inner product similarity could be negative, this definition is only concerned with the positive values.

3. No ALSH over $\mathbb{R}^d$

Considering the problem of finding an LSH for inner product similarity, Shrivastava and Li (2014a) first observe that for any $S > 0$, $0 < c < 1$, there is no symmetric $(S, cS)$-LSH for $\mathrm{sim}(x, y) = x^\top y$ over the entire space $\mathcal{X} = \mathbb{R}^d$, which prompted them to consider asymmetric hashes. In fact, we show that asymmetry doesn't help here, as there also isn't any ALSH over the entire space:

Theorem 3.1. For any $d \ge 2$, $S > 0$ and $0 < c < 1$ there is no asymmetric hash that is an $(S, cS)$-ALSH for inner product similarity over $\mathcal{X} = \mathcal{Y} = \mathbb{R}^d$.

Proof. Assume for contradiction there exists some $S > 0$, $0 < c < 1$ and $p_1 > p_2$ for which there exists an $(S, cS, p_1, p_2)$-ALSH $(f, g)$ for inner product similarity over $\mathbb{R}^2$ (an ALSH for inner products over $\mathbb{R}^d$, $d > 2$, is also an ALSH for inner products over a two-dimensional subspace, i.e. over $\mathbb{R}^2$, and so it is enough to consider $\mathbb{R}^2$).
Consider the following two sequences of points:

$$x_i = [-i,\ 1], \qquad y_j = [S(1-c),\ S(1-c)\,j + S].$$

For any $N$ (to be set later), define the $N \times N$ matrix $Z$ as follows:

$$Z(i, j) = \begin{cases} 1 & x_i^\top y_j \ge S \\ -1 & x_i^\top y_j \le cS \\ 0 & \text{otherwise.} \end{cases} \qquad (2)$$

Because of the choice of $x_i$ and $y_j$, we have $x_i^\top y_j = S + S(1-c)(j - i)$, so the matrix $Z$ does not actually contain zeros, and is in fact triangular with $+1$ on and above the diagonal and $-1$ below it. Consider also the matrix $P \in \mathbb{R}^{N \times N}$ of collision probabilities $P(i, j) = \mathbb{P}_{(f,g)}[f(x_i) = g(y_j)]$. Setting $\theta = (p_1 + p_2)/2 < 1$ and $\epsilon = (p_1 - p_2)/2 > 0$, the ALSH property implies that for every $i, j$:

$$Z(i, j)\,(P(i, j) - \theta) \ge \epsilon \qquad (3)$$

or equivalently:

$$Z \odot \frac{P - \theta}{\epsilon} \ge 1 \qquad (4)$$

where $\odot$ denotes the element-wise (Hadamard) product. Now, for a sign matrix $Z$, the margin complexity of $Z$ is defined as $\mathrm{mc}(Z) = \inf_{Z \odot X \ge 1} \|X\|_{\max}$ (see Srebro and Shraibman, 2005, and also for the definition of the max-norm $\|X\|_{\max}$), and we know that the margin complexity of an $N \times N$ triangular matrix is bounded by $\mathrm{mc}(Z) = \Omega(\log N)$ (Forster et al., 2003), implying

$$\|(P - \theta)/\epsilon\|_{\max} = \Omega(\log N). \qquad (5)$$

Furthermore, any collision probability matrix has max-norm $\|P\|_{\max} \le 1$ (Neyshabur et al., 2014), and shifting the matrix by $0 < \theta < 1$ changes the max-norm by at most $\theta$, implying $\|P - \theta\|_{\max} \le 2$, which combined with (5) implies $\epsilon = O(1/\log N)$. For any $\epsilon = (p_1 - p_2)/2 > 0$, selecting a large enough $N$ we get a contradiction.

For completeness, we also include in Appendix B a full definition of the max-norm and margin complexity, as well as the bounds on the max-norm and margin complexity used in the proof above.

4. Maximum Inner Product Search

We saw that no LSH, nor ALSH, is possible for inner product similarity over the entire space $\mathbb{R}^d$. Fortunately, this is not required for MIPS.
As pointed out by Shrivastava and Li (2014a), we can assume the following without loss of generality:

• The query $q$ is normalized: Since given a vector $q$, the norm $\|q\|$ does not affect the argmax in (1), we can assume $\|q\| = 1$ always.
• The database vectors are bounded inside the unit sphere: We assume $\|x\| \le 1$ for all $x \in \mathcal{S}$. Otherwise we can rescale all vectors without changing the argmax.

We cannot, of course, assume the vectors $x$ are normalized. This means we can limit our attention to the behavior of the hash over $\mathcal{X}_\bullet = \{x \in \mathbb{R}^d \mid \|x\| \le 1\}$ and $\mathcal{Y}_\circ = \{q \in \mathbb{R}^d \mid \|q\| = 1\}$. Indeed, Shrivastava and Li (2014a) establish the existence of an asymmetric LSH, which we refer to as L2-ALSH(SL), over this pair of database and query spaces. Our main result in this section is to show that in fact there does exist a simple, parameter-free, universal, symmetric LSH, which we refer to as SIMPLE-LSH, over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$. We see then that we do need to consider the hashing property asymmetrically (with different assumptions for queries and database vectors), but the same hash function can be used for both the database and the queries and there is no need for two different hash functions or two different mappings $P(\cdot)$ and $Q(\cdot)$.

But first, we review L2-ALSH(SL) and note that it is not universal: it depends on three parameters and no setting of the parameters works for all thresholds $S$. We also compare our SIMPLE-LSH to L2-ALSH(SL) (and to the recently suggested SIGN-ALSH(SL)) both in terms of the hashing quality $\rho$ and empirically on movie recommendation data sets.

4.1. L2-ALSH(SL)

For an integer parameter $m$, and real valued parameters $0 < U < 1$ and $r > 0$, consider the following pair of mappings:

$$P(x) = [\,Ux;\ \|Ux\|^2;\ \|Ux\|^4;\ \ldots;\ \|Ux\|^{2^m}\,], \qquad Q(y) = [\,y;\ 1/2;\ 1/2;\ \ldots;\ 1/2\,], \qquad (6)$$

combined with the standard $L_2$ hash function

$$h^{L_2}_{a,b}(x) = \left\lfloor \frac{a^\top x + b}{r} \right\rfloor \qquad (7)$$

where $a \sim \mathcal{N}(0, I)$ is a spherical multivariate Gaussian random vector and $b \sim \mathcal{U}(0, r)$ is a uniformly distributed random variable on $[0, r]$. The alphabet $\Gamma$ used is the integers, the intermediate space is $\mathcal{Z} = \mathbb{R}^{d+m}$ and the asymmetric hash L2-ALSH(SL), parameterized by $m$, $U$ and $r$, is then given by

$$(f(x),\ g(q)) = \left(h^{L_2}_{a,b}(P(x)),\ h^{L_2}_{a,b}(Q(q))\right). \qquad (8)$$

Shrivastava and Li (2014a) establish² that for any $0 < c < 1$ and $0 < S < 1$, there exists $0 < U < 1$, $r > 0$, $m \ge 1$, such that L2-ALSH(SL) is an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$. They furthermore calculate the hashing quality $\rho$ as a function of $m$, $U$ and $r$, and numerically find the optimal $\rho$ over a grid of possible values for $m$, $U$ and $r$, for each choice of $S, c$.

Before moving on to presenting a symmetric hash for the problem, we note that L2-ALSH(SL) is not universal (as defined at the end of Section 2). That is, not only might the optimal $m$, $U$ and $r$ depend on $S, c$, but in fact there is no choice of the parameters $m$ and $U$ that yields an ALSH for all $S, c$, or even for all ratios $c$ for some specific threshold $S$ or for all thresholds $S$ for some specific ratio $c$. This is unfortunate, since in MIPS problems, the relevant threshold $S$ is the maximal inner product $\max_{x \in \mathcal{S}} q^\top x$ (or the threshold inner product if we are interested in the "top-$k$" hits), which typically varies with the query. It is therefore desirable to have a single hash that works for all thresholds.

Lemma 1. For any $m, U, r$, and for any $0 < S < 1$ and

$$1 - \frac{U^{2^{m+1}-1}\left(1 - S^{2^{m+1}}\right)}{2S} \le c < 1,$$

L2-ALSH(SL) is not an $(S, cS)$-ALSH for inner product similarity over $\mathcal{X}_\bullet = \{x \mid \|x\| \le 1\}$ and $\mathcal{Y}_\circ = \{q \mid \|q\| = 1\}$.

Proof.
Assume for contradiction that it is an $(S, cS)$-ALSH. For any query point $q \in \mathcal{Y}_\circ$, let $x \in \mathcal{X}_\bullet$ be a vector s.t. $q^\top x = S$ and $\|x\|_2 = 1$ and let $y = cS\,q$, so that $q^\top y = cS$. We have that:

$$p_1 \le \mathbb{P}\left[h^{L_2}_{a,b}(P(x)) = h^{L_2}_{a,b}(Q(q))\right] = F_r(\|P(x) - Q(q)\|_2)$$
$$p_2 \ge \mathbb{P}\left[h^{L_2}_{a,b}(P(y)) = h^{L_2}_{a,b}(Q(q))\right] = F_r(\|P(y) - Q(q)\|_2)$$

where $F_r(\delta)$ is a monotonically decreasing function of $\delta$ (Datar et al., 2004). To get a contradiction it is therefore enough to show that $\|P(y) - Q(q)\|^2 \le \|P(x) - Q(q)\|^2$. We have:

$$\begin{aligned}
\|P(y) - Q(q)\|^2 &= 1 + \frac{m}{4} + \|Uy\|^{2^{m+1}} - 2U q^\top y \\
&= 1 + \frac{m}{4} + (cSU)^{2^{m+1}} - 2cSU \\
&< 1 + \frac{m}{4} + (SU)^{2^{m+1}} - 2cSU \\
&\le 1 + \frac{m}{4} + U^{2^{m+1}} - 2SU \\
&= \|P(x) - Q(q)\|^2,
\end{aligned}$$

where both inequalities use $1 - \frac{U^{2^{m+1}-1}(1 - S^{2^{m+1}})}{2S} \le c < 1$: the strict inequality uses $c < 1$ and the last inequality uses the lower bound on $c$.

² Shrivastava and Li (2014a) have the scaling by $U$ as a separate step, and state their hash as an $(S_0, cS_0)$-ALSH over $\{\|x\| \le U\}, \{\|q\| = 1\}$, where the threshold $S_0 = US$ is also scaled by $U$. This is equivalent to the presentation here which integrates the pre-scaling step, which also scales the threshold, into the hash.

Corollary 4.1. For any $U$, $m$ and $r$, L2-ALSH(SL) is not a universal ALSH for inner product similarity over $\mathcal{X}_\bullet = \{x \mid \|x\| \le 1\}$ and $\mathcal{Y}_\circ = \{q \mid \|q\| = 1\}$. Furthermore, for any $c < 1$, and any choice of $U, m, r$ there exists $0 < S < 1$ for which L2-ALSH(SL) is not an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$, and for any $S < 1$ and any choice of $U, m, r$ there exists $0 < c < 1$ for which L2-ALSH(SL) is not an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$.

In Appendix A, we show a similar non-universality result also for SIGN-ALSH(SL).

4.2. SIMPLE-LSH

We propose here a simpler, parameter-free, symmetric LSH, which we call SIMPLE-LSH.

For $x \in \mathbb{R}^d$, $\|x\| \le 1$, define $P(x) \in \mathbb{R}^{d+1}$ as follows (Bachrach et al., 2014):

$$P(x) = \left[\,x;\ \sqrt{1 - \|x\|_2^2}\,\right] \qquad (9)$$

For any $x \in \mathcal{X}_\bullet$ we have $\|P(x)\| = 1$, and for any $q \in \mathcal{Y}_\circ$, since $\|q\| = 1$, we have:

$$P(q)^\top P(x) = \left[\,q;\ 0\,\right]^\top \left[\,x;\ \sqrt{1 - \|x\|_2^2}\,\right] = q^\top x \qquad (10)$$

Now, to define the hash SIMPLE-LSH, take a spherical random vector $a \sim \mathcal{N}(0, I)$ and consider the following random mapping into the binary alphabet $\Gamma = \{\pm 1\}$:

$$h_a(x) = \mathrm{sign}(a^\top P(x)). \qquad (11)$$

Figure 1. The optimal hashing quality $\rho^*$ for different hashes (lower is better). [Six panels, for S = 0.3, 0.5, 0.7, 0.9, 0.99 and 0.999; horizontal axis: $c$; vertical axis: $\rho^*$; curves: SIMPLE-LSH, L2-ALSH(SL), SIGN-ALSH(SL).]

Theorem 4.2. SIMPLE-LSH given in (11) is a universal LSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$. That is, for every $0 < S < 1$ and $0 < c < 1$, it is an $(S, cS)$-LSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$. Furthermore, it has hashing quality:

$$\rho = \frac{\log\left(1 - \frac{\cos^{-1}(S)}{\pi}\right)}{\log\left(1 - \frac{\cos^{-1}(cS)}{\pi}\right)}.$$

Proof. For any $x \in \mathcal{X}_\bullet$ and $q \in \mathcal{Y}_\circ$ we have (Goemans and Williamson, 1995):

$$\mathbb{P}[h_a(P(q)) = h_a(P(x))] = 1 - \frac{\cos^{-1}(q^\top x)}{\pi}. \qquad (12)$$

Therefore:

• if $q^\top x \ge S$, then $\mathbb{P}[h_a(P(q)) = h_a(P(x))] \ge 1 - \frac{\cos^{-1}(S)}{\pi}$
• if $q^\top x \le cS$, then $\mathbb{P}[h_a(P(q)) = h_a(P(x))] \le 1 - \frac{\cos^{-1}(cS)}{\pi}$

Since for any $0 \le x \le 1$, $1 - \frac{\cos^{-1}(x)}{\pi}$ is a monotonically increasing function, this gives us an LSH.

4.3. Theoretical Comparison

Earlier we discussed that an LSH with the smallest possible hashing quality $\rho$ is desirable.
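The transformation (9), the hash (11), the collision probability (12) and the hashing quality of Theorem 4.2 can be sketched in a few lines. The following plain-Python code is an illustration only, not the authors' implementation; the function names, the Monte Carlo check and its parameters (`trials`, `seed`) are assumptions introduced here.

```python
import math
import random
from typing import List, Sequence


def simple_lsh_transform(x: Sequence[float]) -> List[float]:
    """P(x) = [x; sqrt(1 - ||x||^2)] from equation (9); requires ||x|| <= 1."""
    sq_norm = sum(v * v for v in x)
    if sq_norm > 1.0 + 1e-12:
        raise ValueError("SIMPLE-LSH assumes ||x|| <= 1")
    return list(x) + [math.sqrt(max(0.0, 1.0 - sq_norm))]


def sign_hash(a: Sequence[float], z: Sequence[float]) -> int:
    """sign(a^T z) in {-1, +1}, applied to z = P(x); zero is mapped to +1."""
    return 1 if sum(ai * zi for ai, zi in zip(a, z)) >= 0 else -1


def collision_probability(s: float) -> float:
    """1 - arccos(s) / pi, the right-hand side of equation (12)."""
    return 1.0 - math.acos(s) / math.pi


def hashing_quality(S: float, c: float) -> float:
    """rho = log p1 / log p2 with p1, p2 as in Theorem 4.2."""
    return math.log(collision_probability(S)) / math.log(collision_probability(c * S))


def empirical_collision_rate(q, x, trials: int = 20000, seed: int = 0) -> float:
    """Monte Carlo estimate of P[h_a(q) = h_a(x)] over Gaussian vectors a."""
    rng = random.Random(seed)
    pq, px = simple_lsh_transform(q), simple_lsh_transform(x)
    hits = 0
    for _ in range(trials):
        a = [rng.gauss(0.0, 1.0) for _ in pq]
        if sign_hash(a, pq) == sign_hash(a, px):
            hits += 1
    return hits / trials


q = (1.0, 0.0)   # unit-norm query
x = (0.5, 0.3)   # database vector with norm below 1, q^T x = 0.5
estimate = empirical_collision_rate(q, x)
predicted = collision_probability(0.5)   # equals 2/3
rho = hashing_quality(0.5, 0.5)
```

With these toy inputs the Monte Carlo estimate should agree with the value predicted by (12) up to sampling error, and the resulting $\rho$ lies strictly between 0 and 1.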
In this section, we compare the best achievable hashing quality and show that SIMPLE-LSH allows for much better hashing quality compared to L2-ALSH(SL), as well as compared to the improved hash SIGN-ALSH(SL).

For L2-ALSH(SL) and SIGN-ALSH(SL), for each desired threshold $S$ and ratio $c$, one can optimize over the parameters $m$ and $U$, and for L2-ALSH(SL) also $r$, to find the hash with the best $\rho$. This is a non-convex optimization problem and Shrivastava and Li (2014a) suggest using grid search to find a bound on the optimal $\rho$. We followed the procedure, and grid, as suggested by Shrivastava and Li (2014a)³. For SIMPLE-LSH no parameters need to be tuned, and for each $S, c$ the hashing quality is given by Theorem 4.2. In Figure 1 we compare the optimal hashing quality $\rho$ for the three methods, for different values of $S$ and $c$. It is clear that SIMPLE-LSH dominates the other methods.

³ We actually used a slightly tighter bound: a careful analysis shows the denominator in equation 19 of Shrivastava and Li (2014a) can be $\log F_r\left(\sqrt{1 + m/2 - 2cSU + (cSU)^{2^{m+1}}}\right)$.

4.4. Empirical Evaluation

We also compared the hash functions empirically, following the exact same protocol as Shrivastava and Li (2014a), using two collaborative filtering datasets, Netflix and Movielens 10M.

For a given user-item matrix $Z$, we followed the pureSVD procedure suggested by Cremonesi et al. (2010): we first subtracted the overall average rating from each individual rating and created the matrix $Z$ with these average-subtracted ratings for observed entries and zeros for unobserved entries. We then take a rank-$f$ approximation (top $f$ singular components, $f = 150$ for Movielens and $f = 300$ for Netflix) $Z \approx W \Sigma R^\top = Y$ and define $L = W\Sigma$ so that $Y = LR^\top$. We can think of each row of $L$ as the vector representation of a user and each row of $R$ as the representation of an item.

The database $\mathcal{S}$ consists of all rows $R_j$ of $R$ (corresponding to movies) and we use each row $L_i$ of $L$ (corresponding to users) as a query. That is, for each user $i$ we would like to find the top $T$ movies, i.e. the $T$ movies with highest $\langle L_i, R_j \rangle$, for different values of $T$.

To do so, for each hash family, we generate hash codes of length $K$, for varying lengths $K$, for all movies and a random selection of 60000 users (queries). For each user, we sort movies in ascending order of Hamming distance between the user hash and movie hash, breaking up ties randomly. For each of several values of $T$ and $K$ we calculate precision-recall curves for recalling the top $T$ movies, averaging the precision-recall values over the 60000 randomly selected users.

In Figures 2 and 3, we plot precision-recall curves of retrieving top $T$ items by hash code of length $K$ for the Netflix and Movielens datasets where $T \in \{1, 5, 10\}$ and $K \in \{64, 128, 256, 512\}$. For L2-ALSH(SL) we used $m = 3$, $U = 0.83$, $r = 2.5$, suggested by the authors and used in their empirical evaluation. For SIGN-ALSH(SL) we used two different settings of the parameters suggested by Shrivastava and Li (2014b): $m = 2$, $U = 0.75$ and $m = 3$, $U = 0.85$. SIMPLE-LSH does not require any parameters.

Figure 2. Netflix: Precision-Recall curves (higher is better) of retrieving top $T$ items by hash code of length $K$. SIMPLE-LSH is parameter-free. For L2-ALSH(SL), we fix the parameters $m = 3$, $U = 0.84$, $r = 2.5$ and for SIGN-ALSH(SL) we used two different settings of the parameters: $m = 2$, $U = 0.75$ and $m = 3$, $U = 0.85$. [Twelve panels: Top 10, Top 5 and Top 1, each for K = 64, 128, 256, 512; horizontal axis: Recall; vertical axis: Precision; curves: SIMPLE-LSH, L2-ALSH(SL), SIGN-ALSH(SL) with m = 2, SIGN-ALSH(SL) with m = 3.]

Figure 3. Movielens: Precision-Recall curves (higher is better) of retrieving top $T$ items by hash code of length $K$. SIMPLE-LSH is parameter-free. For L2-ALSH(SL), we fix the parameters $m = 3$, $U = 0.84$, $r = 2.5$ and for SIGN-ALSH(SL) we used two different settings of the parameters: $m = 2$, $U = 0.75$ and $m = 3$, $U = 0.85$. [Twelve panels: Top 10, Top 5 and Top 1, each for K = 64, 128, 256, 512; horizontal axis: Recall; vertical axis: Precision; curves: SIMPLE-LSH, L2-ALSH(SL), SIGN-ALSH(SL) with m = 2, SIGN-ALSH(SL) with m = 3.]

As can be seen in the Figures, SIMPLE-LSH shows a dramatic empirical improvement over L2-ALSH(SL). Following the presentation of SIMPLE-LSH and the comparison with L2-ALSH(SL), Shrivastava and Li (2014b) suggested the modified hash SIGN-ALSH(SL), which is based on random projections, as is SIMPLE-LSH, but with an asymmetric transform similar to that in L2-ALSH(SL). Perhaps not surprisingly, SIGN-ALSH(SL) does indeed perform almost the same as SIMPLE-LSH (SIMPLE-LSH has only a slight advantage on Movielens), however: (1) SIMPLE-LSH is simpler, and uses a single symmetric lower-dimensional transformation $P(x)$; (2) SIMPLE-LSH is universal and parameter-free, while SIGN-ALSH(SL) requires tuning two parameters (its authors suggest two different parameter settings for use). Therefore, we see no reason to prefer SIGN-ALSH(SL) over the simpler symmetric option.

5. Unnormalized Queries

In the previous section, we exploited asymmetry in the MIPS problem formulation, and showed that with such asymmetry, there is no need for the hash itself to be asymmetric. In this section, we consider LSH for inner product similarity in a more symmetric setting, where we assume no normalization and only boundedness. That is, we ask whether there is an LSH or ALSH for inner product similarity over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet = \{x \mid \|x\| \le 1\}$. Beyond a theoretical interest in the need for asymmetry in this fully symmetric setting, the setting can also be useful if we are interested in using sets $\mathcal{X}$ and $\mathcal{Y}$ interchangeably as query and data sets.
In a user-item setting, for example, one might also be interested in retrieving the top users interested in a given item without the need to create a separate hash for this task.

We first observe that there is no symmetric LSH for this setting. We therefore consider asymmetric hashes. Unfortunately, we show that neither L2-ALSH(SL) (nor SIGN-ALSH(SL)) are ALSH over $\mathcal{X}_\bullet$. Instead, we propose a parameter-free asymmetric extension of SIMPLE-LSH, which we call SIMPLE-ALSH, and show that it is a universal ALSH for inner product similarity over $\mathcal{X}_\bullet$.

To summarize the situation, if we consider the problem asymmetrically, as in the previous section, there is no need for the hash to be asymmetric, and we can use a single hash function. But if we insist on considering the problem symmetrically, we do indeed have to use an asymmetric hash.

5.1. No symmetric LSH

We first show we do not have a symmetric LSH:

Theorem 5.1. For any $0 < S \le 1$ and $0 < c < 1$ there is no $(S, cS)$-LSH (by Definition 1) for inner product similarity over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet = \{x \mid \|x\| \le 1\}$.

Proof. The same argument as in Shrivastava and Li (2014a, Theorem 1) applies: Assume for contradiction $h$ is an $(S, cS, p_1, p_2)$-LSH (with $p_1 > p_2$). Let $x$ be a vector such that $\|x\| = cS < 1$. Let $q = x \in \mathcal{X}_\bullet$ and $y = \frac{1}{c}x \in \mathcal{X}_\bullet$. Therefore, we have $q^\top x = cS$ and $q^\top y = S$. However, since $q = x$, $\mathbb{P}_h(h(q) = h(x)) = 1 \le p_2 < p_1 = \mathbb{P}_h(h(q) = h(y)) \le 1$ and we get a contradiction.

5.2. L2-ALSH(SL)

We might hope L2-ALSH(SL) is a valid ALSH here. Unfortunately, whenever $S < (c + 1)/2$, and so in particular for all $S < 1/2$, it is not:

Theorem 5.2.
For any $0 < c < 1$ and any $0 < S < (c + 1)/2$, there are no $U$, $m$ and $r$ such that L2-ALSH(SL) is an $(S, cS)$-ALSH for inner product similarity over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet = \{x \mid \|x\| \le 1\}$.

Proof. Let $q_1$ and $x_1$ be unit vectors such that $q_1^\top x_1 = S$. Let $x_2$ be a unit vector and define $q_2 = cS x_2$. For any $U$ and $m$:

$$\begin{aligned}
\|P(x_2) - Q(q_2)\|^2 &= \|q_2\|^2 + \frac{m}{4} + \|U x_2\|^{2^{m+1}} - 2U q_2^\top x_2 \\
&= c^2 S^2 + \frac{m}{4} + U^{2^{m+1}} - 2cSU \\
&\le 1 + \frac{m}{4} + U^{2^{m+1}} - 2SU \\
&= \|P(x_1) - Q(q_1)\|^2
\end{aligned}$$

where the inequality follows from $S < (c + 1)/2$. Now, the same arguments as in Lemma 1 using monotonicity of collision probabilities in $\|P(x) - Q(q)\|$ establish that L2-ALSH(SL) is not an $(S, cS)$-ALSH.

In Appendix A, we show a stronger negative result for SIGN-ALSH(SL): for any $S > 0$ and $0 < c < 1$, there are no $U, m$ such that SIGN-ALSH(SL) is an $(S, cS)$-ALSH.

5.3. SIMPLE-ALSH

Fortunately, we can define a variant of SIMPLE-LSH, which we refer to as SIMPLE-ALSH, for this more general case where queries are not normalized. We use the pair of transformations:

$$P(x) = \left[x;\ \sqrt{1 - \|x\|_2^2};\ 0\right], \qquad Q(x) = \left[x;\ 0;\ \sqrt{1 - \|x\|_2^2}\right] \tag{13}$$

and the random mappings $f(x) = h_a(P(x))$, $g(y) = h_a(Q(y))$, where $h_a(z)$ is as in (11). It is clear that by these definitions, we always have that for all $x, y \in \mathcal{X}_\bullet$, $P(x)^\top Q(y) = x^\top y$ and $\|P(x)\| = \|Q(y)\| = 1$.

Theorem 5.3. SIMPLE-ALSH is a universal ALSH over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet = \{x \mid \|x\| \le 1\}$. That is, for every $0 < S, c < 1$, it is an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\bullet$.

Proof. The choice of mappings ensures that for all $x, y \in \mathcal{X}_\bullet$ we have $P(x)^\top Q(y) = x^\top y$ and $\|P(x)\| = \|Q(y)\| = 1$, and so

$$\mathbb{P}[h_a(P(x)) = h_a(Q(y))] = 1 - \frac{\cos^{-1}(x^\top y)}{\pi}.$$

As in the proof of Theorem 4.2, monotonicity of $1 - \frac{\cos^{-1}(x)}{\pi}$ establishes the desired ALSH properties.
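The construction in (13) can be checked numerically. The following is a minimal sketch, not the authors' implementation: it assumes that $h_a$ in (11) is the sign random projection hash $h_a(z) = \mathrm{sign}(a^\top z)$ with $a$ drawn from a standard Gaussian, and the vectors `x`, `y`, the helper names, and the trial count are all hypothetical choices made for illustration. It compares a Monte Carlo estimate of the collision rate with the value $1 - \cos^{-1}(x^\top y)/\pi$ from the proof of Theorem 5.3.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def P(x):
    """Data-side map of (13): append sqrt(1 - ||x||^2), then 0."""
    slack = math.sqrt(max(0.0, 1.0 - dot(x, x)))
    return list(x) + [slack, 0.0]


def Q(y):
    """Query-side map of (13): append 0, then sqrt(1 - ||y||^2)."""
    slack = math.sqrt(max(0.0, 1.0 - dot(y, y)))
    return list(y) + [0.0, slack]


def sign_hash(a, z):
    """Assumed form of h_a: sign(a^T z), with sign(0) taken as +1."""
    return 1 if dot(a, z) >= 0 else -1


def collision_rate(x, y, trials=20000, seed=0):
    """Monte Carlo estimate of P[h_a(P(x)) = h_a(Q(y))] over Gaussian a."""
    rng = random.Random(seed)
    px, qy = P(x), Q(y)
    hits = 0
    for _ in range(trials):
        a = [rng.gauss(0.0, 1.0) for _ in px]
        hits += sign_hash(a, px) == sign_hash(a, qy)
    return hits / trials


# Hypothetical bounded, unnormalized vectors (both norms are below 1).
x = [0.3, -0.4, 0.2]
y = [0.5, 0.1, -0.6]

predicted = 1.0 - math.acos(dot(x, y)) / math.pi
estimated = collision_rate(x, y)
```

The two padding coordinates are placed in different positions for `P` and `Q`, so they contribute nothing to the inner product while still bringing both vectors to unit norm. That is what lets a single sign hash be applied to unnormalized queries and data alike.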
Shrivastava and Li (2015) also showed how a modification of SIMPLE-ALSH can be used for searching similarity measures such as set containment and weighted Jaccard similarity.

6. Conclusion

We provide a complete characterization of when symmetric and asymmetric LSH are possible for inner product similarity:

• Over $\mathbb{R}^d$, no symmetric nor asymmetric LSH is possible.

• For the MIPS setting, with normalized queries $\|q\| = 1$ and bounded database vectors $\|x\| \le 1$, a universal symmetric LSH is possible.

• When queries and database vectors are bounded but not normalized, a symmetric LSH is not possible, but a universal asymmetric LSH is. Here we see the power of asymmetry.

This corrects the view of Shrivastava and Li (2014a), who used the nonexistence of a symmetric LSH over $\mathbb{R}^d$ to motivate an asymmetric LSH when queries are normalized and database vectors are bounded, even though we now see that in these two settings there is actually no advantage to asymmetry. In the third setting, where an asymmetric hash is indeed needed, the hashes suggested by Shrivastava and Li (2014a;b) are not ALSH, and a different asymmetric hash is required (which we provide). Furthermore, even in the MIPS setting when queries are normalized (the second setting), the asymmetric hashes suggested by Shrivastava and Li (2014a;b) are not universal and require tuning parameters specific to $S, c$, in contrast to SIMPLE-LSH which is symmetric, parameter-free and universal.

It is important to emphasize that even though in the MIPS setting an asymmetric hash, as we define here, is not needed, an asymmetric view of the problem is required.
In particular, to use a symmetric hash, one must normalize the queries but not the database vectors, which can legitimately be viewed as an asymmetric operation which is part of the hash (though then the hash would not be, strictly speaking, an ALSH). In this regard Shrivastava and Li (2014a) do indeed successfully identify the need for an asymmetric view of MIPS, and provide the first practical ALSH for the problem.

ACKNOWLEDGMENTS

This research was partially funded by NSF award IIS-1302662.

References

Bachrach, Y., Finkelstein, Y., Gilad-Bachrach, R., Katzir, L., Koenigstein, N., Nice, N., and Paquet, U. (2014). Speeding up the Xbox recommender system using a euclidean transformation for inner-product spaces. In Proceedings of the 8th ACM Conference on Recommender systems, pages 257–264.

Charikar, M. S. (2002). Similarity estimation techniques from rounding algorithms. STOC.

Cremonesi, P., Koren, Y., and Turrin, R. (2010). Performance of recommender algorithms on top-n recommendation tasks. In Proceedings of the fourth ACM conference on Recommender systems, ACM, pages 39–46.

Curtin, R. R., Ram, P., and Gray, A. G. (2013). Fast exact max-kernel search. SDM, pages 1–9.

Datar, M., Immorlica, N., Indyk, P., and Mirrokni, S. V. (2004). Locality-sensitive hashing scheme based on p-stable distributions. In Proc. 20th SoCG, pages 253–262.

Dean, T., Ruzon, M., Segal, M., Shlens, J., Vijayanarasimhan, S., and Yagnik, J. (2013). Fast, accurate detection of 100,000 object classes on a single machine. CVPR.

Forster, J., Schmitt, N., Simon, H., and Suttorp, T. (2003). Estimating the optimal margins of embeddings in euclidean half spaces. Machine Learning, 51:263–281.

Gionis, A., Indyk, P., and Motwani, R. (1999). Similarity search in high dimensions via hashing. VLDB, 99:518–529.
Goemans, M. X. and Williamson, D. P. (1995). Improved approximation algorithms for maximum cut and satisfiability problems using semidefinite programming. Journal of the ACM (JACM), 42.6:1115–1145.

Indyk, P. and Motwani, R. (1998). Approximate nearest neighbors: towards removing the curse of dimensionality. STOC, pages 604–613.

Jain, P. and Kapoor, A. (2009). Active learning for large multi-class problems. CVPR.

Joachims, T. (2006). Training linear SVMs in linear time. SIGKDD.

Joachims, T., Finley, T., and Yu, C.-N. J. (2009). Cutting-plane training of structural SVMs. Machine Learning, 77.1:27–59.

Koenigstein, N., Ram, P., and Shavitt, Y. (2012). Efficient retrieval of recommendations in a matrix factorization framework. CIKM, pages 535–544.

Koren, Y., Bell, R., and Volinsky, C. (2009). Matrix factorization techniques for recommender systems. Computer, 42.8:30–37.

Neyshabur, B., Makarychev, Y., and Srebro, N. (2014). Clustering, hamming embedding, generalized LSH and the max norm. ALT.

Neyshabur, B., Yadollahpour, P., Makarychev, Y., Salakhutdinov, R., and Srebro, N. (2013). The power of asymmetry in binary hashing. NIPS.

Ram, P. and Gray, A. G. (2012). Maximum inner-product search using cone trees. SIGKDD.

Shrivastava, A. and Li, P. (2014a). Asymmetric LSH (ALSH) for sublinear time maximum inner product search (MIPS). NIPS.

Shrivastava, A. and Li, P. (2014b). Improved asymmetric locality sensitive hashing (ALSH) for maximum inner product search (MIPS). arXiv:1410.5410.

Shrivastava, A. and Li, P. (2015). Asymmetric minwise hashing for indexing binary inner products and set containment. WWW.

Srebro, N., Rennie, J., and Jaakkola, T. (2005). Maximum margin matrix factorization. NIPS.

Srebro, N. and Shraibman, A. (2005). Rank, trace-norm and max-norm. COLT.

A. Another variant

To benefit from the empirical advantages of random projection hashing, Shrivastava and Li (2014b) also proposed a modified asymmetric LSH, which we refer to here as SIGN-ALSH(SL). SIGN-ALSH(SL) uses two different mappings $P(x)$, $Q(q)$, similar to those of L2-ALSH(SL), but then uses a random projection hash $h_a(x)$, as is the one used by SIMPLE-LSH, instead of the quantized hash used in L2-ALSH(SL). In this appendix we show that our theoretical observations about L2-ALSH(SL) are also valid for SIGN-ALSH(SL).

SIGN-ALSH(SL) uses the pair of mappings:

$$P(x) = \left[Ux;\ 1/2 - \|Ux\|^2;\ \ldots;\ 1/2 - \|Ux\|^{2^m}\right], \qquad Q(y) = [y;\ 0;\ 0;\ \ldots;\ 0], \tag{14}$$

where $m$ and $U$ are parameters, as in L2-ALSH(SL). SIGN-ALSH(SL) is then given by $f(x) = h_a(P(x))$, $g(y) = h_a(Q(y))$, where $h_a$ is the random projection hash given in (11). SIGN-ALSH(SL) therefore depends on two parameters, and uses a binary alphabet $\Gamma = \{\pm 1\}$.

In this section, we show that, like L2-ALSH(SL), SIGN-ALSH(SL) is not a universal ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$, and moreover for any $S > 0$ and $0 < c < 1$ it is not an $(S, cS)$-ALSH over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet$:

Lemma 2. For any $m, U, r$, and for any $0 < S < 1$ and

$$\min\left\{ \sqrt{1 - \frac{U^{2^{m+1}}\left(1 - S^{2^{m+1}}\right)}{U^{2^{m+1}} + m/4}},\ \ \frac{\sqrt[2^{m+1}]{\frac{m/2}{2^{m+1} - 2}}}{SU} \right\} \le c < 1,$$

SIGN-ALSH(SL) is not an $(S, cS)$-ALSH for inner product similarity over $\mathcal{X}_\bullet = \{x \mid \|x\| \le 1\}$ and $\mathcal{Y}_\circ = \{q \mid \|q\| = 1\}$.

Proof. Assume for contradiction that:

$$\sqrt{1 - \frac{U^{2^{m+1}}\left(1 - S^{2^{m+1}}\right)}{U^{2^{m+1}} + m/4}} \le c < 1$$

and SIGN-ALSH(SL) is an $(S, cS)$-ALSH. For any query point $q \in \mathcal{Y}_\circ$, let $x \in \mathcal{X}_\bullet$ be a vector s.t. $q^\top x = S$ and $\|x\|_2 = 1$ and let $y = cS q$, so that $q^\top y = cS$.
We have that:

$$\frac{(P(y)^\top Q(q))^2}{\|P(y)\|^2} = \frac{c^2 S^2 U^2}{m/4 + \|Uy\|^{2^{m+1}}} = \frac{c^2 S^2 U^2}{m/4 + (cSU)^{2^{m+1}}}$$

Using $1 - \frac{U^{2^{m+1}}\left(1 - S^{2^{m+1}}\right)}{U^{2^{m+1}} + m/4} \le c^2 < 1$:

$$> \frac{c^2 S^2 U^2}{m/4 + (SU)^{2^{m+1}}} \ge \frac{S^2 U^2}{m/4 + U^{2^{m+1}}} = \frac{(P(x)^\top Q(q))^2}{\|P(x)\|^2}$$

The monotonicity of $1 - \frac{\cos^{-1}(x)}{\pi}$ establishes a contradiction. To get the other bound on $c$, let $\alpha_m = \sqrt[2^{m+1}]{\frac{m/2}{2^{m+1} - 2}}$ and assume for contradiction that:

$$\frac{\alpha_m}{SU} = \frac{\sqrt[2^{m+1}]{\frac{m/2}{2^{m+1} - 2}}}{SU} \le c < 1$$

and SIGN-ALSH(SL) is an $(S, cS)$-ALSH. For any query point $q \in \mathcal{Y}_\circ$, let $x \in \mathcal{X}_\bullet$ be a vector s.t. $q^\top x = S$ and $\|x\|_2 = 1$ and let $y = (\alpha_m / U) q$. By the monotonicity of $1 - \frac{\cos^{-1}(x)}{\pi}$, to get a contradiction it is enough to show that

$$\frac{P(x)^\top Q(q)}{\|P(x)\|} \le \frac{P(y)^\top Q(q)}{\|P(y)\|}$$

We have:

$$\frac{(P(y)^\top Q(q))^2}{\|P(y)\|^2} = \frac{\alpha_m^2}{m/4 + \alpha_m^{2^{m+1}}} = \frac{\sqrt[2^{m}]{\frac{m/2}{2^{m+1} - 2}}}{m/4 + \frac{m/2}{2^{m+1} - 2}}$$

Since this is the maximum value of the function $f(U) = U^2 / (m/4 + U^{2^{m+1}})$:

$$\ge \frac{U^2}{m/4 + U^{2^{m+1}}} \ge \frac{S^2 U^2}{m/4 + U^{2^{m+1}}} = \frac{(P(x)^\top Q(q))^2}{\|P(x)\|^2}$$

which is a contradiction.

Corollary A.1. For any $U$, $m$ and $r$, SIGN-ALSH(SL) is not a universal ALSH for inner product similarity over $\mathcal{X}_\bullet = \{x \mid \|x\| \le 1\}$ and $\mathcal{Y}_\circ = \{q \mid \|q\| = 1\}$. Furthermore, for any $c < 1$, and any choice of $U, m, r$ there exists $0 < S < 1$ for which SIGN-ALSH(SL) is not an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$, and for any $S < 1$ and any choice of $U, m, r$ there exists $0 < c < 1$ for which SIGN-ALSH(SL) is not an $(S, cS)$-ALSH over $\mathcal{X}_\bullet, \mathcal{Y}_\circ$.

Lemma 3. For any $S > 0$ and $0 < c < 1$ there are no $U$ and $m$ such that SIGN-ALSH(SL) is an $(S, cS)$-ALSH for inner product similarity over $\mathcal{X}_\bullet = \mathcal{Y}_\bullet = \{x \mid \|x\| \le 1\}$.

Proof. Similar to the proof of Theorem 5.2, for any $S > 0$ and $0 < c < 1$, let $q_1$ and $x_1$ be unit vectors such that $q_1^\top x_1 = S$.
Let $x_2$ be a unit vector and define $q_2 = cS x_2$. For any $U$ and $m$:

$$\begin{aligned}
\frac{P(x_2)^\top Q(q_2)}{\|P(x_2)\| \, \|Q(q_2)\|} &= \frac{cSU}{cS \sqrt{m/4 + U^{2^{m+1}}}} = \frac{U}{\sqrt{m/4 + U^{2^{m+1}}}} \\
&\ge \frac{SU}{\sqrt{m/4 + U^{2^{m+1}}}} = \frac{P(x_1)^\top Q(q_1)}{\|P(x_1)\| \, \|Q(q_1)\|}
\end{aligned}$$

Now, the same arguments as in Lemma 1 using monotonicity of collision probabilities in $\|P(x) - Q(q)\|$ establish that SIGN-ALSH(SL) is not an $(S, cS)$-ALSH.

B. Max-norm and margin complexity

MAX-NORM

The max-norm (aka $\gamma_2 : \ell_1 \to \ell_\infty$ norm) is defined as (Srebro et al., 2005):

$$\|X\|_{\max} = \min_{X = UV^\top} \max\left(\|U\|_{2,\infty}^2,\ \|V\|_{2,\infty}^2\right) \tag{15}$$

where $\|U\|_{2,\infty}$ is the maximum over $\ell_2$ norms of rows of matrix $U$, i.e. $\|U\|_{2,\infty} = \max_i \|U[i]\|$.

For any pair of sets $(\{x_i\}_{1 \le i \le n}, \{y_i\}_{1 \le i \le m})$ and hashes $(f, g)$ over them, let $P$ be the collision probability matrix, i.e. $P(i, j) = \mathbb{P}[f(x_i) = g(y_j)]$. In the following lemma we prove that $\|P\|_{\max} \le 1$:

Lemma 4. For any two sets of objects and hashes over them, if $P$ is the collision probability matrix, then $\|P\|_{\max} \le 1$.

Proof. For each $f$ and $g$, define the following biclustering matrix:

$$\kappa_{f,g}(i, j) = \begin{cases} 1 & f(x_i) = g(y_j) \\ 0 & \text{otherwise.} \end{cases} \tag{16}$$

For any function $f : \mathcal{Z} \to \Gamma$, let $R_f \in \{0, 1\}^{n \times |\Gamma|}$ be the indicator of the values of function $f$:

$$R_f(i, \gamma) = \begin{cases} 1 & f(x_i) = \gamma \\ 0 & \text{otherwise,} \end{cases} \tag{17}$$

and define $R_g \in \{0, 1\}^{m \times |\Gamma|}$ similarly. It is easy to show that $\kappa_{f,g} = R_f R_g^\top$ and since $\|R_f\|_{2,\infty} = \|R_g\|_{2,\infty} = 1$, by the definition of the max-norm, we can conclude that $\|\kappa_{f,g}\|_{\max} \le 1$. But the collision probabilities are given by $P = \mathbb{E}[\kappa_{f,g}]$, and so by convexity of the max-norm and Jensen's inequality, $\|P\|_{\max} = \|\mathbb{E}[\kappa_{f,g}]\|_{\max} \le \mathbb{E}[\|\kappa_{f,g}\|_{\max}] \le 1$.

It is also easy to see that $1_{n \times n} = RR^\top$ where $R = 1_{n \times 1}$.
Therefore for any $\theta \in \mathbb{R}$, $\|\theta_{n \times n}\|_{\max} = |\theta| \, \|1_{n \times n}\|_{\max} \le |\theta|$.

MARGIN COMPLEXITY

For any sign matrix $Z$, the margin complexity of $Z$ is defined as:

$$\min_Y \|Y\|_{\max} \quad \text{s.t.} \quad Y(i, j) Z(i, j) \ge 1 \ \ \forall i, j \tag{18}$$

Let $Z \in \{\pm 1\}^{N \times N}$ be a sign matrix with $+1$ on and above the diagonal and $-1$ below it. Forster et al. (2003) prove that the margin complexity of matrix $Z$ is $\Omega(\log N)$.
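The factorization step in the proof of Lemma 4 can be illustrated on a small example. The following is a minimal sketch under stated assumptions: the hash values `f_values` and `g_values` are hypothetical, chosen only for illustration, and the helper names are not from the paper. It builds the indicator matrices of (17), forms the biclustering matrix of (16) directly, and checks that $\kappa_{f,g} = R_f R_g^\top$ with every row of $R_f$ and $R_g$ having unit $\ell_2$ norm.

```python
def indicator(values, alphabet):
    """Rows are one-hot encodings of hash values, as in (17)."""
    return [[1 if v == gamma else 0 for gamma in alphabet] for v in values]


def matmul_transpose(A, B):
    """Return A B^T for matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(ra, rb)) for rb in B] for ra in A]


# Hypothetical hash values over a binary alphabet (illustration only).
alphabet = [-1, +1]
f_values = [+1, -1, -1, +1]   # f(x_1), ..., f(x_4)
g_values = [-1, +1, -1]       # g(y_1), ..., g(y_3)

R_f = indicator(f_values, alphabet)
R_g = indicator(g_values, alphabet)

# Biclustering matrix of (16), built directly from the hash values.
kappa = [[1 if fv == gv else 0 for gv in g_values] for fv in f_values]

# Factorization used in the proof: kappa = R_f R_g^T.
product = matmul_transpose(R_f, R_g)

# Squared l2 norm of each row of R_f and R_g; each row is one-hot.
row_norms_sq = [sum(v * v for v in row) for row in R_f + R_g]
```

Because each row of an indicator matrix is one-hot, this factorization witnesses $\|\kappa_{f,g}\|_{\max} \le 1$ through definition (15), which is the bound the proof then averages over the random choice of hashes.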
