Bayesian time series analysis of terrestrial impact cratering

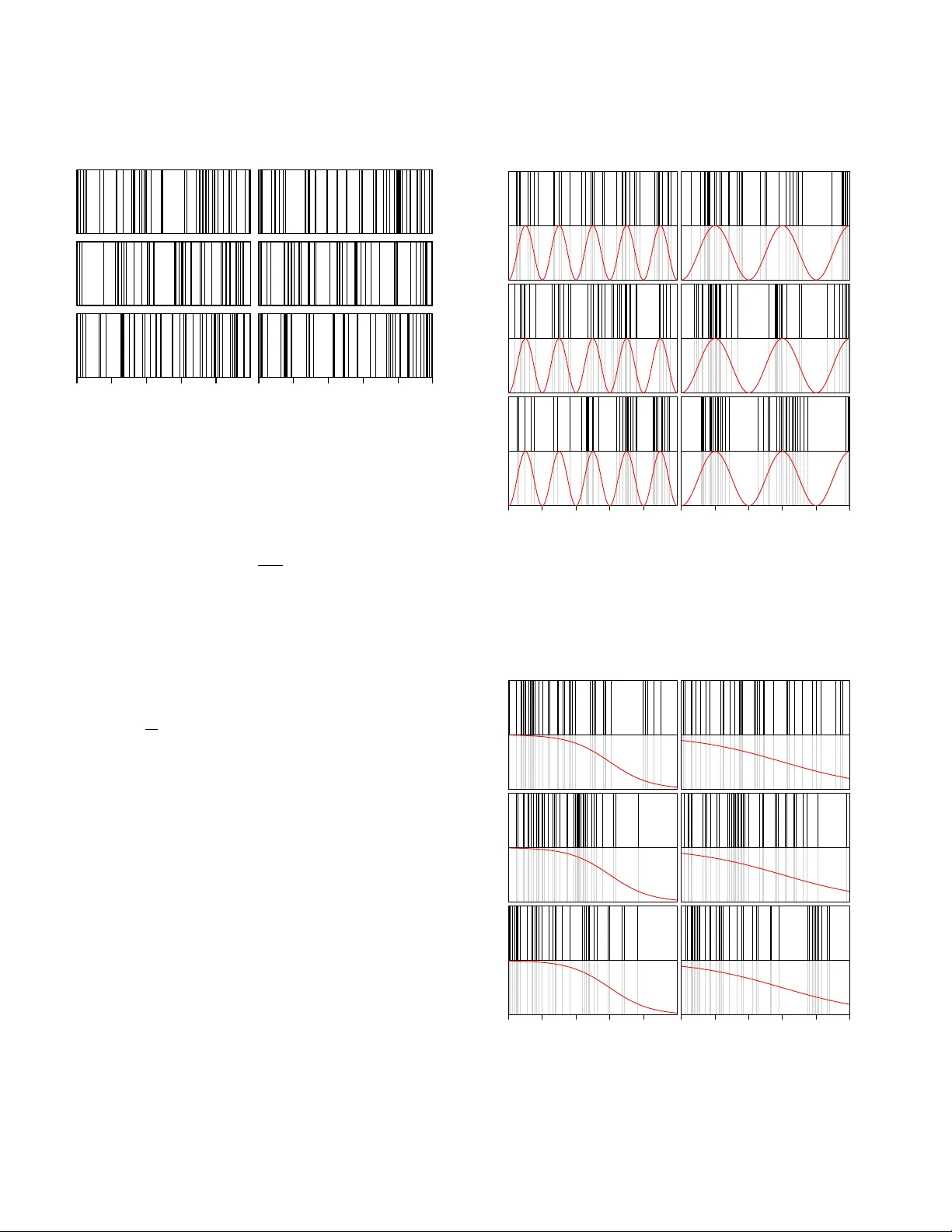

Giant impacts by comets and asteroids have probably had an important influence on terrestrial biological evolution. We know of around 180 high-velocity impact craters on the Earth with ages up to 2400 Myr and diameters up to 300 km. Some studies have i…

Authors: C.A.L. Bailer-Jones (Max Planck Institute for Astronomy, Heidelberg)