Optimization via Low-rank Approximation for Community Detection in Networks

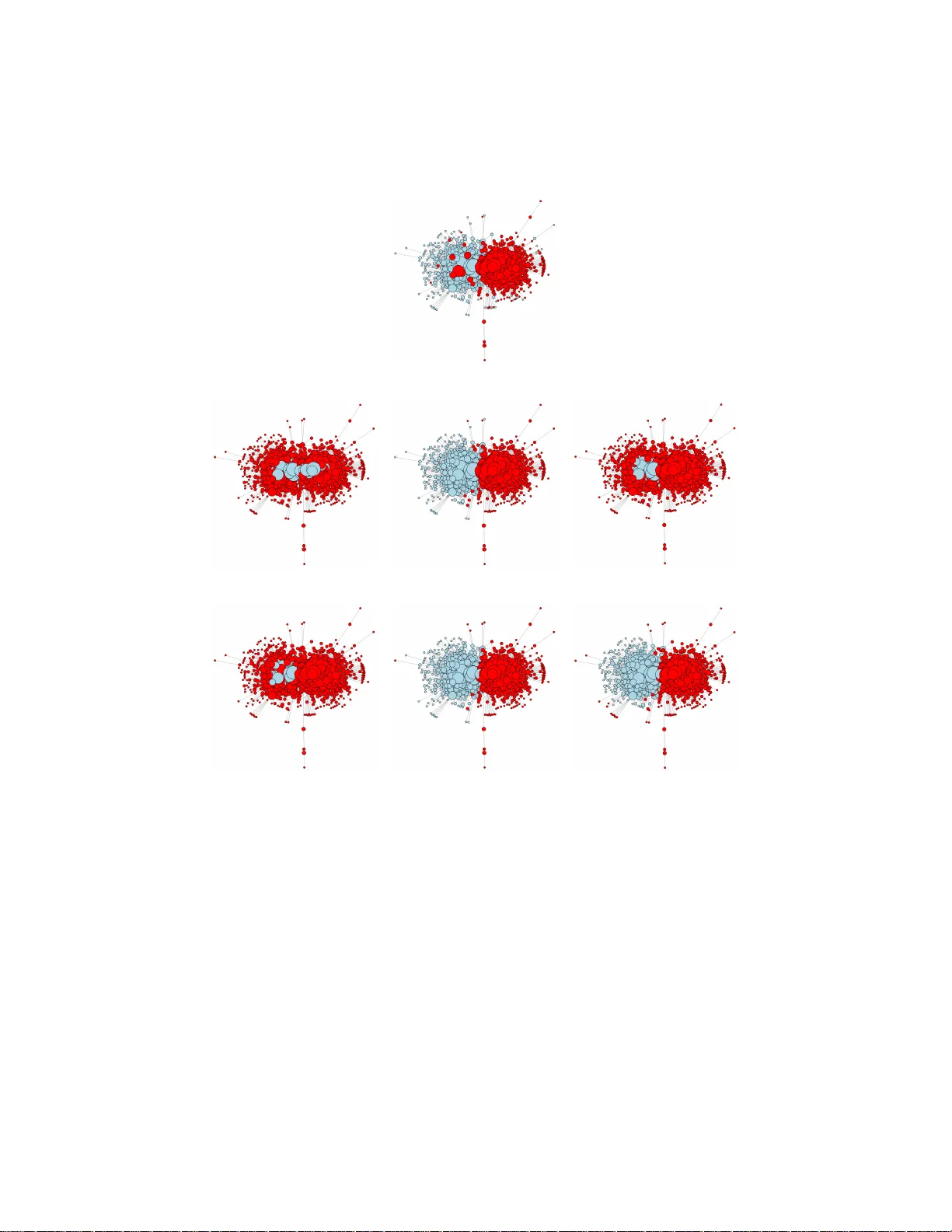

Community detection is one of the fundamental problems of network analysis, for which a number of methods have been proposed. Most model-based or criteria-based methods have to solve an optimization problem over a discrete set of labels to find commu…
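The abstract notes that most model-based or criteria-based methods must optimize over a discrete set of labels. The sketch below shows why that is hard. It assumes Newman-Girvan modularity as the criterion (one common choice, not necessarily one used in this paper) and a split into exactly two communities, and it searches all label vectors exhaustively. Even with node 0 pinned to remove label-swapped duplicates, there are 2^(n-1) candidates. The function names are hypothetical. This is an illustration of the discrete problem, not the paper's low-rank approximation method.

```python
import itertools

import numpy as np


def modularity(A, labels):
    """Newman-Girvan modularity Q of a label vector for a symmetric adjacency matrix A."""
    two_m = A.sum()                      # equals 2m for an undirected graph
    k = A.sum(axis=1)                    # node degrees
    same = labels[:, None] == labels[None, :]  # True where i and j share a community
    return float(((A - np.outer(k, k) / two_m) * same).sum() / two_m)


def brute_force_two_communities(A):
    """Exhaustively search all two-community label vectors (exponential in n)."""
    n = A.shape[0]
    best_q, best_labels = -np.inf, None
    # Pin node 0 to community 0 so each partition is visited once: 2^(n-1) candidates.
    for rest in itertools.product([0, 1], repeat=n - 1):
        labels = np.array((0,) + rest)
        q = modularity(A, labels)
        if q > best_q:
            best_q, best_labels = q, labels
    return best_labels, best_q


# Usage: two triangles {0,1,2} and {3,4,5} joined by the single edge 2-3.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

labels, q = brute_force_two_communities(A)
print(labels, round(q, 4))
```

For this toy graph the search recovers the two triangles with Q = 5/14. The loop doubles in length with every added node, so the same search becomes infeasible for networks of even moderate size.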

Authors: Can M. Le, Elizaveta Levina, Roman Vershynin