Efficient Non-parametric Estimation of Multiple Embeddings per Word in Vector Space

There is rising interest in vector-space word embeddings and their use in NLP, especially given recent methods for their fast estimation at very large scale. Nearly all this work, however, assumes a single vector per word type ignoring polysemy and t…

Authors: Arvind Neelakantan, Jeevan Shankar, Alex

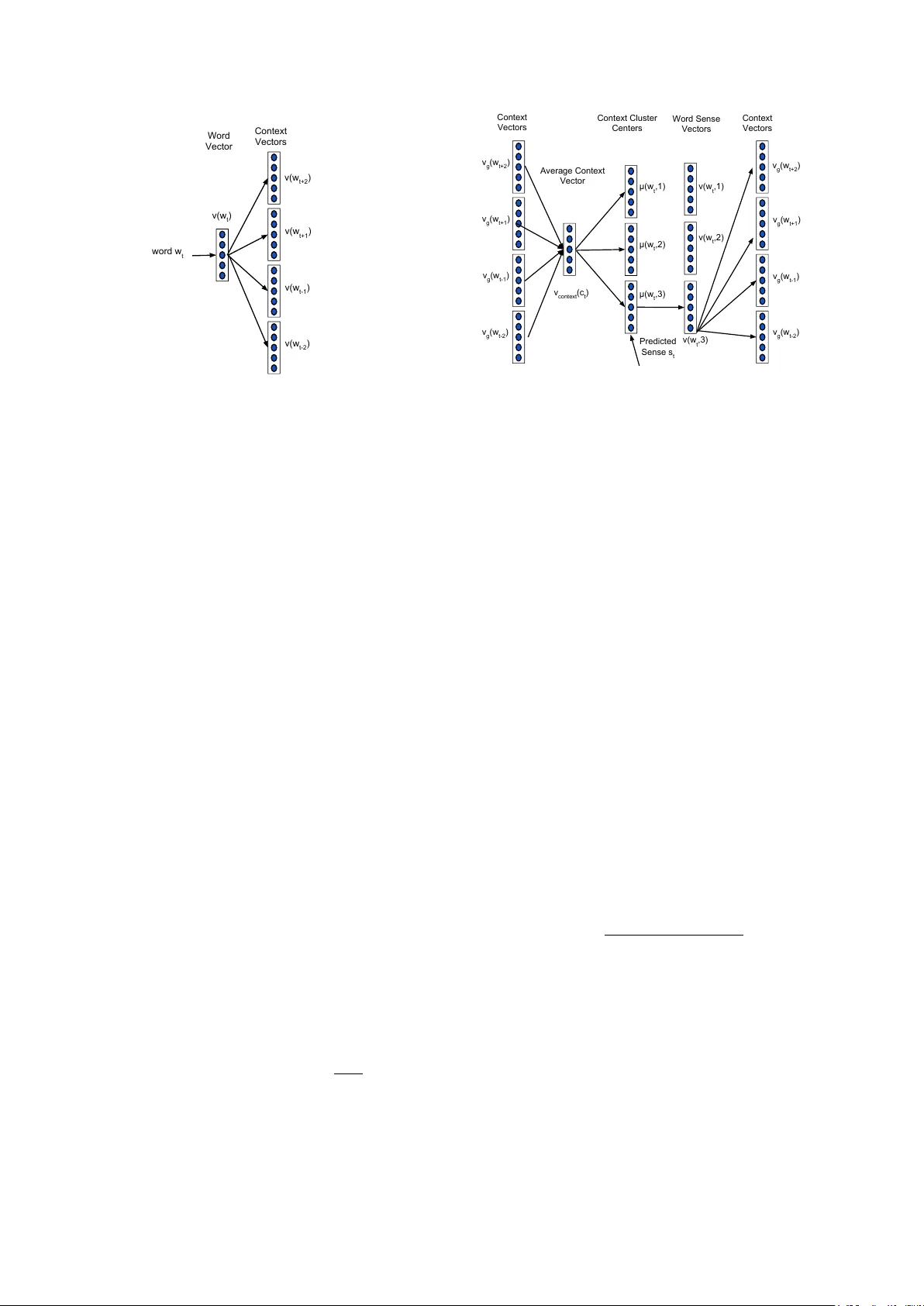

Arvind Neelakantan\*, Jeevan Shankar\*, Alexandre Passos, Andrew McCallum
Department of Computer Science, University of Massachusetts, Amherst
Amherst, MA, 01003
{arvind,jshankar,apassos,mccallum}@cs.umass.edu

\* The first two authors contributed equally to this paper.

Abstract

There is rising interest in vector-space word embeddings and their use in NLP, especially given recent methods for their fast estimation at very large scale. Nearly all this work, however, assumes a single vector per word type, ignoring polysemy and thus jeopardizing their usefulness for downstream tasks. We present an extension to the Skip-gram model that efficiently learns multiple embeddings per word type. It differs from recent related work by jointly performing word sense discrimination and embedding learning, by non-parametrically estimating the number of senses per word type, and by its efficiency and scalability. We present new state-of-the-art results in the word similarity in context task and demonstrate its scalability by training with one machine on a corpus of nearly 1 billion tokens in less than 6 hours.

1 Introduction

Representing words by dense, real-valued vector embeddings, also commonly called "distributed representations," helps address the curse of dimensionality and improve generalization because they can place near each other words having similar semantic and syntactic roles. This has been shown dramatically in state-of-the-art results on language modeling (Bengio et al., 2003; Mnih and Hinton, 2007) as well as improvements in other natural language processing tasks (Collobert and Weston, 2008; Turian et al., 2010). Substantial benefit arises when embeddings can be trained on large volumes of data. Hence the recent considerable interest in the CBOW and Skip-gram models
of Mikolov et al. (2013a) and Mikolov et al. (2013b), relatively simple log-linear models that can be trained to produce high-quality word embeddings on the entirety of English Wikipedia text in less than half a day on one machine.

There is rising enthusiasm for applying these models to improve accuracy in natural language processing, much like Brown clusters (Brown et al., 1992) have become common input features for many tasks, such as named entity extraction (Miller et al., 2004; Ratinov and Roth, 2009) and parsing (Koo et al., 2008; Täckström et al., 2012). In comparison to Brown clusters, the vector embeddings have the advantages of substantially better scalability in their training, and intriguing potential for their continuous and multi-dimensional interrelations. In fact, Passos et al. (2014) present new state-of-the-art results in CoNLL 2003 named entity extraction by directly inputting continuous vector embeddings obtained by a version of Skip-gram that injects supervision with lexicons. Similarly, Bansal et al. (2014) show results in dependency parsing using Skip-gram embeddings. They have also recently been applied to machine translation (Zou et al., 2013; Mikolov et al., 2013c).

A notable deficiency in this prior work is that each word type (e.g. the word string plant) has only one vector representation: polysemy and homonymy are ignored. This results in the word plant having an embedding that is approximately the average of its different contextual semantics relating to biology, placement, manufacturing and power generation. In moderately high-dimensional spaces a vector can be relatively "close" to multiple regions at a time, but this does not negate the unfortunate influence of the triangle inequality (footnote 2) here: words that are not synonyms but are synonymous with different senses of the same word will be pulled together.
For example, pollen and refinery will be inappropriately pulled to a distance not more than the sum of the distances plant–pollen and plant–refinery. Fitting the constraints of legitimate continuous gradations of semantics is challenge enough without the additional encumbrance of these illegitimate triangle inequalities.

Footnote 2: For distance $d$, $d(a, c) \le d(a, b) + d(b, c)$.

Discovering embeddings for multiple senses per word type is the focus of work by Reisinger and Mooney (2010a) and Huang et al. (2012). They both pre-cluster the contexts of a word type's tokens into discriminated senses, use the clusters to re-label the corpus' tokens according to sense, and then learn embeddings for these re-labeled words. The second paper improves upon the first by employing an earlier pass of non-discriminated embedding learning to obtain vectors used to represent the contexts. Note that by pre-clustering, these methods lose the opportunity to jointly learn the sense-discriminated vectors and the clustering. Other weaknesses include their fixed number of senses per word type, and the computational expense of the two-step process: the Huang et al. (2012) method took one week of computation to learn multiple embeddings for a 6,000-word subset of the 100,000-word vocabulary on a corpus containing close to a billion tokens (footnote 3).

This paper presents a new method for learning vector-space embeddings for multiple senses per word type, designed to provide several advantages over previous approaches. (1) Sense-discriminated vectors are learned jointly with the assignment of token contexts to senses; thus we can use the emerging sense representation to more accurately perform the clustering. (2) A non-parametric variant of our method automatically discovers a varying number of senses per word type. (3) Efficient online joint training makes it fast and scalable.
We refer to our method as Multiple-sense Skip-gram, or MSSG, and its non-parametric counterpart as NP-MSSG.

Our method builds on the Skip-gram model (Mikolov et al., 2013a), but maintains multiple vectors per word type. During online training with a particular token, we use the average of its context words' vectors to select the token's sense that is closest, and perform a gradient update on that sense. In the non-parametric version of our method, we build on facility location (Meyerson, 2001): a new cluster is created with probability proportional to the distance from the context to the nearest sense.

Footnote 3: Personal communication with authors Eric H. Huang and Richard Socher.

We present experimental results demonstrating the benefits of our approach. We show qualitative improvements over single-sense Skip-gram and Huang et al. (2012), comparing against word neighbors from our parametric and non-parametric methods. We present quantitative results in three tasks. On both the SCWS and WordSim-353 data sets our methods surpass the previous state-of-the-art. The Google Analogy task is not especially well-suited for word-sense evaluation since its lack of context makes selecting the sense difficult; however, our method dramatically outperforms Huang et al. (2012) on this task. Finally, we also demonstrate scalability, learning multiple senses while training on nearly a billion tokens in less than 6 hours, a 27x improvement on Huang et al.

2 Related Work

Much prior work has focused on learning vector representations of words; here we will describe only those most relevant to understanding this paper.
Our work is based on neural language models, proposed by Bengio et al. (2003), which extend the traditional idea of n-gram language models by replacing the conditional probability table with a neural network, representing each word token by a small vector instead of an indicator variable, and estimating the parameters of the neural network and these vectors jointly. Since the Bengio et al. (2003) model is quite expensive to train, much research has focused on optimizing it. Collobert and Weston (2008) replace the max-likelihood character of the model with a max-margin approach, where the network is encouraged to score the correct n-grams higher than randomly chosen incorrect n-grams. Mnih and Hinton (2007) replace the global normalization of the Bengio model with a tree-structured probability distribution, and also consider multiple positions for each word in the tree.

More relevantly, Mikolov et al. (2013a) and Mikolov et al. (2013b) propose extremely computationally efficient log-linear neural language models by removing the hidden layers of the neural networks and training from larger context windows with very aggressive subsampling. The goal of the models in Mikolov et al. (2013a) and Mikolov et al. (2013b) is not so much obtaining a low-perplexity language model as learning word representations which will be useful in downstream tasks. Neural networks or log-linear models also do not appear to be necessary to learn high-quality word embeddings, as Dhillon and Ungar (2011) estimate word vector representations using Canonical Correlation Analysis (CCA).
Word vector representations or embeddings have been used in various NLP tasks such as named entity recognition (Neelakantan and Collins, 2014; Passos et al., 2014; Turian et al., 2010), dependency parsing (Bansal et al., 2014), chunking (Turian et al., 2010; Dhillon and Ungar, 2011), sentiment analysis (Maas et al., 2011), paraphrase detection (Socher et al., 2011) and learning representations of paragraphs and documents (Le and Mikolov, 2014). The word clusters obtained from Brown clustering (Brown et al., 1992) have similarly been used as features in named entity recognition (Miller et al., 2004; Ratinov and Roth, 2009) and dependency parsing (Koo et al., 2008), among other tasks.

There is considerably less prior work on learning multiple vector representations for the same word type. Reisinger and Mooney (2010a) introduce a method for constructing multiple sparse, high-dimensional vector representations of words. Huang et al. (2012) extend this approach, incorporating global document context to learn multiple dense, low-dimensional embeddings by using recursive neural networks. Both methods perform word sense discrimination as a pre-processing step by clustering contexts for each word type, making training more expensive. While methods such as those described in Dhillon and Ungar (2011) and Reddy et al. (2011) use token-specific representations of words as part of the learning algorithm, the final outputs are still one-to-one mappings between word types and word embeddings.

3 Background: Skip-gram model

The Skip-gram model learns word embeddings such that they are useful in predicting the surrounding words in a sentence. In the Skip-gram model, $v(w) \in R^d$ is the vector representation of the word $w \in W$, where $W$ is the word vocabulary and $d$ is the embedding dimensionality.
Given a pair of words $(w_t, c)$, the probability that the word $c$ is observed in the context of word $w_t$ is given by

$$P(D = 1 \mid v(w_t), v(c)) = \frac{1}{1 + e^{-v(w_t)^{T} v(c)}} \tag{1}$$

The probability of not observing word $c$ in the context of $w_t$ is given by

$$P(D = 0 \mid v(w_t), v(c)) = 1 - P(D = 1 \mid v(w_t), v(c))$$

Given a training set containing the sequence of word types $w_1, w_2, \ldots, w_T$, the word embeddings are learned by maximizing the following objective function:

$$J(\theta) = \sum_{(w_t, c_t) \in D^{+}} \sum_{c \in c_t} \log P(D = 1 \mid v(w_t), v(c)) + \sum_{(w_t, c'_t) \in D^{-}} \sum_{c' \in c'_t} \log P(D = 0 \mid v(w_t), v(c'))$$

where $w_t$ is the $t$-th word in the training set, $c_t$ is the set of observed context words of word $w_t$ and $c'_t$ is the set of randomly sampled, noisy context words for the word $w_t$. $D^{+}$ consists of the set of all observed word-context pairs $(w_t, c_t)$ ($t = 1, 2, \ldots, T$). $D^{-}$ consists of pairs $(w_t, c'_t)$ ($t = 1, 2, \ldots, T$).

For each training word $w_t$, the set of context words $c_t = \{w_{t-R_t}, \ldots, w_{t-1}, w_{t+1}, \ldots, w_{t+R_t}\}$ includes $R_t$ words to the left and right of the given word, as shown in Figure 1. $R_t$ is the window size considered for the word $w_t$, uniformly randomly sampled from the set $\{1, 2, \ldots, N\}$, where $N$ is the maximum context window size.

The set of noisy context words $c'_t$ for the word $w_t$ is constructed by randomly sampling $S$ noisy context words for each word in the context $c_t$. The noisy context words are randomly sampled from the following distribution:

$$P(w) = \frac{p_{unigram}(w)^{3/4}}{Z} \tag{2}$$

where $p_{unigram}(w)$ is the unigram distribution of the words and $Z$ is the normalization constant.
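As a rough illustration of Eq. (1), Eq. (2) and a single term of the objective, the following dependency-free Python sketch may help. The function names, the toy counts and the toy vectors are assumptions made for this example only and are not taken from the authors' released code.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function, as used in Eq. (1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def p_observed(v_word, v_ctx):
    """P(D = 1 | v(w_t), v(c)) from Eq. (1)."""
    return sigmoid(dot(v_word, v_ctx))


def noise_distribution(unigram_counts):
    """Eq. (2): unigram probabilities raised to the power 3/4, then renormalised by Z."""
    total = sum(unigram_counts.values())
    weights = {w: (n / total) ** 0.75 for w, n in unigram_counts.items()}
    z = sum(weights.values())
    return {w: x / z for w, x in weights.items()}


def pair_objective(v_word, v_ctx, noise_vectors):
    """Contribution of one observed (word, context) pair and its noisy pairs to J(theta)."""
    value = math.log(p_observed(v_word, v_ctx))
    for v_noise in noise_vectors:
        value += math.log(1.0 - p_observed(v_word, v_noise))
    return value


# Made-up counts, purely for illustration.
counts = {"the": 900, "plant": 90, "pollen": 10}
noise = noise_distribution(counts)
```

In this toy setting the 3/4 exponent shifts probability mass from the frequent word to the rare ones, which is the usual motivation for smoothing the unigram distribution before drawing negative samples.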
4 Multi-Sense Skip-gram (MSSG) model

To extend the Skip-gram model to learn multiple embeddings per word we follow previous work (Huang et al., 2012; Reisinger and Mooney, 2010a) and let each sense of a word have its own embedding, and induce the senses by clustering the embeddings of the context words around each token. The vector representation of the context is the average of its context words' vectors. For every word type, we maintain clusters of its contexts, and the sense of a word token is predicted as the cluster that is closest to its context representation. After predicting the sense of a word token, we perform a gradient update on the embedding of that sense. The crucial difference from previous approaches is that word sense discrimination and learning embeddings are performed jointly by predicting the sense of the word using the current parameter estimates.

Figure 1: Architecture of the Skip-gram model with window size $R_t = 2$. Context $c_t$ of word $w_t$ consists of $w_{t-1}, w_{t-2}, w_{t+1}, w_{t+2}$.

In the MSSG model, each word $w \in W$ is associated with a global vector $v_g(w)$, and each sense of the word has an embedding (sense vector) $v_s(w, k)$ ($k = 1, 2, \ldots, K$) and a context cluster with center $\mu(w, k)$ ($k = 1, 2, \ldots, K$). The $K$ sense vectors and the global vectors are of dimension $d$, and $K$ is a hyperparameter.

Consider the word $w_t$ and let $c_t = \{w_{t-R_t}, \ldots, w_{t-1}, w_{t+1}, \ldots, w_{t+R_t}\}$ be the set of observed context words. The vector representation of the context is defined as the average of the global vector representation of the words in the context. Let $v_{context}(c_t) = \frac{1}{2 R_t} \sum_{c \in c_t} v_g(c)$ be the vector representation of the context $c_t$.
We use the global vectors of the context words instead of their sense vectors to avoid the computational complexity associated with predicting the sense of the context words. We predict $s_t$, the sense of word $w_t$ when observed with context $c_t$, as the context cluster membership of the vector $v_{context}(c_t)$, as shown in Figure 2. More formally,

$$s_t = \arg\max_{k = 1, 2, \ldots, K} \mathrm{sim}(\mu(w_t, k), v_{context}(c_t)) \tag{3}$$

Figure 2: Architecture of the Multi-Sense Skip-gram (MSSG) model with window size $R_t = 2$ and $K = 3$. Context $c_t$ of word $w_t$ consists of $w_{t-1}, w_{t-2}, w_{t+1}, w_{t+2}$. The sense is predicted by finding the cluster center of the context that is closest to the average of the context vectors.

The hard cluster assignment is similar to the k-means algorithm. The cluster center is the average of the vector representations of all the contexts which belong to that cluster. For $\mathrm{sim}$ we use cosine similarity in our experiments.

Here, the probability that the word $c$ is observed in the context of word $w_t$ given the sense of the word $w_t$ is

$$P(D = 1 \mid s_t, v_s(w_t, 1), \ldots, v_s(w_t, K), v_g(c)) = P(D = 1 \mid v_s(w_t, s_t), v_g(c)) = \frac{1}{1 + e^{-v_s(w_t, s_t)^{T} v_g(c)}}$$

The probability of not observing word $c$ in the context of $w_t$ given the sense of the word $w_t$ is

$$P(D = 0 \mid s_t, v_s(w_t, 1), \ldots, v_s(w_t, K), v_g(c)) = P(D = 0 \mid v_s(w_t, s_t), v_g(c)) = 1 - P(D = 1 \mid v_s(w_t, s_t), v_g(c))$$

Given a training set containing the sequence of word types $w_1, w_2, \ldots, w_T$, the word embeddings are learned by maximizing the following objective function:

$$J(\theta) = \sum_{(w_t, c_t) \in D^{+}} \sum_{c \in c_t} \log P(D = 1 \mid v_s(w_t, s_t), v_g(c)) + \sum_{(w_t, c'_t) \in D^{-}} \sum_{c' \in c'_t} \log P(D = 0 \mid v_s(w_t, s_t), v_g(c'))$$

where $w_t$ is the $t$-th word in the sequence, $c_t$ is the set of observed context words and $c'_t$ is the set of noisy context words for the word $w_t$. $D^{+}$ and $D^{-}$ are constructed in the same way as in the Skip-gram model.

Algorithm 1: Training algorithm of the MSSG model

1. Input: $w_1, w_2, \ldots, w_T$, $d$, $K$, $N$.
2. Initialize $v_s(w, k)$ and $v_g(w)$, $\forall w \in W, k \in \{1, \ldots, K\}$ randomly, and $\mu(w, k)$, $\forall w \in W, k \in \{1, \ldots, K\}$ to 0.
3. For $t = 1, 2, \ldots, T$ do:
   1. $R_t \sim \{1, \ldots, N\}$
   2. $c_t = \{w_{t-R_t}, \ldots, w_{t-1}, w_{t+1}, \ldots, w_{t+R_t}\}$
   3. $v_{context}(c_t) = \frac{1}{2 R_t} \sum_{c \in c_t} v_g(c)$
   4. $s_t = \arg\max_{k = 1, 2, \ldots, K} \{\mathrm{sim}(\mu(w_t, k), v_{context}(c_t))\}$
   5. Update context cluster center $\mu(w_t, s_t)$, since context $c_t$ is added to context cluster $s_t$ of word $w_t$.
   6. $c'_t = \mathrm{NoisySamples}(c_t)$
   7. Gradient update on $v_s(w_t, s_t)$ and the global vectors of words in $c_t$ and $c'_t$.
4. Output: $v_s(w, k)$, $v_g(w)$ and context cluster centers $\mu(w, k)$, $\forall w \in W, k \in \{1, \ldots, K\}$.

After predicting the sense of word $w_t$, we update the embedding of the predicted sense for the word $w_t$ ($v_s(w_t, s_t)$), the global vector of the words in the context and the global vector of the randomly sampled, noisy context words. The context cluster center of cluster $s_t$ for the word $w_t$ ($\mu(w_t, s_t)$) is updated since context $c_t$ is added to the cluster $s_t$.
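One pass through the body of the loop in Algorithm 1 could be sketched as follows. This is only a minimal, dependency-free illustration under several assumptions: plain gradient ascent with a fixed step size stands in for the AdaGrad updates used in the experiments, the cluster center is kept as a running mean through a hypothetical `counts` table, cosine similarity with an all-zero center is defined as 0, senses are 0-indexed, and the toy vectors are invented for the example.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cosine(u, v):
    nu, nv = math.sqrt(dot(u, u)), math.sqrt(dot(v, v))
    return dot(u, v) / (nu * nv) if nu > 0 and nv > 0 else 0.0


def sigmoid(x):
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def context_vector(context, v_g):
    """Average of the context words' global vectors (len(context) equals 2 * R_t away from boundaries)."""
    dim = len(v_g[context[0]])
    acc = [0.0] * dim
    for c in context:
        acc = [a + b for a, b in zip(acc, v_g[c])]
    return [a / len(context) for a in acc]


def mssg_step(word, context, noise, v_s, v_g, mu, counts, lr=0.025):
    """One online MSSG update for a single token; returns the predicted sense index."""
    v_ctx = context_vector(context, v_g)
    # Eq. (3): hard assignment to the most similar cluster center.
    sims = [cosine(center, v_ctx) for center in mu[word]]
    s_t = max(range(len(sims)), key=sims.__getitem__)
    # Keep the center equal to the running mean of the contexts assigned to it.
    counts[word][s_t] += 1
    n = counts[word][s_t]
    mu[word][s_t] = [m + (x - m) / n for m, x in zip(mu[word][s_t], v_ctx)]
    # Gradient ascent on log P(D=1|.) for observed words and log P(D=0|.) for noisy words.
    sense = v_s[word][s_t]
    grad_sense = [0.0] * len(sense)
    for c, label in [(c, 1.0) for c in context] + [(c, 0.0) for c in noise]:
        g = label - sigmoid(dot(sense, v_g[c]))
        grad_sense = [a + g * b for a, b in zip(grad_sense, v_g[c])]
        v_g[c] = [b + lr * g * a for a, b in zip(sense, v_g[c])]
    v_s[word][s_t] = [a + lr * d for a, d in zip(sense, grad_sense)]
    return s_t


# Toy vectors, invented for the example (d = 2, K = 2).
v_g = {"plant": [0.1, 0.0], "pollen": [1.0, 0.0], "seed": [0.8, 0.2],
       "refinery": [-0.1, 1.0], "steel": [-0.5, 0.9]}
K = 2
v_s = {"plant": [[0.1, 0.1], [-0.1, 0.1]]}
mu = {"plant": [[0.0, 0.0] for _ in range(K)]}
counts = {"plant": [0] * K}

first = mssg_step("plant", ["pollen", "seed"], ["steel"], v_s, v_g, mu, counts)
second = mssg_step("plant", ["refinery", "steel"], ["pollen"], v_s, v_g, mu, counts)
```

Because the sense is chosen from the current cluster centers before the gradient step, clustering and embedding learning proceed together, which is the joint behaviour the section describes. Noise words are passed in explicitly here; in a full implementation they would be drawn from the distribution in Eq. (2).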
5 Non-Parametric MSSG model (NP-MSSG)

The MSSG model learns a fixed number of senses per word type. In this section, we describe a non-parametric version of MSSG, the NP-MSSG model, which learns a varying number of senses per word type. Our approach is closely related to the online non-parametric clustering procedure described in Meyerson (2001). We create a new cluster (sense) for a word type with probability proportional to the distance of its context to the nearest cluster (sense).

Each word $w \in W$ is associated with sense vectors, context clusters and a global vector $v_g(w)$ as in the MSSG model. The number of senses for a word is unknown and is learned during training. Initially, the words do not have sense vectors and context clusters. We create the first sense vector and context cluster for each word on its first occurrence in the training data. After creating the first context cluster for a word, a new context cluster and a sense vector are created online during training when the word is observed with a context where the similarity between the vector representation of the context and every existing cluster center of the word is less than $\lambda$, where $\lambda$ is a hyperparameter of the model.

Consider the word $w_t$ and let $c_t = \{w_{t-R_t}, \ldots, w_{t-1}, w_{t+1}, \ldots, w_{t+R_t}\}$ be the set of observed context words. The vector representation of the context is defined as the average of the global vector representation of the words in the context. Let $v_{context}(c_t) = \frac{1}{2 R_t} \sum_{c \in c_t} v_g(c)$ be the vector representation of the context $c_t$. Let $k(w_t)$ be the number of context clusters or the number of senses currently associated with word $w_t$.
$s_t$, the sense of word $w_t$ when $k(w_t) > 0$, is given by

$$s_t = \begin{cases} k(w_t) + 1, & \text{if } \max_{k = 1, 2, \ldots, k(w_t)} \{\mathrm{sim}(\mu(w_t, k), v_{context}(c_t))\} < \lambda \\ k_{max}, & \text{otherwise} \end{cases} \tag{4}$$

where $\mu(w_t, k)$ is the cluster center of the $k$-th cluster of word $w_t$ and $k_{max} = \arg\max_{k = 1, 2, \ldots, k(w_t)} \mathrm{sim}(\mu(w_t, k), v_{context}(c_t))$.

The cluster center is the average of the vector representations of all the contexts which belong to that cluster. If $s_t = k(w_t) + 1$, a new context cluster and a new sense vector are created for the word $w_t$.

The NP-MSSG model and the MSSG model described previously differ only in the way word sense discrimination is performed. The objective function and the probabilistic model associated with observing a (word, context) pair given the sense of the word remain the same.

| Model | Time (in hours) |
|---|---|
| Huang et al. | 168 |
| MSSG-50d | 1 |
| MSSG-300d | 6 |
| NP-MSSG-50d | 1.83 |
| NP-MSSG-300d | 5 |
| Skip-gram-50d | 0.33 |
| Skip-gram-300d | 1.5 |

Table 1: Training time results. The first five models reported in the table are capable of learning multiple embeddings for each word, and Skip-gram is capable of learning only a single embedding for each word.

6 Experiments

To evaluate our algorithms we train embeddings using the same corpus and vocabulary as used in Huang et al. (2012), which is the April 2010 snapshot of the Wikipedia corpus (Shaoul and Westbury, 2010). It contains approximately 2 million articles and 990 million tokens. In all our experiments we remove all the words with fewer than 20 occurrences and use a maximum context window ($N$) of length 5 (5 words before and after the word occurrence). We fix the number of senses ($K$) to be 3 for the MSSG model unless otherwise specified. Our hyperparameter values were selected by a small amount of manual exploration on a validation set. In NP-MSSG we set $\lambda$ to -0.5. The Skip-gram, MSSG and NP-MSSG models sample one noisy context word ($S$) for each of the observed context words.
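With the similarity threshold $\lambda = -0.5$ and cosine similarity as above, the NP-MSSG sense-assignment rule of Eq. (4) can be sketched in a few lines of dependency-free Python. The function name `assign_sense` is hypothetical, senses are 0-indexed rather than 1-indexed, a new cluster is seeded with the context that triggered it, and the running-mean update of existing centers and the creation of the matching sense vector are left out; all of these are assumptions of the sketch.

```python
import math


def cosine(u, v):
    num = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return num / (nu * nv) if nu > 0 and nv > 0 else 0.0


def assign_sense(centers, v_ctx, lam=-0.5):
    """Eq. (4): index of the sense for this context, creating a new cluster when needed.

    `centers` holds the cluster centers of one word type and is extended in place
    whenever no existing center has similarity of at least `lam`.
    """
    if centers:
        sims = [cosine(mu, v_ctx) for mu in centers]
        k_max = max(range(len(sims)), key=sims.__getitem__)
        if sims[k_max] >= lam:
            return k_max
    # First occurrence of the word, or every existing center is too dissimilar.
    centers.append(list(v_ctx))
    return len(centers) - 1


# Toy contexts, invented for the example.
centers = []
a = assign_sense(centers, [1.0, 0.0])    # first occurrence creates sense 0
b = assign_sense(centers, [0.9, 0.1])    # close to sense 0
c = assign_sense(centers, [-1.0, 0.1])   # similarity below lambda, so a new sense
d = assign_sense(centers, [0.0, 1.0])    # joins whichever center is most similar
```

A lower value of `lam` makes new senses rarer, so the threshold directly controls how many senses a frequent, varied word type ends up with.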
We train our models using AdaGrad stochastic gradient descent (Duchi et al., 2011) with initial learning rate set to 0.025. Similarly to Huang et al. (2012), we don't use a regularization penalty.

Below we describe qualitative results, displaying the embeddings and the nearest neighbors of each word sense, and quantitative experiments in two benchmark word similarity tasks.

Table 1 shows the time to train our models, compared with other models from previous work. All these times are from single-machine implementations running on similar-sized corpora. We see that our model shows significant improvement in the training time over the model in Huang et al. (2012), being well within an order of magnitude of the training time for Skip-gram models.

| Word | Model | Nearest neighbors (one row per sense) |
|---|---|---|
| APPLE | Skip-gram | blackberry, macintosh, acorn, pear, plum |
| | MSSG | pear, honey, pumpkin, potato, nut |
| | | microsoft, activision, sony, retail, gamestop |
| | | macintosh, pc, ibm, iigs, chipsets |
| | NP-MSSG | apricot, blackberry, cabbage, blackberries, pear |
| | | microsoft, ibm, wordperfect, amiga, trs-80 |
| FOX | Skip-gram | abc, nbc, soapnet, espn, kttv |
| | MSSG | beaver, wolf, moose, otter, swan |
| | | nbc, espn, cbs, ctv, pbs |
| | | dexter, myers, sawyer, kelly, griffith |
| | NP-MSSG | rabbit, squirrel, wolf, badger, stoat |
| | | cbs, abc, nbc, wnyw, abc-tv |
| NET | Skip-gram | profit, dividends, pegged, profits, nets |
| | MSSG | snap, sideline, ball, game-trying, scoring |
| | | negative, offset, constant, hence, potential |
| | | pre-tax, billion, revenue, annualized, us\$ |
| | NP-MSSG | negative, total, transfer, minimizes, loop |
| | | pre-tax, taxable, per, billion, us\$, income |
| | | ball, yard, fouled, bounced, 50-yard |
| | | wnet, tvontorio, cable, tv, tv-5 |
| ROCK | Skip-gram | glam, indie, punk, band, pop |
| | MSSG | rocks, basalt, boulders, sand, quartzite |
| | | alternative, progressive, roll, indie, blues-rock |
| | | rocks, pine, rocky, butte, deer |
| | NP-MSSG | granite, basalt, outcropping, rocks, quartzite |
| | | alternative, indie, pop/rock, rock/metal, blues-rock |
| RUN | Skip-gram | running, ran, runs, afoul, amok |
| | MSSG | running, stretch, ran, pinch-hit, runs |
| | | operated, running, runs, operate, managed |
| | | running, runs, operate, drivers, configure |
| | NP-MSSG | two-run, walk-off, runs, three-runs, starts |
| | | operated, runs, serviced, links, walk |
| | | running, operating, ran, go, configure |
| | | re-election, reelection, re-elect, unseat, term-limited |
| | | helmed, longest-running, mtv, promoted, produced |

Table 2: Nearest neighbors of each sense of each word, by cosine similarity, for different algorithms. Note that the different senses closely correspond to intuitions regarding the senses of the given word types.

6.1 Nearest Neighbors

Table 2 shows qualitatively the results of discovering multiple senses by presenting the nearest neighbors associated with various embeddings. The nearest neighbors of a word are computed by comparing the cosine similarity between the embedding for each sense of the word and the context embeddings of all other words in the vocabulary. Note that each of the discovered senses is indeed semantically coherent, and that a reasonable number of senses are created by the non-parametric method. Table 3 shows the nearest neighbors of the word plant for Skip-gram, MSSG, NP-MSSG and Huang's model (Huang et al., 2012).

| Model | Nearest neighbors (one row per sense) |
|---|---|
| Skip-gram | plants, flowering, weed, fungus, biomass |
| MSSG | plants, tubers, soil, seed, biomass |
| | refinery, reactor, coal-fired, factory, smelter |
| | asteraceae, fabaceae, arecaceae, lamiaceae, ericaceae |
| NP-MSSG | plants, seeds, pollen, fungal, fungus |
| | factory, manufacturing, refinery, bottling, steel |
| | fabaceae, legume, asteraceae, apiaceae, flowering |
| | power, coal-fired, hydro-power, hydroelectric, refinery |
| Huang et al. | insect, capable, food, solanaceous, subsurface |
| | robust, belong, pitcher, comprises, eagles |
| | food, animal, catching, catch, ecology, fly |
| | seafood, equipment, oil, dairy, manufacturer |
| | facility, expansion, corporation, camp, co. |
| | treatment, skin, mechanism, sugar, drug |
| | facility, theater, platform, structure, storage |
| | natural, blast, energy, hurl, power |
| | matter, physical, certain, expression, agents |
| | vine, mute, chalcedony, quandong, excrete |

Table 3: Nearest neighbors of the word plant for different models. We see that the discovered senses in both our models are more semantically coherent than those of Huang et al. (2012), and NP-MSSG is able to learn a reasonable number of senses.

6.2 Word Similarity

We evaluate our embeddings on two related datasets: the WordSim-353 dataset (Finkelstein et al., 2001) and the Contextual Word Similarities (SCWS) dataset (Huang et al., 2012).

WordSim-353 is a standard dataset for evaluating word vector representations. It consists of a list of pairs of word types, the similarity of which is rated on an integral scale from 1 to 10. Pairs include both monosemic and polysemic words. The scores for each word pair are given without any contextual information, which makes them tricky to interpret.

To overcome this issue, Stanford's Contextual Word Similarities (SCWS) dataset was developed by Huang et al. (2012). The dataset consists of 2003 word pairs and their sentential contexts. It consists of 1328 noun-noun pairs, 399 verb-verb pairs, 140 verb-noun, 97 adjective-adjective, 30 noun-adjective, 9 verb-adjective, and 241 same-word pairs. We evaluate and compare our embeddings on both the WordSim-353 and SCWS word similarity corpora.

Since it is not trivial to deal with multiple embeddings per word, we consider the following similarity measures between words $w$ and $w'$ given their respective contexts $c$ and $c'$, where $P(w, c, k)$ is the probability that $w$ takes the $k$-th sense given the context $c$, and $d(v_s(w, i), v_s(w', j))$ is the similarity measure between the given embeddings $v_s(w, i)$ and $v_s(w', j)$.
The avgSim metric,

$$\mathrm{avgSim}(w, w') = \frac{1}{K^2} \sum_{i=1}^{K} \sum_{j=1}^{K} d(v_s(w, i), v_s(w', j)),$$

computes the average similarity over all embeddings for each word, ignoring information from the context.

To address this, the avgSimC metric,

$$\mathrm{avgSimC}(w, w') = \sum_{j=1}^{K} \sum_{i=1}^{K} P(w, c, i) \, P(w', c', j) \, d(v_s(w, i), v_s(w', j)),$$

weighs the similarity between each pair of senses by how well each sense fits the context at hand.

The globalSim metric uses each word's global context vector, ignoring the many senses:

$$\mathrm{globalSim}(w, w') = d(v_g(w), v_g(w')).$$

Finally, the localSim metric selects a single sense for each word based independently on its context and computes the similarity by

$$\mathrm{localSim}(w, w') = d(v_s(w, k), v_s(w', k')),$$

where $k = \arg\max_i P(w, c, i)$ and $k' = \arg\max_j P(w', c', j)$, and $P(w, c, i)$ is the probability that $w$ takes the $i$-th sense given context $c$. The probability of being in a cluster is calculated as the inverse of the cosine distance to the cluster center (Huang et al., 2012).

We report the Spearman correlation between a model's similarity scores and the human judgements in the datasets.

Table 5 shows the results on the WordSim-353 task. C&W refers to the language model by Collobert and Weston (2008), and the HLBL model is the method described in Mnih and Hinton (2007). On the WordSim-353 task, we see that our model performs significantly better than the previous neural network model for learning multiple representations per word (Huang et al., 2012). Among the methods that learn low-dimensional and dense representations, our model performs slightly better than Skip-gram. Table 4 shows the results for the SCWS task.
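The four similarity measures (avgSim, avgSimC, globalSim and localSim) can be written down compactly. The sketch below is only illustrative and rests on assumptions not stated in the text: cosine similarity is used for $d$, the inverse cosine distances are normalised so that the sense probabilities sum to 1, a small `eps` guards against division by zero, and avgSim divides by the product of the two sense counts so that words with different numbers of senses (as in NP-MSSG) are handled.

```python
import math


def cosine(u, v):
    num = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return num / (nu * nv) if nu > 0 and nv > 0 else 0.0


def sense_probs(centers, v_ctx, eps=1e-8):
    """P(w, c, k): inverse cosine distance to each cluster center, normalised (assumed)."""
    inv = [1.0 / (1.0 - cosine(mu, v_ctx) + eps) for mu in centers]
    z = sum(inv)
    return [x / z for x in inv]


def avg_sim(senses_w, senses_w2):
    """Average similarity over all sense pairs, ignoring context."""
    total = sum(cosine(a, b) for a in senses_w for b in senses_w2)
    return total / (len(senses_w) * len(senses_w2))


def avg_sim_c(senses_w, probs_w, senses_w2, probs_w2):
    """Sense-pair similarities weighted by how well each sense fits its context."""
    return sum(p * q * cosine(a, b)
               for a, p in zip(senses_w, probs_w)
               for b, q in zip(senses_w2, probs_w2))


def global_sim(g_w, g_w2):
    """Similarity of the two global vectors, ignoring senses."""
    return cosine(g_w, g_w2)


def local_sim(senses_w, probs_w, senses_w2, probs_w2):
    """Similarity of the single most probable sense of each word."""
    k = max(range(len(probs_w)), key=probs_w.__getitem__)
    k2 = max(range(len(probs_w2)), key=probs_w2.__getitem__)
    return cosine(senses_w[k], senses_w2[k2])


# Toy sense vectors and cluster centers, invented for the example.
senses = [[1.0, 0.0], [0.0, 1.0]]
probs_first = sense_probs(senses, [1.0, 0.0])    # context matches sense 0
probs_second = sense_probs(senses, [0.0, 1.0])   # context matches sense 1
same = local_sim(senses, probs_first, senses, probs_first)
different = local_sim(senses, probs_first, senses, probs_second)
```

In this toy case avgSim is the same regardless of context, while localSim and avgSimC change with the contexts supplied, which is the distinction the metrics are meant to capture.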
On SCWS, when the words are given with their context, our model achieves new state-of-the-art results, as shown in Table 4. The previous state-of-the-art model (Huang et al., 2012) on this task achieves 65.7% using the avgSimC measure, while the MSSG model achieves the best score of 69.3% on this task. The results on the other metrics are similar. For a fixed embedding dimension, the model by Huang et al. (2012) has more parameters than our model since it uses a hidden layer. The results show that our model performs better than Huang et al. (2012) even when both models use 50-dimensional vectors, and the performance of our model improves as we increase the number of dimensions to 300.

| Model | globalSim | avgSim | avgSimC | localSim |
|---|---|---|---|---|
| TF-IDF | 26.3 | - | - | - |
| Collobert & Weston-50d | 57.0 | - | - | - |
| Skip-gram-50d | 63.4 | - | - | - |
| Skip-gram-300d | 65.2 | - | - | - |
| Pruned TF-IDF | 62.5 | 60.4 | 60.5 | - |
| Huang et al.-50d | 58.6 | 62.8 | 65.7 | 26.1 |
| MSSG-50d | 62.1 | 64.2 | 66.9 | 49.17 |
| MSSG-300d | 65.3 | 67.2 | **69.3** | 57.26 |
| NP-MSSG-50d | 62.3 | 64.0 | 66.1 | 50.27 |
| NP-MSSG-300d | **65.5** | **67.3** | 69.1 | **59.80** |

Table 4: Experimental results in the SCWS task. The numbers are Spearman's correlation $\rho \times 100$ between each model's similarity judgments and the human judgments, in context. The first three models learn only a single embedding per word and hence avgSim, avgSimC and localSim are not reported for these models, as they'd be identical to globalSim. Both our parametric and non-parametric models outperform the baseline models, and our best model achieves a score of 69.3 in this task. NP-MSSG achieves the best results when the globalSim, avgSim and localSim similarity measures are used. The best results according to each metric are in bold face.

| Model | $\rho \times 100$ |
|---|---|
| HLBL | 33.2 |
| C&W | 55.3 |
| Skip-gram-300d | 70.4 |
| Huang et al.-G | 22.8 |
| Huang et al.-M | 64.2 |
| MSSG 50d-G | 60.6 |
| MSSG 50d-M | 63.2 |
| MSSG 300d-G | 69.2 |
| MSSG 300d-M | **70.9** |
| NP-MSSG 50d-G | 61.5 |
| NP-MSSG 50d-M | 62.4 |
| NP-MSSG 300d-G | 69.1 |
| NP-MSSG 300d-M | 68.6 |
| Pruned TF-IDF | 73.4 |
| ESA | 75 |
| Tiered TF-IDF | 76.9 |

Table 5: Results on the WordSim-353 dataset. The table shows the Spearman's correlation $\rho$ between the model's similarities and human judgments. G indicates the globalSim similarity measure and M indicates the avgSim measure. The best results among models that learn low-dimensional and dense representations are in bold face. Pruned TF-IDF (Reisinger and Mooney, 2010a), ESA (Gabrilovich and Markovitch, 2007) and Tiered TF-IDF (Reisinger and Mooney, 2010b) construct sparse, high-dimensional representations.

Figure 3: The plot shows the distribution of the number of senses learned per word type in the NP-MSSG model.

We evaluate the models in a word analogy task introduced by Mikolov et al. (2013a), where both MSSG and NP-MSSG models achieve 64% accuracy compared to 12% accuracy by Huang et al. (2012). Skip-gram, which is the state-of-the-art model for this task, achieves 67% accuracy.

Figure 4: Shows the effect of varying the embedding dimensionality of the MSSG model on the SCWS task.

Figure 5: Shows the effect of varying the number of senses of the MSSG model on the SCWS task.

| Model | Task | Sim | $\rho \times 100$ |
|---|---|---|---|
| Skip-gram | WS-353 | globalSim | 70.4 |
| MSSG | WS-353 | globalSim | 68.4 |
| MSSG | WS-353 | avgSim | 71.2 |
| NP-MSSG | WS-353 | globalSim | 68.3 |
| NP-MSSG | WS-353 | avgSim | 69.66 |
| MSSG | SCWS | localSim | 59.3 |
| MSSG | SCWS | globalSim | 64.7 |
| MSSG | SCWS | avgSim | 67.2 |
| MSSG | SCWS | avgSimC | 69.2 |
| NP-MSSG | SCWS | localSim | 60.11 |
| NP-MSSG | SCWS | globalSim | 65.3 |
| NP-MSSG | SCWS | avgSim | 67 |
| NP-MSSG | SCWS | avgSimC | 68.6 |

Table 6: Experiment results on the WordSim-353 and SCWS tasks. Multiple embeddings are learned for the top 30,000 most frequent words in the vocabulary. The embedding dimension size is 300 for all the models for this task. The number of senses for the MSSG model is 3.

Figure 3 shows the distribution of the number of senses learned per word type in the NP-MSSG model.
We learn the multiple embeddings for the same set of approximately 6000 words that were used in Huang et al (2012) for all our experiments to ensure fair comparison. These approximately 6000 words were chosen by Huang et al. mainly from the top 30,000 frequent words in the vocabulary. This selection was likely made to avoid the noise of learning multiple senses for infrequent words. However, our method is robust to noise, which can be seen from the good performance of our model when it learns multiple embeddings for the top 30,000 most frequent words. We found that even when learning multiple embeddings for the top 30,000 most frequent words in the vocabulary, the MSSG model still achieves a state-of-the-art result on the SCWS task, with an avgSimC score of 69.2, as shown in Table 6.

7 Conclusion

We present an extension to the Skip-gram model that efficiently learns multiple embeddings per word type. The model jointly performs word sense discrimination and embedding learning, and non-parametrically estimates the number of senses per word type. Our method achieves new state-of-the-art results in the word similarity in context task and learns multiple senses, training on close to a billion tokens in less than 6 hours. The global vectors, sense vectors and cluster centers of our model and code for learning them are available at https://people.cs.umass.edu/~arvind/emnlp2014wordvectors. In future work we plan to use the multiple embeddings per word type in downstream NLP tasks.

Acknowledgments

This work was supported in part by the Center for Intelligent Information Retrieval and in part by DARPA under agreement number FA8750-13-2-0020. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright notation thereon. Any opinions, findings and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect those of the sponsor.
References

Mohit Bansal, Kevin Gimpel, and Karen Livescu. 2014. Tailoring Continuous Word Representations for Dependency Parsing. Association for Computational Linguistics (ACL).

Yoshua Bengio, Réjean Ducharme, Pascal Vincent, and Christian Jauvin. 2003. A neural probabilistic language model. Journal of Machine Learning Research (JMLR).

Peter F. Brown, Peter V. Desouza, Robert L. Mercer, Vincent J. Della Pietra, and Jenifer C. Lai. 1992. Class-based N-gram models of natural language. Computational Linguistics.

Ronan Collobert and Jason Weston. 2008. A Unified Architecture for Natural Language Processing: Deep Neural Networks with Multitask Learning. International Conference on Machine Learning (ICML).

Paramveer S. Dhillon, Dean Foster, and Lyle Ungar. 2011. Multi-View Learning of Word Embeddings via CCA. Advances in Neural Information Processing Systems (NIPS).

John Duchi, Elad Hazan, and Yoram Singer. 2011. Adaptive subgradient methods for online learning and stochastic optimization. Journal of Machine Learning Research (JMLR).

Lev Finkelstein, Evgeniy Gabrilovich, Yossi Matias, Ehud Rivlin, Zach Solan, Gadi Wolfman, and Eytan Ruppin. 2001. Placing search in context: the concept revisited. International Conference on World Wide Web (WWW).

Evgeniy Gabrilovich and Shaul Markovitch. 2007. Computing semantic relatedness using Wikipedia-based explicit semantic analysis. International Joint Conference on Artificial Intelligence (IJCAI).

Eric H. Huang, Richard Socher, Christopher D. Manning, and Andrew Y. Ng. 2012. Improving Word Representations via Global Context and Multiple Word Prototypes. Association for Computational Linguistics (ACL).

Terry Koo, Xavier Carreras, and Michael Collins. 2008. Simple Semi-supervised Dependency Parsing. Association for Computational Linguistics (ACL).

Quoc V. Le and Tomas Mikolov. 2014. Distributed Representations of Sentences and Documents. International Conference on Machine Learning (ICML).

Andrew L. Maas, Raymond E. Daly, Peter T. Pham, Dan Huang, Andrew Y. Ng, and Christopher Potts. 2011. Learning Word Vectors for Sentiment Analysis. Association for Computational Linguistics (ACL).

Adam Meyerson. 2001. Online Facility Location. IEEE Symposium on Foundations of Computer Science.

Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. 2013a. Efficient Estimation of Word Representations in Vector Space. Workshop at International Conference on Learning Representations (ICLR).

Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg Corrado, and Jeffrey Dean. 2013b. Distributed Representations of Words and Phrases and their Compositionality. Advances in Neural Information Processing Systems (NIPS).

Tomas Mikolov, Quoc V. Le, and Ilya Sutskever. 2013c. Exploiting Similarities among Languages for Machine Translation. arXiv.

Scott Miller, Jethran Guinness, and Alex Zamanian. 2004. Name tagging with word clusters and discriminative training. North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT).

Andriy Mnih and Geoffrey Hinton. 2007. Three new graphical models for statistical language modelling. International Conference on Machine Learning (ICML).

Arvind Neelakantan and Michael Collins. 2014. Learning Dictionaries for Named Entity Recognition using Minimal Supervision. European Chapter of the Association for Computational Linguistics (EACL).

Alexandre Passos, Vineet Kumar, and Andrew McCallum. 2014. Lexicon Infused Phrase Embeddings for Named Entity Resolution. Conference on Natural Language Learning (CoNLL).

Lev Ratinov and Dan Roth. 2009. Design Challenges and Misconceptions in Named Entity Recognition. Conference on Natural Language Learning (CoNLL).

Siva Reddy, Ioannis P. Klapaftis, and Diana McCarthy. 2011. Dynamic and Static Prototype Vectors for Semantic Composition. International Joint Conference on Natural Language Processing (IJCNLP).

Joseph Reisinger and Raymond J. Mooney. 2010a. Multi-prototype vector-space models of word meaning. North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT).

Joseph Reisinger and Raymond Mooney. 2010b. A mixture model with sharing for lexical semantics. Empirical Methods in Natural Language Processing (EMNLP).

Cyrus Shaoul and Chris Westbury. 2010. The Westbury lab Wikipedia corpus.

Richard Socher, Eric H. Huang, Jeffrey Pennington, Andrew Y. Ng, and Christopher D. Manning. 2011. Dynamic Pooling and Unfolding Recursive Autoencoders for Paraphrase Detection. Advances in Neural Information Processing Systems (NIPS).

Oscar Täckström, Ryan McDonald, and Jakob Uszkoreit. 2012. Cross-lingual Word Clusters for Direct Transfer of Linguistic Structure. North American Chapter of the Association for Computational Linguistics: Human Language Technologies.

Joseph Turian, Lev Ratinov, and Yoshua Bengio. 2010. Word Representations: A Simple and General Method for Semi-Supervised Learning. Association for Computational Linguistics (ACL).

Will Y. Zou, Richard Socher, Daniel Cer, and Christopher D. Manning. 2013. Bilingual Word Embeddings for Phrase-Based Machine Translation. Empirical Methods in Natural Language Processing.
