Social Trust Prediction via Max-norm Constrained 1-bit Matrix Completion

Social trust prediction addresses the significant problem of exploring interactions among users in social networks. Naturally, this problem can be formulated in the matrix completion framework, with each entry indicating the trustness or distrustness…

Authors: Jing Wang, Jie Shen, Huan Xu

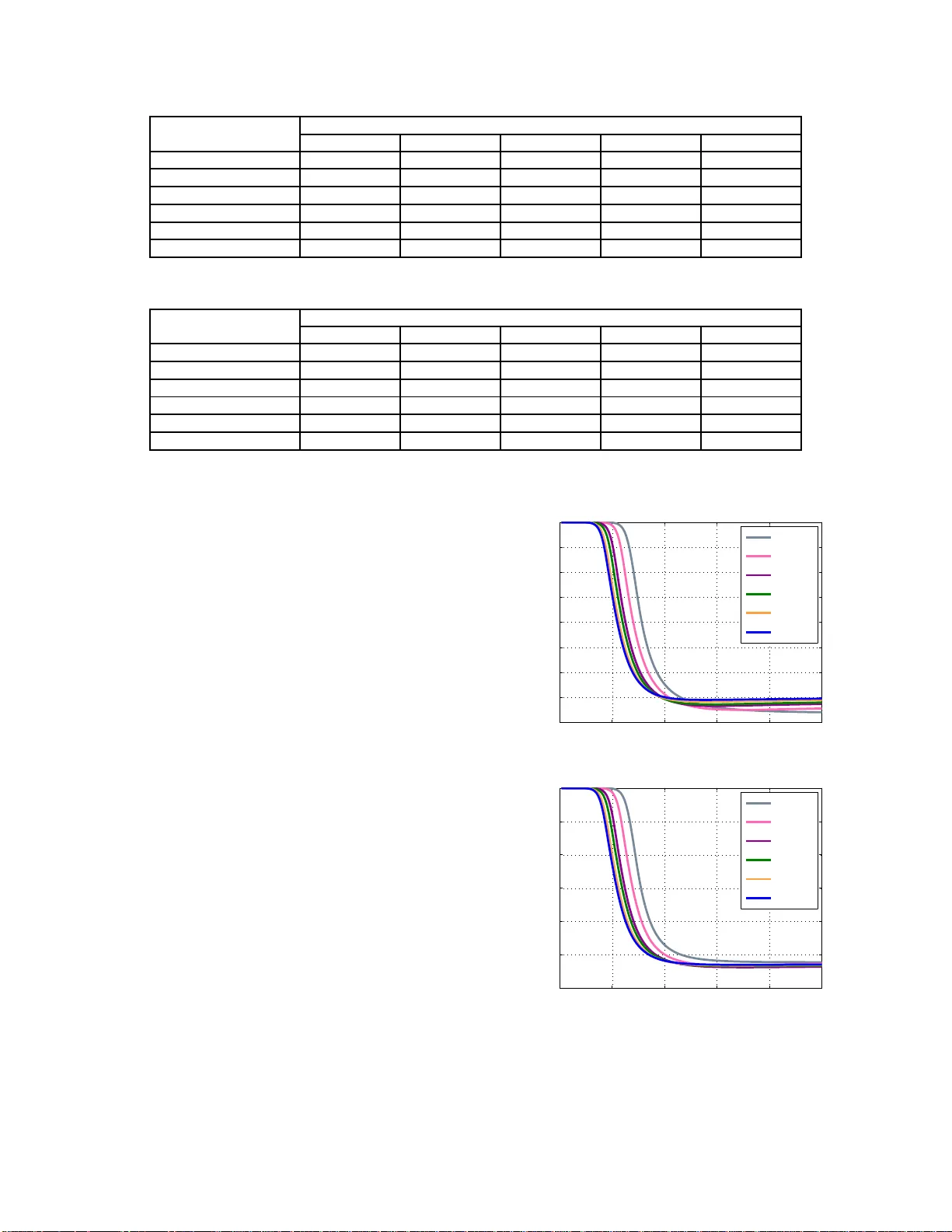

Jing Wang (1), Jie Shen (2), Huan Xu (3)

(1) Hefei University of Technology, China, wangjing@mail.hfut.edu.cn
(2) Rutgers University, USA, js2007@rutgers.edu
(3) National University of Singapore, Singapore, mpexuh@nus.edu.sg

Abstract

Social trust prediction addresses the significant problem of exploring interactions among users in social networks. Naturally, this problem can be formulated in the matrix completion framework, with each entry indicating trust or distrust. However, there are two challenges for the social trust problem: 1) the observed data are sign (1-bit) measurements; 2) they are typically sampled non-uniformly. Most previous matrix completion methods do not handle these two issues well. Motivated by recent progress on the max-norm, we propose to solve the problem with a 1-bit max-norm constrained formulation. Since the max-norm is not easy to optimize, we utilize a reformulation of the max-norm which facilitates an efficient projected gradient descent algorithm. We demonstrate the superiority of our formulation on two benchmark datasets.

1 Introduction

With the increasing popularity of social networks, many interesting and difficult problems have emerged, such as friend recommendation and information propagation. In this paper, we study the problem of social trust prediction, which aims to estimate the positive or negative relationships among users based on the existing trust information associated with them. This problem plays an important role in social networks, as the system can block invitations from someone the user does not trust, or recommend new friends who enjoy a high reputation. Naturally, the social trust problem can be formulated within the matrix completion framework [Liben-Nowell and Kleinberg, 2007] [Billsus and Pazzani, 1998].
That is, the $(i, j)$-th entry of the observed data matrix $Z \in \mathbb{R}^{n \times n}$ is a 1-bit code indicating that the $i$-th user trusts the $j$-th user if $Z_{ij} = 1$. Here, $n$ denotes the number of users. However, we observe only a small fraction of the entries; the unobserved entries are set to zero. Our goal is to estimate the missing entries from the 1-bit measurements in $Z$.

Note that the problem is ill-posed if no assumption is imposed on the structure of the data. A number of methods have been proposed to solve it. Generally, existing social trust prediction methods fall into three categories. The first category is based on similarity measures or structural context similarity [Newman, 2001] [Chowdhury, 2010] [Katz, 1953] [Jeh and Widom, 2002], motivated by the intuition that individuals tend to trust their neighbors, or those who trust similar people. The second is based on low-rank matrix completion [Billsus and Pazzani, 1998] [Cai et al., 2010] [Huang et al., 2013], which assumes that the underlying data matrix is low-rank or can be approximated by a low-rank matrix. The third models the problem as binary classification and uses techniques such as logistic regression [Leskovec et al., 2010].

Challenges. However, two issues emerge in social trust prediction that are not well characterized by the algorithms in previous work. First, the value of each observed entry is either $1$ or $-1$, which is analogous to binary classification; in our problem, however, we are handling much more complex matrix data. Fortunately, [Srebro et al., 2004] presented a maximum margin matrix factorization framework that unifies the binary problem for the vector case and the matrix case. The key idea in their work is a low-norm matrix factorization, which will also be utilized in this paper.
Second, the locations of the observed entries are sampled non-uniformly, which creates a gap between theory and practice for many matrix completion algorithms. To tackle this challenge, we suggest using the max-norm as a convex surrogate for the rank function, which has been shown to be superior to the well-known nuclear norm on non-uniform data [Salakhutdinov and Srebro, 2010].

Our contributions are two-fold: 1) To the best of our knowledge, we are the first to address the social trust prediction problem with a max-norm constrained formulation. 2) Although a max-norm constrained problem can be solved by SDP solvers to sufficient accuracy, we instead use a projected gradient algorithm that scales to large datasets. We empirically show the improvement of our formulation on non-uniform 1-bit benchmarks compared to state-of-the-art solvers.

Figure 1: Illustration of the data matrix of social trust. Each column of the data matrix is a rating sample. Each entry is a tag that a user assigns to another user. The symbol "+" denotes the "trust" relationship, "-" denotes "distrust" and "?" denotes an unknown relationship (which we aim to predict). Most of the relationships are unknown, so the data is sparse. Typically, each individual has their own preferences and friendship network, making the data non-uniform. Note also that the data matrix may not be symmetric.

2 Related Work

Social interaction has been investigated intensively in recent decades. Social interactions indicate friendship, support, enmity or disapproval, as shown in Figure 1. Online users rely on trustworthiness information to filter information, establish collaboration or build social bonds. Social networks rely on trust information to make recommendations, attract users from other circles, or influence public opinion.
Thus, the exploration of social trust has a wide range of applications and has emerged as an important topic in social network research.

A number of methods have been proposed. One kind of method is based on similarity measurement. Specifically, Jaccard's coefficient is commonly used to measure the probability that two items have a relationship. Inspired by this metric, [Jeh and Widom, 2002] proposed a domain-independent similarity measure, SimRank. [Newman, 2001] directly defined a score to verify the correlation between common neighbors. Some methods are based on relational data modeling, structural proximity measures and stochastic relational models [Getoor and Diehl, 2005] [Liben-Nowell and Kleinberg, 2007] [Yu et al., 2009]. The above-mentioned methods are mainly derived from solutions to link prediction. Link prediction is oriented to network-level prediction, whereas social trust prediction focuses on the person level.

Another class of methods is derived from collaborative filtering, such as clustering techniques [Sarwar et al., 2001], model-based methods [Hofmann and Puzicha, 1999], and matrix factorization models [Srebro et al., 2003] [Mnih and Salakhutdinov, 2007]. However, the trust data matrix has some structural properties that differ from those of the user-item matrix, such as transitivity. Meanwhile, social trust in reality is extremely sparse. For instance, Facebook has hundreds of millions of users, but most of them have fewer than 1,000 friends. Besides, people with similar personalities tend to behave similarly. To sum up, the data matrix of social trust is both sparse and low-rank. Thus, the social trust prediction problem is especially suitable for the matrix completion model, which is the focus of our paper.
The problem of matrix completion is to recover a low-rank matrix from a subset of its entries [Candès and Recht, 2009], which is given by:

$$\min_{X} \ \operatorname{rank}(X) \quad \text{s.t.} \quad \mathcal{P}_{\Omega}(X) = \mathcal{P}_{\Omega}(Z), \tag{2.1}$$

where $Z \in \mathbb{R}^{p \times n}$ is the data matrix, $X$ is the recovered matrix, and $\Omega$ is the index set of observed entries. The optimization problem (2.1) is not only NP-hard, but also requires time doubly exponential in the number of samples [Recht et al., 2010]. To solve the above problem, one alternative is to use the nuclear norm as a relaxation of the rank function:

$$\min_{X} \ \|X\|_{*} \quad \text{s.t.} \quad \mathcal{P}_{\Omega}(X) = \mathcal{P}_{\Omega}(Z), \tag{2.2}$$

where $\|X\|_{*}$ denotes the sum of the singular values of $X$. [Cai et al., 2010] developed a first-order procedure, singular value thresholding (SVT), to solve the convex problem (2.2). [Jain et al., 2010] addressed rank minimization with the singular value projection (SVP) algorithm. [Keshavan et al., 2010] solved the problem by first trimming each row and column with too few entries and then computing the truncated SVD of the trimmed matrix; under certain conditions, they showed accurate recovery from on the order of $nd \log n$ observed entries ($n$ is the number of samples, $d$ is the rank of the recovered matrix). With the rapid development of matrix completion, more efficient methods have been proposed [Candès and Tao, 2010] [Gross, 2011] [Wang and Xu, 2012] [Huang et al., 2013].

However, all the methods mentioned above use the nuclear norm as the surrogate for the rank, whose exact recovery can be guaranteed only when the data are sampled uniformly, which is not practical in real-world applications. On the other hand, recent empirical work on the max-norm [Srebro et al., 2004] shows promising results for non-uniform data when the max-norm is used as the surrogate [Salakhutdinov and Srebro, 2010].
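As a small illustration of the objects appearing in (2.1) and (2.2), the following NumPy sketch implements the sampling operator $\mathcal{P}_{\Omega}$, the nuclear norm as a sum of singular values, and the singular value shrinkage step on which SVT-style methods are built. The function names and the representation of $\Omega$ as a boolean mask are assumptions made for this example; they are not taken from the paper or from any of the cited implementations.

```python
import numpy as np

def p_omega(X, mask):
    """Sampling operator P_Omega: keep entries where mask is True, zero the rest."""
    return np.where(mask, X, 0.0)

def nuclear_norm(X):
    """Nuclear norm ||X||_*: the sum of the singular values of X."""
    return np.linalg.svd(X, compute_uv=False).sum()

def shrink(Y, tau):
    """Soft-threshold the singular values of Y by tau (hypothetical helper name)."""
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    # Scale each left singular vector by its shrunken singular value.
    return (U * np.maximum(s - tau, 0.0)) @ Vt
```

For a rank-one matrix $X = \mathbf{u}\mathbf{v}^\top$ the only nonzero singular value is $\|\mathbf{u}\|_2 \|\mathbf{v}\|_2$, so `nuclear_norm` returns that product and `shrink(X, tau)` lowers it by `tau`.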
Notably, for some specific problems such as collaborative filtering, [Srebro and Shraibman, 2005] proved that the generalization error bound for the max-norm is better than that for the nuclear norm. More recently, [Shen et al., 2014] reported encouraging results on the subspace recovery task (which is closely related to matrix completion). Since social trust data is non-uniformly sampled, we believe that a max-norm regularized formulation can handle this challenge better than the nuclear norm.

Our formulation is also inspired by a recent theoretical study on matrix completion with 1-bit measurements [Cai and Zhou, 2013], which established a minimax lower bound under a general sampling model and derived the optimal convergence rate in terms of Frobenius norm loss. Furthermore, there are several practical algorithms for solving max-norm regularized or max-norm constrained problems; see, for example, [Lee et al., 2010] and [Shen et al., 2014].

2.1 Overview

After the review of related work in Section 2, we introduce the notation and formulate the problem in Section 3. We then give an algorithm to solve the max-norm constrained 1-bit matrix completion (MMC) problem in Section 4, where we also provide an equivalent SDP formulation for MMC, which can be solved accurately at the expense of efficiency. We report the empirical study on two benchmark datasets in Section 5. Section 6 concludes the paper and discusses possible future work.

3 Notations and Problem Setup

In this section, we introduce the notation that will be used in this paper. Capital letters such as $M$ are used for matrices and lowercase bold letters such as $\mathbf{v}$ denote vectors. The $i$-th row and $j$-th column of a matrix $M$ are denoted by $\mathbf{m}(i)$ and $\mathbf{m}_j$ respectively, and the $(i, j)$-th entry is denoted by $M_{ij}$. For a vector $\mathbf{v}$, we use $v_i$ to denote its $i$-th element.
We denote the $\ell_2$ norm of a vector $\mathbf{v}$ by $\|\mathbf{v}\|_2$. For a matrix $M \in \mathbb{R}^{p \times n}$, we denote the Frobenius norm by $\|M\|_F$, and $\|M\|_{2,\infty}$ denotes the maximum $\ell_2$ row norm of $M$, i.e.,

$$\|M\|_{2,\infty} := \max_{1 \le i \le p} \|\mathbf{m}(i)\|_2.$$

We further define the max-norm of $M$ [Linial et al., 2007],

$$\|M\|_{\max} = \min_{U, V:\ M = UV^\top} \max\left\{\|U\|_{2,\infty}^2,\ \|V\|_{2,\infty}^2\right\}, \tag{3.1}$$

where the minimum is taken over all possible factorizations.

Intuition on max-norm. At first sight, the max-norm is hard to understand. We briefly explain why it is a tighter approximation to the rank function than the nuclear norm. We write the nuclear norm of $M$ in factorization form [Recht et al., 2010]:

$$\|M\|_{*} := \min_{U, V:\ M = UV^\top} \frac{1}{2}\left(\|U\|_F^2 + \|V\|_F^2\right).$$

Note that the squared Frobenius norm is the sum of the squared $\ell_2$ row norms. Thus, a nuclear norm regularizer actually constrains the average of the $\ell_2$ row norms, while the max-norm constrains the maximum of the $\ell_2$ row norms.

Given the observed data $Z \in \mathbb{R}^{p \times n}$, we are interested in approximating $Z$ with a low-rank matrix $X$, which can be formulated as

$$\min_{X} \ \frac{1}{2}\|\mathcal{P}_{\Omega}(Z - X)\|_F^2 \quad \text{s.t.} \quad \operatorname{rank}(X) \le d,$$

where $\Omega$ is the index set of observed entries and $d$ is some expected rank. $\mathcal{P}_{\Omega}$ is a projection operator on a matrix $M$ such that the $(i, j)$-th entry of $\mathcal{P}_{\Omega}(M)$ is $M_{ij}$ if $(i, j) \in \Omega$ and zero otherwise. However, it is usually intractable to optimize the above program, as the rank function is non-convex and non-continuous [Recht et al., 2010]. One common approach is to use the nuclear norm as a convex surrogate for the rank function. However, it is well known that the nuclear norm cannot handle non-uniform data well. Motivated by recent progress on the max-norm [Salakhutdinov and Srebro, 2010; Cai and Zhou, 2013; Shen et al., 2014], we use the max-norm as an alternative convex relaxation, which gives the following formulation:

$$\min_{X} \ \frac{1}{2}\|\mathcal{P}_{\Omega}(Z - X)\|_F^2 \quad \text{s.t.} \quad \|X\|_{\max} \le \lambda^2, \tag{3.2}$$

where $\lambda$ is a tunable parameter.

4 Algorithm

The max-norm is convex and, moreover, max-norm constrained problems can be solved by any SDP solver. Formally, we have the following lemma:

Lemma 4.1 ([Srebro et al., 2004]). For any matrix $X \in \mathbb{R}^{p \times n}$ and $\lambda \in \mathbb{R}$, $\|X\|_{\max} \le \lambda$ if and only if there exist $A \in \mathbb{R}^{p \times p}$ and $B \in \mathbb{R}^{n \times n}$ such that $\begin{bmatrix} A & X \\ X^\top & B \end{bmatrix}$ is positive semidefinite and each diagonal element of $A$ and $B$ is upper bounded by $\lambda$.

With Lemma 4.1 in hand, one can formulate Problem (3.2) as an SDP:

$$\min_{X, A, B} \ \frac{1}{2}\|\mathcal{P}_{\Omega}(Z - X)\|_F^2 \quad \text{s.t.} \quad A_{ii} \le \lambda^2,\ B_{jj} \le \lambda^2,\ \forall i \in [p],\ j \in [n], \quad \begin{bmatrix} A & X \\ X^\top & B \end{bmatrix} \succeq 0. \tag{4.1}$$

This program can be solved by any SDP solver to obtain a sufficiently accurate solution.

However, SDP solvers are not scalable to large matrices. Thus, in this paper, we apply a projected gradient method to solve Problem (3.2), which is due to [Lee et al., 2010]. A key technique is the reformulation of the max-norm (3.1). Assume that the rank of the optimal solution $X^*$ produced by the SDP (4.1) is at most $d$. Then we can safely factorize $X = UV^\top$, with $U \in \mathbb{R}^{p \times d}$ and $V \in \mathbb{R}^{n \times d}$. Combining the factorization and the definition, we obtain the following equivalent program:

$$\min_{U, V} \ \frac{1}{2}\|\mathcal{P}_{\Omega}(Z - UV^\top)\|_F^2 \quad \text{s.t.} \quad \|U\|_{2,\infty} \le \lambda,\ \|V\|_{2,\infty} \le \lambda. \tag{4.2}$$

Note that the gradient of the objective function w.r.t. $U$ and $V$ can be easily computed. That is,

$$\nabla_U f(Z, U, V) = \mathcal{P}_{\Omega}(UV^\top - Z)\,V, \qquad \nabla_V f(Z, U, V) = \mathcal{P}_{\Omega}(VU^\top - Z^\top)\,U. \tag{4.3}$$

Here, for simplicity, we define $f(Z, U, V) = \frac{1}{2}\|\mathcal{P}_{\Omega}(Z - UV^\top)\|_F^2$.

Algorithm 1 Max-norm Constrained 1-Bit Matrix Completion (MMC)
Input: $Z \in \mathbb{R}^{p \times n}$ (observed samples), parameter $\lambda$, initial solution $(U_0, V_0)$, maximum iteration $\tau$.
Output: Optimal solution $(U^*, V^*)$.
1: for $t = 1$ to $\tau$ do
2:   Compute the gradient by Eq. (4.3): $U'_t = \nabla_U f(Z, U, V_{t-1})|_{U = U_{t-1}}$, $V'_t = \nabla_V f(Z, U_{t-1}, V)|_{V = V_{t-1}}$.
3:   Compute the step size $\alpha_t$ according to the Armijo rule.
4:   Compute the new iterate: $U_t = \Pi(U_{t-1} - \alpha_t U'_t)$, $V_t = \Pi(V_{t-1} - \alpha_t V'_t)$.
5: end for

The inequality constraints can be addressed by a projection step. That is, when we have a new iterate $(U_t, V_t)$ at the $t$-th iteration, we check whether it violates the constraints. If not, we proceed to the next iteration. Otherwise, we scale the rows of $U$ and/or $V$ by $\lambda / \|U\|_{2,\infty}$ and/or $\lambda / \|V\|_{2,\infty}$ respectively. In this way, we have the projection operator:

$$\Pi(M) = \begin{cases} \dfrac{\lambda}{\|M\|_{2,\infty}}\, M, & \text{if } \|M\|_{2,\infty} > \lambda, \\ M, & \text{otherwise.} \end{cases} \tag{4.4}$$

If we further pick the step size $\alpha_t$ via the Armijo rule [Armijo and others, 1966], it can be shown that the sequence of $(U_t, V_t)$ converges to a stationary point [Bertsekas, 1999]. The algorithm is summarized in Algorithm 1.

The benefits of applying the factorization to the max-norm are two-fold: 1) the memory cost is significantly reduced, from $O(pn)$ for the SDP to $O(d(p + n))$; 2) it facilitates the projected gradient algorithm, which is computationally efficient on large matrices (see Section 5). However, note that Problem (4.2) is non-convex. Fortunately, [Burer and Monteiro, 2005] proved that as long as we pick a sufficiently large value for $d$, any local minimum of Eq. (4.2) is a global optimum. In Section 5, we report the influence of $d$ on the performance. In Algorithm 1, the stopping criterion is a maximum number of iterations. One may also use convergence to a local minimum as the stopping criterion, as discussed in [Cai and Zhou, 2013].

4.1 Heuristic on λ

$\lambda$ is the only tunable parameter in our algorithm.
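To make the role of $\lambda$ concrete, the iteration of Algorithm 1 can be sketched in NumPy as below. This is a minimal sketch under stated assumptions, not the authors' implementation: $\Omega$ is passed as a 0/1 array `mask`, the starting point is random, and the backtracking constants `sigma` and `beta`, the initial step of 1.0 and the early exit when no step is accepted are all choices made for this example (the paper only states that the Armijo rule is used). The projection follows Eq. (4.4) as written, i.e. it rescales the whole matrix.

```python
import numpy as np

def row_norm_max(M):
    """||M||_{2,inf}: the largest l2 norm among the rows of M."""
    return np.sqrt((M ** 2).sum(axis=1)).max()

def project(M, lam):
    """Eq. (4.4): rescale M by lam / ||M||_{2,inf} when the constraint is violated."""
    r = row_norm_max(M)
    return M * (lam / r) if r > lam else M

def objective(Z, mask, U, V):
    """f(Z, U, V) = 0.5 * ||P_Omega(Z - U V^T)||_F^2, with Omega given as a 0/1 mask."""
    return 0.5 * np.sum((mask * (Z - U @ V.T)) ** 2)

def mmc(Z, mask, d=5, lam=1.2, max_iter=100, sigma=1e-4, beta=0.5, seed=0):
    """Projected gradient sketch of Algorithm 1; returns U, V and the objective history."""
    rng = np.random.default_rng(seed)
    p, n = Z.shape
    # Random feasible starting point (U_0, V_0); an assumption of this sketch.
    U = project(rng.standard_normal((p, d)) / np.sqrt(d), lam)
    V = project(rng.standard_normal((n, d)) / np.sqrt(d), lam)
    history = [objective(Z, mask, U, V)]
    for _ in range(max_iter):
        R = mask * (U @ V.T - Z)           # P_Omega(U V^T - Z)
        grad_U, grad_V = R @ V, R.T @ U    # Eq. (4.3)
        alpha, accepted = 1.0, False
        while alpha > 1e-12:               # backtracking with an Armijo-style test
            U_new = project(U - alpha * grad_U, lam)
            V_new = project(V - alpha * grad_V, lam)
            f_new = objective(Z, mask, U_new, V_new)
            # First-order decrease predicted along the projection arc.
            predicted = np.sum(grad_U * (U - U_new)) + np.sum(grad_V * (V - V_new))
            if history[-1] - sigma * max(predicted, 0.0) >= f_new:
                accepted = True
                break
            alpha *= beta
        if not accepted:                   # no acceptable step found: stop early
            break
        U, V = U_new, V_new
        history.append(f_new)
    return U, V, history
```

After a call such as `U, V, history = mmc(Z, mask, d=5, lam=1.2)`, the completed matrix is read off as `U @ V.T`. Both factors satisfy the row-norm constraints of (4.2) by construction, and the acceptance test ensures that the recorded objective values never increase.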
For our problem, note that the data consist of 1-bit measurements, i.e., $|Z_{ij}| = 1$ for $(i, j) \in \Omega$. Also note that we model $Z_{ij} = \mathbf{u}(i)\mathbf{v}(j)^\top$, so $|\mathbf{u}(i)\mathbf{v}(j)^\top| = 1$. By the Cauchy-Schwarz inequality and the constraints in (4.2), $|\mathbf{u}(i)\mathbf{v}(j)^\top| \le \|\mathbf{u}(i)\|_2 \|\mathbf{v}(j)\|_2 \le \lambda^2$, so we have $\lambda \ge 1$. However, if we choose a large $\lambda$, the estimate $|X_{ij}|$ may deviate from $1$. We find that $\lambda = 1.2$ leads to satisfactory improvement.

5 Experiments

In this section, we empirically evaluate the matrix completion performance of our method. We first introduce the datasets used. In the experimental settings, we present the compared methods and evaluation metrics. We then report encouraging results on two benchmark datasets, and also examine the influence of the matrix rank $d$.

5.1 Datasets

We conduct experiments on two benchmark datasets: Epinions and Slashdot. In these two datasets, the users are connected by explicit positive (trust) or negative (distrust) links (i.e., the 1-bit measurements in $Z$). The first dataset, Epinions, contains 119,217 nodes (users) and 841,000 edges (links), 85.0% of which are positive. The Slashdot dataset contains 82,144 users and 549,202 links, and 77.4% of the edges are labeled as positive. Table 1 summarizes the subsets used in our experiments. It is clear that the distribution of links is not uniform, since each user has his or her own preferences and friendship network. Following [Huang et al., 2013], we select the 2,000 users with the highest degrees from each dataset to form the observation matrix $Z$.

Table 1: Description of the two datasets

| Dataset | Epinions | Slashdot |
| --- | --- | --- |
| # of Users | 2,000 | 2,000 |
| # of Trust | 171,731 | 68,932 |
| # of Distrust | 18,916 | 20,032 |

5.2 Experimental Settings

Baselines. We choose four state-of-the-art methods as baselines: SVP [Jain et al., 2010], SVT [Cai et al., 2010], OPTSpace [Keshavan et al., 2010] and RRMC [Huang et al., 2013].
Since SVT and RRMC need a specified rank, we tune the rank for these methods and report the best performance as the final result.

Evaluation Metric. Let $T$ be the index set of all observed entries. We use two evaluation metrics to measure performance, mean absolute error (MAE) and root mean square error (RMSE), computed as follows:

$$\mathrm{MAE} = \frac{\sum_{(i,j) \in T \setminus \Omega} |X_{ij} - Z_{ij}|}{|T| - |\Omega|}, \qquad \mathrm{RMSE} = \sqrt{\frac{\sum_{(i,j) \in T \setminus \Omega} (X_{ij} - Z_{ij})^2}{|T| - |\Omega|}},$$

where $|T|$ denotes the cardinality of $T$.

Training and Testing. We randomly split the dataset for training and testing. In particular, the fraction of observed measurements $\Omega$ used for training ranges from 10% to 60%, in steps of 10%. For each split, we run all the algorithms for 20 trials, with the training data in each trial randomly sampled. We then report the mean and standard deviation of MAE and RMSE over the 20 trials.

Table 2: MAE Results on Epinions Dataset

| Observed entries (%) | SVT | OPTSpace | SVP | RRMC | MMC |
| --- | --- | --- | --- | --- | --- |
| 10 | 0.359 ± 0.004 | 0.289 ± 0.019 | 0.450 ± 0.008 | 0.576 ± 0.001 | 0.254 ± 0.003 |
| 20 | 0.394 ± 0.022 | 0.236 ± 0.005 | 0.294 ± 0.002 | 0.518 ± 0.002 | 0.212 ± 0.003 |
| 30 | 0.360 ± 0.057 | 0.219 ± 0.009 | 0.248 ± 0.001 | 0.460 ± 0.002 | 0.201 ± 0.002 |
| 40 | 0.410 ± 0.099 | 0.205 ± 0.008 | 0.224 ± 0.001 | 0.418 ± 0.002 | 0.193 ± 0.001 |
| 50 | 0.471 ± 0.129 | 0.197 ± 0.007 | 0.210 ± 0.001 | 0.386 ± 0.001 | 0.190 ± 0.002 |
| 60 | 0.476 ± 0.146 | 0.197 ± 0.003 | 0.199 ± 0.001 | 0.362 ± 0.002 | 0.206 ± 0.003 |

Table 3: RMSE Results on Epinions Dataset

| Observed entries (%) | SVT | OPTSpace | SVP | RRMC | MMC |
| --- | --- | --- | --- | --- | --- |
| 10 | 0.513 ± 0.010 | 0.530 ± 0.021 | 0.610 ± 0.010 | 0.650 ± 0.001 | 0.466 ± 0.004 |
| 20 | 0.559 ± 0.031 | 0.456 ± 0.005 | 0.459 ± 0.002 | 0.606 ± 0.002 | 0.406 ± 0.004 |
| 30 | 0.532 ± 0.082 | 0.422 ± 0.011 | 0.415 ± 0.002 | 0.563 ± 0.002 | 0.383 ± 0.002 |
| 40 | 0.620 ± 0.171 | 0.406 ± 0.015 | 0.394 ± 0.002 | 0.533 ± 0.002 | 0.371 ± 0.002 |
| 50 | 0.719 ± 0.225 | 0.398 ± 0.016 | 0.381 ± 0.001 | 0.509 ± 0.001 | 0.364 ± 0.001 |
| 60 | 0.728 ± 0.288 | 0.403 ± 0.009 | 0.371 ± 0.002 | 0.491 ± 0.002 | 0.365 ± 0.002 |

5.3 Experimental Results

We report detailed results in Tables 2 to 5. From the results in Tables 2 and 3, we observe that MMC outperforms the other methods in terms of both evaluation metrics on the Epinions dataset most of the time. In particular, when there are few observations (10%, which indicates a hard task), MMC obtains an RMSE of 0.466, much better than OPTSpace (0.530), SVP (0.610) and RRMC (0.650). The exception is the case with 60% observed entries, where OPTSpace obtains the smallest MAE of 0.197, although our algorithm is comparable at 0.206. In a nutshell, the gap between MMC and the baselines becomes larger as the fraction of observed entries decreases.

Similarly, our method achieves the lowest MAE and RMSE on the Slashdot dataset (see Tables 4 and 5). For instance, with 30% observed entries, MMC obtains an MAE below 0.4, much better than the compared methods: SVT (0.513), OPTSpace (0.427), SVP (0.501) and RRMC (0.686). In terms of RMSE, with 20% observed entries, the RMSE values of the other methods are all above 0.7 while our method reaches 0.679. In sum, our method is superior to the compared methods on two real-life datasets in terms of MAE and RMSE most of the time.

Having studied the effectiveness of our method, we now examine its computational efficiency in Table 6, which is important for practical applications. To test the time cost of the methods, we report the average running time on the Epinions dataset with 10% observed entries and on Slashdot with 20% observed entries. To illustrate the trade-off between accuracy and efficiency, we also report the MAE and RMSE. As we see, SVP is the most efficient method, with a running time of 0.84 seconds on Epinions compared to 1.92 seconds for ours.
On Slashdot, it also achieves the best performance in terms of efficiency. However, our method enjoys a significant improvement in MAE and RMSE compared to all baselines.

Figure 2: MAE and RMSE in terms of different rank d on the Epinions dataset. (Two panels, MAE versus epoch and RMSE versus epoch, each with curves for d = 1, 5, 50, 100, 300, 500.)

Table 4: MAE Results on Slashdot Dataset

| Observed entries (%) | SVT | OPTSpace | SVP | RRMC | MMC |
| --- | --- | --- | --- | --- | --- |
| 10 | 0.679 ± 0.008 | 0.554 ± 0.017 | 0.755 ± 0.005 | 0.715 ± 0.001 | 0.546 ± 0.008 |
| 20 | 0.562 ± 0.004 | 0.458 ± 0.008 | 0.582 ± 0.007 | 0.704 ± 0.001 | 0.437 ± 0.008 |
| 30 | 0.513 ± 0.030 | 0.427 ± 0.009 | 0.501 ± 0.003 | 0.686 ± 0.003 | 0.395 ± 0.006 |
| 40 | 0.506 ± 0.041 | 0.395 ± 0.009 | 0.460 ± 0.002 | 0.647 ± 0.006 | 0.380 ± 0.009 |
| 50 | 0.495 ± 0.060 | 0.376 ± 0.004 | 0.432 ± 0.002 | 0.609 ± 0.002 | 0.366 ± 0.003 |
| 60 | 0.520 ± 0.065 | 0.362 ± 0.011 | 0.413 ± 0.002 | 0.585 ± 0.002 | 0.350 ± 0.002 |

Table 5: RMSE Results on Slashdot Dataset

| Observed entries (%) | SVT | OPTSpace | SVP | RRMC | MMC |
| --- | --- | --- | --- | --- | --- |
| 10 | 0.788 ± 0.008 | 0.826 ± 0.021 | 0.873 ± 0.004 | 0.829 ± 0.001 | 0.774 ± 0.008 |
| 20 | 0.718 ± 0.020 | 0.704 ± 0.013 | 0.746 ± 0.007 | 0.821 ± 0.001 | 0.679 ± 0.009 |
| 30 | 0.680 ± 0.043 | 0.652 ± 0.006 | 0.680 ± 0.003 | 0.807 ± 0.002 | 0.633 ± 0.008 |
| 40 | 0.670 ± 0.056 | 0.620 ± 0.009 | 0.647 ± 0.003 | 0.778 ± 0.005 | 0.615 ± 0.011 |
| 50 | 0.642 ± 0.055 | 0.596 ± 0.009 | 0.624 ± 0.003 | 0.749 ± 0.002 | 0.581 ± 0.006 |
| 60 | 0.699 ± 0.084 | 0.577 ± 0.011 | 0.609 ± 0.002 | 0.730 ± 0.002 | 0.566 ± 0.002 |

Table 6: The trade-off between accuracy and efficiency.

| Dataset | Method | MAE | RMSE | Time (s) |
| --- | --- | --- | --- | --- |
| Epinions | SVT | 0.466 | 0.618 | 26.76 |
| Epinions | OPTSpace | 0.288 | 0.531 | 15.26 |
| Epinions | SVP | 0.450 | 0.610 | 0.84 |
| Epinions | RRMC | 0.576 | 0.651 | 43.11 |
| Epinions | MMC | 0.262 | 0.481 | 1.92 |
| Slashdot | SVT | 0.618 | 0.728 | 50.73 |
| Slashdot | OPTSpace | 0.458 | 0.705 | 9.23 |
| Slashdot | SVP | 0.582 | 0.746 | 0.83 |
| Slashdot | RRMC | 0.715 | 0.830 | 44.12 |
| Slashdot | MMC | 0.437 | 0.679 | 2.41 |
Also, our algorithm is roughly 4 to 22 times faster than the other three baselines (SVT, OPTSpace and RRMC). This implies that MMC achieves a good trade-off between accuracy and efficiency.

5.4 Examining the Influence of d

The non-convex reformulation (4.2) requires an explicit estimate $d$ of the rank of the true matrix. In this section, we investigate the influence of $d$, using the Epinions dataset as an example. The rank $d$ is chosen from {1, 5, 50, 100, 300, 500} and the results are plotted in Figure 2. We observe that the rank has little influence on the performance. This is possibly because the actual data has a low-rank structure (close to rank one). Moreover, from [Burer and Monteiro, 2005], we know that if $d$ is larger than the actual rank, any local minimum of Eq. (4.2) is also a global optimum.

6 Conclusion and Future Work

In this paper, we formulated social trust prediction in the matrix completion framework. In particular, due to the special structure of the social trust problem, i.e., the measurements are 1-bit and the observed entries are non-uniformly sampled, we presented a max-norm constrained 1-bit matrix completion (MMC) algorithm. Since SDP solvers are not scalable to large matrices, we utilized a non-convex reformulation of the max-norm, which facilitates an efficient projected gradient descent algorithm. We empirically examined our algorithm on two benchmark datasets. Compared to other state-of-the-art matrix completion formulations, MMC outperformed them in most settings, which agrees with recently developed theory on the max-norm. We also studied the trade-off between accuracy and efficiency and observed that MMC achieved superior accuracy while keeping comparable computational efficiency.

The max-norm has been studied for several years and in many applications, such as collaborative filtering, clustering and subspace recovery.
It has been shown empirically and theoretically to be superior to the popular nuclear norm. This work investigates the power of the max-norm for the social trust problem and demonstrates encouraging results. It would be interesting and promising to apply the max-norm as a convex surrogate in other practical problems such as face recognition and subspace clustering.

References

[Armijo and others, 1966] Larry Armijo et al. Minimization of functions having Lipschitz continuous first partial derivatives. Pacific Journal of Mathematics, 16(1):1–3, 1966.

[Bertsekas, 1999] Dimitri P Bertsekas. Nonlinear programming. 1999.

[Billsus and Pazzani, 1998] Daniel Billsus and Michael J Pazzani. Learning collaborative information filters. In ICML, volume 98, pages 46–54, 1998.

[Burer and Monteiro, 2005] Samuel Burer and Renato DC Monteiro. Local minima and convergence in low-rank semidefinite programming. Mathematical Programming, 103(3):427–444, 2005.

[Cai and Zhou, 2013] Tony Cai and Wen-Xin Zhou. A max-norm constrained minimization approach to 1-bit matrix completion. The Journal of Machine Learning Research, 14(1):3619–3647, 2013.

[Cai et al., 2010] Jian-Feng Cai, Emmanuel J Candès, and Zuowei Shen. A singular value thresholding algorithm for matrix completion. SIAM Journal on Optimization, 20(4):1956–1982, 2010.

[Candès and Recht, 2009] Emmanuel J Candès and Benjamin Recht. Exact matrix completion via convex optimization. Foundations of Computational Mathematics, 9(6):717–772, 2009.

[Candès and Tao, 2010] Emmanuel J Candès and Terence Tao. The power of convex relaxation: Near-optimal matrix completion. IEEE Transactions on Information Theory, 56(5):2053–2080, 2010.

[Chowdhury, 2010] Gobinda Chowdhury. Introduction to modern information retrieval. 2010.

[Getoor and Diehl, 2005] Lise Getoor and Christopher P Diehl. Link mining: a survey. ACM SIGKDD Explorations Newsletter, 7(2):3–12, 2005.

[Gross, 2011] David Gross. Recovering low-rank matrices from few coefficients in any basis. IEEE Transactions on Information Theory, 57(3):1548–1566, 2011.

[Hofmann and Puzicha, 1999] Thomas Hofmann and Jan Puzicha. Latent class models for collaborative filtering. In IJCAI, volume 99, pages 688–693, 1999.

[Huang et al., 2013] Jin Huang, Feiping Nie, Heng Huang, Yi-Cheng Tu, and Yu Lei. Social trust prediction using heterogeneous networks. ACM Transactions on Knowledge Discovery from Data, 7(4):17, 2013.

[Jain et al., 2010] Prateek Jain, Raghu Meka, and Inderjit S Dhillon. Guaranteed rank minimization via singular value projection. In NIPS, pages 937–945, 2010.

[Jeh and Widom, 2002] Glen Jeh and Jennifer Widom. SimRank: a measure of structural-context similarity. In ACM KDD, pages 538–543, 2002.

[Katz, 1953] Leo Katz. A new status index derived from sociometric analysis. Psychometrika, 18(1):39–43, 1953.

[Keshavan et al., 2010] Raghunandan H Keshavan, Andrea Montanari, and Sewoong Oh. Matrix completion from a few entries. IEEE Transactions on Information Theory, 56(6):2980–2998, 2010.

[Lee et al., 2010] Jason D Lee, Ben Recht, Nathan Srebro, Joel Tropp, and Ruslan R Salakhutdinov. Practical large-scale optimization for max-norm regularization. In NIPS, pages 1297–1305, 2010.

[Leskovec et al., 2010] Jure Leskovec, Daniel Huttenlocher, and Jon Kleinberg. Predicting positive and negative links in online social networks. In WWW, pages 641–650, 2010.

[Liben-Nowell and Kleinberg, 2007] David Liben-Nowell and Jon Kleinberg. The link-prediction problem for social networks. Journal of the American Society for Information Science and Technology, 58(7):1019–1031, 2007.

[Linial et al., 2007] Nati Linial, Shahar Mendelson, Gideon Schechtman, and Adi Shraibman. Complexity measures of sign matrices. Combinatorica, 27(4):439–463, 2007.

[Mnih and Salakhutdinov, 2007] Andriy Mnih and Ruslan Salakhutdinov. Probabilistic matrix factorization. In NIPS, pages 1257–1264, 2007.

[Newman, 2001] Mark EJ Newman. Clustering and preferential attachment in growing networks. Physical Review E, 64(2):025102, 2001.

[Recht et al., 2010] Benjamin Recht, Maryam Fazel, and Pablo A Parrilo. Guaranteed minimum-rank solutions of linear matrix equations via nuclear norm minimization. SIAM Review, 52(3):471–501, 2010.

[Salakhutdinov and Srebro, 2010] Ruslan Salakhutdinov and Nathan Srebro. Collaborative filtering in a non-uniform world: Learning with the weighted trace norm. tc (X), 10:2, 2010.

[Sarwar et al., 2001] Badrul Sarwar, George Karypis, Joseph Konstan, and John Riedl. Item-based collaborative filtering recommendation algorithms. In WWW, pages 285–295, 2001.

[Shen et al., 2014] Jie Shen, Huan Xu, and Ping Li. Online optimization for max-norm regularization. In NIPS, pages 1718–1726, 2014.

[Srebro and Shraibman, 2005] Nathan Srebro and Adi Shraibman. Rank, trace-norm and max-norm. In Learning Theory, pages 545–560. 2005.

[Srebro et al., 2003] Nathan Srebro, Tommi Jaakkola, et al. Weighted low-rank approximations. In ICML, volume 3, pages 720–727, 2003.

[Srebro et al., 2004] Nathan Srebro, Jason DM Rennie, and Tommi Jaakkola. Maximum-margin matrix factorization. In NIPS, volume 17, pages 1329–1336, 2004.

[Wang and Xu, 2012] Yu-Xiang Wang and Huan Xu. Stability of matrix factorization for collaborative filtering. ICML, 2012.

[Yu et al., 2009] Kai Yu, John Lafferty, Shenghuo Zhu, and Yihong Gong. Large-scale collaborative prediction using a nonparametric random effects model. In ICML, pages 1185–1192, 2009.
