Bayesian Neural Networks for Genetic Association Studies of Complex Disease

Discovering causal genetic variants from large genetic association studies poses many difficult challenges. Assessing which genetic markers are involved in determining trait status is a computationally demanding task, especially in the presence of ge…

Authors: Andrew L. Beam, Alison Motsinger-Reif, Jon Doyle

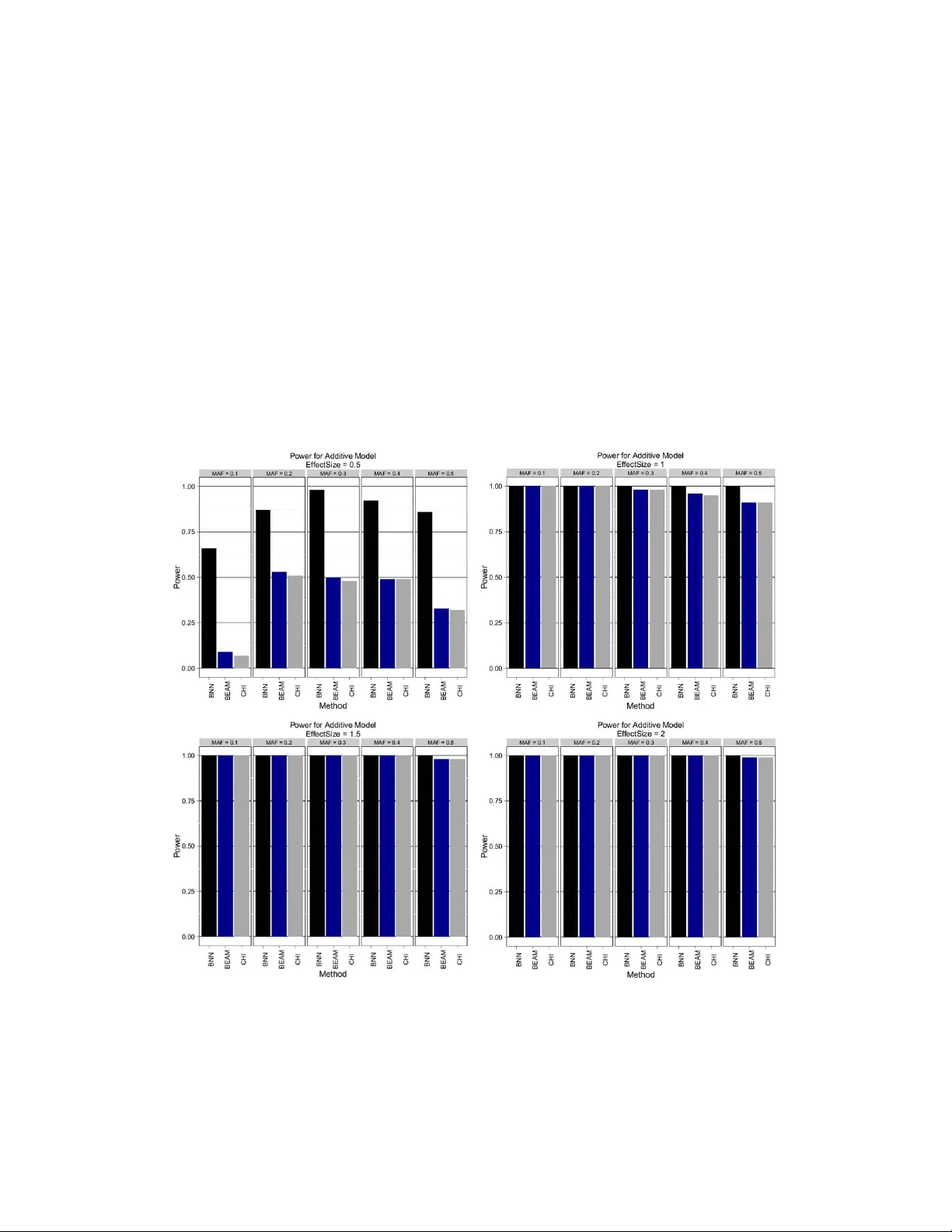

Bayesian Neural Netw orks f or Genetic Association Studies of Complex Disease Andrew L. Beam ∗ Bioinformatics Research Center North Carolina State Univ ersity Raleigh, NC albeam@ncsu.edu Alison Motsinger -Reif Bioinformatics Research Center Department of Statistics North Carolina State Univ ersity Raleigh, NC alison motsinger@ncsu.edu Jon Doyle Department of Computer Science North Carolina State Univ ersity Raleigh, NC Jon Doyle@ncsu.edu Abstract Background Discov ering causal genetic v ariants from lar ge genetic association studies poses many difficult challenges. Assessing which genetic markers are in v olved in deter - mining trait status is a computationally demanding task, especially in the presence of gene-gene interactions. Results A non-parametric Bayesian approach in the form of a Bayesian neural network is proposed for use in analyzing genetic association studies. Demonstrations on synthetic and real data re veal the y are able to ef ficiently and accurately determine which variants are in volv ed in determining case-control status. Using graphics processing units (GPUs) the time needed to build these models is decreased by sev eral orders of magnitude. In comparison with commonly used approaches for detecting interactions, Bayesian neural netw orks perform very well across a broad spectrum of possible genetic relationships. Conclusions The proposed framework is sho wn to be powerful at detecting causal SNPs while having the computational ef ficienc y needed to handle large datasets. 1 Background The ability to rapidly collect and genotype large numbers of genetic variants has outpaced the abil- ity to interpret such data, lea ving the genetic etiology for many diseases incomplete. The presence of gene-gene interactions, or epistasis, is believ ed to be a critical piece of this missing heritability [Manolio et al., 2009]. This has in turn spurred de v elopment on adv anced computational approaches to account for these interactions, with varying degrees of success [Motsinger-Reif et al., 2008b, Motsinger-Reif et al., 2008a, Koo et al., 2013]. The main computational challenge comes from the ∗ Corresponding author . 1 vast number of markers that are present in a typical association study . This problem is exacer - bated when interactions between tw o or more markers must be considered. F or example, gi ven an experiment that genotypes 1,000 markers, examining all possible interactions between two of the markers in volv es consideration of nearly half a million combinations. This situation becomes ex- ponentially worse as higher order interactions are considered. Modern genome-wide association studies (GW ASs) routinely consider 1-2 million single nucleotide polymorphisms (SNPs), which would require e xamining half a trillion potential interactions. As whole genome sequencing (WGS) methods become commonplace, methods that cannot cope with lar ge data sets will be of little utility . Data on this scale will require approaches that can find interactions without ha ving to enumerate all possible combinations. As genotypic technology advances, datasets now routinely include millions of SNPs. Sev eral distinct types of methods have emer ged that attempt to address this challenge. Perhaps one of the most popular approaches from the last decade has been Multifactor Dimensionality Reduction (MDR) [Moore et al., 2006, Hahn et al., 2003], and extensions of the method. MDR is a combina- torial search that considers all possible interactions of a gi ven order and selects the best model via cross validation. Because MDR is an exhausti ve search, it suffers from the previously discussed scalability issue, though recent work using graphics processing units has attempted to lessen this deficit [Greene et al., 2010]. MDR is reliant upon a permutation testing strategy to assess statistical significance for each marker , so the computational b urden becomes prohibitiv e for large datasets. Permutation testing computes a p-v alue for-a statistic of interest (such as an accurac y measure from MDR) by randomly permuting the class labels and calculating the statistic on the permuted dataset. This procedure is repeated many times to compute a null distrib ution for the statistic of interest. The relativ e percentage of instances in the permuted null distribution that are less than or equal to the actual statistic from the unpermuted data is taken as the desired one-sided p-value. Unfortunately , this can be e xtremely e xpensiv e for large datasets when many hypotheses are simultaneously tested, leading to a lar ge multiple testing scenario. T o get the required resolution for a Bonferroni corrected p-value of 0.05 when considering a set of 1,000 SNPs, one must perform 20,000 permutations. This makes permutation testings infeasible for e ven moderately sized datasets. Another popular approach is Bayesian Epistasis Association Mapping (BEAM) [Zhang and Liu, 2007]. BEAM partitions markers into groups representing indi vidual (i.e. marginal) genetic effects, interactions, and a third group resenting background markers that are unin volved with the trait. BEAM employs a stochastic Markov Chain Monte Carlo (MCMC) search technique to probabilistically assign mark ers to each group and uses a no vel B-statistic based on the MCMC simulation to assign statistical significance to each marker . This allows BEAM to assign statistical significance without the need to perform a costly permutation test. This method has been demonstrated successfully on data sets with half a million markers. Howe ver , the recommended amount of MCMC iterations needed is quadratic in the number of SNPs considered [Zhang and Liu, 2007], possibly limiting its effecti veness for larger datasets. Many popular machine learning algorithms ha ve also been adopted for use in analyzing as- sociation studies. Notable examples are decision trees (both bagged, i.e. random forests, [Lunetta et al., 2004, Diaz-Uriarte and de Andres, 2006] and boosted [Li et al., 2011] support vec- tor machines (SVM) [Guyon et al., 2002], Bayesian networks [Jiang et al., 2011], and neural net- works [Motsinger-Reif et al., 2008b]. In particular , tree-based methods such as random forests and boosted decision trees have been found to perform well in several pre vious association stud- ies [Lunetta et al., 2004, Li et al., 2011, Diaz-Uriarte and de Andres, 2006]. Machine learning ap- proaches are appealing because they assume very little a priori about the relationship between genotype and phenotype, with most methods being flexible enough to model complex relationships accurately . Howe ver , this generality is something of a double-edged sw ord as man y machine learn- ing algorithms function as black boxes, providing in vestigators with little information on which variables may be most important. T ypically it is the goal of an association study to determine which variables are most important, so a black box may be of little use. Some approaches hav e easy adap- tations that allow them to provide such measures. Both types of tree based methods (bagged and boosted) can provide measures of relati ve v ariable importance [Breiman, 2001, Friedman, 2001], but these indicators lack measures of uncertainty , so they are unable to determine how likely a v ariable’ s importance measure is to occur by chance without resorting to permutation testing. In this study , we propose the use of Bayesian neural networks (BNNs) for association studies to directly address some the issues with current epistasis modeling. While BNNs have been previ- 2 ously dev eloped and applied for other tasks [Lisboa et al., 2003, Baesens et al., 2002, Neal, 1995, Neal, 1992] they have yet to see significant usage in bioinformatics and computational biology . Like most complex Bayesian models, BNNs require stochastic sampling techniques that draw samples from the posterior distrib ution, because direct or deterministic calculation of the pos- terior distribution is often intractable. These posterior samples are then used to make infer- ences about the parameters of the model or used to mak e predictions for ne w data. Standard MCMC methods that employ a random walk such as the Metropolis-Hastings (R W -MH) algo- rithm [Metropolis et al., 2004, Hastings, 1970] (which is the algorithm that forms the core of BEAM [Zhang and Liu, 2007]) e xplores the posterior distribution very slo wly when the number of predic- tors is large. If d is the number of parameters in a model, the number of iterations needed to obtain a nearly independent sample is O ( d 2 ) [Neal, 2011b] for R W -MH. This makes the R W -MH algorithm unsuitable for neural network models in high-dimensions, so the Hamiltonian Monte Carlo (HMC) algorithm is instead used to generate samples from the posterior . HMC has more fa vorable scaling properties, as the number of iterations needed is only O ( d 5 / 4 ) [Neal, 2011b]. HMC achiev es this fa- vorable scaling by using information about the gradient of the log-posterior distrib ution to guide the simulation to regions of high posterior probability . Readers familiar with standard neural network models will notice an inherent similarity between Bayesian neural networks sampled using HMC and traditional feed-forw ard neural netw orks that are trained using the well known back-propagation algorithm [Rumelhart et al., 1988], as both take steps in the steepest direction using gradient based information. Though HMC will in general explore the posterior distribution in a more efficient manner than R W -MH, the ev aluation of the gradient can v ery e xpensiv e for large data sets. Recent work has shown that this dra wback can be lessened through the use of parallel computing techniques [Beam et al., 2014]. The BNN framework outlined here has several features designed to address many of the challenges inherent in analyzing large datasets from genetic association studies. These adv antages are outlined below . • Quantification of variable influence with uncertainty measures. This allo ws v ariable influ- ence to be assessed relativ e to a null or background model using a nov el Bayesian testing framew ork. This av oids reliance on a permutation testing strategy . • Automatic modeling of arbitrarily complex genetic relationships. Interactions are ac- counted for without having to examine all possible combinations. This is achiev ed from the underlying neural network model. • An efficient sampling algorithm. HMC scales much better than other MCMC methods, such as the R W -MH algorithm, in high-dimensions. • Computational expediency through the use of GPUs. The time needed to build the model is greatly reduced using the massiv e parallel processing offered by GPUs. W e of fer evidence for these claims using sev eral simulated scenarios and a demonstration on a real GW AS dataset. In addition, we compare the proposed approach to se veral popular methods so that relativ e performance can be assessed. 2 Methods Neural networks are a set of popular methods in machine learning that have enjoyed a flurry of renewed activity spurred on by advances in training so-called deep networks [Hinton et al., 2012, Bengio, 2009]. The term neural network can refer to a v ery large class of modeling techniques, but to be clear we use the term here to refer to multilayer feed-forward perceptions (MLPs). In the most basic sense, neural nets represent a class of non-parametric methods for regression and classifica- tion. They are non-parametric in the sense that they are capable of modeling any smooth function on a compact domain to an arbitrary degree of precision without the need to specify the exact relation- ship between input and output. This is often succinctly stated as neural nets are universal function appr oximators [Hornik et al., 1989]. This property makes them appealing for many tasks, including modeling the relationship between genotype and phenotype, because a suf ficiently complex network will be capable of automatically representing the underlying function. Some draw backs of classical neural nets are natural consequences of their strengths. Due to their flexibility , neural nets are highly prone to ov er-fitting to data used to train them. Over-fitting occurs 3 when the network starts to represent the training data e xactly , including noise that may be present, which reduces its ability to generalize to ne w data. Many methods exist to address this issue, but popular methods such as weight decay are well known to be approximations to a fully Bayesian procedure [Neal, 1995, Williams, 1995]. Another issue with standard neural nets is they are often regarded as black boxes in that the y do not provide much information beyond a predicted value for each input. Little intuition or knowledge can be gleaned as to which inputs are most important in determining the response, so nothing coherent can be said as to what dri ves the networks predic- tions. Discussions of the advantages and disadv antages of neural nets for gene mapping ha ve been revie wed in [Motsinger-Reif and Ritchie, 2008]. First we describe the base neural network model, and then describe how this can be incorporated into a Bayesian frame work. The network is defined in terms of a directed, acyclic graph (D AG) where inputs are feed into a layer of hidden units. The output of the hidden units are then fed in turn to the output layer which transforms a linear combination of the hidden unit outputs into a probability of class membership. Specifically consider a network with p input v ariables, h hidden units, and 2 output units to be used to predict whether an observ ation belongs to class 1 or class 2. Let x i = < x i 1 , , x ip > T be the input vector of p v ariables and y i = < y i 1 , y i 2 > be the response vector , where y i 1 = 1 if observation i belongs to class 1 and 0 if not, with y i 2 is defined in the same way for class 2. Hidden unit k first takes a linear combination of each input vector followed by a nonlinear transformation, using the following form: h k ( x i ) = φ b k + p X j =1 w kj ∗ x ij (1) where φ ( · ) is a nonlinear function. For the purposes of this study , consider the logistic transforma- tion, giv en as: φ ( z ) = 1 1 + e − z Sev eral other activ ation functions such as the hyperbolic tangent, linear rectifier , and the radial basis/Gaussian functions are often used in practice. Each output unit takes a linear combination of each h k followed by another nonlinear transformation. Let f 1 ( x i ) be the output unit that is associated with class 1: f 1 ( x i ) = ψ B 1 + h X k =1 W k 1 ∗ h k ( x i ) ! (2) Note we have used upper case letters to denote parameters in the output layer and lowercase letters to indicate parameters belonging to the hidden layer . The ψ ( · ) function is the softmax transformation of element z 1 from the vector z = < z 1 , . . . , z n > : ψ ( z 1 ) = exp( z 1 ) n P i =1 exp( z i ) In this representation, f 1 ( x i ) represents the estimated conditional probability that y i belongs to class 1, giv en the input vector x i . A similar definition is made for output unit 2, f 2 ( x i ) . Note that for the case of only 2 classes, f 2 ( x i ) = 1 − f 1 ( x i ) because the softmax transformation forces the outputs to sum to 1. Having described the formulation for standard neural networks we next describe how this can be extended using the Bayesian formulation. Bayesian methods define a probability distribution over possible parameter values, and thus over possible neural networks. T o simplify notation, let θ = { B , W, β , w } represent all of the network weights and biases sho wn in equations 1, 2. The posterior distribution for θ gi ven the data x i and y i , is giv en according to Bayes rule: p ( θ | x i , y i ) = L ( θ | x i , y i ) · π ( θ ) m ( x ) (3) 4 where m ( x ) = R L ( θ | x i , y i ) · π ( θ ) dθ is the marinal density of the data. L ( θ | , x i , y i ) is the likelihood of θ given then data and π ( θ ) is the prior distribution for the network parameters. Howe ver , in practice we only need to be able to ev aluate the numerator of (3) up to a constant because we will be relying on MCMC sampling techniques that draw from the correct posterior without having to ev aluate m ( x ) , which may be intractable in high dimensions. Often it is better to work with the log-likelihood l ( θ | x i , y i ) = log ( L ( θ | x i , y i )) , because the raw likelihood can suffer from numerical overflo w or underflow for large problems. In this study we assume the log-likelihood for a neural network with 2 output units is binomial: l ( θ | x i , y i ) = y i 1 · log ( f 1 ( x i )) + y i 2 · log (1 − f 1 ( x i )) (4) Next ev ery parameter in the model must be giv en a prior distribution. The prior distribution cod- ifies beliefs about the values each parameter is likely to take before seeing the data. This type of formulation is extremely useful in high-dimensional settings such as genomics, because it enables statements such as ‘most of the variables are likely to be unrelated to this response’ to be quanti- fied and incorporated into the prior . In this study , we adopt a specific prior structure known as the Automatic Relevance Determination (ARD) prior . This prior was originally introduced in some of the foundational work on Bayesian neural nets [Neal, 1995, Neal, 1998] and later used in SVMs for cancer classification [Li et al., 2002]. The ARD prior groups network weights in the hidden layer together in a meaningful and inter- pretable way . All of the weights in the hidden layer that are associated with the same input variable are considered part of the same group. Each weight in a group is giv en a normal prior distribu- tion with mean zero and a common v ariance parameter . This shared group-le vel v ariance parameter controls how large the weights in a group are allowed to become and performs shrinkage by pool- ing information from sev eral hidden units, which helps to prev ent overfitting [Neal, 1998]. Each of the group-level variance parameters is itself giv en a prior distribution, typically an In verse-Gamma distribution with some shape parameter α 0 and some scale parameter β 0 . These parameters often referred to as hyper parameters, and can themselves be subject to another lev el of prior distrib utions, but for the purposes of this study , we will leave them fixed as user specified values. Specifically , for a network with h hidden units, the weights in the hidden layer for input variable j will have the following prior specification: w j 1 , . . . , w j p ∼ N (0 , σ 2 j ) σ 2 j ∼ I G ( α 0 , β 0 ) This structure allows the network to automatically determine which of the inputs are most relev ant. If variable j is not very useful in determining whether an observation is a case or a control, then the posterior distribution for σ 2 j will be concentrated around small values. Like wise, if variable j is useful in determining the response status, most of the posterior mass for σ 2 j will be centered on larger v alues. 2.1 Hamiltonian Monte Carlo (HMC) f or Neural Networks Here we briefly give an overvie w of Hamiltonian Monte Carlo (HMC) for neural networks, but please see [Neal, 1995, Neal, 1992, Neal, 2011a] for a thorough treatment. HMC is one of many Markov Chain Monte Carlo (MCMC) methods used to draw samples from probability distributions that may not have analytic closed forms. HMC is well suited for high-dimensional models, such as neural nets, because it uses information about the gradient of the log-posterior to guide the sampler to regions of high posterior probability . For neural netw orks, we adopt the two-phase sampling scheme of Neal used in [Neal, 1995]. In the first phase, we update the values of the variance parameters using a Gibbs update, conditional on the current values of the networks weights. In the next phase, we leave the variance parameters fixed and update the network weights using HMC. W e repeat this procedure of Gibbs-coupled HMC updates until we hav e acquired the desired number of posterior samples. The higher level variance parameters, including those in the ARD prior , have simple closed forms be- cause the Inv erse-Gamma distrib ution is a conditionally conjugate prior distribution for the v ariance parameter of a Normal distribution. T o obtain a new value for a variance parameter , conditional on 5 the values of the weights a parameter controls, one makes a draw from the following In verse-Gamma distribution: σ 2 new ∼ I G α 0 + n w 2 , β 0 + n w X i =1 w 2 i 2 ! (5) where each w i is a weight controlled by this v ariance parameter , n w is the number of weights in the group, and α 0 , β 0 are the shape and scale parameters respectiv ely of the prior distribution. HMC then proceeds by performing L number of leap-frog updates for the weights, given the v al- ues of the variance parameters. The algorithm introduces a fictious momentum variable for every parameter in the network that will be updated by simulating Hamiltonian dynamics on the surface of the log-posterior . Since HMC was orginally created in statistical physics it is often presented in terms of energy potentials which is equiv alent to an exponentiation of the negati ve log-posterior , but we will describe the algorithm directly in terms of the log-posterior, which is more natural for our purposes. The full algorithm for sampling the posterior of all parameters (network weights and variance parameters) is sho wn below . HMC Algorithm for Neural Networks Input: X : matrix of predictors Y : matrix of class membership P : log-posterior density to be sampled { ε , L , N s , α }: HMC P arameters Output: N s P osterior Samples of Model P arameters BEGIN ALGORITHM: θ 0 = InitializeNetw orkP arameters() σ 0 2 = InitializeV arianceP arameters() m prev ~ N(0,1) FOR i in 1 to N s DO: σ i 2 = GibbsUpdate(θ i-1 ) γ 0 = θ i-1 m 0 = InitializeMomentum(α, m prev ) FOR t in 1 to L DO: END m prev = m t IF Accept(γ t ): θ i = γ t ELSE: θ i = θ i-1 END ݉ ∗ = ݉ ௧ିଵ + ߳ 2 ߘ ఊ ܲ ߛ ௧ିଵ X, Y; ߪ ଶ ߛ ௧ = ߛ ௧ିଵ + ߳ ∗ ݉ ∗ ݉ ௧ = ݉ ∗ + ߳ 2 ߘ ఊ ܲ ߛ ௧ ܺ , ܻ; ߪ ଶ Figure 1: Algorithm for HMC-based posterior sampling for the neural network model. A fe w details in the HMC algorithm as sho wn need further explanation. First the momentum variables (m) are refreshed after ev ery sequence of L leap-frog updates, shown in the algorithm 6 as InializeMomentum( α ) . In the most simple formulation each momentum component is a independent draw from a Normal distribution, with mean 0 and standard deviation of 1. How- ev er, this can lead to wasted computation because the sampler may start out in bad direction by chance, requiring more leap-frop updates until the sampler is heading in a useful direction re- sulting in random-walk like behavior . T o combat this, we use the persistent momentum refreshes [Nabney , 2002, Neal, 1995] which initializes the momentum using a weighted combination of the final momentum v alue of the pre vious leap-frog update and a draw of standard normal random v ari- able. Using the notation of the algorithm, this is shown belo w: m 0 = α ∗ m prev + p 1 − α 2 ∗ ζ where ζ ∼ N (0 , 1) . If the proposal is rejected the momentum must be negated. This must be done to ensure that the canonical distribution is left intact [Nabney , 2002]. This formulation reduces the number of leap-frog updates (L) needed to reach a distant point by suppressing random-walk behavior while leaving the correct target distribution of the Markov chain intact. [Nabney , 2002, Neal, 1995, Neal, 2011a]. The Accept ( γ t ) function returns true if the new proposal, γ t , is accepted according to a modified Metropolis-Hastings acceptance probability . Accept ( γ t ) returns true with the following probability: ¯ α = min 1 , P ( γ t | X, Y ; σ 2 i ) − 1 2 m T t m t P ( γ t − 1 | X, Y ; σ 2 i ) − 1 2 m T t − 1 m t − 1 ! Howe ver , the posterior distribution is often very ‘bumpy’ with many posterior modes [Neal, 1995]. This property may be exacerbated in high dimensions, so becoming stuck in one mode for e xtended periods of time is a great concern. T o alle viate this we modify the acceptance probability , ¯ α , to correspond to a flattened version of the posterior whose acceptance probability is giv en as α ∗ = ¯ α · T , for T > 1 , which is equiv alent to sampling from P ( θ | X , Y ; σ 2 t ) 1 T . While its true that we are no longer sampling from the exact posterior P ( θ | X , Y ; σ 2 t ) , under mild regularity conditions the posterior modes of the correct distribution remain intact [Andrieu et al., 2003]. Since none of the parameters hav e biological interpretations modifying the posterior in this way of little concern, if the full procedure is capable of maintaining a fa vorable discriminatory ability . W e find the trade-of f between ease of sampling across a wide-range possible scenarios and exactness of the posterior to be acceptable. 2.2 HMC Using Graphics Pr ocessing Units (GPUs) Previous work has shown how the gradient and log-posterior ev aluations needed by HMC can be sped-up by as much as 150x for large problems using Graphics Processing Units (GPUs) [Beam et al., 2014]. W e adopt that framework here and express the gradient calculations as matrix- vector operations or element-wise operations. Similarly , ev aluation of the log-posterior can be ex- pressed in terms of linear operators and element-wise operations. Using GPUs for these opera- tions is well known in the neural network literature [Bergstra et al., 2010, Lopes and Ribeiro, 2009, Oh and Jung, 2004] as the gradient of the log-posterior corresponds roughly to the well-known back- propagation algorithm and e valuation of the log-posterior corresponds to the feed-forward operation in standard neural netw orks. Howe ver , to our kno wledge this study represents the first GPU-enabled implementation of Bayesian neural networks. W ithout GPU computing, it is likely that the com- putational burden imposed by large datasets would be too great for the Bayesian neural network framew ork to be feasible. All of methods discussed in this study are implemented in the Python programming language. All GPU operations were conducted using the Nvidia CUD A-GPU [Nvidia, 2008] programming en- vironment and accessed from Python using the PyCuda library [Klockner et al., 2012]. Source code containing the Bayesian neural network package is available at https://github.com/ beamandrew/BNN . 2.3 Bayesian T est of Significance f or ARD Parameters Giv en the ARD prior a natural question to ask is how large do values of σ 2 j need to be for input j to be considered relev ant compared to a variable that is completely unrelated. This question can be framed in terms of a Bayesian hypothesis test. In this framew ork we will assume that under 7 the null hypothesis a variable is completely irrele vant in determining the status of the response. If this were a simple linear model, this would be equiv alent to saying the regression coef ficient for this variable has a posterior mean of zero. In the neural network model the ARD parameters that determine ho w rele vant each input is are strictly positi ve, so we need a baseline or null model for the ARD parameters in order to determine if we can reject this null hypothesis of irrelev ance. In order to construct and test this hypothesis, we make a simplifying assumption that weights for unrelated variables have a normal distrib ution with mean 0 and v ariance σ 2 null i.e. w kj ∼ N (0 , σ 2 null ) . Due to the complex statistical model, the true posterior distrib utions for the weights under the null may not be exactly normal, b ut this approximation will be useful in simplifying the calculations. Additionally since, the prior for each weight is normal, this approximation will most likely not be too far from true posterior form under the stated null hypothesis. Since σ 2 null represents the null ARD parameter associated with a variable of no effect, we wish to test whether a v ariable of interest is significantly greater than this null v alue. W e use the phrases null and significance here because of their familiar statistical connotations, but they should not be confused with the p-v alue based frequentist hypothesis testing procedure, as we are operating within a fully Bayesian frame work. Our goal becomes testing whether the mean, µ j of the posterior distribution for the ARD parameter , σ 2 j , is greater than the mean of the null, µ null , for the null ARD parameter σ 2 null . Specifically we wish to test the following null hypothesis: H 0 : µ null = µ j against the one-sided alternati ve: H a : µ null < µ j T o test this, we need to know the closed form of µ null . Making use of the iterative two-stage sampling scheme, we will deriv e this form by induction. W e will also make use of se veral well- known facts of random v ariables. Firstly , if a random variable X has an in verse-gamma distribution, i.e. X ∼ I G ( α, β ) , then the mean or expected value of X , E [ X ] , is giv en by β α − 1 . Next, if the sequence of random variables X 1 . . . X n are each independently and identically distributed as N (0 , σ 2 ) , then n P i =1 X i σ 2 = < X 1 σ , . . . , X n σ > T < X 1 σ , . . . , X n σ > ∼ χ 2 n , i.e. a chi-squared random variable with n degrees of freedom. This sum has an expected v alue of n, from the definition of a chi-squared random variable. This implies the conditional expected v alue E [ < X 1 , , X n > T < X 1 , , X n > | σ ] = σ 2 ∗ n . Using these basic facts we will show that under the null, the two-stage sampling scheme leav es expected v alue of the ARD parameter inv ariant, i.e. µ null = µ prior . For a network with h hidden units, let w j = < w 1 j , . . . , w hj > be a vector containing all of the weights associated with input j , where each component of w j is initially distributed according to the prior , N (0 , σ 2 j ) and σ 2 j ∼ I G ( α 0 , β 0 ) . The mean, for σ j at the start of the simulation is µ 0 = β 0 α 0 − 1 . W e begin the simulation at iteration i=1 and perform a Gibbs update of σ 2 j . The Gibbs update for the shape parameter , α 1 = α 0 + n w / 2 , is iteration independent and will remain fix ed for the entirety of the simulation. Howe ver , the Gibbs update for the scale parameter, β 1 = β 0 + ( w T j w j ) / 2 , depends upon the current values of the weights, and thus will take on a random value at each iteration. Howe ver , we can compute the expected value for β 1 as: E [ β 1 ] = E " β 0 + w T j w j 2 # = β 0 + 1 2 E [ w T j w j ] = β 0 + 1 2 ( h · E [ σ 2 0 ]) = β 0 + h 2 · β 0 α 0 − 1 = β 0 + h 2 · µ 0 8 Thus, the expected v alue of β 1 after the first Gibbs update is β 0 + h 2 · µ 0 . Note that this expectation is independent of simulation iteration, so this result will hold for all β 1 , β 2 , · · · , β . Next, we use this fact to compute the expected v alue of the ARD parameter , E [ σ 2 1 ] : E [ σ 2 1 ] = E β 1 α 0 + h/ 2 − 1 = E [ β 1 ] α 0 + h/ 2 − 1 = β 0 + h/ 2 · µ 0 α 0 + h/ 2 − 1 = β 0 α 0 − 1 + h/ 2 + h/ 2 · µ 0 α 0 − 1 + h/ 2 = 1 α 0 − 1 1 α 0 − 1 · β 0 α 0 − 1 + h/ 2 + h/ 2 · µ 0 α 0 − 1 + h/ 2 = µ 0 1 + h/ 2 · 1 α 0 − 1 + h/ 2 · µ 0 α 0 − 1 + h/ 2 = µ 0 1 1 + h/ 2 · 1 α 0 − 1 + h/ 2 α 0 − 1 + h/ 2 ! = µ 0 α 0 − 1 α 0 − 1 + h/ 2 + h/ 2 α 0 − 1 + h/ 2 = µ 0 α 0 − 1 + h/ 2 α 0 − 1 + h/ 2 = µ 0 Thus, the Gibbs update of the ARD parameter does not change the expected value under the null, since we defined E [ σ 2 0 ] = µ 0 . This establishes the base case, and no w we sho w the induction step. Giv en E [ β t +1 ] = E [ β t ] = β 0 + h 2 · µ 0 and E [ σ t ] = µ 0 then: E [ σ t +1 ] = E β t +1 α 0 + h/ 2 − 1 = E [ β t +1 ] α 0 + h/ 2 − 1 = β 0 + h/ 2 · µ 0 α 0 + h/ 2 − 1 = µ 0 where the simplification between lines 3 and 4 proceeds as before. This concludes the proof. 3 Results 3.1 Existing Methods Used f or Comparison W e selected sev eral methods to serve as baselines for ev aluation of the BNNs performance. As previously mentioned BEAM and MDR are widely used methods and so were included in our e val- uation. W e used a custom compiled 64-bit version of BEAM using the source provided on the website [Zhang, 2014] of the authors of [Zhang and Liu, 2007]. The jav a-based MDR package was downloaded from the MDR source-for ge repository (http://sourceforge.net/projects/mdr/) and called from within a Python script. T o ev aluate the effecti veness of tree-based methods, we used an ap- proach nearly identical to that in [Li et al., 2011], which was based on boosted decision trees. The boosted decision tree model provides measures of relativ e influence for each variable that indicate 9 how important a given variable is, relati ve to the others in the model. T o fit the boosted tree model we used the gbm package in R. Finally , we also included the standard 2 degrees-of-freedom chi-square test of marginal ef fects. As discussed, some approaches such as MDR and GBM require a permutation testing strategy to assess statistical significance. This makes assessing their performance on large datasets difficult, due to the amount time required to perform the permutation test. During our pilot inv estigations on a dataset containing 1,000 SNPs, each individual run of MDR was found to take roughly 1 minute to complete. The time needed to complete the required 20,000 permutations would be roughly 2 weeks. If we wish to ev aluate a methods effecti veness on hundreds or thousands of such datasets, this run time becomes prohibitive. As such, we divided our primary analysis into two sections. In the first section, we e valuated methods that do not rely on permutation testing on datasets containing 1,000 SNPs each. Howe ver , since we wish to compare the results of the BNN to that of MDR and GBM, we performed a second set of analyses on smaller datasets that only contained 50 SNPs each, for which permutation testing is feasible. This two-pronged strategy allowed us to ev aluate a wide range of popular approaches in a reasonable amount of time, while serving to underscore the need for methods that do not rely on permutation testing. 3.2 Parametric Models of Multi-Locus Relationships In this section we performed an analysis of three biallelic models of genotypic relationships. These models hav e been used previously [Zhang and Liu, 2007, Li et al., 2011] and are meant to reflect theoretical and empirical e vidence for genetic relationships in volving multiple loci [Li and Reich, 2000]. T ables 1, 2, and 3 contain the risk relativ e of disease for each genotype com- bination, where a capital and lower case letters represent the major and minor alleles, respecti vely . T able 1: Additiv e Risk Model Genotype AA Aa aa BB η η (1 + θ ) η (1 + 2 θ ) Bb η (1 + θ ) η (1 + 2 θ ) η (1 + 3 θ ) bb η (1 + 2 θ ) η (1 + 3 θ ) η (1 + 4 θ ) T able 2: Threshold Risk Model Genotype AA Aa aa BB η η η Bb η η (1 + θ ) η (1 + θ ) bb η η (1 + θ ) η (1 + θ ) T able 3: Epistatic Risk Model Genotype AA Aa aa BB η η η (1 + 4 θ ) Bb η η (1 + 2 θ ) η bb η (1 + 4 θ ) η η The symbols η and θ in the tables represent the baseline risk and effect size, respectiv ely . W e simulated genotypes for the disease SNPs for a range of minor allele frequencies (MAFs) and simulated the disease status for 1,000 cases and 1,000 controls using the risks giv en in T ables 1, 2, and 3. W e embedded the causal SNPs in a background of 998 non-causal SNPs, for a total of 1,000 SNPs to be considered. For each combination of effect size, θ ∈ { 0 . 5 , 1 . 0 , 1 . 5 , 2 . 0 } , 10 MAF ∈ { 0 . 1 , 0 . 2 , 0 . 3 , 0 . 4 , 0 . 5 } , and model type (Additive, Threshold and Epistasis) we generated 100 datasets. This yielded a total of 6,000 datasets for ev aluation. All datasets in this section were created using the R statistical programming language [T eam et al., 2005]. W e ran BNN, BEAM, and the χ 2 test on each dataset and recorded whether or not both disease SNPs were declared as significant by each method. W e took the fraction of datasets where both disease SNPs were correctly identified as an estimate of statistical power . For BEAM and the χ 2 test, we used the canonical Bonferroni corrected significance threshold of p < 0 . 05 . W e used the recommended parameter settings for BEAM [Zhang and Liu, 2007] and performed 1 ∗ 10 6 sampling iterations for each dataset. For the BNN approach, we used a network with 1 hidden layer and 5 logistic units and a softmax output layer with 2 units. The network parameters in the hidden layer are giv en ARD priors, while the network parameters in the output are gi ven a common Gaussian prior . The hyper parameters for the In verse-Gamma prior for the ARD parameters were α 0 = 5 , β 0 = 2 while the hyper parameters for the Gaussian priors were α 0 = 0 . 1 , β 0 = 0 . 1 . The parameters for the HMC algorithm were = 5 ∗ 10 − 2 , L = 15 , α = 0 . 75 , and T = 5 ∗ 10 3 . The cutoff value for the novel Bayesian ARD testing framework was 0.6. W e discarded the first 25 samples as burn- in and kept 100 samples to be used for inference. Processing of each dataset by the BNN took approximately 3 minutes. The results are shown belo w in Figures 2, 3 and 4. Figure 2: Additive Model. Estimated power to detect both disease SNPs using Bayesian neural networks (BNN), BEAM, and χ 2 test (CHI) with 2 d.f. Effect sizes of { 0 . 5 , 1 . 0 , 1 . 5 , 2 . 0 } are shown in order from left to right, top to bottom. W ithin each pane results are stratified by minor allele frequency (MAF). BNNs were found to be uniformly more powerful than both BEAM and the χ 2 test for the additive model. BNNs sho w e xcellent po wer, even for small ef fect sizes and achieve 100% power for second smallest effect size across all tested MAFs. In contrast, BEAM sho wed relati vely little power for the 11 Figure 3: Threshold Model. Estimated power to detect both disease SNPs using Bayesian neural networks (BNN), BEAM, and χ 2 test (CHI) with 2 d.f. Effect sizes of { 0 . 5 , 1 . 0 , 1 . 5 , 2 . 0 } are shown in order from left to right, top to bottom. W ithin each pane results are stratified by minor allele frequency (MAF). smallest effect size and never achieves 100% for all MAFs, ev en at the highest level of effect size. The threshold model tells a similar story . For all but 3 combinations of MAF and effect size, the BNN model is again uniformly more powerful than both BEAM and the 2 test. The picture from the epistatic model is slightly more mixed. BEAM appeared to do a better job at the smallest ef fect size, while performing equally well as BNNs on the remaining three ef fect size lev els. All three methods had almost no power to detect the causal SNPs for a MAF of 0.5. These results suggest that BNN is uniformly more powerful the χ 2 test for these genetic models, and may be more powerful than BEAM in most instances. 3.3 Simulated Epistatic Relationships without Marginal Effects In this section, we ev aluated the performance of all the methods examined in the previous section (BNN, BEAM, and the χ 2 ) as well as GBM and MDR. Since MDR and GBM rely on permu- tation testing, we reduced the size of the dataset to accommodate this strategy . T o generate test datasets, we used the GAMETES software package [Urbano wicz et al., 2012]. This package allows users to specify the proportion of variance for case/control status that is due to genetic variants (i.e. broad-sense heritability) as well as how many loci are inv olved in determining trait status. These relationships are generated such that there are minimal marginal effects, resulting in relationships that are nearly purely epistatic. Relationships without marginal effects are in some sense ‘harder’ than those with marginal effects, because the causal SNPs contrib ute to trait status only through their interaction. Preliminary analysis on the re duced SNP datasets indicated that if the same models were 12 Figure 4: Epistatic Model. Estimated power to detect both disease SNPs using Bayesian neural networks (BNN), BEAM, and χ 2 test (CHI) with 2 d.f. Effect sizes of { 0 . 5 , 1 . 0 , 1 . 5 , 2 . 0 } are shown in order from left to right, top to bottom. W ithin each pane results are stratified by minor allele frequency (MAF). used as in the pre vious section, most methods would ha ve nearly 100% po wer for all simulated sce- narios, which would provide little useful feedback for discerning which approaches were working best. This was the primary motiv ation for using the ‘harder’, purely epistatic relationships instead of the parametric models we used previously . Using GAMETES, we analyzed two le vels of heritability (5% and 10%) across a range of MAFs (0.05, 0.1, 0.2, 0.3, 0.4, 0.5). Power was measured as in the pre vious section using 100 instances for each heritability/MAF combination for a total of 1200 data sets used in ev aluation. The results are shown belo w in Figures 5 and 6. BNN outperform all methods from the previous section (BEAM and χ 2 test) by a very wide mar gin. This suggests that BEAM may be less robust to detect causal SNPs in the absence of marginal effects than previously thought, as it never achieves 25% power in any of the scenarios tested. Again, we find these results encouraging as they indicate that BNNs are indeed po werful relati ve to existing approaches. Additionally , BNN outperformed the GBM method in all but 2 scenarios, indicating that BNN maybe be more adept at detecting purely epistatic signals across a broad array of MAFs and effect sizes. MDR performs well across every parameter combination tested, but as mentioned previously it is incapable of performing this analysis on a GW AS scale due to the exhausti ve search technique and the need to perform permutation testing to assess statistical significance. T o conclude this section, we note that BNN was the only method that did well across a variety of genetic models, number of SNPs, and MAFs while being capable of scaling to GW AS-sized data. This provides 13 MAF = 0.05 MAF = 0.1 MAF = 0.2 MAF = 0.3 MAF = 0.4 MAF = 0.5 0.00 0.25 0.50 0.75 1.00 BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR Method P ower P ower f or NoMarginal Model Heritability = 5% Figure 5: Purely Epistatic Model with 5% heritability . Estimated po wer to detect both disease SNPs of Bayesian neural networks (BNN), BEAM, 2 test (CHI) with 2 d.f., gradient boosted trees (GBM), and MDR. The results are stratified by minor allele frequency (MAF). evidence that BNN framework is deserving of further inv estigation as an analysis technique for association studies. 3.4 Sensitivity and Specificity Analysis of the ARD T est The cutoff value used for the ARD test has an obvious impact on the methods performance. In the extreme case, a cutof f of 0 would result in nothing being significant while a cutoff v alue of 1 would result in everything being declared as such. The cutoff value controls the tradeof f between sensitivity (i.e. the true positive rate) and specificity (i.e. the true negati ve rate, which is equiv alent to 1 the false positiv e rate). Ev aluation of the false positi ve rate for the cutoff value of 0.6 used in the pre vious experiments indicates that the BNN method properly controls the amount of false positi ves. W e observed an av erage false positi ve rate (FPR) of roughly 0.005 and 0.06 for the parametric models and the purely epistatic models, respectiv ely as shown in Figure 7. T o e xamine the trade of f between the true positi ve rate (TPR) and FPR as the cutof f value is changed, we modulated the cutof f from 0 to 1 in increments of 0.01 and recorded the true positive and false positiv e rate for each data set in the two pre vious sections. In Figure 8, we averaged the TPR and FPR o ver ef fect size and MAF to produce a recei ver -operator characteristic (R OC) curve for each of the 4 genetic models. The legend displays the area under the curve (A UC) for each model. 14 MAF = 0.05 MAF = 0.1 MAF = 0.2 MAF = 0.3 MAF = 0.4 MAF = 0.5 0.00 0.25 0.50 0.75 1.00 BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR BNN BEAM CHI GBM MDR Method P ower P ower f or NoMarginal Model Heritability = 10% Figure 6: Purely Epistatic Model with 10% heritability . Estimated power to detect both disease SNPs of Bayesian neural networks (BNN), BEAM, 2 test (CHI) with 2 d.f., gradient boosted trees (GBM), and MDR. The results are stratified by minor allele frequency (MAF). These results show that BNN-ARD test for variable importance is able to achie ve a high true positiv e rate, while maintaining a low false positi ve rate, which is an indication the method is performing as well and as expected. 3.5 Analysis of T uberculosis Data T o e valuate the performance of Bayesian neural networks on a real dataset, we analyzed a GW AS designed to find genetic markers associated with tuberculosis (TB) disease progression. The dataset describe in [Oki et al., 2011], contains information on roughly 60,000 SNPS and 105 subjects. For our study , each subject was classified as currently infected with any form of tuberculosis (i.e. e x- trapulmonary or pulmonary) or having a latent form of TB confirmed through a positive tuberculin skin test (purified protein deri vati ve positiv e). Quality control was performed and SNPs with miss- ing values were excluded, as were SNPs that were found to be out of Hardy-W einber g equilibrium at the 0.05 lev el. After QC, there were 16,925 SNPs av ailable for analysis and 104 subjects. Based on evidence of subpopulations in this data [Oki et al., 2011], subjects were assigned to one of three clusters created using the top two principal components and cluster membership was included as a cov ariate in the model. Sampling of the Bayesian neural network was conducted as outlined in the previous section, with ARD hyper-parameters of α 0 = 3 , β 0 = 1 . W e performed 100 burn-in iterations followed by 1,000 sampling iterations which took approximately 20 hours. The top five SNPs based on posterior ARD probabilities are shown below in T able 4. The SNP reported as the 2nd most significant in [Oki et al., 2011] (rs10490266) appeared in our analysis as the 31st most 15 Figure 7: False Positi ve Rates (FPR) for each model/ef fect size combination, av eraged ov er MAF . T able 4: T op 5 SNPs based on posterior ARD probabilities. Note these probabilities are presented in terms of in volvement (lar ger indicates a SNP is more likely to be in volved). SNP CHR P r ( µ j > µ null ) rs966414 2 0.524 rs1378124 8 0.515 rs9327930 5 0.509 rs4721214 7 0.502 rs4721214 9 0.498 significant SNP . Only one of the SNPs in T able 4 is currently kno wn to be located within a gene (rs1378124 - MA TN2) according to dbSNP . Every SNP reported in T able 4 is located on a the same chromosome and within 10-50 MB of loci pre viously reported as having a statistically significant association with pulmonary tuberculosis susceptibility [Png et al., 2012] in an Indonesian popula- tion. The loci reported in [Png et al., 2012] were unfortunately either not part of the original SNP library or remo ved during the QC process in this study . Due to the small sample size of this dataset, it is hard to say conclusiv ely which of the SNPs reported here and in [Oki et al., 2011] are most likely to replicate in a larger study . Howe ver , we present this analysis to demonstrate that the BNN framew ork is capable of analyzing data sets containing a high number of SNPs in a relati vely short amount time. 16 0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00 F alse Positiv e Rate T rue P ositive Rate Model Additive − A UC: 0.99 Epistasis− A UC: 0.85 No Marginal− A UC: 0.85 Threshold − A UC: 0.88 ROC Curve f or Bay esian Neural Networks Figure 8: Receiver -Operator Characteristic (ROC) curve for BNNs. Each line represents the ROC curve for a different genetic model, averaged over effect size and MAF . The area under the curve (A UC) for each model is shown in the le gend. 4 Conclusions In this study we hav e proposed the use of Bayesian neural networks for association studies. This approach was sho wn to be powerful across a broad spectrum of dif ferent genetic architectures, ef fect sizes, and MAFs. Of the approaches that do not rely on permutation testing, BNN was uniformly more powerful than the standard 2 test and almost uniformly more powerful than the powerful than the popular BEAM method in the scenarios considered. BNN again sho wed a near uniformly better performance than the GBM method. MDR was very competitive with BNN in our ev aluations, howe ver MDR is incapable of scaling to larger datasets due to both its exhausti ve search technique and reliance on permutation testing. In conclusion, we hav e demonstrated that BNNs are a po werful technique for association studies while having the capability of scaling to large GW AS sized datasets. A vailability of Code Source code implementing the GPU-based Bayesian neural network framework outlined in this pa- per is av ailable at https://github.com/beamandrew/BNN. References [Andrieu et al., 2003] Andrieu, C., De Freitas, N., Doucet, A., and Jordan, M. I. (2003). An intro- duction to mcmc for machine learning. Machine learning , 50(1-2):5–43. [Baesens et al., 2002] Baesens, B., V iaene, S., den Poel, D. V ., V anthienen, J., and Dedene, G. (2002). Bayesian neural network learning for repeat purchase modelling in direct marketing. Eur opean Journal of Operational Resear ch , 138(1):191–211. 17 [Beam et al., 2014] Beam, A. L., Ghosh, S. K., and Doyle, J. (2014). Fast hamiltonian monte carlo using gpu computing. ArXiv e-prints . 1402.4089; Provided by the SA O/NASA Astrophysics Data System. [Bengio, 2009] Bengio, Y . (2009). Learning deep architectures for ai. F oundations and trends® in Machine Learning , 2(1):1–127. [Bergstra et al., 2010] Bergstra, J., Breuleux, O., Bastien, F ., Lamblin, P ., Pascanu, R., Desjardins, G., T urian, J., W arde-Farle y , D., and Bengio, Y . (2010). Theano: A cpu and gpu math compiler in python. In Proceedings of the Python for Scientific Computing Confer ence, SciPy . [Breiman, 2001] Breiman, L. (2001). Random forests. Machine Learning , 45(1):5–32. [Diaz-Uriarte and de Andres, 2006] Diaz-Uriarte, R. and de Andres, S. A. (2006). Gene selec- tion and classification of microarray data using random forest. BMC bioinformatics , 7:3. LR: 20130905; JID: 100965194; OID: NLM: PMC1363357; 2005/07/08 [received]; 2006/01/06 [ac- cepted]; 2006/01/06 [aheadofprint]; epublish. [Friedman, 2001] Friedman, J. H. (2001). Greedy function approximation: a gradient boosting machine.(english summary). Ann.Statist , 29(5):1189–1232. [Greene et al., 2010] Greene, C. S., Sinnott-Armstrong, N. A., Himmelstein, D. S., P ark, P . J., Moore, J. H., and Harris, B. T . (2010). Multifactor dimensionality reduction for graphics process- ing units enables genome-wide testing of epistasis in sporadic als. Bioinformatics , 26(5):694– 695. [Guyon et al., 2002] Guyon, I., W eston, J., Barnhill, S., and V apnik, V . (2002). Gene selection for cancer classification using support vector machines. Machine Learning , 46(1-3):389–422. [Hahn et al., 2003] Hahn, L. W ., Ritchie, M. D., and Moore, J. H. (2003). Multifactor dimensional- ity reduction softw are for detecting gene–gene and gene–en vironment interactions. Bioinformat- ics , 19(3):376–382. [Hastings, 1970] Hastings, W . K. (1970). Monte carlo sampling methods using markov chains and their applications. Biometrika , 57(1):97–109. [Hinton et al., 2012] Hinton, G. E., Sri vasta va, N., Krizhe vsky , A., Sutskev er , I., and Salakhutdinov , R. R. (2012). Improving neural networks by pre venting co-adaptation of feature detectors. arXiv pr eprint arXiv:1207.0580 . [Hornik et al., 1989] Hornik, K., Stinchcombe, M., and White, H. (1989). Multilayer feedforward networks are uni versal approximators. Neural Networks , 2(5):359–366. [Jiang et al., 2011] Jiang, X., Neapolitan, R. E., Barmada, M. M., and V isweswaran, S. (2011). Learning genetic epistasis using bayesian network scoring criteria. BMC bioinformatics , 12:89– 2105–12–89. LR: 20131018; GR: 1K99LM010822-01/LM/NLM NIH HHS/United States; GR: K99 LM010822/LM/NLM NIH HHS/United States; GR: R00 LM010822/LM/NLM NIH HHS/United States; GR: R01 HG004718/HG/NHGRI NIH HHS/United States; JID: 100965194; OID: NLM: PMC3080825; 2010/07/21 [recei ved]; 2011/03/31 [accepted]; 2011/03/31 [aheadof- print]; epublish. [Klockner et al., 2012] Klockner , A., Pinto, N., Lee, Y ., Catanzaro, B., Ivanov , P ., and Fasih, A. (2012). Pycuda and pyopencl: A scripting-based approach to gpu run-time code generation. P arallel Computing , 38(3):157–174. [K oo et al., 2013] K oo, C. L., Lie w , M. J., Mohamad, M. S., and Salleh, A. H. M. (2013). A re vie w for detecting gene-gene interactions using machine learning methods in genetic epidemiology . BioMed r esear ch international , 2013. [Li et al., 2011] Li, J., Horstman, B., and Chen, Y . (2011). Detecting epistatic ef fects in association studies at a genomic lev el based on an ensemble approach. Bioinformatics , 27(13):222–229. [Li and Reich, 2000] Li, W . and Reich, J. (2000). A complete enumeration and classification of two-locus disease models. Human heredity , 50(6):334–349. [Li et al., 2002] Li, Y ., Campbell, C., and T ipping, M. (2002). Bayesian automatic relev ance deter- mination algorithms for classifying gene expression data. Bioinformatics , 18(10):1332–1339. [Lisboa et al., 2003] Lisboa, P . J., W ong, H., Harris, P ., and Swindell, R. (2003). A bayesian neural network approach for modelling censored data with an application to prognosis after surgery for breast cancer . Artificial Intelligence in Medicine , 28(1):1–25. 18 [Lopes and Ribeiro, 2009] Lopes, N. and Ribeiro, B. (2009). GPU implementation of the multi- ple back-pr opagation algorithm , pages 449–456. Intelligent Data Engineering and Automated Learning-IDEAL 2009. Springer . [Lunetta et al., 2004] Lunetta, K. L., Hayward, L. B., Segal, J., and Eerdewe gh, P . V . (2004). Screening large-scale association study data: exploiting interactions using random forests. BMC genetics , 5(1):32. [Manolio et al., 2009] Manolio, T . A., Collins, F . S., Cox, N. J., Goldstein, D. B., Hindorff, L. A., Hunter , D. J., McCarthy , M. I., Ramos, E. M., Cardon, L. R., Chakrav arti, A., et al. (2009). Finding the missing heritability of complex diseases. Nature , 461(7265):747–753. [Metropolis et al., 2004] Metropolis, N., Rosenbluth, A. W ., Rosenbluth, M. N., T eller, A. H., and T eller , E. (2004). Equation of state calculations by fast computing machines. The Journal of chemical physics , 21(6):1087–1092. [Moore et al., 2006] Moore, J. H., Gilbert, J. C., Tsai, C.-T ., Chiang, F .-T ., Holden, T ., Barney , N., and White, B. C. (2006). A flexible computational framework for detecting, characterizing, and interpreting statistical patterns of epistasis in genetic studies of hu disease susceptibility . Journal of theor etical biology , 241(2):252–261. [Motsinger-Reif et al., 2008a] Motsinger-Reif, A. A., Dudek, S. M., Hahn, L. W ., and Ritchie, M. D. (2008a). Comparison of approaches for machine-learning optimization of neural networks for detecting gene-gene interactions in genetic epidemiology . Genetic epidemiology , 32(4):325– 340. [Motsinger-Reif et al., 2008b] Motsinger-Reif, A. A., Reif, D. M., F anelli, T . J., and Ritchie, M. D. (2008b). A comparison of analytical methods for genetic association studies. Genetic epidemi- ology , 32(8):767–778. [Motsinger-Reif and Ritchie, 2008] Motsinger-Reif, A. A. and Ritchie, M. D. (2008). Neural net- works for genetic epidemiology: past, present, and future. BioData mining , 1(3). [Nabney , 2002] Nabney , I. (2002). NETLAB: algorithms for pattern recognition . Springer . [Neal, 2011a] Neal, R. (2011a). Mcmc for using hamiltonian dynamics. Handbook of Markov Chain Monte Carlo , pages 113–162. [Neal, 2011b] Neal, R. (2011b). Mcmc using hamiltonian dynamics. Handbook of Markov Chain Monte Carlo , pages 113–162. [Neal, 1992] Neal, R. M. (1992). Bayesian training of backpropagation networks by the hybrid monte carlo method. T echnical report, Citeseer . [Neal, 1995] Neal, R. M. (1995). Bayesian learning for neural networks. [Neal, 1998] Neal, R. M. (1998). Assessing relev ance determination methods using delve. NA TO ASI SERIES F COMPUTER AND SYSTEMS SCIENCES , 168:97–132. [Nvidia, 2008] Nvidia, C. (2008). Programming guide. [Oh and Jung, 2004] Oh, K.-S. and Jung, K. (2004). Gpu implementation of neural networks. P at- tern Recognition , 37(6):1311–1314. [Oki et al., 2011] Oki, N. O., Motsinger-Reif, A. A., Antas, P . R., Le vy , S., Holland, S. M., and Ster- ling, T . R. (2011). Novel human genetic v ariants associated with extrapulmonary tuberculosis: a pilot genome wide association study . BMC r esearc h notes , 4(1):28. [Png et al., 2012] Png, E., Alisjahbana, B., Sahiratmadja, E., Marzuki, S., Nelwan, R., Balabanov a, Y ., Nikolayevsk yy , V ., Drobnie wski, F ., Nejentsev , S., Adnan, I., et al. (2012). A genome wide association study of pulmonary tuberculosis susceptibility in indonesians. BMC medical genetics , 13(1):5. [Rumelhart et al., 1988] Rumelhart, D. E., Hinton, G. E., and W illiams, R. J. (1988). Learning r epr esentations by back-pr opagating err ors . MIT Press, Cambridge, MA, USA. [T eam et al., 2005] T eam, R. C. et al. (2005). R: A language and en vironment for statistical com- puting. R foundation for Statistical Computing . [Urbanowicz et al., 2012] Urbanowicz, R. J., Kiralis, J., Sinnott-Armstrong, N. A., Heberling, T ., Fisher , J. M., and Moore, J. H. (2012). Gametes: a fast, direct algorithm for generating pure, strict, epistatic models with random architectures. BioData mining , 5(1):1–14. 19 [W illiams, 1995] W illiams, P . M. (1995). Bayesian regularization and pruning using a laplace prior . Neural computation , 7(1):117–143. [Zhang, 2014] Zhang, Y . (2014). Academic website for yu zhang @online. [Zhang and Liu, 2007] Zhang, Y . and Liu, J. S. (2007). Bayesian inference of epistatic interactions in case-control studies. Natur e genetics , 39(9):1167–1173. 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment