Generalized Direct Sampling for Hierarchical Bayesian Models

We develop a new method to sample from posterior distributions in hierarchical models without using Markov chain Monte Carlo. This method, which is a variant of importance sampling ideas, is generally applicable to high-dimensional models involving l…

Authors: Michael Braun, Paul Damien

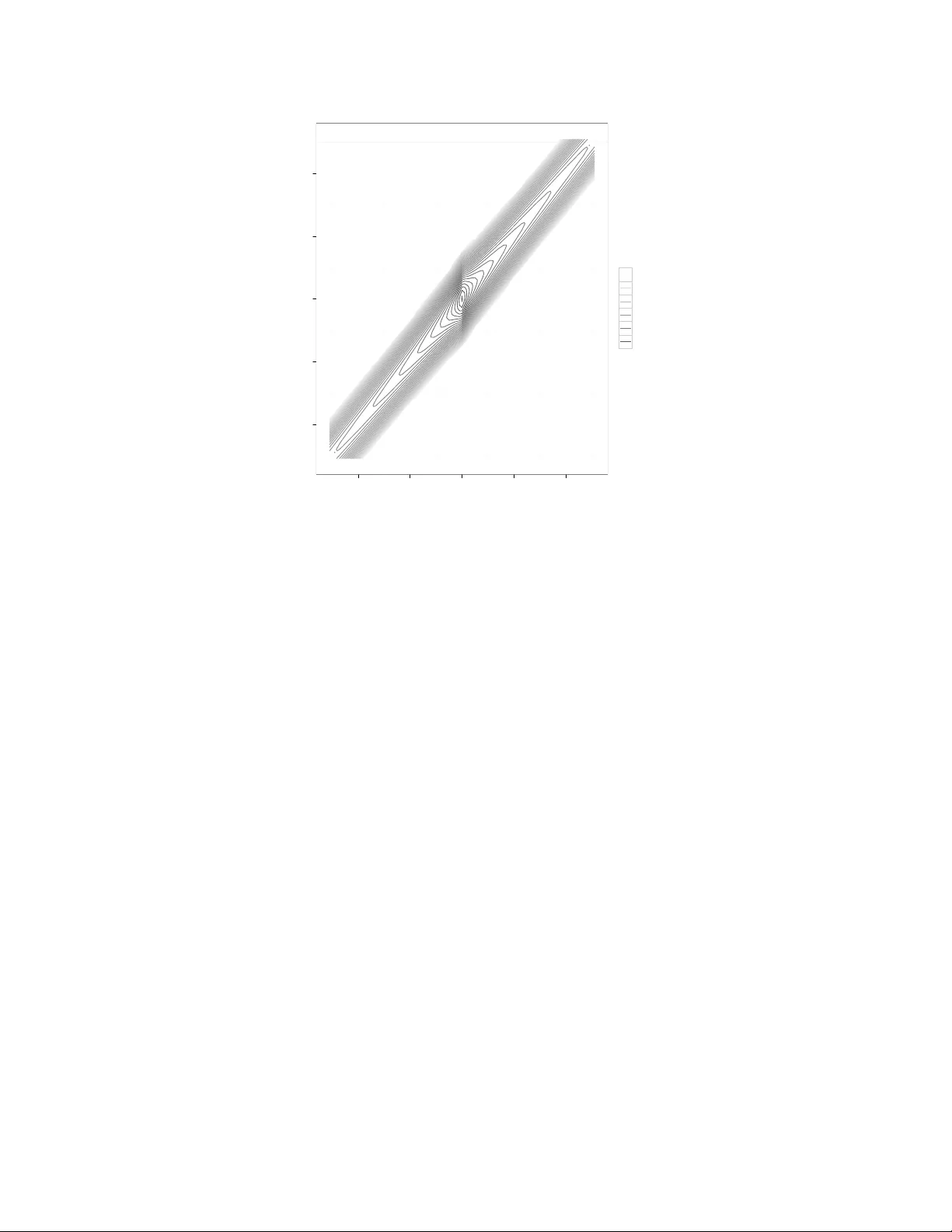

Generalized Direct Sampling for Hierar chical Ba y esian Models Michael Braun MIT Sloan S chool of Management Massachusetts Institute of T echnology Cambridge, MA 02142 braunm@mit.edu Paul Damien McCombs S chool of Business Univ ersity of T exas at A ustin A ustin, TX 78712 paul.damien@mccombs.utexas.edu A ugust 7, 2012 Abstract W e dev elop a new method to sample from posterior distributions in hierarchical models without using Marko v chain Monte Carlo. This method, which is a v ariant of importance sampling ideas, is generally applicable to high-dimensional models inv olving large data sets. Samples are independent, so they can be collected in par- allel, and w e do not need to be concer ned with issues like chain conv ergence and autocorrelation. Additionally , the method can be used to compute marginal likeli- hoods. 1 Introduction Recently , W alker et. al (2010) introduced and demonstrated the merits of a non-MCMC approach called Direct Sampling (DS) for conducting Ba y esian inference. They argued that with their method there is no need to concer n oneself with issues like chain conv er- gence and autocorrelation. They also point out that their method generates independent samples from a target posterior distribution in parallel , unlike MCMC for which, in the absence of parallel independent chains, samples are collected sequentially . W alker et al. also pro v e that the sample acceptance probabilities using DS are better than those from standard rejection algorithms. Put simply , for many common Ba y esian models, they demonstrate impro v ement o v er MCMC in terms of its efficiency , resource demands and ease of implementation. Ho w e v er , DS suffers from some important shortcomings that limit its broad applicabil- ity . One is the failure to separate the specification of the prior from the specifics of the estimation algorithm. Another is an inability to generate accepted dra ws for ev en mod- erately sized problems; the largest number of parameters that W alker et al. consider is 10. Our interest is in conducting full Ba y esian inference on hierarchical models in high dimensions, with or without conjugacy , sans MCMC. The method proposed in this paper , strictly speaking, is not a generalization of the DS algorithm, but since it shares some important features with DS, w e call it Generalized Direct Sampling (GDS). DS and GDS differ in the follo wing respects. 1. While DS focuses on the shape of the data likelihood alone, GDS is concer ned with the characteristics of the entire posterior density . 2. GDS bypasses the need for Ber nstein polynomial approximations, which are inte- gral to the DS algorithm. 1 3. While DS takes proposal dra ws from the prior (which ma y conflict with the data), GDS samples proposals from a separate density that is ideally a good approxima- tion to the target posterior density itself. In addition to the abo v e improv ements ov er DS, GDS maintains many impro v ements o v er MCMC estimation: 1. All samples are collected independently , so there is no need to be concerned with autocorrelation, conv ergence of estimations chains, and so forth. 2. There is no particular adv antage to choosing model components that maintain conditional conjugacy , as is common with Gibbs sampling. 3. GDS generates samples from the target posterior entirely in parallel, which takes adv antage of the most recent adv ances in grid computing and placing multiple CPU cores in a single computer . 4. GDS permits fast and accurate estimation of marginal likelihoods of the data. GDS is introduced in S ection 2. S ection 3 includes examples of GDS in action, starting with a small, but important, tw o-parameter example for which MCMC is known to fail, and concluding with a complex nonconjugate application with ov er 29,000 parameters. In Section 4, GDS is used to estimate marginal likelihoods. Finally , in S ection 5, w e discuss practical issues that one should consider when implementing GDS, including limitations of the approach. 2 2 Generalized Direct Sampling: The Method Like DS, GDS is a v ariant on w ell-kno wn importance sampling methods. The goal is to sample θ ∈ Ω from a posterior density π ( θ | y ) = f ( y | θ ) π ( θ ) L ( y ) = D ( θ , y ) L ( y ) (1) where D ( θ , y ) is the joint density of the data and the parameters (the unnor malized posterior density). Let θ ∗ be the mode of D ( θ , y ) , and define c 1 = D ( θ ∗ , y ) . Choose some proposal distribution g ( θ ) that also has its mode at θ ∗ , and define c 2 = g ( θ ∗ ) . Also, define the function Φ ( θ | y ) = f ( y | θ ) π ( θ ) · c 2 g ( θ ) · c 1 (2) Ob viously π ( θ | y ) = Φ ( θ | y ) · g ( θ ) · c 1 c 2 · L ( y ) (3) An important restriction on the choice of g ( θ ) is that the inequality 0 < Φ ( θ | y ) ≤ 1 must hold, at least for any θ with a non-negligible posterior density . Discussion of the choice of g ( θ ) is giv en in detail a little later . Next, let u | θ , y be an auxiliary variable that is distributed unifor mly on 0, Φ ( θ | y ) π ( θ | y ) , so that p ( u | θ , y ) = π ( θ | y ) Φ ( θ | y ) = c 1 c 2 L ( y ) g ( θ ) . W e then construct a joint density of θ | y and u | θ , y , where p ( θ , u | y ) = π ( θ | y ) Φ ( θ | y ) 1 [ u < Φ ( θ | y ) ] (4) 3 From Equation 4, the mar ginal density of θ | y is p ( θ | y ) = π ( θ | y ) Φ ( θ | y ) Z Φ ( θ | y ) 0 d u = π ( θ | y ) (5) Therefore, simulating from p ( θ | y ) is equivalent to simulating from the target posterior π ( θ | y ) . Using Equations 3 and 4, the marginal density of u | y is p ( u | y ) = Z π ( θ | y ) Φ ( θ | y ) 1 [ u < Φ ( θ | y ) ] d θ (6) = c 1 c 2 L ( y ) Z 1 [ u < Φ ( θ | y ) ] g ( θ ) d θ (7) = c 1 c 2 L ( y ) q ( u ) (8) where q ( u ) = R 1 [ u < Φ ( θ | y ) ] g ( θ ) d θ is defined as the probability that u < Φ ( θ | y ) for any θ dra wn from g ( θ ) . The GDS sampler comes from recognizing that p ( θ , u | y ) can written differently from, but equiv alently to, Equation 4. p ( θ , u | y ) = p ( θ | u , y ) p ( u | y ) (9) The strategy behind GDS is to sample fr om an appr oximation to p ( u | y ) , and then sample from p ( θ | u , y ) . Using the definitions in Equations 2, 3, and 4, w e get p ( θ | u , y ) = p ( θ , u | y ) p ( u | y ) (10) = c 1 c 2 L ( y ) 1 [ u < Φ ( θ | y ) ] g ( θ ) p ( u | y ) (11) Consequently , to sample dir ectly from π ( θ | y ) , one needs only to sample fr om p ( u | y ) and 4 then sample repeatedly from g ( θ ) until Φ ( θ | y ) > u . Ho w does one simulate from p ( u | y ) , which is proportional to q ( u ) ? W alker et al. sam- ple from a similar kind of density by first taking M proposal dra ws from the prior to construct an empirical approximation to q ( u ) , and then constructing a continuous ap- proximation using Ber nstein polynomials. Ho w e v er , in high-dimensional models, this approximation tends to be a poor one at the endpoints, ev en with an extremely large number of Bernstein polynomial components. It is for this reason that the largest num- ber of parameters that W alker et. al. tackle is 10. The GDS strategy to sample u | y is to sample a transfor med v ariable v = T ( u ) , where T ( u ) = − log u . Applying a change of variables, q ( v ) = q ( u ) exp ( − v ) . W ith q ( v ) denot- ing the “true” CDF of v , let q M ( v ) be the empirical CDF after taking M proposal dra ws from g ( θ ) , and ordering the proposals 0 < v 1 < v 2 < . . . < v M < ∞ . T o be clear , q M ( v ) is the proportion of proposal dra ws that are strictly less than v . Because q M ( v ) is discrete, w e can sample from a density proportional to q ( v ) exp ( − v ) b y partitioning the domain into M + 1 segments, partitioned at each v i . The probability of sampling a new v that falls betw een v i and v i + 1 is no w v i = q M ( v ) [ exp ( − v i ) − exp ( − v i + 1 ) ] . Therefore, w e first sample a v i from a multinomial density with w eights proportional to v i , and then let v = v i + e , where e is a dra w from a standard exponential density , truncated on the right at v i + 1 − v i . One can sample from this truncated exponential density b y first sampling a standard unifor m random variate η , and setting v = − log [ 1 − η ( 1 − exp ( v i − v i + 1 ) ] . T o sample N draws from the target posterior , w e sample N “threshold” dra ws of v using this method. Then, for each v , w e repeatedly sample from g ( θ ) until T ( Φ ( θ | y ) ) < v . Note that the inequality sign is flipped from the Φ ( θ | y ) > u expression because of the negativ e sign in the transfor mation. In summary , the steps of the GDS algorithm are as follo ws: 5 1. Find the mode of D ( θ , y ) , θ ∗ and compute the unnormalized log posterior density c 1 = D ( θ ∗ , y ) at that mode. 2. Choose a distribution g ( θ ) so that its mode is also at θ ∗ , and let c 2 = g ( θ ∗ ) . 3. Sample θ 1 , . . . , θ M independently from g ( θ ) . Compute Φ ( θ m ) for these proposal dra ws. If Φ ( θ m ) > 1 for any of these dra ws, repeat Step 2 and choose another proposal distribution for which Φ ( θ m ) < 1 does hold. 4. Compute v i = T ( Φ ( θ | y ) ) for the M proposal draws, and place them in increasing order . 5. Ev aluate, for each proposal dra w , q M ( v ) = M ∑ i = 1 1 [ v i < v ] (12) which is the empirical CDF of v i for the M proposal dra ws. Then compute v i = q M ( v ) [ exp ( − v i ) − exp ( − v i + 1 ) ] for all i . 6. Sample N dra ws of v = v i + e , where a particular v i is chosen according to the multinomial distribution with probabilities proportional to v i , and e is a standard exponential random variate, truncated to v i + 1 − v i . 7. For each of the N required samples from the target posterior , sample θ from g ( θ ) until T ( Φ ( θ | y ) ) < v . Consider each first accepted dra w to be a single draw from the tar get posterior π ( θ | y ) . Choosing g ( θ ) is an important part of this algorithm. Naturally , the closer g ( θ ) is to the target posterior , the more efficient the algorithm will be in ter ms of acceptance rates. In principle, it is up to the researcher to choose g ( θ ) , which is similar in spirit to se- lecting a dominating density while implementing standard rejection algorithms, or ev en 6 Metropolis-Hastings algorithms. For GDS, in practice, a multivariate nor mal proposal distribution with mean at θ ∗ and co v ariance matrix of the inv erse Hessian at θ ∗ , mul- tiplied b y a scaling constant s , w orks w ell. There is nothing special about this choice, except to note that it is easy to implement with a little trial and error in the selection of s . (This is similar , in spirit, to the concept of tuning an M-H algorithm via trial and error .) If the log posterior happens to be multimodal, and the location of the local modes are known, then one could let g ( θ ) be a mixture of multivariate normals instead. Impor - tantly , w e address the sensitivity of the GDS algorithm to M as part of the analysis in S ection 4. Clearly , an advantage of GDS is that the samples one collects from the target posterior density are independent , and that lets us collect them in parallel. Some researchers hav e inv estigated alter nativ e approaches for MCMC-based Ba y esian inference that also take adv antage of parallel computation; see, for example, Suchard et al. (2010). One notable example is a parallel implementation of a multiv ariate slice sampler (MSS), as in T ibbits et. al. (2010). The benefits of parallelizing the MSS come from parallel ev aluation of the target density at each of the v ertices of the multiv ariate slice, and from more efficient use of resources to execute linear algebra operations (e.g, Cholesky decompositions). But the MSS itself remains a Marko vian algorithm, and thus will still generate dependent dra ws. Using parallel technology to generate a single dra w from a distribution is not the same as generating all of the required draws themselv es in parallel. On the other hand, the sampling steps of GDS can be run in their entir ety in parallel. 3 Illustrativ e Analysis W e now pro vide some examples of GDS in action, especially on problems for which MCMC fails, or for which the dimensionality , model structure, and sample size make 7 MCMC methods somewhat unattractiv e. 3.1 A Hierarchical Non-Gaussian Linear Model Consider this motivating example of a linear hierarchical model discussed by Papaspiliopoulous and Roberts (2008). Y = X + e 1 (13) X = Θ + e 2 (14) For an obser v ed v alue Y , X is the latent mean for the prior on Y , Θ is the prior mean of X , and e 1 and e 2 are random error ter ms, each with mean 0. Papaspiliopoulous and Roberts note that to impro v e the robustness of inference on X to outliers of Y , it is common to model e 1 as ha ving hea vier tails than e 2 . Let e 1 ∼ Cauchy ( 0, 1 ) , e 2 ∼ N ( 0, 5 ) , and Θ ∼ N ( 0, 50000 ) , and suppose there is only one observation av ailable, Y = 0. The posterior joint distribution of X and Θ is giv en in Figure 1; the contours represent the logs of the computed posterior densities. Note that around the mode, X and Θ appear uncorrelated, but in the tails they are highly dependent. Papaspiliopoulous and Roberts present this example as a deceptiv ely simple case in which Gibbs sampling perfor ms extremely poorly . Indeed, they note that almost all diagnostic tests will erroneously conclude that the chain has conv er ged. The reason for this failure is that the MCMC chains are attracted to, and get “stuck” in, the modal region where the v ariables are uncorrelated. Once the chain enters the tails, where the v ariables are more correlated, the chains mov es slo wly , or not at all. GDS is a more effectiv e alter nativ e for sampling from the posterior distribution. The posterior mode and Hessian of the log posterior at the mode, are θ ∗ = ( 0, 0 ) and H = 8 −40 −20 0 20 40 −40 −20 0 20 40 x Θ log post density −40 −35 −30 −25 −20 −15 Figure 1: Contours of the ”true” posterior distribution of the non-Gaussian linear model exam- ple. − 2.2 0.2 0.2 − 0.2 . The GDS proposal distribution g ( θ ) is taken to be a bivariate normal with mean θ ∗ and co v ariance − s H − 1 , with s = 200. This scaling factor w as the smallest v alue of s for which Φ ( θ ) ≤ 1 for all M = 20, 000 of the proposal dra ws. T w o hundred independent samples w ere collected using the GDS algorithm. Figure 2 plots each of the GDS dra ws, where darker areas represent higher v alues of log posterior density . GDS not only picks up the correct shape of the regions of high posterior mass near the origin, but also the dependence in the long tails. The acceptance rate to collect these dra ws was about 0.013. In contrast, consider Figure 3. which plots samples collected using MCMC. Specifically , w e used the RH-MALA method in Girolami and Calderhead (2012), with constant curva- ture, estimating the Hessian at each iteration. These are samples from 25,000 iterations, collected after starting at the posterior mode and running through 25,000 burn-in itera- 9 −40 −20 0 20 40 −40 −20 0 20 40 X Θ log.dens −22.7 −22.2 −21.7 −21.2 −20.7 −20.2 −19.7 −19.2 −18.7 −18.2 −17.7 −17.2 −16.7 −16.2 −15.7 −15.2 −14.7 Figure 2: Posterior draws from non-Gaussian linear regression example, using GDS. Darker colors represent regions of higher posterior density tions, thinned ev ery 10 dra ws. Just as Papaspiliopolous and Roberts predicted, the chain tends to get stuck near the mode. It is only after some serendipitously large proposal jumps that the chain ev er finds itself in the tails (hence the gaps in the plot), but the chain does not mov e v ery far along those tails at all. 3.2 Hierarchical Gaussian model Next, w e consider a hierarchical model with a large number of parameters. The depen- dent variable y i t is measured T times for heterogenous units i = 1 . . . n . For each unit, there are k cov ariates, including an intercept. The intercept and coefficients β i are het- erogeneous, with a Gaussian prior with mean ¯ β and co v ariance Ω , which in tur n ha v e w eakly infor mation standar d hyperpriors. This model structure is giv en by: 10 −40 −20 0 20 40 −40 −20 0 20 40 X Θ log.dens −22.7 −22.2 −21.7 −21.2 −20.7 −20.2 −19.7 −19.2 −18.7 −18.2 −17.7 −17.2 −16.7 −16.2 −15.7 −15.2 −14.7 Figure 3: Posterior dra ws from non-Gaussian linear regression example, using MCMC. Darker colors represent regions of higher posterior density y i t ∼ N ( x 0 i β i , 1 ) , i = 1 . . . n , t = 1 . . . T (15) β i | Ω ∼ M V N ( ¯ β , Ω ) (16) ¯ β ∼ MV N ( 0, V β ) (17) Ω ∼ I W ( ν , A ) (18) T o construct simulated datasets, w e set “true” v alues of ¯ β = ( 5, 0, − 2, 0 ) and Ω = 0.25 I . For each unit, w e simulated T = 25 obser v ations, where the non-intercept cov ariates are all i.i.d dra ws from a standard nor mal distribution. Three different v alues for n w ere entertained: 100, 500 and 1000. In all cases, there are 14 population-lev el parameters, so the total number of parameters are 414, 2014 and 4014, respectiv ely . The parameters of the hyperpriors are ν = 10, V β = 0.2 I , and A = 0.1 I . 11 T able 1 summarizes the performance of the GDS algorithm, a v eraged o v er 10 replications of the experiment. For each case, w e collected 100 samples from the posterior , with M = 10, 000 proposal dra ws. The proposal distributions are all multivariate nor mal, with mean at the posterior mode and the cov ariance set as the inv erse of the Hessian at the mode, multiplied b y a scale factor that changes with n . For each v alue of n , w e set the scale factor to roughly be the smallest value for which Φ ( θ | y ) ≤ 1 for all M = 10, 000 proposal dra ws. The “Mean Proposals” column is the a v erage number of proposals it took to collect 100 posterior dra ws during the accept-reject phase of the algorithm (this is the inv erse of the acceptance rate). W e also recor ded the time it took to run each stage of the algorithm. The “Post Mode” column is the number of minutes it took to find the posterior mode, starting at the origin (after transforming all parameters to ha v e a domain on the real line). The “Proposals” column is the amount of time it took to collect the M = 10, 000 proposal dra ws. “Acc-Rej” is the time it took to execute the accept- reject phase of the algorithm to collect 100 independent samples from the posterior , and “T otal” is the total time required to run the algorithm. The study was conducted on an Apple Mac Pro with 12 CPU cores running at 2.93GHz and 32GB of RAM. T en of the 12 cores w ere allocated to this algorithm. T otal scale Mean Minutes for GDS stages n params factor Proposals Post Mode Proposals Acc-Rej T otal 100 414 1.32 15489 0.01 0.11 1.9 2.1 500 2014 1.20 23387 0.08 0.18 11.3 11.6 1000 4014 1.14 26480 0.24 0.28 23.0 23.6 T able 1: Ef ficiency of GDS for hierarchical Gaussian example Although Mean Proposals ma y appear to be high, assessing the ef ficiency of an algorithm b y examining the acceptance rate alone can be misleading. For a single estimation chain, MCMC cannot be run in parallel, while for GDS, all dra ws can be generated in parallel. For example, each of the 10 cores on the CPU w as responsible for collecting 10 posterior samples. One possible counterar gument would be that MCMC can be run with multiple 12 chains in parallel, as suggested in Gelman and Rubin (1992). How ev er , if one does use parallel chains, all of the chains need to be bur ned in independently . There is no guarantee that any one of the chains w ould ha v e conv erged after some arbitrar y number of iterations, and there will still be residual interdependence in the final set of collected dra ws. It would be more appropriate to compare the total number of proposals from the GDS rejection sampling phase to the number of post-bur n-in iterations that MCMC runs require reaching an effective sample size of 100. It is also w orth reiterating that GDS is quite simple to implement, as the algorithm requires no ongoing tuning or adaptation once the accept-reject phase begins. As a point of comparison, w e estimated the posterior for the n = 500 case using the adaptiv e Metropolis-adjusted Langevin algorithm with truncated drift (Atchade 2006). W e chose this algorithm because it is commonly used, not too hard to implement (rel- ativ e to many other MCMC v ariants lie Gibbs sampling), generically applicable to a general class of models that are similar to those for which GDS w ould w ork w ell, and exploits the gradient information that GDS uses to find the posterior mode. W e started the chain at the posterior mode, and let it run for 5.5 million iterations, during the course of sev eral w eeks. Using the Gew eke conv er gence diagnostic criterion on the log posterior density (Gew eke 1991), w e decided that only the final 180,000 dra ws could be considered “post bur n-in.” Ho w ev er , those dra ws represented an ef fectiv e sample size of only 1,460, suggesting a required thinning ratio of about 1 in 12,000. W e did tr y some more adv anced algorithms, such as Girolami and Calderhead(2012), but had difficulty when the chain entered regions for which the Hessian w as not negativ e definite. The Atchade algorithm generates updates of the proposal cov ariance that are guaranteed to be positiv e definite. Regardless, this case illustrates how unreliable (and unpredictable) MCMC can be for collecting posterior samples quickly and easily . 13 3.3 An example of a complex, high-dimensional model In this section, w e consider another model for which GDS should be an attractiv e estima- tion method: the ef fectiv eness of online adv ertising campaigns. Using GDS, w e estimate the posterior density from the model described in Braun and Moe (2012). This model is quite complicated; for 5,803 anonymous users, they observ e which w ebsite adv ertise- ments (if any) w er e ser v ed to each user during the course of that user ’s w eb bro wsing activity . They also observ e when these users visited the adv ertiser ’s o wn w ebsite (if ev er), and if these w ebsite visits resulted in a conv ersion to a sale. The managerial objec- tiv e is to dev elop a method for fir ms to identify which v ersions of ads are most likely to generate site visits and sales, taking into account the fact that the retur n on inv estment of the ad ma y not occur until sev eral w eeks in the future. The model allows each v ersion of an ad to ha v e a contemporaneous effect in that w eek, but for each repeat view of the same ad to an incrementally smaller effect. The effect of the ad campaign for an indi- vidual builds up with each subsequent ad impression, but this accumulated “ad stock” deca ys from w eek to w eek. All together , there are 29,073 parameters in the model, consisting of fiv e heterogeneous parameters per user , plus 58 population-lev el parameters. The data are modeled as be- ing generated fr om zero-inflated Poisson (for ads and visits) and binomial (for successes) distributions, with the rate parameters being correlated, and dependent on a latent ad stock v ariable. The ad stock, in turn, is depends on which v ersion of the ad is ser v ed, along with nonlinear representations of build-up, w ear-out and restoration ef fects. Giv en the complex hierarchical structure, extensiv e nonlinearities, and high degree of correla- tion in the posterior distribution, attempts at MCMC estimation prov ed to be w ell-nigh impossible to implement. GDS, how ev er , w orked w ell. Because the user-lev el data are conditionally independent, 14 w e could write the log posterior density as the sum of user-lev el data likelihoods, plus the priors and hyperpriors. Algorithmic differ entiation softwar e (Bell 2012) automati- cally computed the gradients and Hessians for the model. This allo ws us to find the posterior mode, and estimate the Hessian at the mode, relativ ely quickly . As abov e, the proposal density was a multiv ariate nor mal, centered at the posterior mode, with a co- v ariance matrix of 1.02 times the inv erse of the Hessian at the model. Since the model assumes conditional independence across users, the Hessian of the log posterior has a sparse structure. W e exploit this sparsity to generate the proposal dra ws ef ficiently , and to dramatically reduce the memory footprint of the algorithm. T o collect 100 independent dra ws, it took 23.75 minutes. The mean attempts per draw is 2350. Although this ma y appear like a low acceptance rate, consider that w e w ere able to collect the dra ws in parallel, without any tuning or adaptation of the algorithm bey ond the initial choice of the proposal density . Also, because the sparse Hessian has a “block-diagonal-arro w” structure, it gro ws only linearly with the number of users. 4 Estimating Marginal Likelihoods No w , w e tur n to another adv antage of GDS: the ability to generate accurate estimates of the marginal likelihood of the data with little additional computation. A number of researchers ha v e proposed methods to appr oximate the marginal likelihood, L ( y ) , from MCMC output. Popular examples include Gelfand and Dey (1994), Newton and Rafter y (1994), Chib (1995) and Rafter y , et. al. (2007). But none of these methods hav e achiev ed univ ersal acceptance as being unbiased, stable or easy to compute. In fact, Lenk (2009) demonstrated that methods which depend solely on samples from the posterior density could suf fer from a “pseudo-bias,” and he proposes a method to correct for it. Through sev eral examples, he demonstrates that his method dominates other popular methods, 15 although with substantial computational effort. Thus, the estimation of the marginal likelihood of a dataset, and its use in model selection, remains a difficult problem in MCMC-based Ba yesian statistics for which there is no satisfactor y solution. T o estimate the marginal likelihood fr om GDS output, w e need the acceptance rate from the accept-reject stage of the algorithm. Recall that q ( u ) is the probability that, giv en a threshold value u , a proposal from g ( θ ) is accepted. Therefore, one can express the expected mar ginal acceptance pr obability for any posterior dra w as γ = Z 1 0 q ( u ) p ( u | y ) d u . (19) Substituting Equation 6, γ = c 1 c 2 L ( y ) Z 1 0 q 2 ( u ) d u (20) Applying the change of v ariables so v = − log u , and then rearranging ter ms, L ( y ) = − c 1 c 2 γ Z ∞ 0 q 2 ( v ) exp ( − v ) d v . (21) The v alues for c 1 and c 2 are immediately a v ailable from the GDS algorithm. One can estimate γ b y treating the number of pr oposals required to accept a posterior dra w as a shifted geometric random variable (an acceptance on the first proposal is a count of 1). Thus, an estimator of γ is the inv erse of the mean number of proposals per draw . What remains is estimating the integral in Equation 21. This is done b y using the pro- posal dra ws from Step 5 in the GDS algorithm in S ection 2. The empirical CDF of these dra ws is discrete, so w e can partition the support of q ( v ) at v 1 . . . v M . Also, since q M ( v ) 16 is the proportion of proposal dra ws less than v , w e ha v e q M ( v ) = i M . Therefore, Z ∞ 0 q 2 ( v ) exp ( − v ) d v ≈ M ∑ i = 1 Z v i + 1 v i i M 2 exp ( − v i ) dv (22) = 1 M 2 M ∑ i = 1 i 2 [ exp ( − v i ) − exp ( − v i + 1 ) ] (23) = 1 M 2 M ∑ i = 1 ( 2 i − 1 ) exp ( − v i ) (24) (By conv ention, define v M + 1 = ∞ ). Putting all of this together , w e can estimate the marginal likelihood as L ( y ) ≈ c 1 M 2 c 2 γ M ∑ i = 1 ( 2 i − 1 ) exp ( − v i ) (25) As a demonstration of the accuracy of this method, consider the following normal linear regression model, also used b y Lenk (2009) to demonstrate the accuracy of his method. y i t ∼ N ( x 0 i β , σ 2 ) , i = 1 . . . n , t = 1 . . . T (26) β | σ ∼ N ( β 0 , σ 2 V 0 ) (27) σ 2 ∼ I G ( r , α ) (28) For this model, L ( y ) is a multivariate-T density . This allows us to compare the esti- mates of L ( y ) from GDS with “truth.” T o do this, w e conducted a simulation study for simulated datasets of different sizes ( n =200 or 2000) and numbers of cov ariates ( k =5, 25 or 100). For each n , k pair , w e simulated 25 datasets. For each dataset, each v ector x i includes an intercept and k iid samples from a standard nor mal density . There are k + 2 parameters, corresponding to the elements of β , plus σ . The true inter cept ter m is 5, and the remaining true β parameters are linearly spaced from − 5 to 5. In all cases, there are T = 25 obser v ations per unit. Hyperpriors are set as r = 2, α = 1, β 0 as a zero v ector 17 and V 0 = 0.2 · I k . For each dataset, w e collected 250 dra ws from the posterior density using GDS, with differ ent numbers of proposal dra ws ( M =1,000 or 10,000), and scale factors ( s = 0.5, 0.6, 0.7 or 0.8) on the Hessian (so s H is the precision matrix of the MVN proposal density , and lo w er scale factors generate more dif fuse proposals). The s = 0.8 and n = 200 cases w er e excluded because the proposal density w as not suf ficiently diffuse that Φ ( θ | y ) w as betw een 0 and 1 for the M proposal dra ws. T able 2 presents the true log marginal likelihood (MVT), along with estimates using GDS, the importance sampling method in Lenk (2009), and the har monic mean estimator (Newton and Rafter y 1994). T able 3 shows the mean absolute percentage error (MAPE) of the GDS and Lenk methods, relativ e to the true log likelihood. The acceptance percentages for each dataset, and the time it took to generate 250 posterior dra ws (after finding the posterior mode) are also summarized. What w e can see from these tables is that the GDS estimates for the log marginal likeli- hood are remarkably close to the multivariate T densities, and are robust when w e use differ ent scale factors. Accuracy appears to be better for larger datasets than smaller ones, and improving the approximation of p ( u ) b y increasing the number of proposal dra ws offers negligible impro v ement. Note that the perfor mance of the GDS method is comparable to that of Lenk, but is much better than the harmonic mean estimator . W e did not compare our method to others (e.g., Gelfand and Dey 1994), because Lenk already did that when demonstrating the importance of correcting for pseudo-bias, and ho w his method dominates many other popular ones. The GDS method is similar to Lenk’s in that it computes the probability that a proposal dra w falls within the support of the posterior density . Ho w e v er , note that the inputs to the GDS estimator are intrin- sically generated as the GDS algorithm progresses, while computing the Lenk estimator requires an additional importance sampling run after the MCMC dra ws are collected. 18 W e are not claiming that our method is better than Lenk’s. Instead, this illustration sho ws that one of the important advantages of GDS is the ease and accuracy with which one can estimate marginal likelihoods. 5 Practical considerations and limitations of GDS This section discusses some practical issues while implementing GDS. Like the entire body of MCMC research continues to teach us, more insights will likely be gleaned as w e, and hopefully others, gain additional experience with the method. Here, w e pro vide some suggestions on how to implement GDS effectiv ely , and mention some areas in which more research or inv estigation is needed. 5.1 Finding the poster ior mode and estimating the Hessian S earching for the posterior mode is considered, in general, to be “good practice” for Ba y esian inference ev en when using MCMC; see Step 1 of the “Recommended Strategy for Posterior Simulation” in S ection 11.10 of Gelman et. al (2003). For small problems, like the example in S ection 3.1, standard nonlinear optimization algorithms, such as those found in common statistical packages like R, are sufficient for finding posterior modes and estimating Hessians. For larger problems, finding the mode and estimating the Hessian can be more dif ficult when using those same tools. How ev er , there are many differ ent w a ys to find the extrema of a function, and some may be more appropriate for some kinds of problems than for others. Therefore, one should not immediately conclude that finding the posterior mode (or modes) is a barrier to adopting GDS for large or ill-conditioned problems, like the 29, 073-dimensional model in S ection 3.3. 19 MVT GDS Lenk HME k n M scale mean sd mean sd mean sd mean sd 5 200 1000 0.5 -309 6.6 -309 6.6 -311 6.8 -287 7.1 5 200 1000 0.6 -309 6.6 -309 6.7 -310 6.9 -287 6.9 5 200 1000 0.7 -309 6.6 -309 6.7 -310 6.5 -287 6.3 5 200 10000 0.5 -309 6.6 -309 6.6 -311 6.7 -287 6.7 5 200 10000 0.6 -309 6.6 -309 6.6 -310 7.5 -287 6.8 5 200 10000 0.7 -309 6.6 -309 6.7 -310 7.0 -287 7.1 5 2000 1000 0.5 -2866 46.2 -2865 46.3 -2868 46.2 -2836 46.2 5 2000 1000 0.6 -2866 46.2 -2866 46.2 -2868 45.7 -2836 45.5 5 2000 1000 0.7 -2866 46.2 -2866 46.3 -2867 45.9 -2836 45.9 5 2000 1000 0.8 -2866 46.2 -2866 46.2 -2867 46.3 -2835 46.3 5 2000 10000 0.5 -2866 46.2 -2866 46.4 -2867 46.7 -2836 46.9 5 2000 10000 0.6 -2866 46.2 -2866 46.2 -2867 45.8 -2836 46.3 5 2000 10000 0.7 -2866 46.2 -2866 46.4 -2867 46.0 -2836 46.3 5 2000 10000 0.8 -2866 46.2 -2866 46.2 -2867 46.5 -2835 46.3 25 200 1000 0.5 -387 8.1 -385 8.2 -391 7.6 -292 8.5 25 200 1000 0.6 -387 8.1 -386 8.1 -390 9.5 -292 8.8 25 200 1000 0.7 -387 8.1 -386 8.3 -390 8.0 -292 8.8 25 200 10000 0.5 -387 8.1 -385 8.5 -390 8.2 -292 8.4 25 200 10000 0.6 -387 8.1 -385 8.2 -390 8.9 -292 8.8 25 200 10000 0.7 -387 8.1 -386 8.2 -390 8.7 -292 9.1 25 2000 1000 0.5 -2990 28.7 -2989 28.8 -2994 28.3 -2865 28.8 25 2000 1000 0.6 -2990 28.7 -2989 28.7 -2993 28.4 -2864 29.0 25 2000 1000 0.7 -2990 28.7 -2989 28.9 -2991 30.0 -2864 29.5 25 2000 1000 0.8 -2990 28.7 -2990 28.7 -2992 29.6 -2864 29.4 25 2000 10000 0.5 -2990 28.7 -2988 29.2 -2992 28.5 -2864 28.9 25 2000 10000 0.6 -2990 28.7 -2989 29.1 -2993 29.4 -2864 28.9 25 2000 10000 0.7 -2990 28.7 -2990 29.0 -2993 28.9 -2864 28.9 25 2000 10000 0.8 -2990 28.7 -2990 28.6 -2993 28.2 -2865 28.2 100 200 1000 0.5 -660 6.7 -661 6.5 -683 8.8 -292 9.2 100 200 1000 0.6 -660 6.7 -660 6.6 -678 8.5 -286 9.0 100 200 1000 0.7 -660 6.7 -659 7.1 -673 7.8 -282 8.0 100 200 10000 0.5 -660 6.7 -659 6.9 -682 9.1 -288 10.4 100 200 10000 0.6 -660 6.7 -660 5.7 -678 8.8 -286 8.9 100 200 10000 0.7 -660 6.7 -658 6.7 -674 7.3 -282 8.4 100 2000 1000 0.5 -3364 24.4 -3364 24.8 -3370 27.5 -2871 27.1 100 2000 1000 0.6 -3364 24.4 -3362 24.6 -3369 24.3 -2868 25.3 100 2000 1000 0.7 -3364 24.4 -3361 23.9 -3371 25.6 -2870 25.4 100 2000 1000 0.8 -3364 24.4 -3362 23.9 -3370 26.0 -2868 26.1 100 2000 10000 0.5 -3364 24.4 -3362 24.0 -3372 25.3 -2870 25.2 100 2000 10000 0.6 -3364 24.4 -3360 24.9 -3368 25.3 -2867 25.4 100 2000 10000 0.7 -3364 24.4 -3360 24.6 -3370 25.5 -2869 25.5 100 2000 10000 0.8 -3364 24.4 -3362 24.5 -3367 24.3 -2867 24.4 T able 2: Results of simulation study for effectiv eness of estimator for log marginal like- lihood. 20 MAPE-GDS MAPE-LENK Accept % T ime (mins) k n M scale mean sd mean sd mean sd mean sd 5 200 1000 0.5 0.23 0.16 0.47 0.39 22.05 7.64 0.09 0.24 5 200 1000 0.6 0.11 0.12 0.52 0.45 40.46 10.82 0.02 0.04 5 200 1000 0.7 0.06 0.05 0.47 0.39 57.13 8.46 0.01 0.00 5 200 10000 0.5 0.17 0.08 0.50 0.37 23.99 5.32 0.04 0.01 5 200 10000 0.6 0.10 0.06 0.54 0.46 40.85 7.19 0.04 0.01 5 200 10000 0.7 0.07 0.07 0.48 0.35 55.15 9.15 0.04 0.01 5 2000 1000 0.5 0.02 0.01 0.07 0.05 22.14 7.65 0.04 0.07 5 2000 1000 0.6 0.01 0.01 0.06 0.06 37.76 10.34 0.03 0.08 5 2000 1000 0.7 0.01 0.01 0.06 0.05 49.55 13.39 0.01 0.00 5 2000 1000 0.8 0.01 0.01 0.04 0.02 64.60 12.93 0.01 0.00 5 2000 10000 0.5 0.02 0.01 0.05 0.05 25.30 6.65 0.06 0.07 5 2000 10000 0.6 0.01 0.01 0.04 0.03 36.28 7.06 0.04 0.01 5 2000 10000 0.7 0.01 0.01 0.05 0.03 51.43 14.49 0.05 0.03 5 2000 10000 0.8 0.00 0.01 0.04 0.03 71.95 11.83 0.04 0.00 25 200 1000 0.5 0.49 0.36 0.98 0.47 2.77 2.50 0.47 0.60 25 200 1000 0.6 0.26 0.13 1.06 0.46 8.07 3.36 0.13 0.18 25 200 1000 0.7 0.18 0.10 0.80 0.43 16.16 5.08 0.06 0.11 25 200 10000 0.5 0.52 0.25 0.93 0.65 1.67 0.87 1.15 1.99 25 200 10000 0.6 0.35 0.19 1.03 0.68 6.24 3.72 0.72 1.39 25 200 10000 0.7 0.11 0.05 0.88 0.77 20.01 3.23 0.04 0.01 25 2000 1000 0.5 0.04 0.03 0.11 0.10 2.69 1.98 0.28 0.25 25 2000 1000 0.6 0.06 0.03 0.09 0.06 4.57 3.03 0.75 1.02 25 2000 1000 0.7 0.04 0.03 0.09 0.09 15.44 9.61 0.59 1.36 25 2000 1000 0.8 0.01 0.01 0.09 0.07 43.14 12.62 0.03 0.02 25 2000 10000 0.5 0.10 0.05 0.08 0.06 0.75 0.68 4.34 9.53 25 2000 10000 0.6 0.07 0.03 0.09 0.05 3.65 2.76 1.97 5.95 25 2000 10000 0.7 0.03 0.03 0.08 0.06 17.07 6.33 0.42 1.45 25 2000 10000 0.8 0.01 0.01 0.10 0.08 43.27 10.27 0.05 0.01 100 200 1000 0.5 0.27 0.23 3.50 0.82 0.32 0.29 0.49 0.85 100 200 1000 0.6 0.17 0.22 2.74 0.71 0.30 0.22 1.05 3.06 100 200 1000 0.7 0.26 0.22 1.93 0.81 0.40 0.37 1.17 2.18 100 200 10000 0.5 0.20 0.12 3.21 0.93 0.04 0.03 9.64 24.67 100 200 10000 0.6 0.22 0.14 2.62 0.75 0.08 0.07 7.62 27.89 100 200 10000 0.7 0.28 0.17 2.18 0.64 0.08 0.06 1.66 1.62 100 2000 1000 0.5 0.06 0.05 0.24 0.14 0.34 0.38 12.03 26.31 100 2000 1000 0.6 0.04 0.04 0.19 0.12 0.60 0.53 4.61 10.84 100 2000 1000 0.7 0.07 0.04 0.23 0.13 1.10 0.88 3.66 8.46 100 2000 1000 0.8 0.06 0.03 0.21 0.12 3.16 2.26 2.74 8.74 100 2000 10000 0.5 0.05 0.04 0.27 0.12 0.04 0.05 52.26 85.06 100 2000 10000 0.6 0.08 0.05 0.15 0.13 0.14 0.16 64.11 189.53 100 2000 10000 0.7 0.09 0.04 0.19 0.13 0.44 0.42 11.31 23.94 100 2000 10000 0.8 0.05 0.02 0.15 0.11 3.04 1.81 2.08 4.04 T able 3: Results of simulation study for effectiv eness of estimator for log marginal like- lihood. 21 When the log posterior density is smooth and unimodal, a natural algorithm for finding a posterior mode is one that exploits gradient and Hessian infor mation in a w a y that is related to Newton’s Method. Nocedal and W right (2006) describe many different nonlinear optimization methods, but most can be classified as either “line search” or “trust region” methods. Line search methods ma y be more common; for instance, all of the gradient-based algorithms implemented in the optim function in R are line search methods. But these methods could be subject to numerical problems when the log posterior is nearly flat, or has a ridge, in which case the algorithm ma y tr y to ev aluate the log posterior at a point that is so far a w a y from the current v alue that it generates numerical ov erflow . T rust region methods (Conn, et. al., 2000), on the other hand, tend to be more stable, because each proposed step is constrained to be within a particular distance (the “radius” of the trust region) of the current point. In short, if one finds that a “standard” optimizer for a particular programming environment is ha ving trouble finding the posterior mode, there ma y be other common algorithms that can find the mode more easily . Neither line-search nor trust-region algorithms necessarily require explicit expressions for gradients and Hessians, but generating these structures exactly can also speed up the mode-finding step of GDS. This approach is in contrast to approximations that use finite differ encing or quasi-Newton Hessian updates. Of course, one can alw a ys deriv e the gradient of the log posterior density analytically , but this can be a tedious process. W e ha v e had success with algorithmic differentiation (AD) softw are such as the CppAD library (Bell 2012). W ith AD, w e need only to write a function that computes the log posterior density . The AD librar y includes functions that automatically return deriv a- tiv es of that function. The time to compute the gradient of a function is a small multiple of the time it takes to compute the original function, and other wise does not depend on the dimension of the problem. 22 Estimating the Hessian is useful not only for the mode-finding step, but also for choos- ing the co v ariance matrix of a multiv ariate proposal density . The time it takes for AD softw are to compute a Hessian can depend on the dimension of the problem, and w ork- ing with a dense Hessian for a large problem can be prohibitiv ely expensiv e in ter ms of computation and memory usage. How ev er , for many hierarchical models, w e assume conditional independence across heterogeneous units. For these models, the Hessian of the log posterior is sparse, with a “block-diagonal-arro w” structure (block-diagonal, but dense on the bottom and right margins). Thus, w e can achiev e substantial compu- tational impro v ements by exploiting this sparsity . The advantage comes in storing the Hessian in a compressed format, such that zeros are not stored explicitly . Not only does this permit estimating larger models on computers with less memor y , but it also lets us use efficient computational routines that exploit that sparsity . For example, Po w ell and T oint (1979) and Coleman and More (1983) explain how to efficiently estimate sparse Hessians using graph coloring techniques. Coleman et al. (1985a, 1985b) offer a useful FOR TRAN implementation to estimate sparse Hessians using graph coloring and finite differ encing. Algorithmic differentiation libraries like CppAD can also exploit sparsity when computing Hessians. Both MA TLAB and R (thr ough the Matrix package) can stor e sparse symmetric matrices in compressed for mat. One important consideration is the case of multimodal posteriors. GDS does require finding the global posterior mode, and all the models discussed in this paper ha v e uni- modal posterior distributions. When the posterior is multimodal, one could instead use a mixture of nor mals as the proposal distribution. The idea is to not only find the global mode, but any local ones as w ell, and center each mixture component at each of those lo- cal modes. The GDS algorithm itself remains unchanged, as long as the global posterior mode matches the global proposal mode. W e recognize that finding all of the local modes could be a hard problem, and there is 23 no guarantee that any optimization algorithm will find all local extrema. But, b y the same token, this problem can be resolv ed efficiently in a multitude of complex Bay esian statistical models if one uses the correct tools. And it is only a matter of time before these tools are more widely a v ailable in standard statistical programming languages like R. The nonlinear optimization literature is rife with methods that help facilitate efficient location of multiple modes, ev en if there is no guarantee of finding them all. Also, note that ev en though MCMC sampling chains are, in theory , guaranteed to explore the entire space of any posterior distribution (including multiple regions of high posterior mass), there is no guarantee that this will happen after a large finite number of iterations for general nonconjugate hierarchical models. Other estimation algorithms that purport to be robust to multimodal posteriors offer no such guarantees either . 5.2 Choosing a proposal distr ibution Like many other methods that collect random samples from posterior distributions, the efficiency of GDS depends in part on a prudent selection of the proposal density g ( θ ) . For the examples in this paper , w e used a multiv ariate normal density that is centered at the posterior mode, with a cov ariance matrix that is proportional to the inv erse of the Hessian at the mode. One might then w onder if there is an optimal w a y to deter mine just ho w “scaled out” the proposal co v ariance needs to be. At this time, w e think that trial and error is, quite frankly , the best alter nativ e. For example, if w e start with a small M (sa y , 100 dra ws), and find that Φ ( θ | y ) > 1 for any of the M proposals, w e ha v e learned that the proposal density is not v alid, at little computational or real-time cost. W e can then re-scale the proposal until Φ ( θ | y ) < 1, and then gradually increase M until w e get a good approximation to p ( u ) . In our experience, ev en if an acceptance rate appears to be lo w (sa y , 0.0001), w e can still collect dra ws in parallel, so the “clock time” remains much less than the time w e spend trying to optimize selection of the proposal. 24 For example, in the Cauchy example in S ection 3.1, w e set the proposal co v ariance to be the inv erse Hessian at the posterior mode, scaled by a factor of 200. W e needed such a large scale factor because the normal approximation at the mode shows no correlation, ev en though there is ob vious correlation in the tails. If one knew upfront the extent of the tail dependence, one might ha v e chosen a proposal density that is more highly correlated, and that might giv e a higher acceptance rate. But of course one seldom, if ev er , kno ws the shape of any target posterior density up front. S o ev en though an acceptance percentage of 1.3% ma y appear to be low , w e should consider the amount of time it w ould take to impro v e the proposal density , and especially the number of MCMC iterations it w ould take to get enough dra ws that are equiv alent to the same number of independent GDS draws. 5.3 Cases requir ing further research This paper demonstrated that GDS is a viable alter nativ e to MCMC for a large class of Ba y esian non-Gaussian and Gaussian hierarchical models. Of course it w ould be my opic to claim that GDS is appropriate for all models. By the same token, w e cannot assert that GDS w ould not w ork for any of the models described below . These models are topics requiring additional research. Models with discrete or combinator ial optimization elements In models that include both discrete and continuous parameters, finding the posterior mode becomes a mixed- integer nonlinear program (MINLP). An example is the Ba y esian v ariable selection pr ob- lem (George and McCulloch 1997). The difficulty lies in the fact that MINLPs are kno wn to be NP-complete, and thus ma y not scale w ell for large problems. Hidden Marko v models with multiple discrete states might be similarly difficult to estimate using GDS. Also, it is not immediately clear ho w one might select a proposal density when some 25 parameters are discrete. Intractable likelihoods or posteriors There are many popular models, namely binar y , ordered and multinomial probit models, for which the likelihood of the obser v ed data is not a v ailable in closed form. When direct numerical approximations to these likeli- hoods (e.g., Monte Carlo integration) is not tractable, MCMC with data augmentation is a popular estimation tool (e.g., Albert and Chib 1993). That said, recent adv ances in parallelization using graphical processing units (GPUs) might make numerical estima- tion of integrals more practical than it w as ev en 10 y ears ago; see Suchard et al. (2010). If this is the case, and the log posterior remains sufficiently smooth, then GDS could be a viable, efficient alternativ e to data augmentation in these kinds of models. Missing data problems MCMC-based approaches to multiple imputation of missing data could suffer from the same kinds of problems: the latent parameter , introduced for the data augmentation step, is only w eakly identified on its o wn. Nor mally , w e are not interested in the missing v alues themselv es. If the number of missing data points is small, perhaps one could treat the representation of the missing data points as if they w er e parameters. But the implications of this require additional research. Spatial models, and other models with dense Hessians GDS does not explicitly re- quire conditional independence, so one might consider using it for spatial or contagion models (e.g., Y ang and Allenb y 2003). How ev er , without a conditional independence assumption, the Hessian of the log posterior will not be sparse, and that ma y restrict the size of datasets for which GDS is practical. 26 6 Conclusions In this paper , w e presented a new method, Generalized Direct Sampling (GDS), to sam- ple from posterior distributions. This method has the potential to bypass MCMC-based Ba y esian inference for large, complex models with continuous, bounded posterior densi- ties. Unlike MCMC, GDS generates independent dra ws that one could collect in parallel. The implementation of GDS is straightfor w ard, and requires only a function that returns the v alue of the unnor malized log posterior density . In addition, GDS allows for fast and accurate computation of marginal likelihoods, which can then be used for model comparison. There are many other w a ys to conduct Ba y esian inference, and continued impro v ement of MCMC remains an important stream of research. Nev ertheless, it w ould be hard to ignore the opportunities for parallelization that make algorithms like GDS v er y attractiv e alternativ es. Of course, one could employ parallel computational techniques as part of a sequential algorithm. But, to repeat an earlier sentence, using parallel technology to generate a single dra w is not the same as generating all of the requir ed dra ws themselv es in parallel. By exploiting the adv antages of parallel computing, as in this paper , GDS could pro v e to be a successful addition to the Ba y esian practitioner ’s computational toolkit. References [1] Atchade, Y . F . (2006) An Adaptiv e V ersion for the Metropolis Adjusted Langevin Algorithm with a T runcated Drift. Methodology and Computing in Applied Probability 8, 235-254. [2] Bell, B. (2012) CppAD: A Package for C++ Algorithmic Differentiation. Computational Infras- tructure for Operations Research http://www .coin-or .org/CppAD. 27 [3] Braun, M. and Moe, W . (2012) Online Adv ertising Response Models: Incorporating Multiple Creativ es and Impression Histories. W orking paper . Massachusetts Institute of T echnology . [4] Coleman, T . F ., Garbo w , B. S. and Mor ´ e, J. J. (1985a) S oftw are for Estimating Sparse Hessian Matrices. ACM T ransactions on Mathematical Software 11(4) 363-377. [5] Coleman, T . F ., Garbo w , B. S. and Mor ´ e, J. J. (1985b) Algorithm 636: FORTRAN Subroutines for Estimating Sparse Hessian Matrices. ACM T ransactions on Mathematical Software 11(4) 378. [6] Conn, A., Gould, N., and T oint, P . (2000) T rust-Region Methods Philadelphia: Society for Industrial and Applied Mathematics and Mathematical Programming Society . [7] Gelman, A., Carlin, J. B., Ster n, H. S., and Rubin, D. B. (2003) Bayesian Data Analysis . Boca Raton, Fla.: Chapman and Hall. [8] George, E. I. and McCulloch, R. E. (1997) Approaches for Ba y esian V ariable S election. Sta- tistica Sinica 7, 339-373. [9] Gew eke, J. (1991) Ev aluating the Accuracy of Sampling-Based Approaches to the Calcula- tion of Posterior Moments. Bayesian Statistics Ne w Y ork: Oxford Univ ersity Press. 169-193 [10] Girolami, M., and Calder head, B. (2011) Riemann Manifold Langevin and Hamiltonian Monte Carlo. Journal of the Royal Statistical Society , Series B (with discussion), 73, 1-37. [11] Lenk, P . (2009) Simulation Pseudo-Bias Correction to the Har monic Mean Estimator of In- tegrated Likelihoods. Journal of Computational and Graphical Statistics , 18(4) 941-960. [12] Nocedal, J. and W right, S. J. (2006) Numerical Optimization New Y ork: Springer . [13] Papaspiliopoulous, O. and Roberts, G. (2008). Stability of the Gibbs Sampler for Ba y esian Hierarchical Models. Annals of Statistics , 36, 95-117. [14] Pow ell, M. J. D. and T oint, Ph. L. (1979) On the Estimation of Sparse Hessian Matrices. SIAM Journal on Numerical Analysis 16(6) 1060-1074. [15] T ibbits, M.M., Haran, M. and Liechty , J.C. (2010). Parallel Multiv ariate Slice Sampling. Statis- tics and Computing , 21(3), 415-430. [16] W alker , S.G., Laud, P .W ., Zantedeschi, D., and Damien, P . (2010). Direct Sampling. Journal of Computational and Graphical Statistics , 20(3), 692-713. [17] Suchard, M.A., W ang, Q., Chan, C., Frelinger , J., Cron, A., and W est, M. (2010). Understand- ing GPU Programming for Statistical Computation: Studies in Massiv ely Parallel Massiv e Mixtures. Journal of Computational and Graphical Statistics , 19(2), 419-438. [18] Y ang, S., and Allenby , G. (2003) Modeling Interdependent Consumer Preferences. Journal of Marketing Resear ch 40(3), 282-294. 28

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment