Adaptive Evolutionary Clustering

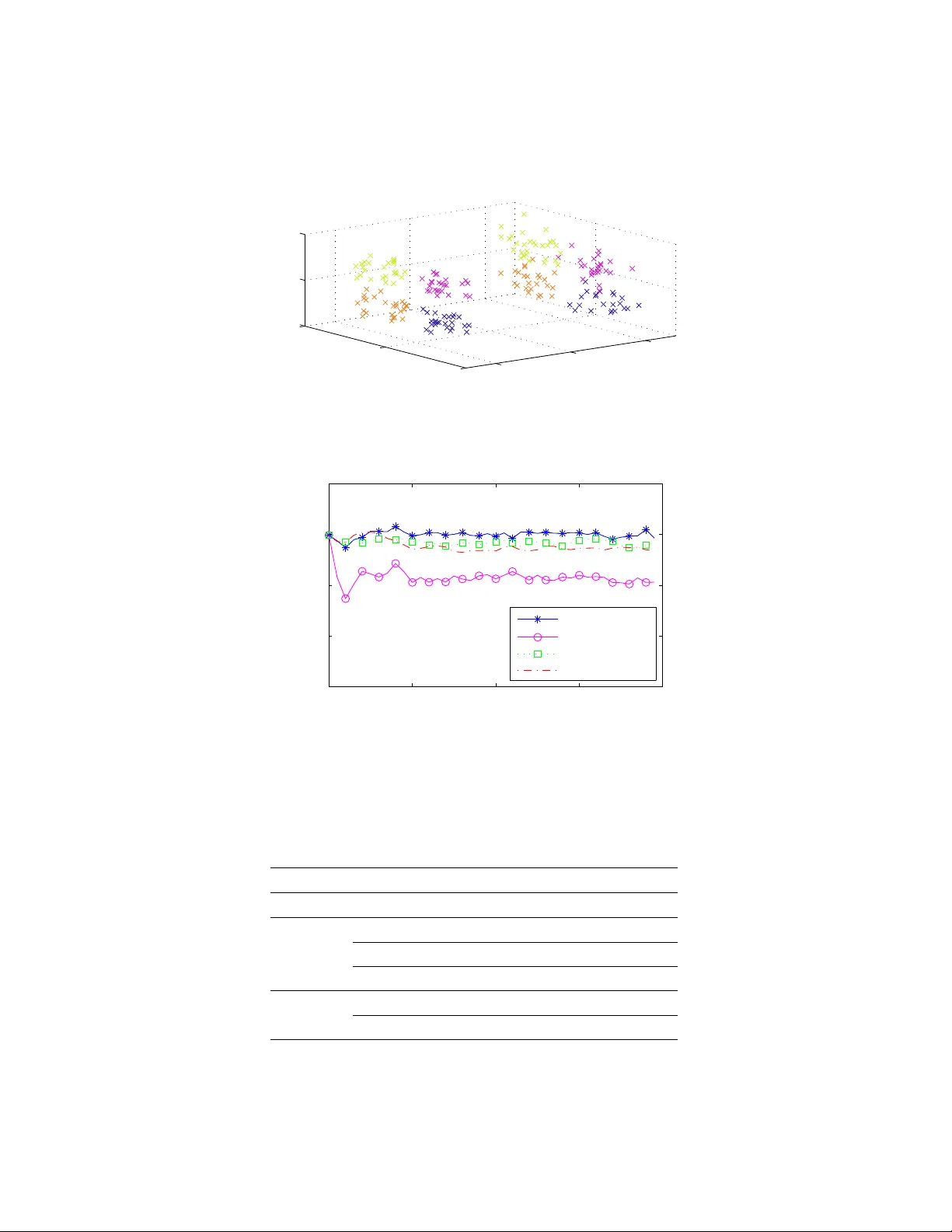

In many practical applications of clustering, the objects to be clustered evolve over time, and a clustering result is desired at each time step. In such applications, evolutionary clustering typically outperforms traditional static clustering by pro…
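The setting above can be illustrated with a minimal sketch of one common evolutionary clustering strategy: smooth the pairwise similarities over time, then run a static clustering on the smoothed matrix at each time step. This is an illustration under stated assumptions, not the authors' method. The forgetting factor `alpha` is fixed by hand rather than chosen adaptively. The static step is a simple threshold/connected-components clustering. All function names (`smooth`, `threshold_clusters`, `evolutionary_clustering`) and the toy data are hypothetical.

```python
from typing import List, Optional

Matrix = List[List[float]]


def smooth(prev: Optional[Matrix], current: Matrix, alpha: float) -> Matrix:
    """Hypothetical helper: exponential smoothing alpha*prev + (1 - alpha)*current.

    alpha is a hand-picked forgetting factor (an assumption, not adaptive).
    At the first time step there is no history, so the current matrix is copied.
    """
    if prev is None:
        return [row[:] for row in current]
    n = len(current)
    return [[alpha * prev[i][j] + (1 - alpha) * current[i][j] for j in range(n)]
            for i in range(n)]


def threshold_clusters(sim: Matrix, threshold: float) -> List[int]:
    """Static clustering: connected components of the graph whose edges are
    pairs with similarity >= threshold (a single-linkage cut at that level)."""
    n = len(sim)
    labels = [-1] * n
    next_label = 0
    for start in range(n):
        if labels[start] != -1:
            continue
        labels[start] = next_label
        stack = [start]
        while stack:  # depth-first search over above-threshold edges
            i = stack.pop()
            for j in range(n):
                if labels[j] == -1 and j != i and sim[i][j] >= threshold:
                    labels[j] = next_label
                    stack.append(j)
        next_label += 1
    return labels


def evolutionary_clustering(sims: List[Matrix], alpha: float,
                            threshold: float) -> List[List[int]]:
    """Return one label list per time step, clustering the smoothed similarities."""
    smoothed: Optional[Matrix] = None
    results = []
    for w in sims:
        smoothed = smooth(smoothed, w, alpha)
        results.append(threshold_clusters(smoothed, threshold))
    return results


# Toy data (made up): 4 objects whose true groups are {0, 1} and {2, 3}.
W1 = [[1.0, 0.9, 0.1, 0.1],
      [0.9, 1.0, 0.1, 0.1],
      [0.1, 0.1, 1.0, 0.9],
      [0.1, 0.1, 0.9, 1.0]]
# Noisy snapshot at t=2: the 0-1 similarity drops and a spurious 1-2 link appears.
W2 = [[1.0, 0.3, 0.1, 0.1],
      [0.3, 1.0, 0.9, 0.1],
      [0.1, 0.9, 1.0, 0.9],
      [0.1, 0.1, 0.9, 1.0]]

evolutionary = evolutionary_clustering([W1, W2], alpha=0.7, threshold=0.5)
static = evolutionary_clustering([W1, W2], alpha=0.0, threshold=0.5)  # alpha=0: no memory
print(evolutionary)  # [[0, 0, 1, 1], [0, 0, 1, 1]]
print(static)        # [[0, 0, 1, 1], [0, 1, 1, 1]]
```

With `alpha=0.0` the sketch reduces to static clustering, and the single noisy snapshot at t=2 isolates object 0 and merges object 1 into the other group. With `alpha=0.7` the smoothed similarities keep the earlier structure. The trade-off is that a larger `alpha` also reacts more slowly to genuine changes in cluster membership.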

Authors: Kevin S. Xu, Mark Kliger, Alfred O. Hero III