Active Clustering: Robust and Efficient Hierarchical Clustering using Adaptively Selected Similarities

Hierarchical clustering based on pairwise similarities is a common tool used in a broad range of scientific applications. However, in many problems it may be expensive to obtain or compute similarities between the items to be clustered. This paper in…

Authors: Brian Eriksson, Gautam Dasarathy, Aarti Singh

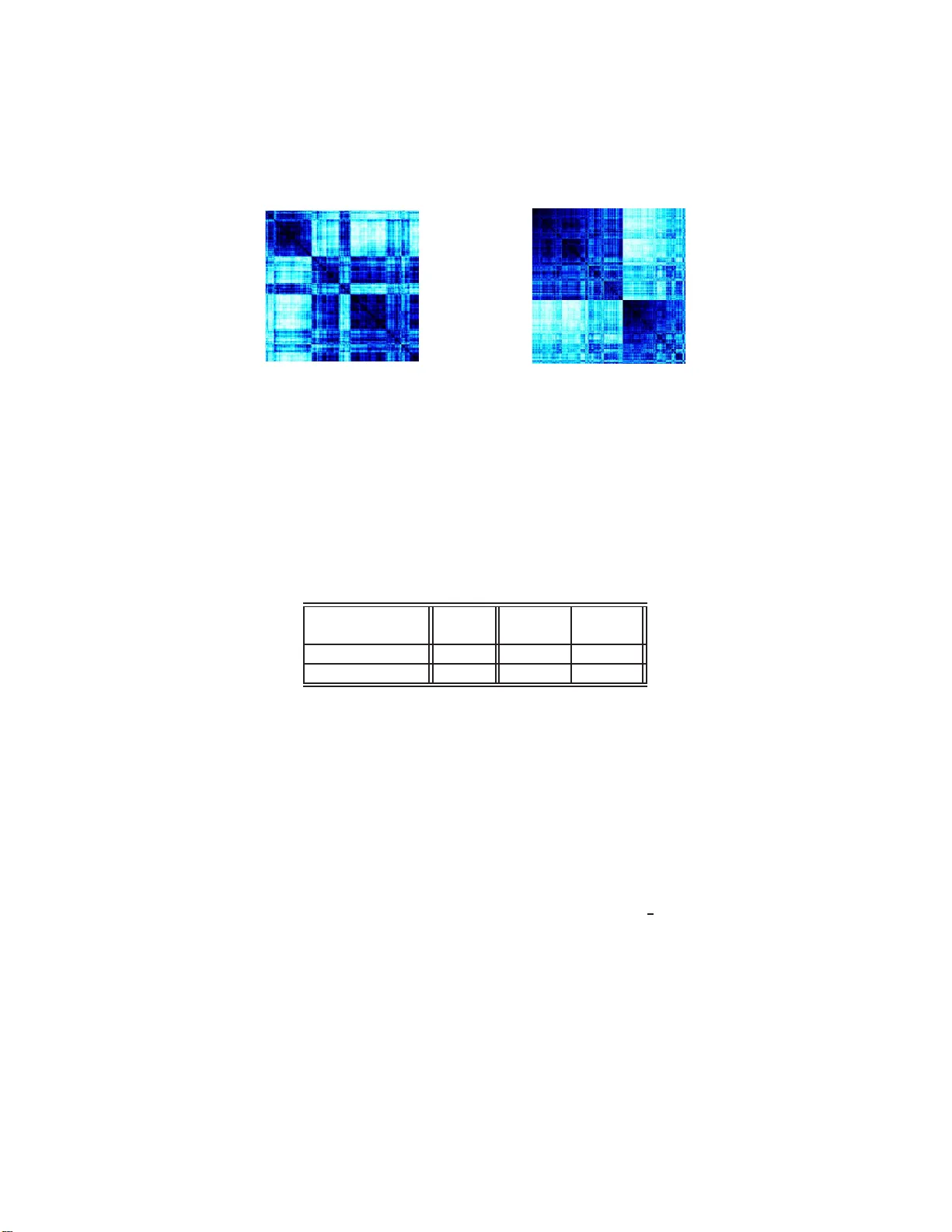

Brian Eriksson, Gautam Dasarathy, Aarti Singh, Robert Nowak∗

Abstract

Hierarchical clustering based on pairwise similarities is a common tool used in a broad range of scientific applications. However, in many problems it may be expensive to obtain or compute similarities between the items to be clustered. This paper investigates the hierarchical clustering of $N$ items based on a small subset of pairwise similarities, significantly less than the complete set of $N(N-1)/2$ similarities. First, we show that if the intracluster similarities exceed intercluster similarities, then it is possible to correctly determine the hierarchical clustering from as few as $3N \log N$ similarities. We demonstrate that this order of magnitude savings in the number of pairwise similarities necessitates sequentially selecting which similarities to obtain in an adaptive fashion, rather than picking them at random. We then propose an active clustering method that is robust to a limited fraction of anomalous similarities, and show how even in the presence of these noisy similarity values we can resolve the hierarchical clustering using only $O(N \log^2 N)$ pairwise similarities.

1 Introduction

Hierarchical clustering based on pairwise similarities arises routinely in a wide variety of engineering and scientific problems. These problems include inferring gene behavior from microarray data [1], Internet topology discovery [2], detecting community structure in social networks [3], advertising [4], and database management [5, 6]. It is often the case that there is a significant cost associated with obtaining each similarity value.
For example, in the case of Internet topology inference, the determination of similarity values requires many probe packets to be sent through the network, which can place a significant burden on the network resources. In other situations, the similarities may be the result of expensive experiments or require an expert human to perform the comparisons, again placing a significant cost on their collection.

∗ B. Eriksson is with the Department of Computer Science, Boston University. G. Dasarathy and R. Nowak are with the Department of Electrical and Computer Engineering, University of Wisconsin - Madison. A. Singh is with the Machine Learning Department, Carnegie Mellon University.

The potential cost of obtaining similarities motivates a natural question: Is it possible to reliably cluster items using fewer than the complete, exhaustive set of all pairwise similarities? We will show that the answer is yes, particularly under the condition that intracluster similarity values are greater than intercluster similarity values, which we will define as the Tight Clustering (TC) condition. We also consider extensions of the proposed approach to more challenging situations in which a significant fraction of intracluster similarity values may be smaller than intercluster similarity values. This allows for robust, provably-correct clustering even when the TC condition does not hold uniformly.

The TC condition is satisfied in many situations. For example, the TC condition holds if the similarities are generated by a branching process (or tree structure) in which the similarity between items is a monotonic increasing function of the distance from the branching root to their nearest common branch point (ancestor). This sort of process arises naturally in clustering nodes in the Internet [7].
Also notice that, for suitably chosen similarity metrics, the data can satisfy the TC condition even when the clusters have complex structures. For example, if similarities between two points are defined as the length of the longest edge on the shortest path between them on a nearest-neighbor graph, then they satisfy the TC condition given the clusters do not overlap. Additionally, density based similarity metrics [8] also allow for arbitrary cluster shapes while satisfying the TC condition.

One natural approach is to try to cluster using a small subset of randomly chosen pairwise similarities. However, we show that this is quite ineffective in general. We instead propose an active approach that sequentially selects similarities in an adaptive fashion, and thus we call the procedure active clustering. We show that under the TC condition, it is possible to reliably determine the unambiguous hierarchical clustering of $N$ items using at most $3N \log N$ of the total of $N(N-1)/2$ possible pairwise similarities. Since it is clear that we must obtain at least one similarity for each of the $N$ items, this is about as good as one could hope to do. Then, to broaden the applicability of the proposed theory and method, we propose a robust active clustering methodology for situations where a random subset of the pairwise similarities are unreliable and therefore fail to meet the TC condition. In this case, we show how using only $O(N \log^2 N)$ actively chosen pairwise similarities, we can still recover the underlying hierarchical clustering with high probability.

Both of the clustering methodologies rely solely on the relative ordering between the similarities, which means they are invariant to strictly monotonic transformations of the similarities (e.g., scaling or shifts).
Therefore, these techniques will be automatically robust without the need for an additional preprocessing step for situations where similarity calibration is an issue, such as subjective human-annotated features arising in applications like the Netflix Problem [9].

While there have been prior attempts at developing robust procedures for hierarchical clustering [10, 11, 12], these works do not try to optimize the number of similarity values needed to robustly identify the true clustering, and mostly require all $O(N^2)$ similarities. Other prior work has attempted to develop efficient active clustering methods [13, 14, 15], but the proposed techniques are ad hoc and do not provide any theoretical guarantees. Outside of the clustering literature, some interesting connections emerge between this problem and prior work on graphical model inference [2, 16], which we exploit here.

The paper is organized as follows. The hierarchical clustering problem and our tight clustering condition are introduced in Section 2. In Section 3, we describe the proposed methodology for resolving the hierarchical clustering using a limited number of pairwise values in the noiseless setting. Robust methods for clustering in the presence of similarity errors and outliers are derived in Section 4. Finally, experiments on synthetic and real data sets are presented in Section 5.

2 The Hierarchical Clustering Problem

Let $X = \{x_1, x_2, \ldots, x_N\}$ be a collection of $N$ items. Our goal will be to resolve a hierarchical clustering of these items.

Definition 1. A cluster $\mathcal{C}$ is defined as any subset of $X$. A collection of clusters $\mathcal{T}$ is called a hierarchical clustering if $\bigcup_{\mathcal{C}_i \in \mathcal{T}} \mathcal{C}_i = X$ and for any $\mathcal{C}_i, \mathcal{C}_j \in \mathcal{T}$, only one of the following is true: (i) $\mathcal{C}_i \subset \mathcal{C}_j$, (ii) $\mathcal{C}_j \subset \mathcal{C}_i$, (iii) $\mathcal{C}_i \cap \mathcal{C}_j = \emptyset$.
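Definition 1 can be checked mechanically. The following is a minimal sketch, not part of the original method; the function name `is_hierarchical_clustering` and the representation of clusters as Python sets are assumptions made for illustration.

```python
from itertools import combinations


def is_hierarchical_clustering(items, clusters):
    """Check Definition 1: the clusters cover `items`, and every pair of
    distinct clusters is either nested or disjoint."""
    item_set = frozenset(items)
    cluster_sets = {frozenset(c) for c in clusters}  # drop duplicate clusters

    # Every cluster must be a subset of X, and the union must equal X.
    if any(not c <= item_set for c in cluster_sets):
        return False
    if frozenset().union(*cluster_sets) != item_set:
        return False

    # Any two distinct clusters must be nested (proper subset) or disjoint.
    for a, b in combinations(cluster_sets, 2):
        if not (a < b or b < a or a.isdisjoint(b)):
            return False
    return True


items = ["x1", "x2", "x3", "x4"]
tree = [{"x1"}, {"x2"}, {"x3"}, {"x4"},
        {"x1", "x2"}, {"x3", "x4"}, {"x1", "x2", "x3", "x4"}]
overlapping = [{"x1", "x2"}, {"x2", "x3"}, {"x1", "x2", "x3", "x4"}]
print(is_hierarchical_clustering(items, tree))         # nested or disjoint
print(is_hierarchical_clustering(items, overlapping))  # {x1,x2} and {x2,x3} overlap
```

The first collection is a complete binary tree over four items; the second fails because two clusters partially overlap.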
The hierarchical clustering $\mathcal{T}$ has the form of a tree, where each node corresponds to a particular cluster. The tree is binary if for every $\mathcal{C}_k \in \mathcal{T}$ that is not a leaf of the tree, there exist proper subsets $\mathcal{C}_i$ and $\mathcal{C}_j$ of $\mathcal{C}_k$, such that $\mathcal{C}_i \cap \mathcal{C}_j = \emptyset$ and $\mathcal{C}_i \cup \mathcal{C}_j = \mathcal{C}_k$. The binary tree is said to be complete if it has $N$ leaf nodes, each corresponding to one of the individual items. Without loss of generality, we will assume that $\mathcal{T}$ is a complete (possibly unbalanced) binary tree, since any non-binary tree can be represented by an equivalent binary tree.

Let $S = \{s_{i,j}\}$ denote the collection of all pairwise similarities between the items in $X$, with $s_{i,j}$ denoting the similarity between $x_i$ and $x_j$ and assuming $s_{i,j} = s_{j,i}$. The traditional hierarchical clustering problem uses the complete set of pairwise similarities to infer $\mathcal{T}$. In order to guarantee that $\mathcal{T}$ can be correctly identified from $S$, the similarities must conform to the hierarchy of $\mathcal{T}$. We consider the following sufficient condition.

Definition 2. The triple $(X, \mathcal{T}, S)$ satisfies the Tight Clustering (TC) Condition if for every set of three items $\{x_i, x_j, x_k\}$ such that $x_i, x_j \in \mathcal{C}$ and $x_k \notin \mathcal{C}$, for some $\mathcal{C} \in \mathcal{T}$, the pairwise similarities satisfy $s_{i,j} > \max(s_{i,k}, s_{j,k})$.

In words, the TC condition implies that the similarity between all pairs within a cluster is greater than the similarity with respect to any item outside the cluster. We can consider using off-the-shelf hierarchical clustering methodologies, such as bottom-up agglomerative clustering [17], on the set of pairwise similarities that satisfies the TC condition. Bottom-up agglomerative clustering is a recursive process that begins with singleton clusters (i.e., the $N$ individual items to be clustered). At each step of the algorithm, the pair of most similar clusters is merged.
The process is repeated until all items are merged into a single cluster. It is easy to see that if the TC condition is satisfied, then the standard bottom-up agglomerative clustering algorithms such as single linkage, average linkage and complete linkage will all produce $\mathcal{T}$ given the complete similarity matrix $S$. Various agglomerative clustering algorithms differ in how the similarity between two clusters is defined, but every technique requires all $N(N-1)/2$ pairwise similarity values since all similarities must be compared at the very first step.

To properly cluster the items using fewer similarities requires a more sophisticated adaptive approach where similarities are carefully selected in a sequential manner. Before contemplating such approaches, we first demonstrate that adaptivity is necessary, and that simply picking similarities at random will not suffice.

Proposition 1. Let $\mathcal{T}$ be a hierarchical clustering of $N$ items and consider a cluster of size $m$ in $\mathcal{T}$ for some $m \ll N$. If $n$ pairwise similarities, with $n < \frac{N}{m}(N-1)$, are selected uniformly at random from the pairwise similarity matrix $S$, then any clustering procedure will fail to recover the cluster with high probability.

Proof. In order for any procedure to identify the $m$-sized cluster, we need to measure at least $m-1$ of the $\binom{m}{2}$ similarities between the cluster items. Let $p = \binom{m}{2} / \binom{N}{2}$ be the probability that a randomly chosen similarity value will be between items inside the cluster. If we uniformly sample $n$ similarities, then the expected number of similarities between items inside the cluster is approximately $n \binom{m}{2} / \binom{N}{2}$ (for $m \ll N$). Given Hoeffding's inequality, with high probability the number of observed pairwise similarities inside the cluster will be close to the expected value.
It follows that we require $n \binom{m}{2} / \binom{N}{2} = n \frac{m(m-1)}{N(N-1)} \geq m-1$, and therefore we require $n \geq \frac{N}{m}(N-1)$ to reconstruct the cluster with high probability.

This result shows that if we want to reliably recover clusters of size $m = N^{\alpha}$ (where $\alpha \in [0, 1]$), then the number of randomly selected similarities must exceed $N^{(1-\alpha)}(N-1)$. In simple terms, randomly chosen similarities will not adequately sample all clusters. As the cluster size decreases (i.e., as $\alpha \to 0$) this means that almost all pairwise similarities are needed if chosen at random. This is more than are needed if the similarities are selected in a sequential and adaptive manner. In Section 3, we propose a sequential method that requires at most $3N \log N$ pairwise similarities to determine the correct hierarchical clustering.

3 Active Hierarchical Clustering under the TC Condition

From Proposition 1, it is clear that unless we acquire almost all of the pairwise similarities, reconstruction of the clustering hierarchy when sampling at random will fail with high probability. In this section, we demonstrate that under the assumption that the TC condition holds, an active clustering method based on adaptively selected similarities enables one to perform hierarchical clustering efficiently. Towards this end, we consider the work in [16] where the authors are concerned with a very different problem, namely, the identification of causality relationships among binary random variables. We present a modified adaptation of prior work here in the context of our hierarchical clustering from pairwise similarities problem. From our discussion in the previous section, it is easy to see that the problem of reconstructing the hierarchical clustering $\mathcal{T}$ of a given set of items $X = \{x_1, x_2, \ldots, x_N\}$ can be reinterpreted as the problem of recovering a binary tree whose leaves are $\{x_1, x_2, \ldots, x_N\}$.
In [16], the authors define a special type of test on triples of leaves called the leadership test, which identifies the "leader" of the triple in terms of the underlying clustering tree structure. A leaf $x_k$ is said to be the leader of the triple $(x_i, x_j, x_k)$ if the path from the root of the tree to $x_k$ does not contain the nearest common ancestor of $x_i$ and $x_j$. An example of this property can be seen in Figure 1. This prior work shows that one can efficiently reconstruct the entire tree $\mathcal{T}$ using only these leadership tests.

Figure 1: Tree structure (leaves $x_i$, $x_j$, $x_k$) where $x_k$ is the leader of the triple $(x_i, x_j, x_k)$.

The following lemma demonstrates that given observed pairwise similarities satisfying the TC condition, an outlier test using pairwise similarities will correctly resolve the leader of a triple of items.

Lemma 1. Let $X$ be a collection of items equipped with pairwise similarities $S$ and hierarchical clustering $\mathcal{T}$. For any three items $\{x_i, x_j, x_k\}$ from $X$, define

$$
\text{outlier}(x_i, x_j, x_k) =
\begin{cases}
x_i & : \max(s_{i,j}, s_{i,k}) < s_{j,k} \\
x_j & : \max(s_{i,j}, s_{j,k}) < s_{i,k} \\
x_k & : \max(s_{i,k}, s_{j,k}) < s_{i,j}
\end{cases}
\qquad (1)
$$

If $(X, \mathcal{T}, S)$ satisfies the TC condition, then outlier$(x_i, x_j, x_k)$ coincides with the leader of the same triple with respect to the tree structure conveyed by $\mathcal{T}$.

Proof. Suppose that $x_k$ is the leader of the triple with respect to $\mathcal{T}$. This occurs if and only if there is a cluster $\mathcal{C} \in \mathcal{T}$ such that $x_i, x_j \in \mathcal{C}$ and $x_k \in X \setminus \mathcal{C}$. By the TC condition, this implies that $s_{i,j} > \max(s_{i,k}, s_{j,k})$. Therefore $x_k$ is the outlier of the same triple.

Note that outlier relies only on the ordering of similarity values, and therefore it is invariant to monotonic transformations of the similarities. More precisely, let $f$ be a strictly monotonic function and define $f(S) := \{f(s_{i,j})\}$ to be the set of pairwise similarities under this transformation. If $(X, \mathcal{T}, S)$ satisfies the TC condition, then so does $(X, \mathcal{T}, f(S))$. In words, the TC condition does not require precise calibration of similarity values.

The clustering algorithm we propose is called OUTLIERcluster, and is given below in Algorithm 1. The procedure is based on the outlier test and a tree reconstruction algorithm due to [16]. In Theorem 3.1, we show that the algorithm determines the correct hierarchical clustering using $O(N \log N)$ pairwise similarities.

Theorem 3.1. Assume that the triple $(X, \mathcal{T}, S)$ satisfies the Tight Clustering (TC) condition where $\mathcal{T}$ is a complete (possibly unbalanced) binary tree that is unknown. Then, OUTLIERcluster recovers $\mathcal{T}$ exactly using at most $3N \log_{3/2} N$ adaptively selected pairwise similarity values.

Proof. From Appendix II of [16], we find a methodology that requires at most $N \log_{3/2} N$ leadership tests to exactly reconstruct the unique hierarchical clustering of $N$ items. Lemma 1 shows that under the TC condition, each leadership test can be performed using only 3 adaptively selected pairwise similarities. Therefore, we can reconstruct the hierarchical clustering $\mathcal{T}$ from a set of items $X$ using at most $3N \log_{3/2} N$ adaptively selected pairwise similarity values.

3.1 Tight Clustering Experiments

In Table 1 we see the results of both clustering techniques (OUTLIERcluster and bottom-up agglomerative clustering) on various synthetic tree topologies given the Tight Clustering (TC) condition. The performance is in terms of the number of pairwise similarities required by the agglomerative clustering methodology, denoted by $n_{agg}$, and the number of similarities required by our OUTLIERcluster method, $n_{outlier}$.
The methodologies are performed on both a balanced binary tree of varying size ($N = 128, 256, 512$) and a synthetic Internet tree topology generated using the technique from [18]. As seen in the table, our technique resolves the underlying tree structure using at most 11% of the pairwise similarities required by the bottom-up agglomerative clustering approach. As the number of items in the topology increases, further improvements are seen using OUTLIERcluster. Due to the pairwise similarities satisfying the TC condition, both methodologies resolve a binary representation of the underlying tree structure exactly.

Algorithm 1 - OUTLIERcluster($X$, $S$)

Given:

1. set of items, $X = \{x_1, x_2, \ldots, x_N\}$.
2. matrix of pairwise similarities, $S$.

Clustering Process:

Initialize clustering tree $\mathcal{T} = \{x_1, x_2, \{x_1, x_2\}\}$.

For $i = \{3, 4, \ldots, N\}$

1. Set tree $\mathcal{T}_i = \mathcal{T}$.
2. While the number of items in $\mathcal{T}_i$, denoted $\#\mathcal{T}_i$, is greater than 2
   (a) Select a subtree, $\mathcal{T}_C \subset \mathcal{T}_i$, such that the number of items in the subtree, $\#\mathcal{T}_C$, satisfies $\frac{\#\mathcal{T}_i}{3} < \#\mathcal{T}_C \leq \frac{2\#\mathcal{T}_i}{3}$.
   (b) Find items $x_j, x_k \in \mathcal{T}_C$ such that no subtrees in $\mathcal{T}_C$ (besides $\mathcal{T}_C$) contain both $x_j, x_k$.
   (c) If: $x_i$ = outlier$(x_i, x_j, x_k)$, replace $\mathcal{T}_C$ in $\mathcal{T}_i$ with item $x_j$. Else: set $\mathcal{T}_i = \mathcal{T}_C$.
3. Let $x_j, x_k$ be the two remaining items in $\mathcal{T}_i$.
4. Let $\mathcal{T}'$ be the smallest subtree in $\mathcal{T}$ containing both $x_j, x_k$. This subtree contains $\mathcal{T}'_j, \mathcal{T}'_k$, such that $x_j \in \mathcal{T}'_j$, $x_k \in \mathcal{T}'_k$, $\mathcal{T}' = \mathcal{T}'_j \cup \mathcal{T}'_k$, and $\mathcal{T}'_j \cap \mathcal{T}'_k = \emptyset$.
5. If: outlier$(x_i, x_j, x_k) = x_i$, replace $\mathcal{T}'$ in $\mathcal{T}$ with $\{\mathcal{T}', \{x_i\}\}$. Elseif: outlier$(x_i, x_j, x_k) = x_j$, replace $\mathcal{T}'_k$ in $\mathcal{T}$ with $\{\mathcal{T}'_k, \{x_i\}\}$. Else: outlier$(x_i, x_j, x_k) = x_k$, replace $\mathcal{T}'_j$ in $\mathcal{T}$ with $\{\mathcal{T}'_j, \{x_i\}\}$.

Output: Hierarchical cluster tree, $\mathcal{T}$.
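The outlier test of Equation (1), which Algorithm 1 calls repeatedly, can be sketched in a few lines. This is an illustrative sketch only: the helper `tree_similarity` (similarity taken to be the depth of the nearest common ancestor in a balanced binary tree whose leaves are numbered left to right) is an assumed toy similarity that satisfies the TC condition, and returning `None` on ties is a choice made here, not something specified in the text.

```python
def tree_similarity(i, j, depth):
    """Toy similarity for leaves i and j of a balanced binary tree with
    2**depth leaves: the depth of their nearest common ancestor (root = 0)."""
    return depth - (i ^ j).bit_length()


def outlier(sim, i, j, k):
    """Outlier test of Equation (1). `sim(a, b)` returns a pairwise similarity.
    Returns the outlying item, or None when no similarity is strictly largest
    (which cannot happen for distinct items under the TC condition)."""
    s_ij, s_ik, s_jk = sim(i, j), sim(i, k), sim(j, k)  # the 3 values used
    if max(s_ij, s_ik) < s_jk:
        return i
    if max(s_ij, s_jk) < s_ik:
        return j
    if max(s_ik, s_jk) < s_ij:
        return k
    return None


def sim(a, b):
    return tree_similarity(a, b, depth=3)  # 8 leaves, numbered 0..7


def rescaled(a, b):
    return 10 * sim(a, b) ** 3 - 7  # a strictly increasing transformation


print(outlier(sim, 0, 1, 4))       # leaves 0 and 1 are siblings, so 4 is the leader
print(outlier(rescaled, 0, 1, 4))  # same answer: only the ordering matters
```

Each call reads exactly three similarities, which is where the factor of 3 in Theorem 3.1 comes from.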
Table 1: Comparison of OUTLIERcluster and Agglomerative Clustering on various topologies satisfying the Tight Clustering condition.

| Topology | Size | $n_{agg}$ | $n_{outlier}$ | $n_{outlier} / n_{agg}$ |
|---|---|---|---|---|
| Balanced Binary | N = 128 | 8,128 | 876 | 10.78% |
| Balanced Binary | N = 256 | 32,640 | 2,206 | 6.21% |
| Balanced Binary | N = 512 | 130,816 | 4,561 | 3.49% |
| Synthetic Internet | N = 768 | 294,528 | 8,490 | 2.88% |

3.2 Fragility of OUTLIERcluster

OUTLIERcluster determines the correct clustering hierarchy when all the pairwise similarities are consistent with the hierarchy $\mathcal{T}$, but it can fail if one or more of the pairwise similarities are inconsistent. In Figure 2, we examine the performance of OUTLIERcluster on a balanced binary tree (with $N = 256$) when a small number of calls to outlier in OUTLIERcluster return an incorrect item with respect to the underlying hierarchy $\mathcal{T}$. The incorrect cases are selected at random. Performance is measured in terms of $r_{\min}$, the size of the smallest correctly resolved cluster (i.e., all clusters of size $r_{\min}$ or larger are reconstructed correctly for the clustering), averaged over 150 separate experiments. As seen in the figure, with only two outlier tests erroneous at random, the clustering reconstruction using OUTLIERcluster is corrupted significantly. This can be attributed to the greedy construction of the clustering hierarchy using this methodology: if one of the initial items is incorrectly placed in the hierarchy, this results in a cascading effect that drastically reduces the accuracy of the clustering.

Figure 2: Fragility of OUTLIERcluster when a selected number of outlier tests are incorrect for a balanced binary tree of size $N = 256$ (horizontal axis: number of incorrect outlier tests; vertical axis: $r_{\min}$).

4 Robust Active Clustering

Suppose that most, but not all, of the outlier tests agree with $\mathcal{T}$.
This may occur if a subset of the similarities are in some sense inconsistent, erroneous or anomalous. We will assume that a certain subset of the similarities produce correct outlier tests and the rest may not. These similarities that produce correct tests are said to be consistent with the hierarchy $\mathcal{T}$. Our goal is to recover the clusters of $\mathcal{T}$ despite the fact that the similarities are not always consistent with it.

Definition 3. The subset of consistent similarities is denoted $S_C \subset S$. These similarities satisfy the following property: if $s_{i,j}, s_{j,k}, s_{i,k} \in S_C$ then outlier$(x_i, x_j, x_k)$ returns the leader of the triple $(x_i, x_j, x_k)$ in $\mathcal{T}$ (i.e., the outlier test is consistent with respect to $\mathcal{T}$).

We adopt the following probabilistic model for $S_C$. Each similarity in $S$ fails to be consistent independently with probability at most $q < 1/2$ (i.e., membership in $S_C$ is determined by repeatedly tossing a biased coin). The expected cardinality of $S_C$ is $E[|S_C|] \geq (1-q)|S|$. Under this model, there is a large probability that one or more of the outlier tests will yield an incorrect leader with respect to $\mathcal{T}$. Thus, our tree reconstruction algorithm in Section 3 will fail to recover the tree with large probability. We therefore pursue a different approach based on a top-down recursive clustering procedure that uses voting to overcome the effects of incorrect tests.

The key element of the top-down procedure is a robust algorithm for correctly splitting a given cluster in $\mathcal{T}$ into its two subclusters, presented in Algorithm 2. Roughly speaking, the procedure quantifies how frequently two items tend to agree on outlier tests drawn from a small random subsample of other items. If they tend to agree frequently, then they are clustered together; otherwise they are not.
We show that this algorithm can determine the correct split of the input cluster $\mathcal{C}$ with high probability. The degree to which the split is "balanced" affects performance, and we need the following definition.

Definition 4. Let $\mathcal{C}$ be any non-leaf cluster in $\mathcal{T}$ and denote its subclusters by $\mathcal{C}_L$ and $\mathcal{C}_R$; i.e., $\mathcal{C}_L \cap \mathcal{C}_R = \emptyset$ and $\mathcal{C}_L \cup \mathcal{C}_R = \mathcal{C}$. The balance factor of $\mathcal{C}$ is $\eta_{\mathcal{C}} := \min\{|\mathcal{C}_L|, |\mathcal{C}_R|\} / |\mathcal{C}|$.

Theorem 4.1. Let $0 < \delta' < 1$ and threshold $\gamma \in (0, 1/2)$. Consider a cluster $\mathcal{C} \in \mathcal{T}$ with balance factor $\eta_{\mathcal{C}} \geq \eta$ and disjoint subclusters $\mathcal{C}_R$ and $\mathcal{C}_L$, and assume the following conditions hold:

• A1 - The pairwise similarities are consistent with probability at least $1 - q$, for some $q \leq 1 - \frac{1}{\sqrt{2(1-\delta')}}$.
• A2 - $q$, $\eta$ satisfy $(1 - (1-q)^2) < \gamma < (1-q)^2 \eta$.

If $m \geq c_0 \log(4n/\delta')$ and $n > 2m$ (where the constant $c_0$ depends on $q$, $\gamma$, $\eta$ and $\delta'$), then with probability at least $1 - \delta'$ the output of split$(\mathcal{C}, m, \gamma)$ is the correct subclusters, $\mathcal{C}_R$ and $\mathcal{C}_L$.

The proof of the theorem is given in the Appendix. The theorem above shows that the algorithm is guaranteed (with high probability) to correctly split clusters that are sufficiently large for a certain range of $q$ and $\eta$, as specified by A2. A bound on the constant $c_0$ is given in Equation 3 in the proof, but the important fact is that it does not depend on $n$, the number of items in $\mathcal{C}$. Thus all but the very smallest clusters can be reliably split. Note that the total number of similarities required by split is at most $3mn$. So if we take $m = c_0 \log(4n/\delta')$, the total is at most $3 c_0 n \log(4n/\delta')$. The key point of the theorem is this: instead of using all $O(n^2)$ similarities, split only requires $O(n \log n)$. The allowable range in A2 is non-degenerate and covers an interesting regime of problems in which $q$ is not too large and $\eta$ is not too small; this is shown in Figure 3.
The allowable range of $\gamma$ cannot be determined without knowledge of $\eta$ and $q$, so in practice $\gamma \in (0, 1/2)$ is a user-selected parameter (we use $\gamma = 0.30$ in all our experiments in the following section), and the Theorem holds for the corresponding set of $(q, \eta)$ in A2.

Figure 3: The shaded region (labeled "Success Guaranteed") depicts the range of $q$ and $\eta$ for which Theorem 4.1 can guarantee correct recovery of subclusters using the split algorithm.

Algorithm 2: split($\mathcal{C}$, $m$, $\gamma$)

Input:

1. A single cluster $\mathcal{C}$ consisting of $n$ items.
2. Parameters $m < n/2$ and $\gamma \in (0, 1/2)$.

Initialize:

1. Select two subsets $\mathcal{S}_V, \mathcal{S}_A \subset \mathcal{C}$ uniformly at random (with replacement) containing $m$ items each.
2. Select a "seed" item $x_j \in \mathcal{C}$ uniformly at random and let $\mathcal{C}_j \in \{\mathcal{C}_R, \mathcal{C}_L\}$ denote the subcluster it belongs to.

Split:

• For each $x_i \in \mathcal{C}$ and $x_k \in \mathcal{S}_A \setminus x_i$, compute the outlier fraction of $\mathcal{S}_V$:

$$
c_{i,k} := \frac{1}{|\mathcal{S}_V \setminus \{x_i, x_k\}|} \sum_{x_\ell \in \mathcal{S}_V \setminus \{x_i, x_k\}} \mathbf{1}_{\{\text{outlier}(x_i, x_k, x_\ell) = x_\ell\}}
$$

where $\mathbf{1}$ denotes the indicator function.

• Compute the outlier agreement on $\mathcal{S}_A$:

$$
a_{i,j} := \frac{1}{|\mathcal{S}_A \setminus \{x_i, x_j\}|} \sum_{x_k \in \mathcal{S}_A \setminus \{x_i, x_j\}} \left( \mathbf{1}_{\{c_{i,k} > \gamma \text{ and } c_{j,k} > \gamma\}} + \mathbf{1}_{\{c_{i,k} < \gamma \text{ and } c_{j,k} < \gamma\}} \right)
$$

• Assign item $x_i$ to a subcluster according to

$$
x_i \in
\begin{cases}
\mathcal{C}_j & : \text{if } a_{i,j} \geq 1/2 \\
\mathcal{C} \setminus \mathcal{C}_j & : \text{if } a_{i,j} < 1/2
\end{cases}
$$

Output: subclusters $\mathcal{C}_j$, $\mathcal{C} \setminus \mathcal{C}_j$.

We now give our robust active hierarchical clustering algorithm, RAcluster. Given an initial single cluster of $N$ items, the split methodology of Algorithm 2 is recursively performed until all subclusters are of size less than or equal to $2m$, the minimum resolvable cluster size where we can overcome inconsistent similarities through voting. The output is a hierarchical clustering $\mathcal{T}'$. The algorithm is summarized in Algorithm 3.

Algorithm 3: RAcluster($\mathcal{C}$, $m$, $\gamma$)

Given:

1. $\mathcal{C}$, $n$ items to be hierarchically clustered.
2. Parameters $m < n/2$ and $\gamma \in (0, 1/2)$.

Partitioning:

1. Find $\{\mathcal{C}_L, \mathcal{C}_R\}$ = split($\mathcal{C}$, $m$, $\gamma$).
2. Evaluate hierarchical subtrees, $\mathcal{T}_L, \mathcal{T}_R$, of cluster $\mathcal{C}$ using:

$$
\mathcal{T}_L =
\begin{cases}
\text{RAcluster}(\mathcal{C}_L, m, \gamma) & : \text{if } |\mathcal{C}_L| > 2m \\
\mathcal{C}_L & : \text{otherwise}
\end{cases}
\qquad
\mathcal{T}_R =
\begin{cases}
\text{RAcluster}(\mathcal{C}_R, m, \gamma) & : \text{if } |\mathcal{C}_R| > 2m \\
\mathcal{C}_R & : \text{otherwise}
\end{cases}
$$

Output: Hierarchical clustering $\mathcal{T}' = \{\mathcal{T}_L, \mathcal{T}_R\}$ containing subclusters of size $> 2m$.

Theorem 4.1 shows that it suffices to use $O(n \log n)$ similarities for each call of split, where $n$ is the size of the cluster in each call. Now if the splits are balanced, the depth of the complete cluster tree will be $O(\log N)$, with $O(2^\ell)$ calls to split at level $\ell$ involving clusters of size $n = O(N/2^\ell)$. An easy calculation then shows that the total number of similarities required by RAcluster is then $O(N \log^2 N)$, compared to the total number which is $O(N^2)$. The performance guarantees for the robust active clustering algorithm are summarized in the following main theorem.

Theorem 4.2. Let $X$ be a collection of $N$ items with underlying hierarchical clustering structure $\mathcal{T}$ and let $0 < \delta < 1$. If $m = k_0 \log\left(\frac{8}{\delta} N\right)$, for a constant $k_0 > 0$, then RAcluster($X$, $m$, $\gamma$) uses $O(N \log^2 N)$ similarities and with probability at least $1 - \delta$ recovers all clusters $\mathcal{C} \in \mathcal{T}$ that have size $> 2m$, with balance factor $\eta_{\mathcal{C}} \geq \eta$, and satisfy A1 holding with $\delta' = \frac{\delta}{2 N^{1/\log\left(\frac{1}{1-\eta}\right)}}$ and A2 of Theorem 4.1.

The proof of the theorem is given in the Appendix. The constant $k_0$ is specified in Equation 3. Roughly speaking, the theorem implies that under the conditions of Theorem 4.1 we can robustly recover all clusters of size $O(\log N)$ or larger using only $O(N \log^2 N)$ similarities.
Comparing this result to Theorem 3.1, we note three costs associated with being robust to inconsistent similarities: 1) we require $O(N \log^2 N)$ rather than $O(N \log N)$ similarity values; 2) the degree to which the clusters are balanced now plays a role (in the constant $\eta$); 3) we cannot guarantee the recovery of clusters smaller than $O(\log N)$ due to voting.

5 Robust Clustering Experiments

To test our robust clustering methodology we focus on experimental results from a balanced binary tree using synthesized similarities and a real-world data set using genetic microarray data [19]. The synthetic binary tree experiments allow us to observe the characteristics of our algorithm while controlling the amount of inconsistency with respect to the Tight Clustering (TC) condition, while the real world data gives us perspective on problems where the tree structure and TC condition are assumed, but not known.

In order to quantify the performance of the tree reconstruction algorithms, consider the non-unique partial ordering, $\pi : \{1, 2, \ldots, N\} \to \{1, 2, \ldots, N\}$, resulting from the ordering of items in the reconstructed tree. For a set of observed similarities, given the original ordering of the items from the true tree structure we would expect to find the largest similarity values clustered around the diagonal of the similarity matrix. Meanwhile, a random ordering of the items would have the large similarity values potentially scattered away from the diagonal. To assess performance of our reconstructed tree structures, we will consider the rate of decay for similarity values off the diagonal of the reordered items, $\hat{s}_d = \frac{1}{N-d} \sum_{i=1}^{N-d} s_{\pi(i), \pi(i+d)}$.
Using $\hat{s}_d$, we define a distribution over the average off-diagonal similarity values, and compute the entropy of this distribution as follows:

$$
\hat{E}(\pi) = -\sum_{i=1}^{N-1} \hat{p}^{\pi}_i \log \hat{p}^{\pi}_i \qquad (2)
$$

where $\hat{p}^{\pi}_i = \left( \sum_{d=1}^{N-1} \hat{s}_d \right)^{-1} \hat{s}_i$. This entropy value provides a measure of the quality of a partial ordering induced by the tree reconstruction algorithm. For a balanced binary tree with $N = 512$, we find that for the original ordering, $\hat{E}(\pi_{original}) = 2.2323$, and for the random ordering, $\hat{E}(\pi_{random}) = 2.702$. This motivates examining the estimated ∆-entropy of our clustering reconstruction-based orderings as $\hat{E}_{\Delta}(\pi) = \hat{E}(\pi_{random}) - \hat{E}(\pi)$, where we normalize the reconstructed clustering entropy value with respect to a random permutation of the items. The quality of our clustering methodologies will be examined, where the larger the estimated ∆-entropy, the higher the quality of our estimated clustering.

For the synthetic binary tree experiments, we created a balanced binary tree with 512 items. We generated the similarity between each pair of items such that $100 \cdot (1-q)\%$ of the pairwise similarities chosen at random are consistent with the TC condition ($\in S_C$). The remaining $100 \cdot q\%$ of the pairwise similarities were inconsistent with the TC condition. We examined the performance of both standard bottom-up agglomerative clustering and our Robust Clustering algorithm, RAcluster, for pairwise similarities with $q = 0.05, 0.15, 0.25$. The results presented here are averaged over 10 random realizations of noisy synthetic data, setting the threshold $\gamma = 0.30$. We used the similarity voting budgets $m = 40$ and $m = 80$, which require 38% and 65% of the complete set of similarities, respectively.
Performance gains are shown using our robust clustering approach in Table 2 in terms of both the estimated Δ-entropy and $r_{min}$, the size of the smallest correctly resolved cluster (where all clusters of size $r_{min}$ or larger are reconstructed correctly for the clustering). Comparisons between Δ-entropy and $r_{min}$ show a clear correlation between high Δ-entropy and high clustering reconstruction resolution. While we make no theoretical guarantees for reconstruction when the clusters are smaller than $m$ or when the properties of the clusters ($q$, $\eta$) are outside the feasible region of Figure 3, these results show that these clusters can possibly be resolved in practice.

Table 2: Clustering Δ-entropy results for synthetic binary tree with N = 512 for Agglomerative Clustering and RAcluster.

| q | Agglo. Clustering Δ-Entropy | Agglo. Clustering r_min | Robust (m=40) Δ-Entropy | Robust (m=40) r_min | Robust (m=80) Δ-Entropy | Robust (m=80) r_min |
|---|---|---|---|---|---|---|
| 0.05 | 0.3666 | 460.8 | 1.0178 | 6.8 | 1.0178 | 7.2 |
| 0.15 | 0.0899 | 512 | 1.0161 | 16 | 1.0161 | 15.2 |
| 0.25 | 0.0133 | 512 | 0.9360 | 384 | 1.0119 | 57.6 |

In terms of a real-world data set, we test our methodologies against a set of gene microarray data. Our genetic data set [19] consists of 1,024 yeast genes with 7 expressions each, from which we exhaustively generate the standard Pearson correlation using the expression vectors for every pair of genes. Our robust clustering methodology is performed on the datasets using the threshold $\gamma = 0.30$ and similarity voting budgets $m = 10$ and $m = 80$, requiring at most 18% and 61% of the total similarities (when $N = 512$), respectively. The results in Table 3 (averaged over 10 random permutations of the datasets) show that again our robust clustering methodology outperforms agglomerative clustering in terms of estimated Δ-entropy of the reordered elements, while also not requiring observation of every pairwise similarity value.
In Figure 4 we see the reordered similarity matrices given both agglomerative clustering and our robust clustering methodology, RAcluster.

Figure 4: Reordered pairwise similarity matrices, Gene microarray data with N = 1024 using (A) Agglomerative Clustering and (B) Robust Clustering using m = 80 (requiring only 43% of the similarities). An ideal clustering would organize items so that the similarity matrix has dark blue (high similarity) clusters/blocks on the diagonal and light blue (low similarity) values off the diagonal blocks. The robust clustering is clearly closer to this ideal (i.e., B compared to A).

Table 3: Δ-entropy results for real-world gene microarray dataset using both Agglomerative Clustering and Robust Clustering algorithms.

| Dataset | Agglo. | Robust (m=10) | Robust (m=80) |
|---|---|---|---|
| Gene (N=512) | 0.1417 | 0.1912 | 0.2035 |
| Gene (N=1024) | 0.0761 | 0.1325 | 0.1703 |

6 Conclusions

Despite the wide-ranging applications of hierarchical clustering (biology, networking, scientific simulation), relatively little work has been done on examining the number of pairwise similarity values needed to resolve the hierarchical dependencies in the presence of noise. The goal of our work was to drastically reduce the total number of pairwise similarities needed to resolve the hierarchical dependency structure while remaining robust to potential outliers in the data. When there are no outliers, we presented a methodology that requires no more than $3N \log_{3/2} N$ similarity values to recover the clustering hierarchy. We then showed that in the presence of inconsistent similarity values we require only $O(N \log^2 N)$ similarities to robustly recover the clustering. These results open hierarchical clustering to a new realm of large-scale problems that were previously impractical to evaluate.
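To give a feel for the savings stated in the conclusions, the short sketch below compares the complete set of $N(N-1)/2$ pairwise similarities with the outlier-free budget $3N \log_{3/2} N$. The function names are hypothetical, and the snippet only evaluates the stated bounds; it does not run the clustering algorithm. The robust $O(N \log^2 N)$ rate is left out because its constant depends on $\delta$, $\eta$, $q$, and $\gamma$.

```python
import math


def all_pairs(n):
    """Size of the complete set of pairwise similarities, N(N-1)/2."""
    return n * (n - 1) // 2


def active_budget(n):
    """Outlier-free bound from the text: 3 N log_{3/2} N similarities."""
    return 3 * n * math.log(n, 1.5)


for n in (512, 1024, 2**20):
    frac = active_budget(n) / all_pairs(n)
    print(f"N={n}: bound is {100 * frac:.3g}% of all pairs")
```

Because the bound grows as $N \log N$ while the complete set grows as $N^2$, the fraction shrinks as $N$ increases.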
7 Appendix

7.1 Proof of Theorem 4.1

Since the outlier tests can be erroneous, we instead use a two-round voting procedure to correctly determine whether two items $x_i$ and $x_j$ are in the same subcluster or not. Please refer to Algorithm 1 for definitions of the relevant quantities. The following lemma establishes that the outlier fraction values $c_{i,k}$ can reveal whether two items $x_i$, $x_k$ are in the same subcluster or not, provided that the number of voting items $m = |\mathcal{S}_V|$ is large enough and the similarity $s_{i,k}$ is consistent (later we will show that a second round of voting can remove this requirement).

Lemma 2. Consider two items $x_i$ and $x_k$. Under assumptions A1 and A2 and assuming $s_{i,k} \in \mathcal{S}_C$, comparing the outlier count values $c_{i,k}$ to a threshold $\gamma$ will correctly indicate whether $x_i$, $x_k$ are in the same subcluster with probability at least $1 - \frac{\delta_C}{2}$ for

$$m \ge \frac{\log(4/\delta_C)}{2 \min\left\{ \left(\gamma - 1 + (1-q)^2\right)^2, \left((1-q)^2 \eta - \gamma\right)^2 \right\}}.$$

Proof. Let $\Omega_{i,k} := 1\{s_{i,k} \in \mathcal{S}_C\}$ be the event that the similarity between items $x_i$, $x_k$ is in the consistent subset (see Definition 3). Under A1, the expected outlier fraction ($c_{i,k}$) conditioned on $x_i$, $x_k$ and $\Omega_{i,k}$ can be bounded in two cases, when they belong to the same subcluster and when they do not:

$$E\left[c_{i,k} \mid x_i, x_k \in \mathcal{C}_L \text{ or } x_i, x_k \in \mathcal{C}_R, \Omega_{i,k}\right] \ge (1-q)^2 \eta$$

$$E\left[c_{i,k} \mid x_i \in \mathcal{C}_R, x_k \in \mathcal{C}_L \text{ or } x_i \in \mathcal{C}_L, x_k \in \mathcal{C}_R, \Omega_{i,k}\right] \le 1 - (1-q)^2$$

A2 stipulates a gap between the two bounds. Hoeffding's Inequality ensures that, with high probability, $c_{i,k}$ will not significantly deviate below/above the lower/upper bound. Thresholding $c_{i,k}$ at a level $\gamma$ between the bounds will, with high probability, correctly determine whether $x_i$ and $x_k$ are in the same subcluster or not.
More precisely, if

$$m \ge \frac{\log(4/\delta_C)}{2 \min\left\{ \left(\gamma - 1 + (1-q)^2\right)^2, \left((1-q)^2 \eta - \gamma\right)^2 \right\}},$$

then with probability at least $(1 - \delta_C/2)$ the threshold test correctly determines if the items are in the same subcluster.

Next, note that we cannot use the cluster count $c_{i,j}$ directly to decide the placement of $x_i$ since the condition $s_{i,j} \in \mathcal{S}_C$ may not hold. In order to be robust to errors in $s_{i,j}$, we employ a second round of voting based on an independent set of $m$ randomly selected agreement items, $\mathcal{S}_A$. The agreement fraction, $a_{i,j}$, is the average of the number of times the item $x_i$ agrees with the clustering decision of $x_j$ on $\mathcal{S}_A$.

Lemma 3. Consider the following procedure:

$$x_i \in \begin{cases} \mathcal{C}_j & \text{if } a_{i,j} \ge \frac{1}{2} \\ \mathcal{C}_j^c & \text{if } a_{i,j} < \frac{1}{2} \end{cases}$$

Under assumptions A1 and A2, with probability at least $1 - \frac{\delta_C}{2}$, the above procedure based on $m = |\mathcal{S}_A|$ agreement items will correctly determine if the items $x_i$, $x_j$ are in the same subcluster, provided

$$m \ge \frac{\log(4/\delta_C)}{2 \left( (1-\delta_C)(1-q)^2 - \frac{1}{2} \right)^2}.$$

Proof. Define $\Phi_{i,j}$ as the event that similarities $s_{i,k}$, $s_{j,k}$ are both consistent (i.e., $s_{i,k}, s_{j,k} \in \mathcal{S}_C$) and thresholding the cluster counts $c_{i,k}$, $c_{j,k}$ at level $\gamma$ correctly indicates if the underlying items belong to the same subcluster or not. Then using Lemma 2 and the union bound we can bound the probabilities,

$$P(\Phi_{i,j}) \ge (1-\delta_C)(1-q)^2$$

$$P\left(\Phi_{i,j}^C\right) \le 1 - (1-\delta_C)(1-q)^2$$

Then the conditional expectations of the agreement counts, $a_{i,j}$, can be bounded as,

$$E\left[a_{i,j} \mid x_i \notin \mathcal{C}_j\right] \le P\left(\Phi_{i,j}^C\right) \le 1 - (1-q)^2 (1-\delta_C)$$

$$E\left[a_{i,j} \mid x_i \in \mathcal{C}_j\right] \ge P(\Phi_{i,j}) \ge (1-q)^2 (1-\delta_C)$$

Since $q \le 1 - 1/\sqrt{2(1-\delta')}$ and $\delta_C = \delta'/n$ (as defined below), there is a gap between these two bounds that includes the value $1/2$. Hoeffding's Inequality ensures that with high probability $a_{i,j}$ will not significantly deviate above/below the upper/lower bound.
Thus, thresholding $a_{i,j}$ at $1/2$ will resolve whether the two items $x_i$, $x_j$ are in the same or different subclusters with probability at least $(1 - \delta_C/2)$ provided $m \ge \log(4/\delta_C) \big/ \left( 2 \left( (1-\delta_C)(1-q)^2 - \frac{1}{2} \right)^2 \right)$.

By combining Lemmas 2 and 3, we can state the following. The split methodology of Algorithm 1 will successfully determine if two items $x_i$, $x_j$ are in the same subcluster with probability at least $1 - \delta_C$ under assumptions A1 and A2, provided

$$m \ge \max\left\{ \frac{\log(4/\delta_C)}{2 \left( (1-\delta_C)(1-q)^2 - \frac{1}{2} \right)^2}, \frac{\log(4/\delta_C)}{2 \min\left\{ \left(\gamma - 1 + (1-q)^2\right)^2, \left((1-q)^2 \eta - \gamma\right)^2 \right\}} \right\}$$

and the cluster under consideration has at least $2m$ items. In order to successfully determine the subcluster assignments for all $n$ items of the cluster $\mathcal{C}$ with probability at least $1 - \delta'$, we set $\delta_C = \frac{\delta'}{n}$ (i.e., taking the union bound over all $n$ items). Thus we have the requirement $m \ge c_0(\delta', \eta, q, \gamma) \log(4n/\delta')$ where the constant obeys

$$c_0(\delta', \eta, q, \gamma) \ge \max\left\{ \frac{1}{2 \left( (1-\delta')(1-q)^2 - \frac{1}{2} \right)^2}, \frac{1}{2 \min\left\{ \left(\gamma - 1 + (1-q)^2\right)^2, \left((1-q)^2 \eta - \gamma\right)^2 \right\}} \right\} \qquad (3)$$

Finally, this result and assumptions A1-A2 imply that the algorithm split($\mathcal{C}$, $m$, $\gamma$) correctly determines the two subclusters of $\mathcal{C}$ with probability at least $1 - \delta'$.

7.2 Proof of Theorem 4.2

Lemma 4. A binary tree with $N$ leaves and balance factor $\eta_C \ge \eta$ has depth of at most $L \le \log N / \log\left(\frac{1}{1-\eta}\right)$.

Proof. Consider a binary tree structure with $N$ leaves (items) with balance factor $\eta \le 1/2$. After a depth of $\ell$, the number of items in the largest cluster is bounded by $(1-\eta)^{\ell} N$. If $L$ denotes the maximum depth level, then there can only be 1 item in the largest cluster after a depth of $L$, so we have $1 \le (1-\eta)^L N$.

The entire hierarchical clustering can be resolved if all the clusters are resolved correctly.
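The requirement in Eq. (3) and the depth bound of Lemma 4 are straightforward to evaluate numerically, which can help when checking whether a given parameter setting falls inside the regime covered by the analysis. The sketch below does so. The function names and the example values ($\delta' = 0.1$, $n = 512$) are assumptions chosen for illustration, and the natural logarithm is assumed wherever the text writes $\log$.

```python
import math


def c0(delta_p, eta, q, gamma):
    """Smallest constant allowed by Eq. (3).

    Raises ValueError when the parameters leave no gap for one of the
    two threshold tests (Lemma 2 or Lemma 3).
    """
    consistent = (1.0 - q) ** 2
    gap_agree = (1.0 - delta_p) * consistent - 0.5  # Lemma 3: gap around 1/2
    gap_low = gamma - (1.0 - consistent)            # gamma above 1 - (1-q)^2
    gap_high = consistent * eta - gamma             # gamma below (1-q)^2 * eta
    if min(gap_agree, gap_low, gap_high) <= 0:
        raise ValueError("parameters outside the feasible region")
    return max(1.0 / (2.0 * gap_agree ** 2),
               1.0 / (2.0 * min(gap_low, gap_high) ** 2))


def vote_budget(delta_p, eta, q, gamma, n):
    """Smallest integer m with m >= c0 * log(4 n / delta')."""
    return math.ceil(c0(delta_p, eta, q, gamma) * math.log(4 * n / delta_p))


def depth_bound(n_items, eta):
    """Lemma 4: L <= log N / log(1 / (1 - eta))."""
    return math.log(n_items) / math.log(1.0 / (1.0 - eta))


# Hypothetical setting: balanced tree (eta = 1/2), q = 0.05, gamma = 0.30.
print(vote_budget(0.1, 0.5, 0.05, 0.30, 512))
print(depth_bound(512, 0.5))
```

Under these formulas, a setting such as $q = 0.25$ with $\gamma = 0.30$ raises the error, since $1 - (1-q)^2 = 0.4375$ already exceeds $\gamma$; no guarantee from this analysis applies there.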
With a maximum depth of $L$, the total number of clusters $M$ in the hierarchy is bounded by $\sum_{\ell=0}^{L} 2^{\ell} \le 2^{(L+1)} \le 2N^{1/\log\left(\frac{1}{1-\eta}\right)}$, using the result of Lemma 4. Therefore, the probability that some cluster in the hierarchy is not resolved is $\le M\delta' \le 2N^{1/\log\left(\frac{1}{1-\eta}\right)} \delta'$ (where split succeeds with probability $> 1 - \delta'$). Therefore, all clusters (which satisfy the conditions A1 and A2 of Theorem 4.1 and have size $> 2m$) can be resolved with probability $1 - \delta$ by setting $\delta' = \frac{\delta}{2N^{1/\log\left(\frac{1}{1-\eta}\right)}}$. From the proof of Theorem 4.1, we require

$$m \ge \max\left\{ \frac{\log\left(\frac{8}{\delta} N^{1 + \left(1/\log\left(\frac{1}{1-\eta}\right)\right)}\right)}{2 \left( \left(1 - \frac{\delta}{2N^{1 + \left(1/\log\left(\frac{1}{1-\eta}\right)\right)}}\right)(1-q)^2 - \frac{1}{2} \right)^2}, \frac{\log\left(\frac{8}{\delta} N^{1 + \left(1/\log\left(\frac{1}{1-\eta}\right)\right)}\right)}{2 \min\left\{ \left(\gamma - 1 + (1-q)^2\right)^2, \left((1-q)^2 \eta - \gamma\right)^2 \right\}} \right\}$$

which holds if $m = k_0(\delta, \eta, q, \gamma) \log\left(\frac{8}{\delta} N\right)$ where

$$k_0(\delta, \eta, q, \gamma) \ge c_0(\delta, \eta, q, \gamma) \Big/ \left(1 + 1/\log\left(\frac{1}{1-\eta}\right)\right) \qquad (4)$$

Given this choice of $m$, we find that the RAcluster methodology in Algorithm 3 for a set of $N$ items will resolve all clusters that satisfy A1 and A2 of Theorem 4.1 and have size $> 2m$, with probability at least $1 - \delta$.

Furthermore, the algorithm only requires $O(N \log^2 N)$ total pairwise similarities. By running the RAcluster methodology, using the result from Lemma 4, each item will have the split methodology performed at most $\log N / \log\left(\frac{1}{1-\eta}\right)$ times (i.e., once for each depth level of the hierarchy). If $m = k_0(\delta, \eta, q, \gamma) \log\left(\frac{8}{\delta} N\right)$ for RAcluster, each call to split will require only $3 k_0(\delta, \eta, q, \gamma) \log\left(\frac{8}{\delta} N\right)$ pairwise similarities per item. Given $N$ total items, we find that the RAcluster methodology requires at most $3 k_0(\delta, \eta, q, \gamma) N \frac{\log N}{\log\left(\frac{1}{1-\eta}\right)} \log\left(\frac{8}{\delta} N\right)$ pairwise similarities.

References

[1] H. Yu and M. Gerstein, "Genomic Analysis of the Hierarchical Structure of Regulatory Networks," in Proceedings of the National Academy of Sciences, vol. 103, 2006, pp. 14,724–14,731.
[2] J. Ni, H. Xie, S. Tatikonda, and Y. R. Yang, "Efficient and Dynamic Routing Topology Inference from End-to-End Measurements," in IEEE/ACM Transactions on Networking, vol. 18, February 2010, pp. 123–135.
[3] M. Girvan and M. Newman, "Community Structure in Social and Biological Networks," in Proceedings of the National Academy of Sciences, vol. 99, pp. 7821–7826.
[4] R. K. Srivastava, R. P. Leone, and A. D. Shocker, "Market Structure Analysis: Hierarchical Clustering of Products Based on Substitution-in-Use," in The Journal of Marketing, vol. 45, pp. 38–48.
[5] S. Chaudhuri, A. Sarma, V. Ganti, and R. Kaushik, "Leveraging aggregate constraints for deduplication," in Proceedings of SIGMOD Conference 2007, pp. 437–448.
[6] A. Arasu, C. Ré, and D. Suciu, "Large-scale deduplication with constraints using dedupalog," in Proceedings of ICDE 2009, pp. 952–963.
[7] R. Ramasubramanian, D. Malkhi, F. Kuhn, M. Balakrishnan, and A. Akella, "On The Treeness of Internet Latency and Bandwidth," in Proceedings of ACM SIGMETRICS Conference, Seattle, WA, 2009.
[8] Sajama and A. Orlitsky, "Estimating and Computing Density-Based Distance Metrics," in Proceedings of the 22nd International Conference on Machine Learning (ICML), 2005, pp. 760–767.
[9] G. Potter, "Putting the Collaborator Back Into Collaborative Filtering," in Proceedings of the 2nd Netflix-KDD Workshop, vol. 2, Las Vegas, NV, August 2008.
[10] M. Balcan and P. Gupta, "Robust Hierarchical Clustering," in Proceedings of the Conference on Learning Theory (COLT), July 2010.
[11] G. Karypis, E. Han, and V. Kumar, "CHAMELEON: A Hierarchical Clustering Algorithm Using Dynamic Modeling," in IEEE Computer, vol. 32, 1999, pp. 68–75.
[12] S. Guha, R. Rastogi, and K. Shim, "ROCK: A Robust Clustering Algorithm for Categorical Attributes," in Information Systems, vol. 25, July 2000, pp. 345–366.
[13] T. Hofmann and J. M. Buhmann, "Active Data Clustering," in Advances in Neural Information Processing Systems (NIPS), 1998, pp. 528–534.
[14] T. Zoller and J. Buhmann, "Active Learning for Hierarchical Pairwise Data Clustering," in Proceedings of the 15th International Conference on Pattern Recognition, vol. 2, 2000, pp. 186–189.
[15] N. Grira, M. Crucianu, and N. Boujemaa, "Active Semi-Supervised Fuzzy Clustering," in Pattern Recognition, vol. 41, May 2008, pp. 1851–1861.
[16] J. Pearl and M. Tarsi, "Structuring Causal Trees," in Journal of Complexity, vol. 2, 1986, pp. 60–77.
[17] T. Hastie, R. Tibshirani, and J. Friedman, The Elements of Statistical Learning. Springer, 2001.
[18] L. Li, D. Alderson, W. Willinger, and J. Doyle, "A First-Principles Approach to Understanding the Internet's Router-Level Topology," in Proceedings of ACM SIGCOMM Conference, 2004, pp. 3–14.
[19] J. DeRisi, V. Iyer, and P. Brown, "Exploring the metabolic and genetic control of gene expression on a genomic scale," in Science, vol. 278, October 1997, pp. 680–686.
