Cooperative encoding for secrecy in interference channels

This paper investigates the fundamental performance limits of the two-user interference channel in the presence of an external eavesdropper. In this setting, we construct an inner bound, to the secrecy capacity region, based on the idea of cooperativ…

Authors: O. Ozan Koyluoglu, Hesham El Gamal

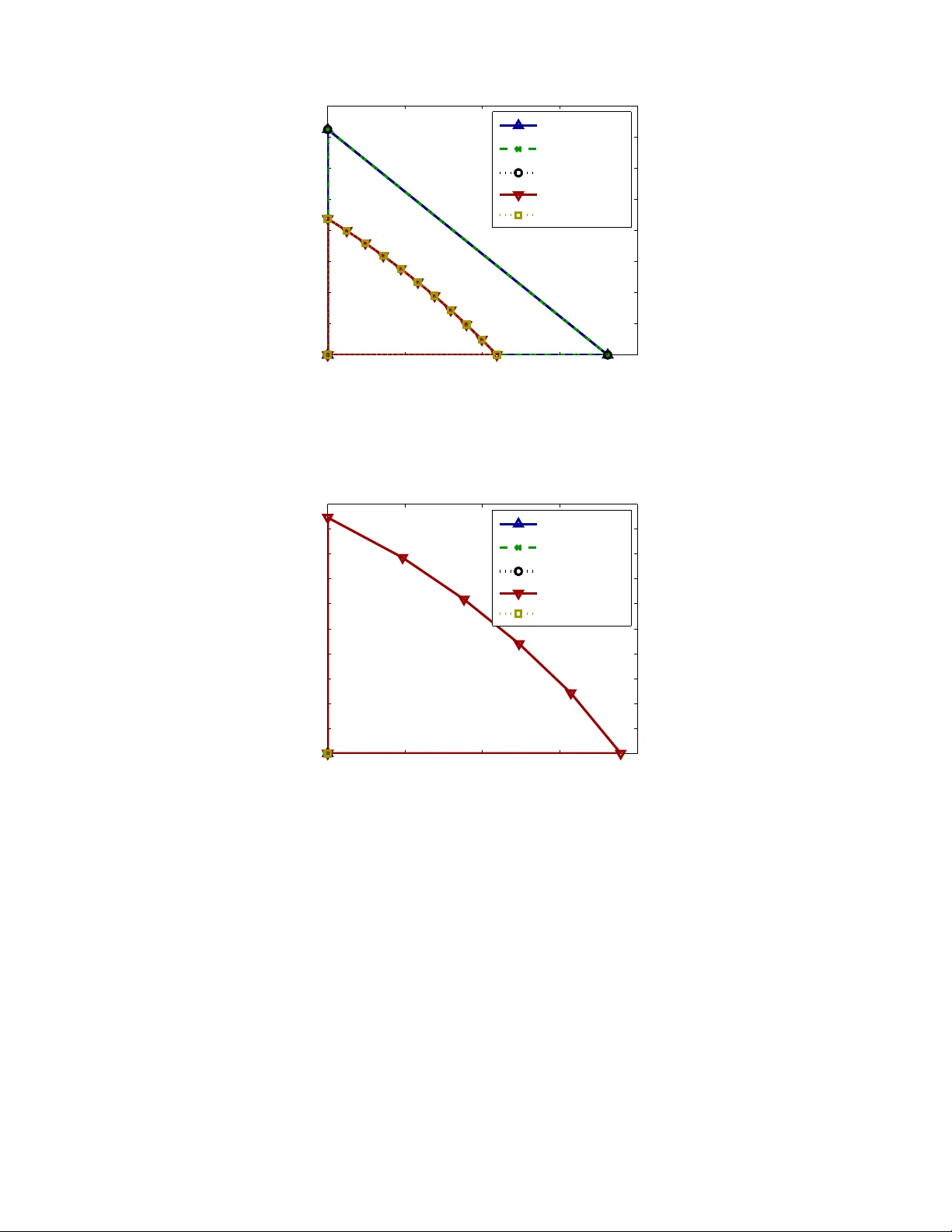

Abstract

This paper investigates the fundamental performance limits of the two-user interference channel in the presence of an external eavesdropper. In this setting, we construct an inner bound to the secrecy capacity region, based on the idea of cooperative encoding in which the two users cooperatively design their randomized codebooks and jointly optimize their channel prefixing distributions. Our achievability scheme also utilizes message-splitting in order to allow for partial decoding of the interference at the non-intended receiver. Outer bounds are then derived and used to establish the optimality of the proposed scheme in certain cases. In the Gaussian case, the previously proposed cooperative jamming and noise-forwarding techniques are shown to be special cases of our proposed approach. Overall, our results provide structural insights on how the interference can be exploited to increase the secrecy capacity of wireless networks.

Index Terms: Cooperative encoding, information theoretic security, interference channel.

I. INTRODUCTION

Without the secrecy constraint, the interference channel has been investigated extensively in the literature. The best known achievable region was obtained in [2] and was recently simplified in [3]. However, except for some special cases (e.g., [4]–[8]), characterizing the capacity region of the two-user Gaussian interference channel remains an open problem. On the other hand, recent attempts have shed light on the fundamental limits of the interference channels with confidential messages [9]–[12]. Nonetheless, the external eavesdropper scenario, the model considered here, has not been addressed adequately in the literature.
In fact, to the best of our knowledge, the only relevant work is the recent study on the achievable secure degrees of freedom (DoF) of the $K$-user Gaussian interference channels under a frequency selective fading model [11], [12].

This work develops a general approach for cooperative encoding for the (discrete) two-user memoryless interference channels operated in the presence of a passive eavesdropper. The proposed scheme allows for cooperation in two distinct ways:

1) The two users jointly optimize their randomized (two-dimensional) codebooks [13], and
2) The two users jointly introduce randomness in the transmitted signals, to confuse the eavesdropper, via a cooperative channel prefixing approach [14].

Remarkably, the two methods, respectively, are helpful in adding decodable and undecodable randomness to the channel. The proposed scheme also utilizes message-splitting and partial decoding to enlarge the achievable secrecy rate region [2]. We then derive outer bounds to the secrecy capacity region and use them to establish the optimality of the proposed scheme for some classes of channels. In addition, we argue that some coding techniques for the secure discrete multiple access channel and relay-eavesdropper channel can be obtained as special cases of our cooperative encoding approach.

O. Ozan Koyluoglu and Hesham El Gamal are with the Department of Electrical and Computer Engineering, The Ohio State University, Columbus, OH 43210, USA (e-mail: {koyluogo,helgamal}@ece.osu.edu). This work was submitted to IEEE Transactions on Information Theory in May 2009, and revised in September 2010. This research was supported in part by the National Science Foundation under Grant CCF-07-28762, and in part by Los Alamos National Laboratory and Qatar National Research Fund. The first author is supported in part by the Presidential Fellowship Award of the Ohio State University.
Recently, noise forwarding (or jamming) has been shown to enhance the achievable secrecy rate region of several Gaussian multi-user channels (e.g., [15], [16]). The basic idea is to allow each transmitter to allocate only a fraction of the available power for its randomized codebook and use the rest for the generation of independent noise samples. The superposition of the two signals is then transmitted. With the appropriate power allocation policy, one can ensure that the jamming signal results in maximal ambiguity at the eavesdropper while incurring only a minimal loss in the achievable rate at the legitimate receiver(s). Our work reveals the fact that this noise injection technique can be obtained as a manifestation of the cooperative channel prefixing approach. Based on this observation, we obtain a larger achievable region for the secrecy rate in the Gaussian multiple access channel.

The rest of the paper is organized as follows. Section II is devoted to the discrete memoryless scenario, where the main results of the paper are derived and a few special cases are analyzed. The analysis for the Gaussian channels, along with numerical results in selected scenarios, is given in Section III. Finally, we offer some concluding remarks in Section IV. The proofs are collected in the appendices to enhance the flow of the paper.

II. SECURITY FOR THE DISCRETE MEMORYLESS INTERFERENCE CHANNEL

A. System Model and Notations

Throughout this paper, vectors are denoted as $\mathbf{x}_i = \{x(1), \cdots, x(i)\}$, where we omit the subscript $i$ if $i = n$, i.e., $\mathbf{x} = \{x(1), \cdots, x(n)\}$. Random variables are denoted with capital letters $X$, which are defined over sets denoted by the calligraphic letters $\mathcal{X}$, and random vectors are denoted as bold-capital letters $\mathbf{X}_i$. Again, we drop the subscript $i$ for $\mathbf{X} = \{X(1), \cdots, X(n)\}$.
We define $[x]^+ \triangleq \max\{0, x\}$, $\bar{\alpha} \triangleq 1 - \alpha$, and $\gamma(x) \triangleq \frac{1}{2}\log_2(1 + x)$. The delta function $\delta(x)$ is defined as $\delta(x) = 1$ if $x = 0$, and $\delta(x) = 0$ if $x \neq 0$. Also, we use the following shorthand for probability distributions: $p(x) \triangleq p(X = x)$, $p(x|y) \triangleq p(X = x | Y = y)$. The same notation will be used for joint distributions. For any randomized codebook, we construct $2^{nR}$ rows (corresponding to message indices) and $2^{nR^x}$ codewords per row (corresponding to randomization indices), where we refer to $R$ as the secrecy rate and $R^x$ as the randomization rate (please refer to Fig. 1). Finally, for a given set $\mathcal{S}$, $R_{\mathcal{S}} \triangleq \sum_{i \in \mathcal{S}} R_i$ for secrecy rates and $R^x_{\mathcal{S}} \triangleq \sum_{i \in \mathcal{S}} R^x_i$ for the randomization rates.

Our discrete memoryless two-user interference channel with an (external) eavesdropper (IC-E) is denoted by

$$(\mathcal{X}_1 \times \mathcal{X}_2,\; p(y_1, y_2, y_e | x_1, x_2),\; \mathcal{Y}_1 \times \mathcal{Y}_2 \times \mathcal{Y}_e),$$

for some finite sets $\mathcal{X}_1, \mathcal{X}_2, \mathcal{Y}_1, \mathcal{Y}_2, \mathcal{Y}_e$ (see Fig. 2). Here the symbols $(x_1, x_2) \in \mathcal{X}_1 \times \mathcal{X}_2$ are the channel inputs and the symbols $(y_1, y_2, y_e) \in \mathcal{Y}_1 \times \mathcal{Y}_2 \times \mathcal{Y}_e$ are the channel outputs observed at decoder 1, decoder 2, and at the eavesdropper, respectively. The channel is memoryless and time-invariant:

$$p(y_1(i), y_2(i), y_e(i) \,|\, \mathbf{x}_1^i, \mathbf{x}_2^i, \mathbf{y}_1^{i-1}, \mathbf{y}_2^{i-1}, \mathbf{y}_e^{i-1}) = p(y_1(i), y_2(i), y_e(i) \,|\, x_1(i), x_2(i)).$$

We assume that each transmitter $k \in \{1, 2\}$ has a secret message $W_k$ which is to be transmitted to the respective receiver in $n$ channel uses and to be secured from the eavesdropper. In this setting, an $(n, M_1, M_2, P_{e,1}, P_{e,2})$ secret codebook has the following components:

1) The secret message set $\mathcal{W}_k = \{1, ..., M_k\}$; $k = 1, 2$.
2) A stochastic encoding function $f_k(\cdot)$ at transmitter $k$ which maps the secret messages to the transmitted symbols: $f_k : w_k \to \mathbf{X}_k$ for each $w_k \in \mathcal{W}_k$; $k = 1, 2$.
3) Decoding function $\phi_k(\cdot)$ at receiver $k$ which maps the received symbols to an estimate of the message: $\phi_k(\mathbf{Y}_k) = \hat{w}_k$; $k = 1, 2$.

The reliability of transmission is measured by the following probabilities of error

$$P_{e,k} = \frac{1}{M_1 M_2} \sum_{(w_1, w_2) \in \mathcal{W}_1 \times \mathcal{W}_2} \Pr\left\{ \phi_k(\mathbf{Y}_k) \neq w_k \,|\, (w_1, w_2) \text{ is sent} \right\},$$

for $k = 1, 2$.

Fig. 1. Randomized codebook of Wyner. For a given message index, a column is randomly chosen, and the corresponding channel input is transmitted. Designing the number of columns properly is the key to prove that the security constraint is met. We refer to this codebook as randomized (two-dimensional) codebook.

Fig. 2. The discrete memoryless interference channel with an eavesdropper (IC-E).

The secrecy is measured by the equivocation rate $\frac{1}{n} H(W_1, W_2 | \mathbf{Y}_e)$.

We say that the rate tuple $(R_1, R_2)$ is achievable for the IC-E if, for any given $\epsilon > 0$, there exists an $(n, M_1, M_2, P_{e,1}, P_{e,2})$ secret codebook such that

$$\frac{1}{n}\log(M_1) = R_1, \quad \frac{1}{n}\log(M_2) = R_2, \quad \max\{P_{e,1}, P_{e,2}\} \leq \epsilon, \quad \text{and} \quad R_1 + R_2 - \frac{1}{n} H(W_1, W_2 | \mathbf{Y}_e) \leq \epsilon \tag{1}$$

for sufficiently large $n$. The secrecy capacity region is the closure of the set of all achievable rate pairs $(R_1, R_2)$ and is denoted as $\mathcal{C}^{\text{IC-E}}$. Finally, we note that the secrecy requirement imposed on the full message set implies the secrecy of individual messages. In other words, $\frac{1}{n} I(W_1, W_2; \mathbf{Y}_e) \leq \epsilon$ implies $\frac{1}{n} I(W_k; \mathbf{Y}_e) \leq \epsilon$ for $k = 1, 2$.

B. Inner Bound

In this section, we introduce the cooperative encoding scheme, and derive an inner bound to $\mathcal{C}^{\text{IC-E}}$. The proposed strategy allows for cooperation in the design of the randomized codebooks, as well as in channel prefixing [14].
This way, each user will add only a sufficient amount of randomness, as the other user will help to increase the randomness seen by the eavesdropper. The achievable secrecy rate region using this approach is stated in the following result.

Theorem 1:

$$\mathcal{R}^{\text{IC-E}} \triangleq \text{the closure of } \left\{ \bigcup_{p \in \mathcal{P}} \mathcal{R}(p) \right\} \subset \mathcal{C}^{\text{IC-E}}, \tag{2}$$

where $\mathcal{P}$ denotes the set of all joint distributions of the random variables $Q$, $C_1$, $S_1$, $O_1$, $C_2$, $S_2$, $O_2$, $X_1$, $X_2$ that factor as (footnote 1)

$$p(q, c_1, s_1, o_1, c_2, s_2, o_2, x_1, x_2) = p(q)\, p(c_1|q)\, p(s_1|q)\, p(o_1|q)\, p(c_2|q)\, p(s_2|q)\, p(o_2|q)\, p(x_1|c_1, s_1, o_1)\, p(x_2|c_2, s_2, o_2), \tag{3}$$

and $\mathcal{R}(p)$ is the closure of all $(R_1, R_2)$ satisfying

$$\begin{aligned}
& R_1 = R_{C_1} + R_{S_1}, \quad R_2 = R_{C_2} + R_{S_2}, \\
& (R_{C_1}, R^x_{C_1}, R_{S_1}, R^x_{S_1}, R_{C_2}, R^x_{C_2}, R^x_{O_2}) \in \mathcal{R}_1(p), \\
& (R_{C_2}, R^x_{C_2}, R_{S_2}, R^x_{S_2}, R_{C_1}, R^x_{C_1}, R^x_{O_1}) \in \mathcal{R}_2(p), \\
& (R^x_{C_1}, R^x_{S_1}, R^x_{O_1}, R^x_{C_2}, R^x_{S_2}, R^x_{O_2}) \in \mathcal{R}_e(p), \\
& \text{and } R_{C_1} \geq 0,\; R^x_{C_1} \geq 0,\; R_{S_1} \geq 0,\; R^x_{S_1} \geq 0,\; R^x_{O_1} \geq 0, \\
& \phantom{\text{and }} R_{C_2} \geq 0,\; R^x_{C_2} \geq 0,\; R_{S_2} \geq 0,\; R^x_{S_2} \geq 0,\; R^x_{O_2} \geq 0,
\end{aligned} \tag{4}$$

for a given joint distribution $p$. $\mathcal{R}_1(p)$ is the set of all tuples $(R_{C_1}, R^x_{C_1}, R_{S_1}, R^x_{S_1}, R_{C_2}, R^x_{C_2}, R^x_{O_2})$ satisfying

$$R_{\mathcal{S}} + R^x_{\mathcal{S}} \leq I(\mathcal{S}; Y_1 | \mathcal{S}^c, Q), \quad \forall \mathcal{S} \subset \{C_1, S_1, C_2, O_2\}. \tag{5}$$

$\mathcal{R}_2(p)$ is the rate region defined by reversing the indices 1 and 2 everywhere in the expression for $\mathcal{R}_1(p)$. $\mathcal{R}_e(p)$ is the set of all tuples $(R^x_{C_1}, R^x_{S_1}, R^x_{O_1}, R^x_{C_2}, R^x_{S_2}, R^x_{O_2})$ satisfying

$$\begin{aligned}
R^x_{\mathcal{S}} &\leq I(\mathcal{S}; Y_e | \mathcal{S}^c, Q), \quad \forall \mathcal{S} \subsetneq \{C_1, S_1, O_1, C_2, S_2, O_2\}, \\
R^x_{\mathcal{S}} &= I(\mathcal{S}; Y_e | Q), \quad \mathcal{S} = \{C_1, S_1, O_1, C_2, S_2, O_2\}.
\end{aligned} \tag{6}$$

Proof: We detail the coding scheme here. The rest of the proof, given in Appendix A, shows that the proposed coding scheme satisfies both the reliability and the security constraints.
Footnote 1: Here $Q$, $C_1$, $S_1$, $O_1$, $C_2$, $S_2$, and $O_2$ are defined on arbitrary finite sets $\mathcal{Q}$, $\mathcal{C}_1$, $\mathcal{S}_1$, $\mathcal{O}_1$, $\mathcal{C}_2$, $\mathcal{S}_2$, and $\mathcal{O}_2$, respectively.

Fix some $p(q)$, $p(c_1|q)$, $p(s_1|q)$, $p(o_1|q)$, $p(x_1|c_1, s_1, o_1)$, $p(c_2|q)$, $p(s_2|q)$, $p(o_2|q)$, and $p(x_2|c_2, s_2, o_2)$ for the channel given by $p(y_1, y_2, y_e | x_1, x_2)$. Generate a random typical sequence $\mathbf{q}^n$, where $p(\mathbf{q}^n) = \prod_{i=1}^{n} p(q(i))$ and each entry is chosen i.i.d. according to $p(q)$. Every node knows the sequence $\mathbf{q}^n$.

Codebook Generation: Consider transmitter $k \in \{1, 2\}$ that has secret message $W_k \in \mathcal{W}_k = \{1, 2, \cdots, M_k\}$, where $M_k = 2^{nR_k}$. We construct each element in the codebook ensemble as follows.

• Generate $M_{C_k} M^x_{C_k} = 2^{n(R_{C_k} + R^x_{C_k} - \epsilon_1)}$ sequences $\mathbf{c}_k^n$, each with probability $p(\mathbf{c}_k^n | \mathbf{q}^n) = \prod_{i=1}^{n} p(c_k(i) | q(i))$, where $p(c_k(i) | q(i)) = p(c_k | q)$ for each $i$. Distribute these into $M_{C_k} = 2^{nR_{C_k}}$ bins, where the bin index is $w_{C_k}$. Each bin has $M^x_{C_k} = 2^{n(R^x_{C_k} - \epsilon_1)}$ codewords, where we denote the codeword index as $w^x_{C_k}$. Represent each codeword with these two indices, i.e., $\mathbf{c}_k^n(w_{C_k}, w^x_{C_k})$.
• Similarly, generate $M_{S_k} M^x_{S_k} = 2^{n(R_{S_k} + R^x_{S_k} - \epsilon_1)}$ sequences $\mathbf{s}_k^n$, each with probability $p(\mathbf{s}_k^n | \mathbf{q}^n) = \prod_{i=1}^{n} p(s_k(i) | q(i))$, where $p(s_k(i) | q(i)) = p(s_k | q)$ for each $i$. Distribute these into $M_{S_k} = 2^{nR_{S_k}}$ bins, where the bin index is $w_{S_k}$. Each bin has $M^x_{S_k} = 2^{n(R^x_{S_k} - \epsilon_1)}$ codewords, where we denote the codeword index as $w^x_{S_k}$. Represent each codeword with these two indices, i.e., $\mathbf{s}_k^n(w_{S_k}, w^x_{S_k})$.
• Finally, generate $M^x_{O_k} = 2^{n(R^x_{O_k} - \epsilon_1)}$ sequences $\mathbf{o}_k^n$, each with probability $p(\mathbf{o}_k^n | \mathbf{q}^n) = \prod_{i=1}^{n} p(o_k(i) | q(i))$, where $p(o_k(i) | q(i)) = p(o_k | q)$ for each $i$. Denote each sequence by index $w^x_{O_k}$ and represent each codeword with this index, i.e., $\mathbf{o}_k^n(w^x_{O_k})$.

Choose $M_k = M_{C_k} M_{S_k}$, and assign each pair $(w_{C_k}, w_{S_k})$ to a secret message $w_k$. Note that $R_k = R_{C_k} + R_{S_k}$ for $k = 1, 2$. Every node in the network knows these codebooks.

Encoding: Consider again user $k \in \{1, 2\}$. To send $w_k \in \mathcal{W}_k$, user $k$ gets the corresponding indices $w_{C_k}$ and $w_{S_k}$. Then user $k$ obtains the following codewords:

• From the codebook for $C_k$, user $k$ randomly chooses a codeword in bin $w_{C_k}$ according to the uniform distribution, where the codeword index is denoted by $w^x_{C_k}$, and it gets the corresponding entry of the codebook, i.e., $\mathbf{c}_k^n(w_{C_k}, w^x_{C_k})$.
• Similarly, from the codebook for $S_k$, user $k$ randomly chooses a codeword in bin $w_{S_k}$ according to the uniform distribution, where the codeword index is denoted by $w^x_{S_k}$, and it gets the corresponding entry of the codebook, i.e., $\mathbf{s}_k^n(w_{S_k}, w^x_{S_k})$.
• Finally, from the codebook for $O_k$, it randomly chooses a codeword, which is denoted by $\mathbf{o}_k^n(w^x_{O_k})$.

Then, user $k$ generates the channel inputs $\mathbf{x}_k^n$, where each entry is chosen according to $p(x_k | c_k, s_k, o_k)$ using the codewords $\mathbf{c}_k^n(w_{C_k}, w^x_{C_k})$, $\mathbf{s}_k^n(w_{S_k}, w^x_{S_k})$, and $\mathbf{o}_k^n(w^x_{O_k})$; and it transmits the constructed $\mathbf{x}_k^n$ in $n$ channel uses. See Fig. 3.

Fig. 3. Proposed encoder structure for the IC-E.

Decoding: Here we remark that although each user needs to decode only its own message, we also require receivers to decode common and other information of the other transmitter. Suppose receiver 1 has received $\mathbf{y}_1^n$.
Let $A^n_{1,\epsilon}$ denote the set of typical $(\mathbf{q}^n, \mathbf{c}_1^n, \mathbf{s}_1^n, \mathbf{c}_2^n, \mathbf{o}_2^n, \mathbf{y}_1^n)$ sequences. Decoder 1 chooses $(w_{C_1}, w^x_{C_1}, w_{S_1}, w^x_{S_1}, w_{C_2}, w^x_{C_2}, w^x_{O_2})$ such that

$$(\mathbf{q}^n, \mathbf{c}_1^n(w_{C_1}, w^x_{C_1}), \mathbf{s}_1^n(w_{S_1}, w^x_{S_1}), \mathbf{c}_2^n(w_{C_2}, w^x_{C_2}), \mathbf{o}_2^n(w^x_{O_2}), \mathbf{y}_1^n) \in A^n_{1,\epsilon},$$

if such a tuple exists and is unique. Otherwise, the decoder declares an error. Decoding at receiver 2 is symmetric and a description of it can be obtained by reversing the indices 1 and 2 above. Refer to Appendix A for the rest of the proof.

The following remarks are now in order.

1) The auxiliary random variable $Q$ serves as a time-sharing parameter.
2) The auxiliary variable $C_1$ is used to construct the common secure signal of transmitter 1 that has to be decoded at both receivers, where the randomized encoding technique of [13] is used for this construction. Similarly, $C_2$ is used for the common secured signal of user 2.
3) The auxiliary variable $S_1$ is used to construct the self secure signal that has to be decoded at receiver 1 but not at receiver 2, where the randomized encoding technique of [13] is used for this construction. Similarly, $S_2$ is used for the self secure signal of user 2.
4) The auxiliary variable $O_1$ is used to construct the other signal of transmitter 1 that has to be decoded at receiver 2 but not at receiver 1 (conventional random coding [17] is used to construct this signal). Similarly, $O_2$ is used for the other signal of user 2. Note that we use $R^x_{O_1}$, $R^x_{O_2}$, and set $R_{O_1} = R_{O_2} = 0$.
5) Compared to the Han-Kobayashi scheme [2], the common and self random variables are constructed with randomized (two-dimensional) codebooks. This way, they are used not only for transmitting information, but also for adding randomness. Moreover, we have two additional random variables in this achievability scheme.
These extra random variables, namely $O_1$ and $O_2$, are used to facilitate cooperation among the network users by adding extra randomness to the channel which has to be decoded by the non-intended receiver. We note that, compared to random variables $C_k$ and $S_k$, the randomization added via $O_k$ is considered as interference at the receiver $k$.
6) The gain that can be leveraged from cooperative encoding can be attributed to the freedom in the allocation of randomization rates at the two users (e.g., see (6)). This allows the users to cooperatively add randomness to impair the eavesdropper with a minimal impact on the achievable rate at the legitimate receivers. Cooperative channel prefixing, on the other hand, can be achieved by the joint optimization of the probabilistic channel prefixes $p(x_1 | c_1, s_1, o_1)$ and $p(x_2 | c_2, s_2, o_2)$.

C. Outer Bounds

Theorem 2: For any $(R_1, R_2) \in \mathcal{C}^{\text{IC-E}}$,

$$R_1 \leq \max_{p \in \mathcal{P}_O} I(V_1; Y_1 | V_2, U) - I(V_1; Y_e | U) \tag{7}$$

$$R_2 \leq \max_{p \in \mathcal{P}_O} I(V_2; Y_2 | V_1, U) - I(V_2; Y_e | U), \tag{8}$$

where $\mathcal{P}_O$ is the set of joint distributions that factor as $p(u, v_1, v_2, x_1, x_2) = p(u)\, p(v_1|u)\, p(v_2|u)\, p(x_1|v_1)\, p(x_2|v_2)$.

Proof: Refer to Appendix B.

Theorem 3: For channels satisfying

$$I(V_2; Y_2 | V_1) \leq I(V_2; Y_1 | V_1) \tag{9}$$

for any distribution that factors as $p(v_1, v_2, x_1, x_2) = p(v_1)\, p(v_2)\, p(x_1|v_1)\, p(x_2|v_2)$, an upper bound on the sum-rate of the IC-E is given by

$$R_1 + R_2 \leq \max_{p \in \mathcal{P}_O} I(V_1, V_2; Y_1 | U) - I(V_1, V_2; Y_e | U), \tag{10}$$

where $U$, $V_1$, and $V_2$ are auxiliary random variables, and $\mathcal{P}_O$ is the set of joint distributions that factor as $p(u, v_1, v_2, x_1, x_2) = p(u)\, p(v_1|u)\, p(v_2|u)\, p(x_1|v_1)\, p(x_2|v_2)$.

Proof: Refer to Appendix C.
The previous sum-rate upper bound also holds for the set of channels satisfying

$$I(V_2; Y_2) \leq I(V_2; Y_1) \tag{11}$$

for any distribution that factors as $p(v_1, v_2, x_1, x_2) = p(v_1)\, p(v_2)\, p(x_1|v_1)\, p(x_2|v_2)$. Finally, it is evident that one can obtain another upper bound by reversing the indices 1 and 2 in the above expressions.

D. Special Cases

This section focuses on a few special cases, where sharp results on the secrecy capacity region can be derived. In all these scenarios, achievability is established using the proposed cooperative encoding scheme. To simplify the presentation, we first define the following set of probability distributions. For random variables $T_1$ and $T_2$,

$$\mathcal{P}(T_1, T_2) \triangleq \left\{ p(q, t_1, t_2, x_1, x_2) \,|\, p(q, t_1, t_2, x_1, x_2) = p(q)\, p(t_1|q)\, p(t_2|q)\, p(x_1|t_1)\, p(x_2|t_2) \right\}.$$

Corollary 4: If the IC-E satisfies

$$\begin{aligned}
I(V_2; Y_2 | V_1, Q) &\leq I(V_2; Y_e | Q) \\
I(V_2; Y_e | V_1, Q) &\leq I(V_2; Y_1 | Q)
\end{aligned} \tag{12}$$

for all input distributions that factor as $p(q)\, p(v_1|q)\, p(v_2|q)\, p(x_1|v_1)\, p(x_2|v_2)$, then its secrecy capacity region is given by

$$\mathcal{C}^{\text{IC-E}} = \text{the closure of } \left\{ \bigcup_{p \in \mathcal{P}(S_1, O_2)} \mathcal{R}_{S_1}(p) \right\},$$

where $\mathcal{R}_{S_1}(p)$ is the set of rate-tuples $(R_1, R_2)$ satisfying

$$\begin{aligned}
R_1 &\leq \left[ I(S_1; Y_1 | O_2, Q) - I(S_1; Y_e | Q) \right]^+ \\
R_2 &= 0,
\end{aligned} \tag{13}$$

for any $p \in \mathcal{P}(S_1, O_2)$.

Proof: Refer to Appendix D.

Corollary 5: If the IC-E satisfies

$$\begin{aligned}
I(V_2; Y_e | Q) &\leq I(V_2; Y_1 | Q) \leq I(V_2; Y_2 | Q) \\
I(V_1; Y_e | V_2, Q) &\leq I(V_1; Y_1 | V_2, Q) \\
I(V_2; Y_2 | V_1, Q) &\leq I(V_2; Y_1 | V_1, Q)
\end{aligned} \tag{14}$$

for all input distributions that factor as $p(q)\, p(v_1|q)\, p(v_2|q)\, p(x_1|v_1)\, p(x_2|v_2)$, then its secrecy sum capacity is given as follows.

$$\max_{(R_1, R_2) \in \mathcal{C}^{\text{IC-E}}} R_1 + R_2 = \max_{p \in \mathcal{P}(S_1, C_2)} I(S_1, C_2; Y_1 | Q) - I(S_1, C_2; Y_e | Q).$$

Proof: Refer to Appendix E.
Corollary 6: If the IC-E satisfies

$$\begin{aligned}
I(V_2; Y_e | Q) &\leq I(V_2; Y_1 | V_1, Q) \leq I(V_2; Y_e | V_1, Q) \\
I(V_2; Y_2 | V_1, Q) &\leq I(V_2; Y_1 | V_1, Q)
\end{aligned} \tag{15}$$

for all input distributions that factor as $p(q)\, p(v_1|q)\, p(v_2|q)\, p(x_1|v_1)\, p(x_2|v_2)$, then its secrecy sum capacity is given as follows.

$$\max_{(R_1, R_2) \in \mathcal{C}^{\text{IC-E}}} R_1 + R_2 = \max_{p \in \mathcal{P}(S_1, O_2)} I(S_1, O_2; Y_1 | Q) - I(S_1, O_2; Y_e | Q).$$

We also note that, in this case, $O_2$ will not increase the sum-rate, and hence, we can set $|\mathcal{O}_2| = 1$.

Proof: Refer to Appendix F.

Another case for which the cooperative encoding approach can attain the sum-capacity is the following.

Corollary 7: If the IC-E satisfies

$$\begin{aligned}
I(V_2; Y_1 | Q) &\leq I(V_2; Y_e | V_1, Q) \leq I(V_2; Y_1 | V_1, Q) \\
I(V_2; Y_2 | V_1, Q) &\leq I(V_2; Y_1 | V_1, Q)
\end{aligned} \tag{16}$$

for all input distributions that factor as $p(q)\, p(v_1|q)\, p(v_2|q)\, p(x_1|v_1)\, p(x_2|v_2)$, then its secrecy sum capacity is given as follows.

$$\max_{(R_1, R_2) \in \mathcal{C}^{\text{IC-E}}} R_1 + R_2 = \max_{p \in \mathcal{P}(S_1, O_2)} I(S_1, O_2; Y_1 | Q) - I(S_1, O_2; Y_e | Q).$$

Proof: Refer to Appendix G.

Now, we use our results on the IC-E to shed more light on the secrecy capacity of the discrete memoryless multiple access channel. In particular, it is easy to see that the multiple access channel with an eavesdropper (MAC-E) defined by $p(y_1, y_e | x_1, x_2)$ is equivalent to the IC-E defined by

$$p(y_1, y_2, y_e | x_1, x_2) = p(y_1, y_e | x_1, x_2)\, \delta(y_2 - y_1).$$

This allows for specializing the results obtained earlier to the MAC-E.

Corollary 8:

$$\mathcal{R}^{\text{MAC-E}} \triangleq \text{the closure of } \left\{ \bigcup_{p \in \mathcal{P}(C_1, C_2)} \mathcal{R}(p) \right\},$$

where the channel is given by $p(y_1, y_e | x_1, x_2)\, \delta(y_2 - y_1)$.

Furthermore, the following result characterizes the secrecy sum rate of the weak MAC-E.
Corollary 9 (MAC-E with a weak eavesdropper): If the eavesdropper is weak for the MAC-E, i.e.,

$$\begin{aligned}
I(V_1; Y_e | V_2) &\leq I(V_1; Y_1 | V_2) \\
I(V_2; Y_e | V_1) &\leq I(V_2; Y_1 | V_1),
\end{aligned} \tag{17}$$

for all input distributions that factor as $p(v_1)\, p(v_2)\, p(x_1|v_1)\, p(x_2|v_2)$, then the secure sum-rate capacity is characterized as follows.

$$\max_{(R_1, R_2) \in \mathcal{C}^{\text{MAC-E}}} R_1 + R_2 = \max_{p \in \mathcal{P}(C_1, C_2)} I(C_1, C_2; Y_1 | Q) - I(C_1, C_2; Y_e | Q)$$

Proof: Refer to Appendix H.

Another special case of our model is the relay-eavesdropper channel with a deaf helper. In this scenario, transmitter 1 has a secret message for receiver 1 and transmitter 2 is only interested in helping transmitter 1 in increasing its secure transmission rates. Here, the random variable $O_2$ at transmitter 2 is utilized to add randomness to the network. Again, the regions given earlier can be specialized to this scenario. For example, the following region is achievable for this relay-eavesdropper model.

$$\mathcal{R}^{\text{RE}} \triangleq \text{the closure of the convex hull of } \left\{ \bigcup_{p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)} \mathcal{R}(p) \right\}, \tag{18}$$

where $\mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)$ denotes the probability distributions in $\mathcal{P}(S_1, O_2)$ with a deterministic $Q$.

For this relay-eavesdropper scenario, the noise forwarding (NF) scheme proposed in [18] achieves the following rate.

$$R^{[\text{NF}]} = \max_{p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)} R_1(p), \tag{19}$$

where

$$R_1(p) \triangleq \left[ I(S_1; Y_1 | O_2) + \min\{ I(O_2; Y_1),\, I(O_2; Y_e | S_1) \} - \min\{ I(O_2; Y_1),\, I(O_2; Y_e) \} - I(S_1; Y_e | O_2) \right]^+.$$

The following result shows that NF is a special case of the cooperative encoding scheme and provides a simplification of the achievable secrecy rate.

Corollary 10: $(R^{[\text{NF}]}, 0) \in \mathcal{R}^{\text{RE}}$, where $R^{[\text{NF}]}$ can be simplified as follows.

$$R^{[\text{NF}]} = \max_{\substack{p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1) \\ \text{s.t. } I(O_2; Y_e) \leq I(O_2; Y_1)}} I(S_1; Y_1 | O_2) + \min\{ I(O_2; Y_1),\, I(O_2; Y_e | S_1) \} - I(S_1, O_2; Y_e). \tag{20}$$

Proof: Refer to Appendix I.

Finally, the next result establishes the optimality of NF in certain relay-eavesdropper channels.

Corollary 11: The noise forwarding scheme is optimal for the relay-eavesdropper channels which satisfy

$$I(V_2; Y_1) \leq I(V_2; Y_e | V_1), \tag{21}$$

for all input distributions that factor as $p(v_1)\, p(v_2)\, p(x_1|v_1)\, p(x_2|v_2)$, and the corresponding secrecy capacity is

$$C^{\text{RE}} = \max_{\substack{p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1) \\ \text{s.t. } I(O_2; Y_e) \leq I(O_2; Y_1)}} I(S_1, O_2; Y_1) - I(S_1, O_2; Y_e).$$

Proof: Refer to Appendix J.

III. SECURITY FOR THE GAUSSIAN INTERFERENCE CHANNEL

A. Inner Bound and Numerical Results

In its standard form [19], the two-user Gaussian Interference Channel with an Eavesdropper (GIC-E) is given by

$$\begin{aligned}
Y_1 &= X_1 + \sqrt{c_{21}}\, X_2 + N_1 \\
Y_2 &= \sqrt{c_{12}}\, X_1 + X_2 + N_2 \\
Y_e &= \sqrt{c_{1e}}\, X_1 + \sqrt{c_{2e}}\, X_2 + N_e,
\end{aligned} \tag{22}$$

where $N_r \sim \mathcal{N}(0, 1)$ is the noise at each receiver $r = 1, 2, e$ and the average power constraints are $\frac{1}{n} \sum_{t=1}^{n} (X_k(t))^2 \leq P_k$ for $k = 1, 2$. The secrecy capacity region of the GIC-E is denoted as $\mathcal{C}^{\text{GIC-E}}$.

The goal here is to specialize the results obtained in the previous section to the Gaussian scenario and illustrate the gains that can be leveraged from the cooperative coding for randomized codebooks and for channel prefixing, and from time sharing. For this scenario, the Gaussian codebooks are used and the same regions will be achievable after taking into account the power constraint at the users. Towards this end, we will need the following definitions. Consider a probability mass function on the time sharing parameter denoted by $p(q)$.
Let $\mathcal{A}(p(q))$ denote the set of all possible power allocations, i.e.,

$$\mathcal{A}(p(q)) \triangleq \left\{ \left( P_1^c(q), P_1^s(q), P_1^o(q), P_1^j(q), P_2^c(q), P_2^s(q), P_2^o(q), P_2^j(q) \right) \,\Big|\, \sum_{q \in \mathcal{Q}} \left( P_k^c(q) + P_k^s(q) + P_k^o(q) + P_k^j(q) \right) p(q) \leq P_k, \text{ for } k = 1, 2 \right\}.$$

Now, we define a set of joint distributions $\mathcal{P}_G$ for the Gaussian case as follows.

$$\begin{aligned}
\mathcal{P}_G \triangleq \Big\{ p \,\Big|\, & p \in \mathcal{P},\; \left( P_1^c(q), P_1^s(q), P_1^o(q), P_1^j(q), P_2^c(q), P_2^s(q), P_2^o(q), P_2^j(q) \right) \in \mathcal{A}(p(q)), \\
& C_1(q) \sim \mathcal{N}(0, P_1^c(q)),\; S_1(q) \sim \mathcal{N}(0, P_1^s(q)),\; O_1(q) \sim \mathcal{N}(0, P_1^o(q)),\; J_1(q) \sim \mathcal{N}(0, P_1^j(q)), \\
& C_2(q) \sim \mathcal{N}(0, P_2^c(q)),\; S_2(q) \sim \mathcal{N}(0, P_2^s(q)),\; O_2(q) \sim \mathcal{N}(0, P_2^o(q)),\; J_2(q) \sim \mathcal{N}(0, P_2^j(q)), \\
& X_1(q) = C_1(q) + S_1(q) + O_1(q) + J_1(q),\; X_2(q) = C_2(q) + S_2(q) + O_2(q) + J_2(q) \Big\},
\end{aligned}$$

where the Gaussian model given in (22) gives $p(y_1, y_2, y_e | x_1, x_2)$. Using this set of distributions, we obtain the following achievable secrecy rate region for the GIC-E.

Corollary 12:

$$\mathcal{R}^{\text{GIC-E}} \triangleq \text{the closure of } \left\{ \bigcup_{p \in \mathcal{P}_G} \mathcal{R}(p) \right\} \subset \mathcal{C}^{\text{GIC-E}}.$$

It is interesting to see that our particular choice of the channel prefixing distribution $p(x_k | c_k, s_k, o_k)$ in the above corollary corresponds to a superposition coding approach where $X_k = C_k + S_k + O_k + J_k$. This observation establishes the fact that the noise injection scheme of [15] and the jamming scheme of [16] are special cases of the channel prefixing technique of [14].

The following computationally simple subregion will be used to generate some of our numerical results.

Corollary 13: $\mathcal{R}_2^{\text{GIC-E}} \subset \mathcal{R}^{\text{GIC-E}} \subset \mathcal{C}^{\text{GIC-E}}$, where

$$\mathcal{R}_2^{\text{GIC-E}} \triangleq \text{the convex closure of } \left\{ \bigcup_{p \in \mathcal{P}_{G2}} \mathcal{R}(p) \right\},$$

and

$$\mathcal{P}_{G2} \triangleq \left\{ p \,|\, p \in \mathcal{P}_G,\; |\mathcal{Q}| = 1,\; P_1^s(q) = P_1^o(q) = P_2^s(q) = P_2^o(q) = 0 \text{ for any } Q = q \right\}.$$

Another simplification can be obtained from the following TDMA-like approach.
Here we divide the $n$ channel uses into two parts of lengths $\alpha n$ and $(1 - \alpha) n$, where $0 \leq \alpha \leq 1$ and $\alpha n$ is assumed to be an integer. During the first period, transmitter 1 generates randomized codewords using power $P_1^s(1)$ and transmitter 2 jams the channel using power $P_2^j(1)$. For the second period, the roles of the users are reversed, where the users use powers $P_2^s(2)$ and $P_1^j(2)$. We refer to this scheme as cooperative TDMA (C-TDMA), which achieves the following region.

Corollary 14: $\mathcal{R}^{\text{C-TDMA}} \subset \mathcal{R}^{\text{GIC-E}} \subset \mathcal{C}^{\text{GIC-E}}$, where

$$\mathcal{R}^{\text{C-TDMA}} \triangleq \text{the closure of } \bigcup_{\substack{\alpha \in [0, 1] \\ \alpha P_1^s(1) + (1 - \alpha) P_1^j(2) \leq P_1 \\ \alpha P_2^j(1) + (1 - \alpha) P_2^s(2) \leq P_2}} (R_1, R_2),$$

where

$$R_1 = \frac{\alpha}{2} \left[ \log\left( 1 + \frac{P_1^s(1)}{1 + c_{21} P_2^j(1)} \right) - \log\left( 1 + \frac{c_{1e} P_1^s(1)}{1 + c_{2e} P_2^j(1)} \right) \right]^+, \tag{23}$$

and

$$R_2 = \frac{(1 - \alpha)}{2} \left[ \log\left( 1 + \frac{P_2^s(2)}{1 + c_{12} P_1^j(2)} \right) - \log\left( 1 + \frac{c_{2e} P_2^s(2)}{1 + c_{1e} P_1^j(2)} \right) \right]^+. \tag{24}$$

Proof: This is a subregion of $\mathcal{C}^{\text{GIC-E}}$, where we use a time sharing random variable satisfying $p(q = 1) = \alpha$ and $p(q = 2) = 1 - \alpha$, and utilize the random variables $S_1$ and $S_2$. The proof also follows from the respective single-user Gaussian wiretap channel result [20] with the modified noise variances due to the jamming signals.

In the C-TDMA scheme above, we only add randomness by noise injection at the helper node. However, our cooperative encoding scheme (Corollary 12) allows for the implementation of more general cooperation strategies. For example, in a more general TDMA approach, each user can help the other via both the design of the randomized codebook and channel prefixing (i.e., the noise forwarding scheme described in Section III-B2). In addition, one can develop enhanced transmission strategies with a time-sharing random variable of cardinality greater than 2.

We now provide numerical results for the following subregions of the achievable region given in Corollary 12.
• $\mathcal{R}_2^{\text{GIC-E}}$: Here we utilize both cooperative randomized codebook design and channel prefixing.
• $\mathcal{R}_2^{\text{GIC-E}}$ (rc or cp): Here we utilize either cooperative randomized codebook design (rc) or channel prefixing (cp) scheme at a transmitter, but not both.
• $\mathcal{R}_2^{\text{GIC-E}}$ (ncp): Here we only utilize cooperative randomized codebook design; no channel prefixing (ncp) is implemented.
• $\mathcal{R}^{\text{C-TDMA}}$: This region is an example of utilizing both time-sharing and cooperative channel prefixing.
• $\mathcal{R}^{\text{C-TDMA}}$ (ncp): This region is a subregion of $\mathcal{R}^{\text{C-TDMA}}$, for which we set the jamming powers to zero.

Fig. 4. Numerical results for GIC-E with $c_{12} = c_{21} = 1.9$, $c_{1e} = c_{2e} = 0.5$, $P_1 = P_2 = 10$ (axes: $R_1$ (bps) versus $R_2$ (bps)). The three schemes, the performance of which is given by $\mathcal{R}_2^{\text{GIC-E}}$, $\mathcal{R}_2^{\text{GIC-E}}$ (rc or cp), and $\mathcal{R}_2^{\text{GIC-E}}$ (ncp), have the same performance and outperform the ones represented by $\mathcal{R}^{\text{C-TDMA}}$ and $\mathcal{R}^{\text{C-TDMA}}$ (ncp), which achieve the same region.

Fig. 5. Numerical results for GIC-E with $c_{12} = c_{21} = 0.6$, $c_{1e} = c_{2e} = 1.1$, $P_1 = P_2 = 10$ (axes: $R_1$ (bps) versus $R_2$ (bps)). Only the scheme represented by $\mathcal{R}^{\text{C-TDMA}}$ achieves positive rates.

The first scenario depicted in Fig. 4 shows the gain offered by the cooperative encoding technique, as compared with the various cooperative TDMA approaches. Also, it is shown that cooperative channel prefixing does not increase the secrecy rate region in this particular scenario. In Fig. 5, we consider a channel with a rather capable eavesdropper. In this case, it is straightforward to verify that the corresponding single user channels have zero secrecy capacities.
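The zero single-user secrecy capacity in the Fig. 5 setting, and the positive rate that C-TDMA recovers through jamming, can be checked numerically. The following is a minimal Python sketch, assuming base-2 logarithms in (23) and (24) and assuming that the single-user benchmark is the Gaussian wiretap rate $[\gamma(P) - \gamma(c_e P)]^+$ of [20]. The function names and the particular operating point ($\alpha = 1$, full power for both the codeword and the jammer) are illustrative choices, not taken from the paper.

```python
import math


def gamma(x: float) -> float:
    """gamma(x) = 0.5 * log2(1 + x), as defined in Section II-A."""
    return 0.5 * math.log2(1.0 + x)


def pos(x: float) -> float:
    """[x]^+ = max{0, x}."""
    return max(0.0, x)


def single_user_secrecy_rate(p: float, c_e: float) -> float:
    """Gaussian wiretap rate with unit main-channel gain (assumed benchmark)."""
    return pos(gamma(p) - gamma(c_e * p))


def ctdma_rates(alpha, ps1, pj2, ps2, pj1, c12, c21, c1e, c2e):
    """Evaluate (23) and (24) for one time-sharing fraction and power split.

    (alpha / 2) * log2(1 + x) is written as alpha * gamma(x).
    """
    r1 = alpha * pos(gamma(ps1 / (1 + c21 * pj2))
                     - gamma(c1e * ps1 / (1 + c2e * pj2)))
    r2 = (1 - alpha) * pos(gamma(ps2 / (1 + c12 * pj1))
                           - gamma(c2e * ps2 / (1 + c1e * pj1)))
    return r1, r2


def powers_feasible(alpha, ps1, pj2, ps2, pj1, p1, p2):
    """Average power constraints of Corollary 14."""
    return (alpha * ps1 + (1 - alpha) * pj1 <= p1
            and alpha * pj2 + (1 - alpha) * ps2 <= p2)


# Channel gains and power budgets of Fig. 5.
C12 = C21 = 0.6
C1E = C2E = 1.1
P1 = P2 = 10.0

# Hypothetical operating point: user 1 transmits all the time, user 2 only jams.
no_help = single_user_secrecy_rate(P1, C1E)
r1_nojam, _ = ctdma_rates(1.0, P1, 0.0, 0.0, 0.0, C12, C21, C1E, C2E)
r1_jam, _ = ctdma_rates(1.0, P1, P2, 0.0, 0.0, C12, C21, C1E, C2E)
print(no_help, r1_nojam, r1_jam)
```

With these parameters the single-user rate and the no-jamming C-TDMA rate both evaluate to zero because $c_{1e} > 1$, while the rate with jamming is positive (roughly 0.17 bits per channel use under the stated assumptions).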
However, with the appropriate cooperation strategies between the two interfering users, the two users can achieve non-zero rates (as reported in the figure). In Fig. 6, we consider an asymmetric scenario, in which the first user has a weak channel to the eavesdropper, but the second user has a strong channel to the eavesdropper. In this case, the proposed cooperative encoding technique allows the second user to achieve a positive secure transmission rate, which is not possible by exploiting only the channel prefixing and time-sharing techniques. In addition, by prefixing the channel, the second user can help the first one to increase its secure transmission rate. Finally, we note that for some channel coefficients $\mathcal{R}^{\text{C-TDMA}}$ outperforms $\mathcal{R}_2^{\text{GIC-E}}$, and for some others $\mathcal{R}_2^{\text{GIC-E}}$ outperforms $\mathcal{R}^{\text{C-TDMA}}$. Therefore, in general, the proposed techniques (cooperative randomized codebook design, cooperative channel prefixing, and time-sharing) should be exploited simultaneously, as considered in Corollary 12.

Fig. 6. Numerical results for GIC-E with $c_{12} = 1.9$, $c_{21} = 1$, $c_{1e} = 0.5$, $c_{2e} = 1.6$, $P_1 = P_2 = 10$ (axes: $R_1$ (bps), $R_2$ (bps)). The schemes represented by $\mathcal{R}^{\text{C-TDMA}}$ and $\mathcal{R}^{\text{C-TDMA}}$ (ncp) do not achieve positive rates for user 2.

B. Special Cases

1) The Multiple Access Channel: First, we define a set of probability distributions

\[
\mathcal{P}^{G}_{3} \triangleq \left\{ p \mid p \in \mathcal{P}^{G},\ P_1^s(q) = P_1^o(q) = P_2^s(q) = P_2^o(q) = 0 \text{ for any } Q = q \right\}.
\]

Using this notation, one can easily see that the region $\mathcal{R}^{\text{GIC-E}}$ in Corollary 12 reduces to the following achievable secrecy rate region for the Gaussian Multiple Access Channel with an Eavesdropper (GMAC-E).
\[
\mathcal{R}^{\text{GMAC-E}} \triangleq \text{the closure of} \left\{ \bigcup_{p \in \mathcal{P}^{G}_{3}} \mathcal{R}(p) \right\},
\]

where the expressions in the region $\mathcal{R}(p)$ are calculated for the channel given by $p(y_1, y_e | x_1, x_2)\, \delta(y_2 - y_1)$.

The region $\mathcal{R}^{\text{GMAC-E}}$ generalizes the one obtained in [16] for the two-user case. The underlying reason is that, in the achievable scheme of [16], the users are either transmitting their codewords or jamming the channel, whereas in our approach the users can transmit their codewords and jam the channel simultaneously. In addition, our cooperative TDMA approach generalizes the one proposed in [16], as we allow the two users to cooperate in the design of randomized codebooks and channel prefixing during the time slots dedicated to either one.

2) The Relay-Eavesdropper Channel: In the previous section, we argued that the noise forwarding (NF) scheme of [18] can be obtained as a special case of our generalized cooperation scheme. Here, we demonstrate the positive impact of channel prefixing on increasing the achievable secrecy rate of the Gaussian relay-eavesdropper channel. In particular, the proposed region for the Gaussian IC-E, when specialized to the Gaussian relay-eavesdropper setting, results in

\[
\mathcal{R}^{\text{GRE}} \triangleq \text{closure of the convex hull of} \left\{ \bigcup_{p \in \mathcal{P}^{G}_{4}} \mathcal{R}(p) \right\},
\]

where

\[
\mathcal{P}^{G}_{4} \triangleq \left\{ p \mid p \in \mathcal{P}^{G},\ |\mathcal{Q}| = 1,\ P_1^c(q) = P_1^o(q) = P_2^c(q) = P_2^s(q) = 0 \right\}.
\]

On the other hand, noise forwarding with no channel prefixing (GNF-ncp) results in the following achievable rate.

\[
R^{[\text{GNF-ncp}]} = \Bigg[ \frac{1}{2}\log(1 + P_1) - \frac{1}{2}\log(1 + c_{1e} P_1)
- \min\left\{ \frac{1}{2}\log\left(1 + \frac{c_{21} P_2}{1 + P_1}\right),\ \frac{1}{2}\log\left(1 + \frac{c_{2e} P_2}{1 + c_{1e} P_1}\right) \right\}
+ \min\left\{ \frac{1}{2}\log\left(1 + \frac{c_{21} P_2}{1 + P_1}\right),\ \frac{1}{2}\log(1 + c_{2e} P_2) \right\} \Bigg]^+, \tag{25}
\]

where we choose $X_1 = S_1 \sim \mathcal{N}(0, P_1)$ and $X_2 = O_2 \sim \mathcal{N}(0, P_2)$ in the expression of $R^{[\text{NF}]}$ (see also [18]). The positive impact of channel prefixing is illustrated numerically in the example below.
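Expression (25) can be evaluated directly for given powers and channel gains. The sketch below is a minimal Python rendering of it; the function name `gnf_ncp_rate` and the helper `half_log2` are hypothetical, and base-2 logarithms are assumed (the choice of base does not affect whether the rate is zero).

```python
import math


def half_log2(snr):
    """Return (1/2) * log2(1 + snr); base 2 is an assumption."""
    return 0.5 * math.log2(1.0 + snr)


def gnf_ncp_rate(P1, P2, c21, c1e, c2e):
    """Evaluate the right-hand side of (25) term by term."""
    term_main = half_log2(P1)                        # (1/2) log(1 + P1)
    term_eve = half_log2(c1e * P1)                   # (1/2) log(1 + c1e P1)
    relay_at_rx = half_log2(c21 * P2 / (1.0 + P1))   # shared entry of both minima
    relay_at_eve = half_log2(c2e * P2 / (1.0 + c1e * P1))
    relay_at_eve_clean = half_log2(c2e * P2)

    rate = (term_main - term_eve
            - min(relay_at_rx, relay_at_eve)
            + min(relay_at_rx, relay_at_eve_clean))
    return max(rate, 0.0)                            # the [.]^+ operation
```

For instance, `gnf_ncp_rate(1.0, 1.0, 0.5, 1.0, 1.0)` evaluates to zero: the first two terms cancel and both minima select the same entry.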
First, it is easy to see that the following secrecy rate is achievable with channel prefixing:

\[
R_1 = \left[ \frac{1}{2}\log\left(1 + \frac{P_1}{1 + c_{21} P_2}\right) - \frac{1}{2}\log\left(1 + \frac{c_{1e} P_1}{1 + c_{2e} P_2}\right) \right]^+,
\]

since $(R_1, 0) \in \mathcal{R}^{\text{GRE}}$ (i.e., we set $P_1^s = P_1$ and $P_2^j = P_2$). Now, we let $c_{1e} = c_{2e} = 1$ and $P_1 = P_2 = 1$, resulting in $R^{[\text{GNF-ncp}]} = 0$ and $R_1 > 0$ if $c_{21} < 1$. Indeed, with these values $R_1 = \frac{1}{2}\left[\log\left(1 + \frac{1}{1 + c_{21}}\right) - \log\frac{3}{2}\right]^+$, which is positive exactly when $\frac{1}{1 + c_{21}} > \frac{1}{2}$.

IV. CONCLUSIONS

This work considered the two-user interference channel with an (external) eavesdropper. An inner bound on the achievable secrecy rate region was derived using a scheme that combines cooperative randomized codebook design, channel prefixing, message splitting, and time-sharing techniques. More specifically, our achievable scheme allows the two users to cooperatively construct their randomized codebooks and channel prefixing distributions. Outer bounds were then derived and used to establish the optimality of the proposed scheme in some special cases. For the Gaussian scenario, channel prefixing was used to allow the users to transmit independently generated noise samples using a fraction of the available power. Moreover, as a special case of time sharing, we have developed a novel cooperative TDMA scheme, where a user can add structured and unstructured noise to the channel during the slot allocated to the other user. It is shown that this scheme reduces to the noise forwarding scheme proposed earlier for the relay-eavesdropper channel. In the Gaussian multiple-access setting, our cooperative encoding and channel prefixing scheme was shown to enlarge the achievable regions obtained in previous works. The most interesting aspect of our results is, perhaps, the illumination of the role of interference in cooperatively adding randomness to increase the achievable secrecy rates in multi-user networks.
APPENDIX A
PROOF OF THEOREM 1

Probability of Error Analysis: Below we show that the decoding error probability of user $k$, averaged over the ensemble, can be made arbitrarily small for sufficiently large $n$. This demonstrates the existence of a codebook with the property that $\max(P_{e,1}, P_{e,2}) \le \epsilon$, for any given $\epsilon > 0$. The analysis follows from arguments similar to those given in [2]. See also [17] for joint typical decoding error computations. Here, for any given $\epsilon > 0$, each receiver can decode the corresponding messages given above with an error probability less than $\epsilon$ as $n \to \infty$, if the rates satisfy the following equations.

\[
R_{\mathcal{S}} + R^x_{\mathcal{S}} \le I(\mathcal{S}; Y_1 | \mathcal{S}^c, Q), \quad \forall \mathcal{S} \subset \{C_1, S_1, C_2, O_2\}, \tag{26}
\]

\[
R_{\mathcal{S}} + R^x_{\mathcal{S}} \le I(\mathcal{S}; Y_2 | \mathcal{S}^c, Q), \quad \forall \mathcal{S} \subset \{C_1, O_1, C_2, S_2\}. \tag{27}
\]

Equivocation Computation: We first write the following.

\[
\begin{aligned}
H(W_1, W_2 | \mathbf{Y}_e) &= H(W_{C_1}, W_{S_1}, W_{C_2}, W_{S_2} | \mathbf{Y}_e) \\
&\ge H(W_{C_1}, W_{S_1}, W_{C_2}, W_{S_2} | \mathbf{Y}_e, \mathbf{Q}) \\
&= H(W_{C_1}, W_{S_1}, W_{C_2}, W_{S_2}, \mathbf{Y}_e | \mathbf{Q}) - H(\mathbf{Y}_e | \mathbf{Q}) \\
&= H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathbf{Q}) - H(\mathbf{Y}_e | \mathbf{Q}) \\
&\quad + H(\mathcal{W}, \mathbf{Y}_e | \mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2, \mathbf{Q}) - H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathcal{W}, \mathbf{Y}_e, \mathbf{Q}) \\
&\ge H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathbf{Q}) - H(\mathbf{Y}_e | \mathbf{Q}) \\
&\quad + H(\mathbf{Y}_e | \mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2, \mathbf{Q}) - H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathcal{W}, \mathbf{Y}_e, \mathbf{Q}) \\
&= H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathbf{Q}) - H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathcal{W}, \mathbf{Y}_e, \mathbf{Q}) \\
&\quad - I(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2; \mathbf{Y}_e | \mathbf{Q}),
\end{aligned} \tag{28}
\]

where we use the set notation $\mathcal{W} \triangleq (W_{C_1}, W_{S_1}, W_{C_2}, W_{S_2})$ to ease the presentation, and the inequalities are due to the fact that conditioning does not increase entropy. Here,

\[
H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | \mathbf{Q}) = n \left( R_{C_1} + R^x_{C_1} + R_{S_1} + R^x_{S_1} + R^x_{O_1} + R_{C_2} + R^x_{C_2} + R_{S_2} + R^x_{S_2} + R^x_{O_2} - 6\epsilon_1 \right), \tag{29}
\]

as, given $\mathbf{Q} = \mathbf{q}$, the tuple $(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2)$ has $2^{n(R_{C_1} + R^x_{C_1} + R_{S_1} + R^x_{S_1} + R^x_{O_1} + R_{C_2} + R^x_{C_2} + R_{S_2} + R^x_{S_2} + R^x_{O_2} - 6\epsilon_1)}$ possible values, each with equal probability.

Secondly,

\[
I(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2; \mathbf{Y}_e | \mathbf{Q}) \le n I(C_1, S_1, O_1, C_2, S_2, O_2; Y_e | Q) + n\epsilon_2, \tag{30}
\]

where $\epsilon_2 \to 0$ as $n \to \infty$. See, for example, Lemma 8 of [13].

Lastly, for any $W_{C_1} = w_{C_1}$, $W_{S_1} = w_{S_1}$, $W_{C_2} = w_{C_2}$, $W_{S_2} = w_{S_2}$, and $\mathbf{Q} = \mathbf{q}$, we have

\[
H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | W_{C_1} = w_{C_1}, W_{S_1} = w_{S_1}, W_{C_2} = w_{C_2}, W_{S_2} = w_{S_2}, \mathbf{Y}_e, \mathbf{Q} = \mathbf{q}) \le n\epsilon_3,
\]

for some $\epsilon_3 \to 0$ as $n \to \infty$. This is due to Fano's inequality together with the randomized codebook construction: given all the message (bin) indices of the two users, the eavesdropper can decode the randomization indices among those bins. Due to joint typicality, this latter argument holds as long as the rates satisfy the following equations.

\[
R^x_{\mathcal{S}} \le I(\mathcal{S}; Y_e | \mathcal{S}^c, Q), \quad \forall \mathcal{S} \subset \{C_1, S_1, O_1, C_2, S_2, O_2\}. \tag{31}
\]

This follows because, given the bin indices $W_{C_1}$, $W_{S_1}$, $W_{C_2}$, and $W_{S_2}$, the problem reduces to a MAC probability of error computation among the codewords of those bins. See [17] for details of computing error probabilities in the MAC. Then, averaging over $W_{C_1}$, $W_{S_1}$, $W_{C_2}$, $W_{S_2}$, and $\mathbf{Q}$, we obtain

\[
H(\mathbf{C}_1, \mathbf{S}_1, \mathbf{O}_1, \mathbf{C}_2, \mathbf{S}_2, \mathbf{O}_2 | W_{C_1}, W_{S_1}, W_{C_2}, W_{S_2}, \mathbf{Y}_e, \mathbf{Q}) \le n\epsilon_3. \tag{32}
\]

Hence, using (29), (30), and (32) in (28), we obtain

\[
R_1 + R_2 - \frac{1}{n} H(W_1, W_2 | Y_e^n) \le 6\epsilon_1 + \epsilon_2 + \epsilon_3 \triangleq \epsilon \to 0, \tag{33}
\]

as $n \to \infty$, where we set

\[
R^x_{\mathcal{S}} = I(\mathcal{S}; Y_e | Q), \quad \mathcal{S} = \{C_1, S_1, O_1, C_2, S_2, O_2\}. \tag{34}
\]

Combining (26), (27), (31), and (34), we obtain the result, i.e., $\mathcal{R}(p)$ is achievable for any $p \in \mathcal{P}$.

APPENDIX B
PROOF OF THEOREM 2

We bound $R_1$ below. The bound on $R_2$ can be obtained by following similar steps and reversing the indices 1 and 2. We first state the following definitions.
For any random variable $Y$, $\tilde{Y}^{i+1} \triangleq [Y(i+1) \cdots Y(n)]$, and

\[
I_1 \triangleq \sum_{i=1}^{n} I(\tilde{Y}_e^{i+1}; Y_1(i) | Y_1^{i-1}) \tag{35}
\]

\[
\hat{I}_1 \triangleq \sum_{i=1}^{n} I(Y_1^{i-1}; Y_e(i) | \tilde{Y}_e^{i+1}) \tag{36}
\]

\[
I_2 \triangleq \sum_{i=1}^{n} I(\tilde{Y}_e^{i+1}; Y_1(i) | Y_1^{i-1}, W_1) \tag{37}
\]

\[
\hat{I}_2 \triangleq \sum_{i=1}^{n} I(Y_1^{i-1}; Y_e(i) | \tilde{Y}_e^{i+1}, W_1) \tag{38}
\]

Then, we consider the following bound.

\[
\begin{aligned}
R_1 - \epsilon &\le \frac{1}{n} H(W_1 | \mathbf{Y}_e) \\
&= \frac{1}{n} \left( H(W_1) - I(W_1; \mathbf{Y}_e) \right) \\
&= \frac{1}{n} \left( H(W_1 | \mathbf{Y}_1) + I(W_1; \mathbf{Y}_1) - I(W_1; \mathbf{Y}_e) \right) \\
&\le \epsilon_1 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1; Y_1(i) | Y_1^{i-1}) - \sum_{i=1}^{n} I(W_1; Y_e(i) | \tilde{Y}_e^{i+1}) \right) \\
&= \epsilon_1 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) + I_1 - I_2 - \sum_{i=1}^{n} I(W_1; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \hat{I}_1 + \hat{I}_2 \right) \\
&= \epsilon_1 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \sum_{i=1}^{n} I(W_1; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) \right),
\end{aligned}
\]

where the first inequality is due to Lemma 15, given at the end of this section, the second inequality is due to Fano's inequality at receiver 1 with some $\epsilon_1 \to 0$ as $n \to \infty$, and the last equality is due to the observations $I_1 = \hat{I}_1$ and $I_2 = \hat{I}_2$ (see [14, Lemma 7]).

We define $U(i) \triangleq (Y_1^{i-1}, \tilde{Y}_e^{i+1}, i)$, $V_1(i) \triangleq (U(i), W_1)$, and $V_2(i) \triangleq (U(i), W_2)$. Using standard techniques (see, e.g., [17]), we introduce a random variable $J$, which is uniformly distributed over $\{1, \cdots, n\}$, and continue as below.

\[
\begin{aligned}
R_1 - \epsilon &\le \epsilon_1 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \sum_{i=1}^{n} I(W_1; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) \right) \\
&= \epsilon_1 + \frac{1}{n} \left( \sum_{i=1}^{n} I(V_1(i); Y_1(i) | U(i)) - \sum_{i=1}^{n} I(V_1(i); Y_e(i) | U(i)) \right) \\
&= \epsilon_1 + \sum_{j=1}^{n} I(V_1(j); Y_1(j) | U(j))\, p(J = j) - \sum_{j=1}^{n} I(V_1(j); Y_e(j) | U(j))\, p(J = j) \\
&\le \epsilon_1 + \sum_{j=1}^{n} I(V_1(j); Y_1(j) | V_2(j), U(j))\, p(J = j) - \sum_{j=1}^{n} I(V_1(j); Y_e(j) | U(j))\, p(J = j) \\
&= \epsilon_1 + I(V_1; Y_1 | V_2, U) - I(V_1; Y_e | U),
\end{aligned} \tag{39}
\]

where the last inequality follows from the fact that $V_1(j) \to U(j) \to V_2(j)$, which implies $I(V_1(j); Y_1(j) | U(j)) \le I(V_1(j); Y_1(j) | V_2(j), U(j))$ after using the fact that conditioning does not increase entropy, and the last equality follows by using a standard information theoretic argument in which we define random variables for the single-letter expression, e.g., $V_1$ has the same distribution as $V_1(J)$. Hence, we obtain the bound

\[
R_1 \le \left[ I(V_1; Y_1 | V_2, U) - I(V_1; Y_e | U) \right]^+, \tag{40}
\]

for some auxiliary random variables that factor as $p(u)\, p(v_1 | u)\, p(v_2 | u)\, p(x_1 | v_1)\, p(x_2 | v_2)\, p(y_1, y_2, y_e | x_1, x_2)$.

Lemma 15: The secrecy constraint

\[
R_1 + R_2 - \frac{1}{n} H(W_1, W_2 | \mathbf{Y}_e) \le \epsilon
\]

implies that

\[
R_1 - \frac{1}{n} H(W_1 | \mathbf{Y}_e) \le \epsilon, \quad \text{and} \quad R_2 - \frac{1}{n} H(W_2 | \mathbf{Y}_e) \le \epsilon.
\]

Proof:

\[
\begin{aligned}
\frac{1}{n} H(W_1 | \mathbf{Y}_e) &= \frac{1}{n} H(W_1, W_2 | \mathbf{Y}_e) - \frac{1}{n} H(W_2 | \mathbf{Y}_e, W_1) \\
&\ge R_1 - \epsilon + R_2 - \frac{1}{n} H(W_2 | \mathbf{Y}_e, W_1) \\
&= R_1 - \epsilon + \frac{1}{n} H(W_2) - \frac{1}{n} H(W_2 | \mathbf{Y}_e, W_1) \\
&\ge R_1 - \epsilon,
\end{aligned} \tag{41}
\]

where the second equality follows from $H(W_2) = n R_2$, and the last inequality follows from the fact that conditioning does not increase entropy. The second statement follows from a similar observation.

APPENDIX C
PROOF OF THEOREM 3

From the arguments given in [5, Lemma], the assumed property of the channel implies the following.

\[
I(\mathbf{V}_2; \mathbf{Y}_2 | \mathbf{V}_1) \le I(\mathbf{V}_2; \mathbf{Y}_1 | \mathbf{V}_1) \tag{42}
\]

Then, by considering $V_1(i) = W_1$ and $V_2(i) = W_2$, for $i = 1, \cdots, n$, we get

\[
I(W_2; \mathbf{Y}_2 | W_1) \le I(W_2; \mathbf{Y}_1 | W_1) \tag{43}
\]

We continue as follows.
\[
\begin{aligned}
\frac{1}{n} H(W_1, W_2 | \mathbf{Y}_1) &= \frac{1}{n} H(W_1 | \mathbf{Y}_1) + \frac{1}{n} H(W_2 | \mathbf{Y}_1, W_1) \\
&\le \epsilon_1 + \frac{1}{n} H(W_2 | \mathbf{Y}_1, W_1) \\
&\le \epsilon_1 + \frac{1}{n} H(W_2 | \mathbf{Y}_2, W_1) \\
&\le \epsilon_1 + \frac{1}{n} H(W_2 | \mathbf{Y}_2) \\
&\le \epsilon_1 + \epsilon_2,
\end{aligned} \tag{44}
\]

where the first inequality is due to Fano's inequality at receiver 1 with some $\epsilon_1 \to 0$ as $n \to \infty$, the second inequality follows from (43), the third one holds because conditioning cannot increase entropy, and the last one follows from Fano's inequality at receiver 2 with some $\epsilon_2 \to 0$ as $n \to \infty$.

We then proceed following the standard techniques given in [13], [14]. We first state the following definitions.

\[
I_1 \triangleq \sum_{i=1}^{n} I(\tilde{Y}_e^{i+1}; Y_1(i) | Y_1^{i-1}) \tag{45}
\]

\[
\hat{I}_1 \triangleq \sum_{i=1}^{n} I(Y_1^{i-1}; Y_e(i) | \tilde{Y}_e^{i+1}) \tag{46}
\]

\[
I_3 \triangleq \sum_{i=1}^{n} I(\tilde{Y}_e^{i+1}; Y_1(i) | Y_1^{i-1}, W_1, W_2) \tag{47}
\]

\[
\hat{I}_3 \triangleq \sum_{i=1}^{n} I(Y_1^{i-1}; Y_e(i) | \tilde{Y}_e^{i+1}, W_1, W_2), \tag{48}
\]

where $\tilde{Y}^{i+1} = [Y(i+1) \cdots Y(n)]$ for a random variable $Y$.

Then, we bound the sum rate as follows.

\[
\begin{aligned}
R_1 + R_2 - \epsilon &\le \frac{1}{n} H(W_1, W_2 | \mathbf{Y}_e) \\
&= \frac{1}{n} \left( H(W_1, W_2 | \mathbf{Y}_1) + I(W_1, W_2; \mathbf{Y}_1) - I(W_1, W_2; \mathbf{Y}_e) \right) \\
&\le \epsilon_1 + \epsilon_2 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1, W_2; Y_1(i) | Y_1^{i-1}) - \sum_{i=1}^{n} I(W_1, W_2; Y_e(i) | \tilde{Y}_e^{i+1}) \right) \\
&= \epsilon_1 + \epsilon_2 + \frac{1}{n} \left( I_1 - I_3 - \hat{I}_1 + \hat{I}_3 + \sum_{i=1}^{n} I(W_1, W_2; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \sum_{i=1}^{n} I(W_1, W_2; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) \right) \\
&= \epsilon_1 + \epsilon_2 + \frac{1}{n} \left( \sum_{i=1}^{n} I(W_1, W_2; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \sum_{i=1}^{n} I(W_1, W_2; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) \right),
\end{aligned}
\]

where the first inequality is due to the secrecy requirement, the last inequality follows from (44), and the last equality follows from the fact that $I_1 = \hat{I}_1$ and $I_3 = \hat{I}_3$, which can be shown using arguments similar to [14, Lemma 7].

We define $U(i) \triangleq (Y_1^{i-1}, \tilde{Y}_e^{i+1}, i)$, $V_1(i) \triangleq (U(i), W_1)$, and $V_2(i) \triangleq (U(i), W_2)$.
Using standard techniques (see, e.g., [17]), we introduce a random variable $J$, which is uniformly distributed over $\{1, \cdots, n\}$, and continue as below.

\[
\begin{aligned}
&\frac{1}{n} \left( \sum_{i=1}^{n} I(W_1, W_2; Y_1(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) - \sum_{i=1}^{n} I(W_1, W_2; Y_e(i) | Y_1^{i-1}, \tilde{Y}_e^{i+1}) \right) \\
&\quad = \frac{1}{n} \left( \sum_{i=1}^{n} I(V_1(i), V_2(i); Y_1(i) | U(i)) - \sum_{i=1}^{n} I(V_1(i), V_2(i); Y_e(i) | U(i)) \right) \\
&\quad = \sum_{j=1}^{n} I(V_1(j), V_2(j); Y_1(j) | U(j))\, p(J = j) - \sum_{j=1}^{n} I(V_1(j), V_2(j); Y_e(j) | U(j))\, p(J = j) \\
&\quad = I(V_1, V_2; Y_1 | U) - I(V_1, V_2; Y_e | U),
\end{aligned} \tag{49}
\]

where, using a standard information theoretic argument, we have defined the random variables for the single-letter expression, e.g., $V_1$ has the same distribution as $V_1(J)$.

Now, due to the memoryless property of the channel, we have $(U, V_1, V_2) \to (X_1, X_2) \to (Y_1, Y_2, Y_e)$, which implies $p(y_1, y_2, y_e | x_1, x_2, v_1, v_2, u) = p(y_1, y_2, y_e | x_1, x_2)$. As we define $V_1(J) = (U(J), W_1)$ and $V_2(J) = (U(J), W_2)$, we have $V_1 \to U \to V_2$, which implies $p(v_1, v_2 | u) = p(v_1 | u)\, p(v_2 | u)$. Finally, as $X_1(J)$ is a stochastic function of $W_1$, $X_2(J)$ is a stochastic function of $W_2$, and $W_1$ and $W_2$ are independent, we have $X_1(J) \to V_1(J) \to V_2(J)$, $X_2(J) \to V_2(J) \to V_1(J)$, and $X_1(J) \to (V_1(J), V_2(J)) \to X_2(J)$, which together imply that

\[
p(x_1, x_2 | v_1, v_2, u) = p(x_1, x_2 | v_1, v_2) = p(x_1 | v_1, v_2)\, p(x_2 | v_1, v_2) = p(x_1 | v_1)\, p(x_2 | v_2).
\]

Using this in the above equation, we obtain a sum-rate bound,

\[
R_1 + R_2 \le \left[ I(V_1, V_2; Y_1 | U) - I(V_1, V_2; Y_e | U) \right]^+, \tag{50}
\]

for some auxiliary random variables that factor as $p(u)\, p(v_1 | u)\, p(v_2 | u)\, p(x_1 | v_1)\, p(x_2 | v_2)\, p(y_1, y_2, y_e | x_1, x_2)$, if (9) holds.
APPENDIX D
PROOF OF COROLLARY 4

Achievability follows from Theorem 1 by only utilizing $S_1$ and $O_2$ together with the second equation in (12), where we set $R_2 = 0$ and set $R_1$ as follows. For a given $p \in \mathcal{P}(S_1, O_2)$, if $I(S_1; Y_1 | O_2, Q) \le I(S_1; Y_e | Q)$, we set $R_1 = 0$; otherwise we assign the following rates.

\[
\begin{aligned}
R_{S_1} &= I(S_1; Y_1 | O_2, Q) - I(S_1; Y_e | Q) \\
R^x_{S_1} &= I(S_1; Y_e | Q) \\
R^x_{O_2} &= I(O_2; Y_e | S_1, Q),
\end{aligned}
\]

where $R_1 = R_{S_1}$.

The converse follows from Theorem 2. That is, if $(R_1, R_2)$ is achievable, then

\[
R_1 \le \max_{p \in \mathcal{P}_O} I(S_1; Y_1 | O_2, Q) - I(S_1; Y_e | Q)
\]

and $R_2 = 0$, due to the first condition given in (12).

APPENDIX E
PROOF OF COROLLARY 5

Achievability follows from Theorem 1 by only utilizing $S_1$ and $C_2$ together with the channel condition given in (14). For a given $p \in \mathcal{P}(S_1, C_2)$, if $I(S_1, C_2; Y_1 | Q) \le I(S_1, C_2; Y_e | Q)$, we set $R_1 = R_2 = 0$; otherwise we assign the following rates.

\[
\begin{aligned}
R_{S_1} &= I(S_1; Y_1 | C_2, Q) - I(S_1; Y_e | C_2, Q) \\
R^x_{S_1} &= I(S_1; Y_e | C_2, Q) \\
R_{C_2} &= I(C_2; Y_1 | Q) - I(C_2; Y_e | Q) \\
R^x_{C_2} &= I(C_2; Y_e | Q),
\end{aligned}
\]

where $R_1 = R_{S_1}$ and $R_2 = R_{C_2}$.

The converse follows from Theorem 3, as the needed condition is satisfied by the channel.

APPENDIX F
PROOF OF COROLLARY 6

Achievability follows from Theorem 1 by only utilizing $S_1$ and $O_2$ together with the channel condition given in (15). For a given $p \in \mathcal{P}(S_1, O_2)$, if $I(S_1, O_2; Y_1 | Q) \le I(S_1, O_2; Y_e | Q)$, we set $R_1 = R_2 = 0$; otherwise we assign the following rates.

\[
\begin{aligned}
R_{S_1} &= I(S_1, O_2; Y_1 | Q) - I(S_1, O_2; Y_e | Q) \\
R^x_{S_1} &= I(S_1, O_2; Y_e | Q) - I(O_2; Y_1 | S_1, Q) \\
R^x_{O_2} &= I(O_2; Y_1 | S_1, Q),
\end{aligned}
\]

where $R_1 = R_{S_1}$ and $R_2 = 0$.

The converse follows from Theorem 3, as the needed condition is satisfied by the channel.
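As a consistency check on the rate assignment of Appendix F, the three rates can be summed term by term. The display below is a sketch of that arithmetic, assuming that only $S_1$ and $O_2$ carry rate, so that the relevant constraints are the sum constraint in (26) and the randomization rate in (34) restricted to $\{S_1, O_2\}$.

```latex
\begin{aligned}
R_{S_1} + R^x_{S_1} + R^x_{O_2}
  &= \bigl[ I(S_1, O_2; Y_1 | Q) - I(S_1, O_2; Y_e | Q) \bigr]
   + \bigl[ I(S_1, O_2; Y_e | Q) - I(O_2; Y_1 | S_1, Q) \bigr]
   + I(O_2; Y_1 | S_1, Q) \\
  &= I(S_1, O_2; Y_1 | Q), \\
R^x_{S_1} + R^x_{O_2}
  &= I(S_1, O_2; Y_e | Q).
\end{aligned}
```

Under these assumptions, the first line meets the sum constraint of (26) with equality, and the second line matches the total randomization rate prescribed by (34).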
APPENDIX G
PROOF OF COROLLARY 7

Achievability follows from Theorem 1 by only utilizing $S_1$ and $O_2$ together with the channel condition given in (16). For a given $p \in \mathcal{P}(S_1, O_2)$, if $I(S_1, O_2; Y_1 | Q) \le I(S_1, O_2; Y_e | Q)$, we set $R_1 = R_2 = 0$; otherwise we assign the following rates.

\[
\begin{aligned}
R_{S_1} &= I(S_1, O_2; Y_1 | Q) - I(S_1, O_2; Y_e | Q) \\
R^x_{S_1} &= I(S_1; Y_e | Q) \\
R^x_{O_2} &= I(O_2; Y_e | S_1, Q),
\end{aligned}
\]

where $R_1 = R_{S_1}$ and $R_2 = 0$.

The converse follows from Theorem 3, as the needed condition is satisfied by the channel.

APPENDIX H
PROOF OF COROLLARY 9

For a given MAC-E with $p(y_1, y_e | x_1, x_2)$, we consider an IC-E defined by $p(y_1, y_2, y_e | x_1, x_2) = p(y_1, y_e | x_1, x_2)\, \delta(y_2 - y_1)$ and utilize Theorem 1 with $p \in \mathcal{P}(C_1, C_2)$ satisfying (17). Then, the achievable region becomes

\[
\begin{aligned}
R_1 = R_{C_1} &\le I(C_1; Y_1 | C_2, Q) - R^x_{C_1} \\
R_2 = R_{C_2} &\le I(C_2; Y_1 | C_1, Q) + R^x_{C_1} - I(C_1, C_2; Y_e | Q), \\
R_1 + R_2 = R_{C_1} + R_{C_2} &\le I(C_1, C_2; Y_1 | Q) - I(C_1, C_2; Y_e | Q),
\end{aligned} \tag{51}
\]

where $I(C_1; Y_e | Q) \le R^x_{C_1} \le I(C_1; Y_e | C_2, Q)$ and $R^x_{C_2} = I(C_1, C_2; Y_e | Q) - R^x_{C_1}$. Hence,

\[
R_1 + R_2 = \left[ I(C_1, C_2; Y_1 | Q) - I(C_1, C_2; Y_e | Q) \right]^+
\]

is achievable for any $p \in \mathcal{P}(C_1, C_2)$ satisfying (17).

The following outer bound on the sum rate follows from Theorem 3, as the constructed IC-E satisfies the needed condition of the theorem.

\[
R_1 + R_2 \le I(C_1, C_2; Y_1 | Q) - I(C_1, C_2; Y_e | Q),
\]

for any $p \in \mathcal{P}(C_1, C_2)$, which is what needed to be shown.

APPENDIX I
PROOF OF COROLLARY 10

We first remark that $R^{[\text{NF}]}$ will remain the same if we restrict the union to the set of probability distributions

\[
\tilde{\mathcal{P}}(S_1, O_2, |\mathcal{Q}| = 1) \triangleq \left\{ p \mid p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1),\ I(O_2; Y_e) \le I(O_2; Y_1) \right\}.
\]
This holds because, for any $p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)$ satisfying $I(O_2; Y_e) \ge I(O_2; Y_1)$, we have $R_1(p) = [I(S_1; Y_1 | O_2) - I(S_1; Y_e | O_2)]^+$, since $I(O_2; Y_1) \le I(O_2; Y_e) \le I(O_2; Y_e | S_1)$ in this case. The highest rate achievable with the NF scheme then occurs if $O_2$ is chosen to be deterministic, and hence the case $I(O_2; Y_1) = I(O_2; Y_e)$ results in the highest rate among the probability distributions $p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)$ satisfying $I(O_2; Y_e) \ge I(O_2; Y_1)$. Therefore, without loss of generality, we can write

\[
R^{[\text{NF}]} = \max_{p \in \tilde{\mathcal{P}}(S_1, O_2, |\mathcal{Q}| = 1)} R_1(p),
\]

where

\[
R_1(p) = \left[ I(S_1; Y_1 | O_2) + \min\{ I(O_2; Y_1), I(O_2; Y_e | S_1) \} - I(S_1, O_2; Y_e) \right]^+.
\]

Now, fix some $p \in \tilde{\mathcal{P}}(S_1, O_2, |\mathcal{Q}| = 1)$, and set

\[
\begin{aligned}
R^x_{O_2} &= \min\{ I(O_2; Y_1), I(O_2; Y_e | S_1) \}, \\
R^x_{S_1} &= I(S_1, O_2; Y_e) - \min\{ I(O_2; Y_1), I(O_2; Y_e | S_1) \}, \\
R_{S_1} &= I(S_1; Y_1 | O_2) - I(S_1, O_2; Y_e) + \min\{ I(O_2; Y_1), I(O_2; Y_e | S_1) \},
\end{aligned}
\]

where we set $R_1 = 0$ if the latter is negative. Here,

\[
R_1 = \left[ I(S_1; Y_1 | O_2) + \min\{ I(O_2; Y_1), I(O_2; Y_e | S_1) \} - I(S_1, O_2; Y_e) \right]^+
\]

is achievable, i.e., $(R_1(p), 0) \in \mathcal{R}^{\text{RE}}$ for any $p \in \tilde{\mathcal{P}}(S_1, O_2, |\mathcal{Q}| = 1)$. Observing that $(R^{[\text{NF}]}, 0) \in \mathcal{R}^{\text{RE}}$, we conclude that the noise forwarding (NF) scheme of [18] is a special case of the proposed scheme.

APPENDIX J
PROOF OF COROLLARY 11

For any given $p \in \mathcal{P}(S_1, O_2, |\mathcal{Q}| = 1)$ satisfying $I(O_2; Y_e) \le I(O_2; Y_1)$, we see that $R_1 = [I(S_1, O_2; Y_1) - I(S_1, O_2; Y_e)]^+$ is achievable due to (21) and Corollary 10.
The converse follows from Theorem 3, as the needed condition is satisfied by considering an IC-E defined as $p(y_1, y_e | x_1, x_2)\, \delta(y_2 - y_1)$, where we set $|\mathcal{Q}| = 1$ in the upper bound, as the time-sharing random variable is not needed for this scenario, and further limit the input distributions to satisfy $I(O_2; Y_e) \le I(O_2; Y_1)$. The latter does not reduce the maximization for the upper bound, by reasoning similar to that given in the proof of Corollary 10.

REFERENCES

[1] O. O. Koyluoglu and H. El Gamal, "On the Secrecy Rate Region for the Interference Channel," in Proc. 2008 IEEE International Symposium on Personal, Indoor and Mobile Radio Communications (PIMRC'08), Cannes, France, Sep. 2008.
[2] T. Han and K. Kobayashi, "A new achievable rate region for the interference channel," IEEE Trans. Inf. Theory, vol. 27, no. 1, pp. 49–60, Jan. 1981.
[3] H. Chong, M. Motani, H. Garg, and H. El Gamal, "On the Han-Kobayashi region for the interference channel," IEEE Trans. Inf. Theory, vol. 54, pp. 3188–3195, Jul. 2008.
[4] H. Sato, "The capacity of the Gaussian interference channel under strong interference," IEEE Trans. Inf. Theory, vol. 27, no. 6, pp. 786–788, Nov. 1981.
[5] M. H. Costa and A. El Gamal, "The capacity region of the discrete memoryless interference channel with strong interference," IEEE Trans. Inf. Theory, vol. 33, no. 5, pp. 710–711, Sep. 1987.
[6] X. Shang, G. Kramer, and B. Chen, "A new outer bound and the noisy-interference sum-rate capacity for Gaussian interference channels," IEEE Trans. Inf. Theory, vol. 55, no. 2, pp. 689–699, Feb. 2009.
[7] V. S. Annapureddy and V. V. Veeravalli, "Gaussian interference networks: Sum capacity in the low interference regime and new outer bounds on the capacity region," IEEE Trans. Inf. Theory, vol. 55, no. 7, pp. 3032–3050, Jul. 2009.
[8] A. S. Motahari and A. K. Khandani, "Capacity bounds for the Gaussian interference channel," IEEE Trans. Inf. Theory, vol. 55, no. 2, pp. 620–643, Feb. 2009.
[9] Y. Liang, A. Somekh-Baruch, H. V. Poor, S. Shamai, and S. Verdu, "Cognitive interference channels with confidential messages," in Proc. of the 45th Annual Allerton Conference on Communication, Control and Computing, Monticello, IL, Sep. 2007.
[10] R. Liu, I. Maric, P. Spasojevic, and R. D. Yates, "Discrete memoryless interference and broadcast channels with confidential messages: Secrecy rate regions," IEEE Trans. Inf. Theory, vol. 54, no. 6, pp. 2493–2507, Jun. 2008.
[11] O. O. Koyluoglu, H. El Gamal, L. Lai, and H. V. Poor, "On the secure degrees of freedom in the K-user Gaussian interference channel," in Proc. 2008 IEEE International Symposium on Information Theory (ISIT08), Toronto, ON, Canada, Jul. 2008.
[12] O. O. Koyluoglu, H. El Gamal, L. Lai, and H. V. Poor, "Interference alignment for secrecy," IEEE Trans. Inf. Theory, to appear.
[13] A. D. Wyner, "The Wire-Tap Channel," The Bell System Technical Journal, vol. 54, no. 8, pp. 1355–1387, Oct. 1975.
[14] I. Csiszár and J. Körner, "Broadcast channels with confidential messages," IEEE Trans. Inf. Theory, vol. 24, no. 3, pp. 339–348, May 1978.
[15] R. Negi and S. Goel, "Secret communication using artificial noise," in Proc. 2005 IEEE 62nd Vehicular Technology Conference (VTC-2005-Fall), 2005.
[16] E. Tekin and A. Yener, "The general Gaussian multiple access and two-way wire-tap channels: Achievable rates and cooperative jamming," IEEE Trans. Inf. Theory, vol. 54, no. 6, pp. 2735–2751, Jun. 2008.
[17] T. Cover and J. Thomas, Elements of Information Theory. John Wiley and Sons, Inc., 1991.
[18] L. Lai and H. El Gamal, "The relay-eavesdropper channel: Cooperation for secrecy," IEEE Trans. Inf. Theory, vol. 54, no. 9, pp. 4005–4019, Sep. 2008.
[19] A. Carleial, "Interference channels," IEEE Trans. Inf. Theory, vol. 24, no. 1, pp. 60–70, Jan. 1978.
[20] S. Leung-Yan-Cheong and M. Hellman, "The Gaussian wire-tap channel," IEEE Trans. Inf. Theory, vol. 24, no. 4, pp. 451–456, Jul. 1978.
