Simple, Efficient, and Neural Algorithms for Sparse Coding

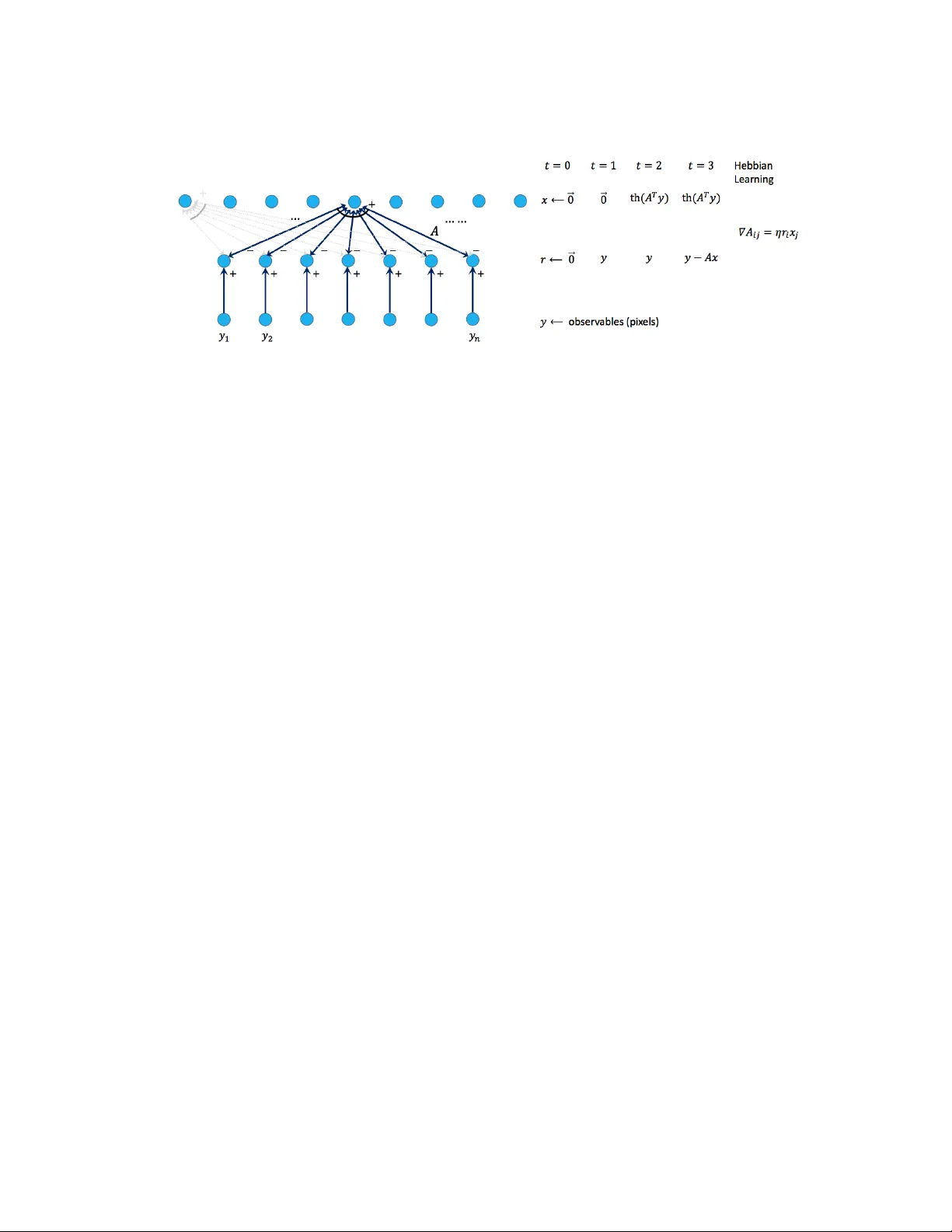

Sparse coding is a basic task in many fields including signal processing, neuroscience and machine learning where the goal is to learn a basis that enables a sparse representation of a given set of data, if one exists. Its standard formulation is as …

Authors: Sanjeev Arora, Rong Ge, Tengyu Ma