Data Fusion by Matrix Factorization

For most problems in science and engineering we can obtain data sets that describe the observed system from various perspectives and record the behavior of its individual components. Heterogeneous data sets can be collectively mined by data fusion. F…

Authors: Marinka v{Z}itnik, Blav{z} Zupan

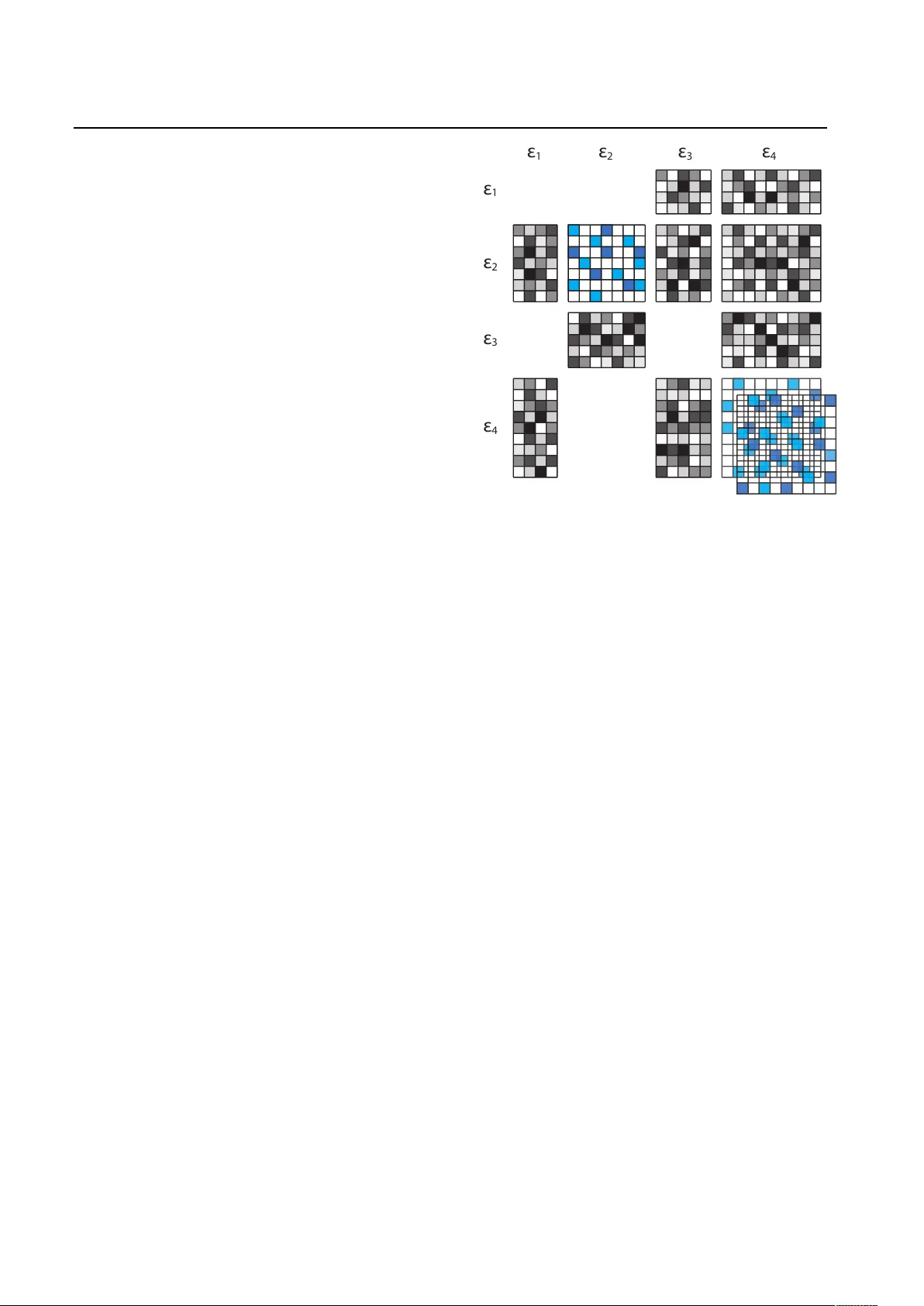

Data F usion b y Matrix F actorization Marink a ˇ Zitnik marinka.zitnik@fri.uni-lj.si F acult y of Computer and Information Science, Universit y of Ljubljana, T r ˇ za ˇ sk a 25, SI-1000 Ljubljana, Slo venia Bla ˇ z Zupan blaz.zup an@fri.uni-lj.si F acult y of Computer and Information Science, Universit y of Ljubljana, T r ˇ za ˇ sk a 25, SI-1000 Ljubljana, Slo venia Department of Molecular and Human Genetics, Baylor College of Medicine, Houston, TX-77030, USA Abstract F or most problems in science and engineer- ing w e can obtain data that descri b e the system from v ari ou s p ersp ectiv es and record the b e haviour of its individual components. Heterogeneous data sources can b e collec- tiv ely mined by data f us i on. F usion can fo- cus on a specific target relation and exploit directly asso ciated data together with data on the context or additional constrain ts. In the pap er w e describe a data fusion approach with pe nal i ze d matrix tri-factorization that sim ultaneously factorizes data matrices to rev eal hidden asso ciations. The approac h can directly consider an y data sets that can b e expressed in a matrix, including those from attribute-based represen tations, on tolo- gies, asso ciations and netw orks. W e dem on- strate its utility on a gene function prediction problem in a case study with eleven di ↵ erent data sources. Our fusion algorithm compares fa vourably to state-of-the-art multiple kernel learning and ac hieves hi gh er accuracy than can b e obtained from any s i ngl e dat a source alone. 1. I ntro duction Data abounds in all areas of human endea vour. Big data ( Cuzzo crea et al. , 2011 ) is not only large in vol- ume and ma y include thousands of features , but it is also heterogeneous. W e may gather v ar i ous data sets that are directly related to the problem, or data sets that are loosely related to our study but could b e useful when combined with other data sets. Con- sider, for example, the exp osome ( Rappaport & Smit h , 2010 ) that encompasses the totality of human endeav- our in the study of disease. Let us sa y that we ex- amine susceptibilit y to a particular disease and hav e access to the patients’ clinical data toget h er with data on their demographics, h abi t s , living en vironments, friends, relatives, and mo vie-watc hing habits and genre on tology . Mining suc h a div erse data collection may rev eal in teresting patterns that w ould remain hidden if considered only directly related, clinical data. What if the disease was less common in living areas with more op en spaces or in en vironments where p eople need to w alk instead of driv e to the nearest gro cer y ? Is the disease less common among those that watc h come- dies and ignore p olitics and news? Metho ds for data fusion can collectiv ely treat data sets and com bine diverse data sources even when they di ↵ er in their conceptual, con textual and typographical rep- resen tation ( Aerts et al. , 2006 ; Bostr¨ om et al. , 2007 ). Individual data sets may b e incomplete, y et becau se of their div ersity and complemen tarity , fusion impro ves the robustness and predictive p erformance of the re- sulting mo dels. Data fusion approaches can b e classified into three main categories according to the modelin g stage at which fusion tak es place ( P avlidis et al. , 2002 ; Sc h¨ olkopf et al. , 2004 ; Maragos et al. , 2008 ; Greene & Cunningham , 2009 ). Early (or ful l) inte gr ati on trans- forms all data sources into a single, feature-based ta- ble and treats this as a single data set. This is theo- retically the most p ow erful scheme of m ultimo dal fu- sion b ecause the inferred mo del can contain an y type of relationships b et ween the features from within and b etw een the data sources. E ar ly integration relies on pro cedures for featur e construction. F or our exposome example, patient-speci fi c data would need to include b oth clinical data and information from the mo vie genre on tologies. The former may b e t ri v i al as this data is already related to each sp ecific patie nt, while the latter requires more complex feature engineerin g. Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization Early integration neglects the modular structure of the data. In late (de cision) inte gr ation , each data source gives rise to a separate mo del. Predictions of these mo dels are fused b y mo del weigh ting. Again, pr ior to mo del inference, it is necessary to transform eac h data s et to enc o d e relations to th e target concept. F or our ex- ample, information on the movie preferences of friends and relatives would need to be mapp ed to disease asso- ciations. Such transformations ma y not be trivial and w ould need to be crafted indep endently for each data source. Once the data are transformed, the fusion can utilize any of the existing ensem ble methods. The youngest branch of data fusion algorit hm s is in- terme diate (p artial) inte gr ation . It relies on algorithms that explicitly address the m ultiplicity of data and fuse them through inference of a join t model. Intermediate in tegration do es n ot fus e th e inp ut dat a, nor do es it de- v elop separate mo dels for each data source. It inst e ad retains the stru ct u r e of the data sources and merges them at the level of a predict i ve mo del. This particul ar approac h is often preferr e d b ecause of its sup erior pre- dictive accuracy ( Pa vlidis et al. , 2002 ; Lanckriet et al. , 2004b ; Gev aert et al. , 2006 ; T ang et al. , 2009 ; v an Vliet et al . , 2012 ), but for a giv en model t yp e, it requires the dev elopment of a new inference algorithm. In this pap er we rep ort on the developmen t of a new metho d for intermediate data fusi on based on con- strained matrix factorization. Our aim w as to con- struct an algorithm that requires only m in i mal trans- formation (if any at all) of input data and can fuse attribute-based represen tations, on tologies, asso ci a- tions and net works. W e b egin with a description of related work and the background of matrix factoriza- tion. W e then present our data fusion algorithm and finally demonst r at e its utilit y in a study comparing it to state-of-the-art intermediate integration with multi- ple kernel learning and early integration with random forests. 2. B a ckground and Related W ork Appro ximate matri x f act or iz at ion estimates a data matrix R as a pro duct of low-rank matrix factors that are found by solving an optimization problem. In tw o- factor decomp osition, R = R n ⇥ m is decomp osed to a pro duct WH ,w h e r e W = R n ⇥ k , H = R k ⇥ m and k ⌧ min( n, m ). A large class of matrix factori za- tion al gori t h ms minimize discrepancy b et ween the ob- serv ed matr i x and its low-rank approximation, such that R ⇡ WH . F or instan ce , SVD, non-negative ma- trix factorization and exp onential fam il y PCA all min- imize Bregman div ergence ( Singh & Gordon , 2008 ). The cost function can b e further extended with v ari- ous constrain ts ( Zhang et al. , 2011 ; W ang et al. , 2012 ). W ang et al. (2008) ( W ang et al. , 2008 )d e v i s e da p enalized matrix tri-factorization to include a set of m ust-link and cannot-link constrain ts. W e exploit th i s approac h to include relations b etw een ob jects of the same t yp e. Const rai nts w ould for i ns t anc e allow us to include informati on from movie genre on tologies or so cial netw ork friendships. Although oft en used in data analysis for dimension- alit y reduction, clustering or lo w-rank appro ximation, there hav e b een few applications of matri x factoriza- tion for data fusion. Lange et al. (2005) ( Lange & Bu h man n , 2005 ) proposed an early integration by non-negative matrix factori zat i on of a target matri x , whic h was a conv ex com bination of similarit y matri- ces obtain ed from m ultiple information sou rc es. Their w ork is similar to t hat of W ang et al. (2012) ( W ang et al. , 2012 ), who applied non-negative matrix tri- factorization with input matr i x completion. Zhang et al. (2012) ( Zhang et al. , 2012 ) prop osed a join t matrix factorization to decomp ose a n umber of data matrices R i in to a common basis matrix W and di ↵ eren t co e ffi cient matrices H i , such that R i ⇡ WH i by m i n i m i z i n g P i || R i WH i || . T hi s is an interme- diate integration approach with di ↵ erent data sources that describ e ob jects of the same type. A similar ap- proac h but with added netw ork-regularized constraints has also been prop osed ( Zhang et al. , 2011 ). Our w ork extends these t wo approac hes by simultaneously deal- ing with heterogeneous data sets and ob jects of di ↵ er- en t t yp es. In the paper we use a v ariant of three-factor matrix factorization that decomposes R into G 2 R n ⇥ k 1 , F 2 R m ⇥ k 2 and S 2 R k 1 ⇥ k 2 suc h that R ⇡ GSF T ( W ang et al. , 2008 ). Appro ximation can b e rewritten such that en try R ( p, q ) is approximated by an inner pro duct of the p -th row of matrix G and a linear combination of the columns of matrix S , w eighted by the q -th column of F . The matrix S , which has relat i vely few vectors compared to R ( k 1 ⌧ n , k 2 ⌧ m ), is used to represen t man y data vectors, and a go o d approximation can on l y b e achiev ed in the presence of the latent structure in the original data. W e are currently witnessing increasi n g interest in the join t treatment of heterogeneous data sets and th e emergence of approac hes sp e ci fic al ly designed for data fusion. These include canonical correlation analy- sis ( Chaudhuri et al. , 2009 ), combining man y inter- action netw orks into a comp osit e netw ork ( Mostafavi & Morris , 2012 ), multiple graph clustering with l in ked Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization matrix factorization ( T ang et al. , 2009 ), a mixture of Mark ov chains associ at ed with di ↵ erent graphs ( Zhou & Burges , 2007 ), dep en de nc y -se eking clustering algo- rithms with v ariational Ba yes ( Klami & Kaski , 2008 ), laten t f act or analysis ( Lop es et al. , 2011 ; Lutt i n en &I l i n , 2009 ), nonparametric Bay es ens e mble learn- ing ( Xing & Dunson , 2011 ), approac hes based on Ba yesian theory ( Zhang & Ji , 2006 ; Alexeyenk o & Sonnhammer , 2009 ; Huttenhow er et al. , 2009 ), neural net works ( Carp enter et al. , 2005 ) and mo dule guided random forests ( Chen & Zhang , 2013 ). These ap- proac hes either fuse input data (early integration) or predictions (late in tegration) and do not directly com- bine heterogeneous representation of ob jects of d i ↵ er- en t t yp es. A state- of- th e- art approach to in termediate data in- tegration is kernel-based learning. Multiple k ernel learning (MKL) has b een pioneered by Lanc kriet et al. (2004) ( Lanckriet et al. , 2004a ) and is an ad di t i ve extension of single k ernel SVM to incorp orate mul- tiple k ernels in classification, regression and cl u st e r- ing. The MKL assumes that E 1 ,..., E r are r di ↵ erent representations of the same set of n ob jects. Exten- sion from single to m ultiple data sources is ac hieved b y additive com bination of k ernel matrices, giv en b y ⌦ = P r i =1 ✓ i K i 8 i : ✓ i 0 , P r i =1 ✓ i =1 , K i ⌫ 0 , where ✓ i are w eights of the kernel matrices, is a p a- rameter determining the norm of constraint p osed on co e ffi cients (for L 2 ,L p -norm MKL, see ( Kloft et al. , 2009 ; Y u et al. , 2010 ; 2012 )) and K i are normal- ized kernel matrices centered in the Hilbert space. Among other improv emen ts, Y u et al. (2010) ex- tended the framework of t h e MKL in Lanckriet et al. (2004) ( Lanckriet et al. , 2004a ) by opt i mi z in g v ari- ous norms in the dual problem of SVMs that allows non-sparse optimal kernel co e ffi cients ✓ ⇤ i .T h e h e t - erogeneity of data sources in the MKL is resolved by transforming di ↵ eren t ob ject types and data struc- tures (e.g., strings, vectors, graphs) into k ernel ma- trices. These transformations dep end on the choice of the kernels , whic h in turn a ↵ ect the metho d’s p erfor- mance ( Debnath & T ak ahashi , 2004 ). 3. Da t a F usion Algorithm Our data fusion algorithm considers r ob ject t yp es E 1 ,..., E r and a collection of data sources, each relat- ing a pair of ob ject types ( E i , E j ). In our introductory example of t h e exp osome, ob ject t yp es could b e a pa- tien t, a disease or a living environmen t, among others. If there are n i ob jects of t yp e E i ( o i p is p -th ob je ct of ty p e E i ) and n j ob jects of type E j ,w er e p r e s e n tt h e observ ations from th e data source that relates ( E i , E j ) ε 1 ε 2 ε 3 ε 4 ε 1 ε 2 ε 3 ε 4 Figure 1. C o n ce p tu a l fusion configuration of four ob ject ty p e s , E 1 , E 2 , E 3 and E 4 . Data so u rce s relate pairs of ob- ject t yp es (matrices with shades of gray). F or example, data matrix R 42 relates ob ject types E 4 and E 2 . Some re- lations are missing; there is no d at a source relating E 3 and E 1 . Constraint matrices (in blue) relate ob jects of the same t yp e. In our example, constraints are provided for ob ject ty p e s E 2 (one constraint matrix) and E 4 (tw o constrain t matrices). for i 6 = j in a sparse m atr i x R ij 2 R n i ⇥ n j . An exam- ple of such a matrix w ould b e one that relates patien ts and drugs by rep orting on their current prescriptions. Notice that in general matrices R ij and R ji need not b e symmetric. A data source that provides relations b etw een ob jects of the same type E i is represented by a constrain t matrix ⇥ i 2 R n i ⇥ n i . Examples of suc h constraints are so cial netw orks and drug interactions. In real-w orld scenarios we migh t not hav e access to relations b etw een all pairs of ob ject typ e s. Our data fusion algorit hm still in tegrates all av ailable data if an underlying graph of relations b et ween ob ject types is connected. Fig. 1 shows an example of a blo ck config- uration of the fusion system with four ob ject types. T o re t ain th e blo c k structure of our syste m, w e prop ose the sim ultaneous factorization of all relation matrices R ij constrained b y ⇥ i . The resulting system c ontains factors that are sp ecific to eac h data source and fac- tors that are sp ecific to each ob ject type. Through factor sh ar in g we fuse the data but also iden tify source- sp ecific pattern s. The prop osed fusion approach is di ↵ erent from treat- ing an entire system (e.g., from Fig. 1 ) as a large single Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization matrix. F actorization of suc h a matrix would yield fac- tors that are not ob ject type-sp ecific and would thus disregard th e structure of the sys te m. W e also show (Sec. 5.5 ) that suc h an approach is inferior in terms of predictive perfor man ce. W e apply data fusion to infer relations betw een tw o target ob ject t yp es, E i and E j (Sec. 3.4 and Se c . 3.5 ). This relation, enco ded in a target matrix R ij ,w i l l b e observed in the context of all other data source s (Sec. 3.1 ). W e assume that R ij is a [0 , 1]-matrix that is only partially observ ed. Its entries indicate a de- gree of relation, 0 denoting no relation an d 1 denot- ing the strongest relation. W e aim to predict u n ob- serv ed en tries in R ij b y reconstructing them through matrix factorization. Such treatment in general ap- plies to m ulti-class or m ulti-lab el classification tasks, whic h are con venien tly addressed b y m ultiple kernel fusion ( Y u et al. , 2010 ), with whic h w e compare our p erformance in this pap er . 3.1. F actorization An input to data fusion is a relation blo ck matrix R that conceptually represents all relation matrices: R = 2 6 6 6 4 0R 12 ··· R 1 r R 21 0 ··· R 2 r . . . . . . . . . . . . R r 1 R r 2 ··· 0 3 7 7 7 5 . (1) Ab l o c ki nt h e i -th row and j -th column ( R ij ) of ma- trix R represents the relationship betw een ob ject type E i and E j .T h e p -th ob ject of t yp e E i (i.e. o i p ) and q -th ob ject of type E j (i.e. o j q ) are related by R ij ( p, q ). W e additionally consider constraints relating ob j ec t s of the same typ e. Several data sources may b e av ailable for each ob ject type. F or instance, p ersonal relations ma y b e observed from a so cial netw ork or a family tree. Assume th er e are t i 0 data sources for ob ject t yp e E i represented b y a set of constraint matrices ⇥ ( t ) i for t 2 { 1 , 2 ,...,t i } . Constraints are collectively enco ded in a set of constraint blo ck diagonal matrices ⇥ ( t ) for t 2 { 1 , 2 ,..., max i t i } : ⇥ ( t ) = 2 6 6 6 6 4 ⇥ ( t ) 1 0 ··· 0 0 ⇥ ( t ) 2 ··· 0 . . . . . . . . . . . . 00 ··· ⇥ ( t ) r 3 7 7 7 7 5 . (2) The i -th blo c k along the main diagonal of ⇥ ( t ) is zero if t>t i . En tries in constraint matrices are positive for ob jects that are not similar and negativ e for ob ject s that are similar. The former are kno wn as cannot-link constraints b ecause they imp ose p enalties on the cur- ren t appro ximation of t he matrix factors, and the lat- ter are must-link constraints, which are rewards that reduce the v alue of the cost function during optimiza- tion. The block matrix R is tri-factorized into blo c k matrix factors G and S : G = 2 6 6 6 4 G n 1 ⇥ k 1 1 0 ··· 0 0G n 2 ⇥ k 2 2 ··· 0 . . . . . . . . . . . . 00 ··· G n r ⇥ k r r 3 7 7 7 5 , S = 2 6 6 6 4 0S k 1 ⇥ k 2 12 ··· S k 1 ⇥ k r 1 r S k 2 ⇥ k 1 21 0 ··· S k 2 ⇥ k r 2 r . . . . . . . . . . . . S k r ⇥ k 1 r 1 S k r ⇥ k 2 r 2 ··· 0 3 7 7 7 5 . (3) A factorization rank k i is assigned to E i during infer- ence of the factorized system. F actors S ij define the relation betw een ob ject typ es E i and E j , wh i l e factors G i are s p ecific to ob jects of type E i and are used in the reconstruction of every relation with this ob ject t yp e. In this wa y , eac h relation matrix R ij obtains its o wn factoriz ati on G i S ij G T j with factor G i ( G j ) that is shared across relations whic h inv olve ob ject types E i ( E j ). This can also b e observ ed from the blo ck struc- ture of the reconstructed system GSG T : 2 6 6 6 4 0G 1 S 12 G T 2 ··· G 1 S 1 r G T r G 2 S 21 G T 1 0 ··· G 2 S 2 r G T r . . . . . . . . . . . . G r S r 1 G T 1 G r S r 2 G T 2 ··· 0 3 7 7 7 5 . (4) The ob jective function minimized by penalized matrix tri-factorization ensures go o d appro ximation of the in- put data and adherence to must-link and cannot-link constraints. W e extend it to include m ultiple con- straint matrices for eac h ob ject type: min G 0 || R GSG T || + max i t i X t =1 tr( G T ⇥ ( t ) G ) , (5) where || · || and tr( · ) denote the F rob enius norm and trace, resp ecti vely . F or decomposi t ion of relation matrices we derive up dating rules based on the p enalized matrix tri- factorization of W ang et al. (2008) ( W ang et al. , 2008 ). The algorithm for solving the optimization problem in Eq. ( 5 ) initializes matrix factors (see Sec. 3.6 ) and im- pro ves them iteratively . Successiv e up date s of G i and S ij con verge to a lo cal minimum of the optimization problem. Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization Multiplicative u p dating rules are derived from W ang et al. (2008) ( W ang et al. , 2008 ) b y fixing one matrix factor (i.e. G ) and considering the roots of the par- tial deriv ative with resp ect to the other matrix factor (i.e. S , and vice-versa) of the Lagrangian function. The latter is constru ct e d from the ob jective function giv en in Eq. ( 5 ). The up date rule alternates b etw een first fixing G and up dating S , and then fixin g S and up dating G , until conv ergence. The up date rule for S is: S ( G T G ) 1 G T RG ( G T G ) 1 (6) The rule for factor G incorporates data on constrain ts. W e updat e each eleme nt of G at p osition ( p, q )b y mu l t i p l y i n g i t w i t h : v u u t ( RG S ) + ( p,q ) +[ G ( SG T GS ) ] ( p,q ) + P t (( ⇥ ( t ) ) G ) ( p,q ) ( RG S ) ( p,q ) +[ G ( SG T GS ) + ] ( p,q ) + P t (( ⇥ ( t ) ) + G ) ( p,q ) . (7) Here, X + ( p,q ) is defined as X ( p, q )i fe n t r y X ( p, q ) 0 else 0. Similarly , the X ( p,q ) is X ( p, q )i f X ( p, q ) 0 else it is set to 0. Therefore, both X + and X are non-negative matrices. This definition is applied to matrices in Eq. ( 7 ), i.e. RG S , SG T GS , and ⇥ ( t ) for t 2 { 1 , 2 ,..., max i t i } . 3.2. Stopping Criteria In this paper we apply data fu si on to infer relations b etw een t wo tar get ob ject types, E i and E j .W e h e n c e define the stopping criteria that observes conv ergence in approximating only the target matrix R ij . Our con- v ergence criteria is: || R ij G i S ij G T j || < ✏ , (8) where ✏ i s a user-defined parameter, p ossibly refined through observing log en tries of several runs of the fac- torization algorithm. In our exp eriments ✏ was set to 10 5 . T o reduc e the computational load, the conv er- gence criteria was assessed only ev ery fifth iteration only . 3.3. P arameter Estimation T o fuse data sources on r ob ject t yp es, it is necessary to estimate r factorization ranks, k 1 ,k 2 ,...,k r . These are chosen from a predefined interv al of possible v alues for each rank b y esti m ati n g the mo del quality . T o reduce the num b er of needed factorization runs w e mimic the bisection metho d by first testing rank v alues at the midpoint and borders of sp ecified ranges and then for each rank v alue selecting the subinterv al for whic h b etter model quality was ac hieved. W e ev aluate the mo dels through the explained v ariance, the residual sum of squares (RSS) and a measure based on the cophenetic correlation co e ffi cien t ⇢ . W e compute these measures from the target relation matrix R ij . The RSS is c om- puted from observed entries in R ij as RSS( R ij )= P ( o i p ,o j q ) 2 A ( E i , E j ) ⇥ ( R ij G i S ij G T j )( p, q ) ⇤ 2 , where A ( E i , E j ) is the set of known asso ciations b et ween ob jects of E i and E j . The explained v ariance for R ij is r 2 ( R ij )=1 RSS( R ij ) / P p,q [ R ij ( p, q )] 2 . The cophenetic correlation score was implemen ted as describ ed in ( Brunet et al. , 2004 ). W e assess the three qualit y scores through internal cross-v alidation and observe ho w r 2 ( R ij ), RSS( R ij ) and ⇢ ( R ij ) v ary as factorization ranks c hange. W e select ranks k 1 ,k 2 ,...,k r where the cophenetic co ef- ficien t b egins to fall, the explained v ariance is high and the RSS curve sho ws an inflection p oint ( Hutc hins et al. , 2008 ). 3.4. Prediction from Matrix F actors The approximate relation matrix b R ij for the target pair of ob ject types E i and E j is reconstructed as: b R ij = G i S ij G T j . (9) When the mo del is requested to prop ose relat i on s for a new ob ject o i n i +1 of t yp e E i that w as not included in the training data, we need to estimate its factorized representation and use the resulting factors for predic- tion. W e formulate a non-negative linear least-squares (NNLS) and solve it with an e ffi cien t in terior point Newton-like metho d ( V an Benthem & Keenan , 2004 ) for min x 0 || ( SG T ) T x o i n i +1 || 2 ,w h e r e o i n i +1 2 R P n i is the original description of ob ject o i n i +1 across all a v ailable relation matrices an d x 2 R P k i is its fac- torized representation. A solution vector x T is added to G and a new b R ij 2 R ( n i +1) ⇥ n j is computed using Eq. ( 9 ). W e would like to identify ob ject pairs ( o i p ,o j q ) for which the predicted degree of relation b R ij ( p, q ) is unusually high. W e are interested in candidate pairs ( o i p ,o j q ) for which the estimated association score b R ij ( p, q )i s greater than the mean estimated score of all known relations of o i p : b R ij ( p, q ) > 1 |A ( o i p , E j ) | X o j m 2 A ( o i p , E j ) b R ij ( p, m ) , (10) where A ( o i p , E j ) is the set of all ob jects of E j related to o i p . Notice that this rule is row-cen tric, that is, given an ob ject of t yp e E i , it searc hes for ob je ct s of the oth er ty p e ( E j ) that it could b e related to. W e can mo dify Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization the ru l e to become column-centric, or even combine the tw o rules. F or example, let us consider that we are studying dis- ease predisp ositions for a set of patients. Let the pa- tien ts b e ob jects of t yp e E i and diseases ob jects of t yp e E j . A patient-cen tric rule w ould consider a patient and his medical history and through Eq. ( 10 ) propose a set of new disease associat ions . A disease-ce ntric rule w ould instead consider all patien ts already associ ate d with a sp ecific disease and iden tify ot h er patien ts with a su ffi ciently high association score. In our exp eriments we c ombine ro w-centric and column-centric approaches. W e first apply a ro w- cen tric approac h to iden tify candidates of t yp e E i and then estimate the strength of asso ciation to a sp ecific ob ject o j q b y reporting a p ercentile of asso ciation score in the distribution of scores for all true associations of o j q , that is, by considering the scores in the q -ed column of b R ij . 3.5. An Ensem ble Approach to Prediction Instead of a single mo del, we construct an ensemble of factorization mo dels. The resulting matrix factors in eac h mo del di ↵ er due to the initial random conditions or small random p erturbations of selected factorization ranks. W e use each factorization system for infer en ce of associat i ons (Sec. 3.4 ) and then select the candidate pair through a ma jority vote. That is, the rule from Eq. ( 10 ) must apply in more than one half of f act or iz ed systems of the ensemble. Ensem bles impro ved the pre- dictive accuracy and stability of the factorized system and the robustness of the results. In our exp eriments the ensembles combined 15 factorizati on mo dels. 3.6. Matrix F actor Initializati on The inference of the fac tor i ze d system in Sec. 3.1 is sensitive t o the initialization of factor G . Prop er ini- tialization sidesteps the issue of lo cal con vergence and reduces the num b er of iterations needed to obtain ma- trix factors of equal quality . W e initialize G by sepa- rately initializing eac h G i , using algorithms for single- matrix factorization. F actors S are computed from G (Eq. ( 6 )) and do not require initialization. W ang et al. (2008) ( W ang et al. , 2008 ) and several other authors ( Lee & Seung , 2000 ) use simple random initialization. Other more informed initialization algo- rithms include random C ( Albrigh t et al. , 2006 ), ran- dom Acol ( Albri ght et al. , 2006 ), non-negative double SVD and its v ariants ( Boutsidis & Gallop oulos , 2008 ), and k -means clustering or relaxed SVD-cen troid ini- tialization ( Al b ri ght et al. , 2006 ). W e show that the latter approaches are indeed b ett er ov er a random ini- tialization (Sec. 5.4 ). W e use random Acol in our case study . Random Acol computes eac h column of G i as an element-wise av erage of a random subset of columns in R ij . 4. Exp erimen ts W e considered a gene function prediction problem from molecular biology , where recent tec hnological ad- v ancemen ts hav e al l ow ed researchers to collect large and div erse exp erimental data sets. D at a integration is an imp or t ant aspect of bioinformatics and the s ub - ject of muc h recent research ( Y u et al. , 2010 ; V aske et al. , 2010 ; Mor eau & T ranchev en t , 2012 ; Mostafavi & Morris , 2012 ). It is expected that v ar iou s data sources are complementary and that high accuracy of predic- tions can b e achiev ed through data fusion ( Parikh & P olik ar , 2007 ; P andey et al. , 2010 ; Sa v age et al. , 2010 ; Xing & Dunson , 2011 ). W e studie d the fusi on of eleven di ↵ eren t data sources to predict gene function in the so cial amoeba Dictyostelium disc oideum and rep ort on the cross-v alidated accuracy for 148 gene annotation terms (classes). W e compare our data fusion algorithm to an early in- tegration b y a random forest ( Boulesteix et al. , 2008 ) and an in termediate in tegration b y m ultiple kernel learning (MKL) ( Y u et al. , 2010 ). Kernel-based fu- sion used a multi-class L 2 norm MKL with V apnik ’ s SVM ( Y e et al. , 2008 ). The MKL was formulated as a second order cone program (SOCP) and its dual prob- lem w as solved by the conic optimization solv er Se- DuMi 1 . Random for es t s from the Orange 2 data min- ing suite w ere used with default parameters. 4.1. Data The social amo eb a D. disc oideum , a p opular model or- ganism in biomedical res ear ch, has ab out 12 , 000 genes, some of which are functionally annotated with terms from Gen e Ontology 3 (GO). The annotation is rat he r sparse and only ⇠ 1 , 400 genes hav e exp erimentally de- riv ed annotations. The Dictyostelium comm unity can th us gain from computational mo dels with accurate function predicti on s. W e observ ed six ob ject types: genes (t yp e 1), ontology terms (type 2), experimental conditions (type 3), pub- lications from the PubMed database (PMID) (type 4), Medical Sub ject Headings (MeSH) descriptors (t yp e 1 http://sedumi.ie.lehigh.edu 2 http://orange.biolab.si 3 http://www.geneontology.org Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization 5), and KEGG 4 path wa ys (type 6). Ob ject types and their observed relat i on s are shown in Fig. 2 .T h e data included gene expression measured during di ↵ er- en t time-p oints of a 24-hour developmen t cycle ( Parikh et al. , 2010 )( R 13 , 14 exp erimental conditions), gene annotations with ex p erimental evidence co de to 148 generic slim terms from the GO ( R 12 ), PMIDs and their associated D. disc oideum genes from Dictybase 5 ( R 14 ), genes participating in KEGG pathw ays ( R 16 ), assignments of MeSH descriptors to publications from PubMed ( R 45 ), references to published work on asso- ciations b etw een a sp ecific GO term and gene pro duct ( R 42 ), and asso ciations of enzymes inv olved in KEGG path wa ys and related to GO terms ( R 62 ). T o bal anc e R 12 , our target relation matrix, w e added an equal n umber of non-asso ciations for which there is no evidence of an y type in the GO. W e constrain our system by considering gene interaction scores from STRING v9.0 6 ( ⇥ 1 ) and sli m term similarity scores ( ⇥ 2 ) computed as 0 . 8 hops , where hops is the length of the shortest path b etw een tw o terms in the GO graph. Similarly , MeSH descriptors are constrained with the a verage num b er of hops in the MeSH hierarch y b e- t ween each pair of descriptors ( ⇥ 5 ). Constraints b e- t ween KEGG pathw ays corresp ond to the num b er of common ortholog groups ( ⇥ 6 ). The slim subset of GO terms w as used to limit the optimization complexity of the MK L and the num b er of v ariables in the SOCP , and to ease the computational bur de n of early inte- gration b y random forests, which inferred a separat e mo del for eac h of the terms. To s t u d y t h e e ↵ ects of data sparseness, we con d uc te d an exp eriment in which we selected either the 100 or 1000 most GO-annotated genes (see second column of T able 1 for sparsity). In a separate exp eri ment we examined prediction s of gene asso ciation with any of nine GO t e rm s that are of sp ecific relev ance to the curren t research in the Dic- tyostelium comm unity (up on consultations with Gad Shaulsky , Ba ylor College of Medicine, Houston, TX; see T able 2 ). Instead of using a generic slim subset of terms, we examined the predictions in the con text of a complete set of GO terms. This resulted in a data set with ⇠ 2 . 000 terms, eac h term having on av erage 9 . 64 direct gene annotations. 4 http://www.kegg.jp 5 http://dictybase.org/Downloads 6 http://string- db.org Gene PMID R 14 Experimental Condition R 13 Θ 1 GO T erm R 12 KEGG Pathway R 16 Θ 2 MeSH Descriptor R 45 R 42 Θ 5 Θ 6 R 62 Figure 2. T h e fusion confi g u ra t io n . Nodes represent ob ject t yp es used in our study . Edges correspond to relation and constraint matrices. The arc that represen ts the target matrix R 12 and its o b jec t types are highlighted. 4.2. Scoring W e estimated the qualit y of inferred mo dels by ten- fold cross-v alidation. In each iteration, we split the gene set to a train and test s et . The data on genes from the test set was entirely omitted from the t r ai n- ing data. W e dev elop ed prediction mo dels from the training data and tested them on the genes from the test set. The p erfor man ce was ev aluated using an F 1 score, a harmonic mean of precision and recall, which w as a veraged across cross-v alidation runs. 4.3. Prepro cessing for Kernel-Based F usion W e generated an RBF k ernel for gene expression mea- surements f r om R 13 with width =0 . 5 and the RBF function ( x i , x j )=e x p ( || x i x j || 2 / 2 2 ), and a linear kernel for [0 , 1]-protein-in teraction matrix from ⇥ 1 . Kernels w ere applied to data mat ri ce s. W e used a linear kernel to generate a kernel matrix from D. disc oideum sp ecific genes that participate in path wa ys ( R 16 ), and a k ernel matrix from PMIDs and their as- so ciated genes ( R 14 ). Sev eral data sources describ e relations b etw een ob ject t yp es other than genes. F or k ernel-based fusion we had to transform them to ex- plicitly relate to genes. F or instance, to relate genes and MeSH descriptors, w e counted the n umber of Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization publications that w ere asso ciated with a sp ecific gene ( R 14 ) and were assigned a sp ecific MeSH descriptor ( R 45 , see also Fig. 2 ). A linear kernel was applied to the resulting matrix. Kernel matrices that incor- p orated relations b et ween KEGG pathw a ys and GO terms ( R 62 ), and publications and GO terms were ob- tained in similar fashion. T o represen t the hierarc hical structure of MeS H de- scriptors ( ⇥ 5 ), the semantic struc tu r e of the GO graph ( ⇥ 2 ) and ortholog groups that correspond to KEGG path wa ys ( ⇥ 6 ), we considered the ge ne s as no des in three distinct large weigh ted graphs. In the graph for ⇥ 5 , the link betw een t wo genes was weigh ted b y the similarity of their asso ciated sets of MeSH descrip- tors using information from R 14 and R 45 . W e consid- ered the M eS H hierarch y to measure these similarities. Similarly , for th e graph for ⇥ 2 w e considered the GO seman tic structure in computing similarities of sets of GO terms asso ciated with genes. In the graph for ⇥ 6 , the gene edges w ere weigh ted by the num b er of com- mon KEGG ortholog groups. Kernel matrices were constructed with a di ↵ us ion kernel ( K ondor & Laf- fert y , 2002 ). The resulting kernel matrices were centered and n or - malized. In cr oss -v alidation, only the training part of the matrices w as preprocessed for learning, while pre- diction, cen tering and normalization were performed on the en tire data set. The prediction task was de- fined through the classification matrix of genes and their asso ciated GO slim terms from R 12 . 4.4. Prepro cessing for Early Integration The gene-related data matrices prepared for kernel- based fusion w ere also u sed for early integration and w ere concaten at ed in to a single tab l e. Eac h row in the table r e pr es ented a gene profile obtained from all a v ailable data sour ce s. F or our case study , each gene w as characterized by a fixed 9 , 362-dimensional feature v ector. F or eac h GO slim term, w e then separately dev elop ed a classifier with a random forest of classifi- cation trees and rep orted cross-v alidated results. 5. Res ul ts and Discussion 5.1. Predictiv e Performance T able 1 presen ts the cross-v alidated F 1 scores the data set of slim terms. The accuracy of our matrix factor- ization approach is at least comparable to MKL and substantially higher than that of early integration by random forests. The p erformance of all three fusion approac hes improv ed when more genes and hence more data w ere included in the study . Adding genes with sparser profiles also increased the ov erall data sparsity , to which the factorizat i on approac h was least sensitiv e. The accuracy f or nine selected GO terms is given in T able 2 . Our factorization approach yields consis- ten tly higher F 1 scores than the other tw o approac hes. Again, the early integration by random forests is in- ferior to b ot h intermediate integration metho ds. No- tice that, with one or tw o exceptions, F 1 scores are v ery h i gh. This is imp ortant, as all nine gene pro- cesses and functions observed are relev ant for current research of D. disc oideum where the metho ds for data fusion can yield new candidate genes for fo cused ex- p erimental studies. Our fusion approac h is faster than multiple kernel learning. F actorization required 18 minutes of run time on a standard desktop computer c omp are d to 77 min- utes for MKL t o finish one iteration of cross-v alid at ion on a whole-genome data set. Ta b l e 1 . Cross-v alidated F 1 scores for fusion by matrix fac- torization (MF), a kernel-based metho d (MKL) and ran- dom forests (RF). D. disc oideum task MF MKL RF 100 genes 0.799 0.781 0.761 1000 genes 0.826 0.787 0.767 Whole genome 0.831 0.800 0.782 5.2. Sensitivit y to Inclusion of Data Sources Inclusion of additional data sources impro ves the ac- curacy of prediction mo d el s . W e illustrate this in Fig. 3(a) , where we started with only the target data source R 12 and then added either R 13 or ⇥ 1 or b oth. Similar e ↵ ects were observed when w e studied other com binations of data sources (not sh own here for brevit y). 5.3. Sensitivit y to Inclusion of Constraints W e v aried the sparseness of gene constrain t matrix ⇥ 1 b y holding out a random subset of protein-protein in- teractions. W e set the entries of ⇥ 1 that corresp ond to hold-out constraints to zer o so that they did not a ↵ ect the cost function during optimization. Fig. 3(b) sho ws that including additional information on genes in the form of constraints impro ves the predictiv e per- formance of the factorization mo del. 5.4. Matrix F actor Initializati on Study We s t u d i e d t h e e ↵ ect of initialization b y ob ser v i ng the error of the resulting factorization after one and af- ter tw en ty it er at i ons of factorizat i on matrix up dates, Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization Ta b l e 2 . Gene on tology term-specific cross-v alidated F 1 scores for fusion b y matrix factorization (MF), a kernel-based metho d (MKL) and random forests (RF). GO term name T erm identifier Namespace Size MF MKL RF Activ ation of aden ylate cyclase activity 0007190 BP 11 0.834 0.770 0.758 Chemotaxis 0006935 BP 58 0.981 0.794 0.538 Chemotaxis to cAM 0043327 BP 21 0.922 0.835 0.798 Phago cytosis 0006909 BP 33 0.956 0.892 0.789 Resp onse to bacterium 0009617 BP 51 0.899 0.788 0.785 Cell-cell adhesion 0016337 BP 14 0.883 0.867 0.728 Actin binding 0003779 MF 43 0.676 0.664 0.642 Lysozyme activity 0003796 MF 4 0.782 0.774 0.754 Sequence-sp ecific DNA binding TF A 0003700 MF 79 0.956 0.894 0.732 R 12 R 12 , 1 R 12 , R 13 R 12 , R 13 , 1 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 Averaged F 1 score 0.0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1.0 Proportion of 1 included in training 0.810 0.815 0.820 0.825 0.830 0.835 Averaged F 1 score Figure 3. A d d i n g new data sources (a) or incorporating more ob ject-type-sp ecific constrain ts in ⇥ 1 (b) b oth increase the accuracy of the matrix factorization-based mo dels. the latter being ab out one fourth of the iterations re- quired for factorization to conv erge. W e estimate the error relativ e to the optimal ( k 1 ,k 2 ,...,k 6 )-rank ap- pro ximation given b y the SVD. F or iter at i on v and matrix R ij the error is computed b y: Err ij ( v )= || R ij G ( v ) i S ( v ) ij ( G T j ) ( v ) || d F ( R ij , [ R ij ] k ) d F ( R ij , [ R ij ] k ) , (11) where G ( v ) i , G ( v ) j and S ( v ) ij are matrix factors obtained after executing v iterations of factorization algorithm. In Eq. ( 11 ) d F ( R ij , [ R ij ] k )= || R ij U k ⌃ k V T k || de- notes the F robenius distance b et ween R ij and its k - rank approximation given by the SVD, where k = max( k i ,k j ) is the approximation rank. Err ij ( v )i sa p essimistic measure of quantitativ e accuracy b ecau se of the choice of k . This error measure is similar to the error of the tw o-factor non-negative matrix factoriza- tion from ( Albright et al. , 2006 ). T able 3 shows the results for the experiment with 1000 most GO-annotated D. disc oideum genes and selected factorization ranks k 1 = 65, k 2 = 35, k 3 = 13, k 4 = 35, k 5 = 30 and k 6 = 10. The informed initialization algorithms surpass the random initialization. Of these, the random Acol algorithm p erforms b est in terms of Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization Ta b l e 3 . E ↵ ect of initializa t io n algorithm on predictive p ow er of factorization model. Metho d Time G (0) Storage G (0) Err 12 (1) Err 12 (20) Rand. 0.0011 s 618K 5.11 3.61 Rand. C 0.1027 s 553K 2.97 1.67 Rand. Acol 0.0654 s 505K 1.59 1.30 K-means 0.4029 s 562K 2.47 2.20 NNDSVDa 0.1193 s 562K 3.50 2.01 accuracy and is also one of the simplest. 5.5. Early In tegration by Matrix F actorization Our d at a fusion approac h sim ultaneously factorizes in- dividual blo cks of data in R . Alternatively , we could also disregard the data structure, and treat R as a single data matrix. Such data treatment w ould trans- form our data fusion approac h to that of early in- tegration and lose the b enefits of structured system and source-sp ecific factorization. T o prov e this expe r - imen tally , we considered the 1 , 000 most GO-annotated D. disc oideum genes. The resulting cross-v alidated F 1 score for factorization-based early integration was 0 . 576, compared to 0 . 826 obtained wi t h our prop osed data fusion algorithm. This result is not s urp ri s in g as neglecting the structure of the system also causes the loss of the structure in matrix factors and the loss of zero blocks in factors S and G from Eq. ( 3 ). Clearly , data structure carries substan tial information and should b e retained in the mo del. 6. C oncl usi on W e hav e prop osed a new data f us i on algorithm. The approac h is flexible and, in contrast to state-of-the-art k ernel-based metho ds, requires min i mal , if an y , p re - pro cessing of data. This latter feature, together with its excellen t accuracy and time resp onse, are the prin- cipal adv antages of our new algorithm. Our approac h can model any collection of data sets, eac h of whic h c an be expressed in a matrix. The gene function prediction task considered in the pap er, which has traditionally b een regarded as a classification p rob - lem ( Larranaga et al. , 2006 ), is just one example of the typ es of problems that can b e addre sse d with our metho d. W e an ticipate the utility of factorization- based data fusion in m ultiple-target learning, asso ci- ation mining, clustering, link prediction or structured output prediction . Ac kno wledgments W e thank Janez Dem ˇ sar and Gad Shaulsky for their commen ts on the early version of this manuscript. W e ac knowledge the supp ort for our work from the Slo ve- nian Researc h Agency (P2-0209, J2-9699, L2-1112), National Institute of Health ( P01- HD 39691) and Eu- rop ean Commission (Health-F5-2010-242038). References Aerts, Stein, Lam brech ts, Diether, Maity , Sunit, V an Lo o, Peter, Coessens, Bert, De Smet, F rederik, T ranc heven t, Leon-Charles, De Mo or, Bart, Mary- nen, Peter, Hassan, Bassem, Car me li e t , P eter, and Moreau, Yv es. Gene prioritization through genomic data fusion. Natur e Biote chnolo gy , 24(5):537–544, 2006. ISSN 1087-0156. doi: 10.1038/n bt1203. Albright, R., Co x, J., Duling, D., Langvi l le , A. N., and Meyer, C. D. Algorithms , initializations, and con vergence for the nonnegative matrix f act or iz a- tion. T ec hnical rep ort, Department of Mathematics, North Carolina State Universit y , 2006. Alexeyenk o, Andrey and Sonnhammer, Erik L L. Global netw orks of functional coup l i ng in euk ary- otes from comprehensive data integration. Genome R ese ar ch , 19(6):1107–16, 2009. ISSN 1088-9051. d oi : 10.1101/gr.087528.108. Bostr¨ om, Henrik, Andler, Sten F., B roh ed e, Mar- cus, Johansson, Ronnie , Karlsson, Alexander, v an- Laere, Jo eri, Niklasson, Lars, Nilsson, Maria, P ers- son, Anne, and Ziemke, T om. On the definition of information fusion as a field of research. T ec hnical rep ort, Universit y of Sko vde, School of Humanities and Informatics, Sko vde, Sweden, 2007. Boulesteix, Anne-Laure, P orzelius, Christine, and Daumer, Martin. Microarray-based classification and clinical pred i ct or s: on com bined cl ass ifi er s and additional predictiv e v alue. Bioinformatics , 24(15): 1698–1706, 2008. Boutsidis, Christos and Gallop oulos, Efstrat i os . SVD based initialization: A head start for n onn egat i ve Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization matrix fact ori z at ion . Pattern R e c o gn. , 41(4):1350– 1362, 2008. ISSN 0031-3203. doi: 10.1016/j.patcog. 2007.09.010. Brunet, Jean-Philipp e, T amay o, Pablo, Golub, T o dd R., and Mesir ov, Jill P . Metagenes and molec- ular pattern disco very using matrix factorization. PNAS , 101(12):4164–4169, 2004. Carp enter, Gail A., Martens, Siegfried, and Ogas, Ogi J. Self-organizing informat ion fusion and hi- erarc hical knowledge discov ery: a new framework using AR TMAP neural netw orks. Neur al Netw. , 18 (3):287–295, 2005. ISSN 0893-6080. doi: 10.1016/j. neunet.2004.12.003. Chaudh uri, Kamalik a, Kak ade, Sham M., Livescu, Karen, and Sridharan, Karthik. Multi-view cluster- ing via canonical correlation analysis. In Pr o c e e dings of the 26th Annual International Confer enc e on Ma- chine L e arning , ICML ’09, pp. 129–136, New Y ork, NY, USA, 2009. ACM. ISBN 978-1-60558-516-1. doi: 10.1145/1553374.1553391. Chen, Zheng and Zhang, W eixiong. Integrativ e anal- ysis using mo dule-guided random forests reveals correlated genetic factors related to mouse w eigh t. PL oS Computational Biolo gy , 9(3):e1002956, 2013. doi: 10.1371/journal.p cbi.1002956. Cuzzo crea, Alfredo, Song, Il-Y eol, and Davis, Karen C. Analytics ov er large-scale multidimensional data: the big data rev olution! In Pr o c e e dings of the ACM 14th international workshop on Data War ehousing and OLAP , DOLAP ’11, pp. 101–104, New Y ork, NY, USA, 2011. ACM. ISBN 978-1-4503-0963-9. doi: 10.1145/2064676.2064695. Debnath, Ramesw ar and T ak ahashi, Haruhisa. Kernel selection for the supp ort vector machine. IEICE T r ansactions , 87-D(12): 2903–2904, 2004. Gev aert, Olivier, De Smet, F rank, Timmerman, Dirk, Moreau, Yves, and De Mo or, Bart. Predicting the prognosis of breast can ce r b y integrating clinic al and microarray data with Ba yesian netw orks. Bioinfor- matics , 22(14):e184–90, 2006. ISSN 1367-4811. doi: 10.1093/bioinformatics/btl230. Greene, Derek and Cunningham, P´ adraig. A ma- trix factorization approach for integrating multiple data views. In Pr o c e e dings of the Eur op e an Con- fer enc e on Machine L e arning and Know le dge Dis- c overy in Datab ases: Part I , ECML PKDD ’09, pp. 423–438, Berlin, Heidelb erg, 2009. Springer- V erlag. ISBN 978-3-642-04179-2. doi: 10.1007/ 978- 3- 642- 04180- 8 \ 45. Hutc hins, Lucie N., Murphy , Sean M., Singh, Priy am, and Grab er, Jo el H. Position-dependent motif char- acterization using non-negative matrix factoriza- tion. Bioinformatics , 24(23):2684–2690, 2008. IS SN 1367-4803. doi: 10.1093/bioinformatics/btn526. Huttenhow er, Curtis, Mu t un gu , K. Tsheko, Indik, Natasha, Y ang, W oongcheol, Schroeder, Mark, F or- man, Joshua J., T roy ansk a ya, Olga G., and Coller, Hilary A. Detaili n g regulatory netw orks through large scale data in tegration. Bioinformatics , 25(24): 3267–3274, 2009. ISSN 1367-4803. doi: 10.1093/ bioinformatics/btp588. Klami, Arto and Kaski, Samuel. Probabilistic ap- proac h to detecting dependenc ie s b etw een data sets. Neur o c omput. , 72(1-3):39–46, 2008. ISSN 0925- 2312. doi: 10.1016/j.neucom.2007.12.044. Kloft, Marius, Br ef el d , Ulf, Sonnenburg, Soren, Lask ov, P av el, Muller, K l aus -Rob ert, and Zien, Alexander. E ffi cie nt and accurate L p -norm multi- ple k ernel learning. A dvanc es in Neur al Information Pr o c essing Systems , 21:997–1005, 2009. Kondor, Risi Imre and La ↵ erty , John D. Di ↵ usion k er- nels on graphs and other discrete input s pac es . In Pr o c e e dings of the Ninete enth International Confer- enc e on Machine L e arning , ICML ’02, pp. 315–322, San F rancisco, CA, US A, 2002. Morgan Kaufmann Publishers Inc. ISBN 1-55860-873-7. Lanc kriet, Gert R. G., Cristianini, Nello, Bartlett, P e- ter, Ghaoui, Laurent El, and Jordan, Michael I. Learning the kernel matrix with semidefinite pro- gramming. J. Mach. L e arn. R es. , 5:27–72, 2004a. ISSN 1532-4435. Lanc kriet, Gert R. G., De Bie, Tijl, Cristianini, Nell o, Jordan, Mi chael I., and Noble, William Sta ↵ ord. A statistical framework for genomic data fusion. Bioinformatics , 20(16):2626–2635, 2004b. ISSN 1367-4803. doi: 10.1093/bioinformatics/bth294. Lange, Tilman and Buhmann, Joachim M. F usion of similarity data in clustering. In NIPS , 2005. Larranaga, P ., Calv o, B., Santana, R., Bielza, C., Gal- diano, J., Inza, I., Lozano, J. A. , Armananzas, R., San tafe, G . , P erez, A., and Robles, V. Mac hine learning in bioinformatics. Brief. Bioinformatics , 7(1):86–112, 2006. Lee, Dani e l D. and Seung, H. Sebastian. Algorithms for non-negati ve matrix factorization. In Leen, T o dd K., Dietteric h, Thomas G., and T resp, V olker (eds.), NIPS , pp. 556–562. MIT Press, 2000. Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization Lop es, Hedibert F reitas, Gamerman, Dani, and Salazar, Esther. Generalized spatial dynamic factor mo dels. Computational Statis ti cs & Data A nalysis , 55(3):1319–1330, 2011. Luttinen, Jaak ko and Ilin, Alexander . V aria- tional Gaussian-pro cess factor anal y si s for modeli ng spatio-temp oral data. In Bengio, Y osh ua, Sc huur- mans, Dale, La ↵ erty , John D., Williams, Christo- pher K. I., and Culotta, Aron (eds.), NIPS ,p p . 1177–1185. Curran Asso ciates, Inc., 2009. ISBN 9781615679119. Maragos, P etros, Gros, P atrick, Katsamanis, A thanas- sios, and Papandreou, Geor ge . Cross-mo dal in- tegration for p erformance impro ving in multime- dia: A review. In Maragos, P etros, Potamianos, Alexandros, and Gros, Patric k (eds.), Multimo dal Pr o c essing and Inter action , v olume 33 of Multime- dia Systems and Applic ations , pp. 1–46. Spr i n ger US, 2008. ISBN 978-0-387-76315-6. doi: 10.1007/ 978- 0- 387- 76316- 3 1. Moreau, Yves and T ranchev ent, L ´ eon-Charles. Com- putational to ols for prioritizing can d id at e genes: b o osting disease gene discov ery . Natur e R eviews Ge- netics , 13(8):523–536, 2012. ISSN 1471-0064. doi: 10.1038/nrg3253. Mostafa vi, Sara and Morris, Quaid. Combining many in teraction net works to predict gene funct i on and analyze gene lists. Pr ote omics , 12(10):1687–96, 2012. ISSN 1615-9861. P andey , Gaurav, Zhang, Bin, Chang, Aaron N, My- ers, Chad L, Zhu, Jun, Kumar, Vipin, and Schadt, Eric E. An integrativ e m ulti-netw ork and multi- classifier approac h to predict genetic in teractions. PL oS Computational Biolo gy , 6(9), 2010. ISSN 1553-7358. doi: 10.1371/journal.p cbi.1000928. P arikh, An up, Miranda, Edward Roshan, Katoh- Kurasa wa, Mariko, F uller, Danny , Rot, Gregor, Za- gar, Lan, Curk, T omaz, Sucgang, Ric hard, Chen, Rui, Zup an, Blaz, Loomis, William F, Kuspa, Adam, and Shaulsky , Gad. Conserv ed develop- men tal transcriptomes in ev olutionarily div ergent sp ecies. Genome Biolo gy , 11(3):R35, 2010. ISSN 1465-6914. doi: 10.1186/gb- 2010- 11- 3- r35. P arikh, Devi and P olik ar, Robi. An ensemble-based incremental learning approac h to data fusion. IEEE T r ansactions on System s , Man, and Cyb ernetics, Part B , 37(2):437–450, 2007. P avlidis, P aul, Cai, Jins ong, W eston, Jason, and No- ble, William Sta ↵ ord. Learning gene functional clas- sifications from m ultiple data types. Journal of Computational Biolo gy , 9:401–411, 2002. Rappap ort, S. M. and Smith, M. T. Environmen t and disease risks. Scienc e , 330(6003):460–461, 2010. Sa v age, Ric hard S., Ghahramani, Zoubin, G r i ffi n, Jim E., de la Cruz, Bernard J., and Wild, Da vid L. Disco vering transcriptional m o dules by Ba yesian data integration. Bioinformatics , 26(12) : i158–i 167, 2010. ISSN 1367-4803. doi: 10.1093/bioinformatics/ btq210. Sc h¨ olkopf, B, Tsuda, K, and V ert, J-P . Kernel Metho ds in Computational Biolo gy . Computational Molecu- lar Biology . MIT Press, Cam bridge, MA, USA, 8 2004. Singh, Ajit P . and Gordon, Geo ↵ rey J. A unified view of matrix factori z ati on mo dels. In Pr o c e e dings of the Eur op e an c onfer enc e on Machine L e arning and Know le dge Disc overy in Datab ases - Part II ,E C M L PKDD ’08, pp. 358–373, Berlin, Heidelb erg, 2008. Springer-V erlag. ISBN 978-3-540-87480-5. doi: 10. 1007/978- 3- 540- 87481- 2 24. T ang, W ei, Lu, Zhengdong, and Dhillon, Inderjit S. Clustering with multiple graphs. In Pr o c e e dings of the 2009 Ninth IEEE International Confer enc e on Data Mining , ICDM ’09, pp. 1016–1021, W ashing- ton, DC, USA, 2009. IEEE Computer Society . ISBN 978-0-7695-3895-2. doi: 10.1109/ICDM.2009.125. V an Benthem, Mark H. an d Keenan, Michael R. F ast algorithm for the solution of large-scale non- negativity-constrained least squares problems. Jour- nal of Chemometrics , 18( 10) :441–450, 2004. ISSN 1099-128X. doi: 10.1002/cem.889. v an Vliet, Martin H, Horlings, Hugo M, v an de Vi- jv er, Marc J, Reinders, Marcel J T, and W essels, Lo dewyk F A. Integration of clinical and gene ex - pression data has a synergetic e ↵ ect on predicting breast cancer outcome. PL oS One , 7(7):e40358, 2012. ISSN 1932-6203. doi: 10.1371/journal.p one. 0040358. V ask e, Charles J., Benz, Stephen C., Sanborn, J. Zac hary , Earl, Dent, Szeto, Christopher, Zhu, Jingc hun, Haussler, David, and Stuart, Joshua M. Inference of patient-spe c i fic path wa y activities from multi-dimensional cance r genomics data using paradigm. Bioinformatics [ISMB] , 26(12):237–245, 2010. W ang, F ei, Li, T ao, and Zhang, Changshui. Semi- sup ervised clusterin g via matrix factorization. In SDM , pp. 1–12. SIAM, 2008. Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973). Data F usion b y Matrix F actorization W ang, Hua, Huang, Heng, Ding, Chris H. Q., an d Nie, F eiping. Predicting protein-protein interactions from multimod al biological d at a sources via nonneg- ativ e matrix tri-factorization. In Chor, Benny (ed.), RECOMB , v olume 7262 of L e ctur e Notes in Com- puter Scienc e , pp. 314–325. Springe r, 2012. ISBN 978-3-642-29626-0. Xing, Chuanh ua and Dunson, Da vid B. Bay esian infer- ence for genomic data in tegration reduces mi scl as - sification rate in predicting protein-protein interac- tions. PL oS Com pu tati onal Biolo gy , 7(7):e1002110, 2011. ISSN 1553-7358. doi: 10.1371/journal.p cb i . 1002110. Y e, Jieping, Ji, Shuiw ang, and Chen, Jianhui. Multi - class discriminant kernel learning via conv ex pro- gramming. J. Mach. L e arn. R es. , 9:719–758, 2008. ISSN 1532-4435. Y u, Shi, F alc k, Tillmann, Daemen, Anneleen, T ranc heven t, Leon-Charle s , Suyk ens, Johan Ak, De Mo or, Bart, and Moreau, Yves. L 2 -norm multi- ple k ernel learning and its application to biomedi- cal data fusion. BMC Bioinformatics , 11:309, 2010. ISSN 1471-2105. doi: 10.1186/1471- 2105- 11- 309. Y u, Shi, T ranc heven t, Leon, Liu, Xinhai, Glanzel, W olfgang, Suykens, Johan A. K., De Moor, Bart, and Moreau, Yv es. Optimize d data fusion for ker- nel k-means clustering. IEEE T r ans. Pattern Anal. Mach. Intel l. , 34(5):1031–1039, 2012. ISSN 0162- 8828. doi: 10.1109/TP AMI.2011.255. Zhang, Shi-Hua, Li, Qing jiao, Liu, J u an, and Zhou, Xianghong Jasmine. A nov el computational frame- w ork for simultaneous integration of multiple types of genomic data to identify microRNA-gene reg- ulatory mo dul es . Bioinformatics , 27(13):401–409, 2011. Zhang, Shihua, Liu, Chun-Chi, Li, W enyuan, Shen, Hui, Laird, Peter W., and Zhou, Xianghong Jas- mine. Discov ery of multi-dimensional modules by in tegrative analysis of cancer genomic data. Nu- cleic A cids R ese ar ch , 40(19):9379–9391, 2012. doi: 10.1093/nar/gks725. Zhang, Y ongmian and Ji, Qiang. Activ e and dynamic information fusion for multisensor systems with dy- namic Ba yesian netw orks. T r ans. Sys. Man Cyb er. Part B , 36(2):467–472, 2006. ISSN 1083-4419. doi: 10.1109/TSMCB.2005.859081. Zhou, Dengyong and Burges, Christopher J. C. Sp ec- tral clustering and tr ans du ct i ve learni n g with mul- tiple views. In Pr o c e e dings of the 24th international c onfer enc e on Machine le arning , ICML ’07, pp. 1159–1166, New Y ork, NY, USA, 2007. ACM. ISBN 978-1-59593-793-3. doi: 10.1145/1273496.1273642. Note: Please refer to the extended version of this paper published in the IEEE T ransac tions on Pattern Analysis and Machine Intelligence (10.1 109/TP AMI.2014.2343973).

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment