Mathematical Language Processing: Automatic Grading and Feedback for Open Response Mathematical Questions

While computer and communication technologies have provided effective means to scale up many aspects of education, the submission and grading of assessments such as homework assignments and tests remains a weak link. In this paper, we study the probl…

Authors: Andrew S. Lan, Divyanshu Vats, Andrew E. Waters

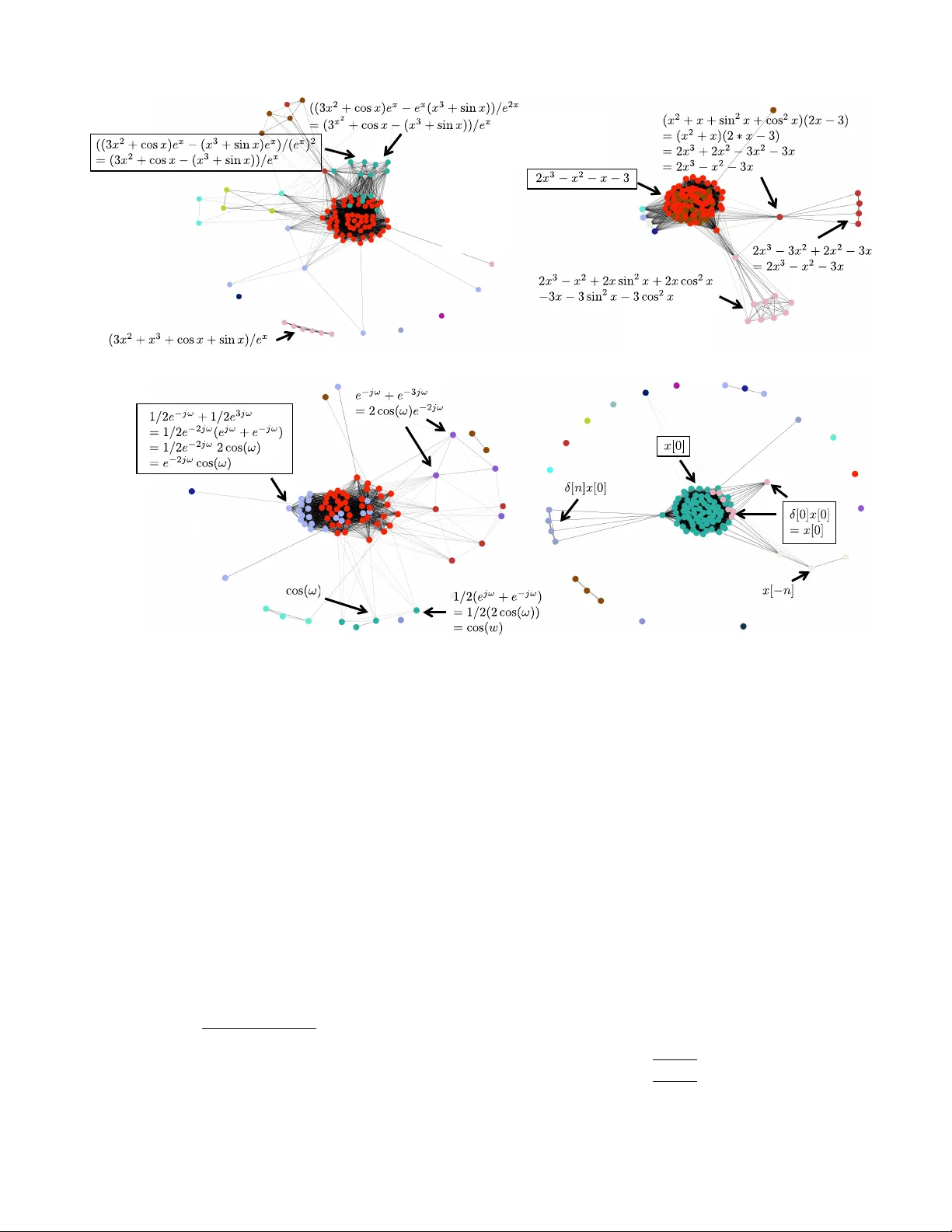

Mathematical Language Pr ocessing: A utomatic Grading and Feedbac k f or Open Response Mathematical Questions Andrew S. Lan, Divyanshu V ats, Andrew E. W aters, Richard G. Baraniuk Rice Uni versity Houston, TX 77005 { mr .lan, dv ats, waters, richb } @sparfa.com ABSTRA CT While computer and communication technologies hav e pro- vided effecti ve means to scale up many aspects of education, the submission and grading of assessments such as homew ork assignments and tests remains a weak link. In this paper, we study the problem of automatically grading the kinds of open r esponse mathematical questions that figure prominently in STEM (science, technology , engineering, and mathematics) courses. Our data-driv en framework for mathematical lan- guage pr ocessing ( MLP ) le verages solution data from a large number of learners to ev aluate the correctness of their solu- tions, assign partial-credit scores, and provide feedback to each learner on the likely locations of any errors. MLP takes inspiration from the success of natural language processing for te xt data and comprises three main steps. First, we con- vert each solution to an open response mathematical ques- tion into a series of numerical featur es . Second, we clus- ter the features from sev eral solutions to uncov er the struc- tures of correct, partially correct, and incorrect solutions. W e dev elop two different clustering approaches, one that lever - ages generic clustering algorithms and one based on Bayesian nonparametrics. Third, we automatically grade the remain- ing (potentially large number of) solutions based on their as- signed cluster and one instructor-pro vided grade per cluster . As a bonus, we can track the cluster assignment of each step of a multistep solution and determine when it departs from a cluster of correct solutions, which enables us to indicate the likely locations of err ors to learners. W e test and validate MLP on real-world MOOC data to demonstrate how it can substantially reduce the human effort required in large-scale educational platforms. A uthor K eyw ords Automatic grading, Machine learning, Clustering, Bayesian nonparametrics, Assessment, Feedback, Mathematical language processing Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. Copyrights for components of this work owned by others than A CM must be honored. Abstracting with credit is permitted. T o copy otherwise, or republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. Request permissions from permissions@acm.org. L@S’15 , March 14–March 15, 2015, V ancouver, Canada. Copyright © 2015 A CM ISBN/15/03...$15.00. INTRODUCTION Large-scale educational platforms hav e the capability to rev- olutionize education by providing inexpensiv e, high-quality learning opportunities for millions of learners worldwide. Examples of such platforms include massiv e open online courses (MOOCs) [6, 7, 9, 10, 16, 42], intelligent tutoring systems [43], computer -based homework and testing systems [1, 31, 38, 40], and personalized learning systems [24]. While computer and communication technologies ha ve provided ef- fectiv e means to scale up the number of learners viewing lectures (via streaming video), reading the textbook (via the web), interacting with simulations (via a graphical user in- terface), and engaging in discussions (via online forums), the submission and grading of assessments such as homew ork as- signments and tests remains a weak link. There is a pressing need to find new ways and means to au- tomate two critical tasks that are typically handled by the in- structor or course assistants in a small-scale course: ( i ) grad- ing of assessments, including allotting partial credit for par- tially correct solutions, and ( ii ) providing indi vidualized feed- back to learners on the locations and types of their errors. Substantial progress has been made on automated grading and feedback systems in se veral restricted domains, including essay e v aluation using natural language processing (NLP) [1, 33], computer program e valuation [12, 15, 29, 32, 34], and mathematical proof verification [8, 19, 21]. In this paper, we study the problem of automatically grading the kinds of open response mathematical questions that fig- ure prominently in STEM (science, technology , engineering, and mathematics) education. T o the best of our knowledge, there exist no tools to automatically e valuate and allot partial- credit scores to the solutions of such questions. As a result, large-scale education platforms ha ve resorted either to ov er- simplified multiple choice input and binary grading schemes (correct/incorrect), which are known to con vey less informa- tion about the learners’ knowledge than open response ques- tions [17], or peer-grading schemes [25, 26], which shift the burden of grading from the course instructor to the learners. 1 1 While peer grading appears to have some pedagogical value for learners [30], each learner typically needs to grade se veral solutions from other learners for each question they solve, in order to obtain an accurate grade estimate. (a) A correct solution that receives 3 / 3 credits (d) An incorrect solution that receives 0 / 3c r e d i t s (b) An incorrect solution that receives 2 / 3 credits due to an error in the last expression (c) An incorrect solut ion that receives 1 / 3 credits due to an error in the second expression Figure 1: Example solutions to the question “Find the deri va- tiv e of ( x 3 + sin x ) /e x ” that were assigned scores of 3, 2, 1 and 0 out of 3, respectiv ely , by our MLP - B algorithm. (a) A correct soluti on that makes the simplification sin 2 x + cos 2 x =1i n the first expression (b) A correct solut i on that makes the the simplification s i n 2 x + cos 2 x =1 in the third exp r ess i on ( x 2 + x +s i n 2 x + cos 2 x )(2 x 3) =2 x 3 +2 x 2 +2 x sin 2 x +2 x cos 2 x 3 x 2 3 x 3s i n 2 x 3 cos 2 x =2 x 3 x 2 3 x +2 x (sin 2 x + cos 2 x ) 3(sin 2 x + cos 2 x ) =2 x 3 x 2 3 x +2 x ( 1) 3(1) =2 x 3 x 2 x 3 Figure 2: Examples of tw o dif ferent yet correct paths to solve the question “Simplify the expression ( x 2 + x + sin 2 x + cos 2 x )(2 x − 3) . ” Main Contributions In this paper, we de velop a data-dri ven framework for math- ematical language pr ocessing ( MLP ) that leverages solution data from a large number of learners to e valuate the correct- ness of solutions to open response mathematical questions, assign partial-credit scores, and provide feedback to each learner on the likely locations of any errors. The scope of our framew ork is broad and covers questions whose solution in- volv es one or more mathematical expressions. This includes not just formal proofs b ut also the kinds of mathematical cal- culations that figure prominently in science and engineering courses. Examples of solutions to two algebra questions of various lev els of correctness are given in Figures 1 and 2. In this re gard, our work dif fers significantly from that of [8], which focuses exclusi vely on e valuating logical proofs. Our MLP framework, which is inspired by the success of NLP methods for the analysis of textual solutions (e.g., es- says and short answer), comprises three main steps. First, we conv ert each solution to an open response mathe- matical question into a series of numerical features . In deriv- ing these features, we make use of symbolic mathematics to transform mathematical expressions into a canonical form. Second, we cluster the features from sev eral solutions to un- cov er the structures of correct, partially correct, and incorrect solutions. W e de velop two different clustering approaches. MLP - S uses the numerical features to define a similarity scor e between pairs of solutions and then applies a generic cluster- ing algorithm, such as spectral clustering (SC) [22] or affinity propagation (AP) [11]. W e sho w that MLP - S is also useful for visualizing mathematical solutions. This can help instruc- tors identify groups of learners that make similar errors so that instructors can deliver personalized remediation. MLP - B defines a nonparametric Bayesian model for the solutions and applies a Gibbs sampling algorithm to cluster the solutions. Third, once a human assigns a grade to at least one solution in each cluster , we automatically grade the remaining (po- tentially large number of) solutions based on their assigned cluster . As a bonus, in MLP - B , we can track the cluster as- signment of each step in a multistep solution and determine when it departs from a cluster of correct solutions, which en- ables us to indicate the likely locations of errors to learners. In dev eloping MLP , we tackle three main challenges of ana- lyzing open response mathematical solutions. First, solutions might contain different notations that refer to the same math- ematical quantity . For instance, in Figure 1, the learners use both e − x and 1 e x to refer to the same quantity . Second, some questions admit more than one path to the correct/incorrect solution. For instance, in Figure 2 we see two different yet correct solutions to the same question. It is typically infea- sible for an instructor to enumerate all of these possibilities to automate the grading and feedback process. Third, numer- ically verifying the correctness of the solutions does not al- ways apply to mathematical questions, especially when sim- plifications are required. For e xample, a question that asks to simplify the expression sin 2 x + cos 2 x + x can hav e both 1 + x and sin 2 x + cos 2 x + x as numerically correct answers, since both these e xpressions output the same value for all values of x . Howe ver , the correct answer is 1 + x , since the question ex- pects the learners to recognize that sin 2 x + cos 2 x = 1 . Thus, methods de veloped to check the correctness of computer pro- grams and formulae by specifying a range of different inputs and checking for the correct outputs, e.g., [32], cannot al ways be applied to accurately grade open response mathematical questions. Related W ork Prior work has led to a number of methods for grading and providing feedback to the solutions of certain kinds of open response questions. A linear regression-based approach has been de veloped to grade essays using features extracted from a training corpus using Natural Language Processing (NLP) [1, 33]. Unfortunately , such a simple regression-based model does not perform well when applied to the features extracted from mathematical solutions. Sev eral methods hav e been de- veloped for automated analysis of computer programs [15, 32]. Howe ver , these methods do not apply to the solutions to open response mathematical questions, since they lack the structure and compilability of computer programs. Several methods have also been developed to check the correctness of the logic in mathematical proofs [8, 19, 21]. Ho we ver , these methods apply only to mathematical proofs in volving logical operations and not the kinds of open-ended mathematical cal- culations that are often in volved in science and engineering courses. The idea of clustering solutions to open response questions into groups of similar solutions has been used in a number of pre vious endeav ors: [2, 5] uses clustering to grade short, textual answers to simple questions; [23] uses clustering to visualize a large collection of computer programs; and [28] uses clustering to grade and provide feedback on computer programs. Although the high-le vel concept underlying these works is resonant with the MLP framework, the feature build- ing techniques used in MLP are very different, since the struc- ture of mathematical solutions dif fers significantly from short textual answers and computer programs. This paper is organized as follo ws. In the next section, we dev elop our approach to conv ert open response mathemati- cal solutions to numerical features that can be processed by machine learning algorithms. W e then develop MLP - S and MLP - B and use real-world MOOC data to showcase their ability to accurately grade a large number of solutions based on the instructor’ s grades for only a small number of solu- tions, thus substantially reducing the human effort required in large-scale educational platforms. W e close with a discus- sion and perspectiv es on future research directions. MLP FEA TURE EXTRA CTION The first step in our MLP framework is to transform a collec- tion of solutions to an open response mathematical question into a set of numerical features. In later sections, we show how the numerical features can be used to cluster and grade solutions as well as generate informativ e learner feedback. A solution to an open response mathematical question will in general contain a mixture of explanatory text and core math- ematical expressions. Since the correctness of a solution de- pends primarily on the mathematical expressions, we will ig- nore the text when deriving features. Ho wev er , we recognize that the text is potentially very useful for automatically gener- ating explanations for various mathematical expressions. W e leav e this av enue for future work. A workhorse of NLP is the bag-of-wor ds model; it has found tremendous success in te xt semantic analysis. This model treats a te xt document as a collection of words and uses the frequencies of the words as numerical features to perform tasks like topic classification and document clustering [4, 5]. A solution to an open response mathematical question con- sists of a series of mathematical expr essions that are chained together by te xt, punctuation, or mathematical delimiters in- cluding = , ≤ , > , ∝ , ≈ , etc. For example, the solution in Figure 1(b) contains the expressions (( x 3 + sin x ) /e x ) 0 , ((3 x 2 + cos x ) e x − ( x 3 + sin x ) e x )) /e 2 x , and (2 x 2 − x 3 + cos x − sin x ) /e x that are all separated by the delimiter “ = ”. MLP identifies the unique mathematical expressions con- tained in the learners’ solutions and uses them as features, effecti vely extending the bag-of-words model to use mathe- matical expressions as features rather than words. T o coin a phrase, MLP uses a novel bag-of-e xpr essions model. Once the mathematical e xpressions ha ve been extracted from a solution, we parse them using SymPy , the open source Python library for symbolic mathematics [36]. 2 SymPy has powerful capability for simplifying expressions. For exam- ple, x 2 + x 2 can be simplified to 2 x 2 , and e x x 2 /e 2 x can be simplifed to e − x x 2 . In this way , we can identify the equi v a- lent terms in expressions that refer to the same mathematical quantity , resulting in more accurate features. In practice for some questions, howe ver , it might be necessary to tone down the level of SymPy’ s simplification. For instance, the key to solving the question in Figure 2 is to simplify the expression using the Pythagorean identity sin 2 x + cos 2 x = 1 . If SymPy is called on to perform such a simplification automatically , then it will not be possible to verify whether a learner has cor- rectly navigated the simplification in their solution. For such problems, it is advisable to perform only arithmetic simplifi- cations. After extracting the expressions from the solutions, we trans- form the expressions into numerical features. W e assume that N learners submit solutions to a particular mathemati- cal question. Extracting the expressions from each solution using SymPy yields a total of V unique expressions across the N solutions. W e encode the solutions in a inte ger-v alued solution feature matrix Y ∈ N V × N whose rows correspond to dif ferent ex- pressions and whose columns correspond to different solu- tions; that is, the ( i, j ) th entry of Y is giv en by Y i,j = times expression i appears in solution j . Each column of Y corresponds to a numerical representation of a mathematical solution. Note that we do not consider the ordering of the expressions in this model; such an extension is an interesting a venue for future work. In this paper , we indicate in Y only the presence and not the frequency of an expression, i.e., Y ∈ { 0 , 1 } V × N and Y i,j = 1 if expression i appears in solution j 0 otherwise. (1) The extension to encoding frequencies is straightforward. T o illustrate how the matrix Y is constructed, consider the solutions in Figure 2(a) and (b). Across both solutions, there are 7 unique expressions. Thus, Y is a 7 × 2 matrix, with each ro w corresponding to a unique expression. Letting the first four ro ws of Y correspond to the four e xpressions in Figure 2(a) and the remaining three ro ws to expressions 2–4 in Figure 2(b), we hav e Y = 1 1 1 1 0 0 0 1 0 0 1 1 1 1 T . 2 In particular , we use the parse expr function. W e end this section with the crucial observation that, for a wide range of mathematical questions, many e xpressions will be shared across learners’ solutions. This is true, for instance, in Figure 2. This suggests that there are a limited number of types of solutions to a question (both correct and incorrect) and that solutions of the same type tend tend to be similar to each other . This leads us to the conclusion that the N solu- tions to a particular question can be effecti vely clustered into K N clusters. In the next two sections, we will develop MLP - S and MLP - B , two algorithms to cluster solutions ac- cording to their numerical features. MLP-S: SIMILARITY-BASED CLUSTERING In this section, we outline MLP - S , which clusters and then grades solutions using a solution similarity-based approach. The MLP - S Model W e start by using the solution features in Y to define a notion of similarity between pairs of solutions. Define the N × N similarity matrix S containing the pairwise similarities be- tween all solutions, with its ( i, j ) th entry the similarity be- tween solutions i and j S i,j = y T i y j min { y T i y i , y T j y j } . (2) The column v ector y i denotes the i th column of Y and corre- sponds to learner i ’ s solution. Informally , S i,j is the number of common expressions between solution i and solution j di- vided by the minimum of the number of expressions in solu- tions i and j . A large/small value of S i,j corresponds to the two solutions being similar/dissimilar . For example, the sim- ilarity between the solutions in Figure 1(a) and Figure 1(b) is 1 / 3 and the similarity between the solutions in Figure 2(a) and Figure 2(b) is 1 / 2 . S is symmetric, and 0 ≤ S i,j ≤ 1 . Equation (2) is just one of any possible solution similarity metrics. W e defer the development of other metrics to future work. Clustering Solutions in MLP - S Having defined the similarity S i,j between two solutions i and j , we now cluster the N solutions into K N clusters such that the solutions within each cluster have high similarity score between them and solutions in different clusters have low similarity score between them. Giv en the similarity matrix S , we can use any of the mul- titude of standard clustering algorithms to cluster solutions. T wo examples of clustering algorithms are spectral cluster - ing (SC) [22] and affinity pr opagation (AP) [11]. The SC algorithm requires specifying the number of clusters K as an input parameter , while the AP algorithm does not. Figure 3 illustrates ho w AP is able to identify clusters of sim- ilar solutions from solutions to four different mathematical questions. The figures on the top correspond to solutions to the questions in Figures 1 and 2, respecti vely . The bottom two figures correspond to solutions to two signal processing questions. Each node in the figure corresponds to a solution, and nodes with the same color correspond to solutions that belong to the same cluster . For each figure, we show a sam- ple solution from some of these clusters, with the boxed solu- tions corresponding to correct solutions. W e can make three interesting observations from Figure 3: • In the top left figure, we cluster a solution with the final answer 3 x 2 + cos x − ( x 3 + sin x )) /e x with a solution with the final answer 3 x 2 + cos x − ( x 3 + sin x )) /e x . Although the later solution is incorrect, it contained a typographical error where 3 ∗ x ∧ 2 was typed as 3 ∧ x ∧ 2 . MLP - S is able to identify this typographical error , since the expres- sion before the final solution is contained in several other correct solutions. • In the top right figure, the correct solution requires iden- tifying the trigonometric identify sin 2 x + cos 2 x = 1 . The clustering algorithm is able to identify a subset of the learners who were not able to identify this relationship and hence could not simplify their final expression. • MLP - S is able to identify solutions that are strongly con- nected to each other . Such a visualization can be extremely useful for course instructors. For example, an instructor can easily identify a group of learners who lack mastery of a certain skill that results in a common error and adjust their course plan accordingly to help these learners. A uto-Grading via MLP - S Having clustered all solutions into a small number K of clus- ters, we assign the same grade to all solutions in the same cluster . If a course instructor assigns a grade to one solution from each cluster , then MLP - S can automatically grade the remaining N − K solutions. W e construct the index set I S of solutions that the course instructor needs to grade as I S = arg max i ∈C k N X j =1 S i,j , k = 1 , 2 , . . . , K , where C k represents the index set of the solutions in cluster k . In words, in each cluster , we select the solution having the highest similarity to the other solutions (ties are broken randomly) to include in I S . W e demonstrate the performance of auto-grading via MLP - S in the e xperimental results section below . MLP-B: BA YESIAN NONP ARAMETRIC CLUSTERING In this section, we outline MLP - B , which clusters and then grades solutions using a Bayesian nonparameterics-based ap- proach. The MLP - B model and algorithm can be interpreted as an extension of the model in [44], where a similar approach is proposed to cluster short text documents. The MLP - B Model Follo wing the ke y observ ation that the N solutions can be effecti vely clustered into K N clusters, let z be the N × 1 cluster assignment v ector , with z j ∈ { 1 , . . . , K } denoting the cluster assignment of the j th solution with j ∈ { 1 , . . . , N } . Using this latent variable, we model the probability of the Figure 3: Illustration of the clusters obtained by MLP - S by applying affinity propagation (AP) on the similarity matrix S corre- sponding to learners’ solutions to four different mathematical questions (see T able 1 for more details about the datasets and the Appendix for the question statements). Each node corresponds to a solution. Nodes with the same color correspond to solutions that are estimated to be in the same cluster . The thickness of the edge between two solutions is proportional to their similarity score. Box ed solutions are correct; all others are in varying de grees of correctness. solution of all learners’ solutions to the question as p ( Y ) = N Y j =1 K X k =1 p ( y j | z j = k ) p ( z j = k ) ! , where y j , the j th column of the data matrix Y , corresponds to learner j ’ s solution to the question. Here we hav e implicitly assumed that the learners’ solutions are independent of each other . By analogy to topic models [4, 35], we assume that learner j ’ s solution to the question, y j , is generated according to a multinomial distribution giv en the cluster assignments z as p ( y j | z j = k ) = Mult ( y j | φ k ) = ( P i Y i,j )! Y 1 ,j ! Y 2 ,j ! . . . Y V ,j ! Φ Y 1 ,j 1 ,k Φ Y 2 ,j 2 ,k . . . Φ Y V ,j V ,k , (3) where Φ ∈ [0 , 1] V × K is a parameter matrix with Φ v ,k denot- ing its ( v , k ) th entry . φ k ∈ [0 , 1] V × 1 denotes the k th column of Φ and charcterizes the multinomial distrib ution o ver all the V features for cluster k . In practice, one often has no information regarding the num- ber of clusters K . Therefore, we consider K as an unknown parameter and infer it from the solution data. In order to do so, we impose a Chinese restaurant process (CRP) prior on the cluster assignments z , parameterized by a parameter α . The CRP characterizes the random partition of data into clus- ters, in analogy to the seating process of customers in a Chi- nese restaurant. It is widely used in Bayesian mixture model- ing literature [3, 14]. Under the CRP prior , the cluster (table) assignment of the j th solution (customer), conditioned on the cluster assignments of all the other solutions, follo ws the dis- tribution p ( z j = k | z ¬ j , α ) = n k, ¬ j N − 1+ α if cluster k is occupied , α N − 1+ α if cluster k is empty , (4) β Φ α z K N Y α α α β Figure 4: Graphical model of the generation process of solu- tions to mathematical questions. α α , α β and β are hyperpa- rameters, z and Φ are latent variables to be inferred, and Y is the observed data defined in (1). where n k, ¬ j represents the number of solutions that belong to cluster k excluding the current solution j , with P K k =1 n k, ¬ j = N − 1 . The vector z ¬ j represents the cluster assignments of the other solutions. The flexibility of allo wing any solution to start a ne w cluster of its own enables us to automatically in- fer K from data. It is kno wn [37] that the expected number of clusters under the CRP prior satisfies K ∼ O ( α log N ) N , so our method scales well as the number of learners N grows large. W e also impose a Gamma prior α ∼ Gam ( α α , α β ) on α to help us infer its value. Since the solution feature data Y is assumed to follow a multi- nomial distribution parameterized by Φ , we impose a sym- metric Dirichlet prior ov er Φ as φ k ∼ Dir ( φ k | β ) because of its conjugacy with the multinomial distrib ution [13]. The graphical model representation of our model is visualized in Figure 4. Our goal next is to estimate the cluster assign- ments z for the solution of each learner , the parameters φ k of each cluster , and the number of clusters K , from the binary- valued solution feature data matrix Y . Clustering Solutions in MLP - B W e use a Gibbs sampling algorithm for posterior inference under the MLP - B model, which automatically groups solu- tions into clusters. W e start by applying a generic clustering algorithm (e.g., K -means, with K = N / 10 ) to initialize z , and then initialize Φ accordingly . Then, in each iteration of MLP - B , we perform the following steps: 1. Sample z : For each solution j , we remove it from its cur- rent cluster and sample its cluster assignment z j from the posterior p ( z j = k | z ¬ j , α, Y ) . Using Bayes rule, we hav e p ( z j = k | z ¬ j , Φ , α, Y ) = p ( z j = k | z ¬ j , φ k , α, y j ) ∝ p ( z j = k | z ¬ j ,α ) p ( y j | z j = k , φ k ) . The prior probability p ( z j = k | z ¬ j , α ) is gi ven by (4). For non-empty clusters, the observed data likelihood p ( y j | z j = k , φ k ) is gi ven by (3). Howe ver , this does not apply to new clusters that are pre viously empty . For a new cluster , we marginalize out φ k , resulting in p ( y j | z j = k , β ) = Z φ k p ( y j | z j = k , φ k ) p ( φ k | β ) = Z φ k Mult ( y j | z j = k , φ k ) Dir ( φ k | β ) = Γ( V β ) Γ( P V i =1 Y i,j + V β ) V Y i =1 Γ( Y i,j + β ) Γ( β ) , where Γ( · ) is the Gamma function. If a cluster becomes empty after we remove a solution from its current cluster , then we remo ve it from our sampling process and erase its corresponding multinomial parame- ter vector φ k . If a new cluster is sampled for z j , then we sample its multinomial parameter vector φ k immediately according to Step 2 below . Otherwise, we do not change φ k until we hav e finished sampling z for all solutions. 2. Sample Φ : For each cluster k , sample φ k from its pos- terior Dir ( φ k | n 1 ,k + β , . . . , n V ,k + β ) , where n i,k is the number of times feature i occurs in the solutions that be- long to cluster k . 3. Sample α : Sample α using the approach described in [41]. 4. Update β : Update β using the fixed-point procedure de- scribed in [20]. The output of the Gibbs sampler is a series of samples that correspond to the approximate posterior distribution of the various parameters of interest. T o mak e meaningful infer - ence for these parameters (such as the posterior mean of a pa- rameter), it is important to appropriately post-process these samples. F or our estimate of the true number of clusters, b K , we simply take the mode of the posterior distribution on the number of clusters K . W e use only iterations with K = b K to estimate the posterior statistics [39]. In mixture models, the issue of “label-switching” can cause a model to be unidentifiable, because the cluster labels can be arbitrarily permuted without affecting the data likelihood. In order to overcome this issue, we use an approach reported in [39]. First, we compute the likelihood of the observed data in each iteration as p ( Y | Φ ` , z ` ) , where Φ ` and z ` represent the samples of these v ariables at the ` th iteration. After the algorithm terminates, we search for the iteration ` max with the largest data likelihood and then permute the labels z ` in the other iterations to best match Φ ` with Φ ` max . W e use b Φ (with columns ˆ φ k ) to denote the estimate of Φ , which is simply the posterior mean of Φ . Each solution j is assigned to the cluster index ed by the mode of the samples from the posterior of z j , denoted by ˆ z j . A uto-Grading via MLP - B W e now detail how to use MLP - B to automatically grade a large number N of learners’ solutions to a mathematical ques- tion, using a small number b K of instructor graded solutions. First, as in MLP - S , we select the set I B of “typical solutions” for the instructor to grade. W e construct I B by selecting one solution from each of the b K clusters that is most representa- tiv e of the solutions in that cluster: I B = { arg max j p ( y j | ˆ φ k ) , k = 1 , 2 , . . . , b K } . In words, for each cluster , we select the solution with the largest lik elihood of being in that cluster . The instructor grades the b K solutions in I B to form the set of instructor grades { g k } for k ∈ I B . Using these grades, we assign grades to the other solutions j / ∈ I B according to ˆ g j = P b K k =1 p ( y j | ˆ φ k ) g k P b K k =1 p ( y j | ˆ φ k ) . (5) That is, we grade each solution not in I B as the average of the instructor grades weighted by the likelihood that the solution belongs to cluster . W e demonstrate the performance of auto- grading via MLP - B in the experimental results section below . Feedback Generation via MLP - B In addition to grading solutions, MLP - B can automatically provide useful feedback to learners on where they made errors in their solutions. For a particular solution j denoted by its column feature value vector y j with V j total expressions, let y ( v ) j denote the feature value v ector that corresponds to the first v ex- pressions of this solution, with v = { 1 , 2 , . . . , V j } . Un- der this notation, we ev aluate the probability that the first v expressions of solution j belong to each of the b K clusters: p ( y ( v ) j | ˆ φ k ) , k = { 1 , 2 , . . . , b K } , for all v . Using these proba- bilities, we can also compute the expected credit of solution j after the first v expressions via ˆ g ( v ) j = P b K k =1 p ( y ( v ) j | ˆ φ k ) g k P b K k =1 p ( y ( v ) j | ˆ φ k ) , (6) where { g k } is the set of instructor grades as defined above. Using these quantities, it is possible to identify that the learner has lik ely made an error at the v th expression if it is most likely to belong to a cluster with credit g k less than the full credit or , alternati vely , if the expected credit ˆ g ( v ) j is less than the full credit. The ability to automatically locate wher e an error has been made in a particular incorrect solution provides many bene- fits. F or instance, MLP - B can inform instructors of the most common locations of learner errors to help guide their instruc- tion. It can also enable an automated tutoring system to gen- erate feedback to a learner as they make an error in the early steps of a solution, before it propagates to later steps. W e demonstrate the ef ficacy of MLP - B to automatically locate learner errors using real-world educational data in the exper- iments section below . EXPERIMENTS In this section, we demonstrate how MLP - S and MLP - B can be used to accurately estimate the grades of roughly 100 open response solutions to mathematical questions by only asking the course instructor to grade approximately 10 solutions. W e also demonstrate how MLP - B can be used to automatically provide feedback to learners on the locations of errors in their solutions. A uto-Grading via MLP - S and MLP - B Datasets Our dataset that consists of 116 learners solving 4 open re- sponse mathematical questions in an edX course. The set T able 1: Datasets consisting of the solutions of 116 learners to 4 mathematical questions on algebra and signal processing. See the Appendix for the question statements. No.of solutions N No.of features (unique expressions) V Question 1 108 78 Question 2 113 53 Question 3 90 100 Question 4 110 45 of questions includes 2 high-school le vel mathematical ques- tions and 2 colle ge-lev el signal processing questions (details about the questions can be found in T able 1, and the question statements are gi ven in the Appendix). For each question, we pre-process the solutions to filter out the blank solutions and extract features. Using the features, we represent the solu- tions by the matrix Y in (1). Every solution was graded by the course instructor with one of the scores in the set { 0 , 1 , 2 , 3 } , with a full credit of 3 . Baseline: Random sub-sampling W e compare the auto-grading performance of MLP - S and MLP - B against a baseline method that does not group the so- lutions into clusters. In this method, we randomly sub-sample all solutions to form a small set of solutions for the instructor to grade. Then, each ungraded solution is simply assigned the grade of the solution in the set of instructor-graded solutions that is most similar to it as defined by S in (2). Since this small set is picked randomly , we run the baseline method 10 times and report the best performance. 3 Experimental setup For each question, we apply four different methods for auto- grading: • Random sub-sampling (RS) with the number of clusters K ∈ { 5 , 6 , . . . , 40 } . • MLP - S with spectral clustering (SC) with K ∈ { 5 , 6 , . . . , 40 } . • MLP - S with affinity propagation (AP) clustering. This al- gorithm does not require K as an input. • MLP - B with hyperparameters set to the non-informati ve values α α = α β = 1 and running the Gibbs sampling al- gorithm for 10,000 iterations with 2,000 b urn-in iterations. MLP - S with AP and MLP - B both automatically estimate the number of clusters K . Once the clusters are selected, we as- sign one solution from each cluster to be graded by the in- structor using the methods described in earlier sections. P erformance metric W e use mean absolute error (MAE), which measures the “av- erage absolute error per auto-graded solution” MAE = P N − K j =1 | ˆ g j − g j | N − K , 3 Other baseline methods, such as the linear regression-based method used in the edX essay grading system [33], are not listed, because they did not perform as well as random sub-sampling in our e xperi- ments. as our performance metric. Here, N − K equals the num- ber of solutions that are auto-graded, and ˆ g j and g j repre- sent the estimated grade (for MLP - B , the estimated grades are rounded to inte gers) and the actual instructor grades for the auto-graded solutions, respectiv ely . Results and discussion In Figure 5, we plot the MAE versus the number of clusters K for Questions 1–4. MLP - S with SC consistently outper- forms the random sampling baseline algorithm for almost all values of K . This performance gain is likely due to the fact that the baseline method does not cluster the solutions and thus does not select a good subset of solutions for the instruc- tor to grade. MLP - B is more accurate than MLP - S with both SC and AP and can automatically estimate the value of K , al- though at the price of significantly higher computational com- plexity (e.g., clustering and auto-grading one question takes 2 minutes for MLP - B compared to only 5 seconds for MLP - S with AP on a standard laptop computer with a 2.8GHz CPU and 8GB memory). Both MLP - S and MLP - B grade the learners’ solutions accu- rately (e.g., an MAE of 0 . 04 out of the full grade 3 using only K = 13 instructor grades to auto-grade all N = 113 solu- tions to Question 2). Moreo ver , as we see in Figure 5, the MAE for MLP - S decreases as K increases, and ev entually reaches 0 when K is lar ge enough that only solutions that are exactly the same as each other belong to the same cluster . In practice, one can tune the v alue of K to achieve a balance be- tween maximizing grading accuracy and minimizing human effort. Such a tuning process is not necessary for MLP - B , since it automatically estimates the v alue of K and achieves such a balance. Feedback Generation via MLP - B Experimental setup Since Questions 3–4 require some f amiliarity with signal pro- cessing, we demonstrate the ef ficacy of MLP - B in pro vid- ing feedback on mathematical solutions on Questions 1–2. Among the solutions to each question, there are a fe w types of common errors that more than one learner mak es. W e take one incorrect solution out of each type and run MLP - B on the other solutions to estimate the parameter ˆ φ k for each clus- ter . Using this information and the instructor grades { g k } , after each expression v in a solution, we compute the proba- bility that it belongs to a cluster p ( y ( v ) j | ˆ φ k ) that does not ha ve full credit ( g k < 3 ), together with the expected credit using (6). Once the expected grade is calculated to be less than full credit, we consider that an error has occurred. Results and discussion T wo sample feedback generation process are shown in Fig- ure 6. In Figure 6(a), we can provide feedback to the learner on their error as early as Line 2, before it carries over to later lines. Thus, MLP - B can potentially become a powerful tool to generate timely feedback to learners as they are solving mathematical questions, by analyzing the solutions it gathers from other learners. CONCLUSIONS 10 20 30 40 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 No. of clusters K MAE RS MLP−S−SC MLP−S−AP MLP−B (a) Question 1 10 20 30 40 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 No. of clusters K MAE (b) Question 2 10 20 30 40 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 No. of clusters K MAE (c) Question 3 10 20 30 40 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 No. of clusters K MAE (d) Question 4 Figure 5: Mean absolute error (MAE) versus the number of instructor graded solutions (clusters) K , for Questions 1– 4, respectively . For example, on Question 1, MLP - S and MLP - B estimate the true grade of each solution with an aver - age error of around 0 . 1 out of a full credit of 3 . “RS” repre- sents the random sub-sampling baseline. Both MLP - S meth- ods and MLP - B outperforms the baseline method. W e have developed a framew ork for mathematical language processing ( MLP ) that consists of three main steps: ( i ) con- verting each solution to an open response mathematical ques- tion into a series of numerical features; ( ii ) clustering the fea- tures from several solutions to uncov er the structures of cor - rect, partially correct, and incorrect solutions; and ( iii ) auto- matically grading the remaining (potentially large number of) solutions based on their assigned cluster and one instructor- provided grade per cluster . As our experiments have indi- cated, our framework can substantially reduce the human ef- fort required for grading in large-scale courses. As a bonus, MLP - S enables instructors to visualize the clusters of solu- tions to help them identify common errors and thus groups of learners having the same misconceptions. As a further bonus, MLP - B can track the cluster assignment of each step of a mul- tistep solution and determine when it departs from a cluster of correct solutions, which enables us to indicate the locations of errors to learners in real time. Improv ed learning outcomes should result from these innov ations. There are se veral av enues for continued research. W e are cur - rently planning more extensiv e experiments on the edX plat- form in volving tens of thousands of learners. W e are also planning to extend the feature extraction step to take into ac- count both the ordering of expressions and ancillary text in a solution. Clustering algorithms that allow a solution to be- long to more than one cluster could make MLP more robust to outlier solutions and further reduce the number of solutions that the instructors need to grade. Finally , it would be inter- esting to explore how the features of solutions could be used (( x 3 + sin x ) /e x ) 0 = ( e x ( x 3 + sin x ) 0 − ( x 3 + sin x )( e x ) 0 ) /e 2 x p rob . incorrect = 0 . 11 , exp . grade = 3 = ( e x (2 x 2 + cos x ) − ( x 3 + sin x ) e x ) /e 2 x p rob . incorrect = 0 . 66 , exp . grade = 2 = (2 x 2 + cos x − x 3 − sin x ) /e x p rob . incorrect = 0 . 93 , exp . grade = 2 = ( x 2 (2 − x ) + cos x − sin x ) /e x p rob . incorrect = 0 . 99 , exp . grade = 2 (a) A sample feedback generation process where the learner makes an error in the expression in Line 2 while attempting to solv e Question 1. ( x 2 + x + sin 2 x + cos 2 x )(2 x − 3) = ( x 2 + x + 1)(2 x − 3) p rob . incorrect = 0 . 09 , exp . grade = 3 = 4 x 3 + 2 x 2 + 2 x − 3 x 2 − 3 x − 3 p rob . incorrect = 0 . 82 , exp . grade = 2 = 4 x 3 − x 2 − x − 3 p rob . incorrect = 0 . 99 , exp . grade = 2 (b) A sample feedback generation process where the learner makes an error in the expression in Line 3 while attempting to solv e Question 2. Figure 6: Demonstration of real-time feedback generation by MLP - B while learners enter their solutions. After each ex- pression, we compute both the probability that the learner’ s solution belongs to a cluster that does not ha ve full credit and the learner’ s expected grade. An alert is generated when the expected credit is less than full credit. to build predictive models, as in the Rasch model [27] or item response theory [18]. APPENDIX: QUESTION ST A TEMENTS Question 1: Multiply ( x 2 + x + sin 2 x + cos 2 x )(2 x − 3) and simplify your answer as much as possible. Question 2: Find the deriv ativ e of x 3 + sin( x ) e x and simplify your answer as much as possible. Question 3: A discrete-time linear time-inv ariant system has the impulse response shown in the figure (omitted). Calculate H ( e j ω ) , the discrete-time Fourier transform of h [ n ] . Simplify your answer as much as possible until it has no summations. Question 4: Ev aluate the follo wing summation ∞ X k = −∞ δ [ n − k ] x [ k − n ] . Ackno wledgments Thanks to Heather Seeba for administering the data collection process and Christoph Studer for discussions and insights. V isit our website www.sparfa.com , where you can learn more about our project and purchase t-shirts and other merchan- dise. REFERENCES 1. Attali, Y . Construct validity of e-rater in scoring T OEFL essays. T ech. rep., Educational T esting Service, May 2000. 2. Basu, S., Jacobs, C., and V anderwende, L. Power grading: A clustering approach to amplify human effort for short answer grading. T rans. Association for Computational Linguistics 1 (Oct. 2013), 391–402. 3. Blei, D., Griffiths, T ., and Jordan, M. The nested chinese restaurant process and Bayesian nonparametric inference of topic hierarchies. J. A CM 57 , 2 (Jan. 2010), 7:1–7:30. 4. Blei, D. M., Ng, A. Y ., and Jordan, M. I. Latent Drichlet allocation. J. Mac hine Learning Resear ch 3 (Jan. 2003), 993–1022. 5. Brooks, M., Basu, S., Jacobs, C., and V anderwende, L. Divide and correct: Using clusters to grade short answers at scale. In Pr oc. 1st ACM Conf. on Learning at Scale (Mar . 2014), 89–98. 6. Champaign, J., Colvin, K., Liu, A., Fredericks, C., Seaton, D., and Pritchard, D. Correlating skill and improv ement in 2 MOOCs with a student’ s time on tasks. In Pr oc. 1st ACM Conf. on Learning at Scale (Mar . 2014), 11–20. 7. Coursera. https://www .coursera.org/, 2014. 8. Cramer , M., Fisseni, B., K oepke, P ., K ¨ uhlwein, D., Schr ¨ oder , B., and V eldman, J. The Naproche project – Controlled natural language proof checking of mathematical texts, June 2010. 9. Dijksman, J. A., and Khan, S. Khan Academy: The world’ s free virtual school. In APS Meeting Abstracts (Mar . 2011). 10. edX. https://www .edx.org/, 2014. 11. Frey , B. J., and Dueck, D. Clustering by passing messages between data points. Science 315 , 5814 (2007), 972–976. 12. Galenson, J., Reames, P ., Bodik, R., Hartmann, B., and Sen, K. CodeHint: Dynamic and interacti ve synthesis of code snippets. In Pr oc. 36th Intl. Conf. on Softwar e Engineering (June 2014), 653–663. 13. Gelman, A., Carlin, J., Stern, H., Dunson, D., V ehtari, A., and Rubin, D. Bayesian Data Analysis . CRC Press, 2013. 14. Griffiths, T ., and T enenbaum, J. Hierarchical topic models and the nested chinese restaurant process. Advances in Neural Information Pr ocessing Systems 16 (Dec. 2004), 17–24. 15. Gulwani, S., Radi ˇ cek, I., and Zuleger , F . Feedback generation for performance problems in introductory programming assignments. In Pr oc.22nd ACM SIGSOFT Intl. Symposium on the F oundations of Softwar e Engineering (Nov . 2014, to appear). 16. Guo, P ., and Reinecke, K. Demographic dif ferences in how students na vigate through MOOCs. In Pr oc. 1st A CM Conf. on Learning at Scale (Mar . 2014), 21–30. 17. Kang, S., McDermott, K., and Roediger III, H. T est format and correctiv e feedback modify the ef fect of testing on long-term retention. Eur opean J. Cognitive Psychology 19 , 4-5 (July 2007), 528–558. 18. Lord, F . Applications of Item Response Theory to Practical T esting Pr oblems . Erlbaum Associates, 1980. 19. Megill, N. Metamath: A computer language for pur e mathematics . Citeseer , 1997. 20. Minka, T . Estimating a Drichlet distribution. T ech. rep., MIT , Nov . 2000. 21. Naumowicz, A., and K orniłowicz, A. A brief o vervie w of MIZAR. In Theor em Pr oving in Higher Order Logics , vol. 5674 of Lectur e Notes in Computer Science . Aug. 2009, 67–72. 22. Ng, A., Jordan, M., and W eiss, Y . On spectral clustering: Analysis and an algorithm. Advances in Neural Information Pr ocessing Systems 2 (Dec. 2002), 849–856. 23. Nguyen, A., Piech, C., Huang, J., and Guibas, L. Codewebs: Scalable homew ork search for massiv e open online programming courses. In Pr oc. 23rd Intl. W orld W ide W eb Conference (Seoul, K orea, Apr . 2014), 491–502. 24. OpenStaxT utor . https://openstaxtutor .org/, 2013. 25. Piech, C., Huang, J., Chen, Z., Do, C., Ng, A., and K oller , D. T uned models of peer assessment in MOOCs. In Pr oc. 6th Intl. Conf. on Educational Data Mining (July 2013), 153–160. 26. Raman, K., and Joachims, T . Methods for ordinal peer grading. In Pr oc. 20th ACM SIGKDD Intl. Conf. on Knowledge Discovery and Data Mining (Aug. 2014), 1037–1046. 27. Rasch, G. Pr obabilistic Models for Some Intelligence and Attainment T ests . MESA Press, 1993. 28. Riv ers, K., and K oedinger , K. A canonicalizing model for building programming tutors. In Pr oc. 11th Intl. Conf. on Intelligent T utoring Systems (June 2012), 591–593. 29. Riv ers, K., and K oedinger , K. Automating hint generation with solution space path construction. In Pr oc. 12th Intl. Conf. on Intelligent T utoring Systems (June 2014), 329–339. 30. Sadler , P ., and Good, E. The impact of self-and peer-grading on student learning. Educational Assessment 11 , 1 (June 2006), 1–31. 31. Sapling Learning. http://www .saplinglearning.com/, 2014. 32. Singh, R., Gulwani, S., and Solar -Lezama, A. Automated feedback generation for introductory programming assignments. In Pr oc. 34th ACM SIGPLAN Conf. on Pr ogramming Langua ge Design and Implementation , vol. 48 (June 2013), 15–26. 33. Southavilay , V ., Y acef, K., Reimann, P ., and Calvo, R. Analysis of collaborativ e writing processes using revision maps and probabilistic topic models. In Proc. 3r d Intl. Conf. on Learning Analytics and Knowledg e (Apr . 2013), 38–47. 34. Srikant, S., and Aggarwal, V . A system to grade computer programming skills using machine learning. In Pr oc. 20th ACM SIGKDD Intl. Conf. on Knowledg e Discovery and Data Mining (Aug. 2014), 1887–1896. 35. Steyvers, M., and Grif fiths, T . Probabilistic topic models. Handbook of Latent Semantic Analysis 427 , 7 (2007), 424–440. 36. SymPy Dev elopment T eam. Sympy: Python library for symbolic mathematics, 2014. http://www .sympy .or g. 37. T eh, Y . Drichlet process. In Encyclopedia of Machine Learning . Springer , 2010, 280–287. 38. V ats, D., Studer , C., Lan, A. S., Carin, L., and Baraniuk, R. G. T est size reduction for concept estimation. In Pr oc. 6th Intl. Conf. on Educational Data Mining (July 2013), 292–295. 39. W aters, A., Fronczyk, K., Guindani, M., Baraniuk, R., and V annucci, M. A Bayesian nonparametric approach for the analysis of multiple cate gorical item responses. J . Statistical Planning and Infer ence (2014, In press). 40. W ebAssign. https://webassign.com/, 2014. 41. W est, M. Hyperparameter estimation in Drichlet process mixture models. T ech. rep., Duke Uni versity , 1992. 42. W ilko wski, J., Deutsch, A., and Russell, D. Student skill and goal achiev ement in the mapping with Google MOOC. In Pr oc. 1st ACM Conf. on Learning at Scale (Mar . 2014), 3–10. 43. W oolf, B. P . Building Intelligent Interactive T utors: Student-center ed Strate gies for Revolutionizing E-learning . Morgan Kaufman Publishers, 2008. 44. Y in, J., and W ang, J. A Drichlet multinomial mixture model-based approach for short text clustering. In Pr oc. 20th A CM SIGKDD Intl. Conf. on Knowledge Disco very and Data Mining (Aug. 2014), 233–242.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment