Inexact Coordinate Descent: Complexity and Preconditioning

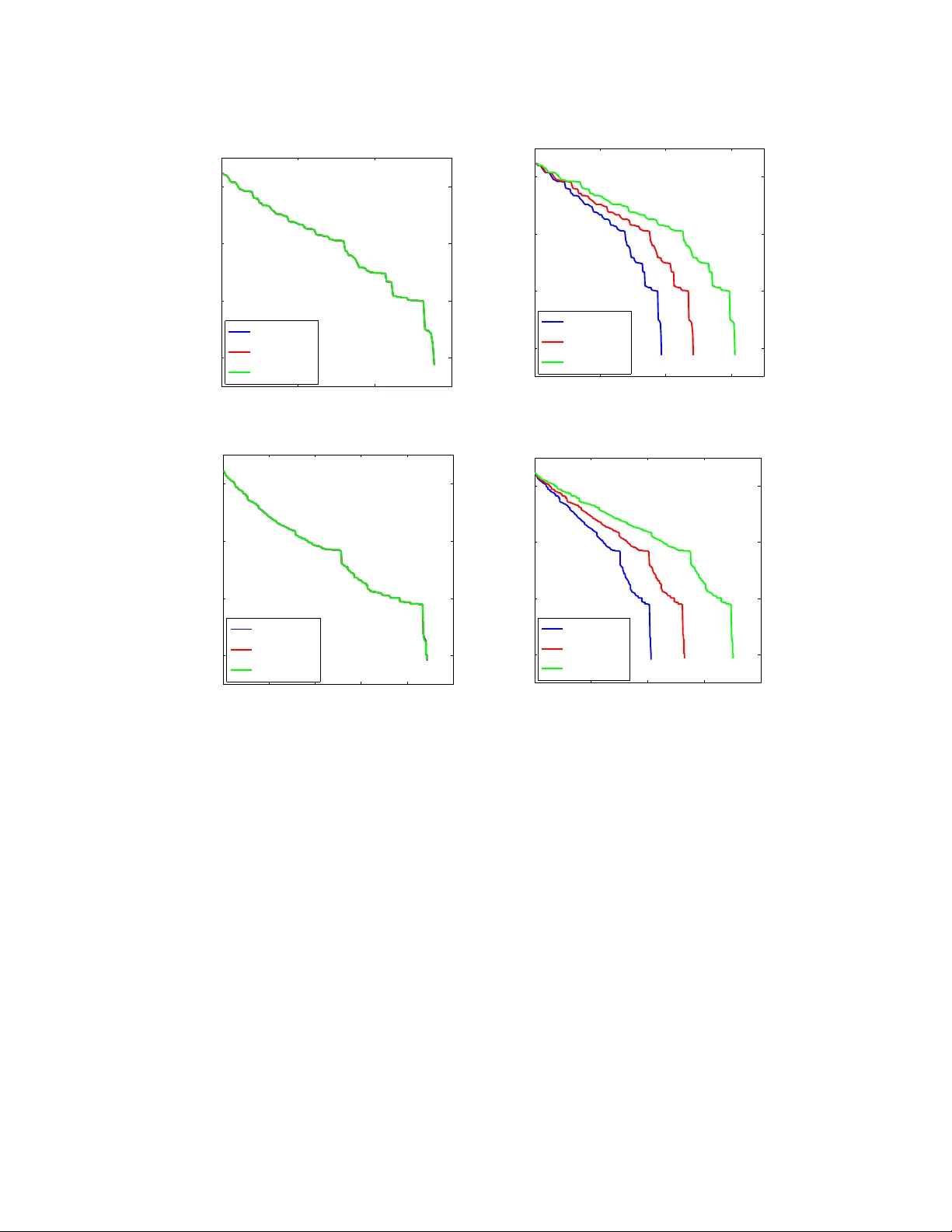

In this paper we consider the problem of minimizing a convex function using a randomized block coordinate descent method. One of the key steps at each iteration of the algorithm is determining the update to a block of variables. Existing algorithms a…

Authors: Rachael Tappenden, Peter Richtarik, Jacek Gondzio
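
To make the abstract's setting concrete, here is a minimal sketch of a randomized block coordinate descent loop in which the update to the chosen block is computed only approximately. It is not the authors' algorithm: the quadratic objective, the function name `inexact_block_cd`, the uniform block sampling, and the use of a fixed number of conjugate gradient steps as the inexact block solver are all assumptions made for illustration.

```python
import numpy as np


def inexact_block_cd(A, b, block_size, n_iters=200, inner_steps=2, seed=0):
    """Minimize f(x) = 0.5 * x @ A @ x - b @ x for symmetric positive definite A.

    Illustrative sketch only: each iteration picks one block uniformly at
    random and approximately solves that block's subproblem.
    """
    rng = np.random.default_rng(seed)
    n = b.shape[0]
    # Partition the coordinates into contiguous blocks (an assumed layout).
    blocks = [np.arange(s, min(s + block_size, n)) for s in range(0, n, block_size)]
    x = np.zeros(n)

    for _ in range(n_iters):
        idx = blocks[rng.integers(len(blocks))]
        g = A[idx] @ x - b[idx]        # gradient of f restricted to the block
        A_ii = A[np.ix_(idx, idx)]     # diagonal block of A

        # Inexact update: a few conjugate gradient steps on A_ii d = -g,
        # started from d = 0, instead of an exact block solve.
        d = np.zeros(len(idx))
        r = -g
        p = r.copy()
        for _ in range(inner_steps):
            rr = r @ r
            if rr < 1e-30:             # block subproblem already solved
                break
            Ap = A_ii @ p
            alpha = rr / (p @ Ap)
            d += alpha * p
            r = r - alpha * Ap
            p = r + ((r @ r) / rr) * p

        x[idx] += d                    # apply the approximate block update
    return x
```

In this sketch, `inner_steps` controls how inexact each block update is: a small value makes each iteration cheap but less accurate, while a value equal to the block size recovers an exact block solve in exact arithmetic.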