Entropy of Difference

Here, we propose a new tool to estimate the complexity of a time series: the entropy of difference (ED). The method is based solely on the sign of the difference between neighboring values in a time series. This makes it possible to describe the signal as efficiently as previously proposed parameters such as permutation entropy (PE) or modified permutation entropy (mPE), while (1) reducing the sample size needed to estimate the parameter value, and (2) enabling the use of the Kullback-Leibler divergence to estimate the distance between the time series data and random signals.

💡 Research Summary

The paper introduces a novel measure for quantifying the complexity of time‑series data called the Entropy of Difference (ED). Traditional approaches such as Permutation Entropy (PE) and its modified version (mPE) rely on ranking the values within an m‑dimensional embedding window, which generates m! (or more for mPE) possible ordinal patterns. As the embedding dimension grows, the number of patterns grows factorially (120 for m = 5, 5040 for m = 7), demanding large data samples for reliable probability estimation. Moreover, for a purely random signal the probability of each permutation is uniform (1/m!), so the Kullback‑Leibler (KL) divergence from this random baseline reduces to log(m!) minus the PE itself and adds no information beyond the entropy.

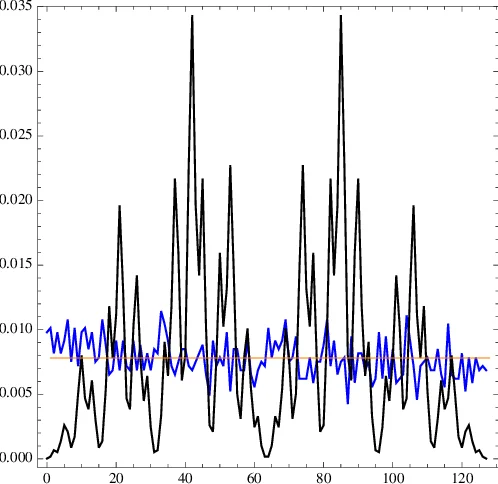

ED circumvents these limitations by discarding the full ordering information and keeping only the sign of successive differences. For an embedding dimension m, each m‑tuple (x_i,…,x_{i+m‑1}) is mapped to a string of (m‑1) symbols “+” (increase) or “‑” (decrease). The total number of possible strings is 2^{m‑1}, dramatically smaller than m!. This reduction allows accurate estimation of the empirical distribution p_m(s) even with modest data lengths.
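The mapping from windows to sign strings can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' code: the function names are hypothetical, ties between neighboring values are coded as "-" (the summary does not say how ties are handled), and ED is assumed to be the Shannon entropy of p_m(s) in bits.

```python
from collections import Counter
from math import log2


def sign_strings(x, m):
    """Map every window of m consecutive values to a string of m-1 signs."""
    # Assumption: equal neighbors are coded '-' (tie handling is not specified).
    signs = ['+' if b > a else '-' for a, b in zip(x, x[1:])]
    k = m - 1
    return [''.join(signs[i:i + k]) for i in range(len(signs) - k + 1)]


def empirical_distribution(x, m):
    """Relative frequency p_m(s) of each sign string observed in x."""
    words = sign_strings(x, m)
    counts = Counter(words)
    return {s: c / len(words) for s, c in counts.items()}


def entropy_of_difference(x, m):
    """Shannon entropy (bits) of p_m(s); assumed definition of ED."""
    p = empirical_distribution(x, m)
    return -sum(v * log2(v) for v in p.values())


series = [1, 3, 2, 4, 5, 0]
print(sign_strings(series, 3))           # ['+-', '-+', '++', '+-']
print(entropy_of_difference(series, 3))  # 1.5
```

With m = 3 the six-point series yields four windows and at most 2^{m‑1} = 4 distinct strings, against 3! = 6 ordinal patterns for PE.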

The probability distribution of strings for a truly random series, denoted q_m(s), is not simply 1/2^{m‑1} because successive signs are not independent. The authors derive a recursive set of equations (their Eq. 1) that generate q_m(s) analytically. With q_m(s) in hand, the KL divergence can be computed as
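The recursion itself is not reproduced in this summary. As an independent cross-check, and explicitly not the authors' method, q_m(s) can be obtained by brute force for small m: for independent values drawn from a continuous distribution every ordering of m values is equally likely, so q_m(s) is the fraction of the m! permutations whose up/down pattern equals s. The function name below is hypothetical.

```python
from fractions import Fraction
from itertools import permutations
from math import factorial


def random_baseline(m):
    """q_m(s) for an i.i.d. continuous series, by enumerating all m! orderings."""
    counts = {}
    for perm in permutations(range(m)):
        # Up/down pattern of this ordering, e.g. (0, 2, 1) -> '+-'
        s = ''.join('+' if b > a else '-' for a, b in zip(perm, perm[1:]))
        counts[s] = counts.get(s, 0) + 1
    total = factorial(m)
    return {s: Fraction(c, total) for s, c in counts.items()}


q3 = random_baseline(3)
for s in sorted(q3):
    print(s, q3[s])
# ++ 1/6
# +- 1/3
# -+ 1/3
# -- 1/6
```

For m = 3 the monotone strings "++" and "--" each have probability 1/6 while "+-" and "-+" each have 1/3, which shows concretely that the baseline is not uniform. Enumeration costs m! steps, so it is only practical for small m.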

KL_m(p‖q) = Σ_s p_m(s) log [ p_m(s) / q_m(s) ]
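A minimal sketch of this divergence, assuming the standard definition Σ_s p_m(s) log(p_m(s)/q_m(s)) and base-2 logarithms (the summary does not state the base). The function name is hypothetical, and the m = 3 baseline values are those obtained by counting the up/down patterns of the six orderings of three distinct values.

```python
from math import log2


def kl_divergence(p, q):
    """KL(p || q) in bits; p and q map sign strings to probabilities."""
    # Strings with p(s) == 0 contribute nothing to the sum.
    # q(s) must be positive wherever p(s) is positive.
    return sum(ps * log2(ps / q[s]) for s, ps in p.items() if ps > 0)


# Random-signal baseline for m = 3.
q3 = {'++': 1 / 6, '+-': 1 / 3, '-+': 1 / 3, '--': 1 / 6}

# A strictly increasing series only ever produces '++'.
p_monotone = {'++': 1.0}

print(kl_divergence(q3, q3))          # 0.0 (indistinguishable from random)
print(kl_divergence(p_monotone, q3))  # about 2.585, i.e. log2(6)
```

The divergence is zero when the observed sign-string distribution matches the random baseline and increases as the series departs from it.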
