Discovering Structure in High-Dimensional Data Through Correlation Explanation

We introduce a method to learn a hierarchy of successively more abstract representations of complex data based on optimizing an information-theoretic objective. Intuitively, the optimization searches for a set of latent factors that best explain the …

Authors: Greg Ver Steeg, Aram Galstyan

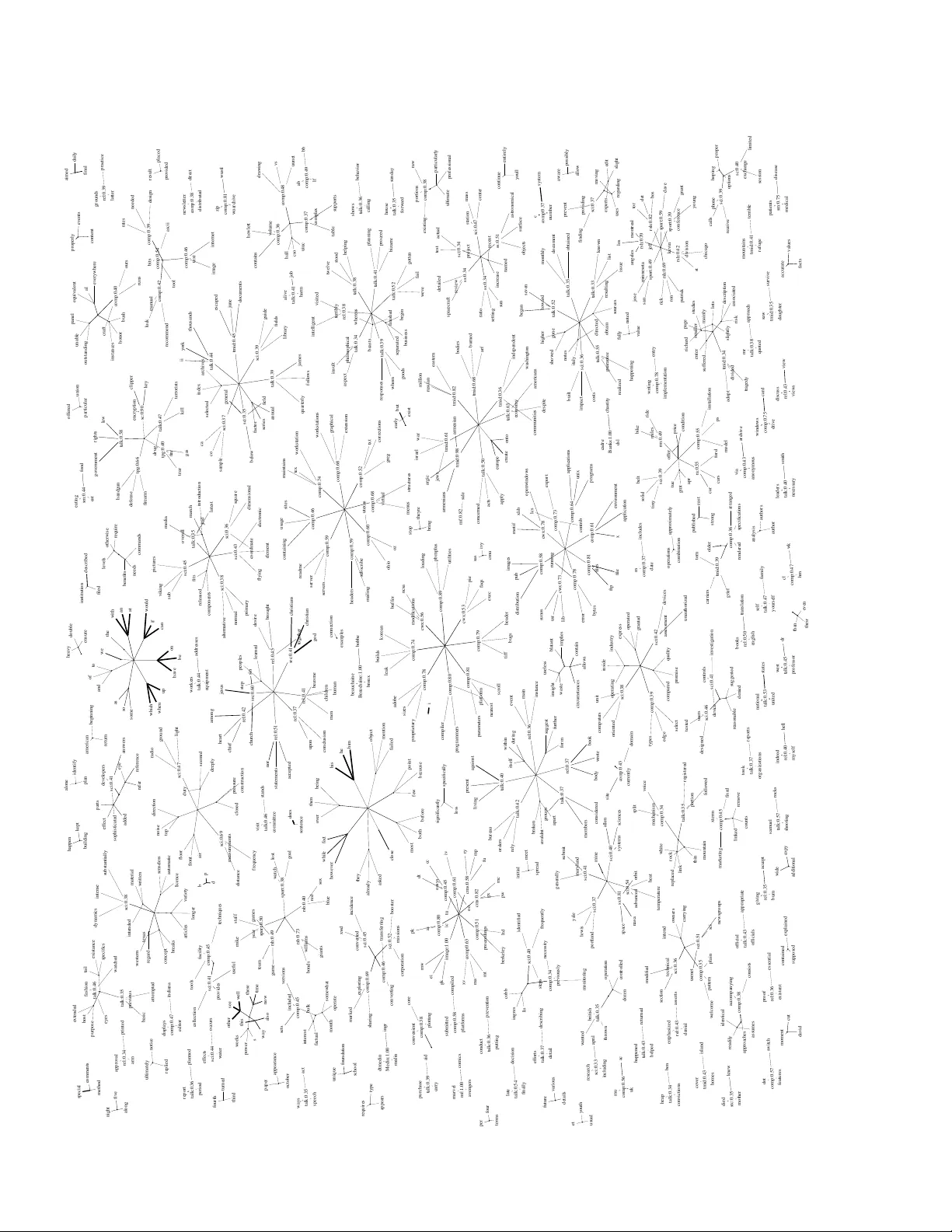

Greg Ver Steeg, Information Sciences Institute, University of Southern California, Marina del Rey, CA 90292, gregv@isi.edu
Aram Galstyan, Information Sciences Institute, University of Southern California, Marina del Rey, CA 90292, galstyan@isi.edu

Abstract

We introduce a method to learn a hierarchy of successively more abstract representations of complex data based on optimizing an information-theoretic objective. Intuitively, the optimization searches for a set of latent factors that best explain the correlations in the data as measured by multivariate mutual information. The method is unsupervised, requires no model assumptions, and scales linearly with the number of variables, which makes it an attractive approach for very high dimensional systems. We demonstrate that Correlation Explanation (CorEx) automatically discovers meaningful structure for data from diverse sources including personality tests, DNA, and human language.

1 Introduction

Without any prior knowledge, what can be automatically learned from high-dimensional data? If the variables are uncorrelated, then the system is not really high-dimensional but should be viewed as a collection of unrelated univariate systems. If correlations exist, however, then some common cause or causes must be responsible for generating them. Without assuming any particular model for these hidden common causes, is it still possible to reconstruct them? We propose an information-theoretic principle, which we refer to as "correlation explanation", that codifies this problem in a model-free, mathematically principled way. Essentially, we are searching for latent factors so that, conditioned on these factors, the correlations in the data are minimized (as measured by multivariate mutual information). In other words, we look for the simplest explanation that accounts for the most correlations in the data.
As a bonus, building on this information-based foundation leads naturally to an innovative paradigm for learning hierarchical representations that is more tractable than Bayesian structure learning and provides richer insights than neural network inspired approaches [1]. After introducing the principle of "Correlation Explanation" (CorEx) in Sec. 2, we show that it can be efficiently implemented in Sec. 3. To demonstrate the power of this approach, we begin Sec. 4 with a simple synthetic example and show that standard learning techniques all fail to detect high-dimensional structure while CorEx succeeds. In Sec. 4.2.1, we show that CorEx perfectly reverse engineers the "big five" personality types from survey data while other approaches fail to do so. In Sec. 4.2.2, CorEx automatically discovers in DNA nearly perfect predictors of independent signals relating to gender, geography, and ethnicity. In Sec. 4.2.3, we apply CorEx to text and recover both stylistic features and hierarchical topic representations. After briefly considering intriguing theoretical connections in Sec. 5, we conclude with future directions in Sec. 6.

2 Correlation Explanation

Using standard notation [2], capital $X$ denotes a discrete random variable whose instances are written in lowercase. A probability distribution over a random variable $X$, $p_X(X = x)$, is shortened to $p(x)$ unless ambiguity arises. The cardinality of the set of values that a random variable can take will always be finite and denoted by $|X|$. If we have $n$ random variables, then $G$ is a subset of indices $G \subseteq \mathbb{N}_n = \{1, \ldots, n\}$ and $X_G$ is the corresponding subset of the random variables ($X_{\mathbb{N}_n}$ is shortened to $X$). Entropy is defined in the usual way as $H(X) \equiv \mathbb{E}_X[-\log p(x)]$. Higher-order entropies can be constructed in various ways from this standard definition.
For instance, the mutual information between two random variables, $X_1$ and $X_2$, can be written $I(X_1 : X_2) = H(X_1) + H(X_2) - H(X_1, X_2)$. The following measure of mutual information among many variables was first introduced as "total correlation" [3] and is also called multi-information [4] or multivariate mutual information [5].

$$TC(X_G) = \sum_{i \in G} H(X_i) - H(X_G) \tag{1}$$

For $G = \{i_1, i_2\}$, this corresponds to the mutual information, $I(X_{i_1} : X_{i_2})$. $TC(X_G)$ is non-negative and zero if and only if the probability distribution factorizes. In fact, total correlation can also be written as a KL divergence, $TC(X_G) = D_{KL}\left(p(x_G) \,\|\, \prod_{i \in G} p(x_i)\right)$.

The total correlation among a group of variables, $X$, after conditioning on some other variable, $Y$, is simply $TC(X|Y) = \sum_i H(X_i|Y) - H(X|Y)$. We can measure the extent to which $Y$ explains the correlations in $X$ by looking at how much the total correlation is reduced.

$$TC(X;Y) \equiv TC(X) - TC(X|Y) = \sum_{i \in \mathbb{N}_n} I(X_i : Y) - I(X : Y) \tag{2}$$

We use semicolons as a reminder that $TC(X;Y)$ is not symmetric in the arguments, unlike mutual information. $TC(X|Y)$ is zero (and $TC(X;Y)$ maximized) if and only if the distribution of $X$'s conditioned on $Y$ factorizes. This would be the case if $Y$ were the common cause of all the $X_i$'s, in which case $Y$ explains all the correlation in $X$. $TC(X_G|Y) = 0$ can also be seen as encoding local Markov properties among a group of variables and, therefore, specifying a DAG [6]. This quantity has appeared as a measure of the redundant information that the $X_i$'s carry about $Y$ [7]. More connections are discussed in Sec. 5.

Optimizing over Eq. 2 can now be seen as a search for a latent factor, $Y$, that explains the correlations in $X$.
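Eqs. 1 and 2 can be checked numerically on a small joint distribution. The sketch below is a minimal illustration, not the authors' code: the helper names (`entropy`, `marginal`, `total_correlation`, `tc_explained`) and the toy model `noisy_copies`, in which a uniform bit $Y$ is a common cause of noisy copies $X_i$, are hypothetical. Because the $X_i$ are conditionally independent given $Y$, $TC(X|Y) = 0$, so $TC(X;Y)$ should equal $TC(X)$.

```python
import itertools
import math
from collections import defaultdict


def entropy(dist):
    """Shannon entropy in bits of a dict {outcome: probability}."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def marginal(joint, idx):
    """Marginalize a joint dict {tuple: probability} onto the coordinates in idx."""
    out = defaultdict(float)
    for x, p in joint.items():
        out[tuple(x[i] for i in idx)] += p
    return out


def total_correlation(joint, idx):
    """Eq. 1: TC(X_G) = sum_{i in G} H(X_i) - H(X_G)."""
    return sum(entropy(marginal(joint, [i])) for i in idx) - entropy(marginal(joint, idx))


def mutual_information(joint, a, b):
    """I(X_a : X_b) = H(X_a) + H(X_b) - H(X_a, X_b) for disjoint index lists a, b."""
    return (entropy(marginal(joint, a)) + entropy(marginal(joint, b))
            - entropy(marginal(joint, a + b)))


def tc_explained(joint, n):
    """Eq. 2: TC(X;Y) = sum_i I(X_i : Y) - I(X : Y); coordinate n holds Y."""
    xs = list(range(n))
    return (sum(mutual_information(joint, [i], [n]) for i in xs)
            - mutual_information(joint, xs, [n]))


def noisy_copies(n, flip):
    """Hypothetical toy model: Y ~ Bernoulli(1/2) is a common cause and each
    X_i is an independent copy of Y flipped with probability `flip`."""
    joint = {}
    for y in (0, 1):
        for x in itertools.product((0, 1), repeat=n):
            p = 0.5
            for xi in x:
                p *= flip if xi != y else 1 - flip
            joint[x + (y,)] = p
    return joint
```

For example, `total_correlation(noisy_copies(3, 0.0), [0, 1, 2])` gives 2 bits (three perfect copies of one bit), while `noisy_copies(3, 0.5)` makes the $X_i$ independent and gives 0. Working with explicit joint tables is only feasible for a handful of variables; the point is to make the definitions concrete, not to scale.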
We can make this concrete by letting $Y$ be a discrete random variable that can take one of $k$ possible values and searching over all probabilistic functions of $X$, $p(y|x)$.

$$\max_{p(y|x)} TC(X;Y) \quad \text{s.t.} \quad |Y| = k \tag{3}$$

The solution to this optimization is given as a special case in Sec. A. Total correlation is a functional over the joint distribution, $p(x, y) = p(y|x)p(x)$, so the optimization implicitly depends on the data through $p(x)$. Typically, we have only a small number of samples drawn from $p(x)$ (compared to the size of the state space). To make matters worse, if $x \in \{0, 1\}^n$ then optimizing over all $p(y|x)$ involves at least $2^n$ variables. Surprisingly, despite these difficulties we show in the next section that this optimization can be carried out efficiently. The maximum achievable value of this objective occurs for some finite $k$ when $TC(X|Y) = 0$. This implies that the data are perfectly described by a naive Bayes model with $Y$ as the parent and $X_i$ as the children.

Generally, we expect that correlations in data may result from several different factors. Therefore, we extend the optimization above to include $m$ different factors, $Y_1, \ldots, Y_m$.¹

$$\max_{G_j,\, p(y_j|x_{G_j})} \sum_{j=1}^{m} TC(X_{G_j}; Y_j) \quad \text{s.t.} \quad |Y_j| = k,\; G_j \cap G_{j' \neq j} = \emptyset \tag{4}$$

Here we simultaneously search over subsets of variables $G_j$ and over variables $Y_j$ that explain the correlations in each group. While it is not necessary to make the optimization tractable, we impose an additional condition on $G_j$ so that each variable $X_i$ is in a single group, $G_j$, associated with a single "parent", $Y_j$. The reason for this restriction is that it has been shown that the value of the objective can then be interpreted as a lower bound on $TC(X)$ [8]. Note that this objective is valid and meaningful regardless of details about the data-generating process. We only assume that we are given $p(x)$ or iid samples from it.

¹ Note that in principle we could have just replaced $Y$ in Eq. 3 with $(Y_1, \ldots, Y_m)$, but the state space would have been exponential in $m$, leading to an intractable optimization.

The output of this procedure gives us $Y_j$'s, which are probabilistic functions of $X$. If we iteratively apply this optimization to the resulting probability distribution over $Y$ by searching for some $Z_1, \ldots, Z_{\tilde m}$ that explain the correlations in the $Y$'s, we will end up with a hierarchy of variables that forms a tree. We now show that the optimization in Eq. 4 can be carried out efficiently even for high-dimensional spaces and small numbers of samples.

3 CorEx: Efficient Implementation of Correlation Explanation

We begin by rewriting the optimization in Eq. 4 in terms of mutual informations using Eq. 2.

$$\max_{G,\, p(y_j|x)} \sum_{j=1}^{m} \sum_{i \in G_j} I(Y_j : X_i) - \sum_{j=1}^{m} I(Y_j : X_{G_j}) \tag{5}$$

Next, we replace $G$ with a set indicator variable, $\alpha_{i,j} = \mathbb{I}[X_i \in G_j] \in \{0, 1\}$.

$$\max_{\alpha,\, p(y_j|x)} \sum_{j=1}^{m} \sum_{i=1}^{n} \alpha_{i,j} I(Y_j : X_i) - \sum_{j=1}^{m} I(Y_j : X) \tag{6}$$

The non-overlapping group constraint is enforced by demanding that $\sum_{\bar j} \alpha_{i,\bar j} = 1$. Note also that we dropped the subscript $G_j$ in the second term of Eq. 6, but this has no effect because solutions must satisfy $I(Y_j : X) = I(Y_j : X_{G_j})$, as we now show. For fixed $\alpha$, it is straightforward to find the solution of the Lagrangian optimization problem as the solution to a set of self-consistent equations. Details of the derivation can be found in Sec. A.

$$p(y_j|x) = \frac{1}{Z_j(x)}\, p(y_j) \prod_{i=1}^{n} \left( \frac{p(y_j|x_i)}{p(y_j)} \right)^{\alpha_{i,j}} \tag{7}$$

$$p(y_j|x_i) = \sum_{\bar x} p(y_j|\bar x)\, p(\bar x)\, \delta_{\bar x_i, x_i} / p(x_i) \quad \text{and} \quad p(y_j) = \sum_{\bar x} p(y_j|\bar x)\, p(\bar x) \tag{8}$$

Note that $\delta$ is the Kronecker delta and that $Y_j$ depends only on the $X_i$ for which $\alpha_{i,j}$ is non-zero.
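Eqs. 7 and 8 are the two halves of one fixed-point step for a single factor $Y_j$. The following is a minimal sketch, assuming samples are tuples of integers in `range(card)` and that the current soft labels $p(y_j | x^{(l)})$ are stored per sample; the function names and the `EPS` floor are hypothetical choices, not taken from the paper's code. Eq. 8 is evaluated under the empirical distribution, so $p(y_j | x_i = v)$ reduces to the average soft label over samples with $x_i = v$. Eq. 7 is evaluated in log space, where it becomes a weighted sum of log-ratios followed by a normalized exponential.

```python
import math

EPS = 1e-12  # assumed floor that keeps logarithms finite


def estimate_marginals(samples, q, k, card):
    """Eq. 8 under the empirical distribution, for a single latent factor Y_j.

    samples: list of N tuples x^(l) with entries in range(card)
    q:       list of N lists, q[l][y] = p(y_j = y | x^(l))
    Returns p(y_j) as a list of length k and cond[i][v][y] = p(y_j = y | x_i = v).
    """
    N, n = len(samples), len(samples[0])
    p_y = [sum(row[y] for row in q) / N for y in range(k)]
    totals = [[[0.0] * k for _ in range(card)] for _ in range(n)]
    counts = [[0] * card for _ in range(n)]
    for x, row in zip(samples, q):
        for i, v in enumerate(x):
            counts[i][v] += 1
            for y in range(k):
                totals[i][v][y] += row[y]
    # Average the soft labels over samples with x_i = v; values never observed
    # fall back to p(y_j), so they contribute no evidence in Eq. 7.
    cond = [[[t / counts[i][v] for t in totals[i][v]] if counts[i][v] else list(p_y)
             for v in range(card)] for i in range(n)]
    return p_y, cond


def soft_labels(x, p_y, cond, alpha):
    """Eq. 7 in log space:
    log p(y|x) = log p(y) + sum_i alpha_i * (log p(y|x_i) - log p(y)) - log Z(x)."""
    logits = []
    for y in range(len(p_y)):
        log_py = math.log(max(p_y[y], EPS))
        s = log_py + sum(a * (math.log(max(cond[i][v][y], EPS)) - log_py)
                         for i, (v, a) in enumerate(zip(x, alpha)))
        logits.append(s)
    top = max(logits)
    weights = [math.exp(s - top) for s in logits]
    z = sum(weights)  # Z_j(x) needs a sum over only k values
    return [w / z for w in weights]
```

With two perfectly correlated binary inputs whose soft labels currently favor one state with probability 0.9, `soft_labels((0, 0), p_y, cond, [1.0, 1.0])` returns odds of $(0.9/0.1)^2 = 81$, that is, probability $81/82$ for that state; setting both weights $\alpha_i$ to zero returns $p(y_j)$ unchanged.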
Remarkably, $Y_j$'s dependence on $X$ can be written in terms of a linear (in $n$, the number of variables) number of parameters, which are just the marginals $p(y_j)$ and $p(y_j|x_i)$. We approximate $p(x)$ with the empirical distribution, $\hat p(\bar x) = \sum_{l=1}^{N} \delta_{\bar x, x^{(l)}} / N$. This approximation allows us to estimate marginals with fixed accuracy using only a constant number of iid samples from the true distribution. In Sec. A we show that Eq. 7, which defines the soft labeling of any $x$, can be seen as a linear function followed by a non-linear threshold, reminiscent of neural networks. Also note that the normalization constant for any $x$, $Z_j(x)$, can be calculated easily by summing over just $|Y_j| = k$ values.

For fixed values of the parameters $p(y_j|x_i)$, we have an integer linear program for $\alpha$ made easy by the constraint $\sum_{\bar j} \alpha_{i,\bar j} = 1$. The solution is $\alpha^*_{i,j} = \mathbb{I}[j = \arg\max_{\bar j} I(X_i : Y_{\bar j})]$. However, this leads to a rough optimization space. The solution in Eq. 7 is valid (and meaningful, see Sec. 5 and [8]) for arbitrary values of $\alpha$, so we relax our optimization accordingly. At step $t = 0$ in the optimization, we pick $\alpha^{t=0}_{i,j} \sim U(1/2, 1)$ uniformly at random (violating the constraints). At step $t + 1$, we make a small update on $\alpha$ in the direction of the solution.

$$\alpha^{t+1}_{i,j} = (1 - \lambda)\, \alpha^{t}_{i,j} + \lambda\, \alpha^{**}_{i,j} \tag{9}$$

The second term, $\alpha^{**}_{i,j} = \exp\left(\gamma \left( I(X_i : Y_j) - \max_{\bar j} I(X_i : Y_{\bar j}) \right)\right)$, implements a soft-max which converges to the true solution for $\alpha^*$ in the limit $\gamma \to \infty$. This leads to a smooth optimization, and good choices for $\lambda$ and $\gamma$ can be set through intuitive arguments described in Sec. B.

Now that we have rules to update both $\alpha$ and $p(y_j|x_i)$ to increase the value of the objective, we simply iterate between them until we achieve convergence.
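The relaxation of $\alpha$ fits in a few lines. This is a sketch under stated assumptions: `mi[i][j]` is taken to hold an already computed estimate of $I(X_i : Y_j)$, and the function names are hypothetical. It shows the hard solution $\alpha^*$, the soft-max surrogate $\alpha^{**}$, and the damped update of Eq. 9; for large $\gamma$ the iterates approach $\alpha^*$.

```python
import math
import random


def init_alpha(n, m, rng):
    """alpha^{t=0}_{i,j} ~ U(1/2, 1); this deliberately violates sum_j alpha_{i,j} = 1."""
    return [[rng.uniform(0.5, 1.0) for _ in range(m)] for _ in range(n)]


def alpha_hard(mi):
    """alpha*_{i,j} = 1 if j maximizes I(X_i : Y_j) over j, else 0 (ties go to the lowest j)."""
    out = []
    for row in mi:
        best = max(range(len(row)), key=lambda j: row[j])
        out.append([1.0 if j == best else 0.0 for j in range(len(row))])
    return out


def alpha_soft(mi, gamma):
    """alpha**_{i,j} = exp(gamma * (I(X_i : Y_j) - max_j' I(X_i : Y_j')))."""
    out = []
    for row in mi:
        top = max(row)
        out.append([math.exp(gamma * (v - top)) for v in row])
    return out


def update_alpha(alpha, mi, lam, gamma):
    """Eq. 9: alpha^{t+1} = (1 - lambda) * alpha^t + lambda * alpha**."""
    soft = alpha_soft(mi, gamma)
    return [[(1 - lam) * a + lam * s for a, s in zip(ra, rs)]
            for ra, rs in zip(alpha, soft)]
```

Because the best-matching factor always receives $\alpha^{**} = 1$, a variable is never fully disconnected from every factor; the other entries decay at a rate set by $\gamma$ times the gap in mutual information, which is what makes the optimization smooth rather than a hard reassignment at each step.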
While there is no guarantee of finding the global optimum, the objective is upper bounded by $TC(X)$ (or equivalently, $TC(X|Y)$ is lower bounded by 0). Pseudo-code for this approach is described in Algorithm 1, with additional details provided in Sec. B and source code available online.² The overall complexity is linear in the number of variables. To bound the complexity in terms of the number of samples, we can always use mini-batches of fixed size to estimate the marginals in Eq. 8.

² Open source code is available at http://github.com/gregversteeg/CorEx.

Algorithm 1: Pseudo-code implementing Correlation Explanation (CorEx)
input: A matrix of size $n_s \times n$ representing $n_s$ samples of $n$ discrete random variables
set: Set $m$, the number of latent variables, $Y_j$, and $k$, so that $|Y_j| = k$
output: Parameters $\alpha_{i,j}$, $p(y_j|x_i)$, $p(y_j)$, $p(y|x^{(l)})$ for $i \in \mathbb{N}_n$, $j \in \mathbb{N}_m$, $l \in \mathbb{N}_{n_s}$, $y \in \mathbb{N}_k$, $x_i \in X_i$
Randomly initialize $\alpha_{i,j}$, $p(y|x^{(l)})$;
repeat
    Estimate marginals, $p(y_j)$, $p(y_j|x_i)$ using Eq. 8;
    Calculate $I(X_i : Y_j)$ from marginals;
    Update $\alpha$ using Eq. 9;
    Calculate $p(y|x^{(l)})$, $l = 1, \ldots, n_s$ using Eq. 7;
until convergence;

A common problem in representation learning is how to pick $m$, the number of latent variables to describe the data. Consider the limit in which we set $m = n$. To use all $Y_1, \ldots, Y_m$ in our representation, we would need exactly one variable, $X_i$, in each group, $G_j$. Then $\forall j,\; TC(X_{G_j}) = 0$ and, therefore, the whole objective will be 0. This suggests that the maximum value of the objective must be achieved for some value of $m < n$. In practice, this means that if we set $m$ too high, only some subset of latent variables will be used in the solution, as we will demonstrate in Fig. 2. In other words, if $m$ is set high enough, the optimization will result in some number of clusters $m' < m$ that is optimal with respect to the objective.
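Algorithm 1 can be sketched end to end in plain Python. This is a minimal, unoptimized illustration, not the authors' released implementation: the function name `corex`, the `EPS` floor, the defaults `lam=0.3` and `gamma=10.0`, and the fixed iteration count standing in for "until convergence" are all assumptions. Each pass follows the loop body above: Eq. 8 marginals, mutual information from marginals, the Eq. 9 update of $\alpha$, then Eq. 7 soft labels.

```python
import math
import random

EPS = 1e-12  # assumed floor that keeps logarithms finite


def corex(samples, m, k, card, iters=100, lam=0.3, gamma=10.0, seed=0):
    """Minimal sketch of Algorithm 1 for discrete data.

    samples: list of n_s tuples, each holding n values in range(card)
    Returns (alpha, labels) with alpha[i][j] and labels[j][l][y] = p(y_j = y | x^(l)).
    """
    rng = random.Random(seed)
    ns, n = len(samples), len(samples[0])
    # Randomly initialize alpha_{i,j} ~ U(1/2, 1) and the soft labels p(y_j | x^(l)).
    alpha = [[rng.uniform(0.5, 1.0) for _ in range(m)] for _ in range(n)]
    labels = []
    for _ in range(m):
        rows = []
        for _ in range(ns):
            w = [rng.random() + EPS for _ in range(k)]
            total = sum(w)
            rows.append([v / total for v in w])
        labels.append(rows)
    # Empirical marginals p(x_i = v).
    px = [[sum(1 for x in samples if x[i] == v) / ns for v in range(card)] for i in range(n)]

    for _ in range(iters):  # a fixed iteration count stands in for "until convergence"
        mi = [[0.0] * m for _ in range(n)]
        params = []
        for j in range(m):
            q = labels[j]
            # Eq. 8: p(y_j) and p(y_j | x_i) under the empirical distribution.
            p_y = [max(sum(r[y] for r in q) / ns, EPS) for y in range(k)]
            cond = [[[EPS] * k for _ in range(card)] for _ in range(n)]
            for x, r in zip(samples, q):
                for i, v in enumerate(x):
                    for y in range(k):
                        cond[i][v][y] += r[y] / (ns * px[i][v])
            # I(X_i : Y_j) in nats from the marginals (approximate, given the EPS floor).
            for i in range(n):
                mi[i][j] = sum(px[i][v] * cond[i][v][y] * math.log(cond[i][v][y] / p_y[y])
                               for v in range(card) if px[i][v] > 0 for y in range(k))
            params.append((p_y, cond))
        # Eq. 9: move alpha a step of size lam toward the soft-max solution.
        for i in range(n):
            best = max(mi[i])
            for j in range(m):
                alpha[i][j] = (1 - lam) * alpha[i][j] + lam * math.exp(gamma * (mi[i][j] - best))
        # Eq. 7: recompute p(y_j | x^(l)) for every sample, in log space.
        for j, (p_y, cond) in enumerate(params):
            for l, x in enumerate(samples):
                logits = [math.log(p_y[y]) + sum(alpha[i][j] * math.log(cond[i][v][y] / p_y[y])
                                                 for i, v in enumerate(x))
                          for y in range(k)]
                top = max(logits)
                w = [math.exp(s - top) for s in logits]
                z = sum(w)
                labels[j][l] = [w_y / z for w_y in w]
    return alpha, labels
```

On data made of three identical copies of one random bit, running this with `m=1, k=2` drives the soft labels toward a near-deterministic split that separates samples with the bit at 0 from those with the bit at 1. With `m > 1` the result depends on the random initialization, in line with the text's caveat that only local optima are guaranteed. The per-sample loops are written for readability; as noted in Sec. 6, the same work can be expressed as a matrix multiplication followed by an element-wise non-linearity.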
Representations with different numbers of layers, different $m$, and different $k$ can be compared according to how tight a lower bound they provide on $TC(X)$ [8].

4 Experiments

4.1 Synthetic data

[Figure 1 panels: (left) accuracy (ARI, from 0 to 1) versus the number of observed variables, $n$ (from $2^4$ to $2^{11}$), for CorEx, Spectral*, K-means, ICA, NMF*, N.Net:RBM*, PCA, Spectral Bi*, Isomap*, LLE*, and Hierarch.*; (right) the synthetic model, a tree with root $Z$ (layer 2), latent variables $Y_1, \ldots, Y_b$ (layer 1), and observed leaves $X_1, \ldots, X_n$ (layer 0).]
Figure 1: (Left) We compare methods to recover the clusters of variables generated according to the model. (Right) Synthetic data is generated according to a tree of latent variables.

To test CorEx's ability to recover latent structure from data, we begin by generating synthetic data according to the latent tree model depicted in Fig. 1, in which all the variables are hidden except for the leaf nodes. The most difficult part of reconstructing this tree is clustering the leaf nodes. If a clustering method can do that, then the latent variables can be reconstructed for each cluster easily using EM. We consider many different clustering methods, typically with several variations of each technique, details of which are described in Sec. C. We use the adjusted Rand index (ARI) to measure the accuracy with which inferred clusters recover the ground truth.³ We generated samples from the model in Fig. 1 with $b = 8$ and varied $c$, the number of leaves per branch. The $X_i$'s depend on the $Y_j$'s through a binary erasure channel (BEC) with erasure probability $\delta$. The capacity of the BEC is $1 - \delta$, so we let $\delta = 1 - 2/c$ to reflect the intuition that the signal from each parent node is weakly distributed across all its children (but cannot be inferred from a single child). We generated $\max(200, 2n)$ samples.
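The generative process can be sketched as follows. This is an illustrative sketch, not the authors' data-generation code: the function names are hypothetical, and so is the encoding of an erased leaf as the symbol `2` (the text does not say how erasures are represented). The root-to-$Y_j$ link, a binary symmetric channel with flip probability $1/3$, is taken from the paragraph that follows.

```python
import random

ERASED = 2  # hypothetical symbol for an erased leaf; 0 and 1 are transmitted bits


def sample_tree(b, c, rng, flip=1 / 3):
    """One draw from the latent tree: Z -> Y_1..Y_b (BSC) -> c leaves per Y_j (BEC)."""
    if c < 2:
        raise ValueError("delta = 1 - 2/c is only a valid probability for c >= 2")
    delta = 1 - 2 / c  # erasure probability; BEC capacity 1 - delta = 2/c per leaf
    z = rng.randint(0, 1)
    ys = [z ^ int(rng.random() < flip) for _ in range(b)]
    xs = [ERASED if rng.random() < delta else y for y in ys for _ in range(c)]
    return z, ys, xs


def synthetic_dataset(b, c, seed=0):
    """Returns max(200, 2n) samples of the n = b*c leaves and each leaf's true cluster."""
    rng = random.Random(seed)
    n = b * c
    samples = [sample_tree(b, c, rng)[2] for _ in range(max(200, 2 * n))]
    truth = [j for j in range(b) for _ in range(c)]
    return samples, truth
```

With $b = 8$, increasing $c$ both raises $n = bc$ and raises the erasure probability, so each leaf reveals less about its parent while the total capacity per branch, $c(1 - \delta) = 2$ bits, stays fixed. The `truth` list is the clustering that methods are scored against.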
In this example, all the $Y_j$'s are weakly correlated with the root node, $Z$, through a binary symmetric channel with flip probability of $1/3$. Fig. 1 shows that for a small to medium number of variables, all the techniques recover the structure fairly well, but as the dimensionality increases only CorEx continues to do so. ICA and hierarchical clustering compete for second place. CorEx also perfectly recovers the values of the latent factors in this example. For latent tree models, recovery of the latent factors gives a global optimum of the objective in Eq. 4. Even though CorEx is only guaranteed to find local optima, in this example it correctly converges to the global optimum over a range of problem sizes.

Note that a growing literature on latent tree learning attempts to reconstruct latent trees with theoretical guarantees [9, 10]. In principle, we should compare to these techniques, but they scale between $O(n^2)$ and $O(n^5)$ (see [31], Table 1), while our method is $O(n)$. In a recent survey on latent tree learning methods, only one out of 15 techniques was able to run on the largest dataset considered (see [31], Table 3), while most of the datasets in this paper are orders of magnitude larger than that one.

[Figure 2 panels: the connectivity matrix $\alpha_{i,j}$ and the mutual information $I(Y_j : X_i)$ between hidden units $j = 1, \ldots, m$ and inputs $i = 1, \ldots, n$ (some of them uncorrelated variables), shown at $t = 0$, $t = 10$, and $t = 50$.]
Figure 2: (Color online) A visualization of structure learning in CorEx; see text for details.

Fig. 2 visualizes the structure learning process.⁴ This example is similar to that above but includes some uncorrelated random variables to show how they are treated by CorEx. We set $b = 5$ clusters of variables, but we used $m = 10$ hidden variables. At each iteration, $t$, we show which hidden variables, $Y_j$, are connected to input variables, $X_i$, through the connectivity matrix, $\alpha$ (shown on top). The mutual information is shown on the bottom.
At the beginning, we started with full connectivity, but with nothing learned we have $I(Y_j : X_i) = 0$. Over time, the hidden units "compete" to find a group of $X_i$'s for which they can explain all the correlations. After only ten iterations the overall structure appears, and by 50 iterations it is exactly described. At the end, the uncorrelated random variables ($X_i$'s) and the hidden variables ($Y_j$'s) which have not explained any correlations can be easily distinguished and discarded (visually and mathematically, see Sec. B).

4.2 Discovering Structure in Diverse Real-World Datasets

4.2.1 Personality Surveys and the "Big Five" Personality Traits

One psychological theory suggests that there are five traits that largely reflect the differences in personality types [11]: extraversion, neuroticism, agreeableness, conscientiousness, and openness to experience. Psychologists have designed various instruments intended to measure whether individuals exhibit these traits. We consider a survey in which subjects rate fifty statements, such as "I am the life of the party", on a five-point scale: (1) disagree, (2) slightly disagree, (3) neutral, (4) slightly agree, and (5) agree.⁵ The data consist of answers to these questions from about ten thousand test-takers. The test was designed with the intention that each question should belong to a cluster according to which personality trait the question gauges. Is it true that there are five factors that strongly predict the answers to these questions?

³ Rand index counts the percentage of pairs whose relative classification matches in both clusterings. ARI adds a correction so that a random clustering will give a score of zero, while an ARI of 1 corresponds to a perfect match.
⁴ A video is available online at http://isi.edu/~gregv/corex_structure.mpg.
⁵ Data and full list of questions are available at http://personality-testing.info/_rawdata/.
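Footnote 3 describes the adjusted Rand index, the score used to compare inferred clusters with ground truth in Sec. 4.1 and with demographic labels in Sec. 4.2.2. A minimal pair-counting sketch follows; the function name is hypothetical, and returning 1.0 in the degenerate case where the expected and maximal indices coincide is an assumed convention.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """ARI of two clusterings of the same (at least two) items, via pair counting."""
    n = len(labels_true)
    if n != len(labels_pred):
        raise ValueError("clusterings must label the same items")
    # Pairs placed together in both clusterings, then in each clustering separately.
    sum_ij = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_a * sum_b / comb(n, 2)  # expected index under random relabeling
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case (e.g. both clusterings trivial)
        return 1.0
    return (sum_ij - expected) / (max_index - expected)
```

The score ignores cluster names, so `adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])` is 1.0, while `[0, 0, 1, 2]` against `[0, 0, 1, 1]` gives $4/7 \approx 0.57$. Unlike the raw Rand index, it can be negative when agreement is worse than chance.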
CorEx learned a two-level hierarchical representation when applied to this data (full model shown in Fig. C.2). On the first level, CorEx automatically determined that the questions should cluster into five groups. Surprisingly, the five clusters exactly correspond to the big five personality traits as labeled by the test designers. It is unusual to recover the ground truth with perfect accuracy on an unsupervised learning problem, so we tried a number of other standard clustering methods to see if they could reproduce this result. We display the results using confusion matrices in Fig. 3. The details of the techniques used are described in Sec. C, but all of them had an advantage over CorEx since they required that we specify the correct number of clusters. None of the other techniques are able to recover the five personality types exactly.

Interestingly, Independent Component Analysis (ICA) [12] is the only other method that comes close. The intuition behind ICA is that it finds a linear transformation on the input that minimizes the multi-information among the outputs ($Y_j$). In contrast, CorEx searches for $Y_j$'s so that the multi-information among the $X_i$'s is minimized after conditioning on $Y$. ICA assumes that the signals that give rise to the data are independent, while CorEx does not. In this case, personality traits like "extraversion" and "agreeableness" are correlated, violating the independence assumption.

[Figure 3 panels: (left) confusion matrix with axes Predicted and True; (right) the DNA hierarchy, whose latent nodes are labeled with the demographic variable they match and its ARI, e.g. Oceania (ARI: 1.00), America (ARI: 0.99), Subsah. Africa (ARI: 0.98), gender (ARI: 0.95), EurAsia (ARI: 0.87).]
Figure 3: (Left) Confusion matrix comparing predicted clusters to true clusters for the questions on the Big-5 personality test.
(Right) Hierarchical model constructed from samples of DNA by CorEx.

4.2.2 DNA from the Human Genome Diversity Project

Next, we consider DNA data taken from 952 individuals of diverse geographic and ethnic backgrounds [13]. The data consist of 4170 variables describing different SNPs (single nucleotide polymorphisms).⁶ We use CorEx to learn a hierarchical representation, which is depicted in Fig. 3. To evaluate the quality of the representation, we use the adjusted Rand index (ARI) to compare clusters induced by each latent variable in the hierarchical representation to different demographic variables in the data. Latent variables which substantially match demographic variables are labeled in Fig. 3. The representation learned (unsupervised) on the first layer contains a perfect match for Oceania (the Pacific Islands) and nearly perfect matches for America (Native Americans), Subsaharan Africa, and gender. The second layer has three variables which correspond very closely to broad geographic regions: Subsaharan Africa, the "East" (including China, Japan, Oceania, America), and EurAsia.

⁶ Data, descriptions of SNPs, and detailed demographics of subjects are available at ftp://ftp.cephb.fr/hgdp_v3/.

4.2.3 Text from the Twenty Newsgroups Dataset

The twenty newsgroups dataset consists of documents taken from twenty different topical message boards with about a thousand posts each [14]. For analyzing unstructured text, typical feature engineering approaches heuristically separate signals like style, sentiment, or topics. In principle, all three of these signals manifest themselves in terms of subtle correlations in word usage. Recent attempts at learning large-scale unsupervised hierarchical representations of text have produced interesting results [15], though validation is difficult because quantitative measures of representation quality often do not correlate well with human judgment [16].
To focus on linguistic signals, we removed meta-data like headers, footers, and replies even though these give strong signals for supervised newsgroup classification. We considered the top ten thousand most frequent tokens and constructed a bag of words representation. Then we used CorEx to learn a five-level representation of the data with 326 latent variables in the first layer. Details are described in Sec. C.1. Portions of the first three levels of the tree, keeping only nodes with the highest normalized mutual information with their parents, are shown in Fig. 4 and in Fig. C.1.⁷

[Figure 4 (right), newsgroup names with abbreviations and broad groupings: alt.atheism (aa, rel); comp.graphics (cg, comp); comp.os.ms-windows.misc (cms, comp); comp.sys.ibm.pc.hardware (cpc, comp); comp.sys.mac.hardware (cmac, comp); comp.windows.x (cwx, comp); misc.forsale (mf, misc); rec.autos (ra, vehic); rec.motorcycles (rm, vehic); rec.sport.baseball (rsb, sport); rec.sport.hockey (rsh, sport); sci.crypt (sc, sci); sci.electronics (se, sci); sci.med (sm, sci); sci.space (ss, sci); soc.religion.christian (src, rel); talk.politics.guns (tpg, talk); talk.politics.mideast (tmid, talk); talk.politics.misc (tmisc, talk); talk.religion.misc (trm, rel).]
Figure 4: Portions of the hierarchical representation learned for the twenty newsgroups dataset. We label latent variables that overlap significantly with known structure. Newsgroup names, abbreviations, and broad groupings are shown on the right.

To provide a more quantitative benchmark of the results, we again test to what extent learned representations are related to known structure in the data. Each post can be labeled by the newsgroup it belongs to, according to broad categories (e.g. groups that include "comp"), or by author. Most learned binary variables were active in around 1% of the posts, so we report the fraction of activations that coincide with a known label (precision) in Fig. 4. Most variables clearly represent sub-topics of the newsgroup topics, so we do not expect high recall. The small portion of the tree shown in Fig. 4 reflects intuitive relationships that contain hierarchies of related sub-topics as well as clusters of function words (e.g. pronouns like "he/his/him" or tense with "have/be").

Once again, several learned variables perfectly captured known structure in the data. Some users sent images in text using an encoded format. One feature matched all the image posts (with perfect precision and recall) due to the correlated presence of unusual short tokens. There were also perfect matches for three frequent authors: G. Banks, D. Medin, and B. Beauchaine. Note that the learned variables did not trigger if just their names appeared in the text, but only for posts they authored. These authors had elaborate signatures with long, identifiable quotes that evaded preprocessing but created a strongly correlated signal. Another variable with perfect precision for the "forsale" newsgroup labeled comic book sales (but did not activate for discussion of comics in other newsgroups). Other nearly perfect predictors described extensive discussions of Armenia/Turkey in talk.politics.mideast (a fifth of all discussion in that group), specialized unix jargon, and a match for sci.crypt which had 90% precision and 55% recall. When we ranked all the latent factors according to a normalized version of Eq. 2, these examples all showed up in the top 20.

⁷ An interactive tool for exploring the full hierarchy is available at http://bit.ly/corexvis.

5 Connections and Related Work

While the basic measures used in Eq. 1 and Eq. 2 have appeared in several contexts [7, 17, 4, 3, 18], the interpretation of these quantities is an active area of research [19, 20]. The optimizations we define have some interesting but less obvious connections. For instance, the optimization in Eq. 3 is similar to one recently introduced as a measure of "common information" [21]. The objective in Eq. 6 (for a single $Y_j$) appears exactly as a bound on "ancestral" information [22].
For instance, if all the $\alpha_i = 1/\beta$, then Steudel and Ay [22] show that the objective is positive only if at least $1 + \beta$ variables share a common ancestor in any DAG describing them. This provides extra rationale for relaxing our original optimization to include non-binary values of $\alpha_{i,j}$.

The most similar learning approach to the one presented here is the information bottleneck [23] and its extension, the multivariate information bottleneck [24, 25]. The motivation behind the information bottleneck is to compress the data ($X$) into a smaller representation ($Y$) so that information about some relevance term (typically labels in a supervised learning setting) is maintained. The second term in Eq. 6 is analogous to the compression term. Instead of maximizing a relevance term, we are maximizing information about all the individual sub-systems of $X$, the $X_i$. The most redundant information in the data is preferentially stored while uncorrelated random variables are completely ignored.

The broad problem of transforming complex data into simpler, more meaningful forms goes under the rubric of representation learning [26], which shares many goals with dimensionality reduction and subspace clustering. Insofar as our approach learns a hierarchy of representations, it superficially resembles "deep" approaches like neural nets and autoencoders [27, 28, 29, 30]. While those approaches are scalable, a common critique is that they involve many heuristics discovered through trial-and-error that are difficult to justify. On the other hand, a rich literature on learning latent tree models [31, 32, 9, 10] has excellent theoretical properties but does not scale well. By basing our method on an information-theoretic optimization that can nevertheless be performed quite efficiently, we hope to preserve the best of both worlds.
6 Conclusion

The most challenging open problems today involve high-dimensional data from diverse sources including human behavior, language, and biology.⁸ The complexity of the underlying systems makes modeling difficult. We have demonstrated a model-free approach to learn successively more coarse-grained representations of complex data by efficiently optimizing an information-theoretic objective. The principle of explaining as much correlation in the data as possible provides an intuitive and fully data-driven way to discover previously inaccessible structure in high-dimensional systems.

It may seem surprising that CorEx should perfectly recover structure in diverse domains without using labeled data or prior knowledge. On the other hand, the patterns discovered are "low-hanging fruit" from the right point of view. Intelligent systems should be able to learn robust and general patterns in the face of rich inputs even in the absence of labels to define what is important. Information that is very redundant in high-dimensional data provides a good starting point.

Several fruitful directions stand out. First, the promising preliminary results invite in-depth investigations on these and related problems. From a computational point of view, the main work of the algorithm involves a matrix multiplication followed by an element-wise non-linear transform. The same is true for neural networks, and they have been scaled to very large data using, e.g., GPUs. On the theoretical side, generalizing this approach to allow non-tree representations appears both feasible and desirable [8].

⁸ In principle, computer vision should be added to this list. However, the success of unsupervised feature learning with neural nets for vision appears to rely on encoding generic priors about vision through heuristics like convolutional coding and max pooling [33]. Since CorEx is a knowledge-free method, it will perform relatively poorly unless we find a way to also encode these assumptions.

Acknowledgments

We thank Virgil Griffith, Shuyang Gao, Hsuan-Yi Chu, Shirley Pepke, Bilal Shaw, Jose-Luis Ambite, and Nathan Hodas for helpful conversations. This research was supported in part by AFOSR grant FA9550-12-1-0417 and DARPA grant W911NF-12-1-0034.

References

[1] C. Szegedy, W. Zaremba, I. Sutskever, J. Bruna, D. Erhan, I. Goodfellow, and R. Fergus. Intriguing properties of neural networks. In ICLR, 2014.
[2] Thomas M. Cover and Joy A. Thomas. Elements of Information Theory. Wiley-Interscience, 2006.
[3] Satosi Watanabe. Information theoretical analysis of multivariate correlation. IBM Journal of Research and Development, 4(1):66–82, 1960.
[4] M. Studený and J. Vejnarova. The multiinformation function as a tool for measuring stochastic dependence. In Learning in Graphical Models, pages 261–297. Springer, 1998.
[5] Alexander Kraskov, Harald Stögbauer, Ralph G. Andrzejak, and Peter Grassberger. Hierarchical clustering using mutual information. EPL (Europhysics Letters), 70(2):278, 2005.
[6] J. Pearl. Causality: Models, Reasoning and Inference. Cambridge University Press, NY, NY, USA, 2009.
[7] Elad Schneidman, William Bialek, and Michael J. Berry. Synergy, redundancy, and independence in population codes. The Journal of Neuroscience, 23(37):11539–11553, 2003.
[8] Greg Ver Steeg and Aram Galstyan. Maximally informative hierarchical representations of high-dimensional data. 2014.
[9] Animashree Anandkumar, Kamalika Chaudhuri, Daniel Hsu, Sham M. Kakade, Le Song, and Tong Zhang. Spectral methods for learning multivariate latent tree structure. In NIPS, pages 2025–2033, 2011.
[10] Myung Jin Choi, Vincent Y. F. Tan, Animashree Anandkumar, and Alan S. Willsky. Learning latent tree graphical models. The Journal of Machine Learning Research, 12:1771–1812, 2011.
[11] Lewis R. Goldberg. The development of markers for the big-five factor structure. Psychological Assessment, 4(1):26, 1992.
[12] Aapo Hyvärinen and Erkki Oja. Independent component analysis: algorithms and applications. Neural Networks, 13(4):411–430, 2000.
[13] N. A. Rosenberg, J. K. Pritchard, J. L. Weber, H. M. Cann, K. K. Kidd, L. A. Zhivotovsky, and M. W. Feldman. Genetic structure of human populations. Science, 298(5602):2381–2385, 2002.
[14] K. Bache and M. Lichman. UCI machine learning repository, 2013.
[15] Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. Efficient estimation of word representations in vector space. 2013.
[16] Jonathan Chang, Jordan L. Boyd-Graber, Sean Gerrish, Chong Wang, and David M. Blei. Reading tea leaves: How humans interpret topic models. In NIPS, volume 22, pages 288–296, 2009.
[17] Elad Schneidman, Susanne Still, Michael J. Berry, William Bialek, et al. Network information and connected correlations. Physical Review Letters, 91(23):238701, 2003.
[18] Nihat Ay, Eckehard Olbrich, Nils Bertschinger, and Jürgen Jost. A unifying framework for complexity measures of finite systems. Proceedings of European Complex Systems Society, 2006.
[19] P. L. Williams and R. D. Beer. Nonnegative decomposition of multivariate information. 2010.
[20] Virgil Griffith and Christof Koch. Quantifying synergistic mutual information. 2012.
[21] Gowtham Ramani Kumar, Cheuk Ting Li, and Abbas El Gamal. Exact common information. arXiv:1402.0062, 2014.
[22] B. Steudel and N. Ay. Information-theoretic inference of common ancestors. 2010.
[23] Naftali Tishby, Fernando C. Pereira, and William Bialek. The information bottleneck method. arXiv:physics/0004057, 2000.
[24] Noam Slonim, Nir Friedman, and Naftali Tishby. Multivariate information bottleneck. Neural Computation, 18(8):1739–1789, 2006.
[25] Noam Slonim. The information bottleneck: Theory and applications. PhD thesis, Citeseer, 2002.
[26] Yoshua Bengio, Aaron Courville, and Pascal Vincent. Representation learning: A review and new perspectives. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(8):1798–1828, 2013.
[27] Geoffrey E. Hinton and Ruslan R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, 313(5786):504–507, 2006.
[28] Yann LeCun, Léon Bottou, Yoshua Bengio, and Patrick Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.
[29] Yann LeCun and Yoshua Bengio. Convolutional networks for images, speech, and time series. The Handbook of Brain Theory and Neural Networks, 3361, 1995.
[30] Yoshua Bengio, Pascal Lamblin, Dan Popovici, and Hugo Larochelle. Greedy layer-wise training of deep networks. Advances in Neural Information Processing Systems, 19:153, 2007.
[31] Raphaël Mourad, Christine Sinoquet, Nevin L. Zhang, Tengfei Liu, Philippe Leray, et al. A survey on latent tree models and applications. J. Artif. Intell. Res. (JAIR), 47:157–203, 2013.
[32] Ryan Prescott Adams, Hanna M. Wallach, and Zoubin Ghahramani. Learning the structure of deep sparse graphical models. 2009.
[33] H. Lee, R. Grosse, R. Ranganath, and A. Ng. Convolutional deep belief networks for scalable unsupervised learning of hierarchical representations. In ICML, 2009.
[34] F. Pedregosa, G. Varoquaux, A. Gramfort, V. Michel, B. Thirion, O. Grisel, M. Blondel, P. Prettenhofer, R. Weiss, V. Dubourg, J. Vanderplas, A. Passos, D. Cournapeau, M. Brucher, M. Perrot, and E. Duchesnay. Scikit-learn: Machine learning in Python. Journal of Machine Learning Research, 12:2825–2830, 2011.

Supplementary Material for "Discovering Structure in High-Dimensional Data Through Correlation Explanation"

A Derivation of Eqs. 7 and 8

We want to optimize the following objective.

$$\max_{\alpha,\, p(y_j|x)} \sum_{j=1}^{m} \sum_{i=1}^{n} \alpha_{i,j} I(Y_j : X_i) - \sum_{j=1}^{m} I(Y_j : X) \quad \text{s.t.}$$
X y j p ( y j | x ) = 1 (10) In principle, we would also like ∀ i, j, α i,j ∈ { 0 , 1 } , P ¯ j α i, ¯ j = 1 , but we begin by solving the optimization for fixed α . W e proceed using Lagrangian optimization. W e introduce a Lagrange multiplier λ j ( x ) for each value of x and each j to enforce the normalization constraint and then reduce the constrained opti- mization problem to the unconstrained optimization of the objectiv e L tot = P j L j . W e show the solution for a single L j , but drop the j index to av oid clutter . (F or fixed α , the optimization for different j totally decouple.) L = X x,y p ( x ) p ( y | x ) X i α i (log p ( y | x i ) − log( p ( y ))) − (log p ( y | x ) − log ( p ( y ))) ! + X x λ ( x )( X y p ( y | x ) − 1) Note that we are optimizing o ver p ( y | x ) and so the marginals p ( y | x i ) , p ( y ) are actually linear func- tions of p ( y | x ) . Next we take the functional deri vati v es with respect to p ( y | x ) and set them equal to 0. Note that this can be done symbolically and proceeds in similar fashion to the detailed calculations of information bottleneck [25]. This leads to the following condition. p ( y j | x ) = 1 Z ( x ) p ( y j ) n Y i =1 p ( y j | x i ) p ( y j ) α i,j But this is only a formal solution since the marginals themselv es are defined in terms of p ( y | x ) . p ( y ) = X x p ( x ) p ( y | x ) , p ( y | x i ) = X x j 6 = i p ( y | x ) p ( x ) /p ( x i ) The partition constant, Z ( x ) can be easily calculated by summing ov er just | Y j | terms. Imagine we are giv en l = 1 , . . . , N samples, x ( l ) , drawn from unknown distribution p ( x ) . If x is very high dimensional, we do not want to enumerate over all possible v alues of x . Instead, we consider the quantity in Eq. 7 and Eq. 8 only for observed samples. p ( y | x ( l ) ) = 1 Z ( x ( l ) ) p ( y ) n Y i =1 p ( y | x ( l ) i ) p ( y ) ! α i,j In log-space, this has an ev en simpler form. 
$$ \log p(y|x^{(l)}) = \Big(1 - \sum_i \alpha_i\Big) \log p(y) + \sum_{i=1}^{n} \alpha_i \log p(y|x_i^{(l)}) - \log Z(x^{(l)}) $$

That is, up to the normalization term, the log-probability of each label value $y$ for a sample $x^{(l)}$ is a linear combination of weighted terms, one for each $x_i$. We recover $p(y|x)$ by a nonlinear transformation consisting of exponentiation and normalization.

The consistency requirements, which are sums over the state space of $x$, can be replaced with sample expectations:

$$ p(y) = \sum_x p(x)\, p(y|x) \approx \frac{1}{N} \sum_{l=1}^{N} p(y|x^{(l)}), $$

with similar estimates for the marginals $p(y|x_i)$. In practice, to limit the cost in terms of the number of samples, we can choose a random subset of samples at each iteration and estimate the probabilistic labels and marginals only for them. The details of the optimization over $\alpha$ are described in the next section.

Special case for Eq. 3. The optimization in Eq. 3 corresponds to a single latent factor ($m = 1$) with $\alpha_i = 1$ for all $i$.

Convergence. The updates of the iterative procedure described here are guaranteed not to decrease the objective at any step and to converge to a local optimum. Theoretical details are described elsewhere [8].

B Implementation Details for CorEx
As pointed out in Sec. 5, the objective in Eq. 6 (for a single $Y_j$) appears exactly as a bound on "ancestral" information [22]. We use this fact to motivate our choice of parameters in Eq. 9. Consider the soft-max function we use to define $\alpha^*$:

$$ \alpha^*_{i,j} = \exp\Big( \gamma \big( I(X_i : Y_j) - \max_{\bar{j}} I(X_i : Y_{\bar{j}}) \big) \Big) $$

First, we allow $\gamma_{i,j}$ to take different values for different $i, j$. We start by enforcing the form $\gamma_{i,j} = C_j / H(X_i)$, so that the exponent depends on normalized mutual information (NMI) rather than on raw mutual information. Because $0 \le I(X_i : Y_j) \le H(X_i)$, each NMI lies in $[0, 1]$, so the difference of NMIs is at least $-1$ and the minimum value $\alpha^*_{i,j}$ can take is $\exp(-C_j)$. We set $C_j = 1$. If the difference of NMIs takes its minimum value of $-1$, we get $\alpha^*_{i,j} = e^{-1} \approx 0.37$, i.e., roughly $1/3$.
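The log-space label equation and the soft-max $\alpha^*$ above can be sketched in a few lines of NumPy. This is a minimal illustration under our own assumptions, not the authors' implementation: the data are discrete with values $0, \ldots, K-1$; a single latent factor $Y$ with $k$ states is fit with all $\alpha_i = 1$ (the special case of Eq. 3 noted above); $p(y|x_i)$ is estimated by averaging the current labels over samples that share the value of $x_i$; and the helper names (`soft_max_alpha`, `update_labels`, `update_marginals`, `fit_single_factor`) and the small floor `EPS` are hypothetical. The `einsum` is the weighted sum that the Conclusion describes as a matrix multiplication; exponentiation and normalization supply the element-wise non-linearity.

```python
import numpy as np

EPS = 1e-12  # hypothetical floor that keeps logarithms finite (not from the paper)


def soft_max_alpha(mi, h_x, c=1.0):
    """alpha*_{i,j} = exp(gamma_{i,j} * (I(X_i:Y_j) - max_jbar I(X_i:Y_jbar))).

    mi:  (n, m) array with mi[i, j] = I(X_i : Y_j), in nats
    h_x: (n,) array of entropies H(X_i), in nats
    c:   the constant C_j, shared by all j in this sketch
    """
    gamma = c / h_x[:, None]                 # gamma_{i,j} = C_j / H(X_i)
    best = mi.max(axis=1, keepdims=True)     # max over jbar for each X_i
    return np.exp(gamma * (mi - best))       # 1 for the best-matching Y_j, >= exp(-c) otherwise


def update_labels(x, p_y, p_y_given_xi, alpha):
    """Evaluate the log-space label equation for one latent factor Y.

    x:            (N, n) ints, x[l, i] in {0, ..., K-1}
    p_y:          (k,) marginal p(y)
    p_y_given_xi: (n, K, k) marginals p(y | x_i)
    alpha:        (n,) weights alpha_i
    Returns the (N, k) array of labels p(y | x^(l)).
    """
    n = x.shape[1]
    log_cond = np.log(p_y_given_xi[np.arange(n), x] + EPS)  # log p(y | x_i^(l)), shape (N, n, k)
    # (1 - sum_i alpha_i) log p(y) + sum_i alpha_i log p(y | x_i^(l))
    log_unnorm = (1.0 - alpha.sum()) * np.log(p_y + EPS) + np.einsum("i,lik->lk", alpha, log_cond)
    log_z = np.logaddexp.reduce(log_unnorm, axis=1, keepdims=True)  # log Z(x^(l))
    return np.exp(log_unnorm - log_z)                                # exponentiate and normalize


def update_marginals(x, labels, n_states):
    """Sample estimates of p(y) and p(y | x_i) from the current labels."""
    n = x.shape[1]
    k = labels.shape[1]
    p_y = labels.mean(axis=0)                                # (1/N) sum_l p(y | x^(l))
    p_y_given_xi = np.zeros((n, n_states, k))
    for i in range(n):
        counts = np.bincount(x[:, i], minlength=n_states)    # number of samples with x_i = v
        np.add.at(p_y_given_xi[i], x[:, i], labels)          # sum of labels over those samples
        p_y_given_xi[i] /= np.maximum(counts, 1)[:, None]    # average (unseen values stay 0)
    return p_y, p_y_given_xi


def fit_single_factor(x, n_states, k=2, n_iter=50, seed=0):
    """Alternate the two updates with alpha_i = 1 for all i (the special case above)."""
    rng = np.random.default_rng(seed)
    labels = rng.dirichlet(np.ones(k), size=x.shape[0])     # random initial labels
    alpha = np.ones(x.shape[1])
    for _ in range(n_iter):
        p_y, p_y_given_xi = update_marginals(x, labels, n_states)
        labels = update_labels(x, p_y, p_y_given_xi, alpha)
    return labels
```

For example, `fit_single_factor(x, n_states=2)` on a binary data matrix returns an `(N, 2)` array of probabilistic labels; `labels.argmax(axis=1)` gives the most likely label per sample.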
According to the Steudel and Ay bound [22], $X_i$ can still make a non-negative contribution to the part of the objective in Eq. 6 that involves $Y_j$, as long as $X_i$ shares a common ancestor with at least $1/\alpha + 1$ other variables. At the beginning of learning this is desirable, as it allows every $Y_j$ to learn significant structure even when starting from small values of $\alpha_{i,j}$. However, as the computation progresses, we would like to force the soft-max function closer to the true hard-max solution. To that end, we set $\gamma_{i,j} = (1 + D_j)/H(X_i)$, where $D_j = 500 \cdot |\mathbb{E}_X(-\log Z_j(x))|$. The $D_j$ term represents the amount of correlation learned by $Y_j$ [8]. For instance, if $p(y_j|x_i) = p(y_j)$ for all $i$, then $Z_j(x) = 1$, so $\log Z_j(x) = 0$ and $Y_j$ has not learned anything. As $Y_j$ learns more structure, we therefore transition smoothly to a hard-max constraint.

In all the experiments shown here, we set $|Y_j| = k = 2$. We declare convergence of Algorithm 1 when the magnitude of the change in $\mathbb{E}_X(-\sum_j \log Z_j(x))$ consistently falls below $10^{-5}$, or after 1000 iterations, whichever occurs first. We set $\lambda = 0.3$ based on several tests with synthetic data.

We construct higher-order representations from the bottom up. After applying Algorithm 1, we take the most likely value of $Y_j$ for each sample in the dataset. Then we apply CorEx again, using these labels as the input. In principle, this sample of $Y$'s does not accurately reflect $p(y) = \sum_x p(y|x)\, p(x)$, and a more nuanced approximation like contrastive divergence could be used. In practice, however, CorEx typically seems to learn nearly deterministic functions of $x$, so the maximum-likelihood labels reflect the true distribution well.

In Fig. 2, we suggested that uncorrelated random variables could be easily detected.
In practice, we declared $X_i$ uncorrelated if $I(X_i : Y_{\mathrm{parent}(X_i)}) / \min(H(X_i), H(Y)) < 0.05$. At higher layers of representation, this helps us identify root nodes. For the DNA example in Fig. 3, "gender" was a root node, but for visual simplicity all root nodes were connected at the top level. Following similar reasoning, we can also check which $Y_j$'s have learned significant structure by looking at the value of $\mathbb{E}_X(-\log Z_j(x))$.

C Implementation Details for Comparisons
We represented the data from the binary erasure channel either as integers (0 for $X_i = 0$, 1 for $X_i = e$, 2 for $X_i = 1$) for methods that deal with categorical data, or as floating-point numbers on the unit interval (0 for $X_i = 0$, 0.5 for $X_i = e$, 1 for $X_i = 1$) for methods that require data of that form. In principle, we could also have treated "erased" information as missing, but we treated erasure as another outcome in all cases, including for CorEx. CorEx naturally handles missing information (Eq. 7 can easily be marginalized to find labels even if some variables are missing). We had to use this fact for the DNA dataset, which did have some SNPs missing for some samples. In fact, because CorEx is, in a sense, looking for the most redundant information, it is quite robust to missing information.

We now briefly describe the settings for the various learning algorithms. For comparisons, we used the implementations of standard learning techniques in the scikit-learn library [34] (v. 0.14). We used only the standard, default implementations of k-means, PCA, ICA, and "hierarchical clustering" with the Ward method. For spectral clustering, we used either a Gaussian kernel for the affinity matrix or a nearest-neighbors affinity matrix with 3 or 10 neighbors. For spectral bi-clustering, we tried clustering either the data matrix or its transpose.
We set the number of clusters to $m$ in the direction of the variables and to either 10 or 32 in the direction of the samples. Note that the true number of clusters in the sample space was $2^8$. For NMF, we tried projected-gradient NMF and NMF with each of the two implemented types of sparseness constraints. For the restricted Boltzmann machine, we used a single-layer network with $m$ units and learning rates of 0.01, 0.05, and 0.1. To cluster the input variables, we assigned each variable to the hidden unit to which it had the maximum-magnitude weight. For dimensionality-reduction techniques like LLE and Isomap, we used either 3 or 10 nearest neighbors and looked for an $m$-component representation. We then clustered the variables by checking which variables contributed most to each component of the representation.

C.1 Twenty newsgroups
For the twenty newsgroups dataset, scikit-learn has a built-in function for retrieving and processing the data. We used the command below, which yields a dataset with 18,846 posts. (Several different versions of this dataset are in circulation.)

sklearn.datasets.fetch_20newsgroups(subset='all', remove=('headers', 'footers', 'quotes'))

Because we are doing unsupervised learning, we combined the parts of the data normally split into training and test sets. The attempt to strip footers turned out to be particularly relevant. The heuristic for doing so looks for a single line at the end of the file, set apart from the others by a blank line or some number of dashes. Many signature lines fail to conform to this format, and this resulted in strongly correlated signals. It led to features at layer 1 that were perfect predictors of authors, like Gordon Banks, who always included the quote: "Skepticism is the chastity of the intellect, and it is shameful to surrender it too soon."

We considered any collection of upper- or lower-case letters as a "word". All characters were lower-cased. Apostrophes were removed (so that "I've" becomes "ive").
We considered the ten thousand most frequent words. For the thousand most frequent words, we recorded for each document a 0 if the word was not present, a 1 if it was present but occurred with less than the average frequency, or a 2 if it occurred with more than the average frequency. For the remaining words, we used a 0/1 representation indicating whether the word was present.

CorEx details. For the twenty newsgroups data, we trained CorEx in a top-down-bottom-up way. We started with a "low resolution" model with $m = 100$ hidden units and $k = 2$. We used the result of this optimization to construct 100 large groups of words. Then, for each (now much smaller) group of words, we applied CorEx again to get a more fine-grained representation, and then discarded the representation that we had used to find the original clustering. The result was a representation at layer 1 with 326 variables. At the next layer we fixed $m = 50$, and all units were used. At the next two layers we fixed $m = 10$ and $m = 1$, respectively.

Figure C.1: The bottom three layers of the hierarchical representation learned for the twenty newsgroups dataset, keeping only the three leaf nodes with the highest normalized mutual information with their parents and up to eight branches per node at layer 2. For latent variables, we list an abbreviation of the newsgroup each best corresponds to, along with the precision. For a zoomable version, go to http://bit.ly/corexvis.
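The word encoding described in C.1 can be sketched as follows. This is our reconstruction, not the authors' code, and it rests on assumptions the text does not pin down: "most frequent" is taken to mean highest total token count over the corpus, a word's "average frequency" is taken to be its mean count over the documents that contain it, and a count exactly equal to that average is coded as 1. The names `tokenize` and `encode_documents` are hypothetical.

```python
import re
from collections import Counter

import numpy as np


def tokenize(text):
    """Lower-case, drop apostrophes, then treat each run of letters as a word."""
    text = text.lower().replace("'", "")
    return re.findall(r"[a-z]+", text)


def encode_documents(docs, n_vocab=10000, n_graded=1000):
    """Encode documents as an (n_docs, n_words) integer matrix.

    Top n_graded words: 0 = absent, 1 = present at or below average count, 2 = above average.
    Remaining words: 0/1 presence.
    ASSUMPTION: a word's "average" is its mean count over the documents containing it.
    """
    counts = [Counter(tokenize(d)) for d in docs]
    total = Counter()
    for c in counts:
        total.update(c)                                   # corpus-wide token counts
    vocab = [w for w, _ in total.most_common(n_vocab)]    # most frequent words first
    X = np.zeros((len(docs), len(vocab)), dtype=np.int8)
    for j, w in enumerate(vocab):
        col = np.array([c[w] for c in counts])            # count of w in each document
        present = col > 0
        X[present, j] = 1
        if j < n_graded:                                  # graded 0/1/2 coding
            avg = col[present].mean()
            X[col > avg, j] = 2
    return X, vocab
```

With the setup above, one would call `encode_documents(posts)`, where `posts` is the list of raw post strings, e.g., the `data` attribute of the object returned by `fetch_20newsgroups`.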
[Figure C.2 image: a tree whose leaves are the 50 personality-survey questions, each prefixed by the trait it was designed to measure: (N), (C), (E), (O), or (A).]

Figure C.2: CorEx learns a hierarchical representation from personality surveys with 50 questions. The number of latent nodes in the tree and the number of levels are determined automatically. The question groupings at the first level correspond exactly to the "big five" personality traits. The prefix of each question indicates the trait the test designers intended it to measure. The thickness of each edge represents the mutual information between features, and the size of each node represents the total correlation that the node captures about its children.
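Figure C.2 sizes each latent node by the total correlation it captures about its children. As a hedged illustration (ours, not the authors' code), for discrete data this quantity can be estimated with plug-in entropies, assuming it is the explained total correlation $TC(X; Y) = TC(X) - TC(X|Y) = \sum_i I(X_i : Y) - I(X : Y)$, which is the single-factor objective in (10) with all $\alpha_i = 1$. The function names are hypothetical, and plug-in estimates can be badly biased for small samples or many states, so this is only a sketch.

```python
from collections import Counter

import numpy as np


def entropy(values):
    """Plug-in entropy (nats) of the empirical distribution of hashable values."""
    counts = np.array(list(Counter(values).values()), dtype=float)
    p = counts / counts.sum()
    return float(-(p * np.log(p)).sum())


def mutual_information(a, b):
    """Plug-in I(A : B) = H(A) + H(B) - H(A, B) for equal-length sequences."""
    return entropy(a) + entropy(b) - entropy(list(zip(a, b)))


def tc_explained(x, y):
    """Plug-in estimate of TC(X; Y) = sum_i I(X_i : Y) - I(X : Y).

    x: (N, n) integer array of observed variables; y: length-N labels.
    """
    y = list(y)
    rows = [tuple(r) for r in x]                    # each joint sample of X as one symbol
    cols = [list(x[:, i]) for i in range(x.shape[1])]
    return sum(mutual_information(c, y) for c in cols) - mutual_information(rows, y)
```

For three identical copies of a fair bit, a label equal to that bit explains $TC(X) = 2 \log 2$ nats, all of the total correlation; a constant label explains none.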
