Sparse Matrix-based Random Projection for Classification


Authors: Weizhi Lu, Weiyu Li, Kidiyo Kpalma

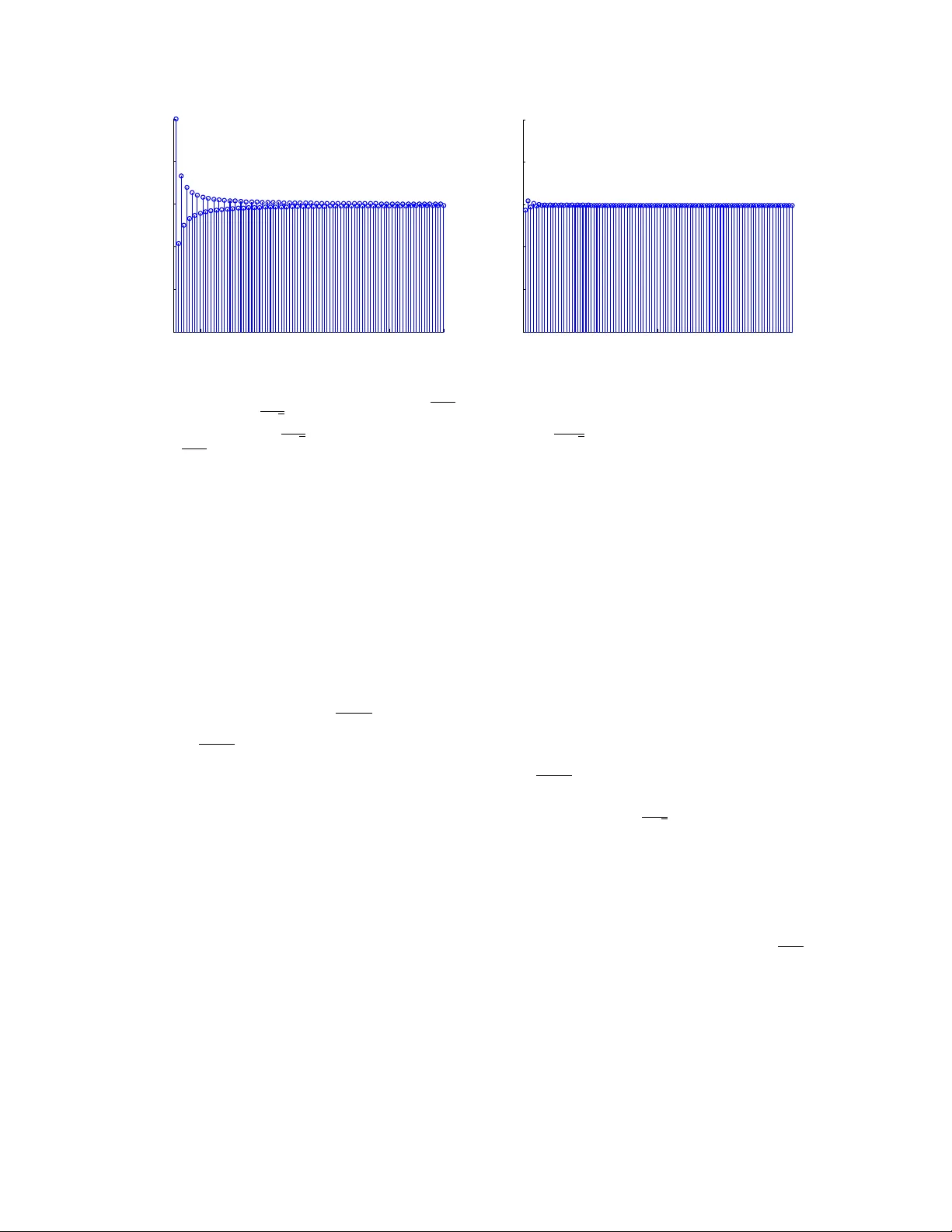

Weizhi Lu, Weiyu Li, Kidiyo Kpalma and Joseph Ronsin

Abstract

As a typical dimensionality reduction technique, random projection can be implemented simply as a linear projection while preserving the pairwise distances of high-dimensional data with high probability. Since this technique is mainly exploited for classification, this paper studies the construction of the random matrix from the viewpoint of feature selection rather than of traditional distance preservation. This yields a somewhat surprising theoretical result: the sparse random matrix with exactly one nonzero element per column can present better feature selection performance than denser matrices if the projection dimension is sufficiently large (namely, not much smaller than the number of feature elements); otherwise, it performs comparably to them. For random projection, this result implies considerable improvement in both complexity and performance, which is widely confirmed by classification experiments on both synthetic and real data.

Index Terms: Random Projection, Sparse Matrix, Classification, Feature Selection, Distance Preservation, High-dimensional data

I. INTRODUCTION

Random projection attempts to project a set of high-dimensional data into a low-dimensional subspace without distorting pairwise distances. This brings attractive computational advantages for the collection and processing of high-dimensional signals. In practice, it has been successfully applied in numerous fields concerning categorization, as shown in [1] and the references therein. Currently, the theoretical study of this technique mainly falls into one of the following two topics. One topic is concerned with the construction of the random matrix in terms of distance preservation.
In fact, this problem has been sufficiently addressed since the emergence of the Johnson-Lindenstrauss (JL) lemma [2]. The other popular topic is estimating the performance of traditional classifiers combined with random projection, as detailed in [3] and the references therein. Specifically, it is worth mentioning that the performance consistency of SVM on random projection was recently proved by exploiting the underlying connection between the JL lemma and compressed sensing [4], [5].

Based on the principle of distance preservation, Gaussian random matrices [6] and a few sparse {0, ±1} random matrices [7], [8], [9] have been successively proposed for random projection. In terms of implementation complexity, the sparse random matrix is clearly more attractive. Unfortunately, as will be proved in Section II-B, a sparser matrix tends to yield weaker distance preservation. This fact has largely weakened interest in the pursuit of sparser random matrices. However, a problem has long been ignored: random projection is mainly exploited for various classification tasks, which prefer to maximize the distances between different classes rather than to preserve the pairwise distances. We are therefore motivated to study random projection from the viewpoint of feature selection, rather than of traditional distance preservation as required by the JL lemma. During this study, however, the property of satisfying the JL lemma should not be ignored, because it guarantees the stability of the data structure during random projection, which is what makes classification in the projection space possible. Thus, throughout the paper, all evaluated random matrices are first ensured to satisfy the JL lemma to a certain degree.
In this paper, we propose the desired {0, ±1} random projection matrix with the best feature selection performance, by theoretically analyzing how feature selection performance changes with the sparsity of random matrices. The proposed matrix presents the sparsest structure known so far, holding only one random nonzero position per column. In theory, it is expected to provide better classification performance than denser matrices if the projection dimension is not much smaller than the number of feature elements. This conjecture is confirmed by extensive classification experiments on both synthetic and real data.

The rest of the paper is organized as follows. In the next section, the JL lemma is first introduced, and then the distance preservation property of sparse random matrices over varying sparsity is evaluated. In Section III, a theoretical framework is proposed to predict the feature selection performance of random matrices over varying sparsity. Following this theoretical conjecture, the sparsest currently known matrix, which performs better than denser matrices, is proposed and analyzed in Section IV. In Section V, the performance advantage of the proposed sparse matrix is verified by performing binary classification on both synthetic and real data. The real data include three representative datasets in dimensionality reduction: face images, DNA microarrays and text documents. Finally, the paper is concluded in Section VI.

II. PRELIMINARIES

This section first briefly reviews the JL lemma, and then evaluates the distance preservation of sparse random matrices over varying sparsity. For easy reading, we begin by introducing some basic notation. A random matrix is denoted by $\mathbf{R} \in \mathbb{R}^{k\times d}$, $k < d$. $r_{ij}$ represents the element of $\mathbf{R}$ at the $i$-th row and the $j$-th column, and $\mathbf{r} \in \mathbb{R}^{1\times d}$ denotes a row vector of $\mathbf{R}$.
Since the paper is concerned with binary classification, in the following study we define two samples $\mathbf{v} \in \mathbb{R}^{1\times d}$ and $\mathbf{w} \in \mathbb{R}^{1\times d}$, randomly drawn from two different patterns of high-dimensional datasets $V \subset \mathbb{R}^d$ and $W \subset \mathbb{R}^d$, respectively. The inner product between two vectors is written as $\langle \mathbf{v}, \mathbf{w} \rangle$. To distinguish vectors from scalar variables, vectors are written in bold. In the proofs of the following lemmas, $\Phi(\cdot)$ denotes the cumulative distribution function of $\mathcal{N}(0,1)$. The smallest integer not less than $\cdot$ and the largest integer not larger than $\cdot$ are denoted by $\lceil \cdot \rceil$ and $\lfloor \cdot \rfloor$, respectively.

A. Johnson-Lindenstrauss (JL) lemma

The distance preservation of random projection is supported by the JL lemma. In the past decades, several variants of the JL lemma have been proposed in [10], [11], [12]. For the convenience of the proof of Lemma 2 below, we recall the version of [12] in Lemma 1. According to Lemma 1, a random matrix satisfying the JL lemma should have $E(r_{ij}) = 0$ and $E(r_{ij}^2) = 1$.

Lemma 1. [12] Consider a random matrix $\mathbf{R} \in \mathbb{R}^{k\times d}$, with each entry $r_{ij}$ chosen independently from a distribution that is symmetric about the origin with $E(r_{ij}^2) = 1$. For any fixed vector $\mathbf{v} \in \mathbb{R}^d$, let $\mathbf{v}' = \frac{1}{\sqrt{k}}\mathbf{R}\mathbf{v}^T$.
• Suppose $B = E(r_{ij}^4) < \infty$. Then for any $\epsilon > 0$,
$$\Pr\left(\|\mathbf{v}'\|^2 \le (1-\epsilon)\|\mathbf{v}\|^2\right) \le e^{-\frac{(\epsilon^2-\epsilon^3)k}{2(B+1)}} \qquad (1)$$
• Suppose there exists $L > 0$ such that for any integer $m > 0$, $E(r_{ij}^{2m}) \le \frac{(2m)!}{2^m m!}L^{2m}$. Then for any $\epsilon > 0$,
$$\Pr\left(\|\mathbf{v}'\|^2 \ge (1+\epsilon)L^2\|\mathbf{v}\|^2\right) \le \left((1+\epsilon)e^{-\epsilon}\right)^{k/2} \le e^{-\frac{(\epsilon^2-\epsilon^3)k}{4}} \qquad (2)$$

B. Sparse random projection matrices

Up to now, only a few random matrices have been theoretically proposed for random projection. They can be roughly classified into two typical classes.
One is the Gaussian random matrix with entries drawn i.i.d. from $\mathcal{N}(0,1)$, and the other is the sparse random matrix with elements following the distribution below:
$$r_{ij} = \sqrt{q} \times \begin{cases} 1 & \text{with probability } 1/(2q) \\ 0 & \text{with probability } 1 - 1/q \\ -1 & \text{with probability } 1/(2q) \end{cases} \qquad (3)$$
where $q$ is allowed to be 2, 3 [7] or $\sqrt{d}$ [8]. Clearly, a larger $q$ means higher sparsity. Naturally, an interesting question arises: can we keep increasing the sparsity of random projection? Unfortunately, as shown in Lemma 2, the concentration of the JL lemma decreases as the sparsity increases. In other words, higher sparsity leads to weaker distance preservation. However, as will be shown later, classification tasks involving random projection are more sensitive to feature selection than to distance preservation.

Lemma 2. Consider a class of random matrices $\mathbf{R} \in \mathbb{R}^{k\times d}$ with each entry $r_{ij}$ distributed as in formula (3), where $q = k/s$ and $1 \le s \le k$ is an integer. Then these matrices satisfy the JL lemma at different levels: a sparser matrix implies weaker distance preservation.

Proof: From formula (3), it is easy to verify that these matrices satisfy the distribution conditions of Lemma 1. Hence they also obey the JL lemma if the two constraints corresponding to formulas (1) and (2) can be further proved. For the first constraint, corresponding to formula (1):
$$B = E(r_{ij}^4) = \left(\sqrt{k/s}\right)^4 \times \frac{s}{2k} + \left(-\sqrt{k/s}\right)^4 \times \frac{s}{2k} = \frac{k}{s} < \infty, \qquad (4)$$
so it holds. For the second constraint, corresponding to formula (2): for any integer $m > 0$, we have $E(r_{ij}^{2m}) = (k/s)^{m-1}$, and
$$\frac{E(r_{ij}^{2m})}{(2m)!\,L^{2m}/(2^m m!)} = \frac{2^m m!\,k^{m-1}}{s^{m-1}\,(2m)!\,L^{2m}}.$$
Since $(2m)! \ge m!\,m^m$,
$$\frac{E(r_{ij}^{2m})}{(2m)!\,L^{2m}/(2^m m!)} \le \frac{2^m k^{m-1}}{s^{m-1}\,m^m L^{2m}}.$$
Letting $L = (2k/s)^{1/2} \ge \sqrt{2}\,(k/s)^{(m-1)/(2m)}/\sqrt{m}$, we further derive
$$\frac{E(r_{ij}^{2m})}{(2m)!\,L^{2m}/(2^m m!)} \le 1.$$
Thus there exists $L = (2k/s)^{1/2} > 0$ such that $E(r_{ij}^{2m}) \le \frac{(2m)!}{2^m m!}L^{2m}$ for any integer $m > 0$, and the second constraint is also proved. Consequently, as $s$ decreases, $B$ in formula (4) increases, and the error bound in formula (1) becomes larger. This implies that the sparser the matrix, the weaker its JL property.

III. THEORETICAL FRAMEWORK

In this section, a theoretical framework is proposed to evaluate the feature selection performance of random matrices with varying sparsity. As will be shown later, the feature selection performance can be readily observed if the product between the difference of two distinct high-dimensional vectors and the sampling (row) vectors of the random matrix can be derived. To this end, we need to know in advance the distribution of the difference between two distinct high-dimensional vectors. To make the analysis tractable, this distribution should be characterized by a unified model. Unfortunately, this goal is hard to achieve perfectly due to the diversity and complexity of natural data. Therefore, as a simplifying assumption, we model the elements of the difference between two distinct high-dimensional vectors as i.i.d. with Gaussian-distributed magnitudes, as detailed in Section III-A. By the law of large numbers, the Gaussian distribution can be inferred to be a reasonable model for the magnitudes of high-dimensional vector elements. Like most theoretical work that attempts to model the real world, our assumption has an obvious limitation. Empirically, some real data elements, in particular the redundant (indiscriminative) elements, tend to be correlated to some extent, rather than being fully independent as assumed above.
This imperfection may limit the accuracy and applicability of our theoretical model. However, as will be detailed later, this problem can be ignored in our analysis, where the difference between pairwise redundant elements is assumed to be zero. This also explains why our theoretical proposal is widely verified in the final experiments involving a large amount of real data. Under the aforementioned assumption, Section III-B calculates and analyzes the product between the high-dimensional vector difference and the row vectors of random matrices with respect to the sparsity of the random matrix, as detailed in Lemmas 3-5 and the related remarks. To make the paper more readable, the proofs of Lemmas 3-5 are given in the Appendices.

A. Distribution of the difference between two distinct high-dimensional vectors

From the viewpoint of feature selection, random projection is expected to maximize the difference between two arbitrary samples $\mathbf{v}$ and $\mathbf{w}$ from two different datasets $V$ and $W$, respectively. Usually the difference is measured by the Euclidean distance $\|\mathbf{R}\mathbf{z}^T\|_2$, where $\mathbf{z} = \mathbf{v} - \mathbf{w}$. Then, given the mutual independence of the rows of $\mathbf{R}$, the search for a good random projection is equivalent to seeking the row vector $\hat{\mathbf{r}}$ such that
$$\hat{\mathbf{r}} = \arg\max_{\mathbf{r}} \left\{ |\langle \mathbf{r}, \mathbf{z} \rangle| \right\}. \qquad (5)$$
Thus, in the following part we only need to evaluate the row vectors of $\mathbf{R}$. For the convenience of analysis, the high-dimensional data of the two classes are further ideally divided into two parts, $\mathbf{v} = [\mathbf{v}^f\ \mathbf{v}^r]$ and $\mathbf{w} = [\mathbf{w}^f\ \mathbf{w}^r]$, where $\mathbf{v}^f$ and $\mathbf{w}^f$ denote the feature elements containing the discriminative information between $\mathbf{v}$ and $\mathbf{w}$, such that $E(v_i^f - w_i^f) \ne 0$, while $\mathbf{v}^r$ and $\mathbf{w}^r$ represent the redundant elements, such that $E(v_i^r - w_i^r) = 0$ with a tiny variance. Accordingly, $\mathbf{r} = [\mathbf{r}^f\ \mathbf{r}^r]$ and $\mathbf{z} = [\mathbf{z}^f\ \mathbf{z}^r]$ are also separated into two parts corresponding to the coordinates of the feature elements and the redundant elements, respectively.
Then the task of random projection reduces to maximizing $|\langle \mathbf{r}^f, \mathbf{z}^f \rangle|$, which implies that the redundant elements have no impact on feature selection. Therefore, for simpler expression, in the following part the high-dimensional data are assumed to contain only feature elements unless otherwise specified, and the superscript $f$ is dropped. As for intra-class samples, we can simply assume that their elements are all redundant, so that the expected value of their difference is 0, as derived before. This means that the problem of minimizing the intra-class distance need not be studied further. So in the following part, we only consider maximizing the inter-class distance, as described in formula (5).

To find the desired $\hat{\mathbf{r}}$ in formula (5), we need to know the distribution of $\mathbf{z}$. However, in practice this distribution is hard to characterize, since the locations of feature elements are usually unknown. As a result, we have to make a relaxed assumption on the distribution of $\mathbf{z}$. For a given real dataset, the values of $v_i$ and $w_i$ should be bounded. This allows us to assume that their difference $z_i$ is also bounded in amplitude and follows some unknown distribution. For the sake of generality, in this paper $z_i$ is regarded as approximately Gaussian in magnitude with a random binary sign. The distribution of $z_i$ can then be formulated as
$$z_i = \begin{cases} x & \text{with probability } 1/2 \\ -x & \text{with probability } 1/2 \end{cases} \qquad (6)$$
where $x \sim \mathcal{N}(\mu, \sigma^2)$, $\mu$ is a positive number, and $\Pr(x > 0) = 1 - \epsilon$, with $\epsilon = \Phi(-\mu/\sigma)$ a small positive number.

B. Product between high-dimensional vector and random sampling vector with varying sparsity

This subsection mainly tests the feature selection performance of random row vectors with varying sparsity. For the sake of comparison, Gaussian random vectors are also evaluated.
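One way to get a feel for this setting is to estimate the expected value of $|\langle \mathbf{r}, \mathbf{z} \rangle|$ from formula (5) by simulation. The sketch below is a minimal Monte Carlo under stated assumptions, not the authors' code: $\mathbf{z}$ follows formula (6) (setting $\sigma = 0$ gives the $z_i = \pm\mu$ case), a sparse row has $s$ randomly placed nonzeros equal to $\pm\sqrt{d/s}$, and a Gaussian row has i.i.d. $\mathcal{N}(0,1)$ entries. The helper names (`sample_z`, `sparse_row`, `estimate_ef`), the use of NumPy, and the chosen $d$, trial counts and seed are illustrative assumptions.

```python
import numpy as np


def sample_z(d, mu, sigma, rng):
    """Draw z as in formula (6): |z_i| ~ N(mu, sigma^2) with an equiprobable sign.

    sigma = 0 reduces to the z_i = +/-mu case.
    """
    x = rng.normal(mu, sigma, size=d)
    signs = rng.choice([-1.0, 1.0], size=d)
    return signs * x


def sparse_row(d, s, rng):
    """Row vector with s nonzeros at random positions, each equal to +/-sqrt(d/s)."""
    r = np.zeros(d)
    positions = rng.choice(d, size=s, replace=False)
    r[positions] = np.sqrt(d / s) * rng.choice([-1.0, 1.0], size=s)
    return r


def estimate_ef(d, s, mu, sigma, trials, rng, gaussian=False):
    """Monte Carlo estimate of E|<r, z>|.

    If gaussian is True, r has i.i.d. N(0, 1) entries and s is ignored.
    """
    total = 0.0
    for _ in range(trials):
        z = sample_z(d, mu, sigma, rng)
        r = rng.standard_normal(d) if gaussian else sparse_row(d, s, rng)
        total += abs(r @ z)
    return total / trials


rng = np.random.default_rng(0)
d, mu = 400, 1.0
for s in (1, 2, 3):
    # Normalized by mu * sqrt(d), the scale used in Fig. 1.
    print(s, estimate_ef(d, s, mu, 0.0, 5000, rng) / (mu * np.sqrt(d)))
print("gaussian", estimate_ef(d, 0, mu, 0.0, 5000, rng, gaussian=True) / (mu * np.sqrt(d)))
```

With $\sigma = 0$ and $s = 1$ the normalized estimate is exactly 1, for $s = 2$ it fluctuates around $\sqrt{1/2} \approx 0.707$, and the Gaussian row gives roughly $\sqrt{2/\pi} \approx 0.798$, in line with Lemmas 3 and 5.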
Recall that under the basic requirement of the JL lemma, that is, $E(r_{ij}) = 0$ and $E(r_{ij}^2) = 1$, the Gaussian matrix has elements drawn i.i.d. from $\mathcal{N}(0,1)$, and the sparse random matrix has elements distributed as in formula (3) with $q \in \{d/s : 1 \le s \le d,\ s \in \mathbb{N}\}$. From Lemmas 3-5 below, we obtain two crucial random projection results for high-dimensional data whose feature difference elements $|z_i|$ are distributed as in formula (6):
• Random matrices achieve the best feature selection performance when each row vector samples only one feature element; in other words, the solution to formula (5) is obtained when $\mathbf{r}$ has $s = 1$ randomly placed nonzero element;
• The desired sparse random matrix mentioned above also obtains better feature selection performance than Gaussian random matrices.
For better understanding, we first prove the relatively simple case of $z_i \in \{\pm\mu\}$ in Lemma 3, and then generalize it in Lemma 4 to the more complicated case of $z_i$ distributed as in formula (6). The performance of Gaussian matrices on $z_i \in \{\pm\mu\}$ is obtained in Lemma 5.

Lemma 3. Let $\mathbf{r} = [r_1, \dots, r_d]$ randomly have $1 \le s \le d$ nonzero elements taking values $\pm\sqrt{d/s}$ with equal probability, and let $\mathbf{z} = [z_1, \dots, z_d]$ have elements equal to $\pm\mu$ with equal probability, where $\mu$ is a positive constant. Given $f(\mathbf{r}, \mathbf{z}) = |\langle \mathbf{r}, \mathbf{z} \rangle|$, there are three results regarding the expected value of $f(\mathbf{r}, \mathbf{z})$:
1) $E(f) = 2\mu\sqrt{\frac{d}{s}}\,\frac{1}{2^s}\left\lceil \frac{s}{2} \right\rceil C_s^{\lceil s/2 \rceil}$;
2) $E(f)|_{s=1} = \mu\sqrt{d} > E(f)|_{s>1}$;
3) $\lim_{s\to\infty} \frac{1}{\sqrt{d}}E(f) = \mu\sqrt{\frac{2}{\pi}}$.
Proof: Please see Appendix A.

[Fig. 1 plot: (a) $E(f)/(\mu d^{1/2})$ versus $s$, for $s = 1$ to $100$; (b) $(E(f)|_s + E(f)|_{s+1})/(2\mu d^{1/2})$ versus $s > 1$, for $s = 2$ to $99$; vertical axes span 0.5 to 1.]
Fig. 1: (a) The convergence of $\frac{1}{\mu\sqrt{d}}E(f)$ to $\sqrt{2/\pi}$ ($\approx 0.7979$) with increasing $s$; (b) the average of $\frac{1}{\mu\sqrt{d}}E(f)$ over two adjacent values of $s$ ($> 1$), namely $\frac{1}{2\mu\sqrt{d}}\left(E(f)|_s + E(f)|_{s+1}\right)$, which is very close to $\sqrt{2/\pi}$. Here $E(f)$ is calculated with the formula provided in Lemma 3.

Remark on Lemma 3: This lemma shows that the best feature selection performance is obtained when each row vector samples only one feature element. In contrast, the performance tends to converge to a lower level as the number of sampled feature elements increases. However, in practice the desired sampling process is hard to implement, owing to limited knowledge of the feature locations. As will be detailed in the next section, what we can actually implement is to sample only one feature element with high probability. Following the proof of this lemma, it can also be proved that if $s$ is odd, $E(f)$ decreases quickly to $\mu\sqrt{2d/\pi}$ with increasing $s$; in contrast, if $s$ is even, $E(f)$ increases quickly towards $\mu\sqrt{2d/\pi}$ as $s$ increases. But for any two adjacent values of $s$ larger than 1, their average $E(f)$, namely $(E(f)|_s + E(f)|_{s+1})/2$, is very close to $\mu\sqrt{2d/\pi}$. For clarity, the values of $E(f)$ over varying $s$ are calculated and shown in Fig. 1, where $\frac{1}{\mu\sqrt{d}}E(f)$ is plotted instead of $E(f)$, since only the dependence on $s$ is of interest. This behavior of $E(f)$ ensures that one can still outperform other matrices by sampling $s = 1$ element with relatively high probability, even though $s$ will also take a run of consecutive values slightly larger than 1.

Lemma 4. Let $\mathbf{r} = [r_1, \dots, r_d]$ randomly have $1 \le s \le d$ nonzero elements taking values $\pm\sqrt{d/s}$ with equal probability, and let $\mathbf{z} = [z_1, \dots, z_d]$ have elements distributed as in formula (6).
Given $f(\mathbf{r}, \mathbf{z}) = |\langle \mathbf{r}, \mathbf{z} \rangle|$, it holds that $E(f)|_{s=1} > E(f)|_{s>1}$ if
$$\left(\frac{9}{8}\right)^{\frac{3}{2}}\left[\sqrt{\frac{2}{\pi}} + \left(1 + \frac{\sqrt{3}}{4}\right)\frac{2}{\pi}\left(\frac{\mu}{\sigma}\right)^{-1}\right] + 2\Phi\left(-\frac{\mu}{\sigma}\right) \le 1.$$
Proof: Please see Appendix B.

Remark on Lemma 4: This lemma extends Lemma 3 to a more general case where $|z_i|$ is allowed to vary within some range. In other words, there is an upper bound on $\sigma/\mu$ for $E(f)|_{s=1} > E(f)|_{s>1}$, since $\Phi(-\mu/\sigma)$ decreases monotonically with respect to $\mu/\sigma$. Clearly, a larger upper bound on $\sigma/\mu$ allows more variation of $|z_i|$. In practice, the true upper bound should be larger than the one derived here, which is only a sufficient condition.

Lemma 5. Let $\mathbf{r} = [r_1, \dots, r_d]$ have elements drawn i.i.d. from $\mathcal{N}(0,1)$, and let $\mathbf{z} = [z_1, \dots, z_d]$ have elements equal to $\pm\mu$ with equal probability, where $\mu$ is a positive constant. Given $f(\mathbf{r}, \mathbf{z}) = |\langle \mathbf{r}, \mathbf{z} \rangle|$, its expected value is $E(f) = \mu\sqrt{\frac{2d}{\pi}}$.
Proof: Please see Appendix C.

Remark on Lemma 5: Comparing this lemma with Lemma 3, the Gaussian row vector clearly has the same feature selection level as a sparse row vector with relatively large $s$. This explains why, in practice, sparse random matrices usually present classification performance comparable to that of Gaussian matrices. More importantly, it implies that the sparsest sampling process of Lemma 3 should outperform the Gaussian matrix in feature selection.

IV. PROPOSED SPARSE RANDOM MATRIX

The lemmas of the previous section have proved that the best feature selection performance is obtained if each row vector of the random matrix samples only one feature element. It is now interesting to ask whether this condition can be satisfied in the practical setting, where the high-dimensional data consist of both feature elements and redundant elements, namely $\mathbf{v} = [\mathbf{v}^f\ \mathbf{v}^r]$ and $\mathbf{w} = [\mathbf{w}^f\ \mathbf{w}^r]$.
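The recap above rests on the closed forms of Lemmas 3 and 5, which are cheap to evaluate. The sketch below computes item 1) of Lemma 3 and the Gaussian value of Lemma 5; with $d = \mu = 1$ these are the normalized values $\frac{1}{\mu\sqrt{d}}E(f)$ plotted in Fig. 1. The function names `lemma3_ef` and `lemma5_ef` are hypothetical, chosen for illustration only.

```python
from math import ceil, comb, pi, sqrt


def lemma3_ef(s, d=1.0, mu=1.0):
    """Item 1) of Lemma 3: E(f) = 2*mu*sqrt(d/s) * ceil(s/2) * C(s, ceil(s/2)) / 2**s."""
    h = ceil(s / 2)
    # Exact integer ratio first, so that large s does not overflow a float.
    weight = h * comb(s, h) / 2 ** s
    return 2 * mu * sqrt(d / s) * weight


def lemma5_ef(d=1.0, mu=1.0):
    """Lemma 5: E(f) = mu*sqrt(2d/pi) for a Gaussian row vector."""
    return mu * sqrt(2 * d / pi)


for s in (1, 2, 3, 4, 5):
    print(s, round(lemma3_ef(s), 4))
print("limit", round(lemma5_ef(), 4))
# prints: 1 1.0 / 2 0.7071 / 3 0.866 / 4 0.75 / 5 0.8385 / limit 0.7979
```

The printed values show the odd/even oscillation described in the remark on Lemma 3: odd $s$ approaches $\sqrt{2/\pi}$ from above, even $s$ from below, and $s = 1$ is the maximum.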
According to the theoretical condition above, the row vector $\mathbf{r} = [\mathbf{r}^f\ \mathbf{r}^r]$ obtains the best feature selection only when $\|\mathbf{r}^f\|_0 = 1$, where the $\ell_0$ pseudo-norm counts the number of nonzero elements in $\mathbf{r}^f$. Let $\mathbf{r}^f \in \mathbb{R}^{d_f}$ and $\mathbf{r}^r \in \mathbb{R}^{d_r}$, where $d = d_f + d_r$. Then the desired row vector should have $d/d_f$ uniformly distributed nonzero elements, such that $E(\|\mathbf{r}^f\|_0) = 1$. However, in practice the desired distribution for row vectors is often hard to determine, since the number of feature elements in a real dataset is usually unknown. We are therefore motivated to propose a general distribution for the matrix elements such that $\|\mathbf{r}^f\|_0 = 1$ holds with high probability when the feature distribution is unknown. In other words, the random matrix should follow the distribution maximizing the ratio $\Pr(\|\mathbf{r}^f\|_0 = 1)/\Pr(\|\mathbf{r}^f\|_0 \in \{2, 3, \dots, d_f\})$. In practice, the desired distribution implies that the random matrix has exactly one nonzero position per column, which can be derived as follows. Assume a random matrix $\mathbf{R} \in \mathbb{R}^{k\times d}$ randomly holding $1 \le s' \le k$ nonzero elements per column, or equivalently $s'd/k$ nonzero elements per row. Then one can derive that
$$\frac{\Pr(\|\mathbf{r}^f\|_0 = 1)}{\Pr(\|\mathbf{r}^f\|_0 \in \{2, 3, \dots, d_f\})} = \frac{\Pr(\|\mathbf{r}^f\|_0 = 1)}{1 - \Pr(\|\mathbf{r}^f\|_0 = 0) - \Pr(\|\mathbf{r}^f\|_0 = 1)} = \frac{C_{d_f}^{1}\,C_{d_r}^{s'd/k-1}}{C_{d}^{s'd/k} - C_{d_r}^{s'd/k} - C_{d_f}^{1}\,C_{d_r}^{s'd/k-1}} = \frac{d_f\,d_r!}{\frac{d!\,(d_r - s'd/k + 1)!}{(s'd/k)\,(d - s'd/k)!} - \frac{d_r!\,(d_r - s'd/k + 1)}{s'd/k} - d_f\,d_r!} \qquad (7)$$
From the last expression in formula (7), it can be observed that increasing $s'd/k$ reduces the value of formula (7). To maximize this value, we have to set $s' = 1$. This indicates that the desired random matrix has only one nonzero element per column.
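Formula (7) can be checked numerically with exact integer and rational arithmetic. The sketch below writes $n = s'd/k$ for the number of nonzeros per row, evaluates both the binomial form and the factorial form of (7), and shows the ratio shrinking as $n$ grows. The function names are hypothetical, and the example sizes ($d = 2000$, $d_f = 1000$, $k = 1000$) are illustrative choices borrowed from the synthetic setup, not results from the paper. The case $n = 1$ (that is, $k = d$) is excluded because the denominator of (7) is then zero: a single nonzero can hit at most one feature element.

```python
from fractions import Fraction
from math import comb, factorial


def ratio_binomial(d, d_f, n):
    """Binomial form of (7): Pr(||r^f||_0 = 1) / Pr(||r^f||_0 >= 2).

    A row holds n = s'd/k nonzeros placed uniformly among d coordinates,
    d_f of which are feature coordinates. Assumes 2 <= n <= d - d_f + 1.
    """
    d_r = d - d_f
    one_hit = d_f * comb(d_r, n - 1)                  # exactly one feature hit
    many_hits = comb(d, n) - comb(d_r, n) - one_hit   # two or more feature hits
    return Fraction(one_hit, many_hits)


def ratio_factorial(d, d_f, n):
    """Last (factorial) form of (7), evaluated exactly."""
    d_r = d - d_f
    term1 = Fraction(factorial(d) * factorial(d_r - n + 1), n * factorial(d - n))
    term2 = Fraction(factorial(d_r) * (d_r - n + 1), n)
    return Fraction(d_f * factorial(d_r)) / (term1 - term2 - d_f * factorial(d_r))


# d = 2000, d_f = 1000, k = 1000, so n = s'd/k = 2s'.
for s_prime in (1, 2, 4):
    n = 2 * s_prime
    print(s_prime, float(ratio_binomial(2000, 1000, n)))
```

Exact `Fraction` arithmetic is used so that the two forms of (7) can be compared for equality without rounding error; for plotting, converting to `float` at the end is enough.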
The proposed random matrix with exactly one nonzero element per column presents two obvious advantages, as detailed below.
• In complexity, the proposed matrix is clearly much sparser than existing random projection matrices. Note that, theoretically, the very sparse random matrix with $q = \sqrt{d}$ [8] is sparser than the proposed matrix when $k < \sqrt{d}$. However, the case $k < \sqrt{d}$ is usually not of practical interest, due to the weak performance caused by the large compression rate $d/k$ ($> \sqrt{d}$).
• In performance, it can be derived that the proposed matrix outperforms denser matrices if the projection dimension $k$ is not much smaller than the number $d_f$ of feature elements in the high-dimensional vector. Specifically, Fig. 1 shows that denser matrices with column weight $s' > 1$ share comparable feature selection performance, because as $s'$ increases they tend to sample more than one feature element (namely $\|\mathbf{r}^f\|_0 > 1$) with higher probability. The proposed matrix with $s' = 1$ then performs better than them if $k$ ensures $\|\mathbf{r}^f\|_0 = 1$ with high probability, or equivalently if the ratio $\Pr(\|\mathbf{r}^f\|_0 = 1)/\Pr(\|\mathbf{r}^f\|_0 \in \{2, 3, \dots, d_f\})$ is relatively large. As shown in formula (7), this condition is better satisfied as $k$ increases. Conversely, as $k$ decreases, the feature selection advantage of the proposed matrix degrades. Recall that the proposed matrix is weaker than denser matrices in distance preservation, as demonstrated in Section II-B. This means that the proposed matrix will perform worse than others when its feature selection advantage is not obvious. In other words, there should exist a lower bound on $k$ that ensures the performance advantage of the proposed matrix, which is also verified in the following experiments.
It can be roughly estimated that the lower bound of $k$ should be on the order of $d_f$, since for the proposed matrix with column weight $s' = 1$, setting $k = d_f$ gives $E(\|\mathbf{r}^f\|_0) = d/k \times d_f/d = 1$. In practice, the performance advantage can apparently be maintained for a relatively small $k$ ($< d_f$). For instance, in the following experiments on synthetic data, the lower bound of $k$ is as small as $d_f/20$. This phenomenon can be explained by the fact that, to obtain a performance advantage, the probability $\Pr(\|\mathbf{r}^f\|_0 = 1)$ only needs to be relatively large rather than equal to 1, as demonstrated in the remark on Lemma 3.

V. EXPERIMENTS

A. Setup

This section verifies the feature selection advantage of the proposed sparsest matrix (StM) over other popular matrices by conducting binary classification on both synthetic and real data. The synthetic data, whose feature elements are labeled, are used specifically to observe the relation between the projection dimension and the number of features, as well as the impact of redundant elements. The real data involve three typical datasets in the area of dimensionality reduction: face images, DNA microarrays and text documents. As the binary classifier, the classical support vector machine (SVM) based on Euclidean distance is adopted. For comparison, we test three popular random matrices: the Gaussian random matrix (GM), the sparse random matrix (SM) as in formula (3) with $q = 3$ [7], and the very sparse random matrix (VSM) with $q = \sqrt{d}$ [8].

The simulation parameters are as follows. Repeated random projection is known to improve feature selection, so each classification decision is made by voting over 5 random projections [13]. The classification accuracy at each projection dimension $k$ is obtained by averaging 100000 simulation runs. In each simulation, the four matrices are tested on the same samples.
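The four matrices compared in this setup can be generated in a few lines. The sketch below is an illustration under stated assumptions, not the authors' implementation: SM and VSM draw entries from formula (3) with $q = 3$ and $q = \sqrt{d}$ respectively, and StM places exactly one nonzero per column in a uniformly random row with a random sign. The nonzero magnitude $\sqrt{k}$ for StM is an assumed scaling chosen so that $E(r_{ij}^2) = 1$, since this section does not state it. The helper names are hypothetical. `project_stm` also illustrates the complexity argument: with one nonzero per column, $\frac{1}{\sqrt{k}}\mathbf{R}\mathbf{v}^T$ costs $O(d)$ additions and never needs the dense matrix.

```python
import numpy as np


def gaussian_matrix(k, d, rng):
    """GM: entries i.i.d. N(0, 1)."""
    return rng.standard_normal((k, d))


def sparse_matrix(k, d, q, rng):
    """SM (q = 3) or VSM (q = sqrt(d)): entries drawn as in formula (3)."""
    u = rng.random((k, d))
    # +1 w.p. 1/(2q), -1 w.p. 1/(2q), 0 otherwise.
    signs = np.where(u < 1 / (2 * q), 1.0, np.where(u < 1 / q, -1.0, 0.0))
    return np.sqrt(q) * signs


def sparsest_matrix(k, d, rng):
    """StM: exactly one nonzero per column, in a uniformly random row, random sign.

    The magnitude sqrt(k) is an assumed scaling giving E(r_ij^2) = 1.
    Returns the dense matrix plus the row index and sign of each column.
    """
    rows = rng.integers(0, k, size=d)
    signs = rng.choice([-1.0, 1.0], size=d)
    R = np.zeros((k, d))
    R[rows, np.arange(d)] = np.sqrt(k) * signs
    return R, rows, signs


def project_stm(v, rows, signs, k):
    """(1/sqrt(k)) R v^T for StM in O(d) time, without building R.

    Each coordinate v_j is added, with its sign, to output entry rows[j];
    the sqrt(k) magnitude cancels the 1/sqrt(k) normalization.
    """
    out = np.zeros(k)
    np.add.at(out, rows, signs * v)
    return out


rng = np.random.default_rng(0)
k, d = 200, 2000
v = rng.standard_normal(d)
R, rows, signs = sparsest_matrix(k, d, rng)
print(np.allclose(R @ v / np.sqrt(k), project_stm(v, rows, signs, k)))  # True
print(np.count_nonzero(R, axis=0).max())  # 1: one nonzero per column
```

A VSM instance would be `sparse_matrix(k, d, np.sqrt(d), rng)`. `np.add.at` is used rather than `out[rows] += ...` because several columns can map to the same row, and plain fancy-index assignment would keep only one of those contributions.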
The projection dimension $k$ decreases uniformly from the high dimension $d$. Moreover, for datasets containing more than two classes of samples, the SVM classifier randomly selects two classes to conduct binary classification in each simulation. For each class of data, half of the samples are randomly selected for training and the rest for testing.

TABLE I: Classification accuracies (%) on the synthetic data with $d = 2000$ and redundant elements at three different variation levels $\sigma_r$. The best performance is highlighted in bold. The lower bound of the projection dimension $k$ that ensures the proposal outperforms the others in all datasets is highlighted in bold as well. Recall that the acronyms GM, SM, VSM and StM represent the Gaussian random matrix, the sparse random matrix with $q = 3$, the very sparse random matrix with $q = \sqrt{d}$, and the proposed sparsest random matrix, respectively.

| σ_r | Matrix | k = 50 | k = 100 | k = 200 | k = 400 | k = 600 | k = 800 | k = 1000 | k = 1500 | k = 2000 |
|---|---|---|---|---|---|---|---|---|---|---|
| 8 | GM | 70.44 | 67.93 | 84.23 | 93.31 | 95.93 | 97.17 | 97.71 | 98.35 | 98.74 |
| 8 | SM | 70.65 | 67.90 | 84.43 | 93.03 | 95.97 | 96.86 | 97.78 | 98.36 | 98.80 |
| 8 | VSM | 70.55 | 68.05 | 84.46 | 93.19 | 96.00 | 96.99 | 97.68 | 98.38 | 98.76 |
| 8 | StM | 70.27 | 68.09 | 84.66 | 94.22 | 97.11 | 98.03 | 98.67 | 99.37 | 99.57 |
| 12 | GM | 64.89 | 63.06 | 76.08 | 85.04 | 88.46 | 90.21 | 91.16 | 92.68 | 93.32 |
| 12 | SM | 64.67 | 62.66 | 75.85 | 85.03 | 88.30 | 90.09 | 91.21 | 92.70 | 93.30 |
| 12 | VSM | 65.17 | 62.95 | 76.12 | 85.14 | 88.80 | 90.46 | 91.37 | 92.88 | 93.64 |
| 12 | StM | 64.85 | 63.00 | 76.82 | 88.41 | 93.51 | 96.12 | 97.59 | 99.13 | 99.68 |
| 16 | GM | 60.90 | 59.42 | 70.13 | 78.26 | 81.70 | 83.82 | 84.74 | 86.50 | 87.49 |
| 16 | SM | 60.86 | 59.58 | 69.93 | 78.04 | 81.66 | 83.85 | 84.79 | 86.55 | 87.39 |
| 16 | VSM | 60.98 | 59.87 | 70.27 | 78.49 | 81.98 | 84.36 | 85.27 | 86.98 | 87.81 |
| 16 | StM | 61.09 | 59.29 | 71.58 | 84.56 | 91.65 | 95.50 | 97.24 | 98.91 | 99.30 |

B. Synthetic data experiments

1) Data generation: The synthetic data are designed to evaluate the following two factors:
• the relation between the lower bound of the projection dimension $k$ and the feature dimension $d_f$;
• the negative impact of redundant elements, which are ideally assumed to be zero in the previous theoretical proofs.
To this end, two classes of synthetic data with $d_f$ feature elements and $d - d_f$ redundant elements are generated in two steps:
• randomly build a vector $\tilde{\mathbf{v}} \in \{\pm 1\}^d$, then define a vector $\tilde{\mathbf{w}}$ with $\tilde{w}_i = -\tilde{v}_i$ if $1 \le i \le d_f$, and $\tilde{w}_i = \tilde{v}_i$ if $d_f < i \le d$;
• generate two classes of datasets $V$ and $W$ by i.i.d. sampling $v_i^f \sim \mathcal{N}(\tilde{v}_i, \sigma_f^2)$ and $w_i^f \sim \mathcal{N}(\tilde{w}_i, \sigma_f^2)$ if $1 \le i \le d_f$, and $v_i^r \sim \mathcal{N}(\tilde{v}_i, \sigma_r^2)$ and $w_i^r \sim \mathcal{N}(\tilde{w}_i, \sigma_r^2)$ if $d_f < i \le d$.
Consequently, the pointwise differences are approximately distributed as $|v_i^f - w_i^f| \sim \mathcal{N}(2, 2\sigma_f^2)$ for feature elements and $(v_i^r - w_i^r) \sim \mathcal{N}(0, 2\sigma_r^2)$ for redundant elements. To be close to reality, we introduce some unreliability into both feature and redundant elements by adopting relatively large variances. Precisely, in the simulation $\sigma_f$ is fixed to 8 and $\sigma_r$ varies in the set $\{8, 12, 16\}$. Note that the probability of $(v_i^r - w_i^r)$ being close to zero decreases as $\sigma_r$ increases. Thus, increasing $\sigma_r$ challenges our previous theoretical conjecture, which was derived under the assumption $(v_i^r - w_i^r) = 0$. As for the size of the dataset, the data dimension $d$ is set to 2000 and the feature dimension $d_f = 1000$. Each dataset consists of 100 randomly generated samples.

2) Results: Table I shows the classification performance of the four types of matrices over evenly varying projection dimension $k$. The proposal clearly always outperforms the others when $k > 200$ (equivalently, the compression ratio $k/d > 0.1$).
This result reveals two encouraging points. First, the proposed matrix preserves an obvious advantage over the others even when $k$ is relatively small; for instance, $k/d_f$ is allowed to be as small as 1/20 when $\sigma_r = 8$. Second, despite the interference of redundant elements, the proposed matrix still outperforms the others, which implies that the previous theoretical result also applies to the real case where redundant elements cannot simply be neglected.

C. Real data experiments

Three types of representative high-dimensional datasets are tested for random projection over evenly varying projection dimension $k$. The datasets are first briefly introduced, and then the results are presented and analyzed. Note that the simulation is designed to compare the feature selection performance of different random projections rather than to obtain the best possible performance. So, to reduce the simulation load, the original high-dimensional data are uniformly downsampled to a relatively low dimension. Precisely, the face image, DNA and text data are reduced to dimensions 1200, 2000 and 3000, respectively. In terms of the JL lemma, the original high dimension can be reduced to arbitrary values (not limited to 1200, 2000 or 3000), since theoretically the distance preservation of random projection is independent of the dimension of the high-dimensional data [7].

1) Datasets:
• Face image
– AR [14]: As in [15], a subset of 2600 frontal faces from 50 males and 50 females is examined. For some persons, the faces were taken at different times, with varying lighting, facial expressions (open/closed eyes, smiling/not smiling) and facial details (glasses/no glasses). Among the 26 faces per person, 6 faces have dark glasses and 6 faces are partially disguised by scarves.
– Extended Yale B [16], [17]: This dataset includes about 2414 frontal faces of 38 persons captured under varying illumination.
– FERET [18]: This dataset consists of more than 10000 faces from more than 1000 persons, taken under widely varying circumstances. The database is further divided into several sets formed for different evaluations. Here we evaluate the 1984 frontal faces of 992 persons, each with 2 faces extracted separately from sets fa and fb.

TABLE II: Classification accuracies (%) on five face datasets with dimension d = 1200. For each projection dimension k, the best performance is highlighted in bold. The lower bound of the projection dimension k that ensures the proposal outperforms the others on all datasets is highlighted in bold as well. Recall that the acronyms GM, SM, VSM and StM represent the Gaussian random matrix, the sparse random matrix with q = 3, the very sparse random matrix with q = √d, and the proposed sparsest random matrix, respectively.

| Dataset | Matrix | k = 30 | k = 60 | **k = 120** | k = 240 | k = 360 | k = 480 | k = 600 |
|---|---|---|---|---|---|---|---|---|
| AR | GM | **98.67** | 99.04 | 99.19 | 99.24 | 99.30 | 99.28 | 99.33 |
| | SM | 98.58 | 99.04 | 99.21 | 99.25 | 99.31 | 99.30 | 99.32 |
| | VSM | 98.62 | 99.07 | 99.20 | 99.27 | 99.30 | 99.31 | 99.34 |
| | StM | 98.64 | **99.10** | **99.24** | **99.35** | **99.48** | **99.50** | **99.58** |
| Ext-YaleB | GM | 97.10 | **98.06** | 98.39 | 98.49 | 98.48 | 98.45 | 98.47 |
| | SM | 97.00 | 98.05 | 98.37 | 98.49 | 98.48 | 98.45 | 98.47 |
| | VSM | 97.12 | 98.05 | 98.36 | 98.50 | 98.48 | 98.45 | 98.48 |
| | StM | **97.15** | **98.06** | **98.40** | **98.54** | **98.54** | **98.57** | **98.59** |
| FERET | GM | 86.06 | 86.42 | 86.31 | 86.50 | 86.46 | 86.66 | 86.57 |
| | SM | 86.51 | 86.66 | 87.26 | 88.01 | 88.57 | 89.59 | 90.13 |
| | VSM | **87.21** | 87.61 | 89.34 | 91.14 | 92.31 | 93.75 | 93.81 |
| | StM | 87.11 | **88.74** | **92.04** | **95.38** | **96.90** | **97.47** | **97.47** |
| GTF | GM | 96.67 | 97.48 | 97.84 | 98.06 | 98.09 | 98.10 | 98.16 |
| | SM | 96.63 | 97.52 | 97.85 | 98.06 | 98.09 | 98.13 | 98.16 |
| | VSM | **96.69** | **97.57** | 97.87 | 98.10 | 98.13 | 98.14 | 98.16 |
| | StM | 96.65 | 97.51 | **97.94** | **98.25** | **98.40** | **98.43** | **98.53** |
| ORL | GM | 94.58 | 95.69 | 96.31 | 96.40 | 96.54 | 96.51 | 96.49 |
| | SM | 94.50 | 95.63 | 96.36 | 96.38 | 96.48 | 96.47 | 96.48 |
| | VSM | 94.60 | **95.77** | 96.33 | 96.35 | 96.53 | 96.55 | 96.46 |
| | StM | **94.64** | 95.75 | **96.43** | **96.68** | **96.90** | **97.04** | **97.05** |

– GTF [19]: In this dataset, 750 images of 50 persons were captured at different scales and orientations under variations in illumination and expression.
The cropped faces therefore suffer from serious pose variation.
– ORL [20]: It contains 10 faces for each of 40 persons. Besides slightly varying lighting and expressions, the faces also undergo slight changes in pose.
• DNA microarray
– Colon [21]: This dataset consists of 40 colon tumor samples and 22 normal colon tissue samples. The 2000 genes with the highest intensity across the samples are considered.
– ALML [22]: This dataset contains 25 samples from patients with acute myeloid leukemia (AML) and 47 samples from patients with acute lymphoblastic leukemia (ALL). Each sample is described by 7129 genes.
– Lung [23]: This dataset contains 86 lung tumor samples and 10 normal lung samples. Each sample holds 7129 genes.

TABLE III: Classification accuracies (%) on three DNA datasets with dimension d = 2000. For each projection dimension k, the best performance is highlighted in bold. The lower bound of the projection dimension k that ensures the proposal outperforms the others on all datasets is highlighted in bold as well. Recall that the acronyms GM, SM, VSM and StM represent the Gaussian random matrix, the sparse random matrix with q = 3, the very sparse random matrix with q = √d, and the proposed sparsest random matrix, respectively.
| Dataset | Matrix | k = 50 | **k = 100** | k = 200 | k = 400 | k = 600 | k = 800 | k = 1000 | k = 1500 |
|---|---|---|---|---|---|---|---|---|---|
| Colon | GM | 77.16 | 77.15 | 77.29 | 77.28 | 77.46 | 77.40 | 77.35 | 77.55 |
| | SM | **77.23** | 77.18 | 77.16 | 77.36 | 77.42 | 77.42 | 77.39 | 77.54 |
| | VSM | 76.86 | 77.19 | 77.34 | 77.52 | 77.64 | 77.61 | 77.61 | 77.82 |
| | StM | 76.93 | **77.34** | **77.73** | **78.22** | **78.51** | **78.67** | **78.65** | **78.84** |
| ALML | GM | **65.11** | 66.22 | 66.96 | 67.21 | 67.23 | 67.24 | 67.28 | 67.37 |
| | SM | 65.09 | 66.16 | 66.93 | 67.25 | 67.22 | 67.31 | 67.31 | 67.36 |
| | VSM | 64.93 | 67.32 | 68.52 | 69.01 | 69.15 | 69.16 | 69.25 | 69.33 |
| | StM | 65.07 | **68.38** | **70.43** | **71.39** | **71.75** | **71.87** | **72.00** | **72.11** |
| Lung | GM | 98.74 | 98.80 | 98.91 | 98.96 | 98.95 | 98.96 | 98.95 | 98.97 |
| | SM | 98.71 | 98.80 | 98.92 | 98.97 | 98.96 | 98.98 | 98.97 | 98.97 |
| | VSM | **98.81** | 99.21 | 99.48 | 99.57 | 99.58 | 99.61 | 99.61 | 99.61 |
| | StM | 98.70 | **99.48** | **99.69** | **99.70** | **99.69** | **99.72** | **99.68** | **99.65** |

• Text document [24]¹
– TDT2: This (recently modified) version of the dataset includes 96 categories with a total of 10212 documents. Each document is represented by a vector of length 36771. This paper adopts the first 19 categories, each containing more than 100 documents, and tests each category with 100 randomly selected documents.
– 20Newsgroups (version 1): This dataset contains 18774 documents in 20 categories. Each document is represented by a vector of dimension 61188. Since the documents are not evenly distributed over the 20 categories, we randomly select 600 documents per category, which is nearly the maximum number that can be assigned to every category.
– RCV1: The original dataset contains 9625 documents, each with 29992 distinct words, in 4 categories with 2022, 2064, 2901 and 2638 documents, respectively. To reduce computation, this paper randomly selects only 1000 documents per category.

¹ Publicly available at http://www.cad.zju.edu.cn/home/dengcai/Data/TextData.html

TABLE IV: Classification accuracies (%) on three text datasets with dimension d = 3000. For each projection dimension k, the best performance is highlighted in bold. The lower bound of the projection dimension k that ensures the proposal outperforms the others on all datasets is highlighted in bold as well. Recall that the acronyms GM, SM, VSM and StM represent the Gaussian random matrix, the sparse random matrix with q = 3, the very sparse random matrix with q = √d, and the proposed sparsest random matrix, respectively.

| Dataset | Matrix | k = 150 | k = 300 | **k = 600** | k = 900 | k = 1200 | k = 1500 | k = 2000 |
|---|---|---|---|---|---|---|---|---|
| TDT2 | GM | **83.64** | 83.10 | 82.84 | 82.29 | 81.94 | 81.67 | 81.72 |
| | SM | 83.61 | 82.93 | 83.10 | 82.28 | 81.92 | 81.55 | 81.76 |
| | VSM | 82.59 | 82.55 | 82.72 | 82.20 | 81.74 | 81.47 | 81.78 |
| | StM | 82.52 | **83.15** | **84.06** | **83.58** | **83.42** | **82.95** | **83.35** |
| Newsgroup | GM | **75.35** | **74.46** | 72.27 | 71.52 | 71.34 | **70.63** | 69.95 |
| | SM | 75.21 | 74.43 | 72.29 | 71.30 | 71.07 | 70.34 | 69.58 |
| | VSM | 74.84 | 73.47 | 70.22 | 69.21 | 69.28 | 68.28 | 68.04 |
| | StM | 74.94 | 74.20 | **72.34** | **71.54** | **71.53** | 70.46 | **70.00** |
| RCV1 | GM | 85.85 | 86.20 | 81.65 | 78.98 | 78.22 | 78.21 | 78.21 |
| | SM | **86.05** | 86.19 | 81.53 | 79.08 | 78.23 | 78.14 | 78.19 |
| | VSM | 86.04 | 86.14 | 81.54 | 78.57 | 78.12 | 78.05 | 78.04 |
| | StM | 85.75 | **86.33** | **85.09** | **83.38** | **82.30** | **81.39** | **80.69** |

2) Results: Tables II–IV report the classification performance of the four classes of matrices on three typical types of high-dimensional data: face images, DNA microarrays and text documents. All results are consistent with the theoretical conjecture stated in Section IV. Specifically, the proposed matrix performs best once $k$ reaches a threshold: $k \ge 120$ (equivalently, compression ratio $k/d \ge 1/10$) for all face image data, $k \ge 100$ ($k/d \ge 1/20$) for all DNA data, and $k \ge 600$ ($k/d \ge 1/5$) for all text data, the single exception being Newsgroup at $k = 1500$, where GM is 0.17 percentage points higher. For some individual datasets the threshold is in fact smaller than these uniform values, so the performance advantage already holds at lower projection dimensions.

The performance gain varies across data types. For most data it is around 1 percentage point or less, but in a few cases, such as FERET and RCV1, it reaches around 5 percentage points.
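The four matrix families compared in Tables II–IV can be sketched as follows. This is a minimal NumPy sketch under stated assumptions, not the authors' code: the function names are hypothetical; SM and VSM use the common parametrization with entries $\sqrt{q}\,\{+1, 0, -1\}$ drawn with probabilities $\{1/(2q), 1 - 1/q, 1/(2q)\}$; the projection is taken as $y = Rx$ with $R$ of size $k \times d$; and the StM is assumed to place a random $\pm 1$ in a uniformly random row of each column, with any global scaling left out. Because each StM column has a single nonzero, $Rx$ can be computed with `np.bincount` in $O(d)$ operations instead of the $O(kd)$ of a dense product.

```python
import numpy as np

def gaussian_matrix(k, d, rng):
    """GM: k x d matrix with i.i.d. N(0, 1) entries."""
    return rng.standard_normal((k, d))

def sparse_sign_matrix(k, d, q, rng):
    """SM (q = 3) or VSM (q = sqrt(d)), assuming entries sqrt(q) * {+1, 0, -1}
    with probabilities {1/(2q), 1 - 1/q, 1/(2q)}."""
    u = rng.random((k, d))
    signs = np.where(u < 1.0 / (2 * q), 1.0, np.where(u < 1.0 / q, -1.0, 0.0))
    return np.sqrt(q) * signs

def sparsest_matrix(k, d, rng):
    """StM (assumed form): each of the d columns gets exactly one +/-1 entry in a
    uniformly random row. Stored compactly as one (row index, sign) pair per column."""
    rows = rng.integers(0, k, size=d)
    signs = rng.choice([-1.0, 1.0], size=d)
    return rows, signs

def sparsest_to_dense(rows, signs, k):
    """Materialize the StM as a dense k x d array (only needed for checking)."""
    R = np.zeros((k, rows.size))
    R[rows, np.arange(rows.size)] = signs
    return R

def project_sparsest(rows, signs, k, x):
    """y = R x for the StM in O(d): each signed coordinate is added to its row."""
    return np.bincount(rows, weights=signs * x, minlength=k)

# Usage: project one 1200-dimensional sample (the face-image setting) to k = 120.
rng = np.random.default_rng(0)
d, k = 1200, 120
x = rng.standard_normal(d)
y_gm = gaussian_matrix(k, d, rng) @ x
y_sm = sparse_sign_matrix(k, d, 3, rng) @ x
y_vsm = sparse_sign_matrix(k, d, np.sqrt(d), rng) @ x
rows, signs = sparsest_matrix(k, d, rng)
y_stm = project_sparsest(rows, signs, k, x)
```

Storing the StM as one (row, sign) pair per column also reduces memory from $kd$ entries to $d$ pairs.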
Moreover, even when $k$ is below these thresholds, the proposed matrix still performs comparably to the others (usually within 1 percentage point of the best result). Regardless of the value of $k$, the proposed matrix is therefore always worth considering, given its lower complexity and competitive performance. In short, the extensive experiments on real data verify both the performance advantage of the theoretically proposed random matrix and the conjecture that this advantage holds only when the projection dimension $k$ is large enough.

VI. CONCLUSION AND DISCUSSION

This paper has proved that random projection achieves its best feature selection performance when only one feature element of the high-dimensional data is considered in each sampling. In practice, however, the number of feature elements is usually unknown, so this best sampling process is hard to implement. Following the principle of achieving the best sampling process with high probability, we propose a class of sparse random matrices with exactly one nonzero element per column, which is expected to outperform denser random projection matrices if the projection dimension is not much smaller than the number of feature elements. Recall that, to make the theoretical analysis tractable, we assumed that the elements of high-dimensional data are mutually independent, which is clearly not well satisfied by real data, especially for redundant elements. Although the impact of redundant elements is reasonably avoided in our analysis, we cannot ensure that all analyzed feature elements are exactly independent in practice. This defect might limit the applicability of our theoretical proposal to some extent; empirically, however, its negative impact appears negligible, as indicated by the experiments on synthetic data.
To validate the feasibility of the theoretical proposal, extensive classification experiments were conducted on various real data, including face images, DNA microarrays and text documents. As expected, the proposed random matrix performs better than denser matrices when the projection dimension is sufficiently large, and comparably otherwise. This suggests that, for random projection applied to classification, the proposed random matrix, currently the sparsest available, is more attractive than denser random matrices in terms of both complexity and performance.

APPENDIX A. Proof of Lemma 3

Proof: Owing to the sparsity of $r$ and the symmetry of both $r_j$ and $z_j$, the function $f(r, z)$ can be equivalently transformed into the simpler form
$$f(x) = \mu \sqrt{\frac{d}{s}} \left| \sum_{i=1}^{s} x_i \right|,$$
where each $x_i$ equals $\pm 1$ with equal probability. With this simplified form, the three results of the lemma are proved in turn.

1) First, letting the summation index $i$ count the entries of $x$ equal to $-1$, it can easily be derived that
$$E(f(x)) = \mu \sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, |s - 2i|,$$
so computing $E(f(x))$ reduces to computing $\sum_{i=0}^{s} C_s^i |s - 2i|$, which equals
$$\sum_{i=0}^{s} C_s^i\, |s - 2i| = \begin{cases} 2s\, C_{s-1}^{s/2 - 1}, & s \text{ even},\\ 2s\, C_{s-1}^{(s-1)/2}, & s \text{ odd},\end{cases}$$
as obtained by summing the piecewise expression
$$C_s^i\, |s - 2i| = \begin{cases} s\, C_{s-1}^{0}, & i = 0,\\ s\, C_{s-1}^{s-i-1} - s\, C_{s-1}^{i-1}, & 1 \le i \le \frac{s}{2},\\ s\, C_{s-1}^{i-1} - s\, C_{s-1}^{s-i-1}, & \frac{s}{2} < i < s,\\ s\, C_{s-1}^{s-1}, & i = s.\end{cases}$$
Further, using $C_{s-1}^{i-1} = \frac{i}{s} C_s^i$, it can be deduced that
$$\sum_{i=0}^{s} C_s^i\, |s - 2i| = 2 \left\lceil \frac{s}{2} \right\rceil C_s^{\lceil s/2 \rceil}.$$
Hence the first result is
$$E(f) = 2\mu \sqrt{\frac{d}{s}}\, \frac{1}{2^s} \left\lceil \frac{s}{2} \right\rceil C_s^{\lceil s/2 \rceil}.$$

2) Following the proof above, it is clear that $E(f(x))|_{s=1} = f(x)|_{s=1} = \mu\sqrt{d}$.
As for $E(f(x))|_{s>1}$, it is evaluated in two cases:
– if $s$ is odd,
$$\frac{E(f(x))|_{s}}{E(f(x))|_{s-2}} = \frac{\frac{2}{\sqrt{s}}\, \frac{1}{2^s}\, \frac{s+1}{2}\, C_s^{(s+1)/2}}{\frac{2}{\sqrt{s-2}}\, \frac{1}{2^{s-2}}\, \frac{s-1}{2}\, C_{s-2}^{(s-1)/2}} = \frac{\sqrt{s(s-2)}}{s-1} < 1,$$
namely, $E(f(x))$ decreases monotonically over odd $s$. Clearly, in this case $E(f(x))|_{s=1} > E(f(x))|_{s>1}$;
– if $s$ is even,
$$\frac{E(f(x))|_{s}}{E(f(x))|_{s-1}} = \frac{\frac{2}{\sqrt{s}}\, \frac{1}{2^s}\, \frac{s}{2}\, C_s^{s/2}}{\frac{2}{\sqrt{s-1}}\, \frac{1}{2^{s-1}}\, \frac{s}{2}\, C_{s-1}^{s/2}} = \sqrt{\frac{s-1}{s}} < 1,$$
which means $E(f(x))|_{s=1} > E(f(x))|_{s>1}$, since $s-1$ is odd, and over odd values $E(f(x))$ decreases monotonically.
This completes the proof of the second result.

3) The third result is proved using Stirling's approximation [25]
$$s! = \sqrt{2\pi s}\left(\frac{s}{e}\right)^s e^{\lambda_s}, \qquad \frac{1}{12s+1} < \lambda_s < \frac{1}{12s}.$$
Specifically, from the formula for $E(f(x))$ it can be deduced that
– if $s$ is even,
$$E(f(x)) = \mu\sqrt{ds}\, \frac{1}{2^s}\, \frac{s!}{\left(\frac{s}{2}\right)!\left(\frac{s}{2}\right)!} = \mu\sqrt{\frac{2d}{\pi}}\, e^{\lambda_s - 2\lambda_{s/2}};$$
– if $s$ is odd,
$$E(f(x)) = \mu\sqrt{d}\, \frac{s+1}{\sqrt{s}}\, \frac{1}{2^s}\, \frac{s!}{\left(\frac{s+1}{2}\right)!\left(\frac{s-1}{2}\right)!} = \mu\sqrt{\frac{2d}{\pi}} \left(\frac{s^2}{s^2-1}\right)^{s/2} e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}}.$$
Clearly, $\lim_{s\to\infty} \frac{1}{\sqrt{d}} E(f(x)) = \mu\sqrt{2/\pi}$, whether $s$ is even or odd.

APPENDIX B. Proof of Lemma 4

Proof: Owing to the sparsity of $r$ and the symmetry of both $r_j$ and $z_j$, it is easy to derive that $f(r, z) = |\langle r, z\rangle| = \sqrt{\frac{d}{s}} \left|\sum_{j=1}^{s} z_j\right|$. This simplified formula is studied in the following proof. For readability, we first review the distribution given in formula (6):
$$z_j \sim \begin{cases} \mathcal{N}(\mu, \sigma^2) & \text{with probability } 1/2,\\ \mathcal{N}(-\mu, \sigma^2) & \text{with probability } 1/2,\end{cases}$$
where, for $x \sim \mathcal{N}(\mu, \sigma^2)$, $\Pr(x > 0) = 1 - \epsilon$ and $\epsilon = \Phi(-\mu/\sigma)$ is a tiny positive number. For notational simplicity, the subscript of the random variable $z_j$ is dropped in the following proof.
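The claims of Lemmas 3 and 4 lend themselves to a quick numerical sanity check. The following is a hedged sketch, not part of the proofs: it uses the simplified form $f = \sqrt{d/s}\,|\sum_{j=1}^{s} z_j|$ with $z_j$ drawn from formula (6) (setting $\sigma = 0$ recovers the $\pm\mu$ setting of Lemma 3), compares the closed form of Lemma 3 with the binomial sum it was derived from, checks the expression for $E(|x|)$ derived next against simulation, and evaluates the sufficient condition obtained at the end of this appendix. All function names are hypothetical, and the Monte Carlo sample size and seed are arbitrary choices.

```python
import math
import numpy as np

def phi(x):
    """Standard normal CDF, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def lemma3_closed_form(s, d, mu):
    """E(f) = 2 mu sqrt(d/s) 2^{-s} ceil(s/2) C(s, ceil(s/2))  (Lemma 3, first result)."""
    c = math.ceil(s / 2)
    ratio = c * math.comb(s, c) / 2**s  # exact big-integer ratio, one float division
    return 2.0 * mu * math.sqrt(d / s) * ratio

def lemma3_binomial_sum(s, d, mu):
    """E(f) = mu sqrt(d/s) 2^{-s} sum_i C(s, i) |s - 2i|, with i the number of -1 signs."""
    total = sum(math.comb(s, i) * abs(s - 2 * i) for i in range(s + 1))
    return mu * math.sqrt(d / s) * (total / 2**s)

def folded_normal_mean(mu, sigma):
    """E|x| for x ~ N(mu, sigma^2): sqrt(2/pi) sigma e^{-mu^2/(2 sigma^2)} + mu (1 - 2 Phi(-mu/sigma))."""
    return (math.sqrt(2.0 / math.pi) * sigma * math.exp(-mu**2 / (2 * sigma**2))
            + mu * (1.0 - 2.0 * phi(-mu / sigma)))

def lemma4_condition(mu, sigma):
    """Sufficient condition for E(f)|_{s>1} < E(f)|_{s=1} from the end of Appendix B."""
    lhs = (9 / 8) ** 1.5 * (math.sqrt(2 / math.pi)
                            + (1 + math.sqrt(3) / 4) * (2 / math.pi) * sigma / mu)
    return lhs + 2.0 * phi(-mu / sigma) <= 1.0

def mc_expected_f(s, d, mu, sigma, n=200_000, seed=0):
    """Monte Carlo estimate of E(f), f = sqrt(d/s) |z_1 + ... + z_s|, z_j from formula (6)."""
    rng = np.random.default_rng(seed)
    signs = rng.choice([-1.0, 1.0], size=(n, s))
    z = mu * signs + sigma * rng.standard_normal((n, s))
    return math.sqrt(d / s) * float(np.abs(z.sum(axis=1)).mean())
```

For example, with $\mu = 1$ and $\sigma = 0.02$ the sufficient condition holds and the simulated $E(f)$ is largest at $s = 1$. With $\sigma = 0.5$ the condition fails, which by itself does not mean the inequality fails, since the condition is only sufficient.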
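To ease the proof of the lemma, we first derive the expected value of $|x|$ with $x \sim \mathcal{N}(\mu, \sigma^2)$:
$$\begin{aligned} E(|x|) &= \int_{-\infty}^{\infty} \frac{|x|}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx \\ &= \int_{-\infty}^{0} \frac{-x}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx + \int_{0}^{\infty} \frac{x}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx \\ &= -\int_{-\infty}^{0} \frac{x-\mu}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx + \int_{0}^{\infty} \frac{x-\mu}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx \\ &\quad + \mu \int_{0}^{\infty} \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx - \mu \int_{-\infty}^{0} \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{(x-\mu)^2}{2\sigma^2}} dx \\ &= \frac{\sigma}{\sqrt{2\pi}} e^{-\frac{(x-\mu)^2}{2\sigma^2}} \Big|_{-\infty}^{0} - \frac{\sigma}{\sqrt{2\pi}} e^{-\frac{(x-\mu)^2}{2\sigma^2}} \Big|_{0}^{\infty} + \mu \Pr(x > 0) - \mu \Pr(x < 0) \\ &= \sqrt{\frac{2}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \mu\left(1 - 2\Pr(x < 0)\right) \\ &= \sqrt{\frac{2}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \mu\left(1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)\right), \end{aligned}$$
which will be used several times in the following proof. The proof of the lemma is then divided into two parts.

1) This part derives the expected value of $f(r, z)$ for the cases $s = 1$ and $s > 1$.
– if $s = 1$, $f(r, z) = \sqrt{d}\,|z|$. With the probability density function of $z$,
$$p(z) = \frac{1}{2}\, \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{(z-\mu)^2}{2\sigma^2}} + \frac{1}{2}\, \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{(z+\mu)^2}{2\sigma^2}},$$
one can derive that
$$E(|z|) = \int_{-\infty}^{\infty} |z|\, p(z)\, dz = \frac{1}{2}\int_{-\infty}^{\infty} \frac{|z|}{\sqrt{2\pi}\,\sigma} e^{-\frac{(z-\mu)^2}{2\sigma^2}} dz + \frac{1}{2}\int_{-\infty}^{\infty} \frac{|z|}{\sqrt{2\pi}\,\sigma} e^{-\frac{(z+\mu)^2}{2\sigma^2}} dz.$$
Applying the previous result for $E(|x|)$ to both components (which have the same $E(|\cdot|)$ by symmetry), it follows that
$$E(|z|) = \sqrt{\frac{2}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \mu\left(1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)\right).$$
Recall that $\Phi(-\mu/\sigma) = \epsilon$, so
$$E(f) = \sqrt{d}\, E(|z|) = \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \mu\sqrt{d}\left(1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)\right) \approx \mu\sqrt{d}$$
if $\epsilon$ is tiny enough, as assumed in formula (6).
– if $s > 1$, $f(r, z) = \sqrt{\frac{d}{s}} \left|\sum_{j=1}^{s} z_j\right|$. Let $t = \sum_{j=1}^{s} z_j$; by the symmetric distribution of $z$, $t$ follows one of $s + 1$ distributions:
$$t \sim \mathcal{N}\left((s - 2i)\mu,\; s\sigma^2\right) \text{ with probability } \frac{1}{2^s} C_s^i,$$
where $0 \le i \le s$ denotes the number of $z_j$ drawn from $\mathcal{N}(-\mu, \sigma^2)$.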
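Then the PDF of $t$ can be written as
$$p(t) = \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, \frac{1}{\sqrt{2\pi s}\,\sigma} e^{-\frac{(t - (s-2i)\mu)^2}{2s\sigma^2}},$$
and hence
$$\begin{aligned} E(|t|) &= \int_{-\infty}^{\infty} |t|\, p(t)\, dt = \frac{1}{2^s} \sum_{i=0}^{s} C_s^i \int_{-\infty}^{\infty} |t|\, \frac{1}{\sqrt{2\pi s}\,\sigma} e^{-\frac{(t-(s-2i)\mu)^2}{2s\sigma^2}} dt \\ &= \frac{1}{2^s} \sum_{i=0}^{s} C_s^i \left\{ \sqrt{\frac{2s}{\pi}}\, \sigma e^{-\frac{(s-2i)^2\mu^2}{2s\sigma^2}} + \mu\, |s-2i| \left[1 - 2\Phi\left(-\frac{|s-2i|\,\mu}{\sqrt{s}\,\sigma}\right)\right] \right\}. \end{aligned}$$
Subsequently, the expected value of $f(r, z)$ can be expressed as
$$E(f) = \mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, |s-2i| + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, e^{-\frac{(s-2i)^2\mu^2}{2s\sigma^2}} - 2\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, |s-2i|\, \Phi\left(-\frac{|s-2i|\,\mu}{\sqrt{s}\,\sigma}\right).$$

2) This part derives an upper bound on the above $E(f)|_{s>1}$. For simpler expression, the three terms of the expression for $E(f)|_{s>1}$ are denoted by $f_1$, $f_2$ and $f_3$, respectively, and analyzed in turn.
– for $f_1 = \mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, |s-2i|$, as in Appendix A it can be rewritten as
$$f_1 = 2\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s}\, C_s^{\lceil s/2 \rceil} \left\lceil \frac{s}{2} \right\rceil;$$
– for $f_2 = \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, e^{-\frac{(s-2i)^2\mu^2}{2s\sigma^2}}$, we first bound
$$e^{-\frac{(s-2i)^2\mu^2}{2s\sigma^2}} \begin{cases} < e^{-\frac{\mu^2}{2\sigma^2}}, & i < \alpha \text{ or } i > s - \alpha,\\ \le 1, & \alpha \le i \le s - \alpha, \end{cases}$$
where $\alpha = \left\lceil \frac{s - \sqrt{s}}{2} \right\rceil$; the first bound holds because $|s - 2i| > \sqrt{s}$ whenever $i < \alpha$ or $i > s - \alpha$.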
Substituting these bounds into $f_2$ gives
$$\begin{aligned} f_2 &< \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=0}^{\alpha-1} C_s^i\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=s-\alpha+1}^{s} C_s^i\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=\alpha}^{s-\alpha} C_s^i \\ &< \sigma\sqrt{\frac{2d}{\pi}}\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sum_{i=\alpha}^{s-\alpha} C_s^i. \end{aligned}$$
Since $C_s^i \le C_s^{\lceil s/2 \rceil}$ and the last sum has $s - 2\alpha + 1 \le \lfloor\sqrt{s}\rfloor + 1$ terms,
$$\begin{aligned} f_2 &< \sigma\sqrt{\frac{2d}{\pi}}\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \left(\lfloor\sqrt{s}\rfloor + 1\right) C_s^{\lceil s/2\rceil} \\ &\le \sigma\sqrt{\frac{2d}{\pi}}\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s} \sqrt{s}\, C_s^{\lceil s/2\rceil} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s}\, C_s^{\lceil s/2\rceil} \\ &\le \sigma\sqrt{\frac{2d}{\pi}}\, e^{-\frac{\mu^2}{2\sigma^2}} + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s}\, \frac{2}{\sqrt{s}}\, C_s^{\lceil s/2\rceil} \left\lceil\frac{s}{2}\right\rceil + \sigma\sqrt{\frac{2d}{\pi}}\, \frac{1}{2^s}\, C_s^{\lceil s/2\rceil}. \end{aligned}$$
With Stirling's approximation,
$$f_2 < \begin{cases} \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \frac{2\sqrt{d}}{\pi}\, \sigma e^{\lambda_s - 2\lambda_{s/2}} + \frac{2}{\pi}\sqrt{\frac{d}{s}}\, \sigma e^{\lambda_s - 2\lambda_{s/2}}, & s \text{ even},\\[2mm] \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \frac{2\sqrt{d}}{\pi}\, \sigma \left(\frac{s^2}{s^2-1}\right)^{s/2} e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}} + \frac{2\sqrt{d}}{\pi}\, \sigma\, \frac{\sqrt{s}}{s+1} \left(\frac{s^2}{s^2-1}\right)^{s/2} e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}}, & s \text{ odd}. \end{cases}$$
– for $f_3 = -2\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=0}^{s} C_s^i\, |s-2i|\, \Phi\left(-\frac{|s-2i|\,\mu}{\sqrt{s}\,\sigma}\right)$, with $\alpha$ as defined above, and since $|s-2i| \le \sqrt{s}$ for $\alpha \le i \le s-\alpha$,
$$\begin{aligned} f_3 &\le -2\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=\alpha}^{s-\alpha} C_s^i\, |s-2i|\, \Phi\left(-\frac{|s-2i|\,\mu}{\sqrt{s}\,\sigma}\right) \\ &\le -2\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=\alpha}^{s-\alpha} C_s^i\, |s-2i|\, \Phi\left(-\frac{\mu}{\sigma}\right) \\ &= -2\epsilon\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \sum_{i=\alpha}^{s-\alpha} C_s^i\, |s-2i| \\ &= -2\epsilon\mu\sqrt{\frac{d}{s}}\, \frac{1}{2^s} \left(2s\, C_{s-1}^{\lceil s/2\rceil - 1} - 2s\, C_{s-1}^{\alpha-1}\right) \\ &= -4\epsilon\mu\sqrt{ds}\, \frac{1}{2^s} \left(C_{s-1}^{\lceil s/2\rceil - 1} - C_{s-1}^{\alpha-1}\right) \le 0. \end{aligned}$$
Finally, writing $f_1$ in its Stirling form from Appendix A, we deduce that $E(f)|_{s>1} = f_1 + f_2 + f_3$ satisfies
$$E(f)|_{s>1} < \begin{cases} \left(\sqrt{\frac{2d}{\pi}}\mu + \frac{2\sigma}{\pi}\sqrt{d}\right) e^{\lambda_s - 2\lambda_{s/2}} + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \frac{2}{\pi}\sqrt{\frac{d}{s}}\, \sigma e^{\lambda_s - 2\lambda_{s/2}} - 4\epsilon\mu\sqrt{ds}\, \frac{1}{2^s}\left(C_{s-1}^{\lceil s/2\rceil-1} - C_{s-1}^{\alpha-1}\right), & s \text{ even},\\[2mm] \left(\sqrt{\frac{2d}{\pi}}\mu + \frac{2\sigma}{\pi}\sqrt{d}\right)\left(\frac{s^2}{s^2-1}\right)^{s/2} e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}} + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} \\ \quad + \frac{2\sqrt{d}}{\pi}\, \sigma\, \frac{\sqrt{s}}{s+1}\left(\frac{s^2}{s^2-1}\right)^{s/2} e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}} - 4\epsilon\mu\sqrt{ds}\, \frac{1}{2^s}\left(C_{s-1}^{\lceil s/2\rceil-1} - C_{s-1}^{\alpha-1}\right), & s \text{ odd}. \end{cases}$$

3) This part discusses the condition for
$$E(f)|_{s>1} < E(f)|_{s=1} = \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} + \mu\sqrt{d}\left(1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)\right)$$
by further relaxing the upper bound on $E(f)|_{s>1}$.
– if $s$ is even, since $f_3 \le 0$ and $e^{\lambda_s - 2\lambda_{s/2}} \le 1$,
$$\begin{aligned} E(f)|_{s>1} &< \left(\sqrt{\frac{2d}{\pi}}\mu + \frac{2\sigma}{\pi}\sqrt{d}\right) e^{\lambda_s - 2\lambda_{s/2}} + \frac{2}{\pi}\sqrt{\frac{d}{s}}\, \sigma e^{\lambda_s - 2\lambda_{s/2}} + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} \\ &\le \sqrt{\frac{2d}{\pi}}\mu + \frac{2\sigma}{\pi}\sqrt{d} + \frac{2}{\pi}\sqrt{\frac{d}{s}}\, \sigma + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}} \\ &= \mu\sqrt{d}\left(\sqrt{\frac{2}{\pi}} + \left(1 + \frac{1}{\sqrt{s}}\right)\frac{2\sigma}{\pi\mu}\right) + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}}. \end{aligned}$$
Since $s \ge 2$, clearly $E(f)|_{s>1} < E(f)|_{s=1}$ if $\sqrt{\frac{2}{\pi}} + \left(1 + \frac{1}{\sqrt{2}}\right)\frac{2\sigma}{\pi\mu} \le 1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)$. This condition is well satisfied when $\mu \gg \sigma$, since $\Phi(-\mu/\sigma)$ decreases monotonically with increasing $\mu/\sigma$.
– if $s$ is odd, since $f_3 \le 0$ and $e^{\lambda_s - \lambda_{(s+1)/2} - \lambda_{(s-1)/2}} \le 1$,
$$E(f)|_{s>1} < \left(\sqrt{\frac{2d}{\pi}}\mu + \frac{2\sigma}{\pi}\sqrt{d}\right)\left(\frac{s^2}{s^2-1}\right)^{s/2} + \frac{2\sqrt{d}}{\pi}\, \sigma\, \frac{\sqrt{s}}{s+1}\left(\frac{s^2}{s^2-1}\right)^{s/2} + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}}.$$
It can be proved that $\left(\frac{s^2}{s^2-1}\right)^{s/2}$ decreases monotonically with respect to $s$, and so does $\frac{\sqrt{s}}{s+1}$ for $s \ge 1$. Since $s \ge 3$, this yields
$$E(f)|_{s>1} < \left(\sqrt{\frac{2d}{\pi}}\mu + \left(1 + \frac{\sqrt{3}}{4}\right)\frac{2\sigma}{\pi}\sqrt{d}\right)\left(\frac{3^2}{3^2-1}\right)^{3/2} + \sqrt{\frac{2d}{\pi}}\, \sigma e^{-\frac{\mu^2}{2\sigma^2}},$$
so in this case $E(f)|_{s>1} < E(f)|_{s=1}$ if $\left(\frac{9}{8}\right)^{3/2}\left(\sqrt{\frac{2}{\pi}} + \left(1 + \frac{\sqrt{3}}{4}\right)\frac{2\sigma}{\pi\mu}\right) \le 1 - 2\Phi\left(-\frac{\mu}{\sigma}\right)$.

Combining the two cases for $s$ (the odd-case condition also implies the even-case one), finally $E(f)|_{s>1} < E(f)|_{s=1}$ if
$$\left(\frac{9}{8}\right)^{3/2}\left[\sqrt{\frac{2}{\pi}} + \left(1 + \frac{\sqrt{3}}{4}\right)\frac{2}{\pi}\left(\frac{\mu}{\sigma}\right)^{-1}\right] + 2\Phi\left(-\frac{\mu}{\sigma}\right) \le 1.$$

APPENDIX C. Proof of Lemma 5

First, one can rewrite $f(r, z) = \left|\sum_{j=1}^{d} r_j z_j\right| = \mu|x|$, where $x \sim \mathcal{N}(0, d)$, since the $r_j \sim \mathcal{N}(0, 1)$ are i.i.d. and $z_j \in \{\pm\mu\}$ with equal probability. Then one can prove that
$$E(|x|) = \int_{-\infty}^{0} \frac{-x}{\sqrt{2\pi d}} e^{-\frac{x^2}{2d}} dx + \int_{0}^{\infty} \frac{x}{\sqrt{2\pi d}} e^{-\frac{x^2}{2d}} dx = 2\int_{0}^{\infty} \frac{\sqrt{d}}{\sqrt{2\pi}} e^{-\frac{x^2}{2d}}\, d\!\left(\frac{x^2}{2d}\right) = 2\sqrt{d}\int_{0}^{\infty} \frac{1}{\sqrt{2\pi}} e^{-u}\, du = \sqrt{\frac{2d}{\pi}}.$$
Finally, it is derived that $E(f) = \mu E(|x|) = \mu\sqrt{\frac{2d}{\pi}}$.

REFERENCES

[1] N. Goel, G. Bebis, and A. Nefian, "Face recognition experiments with random projection," in Proceedings of SPIE, Bellingham, WA, pp. 426–437, 2005.

[2] W. B. Johnson and J. Lindenstrauss, "Extensions of Lipschitz mappings into a Hilbert space," Contemp. Math., vol. 26, pp. 189–206, 1984.

[3] R. J. Durrant and A. Kaban, "Random projections as regularizers: Learning a linear discriminant ensemble from fewer observations than data dimensions," in Proceedings of the 5th Asian Conference on Machine Learning (ACML 2013), JMLR W&CP, vol. 29, pp. 17–32, 2013.

[4] R. Calderbank, S. Jafarpour, and R. Schapire, "Compressed learning: Universal sparse dimensionality reduction and learning in the measurement domain," Technical Report, 2009.

[5] R. Baraniuk, M. Davenport, R. DeVore, and M. Wakin, "A simple proof of the restricted isometry property for random matrices," Constructive Approximation, vol. 28, no. 3, pp. 253–263, 2008.

[6] P. Indyk and R. Motwani, "Approximate nearest neighbors: Towards removing the curse of dimensionality," in Proceedings of the 30th Annual ACM Symposium on Theory of Computing, pp. 604–613, 1998.

[7] D. Achlioptas, "Database-friendly random projections: Johnson–Lindenstrauss with binary coins," J. Comput. Syst. Sci., vol. 66, no. 4, pp. 671–687, 2003.

[8] P. Li, T. J. Hastie, and K. W. Church, "Very sparse random projections," in Proceedings of the 12th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2006.

[9] A. Dasgupta, R. Kumar, and T. Sarlos, "A sparse Johnson–Lindenstrauss transform," in Proceedings of the 42nd ACM Symposium on Theory of Computing, 2010.

[10] S. Dasgupta and A. Gupta, "An elementary proof of the Johnson–Lindenstrauss lemma," Technical Report 99–006, UC Berkeley, 1999.

[11] J. Matoušek, "On variants of the Johnson–Lindenstrauss lemma," Random Struct. Algorithms, vol. 33, no. 2, pp. 142–156, 2008.

[12] R. Arriaga and S. Vempala, "An algorithmic theory of learning: Robust concepts and random projection," Machine Learning, vol. 63, no. 2, pp. 161–182, 2006.

[13] X. Z. Fern and C. E. Brodley, "Random projection for high dimensional data clustering: A cluster ensemble approach," in Proceedings of the 20th International Conference on Machine Learning, 2003.

[14] A. Martinez and R. Benavente, "The AR face database," Technical Report 24, CVC, 1998.

[15] A. Martinez, "PCA versus LDA," IEEE Trans. PAMI, vol. 23, no. 2, pp. 228–233, 2001.

[16] A. Georghiades, P. Belhumeur, and D. Kriegman, "From few to many: Illumination cone models for face recognition under variable lighting and pose," IEEE Trans. PAMI, vol. 23, no. 6, pp. 643–660, 2001.

[17] K. Lee, J. Ho, and D. Kriegman, "Acquiring linear subspaces for face recognition under variable lighting," IEEE Trans. PAMI, vol. 27, no. 5, pp. 684–698, 2005.

[18] P. J. Phillips, H. Wechsler, and P. Rauss, "The FERET database and evaluation procedure for face-recognition algorithms," Image and Vision Computing, vol. 16, no. 5, pp. 295–306, 1998.

[19] A. V. Nefian and M. H. Hayes, "Maximum likelihood training of the embedded HMM for face detection and recognition," in IEEE International Conference on Image Processing, 2000.

[20] F. Samaria and A. Harter, "Parameterisation of a stochastic model for human face identification," in 2nd IEEE Workshop on Applications of Computer Vision, Sarasota, FL, 1994.

[21] U. Alon, N. Barkai, D. A. Notterman, K. Gish, S. Ybarra, D. Mack, and A. J. Levine, "Broad patterns of gene expression revealed by clustering analysis of tumor and normal colon tissues probed by oligonucleotide arrays," Proceedings of the National Academy of Sciences, vol. 96, no. 12, pp. 6745–6750, 1999.

[22] T. R. Golub, D. K. Slonim, P. Tamayo, C. Huard, M. Gaasenbeek, J. P. Mesirov, H. Coller, M. L. Loh, J. R. Downing, M. A. Caligiuri, C. D. Bloomfield, and E. S. Lander, "Molecular classification of cancer: Class discovery and class prediction by gene expression monitoring," Science, vol. 286, no. 5439, pp. 531–537, 1999.

[23] D. G. Beer, S. L. Kardia, C.-C. C. Huang, T. J. Giordano, A. M. Levin, D. E. Misek, L. Lin, G. Chen, T. G. Gharib, D. G. Thomas, M. L. Lizyness, R. Kuick, S. Hayasaka, J. M. Taylor, M. D. Iannettoni, M. B. Orringer, and S. Hanash, "Gene-expression profiles predict survival of patients with lung adenocarcinoma," Nature Medicine, vol. 8, no. 8, pp. 816–824, Aug. 2002.

[24] D. Cai, X. Wang, and X. He, "Probabilistic dyadic data analysis with local and global consistency," in Proceedings of the 26th Annual International Conference on Machine Learning (ICML '09), 2009, pp. 105–112.

[25] N. G. de Bruijn, Asymptotic Methods in Analysis. Dover, March 1981.
