Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation

In this paper, we propose a novel neural network model called RNN Encoder-Decoder that consists of two recurrent neural networks (RNN). One RNN encodes a sequence of symbols into a fixed-length vector representation, and the other decodes the represe…

Authors: Kyunghyun Cho, Bart van Merrienboer, Caglar Gulcehre

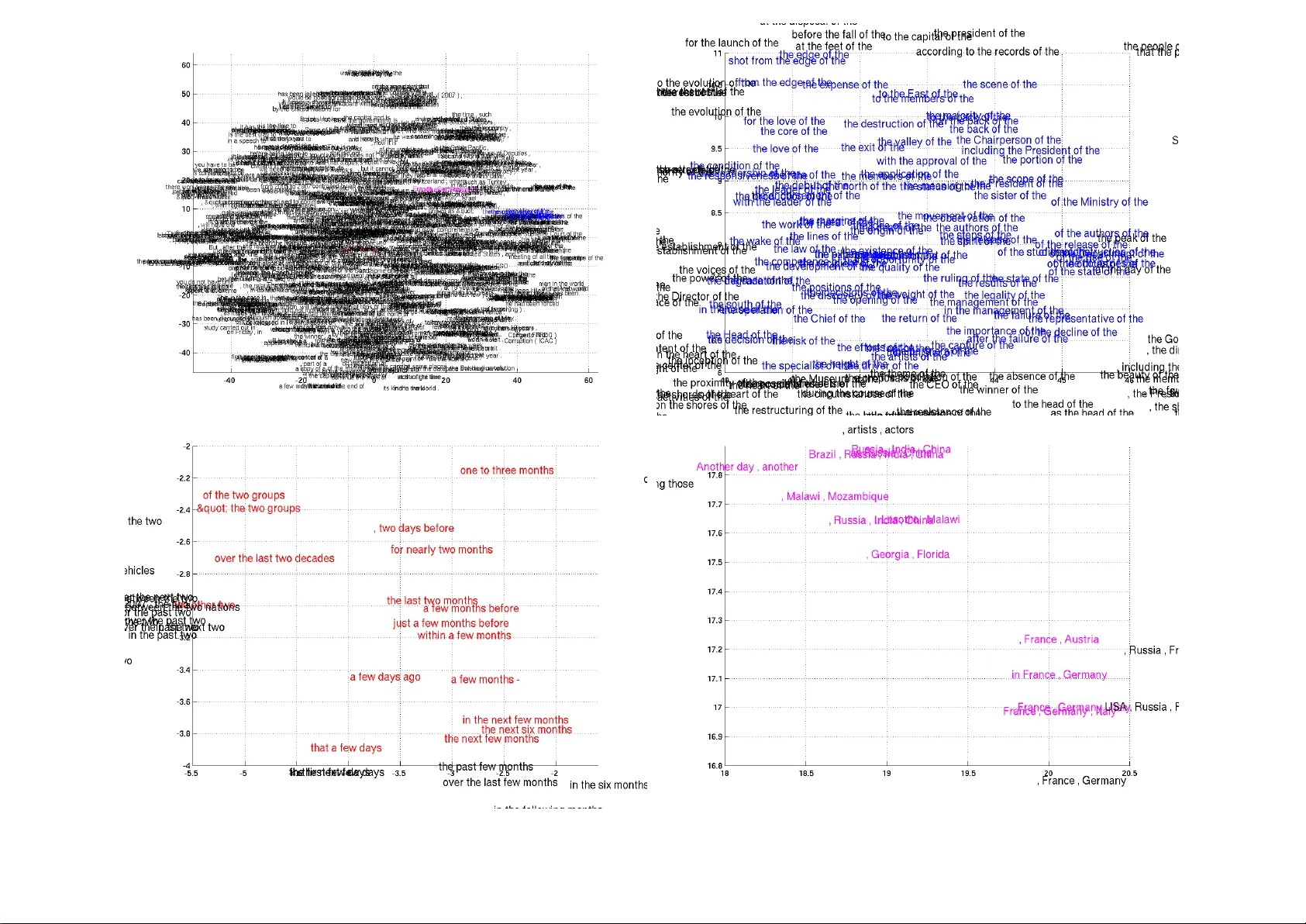

Kyunghyun Cho, Bart van Merriënboer, Caglar Gulcehre (Université de Montréal, firstname.lastname@umontreal.ca)

Dzmitry Bahdanau (Jacobs University, Germany, d.bahdanau@jacobs-university.de)

Fethi Bougares, Holger Schwenk (Université du Maine, France, firstname.lastname@lium.univ-lemans.fr)

Yoshua Bengio (Université de Montréal, CIFAR Senior Fellow, find.me@on.the.web)

Abstract

In this paper, we propose a novel neural network model called RNN Encoder–Decoder that consists of two recurrent neural networks (RNN). One RNN encodes a sequence of symbols into a fixed-length vector representation, and the other decodes the representation into another sequence of symbols. The encoder and decoder of the proposed model are jointly trained to maximize the conditional probability of a target sequence given a source sequence. The performance of a statistical machine translation system is empirically found to improve by using the conditional probabilities of phrase pairs computed by the RNN Encoder–Decoder as an additional feature in the existing log-linear model. Qualitatively, we show that the proposed model learns a semantically and syntactically meaningful representation of linguistic phrases.

1 Introduction

Deep neural networks have shown great success in various applications such as object recognition (see, e.g., (Krizhevsky et al., 2012)) and speech recognition (see, e.g., (Dahl et al., 2012)). Furthermore, many recent works showed that neural networks can be successfully used in a number of tasks in natural language processing (NLP). These include, but are not limited to, language modeling (Bengio et al., 2003), paraphrase detection (Socher et al., 2011) and word embedding extraction (Mikolov et al., 2013).
In the field of statistical machine translation (SMT), deep neural networks have begun to show promising results. (Schwenk, 2012) summarizes a successful usage of feedforward neural networks in the framework of a phrase-based SMT system.

Along this line of research on using neural networks for SMT, this paper focuses on a novel neural network architecture that can be used as a part of the conventional phrase-based SMT system. The proposed neural network architecture, which we will refer to as an RNN Encoder–Decoder, consists of two recurrent neural networks (RNN) that act as an encoder and a decoder pair. The encoder maps a variable-length source sequence to a fixed-length vector, and the decoder maps the vector representation back to a variable-length target sequence. The two networks are trained jointly to maximize the conditional probability of the target sequence given a source sequence. Additionally, we propose to use a rather sophisticated hidden unit in order to improve both the memory capacity and the ease of training.

The proposed RNN Encoder–Decoder with a novel hidden unit is empirically evaluated on the task of translating from English to French. We train the model to learn the translation probability of an English phrase to a corresponding French phrase. The model is then used as a part of a standard phrase-based SMT system by scoring each phrase pair in the phrase table. The empirical evaluation reveals that this approach of scoring phrase pairs with an RNN Encoder–Decoder improves the translation performance.

We qualitatively analyze the trained RNN Encoder–Decoder by comparing its phrase scores with those given by the existing translation model. The qualitative analysis shows that the RNN Encoder–Decoder is better at capturing the linguistic regularities in the phrase table, indirectly explaining the quantitative improvements in the overall translation performance.
Further analysis of the model reveals that the RNN Encoder–Decoder learns a continuous space representation of a phrase that preserves both the semantic and syntactic structure of the phrase.

2 RNN Encoder–Decoder

2.1 Preliminary: Recurrent Neural Networks

A recurrent neural network (RNN) is a neural network that consists of a hidden state $\mathbf{h}$ and an optional output $\mathbf{y}$ which operates on a variable-length sequence $\mathbf{x} = (x_1, \ldots, x_T)$. At each time step $t$, the hidden state $\mathbf{h}_{\langle t \rangle}$ of the RNN is updated by

$$\mathbf{h}_{\langle t \rangle} = f\left(\mathbf{h}_{\langle t-1 \rangle}, x_t\right), \tag{1}$$

where $f$ is a non-linear activation function. $f$ may be as simple as an element-wise logistic sigmoid function or as complex as a long short-term memory (LSTM) unit (Hochreiter and Schmidhuber, 1997).

An RNN can learn a probability distribution over a sequence by being trained to predict the next symbol in a sequence. In that case, the output at each time step $t$ is the conditional distribution $p(x_t \mid x_{t-1}, \ldots, x_1)$. For example, a multinomial distribution (1-of-$K$ coding) can be output using a softmax activation function

$$p(x_{t,j} = 1 \mid x_{t-1}, \ldots, x_1) = \frac{\exp\left(\mathbf{w}_j \mathbf{h}_{\langle t \rangle}\right)}{\sum_{j'=1}^{K} \exp\left(\mathbf{w}_{j'} \mathbf{h}_{\langle t \rangle}\right)}, \tag{2}$$

for all possible symbols $j = 1, \ldots, K$, where $\mathbf{w}_j$ are the rows of a weight matrix $\mathbf{W}$. By combining these probabilities, we can compute the probability of the sequence $\mathbf{x}$ using

$$p(\mathbf{x}) = \prod_{t=1}^{T} p(x_t \mid x_{t-1}, \ldots, x_1). \tag{3}$$

From this learned distribution, it is straightforward to sample a new sequence by iteratively sampling a symbol at each time step.

2.2 RNN Encoder–Decoder

In this paper, we propose a novel neural network architecture that learns to encode a variable-length sequence into a fixed-length vector representation and to decode a given fixed-length vector representation back into a variable-length sequence.
From a probabilistic perspective, this new model is a general method to learn the conditional distribution over a variable-length sequence conditioned on yet another variable-length sequence, e.g. $p(y_1, \ldots, y_{T'} \mid x_1, \ldots, x_T)$, where one should note that the input and output sequence lengths $T$ and $T'$ may differ.

Figure 1: An illustration of the proposed RNN Encoder–Decoder (encoder inputs $x_1, x_2, \ldots, x_T$; summary $\mathbf{c}$; decoder outputs $y_1, y_2, \ldots, y_{T'}$).

The encoder is an RNN that reads each symbol of an input sequence $\mathbf{x}$ sequentially. As it reads each symbol, the hidden state of the RNN changes according to Eq. (1). After reading the end of the sequence (marked by an end-of-sequence symbol), the hidden state of the RNN is a summary $\mathbf{c}$ of the whole input sequence.

The decoder of the proposed model is another RNN which is trained to generate the output sequence by predicting the next symbol $y_t$ given the hidden state $\mathbf{h}_{\langle t \rangle}$. However, unlike the RNN described in Sec. 2.1, both $y_t$ and $\mathbf{h}_{\langle t \rangle}$ are also conditioned on $y_{t-1}$ and on the summary $\mathbf{c}$ of the input sequence. Hence, the hidden state of the decoder at time $t$ is computed by

$$\mathbf{h}_{\langle t \rangle} = f\left(\mathbf{h}_{\langle t-1 \rangle}, y_{t-1}, \mathbf{c}\right),$$

and similarly, the conditional distribution of the next symbol is

$$P(y_t \mid y_{t-1}, y_{t-2}, \ldots, y_1, \mathbf{c}) = g\left(\mathbf{h}_{\langle t \rangle}, y_{t-1}, \mathbf{c}\right),$$

for given activation functions $f$ and $g$ (the latter must produce valid probabilities, e.g. with a softmax).

See Fig. 1 for a graphical depiction of the proposed model architecture.

The two components of the proposed RNN Encoder–Decoder are jointly trained to maximize the conditional log-likelihood

$$\max_{\theta} \frac{1}{N} \sum_{n=1}^{N} \log p_{\theta}(\mathbf{y}_n \mid \mathbf{x}_n), \tag{4}$$

where $\theta$ is the set of the model parameters and each $(\mathbf{x}_n, \mathbf{y}_n)$ is an (input sequence, output sequence) pair from the training set. In our case, as the output of the decoder, starting from the input, is differentiable, we can use a gradient-based algorithm to estimate the model parameters.
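To make the encoder and decoder recurrences concrete, the following is a minimal sketch of how one pair $(\mathbf{x}, \mathbf{y})$ could be scored, i.e. how a single term $\log p_\theta(\mathbf{y} \mid \mathbf{x})$ of Eq. (4) could be computed. It is not the authors' implementation. The class name `ToyEncoderDecoder`, the parameter names, the plain tanh recurrence used for $f$, the linear-plus-softmax choice for $g$, the all-zero initial decoder state, and the all-zero vector standing in for $y_0$ are assumptions made only for illustration. Symbols are integer ids, and any end-of-sequence symbol is assumed to be included by the caller.

```python
import math
import random


def matvec(M, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def add(*vectors):
    """Element-wise sum of equally sized vectors."""
    return [sum(parts) for parts in zip(*vectors)]


def softmax(a):
    """Softmax as in Eq. (2), shifted by max(a) for numerical stability."""
    m = max(a)
    e = [math.exp(x - m) for x in a]
    total = sum(e)
    return [x / total for x in e]


def one_hot(k, size):
    """1-of-K coding of symbol id k."""
    return [1.0 if i == k else 0.0 for i in range(size)]


def rand_matrix(rows, cols, rng, scale=0.1):
    """Small random Gaussian matrix (illustrative initialization only)."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


class ToyEncoderDecoder:
    """Hypothetical toy model: tanh RNN encoder and decoder, softmax output."""

    def __init__(self, k_src, k_tgt, hidden, seed=0):
        rng = random.Random(seed)
        self.k_src, self.k_tgt, self.hidden = k_src, k_tgt, hidden
        # Encoder parameters: h_t = tanh(We x_t + Ue h_{t-1}), an instance of Eq. (1).
        self.We = rand_matrix(hidden, k_src, rng)
        self.Ue = rand_matrix(hidden, hidden, rng)
        # Decoder parameters: h_t = tanh(Wd y_{t-1} + Ud h_{t-1} + Cd c).
        self.Wd = rand_matrix(hidden, k_tgt, rng)
        self.Ud = rand_matrix(hidden, hidden, rng)
        self.Cd = rand_matrix(hidden, hidden, rng)
        # Output parameters: g = softmax(Oh h_t + Oy y_{t-1} + Oc c).
        self.Oh = rand_matrix(k_tgt, hidden, rng)
        self.Oy = rand_matrix(k_tgt, k_tgt, rng)
        self.Oc = rand_matrix(k_tgt, hidden, rng)

    def encode(self, xs):
        """Read the source symbols in order and return the summary c."""
        h = [0.0] * self.hidden
        for x in xs:
            pre = add(matvec(self.We, one_hot(x, self.k_src)), matvec(self.Ue, h))
            h = [math.tanh(a) for a in pre]
        return h

    def log_prob(self, xs, ys):
        """Sum of log P(y_t | y_<t, c) over the target sequence."""
        c = self.encode(xs)
        h = [0.0] * self.hidden       # assumed initial decoder state
        y_prev = [0.0] * self.k_tgt   # assumed stand-in for y_0
        total = 0.0
        for y in ys:
            pre = add(matvec(self.Wd, y_prev), matvec(self.Ud, h), matvec(self.Cd, c))
            h = [math.tanh(a) for a in pre]
            logits = add(matvec(self.Oh, h), matvec(self.Oy, y_prev), matvec(self.Oc, c))
            total += math.log(softmax(logits)[y])
            y_prev = one_hot(y, self.k_tgt)
        return total


model = ToyEncoderDecoder(k_src=5, k_tgt=4, hidden=3)
print(model.log_prob(xs=[0, 2, 1], ys=[3, 1]))
```

Training would maximize the average of such scores over the training pairs, as in Eq. (4), with a gradient-based optimizer. The sketch covers only the forward computation and leaves the gradients out.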
Once the RNN Encoder–Decoder is trained, the model can be used in two ways. One way is to use the model to generate a target sequence given an input sequence. On the other hand, the model can be used to score a given pair of input and output sequences, where the score is simply a probability $p_{\theta}(\mathbf{y} \mid \mathbf{x})$ from Eqs. (3) and (4).

2.3 Hidden Unit that Adaptively Remembers and Forgets

In addition to a novel model architecture, we also propose a new type of hidden unit ($f$ in Eq. (1)) that has been motivated by the LSTM unit but is much simpler to compute and implement.¹ Fig. 2 shows the graphical depiction of the proposed hidden unit.

Footnote 1: The LSTM unit, which has shown impressive results in several applications such as speech recognition, has a memory cell and four gating units that adaptively control the information flow inside the unit, compared to only two gating units in the proposed hidden unit. For details on LSTM networks, see, e.g., (Graves, 2012).

Let us describe how the activation of the $j$-th hidden unit is computed. First, the reset gate $r_j$ is computed by

$$r_j = \sigma\left(\left[\mathbf{W}_r \mathbf{x}\right]_j + \left[\mathbf{U}_r \mathbf{h}_{\langle t-1 \rangle}\right]_j\right), \tag{5}$$

where $\sigma$ is the logistic sigmoid function, and $[\cdot]_j$ denotes the $j$-th element of a vector. $\mathbf{x}$ and $\mathbf{h}_{\langle t-1 \rangle}$ are the input and the previous hidden state, respectively. $\mathbf{W}_r$ and $\mathbf{U}_r$ are weight matrices which are learned.

Similarly, the update gate $z_j$ is computed by

$$z_j = \sigma\left(\left[\mathbf{W}_z \mathbf{x}\right]_j + \left[\mathbf{U}_z \mathbf{h}_{\langle t-1 \rangle}\right]_j\right). \tag{6}$$

The actual activation of the proposed unit $h_j$ is then computed by

$$h_j^{\langle t \rangle} = z_j h_j^{\langle t-1 \rangle} + (1 - z_j)\, \tilde{h}_j^{\langle t \rangle}, \tag{7}$$

where

$$\tilde{h}_j^{\langle t \rangle} = \phi\left(\left[\mathbf{W} \mathbf{x}\right]_j + \left[\mathbf{U}\left(\mathbf{r} \odot \mathbf{h}_{\langle t-1 \rangle}\right)\right]_j\right). \tag{8}$$

Figure 2: An illustration of the proposed hidden activation function. The update gate $z$ selects whether the hidden state is to be updated with a new hidden state $\tilde{h}$. The reset gate $r$ decides whether the previous hidden state is ignored. See Eqs. (5)–(8) for the detailed equations of $r$, $z$, $h$ and $\tilde{h}$.

In this formulation, when the reset gate is close to 0, the hidden state is forced to ignore the previous hidden state and reset with the current input only. This effectively allows the hidden state to drop any information that is found to be irrelevant later in the future, thus allowing a more compact representation.

On the other hand, the update gate controls how much information from the previous hidden state will carry over to the current hidden state. This acts similarly to the memory cell in the LSTM network and helps the RNN to remember long-term information. Furthermore, this may be considered an adaptive variant of a leaky-integration unit (Bengio et al., 2013).

As each hidden unit has separate reset and update gates, each hidden unit will learn to capture dependencies over different time scales. Those units that learn to capture short-term dependencies will tend to have reset gates that are frequently active, but those that capture longer-term dependencies will have update gates that are mostly active.

In our preliminary experiments, we found that it is crucial to use this new unit with gating units. We were not able to get meaningful results with an oft-used tanh unit without any gating.

3 Statistical Machine Translation

In a commonly used statistical machine translation system (SMT), the goal of the system (decoder, specifically) is to find a translation $\mathbf{f}$ given a source sentence $\mathbf{e}$, which maximizes

$$p(\mathbf{f} \mid \mathbf{e}) \propto p(\mathbf{e} \mid \mathbf{f})\, p(\mathbf{f}),$$

where the first term on the right-hand side is called the translation model and the latter the language model (see, e.g., (Koehn, 2005)). In practice, however, most SMT systems model $\log p(\mathbf{f} \mid \mathbf{e})$ as a log-linear model with additional features and corresponding weights:

$$\log p(\mathbf{f} \mid \mathbf{e}) = \sum_{n=1}^{N} w_n f_n(\mathbf{f}, \mathbf{e}) + \log Z(\mathbf{e}), \tag{9}$$

where $f_n$ and $w_n$ are the $n$-th feature and weight, respectively. $Z(\mathbf{e})$ is a normalization constant that does not depend on the weights.
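As a small illustration of Eq. (9), the sketch below ranks candidate translations by their weighted feature sum. Because $\log Z(\mathbf{e})$ is shared by all candidates for the same source sentence, it can be left out when comparing them. The feature names (`tm`, `lm`, `rnn`), the weights, and the feature values are made-up placeholders chosen only so the example runs. They are not taken from the paper or from any SMT toolkit.

```python
def loglinear_score(features, weights):
    """Weighted feature sum from Eq. (9), leaving out the log Z(e) term."""
    return sum(weights[name] * value for name, value in features.items())


def best_candidate(candidates, weights):
    """Return the candidate with the highest log-linear score."""
    return max(candidates, key=lambda c: loglinear_score(c["features"], weights))


# Hypothetical weights and log-domain feature values, for illustration only.
weights = {"tm": 1.0, "lm": 0.5, "rnn": 0.8}
candidates = [
    {"target": "candidate A", "features": {"tm": -2.0, "lm": -4.0, "rnn": -1.0}},
    {"target": "candidate B", "features": {"tm": -1.5, "lm": -6.0, "rnn": -3.0}},
]

best = best_candidate(candidates, weights)
print(best["target"])
```

Adding a new model to such a system amounts to adding one more entry to the feature dictionary and tuning its weight along with the others.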
The weights are often optimized to maximize the BLEU score on a development set.

In the phrase-based SMT framework introduced in (Koehn et al., 2003) and (Marcu and Wong, 2002), the translation model $\log p(\mathbf{e} \mid \mathbf{f})$ is factorized into the translation probabilities of matching phrases in the source and target sentences.² These probabilities are once again considered additional features in the log-linear model (see Eq. (9)) and are weighted accordingly to maximize the BLEU score.

Since the neural net language model was proposed in (Bengio et al., 2003), neural networks have been used widely in SMT systems. In many cases, neural networks have been used to rescore translation hypotheses ($n$-best lists) (see, e.g., (Schwenk et al., 2006)). Recently, however, there has been interest in training neural networks to score the translated sentence (or phrase pairs) using a representation of the source sentence as an additional input. See, e.g., (Schwenk, 2012), (Son et al., 2012) and (Zou et al., 2013).

3.1 Scoring Phrase Pairs with RNN Encoder–Decoder

Here we propose to train the RNN Encoder–Decoder (see Sec. 2.2) on a table of phrase pairs and use its scores as additional features in the log-linear model in Eq. (9) when tuning the SMT decoder.

When we train the RNN Encoder–Decoder, we ignore the (normalized) frequencies of each phrase pair in the original corpora. This measure was taken in order (1) to reduce the computational expense of randomly selecting phrase pairs from a large phrase table according to the normalized frequencies and (2) to ensure that the RNN Encoder–Decoder does not simply learn to rank the phrase pairs according to their numbers of occurrences.
One underlying reason for this choice was that the existing translation probability in the phrase table already reflects the frequencies of the phrase pairs in the original corpus. With a fixed capacity of the RNN Encoder–Decoder, we try to ensure that most of the capacity of the model is focused toward learning linguistic regularities, i.e., distinguishing between plausible and implausible translations, or learning the "manifold" (region of probability concentration) of plausible translations.

Footnote 2: Without loss of generality, from here on, we refer to $p(\mathbf{e} \mid \mathbf{f})$ for each phrase pair as a translation model as well.

Once the RNN Encoder–Decoder is trained, we add a new score for each phrase pair to the existing phrase table. This allows the new scores to enter into the existing tuning algorithm with minimal additional overhead in computation.

As Schwenk pointed out in (Schwenk, 2012), it is possible to completely replace the existing phrase table with the proposed RNN Encoder–Decoder. In that case, for a given source phrase, the RNN Encoder–Decoder will need to generate a list of (good) target phrases. This requires, however, an expensive sampling procedure to be performed repeatedly. In this paper, thus, we only consider rescoring the phrase pairs in the phrase table.

3.2 Related Approaches: Neural Networks in Machine Translation

Before presenting the empirical results, we discuss a number of recent works that have proposed to use neural networks in the context of SMT.

Schwenk in (Schwenk, 2012) proposed a similar approach of scoring phrase pairs. Instead of the RNN-based neural network, he used a feedforward neural network that has fixed-size inputs (7 words in his case, with zero-padding for shorter phrases) and fixed-size outputs (7 words in the target language). When it is used specifically for scoring phrases for the SMT system, the maximum phrase length is often chosen to be small.
However, as the length of phrases increases or as we apply neural networks to other variable-length sequence data, it is important that the neural network can handle variable-length input and output. The proposed RNN Encoder–Decoder is well-suited for these applications.

Similar to (Schwenk, 2012), Devlin et al. (Devlin et al., 2014) proposed to use a feedforward neural network to model a translation model, however, by predicting one word in a target phrase at a time. They reported an impressive improvement, but their approach still requires the maximum length of the input phrase (or context words) to be fixed a priori.

Although it is not exactly a neural network they train, the authors of (Zou et al., 2013) proposed to learn a bilingual embedding of words/phrases. They use the learned embedding to compute the distance between a pair of phrases which is used as an additional score of the phrase pair in an SMT system.

In (Chandar et al., 2014), a feedforward neural network was trained to learn a mapping from a bag-of-words representation of an input phrase to an output phrase. This is closely related to both the proposed RNN Encoder–Decoder and the model proposed in (Schwenk, 2012), except that their input representation of a phrase is a bag-of-words. A similar approach of using bag-of-words representations was proposed in (Gao et al., 2013) as well. Earlier, a similar encoder–decoder model using two recursive neural networks was proposed in (Socher et al., 2011), but their model was restricted to a monolingual setting, i.e. the model reconstructs an input sentence. More recently, another encoder–decoder model using an RNN was proposed in (Auli et al., 2013), where the decoder is conditioned on a representation of either a source sentence or a source context.
One important difference between the proposed RNN Encoder–Decoder and the approaches in (Zou et al., 2013) and (Chandar et al., 2014) is that the order of the words in source and target phrases is taken into account. The RNN Encoder–Decoder naturally distinguishes between sequences that have the same words but in a different order, whereas the aforementioned approaches effectively ignore order information.

The closest approach related to the proposed RNN Encoder–Decoder is the Recurrent Continuous Translation Model (Model 2) proposed in (Kalchbrenner and Blunsom, 2013). In their paper, they proposed a similar model that consists of an encoder and decoder. The difference with our model is that they used a convolutional $n$-gram model (CGM) for the encoder and the hybrid of an inverse CGM and a recurrent neural network for the decoder. They, however, evaluated their model on rescoring the $n$-best list proposed by the conventional SMT system and computing the perplexity of the gold standard translations.

4 Experiments

We evaluate our approach on the English/French translation task of the WMT'14 workshop.

4.1 Data and Baseline System

Large amounts of resources are available to build an English/French SMT system in the framework of the WMT'14 translation task. The bilingual corpora include Europarl (61M words), news commentary (5.5M), UN (421M), and two crawled corpora of 90M and 780M words respectively. The last two corpora are quite noisy. To train the French language model, about 712M words of crawled newspaper material are available in addition to the target side of the bitexts. All the word counts refer to French words after tokenization.

It is commonly acknowledged that training statistical models on the concatenation of all this data does not necessarily lead to optimal performance, and results in extremely large models which are difficult to handle.
Instead, one should focus on the most relevant subset of the data for a given task. We have done so by applying the data selection method proposed in (Moore and Lewis, 2010), and its extension to bitexts (Axelrod et al., 2011). By these means we selected a subset of 418M words out of more than 2G words for language modeling and a subset of 348M out of 850M words for training the RNN Encoder–Decoder. We used the test sets newstest2012 and newstest2013 for data selection and weight tuning with MERT, and newstest2014 as our test set. Each set has more than 70 thousand words and a single reference translation.

For training the neural networks, including the proposed RNN Encoder–Decoder, we limited the source and target vocabulary to the most frequent 15,000 words for both English and French. This covers approximately 93% of the dataset. All the out-of-vocabulary words were mapped to a special token ([UNK]).

The baseline phrase-based SMT system was built using Moses with default settings. This system achieves a BLEU score of 30.64 and 33.3 on the development and test sets, respectively (see Table 1).

| Models | BLEU (dev) | BLEU (test) |
|---|---|---|
| Baseline | 30.64 | 33.30 |
| RNN | 31.20 | 33.87 |
| CSLM + RNN | 31.48 | 34.64 |
| CSLM + RNN + WP | 31.50 | 34.54 |

Table 1: BLEU scores computed on the development and test sets using different combinations of approaches. WP denotes a word penalty, where we penalize the number of words unknown to the neural networks.

4.1.1 RNN Encoder–Decoder

The RNN Encoder–Decoder used in the experiment had 1000 hidden units with the proposed gates at the encoder and at the decoder. The input matrix between each input symbol $x_{\langle t \rangle}$ and the hidden unit is approximated with two lower-rank matrices, and the output matrix is approximated similarly. We used rank-100 matrices, equivalent to learning an embedding of dimension 100 for each word. The activation function used for $\tilde{h}$ in Eq. (8) is a hyperbolic tangent function.
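With $\phi$ fixed to tanh as stated above, one step of the gated hidden unit of Eqs. (5)–(8) can be sketched as follows. This is a plain-Python illustration, not the experimental code. The function name `gated_unit_step`, the dictionary layout of the parameters, and the small Gaussian initialization in `init_params` are assumptions, the low-rank factorization of the input matrix is left out, and bias terms are omitted because Eqs. (5)–(8) do not include them.

```python
import math
import random


def matvec(M, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def sigmoid(a):
    """Logistic sigmoid, written to avoid overflow for large |a|."""
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


def gated_unit_step(x, h_prev, p):
    """One update of the gated hidden unit, Eqs. (5)-(8), with phi = tanh."""
    # Eq. (5): reset gate r.
    r = [sigmoid(a + b) for a, b in zip(matvec(p["W_r"], x), matvec(p["U_r"], h_prev))]
    # Eq. (6): update gate z.
    z = [sigmoid(a + b) for a, b in zip(matvec(p["W_z"], x), matvec(p["U_z"], h_prev))]
    # Eq. (8): candidate state computed from the reset-gated previous state.
    gated = [rj * hj for rj, hj in zip(r, h_prev)]
    h_tilde = [math.tanh(a + b) for a, b in zip(matvec(p["W"], x), matvec(p["U"], gated))]
    # Eq. (7): interpolate between the previous state and the candidate.
    return [zj * hj + (1.0 - zj) * hc for zj, hj, hc in zip(z, h_prev, h_tilde)]


def init_params(n_in, n_hidden, rng, scale=0.01):
    """Hypothetical initializer: small Gaussian values for every matrix."""
    def mat(rows, cols):
        return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]
    return {
        "W_r": mat(n_hidden, n_in), "U_r": mat(n_hidden, n_hidden),
        "W_z": mat(n_hidden, n_in), "U_z": mat(n_hidden, n_hidden),
        "W": mat(n_hidden, n_in), "U": mat(n_hidden, n_hidden),
    }


params = init_params(n_in=3, n_hidden=2, rng=random.Random(0))
h = [0.0, 0.0]
for x in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]):
    h = gated_unit_step(x, h, params)
print(h)
```

In this form, $z_j$ close to 1 copies the previous state through almost unchanged, while $r_j$ close to 0 makes the candidate state depend on the current input alone.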
The computation from the hidden state in the decoder to the output is implemented as a deep neural network (Pascanu et al., 2014) with a single intermediate layer having 500 maxout units each pooling 2 inputs (Goodfellow et al., 2013).

All the weight parameters in the RNN Encoder–Decoder were initialized by sampling from an isotropic zero-mean (white) Gaussian distribution with its standard deviation fixed to 0.01, except for the recurrent weight parameters. For the recurrent weight matrices, we first sampled from a white Gaussian distribution and used its left singular vectors matrix, following (Saxe et al., 2014).

We used Adadelta and stochastic gradient descent to train the RNN Encoder–Decoder with hyperparameters $\epsilon = 10^{-6}$ and $\rho = 0.95$ (Zeiler, 2012). At each update, we used 64 randomly selected phrase pairs from a phrase table (which was created from 348M words). The model was trained for approximately three days.

Details of the architecture used in the experiments are explained in more depth in the supplementary material.

4.1.2 Neural Language Model

In order to assess the effectiveness of scoring phrase pairs with the proposed RNN Encoder–Decoder, we also tried a more traditional approach of using a neural network for learning a target language model (CSLM) (Schwenk, 2007). In particular, the comparison between the SMT system using CSLM and that using the proposed approach of phrase scoring by RNN Encoder–Decoder will clarify whether the contributions from multiple neural networks in different parts of the SMT system add up or are redundant.

We trained the CSLM model on 7-grams from the target corpus. Each input word was projected into the embedding space $\mathbb{R}^{512}$, and the resulting embeddings were concatenated to form a 3072-dimensional vector. The concatenated vector was fed through two rectified layers (of size 1536 and 1024) (Glorot et al., 2011). The output layer was a simple softmax layer (see Eq. (2)).
All the weight parameters were initialized uniformly between −0.01 and 0.01, and the model was trained until the validation perplexity did not improve for 10 epochs. After training, the language model achieved a perplexity of 45.80. The validation set was a random selection of 0.1% of the corpus. The model was used to score partial translations during the decoding process, which generally leads to higher gains in BLEU score than n-best list rescoring (Vaswani et al., 2013).

To address the computational complexity of using a CSLM in the decoder, a buffer was used to aggregate n-grams during the stack-search performed by the decoder. Only when the buffer is full, or a stack is about to be pruned, are the n-grams scored by the CSLM. This allows us to perform fast matrix-matrix multiplication on GPU using Theano (Bergstra et al., 2010; Bastien et al., 2012).

Figure 3: The visualization of phrase pairs according to their scores (log-probabilities) by the RNN Encoder–Decoder and the translation model. Axes: RNN Scores (log) and TM Scores (log).

4.2 Quantitative Analysis

We tried the following combinations:

1. Baseline configuration
2. Baseline + RNN
3. Baseline + CSLM + RNN
4. Baseline + CSLM + RNN + Word penalty

(a) Long, frequent source phrases

| Source | Translation Model | RNN Encoder–Decoder |
|---|---|---|
| at the end of the | [a la fin de la] [ŕ la fin des années] [être supprimés à la fin de la] | [à la fin du] [à la fin des] [à la fin de la] |
| for the first time | [r c pour la premirëre fois] [été donnés pour la première fois] [été commémorée pour la première fois] | [pour la première fois] [pour la première fois ,] [pour la première fois que] |
| in the United States and | [? aux ? tats-Unis et] [été ouvertes aux États-Unis et] [été constatées aux États-Unis et] | [aux Etats-Unis et] [des Etats-Unis et] [des États-Unis et] |
| , as well as | [? s , qu'] [? s , ainsi que] [? re aussi bien que] | [, ainsi qu'] [, ainsi que] [, ainsi que les] |
| one of the most | [? t ? l' un des plus] [? l' un des plus] [être retenue comme un de ses plus] | [l' un des] [le] [un des] |

(b) Long, rare source phrases

| Source | Translation Model | RNN Encoder–Decoder |
|---|---|---|
| , Minister of Communications and Transport | [Secrétaire aux communications et aux transports :] [Secrétaire aux communications et aux transports] | [Secrétaire aux communications et aux transports] [Secrétaire aux communications et aux transports :] |
| did not comply with the | [vestimentaire , ne correspondaient pas à des] [susmentionnée n' était pas conforme aux] [présentées n' étaient pas conformes à la] | [n' ont pas respecté les] [n' était pas conforme aux] [n' ont pas respecté la] |
| parts of the world . | [c gions du monde .] [régions du monde considérées .] [région du monde considérée .] | [parties du monde .] [les parties du monde .] [des parties du monde .] |
| the past few days . | [le petit texte .] [cours des tout derniers jours .] [les tout derniers jours .] | [ces derniers jours .] [les derniers jours .] [cours des derniers jours .] |
| on Friday and Saturday | [vendredi et samedi à la] [vendredi et samedi à] [se déroulera vendredi et samedi ,] | [le vendredi et le samedi] [le vendredi et samedi] [vendredi et samedi] |

Table 2: The top scoring target phrases for a small set of source phrases according to the translation model (direct translation probability) and by the RNN Encoder–Decoder. Source phrases were randomly selected from phrases with 4 or more words. ? denotes an incomplete (partial) character. r is a Cyrillic letter ghe.

The results are presented in Table 1. As expected, adding features computed by neural networks consistently improves the performance over the baseline performance.

The best performance was achieved when we used both CSLM and the phrase scores from the RNN Encoder–Decoder.
This suggests that the contributions of the CSLM and the RNN Encoder–Decoder are not too correlated and that one can expect better results by improving each method independently. Furthermore, we tried penalizing the number of words that are unknown to the neural networks (i.e. words which are not in the shortlist). We do so by simply adding the number of unknown words as an additional feature in the log-linear model in Eq. (9).³ However, in this case we

Footnote 3: To understand the effect of the penalty, consider the set of all words in the 15,000-word shortlist, SL. All words $x^i \notin \text{SL}$ are replaced by a special token [UNK] before being scored by the neural networks. Hence, the conditional probability of any $x_t^i \notin \text{SL}$ is actually given by the model as p(x_t = [UNK] | x
