Towards Using Unlabeled Data in a Sparse-coding Framework for Human Activity Recognition

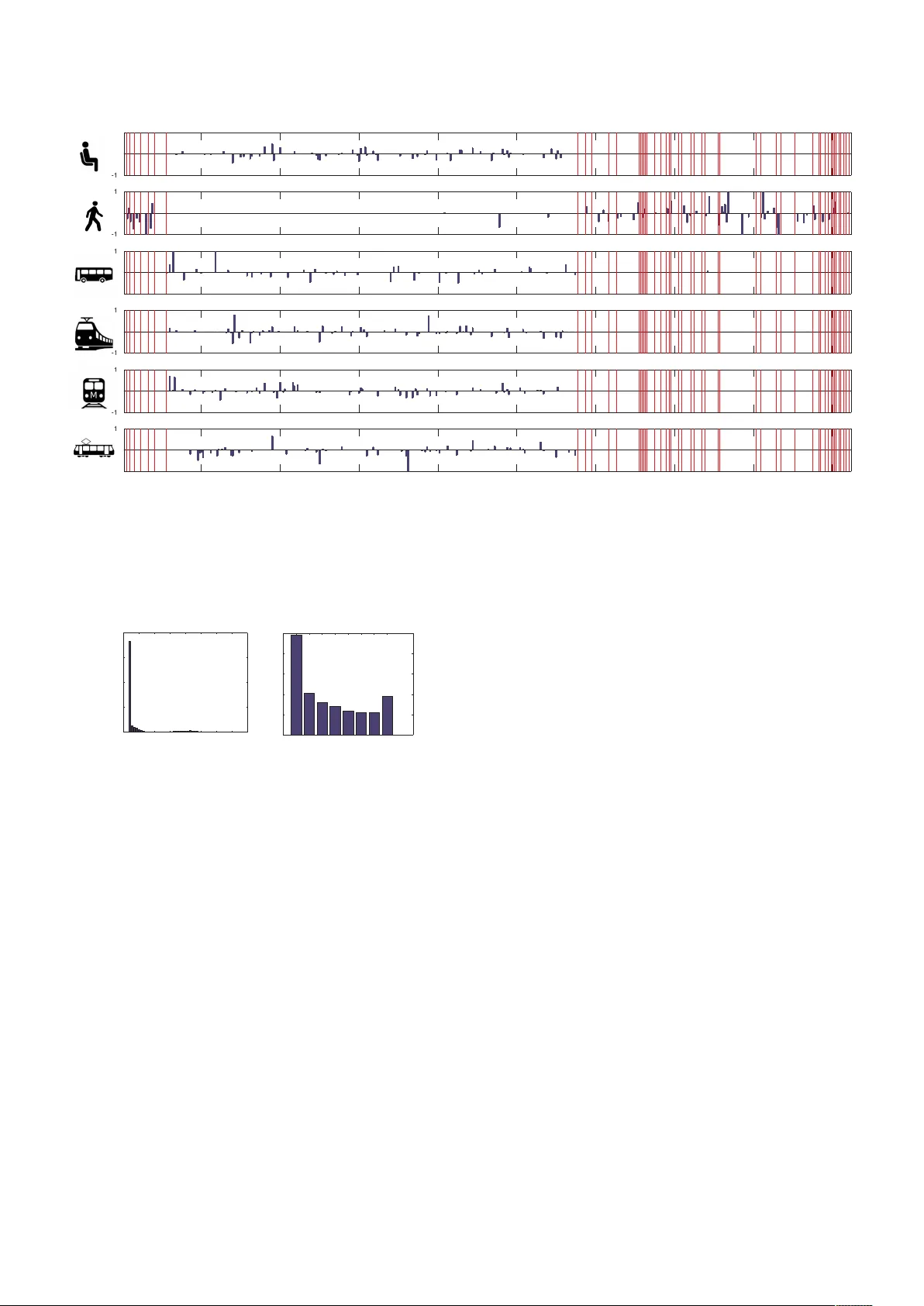

We propose a sparse-coding framework for activity recognition in ubiquitous and mobile computing that alleviates two fundamental problems of current supervised learning approaches. (i) It automatically derives a compact, sparse and meaningful feature…

Authors: Sourav Bhattacharya, Petteri Nurmi, Nils Hammerla