Convex Total Least Squares

We study the total least squares (TLS) problem that generalizes least squares regression by allowing measurement errors in both dependent and independent variables. TLS is widely used in applied fields including computer vision, system identification…

Authors: Dmitry Malioutov, Nikolai Slavov

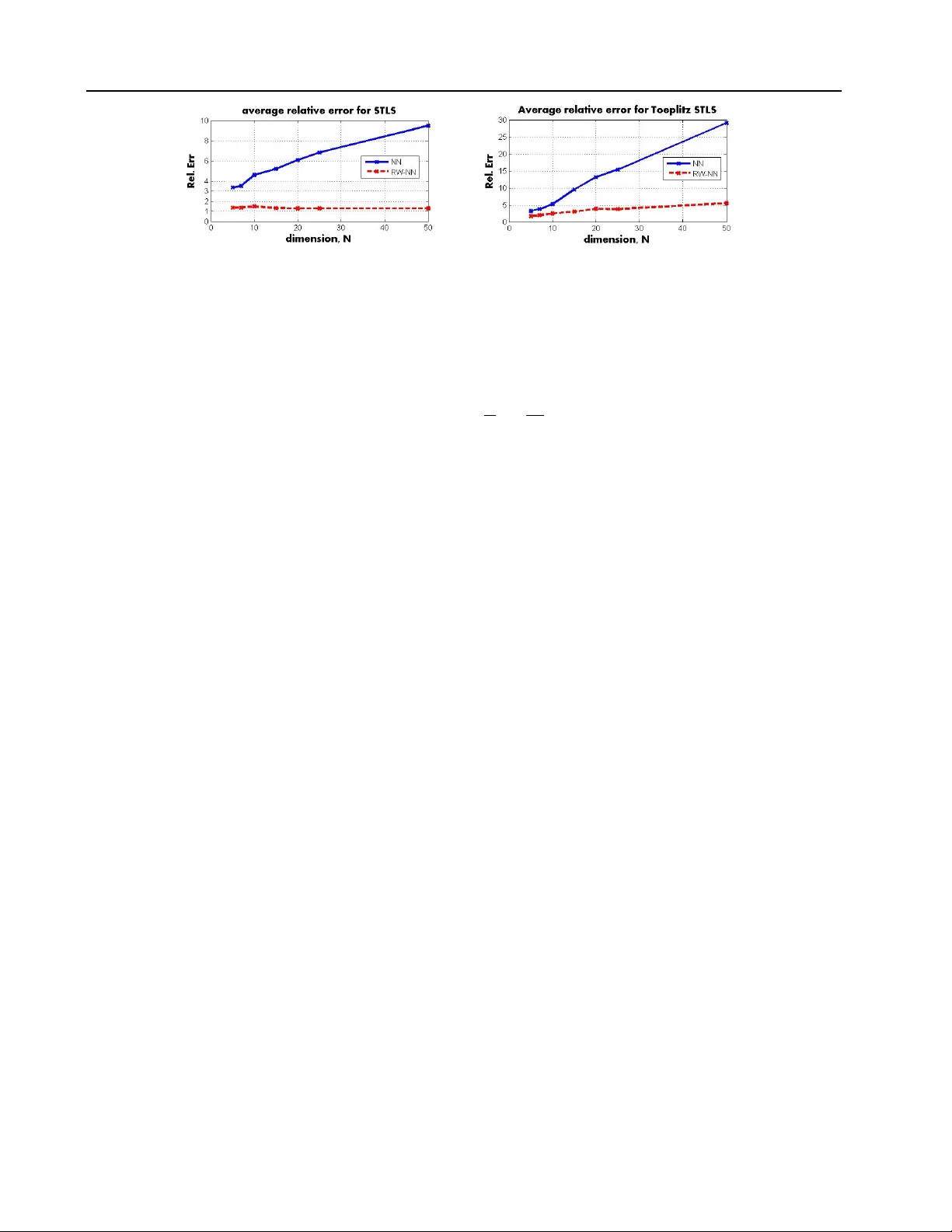

Dmitry Malioutov (dmalioutov@us.ibm.com)
IBM Research, 1101 Kitchawan Road, Yorktown Heights, NY 10598, USA

Nikolai Slavov (nslavov@alum.mit.edu)
Departments of Physics and Biology, MIT, 77 Massachusetts Avenue, Cambridge, MA 02139, USA

Proceedings of the 31st International Conference on Machine Learning, Beijing, China, 2014. JMLR: W&CP volume 32. Copyright 2014 by the author(s).

Abstract

We study the total least squares (TLS) problem that generalizes least squares regression by allowing measurement errors in both dependent and independent variables. TLS is widely used in applied fields including computer vision, system identification and econometrics. The special case when all dependent and independent variables have the same level of uncorrelated Gaussian noise, known as ordinary TLS, can be solved by singular value decomposition (SVD). However, SVD cannot solve many important practical TLS problems with realistic noise structure, such as having varying measurement noise, known structure on the errors, or large outliers requiring robust error-norms. To solve such problems, we develop convex relaxation approaches for a general class of structured TLS (STLS). We show both theoretically and experimentally that, while the plain nuclear norm relaxation incurs large approximation errors for STLS, the re-weighted nuclear norm approach is very effective, and achieves better accuracy on challenging STLS problems than popular non-convex solvers. We describe a fast solution based on augmented Lagrangian formulation, and apply our approach to an important class of biological problems that use population average measurements to infer cell-type and physiological-state specific expression levels that are very hard to measure directly.

1. Introduction

Total least squares is a powerful generalization of ordinary least squares (LS) which allows errors in the measured explanatory variables (Golub & Van Loan, 1980). It has become an indispensable tool in a variety of disciplines including chemometrics, system identification, astronomy, computer vision, and econometrics (Markovsky & Van Huffel, 2007).

Consider a least squares problem $y \approx X\beta$, where we would like to find coefficients $\beta$ to best predict the target vector $y$ based on measured variables $X$. The usual assumption is that $X$ is known exactly, and that the errors come from i.i.d. additive Gaussian noise $n$: $y = X\beta + n$. The LS problem has a simple closed-form solution by minimizing $\|y - X\beta\|_2^2$ with respect to $\beta$. In many applications not only $y$ but also $X$ is known only approximately, $X = X_0 + E_x$, where $X_0$ are the uncorrupted values, and $E_x$ are the unknown errors in observed variables. The total least squares (TLS) formulation, or errors-in-variables regression, tries to jointly minimize errors in $y$ and in $X$ (the $\ell_2$-norm of $n$ and the Frobenius norm of $E_x$):

$$\min_{n,\, E_x,\, \beta} \; \|n\|_2^2 + \|E_x\|_F^2 \quad \text{where } y = (X - E_x)\beta + n \tag{1}$$

While the optimization problem in this form is not convex, it can in fact be reformulated as finding the closest rank-deficient matrix to a given matrix, and solved in closed form via the singular value decomposition (SVD) (Golub & Van Loan, 1980).

Many errors-in-variables problems of practical interest have additional information: for example, a subset of the entries in $X$ may be known exactly, we may know different entries with varying accuracy, and in general $X$ may exhibit a certain structure, e.g. block-diagonal, Toeplitz, or Hankel in system identification literature (Markovsky et al., 2005). Furthermore, it is often important to use an error-norm robust to outliers, e.g. Huber loss or $\ell_1$-loss.
Unfortunately, with rare exceptions (footnote 1), none of these problems allow an efficient solution, and the state-of-the-art approach is to solve them by local optimization methods (Markovsky & Usevich, 2014; Zhu et al., 2011; Srebro & Jaakkola, 2003). The only available guarantee is typically the ability to reach a stationary point of the non-convex objective.

Footnote 1. A closed form solution exists when subsets of columns are fully known; a Fourier transform based approach can handle block-circulant errors $E_x$ (Beck & Ben-Tal, 2005).

In this paper we propose a principled formulation for STLS based on convex relaxations of matrix rank. Our approach uses the re-weighted nuclear norm relaxation (Fazel et al., 2001) and is highly flexible: it can handle very general linear structure on errors, including arbitrary weights (changing noise for different entries), patterns of observed and unobserved errors, Toeplitz and Hankel structures, and even norms other than the Frobenius norm. The nuclear norm relaxation has been successfully used for a range of machine learning problems involving rank constraints, including low-rank matrix completion, low-order system approximation, and robust PCA (Cai et al., 2010; Chandrasekaran et al., 2011). The STLS problem is conceptually different in that we do not seek low-rank solutions, but on the contrary nearly full-rank solutions. We show both theoretically and experimentally that while the plain nuclear norm formulation incurs large approximation errors, these can be dramatically improved by using the re-weighted nuclear norm.

We suggest fast first-order methods based on Augmented Lagrangian multipliers (Bertsekas, 1982) to compute the STLS solution. As part of ALM we derive new updates for the re-weighted nuclear norm based on solving the Sylvester equation, which can also be used for many other machine learning tasks relying on matrix rank, including matrix completion and robust PCA.

As a case study of our approach to STLS we consider an important application in biology, quantification of cellular heterogeneity (Slavov & Botstein, 2011). We develop a new representation for the problem as a large structured linear system, and extend it to handle noise by a structured TLS problem with block-diagonal error structure. Experiments demonstrate the effectiveness of STLS in recovering physiological-state specific expression levels from aggregate measurements.

1.1. Total Least Squares

We first review the solution of ordinary TLS problems. We simplify the notation from (1): combining our noisy data $X$ and $y$ into one matrix, $\bar{A} \triangleq [X \;\; -y]$, and the errors into $E \triangleq [E_x \;\; -n]$, we have

$$\min \; \|E\|_F^2 \quad \text{where } (\bar{A} - E) \begin{bmatrix} \beta \\ 1 \end{bmatrix} = 0. \tag{2}$$

The matrix $\bar{A}$ is in general full-rank, and a solution can be obtained by finding a rank-deficient matrix closest to $\bar{A}$ in terms of the Frobenius norm. This finds the smallest errors $E_x$ and $n$ such that $y - n$ is in the range space of $X - E_x$, in agreement with the constraint in (1). The closest rank-deficient matrix is simply obtained by computing the SVD, $\bar{A} = U S V^T$, and setting the smallest singular value to zero.

Structured TLS problems (Markovsky & Van Huffel, 2007) allow more realistic errors $E_x$: subsets of measurements that may be known exactly; weights reflecting different measurement noise for each entry; and linear structure of the errors $E_x$, such as Toeplitz, that is crucial in deconvolution problems in signal processing. Unfortunately, the SVD does not apply to any of these more general versions of TLS (Srebro & Jaakkola, 2003; Markovsky & Van Huffel, 2007).
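The SVD solution of ordinary TLS reviewed in Section 1.1 can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function name `tls_svd` and the synthetic data are hypothetical, and the sketch assumes the generic case in which the last component of the smallest right singular vector is nonzero.

```python
import numpy as np

def tls_svd(X, y):
    """Ordinary TLS via the SVD of A_bar = [X, -y] (hypothetical helper)."""
    A_bar = np.column_stack([X, -y])
    U, s, Vt = np.linalg.svd(A_bar, full_matrices=False)
    v = Vt[-1]                          # right singular vector of the smallest singular value
    if abs(v[-1]) < 1e-12:
        raise ValueError("non-generic problem: last component of v is ~0")
    beta = v[:-1] / v[-1]               # rescale the null vector to the form [beta; 1]
    E = s[-1] * np.outer(U[:, -1], v)   # rank-one error that zeroes the smallest singular value
    return beta, E

# Synthetic data (assumed for illustration): noise in both X and y.
rng = np.random.default_rng(0)
X0 = rng.standard_normal((200, 3))
beta_true = np.array([1.0, -2.0, 0.5])
X = X0 + 0.01 * rng.standard_normal(X0.shape)
y = X0 @ beta_true + 0.01 * rng.standard_normal(200)

beta_hat, E = tls_svd(X, y)
```

By construction, $\bar{A} - E$ annihilates the vector $[\hat\beta;\, 1]$, and $\|E\|_F^2$ equals the square of the smallest singular value of $\bar{A}$.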
Existing solutions to structured TLS problems formulate a non-convex optimization problem and attempt to solve it by local optimization (Markovsky & Usevich, 2014), which suffers from local optima and a lack of guarantees on accuracy. We follow a different route and use a convex relaxation for the STLS problem.

2. STLS via a nuclear norm relaxation

The STLS problem in a general form can be described as follows (Markovsky & Van Huffel, 2007). Using the notation in Section 1.1, suppose our observed matrix $\bar{A}$ is $M \times N$ with full column rank. We aim to find a nearby rank-deficient matrix $A$, $\operatorname{rank}(A) \le N - 1$, where the errors $E$ have a certain linear structure:

$$\min \; \|W \odot E\|_F^2 \quad \text{where } \operatorname{rank}(A) \le N - 1, \;\; A = \bar{A} - E, \;\text{ and } \mathcal{L}(E) = b \tag{3}$$

The key components here are the linear equalities that $E$ has to satisfy, $\mathcal{L}(E) = b$. This notation represents a set of linear constraints $\operatorname{tr}(L_i^T E) = b_i$, for $i = 1, \ldots, J$. In our application to cell heterogeneity quantification these constraints correspond to knowing certain entries of $A$ exactly, i.e. $E_{ij} = 0$ for some subset of entries, while other entries vary freely. One may require other linear structure such as Toeplitz or Hankel. We also allow an element-wise weighting $W \odot E$, with $W_{i,j} \ge 0$, on the errors, as some observations may be measured with higher accuracy than others. Finally, while we focus on the Frobenius norm of the error, any other convex error metric, for example mean absolute error or robust Huber loss, could be used instead. The main difficulty in the formulation is posed by the non-convex rank constraint. The STLS problem is a special case of the structured low-rank approximation problem, where the rank is exactly $N - 1$ (Markovsky & Usevich, 2014). Next, we propose a tractable formulation for STLS based on convex relaxations of matrix rank.
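To make the constraint notation $\operatorname{tr}(L_i^T E) = b_i$ and the weighted objective in (3) concrete, the sketch below builds one indicator matrix $L_i$ per exactly-known entry and evaluates both quantities. The helper names, the mask, and the example error matrix are all hypothetical and chosen only for illustration.

```python
import numpy as np

def fixed_entry_constraints(mask):
    """One matrix L_i per known entry, so that tr(L_i^T E) picks out E[r, c]."""
    Ls = []
    for r, c in zip(*np.nonzero(mask)):
        L = np.zeros(mask.shape)
        L[r, c] = 1.0
        Ls.append(L)
    return Ls

def apply_constraints(Ls, E):
    """Evaluate the linear map L(E): the vector of tr(L_i^T E)."""
    return np.array([np.trace(L.T @ E) for L in Ls])

def weighted_error(W, E):
    """Objective of (3): squared Frobenius norm of the element-wise product."""
    return float(np.sum((W * E) ** 2))

# Assumed example: entries (0, 0) and (2, 1) are known exactly, so E must vanish there.
mask = np.array([[True, False], [False, False], [False, True]])
Ls = fixed_entry_constraints(mask)
b = np.zeros(len(Ls))

E = np.array([[0.0, 0.3], [-0.1, 0.2], [0.4, 0.0]])
feasible = np.allclose(apply_constraints(Ls, E), b)
cost = weighted_error(np.ones_like(E), E)
```

Toeplitz or Hankel structure fits the same template with different matrices $L_i$, for instance one constraint per pair of entries that must be equal along a diagonal.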
We start by formulating the nuclear-norm relaxation for TLS and then improve upon it by using the re-weighted nuclear norm. The nuclear norm $\|A\|_*$ is a popular relaxation used to convexify rank constraints (Cai et al., 2010), and it is defined as the sum of the singular values of the matrix $A$, i.e. $\|A\|_* = \sum_i \sigma_i(A)$. It can be viewed as the $\ell_1$-norm of the singular value spectrum (footnote 2), favoring few non-zero singular values, i.e., matrices with low rank. Our initial nuclear norm relaxation for the STLS problem is:

$$\min \; \|A\|_* + \alpha \|W \odot E\|_F^2 \quad \text{such that } A = \bar{A} - E, \;\text{ and } \mathcal{L}(E) = b \tag{4}$$

The parameter $\alpha$ balances error residuals against the nuclear norm (a proxy for rank). We choose the largest $\alpha$, i.e. the smallest nuclear norm penalty, that still produces $\operatorname{rank}(A) \le N - 1$. This can be achieved by a simple binary search over $\alpha$. In contrast to matrix completion and robust PCA, the STLS problem aims to find almost fully dense solutions with rank $N - 1$, so it requires different analysis tools. We present theoretical analysis specifically for the STLS problem in Section 4. Next, we describe the re-weighted nuclear norm, which, as we show in Section 4, is better suited for the STLS problem than the plain nuclear norm.

2.1. Reweighted nuclear norm and the log-determinant heuristic for rank

A very effective improvement of the nuclear norm comes from re-weighting it (Fazel et al., 2001; Mohan & Fazel, 2010) based on the log-determinant heuristic for rank. To motivate it, we first describe a closely related approach in the vector case (where instead of searching for low-rank matrices one would like to find sparse vectors). Suppose that we seek a sparse solution to a general convex optimization problem. A popular approach penalizes the $\ell_1$-norm of the solution $x$, $\|x\|_1 = \sum_i |x_i|$, to encourage sparse solutions.
A dramatic improvement in finding sparse signals can be obtained simply by using the weighted $\ell_1$-norm, i.e. $\sum_i w_i |x_i|$ with suitable positive weights $w_i$ (Candes et al., 2008), instead of a plain $\ell_1$-norm. Ideally the weights would be based on the unknown signal, to provide a closer approximation to sparsity ($\ell_0$-norm) by penalizing large elements less than small ones. A practical solution first solves a problem involving the unweighted $\ell_1$-norm, and uses the solution $\hat{x}$ to define the weights $w_i = \frac{1}{\delta + |\hat{x}_i|}$, with $\delta$ a small positive constant. This iterative approach can be seen as an iterative local linearization of the concave log-penalty for sparsity, $\sum_i \log(\delta + |x_i|)$ (Fazel et al., 2001; Candes et al., 2008). In both empirical and emerging theoretical studies (Needell, 2009; Khajehnejad et al., 2009), re-weighting the $\ell_1$-norm has been shown to provide a tighter relaxation of sparsity.

Footnote 2. For diagonal matrices $A$ the nuclear norm is exactly equivalent to the $\ell_1$-norm of the diagonal elements.

In a similar way, the re-weighted nuclear norm tries to penalize large singular values less than small ones by introducing positive weights. There is an analogous direct connection to the iterative linearization for the concave log-det relaxation of rank (Mohan & Fazel, 2010). Recall that the problem of minimizing the nuclear norm subject to convex set constraints $\mathcal{C}$,

$$\min \; \|A\|_* \quad \text{such that } A \in \mathcal{C}, \tag{5}$$

has a semi-definite programming (SDP) representation (Fazel et al., 2001). Introducing auxiliary symmetric p.s.d. matrix variables $Y, Z \succeq 0$, we rewrite it as:

$$\min_{A, Y, Z} \; \operatorname{tr}(Y) + \operatorname{tr}(Z) \quad \text{s.t. } \begin{bmatrix} Y & A \\ A^T & Z \end{bmatrix} \succeq 0, \;\; A \in \mathcal{C} \tag{6}$$

Instead of using the convex nuclear norm relaxation, it has been suggested to use the concave log-det approximation to rank:

$$\min_{A, Y, Z} \; \log\det(Y + \delta I) + \log\det(Z + \delta I) \quad \text{s.t. } \begin{bmatrix} Y & A \\ A^T & Z \end{bmatrix} \succeq 0, \;\; A \in \mathcal{C} \tag{7}$$

Here $I$ is the identity matrix and $\delta$ is a small positive constant. The log-det relaxation provides a closer approximation to rank than the nuclear norm, but it is more challenging to optimize. By iteratively linearizing this objective one obtains a sequence of weighted nuclear-norm problems (Mohan & Fazel, 2010):

$$\min_{A, Y, Z} \; \operatorname{tr}\big((Y^k + \delta I)^{-1} Y\big) + \operatorname{tr}\big((Z^k + \delta I)^{-1} Z\big) \quad \text{s.t. } \begin{bmatrix} Y & A \\ A^T & Z \end{bmatrix} \succeq 0, \;\; A \in \mathcal{C} \tag{8}$$

where $Y^k, Z^k$ are obtained from the previous iteration, and $Y^0, Z^0$ are initialized as $I$. Let $W_1^k = (Y^k + \delta I)^{-1/2}$ and $W_2^k = (Z^k + \delta I)^{-1/2}$; then the problem is equivalent to a weighted nuclear norm optimization in each iteration $k$:

$$\min_{A} \; \|W_1^k A W_2^k\|_* \quad \text{s.t. } A \in \mathcal{C} \tag{9}$$

The re-weighted nuclear norm approach iteratively solves convex weighted nuclear norm problems in (9):

Re-weighted nuclear norm algorithm:
Initialize: $k = 0$, $W_1^0 = W_2^0 = I$.
(1) Solve the weighted NN problem in (9) to get $A^{k+1}$.
(2) Compute the SVD: $W_1^k A^{k+1} W_2^k = U \Sigma V^T$, and set $Y^{k+1} = (W_1^k)^{-1} U \Sigma U^T (W_1^k)^{-1}$ and $Z^{k+1} = (W_2^k)^{-1} V \Sigma V^T (W_2^k)^{-1}$.
(3) Set $W_1^{k+1} = (Y^{k+1} + \delta I)^{-1/2}$ and $W_2^{k+1} = (Z^{k+1} + \delta I)^{-1/2}$, increment $k$, and return to step (1).

There are various ways to solve the plain and weighted nuclear norm STLS formulations, including interior-point methods (Toh et al., 1999) and iterative thresholding (Cai et al., 2010). In the next section we focus on augmented Lagrangian methods (ALM) (Bertsekas, 1982), which allow fast convergence without using computationally expensive second-order information.

3. Fast computation via ALM

While the weighted nuclear norm problem in (9) can be solved via an interior point method, it is computationally expensive even for modest size data because of the need to compute Hessians.
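Before moving on to the first-order solver, the weight update in steps (2) and (3) of the re-weighted nuclear norm algorithm in Section 2.1 can be sketched as follows. This is an illustrative sketch only: the function names are hypothetical, the weights are assumed symmetric and invertible, and step (1), solving the weighted problem (9), is not shown.

```python
import numpy as np

def inv_sqrt_spd(M):
    """Inverse square root of a symmetric positive definite matrix."""
    w, Q = np.linalg.eigh(M)
    return (Q / np.sqrt(w)) @ Q.T

def update_weights(A_next, W1, W2, delta):
    """Steps (2)-(3): map A^{k+1} and the current weights to the next weights."""
    U, s, Vt = np.linalg.svd(W1 @ A_next @ W2, full_matrices=False)
    W1_inv, W2_inv = np.linalg.inv(W1), np.linalg.inv(W2)
    Y = W1_inv @ (U * s) @ U.T @ W1_inv        # Y^{k+1}
    Z = W2_inv @ (Vt.T * s) @ Vt @ W2_inv      # Z^{k+1}
    W1_next = inv_sqrt_spd(Y + delta * np.eye(Y.shape[0]))
    W2_next = inv_sqrt_spd(Z + delta * np.eye(Z.shape[0]))
    return W1_next, W2_next

# Assumed toy input: first re-weighting pass starting from identity weights.
rng = np.random.default_rng(0)
A = rng.standard_normal((5, 3))
delta = 0.1
W1, W2 = update_weights(A, np.eye(5), np.eye(3), delta)
```

Starting from identity weights, the re-weighted matrix $W_1 A W_2$ has singular values $\sigma_i / (\sigma_i + \delta)$, so large singular values of $A$ are penalized almost equally and far less, relative to their size, than small ones.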
We develop an effective first-order approach for STLS based on the augmented Lagrangian multiplier (ALM) method (Bertsekas, 1982; Lin et al., 2010). Consider a general equality constrained optimization problem:

$$\min_x \; f(x) \quad \text{such that } h(x) = 0. \tag{10}$$

ALM first defines an augmented Lagrangian function:

$$L(x, \lambda, \mu) = f(x) + \lambda^T h(x) + \frac{\mu}{2} \|h(x)\|_2^2 \tag{11}$$

The augmented Lagrangian method alternates optimization over $x$ with updates of $\lambda$ for an increasing sequence of $\mu_k$. The motivation is that if either $\lambda$ is near the optimal dual solution for (10), or $\mu$ is large enough, then the solution to (11) approaches the global minimum of (10). When $f$ and $h$ are both continuously differentiable, if $\mu_k$ is an increasing sequence, the solution converges Q-linearly to the optimal one (Bertsekas, 1982). The work of (Lin et al., 2010) extended the analysis to allow objective functions involving nuclear-norm terms. The ALM method iterates the following steps:

Augmented Lagrangian Multiplier method
(1) $x_{k+1} = \arg\min_x L(x, \lambda_k, \mu_k)$
(2) $\lambda_{k+1} = \lambda_k + \mu_k h(x_{k+1})$
(3) Update $\mu_k \to \mu_{k+1}$ (we use $\mu_k = a^k$ with $a > 1$).

Next, we derive an ALM algorithm for nuclear-norm STLS and extend it to use reweighted nuclear norms based on a solution of the Sylvester equation.

3.1. ALM for nuclear-norm STLS

We would like to solve the problem:

$$\min \; \|A\|_* + \alpha \|E\|_F^2 \quad \text{such that } \bar{A} = A + E, \;\text{ and } \mathcal{L}(E) = b \tag{12}$$

To view it as (10) we have $f(x) = \|A\|_* + \alpha \|E\|_F^2$ and $h(x) = \{\bar{A} - A - E, \; \mathcal{L}(E) - b\}$. Using $\Lambda$ as our matrix Lagrangian multiplier, the augmented Lagrangian is:

$$\min_{E :\, \mathcal{L}(E) = b} \; \|A\|_* + \alpha \|E\|_F^2 + \operatorname{tr}\big(\Lambda^T (\bar{A} - A - E)\big) + \frac{\mu}{2} \|\bar{A} - A - E\|_F^2. \tag{13}$$

Instead of a full optimization over $x = (E, A)$, we use coordinate descent, which alternates optimizing over each matrix variable while holding the other fixed.
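The generic ALM iteration, steps (1) to (3) above, can be illustrated on a toy equality-constrained problem. The sketch below is not the STLS solver: it assumes $f(x) = \tfrac{1}{2}\|x - c\|_2^2$ and $h(x) = Gx - b$, for which step (1) reduces to a linear solve, and the function name and parameter values are hypothetical.

```python
import numpy as np

def alm_toy(c, G, b, a=1.5, iters=30):
    """ALM for min 0.5*||x - c||^2 subject to G x = b (toy problem)."""
    lam = np.zeros(G.shape[0])
    x = c.copy()
    for k in range(iters):
        mu = a ** k                                # step (3): mu_k = a^k with a > 1
        # Step (1): minimize the augmented Lagrangian over x.
        # Setting the gradient to zero gives (I + mu G^T G) x = c - G^T lam + mu G^T b.
        H = np.eye(c.size) + mu * (G.T @ G)
        x = np.linalg.solve(H, c - G.T @ lam + mu * (G.T @ b))
        # Step (2): multiplier update.
        lam = lam + mu * (G @ x - b)
    return x, lam

c = np.array([1.0, 2.0, 3.0])
G = np.array([[1.0, 1.0, 1.0]])
b = np.array([0.0])

x_alm, lam = alm_toy(c, G, b)
# Closed-form projection of c onto {x : G x = b}, used as a reference.
x_exact = c - G.T @ np.linalg.solve(G @ G.T, G @ c - b)
```

For this example the reference solution simply subtracts the mean of `c`, and the ALM iterate agrees with it to numerical precision after a few dozen iterations.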
We do not wait for the coordinate descent to converge at each ALM step, but rather update $\Lambda$ and $\mu$ after a single iteration, following the inexact ALM algorithm in (Lin et al., 2010) (footnote 3). Finally, instead of relaxing the constraint $\mathcal{L}(E) = b$, we keep the constrained form, and follow each step by a projection (Bertsekas, 1982).

Footnote 3. This is closely related to the popular alternating direction method of multipliers (Boyd et al., 2011).

The minimum of (13) over $A$ is obtained by the singular value thresholding operation (Cai et al., 2010):

$$A_{k+1} = S_{\mu_k^{-1}}\big(\bar{A} - E_k + \mu_k^{-1} \Lambda_k\big) \tag{14}$$

where $S_\gamma(Z)$ soft-thresholds the singular values of $Z = U S V^T$, i.e. $\tilde{S} = \max(S - \gamma, 0)$, to obtain $\hat{Z} = U \tilde{S} V^T$. The minimum of (13) over $E$ is obtained by setting the gradient with respect to $E$ to zero, followed by a projection (footnote 4) onto the affine space defined by $\mathcal{L}(E) = b$:

$$\tilde{E}_{k+1} = \frac{1}{2\alpha + \mu_k}\big(\Lambda_k + \mu_k (\bar{A} - A)\big) \quad \text{and} \quad E_{k+1} = \Pi_{E :\, \mathcal{L}(E) = b}\, \tilde{E}_{k+1} \tag{15}$$

Footnote 4. For many constraints of interest this projection is highly efficient: when the constraint fixes some entries $E_{ij} = 0$, projection simply re-sets these entries to zero. Projection onto Toeplitz structure simply takes an average along each diagonal, etc.

3.2. ALM for re-weighted nuclear-norm STLS

To use the log-determinant heuristic, i.e., the re-weighted nuclear norm approach, we need to solve the weighted nuclear norm subproblems:

$$\min \; \|W_1 A W_2\|_* + \alpha \|E\|_F^2 \quad \text{where } \bar{A} = A + E, \;\text{ and } \mathcal{L}(E) = b \tag{16}$$

There is no known analytic thresholding solution for the weighted nuclear norm, so instead we follow (Liu et al., 2010) to create a new variable $D = W_1 A W_2$ and add this definition as an additional linear constraint:

$$\min \; \|D\|_* + \alpha \|E\|_F^2 \quad \text{where } \bar{A} = A + E, \;\; D = W_1 A W_2, \;\text{ and } \mathcal{L}(E) = b \tag{17}$$

Now we have two Lagrangian multipliers $\Lambda_1$ and $\Lambda_2$, and the augmented Lagrangian is

$$\min_{E :\, \mathcal{L}(E) = b} \; \|D\|_* + \alpha \|E\|_F^2 + \operatorname{tr}\big(\Lambda_1^T (\bar{A} - A - E)\big) + \operatorname{tr}\big(\Lambda_2^T (D - W_1 A W_2)\big) + \frac{\mu}{2} \|\bar{A} - A - E\|_F^2 + \frac{\mu}{2} \|D - W_1 A W_2\|_F^2 \tag{18}$$

Algorithm 1. ALM for weighted NN-STLS
Input: $\bar{A}$, $W_1$, $W_2$, $\alpha$
repeat
• Update $D$ via soft-thresholding: $D_{k+1} = S_{\mu_k^{-1}}\big(W_1 A W_2 - \tfrac{1}{\mu_k} \Lambda_2^k\big)$.
• Update $E$ as in (15).
• Solve the Sylvester system for $A$ in (19).
• Update $\Lambda_1^{k+1} = \Lambda_1^k + \mu_k (\bar{A} - A - E)$, $\Lambda_2^{k+1} = \Lambda_2^k + \mu_k (D - W_1 A W_2)$ and $\mu_k \to \mu_{k+1}$.
until convergence

We again follow an ALM strategy, optimizing over $D$, $E$, $A$ separately, followed by updates of $\Lambda_1$, $\Lambda_2$ and $\mu$. Note that (Deng et al., 2012) considered a strategy for minimizing re-weighted nuclear norms for matrix completion, but instead of using exact minimization over $A$, they took a step in the gradient direction. We derive the exact update, which turns out to be very efficient via a Sylvester equation formulation. The updates over $D$ and over $E$ look similar to the un-reweighted case. Taking a derivative with respect to $A$ we obtain a linear system of equations in an unusual form: $-\Lambda_1 - W_1 \Lambda_2 W_2 - \mu(\bar{A} - A - E) - \mu W_1 (D - W_1 A W_2) W_2 = 0$. Rewriting it, we obtain:

$$A + W_1^2 A W_2^2 = \frac{1}{\mu_k}\big(\Lambda_1 + W_1 \Lambda_2 W_2\big) + (\bar{A} - E) + W_1 D W_2 \tag{19}$$

We can see that it is in the form of the Sylvester equation arising in discrete Lyapunov systems (Kailath, 1980):

$$A + B_1 A B_2 = C \tag{20}$$

where $A$ is the unknown, and $B_1$, $B_2$, $C$ are coefficient matrices. An efficient solution is described in (Bartels & Stewart, 1972). These ALM steps for reweighted nuclear norm STLS are summarized in Algorithm 1. To obtain the full algorithm for STLS, we combine the above algorithm with steps of re-weighting the nuclear norm and the binary search over $\alpha$ as described in Section 2.1.
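The three building blocks of Algorithm 1 can be sketched in NumPy: singular value thresholding for the $D$ (or $A$) update, a projection for the $E$ update, and a solver for the equation $A + B_1 A B_2 = C$ in (20). These are hypothetical helpers, not the authors' implementation. In particular, the solver below does not use the Bartels and Stewart method cited above; it swaps in an eigendecomposition and assumes $B_1 = W_1^2$ and $B_2 = W_2^2$ are symmetric positive semi-definite, which holds when the weights are symmetric.

```python
import numpy as np

def svt(Z, gamma):
    """Singular value thresholding S_gamma(Z): shrink singular values by gamma."""
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    return (U * np.maximum(s - gamma, 0.0)) @ Vt

def project_toeplitz(E):
    """Project onto Toeplitz matrices by averaging along each diagonal."""
    M, N = E.shape
    out = np.empty_like(E, dtype=float)
    for k in range(-(M - 1), N):
        idx = np.eye(M, N, k=k, dtype=bool)   # boolean mask of the k-th diagonal
        out[idx] = E[idx].mean()
    return out

def solve_sylvester_sym(B1, B2, C):
    """Solve A + B1 A B2 = C, assuming B1 and B2 are symmetric PSD."""
    p, P = np.linalg.eigh(B1)
    q, Q = np.linalg.eigh(B2)
    C_t = P.T @ C @ Q                         # rotate into the two eigenbases
    A_t = C_t / (1.0 + np.outer(p, q))        # the equation decouples entry by entry
    return P @ A_t @ Q.T

# Assumed toy check: recover a known A from C = A + W1^2 A W2^2.
rng = np.random.default_rng(0)
R1, R2 = rng.standard_normal((4, 4)), rng.standard_normal((3, 3))
W1, W2 = R1 @ R1.T + np.eye(4), R2 @ R2.T + np.eye(3)   # symmetric positive definite weights
A_true = rng.standard_normal((4, 3))
C = A_true + (W1 @ W1) @ A_true @ (W2 @ W2)
A_rec = solve_sylvester_sym(W1 @ W1, W2 @ W2, C)
```

In the rotated coordinates each entry satisfies $(1 + p_i q_j)\tilde{A}_{ij} = \tilde{C}_{ij}$, and because the eigenvalues $p_i$, $q_j$ are non-negative the denominator is never smaller than one.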
We use it for experiments in Section 5. A faster algorithm that avoids the need for a binary search will be presented in a future publication.

4. Accuracy analysis for STLS

In the context of matrix completion and robust PCA, the nuclear norm relaxation has strong theoretical accuracy guarantees (Recht et al., 2010; Chandrasekaran et al., 2011). We now study accuracy guarantees for the STLS problem via the nuclear norm and the reweighted nuclear norm approaches. The analysis is conducted in the plain TLS setting, where the optimal solution is available via the SVD, and it gives valuable insight into the accuracy of our approach for the much harder STLS problem. In particular, we quantify the dramatic benefit of using reweighting. In this section we study a simplification of our STLS algorithm, where we set the regularization parameter $\alpha$ once and do not update it through the iterations. The full adaptive approach from Section 2.1 is analyzed in the addendum to this paper, where we show that it can in fact recover the exact SVD solution for plain TLS.

We first consider the problem $\min \|A - \bar{A}\|_F^2$ such that $\operatorname{rank}(A) \le N - 1$. For the exact solution via the SVD, the minimum approximation error is simply the square of the last singular value, $\mathrm{Err}_{SVD} = \|\hat{A}_{SVD} - \bar{A}\|_F^2 = \sigma_N^2$. The nuclear-norm approximation will have a higher error. We solve $\min \|A - \bar{A}\|_F^2 + \alpha \|A\|_*$ for the smallest choice of $\alpha$ that makes $A$ rank-deficient. A closed form solution for $A$ is the soft-thresholding operation with $\alpha = \sigma_N$. It subtracts $\alpha$ from all the singular values, making the error $\mathrm{Err}_{nn} = N \sigma_N^2$. While it is bounded, this is a substantial increase from the SVD solution. Using the log-det heuristic, we obtain much tighter accuracy guarantees even when we fix $\alpha$ and do not update it during re-weighting. Let $a_i = \frac{\sigma_i}{\sigma_N}$, the ratio of the $i$-th and the smallest singular values.
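Before turning to the re-weighted bound, the two plain-TLS error expressions above, $\sigma_N^2$ for the SVD and $N\sigma_N^2$ for nuclear-norm soft-thresholding, can be checked numerically. The sketch assumes a random $8 \times 4$ matrix and applies the thresholding level $\sigma_N$ directly to the singular values; the variable names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)
A_bar = rng.standard_normal((8, 4))
U, s, Vt = np.linalg.svd(A_bar, full_matrices=False)
N = s.size
sigma_N = s[-1]

# SVD solution: zero out only the smallest singular value.
s_svd = s.copy()
s_svd[-1] = 0.0
A_svd = (U * s_svd) @ Vt

# Nuclear-norm solution: soft-threshold every singular value by sigma_N.
A_nn = (U * np.maximum(s - sigma_N, 0.0)) @ Vt

err_svd = np.linalg.norm(A_svd - A_bar) ** 2   # squared Frobenius error
err_nn = np.linalg.norm(A_nn - A_bar) ** 2
```

Every singular value is at least $\sigma_N$, so each one moves by exactly $\sigma_N$ under soft-thresholding, which is where the factor $N$ in the nuclear-norm error comes from.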
In the appendix, using 'log-thresholding', we derive that $\mathrm{Err}_{\text{rw-nn}} \approx \sigma_N^2 \big(1 + \tfrac{1}{2} \sum_i$ […] $1$, or power-law decay $\sigma_i = \sigma_N (N - i + 1)^p$. The approximation errors are $\mathrm{Err}_{\exp} = \sigma_N^2 \big(1 + \tfrac{1}{2} \sum_i$ […] $0$. This is a separable problem with a closed form solution for each coordinate (footnote 6) (contrast this with the soft-thresholding operation):

$$x_i = \begin{cases} \tfrac{1}{2}\Big[(y_i - \delta) + \sqrt{(y_i - \delta)^2 - 4(\alpha - y_i \delta)}\Big], & y_i > 2\sqrt{\alpha} \\ \tfrac{1}{2}\Big[(y_i + \delta) - \sqrt{(y_i + \delta)^2 - 4(\alpha + y_i \delta)}\Big], & y_i < -2\sqrt{\alpha} \\ 0, & \text{otherwise} \end{cases} \tag{26}$$

Footnote 5. Taking the SVD $A = U S V^T$ we have $\|U S V^T\|_F^2 = \|S\|_F^2$ and $\|U S V^T\|_* = \|S\|_*$ since $U$, $V$ are unitary.

Footnote 6. For $\delta$ small enough, the global minimum is always at 0, but if $y > 2\sqrt{\alpha}$ there is also a local minimum with a large domain of attraction between 0 and $y$. Iterative linearization methods with small enough step size starting at $y$ will converge to this local minimum.

Figure 4. STLS infers accurate fractions of cells in different physiological phases from measurements of population-average gene expression across growth rate. (Axes: growth rate, $h^{-1}$, versus fraction of cells; series: HOC phase and LOC phase.)

Assuming that $\delta$ is negligible, we have:

$$x_i \approx \begin{cases} \tfrac{1}{2}\Big(y_i + \sqrt{y_i^2 - 4\alpha}\Big), & \text{if } y_i > 2\sqrt{\alpha} \\ \tfrac{1}{2}\Big(y_i - \sqrt{y_i^2 - 4\alpha}\Big), & \text{if } y_i < -2\sqrt{\alpha} \\ 0, & \text{otherwise,} \end{cases} \tag{27}$$

and we choose $\alpha$ to annihilate the smallest entry in $x$, i.e. $\alpha = \tfrac{1}{4} \min_i y_i^2$. Sorting the entries in $|y|$ in increasing order, with $y_0 = y_{\min}$, and defining $a_i = \frac{|y_i|}{|y_0|}$, we have $a_i \ge 1$ and the error in approximating the $i$-th entry, for $i > 0$, is

$$\mathrm{Err}_i = |x_i - y_i|^2 = \frac{y_0^2}{2}\Big(a_i - \sqrt{a_i^2 - 1}\Big)^2 \le \frac{y_0^2}{2 a_i^2}. \tag{28}$$

Also, by our choice of $\alpha$, we have $\mathrm{Err}_0 = y_0^2$ for $i = 0$. The approximation error quickly decreases for larger entries. In contrast, for $\ell_1$ soft-thresholding, the errors of approximating large entries are as bad as the ones for small entries.
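The contrast between log-thresholding (27) and $\ell_1$ soft-thresholding can be seen on a small vector. The sketch below works in the $\delta \to 0$ limit, chooses $\alpha = \tfrac{1}{4}\min_i y_i^2$ as in the text, and compares per-entry squared errors; the function names and the sample vector are assumptions made for illustration.

```python
import math

def log_threshold(y, alpha):
    """Log-thresholding of one coordinate in the delta -> 0 limit, as in (27)."""
    if abs(y) > 2.0 * math.sqrt(alpha):
        root = math.sqrt(y * y - 4.0 * alpha)
        return 0.5 * (y + root) if y > 0 else 0.5 * (y - root)
    return 0.0

def soft_threshold(y, tau):
    """Standard l1 soft-thresholding: shrink the magnitude by tau."""
    return math.copysign(max(abs(y) - tau, 0.0), y)

y = [1.0, -2.0, 4.0, 8.0]                  # assumed sample, sorted by magnitude
y0 = min(abs(v) for v in y)
alpha = 0.25 * y0 * y0                     # annihilates only the smallest entry

x_log = [log_threshold(v, alpha) for v in y]
err_log = [(xv - v) ** 2 for xv, v in zip(x_log, y)]

# Soft-thresholding needs level |y0| to zero out the same smallest entry.
err_soft = [(soft_threshold(v, y0) - v) ** 2 for v in y]
```

With this sample, soft-thresholding incurs the same squared error $y_0^2$ on every entry, while the log-thresholding error shrinks as the entry grows and stays below the bound on the right-hand side of (28).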
This analysis extends directly to the log-det heuristic for relaxing matrix rank.

7. Conclusions

We considered a convex relaxation for a very rich class of structured TLS problems, and provided theoretical guarantees. We also developed an efficient first-order augmented Lagrangian multipliers algorithm for reweighted nuclear norm STLS, which can be applied beyond TLS to matrix completion and robust PCA problems. We applied STLS to quantifying cellular heterogeneity from population average measurements. In future work we will study STLS with sparse and group sparse solutions, and explore connections to robust LS (El Ghaoui & Lebret, 1997).

References

Bartels, R. H. and Stewart, G. W. Solution of the matrix equation AX + XB = C. Communications of the ACM, 15(9):820–826, 1972.

Beck, A. and Ben-Tal, A. A global solution for the structured total least squares problem with block circulant matrices. SIAM Journal on Matrix Analysis and Applic., 27(1):238–255, 2005.

Bertsekas, D. P. Constrained Optim. and Lagrange Multiplier Methods. Academic Press, 1982.

Boyd, S., Parikh, N., Chu, E., Peleato, B., and Eckstein, J. Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends in Machine Learning, 3(1):1–122, 2011.

Cai, J., Candes, E. J., and Shen, Z. A singular value thresholding algorithm for matrix completion. SIAM Journal on Optim., 20(4):1956–1982, 2010.

Candes, E. J., Wakin, M. B., and Boyd, S. P. Enhancing sparsity by reweighted l1 minimization. J. of Fourier Analysis and Applic., 14(5):877–905, 2008.

Chandrasekaran, V., Sanghavi, S., Parrilo, P. A., and Willsky, A. S. Rank-sparsity incoherence for matrix decomposition. SIAM Journal on Optim., 21(2), 2011.

Deng, Y., Dai, Q., Liu, R., Zhang, Z., and Hu, S. Low-rank structure learning via log-sum heuristic recovery. arXiv preprint arXiv:1012.1919, 2012.
El Ghaoui, L. and Lebret, H. Robust solutions to least-squares problems with uncertain data. SIAM J. on Matrix Analysis and Applic., 18(4):1035–1064, 1997.

Fazel, M., Hindi, H., and Boyd, S. P. A rank minimization heuristic with application to minimum order system approximation. In IEEE American Control Conference, 2001.

Golub, G. H. and Van Loan, C. F. An analysis of the total least squares problem. SIAM Journal on Numerical Analysis, 17(6):883–893, 1980.

Kailath, T. Linear systems. Prentice-Hall, 1980.

Khajehnejad, A., Xu, W., Avestimehr, S., and Hassibi, B. Weighted l1 minimization for sparse recovery with prior information. In IEEE Int. Symposium on Inf. Theory, 2009, pp. 483–487, 2009.

Lin, Z., Chen, M., and Ma, Y. The augmented Lagrange multiplier method for exact recovery of corrupted low-rank matrices. arXiv preprint arXiv:1009.5055, 2010.

Liu, G., Lin, Z., Yan, S., Sun, J., Yu, Y., and Ma, Y. Robust recovery of subspace structures by low-rank representation. arXiv preprint arXiv:1010.2955, 2010.

Markovsky, I. and Usevich, K. Software for weighted structured low-rank approximation. J. Comput. Appl. Math., 256:278–292, 2014.

Markovsky, I. and Van Huffel, S. Overview of total least-squares methods. Signal processing, 87(10):2283–2302, 2007.

Markovsky, I., Willems, J. C., Van Huffel, S., De Moor, B., and Pintelon, R. Application of structured total least squares for system identification and model reduction. Automatic Control, IEEE Trans. on, 50(10):1490–1500, 2005.

Mohan, K. and Fazel, M. Reweighted nuclear norm minimization with application to system identification. In American Control Conference, 2010.

Needell, D. Noisy signal recovery via iterative reweighted l1-minimization. In Forty-Third Asilomar Conference on Signals, Systems and Computers, 2009, pp. 113–117. IEEE, 2009.

Recht, B., Fazel, M., and Parrilo, P. A. Guaranteed minimum-rank solutions of linear matrix equations via nuclear norm minimization. SIAM Review, 52(3):471–501, 2010.

Slavov, N. and Botstein, D. Coupling among growth rate response, metabolic cycle, and cell division cycle in yeast. Molecular Bio. of the Cell, 22(12), 2011.

Slavov, N., Macinskas, J., Caudy, A., and Botstein, D. Metabolic cycling without cell division cycling in respiring yeast. Proceedings of the National Academy of Sciences, 108(47), 2011.

Slavov, Nikolai, Airoldi, Edoardo M., van Oudenaarden, Alexander, and Botstein, David. A conserved cell growth cycle can account for the environmental stress responses of divergent eukaryotes. Molecular Biology of the Cell, 23(10):1986–1997, 2012.

Srebro, N. and Jaakkola, T. Weighted low-rank approximations. In Int. Conf. Machine Learning (ICML), 2003.

Toh, K. C., Todd, M. J., and Tütüncü, R. H. SDPT3 – a Matlab software package for semidefinite programming, version 1.3. Optim. Method. Softw., 11(1–4):545–581, 1999.

Zhu, H., Giannakis, G. B., and Leus, G. Weighted and structured sparse total least-squares for perturbed compressive sampling. In IEEE Int. Conf. Acoustics, Speech and Signal Proc., 2011.
