Recurrent Models of Visual Attention


Authors: Volodymyr Mnih, Nicolas Heess, Alex Graves, Koray Kavukcuoglu

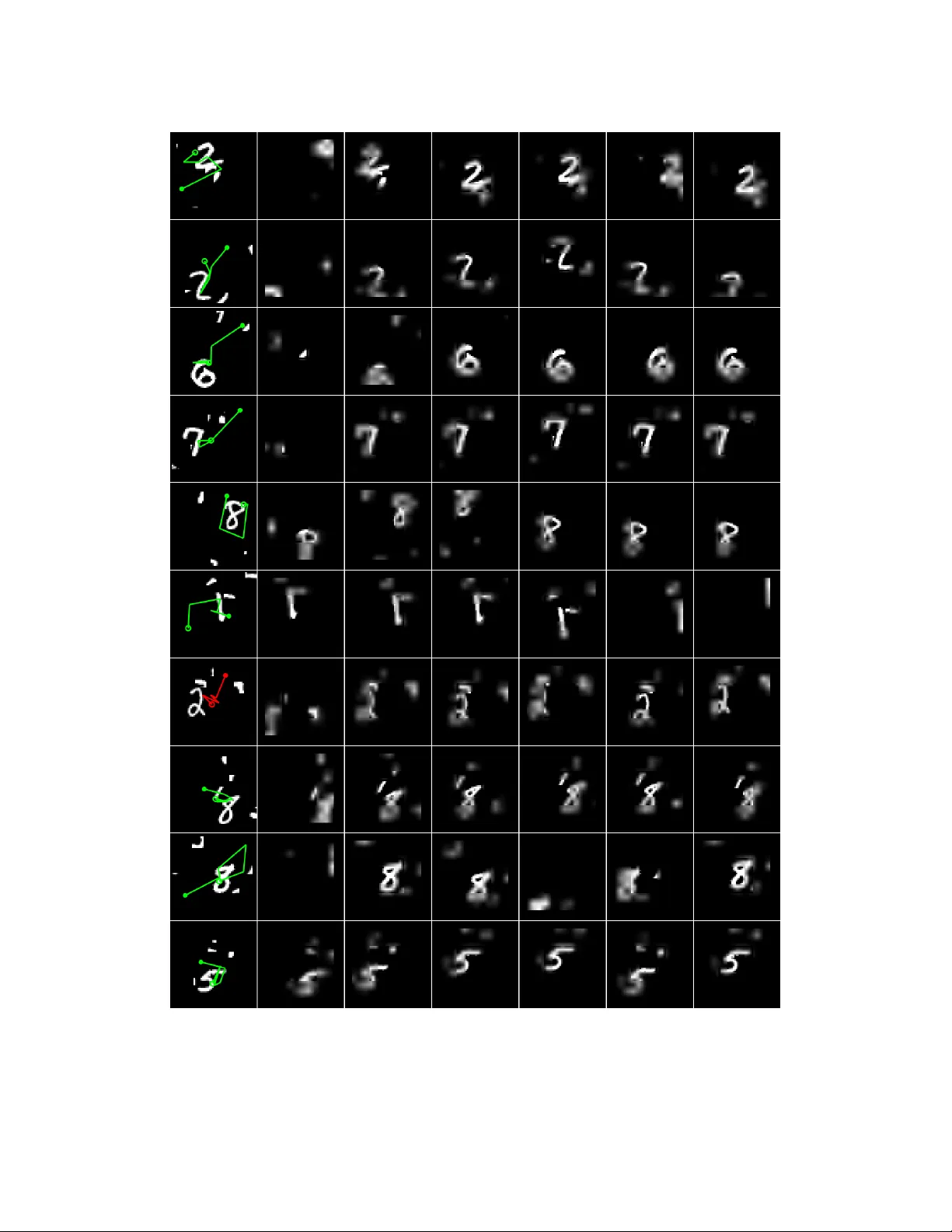

Volodymyr Mnih, Nicolas Heess, Alex Graves, Koray Kavukcuoglu
Google DeepMind
{vmnih,heess,gravesa,korayk}@google.com

Abstract

Applying convolutional neural networks to large images is computationally expensive because the amount of computation scales linearly with the number of image pixels. We present a novel recurrent neural network model that is capable of extracting information from an image or video by adaptively selecting a sequence of regions or locations and only processing the selected regions at high resolution. Like convolutional neural networks, the proposed model has a degree of translation invariance built-in, but the amount of computation it performs can be controlled independently of the input image size. While the model is non-differentiable, it can be trained using reinforcement learning methods to learn task-specific policies. We evaluate our model on several image classification tasks, where it significantly outperforms a convolutional neural network baseline on cluttered images, and on a dynamic visual control problem, where it learns to track a simple object without an explicit training signal for doing so.

1 Introduction

Neural network-based architectures have recently had great success in significantly advancing the state of the art on challenging image classification and object detection datasets [8, 12, 19]. Their excellent recognition accuracy, however, comes at a high computational cost both at training and testing time. The large convolutional neural networks typically used currently take days to train on multiple GPUs even though the input images are downsampled to reduce computation [12].
In the case of object detection, processing a single image at test time currently takes seconds when running on a single GPU [8, 19], as these approaches effectively follow the classical sliding window paradigm from the computer vision literature, where a classifier, trained to detect an object in a tightly cropped bounding box, is applied independently to thousands of candidate windows from the test image at different positions and scales. Although some computations can be shared, the main computational expense for these models comes from convolving filter maps with the entire input image; therefore, their computational complexity is at least linear in the number of pixels.

One important property of human perception is that one does not tend to process a whole scene in its entirety at once. Instead, humans focus attention selectively on parts of the visual space to acquire information when and where it is needed, and combine information from different fixations over time to build up an internal representation of the scene [18], guiding future eye movements and decision making. Focusing the computational resources on parts of a scene saves “bandwidth”, as fewer “pixels” need to be processed. But it also substantially reduces the task complexity, as the object of interest can be placed in the center of the fixation and irrelevant features of the visual environment (“clutter”) outside the fixated region are naturally ignored. In line with its fundamental role, the guidance of human eye movements has been extensively studied in the neuroscience and cognitive science literature. While low-level scene properties and bottom-up processes (e.g. in the form of saliency [11]) play an important role, the locations on which humans fixate have also been shown to be strongly task-specific (see [9] for a review and also e.g. [15, 22]).
In this paper we take inspiration from these results and develop a novel framework for attention-based task-driven visual processing with neural networks. Our model considers attention-based processing of a visual scene as a control problem and is general enough to be applied to static images, videos, or as a perceptual module of an agent that interacts with a dynamic visual environment (e.g. robots, computer game playing agents). The model is a recurrent neural network (RNN) which processes inputs sequentially, attending to different locations within the images (or video frames) one at a time, and incrementally combines information from these fixations to build up a dynamic internal representation of the scene or environment. Instead of processing an entire image or even a bounding box at once, at each step the model selects the next location to attend to based on past information and the demands of the task. Both the number of parameters in our model and the amount of computation it performs can be controlled independently of the size of the input image, in contrast to convolutional networks, whose computational demands scale linearly with the number of image pixels. We describe an end-to-end optimization procedure that allows the model to be trained directly with respect to a given task and to maximize a performance measure which may depend on the entire sequence of decisions made by the model. This procedure uses backpropagation to train the neural-network components and policy gradient to address the non-differentiabilities due to the control problem. We show that our model can learn effective task-specific strategies for where to look on several image classification tasks as well as a dynamic visual control problem. Our results also suggest that an attention-based model may be better than a convolutional neural network at both dealing with clutter and scaling up to large input images.
2 Previous Work

Computational limitations have received much attention in the computer vision literature. For instance, for object detection, much work has been dedicated to reducing the cost of the widespread sliding window paradigm, focusing primarily on reducing the number of windows for which the full classifier is evaluated, e.g. via classifier cascades (e.g. [7, 24]), removing image regions from consideration via a branch and bound approach on the classifier output (e.g. [13]), or by proposing candidate windows that are likely to contain objects (e.g. [1, 23]). Even though substantial speedups may be obtained with such approaches, and some of these can be combined with or used as an add-on to CNN classifiers [8], they remain firmly rooted in the window classifier design for object detection and only exploit past information to inform future processing of the image in a very limited way.

A second class of approaches, which has a long history in computer vision and is strongly motivated by human perception, consists of saliency detectors (e.g. [11]). These approaches prioritize the processing of potentially interesting (“salient”) image regions, which are typically identified based on some measure of local low-level feature contrast. Saliency detectors indeed capture some of the properties of human eye movements, but they typically do not integrate information across fixations, their saliency computations are mostly hardwired, and they are based on low-level image properties only, usually ignoring other factors such as the semantic content of a scene and task demands (but see [22]).

Some works in the computer vision literature and elsewhere, e.g. [2, 4, 6, 14, 16, 17, 20], have embraced vision as a sequential decision task as we do here. There, as in our work, information about the image is gathered sequentially and the decision where to attend next is based on previous fixations of the image.
[4] applies the learned Bayesian observer model from [5] to the task of object detection. The learning framework of [5] is related to ours, as they also employ a policy gradient formulation (cf. Section 3), but their overall setup is considerably more restrictive than ours and only some parts of the system are learned.

Our work is perhaps most similar to other attempts to implement attentional processing in a deep learning framework [6, 14, 17]. Our formulation, which employs an RNN to integrate visual information over time and to decide how to act, is, however, more general, and our learning procedure allows for end-to-end optimization of the sequential decision process instead of relying on greedy action selection. We further demonstrate how the same general architecture can be used for efficient object recognition in still images as well as to interact with a dynamic visual environment in a task-driven way.

3 The Recurrent Attention Model (RAM)

In this paper we consider the attention problem as the sequential decision process of a goal-directed agent interacting with a visual environment. At each point in time, the agent observes the environment only via a bandwidth-limited sensor, i.e. it never senses the environment in full. It may extract information only in a local region or in a narrow frequency band. The agent can, however, actively control how to deploy its sensor resources (e.g. choose the sensor location). The agent can also affect the true state of the environment by executing actions. Since the environment is only partially observed, the agent needs to integrate information over time in order to determine how to act and how to deploy its sensor most effectively. At each step, the agent receives a scalar reward (which depends on the actions the agent has executed and can be delayed), and the goal of the agent is to maximize the total sum of such rewards.

Figure 1: A) Glimpse Sensor: Given the coordinates of the glimpse and an input image, the sensor extracts a retina-like representation $\rho(x_t, l_{t-1})$ centered at $l_{t-1}$ that contains multiple resolution patches. B) Glimpse Network: Given the location $l_{t-1}$ and input image $x_t$, the glimpse network uses the glimpse sensor to extract the retina representation $\rho(x_t, l_{t-1})$. The retina representation and glimpse location are then mapped into a hidden space using independent linear layers parameterized by $\theta_g^0$ and $\theta_g^1$ respectively, with rectified units, followed by another linear layer $\theta_g^2$ that combines the information from both components. The glimpse network $f_g(\cdot\,; \{\theta_g^0, \theta_g^1, \theta_g^2\})$ defines a trainable bandwidth-limited sensor for the attention network, producing the glimpse representation $g_t$. C) Model Architecture: Overall, the model is an RNN. The core network of the model, $f_h(\cdot\,; \theta_h)$, takes the glimpse representation $g_t$ as input and, combining it with the internal representation at the previous time step $h_{t-1}$, produces the new internal state of the model $h_t$. The location network $f_l(\cdot\,; \theta_l)$ and the action network $f_a(\cdot\,; \theta_a)$ use the internal state $h_t$ of the model to produce the next location to attend to, $l_t$, and the action/classification $a_t$, respectively. This basic RNN iteration is repeated for a variable number of steps.

This formulation encompasses tasks as diverse as object detection in static images and control problems like playing a computer game from the image stream visible on the screen. For a game, the environment state would be the true state of the game engine and the agent's sensor would operate on the video frame shown on the screen.
(Note that for most games, a single frame would not fully specify the game state.) The environment actions here would correspond to joystick controls, and the reward would reflect points scored. For object detection in static images, the state of the environment would be fixed and correspond to the true contents of the image. The environmental action would correspond to the classification decision (which may be executed only after a fixed number of fixations), and the reward would reflect whether the decision is correct.

3.1 Model

The agent is built around a recurrent neural network as shown in Fig. 1. At each time step, it processes the sensor data, integrates information over time, and chooses how to act and how to deploy its sensor at the next time step:

Sensor: At each step $t$ the agent receives a (partial) observation of the environment in the form of an image $x_t$. The agent does not have full access to this image but rather can extract information from $x_t$ via its bandwidth-limited sensor $\rho$, e.g. by focusing the sensor on some region or frequency band of interest. In this paper we assume that the bandwidth-limited sensor extracts a retina-like representation $\rho(x_t, l_{t-1})$ around location $l_{t-1}$ from image $x_t$. It encodes the region around $l_{t-1}$ at high resolution but uses a progressively lower resolution for pixels further from $l_{t-1}$, resulting in a vector of much lower dimensionality than the original image $x_t$. We will refer to this low-resolution representation as a glimpse [14]. The glimpse sensor is used inside what we call the glimpse network $f_g$ to produce the glimpse feature vector $g_t = f_g(x_t, l_{t-1}; \theta_g)$, where $\theta_g = \{\theta_g^0, \theta_g^1, \theta_g^2\}$ (Fig. 1B).

Internal state: The agent maintains an internal state which summarizes information extracted from the history of past observations; it encodes the agent's knowledge of the environment and is instrumental to deciding how to act and where to deploy the sensor.
This internal state is formed by the hidden units $h_t$ of the recurrent neural network and updated over time by the core network: $h_t = f_h(h_{t-1}, g_t; \theta_h)$. The external input to the network is the glimpse feature vector $g_t$.

Actions: At each step, the agent performs two actions: it decides how to deploy its sensor via the sensor control $l_t$, and it selects an environment action $a_t$ which might affect the state of the environment. The nature of the environment action depends on the task. In this work, the location actions are chosen stochastically from a distribution parameterized by the location network $f_l(h_t; \theta_l)$ at time $t$: $l_t \sim p(\cdot \mid f_l(h_t; \theta_l))$. The environment action $a_t$ is similarly drawn from a distribution conditioned on a second network output, $a_t \sim p(\cdot \mid f_a(h_t; \theta_a))$. For classification it is formulated using a softmax output, and for dynamic environments its exact formulation depends on the action set defined for that particular environment (e.g. joystick movements, motor control, ...).

Reward: After executing an action, the agent receives a new visual observation of the environment $x_{t+1}$ and a reward signal $r_{t+1}$. The goal of the agent is to maximize the sum of the reward signal¹, $R = \sum_{t=1}^{T} r_t$, which is usually very sparse and delayed. In the case of object recognition, for example, $r_T = 1$ if the object is classified correctly after $T$ steps and $0$ otherwise.

The above setup is a special instance of what is known in the RL community as a Partially Observable Markov Decision Process (POMDP). The true state of the environment (which can be static or dynamic) is unobserved. In this view, the agent needs to learn a (stochastic) policy $\pi((l_t, a_t) \mid s_{1:t}; \theta)$ with parameters $\theta$ that, at each step $t$, maps the history of past interactions with the environment, $s_{1:t} = x_1, l_1, a_1, \ldots, x_{t-1}, l_{t-1}, a_{t-1}, x_t$, to a distribution over actions for the current time step, subject to the constraint of the sensor. In our case, the policy $\pi$ is defined by the RNN outlined above, and the history $s_{1:t}$ is summarized in the state of the hidden units $h_t$. We will describe the specific choices for the above components in Section 4.

3.2 Training

The parameters of our agent are given by the parameters of the glimpse network, the core network (Fig. 1C), the location network, and the action network, $\theta = \{\theta_g, \theta_h, \theta_l, \theta_a\}$, and we learn these to maximize the total reward the agent can expect when interacting with the environment.

More formally, the policy of the agent, possibly in combination with the dynamics of the environment (e.g. for game-playing), induces a distribution over possible interaction sequences $s_{1:T}$, and we aim to maximize the reward under this distribution:

$$J(\theta) = \mathbb{E}_{p(s_{1:T}; \theta)}\left[\sum_{t=1}^{T} r_t\right] = \mathbb{E}_{p(s_{1:T}; \theta)}[R],$$

where $p(s_{1:T}; \theta)$ depends on the policy.

Maximizing $J$ exactly is non-trivial since it involves an expectation over the high-dimensional interaction sequences, which may in turn involve unknown environment dynamics. Viewing the problem as a POMDP, however, allows us to bring techniques from the RL literature to bear: as shown by Williams [26], a sample approximation to the gradient is given by

$$\nabla_\theta J = \sum_{t=1}^{T} \mathbb{E}_{p(s_{1:T}; \theta)}\left[\nabla_\theta \log \pi(u_t \mid s_{1:t}; \theta)\, R\right] \approx \frac{1}{M} \sum_{i=1}^{M} \sum_{t=1}^{T} \nabla_\theta \log \pi(u_t^i \mid s_{1:t}^i; \theta)\, R^i, \qquad (1)$$

where $u_t = (l_t, a_t)$ denotes the actions taken at step $t$, and the $s^i$ are interaction sequences obtained by running the current agent $\pi_\theta$ for $i = 1, \ldots, M$ episodes.
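A minimal sketch of the sample approximation in Eq. (1), on a hypothetical one-step toy task rather than any task from the paper: the only parameter is the mean $\mu$ of a Gaussian location policy with a fixed standard deviation $\sigma$, and the reward is 1 when the sampled location falls inside a target window. For a Gaussian, $\nabla_\mu \log \pi(l) = (l - \mu)/\sigma^2$, so the estimator only needs sampled rewards, never a derivative of the reward itself. The names `reinforce_gradient` and `train_toy_policy`, the target window, and all constants are illustrative assumptions.

```python
import random


def reinforce_gradient(mu, sigma, target, half_width, num_episodes, rng):
    """Sample estimate of dJ/dmu in the spirit of Eq. (1), for a toy task.

    Hypothetical one-step task: the agent samples a location
    l ~ N(mu, sigma^2) and receives R = 1 if |l - target| <= half_width,
    else R = 0. The estimate averages grad_mu log pi(l) * R over
    num_episodes (M) sampled episodes.
    """
    total = 0.0
    for _ in range(num_episodes):
        l = rng.gauss(mu, sigma)
        reward = 1.0 if abs(l - target) <= half_width else 0.0
        # Gradient of log N(l; mu, sigma^2) with respect to the mean mu.
        grad_log_pi = (l - mu) / sigma ** 2
        total += grad_log_pi * reward
    return total / num_episodes


def train_toy_policy(seed=0, mu=0.0, sigma=0.2, target=0.3, half_width=0.1,
                     steps=200, episodes_per_step=100, learning_rate=0.05):
    """Plain stochastic gradient ascent on the policy mean (no momentum)."""
    rng = random.Random(seed)
    for _ in range(steps):
        grad = reinforce_gradient(mu, sigma, target, half_width,
                                  episodes_per_step, rng)
        mu += learning_rate * grad
    return mu


print(f"learned mean: {train_toy_policy():.2f}")
```

Averaging over a batch of episodes per update plays the role of the $\frac{1}{M}\sum_i$ in Eq. (1); the sketch uses plain gradient ascent rather than the momentum SGD reported for the experiments.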
The learning rule (1) is also known as the REINFORCE rule. It involves running the agent with its current policy to obtain samples of interaction sequences $s_{1:T}$ and then adjusting the parameters $\theta$ of our agent such that the log-probability of chosen actions that have led to high cumulative reward is increased, while that of actions having produced low reward is decreased.

¹ Depending on the scenario, it may be more appropriate to consider a sum of discounted rewards, where rewards obtained in the distant future contribute less: $R = \sum_{t=1}^{T} \gamma^{t-1} r_t$. In this case we can have $T \to \infty$.

Eq. (1) requires us to compute $\nabla_\theta \log \pi(u_t^i \mid s_{1:t}^i; \theta)$. But this is just the gradient of the RNN that defines our agent evaluated at time step $t$, and it can be computed by standard backpropagation [25].

Variance Reduction: Equation (1) provides us with an unbiased estimate of the gradient, but it may have high variance. It is therefore common to consider a gradient estimate of the form

$$\frac{1}{M} \sum_{i=1}^{M} \sum_{t=1}^{T} \nabla_\theta \log \pi(u_t^i \mid s_{1:t}^i; \theta) \left(R_t^i - b_t\right), \qquad (2)$$

where $R_t^i = \sum_{t'=t}^{T} r_{t'}^i$ is the cumulative reward obtained following the execution of action $u_t^i$, and $b_t$ is a baseline that may depend on $s_{1:t}^i$ (e.g. via $h_t^i$) but not on the action $u_t^i$ itself. This estimate is equal to (1) in expectation but may have lower variance. It is natural to select $b_t = \mathbb{E}_\pi[R_t]$ [21]; this form of baseline is known as the value function in the reinforcement learning literature. The resulting algorithm increases the log-probability of an action that was followed by a larger than expected cumulative reward, and decreases the probability if the obtained cumulative reward was smaller. We use this type of baseline and learn it by reducing the squared error between the $R_t^i$'s and $b_t$.
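The quantities in Eq. (2) can be sketched as follows, assuming the simplest possible baseline: one learned scalar $b_t$ per time step, rather than a function of $h_t$ as the text allows. The sketch computes the undiscounted reward-to-go $R_t^i$, the advantages $R_t^i - b_t$ that would multiply the log-probability gradients, and one gradient step on the squared error between $R_t^i$ and $b_t$. The function names and the learning rate are hypothetical.

```python
def rewards_to_go(rewards):
    """R_t = r_t + r_{t+1} + ... + r_T (undiscounted), for one episode."""
    returns = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running += rewards[t]
        returns[t] = running
    return returns


def advantages_and_baseline_step(episodes, baseline, learning_rate):
    """Return the advantages R^i_t - b_t and an updated baseline.

    episodes: one list of rewards per episode, each of length T.
    baseline: T scalars b_t (hypothetical simplification: b_t does not
              depend on h_t).
    The baseline takes one gradient step on 0.5 * mean_i (R^i_t - b_t)^2
    for every t.
    """
    returns = [rewards_to_go(r) for r in episodes]
    advantages = [[R[t] - b for t, b in enumerate(baseline)] for R in returns]
    new_baseline = []
    for t, b in enumerate(baseline):
        # Negative gradient of the squared error with respect to b_t.
        mean_error = sum(R[t] - b for R in returns) / len(returns)
        new_baseline.append(b + learning_rate * mean_error)
    return advantages, new_baseline


# Three glimpses, reward only at the last step: one correct, one wrong episode.
advantages, baseline = advantages_and_baseline_step(
    [[0, 0, 1], [0, 0, 0]], [0.5, 0.5, 0.5], learning_rate=0.1)
print(advantages)  # [[0.5, 0.5, 0.5], [-0.5, -0.5, -0.5]]
print(baseline)    # [0.5, 0.5, 0.5]
```

With a baseline of 0.5, the correct episode's actions are pushed up and the wrong episode's actions are pushed down by the same amount, which is the "larger than expected" versus "smaller than expected" behavior described above.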
Using a Hybrid Supervised Loss: The algorithm described above allows us to train the agent when the “best” actions are unknown and the learning signal is only provided via the reward. For instance, we may not know a priori which sequence of fixations provides the most information about an unknown image, but the total reward at the end of an episode will give us an indication of whether the tried sequence was good or bad. However, in some situations we do know the correct action to take: for instance, in an object detection task the agent has to output the label of the object as the final action. For the training images this label will be known, and we can directly optimize the policy to output the correct label associated with a training image at the end of an observation sequence. This can be achieved, as is common in supervised learning, by maximizing the conditional probability of the true label given the observations from the image, i.e. by maximizing $\log \pi(a_T^* \mid s_{1:T}; \theta)$, where $a_T^*$ corresponds to the ground-truth label(-action) associated with the image from which observations $s_{1:T}$ were obtained. We follow this approach for classification problems, where we optimize the cross-entropy loss to train the action network $f_a$ and backpropagate the gradients through the core and glimpse networks. The location network $f_l$ is always trained with REINFORCE.

4 Experiments

We evaluated our approach on several image classification tasks as well as a simple game. We first describe the design choices that were common to all our experiments:

Retina and location encodings: The retina encoding $\rho(x, l)$ extracts $k$ square patches centered at location $l$, with the first patch being $g_w \times g_w$ pixels in size and each successive patch having twice the width of the previous one. The $k$ patches are then all resized to $g_w \times g_w$ and concatenated.
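A minimal sketch of the retina encoding $\rho(x, l)$, assuming a grayscale image stored as a list of rows, a glimpse centre given in pixel coordinates, zero padding outside the image, and average pooling as the resizing method (the text only says the patches are resized). Patch $i$ has width $g_w \cdot 2^i$ and is pooled by a factor of $2^i$, so every patch becomes $g_w \times g_w$ and the output always has $k \cdot g_w^2$ entries regardless of the image size. The function names are hypothetical.

```python
def extract_patch(image, center_row, center_col, size):
    """size x size crop centred on (center_row, center_col), zero-padded."""
    height, width = len(image), len(image[0])
    top, left = center_row - size // 2, center_col - size // 2
    patch = []
    for r in range(top, top + size):
        row = []
        for c in range(left, left + size):
            inside = 0 <= r < height and 0 <= c < width
            row.append(image[r][c] if inside else 0.0)
        patch.append(row)
    return patch


def average_pool(patch, factor):
    """Shrink a square patch by averaging non-overlapping factor x factor blocks."""
    out = len(patch) // factor
    return [[sum(patch[r * factor + i][c * factor + j]
                 for i in range(factor) for j in range(factor)) / factor ** 2
             for c in range(out)]
            for r in range(out)]


def retina(image, center_row, center_col, g_w, k):
    """Concatenate k patches of width g_w * 2**i, each resized to g_w x g_w."""
    features = []
    for i in range(k):
        factor = 2 ** i
        patch = extract_patch(image, center_row, center_col, g_w * factor)
        features.extend(v for row in average_pool(patch, factor) for v in row)
    return features


image = [[0.0] * 60 for _ in range(60)]
image[30][30] = 1.0                      # one bright pixel at the glimpse centre
glimpse = retina(image, center_row=30, center_col=30, g_w=12, k=3)
print(len(glimpse))                      # 432 = 3 * 12 * 12
print(max(glimpse[:144]), max(glimpse[144:288]), max(glimpse[288:]))  # 1.0 0.25 0.0625
```

The falling maxima across the three scales show the intended effect: the same bright pixel is seen at full resolution in the innermost patch and is increasingly blurred in the coarser outer patches.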
Glimpse locations $l$ were encoded as real-valued $(x, y)$ coordinates², with $(0, 0)$ being the center of the image $x$ and $(-1, -1)$ being the top left corner of $x$.

Glimpse network: The glimpse network $f_g(x, l)$ had two fully connected layers. Let $\mathrm{Linear}(x)$ denote a linear transformation of the vector $x$, i.e. $\mathrm{Linear}(x) = Wx + b$ for some weight matrix $W$ and bias vector $b$, and let $\mathrm{Rect}(x) = \max(x, 0)$ be the rectifier nonlinearity. The output $g$ of the glimpse network was defined as $g = \mathrm{Rect}(\mathrm{Linear}(h_g) + \mathrm{Linear}(h_l))$, where $h_g = \mathrm{Rect}(\mathrm{Linear}(\rho(x, l)))$ and $h_l = \mathrm{Rect}(\mathrm{Linear}(l))$. The dimensionality of $h_g$ and $h_l$ was 128, while the dimensionality of $g$ was 256 for all attention models trained in this paper.

Location network: The policy for the locations $l$ was defined by a two-component Gaussian with a fixed variance. The location network outputs the mean of the location policy at time $t$ and is defined as $f_l(h) = \mathrm{Linear}(h)$, where $h$ is the state of the core network/RNN.

Core network: For the classification experiments that follow, the core $f_h$ was a network of rectifier units defined as $h_t = f_h(h_{t-1}, g_t) = \mathrm{Rect}(\mathrm{Linear}(h_{t-1}) + \mathrm{Linear}(g_t))$. The experiment done on a dynamic environment used a core of LSTM units [10].

² We also experimented with using a discrete representation for the locations $l$ but found that it was difficult to learn policies over more than 25 possible discrete locations.
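The glimpse, core, and location networks described above can be chained into a single forward step. This is a sketch in plain Python with no automatic differentiation, so it covers only the forward pass. It uses the stated sizes (128 for $h_g$ and $h_l$, 256 for $g$ and for the rectifier core state) and a retina vector of 432 entries, matching a 12×12 retina with 3 scales. The uniform weight initialization, the policy standard deviation of 0.1, and the clipping of sampled locations to $[-1, 1]$ are assumptions; `RamStep` and the helper functions are hypothetical names.

```python
import math
import random


def linear(in_dim, out_dim, rng):
    """Return Linear(x) = W x + b; the uniform weight init is an assumption."""
    bound = 1.0 / math.sqrt(in_dim)
    weights = [[rng.uniform(-bound, bound) for _ in range(in_dim)]
               for _ in range(out_dim)]
    bias = [0.0] * out_dim

    def apply(x):
        return [sum(w * xi for w, xi in zip(row, x)) + b
                for row, b in zip(weights, bias)]

    return apply


def rect(x):
    """Rect(x) = max(x, 0), elementwise."""
    return [max(v, 0.0) for v in x]


def add(a, b):
    return [u + v for u, v in zip(a, b)]


class RamStep:
    """Forward pass of one RAM time step (hypothetical helper, no training)."""

    def __init__(self, retina_dim, rng, location_std=0.1):
        self.rng = rng
        self.location_std = location_std  # fixed policy std (assumed value)
        self.rho_to_hg = linear(retina_dim, 128, rng)
        self.loc_to_hl = linear(2, 128, rng)
        self.hg_to_g = linear(128, 256, rng)
        self.hl_to_g = linear(128, 256, rng)
        self.h_to_h = linear(256, 256, rng)
        self.g_to_h = linear(256, 256, rng)
        self.h_to_loc = linear(256, 2, rng)

    def __call__(self, rho, prev_location, prev_state):
        # Glimpse network: g = Rect(Linear(h_g) + Linear(h_l)).
        h_g = rect(self.rho_to_hg(rho))
        h_l = rect(self.loc_to_hl(prev_location))
        g = rect(add(self.hg_to_g(h_g), self.hl_to_g(h_l)))
        # Rectifier core: h_t = Rect(Linear(h_{t-1}) + Linear(g_t)).
        state = rect(add(self.h_to_h(prev_state), self.g_to_h(g)))
        # Location network outputs the Gaussian mean; sample with a fixed std.
        mean = self.h_to_loc(state)
        location = [self.rng.gauss(m, self.location_std) for m in mean]
        # Clipping to the image range [-1, 1] is an extra assumption.
        location = [min(max(v, -1.0), 1.0) for v in location]
        return g, state, location


step = RamStep(retina_dim=432, rng=random.Random(0))
g, h, loc = step([0.5] * 432, [0.0, 0.0], [0.0] * 256)
print(len(g), len(h), len(loc))  # 256 256 2
```

Because every layer size is fixed by $g_w$, $k$, and the hidden widths, the cost of one step is the same whether the underlying image is 60×60 or 100×100.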
(a) 28x28 MNIST

| Model | Error |
|---|---|
| FC, 2 layers (256 hiddens each) | 1.35% |
| 1 Random Glimpse, 8×8, 1 scale | 42.85% |
| RAM, 2 glimpses, 8×8, 1 scale | 6.27% |
| RAM, 3 glimpses, 8×8, 1 scale | 2.7% |
| RAM, 4 glimpses, 8×8, 1 scale | 1.73% |
| RAM, 5 glimpses, 8×8, 1 scale | 1.55% |
| RAM, 6 glimpses, 8×8, 1 scale | 1.29% |
| RAM, 7 glimpses, 8×8, 1 scale | 1.47% |

(b) 60x60 Translated MNIST

| Model | Error |
|---|---|
| FC, 2 layers (64 hiddens each) | 7.56% |
| FC, 2 layers (256 hiddens each) | 3.7% |
| Convolutional, 2 layers | 2.31% |
| RAM, 4 glimpses, 12×12, 3 scales | 2.29% |
| RAM, 6 glimpses, 12×12, 3 scales | 1.86% |
| RAM, 8 glimpses, 12×12, 3 scales | 1.84% |

Table 1: Classification results on the MNIST and Translated MNIST datasets. FC denotes a fully-connected network with two layers of rectifier units. The convolutional network had one layer of 8 10×10 filters with stride 5, followed by a fully connected layer with 256 units, with rectifiers after each layer. Instances of the attention model are labeled with the number of glimpses, the size of the retina, and the number of scales in the retina.

Figure 2: Examples of test cases for the Translated and Cluttered Translated MNIST tasks. (a) Random test cases for the Translated MNIST task. (b) Random test cases for the Cluttered Translated MNIST task.

4.1 Image Classification

The attention network used in the following classification experiments made a classification decision only at the last timestep $t = T$. The action network $f_a$ was simply a linear softmax classifier defined as $f_a(h) = \exp(\mathrm{Linear}(h))/Z$, where $Z$ is a normalizing constant. The RNN state vector $h$ had dimensionality 256. All methods were trained using stochastic gradient descent with momentum of 0.9. Hyperparameters such as the learning rate and the variance of the location policy were selected using random search [3]. The reward at the last time step was 1 if the agent classified correctly and 0 otherwise. The rewards for all other timesteps were 0.
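For classification, the final step combines the softmax action network, the terminal reward, and the supervised cross-entropy term of the hybrid loss. A sketch, assuming the logits $\mathrm{Linear}(h_T)$ have already been computed and that the predicted class is the most probable one (the model definition also allows sampling $a_T$ from the softmax); the logits and function names are hypothetical.

```python
import math


def softmax(logits):
    """f_a(h) = exp(Linear(h)) / Z, computed stably by subtracting the max."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def terminal_rewards(num_glimpses, predicted_label, true_label):
    """Reward 1 at the last step if correct, else 0; earlier steps get 0."""
    rewards = [0.0] * num_glimpses
    rewards[-1] = 1.0 if predicted_label == true_label else 0.0
    return rewards


def cross_entropy(probs, true_label):
    """Supervised loss -log pi(a*_T | s_{1:T}) used to train f_a."""
    return -math.log(probs[true_label])


logits = [2.0, 0.5, -1.0]  # hypothetical Linear(h_T) for 3 classes
probs = softmax(logits)
predicted = max(range(len(probs)), key=probs.__getitem__)
print(terminal_rewards(6, predicted, true_label=0))  # [0.0, 0.0, 0.0, 0.0, 0.0, 1.0]
print(round(cross_entropy(probs, true_label=0), 4))
```

The reward list feeds the REINFORCE update for the location network, while the cross-entropy term gives the action network a direct, lower-variance gradient.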
Centered Digits: We first tested the ability of our training method to learn successful glimpse policies by using it to train RAM models with up to 7 glimpses on the MNIST digits dataset. The “retina” for this experiment was simply an 8×8 patch, which is only big enough to capture a part of a digit; hence the experiment also tested the ability of RAM to combine information from multiple glimpses. Note that since the first glimpse is always random, the single-glimpse model is effectively a classifier that gets a single random 8×8 patch as input. We also trained a standard feedforward neural network with two hidden layers of 256 rectified linear units as a baseline. The error rates achieved by the different models on the test set are shown in Table 1a. We see that each additional glimpse improves the performance of RAM until it reaches its minimum with 6 glimpses, where it matches the performance of the fully connected model trained on the full 28×28 centered digits. This demonstrates that the model can successfully learn to combine information from multiple glimpses.

Non-Centered Digits: The second problem we considered was classifying non-centered digits. We created a new task called Translated MNIST, for which data was generated by placing an MNIST digit in a random location of a larger blank patch. Training cases were generated on the fly, so the effective training set size was 50000 (the size of the MNIST training set) multiplied by the possible number of locations. Figure 2a contains a random sample of test cases for the 60 by 60 Translated MNIST task. Table 1b shows the results for several different models trained on the Translated MNIST task with 60 by 60 patches. In addition to RAM and two fully-connected networks, we also trained a network with one convolutional layer of 16 10×10 filters with stride 5, followed by a rectifier nonlinearity and then a fully-connected layer of 256 rectifier units.
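A quick parameter count helps explain why the convolutional baseline's fully connected layer is sized differently for the two input sizes (256 units for 60×60 and 86 units for 100×100, per the Table 2 caption). The sketch assumes an unpadded ("valid") convolution over a single input channel, 8 filters as in the table captions (the text above says 16; the count is a parameter), and a 10-way linear output layer. These are assumptions, not confirmed details of the baseline, and the function names are hypothetical.

```python
def conv_output_side(input_side, filter_side=10, stride=5):
    """Output side length of an unpadded ("valid") strided convolution."""
    return (input_side - filter_side) // stride + 1


def conv_baseline_params(input_side, num_filters, hidden_units,
                         num_classes=10, filter_side=10, stride=5):
    """Count weights and biases: conv layer -> FC hidden layer -> output."""
    side = conv_output_side(input_side, filter_side, stride)
    flat_features = side * side * num_filters
    conv = num_filters * (filter_side * filter_side + 1)  # one input channel
    hidden = flat_features * hidden_units + hidden_units
    output = hidden_units * num_classes + num_classes      # assumed 10-way layer
    return flat_features, conv + hidden + output


for side, units in [(60, 256), (100, 86)]:
    features, total = conv_baseline_params(side, num_filters=8, hidden_units=units)
    print(side, features, total)
# 60 968 251442
# 100 2888 250132
```

Under these assumptions the flattened convolutional output grows from 968 to 2,888 features, so shrinking the hidden layer from 256 to 86 units keeps the total near 250,000 parameters in both cases.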
(a) 60x60 Cluttered Translated MNIST

| Model | Error |
|---|---|
| FC, 2 layers (64 hiddens each) | 28.96% |
| FC, 2 layers (256 hiddens each) | 13.2% |
| Convolutional, 2 layers | 7.83% |
| RAM, 4 glimpses, 12×12, 3 scales | 7.1% |
| RAM, 6 glimpses, 12×12, 3 scales | 5.88% |
| RAM, 8 glimpses, 12×12, 3 scales | 5.23% |

(b) 100x100 Cluttered Translated MNIST

| Model | Error |
|---|---|
| Convolutional, 2 layers | 16.51% |
| RAM, 4 glimpses, 12×12, 4 scales | 14.95% |
| RAM, 6 glimpses, 12×12, 4 scales | 11.58% |
| RAM, 8 glimpses, 12×12, 4 scales | 10.83% |

Table 2: Classification on the Cluttered Translated MNIST dataset. FC denotes a fully-connected network with two layers of rectifier units. The convolutional network had one layer of 8 10×10 filters with stride 5, followed by a fully connected layer with 256 units in the 60×60 case and 86 units in the 100×100 case, with rectifiers after each layer. Instances of the attention model are labeled with the number of glimpses, the size of the retina, and the number of scales in the retina. All models except for the big fully connected network had roughly the same number of parameters.

Figure 3: Examples of the learned policy on the 60×60 cluttered-translated MNIST task. Column 1: the input image with the glimpse path overlaid in green. Columns 2-7: the six glimpses the network chooses. The center of each image shows the full-resolution glimpse; the outer low-resolution areas are obtained by upscaling the low-resolution glimpses back to full image size. The glimpse paths clearly show that the learned policy avoids computation in empty or noisy parts of the input space and directly explores the area around the object of interest.

The convolutional network, the RAM networks, and the smaller fully connected model all had roughly the same number of parameters. Since the convolutional network has some degree of translation invariance built in, it attains a significantly lower error rate of 2.3% than the fully connected networks.
However, RAM with 4 glimpses gets roughly the same performance as the convolutional network and outperforms it for 6 and 8 glimpses, reaching roughly 1.9% error. This is possible because the attention model can focus its retina on the digit and hence learn a translation-invariant policy. This experiment also shows that the attention model is able to successfully search for an object in a big image when the object is not centered.

Cluttered Non-Centered Digits: One of the most challenging aspects of classifying real-world images is the presence of a wide range of clutter. Systems that operate on the entire image at full resolution are particularly susceptible to clutter and must learn to be invariant to it. One possible advantage of an attention mechanism is that it may make it easier to learn in the presence of clutter by focusing on the relevant part of the image and ignoring the irrelevant part. We test this hypothesis with several experiments on a new task we call Cluttered Translated MNIST. Data for this task was generated by first placing an MNIST digit in a random location of a larger blank image and then adding random 8 by 8 subpatches from other random MNIST digits to random locations of the image. The goal is to classify the complete digit present in the image. Figure 2b shows a random sample of test cases for the 60 by 60 Cluttered Translated MNIST task.

Table 2a shows the classification results for the models we trained on 60 by 60 Cluttered Translated MNIST with 4 pieces of clutter. The presence of clutter makes the task much more difficult, but the performance of the attention model is affected less than the performance of the other models. RAM with 4 glimpses reaches 7.1% error, which outperforms fully-connected models by a wide margin and the convolutional neural network by 0.7%, and RAM trained with 6 and 8 glimpses achieves even lower error.
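The Cluttered Translated MNIST generation procedure described above can be sketched as follows. Loading real MNIST data is out of scope, so digits are plain 2-D lists; combining overlapping pixels with an elementwise max is an assumption, as are the function names. Setting `num_clutter=0` gives the plain Translated MNIST task, and `size=100, num_clutter=8` gives the 100 by 100 variant.

```python
import random


def paste_max(canvas, patch, top, left):
    """Write patch into canvas at (top, left), keeping the brighter pixel
    where they overlap (the blending rule is an assumption)."""
    for r, row in enumerate(patch):
        for c, value in enumerate(row):
            canvas[top + r][left + c] = max(canvas[top + r][left + c], value)


def cluttered_translated_example(digit, distractors, size=60, num_clutter=4,
                                 clutter_side=8, rng=None):
    """Place `digit` at a random position on a blank size x size canvas, then
    add `num_clutter` random clutter_side x clutter_side crops taken from
    randomly chosen `distractors`, each at a random position."""
    if rng is None:
        rng = random.Random()
    canvas = [[0.0] * size for _ in range(size)]
    d = len(digit)
    paste_max(canvas, digit, rng.randrange(size - d + 1),
              rng.randrange(size - d + 1))
    for _ in range(num_clutter):
        source = rng.choice(distractors)
        s = len(source)
        r0 = rng.randrange(s - clutter_side + 1)
        c0 = rng.randrange(s - clutter_side + 1)
        crop = [row[c0:c0 + clutter_side] for row in source[r0:r0 + clutter_side]]
        paste_max(canvas, crop, rng.randrange(size - clutter_side + 1),
                  rng.randrange(size - clutter_side + 1))
    return canvas


rng = random.Random(0)
digit = [[1.0] * 28 for _ in range(28)]                        # stand-in digit
others = [[[0.5] * 28 for _ in range(28)] for _ in range(3)]   # stand-in distractors
plain = cluttered_translated_example(digit, others, num_clutter=0, rng=rng)
cluttered = cluttered_translated_example(digit, others, rng=rng)
print(len(plain), len(plain[0]), sum(map(sum, plain)))  # 60 60 784.0
```

Because examples are generated on the fly, each call yields a fresh position for the digit and fresh clutter, which is what makes the effective training set so much larger than MNIST itself.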
Since RAM achieves larger relative error improvements over a convolutional network in the presence of clutter, these results suggest that attention-based models may be better at dealing with clutter than convolutional networks, because they can simply ignore it by not looking at it. Two samples of the learned policy are shown in Figure 3, and more are included in the supplementary materials. The first column shows the original data point with the glimpse path overlaid. The location of the first glimpse is marked with a filled circle and the location of the final glimpse is marked with an empty circle. The intermediate points on the path are traced with solid straight lines. Each consecutive image to the right shows a representation of the glimpse that the network sees. It can be seen that the learned policy can reliably find and explore around the object of interest while avoiding clutter at the same time.

To further test this hypothesis, we also performed experiments on 100 by 100 Cluttered Translated MNIST with 8 pieces of clutter. The test errors achieved by the models we compared are shown in Table 2b. The results show similar improvements of RAM over a convolutional network. It has to be noted that the overall capacity and the amount of computation of our model do not change from 60×60 images to 100×100, whereas the hidden layer of the convolutional network that is connected to the linear layer grows linearly with the number of pixels in the input.

4.2 Dynamic Environments

One appealing property of the recurrent attention model is that it can be applied to videos or interactive problems with a visual input just as easily as to static image tasks. We test the ability of our approach to learn a control policy in a dynamic visual environment while perceiving the environment through a bandwidth-limited retina by training it to play a simple game.
The game is played on a 24 by 24 screen of binary pixels and involves two objects: a single pixel that represents a ball falling from the top of the screen while bouncing off the sides of the screen, and a two-pixel paddle positioned at the bottom of the screen, which the agent controls with the aim of catching the ball. When the falling pixel reaches the bottom of the screen, the agent gets a reward of 1 if the paddle overlaps with the ball and a reward of 0 otherwise. The game then restarts from the beginning. We trained the recurrent attention model to play the game of “Catch” using only the final reward as input. The network had a 6 by 6 retina at three scales as its input, which means that the agent had to capture the ball in the 6 by 6 highest-resolution region in order to know its precise position. In addition to the two location actions, the attention model had three game actions (left, right, and do nothing), and the action network f_a used a linear softmax to model a distribution over the game actions. We used a core network of 256 LSTM units.

We performed random search to find suitable hyper-parameters and trained each agent for 20 million frames. A video of the best agent, which catches the ball roughly 85% of the time, can be downloaded from http://www.cs.toronto.edu/~vmnih/docs/attention.mov. The video shows that the recurrent attention model learned to play the game by tracking the ball near the bottom of the screen. Since the agent was not in any way told to track the ball and was only rewarded for catching it, this result demonstrates the ability of the model to learn effective task-specific attention policies.

5 Discussion

This paper introduced a novel visual attention model that is formulated as a single recurrent neural network which takes a glimpse window as its input and uses the internal state of the network to select the next location to focus on as well as to generate control signals in a dynamic environment.
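The recurrence summarized above (a glimpse goes in, the internal state is updated, and the state selects the next location and a task action) can be illustrated with an untrained forward rollout. This is a minimal sketch with random weights, not the authors' implementation, and every name in it (`crop`, `init_params`, `rollout`, the weight keys) is hypothetical. It assumes: a plain tanh recurrent core in place of the LSTM used for Catch; a single-scale 6 × 6 crop as the glimpse; locations in [-1, 1] image coordinates starting at the centre; a Gaussian location policy with fixed standard deviation around a mean produced from the state; and, as a simplification, one action distribution emitted from the final state. It also returns the summed log-probability of the sampled locations, the term whose gradient a REINFORCE-style policy gradient estimator scales by the reward.

```python
import numpy as np


def crop(image, loc, size=6):
    """Single-scale size x size glimpse centred at loc in [-1, 1]^2 (zero padded)."""
    h, w = image.shape
    row = int(round((loc[0] + 1) / 2 * (h - 1)))
    col = int(round((loc[1] + 1) / 2 * (w - 1)))
    padded = np.pad(image, size, mode="constant")
    top, left = row + size - size // 2, col + size - size // 2
    return padded[top:top + size, left:left + size].ravel()


def init_params(hidden=32, size=6, n_actions=10, rng=None):
    """Small random weights; a trained model would learn these."""
    rng = np.random.default_rng() if rng is None else rng

    def rand(*shape):
        return 0.1 * rng.standard_normal(shape)

    return {"W_g": rand(hidden, size * size + 2), "W_h": rand(hidden, hidden),
            "W_l": rand(2, hidden), "W_a": rand(n_actions, hidden)}


def rollout(image, p, n_glimpses=4, sigma=0.1, rng=None):
    """Hypothetical RAM-style forward pass: glimpse -> state -> next location."""
    rng = np.random.default_rng() if rng is None else rng
    h = np.zeros(p["W_h"].shape[0])   # internal state of the core network
    loc = np.zeros(2)                  # assumption: first glimpse at the centre
    locations, log_prob = [], 0.0
    for _ in range(n_glimpses):
        locations.append(loc)
        g = np.concatenate([crop(image, loc), loc])  # glimpse plus where it was taken
        h = np.tanh(p["W_g"] @ g + p["W_h"] @ h)     # core update (tanh RNN, not LSTM)
        mean = np.tanh(p["W_l"] @ h)                 # location network output
        sample = mean + sigma * rng.standard_normal(2)
        # Log-density of the 2-D Gaussian location policy with fixed sigma.
        log_prob += (-0.5 * np.sum(((sample - mean) / sigma) ** 2)
                     - 2 * np.log(sigma * np.sqrt(2 * np.pi)))
        loc = np.clip(sample, -1.0, 1.0)             # simplification: keep inside the image
    logits = p["W_a"] @ h                            # action network: linear softmax
    probs = np.exp(logits - logits.max())
    return probs / probs.sum(), np.array(locations), log_prob


rng = np.random.default_rng(0)
params = init_params(rng=rng)
probs, locs, logp = rollout(rng.random((60, 60)), params, n_glimpses=6, rng=rng)
print(probs.shape, locs.shape)  # (10,) (6, 2)
```

Note that the weight shapes depend only on the glimpse size and the hidden size, never on the image size, which is the property the discussion below attributes to RAM.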
Although the model is not differentiable, the proposed unified architecture is trained end-to-end from pixel inputs to actions using a policy gradient method. The model has several appealing properties. First, both the number of parameters and the amount of computation RAM performs can be controlled independently of the size of the input images. Second, the model is able to ignore clutter present in an image by centering its retina on the relevant regions. Our experiments show that RAM significantly outperforms a convolutional architecture with a comparable number of parameters on a cluttered object classification task. Additionally, the flexibility of our approach allows for a number of interesting extensions. For example, the network can be augmented with another action that allows it to terminate at any time point and make a final classification decision. Our preliminary experiments show that this allows the network to learn to stop taking glimpses once it has enough information to make a confident classification. The network can also be allowed to control the scale at which the retina samples the image, allowing it to fit objects of different sizes in the fixed-size retina. In both cases, the extra actions can simply be added to the action network f_a and trained using the policy gradient procedure we have described. Given the encouraging results achieved by RAM, applying the model to large-scale object recognition and video classification is a natural direction for future work.

Supplementary Material

Figure 4: Examples of the learned policy on the 60 × 60 cluttered-translated MNIST task. Column 1: The input image from the MNIST test set with the glimpse path overlaid in green (correctly classified) or red (misclassified). Columns 2-7: The six glimpses the network chooses. The center of each image shows the full-resolution glimpse; the outer low-resolution areas are obtained by upscaling the low-resolution glimpses back to full image size.
The glimpse paths clearly show that the learned policy avoids computation in empty or noisy parts of the input space and directly explores the area around the object of interest.

Figure 5: Examples of the learned policy on the 60 × 60 cluttered-translated MNIST task. Column 1: The input image from the MNIST test set with the glimpse path overlaid in green (correctly classified) or red (misclassified). Columns 2-7: The six glimpses the network chooses. The center of each image shows the full-resolution glimpse; the outer low-resolution areas are obtained by upscaling the low-resolution glimpses back to full image size. The glimpse paths clearly show that the learned policy avoids computation in empty or noisy parts of the input space and directly explores the area around the object of interest.

Figure 6: Examples of the learned policy on the 60 × 60 cluttered-translated MNIST task. Column 1: The input image from the MNIST test set with the glimpse path overlaid in green (correctly classified) or red (misclassified). Columns 2-7: The six glimpses the network chooses. The center of each image shows the full-resolution glimpse; the outer low-resolution areas are obtained by upscaling the low-resolution glimpses back to full image size. The glimpse paths clearly show that the learned policy avoids computation in empty or noisy parts of the input space and directly explores the area around the object of interest.

References

[1] Bogdan Alexe, Thomas Deselaers, and Vittorio Ferrari. What is an object? In CVPR, 2010.
[2] Bogdan Alexe, Nicolas Heess, Yee Whye Teh, and Vittorio Ferrari. Searching for objects driven by context. In NIPS, 2012.
[3] James Bergstra and Yoshua Bengio. Random search for hyper-parameter optimization. The Journal of Machine Learning Research, 13:281–305, 2012.
[4] Nicholas J. Butko and Javier R. Movellan. Optimal scanning for faster object detection. In CVPR, 2009.
[5] N.J. Butko and J.R. Movellan. I-POMDP: An infomax model of eye movement. In Proceedings of the 7th IEEE International Conference on Development and Learning, ICDL '08, pages 139–144, 2008.
[6] Misha Denil, Loris Bazzani, Hugo Larochelle, and Nando de Freitas. Learning where to attend with deep architectures for image tracking. Neural Computation, 24(8):2151–2184, 2012.
[7] Pedro F. Felzenszwalb, Ross B. Girshick, and David A. McAllester. Cascade object detection with deformable part models. In CVPR, 2010.
[8] Ross B. Girshick, Jeff Donahue, Trevor Darrell, and Jitendra Malik. Rich feature hierarchies for accurate object detection and semantic segmentation. CoRR, abs/1311.2524, 2013.
[9] Mary Hayhoe and Dana Ballard. Eye movements in natural behavior. Trends in Cognitive Sciences, 9(4):188–194, 2005.
[10] Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.
[11] L. Itti, C. Koch, and E. Niebur. A model of saliency-based visual attention for rapid scene analysis. IEEE Transactions on Pattern Analysis and Machine Intelligence, 20(11):1254–1259, 1998.
[12] Alex Krizhevsky, Ilya Sutskever, and Geoff Hinton. Imagenet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 25, pages 1106–1114, 2012.
[13] Christoph H. Lampert, Matthew B. Blaschko, and Thomas Hofmann. Beyond sliding windows: Object localization by efficient subwindow search. In CVPR, 2008.
[14] Hugo Larochelle and Geoffrey E. Hinton. Learning to combine foveal glimpses with a third-order Boltzmann machine. In NIPS, 2010.
[15] Stefan Mathe and Cristian Sminchisescu. Action from still image dataset and inverse optimal control to learn task specific visual scanpaths. In NIPS, 2013.
[16] Lucas Paletta, Gerald Fritz, and Christin Seifert. Q-learning of sequential attention for visual object recognition from informative local descriptors. In CVPR, 2005.
[17] M. Ranzato. On Learning Where To Look. ArXiv e-prints, 2014.
[18] Ronald A. Rensink. The dynamic representation of scenes. Visual Cognition, 7(1-3):17–42, 2000.
[19] Pierre Sermanet, David Eigen, Xiang Zhang, Michaël Mathieu, Rob Fergus, and Yann LeCun. Overfeat: Integrated recognition, localization and detection using convolutional networks. CoRR, abs/1312.6229, 2013.
[20] Kenneth O. Stanley and Risto Miikkulainen. Evolving a roving eye for go. In GECCO, 2004.
[21] Richard S. Sutton, David McAllester, Satinder Singh, and Yishay Mansour. Policy gradient methods for reinforcement learning with function approximation. In NIPS, pages 1057–1063. MIT Press, 2000.
[22] Antonio Torralba, Aude Oliva, Monica S. Castelhano, and John M. Henderson. Contextual guidance of eye movements and attention in real-world scenes: the role of global features in object search. Psychol Rev, pages 766–786, 2006.
[23] K.E.A. van de Sande, J.R.R. Uijlings, T. Gevers, and A.W.M. Smeulders. Segmentation as Selective Search for Object Recognition. In ICCV, 2011.
[24] Paul A. Viola and Michael J. Jones. Rapid object detection using a boosted cascade of simple features. In CVPR, 2001.
[25] Daan Wierstra, Alexander Foerster, Jan Peters, and Juergen Schmidhuber. Solving deep memory POMDPs with recurrent policy gradients. In ICANN, 2007.
[26] R.J. Williams. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine Learning, 8(3):229–256, 1992.
