Primitives for Dynamic Big Model Parallelism

When training large machine learning models with many variables or parameters, a single machine is often inadequate since the model may be too large to fit in memory, while training can take a long time even with stochastic updates. A natural recours…

Authors: Seunghak Lee, Jin Kyu Kim, Xun Zheng

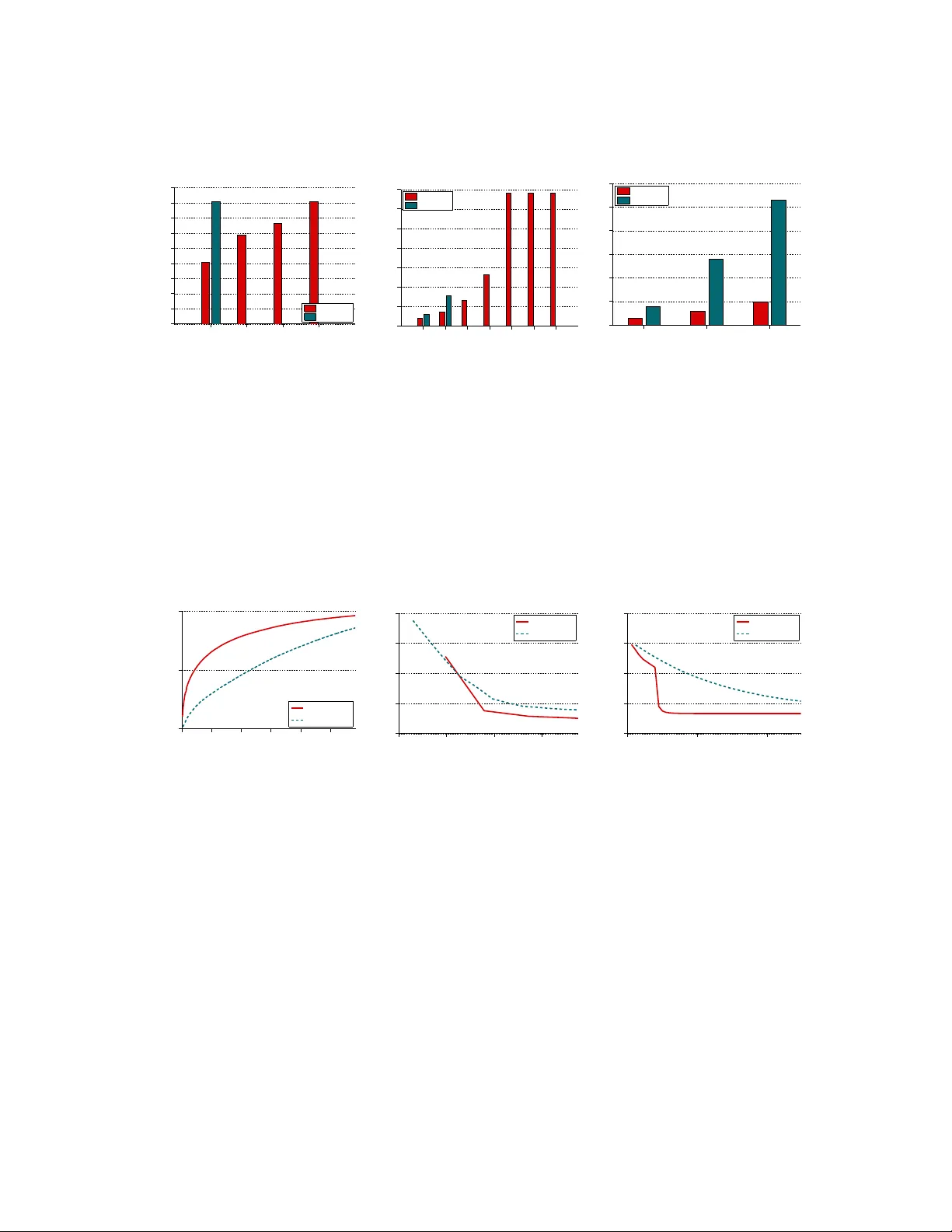

Seunghak Lee, Jin Kyu Kim, Xun Zheng, Qirong Ho, Garth A. Gibson, Eric P. Xing*
School of Computer Science, Carnegie Mellon University, Pittsburgh, PA, U.S.A.
*email: epxing@cs.cmu.edu
November 21, 2021

Abstract

When training large machine learning models with many variables or parameters, a single machine is often inadequate since the model may be too large to fit in memory, while training can take a long time even with stochastic updates. A natural recourse is to turn to distributed cluster computing, in order to harness additional memory and processors. However, naive, unstructured parallelization of ML algorithms can make inefficient use of distributed memory, while failing to obtain proportional convergence speedups, or can even result in divergence. We develop a framework of primitives for dynamic model-parallelism, STRADS, in order to explore partitioning and update scheduling of model variables in distributed ML algorithms, thus improving their memory efficiency while presenting new opportunities to speed up convergence without compromising inference correctness. We demonstrate the efficacy of model-parallel algorithms implemented in STRADS versus popular implementations for Topic Modeling, Matrix Factorization and Lasso.

1. INTRODUCTION

Sensory techniques and digital storage media have improved at a breakneck pace, leading to massive “Big Data” collections that have been the focus of recent efforts to achieve scalable machine learning (ML).
Numerous data-parallel algorithmic and system solutions, both heuristic and principled, have been proposed to speed up inference on Big Data [6, 14, 16, 22]; however, large-scale ML also encompasses Big Model problems [7], in which models with millions if not billions of variables and/or parameters (such as in deep networks [5] or large-scale topic models [17]) must be estimated from big (or even modestly-sized) datasets. These Big Model problems seem to have received less attention in ML communities, which, in turn, has limited their application to real-world problems.

Big Model problems are challenging because a large number of model variables must be efficiently updated until model convergence. Data-parallel algorithms such as stochastic gradient descent [24] concurrently update all model variables given a subset of data samples, but this requires every worker to have full access to all global variables, which can be very large, such as the billions of variables in Deep Neural Networks [5], or this paper's large-scale topic model with 22M bigrams by 10K topics (200 billion variables) and matrix factorization with rank 2K on a 480K-by-10K matrix (1B variables). Furthermore, data-parallelism does not consider the possibility that some variables may be more important than others for algorithm convergence, a point that we shall demonstrate through our Lasso implementation (run on 100M coefficients).

On the other hand, model-parallel algorithms such as coordinate descent [4] are well-suited to Big Model problems, because parallel workers focus on subsets of model variables. This allows the variable space to be partitioned for memory efficiency, and also allows some variables to be prioritized over others.
However, model-parallel algorithms are usually developed for a specific application such as Matrix Factorization [9] or Lasso [4]; thus, there is utility in developing programming primitives that can tackle the common challenges of Big Model problems, while also exposing new opportunities such as variable prioritization.

Existing distributed frameworks such as MapReduce [6] and GraphLab [14] have shown that common primitives such as Map/Reduce or Gather/Apply/Scatter can be applied to a variety of ML applications. Crucially, these frameworks automatically decide which variable to update next: MapReduce executes all Mappers at the same time, followed by all Reducers, while GraphLab chooses the next node based on its “chromatic engine” and the user's choice of graph consistency model. While such automatic scheduling is convenient, it does not offer the fine-grained control needed to avoid parallelization of variables with subtle interdependencies not seen in the superficial problem or graph structure (which can then lead to algorithm divergence, as in Lasso [4]). Moreover, it does not allow users to explicitly prioritize variables based on new criteria.

Table 1: Summary of LDA, MF, and Lasso on STRADS (detailed pseudocode is in the relevant sections).

| Application | Schedule | Push and Pull | Largest STRADS experiment |
|---|---|---|---|
| Topic Modeling (LDA) | Word rotation scheduling | Collapsed Gibbs sampling | 10K topics, 3.9M docs with 21.8M vocab |
| MF | Round-robin scheduling | Coordinate descent | rank-2K, 480K-by-10K matrix |
| Lasso | Dynamic priority scheduling | Coordinate descent | 100M features, 50K samples |

To improve upon these frameworks, we develop new primitives for dynamic Big Model parallelism: schedule, push and pull, which are executed by our STRADS system (STRucture-Aware Dynamic Scheduler).
These primitives are inspired by the simplicity and wide applicability of MapReduce, but also provide the fine control needed to explore novel ways of performing dynamic model-parallelism. Schedule specifies the next subset of model variables to be updated in parallel, push specifies how individual workers compute partial results on those variables, and pull specifies how those partial results are aggregated to perform the full variable update. A final “automatic primitive”, sync, ensures that distributed workers have up-to-date values of the model variables, and is automatically executed at the end of pull; the user does not need to implement sync.

To explore the utility of STRADS, we implement schedule, push and pull for three popular ML applications (Table 1): Topic Modeling (LDA), Lasso, and Matrix Factorization (MF). Our goal is not to best specialized implementations in performance, but to demonstrate that STRADS primitives enable Big Model problems to be solved with modest programming effort. In particular, we tackle topic modeling with 3.9M docs, 10K topics and 21.8M vocabulary (200B variables), MF with rank-2K on a 480K-by-10K matrix (1B variables), and Lasso with 100M features (100M variables).

[Figure 1: High-level view of our STRADS primitives for dynamic model parallelism. Diagram labels: Master, Worker, Schedule, Push + pull, Sync; the masters dispatch variables $\{x_j\}$, the workers return partial results $\{z_j^i\}$, and the aggregate $x_j \leftarrow \sum_i z_j^i$ is synced back as $\{x_j\}$.]

2. PRIMITIVES FOR DYNAMIC MODEL PARALLELISM

“Model parallelism” refers to parallelization of an ML algorithm over the space of shared model variables, rather than the space of (usually i.i.d.) data samples. At a high level, model variables are the changing intermediate quantities that an ML algorithm iteratively updates, until convergence is reached.
For example, the coefficients in regression are model variables, which are iteratively updated using algorithmic strategies like coordinate descent.

Model parallelism can be contrasted with data parallelism, in which the ML algorithm is parallelized over individual data samples, such as in stochastic optimization algorithms [25]. A key advantage of the model-parallel approach is that it explicitly partitions the model variables into subsets, allowing ML problems with massive model spaces to be tackled on machines with limited memory. Figure 3 shows this advantage: for topic modeling, STRADS uses less memory per machine as the number of machines increases, unlike the data-parallel YahooLDA algorithm. As our experiments will confirm, this means that STRADS can handle larger ML models (given sufficient machines), whereas YahooLDA is strictly constrained by the memory of the smallest machine. This has practical consequences: STRADS LDA can handle bigram vocabularies with over 20 million term-pairs on modest hardware (enabling large-scale topic modeling applications), while YahooLDA cannot.

    // Generic STRADS application
    schedule() {
      // Select U vars x[j] to be sent
      // to the workers for updating
      ...
      return (x[j_1], ..., x[j_U])
    }
    push(worker = p, vars = (x[j_1],...,x[j_U])) {
      // Compute partial update z for U vars x[j]
      // at worker p
      ...
      return z
    }
    pull(workers = [p], vars = (x[j_1],...,x[j_U]),
         updates = [z]) {
      // Use partial updates z from workers p to
      // update U vars x[j]. sync() is automatic.
      ...
    }

Figure 2: STRADS user-defined primitives: schedule, push, pull. We show the basic functional signature of each primitive, using pseudocode.

To enable users to systematically and programmatically exploit model parallelism, our proposed STRADS framework defines a set of primitives.
Similar to the map-reduce paradigm, these primitives are functions that a user writes for their ML problem, and STRADS repeatedly executes these functions to create an iterative model-parallel algorithm (Figures 1, 2). Our primitives are schedule, push and pull, and a single “round” or iteration of STRADS executes them in that order. In addition, there is an automatic primitive, sync, which the user does not have to write.

[Figure 3: Topic Modeling: memory usage per machine, for model-parallelism (STRADS) vs. data-parallelism (YahooLDA), with 2.5M vocab and 10K topics. x-axis: number of machines (16, 32, 64, 128); y-axis: memory usage (M). With more machines, STRADS LDA uses less memory per machine, because it explicitly partitions the model space.]

Schedule: This primitive determines the parallel order for updating model variables; as shown in Figure 2, schedule selects U model variables to be dispatched for updates (Figure 1). Within the schedule function, the programmer may access all data D and all model variables x, in order to decide which U variables to dispatch. The simplest possible schedule is to select model variables according to a fixed sequence, or to draw them uniformly at random. As we shall later see, schedule also allows model variables to be selected in a way that: (1) dynamically focuses workers on the fastest-converging variables, while avoiding already-converged variables; (2) avoids parallel dispatch of variables with inter-dependencies, which can lead to divergence and incorrect execution.

Push and Pull: These primitives control the flow of model variables x and data D from the master scheduler machine(s) to and from the workers (Figure 1). The push primitive dispatches a set of variables $\{x_{j_1}, \ldots, x_{j_U}\}$ to each worker p, which then computes a partial update z for $\{x_{j_1}, \ldots, x_{j_U}\}$ (or a subset of it).
When writing push, the user can take advantage of data partitioning: e.g., when only a fraction $1/P$ of the data samples are stored at each worker, the p-th worker should compute partial results $z_j^p = \sum_{D_i} f_{x_j}(D_i)$ by iterating over its $1/P$ share of the data points $D_i$. The pull primitive is used to aggregate the partial results $\{z_j^p\}$ from all workers, and commit them to the variables $\{x_{j_1}, \ldots, x_{j_U}\}$. Our STRADS LDA, Lasso and MF applications partition the data samples uniformly over machines.

Synchronization: The model variables x are globally accessible through a distributed, partitioned key-value store (represented by standard arrays in our pseudocode). Sync is a built-in primitive that ensures all push workers can access up-to-date model variables, and is automatically executed whenever pull writes to any variable x[j]. The user does not need to implement sync. A variety of key-value store synchronization schemes exist, such as Bulk Synchronous Parallel (BSP), Stale Synchronous Parallel (SSP) [13], and Asynchronous Parallel (AP). Each presents a different trade-off: BSP is simple and correct but easily bottlenecked by slow workers, AP is usually effective but risks algorithmic errors and divergence because it has no error guarantees, and SSP is fast and guaranteed to converge but requires more engineering work and parameter tuning. In this paper, we use BSP for sync throughout; we leave the use of alternative schemes like SSP or AP as future work.

3. HARNESSING MODEL-PARALLELISM IN ML APPLICATIONS THROUGH STRADS

In this section, we shall explore how users can apply model-parallelism to their own ML applications, using the STRADS primitives. We shall cover 3 ML application case studies, with the intent of showing that model-parallelism in STRADS can be simple and effective, yet also powerful enough to expose new and interesting opportunities for speeding up distributed ML.
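Before turning to the case studies, the interplay of schedule, push, pull and a BSP-style sync can be illustrated with a minimal single-process sketch in Python. This is not the STRADS implementation: the driver `run_rounds`, the toy task (computing column means of a row-partitioned data matrix), and every function name below are assumptions made for illustration, and the workers are simulated by a sequential loop rather than by separate machines.

```python
from typing import Callable, List, Sequence


def run_rounds(schedule: Callable[[], List[int]],
               push: Callable[[int, List[int]], List[float]],
               pull: Callable[[List[int], List[List[float]]], None],
               num_workers: int, num_rounds: int) -> None:
    """Hypothetical driver: one round = schedule -> push on every worker -> pull."""
    for _ in range(num_rounds):
        var_ids = schedule()
        # On a cluster the pushes would run in parallel. Under BSP, pull starts
        # only after every worker has returned its partial result.
        partials = [push(p, var_ids) for p in range(num_workers)]
        pull(var_ids, partials)  # writing x[j] here stands in for the automatic sync


def column_means(data: Sequence[Sequence[float]], num_workers: int,
                 vars_per_round: int) -> List[float]:
    """Toy model: variable x[j] is the mean of column j; rows are split over workers."""
    n_rows, n_cols = len(data), len(data[0])
    shards = [data[p::num_workers] for p in range(num_workers)]  # data partitioning
    x = [0.0] * n_cols
    cursor = [0]  # position of the fixed-sequence schedule

    def schedule() -> List[int]:
        # Simplest schedule: walk through the variables in a fixed sequence.
        ids = [(cursor[0] + a) % n_cols for a in range(vars_per_round)]
        cursor[0] = (cursor[0] + vars_per_round) % n_cols
        return ids

    def push(p: int, var_ids: List[int]) -> List[float]:
        # Partial sums over the rows stored at worker p only.
        return [sum(row[j] for row in shards[p]) for j in var_ids]

    def pull(var_ids: List[int], partials: List[List[float]]) -> None:
        # Aggregate the partial sums and commit the full update.
        for a, j in enumerate(var_ids):
            x[j] = sum(z[a] for z in partials) / n_rows

    num_rounds = -(-n_cols // vars_per_round)  # ceiling division: touch every variable
    run_rounds(schedule, push, pull, num_workers, num_rounds)
    return x


if __name__ == "__main__":
    rows = [[1, 2, 3], [3, 4, 5], [5, 6, 7], [7, 8, 9]]
    print(column_means(rows, num_workers=2, vars_per_round=2))
```

The point of the sketch is the division of labor: schedule sees the whole model, push sees only one worker's data shard, and pull is the only place where model variables are written.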
3.1 Latent Dirichlet Allocation (LDA)

We introduce STRADS programming through topic modeling via LDA [3]. Big LDA models provide a strong use case for model-parallelism: when thousands of topics and millions of words are used, the LDA model contains billions of global variables, and data-parallel implementations face the difficult challenge of providing access to all these variables; in contrast, model-parallelism explicitly divides up the variables, so that workers only need to access a fraction at a given time.

Formally, LDA takes a corpus of N documents as input, and outputs K topics (each topic is just a categorical distribution over all V unique words in the corpus) as well as N topic vectors of dimension K (soft assignments of topics to documents). The LDA model is

$$P(W \mid Z, \theta, \beta) = \prod_{i=1}^{N} \prod_{j=1}^{M_i} P(w_{ij} \mid z_{ij}, \beta)\, P(z_{ij} \mid \theta_i),$$

where (1) $w_{ij}$ is the j-th token (word position) in the i-th document, (2) $M_i$ is the number of tokens in document i, (3) $z_{ij}$ is the topic assignment for $w_{ij}$, (4) $\theta_i$ is the topic vector for document i, and (5) $\beta$ is a matrix representing the K topics, each of dimension V. LDA is commonly reformulated as a “collapsed” model [12] in which $\theta, \beta$ are integrated out for faster inference. Inference is performed using Gibbs sampling, where each $z_{ij}$ is sampled in turn according to its distribution conditioned on all other variables, $P(z_{ij} \mid W, Z_{-ij})$. To perform this computation without having to iterate over all W, Z, sufficient statistics are kept in the form of a “doc-topic” table D (analogous to $\theta$), and a “word-topic” table B (analogous to $\beta$). More precisely, $D_{ik}$ counts the number of assignments $z_{ij} = k$ in doc i, while $B_{vk}$ counts the number of tokens $w_{ij} = v$ such that $z_{ij} = k$.

STRADS implementation: In order to perform model-parallelism, we first identify the model variables, and create a schedule strategy over them.
In LDA, the assignments $z_{ij}$ are the model variables, while D, B are summary statistics over the $z_{ij}$ that are used to speed up the sampler. Our schedule strategy equally divides the V words into U subsets $V_1, \ldots, V_U$ (where U is the number of workers). Each worker will only process words from one subset $V_a$ at a time. Subsequent invocations of schedule will “rotate” subsets amongst workers, so that every worker touches all U subsets every U invocations. For data partitioning, we divide the document tokens W evenly across workers, and denote worker p's set of tokens by $W_{q_p}$.

During push, suppose that worker p is assigned to subset $V_a$ by schedule. This worker will only Gibbs sample the topic assignments $z_{ij}$ such that (1) $(i, j) \in W_{q_p}$ and (2) $w_{ij} \in V_a$. In other words, $w_{ij}$ must be assigned to worker p, and must also be a word in $V_a$. The latter condition is the source of model-parallelism: observe how the assignments $z_{ij}$ are chosen for sampling based on word divisions $V_a$. Note that all $z_{ij}$ will be sampled exactly once after U invocations of schedule.

    // STRADS LDA
    schedule() {
      dispatch = []                    // Empty list
      for a=1..U                       // Rotation scheduling
        idx = ((a+C-1) mod U) + 1
        dispatch.append( V[q_idx] )
      return dispatch
    }
    push(worker = p, vars = [V_1, ..., V_U]) {
      t = []                           // Empty list
      for (i,j) in W[q_p]              // Fast Gibbs sampling
        if w[i,j] in V_p
          t.append( (i,j,f_1(i,j,D,B)) )
      return t
    }
    pull(workers = [p], vars = [V_1, ..., V_U],
         updates = [t]) {
      for all (i,j)                    // Update sufficient stats
        (D,B) = f_2([t])
    }

Figure 4: STRADS LDA pseudocode. Definitions for $f_1$, $f_2$, $q_p$ are in the text. C is a global model variable.

We use the fast Gibbs sampler from [20] to push update $z_{ij} \leftarrow f_1(i, j, D, B)$, where $f_1(\cdot)$ represents the fast Gibbs sampler equation. The pull step simply updates the sufficient statistics D, B using the new $z_{ij}$, and we represent this procedure as a function $(D, B) \leftarrow f_2([z_{ij}])$.
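The rotation property of the LDA schedule (every worker touches all U word subsets every U invocations, and no two workers share a subset within a round) can be checked with a short Python sketch. The sketch is an illustration under assumptions of its own: it uses 0-based indices rather than the 1-based indices of the pseudocode, it splits the vocabulary into contiguous ranges, and the function names are hypothetical.

```python
from typing import List


def partition_vocab(vocab_size: int, num_workers: int) -> List[range]:
    """Split word ids 0..V-1 into U nearly equal contiguous subsets (assumed layout)."""
    bounds = [vocab_size * a // num_workers for a in range(num_workers + 1)]
    return [range(bounds[a], bounds[a + 1]) for a in range(num_workers)]


def rotation_schedule(num_workers: int, round_index: int) -> List[int]:
    """For each worker p, return the index of the word subset it samples this round.

    round_index plays the role of the global counter C in the pseudocode.
    """
    return [(p + round_index) % num_workers for p in range(num_workers)]


def check_rotation(num_workers: int) -> bool:
    """Verify disjointness within a round and full coverage after U rounds."""
    seen = [set() for _ in range(num_workers)]
    for c in range(num_workers):
        assignment = rotation_schedule(num_workers, c)
        # Within one round the subsets are a permutation: no subset is shared.
        if sorted(assignment) != list(range(num_workers)):
            return False
        for p, subset_index in enumerate(assignment):
            seen[p].add(subset_index)
    # After U rounds every worker has visited every subset exactly once.
    return all(s == set(range(num_workers)) for s in seen)


if __name__ == "__main__":
    print([len(r) for r in partition_vocab(10, 3)])
    print(rotation_schedule(4, 1))
    print(check_rotation(4))
```

Because each round is a permutation of the subsets, a token whose word lies in subset a is sampled only in the one round where its worker holds subset a, which is why each assignment is sampled exactly once per U rounds.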
Figure 4 provides pseudocode for STRADS LDA.

Model parallelism results in low error: Parallel Gibbs sampling is not generally guaranteed to converge [11], unless the variables being parallel-sampled are conditionally independent of each other. Because STRADS LDA assigns workers to disjoint words V and documents $w_{ij}$, each worker's variables $z_{ij}$ are (almost) conditionally independent of other workers, except for a single shared dependency: the column sums of B (denoted by s, and stored as an extra row appended to B), which are required for correct normalization of the Gibbs sampler conditional distributions in $f_1()$. The column sums s are synced at the end of every pull, but will go out-of-sync during worker pushes. To understand how error in s affects sampler convergence, consider the Gibbs sampling conditional distribution for a topic indicator $z_{ij}$:

$$P(z_{ij} \mid W, Z_{-ij}) \propto P(w_{ij} \mid z_{ij}, W_{-ij}, Z_{-ij})\, P(z_{ij} \mid Z_{-ij}) = \frac{\gamma + B_{w_{ij}, z_{ij}}}{V\gamma + \sum_{v=1}^{V} B_{v, z_{ij}}} \times \frac{\alpha + D_{i, z_{ij}}}{K\alpha + \sum_{k=1}^{K} D_{i,k}}.$$

In the first term, the denominator quantity $\sum_{v=1}^{V} B_{v, z_{ij}}$ is exactly the sum over the $z_{ij}$-th column of B, i.e. $s_{z_{ij}}$. Thus, errors in s induce errors in the probability distribution $U_{w_{ij}} \sim P(w_{ij} \mid z_{ij}, W_{-ij}, Z_{-ij})$, which is just the discrete probability that topic $z_{ij}$ will generate word $w_{ij}$. As a proxy for the error in U, we can measure the difference between the true s and its local copy $\tilde{s}_p$ on worker p. If $s = \tilde{s}_p$, then U has zero error. We can show that the error in s is empirically negligible (and hence the error in U is also small).

[Figure 5: STRADS LDA: s-error $\Delta_t$ at each iteration, on the Wikipedia unigram dataset with K = 5000 and 64 machines (2.5M vocab). x-axis: iteration (0 to 300); y-axis: s-error.]
Consider a single STRADS LDA iteration t, and define its s-error to be

$$\Delta_t = \frac{1}{PM} \sum_{p=1}^{P} \|\tilde{s}_p - s\|_1, \tag{1}$$

where P is the number of workers and M is the total number of tokens $w_{ij}$. The s-error $\Delta_t$ must lie in [0, 2], where 0 means no error. Figure 5 plots the s-error for the “Wikipedia unigram” dataset (refer to our experiments section for details), for K = 5000 topics and 64 machines (128 processor cores total). The s-error is ≤ 0.002 throughout, confirming that STRADS LDA exhibits very small parallelization error.

3.2 Matrix Factorization (MF)

STRADS's model-parallelism benefits other models as well: we now consider Matrix Factorization (collaborative filtering), which can be used to predict users' unknown preferences, given their known preferences and the preferences of others. While most MF implementations tend to focus on small decompositions with rank K ≈ 100 [23, 9, 21], we are interested in enabling larger decompositions with rank > 1000, where the much larger factors (billions of variables) pose a challenge for purely data-parallel algorithms (such as naive SGD) that need to share all variables across all workers; again, STRADS addresses this by explicitly dividing variables across workers.

Formally, MF takes an incomplete matrix $A \in \mathbb{R}^{N \times M}$ as input, where N is the number of users, and M is the number of items/preferences. The idea is to discover rank-K matrices $W \in \mathbb{R}^{N \times K}$ and $H \in \mathbb{R}^{K \times M}$ such that $WH \approx A$. Thus, the product WH can be used to predict the missing entries (user preferences). Let $\Omega$ be the set of indices of observed entries in A, let $\Omega^i$ be the set of observed column indices in the i-th row of A, and let $\Omega_j$ be the set of observed row indices in the j-th column of A. Then, the MF task is defined as an optimization problem:

$$\min_{W, H} \sum_{(i,j) \in \Omega} (a^i_j - w^i h_j)^2 + \lambda \left( \|W\|_F^2 + \|H\|_F^2 \right). \tag{2}$$

This can be solved using parallel CD [21], with the following update rule for H:

$$(h^k_j)^{(t)} \leftarrow \frac{\sum_{i \in \Omega_j} \left\{ r^i_j + (w^i_k)^{(t-1)} (h^k_j)^{(t-1)} \right\} (w^i_k)^{(t-1)}}{\lambda + \sum_{i \in \Omega_j} \left\{ (w^i_k)^{(t-1)} \right\}^2}, \tag{3}$$

where $r^i_j = a^i_j - (w^i)^{(t-1)} (h_j)^{(t-1)}$ for all $(i, j) \in \Omega$, and a similar rule holds for W.

STRADS implementation: Our schedule strategy is to partition the rows of A into U disjoint index sets $q_p$, and the columns of A into U disjoint index sets $r_p$. We then dispatch the model variables W, H in round-robin fashion, according to these sets $q_p$, $r_p$. To update elements of W, each worker p computes partial updates on its assigned columns $r_p$ of A and H, and analogously for H and rows $q_p$ of A and W. The sets $q_p$, $r_p$ also tie neatly into data partitioning: we merely have to divide A into U pairs of submatrices (where U is the number of workers), and store the submatrices $A_{q_p}$ and $A_{r_p}$ at the p-th worker.

Consider the push update for H (the case for W is similar). To parallel-update a specific element $(h^k_j)^{(t)}$, we need $(w^i_k)^{(t-1)}$ for all $i \in \Omega_j$, and $(h_j)^{(t-1)}$. We then compute

$$(a^k_j)^{(t)}_p \leftarrow g_1(k, j, p) := \sum_{i \in (\Omega_j)_p} \left\{ r^i_j + (w^i_k)^{(t-1)} (h^k_j)^{(t-1)} \right\} (w^i_k)^{(t-1)},$$

$$(b^k_j)^{(t)}_p \leftarrow g_2(k, j, p) := \sum_{i \in (\Omega_j)_p} \left\{ (w^i_k)^{(t-1)} \right\}^2,$$

where $(\Omega_j)_p$ are the (observed) elements of column $A_j$ in worker p's row-submatrix $A_{q_p}$. Finally, pull aggregates the updates:

$$(h^k_j)^{(t)} \leftarrow g_3\big(k, j, [(a^k_j)^{(t)}_p, (b^k_j)^{(t)}_p]\big) := \frac{\sum_{p=1}^{U} (a^k_j)^{(t)}_p}{\lambda + \sum_{p=1}^{U} (b^k_j)^{(t)}_p},$$

with a similar definition for updating W using $(w^i_k)^{(t)} \leftarrow f_3()$ and $f_1(i, k, p)$, $f_2(i, k, p)$. This push-pull scheme is free from parallelization error: when W are updated by push, they are mutually independent because H is held fixed, and vice-versa. Figure 6 shows the STRADS MF pseudocode.
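To make the split of the H-update into per-worker partial sums concrete, the following Python sketch computes the numerator and denominator pieces of update rule (3) on each worker's rows and then aggregates them. It is a sketch under stated assumptions, not the STRADS code: the names `push_h` and `pull_h`, the dictionary holding the observed entries, and the nested-list layout of W and H are all hypothetical choices made for the example.

```python
from typing import Dict, List, Sequence, Tuple

Matrix = List[List[float]]


def residual(a_obs: Dict[Tuple[int, int], float], w: Matrix, h: Matrix,
             i: int, j: int) -> float:
    """r_ij = a_ij - w_i . h_j for an observed entry (i, j)."""
    return a_obs[(i, j)] - sum(w[i][k] * h[k][j] for k in range(len(h)))


def push_h(a_obs: Dict[Tuple[int, int], float], w: Matrix, h: Matrix,
           rows: Sequence[int], k: int, j: int) -> Tuple[float, float]:
    """Partial sums (a, b) for h[k][j] over the observed rows held by one worker."""
    a = b = 0.0
    for i in rows:
        if (i, j) in a_obs:  # only observed entries contribute
            a += (residual(a_obs, w, h, i, j) + w[i][k] * h[k][j]) * w[i][k]
            b += w[i][k] ** 2
    return a, b


def pull_h(partials: Sequence[Tuple[float, float]], lam: float) -> float:
    """Aggregate the workers' partial sums into the new value of h[k][j]."""
    return sum(a for a, _ in partials) / (lam + sum(b for _, b in partials))


if __name__ == "__main__":
    # Rank-1 toy problem: a_i0 = 3 * w_i, so with lam = 0 the update recovers h = 3.
    w = [[1.0], [2.0], [3.0]]
    h = [[0.5]]
    a_obs = {(0, 0): 3.0, (1, 0): 6.0, (2, 0): 9.0}
    partials = [push_h(a_obs, w, h, [0, 1], 0, 0),  # worker 1 holds rows 0 and 1
                push_h(a_obs, w, h, [2], 0, 0)]     # worker 2 holds row 2
    print(pull_h(partials, lam=0.0))
```

Since both sums in the update rule are taken over the observed rows of column j, splitting those rows across workers and adding the partial sums in pull yields the same value as a single-machine update.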
    // STRADS Matrix Factorization
    schedule() {
      // Round-robin scheduling
      if counter <= U                  // Do W
        return W[q_counter]
      else                             // Do H
        return H[r_(counter-U)]
    }
    push(worker = p, vars = X[s]) {
      z = []                           // Empty list
      if counter <= U                  // X is from W
        for row in s, k=1..K
          z.append( (f_1(row,k,p),f_2(row,k,p)) )
      else                             // X is from H
        for col in s, k=1..K
          z.append( (g_1(k,col,p),g_2(k,col,p)) )
      return z
    }
    pull(workers=[p], vars=X[s], updates=[z]) {
      if counter <= U                  // X is from W
        for row in s, k=1..K
          W[row,k] = f_3(row,k,[z])
      else                             // X is from H
        for col in s, k=1..K
          H[k,col] = g_3(k,col,[z])
      counter = (counter mod (2*U)) + 1
    }

Figure 6: STRADS MF pseudocode. Definitions for $f_1$, $g_1$, ... and $q_p$, $r_p$ are in the text. counter is a global model variable.

3.3 Lasso

STRADS not only supports simple static schedules, but also dynamic, adaptive strategies that take the model state into consideration. Consider Lasso regression [19], which discovers a small subset of features/dimensions that predict the output y. While Lasso can be solved by random parallelization over each dimension's coefficients, this strategy fails to converge in the presence of strong dependencies between dimensions [4]. Our STRADS Lasso implementation tackles this challenge by (1) avoiding the simultaneous update of coefficients whose dimensions are highly inter-dependent, and (2) prioritizing coefficients that contribute the most to algorithm convergence. These properties complement each other in an algorithmically efficient way, as we shall see.

Formally, Lasso can be defined as an optimization problem:

$$\min_{\beta} \; \ell(X, y, \beta) + \lambda \sum_j |\beta_j|, \tag{4}$$

where $\lambda$ is a regularization parameter that determines the sparsity of $\beta$, and $\ell(\cdot)$ is a non-negative convex loss function such as squared-loss or logistic-loss; we assume that X and y are standardized and consider (4) without an intercept.
For simplicity but without loss of generality, we let $\ell(X, y, \beta) = \frac{1}{2} \|y - X\beta\|_2^2$, and note that it is straightforward to use other loss functions. Lasso can be solved using coordinate descent (CD) updates [8]; by taking the gradient of (4), we obtain the CD update rule for $\beta_j$:

$$\beta_j^{(t)} \leftarrow S\Big( x_j^T y - \sum_{k \neq j} x_j^T x_k \beta_k^{(t-1)}, \; \lambda \Big), \tag{5}$$

where $S(\cdot, \lambda)$ is a soft-thresholding operator [8], defined by $S(\beta_j, \lambda) \equiv \mathrm{sign}(\beta_j) \, (|\beta_j| - \lambda)_+$.

STRADS implementation: Our Lasso schedule strategy picks variables dynamically, according to the model state. First, we define a probability distribution $c = [c_1, \ldots, c_J]$ over the $\beta$; the purpose of c is to prioritize $\beta_j$'s during schedule, and thus speed up convergence. In particular, we observe that choosing $\beta_j$ with probability $c_j = f_1(j) :\propto |\beta_j^{(t_j - 2)} - \beta_j^{(t_j - 1)}| + \eta$ substantially speeds up the Lasso convergence rate (see supplement for our theoretical motivation), where $\eta$ is a small positive constant, and $t_j$ is the iteration counter for the j-th variable.

To prevent non-convergence due to dimension inter-dependencies [4], we only schedule $\beta_j$ and $\beta_k$ for concurrent updates if $x_j^T x_k \approx 0$. This is performed as follows: first, select $U'$ candidate $\beta_j$'s from the probability distribution c to form a set $\mathcal{C}$. Next, choose a subset $\mathcal{B} \subseteq \mathcal{C}$ of size $U \leq U'$ such that $|x_j^T x_k| < \rho$ for all distinct $j, k \in \mathcal{B}$, where $\rho \in (0, 1]$; we represent this selection procedure by the function $f_2(\mathcal{C})$. This procedure is inexpensive: by selecting $U'$ candidate $\beta$'s first, only $U'^2$ dependencies need to be checked, as opposed to $J^2$, where J is the total number of $\beta$. Here $U'$ and $\rho$ are user-defined parameters. We will show that this schedule with sufficiently large $U'$ and small $\rho$ greatly speeds up convergence over naive random scheduling.

Finally, we execute push and pull to update the $\{\beta_j\} \in \mathcal{B}$ using U workers in parallel. The rows of the data matrix X are partitioned into U submatrices, and the p-th worker stores the submatrix $X^p$; with X partitioned in this manner, we need to modify the update rule Eq. (5) accordingly. Using U workers, push computes U partial summations for each selected $\beta_j$, denoted by $\{z_{j,1}^{(t)}, \ldots, z_{j,U}^{(t)}\}$, where $z_{j,p}^{(t)}$ represents the partial summation for the j-th $\beta$ in the p-th worker at the t-th iteration:

$$z_{j,p}^{(t)} \leftarrow f_3(p, j) := (x_j^p)^T y - \sum_{k \neq j} (x_j^p)^T (x_k^p) \, \beta_k^{(t-1)}. \tag{6}$$

After all pushes have been completed, pull updates $\beta_j$ via $\beta_j^{(t)} = f_4(j, [z_{j,p}^{(t)}]) := S\big( \sum_{p=1}^{U} z_{j,p}^{(t)}, \lambda \big)$. Figure 7 illustrates the STRADS Lasso pseudocode.

    // STRADS Lasso
    schedule() {
      // Priority-based scheduling
      for all j                        // Get new priorities
        c_j = f_1(j)
      for a=1..U'                      // Prioritize betas
        random draw s_a using [c_1, ..., c_J]
      // Get 'safe' betas
      (j_1, ..., j_U) = f_2(s_1, ..., s_U')
      return (b[j_1], ..., b[j_U])
    }
    push(worker = p, vars = (b[j_1],...,b[j_U])) {
      z = []                           // Empty list
      for a=1..U                       // Compute partial sums
        z.append( f_3(p,j_a) )
      return z
    }
    pull(workers = [p], vars = (b[j_1],...,b[j_U]),
         updates = [z]) {
      for a=1..U                       // Aggregate partial sums
        b[j_a] = f_4(j_a,[z])
    }

Figure 7: STRADS Lasso pseudocode. Definitions for $f_1$, $f_2$, ... are given in the text.

4. EXPERIMENTS

We now demonstrate that our STRADS implementations of LDA, MF and Lasso can (1) reach larger model sizes than other baselines; (2) converge at least as fast, if not faster, than other baselines; and (3) use less memory per machine as machines are added (efficient partitioning). For baselines, we used (a) a STRADS implementation of distributed Lasso with only a naive round-robin scheduler (Lasso-RR), (b) GraphLab's Alternating Least Squares (ALS) implementation of MF [14], (c) YahooLDA for topic modeling [1]. Note that Lasso-RR imitates, on STRADS, the random scheduling scheme proposed by the Shotgun algorithm.
We chose GraphLab and YahooLDA, as they are popular choices for distributed MF and LDA.

We conducted experiments on two clusters [10] (with 2-core and 16-core machines respectively), to show the effectiveness of STRADS model-parallelism across different hardware. We used the 2-core cluster for LDA, and the 16-core cluster for Lasso and MF. The 2-core cluster contains 128 machines, each with two 2.6GHz AMD cores and 8GB RAM, connected via a 1Gbps network interface. The 16-core cluster contains 9 machines, each with 16 2.1GHz AMD cores and 64GB RAM, connected via a 40Gbps network interface. All our experiments use a fixed data size, and we vary the number of machines and/or the model size (unless otherwise stated).

4.1 Datasets

Latent Dirichlet Allocation: We used 3.9M English Wikipedia abstracts, and conducted experiments using both unigram (1-word) tokens (V = 2.5M unique unigrams, 179M tokens) and bigram (2-word) tokens (V = 21.8M unique bigrams, 79M tokens). We note that our bigram vocabulary (21.8M) is an order of magnitude larger than recently published results [1], demonstrating that STRADS scales to very large models. We set the number of topics to K = 5000 and 10000 (again, significantly larger than recent literature [1]), which creates extremely large word-topic tables: at K = 5000, 12.5B elements (unigram) and 109B elements (bigram).

Matrix Factorization: We used the Netflix dataset [2] for our MF experiments: 100M anonymized ratings from 480,189 users on 17,770 movies. We varied the rank of W, H from K = 20 to 2000, which exceeds the upper limit of previous MF papers [23, 9, 21].

Lasso: We used synthetic data with 50K samples and J = 10M to 100M features, where every feature $x_j$ has only 25 non-zero samples. To simulate correlations between adjacent features (which exist in real-world data), we first added $\mathrm{Unif}(0, 1)$ noise to $x_1$. Then, for $j = 2, \ldots, J$, with 0.9 probability we add $\epsilon_j = \mathrm{Unif}(0, 1)$ noise to $x_j$, otherwise we add $0.9\,\epsilon_{j-1} + 0.1\,\mathrm{Unif}(0, 1)$ to $x_j$.

4.2 Speed and Model Sizes

[Figure 8: Convergence time versus model size for STRADS and baselines for (left) LDA on 64 machines, x-axis: vocab/topics (2.5M/5K, 2.5M/10K, 21.8M/5K, 21.8M/10K), STRADS vs. YahooLDA; (center) MF on 9 machines, x-axis: ranks (20 to 2000), STRADS vs. GraphLab; and (right) Lasso on 9 machines, x-axis: features (10M, 50M, 100M), STRADS vs. Lasso-RR. The y-axis is seconds in all panels. We omit the bars if a method did not reach 98% of STRADS's convergence point (YahooLDA and GraphLab-MF failed at 2.5M-vocab/10K-topics and rank K ≥ 80, respectively). STRADS not only reaches larger model sizes than YahooLDA, GraphLab, and Lasso-RR, but also converges significantly faster.]

[Figure 9: Convergence trajectories of different methods for (left) LDA, 2.5M vocab, 5K topics, 32 machines, log-likelihood vs. seconds, STRADS vs. YahooLDA; (center) MF, 80 ranks, 9 machines, RMSE vs. seconds, STRADS vs. GraphLab; and (right) Lasso, 100M features, 9 machines, objective vs. seconds, STRADS vs. Lasso-RR.]

Figure 8 shows the time taken by each algorithm to reach a fixed objective value (over a range of model sizes), as well as the largest model size that each baseline was capable of running. For LDA and MF, STRADS handles much larger model sizes than either YahooLDA (could only handle 5K topics on the unigram dataset) or GraphLab (could only handle rank < 80), while converging more quickly; we attribute STRADS's faster convergence to lower parallelization error (LDA only) and reduced synchronization requirements through careful model partitioning (LDA, MF).
YahooLDA, in particular, stores nearly the whole word-topic table on every machine, so its maximum model size is limited by the smallest machine (Figure 3). For Lasso, STRADS converges more quickly than Lasso-RR because of our dynamic schedule strategy, which is graphically captured in the convergence trajectory seen in Figure 9: STRADS's dynamic schedule causes the Lasso objective to plunge quickly to the optimum at around 250 seconds. We also see that STRADS LDA and MF achieved better objective values, confirming that STRADS model-parallelism is fast without compromising convergence quality.

[Figure 10 panels: (left) log-likelihood versus seconds for STRADS on 16, 32, 64, and 128 machines, 2.5M vocab and 5K topics; (right) seconds versus number of machines (16, 32, 64, 128), 2.5M vocab and 5K topics.]

Figure 10: STRADS LDA scalability with increasing machines using a fixed model size. (Left) Convergence trajectories; (Right) Time taken to reach a log-likelihood of $-2.6 \times 10^{9}$.

4.3 Scalability

In Figure 10, we show the convergence trajectories and time-to-convergence for STRADS LDA using different numbers of machines at a fixed model size (unigram with 2.5M vocab and 5K topics). The plots confirm that STRADS LDA exhibits faster convergence with more machines, and that the time to convergence almost halves with every doubling of machines (near-linear scaling).

5. DISCUSSION AND RELATED WORK

As a framework of user-programmable primitives for dynamic Big Model-parallelism, STRADS provides the following benefits: (1) scalability and efficient memory utilization, allowing larger models to be run with additional machines (because the model is partitioned, rather than duplicated across machines); (2) the ability to invoke dynamic schedules that reduce model variable dependencies across workers, leading to lower parallelization error and thus faster, correct convergence.

While the notion of model-parallelism is not new, our contribution is to study it within the context of a programmable system (STRADS), using primitives that enable general, user-programmable partitioning and static/dynamic scheduling of variable updates (based on model dependencies). Previous works explore aspects of model-parallelism in a more specific context: Scherrer et al. [18] proposed a static model partitioning scheme specifically for parallel coordinate descent, while GraphLab [15, 14] statically pre-partitions data and variables through a graph abstraction.

An important direction for future research is to reduce the communication costs of using STRADS. Currently, STRADS adopts a star topology from scheduler machines to workers, which causes the scheduler to eventually become a bottleneck as we increase the number of machines. To mitigate this issue, we wish to explore different synchronization schemes such as asynchronous parallelism [1] and stale synchronous parallelism [13]. We also want to explore the use of STRADS for other popular ML applications, such as support vector machines and logistic regression.

REFERENCES

[1] Amr Ahmed, Mohamed Aly, Joseph Gonzalez, Shravan Narayanamurthy, and Alexander J Smola. Scalable inference in latent variable models. In WSDM, pages 123–132. ACM, 2012.
[2] James Bennett and Stan Lanning. The Netflix prize. In Proceedings of KDD Cup and Workshop, volume 2007, page 35, 2007.
[3] David M Blei, Andrew Y Ng, and Michael I Jordan. Latent Dirichlet allocation. Journal of Machine Learning Research, 3:993–1022, 2003.
[4] Joseph K Bradley, Aapo Kyrola, Danny Bickson, and Carlos Guestrin. Parallel coordinate descent for L1-regularized loss minimization. In ICML, 2011.
[5] J. Dean, G. Corrado, R. Monga, K. Chen, M. Devin, Q. V. Le, M. Z. Mao, M. Ranzato, A. W. Senior, P. A. Tucker, et al. Large scale distributed deep networks. In NIPS, pages 1232–1240, 2012.
[6] Jeffrey Dean and Sanjay Ghemawat. MapReduce: simplified data processing on large clusters. Communications of the ACM, 51(1):107–113, 2008.
[7] Jianqing Fan, Richard Samworth, and Yichao Wu. Ultrahigh dimensional feature selection: beyond the linear model. Journal of Machine Learning Research, 10:2013–2038, 2009.
[8] J. Friedman, T. Hastie, H. Höfling, and R. Tibshirani. Pathwise coordinate optimization. Annals of Applied Statistics, 1(2):302–332, 2007.
[9] Rainer Gemulla, Erik Nijkamp, Peter J Haas, and Yannis Sismanis. Large-scale matrix factorization with distributed stochastic gradient descent. In Proceedings of the 17th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 69–77. ACM, 2011.
[10] Garth Gibson, Gary Grider, Andree Jacobson, and Wyatt Lloyd. Probe: A thousand-node experimental cluster for computer systems research. USENIX ;login:, 38, 2013.
[11] J. Gonzalez, Y. Low, A. Gretton, and C. Guestrin. Parallel Gibbs sampling: From colored fields to thin junction trees. In International Conference on Artificial Intelligence and Statistics, pages 324–332, 2011.
[12] Thomas L Griffiths and Mark Steyvers. Finding scientific topics. Proceedings of the National Academy of Sciences of the United States of America, 101(Suppl 1):5228–5235, 2004.
[13] Q. Ho, J. Cipar, H. Cui, J.-K. Kim, S. Lee, P. B. Gibbons, G. Gibson, G. R. Ganger, and E. P. Xing. More effective distributed ML via a stale synchronous parallel parameter server. In NIPS, 2013.
[14] Y. Low, J. Gonzalez, A. Kyrola, D. Bickson, C. Guestrin, and J. M. Hellerstein. Distributed GraphLab: A framework for machine learning and data mining in the cloud. PVLDB, 2012.
[15] Yucheng Low, Joseph Gonzalez, Aapo Kyrola, Danny Bickson, Carlos Guestrin, and Joseph M. Hellerstein. GraphLab: A new parallel framework for machine learning. In UAI, July 2010.
[16] Grzegorz Malewicz, Matthew H Austern, Aart JC Bik, James C Dehnert, Ilan Horn, Naty Leiser, and Grzegorz Czajkowski. Pregel: a system for large-scale graph processing. In Proceedings of the 2010 ACM SIGMOD International Conference on Management of Data, pages 135–146. ACM, 2010.
[17] David Newman, Arthur Asuncion, Padhraic Smyth, and Max Welling. Distributed algorithms for topic models. Journal of Machine Learning Research, 10:1801–1828, 2009.
[18] Chad Scherrer, Ambuj Tewari, Mahantesh Halappanavar, and David Haglin. Feature clustering for accelerating parallel coordinate descent. In NIPS, 2012.
[19] R. Tibshirani. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society. Series B (Methodological), 58(1):267–288, 1996.
[20] Limin Yao, David Mimno, and Andrew McCallum. Efficient methods for topic model inference on streaming document collections. 2009.
[21] Hsiang-Fu Yu, Cho-Jui Hsieh, Si Si, and Inderjit Dhillon. Scalable coordinate descent approaches to parallel matrix factorization for recommender systems. In ICDM 2012, pages 765–774. IEEE, 2012.
[22] M. Zaharia, M. Chowdhury, M. J. Franklin, S. Shenker, and I. Stoica. Spark: cluster computing with working sets. In Proceedings of the 2nd USENIX Conference on Hot Topics in Cloud Computing, 2010.
[23] Y. Zhou, D. Wilkinson, R. Schreiber, and R. Pan. Large-scale parallel collaborative filtering for the Netflix prize. In Algorithmic Aspects in Information and Management, pages 337–348. Springer, 2008.
[24] Martin Zinkevich, John Langford, and Alex J Smola. Slow learners are fast. In Advances in Neural Information Processing Systems, pages 2331–2339, 2009.
[25] Martin Zinkevich, Markus Weimer, Lihong Li, and Alex J Smola. Parallelized stochastic gradient descent. In Advances in Neural Information Processing Systems, pages 2595–2603, 2010.
