Semi-Separable Hamiltonian Monte Carlo for Inference in Bayesian Hierarchical Models

Sampling from hierarchical Bayesian models is often difficult for MCMC methods, because of the strong correlations between the model parameters and the hyperparameters. Recent Riemannian manifold Hamiltonian Monte Carlo (RMHMC) methods have significa…

Authors: Yichuan Zhang, Charles Sutton

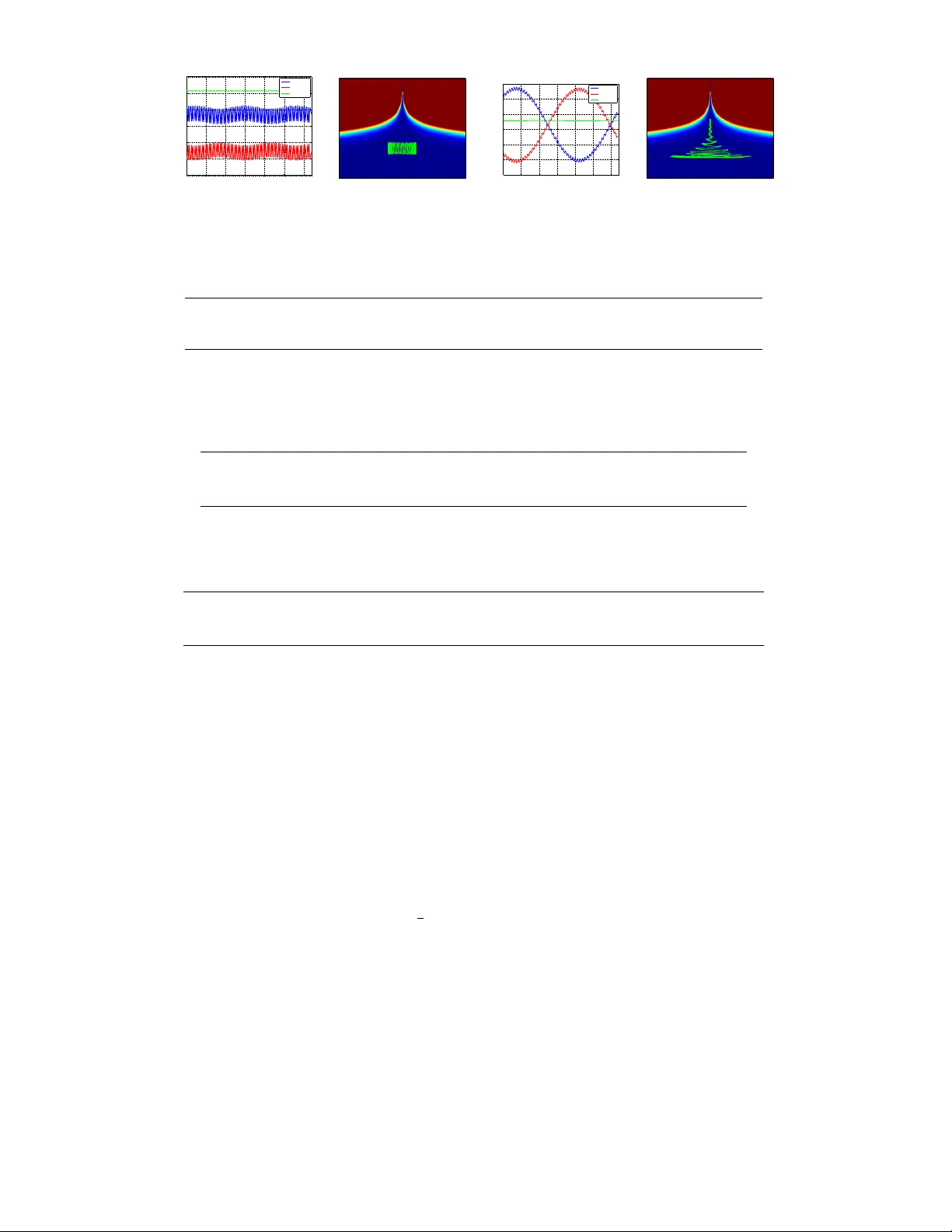

Semi-Separable Hamiltonian Monte Carlo f or Infer ence in Bayesian Hierar chical Models Y ichuan Zhang School of Informatics Univ ersity of Edinbur gh Y.Zhang-60@sms.ed.ac.uk Charles Sutton School of Informatics Univ ersity of Edinbur gh c.sutton@inf.ed.ac.uk Abstract Sampling from hierarchical Bayesian models is often difficult for MCMC meth- ods, because of the strong correlations between the model parameters and the hyperparameters. Recent Riemannian manifold Hamiltonian Monte Carlo (RMHMC) methods have significant potential adv antages in this setting, but are computationally expensi ve. W e introduce a ne w RMHMC method, which we call semi-separable Hamiltonian Monte Carlo , which uses a specially designed mass matrix that allows the joint Hamiltonian ov er model parameters and hyperparam- eters to decompose into two simpler Hamiltonians. This structure is exploited by a new inte grator which we call the alternating blockwise leapfr og algorithm . The resulting method can mix faster than simpler Gibbs sampling while being simpler and more efficient than pre vious instances of RMHMC. 1 Introduction Bayesian statistics provides a natural w ay to manage model complexity and control o verfitting, with modern problems in volving complicated models with a large number of parameters. One of the most po werful adv antages of the Bayesian approach is hierarchical modeling, which allo ws partial pooling across a group of datasets, allowing groups with little data to borrow information from similar groups with lar ger amounts of data. Ho wev er , such models pose problems for Marko v chain Monte Carlo (MCMC) methods, because the joint posterior distribution is often pathological due to strong correlations between the model parameters and the hyperparameters [3]. F or example, one of the most powerful MCMC methods is Hamiltonian Monte Carlo (HMC). Ho wev er, for hierarchical models even the mixing speed of HMC can be unsatisfactory in practice, as has been noted sev eral times in the literature [3, 4, 11]. Riemannian manifold Hamiltonian Monte Carlo (RMHMC) [7] is a recent extension of HMC that aims to ef ficiently sample from challenging posterior distrib utions by exploiting local geometric properties of the distrib ution of interest. Howe ver , it is computationally too expensi ve to be applicable to lar ge scale problems. In this work, we propose a simplified RMHMC method, called Semi-Separable Hamiltonian Monte Carlo (SSHMC), in which the joint Hamiltonian o ver parameters and hyperparameters has special structure, which we call semi-separ ability , that allo ws it to be decomposed into tw o simpler , separa- ble Hamiltonians. This condition allows for a ne w efficient algorithm which we call the alternating blockwise leapfr og algorithm . Compared to Gibbs sampling, SSHMC can make significantly larger mov es in hyperparameter space due to shared terms between the two simple Hamiltonians. Com- pared to pre vious RMHMC methods, SSHMC yields simpler and more computationally efficient samplers for many practical Bayesian models. 2 Hierarchical Bayesian Models Let D = {D i } N i =1 be a collection of data groups where i th data group is a collection of iid obser- vations y j = { y j i } N i i =1 and their inputs x j = { x j i } N i i =1 . W e assume the data follows a parametric 1 distribution p ( y i | x i , θ i ) , where θ i is the model parameter for group i . The parameters are assumed to be drawn from a prior p ( θ i | φ ) , where φ is the hyperparameter with prior distribution p ( φ ) . The joint posterior ov er model parameters θ = ( θ 1 , . . . , θ N ) and hyperparameters φ is then p ( θ , φ |D ) ∝ N Y i =1 p ( y i | x i , θ i ) p ( θ i | φ ) p ( φ ) . (1) This hierar chical Bayesian model is popular because the parameters θ i for each group are coupled, allowing the groups to share statistical strength. Howe ver , this property causes difficulties when approximating the posterior distribution. In the posterior, the model parameters and hyperparameters are strongly correlated. In particular, φ usually controls the variance of p ( θ | φ ) to promote partial pooling, so the v ariance of θ | φ , D depends strongly on φ . This causes dif ficulties for many MCMC methods, such as the Gibbs sampler and HMC. An illustrative example of pathological structure in hierarchical models is the Gaussian funnel distribution [11]. Its density function is defined as p ( x , v ) = Q n i =1 N ( x i | 0 , e − v ) N ( v | 0 , 3 2 ) , where x is the v ector of low-le vel parameters and v is the variance h yperparameters. The pathological correlation between x and v is illustrated by Figure 1. 3 Hamiltonian Monte Carlo on P osterior Manifold Hamiltonian Monte Carlo (HMC) is a gradient-based MCMC method with auxiliary variables. T o generate samples from a target density π ( z ) , HMC constructs an ergodic Markov chain with the in variant distribution π ( z , r ) = π ( z ) π ( r ) , where r is an auxiliary variable. The most common choice of π ( r ) is a Gaussian distribution N ( 0 , G − 1 ) with precision matrix G . Giv en the current sample z , the transition kernel of the HMC chain includes three steps: first sample r ∼ π ( r ) , second propose a new sample ( z 0 , r 0 ) by simulating the Hamiltonian dynamics and finally accept the proposed sample with probability α = min { 1 , π ( z 0 , r 0 ) /π ( z , r ) } , otherwise leave z unchanged. The last step is a Metropolis-Hastings (MH) correction. Define H ( z , r ) := − log π ( z , r ) . The Hamiltonian dynamics is defined by the differential equations ˙ z = ∂ r H ˙ r = − ∂ z H , where z is called the position and r is called the momentum . It is easy to see that ˙ H ( z , r ) = ∂ z H ˙ z + ∂ r H ˙ r = 0 , which is called the energy preserv ation property [10, 11]. In physics, H ( z , r ) is known as the Hamiltonian ener gy , and is decomposed into the sum of the potential energy U ( z ) := − log π ( z ) and the kinetic energy K ( r ) := − log π ( r ) . The most used discretized simulation in HMC is the leapfr og algorithm, which is given by the recursion r ( τ + / 2) = r ( τ ) − 2 ∇ z U ( τ ) (2a) z ( τ + ) = z ( τ ) + ∇ r K ( τ + / 2) (2b) r ( τ + ) = r ( τ + / 2) − 2 ∇ θ U ( τ + ) , (2c) where is the step size of discretized simulation time. After L steps from the current sample ( z (0) , r (0)) = ( z , r ) , the ne w sample is proposed as the last point ( z 0 , r 0 ) = ( z ( L ) , r ( L )) . In Hamiltonian dynamics, the matrix G is called the mass matrix . If G is constant w .r .t. z , then z and r are independent in π ( z , r ) . In this case we say that H ( z , r ) is a separable Hamiltonian. In particular , we use the term standar d HMC to refer to HMC using the identity matrix as G . Although HMC methods often outperform other popular MCMC methods, they may mix slowly if there are strong correlations between variables in the target distribution. Neal [11] sho wed that HMC can mix faster if G is not the identity matrix. Intuiti vely , such a G acts like a preconditioner . Howe ver , if the curvature of π ( z ) v aries greatly , a global preconditioner can be inadequate. For this reason, recent work, notably that on Riemannian manifold HMC (RMHMC) [7], has con- sidered non-separable Hamiltonian methods, in which G ( z ) varies with position z , so that z and r are no longer independent in π ( z , r ) . The resulting Hamiltonian H ( z , r ) = − log π ( z , r ) is called a non-separable Hamiltonian. For example, for Bayesian inference problems, Girolami et al. [7] proposed using the Fisher Information Matrix (FIM) of π ( θ ) , which is the metric tensor of posterior manifold. Ho wever , for a non-separable Hamiltonian, the simple leapfrog dynamics (2a)-(2c) do not yield a valid MCMC method, as the y are no longer re versible. Simulation of general non-separable systems requires the generalized leapfrog integrator (GLI) [7], which requires computing higher or- der deri v ativ es to solve a system of non-linear dif ferential equations. The computational cost of GLI in general is O ( d 3 ) where d is the number of parameters, which is prohibitive for lar ge d . 2 In hierarchical models, there are tw o w ays to sample the posterior using HMC. One w ay is to sample the joint posterior π ( θ , φ ) directly . The other way is to sample the conditional π ( θ | φ ) and π ( φ | θ ) , simulating from each conditional distrib ution using HMC. This strategy is called HMC within Gibbs [11]. In either case, HMC chains tend to mix slo wly in h yperparameter space, because the huge v ari- ation of potential energy across different hyperparameter v alues can easily overwhelm the kinetic energy in separable HMC [11]. Hierarchical models also pose a challenge to RMHMC, if we w ant to sample the model parameters and hyperparameters jointly . In particular , the closed-form FIM of the joint posterior π ( θ , φ ) is usually una vailable. Due to this problem, e ven sampling some toy models like the Gaussian funnel using RMHMC becomes challenging. Betancourt [2] proposed a new metric that uses a transformed Hessian matrix of π ( θ ) , and Betancourt and Girolami [3] demon- strate the power of this method for efficiently sampling hyperparameters of hierarchical models on some simple benchmarks like Gaussian funnel. Ho wev er , the transformation requires computing eigendecomposition of the Hessian matrix, which is infeasible in high dimensions. Because of these technical difficulties, RMHMC for hierarchical models is usually used within a block Gibbs sampling scheme, alternating between θ and φ . This RMHMC within Gibbs strategy is useful because the simulation of the non-separable dynamics for the conditional distrib utions may hav e much lo wer computational cost than that for the joint one. Howe ver , as we ha ve discussed, in hierarchical models these v ariables tend be very strongly correlated, and it is well-kno wn that Gibbs samplers mix slowly in such cases [13]. So, the Gibbs scheme limits the true po wer of RMHMC. 4 Semi-Separable Hamiltonian Monte Carlo In this section we propose a non-separable HMC method that does not have the limitations of Gibbs sampling and that scales to relati vely high dimensions, based on a novel property that we will call semi-separability . W e introduce new HMC methods that rely on semi-separable Hamilto- nians, which we call semi-separable Hamiltonian Monte Carlo (SSHMC) . 4.1 Semi-Separable Hamiltonian In this section, we define the semi-separable Hamiltonian system. Our tar get distrib ution will be the posterior π ( θ , φ ) = log p ( θ , φ |D ) of a hierarchical model (1), where θ ∈ R n and φ ∈ R m . Let ( r θ , r φ ) ∈ R m + n be the v ector of momentum v ariables corresponding to θ and φ respectiv ely . The non-separable Hamiltonian is defined as H ( θ , φ , r θ , r φ ) = U ( θ , φ ) + K ( r θ , r φ | θ , φ ) , (3) where the potential ener gy is U ( θ , φ ) = − log π ( θ , φ ) and the kinetic energy is K ( r θ , r φ | θ , φ ) = − log N ( r θ , r φ ; 0 , G ( θ , φ ) − 1 ) , which includes the normalization term log | G ( θ , φ ) | . The mass matrix G ( θ , φ ) can be an arbitrary p.d. matrix. For example, pre vious work on RMHMC [7] has chosen G ( θ , φ ) to be FIM of the joint posterior π ( θ , φ ) , resulting in an HMC method that requires O ( m + n ) 3 time. This limits applications of RMHMC to large scale problems. T o attack these computational challenges, we introduce restrictions on the mass matrix G ( θ , φ ) to enable efficient simulation. In particular , we restrict G ( θ , φ ) to ha ve the form G ( θ , φ ) = G θ ( φ , x ) 0 0 G φ ( θ ) , where G θ and G φ are the precision matrices of r θ and r φ , respectively . Importantly , we restrict G θ ( φ , x ) to be independent of θ and G φ ( θ ) to be independent of φ . If G has these properties, we call the resulting Hamiltonian a semi-separable Hamiltonian. A semi-separable Hamiltonian is still in general non-separable, as the two random vectors ( θ , φ ) and ( r θ , r φ ) are not independent. The semi-separability property has important computational adv antages. First, because G is block diagonal, the cost of matrix operations reduces from O (( n + m ) k ) to O ( n k ) . Second, and more important, substituting the restricted mass matrix into (3) results in the potential and kinetic energy: U ( θ , φ ) = − X i [log p ( y i | θ i , x i ) + log p ( θ i | φ )] − log p ( φ ) , (4) K ( r θ , r φ | φ , θ ) = 1 2 [ r T θ G θ ( x , φ ) r θ + r T φ G φ ( θ ) r φ + log | G θ ( x , φ ) | + log | G φ ( θ ) | ] . (5) 3 If we fix ( θ , r θ ) or ( φ , r φ ) , the non-separable Hamiltonian (3) can be seen as a separable Hamilto- nian plus some constant terms. In particular , define the notation A ( r θ | φ ) = 1 2 r T θ G θ ( x , φ ) r θ , A ( r φ | θ ) = 1 2 r T φ G φ ( θ ) r φ . Then, considering ( φ , r φ ) as fixed, the non-separable Hamiltonian H in (3) is different from the following separable Hamiltonian H 1 ( θ , r θ ) = U 1 ( θ | φ , r φ ) + K 1 ( r θ | φ ) , (6) U 1 ( θ | φ , r φ ) = − X i [log p ( y i | θ i , x i ) + log p ( θ i | φ )] + A ( r φ | θ ) + 1 2 log | G φ ( θ ) | , (7) K 1 ( r θ | φ ) = A ( r θ | φ ) (8) only by some constant terms that do not depend on ( θ , r θ ) . What this means is that any update to ( θ , r θ ) that leaves H 1 in variant leav es the joint Hamiltonian H inv ariant as well. An example is the leapfrog dynamics on H 1 , where U 1 is considered the potential energy , and K 1 the kinetic energy . Similarly , if ( θ , r θ ) are fixed, then H differs from the follo wing separable Hamiltonian H 2 ( φ , r φ ) = U 2 ( φ | θ , r θ ) + K 2 ( r φ | θ ) , (9) U 2 ( φ | θ , r θ ) = − X i log p ( θ i | φ ) − log p ( φ ) + A ( r θ | φ ) + 1 2 log | G θ ( x , φ ) | , (10) K 2 ( r φ | θ ) = A ( r φ | θ ) (11) only by terms that are constant with respect to ( φ , r φ ) . Notice that H 1 and H 2 are coupled by the terms A ( r θ | φ ) and A ( r φ | θ ) . Each of these terms appears in the kinetic energy of one of the separable Hamiltonians, but in the potential energy of the other one. W e call these terms auxiliary potentials because they are potential energy terms introduced by the auxiliary variables. These auxiliary potentials are k ey to our method (see Section 4.3). 4.2 Alternating block-wise leapfrog algorithm 432 433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 448 449 450 451 452 453 454 455 456 457 458 459 460 461 462 463 464 465 466 467 468 469 470 471 472 473 474 475 476 477 478 479 480 481 482 483 484 485 choices of mass matrix within the semi-separable frame w ork, or the use of SSHMC within discrete models, follo wing pre vious w ork in discrete HMC [12, 15]. Algorithm 1 SSHMC by ABLA Require: ( ✓ , ) Sample r ⇠ N (0 ,G ✓ ( , x )) and r ⇠ N (0 ,G ( ✓ )) for l in 1 , 2 ,...,L do ( ✓ ( l + ✏ / 2) , r ( l + ✏ / 2) ) leapfrog ( ✓ ( l ) , r ( l ) ✓ ,H 1 , ✏ / 2) ( ( l + ✏ ) , r ( l + ✏ ) ) leapfrog ( ( l ) , r ( l ) ,H 2 , ✏ ) ( ✓ ( l + ✏ ) , r ( l + ✏ ) ) leapfrog ( ✓ ( l ) , r ( l ) ,H 1 , ✏ / 2) end for Draw u ⇠ U (0 , 1) if u< min(1 ,e H ( ✓ , , r ✓ , r ) H ( ✓ ( L ✏ ) , ( L ✏ ) , r ( L ✏ ) , r ( L ✏ ) ) ) then ( ✓ 0 , 0 , r 0 ✓ , r 0 ) ( ✓ ( L ✏ ) , ( L ✏ ) , r ( L ✏ ) ✓ , r ( L ✏ ) ) else ( ✓ 0 , 0 , r 0 ✓ , r 0 ) ( ✓ , , r ✓ , r ) end if retur n ( ✓ 0 , 0 ) 9 Now we introduce an ef ficient SSHMC method that exploits the semi-separability property . As described in the previous section, any update to ( θ , r θ ) that leav es H 1 in variant also leav es the joint Hamiltonian H in variant, as does any up- date to ( φ , r φ ) that leav es H 2 in variant. So a natural idea is simply to alternate between sim- ulating the Hamiltonian dynamics for H 1 and that for H 2 . Crucially , e ven though the total Hamiltonian H is not separable in general, both H 1 and H 2 ar e separable. Therefore when sim- ulating H 1 and H 2 , the simple leapfrog method can be used, and the more complex GLI method is not required. W e call this method the alternating bloc k-wise leapfr og algorithm (ABLA), shown in Algo- rithm 1. In this figure the function “leapfrog” returns the result of the leapfrog dynamics (2a)-(2c) for the gi ven starting point, Hamiltonian, and step size. W e call each iteration of the loop from 1 . . . L an ALBA step . For simplicity , we have shown one leapfrog step for H 1 and H 2 for each ALBA step, but in practice it is useful to use multi- ple leapfrog steps per ALB A step. ABLA has discretization error due to the leapfrog discretization, so the MH correction is required. If it is possible to simulate H 1 and H 2 exactly , then H is preserv ed exactly and there is no need for MH correction. T o show that the SSHMC method by ALB A preserves the distribution π ( θ , φ ) , we also need to show the ABLA is a time-rev ersible and v olume-preserving transformation in the joint space of ( θ , r θ , φ , r φ ) . Let X = X θ , r θ × X φ , r φ where ( θ , r θ ) ∈ X θ , r θ and ( φ , r φ ) ∈ X φ , r φ . Obviously , any rev ersible and v olume-preserving transformation in a subspace of X is also re versible and v olume- preserving in X . It is easy to see that each leapfrog step in the ABLA algorithm is re versible and volume-preserving in either X θ , r θ or X φ , r φ . One more property of integrator of interest is 4 symplecticity . Because each leapfrog integrator is symplectic in the subspace of X [10], they are also symplectic in X . Then because ABLA is a composition of symplectic leapfrog inte grators, and the composition of symplectic transformations is symplectic, we know ABLA is symplectic. W e emphasize that ABLA is actually not a discretized simulation of the semi-separable Hamiltonian system H , that is, if starting at a point ( θ , r θ , φ , r φ ) in the joint space, we run the exact Hamiltonian dynamics for H for a length of time L , the resulting point will not be the same as that returned by ALB A at time L ev en if the discretized time step is infinitely small. For example, ALB A simulates H 1 with step size 1 and H 2 with step size 2 where 1 = 2 2 , when 2 → 0 that preserves H . 4.3 Connection to other methods Although the SSHMC method may seem similar to RMHMC within Gibbs (RMHMCWG), SSHMC is actually very dif ferent. The difference is in the last two terms of (7) and (10); if these are omit- ted from SSHMC and the Hamiltonians for π ( θ | φ ) , then we obtain HMC within Gibbs. Partic- ularly important among these two terms is the auxiliary potential, because it allows each of the separable Hamiltonian systems to borr ow ener gy from the other one. For example, if the previous leapfrog step increases the kinetic energy K 1 ( r θ | φ ) in H 1 ( θ , r θ ) , then, in the next leapfrog step for H 2 ( φ , r φ ) , we see that φ will have greater potential energy U 2 ( φ | θ , r θ ) , because the auxil- iary potential A ( r θ | φ ) is shared. That allo ws the leapfrog step to accommodate a larger change of log p ( φ | θ ) using A ( r θ | φ ) . So, the chain will mix faster in X φ . By the symmetry of θ and φ , the auxiliary potential will also accelerate the mixing in X θ . Another way to see this is that the dynamics in RMHMCWG for ( r φ , φ ) preserves the distribution π ( θ , r φ , φ ) = π ( θ , φ ) N ( r φ ; 0 , G φ ( φ ) − 1 ) but not the joint π ( θ , φ , r θ , r φ ) . That is because the Gibbs sampler does not take into account the effect of φ on r θ . In other words, the Gibbs step has the stationary distribution π ( φ , r φ | θ ) rather than π ( φ , r φ | θ , r θ ) . The difference between the tw o is the auxiliary potential. In contrast, the SSHMC methods preserve the Hamiltonian of π ( θ , φ , r θ , r φ ) . 4.4 Choice of mass matrix The choice of G θ and G φ in SSHMC is usually similar to RMHMCWG. If the Hessian matrix of − log p ( θ | y , x , φ ) is independent of θ and al ways p.d., it is natural to define G θ as the in verse of the Hessian matrix. Ho wev er , for some popular models, e.g., logistic regression, the Hessian matrix of the lik elihood function depends on the parameters θ . In this case, one can use any approximate Hes- sian B , like the Hessian at the mode, and define G θ := ( B + B ( φ )) − 1 , where B ( φ ) is the Hessian of the prior distribution. Such a rough approximation is usually good enough to improve the mixing speed, because the main difficulty is the correlation between model parameters and hyperparameters. In general, because the computational bottleneck in HMC and SSHMC is computing the gradient of the tar get distribution, both methods have the same computational comple xity O ( lg ) , where g is the cost of computing the gradient and l is the total number of leapfrog steps per iteration. Howe ver , in practice we find it very beneficial to use multiple steps in each blockwise leapfrog update in ALB A; this can cause SSHMC to require more time than HMC. Also, depending on the mass matrix G θ , the cost of leapfrog a step in ABLA may be different from those in standard HMC. For some choices of G θ , the leapfrog step in ABLA can be ev en faster than one leapfrog step of HMC. For example, in many models the computational bottleneck is the gradient ∇ φ log Z ( φ ) , Z ( φ ) is the normalization in prior . Recall that G θ is a function of φ . If | G θ | = Z ( φ ) − 1 , Z ( φ ) will be canceled out, av oiding computation of ∇ φ log Z ( φ ) . One example is using G x = e v I in Gaussian funnel distribution aforementioned in Section 2. A potential problem of such G θ is that the curvature of the likelihood function p ( D | θ ) is ignored. But when the data in each group is sparse and the parameters θ are strongly correlated, this G θ can giv e nearly optimal mixing speed and make SSHMC much faster . In general, any choice of G θ and G φ that would be valid for separable HMC with Gibbs is also v alid for SSHMC. 5 Experimental Results In this section, we compare the performance of SSHMC with the standard HMC and RMHMC within Gibbs [7] on four benchmark models. 1 The step size of all methods are manually tuned so 1 Our use of a Gibbs scheme for RMHMC follows standard practice [7]. 5 5 10 15 20 25 30 0 50 100 150 200 250 300 time energy potential Kinetic Hamlt x 1 v 5 10 15 20 25 30 −300 −200 −100 0 100 200 300 time energy potential Kinetic Hamlt x 1 v HMC with diagonal constant mass SSHMC (semi-separable mass) Figure 1: The trace of ener gy over the simulation time and the trajectory of the first dimension of 100 dimensional Gaussian x 1 (vertical axis) and hyperparameter v (horizontal axis). The two simulations start with the same initial point sampled from the Gaussian Funnel. time(s) min ESS( x , v ) min ESS/s ( x , v ) MSE( E [ v ] , E [ v 2 ] ) HMC 5.16 (302.97, 26.30) (58.64, 5.09) (2.28, 1.34) RMHMC(Gibbs) 2.7 (2490.98, 8.93) ( 895.15 , 3.21) (1.95, 1.33) SSHMC 37.35 ( 3868.79 , 1541.67 ) (103.57, 41.27 ) ( 0.04 , 0.02 ) T able 1: The result of ESS of 5000 samples on 100 + 1 dimensional Gaussian Funnel distribution. x are model parameters and v is the hyperparameter . The last column is the mean squared error of the sample estimated mean and variance of h yperparameter . running time(s) ESS θ (min, med, max) ESS v min ESS/s HMC 378 (2.05, 3.68, 4.79) × 10 3 815 2.15 RMHMC(Gibbs) 411 (0.8, 4.08 , 4.99 ) × 10 3 271 0.6 SSHMC 385.82 ( 2.5 , 3.42, 4.27) × 10 3 2266 5.83 T able 2: The results of ESS of 5000 samples after 1000 burn-in on Hierarchical Bayesian Logistic Regression. θ are 200 dimensional model parameters and v is the hyperparameter . time (s) ESS x (min, med, max) ESS ( β , σ, φ ) min ESS/s HMC 162 (1.6, 2.2, 5.2) × 10 2 (50, 50, 128) 0.31 RMHMC(Gibbs) 183 (12.1, 18.4, 33.5) × 10 2 (385, 163, 411) 0.89 SSHMC 883 ( 78.4 , 98.9 , 120.7 ) × 10 2 ( 4434 , 1706 , 1390 ) 1.57 T able 3: The ESS of 20000 posterior samples of Stochastic V olatility after 10000 burn-in. x are latent volatilities over 2000 time lags and ( β , σ, φ ) are hyperparameters. Min ESS/s is the lowest ESS ov er all parameters normalized by running time. that the acceptance rate is around 70 - 85% . The number of leapfrog steps are tuned for each method using preliminary runs. The implementation of RMHMC we used is from [7]. The running time is wall-clock time measured after burn-in. The performance is e valuated by the minimum Ef fectiv e Sample Size (ESS) over all dimensions (see [6]). When considering the different computational complexity of methods, our main ef ficiency metric is time normalized ESS. 5.1 Demonstration on Gaussian Funnel W e demonstrate SSHMC by sampling the Gaussian Funnel (GF) defined in Section 2. W e con- sider n = 100 dimensional low-le vel parameters x and 1 hyperparameter v . RMHMC within Gibbs on GF has block diagonal mass matrix defined as G x = − ∂ 2 v log p ( x, v ) − 1 = e v I and G v = − E x [ ∂ 2 v log p ( x, v )] − 1 = ( n + 1 9 ) − 1 . W e use the same mass matrix in SSHMC, because it is semi-separable. W e use 2 leapfrog steps for low-le vel parameters and 1 leapfrog step for the hyperparameter in ABLA and the same leapfrog step size for the two separable Hamiltonians. W e generate 5000 samples from each method after 1000 burn-in iterations. The ESS per second (ESS/s) and mean squared error (MSE) of the sample estimated mean and variance of the hyperpa- rameter are giv en in T able 1. Notice that RMHMC is much more efficient for the lo w-level v ariable because of the adaptiv e mass matrix with hyperparameter . Figure 1 illustrates a dramatic difference between HMC and SSHMC. It is clear that HMC suffers from oscillation of the hyperparameter in a narrow region. That is because the kinetic energy limits the change of hyperparameters [3, 11]. In contrast, SSHMC has much wider energy v ariation and the trajectory spans a larger range of hy- 6 0.94 0.95 0.96 0.97 0.98 0.99 1 0 0.01 0.02 0.03 0.04 0.05 0.06 0.07 0.1 0.15 0.2 0.25 0.3 0.35 0 0.01 0.02 0.03 0.04 0.05 0.06 0.07 0.4 0.6 0.8 1 1.2 1.4 0 0.02 0.04 0.06 0.08 0.1 0.12 RMHMC SSHMC HMC Figure 2: The normalized histogram of 20000 posterior samples of hyperparameters (from left to right φ , σ , β ) after 10000 burn-in samples. The data is generated by the hyperparameter ( φ = 0 . 98 , σ = 0 . 15 , β = 0 . 65) . It is clear that empirical distributions by the three methods are consistent. But, it is clear that SSHMC and RMHMC con ver ges faster than HMC. perparameter v . The energy variation of SSHMC is similar to the RMHMC with Soft-Abs metric (RMHMC-Soft-Abs) reported in [2], an instance of general RMHMC without Gibbs. But compared with [2], each ABLA step is about 100 times faster than each generalized leapfrog step and SSHMC can generate around 2.5 times more effecti ve samples per second than RMHMC-Soft-Abs. Although RHMC within Gibbs has better ESS/s on the low lev el v ariables, its estimation of the mean and v ari- ance is biased, indicating that the chain has not yet mixed. More important, T able 1 shows that the samples generated by SSHMC gi ve nearly unbiased estimates of the mean and variance of the hyperparameter , which neither of the other methods are able to do. 5.2 Hierarchical Bayesian Logistic Regression In this e xperiment, we consider hierarchical Bayesian logistic regression with e xponential prior for the variance h yperparameter v , that is p ( w , φ |D ) ∝ Y i Y j σ ( y ij w T i x ij ) N ( w i | 0 , v I ) Exp ( v | λ ) , where σ is the logistic function σ ( z ) = 1 / (1 + exp( − z )) and ( y ij , x ij ) is the j th data points the i th group. W e use the Statlog (German credit) dataset from [1]. This dataset includes 1000 data points and each data has 16 categorical features and 4 numeric features. Bayesian logistic regression on this dataset has been considered as a benchmark for HMC [7, 8], but the previous w ork uses only one group in their experiments. T o make the problem more interesting, we partition the dataset into 10 groups according to the feature Purpose . The size of group varies from 9 to 285. There are 200 model parameters ( 20 parameters for each group) and 1 hyperparameter . W e consider the reparameterization of the hyperparameter γ = log v . For RMHMC within Gibbs, the mass matrix for group i is G i := I ( x , θ ) − 1 , where I ( x , θ ) is the Fisher Information matrix for model parameter w i and constant mass G v . In each iteration of the Gibbs sampler , each w i is sampled from by RMHMC using 6 generalized leapfrog steps and v is sampled using 6 leapfrog steps. For SSHMC, G i := Cov ( x ) + exp( γ ) I and the same constant mass G v . The results are shown in T able 2. SSHMC again has much higher ESS/s than the other methods. 5.3 Stochastic V olatility A stochastic volatility model we consider is studied in [9], in which the latent volatilities are modeled by an auto-regressi ve AR(1) process such that the observations are y t = t β exp( x t / 2) with latent variable x t +1 = φx t + η t +1 . W e consider the distributions x 1 ∼ N (0 , σ 2 / (1 − φ 2 )) , t ∼ N (0 , 1) and η t ∼ (0 , σ 2 ) . The joint probability is defined as p ( y , x , β , φ, σ ) = T Y t =1 p ( y t | x t , β ) p ( x 1 ) T Y t =2 p ( x t | x t − 1 , φ, σ ) π ( β ) π ( φ ) φ ( σ ) , where the prior π ( β ) ∝ 1 /β , σ 2 ∼ In v- χ 2 (10 , 0 . 05) and ( φ + 1) / 2 ∼ beta (20 , 1 . 5) . The FIM of p ( x | α, β , φ, y ) depends on the hyperparameters b ut not x , but the FIM of p ( α, β , φ | x , y ) depends on ( α, β , φ ) . For RMHMC within Gibbs we consider FIM as the metric tensor follo wing [7]. For SSHMC, we define G θ as in verse Hessian of log p ( x | α, β , φ, y ) , but G φ as an identity matrix. In each ABLA step, we use 5 leapfrog steps for updates of x and 2 leapfrog steps for updates of the hyperparameters, so that the running time of SSHMC is about 7 times that of standard HMC. 7 5 10 15 20 25 30 5 10 15 20 25 30 5 10 15 20 25 30 5 10 15 20 25 30 0 1 2 3 4 5 6 7 0 0.02 0.04 0.06 0.08 0.1 0.12 RMHMC SSHMC 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16 RMHMC SSHMC Figure 3: Sample mean of latent fields using RMHMC (left 1) and SSHMC (left 2). The normalized histogram of sampled hyperparameter σ (right 1) and β (right 2). W e dra w 5000 samples from both methods after 1000 burn-in. The true hyperparameter v alues are ( σ = 1 . 9 , β = 0 . 03) . time(h) ESS x (min, med, max) ESS( σ, β ) min ESS/h SSHMC 2.6 ( 7.8 , 30 , 39 ) × 10 2 ( 2101 , 270 ) 103.8 RMHMC(Gibbs) 2.64 (1, 29, 38.3) × 10 2 (200, 46) 16 T able 4: The ESS of 5000 posterior samples from 32x32 LGCPP after 1000 burn-in samples. x is the 1024 dimensional vector of latent variables and ( σ, β ) are the hyperparameters of the Gaussian Process prior . “min ESS/h” means minimum ESS per hour . W e generate 20000 samples using each method after 10000 burn-in samples. The histogram of hyperparameters is sho wn in Figure 2. It is clear that all methods approximately conv erge to the same distrib ution. But from T able 3, we see that SSHMC generates almost two times as many ESS/s as RMHMC within Gibbs. 5.4 Log-Gaussian Cox Point Process The log-Gaussian Cox Point Process (LGCPP) is another popular testing benchmark [5, 7, 14]. W e follow the experimental setting of Girolami and Calderhead [7]. The observ ations Y = { y ij } are counts at the location ( i, j ) , i, j = 1 , . . . , d on a regular spatial grid, which are conditionally independent given a latent intensity process Λ = { λ ( i, j ) } with mean mλ ( i, j ) = m exp( x i,j ) , where m = 1 /d 2 , X = { x i,j } , x = V ec ( X ) and y = V ec ( Y ) . X is assigned by a Gaus- sian process prior, with mean function m ( x i,j ) = µ 1 and covariance function Σ( x i,j , x i 0 ,j 0 ) = σ 2 exp( − δ ( i, i 0 , j, j 0 ) /β d ) where δ ( · ) is the Euclidean distance between ( i, j ) and ( i 0 , j 0 ) . The log joint probability is giv en by log p ( y , x | µ, σ, β ) = P i,j y i,j x i,j − m exp( x i,j ) − 1 2 ( x − µ 1 ) T Σ − 1 ( x − µ 1 ) . W e consider a 32 × 32 grid that has 1024 latent v ariables. Each latent variable x i,j corresponds to a single observation y i,j . W e consider RMHMC within Gibbs with FIM of the conditional posteriors. See [7] for the definition of FIM. The generalized leapfrog steps are required for updating ( σ, β ) , but only the leapfrog steps are required for updating x . Each Gibbs iteration tak es 20 leapfrog steps for x and 1 general leapfrog step for ( σ, β ) . In SSHMC, we use G x = Σ − 1 and G ( σ,β ) = I . In each ABLA step, the update of x tak es 2 leapfrog steps and the update of ( α , β ) takes 1 leapfrog step. Each SSHMC transition takes 10 ALBA steps. W e do not consider HMC on LGCPP , because it mixes extremely slowly for hyperparameters. The results of ESS are giv en in T able 4. The mean of sampled latent variable and the histogram of sampled hyperparameters are giv en in Figure 3. It is clear that the samples of RMHMC and SSHMC are consistent, so both methods are mixing well. Ho wev er , SSHMC generates about six times as many ef fecti ve samples per hour as RMHMC within Gibbs. 6 Conclusion W e hav e presented Semi-Separable Hamiltonian Monte Carlo (SSHMC), a new v ersion of Rieman- nian manifold Hamiltonian Monte Carlo (RMHMC) that aims to retain the flexibility of RMHMC for difficult Bayesian sampling problems, while achie ving greater simplicity and lower computational complexity . W e tested SSHMC on se veral different hierarchical models, and on all the models we considered, SSHMC outperforms both HMC and RMHMC within Gibbs in terms of number of ef- fectiv e samples produced in a fixed amount of computation time. Future work could consider other choices of mass matrix within the semi-separable framework, or the use of SSHMC within discrete models, following pre vious work in discrete HMC [12, 15]. 8 References [1] K. Bache and M. Lichman. UCI machine learning repository , 2013. URL http://archive.ics. uci.edu/ml . [2] M. J. Betancourt. A General Metric for Riemannian Manifold Hamiltonian Monte Carlo. ArXiv e-prints , Dec. 2012. [3] M. J. Betancourt and M. Girolami. Hamiltonian Monte Carlo for Hierarchical Models. ArXiv e-prints , Dec. 2013. [4] K. Choo. Learning hyperparameters for neural network models using Hamiltonian dynamics . PhD thesis, Citeseer , 2000. [5] O. F . Christensen, G. O. Roberts, and J. S. Rosenthal. Scaling limits for the transient phase of local Metropolis–Hastings algorithms. Journal of the Royal Statistical Society: Series B (Statistical Methodol- ogy) , 67(2):253–268, 2005. [6] C. J. Geyer . Practical Markov Chain Monte Carlo. Statistical Science , pages 473–483, 1992. [7] M. Girolami and B. Calderhead. Riemann manifold Langevin and Hamiltonian Monte Carlo methods. Journal of the Royal Statistical Society: Series B (Statistical Methodology) , 73(2):123–214, 2011. ISSN 1467-9868. doi: 10.1111/j.1467- 9868.2010.00765.x. URL http://dx.doi.org/10.1111/j. 1467- 9868.2010.00765.x . [8] M. D. Hoffman and A. Gelman. The no-U-turn sampler: Adaptiv ely setting path lengths in Hamiltonian Monte Carlo. Journal of Machine Learning Research , In press. [9] S. Kim, N. Shephard, and S. Chib. Stochastic volatility: likelihood inference and comparison with ARCH models. The Review of Economic Studies , 65(3):361–393, 1998. [10] B. Leimkuhler and S. Reich. Simulating Hamiltonian dynamics , volume 14. Cambridge Uni versity Press, 2004. [11] R. Neal. MCMC using Hamiltonian dynamics. Handbook of Markov Chain Monte Carlo , pages 113–162, 2011. [12] A. Pakman and L. Paninski. Auxiliary-v ariable exact Hamiltonian Monte Carlo samplers for binary distributions. In Advances in Neural Information Pr ocessing Systems 26 , pages 2490–2498. 2013. [13] C. P . Robert and G. Casella. Monte Carlo statistical methods , volume 319. Citeseer, 2004. [14] Z. W ang, S. Mohamed, and N. de Freitas. Adaptive Hamiltonian and Riemann manifold Monte Carlo samplers. In International Confer ence on Machine Learning (ICML) , pages 1462–1470, 2013. URL http://jmlr.org/proceedings/papers/v28/wang13e.pdf . JMLR W&CP 28 (3): 1462– 1470, 2013. [15] Y . Zhang, C. Sutton, A. Storkey , and Z. Ghahramani. Continuous relaxations for discrete Hamiltonian Monte Carlo. In Advances in Neural Information Pr ocessing Systems (NIPS) , 2012. 9

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment