Augur: a Modeling Language for Data-Parallel Probabilistic Inference

It is time-consuming and error-prone to implement inference procedures for each new probabilistic model. Probabilistic programming addresses this problem by allowing a user to specify the model and having a compiler automatically generate an inferenc…

Authors: Jean-Baptiste Tristan, Daniel Huang, Joseph Tassarotti

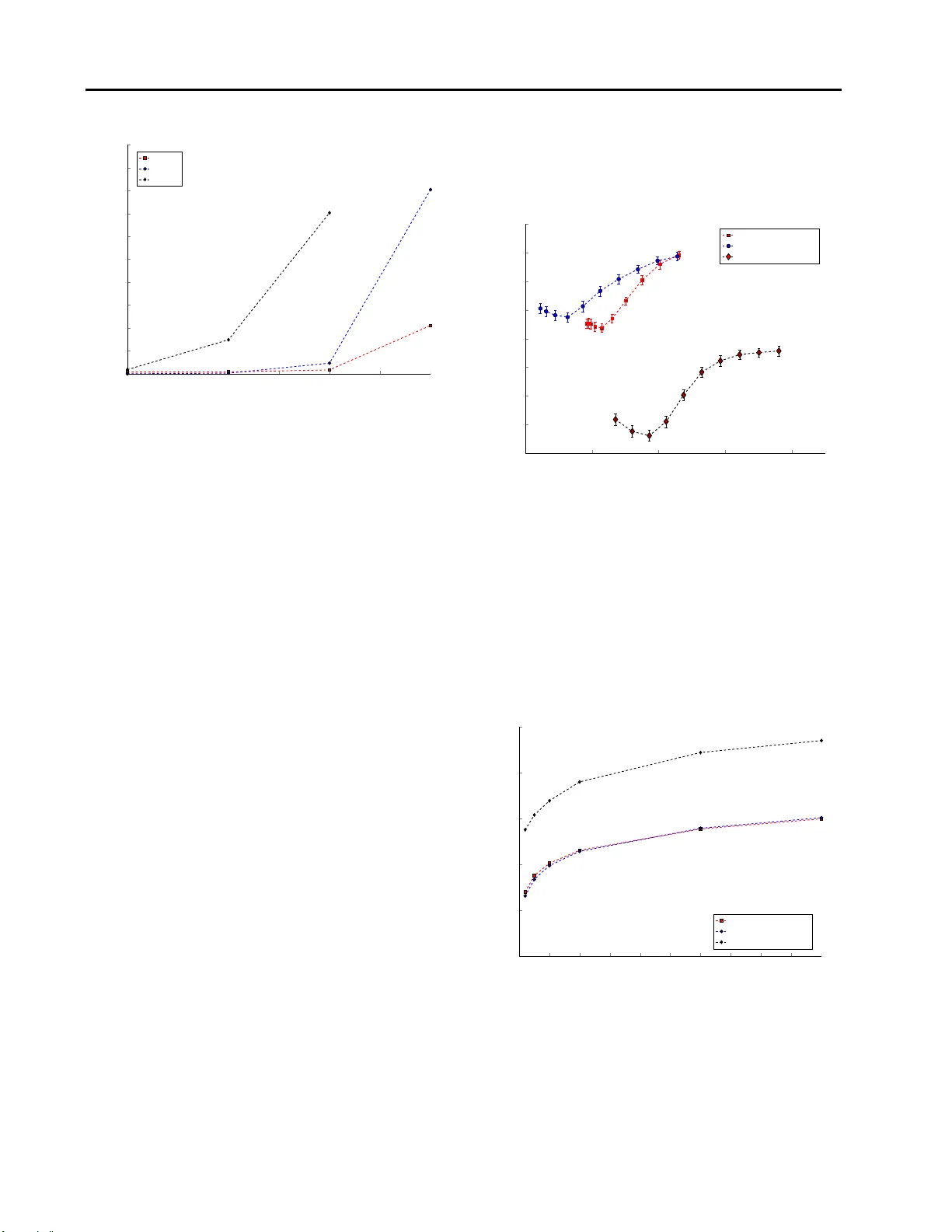

A ugur: a Modeling Language f or Data-Parallel Pr obabilistic Infer ence Jean-Baptiste T ristan J E A N . BA P T I S T E . T R I S T A N @ O R AC L E . C O M Oracle Labs Daniel Huang D E H UA N G @ FA S . H A R V A R D . E D U Harvard Uni versity Joseph T assarotti J TA S S A RO @ CS . C M U . E D U Carnegie Mellon Uni versity Adam Pocock A DA M . P O C O C K @ O R AC L E . C O M Oracle Labs Stephen J. Gr een S T E P H E N . X . G R E E N @ O R AC L E . C O M Oracle Labs Guy L. Steele, Jr G U Y . S T E E L E @ O R AC L E . C O M Oracle Labs Abstract It is time-con s uming and error-prone to imple- ment inference pr ocedures for each new pr ob- abilistic mod el. Probabilistic pro gramming ad- dresses this pro blem by allowing a user to spec- ify the mod el and having a co mpiler au tomati- cally generate an inference p rocedure for it. For this ap proach to be pra cti cal, it is impo rtant to generate inferen ce code that h as rea s onab le per- forman ce. In this paper, we present a proba- bilistic p rogramming lan guage and comp i ler for Bayesian networks desig ned to make ef fective use of data-p arallel architectures such as GPUs. W e show that the comp iler can generate data- parallel inference cod e scalable to thou sands o f GPU cores by mak i ng use of the co nditional in- depend ence relatio nships in the Bayesian net- work. 1. Intr oduction Machine learning , and e s pecially prob abilistic mo deling, can be difficult to apply . A u ser needs to not only d es ign the model, but also impleme nt the r ight inference pro ce- dure. Th ere are m an y dif feren t inference algorith ms, most Copyright 2014 by the author(s). of which a re conceptua l ly co mplicated and dif ficult to im- plement at scale. Despite th e enthusiasm that many people who practice data analysis hav e for machine learning, this complexity is a barrie r to deploymen t. Any effort to sim- plify the use of mach ine learning would thus be very u s eful. Probabilistic prog ramming ( ? ) , as introduced in the BUGS project ( ? ), is a way to simplify the app lication of machine learning b as ed on Bayesian inference. The key f eature of probab ilis tic p rogramming is separation of concer ns: the user specifies what need s to be learned b y describing a probab ilis tic model, while the compiler automa ti cally gen- erates the how , that is, the inferenc e p rocedure. In particu- lar , the programmer writes code th at describes a probability distribution. Using a compiler-genera ted inference algo- rithm, the program mer th en s amples fro m this distribution. Howe ver , doing inference on probabilistic program s is computatio nally intensive and challenging. As a result, de- veloping algorithms to perfor m inference is an active area of research. These include d eterministic approx imations (such as variational metho ds) an d Monte Carlo appro xima- tions (such as MCMC a lgorithms). T he prob lem is that most of th ese algo rithms are co nceptually co mplicated, and it is n ot clear , especially for non-experts, which one would work best for a gi ven model. T o address th e perfo rmance issues, our work h as been driven by two observations. The first ob serv ation is that good performan ce starts with an appropriate infer ence al- gorithm, and selecting the righ t algo rithm is often t he hard- A ugur: a Modeling Language f or Data-Pa rallel Probabilistic Infer ence est problem. For example, if our comp i ler only emits Metropolis-Ha s tings inference, there are models for wh ich our pr ogramming langu age will be of no use, ev en given large a mounts of computatio nal power . W e must d es ign the compiler in such a way that we can include the latest research on inferen ce while reusing pre-existing analyses and optimizatio ns, or e ven mix inference techniques. Con- sequently , we hav e desig ned o ur comp iler as a mo dular framework wh ere on e can add a new inf erence algo rithm while reusing already implemented analyses and optimiza- tions. For that purpose, our compiler uses a n interme diate representatio n (IR) f or probability distributions that serves as a target for mode li ng languag es an d as a basis for infer- ence algorithm s . W e will show this IR is key to scaling the compiler and the inferen ce to very large networks. The second observation is if we wish to contin ue to ben e- fit from advances in hardware we must focu s on p roducing highly parallel inferen ce algorith ms. W e cla i m th at many MCMC inference alg orithms are highly data- parallel ( ?? ) within a single Markov Chain if we take a dv antage of the condition al independ ence relationships of the input model (e.g. the a s sumptio n of i.i.d. data makes the likelihood in- depend ent across data points). Moreover, we can au t omati- cally gen erate good data-para l lel in ference with a compiler . Such in ference will r un very efficiently on highly parallel architecture s such as Graphics Proce s sing Units (GPUs). I t is imp ortant to note that parallelism br ings an interesting trade-off for per formance since som e inference techniq ues can result in less parallelism and will not scale as well. In this p aper , we pr esent our compilation framew ork , named Augur . T o star t (Section 2 ), we d emonstrate how to specify two pop ular m odels in our lang uage and u s e them to do lea rning and prediction . Then, (Section 3 ), we describe how we support d if ferent modelin g languages by embed ding them into Scala using Scala’ s macro sys- tem, wh ich pr o vides type checking and IDE suppor t, and we describe the p robabilistic IR. Next, (Section 4 ), we describe d ata-parallel versions of Metropo lis -Hastings and Gibbs samp li ng that scale on the GPU and speed up sam- pling. Our co mpiler also in cludes a Metrop olis-W ithin- Gibbs sampler but we d o not detail these in this pap er . Th en (Section 5 ), we pr esent the r es ults of some bench marks, which includ e compa risons against other imp lementations of infer ence for a r e gression, a Gaussian Mix ture Mo del, and LD A. Finally (Section 6 ), we revie w the literature in probab ilis tic programm i ng. Our main results are: first, no t only are some inference al- gorithms highly data-parallel an d am enable to GPU e xecu- tion, but a co mpiler can automa tically generate such GPU implementatio ns effectively; seco nd, for the compiler to be able to h andle large m odel specifications (such as LDA) it is ke y to use a symb olic r epresentation of the distribution 1 object LDA { 2 class sig( var phi : Array[Double], 3 var theta : Array[Double], 4 var z : Array[Int], 5 var w : Array[Int]) 6 7 val model = bayes { 8 (K: Int, V: Int, M: Int, N: Array[Int]) => { 9 val alpha = vector(K,0.1) 10 val beta = vector(V,0.1) 11 val phi = Dirichlet(V,beta).sample(K) 12 val theta = Dirichlet(K,alpha).sample(M) 13 val w = 14 for (i <- 1 to M) yield { 15 for (j <- 1 to N(i)) yield { 16 val z: Int = 17 Categorical(K,theta(i)).sample() 18 Categorical(V,phi(z)).sample() 19 }} 20 observe(w) 21 }}} Figure 1. Specification of the latent Dirichlet allocation model in Augur . The model specifies the probability distrib ution p ( φ, θ, z | w ) . T h e ke yword bayes introduces the modeling language for Bayesian networks. rather than constructing the graphical model. 2. The A ugur Language As e xamples, we first present the specification of tw o mod- els in A ugur , L atent Dirichlet Alloc ation (LD A) ( ? ) and multiv ariate regression. Then we show how the LDA model is used to learn th e topics pr es ent in a set of d ocu- ments. Th e supplemen tary material con tains five e xamp les of p robabilistic models in Au gur includin g a po lynomial regression, a categor ical and a Gaussian Mixtur e Mod el (GMM), a Naive Bay es classifier, and a Hidd en Markov Model (HMM). 2.1. Specifying the Models The LD A model specification is shown in Figu re 1 . The pr obability distribution is defin ed as a Scala object ( object LDA ) and is compo sed of two decla rations. First, we d eclare the support of th e probability distribution as a class that mu st be named sig . Here the supp ort is composed of four arrays, with one each for the distrib ution of topics per do cument ( theta ), the distribution of words per topic ( ph i ), the topics assigned to th e words ( z ), and the words in th e corp us ( w ). The su pport is used to store the inferr ed mo del pa rameters. These last two arrays are flat repre s entation s of ragge d array s , and so we do not re- quire the docum ents to be of equal length. The second dec l aration specifies the Bayesian network as- sociated with LDA and makes use of our domain specific languag e for Bayesian n etw orks. The DSL is m arked by the bayes keyword and delimited by the following enclosing A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 1 object LinearRegr ession { 2 class sig( var w: Array[Double], var b: Double, 3 var tau: Double, var x: Array[Double], 4 var y: Array[Double]) 5 6 val model = bayes { 7 (K: Int, N: Int, l: Double, u: Double) => { 8 9 val w = Gaussian(0, 10).sample(K) 10 val b = Gaussian(0, 10).sample() 11 val tau = InverseGamma(3.0, 1.0).sample() 12 val x = for (i <- 1 to N) 13 yield Uniform(l, u).sample(K) 14 val y = for (i <- 1 to N) yield { 15 val phi = for (j <- 1 to K) yield w(j) * x(i)(j) 16 Gaussian((phi.sum) + b, tau).sample() 17 } 18 observe(x, y) 19 }}} Figure 2. Specification of a multiv ariate regressio n in Augur . brackets. The model first declare s the parameter s of the model: K fo r the numbe r of topics, V for the vocab ulary size, M for the number of documents, and N for the array that associates each docum ent with its size. In the model itself, we define the hy per -param eters (values alpha an d beta ) for the Dirichlet distributions and draw K Dirichlet samp les of d imension V for the distribution of words per topic ( phi ) and M Dirichlet samples of dimen - sion K for the distrib ution of to pics per docum ent ( theta ). Then, for each word in each documen t , we dr a w a top ic z from the ta , and fin ally a word fro m phi based on the topic we drew for z . The r e gression model in Figure 2 is defined in the same way an d uses similar lan guage features. In this example the suppo rt com prises the ( x , y ) data points, the weights w , the bias b , and the noise tau . The model uses an additio nal sum function to sum across the feature vector . 2.2. Using the model Once a mod el is s pecified, it can be used as any other Scala object by writing standard Scala co de. For instance, on e may want to use the LDA mode l with a training corpus to learn a distribution of word s per to pic and the n use it to learn the p er -docum ent topic distribution of a test cor- pus. An implementation is presented in Figure 3 . First the progr ammer must allocate the parameter ar rays which con- tain the infer red values. Then the signatu re of the model is con s tructed wh ich enc apsulates the paramete rs. The LDA.model.ma p co mmand return s the MAP es timate of the parameters giv en the observed words. T o test the mo del, a new signatu re is constructed con- taining the test documents, and the previously infer red phi values. Then LDA.model.map is called again, but with both the ph is and th e words obser v ed ( by sup plying Set("phi") ) . The inferred thetas for the test d ocuments are stored in s test.theta . 3. A Modular Compilation Framework Before we d etail the architecture of o ur compiler , it is use- ful to und erstand ho w a model goes from a specification down to CUD A code running o n the GPU. There are two distinct compilation phases. The first happen s when the progr ammer compiles the p rogram with scalac (assuming that the co de fro m Figure 1 is in a file named LDA.sca la ) scalac -classpath augur.jar LDA.scala The file augur.jar is the package co ntaining our com- piler . The fir s t ph ase of compilation happen s statically , d ur - ing normal scalac compilation . In this phase, the b lock of code following the bayes keyword is transformed into our intermediate representation fo r pr obability distri- butions. The second co mpilation p hase hap pens at ru n- time, when the prog rammer calls the LDA.m odel.map method. At that point, the I R is transfor med, an alyzed, an d optimized, and finally , CUDA c ode is emitted and run. Our framew ork is therefore com posed of two distinct com- ponen t s that comm unicate throug h the IR: the fro nt end, where domain specific language s are con verted into the IR, and the b ack en d, where the IR can be comp iled down to various inference a lgorithms (curren tl y Metrop olis- Hastings, Gibb s samplin g, and Metropolis-Wit hin- Gibbs). T o d efine a modeling langu age in th e front end, we make use of the Scala macro system. The m acro system allows us to define a set of functio ns (called “macros”) that will be executed by the Scala compiler o n th e code enclosed by the macro. W e are curr ently focusing on Bayesian n et- works, b ut other DSLs (e.g ., Markov ran dom fields) could be added without modificatio ns to the back end. The im- plementation of the macros to defin e th e Bayesian network languag e is con ceptually uninteresting so we omit further details. Our Bayesian n etw ork lan guage is fairly standar d, with the notable exception that it is implicitly parallel. Separating the com pilation into two d is tinct pha s es gives us many advantages. As our language is implemen ted using Scala’ s macro system it provides autom atic syntax hig h- lighting, method n ame com pletion and co de refactoring in any IDE which supports Scala. This greatly improves the usability o f the DSL as no special tools need to be de vel- oped to sup port it. This macro system a ll ows Augur to use Scala’ s parser , semantic an alyzer (e.g., to check that vari- ables have been defined), and typ e checker . Also we b ene- fit from th e Scala compiler’ s optimization s such as con stant folding and dead code elimination. Then, because the IR is c ompiled to CUD A code at run- time , we know the v alues o f all the h yper -para meters and A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 1 val phi = new Array[Double](k * v) 2 val theta_train = new Array[Double](doc_num_tra in * k) 3 val z_train = new Array(num_tokens_train) 4 val s_train = new LDA.sig(phi, theta_train, z_train, w_train) 5 LDA.model.map(Set(), (k, v, doc_num_trai n , docs_length_train), s_train, samples_num, Infer.GIBBS) 6 7 val z_test = new Array(num_token s_test ) 8 val theta_test = new Array[Double](doc_num_test * k) 9 val s_test = new LDA.sig(phi, theta_test, z_test, w_test) 10 LDA.model.map(Set("phi"), (k, v, doc_num_test, docs_length_test), s_test, samples_num, Infer.GIBBS) Figure 3. Example use of the LDA. Function LDA.m odel.map returns a maximum a po steriori estimation. It tak es a s ar guments the se t of v ariables to observe (on top of the ones declared as observed in the model specification), the hyperparameters, the init ial param eters, the output parameters, the number of iterations and the inference to use. The parameters are stored in LDA.sig . the size of the dataset. This enab les b etter optimizatio n strategies, an d also gi ves us k ey insigh ts into how to extract parallelism ( Section 4.2 ). For example, wh en comp il ing LD A, we kn o w that the num ber of topics is much smaller than th e number of d ocuments a nd thus parallelizing over docume nts will p roduce more parallelism than p aralleliz- ing over topics. Finally , we also pr o vide a library which defin es standard distributions such as Gaussian, Dirichlet, etc. In addition to these standard distributions, each model denotes its o wn user-defined distribution. All of th es e distrib utions ar e sub- types o f the Dist supertype. Currently , the Dist interface provides two metho ds: map , which implem ents maximum a posteriori estimation, and sample , which returns a se- quence of samples. 4. Generation of Data-Parallel Infer ence When an infer ence procedu re is in voked on a mod el (e.g. LDA.model.ma p ), the IR is comp iled down to CUD A in- ference co de for that m odel. I nformally , our IR expr es sions are generated from this Backus-Naur form grammar: P ::= p ( → X ) p ( → X | → X ) P P 1 P N Y i P Z X P d x { P } c The goal of th e IR is to m ak e the sources of par allelis m in the mo del more explicit and to support an alysis of th e prob - ability distributions p resent in the model. For example, a Q indicates that each sub-term can be ev aluated in parallel. The u se of su ch a symbo lic r epresentation for the m odel is key to scale to large networks. Indeed , as we will show in the experimen tal ev aluation (Section 5 ), p opular prob- abilistic p rogramming lan guage im plementations such as JAGS or Stan reify the grap hical mod el, resulting in un - reasonable memory consumptio n f or m odels s uch as LD A. A consequence of o ur sy mbolic rep resentation is that it be- comes more dif ficult to discover conjug ac y r elationships, a point we will come back to. In the r est of th is section, we explain how the co mpiler gen- erates data- parallel samp l ers th at exploit the co nditional in - depend ence structur e o f the model. W e will u s e o ur two examples to explain ho w the comp iler analyz es the model and genera t es the inference code. 4.1. Generating data-para ll el MH samplers If the user wants to use Metro polis-Hastings inferen ce o n a m odel, the co mpiler needs to emit co de for a fu nction f that is p roportional to the distribution the user w ants to sample from. This fu nction is then linked with our library implementatio n of Metropolis-Hastings. The function f is composed of the product o f the prior and the likelihood of the mo del and is extracted auto matically from the model specification. For example, applied to our regression ex- ample, f is d efined as f ( x , y , τ , b , w ) = p ( b ) p ( τ ) p ( w ) p ( x ) p ( y | x , b, τ , w ) which is equal to (and represented in our IR as) p ( b ) p ( τ ) K Y k p ( w k ) ! N Y n p ( x n ) p ( y n | x n · w + b, τ ! In this form, the compiler knows that th e distrib ution fac- torizes into a large num ber of terms th at can be e valuated in parallel a nd th en efficiently multiplied together ; m ore specifically , it k no ws that the data is i.i. d. and th at it can o p- timize accordin gly . In this case, each ( x, y ) contributes to the likelihood in dependently , and they ca n be ev aluated in parallel. In practice, we work in lo g-space, so we perform summations. The compiler can then gener ate th e CUD A code for th e ev aluation of f f rom the IR representatio n. This code genera t ion step is concep tually simple an d we will not explain it further . It is interesting to no te that d es pite the simplicity of this parallelization the code scales reasonably well: the re is a large amount of parallelism becau s e it is ro ughly p ropor- tional to the number o f data p oints; uncovering the para l- lelism in the cod e does not in crease the overall quantity of A ugur: a M o deling Language for Data-P arallel Probabilistic Inference computatio n that has to be p erformed; and the r atio o f com- putation to g lobal memory accesses is high enoug h to hide memory latency bottlenecks. 4.2. Generating data-para ll el Gibbs s amplers Alternatively , an d mor e interestingly , the com piler can gen- erate a Gibbs sam pler for some models. For instance, we would like to gen erate a Gibbs sampler for LD A, as a sim- ple Metropolis-Hastings sampler will ha ve a very low ac- ceptance ratio. Cur rently w e ca nnot generate a collapsed or b locked sampler , but there is interesting work r elated to dynamica ll y collapsing or block ing variables ( ? ), an d we leav e it to future work to extend ou r compiler with this c a- pability . T o gen erate a Gibbs samp ler , the comp i ler needs to Figure out how to sample from each u ni variate distrib ution. As an example, to d ra w θ m as part of the ( τ + 1) th sample , the co mpiler needs to gen erate code that samples from th e following distrib ution p ( θ τ +1 m | w τ +1 , z τ +1 , θ τ +1 1 , ..., θ τ +1 m − 1 , θ τ m +1 , ..., θ τ M ) As we previously explained, our c ompiler uses a symbolic representatio n o f the model: the upside is that it m ak es it possible to scale to lar ge networks, but th e downside is that it be comes more ch allenging to uncover conju gac y rela- tions and independen ce between v ariables. T o accomplish this, th e compiler implem ents an alg ebraic re write system that attempts to rewrite the above exp ress ion in terms of expressions it knows (i.e., the joint distribution of the en- tire model). W e show a few selected r ules below to give a flav or of the rewrite system. (a) P P ⇒ 1 (b) R P ( x ) Q d x ⇒ Q R P ( x ) dx (c) N Q i P ( x i ) ⇒ N Q i { P ( x i ) } q ( i )= tr ue N Q i { P ( x i ) } q ( i )= f alse (d) P ( x ) ⇒ P ( x,y ) R P ( x,y ) d y Rule (a) states that like ter ms can be can celed. Rule (b ) says that terms that do not depend on the v ariable of inte- gration can b e pulled out of the integral. Rule (c) says that we can partition a prod uct over N-terms into two products, one wher e a pred icate q is satisfied on the indexing vari- able and on e where it is not. Rule (d) is a co mbination o f the prod uct and sum rule. Cur rently , the rewrite sy s tem is just comprised of ru l es we foun d useful in practice, and it is easy to extend the system to add more re write rules. Going back to our exam ple, the co mpiler rewrites the de- sired expression into the one below: p ( θ τ +1 m ) N ( m ) Q j p ( z mj | θ τ +1 m ) R p ( θ τ +1 m ) N ( m ) Q j p ( z mj | θ τ +1 m ) dθ τ +1 m In this fo rm, it is clear that each θ 1 , . . . , θ m is indep endent of the othe rs after conditioning on the other ran dom vari- ables. As a r es ult, they may all be sampled in parallel. At each step, the com piler can test fo r a conjug ac y r elation. In the ab o ve form, the com piler recognize s that the z mj are drawn from a cate gorical distribution and θ m is dr a wn from a Dirichlet, and can exploit the fact that these are conjug ate distributions. The posterior distribution for θ m is: Dirich let ( α + c m ) where c m is a vector whose k th entry is the number of z associated with d ocument m that were assigned topic k . Impor t antly , th e comp iler now knows that the drawing of each z must include a countin g phase. The case of the φ variables is more interesting . In this case, we want to sample from p ( φ τ +1 k | w τ +1 , z τ +1 , θ τ +1 , φ τ +1 1 , ..., φ τ +1 k − 1 , φ τ k +1 , ..., φ τ K ) After the application of the re write system to this expres- sion, the compiler discovers that this is equal to p ( φ k ) M Q i N ( i ) Q j { p ( w i | φ z ij ) } k = z ij R p ( φ k ) M Q i N ( i ) Q j { p ( w i | φ z ij ) } k = z ij dφ k The key o bserv ation th at the co mpiler takes advantage o f to reach this conclusion i s the fact that the z ar e distributed accordin g to a ca te gorical distribution and are u s ed to ind e x into the φ ar ray . Therefo re, they p artition the set of w ord s w into K d i sjoint sets w 1 ⊎ ... ⊎ w k , one for each topic. More concretely , th e pr obability of word s drawn from top ic k can be rewritten in partitioned form using rule (c) as M Y i N ( i ) Y j { p ( w ij | φ z ij ) } k = z ij This expresses the intuition that once a word’ s topic is fixed, th e word depend s on only o ne o f the φ k distribu- tions. In this fo rm, the compiler recogn izes that it should draw from Dirich let ( β + c k ) where c k is the coun t of words assigned to top i c k . In gen - eral, the com piler detects patterns like th e above wh en it A ugur: a M o deling Language for Data-P arallel Probabilistic Inference notices th at samples d ra wn fro m cate gor ical distributions are being used to index into arrays. Finally , the com piler turns to analy zing the z ij . In th i s case, it will again detect that they can be samp led in parallel but it will n ot be able to detec t a conjug ac y relationsh i p. It will then detect that the z ij are dr a wn f rom discrete distribu- tions, so that the univariate d is tribution can be calculated exactly and sampled from. In cases where the distributions are continuou s , it can tr y to use an other approx i mate sam- pling method as a subrou ti ne for drawing th at variable. One concer n with such a rewrite system is that it may fail to find a c onjugacy relation if the model has a comp l icated structure. So far we ha ve found our re write system to be robust and it can find all the usual co njugacy relations fo r models su ch as LD A, Gau s sian Mixtur e Mod els or Hid- den Markov Mode ls , but it suffers fr om th e same short- comings as imp lementations of BUGS when d eeper math- ematics are r equired to discover a conju gacy relation (as would be the case for instance for a non-lin ear regre s sion). In the cases where a conjug ac y r elation cannot be foun d, the compiler will (like B UGS) resor t to using Metropolis- Hastings and therefo re exploit the inheren t parallelism of these algorithms. Finally , note that the rewrite rules are applied deterministi- cally and the pr ocess will al ways termin ate and prod uce the same result. Overall, the cost of analysis is negligible com- pared to the sampling tim e for large data sets. Althoug h the re write system is simple, it enables us to use a c oncise symbolic repr es entation for the mod el an d thereby scale to large netw ork s . 4.3. Data-para llel Operations on Distribu tions T o pro duce ef ficient par allel code, the co mpiler needs to uncover p arallelism, but we also need to rely o n a g ood library of d ata-parallel operations for d is tributions. For in- stance, in th e case of LDA, there ar e two steps in which we need to draw fr om many Dirichlet distributions in par- allel. In the first case, wh en dr a wing the topic distrib utions for th e docum ents, each th read can dr a w on e of the θ i by generating K Gamma variates and normalizing them ( ? ). Since the number of documen ts is usually very large, th is produ ces eno ugh parallelism to make full use of th e GPU’ s cores. Howe ver , this will no t produce sufficient parallelism when drawing th e φ k , becau s e th e nu mber of top ics is usually small com pared to the number o f cores. Con s equen tly , we use a different proced ure (Algorithm 1 ). T o g enerate K Dirichlet variates o ver V categories with concentration pa- rameters α 11 , . . . , α K V , we first need to gener ate a matrix A where A ij ∼ Ga mma ( α ij ) and then no rmalize each row of this ma t rix. For samplin g the θ i , we w ere effecti vely Algorithm 1 Sampling from K Dirichlet V ariates Input: m atrix a o f size k by n for i = 0 to n − 1 in p arallel do for j = 0 to k − 1 do v [ i, j ] ∼ Ga mma ( a [ i, j ]) end for v × → 1 end for Output: m atrix v launching a thread fo r each r o w . Now that the nu mber of co lumns is mu ch larger than th e nu mber of rows, we launch a thr ead to gene rate the gamm a variates for e ach column, a nd then separate l y com pute a n ormalizing con- stant for each ro w by multipying th e matrix b y an all- ones vector using CUBLAS. Th is is an instance where the two- stage compilation p rocedure (Section 3 ) is usef ul, because the comp i ler is a ble to use inform ation abou t th e relati ve sizes of K and V to decid e that Algorithm 1 will be m ore efficient than th e simple scheme. This sort of optimization is not un ique to the Dirichlet dis- tribution. For example, when gener ating a large numb er of multinorm al variates by apply ing a lin ear tran sformation to a vector o f normal variates, the strategy f or extracting parallelism may change based on the number of variates to generate, th e dim ension of the multinorm al, and the num- ber of GPU cores. W e fou nd that to use th e GPU effectiv ely we h ad to d e velop the languag e in co ncert with the creation of a library of data-pa rallel operatio ns on distributions. 4.4. Parallelism & Inference T r adeoffs It is difficult to giv e a cost model for Augur pro grams. T raditiona l approaches are not nec es sarily appropr i ate for probab ilis tic programs because there are tradeoffs between faster sam pling times and conv ergence which are not easy to characterize. I n particular, dif ferent inference methods may af fect th e amount o f parallelism th at can b e exploited in a mo del. For example, in th e case of multivariate re- gression, we can use the Metropolis-Hastings sampler pre- sented ab o ve, which lets u s samp le fr om all the weights in par allel. However , we m ay be better off generatin g a Metropolis-Within-Gibbs sampler where the weights ar e sampled one at a time. This reduces the amo unt of ex- ploitable parallelism, b ut it may con verge faster , an d there may still be enoug h p arallelism in each step of Metropolis- Hastings by ev aluating the likelihood in parallel. In the Hidd en-Markov model, once again, one may try to sample the state of the Markov chain in pa rallel using a Metropolis-Ha s tings sam pler just for these variables. If the HMM is small this may be a go od way to make use of a GPU. Of course, for a large HMM it will be more effecti ve A ugur: a M o deling Language for Data-P arallel Probabilistic Inference to sample the states of the Markov ch ain in sequen ce, and in this case there is less parallelism to exploit. In each of these cases, it is not clear which of these alterna- ti ves is better , and d i fferent models may perform best with different settings. Despite these difficulties, because Augur tries to parallelize operation s over array s , u sers can max- imize the amount of p arallelism in their mode l s by struc- turing them so that data and pa rameters are st ored in large, flattened arrays. In addition, as more options and inference strategies are added to Augur, users will be able to experi- ment with the tradeoffs of different inference method s i n a way that would be too time-consuming t o d o manually . 5. Experimental Study T o assess the ru ntime perform ance of the inferen ce code generated by Augur , we pre s ent the results o f bench marks for the two examples presented throu ghout the paper and for a Gaussian Mix t ure Model (GMM). More detailed in- formation on the experiments can be found in the sup ple- mentary material. For th e mu lti v ariate regression a nd GMM, we co mpare Au- gur’ s per formance to th ose of two p opular langu ages for statistical modeling , J AGS ( ? ) and Stan ( ? ). J A GS is an implemen tation of the BUGS lan guage, and perf orms inference using Gibbs sampling, adap t ative M etropolis- Hastings, an d slice sampling. Stan uses No-U-Turn sam- pling, a v ariant of Hamilto nian Mon te Carlo sam pling. In the regression exper i ment, we configured Augu r to use Metropolis-Ha s tings 1 , while for the GMM experim ents Augur generated a Gibbs sampler . In addition to J A GS and Stan, for the LD A benchmarks we also compare Augur to a handwritten CUD A imp lementa- tion of a Gibbs samp l er and a Scala implemen tation of a collapsed Gibbs sampler ( ? ) fro m the Factorie library ( ? ). The fo rmer gives us a refer ence com parison f or what migh t be possible for a manually optimize d GPU im plementation, while the latter giv es a ba s eline for a Sca l a implemen tation that does not use GPUs. 5.1. Experimental Setup For the linear regression experiment, we used data sets from the UCI regression re pository ( ? ) . Th e Gau s sian M i x- ture Model experiments used two synthetic data sets, o ne generated fro m 3 clusters, the other fr om 4 clusters. For the 1 A better way to do inference in the case of t h e regression would hav e been for Augur to produ ce a Gibbs sampler, but this is not currently implemented. The conjugac y relati o n f o r the weights is not just an application of co njugacy rules ( ? ). W e c ould add specific rules for linear regressions ( wh ich is what J A GS does). Howe v er , we leav e it for future work to make t h e com- piler user extensible. LD A benchm ark, we used a c orpus extracted f rom the sim- ple English variant of W ikiped ia, with standard stopwords removed. T his gives a corpus with 48556 documents, a vo- cabulary size o f 372 76 words, and ap proximately 3.3 mil- lion tokens. Fro m that we sampled 1000 docum ents to use as a test set, removing w ord s which ap pear only in th e test set. T o ev aluate the mod el fit we use the log pr edicti ve probab ility measure ( ? ) o n t he test set. All exper iments were run on a single workstation with an Intel Core i7 3770 @3.4GHz CPU, 32 GB RAM, a Sam - sung 840 SSD, and an NVIDI A Gefo rce Titan. The Titan runs on the Kepler arch itecture. All probab ili ty v alues are calculated in double precision. The CPU perfo rmance re- sults using F actorie are calculated using a sing le thread, as the multi-thr eaded samplers are n either stab le n or p erfor - mant in the tested relea s e. The GPU results use all 896 double- precision ALU cores av ailable in the T itan 2 . 5.2. Results In general, our r es ults show that once the p roblem is large enoug h we can amo rtize Augur’ s startup cost of mode l compilation to CUD A, nvcc com pilation to a GPU binary , and c opying the data to and from the GPU. Th is co st is approx imately 1 0 seconds on average across all o ur exper - iments. After this poin t Augu r scales to larger numb ers of samples in shor ter runtimes than c omparable systems. More experimental detail is in the supplementary material. Our exper iments with regression show that Augu r’ s infer- ence is similar to J AGS in runtime and performanc e, and better than Stan . This is not a sur prise because the regres- sions have fe w random variables and the data sets ar e rela- ti vely small, and so m aking use of the GPU is not justified (except maybe f or muc h larger data sets). Howe ver , the results are very different for mo dels with latent variables where the numb er of variables grows with the data set. For instan ce, using the GMM e xamp le, we show (Figur e 8 ) that Aug ur scales b etter th an J AGS and Stan . For a hundred thousand d ata points, Augur draws a thousand sam ples in about 3 minutes wh ereas J AGS n eeds more than 21 m inutes and Stan requires m ore than 6 hours. Each system found the correct means and variances for the clu s ters, th e aim here is to mea s ure the scalin g of runtime with p roblem size. Results fro m th e LDA experiment are p resented in Figure 5 and use pred icti ve proba bility to co mpare conver genc e over time. W e compute the predictive proba bility and record the time after drawing 2 i samples, fo r i ran ging from 0 to 11 in- clusiv e. The time is reported in seconds. It takes Au gur 8.1 seconds to draw its first sample for LD A. The in ference for 2 The T itan has 2688 single-precision ALU cores, but single precision resulted in poor quality inference results, though the speed was greatly impro ved. A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 10 2 10 3 10 4 10 5 0 2 4 6 8 10 12 14 16 18 20 22 24 26 28 30 Data Size (points) Runtime (minutes) Sampling Time v . Data Size (Synthetic) Augur Jags Stan Figure 4. Evolution of r u ntime to draw a thousand samples for v arying data set sizes for a Gaussian Mixture Model. S tan ’ s last data point is cropped, it took 380 minutes. Augur is very close to tha t of the hand-written CUD A im- plementation , an d m uch faster than the Factorie collap sed Gibbs sampler . Indeed , it takes 6.7 more hou rs f or the col- lapsed LDA imp l ementa t ion to draw 2 048 sam ples tha n it does for Augur . W e also implemented LD A in the J AGS and Stan systems but th e y ru n in to scalab i lity issues. The Stan version o f LD A uses 55 gigab ytes of RAM b ut failed to draw a second sample given a week of computatio n time. Unfo rtunately , we cou l d no t apply J A GS becau se it requ ires more th an 128 gigabytes of memory . I n compar is on, Augur uses less than a gigaby te of memory for this experiment. 6. Related W ork T o our knowledge, BUGS ( ? ) was th e first p robabilistic progr amming language. Inter est ingly , mo s t of t he key co n- cepts of pr obabilistic progra mming alread y ap peared in the first paper to in troduce BUGS ( ? ). Since th en, research in probabilistic pro gramming languages has been focused in two directio ns: improvin g perfo rmance and scalab ili ty throug h better inference generation ; and, increasing ex- pressiv eness an d building the fo undations o f a univ ersal probab ilis tic programm i ng language. These tw o directions are useful criteria to c ompare p robabilistic p rogramming languag es . In terms of language expressiveness, Augur is cu rrently limited to the specification o f Bayesian networks. It is pos- sible to extend this language (e.g., non-param etric models) or to add new modeling languages (e.g., Markov random field), b ut o ur c urrent f ocus is on im proving the inference generation . That is in contrast with langu ages like Ha n- 1 10 10 2 10 3 10 4 10 5 − 1 . 65 − 1 . 6 − 1 . 55 − 1 . 5 − 1 . 45 − 1 . 4 − 1 . 35 − 1 . 3 − 1 . 25 · 10 5 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 2 10 2 11 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 2 10 2 11 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 2 10 2 11 Runtime (seconds) Log10 Predictiv e Probability Predictiv e Probability v . Training T ime Augur Cuda Factorie(Collapsed) Figure 5. Evolution of the predictiv e probability over time f o r up to 2 048 sa mples and f o r thre e implementations of LDA inference: Augur , hand-written CUD A, Factorie’ s Collapsed Gibbs. sei ( ? ), Odds ( ? ), Stochastic Lisp ( ? ) and Ibal ( ? ) which focus o n in creasing expressi veness, at the expense of per - forman ce. Howe ver , as Au gur is embe dded in the Scala progr amming langu age, we have access to the wide vari- ety of lib raries on the JVM platform and benefit f rom Scala tools. Augur, like Stan ( ? ) and BUGS ( ?? ) is a dom ain specific pro babilistic lang uage fo r Baye s ian networks, b ut it is embedded in su ch a way that it has a very good inte- gration with the rest of Scala, which is crucial to software projects where d ata an alysis is on ly one co mponent of a larger artifact. Augur is not the only system designed for scalability and perfor mance. It is also the case of D i mple ( ? ), Factorie ( ? ), Infer .net ( ? ) and Figaro ( ?? ) , and the latest versions of Church ( ? ). Dimple foc uses o n pe rformance u s ing special- ized in ference hardware, thou gh it does provid e an in t er- face for CPU code. Factorie m ainly focuses on un directed networks, and is a Scala library rather than a DSL (u nlik e all the other systems mentioned). It has multiple inference backends, and aims to be a gener al pu rpose mach i ne learn- ing package . Infer .net is the system most similar to A ugur , in that it has a tw o p hase compilation appro ach, though it is based around variational m ethods. A block Gibbs sam- pler exists but is only fun ctional on a su bset of the mod- els. Figaro foc uses on a different set of inference tech- niques, including techniq ues wh i ch use exact infe rence in discrete space s ( the y also h a ve Metropolis-Hastings infer- ence). Chu rch p ro vides the ability to mix different infer - ence algorithms and has some parallel capability , b ut it is focused o n task-par allelis m for mu lt icores rather than on data-para l lelism for parallel architectures. Graph Lab ( ? ) is another f rame work for par allel machine learn ing which is more focused on multipro cess or an d distributed compu t - A ugur: a M o deling Language for Data-P arallel Probabilistic Inference ing than on the kind of data-parallelism a v ailable on GPUs. The key difference between Augur and these o ther lan - guages is the systematic g eneration of data- parallel alg o- rithms f or large numb ers of cores (i.e., th ousands) on gen - erally av ailable GPU hardware, and the use of a symb olic representatio n of the model in the compiler . 7. Conclusion W e fin d th at it is possible to automa t ically gen erate par allel MCMC-based inference algo rithms, and it is also possible to extract su f ficient parallelism to saturate a modern GPU with thousands of cores. Our compiler achieves this with no extra info rmation b e yond that w hich is nor mally en - coded in a graph i cal mod el description and uses a symbolic representatio n that a ll ows scaling to large models (par t icu- larly fo r laten t variable mod els such as LD A). I t also makes it ea s y to ru n dif ferent in ference algorithms and ev aluate the tradeoffs between con vergence an d samp ling time. The generated inference code is competitive in terms of sample quality with other probabilistic prog ramming systems, and for large problems generates samples much more quickly . A. Examples of Model Specification W e present a fe w examples of model specificatio ns in Au- gur, covering th ree important topics in m achine learning: regression ( A.1 ), clusterin g ( A.2 , A.3 , A.5 ), and classifica- tion ( A.4 ). Our goal is to show how a fe w p opular models can be p rogrammed in Au gur . For each of these examples, we first describe the support of the model, and then sketch the gen erati ve p rocess, relating the m ost complex parts of the progra m to their usual mathem atical no tation. A.1. Univariate polynomial regression Our first example mod el is for u ni v ariate po lynomial re- gression (Figure 6 ). The mod el’ s sup port is composed of the array w f or the weights of each m ononomial, x fo r the domain data points and y for their image. T he param eters of the mod el are: N , the dataset size and M , the orde r of the polyno mial. For simplicity , this example assumes that the domain of x ranges from 0 to 2. The generative process is: W e first indep endently draw each o f the M weigh ts , w i ∼ N (0 , 1) , th en draw ( x, y ) as follows: x j ∼ Uniform (0 , 2) (1) y j ∼ N ( M X i w i x i j , 1) . (2) For simplicity , the model is p resented with many “hard- wired” param eters, but it is possible to pa rameterize the model to contro l the noise level, or the d omain o f x . A.2. Catego rical mixture The third example is a categorical mixtu re model (Figure 7 ). T he model’ s suppo rt is comp osed of an array z for the cluster selection, x fo r the d ata points that we d ra w , t heta for th e priors of th e categorical that represents the d ata, and phi for the prio r of the indicator variable. Th e parameter s of the model ar e: N data size, K number o f clusters, and V for the vocabulary size. The gener ati ve process is: For each of the N data po ints we want to draw , we select a cluster z acco rding to their distribution ph i and then draw fro m the categorical with distribution gi ven by theta(z) . A.3. Gaussian Mixture Model The fourth example is a un i v ariate Gaussian mixture mode l (Figure 8 ). The model’ s sup port is composed of an array z for the cluster selection, x for the data points that we draw , mu for the p riors over the clu s ter mean s , sigma fo r the priors of the cluster variances, and phi for the p rior o f the indicator v ariable. The parameters of the model ar e: N d ata size, K number of clusters. The gener ati ve process is: For each of the N data po ints we want to draw , we select a cluster z acco rding to their distribution pi an d then draw from the Gaussian centered at mu(z) and of standard deviation sigma(z) . A.4. Naive bayes classifier The fifth e xample is a b inary naive Bayes classifier (Figure 9 ). The supp ort is compo sed of an a rray c fo r the class and an array f for the features, pC the prior on the positi ve class, and pFgivenC an array for the probability of each binary f eature giv en the class. The hyperpar ameters of the model are: N the nu mber of d ata points, K the n umber of features and. The features form a 2-dim ensional matrix b ut again the user has to “flatten” the matrix into an array . The gen erati ve p rocess is: First we draw the probability of an event being in one class or the other as pC . W e use pC has the parameter to decide for each ev ent in which class it falls ( c ). T hen, for each feature, we dr a w the prob ability of the feature o ccurring, pFg ivenC , depe nding on whether the event is in the class o r n ot. Finally , we d ra w the f eatures f for each ev ent. A.5. Hidden Markov Model The sixth example is a hidden Markov mo del (Figure 10 ) where the observation are the re s ult of coin flips. Th e sup- port is composed of the result of the coin flips fli ps , the priors for each of the coins bias , th e tran si tion matrix to decide how to ch ange coin tran sition matrix , and the states of the Markov chain th at indicates which coin is A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 1 object Univariate PolynomialRegression { 2 3 import scala.math._ 4 5 class sig( var w: Array[Double], var x: Array[Double], var y: Array[Double]) 6 7 val model = bayes { 8 (N: Int, M: Int) => { 9 10 val w = Gaussian(0,1).sample(M) 11 val x = Uniform(0,2).sample(N) 12 val bias = Gaussian(0,1).sample 13 val y = for (i <- 1 to N) { 14 val monomials = for (j <- 1 to M) yield { w(j) * pow(x(i),j) } 15 Gaussian((monomials.sum) + bias, 1).sample() 16 } 17 18 observe(x, y) 19 } 20 } 21 } Figure 6. Specification of a uni v ariate polynomial regression 1 object Categorica lMixture { 2 class sig( var z: Array[Int], var x: Array[Int], var theta: Array[Double], var phi: Array[Double]) 3 val model = bayes { 4 (N: Int, K: Int, V: Int) => { 5 6 val alpha = vector(V,0.5) 7 val beta = vector(K,0.5) 8 9 val theta = Dirichlet(V,alpha).sample(K) 10 val phi = Dirichlet(K,beta).sample() 11 12 val x = for (i <- 1 to N) { 13 val z = Categorical(K, phi).sample() 14 Categorical(N,theta(z)).sample() 15 } 16 observe(x) 17 } 18 } 19 } Figure 7. Specification of a categorical mixture mod el 1 object GaussianMi xture { 2 3 class sig( var z: Array[Int], var x: Array[Double], var mu: Array[Double], var sigma: Array[Double], var phi: Array[Double]) 4 5 val model = bayes { 6 (N: Int, K: Int, V: Int) => { 7 8 val alpha = vector(V,0.1) 9 10 val phi = Dirichlet(V,alpha).sample() 11 val mu = Gaussian(0,10).sample(K) 12 val sigma = InverseGamma(1,1).sample(K) 13 14 val x = for (i <- 1 to N) { 15 val z = Categorical(K, phi).sample() 16 Gaussian(mu(z), sigma(z)).sample() 17 } 18 19 observe(x) 20 } 21 } 22 } Figure 8. Specification of a Gaussian mixture model A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 1 object NaiveBayes Classifier { 2 3 class sig( var c: Array[Int], var f: Array[Int], var pC: Double, var pFgivenC: Array[Double]) 4 5 val model = bayes { 6 (N: Int, K: Int) => { 7 8 val pC = Beta(0.5,0.5).sample() 9 val c = Bernoulli(pC).sample(N) 10 11 val pFgivenC = Beta(0.5,0.5).sample(K * 2) 12 13 val f = for (i <- 1 to N) { 14 for (j <- 1 to K) { 15 Bernoulli(pFgivenC(j * 2 + c(i))).sample() 16 } 17 } 18 19 observe(f, c) 20 } 21 } 22 } Figure 9. Specification of a nai ve Bayes classifier 1 object HiddenMark ovModel { 2 class sig( var flips: Array[Int], var bias: Array[Double], var transition_matri x : Arr ay[ Double], var MC_states: Array[Int]) 3 4 val model = bayes { 5 (N: Int, number_states: Int) => { 6 val v = vector(number_states,0.1) 7 val transition_m atrix = Dirichlet(number_s tates ,v).sample(number_states) 8 val bias = Beta(1.0,1.0).sample(number_states) 9 10 val MC_states: IndexedSeq[Int] = for (i <- 1 to N) yield Categorical(number_states,transition _matrix (MC_states(max(0, i-1)))).sample() 11 12 val flips = for (i <- 1 to N) Bernoulli(bias(MC_states(i))).sample() 13 14 observe(flips) 15 } 16 } 17 } Figure 10. Specification of a Hidden Mark ov Model A ugur: a M o deling Language for Data-P arallel Probabilistic Inference Algorithm 2 Sampling from Dirichlet( α ) M times Input: ar ray α o f size n for M docum ents in parallel do for i = 0 to n − 1 do v [ i ] ∼ Gamm a ( a [ i ]) end for s = n − 1 P 0 a [ i ] in parallel for i = 0 to n − 1 in par allel do v [ i ] = v [ i ] s end for end for Output: ar ray v being used for the flip MC stat es . Th e two parameters of the model are the size of the data N , and the nu mber of coins being used number states . The generative process is: dr a w a tran si tion matrix for the Markov chain, a b ias for each of the co ins, decide what coin is to b e used in each state, using the transition matrix, and based on this, flip th e coin that should be used for each state. B. Simple Data-Parallel Sampling fr om M Dirichlet Distribu tions The algorithm in 2 presents a simple way to draw from a number of Dirichlet d is tributions in parallel o n a GPU. It works well if the number M is very lar ge. On the contrary , it is a bottleneck if M is small or much lesser than the di- mension of the Dirichlet distributions. C. Experimental study This section con tains add itional data from the benchm arks. C.1. Multivariate Regres sion In o ur regression experiment, we comp are Aug ur ag ainst two other models, o ne impleme nted in Jags ( 11 ) and one in Stan ( 12 ). Th es e models a re bo th based upon the BMLR code developed by Kruschke ( ? ). Each system u s es the same priors and hyperpa rameters. The regression experimental protocol w as as f ollo ws: each dataset h ad 10 9 0%/10% tr ain/test sp li ts generated , a nd each dataset was tested using 10 different random initiali- sations across each of th e train/test splits. Then t he number of samples was varied between 100, 20 0, 500, 1000, 2000, 5000 3 . Th is gi ves a total of 600 run s of each system on 3 Stan and J A GS had a b urn in of an additional 50% samples to allow for the adaptiv e tuning of the samplers, without these extra samples for adaptation the performance o f both of the m was poor . 0 5 10 15 20 25 30 35 40 0 200 400 600 800 1 , 000 1 , 200 1 , 400 1 , 600 1 , 800 2 , 000 2 , 200 100 200 500 1000 2000 5000 150 300 750 1500 3000 7500 100 200 500 1000 2000 5000 Runtime (seconds) RMSE RMSE v . Training T ime (Concrete) Augur Jags Stan Figure 13. Result of Multiv ariate Reg ression on the Concrete Compressi ve Strength data set. each dataset. The presented figu res average ac ross both the random seeds and the train/test splits to pr oduce one po int per n umber of samp les . W e th en p lot average RMSE on the test sets against a verage runtime. In figur es 1 3 , 14 , 15 and 16 we pr es ent results on the Con- crete comp ressi ve, win equality-red, winequality-white and Y ach t Hy drodynamics datasets from the UCI repo s itory ( ? ). In Concrete and Y acht we p resent results fro m J A GS, Stan and Augu r . On the winequality experiments we o nly present resu lt s from J A GS and Au gur due to mach i ne time constraints (Stan’ s runtime was too high to perfo rm su f fi- ciently many exper i ments on larger datasets). J AGS is us- ing a Gibbs sampler for the weig hts and the bias, an d uses a slice sampler for the variance of the n oise. Augu r uses random walk Metr opolis-Hastings, an d Stan is using the No-U-T urn variant of H amiltonian Mo nte Carlo. W e can see that Augur has a startup cost of a bout 10 seconds, and Stan has a startup cost of a bout 2 0 seco nds. A fter th at po int Augur can draw samples more quickly th an bo th Stan and J AGS, though due to J AGS’ s low startup time (¡¡1 seco nd) it is only on large datasets with many samples th at Augur provides a speedup. The RMSEs of J AGS and Augur con verge to app roximately similar v alues, though Augur takes longer to con verge (in terms of the number of samples, and total ru ntime) as Metropolis-Ha s tings is a less efficient inference algorithm for r e gression than a tuned Gib bs sampler . As mentioned in section 5 of the paper J AGS h as a special case for work- ing with lin ear r e gression models which alters the samplin g proced ure, and this feature is not currently available in Au- gur . Augur’ s Metropolis-Hastings algorithm does not use such tuning. A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 1 model { 2 for ( i in 1:N ) { 3 y[i] ˜ dnorm( y.hat[i] , 1/tau ) 4 y.hat[i] <- b0 + inprod( b[1:nPred] , x[i,1:nPred] ) 5 } 6 tau ˜ dgamma( 1 , 1 ) 7 b0 ˜ dnorm( 0 , 0.01 ) 8 for ( j in 1:nPred ) { 9 b[j] ˜ dnorm( 0 , 0.01 ) 10 } 11 } Figure 11. Multi variate Re gression in Jags 1 data { 2 int nPred; 3 int nData; 4 real y[nData]; 5 matrix[nData,nPred] x; 6 vector[nData] b0vec; 7 } 8 parameter s { 9 real b0; 10 vector[nPred] b; 11 real tau; 12 } 13 transforme d parameter s { 14 vector[nData] mu; 15 vector[nData] offset; 16 offset <- b0vec * b0; 17 mu <- x * b + offset; 18 } 19 model { 20 b0 ˜ normal(0,10); 21 tau ˜ gamma(1,1); 22 for (d in 1:nPred) 23 b[d] ˜ normal(0,10); 24 y ˜ normal(mu, 1/sqrt(tau)); 25 } Figure 12. Multi variate Re gression in Stan A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 0 1 2 3 4 5 6 7 8 9 10 11 1 2 13 14 15 0 10 20 30 40 50 60 70 80 100 200 500 1000 2000 5000 150 300 750 1500 3000 7500 Runtime (seconds) RMSE RMSE v . Training T ime (winequality-red) Augur Jags Figure 14. Result of Multi v ariate Regression on the winequality- red data set. 0 5 10 15 20 25 30 35 40 45 50 55 60 0 20 40 60 80 100 120 140 160 100 200 500 1000 2000 5000 150 300 750 1500 3000 7500 Runtime (seconds) RMSE RMSE v . Training T ime (winequality-white) Augur Jags Figure 15. Result of Multi v ariate Regression on the winequality- white data set. W e find th at the regression resu lt s sho w that Augur is com- petitiv e with other systems, though linear regression mod- els tend no t to be large enough to p roperly exploit all the computatio n av ailable in the GPU. C.2. Gaussian Mixture Model The Gaussian Mixture Mod el results pre s ented in section 5 of the p aper show h o w each o f th e three systems scale as the dataset size is increased. W e sample d 100 , 000 dat- apoints from two different mixtu re distributions: on e with 4 gaussians centered at { -5 ,-1,1,5 } with stand ard deviation { 1,0.1 ,2,1 } , an d one with 3 gaussians cen tered at { -5, 0 , 5 } with standard deviations { 0 .1,0.1,0.1 } . Eac h dataset had a flat mix ing d istrib ution, that is draws fr om each g aus- sian were equiprob able. From each dataset we subsampled − 2 0 2 4 6 8 10 12 1 4 16 18 2 0 22 24 26 28 30 32 34 8 10 12 14 16 18 20 22 100 5000 150 7500 100 5000 Runtime (seconds) RMSE RMSE v . Training T ime (Y acht) Augur Jags Stan Figure 16. Result of Multi variate Regression on the Y acht Hydro- dynamics data set. 1 model { 2 for (i in 1:N){ 3 z[i] ˜ dcat(theta) 4 y[i] ˜ dnorm(mu[z[i]],sigma[z[i]]) 5 } 6 theta[1:K] ˜ ddirch(alpha) 7 for (k in 1:K) { 8 alpha[k] <- 1 9 mu[k] ˜ dnorm(0,0.01) 10 sigma[k] ˜ dgamm a (1,1) 11 } 12 } Figure 17. GMM in Jags smaller datasets using 100, 1000 and 10 , 00 0 datapo i nts. W e used the GMM p resented in the paper fo r Aug ur , for Stan we used the GMM listed in the modelling handboo k, and for J A GS we wrote a stand ard GMM ( s hown in figure 17 , b ased upon Augur ’ s. Each mod el used the same p rior distributions and hyperparamete rs. Figure 4 in the paper is from th e dataset with 4 centre s . In figure 18 we show the runtim e of the remain ing dataset with 3 centres. For comp utational reaso ns we stopped Stan’ s fi- nal run after 3 ho urs (Stan took a pprox. 6 hou rs to comp lete on the first dataset). Her e we can see that Augur ’ s runtime scales much mo re slowly as the dataset size is incr eased. J AGS remains reasonably compe ti tive until 100 , 000 d ata points, at which point Augur is faster by a f actor of 7. Stan is also relati vely competitive but scales e xtremely poo rly as the number of datapo i nts is increased. C.3. LD A In an attempt to confirm the result presented in the pap er , we present another result (figure 19 ) measuring the predic- A ugur: a M o deling Language for Data-P arallel Probabilistic Inference 10 2 10 3 10 4 10 5 0 2 4 6 8 10 12 14 16 18 20 Dataset Size (#points) Runtime (minutes) Scalability (Data Set One) Augur Jags Stan Figure 18. Evolution of runtime to draw a thousand samples for v arying data set sizes for a Gaussian Mixture Model. Stan’ s 100 , 000 data point was not generated . ti ve pr obability averaging ac ross multiple run s using dif fer- ent train/test splits. In this experiment, we a veraged acro ss 10 runs with dif feren t train/test splits and present the tim- ings with error bars. W e also ran an exper imment across 10 dif ferent random initializations and seeds, and all algo- rithms ag ain showed robustness to the variation. W e re- duced the maximu m numb er of samples to 512 as gener- ating results for the Collapsed Gibb s sampler was p roving prohib it ive in terms of runtime for repeated experiments. A third e xper iment (figure 20 ) reports on the n atural loga- rithm of ru n time in millisecon ds to draw 512 samples as the nu mber of topics varies. The sparse implemen tation’ s runnin g time d oes not increase as quickly a s Au gur’ s as the number of topics increases. As a result, it runs faster when the n umber of topics is large. This is be cause Au- gur’ s Gib bs samp l er is linear in the number o f topics during the step of sampling each o f the z ij . The collapsed Gib bs sampler’ s performan ce w orsen when the num ber of topics is incr eased, as seen in o ur results a nd in the e xperim ents in ( ? ). Ag ain, Augur’ s g enerated co de is on par with the hand-wr itten CUD A implemen tation. W e experimented with the SparseLD A imp lementation which f orms Factorie’ s stan dard LD A mo del, but this im- plementation proved to be unreliable. The predictive pro b- ability measure actually decreased a s more samples were drawn u s ing the Sp arseLD A implem entation. W e are work- ing with the developers of Factorie to investigate this prob- lem. T he Sp arseLD A im plementation is in teresting as it uses a set o f LDA specific assumptions to generate a highly optimised Gib bs sampler . W e f ound Augur to b e competi- ti ve in terms of r untime when drawing more than 256 sam- ples. With smaller sample sizes there is insufficient com- 1 10 10 2 10 3 10 4 − 1 . 65 − 1 . 6 − 1 . 55 − 1 . 5 − 1 . 45 − 1 . 4 − 1 . 35 − 1 . 3 − 1 . 25 · 10 5 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 1 2 2 2 2 3 2 4 2 5 2 6 2 7 2 8 2 9 Runtime (seconds) log 10 Predictiv e Probability Predictiv e Probability v . Training T ime Augur Cuda Factorie(Collapsed) Figure 19. A verage o ver 10 runs of the e volution o f the predicti ve probability ov er time. 0 50 100 150 200 250 300 350 400 450 500 1 10 10 2 10 3 10 4 10 5 Number of T opics Runtime (seconds) Effect of T opic Number on Performance Augur Cuda Factorie(Collapsed) Figure 20. Comparison of scalability of Augur , hand-written CUD A, and Factorie’ s collapsed Gibb s w .r .t the number of top- ics. A ugur: a M o deling Language for Data-P arallel Probabilistic Inference putation to amortize the compilatio n costs.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment