Advances in Learning Bayesian Networks of Bounded Treewidth

This work presents novel algorithms for learning Bayesian network structures with bounded treewidth. Both exact and approximate methods are developed. The exact method combines mixed-integer linear programming formulations for structure learning and …

Authors: Siqi Nie, Denis Deratani Maua, Cassio Polpo de Campos

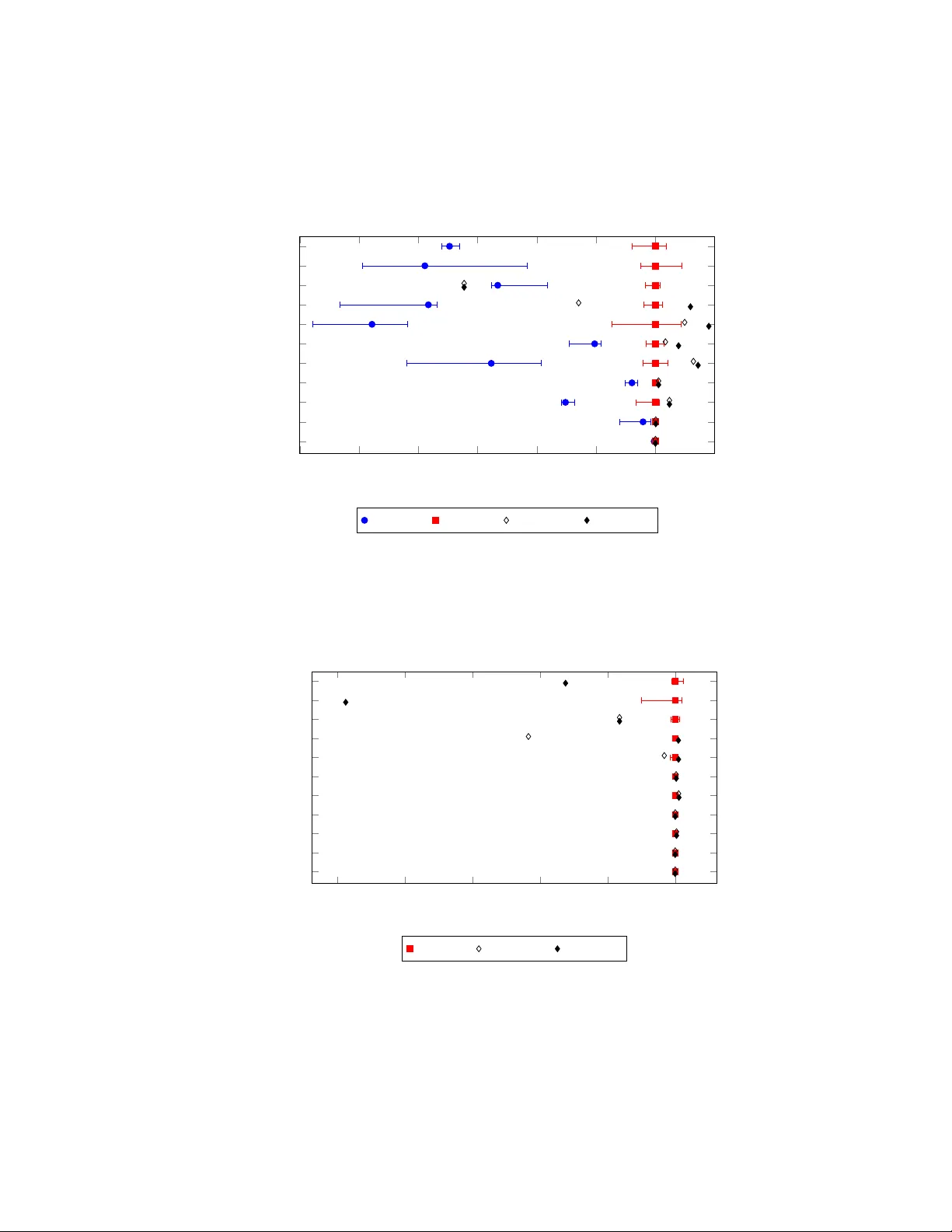

Adv ances in Learning Bayesian Networks of Bounded T ree width Siqi Nie ∗ Denis Deratani Mau ´ a † Cassio Polpo de Campos ‡ Qiang Ji § September 24, 2018 Abstract This work presents novel algorithms for learning Bayesian network structures with bounded tree width. Both exact and approximate methods are de veloped. The exact method combines mix ed-integer linear programming formulations for struc- ture learning and tree width computation. The approximate method consists in uni- formly sampling k -trees (maximal graphs of treewidth k ), and subsequently select- ing, exactly or approximately , the best structure whose moral graph is a subgraph of that k -tree. Some properties of these methods are discussed and pro ven. The approaches are empirically compared to each other and to a state-of-the-art method for learning bounded treewidth structures on a collection of public data sets with up to 100 variables. The experiments show that our exact algorithm outperforms the state of the art, and that the approximate approach is fairly accurate. 1 Intr oduction Bayesian networks are graphical models widely used to represent joint probability dis- tributions on complex multiv ariate domains [31]. A Bayesian network comprises two parts: a directed acyclic graph (the structure) describing the relationships among vari- ables in the model, and a collection of conditional probability tables from which the joint distribution can be reconstructed. As the number of variables in the model in- creases, specifying the underlying structure becomes a tedious and difficult task, and practitioners often resort to learning Bayesian networks directly from data. Here, learn- ing a Bayesian network refers to inferring the underlying graphical structure from data, a task well-known to be NP-hard [13]. ∗ Email: nies@rpi.edu. Affiliation: Rensselaer Polytechnic Institute, USA. † Email: denis.maua@usp.br. Affiliation: Univ ersidade de S ˜ ao Paulo, Brazil. ‡ Email: cassio@idsia.ch. Affiliation: Dalle Molle Institute for Artificial Intelligence, Switzerland. § Email: jiq@rpi.edu. Affiliation: Rensselaer Polytechnic Institute, USA. 1 Learned Bayesian netw orks are commonly used for drawing inferences such as querying the posterior probability of some variable after evidence is entered (a task known as belief updating), finding the mode of the joint distribution (known as most probable explanation or MAP inference), or selecting a configuration of a subset of the variables that maximizes their conditional probability (known as marginal MAP inference). All those inferences are NP-hard to compute ev en approximately [1, 18, 19, 21, 38], and all known (exact and prov ably good) algorithms ha ve worst-case time complexity that is exponential in the treewidth [19, 24, 31, 34], which is a measure of connectedness of the graph. Polynomial-time algorithms for such inferences do exist, but the y provide no guarantees on the quality of the solution they deliv er , which raises doubts as to whether occasional bad results are a consequence of suboptimal structure learning or of approximate inference. In fact, under widely belie ved assumptions from complexity theory , exponential time complexity in the treewidth is inevitable for any algorithm that provides provably good inferences [11, 33]. Thus, learning network structures of small treewidth is essential if one wishes to perform reliable and efficient inference. This is particularly important in the presence of missing data, as learning methods usually resort to some kind of Expectation-Maximization procedure that re- quires performing belief updating in the network at e very iteration [25]. In those cases inefficient inference leads to great computational cost of learning; unreliable inference leads to learning underfitted/ov erfitted structures. Since estimating a netw ork’ s tree width is itself an NP-hard task [2], e xtending cur- rent methods for learning Bayesian networks to the case of bounded treewidth while maintaining their relativ e efficiency and accuracy is not trivial. In comparison to un- constrained Bayesian network learning, few algorithms have been designed for the bounded treewidth case. Korhonen and Parviainen [32] showed that learning bounded treewidth Bayesian networks is NP-hard, and developed an exact algorithm based on dynamic programming that learns optimal n -node structures of treewidth at most ω in time 3 n n ω + O (1) , which is above the 2 n n O (1) time required by the best worst-case algorithms for learning optimal Bayesian networks with no constraint on treewidth [40]. Elidan and Gould [23] combined se veral heuristics to treewidth computation and network structure learning in order to design approximate methods. Others have addressed the similar (but not equiv alent) problem of learning undirected models of bounded treewidth [3, 12, 42]. V ery recently , there seems to be an increase of interest in the topic. Berg et al. [6] showed that the problem of learning bounded treewidth Bayesian networks can be reduced to a weighted maximum satisfiability problem, and subsequently solved by weighted MAX-SA T solv ers. They report experimental results showing that their approach outperforms Korhonen and Parviainen’ s dynamic program- ming approach. In the same year , Parviainen et al. [36] showed that the problem can be reduced to a mixed-integer linear program (MILP), and then solved by off-the-shelf MILP optimizers (e.g. CPLEX). Their reduced MILP problem howe ver has exponen- tially many constraints in the number of v ariables. Following the w ork of Cussens [16], the authors avoid creating such large programs by a cutting plane generation mecha- nism, which iterativ ely includes a new constraint while the optimum is not found. The generation of each ne w constraint (cutting plane) requires solving another MILP prob- lem. The works of [6] and [36] hav e been developed independently and simultaneously with our work presented here; for this reason, we do not compare our methods with 2 theirs. W e intend to do so in the near future. In this paper, we present two novel ideas for score-based Bayesian network struc- ture learning with a hard constraint on tree width. W e first introduce a mixed integer lin- ear programming formulation of the problem (Section 3) that builds on existing MILP formulations for unconstrained structure learning of Bayesian networks [16, 17] and for computing the treewidth of a graph [27]. The designed formulation is able to find a score-maximizer Bayesian network of tree width smaller than a given constant for models containing many more variables than K orhonen and Parviainen’ s method, as we empirically demonstrate in Section 5. Unlike the MILP formulation of Parviainen et al. [36], the MILP problem we generate is of polynomial size in the number of vari- ables, and does not require the use of cutting planes techniques. This makes for a clean and succinct formulation that can be solved with a single call of a MILP optimizer . A better understanding of cases where one approach is preferred to the other is yet to be achiev ed. Since linear programming relaxations are used for solving the MILP problem, any MILP formulation can be used to provide approximate solutions and error estimates in an an ytime f ashion (i.e., the method can be stopped at any time during the computation with a feasible solution). Howe ver , the MILP formulations (both ours and the one proposed by Parviainen et al. [36]) cannot cope with very large domains, ev en if we agreed on obtaining only approximate solutions. This is because the minimum size of the MILP problems is cubic in the number of variables (hence it is difficult even to start the MILP solver for large domains), and there is probably little we can do to considerably improve this situation (a further discussion on that is given in Section 3). This limitation is observed in the experiments reported in Section 5, where our MILP formulation requires a much larger amount of time to obtain much poorer solutions for networks with ov er 50 variables. In order to deal with lar ge domains, we devise (in Section 4) an approximate method based on a uniform sampling of k -trees (maximal triangulated graphs of tree- width k ), which is achieved by using a fast computable bijection between k -trees and Dandelion codes [10]. For each sampled k -tree, we either run an exact algorithm sim- ilar to the one proposed in [32] (when computationally appealing) to learn the score- maximizing network whose moral graph is a subgraph of that k -tree, or we resort to a much more efficient method that takes partial variable orderings uniformly at random from a (relativ ely small) space of orderings that are compatible with the k -tree. W e discuss the time and sample complexity of both variants, and compare it to those of similar schemes for learning unconstrained networks. W e show empirically (in Sec- tion 5) that the double sampling scheme (of k -trees and partial variable orderings) is very effecti ve in learning close to optimal structures in a selected set of data sets. W e conclude in Section 6 by noting that the methods we propose can be considered as state-of-the-art, and by suggesting possible improvements. T o start, Section 2 presents some background knowledge on learning Bayesian netw orks. 3 2 Pr eliminaries A Bayesian network is a concise graphical representation of a multiv ariate domain, where each random variable is associated with a node of its underlying directed ac yclic graph (D A G) and local conditional probability distrib utions are specified for the vari- able giv en its parents in the graph (we often refer to variables and nodes in the graph interchangeably). Let N be { 1 , . . . , n } and consider a finite set X = { X i : i ∈ N } of categorical random variables X i taking values in finite sets X i . Formally , a Bayesian network is a triple ( X , G, θ ) , where G = { N , A } is a D A G whose nodes are in one-to-one corre- spondence with v ariables in X , and θ = { θ i ( x i , x π i ) } is a set of numerical parameters specifying (conditional) probability values θ i ( x i , x π i ) = P ( x i | x π i ) , for every node i in G , value x i of X i and assignment x π i to the parents π i of X i , according to G . The structure G (that is, the DA G of the network) represents a set of stochastic indepen- dence assessments among v ariables in X . In particular , G represents a set of graphical Markov conditions: e very variable X i is conditionally independent of its nondescen- dant nonparents giv en its parents. As a consequence, a Bayesian network ( X , G, θ ) uniquely defines a joint probability distribution ov er X as the product of its parame- ters [31, Chapter 3.2.3]: P ( x 1 , . . . , x n ; G, θ ) = Y i ∈ N θ i ( x i , x π i ) . (1) Learning the structure G from data is a challenging problem. One approach is to identify , for each variable, the minimal set of v ariables that makes that v ariable conditionally independent of others (Mark ov blanket), which is usually done by means of statistical tests of stochastic independence or information theoretic measures [41]. Alternativ ely , structural learning can be posed as a combinatorial optimization problem in which one seeks the structure that maximizes a score function that relates to the data likelihood, while avoiding some excessiv e model complexity . Commonly used score functions include the Minimum Description Length (which is equiv alent to the Bayesian Information Criterion) [39], and Bayesian Dirichlet (likelihood) equiv alent uniform score [9, 15, 28]. These functions follow dif ferent rationale but they all satisfy two properties: (i) they can be written as a sum of local score functions that depend only on the parent set of each node and on the data, and (ii) the local score functions can be efficiently computed and stored. Score-based structure learning is a difficult task, and research on this topic has been very acti ve [4, 17, 29, 30, 32, 44, 45]. In score-based Bayesian network learning we seek a D A G structure G ∗ such that G ∗ = argmax G ∈G n X i ∈ N s i ( π i ) , (2) where G n is the class of all D A Gs with n nodes, s i are local score functions that depend only on the parent set π i as giv en by G (i.e., the computation of each s i ( π i ) depends only on the values that X i and X π i take in the data set). W e assume (unless otherwise stated) that local scores s i ( π i ) hav e been previously computed and can be retrieved at constant time. Despite the decomposability of the score functions, the optimization 4 cannot be performed locally lest it almost certainly introduce directed cycles in the graph. W e say that a cycle in an undirected graph has a chord if there are two nodes in the cycle which are connected by an edge outside the cycle. A chordal graph is an undirected graph in which all cycles of length four or more hav e a chord. Any graph can be made chordal by inserting edges, a process called chor dalization [2, 8]. The treewidth of a chordal graph is the size of its largest clique minus one. The treewidth of an arbitrary undirected graph is the minimum treewidth ov er all chordalizations of it. The moral graph of a DA G is the undirected graph obtained by connecting any two nodes with a common child and dropping arc directions. The tree width of a D A G is the treewidth of its corresponding moral graph. The tree width of a Bayesian network ( X, G, θ ) is the treewidth of the D A G G . An elimination order is a linear ordering of the nodes in a graph. W e say that an elimination order is perfect if for every node in the order its higher-ordered neighbors form a clique (i.e., are pairwise connected). A graph admits a perfect elimination order if and only if it is chordal. Perfect elimination orders can be computed in linear time if they exist. The elimination of a node according to an elimination order is the process of pairwise connecting all of its higher-ordered neighbors. Thus, the elimination of all nodes produces a chordal graph for which the elimination order used is perfect. The edges inserted by the elimination process are called fill-in edges. Giv en a perfect elimination order, the tree width of the graph can be computed as the maximum number of higher ordered neighbors in the graph. The reason why most score functions penalize model complexity (as given by the number of free numerical parameters) is that data likelihood always increases by aug- menting the number of parents of a v ariable (and hence the number of free parameters in the model), which leads to ov erfitting and poor generalization. The way scores pe- nalize model complexity generally leads to structures of bounded in-degree and helps in pre venting ov erfitting, but even bounded in-degree graphs can have large tree width (for instance, directed square grids hav e treewidth equal to the square root of the num- ber of nodes, yet ha ve maximum in-degree equal to two), which yields a great problem to subsequent probabilistic inferences with the model. There are at least two direct reasons to aim at learning Bayesian networks of bounded treewidth: (i) As discussed pre viously , all known exact algorithms for prob- abilistic inference hav e e xponential time complexity in the treewidth, and networks with very high treewidth are usually the most challenging for approximate methods; (ii) Previous empirical results [23, 37] suggest that bounding the treewidth might im- prov e model performance on held-out data. There is also e vidence that bounding the treewidth does not impose a great b urden on the e xpressivity of the model for real data sets [7]. The goal of learning Bayesian networks of bounded tree width is to search for G ∗ such that G ∗ = argmax G ∈G n,k X i ∈ N s i ( π i ) , (3) where G n,k is the class of all DA Gs of tree width not (strictly) greater than k . From a theoretical point of vie w , this is no easy task. K orhonen and Parviainen [32] adapted 5 Srebro’ s complexity result for Markov networks [42] to show that learning the struc- ture of Bayesian networks of bounded treewidth strictly greater than one is NP-hard. Dasgupta’ s results also pro ve this hardness if the score maximizes data likelihood [20] (in the case of networks of treewidth one, that is, directed trees with at most one parent per node, learning can be performed ef ficiently by the Chow and Liu’ s algorithm [14]). 3 Mixed integer linear pr ogramming The first contribution of this work is the mix ed integer linear programming (MILP) formulation that we design to exactly solve the problem of structure learning with bounded treewidth. MILP formulations have shown to be very effecti ve to learning Bayesian networks without the treewidth bound [4, 16], surpassing other attempts in a range of data sets. Moreover , the great language power of a MILP problem allows us to encode the treewidth constraint in a natural manner , which might not be easy with other structure learning approaches [22, 29, 35, 44, 45]. W e note that computing the treewidth of a graph is an NP-hard problem itself [2], e ven if there are linear algorithms that are only exponential in the treewidth [8] (these algorithms might be seen mostly as theoretical results, since their practical use is shadowed by very large hidden con- stants). Hence, one should not hope to enforce a bound on the tree width (which should work for any chosen bound) without a machinery that is not at least as powerful as NP . The novel formulation is based on combining the MILP formulation for struc- ture learning in [17] with the MILP formulation presented in [27] for computing the treewidth of an undirected graph. There are although crucial differences, which we highlight later on. W e have av oided the use of sophisticated techniques for MILP in the context of structure learning, such as constraint generation [4, 16], because we are interested in pro viding a clean and succinct MILP formulation, which can be ran using off-the-shelf solv ers without additional coding. Since our formulation is a combination of two previous MILP formulations of dis- tinct problems, we will present each formulation separately , and then describe how to combine them into a concise MILP problem. 3.1 A MILP f ormulation for bounding the tr eewidth Consider a graph G = ( N , E ) . W e begin with the MILP formulation of the class of all supergraphs of a graph G that hav e treewidth less than or equal to a gi ven v alue w : X j ∈ N y ij ≤ w , ∀ i ∈ N , (4a) ( n + 1) · y ij ≤ n + z j − z i , ∀ i, j ∈ N , (4b) y ij + y j i = 1 , ∀ ( i, j ) ∈ E , (4c) y ij + y ik − ( y j k + y kj ) ≤ 1 , ∀ i, j, k ∈ N , (4d) z i ∈ [0 , n ] , ∀ i ∈ N , (4e) y ij ∈ { 0 , 1 } , ∀ i, j ∈ N . (4f) 6 The formulation above is based on encoding all possible elimination orders of the nodes of G . A chordalization G 0 = ( N , E 0 ) of G of treewidth at most w can be obtained from a feasible solution (if it exists) of the program by setting E 0 = { ij ∈ N × N : y ij = 1 or y j i = 1 } . Constraint (4a) ensures G 0 has treewidth at most w by bounding the number of higher-ordered neighbors of ev ery node i (which is an alternativ e way of defining the treewidth of chordal graphs). The v ariables z i , i ∈ N , take (real) values in [0 , n ] (Constraint (4e)) and partially define an elimination order of the nodes: a node i is eliminated before node j if z i < z j (the specification is partial since its allows for two nodes i and j with z i = z j ). This order does not need to be linear because there are cases where multiple linearizations of the partial order are equally good in building a chordalization G 0 of G (i.e., in minimizing the maximum clique size of G 0 ). In such cases, two nodes i and j might be assigned the same v alue z i = z j indicating that eliminating z i before z j and the con verse results in chordal graphs with the same treewidth. The variables y ij , i, j ∈ N , are { 0 , 1 } -valued (Constraint (4f)) and indicate whether node i precedes j in the order (i.e., whether z i < z j ) and an edge exists among them in the resulting chordal graph G 0 (recall that an elimination process always produces a chordal graph). Although the values z i are not forced to be integers in our formulation, in practice they will most likely be so. Constraint (4b) allo ws y ij to be 1 only if j appears after i in the order (it in fact requires that z j ≥ z i + 1 to allow y ij to be one). Constraint (4c) ensures G 0 is a supergraph of G . Constraint (4d) guarantees that the elimination ordering induced by z i , i ∈ N , is perfect for G 0 : if j and k are higher ordered neighbors of i in G 0 , then j and k are also neighbors in G 0 , that is, either y j k or y kj must be 1 . The practical dif ference of this formulation with respect to the one in [27] lies in the f act that we allow partial elimination orders, and we do not need integer v ariables to enforce such orders. A bottleneck is the specification of Constraint (4d), as there are Θ( n 3 ) such constraints. The following result is an immediate conclusion of the abov e reasoning. Proposition 1 The graph G has tree width at most w if and only if the set defined by Constraints (4) is non empty . Proposition 2 Let z i , y ij , i, j ∈ N , be variables satisfying Constraints (4a) – (4f) . Then the graph G 0 = ( E 0 , N ) , wher e E 0 = { ij ∈ N × N : y ij = 1 or y j i = 1 } , is a chor dalization of G with tr eewidth at most w , and any elimination or der consistent with the partial or der induced by z i is perfect for G 0 . 3.2 A MILP f ormulation for structur e learning W e no w turn our attention to the MILP formulation of the structure learning part. Con- sider a chordal (undirected) graph M = ( N , E ) , a perfect elimination order for M , and let y ij , i, j ∈ N , be { 0 , 1 } -valued v ariables such that y ij = 1 if and only if E contains ij and i is eliminated before j . For each node i in N let F i be the collection of all allowed parent sets for that node (these sets can be specified manually by the user or simply defined as the subsets of N \ { i } with cardinality less than a giv en bound). W e denote an element of F i as F it , with t = 1 , . . . , |F i | (hence F it ⊂ N ). The following MILP formulation specifies the class of all D A Gs over N that are consistent with the 7 parent sets F i and whose moral graph is a subgraph of M : X t π it = 1 , ∀ i ∈ N , (5a) ( n + 1) π it ≤ n + v j − v i , ∀ i ∈ N , ∀ t, ∀ j ∈ F it , (5b) π it ≤ y ij + y j i , ∀ i ∈ N , ∀ t, ∀ j ∈ F it , (5c) π it ≤ y j k + y kj , ∀ i ∈ N , ∀ t, ∀ j, k ∈ F it , (5d) v i ∈ [0 , n ] , ∀ i ∈ N , (5e) π it ∈ { 0 , 1 } , ∀ i ∈ N , ∀ t, (5f) where the scope of the ∀ t in each constraint is 1 , . . . , |F i | . A D AG D = ( N , A ) can be obtained from a solution to the above program by setting A = { i ← j : i ∈ N , j ∈ N , π it = 1 and j ∈ F it } . The variables v i , i ∈ N , take v alues in [0 , n ] (Constraint (5e)) and partially specify a topological order of the nodes in D : if v i > v j then j is not an ancestor of i . The variables π it , i ∈ N , t = 1 , . . . , |F i | , are { 0 , 1 } -valued (Constraint (5f)) and indicate whether the t -th parent set in F i was chosen for node i . Constraint (5a) enforces that e xactly one parent set is chosen for each node. Constraint (5b) forces those choices to be ac yclic, that is, to respect the topological order induced by the variables v i (with ties broken arbitrarily for nodes i, j with v i = v j ). Here too the order does not need to be linear . In fact, only the relati ve ordering of nodes that are connected in M is relev ant because Constraints (5c) and (5d) ensure that arcs appear in D only if the corresponding edges in the moral graph of D exist in M (Constraint (5d) is responsible for having the moralization of the graph f alling inside M ). Proposition 3 Let v i , π it , i ∈ N , t = 1 , . . . , |F i | , be variables satisfying Constraints (5) . Then the dir ected graph D = ( N , A ) , wher e A = { i ← j : i ∈ N , j ∈ N , π it = 1 and j ∈ F it } is acyclic and consistent with every set F i . Moreo ver the moral graph of D is a subgraph of M . A corollary of the above result is that the tree width of D is at most the treewidth of M [8]. 3.3 Combining the MILP f ormulations W e can no w put together the two pre vious MILP formulations to reach the follo w- ing MILP formulation for the problem of learning D A Gs of treewidth bounded by a constant w : maximize: X it π it · s i ( F it ) (6a) 8 subject to: X j ∈ N y ij ≤ w , ∀ i ∈ N , (6b) ( n + 1) · y ij ≤ n + z j − z i , ∀ i, j ∈ N , (6c) y ij + y ik − ( y j k + y kj ) ≤ 1 , ∀ i, j, k ∈ N , (6d) X t π it = 1 , ∀ i ∈ N , (6e) ( n + 1) π it ≤ n + v j − v i , ∀ i ∈ N , ∀ t, ∀ j ∈ F it , (6f) π it ≤ y ij + y j i , ∀ i ∈ N , ∀ t, ∀ j ∈ F it , (6g) π it ≤ y j k + y kj , ∀ i ∈ N , ∀ t, ∀ j, k ∈ F it , (6h) z i ∈ [0 , n ] ,v i ∈ [0 , n ] , ∀ i ∈ N , (6i) y ij ∈ { 0 , 1 } , ∀ i, j ∈ N , (6j) π it ∈ { 0 , 1 } , ∀ i ∈ N , ∀ t. (6k) As the following result sho ws, the MILP formulation above specifies D A Gs of bounded treewidth: Theorem 1 Let y ij , z i , v i , π it , i, j ∈ N , t = 1 , . . . , |P i | , be variables satisfying Con- straints (6b) – (6k) , and define a dir ected graph D = ( N , A ) , wher e A = { i ← j : i ∈ N , j ∈ N , ∃ t s.t. π it = 1 and j ∈ F it } . Then D is a acyclic, consistent with the par ents sets P i , and has tr eewidth at most w . Corollary 1 If y ij , z i , v i , π it , i, j ∈ N , t = 1 , . . . , |P i | , maximize (6a) and satisfy (6b) – (6k) , then the D A G D as defined above is the solution to the optimization in (3) . The MILP formulation (6) can be directly fed into any off-the-shelf MILP opti- mizer . According to Corollary (1), the outcome will alw ays be an optimum struc- ture if enough resources (memory and time) are given. Standard MILP optimizers (e.g. CPLEX) often employ branch-and-bound (or branch-and-cut) procedures, which are able to be halted prematurely at any time and still provide a valid solution and an outer bound for the maximum score. Hence, the MILP formulation also provides an anytime algorithm for learning Bayesian networks of bounded treewidth: the proce- dure can be stopped at time and still pro vide an approximate solutions and error bound. Moreov er , the quality of the approximation solution returned increases with time, while the error bounds monotonically decrease and ev entually con ver ge to zero. 3.4 Comparison with the dynamic programming appr oach T o v alidate the practical feasibility of our MILP formulation, we compare it against the the dynamic programming method proposed previously for this problem [32], which we call K&P from now on. 1 T able 1 show the time performance of our MILP for- mulation and that of K&P on a collection of reasonably small data sets from the UCI 1 W e used the freely av ailable code provided by the authors at http://www .cs.helsinki.fi/u/jazkorho/aistats- 2013/. 9 repository 2 (discretized ov er the median v alue, when needed) and small values of the treewidth bound. More details about these data are presented in Section 5. The exper - iments hav e been run with a limit of 64GB in memory usage and maximum number of parents per node equal to three (the latter restriction facilitates the experiments and does not impose a constraint in the possible tree widths that can be found). While one shall be careful when directly comparing the times between methods, as the imple- mentations use different languages (we are running CPLEX 12.4, K&P uses a Cython 3 compiled Python code), we note that our MILP formulation is orders of magnitude faster than K&P , and able to solve many problems which the latter could not (in Sec- tion 5 we show the results of e xperiments with much lar ger domains). A time limit of 3h was giv en to the MILP , in which case its own estimation of the error is reported (in fact, it found the optimal structure in all instances, but was not able to certify it to be optimal within 3h). T able 1: Computational time to find the optimal Bayesian network structure. Empty cells indicate that the method failed to solv e the instance because of excessi ve memory consumption. Limit of 3h was giv en to MILP , in which case its own estimation of the error is reported. s, m, h mean seconds, minutes and hours, respectiv ely . METHOD TW NURSER Y BREAST HOUSING ADUL T n = 9 n = 10 n = 14 n = 15 MILP 2 1s 31s 3h [2.4%] 3h [0.39%] 3 < 1s 19s 25m 3h [0.04%] 4 < 1s 8s 80s 40m 5 < 1s 8s 56s 37s K&P 2 7s 26s 128m 137m 3 72s 5m – – 4 12m 103m – – 5 131m – – – The results in the table show that our MILP formulations lar gely outperforms K&P , being able to handle much larger problems. Y et we see from these experiments that both algorithms scale poorly in the number of variables. In particular, K&P cannot cope with data sets containing more than a dozen of v ariables. The results suggest that the MILP problems become easier as the treewidth bound increases. This is likely a consequence of the increase of the space of feasible solutions, which makes the linear relaxations used for solving the MILP problem tighter , thus reducing the computational load. This is probably aggrav ated by the small number of variables in these data sets (hence, by increasing the tree width we ef fectiv ely approximate an unbounded learning situation). W e shall demonstrate empirically in Section 5 that the quality of solutions found by the MILP approach in a reasonable amount of time de grades quickly as the number of variables reaches several dozens. Indeed, the MILP formulation is unable to find 2 Obtained from http://archiv e.ics.uci.edu/ml/. 3 http://www .cython.or g. 10 reasonable solutions for data sets containing 100 variables, which is not surprising giv en that number of Constraints (6d) and (6h) is cubic in the number of v ariables; thus, as n increases ev en the linear relaxations of the MILP problem become hard to solve. In the next section, we present a clever sampling algorithm over the space of k -trees to o vercome such limitations and handle large domains. The MILP formulation just described will set a baseline for the performance of such approximate approach. 4 Sampling k -tr ees using Dandelion codes In this section we dev elop an approximate method for learning bounded treewidth Bayesian networks that is based on sampling graphs of bounded treewidth and sub- sequently finding D A Gs whose moral graph is a subgraph of that graph. The approach is designed aiming at data sets with large domains, which cannot be handled by the MILP formulation. A nai ve approach to designing an approximate method would be to extend one of the sampling methods for unconstrained Bayesian network learning. For instance, we could en vision a rejection sampling approach, which would sample structures using some av ailable procedure (for instance, by sampling topological orderings and then greedily finding a D A G structure consistent with that order, as in [43]), and verify their treewidth, discarding the structure when the test fails. There are two great issues with this approach: (i) the computation of tree width is a hard problem, and ev en if there are linear-time algorithms (but exponential on the treewidth), they perform poorly in practice; (ii) virtually all structures would be discarded due to the fact that complex structures tend to ha ve larger scores than simple ones, at least for the most used score functions (their penalizations reduce the local complexity of the model, but are not able to constrain a global property such as tree width). W e empirically verified these facts, but will not report further on them here. Another natural approach to the problem is to consider both an elimination order for the variables (from which the treewidth can be computed) and a topological order (from which one can greedily search for parent sets without creating cycles in the graph). It is straightforward to uniformly sample from the space of orderings, but the combined ov erall number of such orderings is quite high: ( n !) 2 ≈ e 2 n log n − 2 n (from the Stirling approximation). W e propose an interesting way that is more efficient in terms of the size of the sampling space, and yet can be sampled uniformly (uniform sampling is a desirable property , as it ensures a good coverage of the space and is superior to other options if one has no prior information about the search space). This approach is based on the set of k -trees. Definition 1 A k -tr ee is defined in the following r ecursive way: (1) A ( k + 1) -clique is a k -tr ee. (2) If T 0 k = ( V , E ) is a k -tr ee with nodes V and edges E , K ⊆ V is a k -clique and v ∈ N \ V , then T k = ( V ∪ { v } , E ∪ { ( v , x ) | x ∈ K } ) is a k -tree . W e denote by T n,k the set of all k -trees o ver n nodes. In fact, a Bayesian netw ork with treewidth bounded by k is closely related to a k -tree. Because k -trees are exactly the maximal graphs with tree width k (graphs to which no more edges can be added without 11 increasing their treewidth), we kno w that the moral graph of the optimal structure has to be a subgraph of a k -tree [32]. The idea is to sample k -trees and then search for the best structure whose moral graph is one of the subgraphs of the k -tree. While directly sampling a k -tree might not be trivial, Caminiti et al. [10] proposed a linear time method for coding and decoding k -trees into what is called Dandelion codes (the set of such codes is denoted by A n,k ). Moreov er , they established a bijecti ve mapping between codes in A n,k and k -trees in T n,k . The code ( Q, S ) ∈ A n,k is a pair where Q ⊆ N with | Q | = k and S is a list of n − k − 2 pairs of integers drawn from N ∪ { } , where is an arbitrary number not in N . For example, Q = { 2 , 3 , 9 } and S = [(0 , ) , (2 , 1) , (8 , 3) , (8 , 2) , (1 , 3) , (5 , 3)] is a Dandelion code of a (single) 3 -tree ov er 11 nodes (that is , n = 11 , k = 3 ). Dandelion codes can be sampled uniformly at random by a trivial linear-time algorithm that uniformly chooses k elements out of N to build Q , and then uniformly samples n − k − 2 pairs of integers in N ∪ { } . Theorem 2 [10] Ther e is a bijection mapping elements of A n,k and T n,k that is com- putable in time linear in n and k . Giv en T k ∈ T n,k , we can use the dynamic programming algorithm proposed in [32] to find the optimal structure whose moral graph is a subgraph of T k . Our implementa- tion follo ws the ideas in [32], b ut can also be seen as extending the di vide-and-conquer method of [26] to account for all possible di visions of nodes. This results in the fol- lowing theorem. Theorem 3 [32] F or any fixed k , given (a k -tr ee) G = ( N , E ) ∈ G n,k and the scoring function for each node v ∈ N , we can find a D AG whose moralized graph is a subgraph of G maximizing the score in time and space O ( n ) . W e can combine the linear-time sampling of k -trees described in Theorem 2 with the linear -time learning of bounded structures consistent with a graph in the abo ve theorem to obtain an algorithm for learning bounded treewidth Bayesian networks. The algorithm is described in Algorithm 1 [V ersion 1]. Algorithm 1 Learning a structure of bounded treewidth by sampling Dandelion codes. There are two versions, according to the choice for step 2.c. Input a score function s i , ∀ i ∈ N . Output a D A G G best . 1 Initialize π best i as an empty set for all i ∈ N 2 Repeat until a certain number of iterations is reached: 2.a Uniformly sample ( Q, S ) ∈ A n,k ; 2.b Decode ( Q, S ) into T k ∈ T n,k ; 2.c [V ersion 1] Find a D AG G that maximizes the score function and is consistent with T k . 2.c [V ersion 2] Sample σ using Algorithm 2, and (greedily) find a DA G G that maxi- mizes the score function and is consistent with both σ and T k . 2.d If P i ∈ N s i ( π G i ) > P i ∈ N s i ( π best i ) , update π best i , ∀ i . 12 Theorem 4 The sampling space of Algorithm 1 [V ersion 1] is less than e n log( nk ) . Each of its iter ations runs in linear time in n (but e xponential in k ). Pr oof. The follow equality holds [5]. |T n,k | = n k · k ( n − k ) + 1 n − k − 2 . (7) It is not hard to see that the maximum happens for k ≤ n/ 2 (because of the symmetry of n k and of k ( n − k ) around n/ 2 , while n − k − 2 decreases with the increase of k ). By manipulating this number and applying Stirling’ s approximation for the factorials, we obtain: |T n,k | ≤ √ ne n log n +1 − n n − k e n − k k e k k n − k − 2 ( n − k ) n − k − 2 ≤ e √ n ( n − k ) 2 e n log n k n − 2 k − 2 ≤ e n log n +( n − 2 k ) log k , which is less than e n log( nk ) . The decoding algorithm has complexity linear in n (The- orem 2), as well as the method to uniformly sample a Dandelion code, and the method to find the best D A G consistent with a k -tree (Theorem 3). While the running time of Algorithm 1 [V ersion 1] is linear in n , the computational complexity of step 2.c, which uses the method in [32], is exponential in the treewidth (more precisely , it is Θ( k · 3 k · ( k + 1)! · n ) ). Hence, one cannot hope to use it with moderately high tree width bounds (say , larger than 8). Re garding the sample space, according to the above theorem it is slightly higher than that of order-based learning of unconstrained Bayesian networks (e.g. [43]), especially if k n . Howe ver , each iteration of step 2.c needs considerable more ef fort than the corresponding iteration in the unbounded case (yet, as it is a method theoretically linear in n , more efficient implementations of the algorithm that searches within a giv en k -tree might bring an additional boost to this approach in the future). As just explained, the main practical drawback of Algorithm 1 [V ersion 1] is step 2.c, which process each sampled k -tree. In the sequel we propose a ne w approach ([V ersion 2]) that is much faster (per iteration), at the price of a slight increase in the sampling space. W e will empirically compare these approaches in the next section. Let σ define a partial order of the nodes. W e say that a DA G G is consistent with σ if, ∀ j ∈ pa i (as defined by G ), there is no directed path from i to j in σ . In other words, σ constrains the valid topological orderings for the nodes in G . W e do not force σ to be a linear order, because we are only interested in orderings that specify , for each edge in a k -tree T k , which of the tw o ending points precedes the other (in other words, we are only interested in possible ways of orienting the edges of the k-tree). There are multiple linear orderings that achiev e the very same result for T k , and our goal is to sample from the smallest possible space of orderings (if we used a linear order , then the sampling space would be n ! ). A partial order σ can be represented as a DA G G : i is smaller than j in σ if and only if node i is an ancestor of node j in G . Giv en a k-tree T k , we will sample σ by following 13 the same recursiv e process as in Definition 1. This is described in Algorithm 2. The procedure produces partial orders (i.e., D AGs) whose underlying graph (obtained by ignoring arc directions) is exactly the graph T k . Note that the treewidth of the DA G corresponding to σ might exceed the treewidth of k . This does not affect the correctness of Algorithm 1, as σ is only used to specify which node preceeds which node in the order , and hence which are the possible parents; the actual parents are chosen so that the treewidth bound is respect. This can be done ef ficiently using T k . Algorithm 2 Sampling a partial order within a k -tree. Input a k -tree T k with n nodes Output a partial order defined by a D A G σ . 1 Initialize σ = T k . Arbitrarily choose a ( k + 1) -clique R of σ and call it the root clique; 2 Uniformly sample the directions of the arcs in σ linking the k + 1 nodes in R , without creating cycles in σ , and mark these nodes as done ; 3 T ake a node v that is linked to k done nodes; 4 Uniformly sample the directions of the arcs in σ between v and these k done nodes, without creating cycles in σ , and mark v as done ; 5 Go to Step 3 unless all nodes are done . Theorem 5 Algorithm 2 samples D A Gs σ on a sample space of size k ! · ( k + 1) n − k and runs in linear time in n and k . Pr oof. The sampling of the k + 1 nodes in the root clique tak es time O ( k ) by sampling one of the ( k + 1)! ways to choose the arcs without creating cycles. W e assume that an appropriate structure representing T k is kno wn (e.g., a tree-decomposition with n − k − 1 nodes), so Steps 1 and 3 can be done in O ( k ) time. For each iteration of Step 4, we spend time O ( k ) because there are only k + 1 ways to direct the edges, as this is equiv alent to placing v in its relati ve order with respect to the already ordered k neighbors. Hence the total running time is O ( kn ) and the sampling space is ( k + 1)! · ( k + 1) n − k − 1 = k ! · ( k + 1) n − k . The following result sho ws that the sampling space of this version of the sampling algorithm remains reasonably small, especially for k n (it would be also small if k is close to n , then |T n,k | decreases drastically , so the total sampling space would also decrease). Theorem 6 The sampling space of Algorithm 1 [V ersion 2] is less than e n log( n ( k +1) 2 ) . Each of its iter ations runs in linear time in n and k . Pr oof. As before, the decoding algorithm (Theorem 2) and the method to uniformly sample a Dandelion code run in linear time in both n and k . Algorithm 2 samples the ordering σ in linear time too. Finally , finding the best DA G consistent with a k -tree T k and σ is a greedy procedure over all nodes (choosing the parent set of a node each time): the treewidth cannot exceed k because we take a subgraph of T k , and no cycles can be formed if we respect σ . 14 T able 2: Dimensions of data sets. D A T A SET V AR. SAMPLES nursery 9 12960 breast 10 699 housing 14 506 adult 15 32561 zoo 17 101 letter 17 20000 mushroom 22 8124 wdbc 31 569 audio 62 200 hill 100 606 community 100 1994 Although the sampling space of V ersion 2 is larger than the one of V ersion 1, V er- sion 2 is much faster per iteration. This allows us to explore a much larger region of the space of k -tress than V ersion 1 can within a fixed amount of time. Moreov er, one can run V ersion 2 without pre-computing the score function: when scores are needed, they are computed and stored into a hash table for further accesses, thus closely match- ing another desirable characteristic of order-based learning methods for unbounded treewidth (namely , to avoid computing all scores a priori). 5 Experiments W e empirically analyze the accurac y of Algorithm 1 by comparing its two versions with each other and with the values obtained by the MILP method. As before, we use a collection of data sets from the UCI repository of varying dimensionality , with variables discretized over the median value when needed. The number of (binary) variables and samples in each data set are described in T able 2. Some columns of the original data sets audio and community were discarded: 7 variables of audio had always a constant value, 5 variables of community hav e almost a different value per sample (such as personal data), and 22 variables hav e missing data (T able 2 shows dimensions after this pre-processing). In all experiments, we maximize the Bayesian Dirichlet likelihood equi valent uniform (BDeu) score with equiv alent sample size equal to one [28]. W e use treewidth bounds of 4 and 10, and maximum parent set size of 3 (for hill and community , it was set as 2; ne vertheless, the MILP formulation is the one with a strong dependency on the maximum parent set size, as scores need to be pre-computed). T o be fair among runs, we hav e pre-computed all scores, and have considered them as input of the problem. The MILP has been optimized by CPLEX 12.4 with a memory limit of 64GB. W e have allowed it to run up to three hours, and ha ve also collected the incumbent solution after 10 minutes. Algorithm 1 has been giv en only 10 minutes (in either version). 15 70 75 80 85 90 95 100 105 nursery breast housing adult zoo letter mushroom wdbc audio hill community RELA TIVE SCORE (%) T reewidth ≤ 4 V ersion 1 V ersion 2 MILP-10m MILP-3h Figure 1: Performance of methods relativ e to the solution found by the V ersion 2 of Algorithm 1 with a treewidth limit of four . MILP results are missing for community and hill because it was not able to produce a solution for those cases. 0 20 40 60 80 100 nursery breast housing adult zoo letter mushroom wdbc audio hill community RELA TIVE SCORE (%) T reewidth ≤ 10 V ersion 2 MILP-10m MILP-3h Figure 2: Performance of methods relativ e to the solution found by the V ersion 2 of Algorithm 1 with a tree width limit of ten. MILP results after 10 minutes are missing for community and hill because it was not able to produce a solution within that time. 16 T o account for the variability of the performance of the sampling methods with respect to the sampling seed, we ran each version of Algorithm 1 ten times on each data set with different seeds. W e report the minimum, median and maximum obtained values o ver those runs for each dataset. W e show the relativ e scores (in percentage) of the approximate methods (V ersions 1 and 2 of Algorithm 1 and the best score found by the MILP formulation within 10 minutes and 3 hours) with respect to V ersion 2’ s median score, for treewidth bounds of four (Figure 1) and ten (Figure 2). The relative score is computed as the ratio of the obtained value and the median score of V ersion 2, so higher values are better . Moreover , a value higher than 100% sho ws that the method outperformed V ersion 2, whereas a value smaller than 100% shows the con- verse. The raw data used in the figures appear in T ables 3 (for Figure 1) and 4 (for Figure 2). The exponential dependence on treewidth of V ersion 1 made it intractable to run with treewidth bound greater than 8. W e see from the plot on top that V ersion 2 is largely superior to V ersion 1, e ven if the former might only find suboptimal networks for a giv en k -tree. This is probably a consequence of the much lower running times per iteration, which allows V ersion 2 to explore a much larger set of k -trees. It also suggests that spending time finding good k -trees is more worthy than optimizing net- work structures for a gi ven k -tree. W e also see that the MILP formulation scales poorly with the number of v ariables, being unable to obtain satisfactory solutions for data sets with more than 50 variables. On the hill data set with treewidth ≤ 4 , CPLEX running the MILP formulation was not able to output any solution within 10 minutes, and the solution obtained within 3 hours is far left of the zoomed area of the graph in Figure 1; on the community data set with treewidth ≤ 4 , CPLEX did not find any solution within 3 hours. Regarding the tree width bound of ten (Figure 2), we observe that V ersion 2 is very accurate and outperforms the MILP formulation in the lar ger data sets. It is worth noting that both versions of Algorithm 1 were implemented in Matlab; hence, the comparison with the approximate solution of running the MILP formulation with the same amount of time (10 minutes) might be unfair , as we expect to produce better results by an appropriate re-coding of our sampling methods in a more efficient language (one could also try to improv e the MILP formulation, although it will ev en- tually suf fer from the problems discussed in Section 3). Nevertheless, the results sho w that V ersion 2 is very competiti ve e ven in this scenario. 6 Conclusions W e hav e created ne w exact and approximate procedures to learn Bayesian networks of bounded treewidth. They perform well and are of immediate practical use. The de- signed mixed-inte ger linear programming (MILP) formulation improves on MILP for - mulations for related tasks, especially regarding the specification of treewidth-related constraints. It solves the problem exactly and surpasses a state-of-the-art method both in size of networks and treewidth that it can handle. Even if results indicate it is bet- ter than the state of the art, MILP is not so accurate and might fail in large domains. For that purpose, we ha ve proposed a double sampling idea that provides means to learn Bayesian netw orks in lar ge domains and high treewidth limits, and is empirically shown to perform very well in a collection of public data sets. It scales well, because 17 its complexity is linear both in the domain size and in the treewidth bound. There are certainly other search methods that can be integrated with our sampling approach, for instance a local search after ev ery iteration of sampling, local permutations of order- ings that are compatible with the k -trees, etc. W e leav e the study of these and other av enues for future work. During the making of this work, two closely related works appeared in the litera- ture. [6] dev eloped an exact learning procedure based on maximum satisfiability . [36] dev eloped an alternativ e MILP formulation of the problem with exponentially many constraints, and used cutting plane generation techniques to improv e on performance. These works hav e been developed independently and simultaneously with our work presented here; future work should compare their performance empirically against the methods proposed here. 7 Acknowledgments This work was partly supported by the grant N00014-12-1-0868 from the US Of fice of Navy Research, the Swiss NSF grant n. 200021 146606/1, and the F APESP grant n. 2013/23197-4. Refer ences [1] A. M. Abdelbar and S. M. Hedetniemi. Approximating MAPs for belief networks is NP-hard and other theorems. Artif. Intell. , 102(1):21–38, 1998. [2] S. Arnbor g, D. Corneil, and A. Proskuro wski. Complexity of finding embeddings in a k-tree. SIAM J. on Matrix Analysis and Applications , 8(2):277–284, 1987. [3] F . R. Bach and M. I. Jordan. Thin junction trees. In Advances in Neural Inf. Pr oc. Systems 14 , pages 569–576, 2001. [4] M. Barlett and J. Cussens. Advances in Bayesian Netw ork Learning using Inte ger Programming. In Pr oc. 29th Conf. on Uncertainty in AI , pages 182–191, 2013. [5] L. W . Beineke and R. E. Pippert. On the number of k-dimensional trees. J. of Comb . Theory , 6:200–205, 1969. [6] J. Ber g, M. J ”arvisalo, and B. Malone. Learning optimal bounded treewidth Bayesian networks via maximum satisfiability . In Pr oc. 17th Int. Conf. on AI and Stat. , pages 86–95, 2014. JMLR W&CP 33. [7] A. Be ygelzimer and I. Rish. Approximability of probability distributions. In Advances in Neural Inf . Pr oc. Systems 16 , pages 377–384, 2003. [8] H. L. Bodlaender . A linear time algorithm for finding tree-decompositions of small treewidth. SIAM J. on Computing , 25(6):1305–1317, 1996. [9] W . Buntine. Theory refinement on Bayesian networks. In Pr oc. 7th Conf . on Uncertainty in AI , pages 52–60, 1991. 18 [10] S. Caminiti, E. G. Fusco, and R. Petreschi. Bijective linear time coding and decoding for k-trees. Theory of Comp. Systems , 46(2):284–300, 2010. [11] V . Chandrasekaran, N. Srebro, and P . Harsha. Complexity of inference in graphi- cal models. In Pr oc. 24th Conf. on Uncertainty in AI , pages 70–78, 2008. [12] A. Chechetka and C. Guestrin. Efficient principled learning of thin junction trees. In Advances in Neural Inf . Pr oc. Systems , pages 273–280, 2007. [13] D. M. Chickering. Learning Bayesian networks is NP-complete. In Learning fr om Data: AI and Stat. V , pages 121–130. Springer-V erlag, 1996. [14] C. Cho w and C. Liu. Approximating discrete probability distributions with de- pendence trees. Inf. Theory , IEEE T rans. on , 14(3):462–467, 1968. [15] G. F . Cooper and E. Herskovits. A Bayesian method for the induction of proba- bilistic networks from data. Mach. Learning , 9(4):309–347, 1992. [16] J. Cussens. Bayesian network learning with cutting planes. In Pr oc. 27th Conf. on Uncertainty in AI , pages 153–160, 2011. [17] J. Cussens, M. Bartlett, E. M. Jones, and N. A. Sheehan. Maximum Likelihood Pedigree Reconstruction using Integer Linear Programming. Genetic Epidemiol- ogy , 37(1):69–83, 2013. [18] P . Dagum and M. Luby . Approximating probabilistic inference in Bayesian belief networks is NP-hard. Artif. Intell. , 60(1):141–153, 1993. [19] A. Darwiche. Modeling and Reasoning with Bayesian Networks . Cambridge Univ ersity Press, 2009. [20] S. Dasgupta. Learning polytrees. In Pr oc. 15th Conf. on Uncertainty in AI , pages 134–141, 1999. [21] C. P . de Campos. Ne w Complexity Results for MAP in Bayesian Networks. In Pr oc. Int. J oint Conf. on AI , pages 2100–2106, 2011. [22] C. P . de Campos, Z. Zeng, and Q. Ji. Structure learning of Bayesian networks using constraints. In Pr oc. 26th Int. Conf. on Mach. Learning , pages 113–120, 2009. [23] G. Elidan and S. Gould. Learning Bounded T reewidth Bayesian Networks. J. of Mach. Learning Res. , 9:2699–2731, 2008. [24] S. Ermon, C. P . Gomes, A. Sabharwal, and B. Selman. T aming the curse of dimensionality: Discrete integration by hashing and optimization. In Proc. 30th Int. Conf. on Mac h. Learning , pages 334–342, 2013. [25] N. Friedman. The Bayesian structural EM algorithm. In Pr oc. 14th Conf. on Uncertainty in AI , pages 129–138, 1998. 19 [26] N. Friedman, I. Nachman, and D. Pe’er . Learning Bayesian network structure from massi ve datasets: The ”sparse candidate” algorithm. In Pr oc. 15th Conf. on Uncertainty in AI , pages 206–215, 1999. [27] A. Grigoriev , H. Ensinck, and N. Usotskaya. Integer linear programming formu- lations for tree width. T echnical report, Maastricht Res. School of Economics of T ech. and Organization, 2011. [28] D. Heckerman, D. Geiger , and D. M. Chickering. Learning Bayesian networks: The combination of knowledge and statistical data. Mach. Learning , 20(3):197– 243, 1995. [29] R. Hemmecke, S. Lindner, and M. Studen ´ y. Characteristic imsets for learning Bayesian network structure. Int. J. of Appr ox. Reasoning , 53(9):1336–1349, 2012. [30] T . Jaakkola, D. Sontag, A. Globerson, and M. Meila. Learning bayesian network structure using LP relaxations. In Pr oc. 13th Int. Conf. on AI and Stat. , pages 358–365, 2010. JMLR W&CP 9. [31] D. K oller and N. Friedman. Probabilistic Gr aphical Models . MIT press, 2009. [32] J. H. K orhonen and P . Parviainen. Exact learning of bounded tree-width Bayesian networks. In Pr oc. 16th Int. Conf. on AI and Stat. , pages 370–378, 2013. JMLR W&CP 31. [33] J. H. P . Kwisthout, H. L. Bodlaender, and L. C. v an der Gaag. The Necessity of Bounded T ree width for Ef ficient Inference in Bayesian Networks. In Proc. 19th Eur opean Conf. on AI , pages 237–242, 2010. [34] D. D. Mau ´ a and C. P . de Campos. Anytime marginal MAP inference. In Pr oc. 28th Int. Conf. on Mac h. Learning , pages 1471–1478, 2012. [35] P . Parviainen and M. K oivisto. Exact structure discovery in Bayesian networks with less space. In Pr oc. 25th Conf. on Uncertainty in AI , pages 436–443, 2009. [36] P . Parviainen, H. S. Farahani, and J. Lagergren. Learning bounded tree-width Bayesian networks using inte ger linear programming. In Pr oc. 17th Int. Conf . on AI and Stat. , pages 751–759, 2014. JMLR W&CP 33. [37] E. Perrier , S. Imoto, and S. Miyano. Finding optimal Bayesian network gi ven a super-structure. J. of Mach. Learning Res. , 9(2):2251–2286, 2008. [38] D. Roth. On the hardness of approximate reasoning. Artif. Intell. , 82(1–2):273– 302, 1996. [39] G. Schw arz. Estimating the dimension of a model. Annals of Stat. , 6(2):461–464, 1978. [40] T . Silander and P . Myllymaki. A simple approach for finding the globally optimal Bayesian network structure. In Proc. 22nd Conf. on Uncertainty in AI , pages 445–452, 2006. 20 [41] P . Spirtes and C. Meek. Learning Bayesian networks with discrete variables from data. In Pr oc. 1st Int. Conf. on Knowledge Discovery and Data Mining , pages 294–299, 1995. [42] N. Srebro. Maximum likelihood bounded tree-width Markov networks. Artif. Intell. , 143(1):123–138, 2003. [43] M. T eyssier and D. K oller . Ordering-based search: A simple and ef fectiv e algo- rithm for learning Bayesian networks. In Pr oc. 21st Conf. on Uncertainty in AI , pages 584–590, 2005. [44] C. Y uan and B. Malone. An Improv ed Admissible Heuristic for Learning Optimal Bayesian Networks. In Pr oc. 28th Conf. on Uncertainty in AI , pages 924–933, 2012. [45] C. Y uan and B. Malone. Learning optimal Bayesian networks: A shortest path perspectiv e. J. of Artif . Intell. Res. , 48:23–65, 2013. 21 T able 3: Performance of methods within 10 minutes of time limit (except for last column, which is 3 hours) and treewidth limit of four . Maximum, median and minimum are respect to 10 runs with different random seeds. MILP results are missing when it was not able to produce a solution within the time limit. When the incumbent solution is not yet proven optimal, after the last column there is the maximum error of the incumbent solution. Algorithm 1 – V ersion 1 Algorithm 1 – V ersion 2 MILP D A TSET MAX MIN MEDIAN MAX MIN MEDIAN 10m 3h nursery -72235.68 -72327.59 -72239.34 -72159.27 -72159.27 -72159.27 -72159.27 -72159.27 breast -2696.62 -2770.36 -2714.39 -2685.24 -2686.87 -2685.99 -2685.24 -2685.24 housing -3430.32 -3470.83 -3458.86 -3186.99 -3249.59 -3196.41 -3159.1 -3159.1 adult -204063.81 -206209.91 -205008.65 -200771.07 -201277.83 -200950.56 -200426.42 -200426.42 zoo -644.55 -737.13953 -676.20 -576.58 -588.89 -582.57 -564.49 -562.35 letter -196931.49 -202689.46 -198054.51 -186553.59 -189419.55 -187901.06 -186335.69 -184308.01 mushroom -76431.11 -84982.26 -79416.18 -59147.71 -62745.76 -60431.06 -58976.54 -57827.36 [14.9%] wdbc -8825.27 -9810.43 -8907.39 -7158.26 -7274.97 -7201.37 -7700.21 -6994.85 [9.0%] audio -2345.23 -2473.97 -2458.18 -2122.33 -2149.55 -2131.19 -2541.25 -2541.25 [23.5%] hill -1275.01 -1510.71 -1411.53 -1112.05 -1151.12 -1137.03 – -42347.68 [99.1%] community -111792.03 -113826.56 -112910.15 -92433.71 -95183.13 -93300.99 – – 22 T able 4: Performance of methods within 10 minutes of time limit (except for last column, which is 3 hours) and treewidth limit of ten. Maximum, median and minimum are respect to 10 runs with different random seeds. MILP results are missing when it was not able to produce a solution within the time limit. When the incumbent solution is not yet proven optimal, after the last column there is the maximum error of the incumbent solution. Algorithm 1 – V ersion 2 MILP D A T ASET MAX MIN MEDIAN 10m 3h nursery -72159.27 -72159.27 -72159.27 -72159.27 -72159.27 breast -2685.24 -2685.24 -2685.24 -2685.24 -2685.24 housing -3159.71 -3180.67 -3171.55 -3159.1 -3159.1 adult -200398.91 -200513.6 -200419.75 -200362.49 -200362.49 zoo -564.24 -572.13 -567.76 -562.08 -561.54 letter -184077.80 -184840.36 -184552.17 -184030.33 -183972.77 mushroom -55604.24 -56950.21 -56116.23 -57987.74 -55562.44 [9.8%] wdbc -6943.96 -6984.57 -6958.57 -12313.03 -6893.55 [7.5%] audio -2094.76 -2146.76 -2122.17 -2541.25 -2541.25 [23.8%] hill -980.06 -1109.28 -999.11 – -42347.68 [99.1%] community -89556.12 -92656.88 -91709.72 – -135844.68 [45.9%] 23

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment